Provable Deterministic Leverage Score Sampling

We explain theoretically a curious empirical phenomenon: "Approximating a matrix by deterministically selecting a subset of its columns with the corresponding largest leverage scores results in a good low-rank matrix surrogate". To obtain provable gu…

Authors: Dimitris Papailiopoulos, Anastasios Kyrillidis, Christos Boutsidis

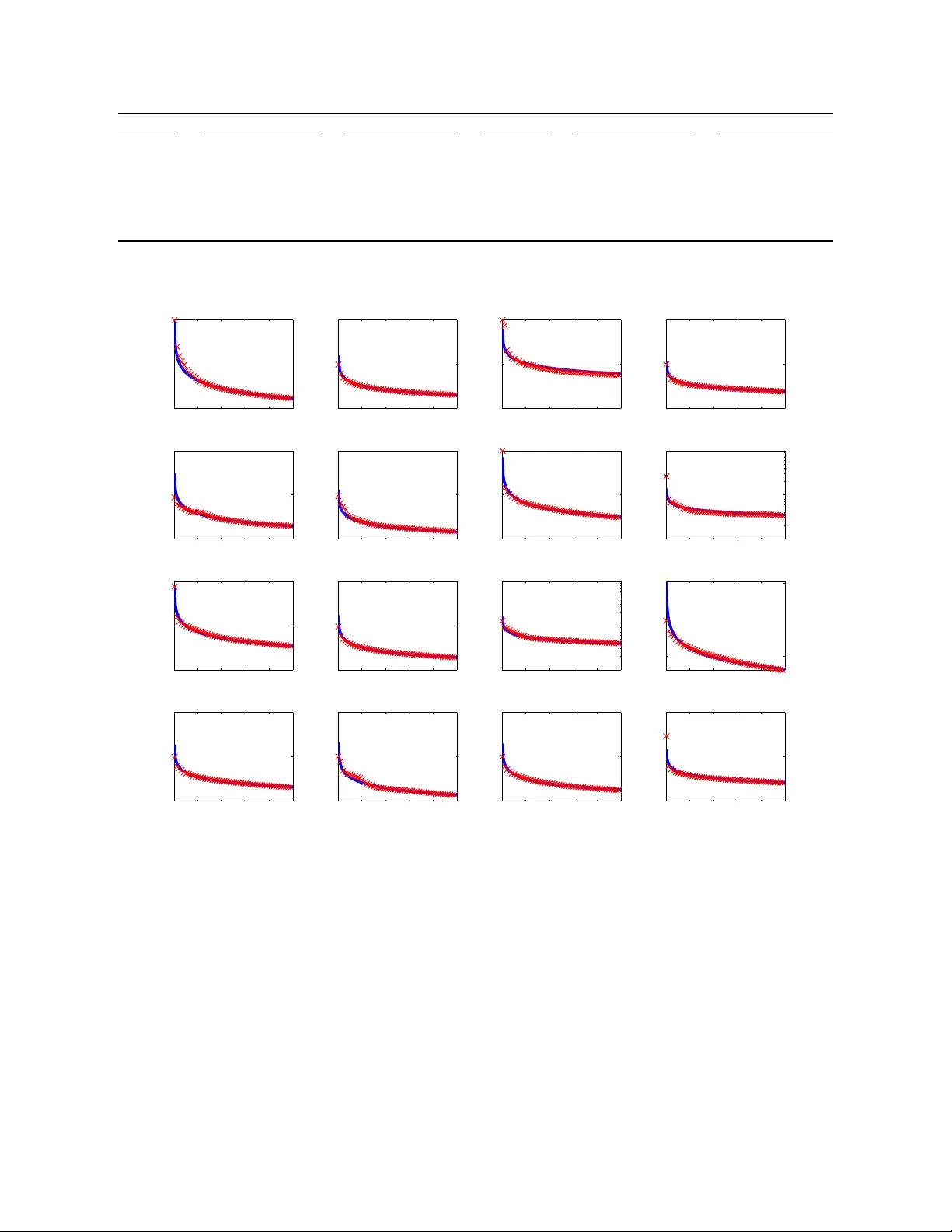

Provable Deterministic Leverage Score Sampling ∗ Dimitris Papailiopoulos † Anastasios Kyrillidis ‡ Christos Boutsidis § Abstract W e explain theoretically a curious empirical phenomenon: “Approximating a matrix by deterministically selecting a subset of its columns with the corresponding largest leverage scor es results in a good low-rank matrix surrogate”. T o obtain provable guarantees, previous work requires randomized sampling of the columns with probabilities pr oportional to their leverage scores. In this work, we provide a novel theoretical analysis of deterministic leverage score sampling . W e show that such deterministic sampling can be provably as accurate as its randomized counterparts, if the lever- age scor es follow a moderately steep power -law decay . W e support this power -law assumption by provid- ing empirical evidence that such decay laws are abundant in real-world data sets. W e then demonstrate empirically the performance of deterministic leverage score sampling, which many times matches or out- performs the state-of-the-art techniques. 1 Introduction Recently , there has been a lot of interest on selecting the “best” or “more repr esentative” columns from a data matrix [13, 28]. Qualitatively , these columns reveal the most important information hidden in the underlying matrix structure. This is similar to what principal components carry , as extracted via Principal Components Analysis (PCA) [24]. In sharp contrast to PCA, using actual columns of the data matrix to form a low-rank surrogate offers interpretability , making it more attractive to practitioners and data analysts [35, 5, 36, 28]. T o make the discussion precise and to rigorously characterize the “best” columns of a matrix, let us introduce the following Column Subset Selection Pr oblem (CSSP). C O L U M N S U B S E T S E L E C T I O N P R O B L E M . Let A ∈ R m × n and let c < n be a sampling parameter . Find c columns of A – denoted as C ∈ R m × c – that minimize k A − CC † A k F or k A − CC † A k 2 , where C † denotes the Moore-Penr ose pseudo-inverse. State of the art algorithms for the CSSP utilize both deterministic and randomized techniques; we dis- cuss related work in Section 5. Here, we describe two algorithms from prior literature that suffice to high- light our contributions. A central part of our discussion will involve the leverage scores of a matrix A , which we define below . Definition 1. [Leverage scores] Let V k ∈ R n × k contain the top k right singular vectors of a m × n matrix A with rank ρ = rank ( A ) ≥ k . Then, the (rank- k ) leverage score of the i -th column of A is defined as ` ( k ) i = k [ V k ] i, : k 2 2 , i = 1 , 2 , . . . , n. Here, [ V k ] i, : denotes the i -th row of V k . ∗ An extended abstract of this article appeared in the 20th ACM SIGKDD Confer ence on Knowledge Discovery and Data Mining. † Electrical and Computer Engineering, UT Austin, dimitris@utexas.edu. ‡ School of Computer and Communication Sciences, EPFL, anastasios.kyrillidis@epfl.ch § Y ahoo! Labs, New Y ork, boutsidis@yahoo-inc.com 1 One of the first algorithms for column subset selection dates back to 1972: in [22], Joliffe proposes a deterministic sampling of the columns of A that correspond to the largest leverage scores ` ( k ) i , for some k < rank ( A ) . Although this simple approach has been extremely successful in practice [22, 23, 31, 8], to the best of our knowledge, there has been no theor etical explanation why the approximation errors k A − CC † A k F and k A − CC † A k 2 should be small. One way to circumvent the lack of a theoretical analysis for the above deterministic algorithm is by utilizing randomization. Drineas et al. [13] proposed the following approach: for a tar get rank k < rank ( A ) , define a probability distribution over the columns of A , i.e., the i th column is associated with a pr obability p i = ` ( k ) i /k ; observe that P i p i = 1 , since P i ` ( k ) i = k V k k 2 F = k . Then, in c independent and identically distributed passes, sample with replacement c columns from A , with probabilities given by p i . Drineas et al. [13], using results in [32], show that this random subset of columns C ∈ R m × c approximates A , with constant probability , within relative error: k A − CC † A k F ≤ (1 + ε ) k A − A k k F , when the number of sampled columns is c = O ( k log k /ε 2 ) , for some 0 < ε < 1 . Here, A k ∈ R m × n is the best rank- k matrix obtained via the SVD. There are two important remarks that need to be made: (i) the randomized algorithm in [13] yields a matrix estimate that is “near optimal”, i.e., has error close to that of the best rank- k approximation; and (ii) the above random sampling algorithm is a straightforward randomized version of the deterministic algorithm of Joliffe [22]. From a practical perspective, the deterministic algorithm of Joliffe [22] is extremely simple to imple- ment, and is computationally efficient. Unfortunately , as of now , it did not admit provable performance guarantees. An important open question [13, 31, 8] is: Can one simply keep the columns having the largest leverage scores, as suggested in [22], and still have a pr ovably tight approximation? 1.1 Contributions In this work, we establish a new theor etical analysis for the deterministic leverage score sampling algorithm of Jolif fe [22]. W e show that if the leverage scores ` ( k ) i follow a suf ficiently steep power -law decay , then this deterministic algorithm has provably similar or better performance to its randomized counterparts (see Theorems 2 and 3 in Section 2). This means that under the power-law decay assumption, deterministic leverage score sampling provably obtains near optimal low-rank approximations and it can be as accurate as the “best” algorithms in the literature [4, 19]. From an applications point of view , we support the power law decay assumption of our theoretical analysis by demonstrating that several real-world data-sets have leverage scores following such decays. W e further run several experiments on synthetic and real data sets, and compare deterministic leverage score sampling with the state of the art algorithms for the CSSP . In most experiments, the deterministic algorithm obtains tight low-rank approximations, and is shown to perform similar , if not better , than the state of the art. 1.2 Notation W e use A , B , . . . to denote matrices and a , b , . . . to denote column vectors. I n is the n × n identity matrix; 0 m × n is the m × n matrix of zeros; e i belongs to the standard basis (whose dimensionality will be clear from the context). Let C = [ a i 1 , . . . , a i c ] ∈ R m × c , contain c columns of A . W e can equivalently write C = AS , wher e the sampling matrix is S = [ e i 1 , . . . , e i c ] ∈ R n × c . W e define the Frobenius and the spectral norm of a matrix as k A k 2 F = P i,j A 2 ij and k A k 2 = max x : k x k 2 =1 k Ax k 2 , respectively . 2 2 Deterministic Column Sampling In this section, we describe the details of the deterministic leverage score sampling algorithm. In Section 3, we state our approximation guarantees. In the remaining of the text, given a matrix A of rank ρ, we assume that the “target rank” is k < ρ . This means that we wish to approximate A using a subset of c ≥ k of its columns, such that the resulting matrix has an err or close to that of the best rank- k approximation. The deterministic leverage score sampling algorithm can be summarized in the following thr ee steps: Step 1: Obtain V k , the top- k right singular vectors of A . This can be carried by simply computing the singular value decomposition (SVD) of A in O (min { m, n } mn ) time. Step 2: Calculate the leverage scores ` ( k ) i . For simplicity , we assume that ` ( k ) i are sorted in descending order , hence the columns of A have the same sorting as well. 1 Step 3: Output the c columns of A that correspond to the largest c leverage scores ` ( k ) i such that their sum P c i =1 ` ( k ) i is more than θ . This ensures that the selected columns have accumulated “energy” at least θ . In this step, we have to carefully pick θ , our stopping threshold . This parameter essentially controls the quality of the approximation . In Section 7, we provide some guidance on how the stopping parameter θ should be chosen. Note that, if θ is such that c < k , we force c = k . This is a necessary step that prevents the error in the approximation from “blowing up” (see Section 7). The exact steps are given in Algorithm 1. Algorithm 1 LeverageScoresSampler ( A , k , θ ) Input: A ∈ R m × n , k, θ 1: Compute V k ∈ R n × k (top k right sing. vectors of A ) for i = 1 , 2 , . . . , n 2: ` ( k ) i = k [ V k ] i, : k 2 2 end for without loss of generality , let ` ( k ) i ’s be sorted: ` ( k ) 1 ≥ · · · ≥ ` ( k ) i ≥ ` ( k ) i +1 ≥ · · · ≥ ` ( k ) n . 3: Find index c ∈ { 1 , . . . , n } such that: c = argmin c c X i =1 ` ( k ) i > θ ! . 4: If c < k , set c = k . Output: S ∈ R n × c s.t. AS has the top c columns of A . Algorithm 1 requires O (min { m, n } mn ) arithmetic operations. In Section 8 we discuss modifications to this algorithm which improve the r unning time. 3 Approximation guarantees Our main technical innovation is a bound on the approximation err or of Algorithm 1 in regar d to the CSSP; the proof of the following theor em can be found in Section 6. Theorem 2. Let θ = k − ε, for some ε ∈ (0 , 1) , and let S be the n × c output sampling matrix of Algorithm 1. Then, for C = AS and ξ = { 2 , F } , we have k A − CC † A k 2 ξ < (1 − ε ) − 1 · k A − A k k 2 ξ . Choosing ε ∈ (0 , 1 / 2) implies (1 − ε ) − 1 ≤ 1 + 2 ε and, hence, we have a relative-error appr oximation: k A − CC † A k 2 ξ < (1 + 2 ε ) · k A − A k k 2 ξ . 1 Otherwise, one needs to sort them in O ( n log n ) time-cost. 3 3.1 Bounding the number of sampled columns Algorithm 1 extracts at least c ≥ k columns of A . However , an upper bound on the number of output columns c is not immediate. W e study such upper bounds below . From Theorem 2, it is clear that the stopping parameter θ = k − ε directly controls the number of output columns c . This number , extracted for a specific error requir ement ε , depends on the decay of the leverage scores. For example, if the leverage scores decay fast, then we intuitively expect P c i =1 ` ( k ) i = k − ε to be achieved for a “small” c . Let us for example consider a case where the leverage scor es follow an extremely fast decay: ` ( k ) 1 = k − 2 k · ε, ` ( k ) 2 = . . . = ` ( k ) 2 k = ε, ` ( k ) 2 k +1 = . . . = ` ( k ) n = ε n − 2 k . Then, in this case P 2 k i =1 ` ( k ) i = k − ε, and Algorithm 1 outputs the c = 2 k columns of A that correspond to the 2 k largest leverage scores. Due to Theorem 2, this subset of columns C ∈ R n × 2 k comes with the following guarantee: k A − CC † A k 2 ξ < 1 1 − ε · k A − A k k 2 ξ . Hence, from the above example, we expect that, when the leverage scores decay fast, a small number of columns of A will offer a good appr oximation of the form CC † A . However , in the worst case Algorithm 1 can output a number of columns c that can be as large as Ω( n ) . T o highlight this subtle point, consider the case where the leverage scores are uniform ` ( k ) i = k n . Then, one can easily observe that if we want to achieve an err or of ε according to Theor em 2, we have to set θ = k − ε . This directly implies that we need to sample c > ( n/k ) θ columns. Hence, if ε = o (1) , then, c ≥ ( n/k ) θ = (1 − ε/k ) n = Ω( n ) . Hence, for ε → 0 we have c → n, which makes the result of Theorem 2 trivial. W e argued above that when the leverage scores decay is “fast” then a good approximation is to be expected with a ”small” c. W e make this intuition precise below 2 . The next theorem considers the case where the leverage scor es follow a power-law decay; the pr oof can be found in Section 6. Theorem 3. Let the leverage scores follow a power-law decay with exponent α k = 1 + η , for η > 0 : ` ( k ) i = ` ( k ) 1 i α k . Then, if we set the stopping parameter to θ = k − ε, for some ε with 0 < ε < 1 , the number of sampled columns in C = AS that Algorithm 1 outputs is c = max ( 2 k ε 1 1+ η − 1 , 2 k η · ε 1 η − 1 , k ) , and C achieves the following approximation err or k A − CC † A k 2 ξ < 1 1 − ε · k A − A k k 2 ξ , for ξ = { 2 , F } . 2 W e chose to analyze in detail the case where the leverage scores follow a power law decay; other models for the leverage scores, example, exponential decay , are also inter esting, and will be the subject of the full version of this work. 4 3.2 Theoretical comparison to state of the art W e compare the number of chosen columns c in Algorithm 1 to the number of columns chosen in the randomized leverage scores sampling case [13]. The algorithm of [13] requires c = O ( k log k /ε 2 ) columns for a relative-err or bound with respect to the Fr obenius error in the CSSP: k A − CC † A k 2 F ≤ (1 + ε ) k A − A k k 2 F . Assuming the leverage scores follow a power -law decay , Algorithm 1 requires fewer columns for the same accuracy ε when: max ( 2 k ε 1 1+ η , 2 k η · ε 1 η ) < C · k log k ε 2 , where C is an absolute constant. Hence, under the power law decay , Algorithm 1 offers provably a matrix approximation similar or better than [13]. Let us now compare the performance of Algorithm 1 with the results in [4], which are the current state of the art for the CSSP . Theor em 1.5 in [4] provides a randomized algorithm which selects c = 2 k ε (1 + o (1)) columns in C such that k A − CC † A k 2 F < (1 + ε ) · k A − A k k 2 F holds in expectation. This result is in fact optimal, up to a constant 2, since there is a lower bound indicating that such a relative err or approximation is not possible unless c = k /ε, (see Section 9.2 in [4]). The approximation bound of Algorithm 1 is indeed better than the upper/lower bounds in [4] for any η > 1 . W e should note here that the lower bound in [4] is for general matrices; however , the upper bound of Theorem 3 is applied to a specific class of matrices whose leverage scores follow a power law decay . Next, we compare the spectral norm bound of Theorem 3 to the spectral norm bound of Theorem 1.1 in [4], which indicates that there exists a deterministic algorithm selecting c > k columns with error k A − CC † A k 2 2 < O ( n/c ) · k A − A k k 2 2 . This upper bound is also tight, up to constants, since [4] provides a matching lower bound. Notice that a relative err or upper bound requir es c = Ω ( n/ (1 + ε )) in the general case. However , under the power law assumption in Theorem 3, we provide such a relative error bound with asymptotically fewer columns. T o our best knowledge, fixing η to a constant, this is the first relative-err or bound for the spectral norm version of the CSSP with c = poly ( k , 1 /ε ) columns. 4 Experiments In this section, we first provide evidence that power law decays are prevalent in real-world data sets. Then, we investigate the empirical performance of Algorithm 1 on real and synthetic data sets. Our experiments are not meant to be exhaustive; however , they provide clear evidence that: ( i ) the leverage scores of real world matrices indeed follow “sharp” power law decays; and ( ii ) deterministic leverage score sampling in such matrices is particularly ef fective. 5 Dataset m × n Description Dataset m × n Description Amazon 262111 × 262111 Pur chase netw . [26] Citeseer 723131 × 723131 Citation netw . [25] 4square 106218 × 106218 Social netw . [38] Github 56519 × 120867 Soft. netw . [25] Gnutella 62586 × 62586 P2P netw . [26] Google 875713 × 875713 W eb conn. [25] Gowalla 875713 × 875713 Social netw . [25] LJournal 4847571 × 4847571 Social netw . [26] Slashdot 82168 × 82168 Social netw . [26] NIPS 12419 × 1500 W ord/Docs [2] Skitter 1696415 × 1696415 System netw . [25] CT slices 386 × 53500 CT images [2] Cora 23166 × 23166 Citation netw . [25] W riter 81067 × 42714 W riters/W orks [25] Y outube 1134890 × 1134890 V ideo netw . [26] YT groups 94238 × 30087 Users/Groups [25] T able 1: Summary of datasets used in the experiments of Subsection 4.1 1 200 400 600 800 1000 10 − 5 10 0 α 10 =1 . 45 amazon 1 200 400 600 800 1000 10 − 5 10 0 10 5 α 10 =1 . 5 citeseer 1 200 400 600 800 1000 10 − 10 10 − 5 10 0 α 10 =1 . 7 foursquare 1 200 400 600 800 1000 10 − 5 10 0 10 5 α 10 =1 . 13 github 1 200 400 600 800 1000 10 − 5 10 0 10 5 α 10 =2 gnutella 1 200 400 600 800 1000 10 − 5 10 0 10 5 α 10 =1 . 6 google 1 200 400 600 800 1000 10 − 4 10 − 2 10 0 α 10 =0 . 9 gowalla 1 200 400 600 800 1000 10 − 3 10 − 2 10 − 1 α 10 =0 . 2 livejournal 1 200 400 600 800 1000 10 − 4 10 − 2 10 0 α 10 =0 . 9 slashdot 1 200 400 600 800 1000 10 − 5 10 0 10 5 α 10 =1 . 6 nips 1 200 400 600 800 1000 10 − 4 10 − 3 10 − 2 α 10 =0 . 2 skitter 1 200 400 600 800 1000 10 − 3.6 10 − 3.3 α 10 =0 . 12 slice 1 2 00 4 00 600 800 1 000 10 − 5 10 0 10 5 α 10 =1 . 58 cora 1 2 00 4 00 600 800 1 000 10 − 10 10 0 10 10 α 10 =4 writers 1 2 00 4 00 600 800 1 000 10 − 5 10 0 10 5 α 10 =1 . 75 youtube groups 1 2 00 4 00 600 800 1 000 10 − 4 10 − 2 10 0 α 10 =0 . 5 youtube Figure 1: W e plot the top 1 , 000 leverage scores for 16 differ ent data sets, obtained through V k for k = 10 . The plots are in logarithmic scale. For each data-set, we plot a fitting power-law curve β · x − α k . The exponent is listed on each figure as α 10 . The leverage scores are plotted with a r ed × marker , and the fitted curves ar e denoted with a solid blue line. W e observe that the power law fit offers a good approximation of the true leverage scores. W e further observe that many data sets exhibit sharp decays ( α k > 1 ), while only a few have leverage scores that decay slowly ( α k < 1 ). 4.1 Power-law decays in real data sets W e demonstrate the leverage score decay behavior of many real-world data sets. These range from social networks and pr oduct co-purchasing matrices to document-term bag-of-words data sets, citation networks, and medical imaging samples. Their dimensions vary fr om thousands to millions of variables. The data-set description is given in T able 1. In Figure 1, we plot the top 1 , 000 leverage scores extracted from the matrix of the right top- k singular 6 5 500 1000 0 0.5 1 1.5 ∥ A − CC † A ∥ 2 2 ∥ A − A k ∥ 2 2 c c =473 k =5 10 500 1000 0 0.5 1 1.5 c c =404 k =1 0 50 500 1000 0 0.5 1 1.5 2 c c =629 k =5 0 100 500 1000 0 2 4 6 c c =63 0 k =1 0 0 Figure 2: Nearly-uniform leverage scores case: Here, we plot as a blue curve the relative error ratio k A − CC † A k 2 2 / k A − A k k 2 2 achieved by Algorithm 1 as a function of the output columns c . The leftmost vertical cyan line corr esponds to the point where k = c . When c < k the output err or can be lar ge; this justifies why we enforce the algorithm to output c ≥ k columns. The rightmost vertical magenta line indicates the point where the c sampled columns offer as good an appr oximation as that of the best rank- k matrix A k . vectors V k . In all cases we set k = 10 . 3 For each dataset, we plot a fitting power-law curve of the form β · x − α k , where α k is the exponent of interest. W e can see from the plots that a power law indeed seems to closely match the behavior of the top leverage scores. What is more interesting is that for many of our data sets we observe a decay exponent of α k > 1 : this is the regime where deterministic sampling is expected to perform well. It seems that these sharp decays are naturally pr esent in many real-world data sets. W e would like to note that as we move to smaller scores (i.e., after the 10 , 000 -th score), we empirically observe that the leverage scores tail usually decays much faster than a power law . This only helps the bound of Theorem 2. 4.2 Synthetic Experiments In this subsection, we are interested in understanding the performance of Algorithm 1 on matrices with (i) uniform and (ii) power-law decaying leverage scor es. T o generate matrices with a prescribed leverage score decay , we use the implementation of [21]. Let V k ∈ R n × k denote the matrix we want to construct, for some k < n . Then, [21] provides algorithms to generate tall-and-skinny orthonormal matrices with specified row norms (i.e., leverage scores). Given the V k that is the output of the matrix generation algorithm in [21], we run a basis completion algorithm to find the perpendicular matrix V ⊥ k ∈ R n × ( n − k ) such that V T k V ⊥ k = 0 k × ( n − k ) . Then, we create an n × n matrix V = [ V k V ⊥ k ] where the first k columns of V are the columns of V k and the rest n − k columns are the columns of V ⊥ k ; hence, V is a full orthonormal basis. Finally we generate A ∈ R m × n as A = UΣV T ; where U ∈ R m × m is any orthonormal matrix, and Σ ∈ R m × n any diagonal matrix with min { m, n } positive entries along the main diagonal. Therefore, A = UΣV T is the full SVD of A with leverage scores equal to the squared ` 2 -norm of the rows of V k . In our experiments, we pick U as an orthonormal basis for an m × m matrix where each entry is chosen i.i.d. from the Gaussian distribution. Also, Σ contains min { m, n } positive entries (sorted) along its main diagonal, where each entry was chosen i.i.d. from the Gaussian distribution. 4.2.1 Nearly-uniform scores W e set the number of r ows to m = 200 and the number of columns to n = 1000 and construct A = UΣV T ∈ R m × n as described above. The row norms of V k are chosen as follows: First, all row norms are chosen equal to k /n , for some fixed k . Then, we introduce a small perturbation to avoid singularities: for every other pair of rows we add β ∈ N (0 , 1 / 100) to a row norm and subtract the same β from the other row norm – hence the sum of ` ( k ) i equals to k . 3 W e performed various experiments for larger k , e.g., k = 30 or k = 100 (not shown due to space limitations). W e found that as we move towards higher k , we observe a “smoothing” of the speed of decay . This is to be expected, since for the case of k = rank ( A ) all leverage scores ar e equal. 7 5 500 0 0.5 1 1.5 ∥ A − CC † A ∥ 2 2 ∥ A − A k ∥ 2 2 c c =10 k =5 10 500 0 0.5 1 1.5 c c =38 k =1 0 50 500 0 1 2 c c =97 k =5 0 100 500 0 2 4 6 c c =152 k =1 0 0 5 500 0 0.5 1 1.5 ∥ A − CC † A ∥ 2 2 ∥ A − A k ∥ 2 2 c c =7 10 500 0 0.5 1 1.5 c c =11 50 500 0 1 2 c c =88 100 500 0 2 4 6 c c =129 ↵ k =0 . 5 ↵ k =1 . 5 Figure 3: Power-law decaying leverage scores case: W e choose two power-law exponents: α k = 0 . 5 and α k = 1 . 5 . In the first row we plot the relative error of Algorithm 1 vs. c for the first decay profile, and the second row is the error performance of Algorithm 1 for the second, sharpest decay profile. The vertical cyan line corresponds to the point wher e k = c , and the vertical magenta line indicates the point where the c sampled columns offer a better appr oximation compared to the best rank- k matrix A k . W e set k to take the values { 5 , 10 , 50 , 100 } and for each k we choose: c = { 1 , 2 , . . . , 1000 } . W e present our findings in Figure 2, where we plot the relative error achieved k A − CC † A k 2 2 k A − A k k 2 2 , where the n × c matrix C contains the first c columns of A that correspond to the k largest leverage scores of V k , as sampled by Algorithm 1. Then, the leftmost vertical cyan line corresponds to the point where k = c , and the rightmost vertical magenta line indicates the point where the c sampled columns achieve an error of k A − A k k 2 2 , where A k is the best rank- k approximation. In the plots of Figure 2, we see that as we move to larger values of k , if we wish to achieve an error of k A − CC † A k 2 2 ≈ k A − A k k 2 2 , then we need to keep in C , approximately almost half the columns of A . This agrees with the uniform scores example that we showed earlier in Subsection 3.1. However , we observe that Algorithm 1 can obtain a moderately small relative error , with significantly smaller c . See for example the case where k = 100 ; then, c ≈ 200 sampled columns suffice for a relative error approximately equal to 2 , i.e., k A − CC † A k 2 2 ≈ 2 · k A − A k k 2 2 . This indicates that our analysis could be loose in the general case. 4.2.2 Power -law decay In this case, our synthetic eigenvector matrices V k have leverage scores that follow a power law decay . W e choose two power-law exponents: α k = 0 . 5 and α k = 1 . 5 . Observe that the latter complies with Theorem 3, that predicts the near optimality of leverage scor e sampling under such decay . In the first row of Figure 3, we plot the relative error vs. the number of output columns c of Algorithm 1 for α k = 0 . 5 . Then, in the second row of Figure 3, we plot the relative error vs. the number of output columns c of Algorithm 1 for α k = 1 . 5 . The blue line r epresents the relative error in terms of spectral norm. W e can see that the performance of Algorithm 1 in the case of the fast decay is surprising: c ≈ 1 . 5 · k suffices for an approximation as good as of that of the best rank- k approximation. This confirms the approximation performance in Theorem 3. 4.3 Comparison with other techniques W e will now compare the proposed algorithm to state of the art approaches for the CSSP , both for ξ = 2 and ξ = F. W e report results for the errors k A − CC † A k 2 ξ / k A − A k k 2 ξ . A comparison of the running time complexity of those algorithms is out of the scope of our experiments. 8 T able 2 contains a brief description of the datasets used in our experiments. W e employ the datasets used in [16], which presents exhaustive experiments for matrix approximations obtained through randomized leverage scores sampling. Dataset m n rank ( A ) Description Protein 357 6621 356 Sacchar omyces cerevisiae dataset SNPS 46 5523 46 Single Nucleotide - polymorphism dataset Enron 3000 3000 2569 A subgraph of the Enr on email graph T able 2: Summary of datasets used in the experiments of Subsection 4.3 [16] 4.3.1 List of comparison algorithms W e compar e Algorithm 1 against thr ee methods for the CSSP . First, the authors in [4] present a near-optimal deterministic algorithm, as described in Theorem 1.2 in [4]. Given A , k < rank ( A ) and c > k , the pr oposed algorithm selects ˜ c ≤ c columns of A in C ∈ R m × ˜ c with k A − CC † A k F ≤ 1 + 1 − p k /c − 1 k A − A k k F . Second, in [17], the authors present a deterministic pivoted QR algorithm such that: k A − CC † A k 2 ≤ 1 + √ n − k · 2 k k A − A k k 2 . This bound was pr oved in [18]. In our tests, we use the qr ( · ) built-in Matlab function, where one can select c = k columns of A as: [ Q , R , π ] = qr ( A , 0); C = A : , π 1: c , where A = QR , Q ∈ R m × n contains orthonormal columns, R ∈ R n × n is upper triangular , and π is a permutation information vector such that A : , π = QR . Third, we also consider the randomized leverage-scores sampling method with r eplacement, presented in [13]. According to this work and given A , k < rank ( A ) , and c = Ω( k log k ) , the bound provided by the algorithm is k A − CC † A k F ≤ 1 + O p k log k /c k A − A k k F , which holds only with constant probability . In our experiments, we use the software tool developed in [21] for the randomized sampling step. W e use our own Matlab implementation for each of these appr oaches. For [13], we execute 10 repetitions and report the one that minimizes the appr oximation error . 4.3.2 Performance Results T able 3 contains a subset of our results; a complete set of results is reserved for an extended version of this work. W e observe that the performance of Algorithm 1 is particularly appealing; particularly , it is almost as good as randomized leverage scores sampling in almost all cases - when randomized sampling is better the differ ence is often on the first or second decimal digit. Figure 4 shows the leverage scores for the three matrices used in our experiments. W e see that although the decay for the first data sets does not fit a “sharp” power law as it is required in Theorem 3, the per- formance of the algorithm is still competitive in practice. Inter estingly , we do observe good performance compared to the other algorithms for the third data set (Enron). For this case, the power law decay fits the decay profile needed to establish the near optimality of Algorithm 1. 9 Model k A − CC † A k 2 k A − A k k 2 k A − CC † A k F k A − A k k F k c [13] [17] This work [4] [13] [17] This work Protein 50 51 1 . 7334 2 . 2621 2 . 6809 1 . 1068 1 . 0888 1 . 1017 1 . 1000 118 1 . 3228 1 . 4274 2 . 2536 0 . 9344 0 . 9233 0 . 9258 0 . 9259 186 1 . 0846 1 . 0755 1 . 7357 0 . 7939 0 . 7377 0 . 7423 0 . 7346 253 0 . 9274 0 . 9281 1 . 3858 0 . 6938 0 . 5461 0 . 5326 0 . 5264 320 0 . 7899 0 . 7528 0 . 8176 0 . 5943 0 . 2831 0 . 2303 0 . 2231 100 101 1 . 8568 1 . 8220 2 . 5666 1 . 1789 1 . 1506 1 . 1558 1 . 1606 156 1 . 3741 1 . 3987 2 . 4227 0 . 9928 0 . 9783 0 . 9835 0 . 9820 211 1 . 3041 1 . 1926 2 . 3122 0 . 8182 0 . 8100 0 . 7958 0 . 7886 265 1 . 0270 1 . 0459 2 . 0509 0 . 6241 0 . 6004 0 . 5820 0 . 5768 320 0 . 9174 0 . 8704 1 . 8562 0 . 3752 0 . 3648 0 . 2742 0 . 2874 SNPS 5 6 1 . 4765 1 . 5030 1 . 5613 1 . 1831 1 . 0915 1 . 1030 1 . 1056 12 1 . 2601 1 . 2402 1 . 2799 1 . 0524 0 . 9649 0 . 9519 0 . 9469 18 1 . 0537 1 . 0236 1 . 1252 1 . 0183 0 . 8283 0 . 8187 0 . 8281 24 0 . 8679 0 . 9063 0 . 9302 0 . 9537 0 . 6943 0 . 6898 0 . 6975 30 0 . 7441 0 . 7549 0 . 8742 0 . 9558 0 . 5827 0 . 5413 0 . 5789 10 11 1 . 6459 1 . 5206 1 . 6329 1 . 2324 1 . 1708 1 . 1500 1 . 1413 16 1 . 3020 1 . 4265 1 . 5939 1 . 1272 1 . 0386 1 . 0199 1 . 0420 21 1 . 2789 1 . 1511 1 . 1676 1 . 0225 0 . 9170 0 . 8842 0 . 9011 25 1 . 1022 1 . 0729 1 . 0935 0 . 9838 0 . 8091 0 . 7876 0 . 8057 30 0 . 9968 0 . 9256 1 . 0020 0 . 8088 0 . 6636 0 . 6375 0 . 6707 Enron 10 11 2 . 2337 1 . 8320 1 . 7217 1 . 1096 1 . 0992 1 . 0768 1 . 0704 83 1 . 0717 1 . 0821 1 . 1464 1 . 0123 0 . 9381 0 . 9094 0 . 9196 156 0 . 8419 0 . 8172 0 . 8412 1 . 0044 0 . 8692 0 . 8091 0 . 8247 228 0 . 6739 0 . 6882 0 . 6993 0 . 9984 0 . 8096 0 . 7311 0 . 7519 300 0 . 6061 0 . 6041 0 . 6057 1 . 0000 0 . 7628 0 . 6640 0 . 6837 20 21 2 . 1726 1 . 9741 2 . 1669 1 . 1344 1 . 1094 1 . 0889 1 . 0931 91 1 . 3502 1 . 3305 1 . 3344 1 . 0194 0 . 9814 0 . 9414 0 . 9421 161 1 . 0242 1 . 0504 1 . 0239 0 . 9999 0 . 9004 0 . 8434 0 . 8484 230 0 . 9099 0 . 9025 0 . 9006 0 . 9730 0 . 8505 0 . 7655 0 . 7740 300 0 . 8211 0 . 7941 0 . 7936 0 . 9671 0 . 8037 0 . 6971 0 . 7087 50 51 2 . 6520 2 . 2788 2 . 2520 1 . 1547 1 . 1436 1 . 1053 1 . 1076 113 1 . 7454 1 . 6850 1 . 8122 1 . 0350 1 . 0425 0 . 9902 0 . 9929 176 1 . 3524 1 . 4199 1 . 4673 0 . 9835 0 . 9718 0 . 8999 0 . 9011 238 1 . 2588 1 . 2303 1 . 2450 0 . 9607 0 . 9187 0 . 8251 0 . 8282 300 1 . 2209 1 . 1014 1 . 1239 0 . 9384 0 . 8806 0 . 7593 0 . 7651 100 101 2 . 2502 2 . 2145 2 . 2721 1 . 1938 1 . 1805 1 . 1223 1 . 1238 151 2 . 2399 1 . 8677 1 . 8979 1 . 0891 1 . 1122 1 . 0357 1 . 0393 201 1 . 7945 1 . 6350 1 . 6332 1 . 0236 1 . 0631 0 . 9646 0 . 9664 250 1 . 6721 1 . 5001 1 . 5017 0 . 9885 1 . 0026 0 . 9025 0 . 9037 300 1 . 3946 1 . 3711 1 . 3847 0 . 9485 0 . 9672 0 . 8444 0 . 8467 T able 3: W e present the performance of Algorithm 1 as compared to the state of the art in CSSP . W e run experiments on 3 data sets described in the above table, for various values of k and c . The performance of Algorithm 1, especially in terms of the Frobenius norm error , is very close to optimal, while at the same time similar , if not better , to the performance of the more sophisticated algorithms of the comparison. 5 Related work W e give a quick overview of several column subset selection algorithms, both deterministic and random- ized. One of the first deterministic results regar ding the CSSP goes back to the seminal work of Gene Golub on pivoted QR factorizations [17]. Similar algorithms have been developed in [17, 20, 10, 11, 18, 37, 34, 3, 29]; see also [6] for a recent survey . The best of these algorithms is the so-called Strong Rank-revealing QR (Strong 10 1 2 00 4 00 600 800 1 000 10 − 3 10 − 2 10 − 1 α =0 . 35 Protein 1 2 00 4 00 600 800 1 000 10 − 3 10 − 2 10 − 1 α =0 . 4 SNPS 1 2 00 4 00 600 800 1 000 10 − 5 10 0 10 5 α =1 . 2 Enron Figure 4: The plots are for k = 10 and are in logarithmic scale. The exponent is listed on each figure as α . The leverage scores are plotted with a red × marker , and the fitted curves are denoted with a solid blue line. RRQR) algorithm in [18]: Given A , c = k , and constant f ≥ 1 , Strong RRQR requires O ( mnk log f n ) arithmetic operations to find k columns of A in C ∈ R m × k that satisfy k A − CC † A k 2 ≤ 1 + f 2 p k ( n − k ) + 1 · k A − A k k 2 . As discussed in Section 1, [22] suggests column sampling with the largest corresponding leverage scores. A related result in [37] suggests column sampling thr ough selection over V T k with Strong RRQR. Notice that the leverage scores sampling approach is similar , but the column selection is based on the largest Euclidean norms of the columns of V T k . From a probabilistic point of view , much work has followed the seminal work of [14] for the CSSP . [14] introduced the idea of randomly sampling columns based on specific probability distributions. [14] use a simple probability distribution where each column of A is sampled with probability proportional to its Euclidean norm. The approximation bound achieved, which holds only in expectation, is k A − CC † A k 2 F ≤ k A − A k k 2 F + ( k /c ) k A k 2 F . [13] improved upon the accuracy of this result by using a distribution over the columns of A wher e each column is sampled with probability proportional to its leverage score. From a different perspective, [12, 19] presented some optimal algorithms using volume sampling. [4] obtained faster optimal algorithms while [7] proposed optimal algorithms that r un in input sparsity time. Another line of resear ch includes row-sampling algorithms for tall-and-skinny orthonormal matrices, which is relevant to our results: we essentially apply this kind of sampling to the rows of the matrix V k from the SVD of A . See Lemma 5 in the Section 6 for a precise statement of our result. Similar results exist in [1]. W e should also mention the work in [39], which corresponds to a derandomization of the randomized sampling algorithm in [13]. 6 Proofs Before we proceed, we setup some notation and definitions. For any two matrices A and B with appropriate dimensions, k A k 2 ≤ k A k F ≤ p rank ( A ) k A k 2 , k AB k F ≤ k A k F k B k 2 , and k AB k F ≤ k A k 2 k B k F . k A k ξ indicates that an expression holds for both ξ = 2 , F . The thin (compact) Singular V alue Decomposition (SVD) of a matrix A ∈ R m × n with rank ( A ) = ρ is: A = U k U ρ − k | {z } U A ∈ R m × ρ Σ k 0 0 Σ ρ − k | {z } Σ A ∈ R ρ × ρ V T k V T ρ − k | {z } V T A ∈ R ρ × n , 11 with singular values σ 1 ( A ) ≥ . . . σ k ( A ) ≥ σ k +1 ( A ) ≥ . . . ≥ σ ρ ( A ) > 0 . The matrices U A ∈ R m × ρ and V A ∈ R m × ( ρ ) contain the left and right singular vectors, respectively . It is well-known that A k = U k Σ k V T k = U k U T k A = AV k V T k ∈ R m × n minimizes k A − X k ξ over all matrices X ∈ R m × n of rank at most k ≤ rank ( A ) . The best rank- k approximation to A satisfies k A − A k k 2 = σ k +1 ( A ) and k A − A k k 2 F = P ρ i = k +1 σ 2 i ( A ) . A † denotes the Moore-Penr ose pseudo-inverse of A . Let B ∈ R m × n ( m ≤ n ) and A = BB T ∈ R m × m ; then, for all i = 1 , ..., m , λ i ( A ) = σ 2 i ( B ) is the i -th eigenvalue of A . 6.1 Proof of Theorem 2 T o prove Theorem 2, we will use the following r esult. Lemma 4. [Eqn. 3.2, Lemma 3.1 in [4]] Consider A = AZZ T + E ∈ R m × n as a low-rank matrix factorization of A , with Z ∈ R n × k , and Z T Z = I k . Let S ∈ R n × c ( c ≥ k ) be any matrix such that r ank ( Z T S ) = k . Let C = AS ∈ R m × c . Then, for ξ = 2 , F : k A − CC † A k 2 ξ ≤ k A − Π ξ C ,k ( A ) k 2 ξ ≤ k E k 2 ξ · k S ( Z T S ) † k 2 2 . Here, Π ξ C ,k ( A ) ∈ R m × n is the best rank k approximation to A in the column space of C with respect to the ξ norm. W e will also use the following novel lower bound on the smallest singular value of the matrix V k , after deterministic selection of its rows based on the lar gest leverage scores. Lemma 5. Repeat the conditions of Theorem 2. Then, σ 2 k ( V T k S ) > 1 − ε. Proof. W e use the following perturbation result on the sum of eigenvalues of symmetric matrices. Lemma 6. [Theorem 2.8.1; part (i) in [9]] Let X and Y be symmetric matrices of order k and, let 1 ≤ i, j ≤ n with i + j ≤ k + 1 . Then, λ i ( X ) ≥ λ i + j − 1 ( X + Y ) − λ j ( Y ) . Let S ∈ R n × c sample c columns from A with c ≥ k . Similarly , let ˆ S ∈ R n × ( n − c ) sample the rest n − c columns from A . Hence, I k = V T k V k = V T k SS T V k + V T k ˆ S ˆ S T V k . Let X = V T k SS T V k , Y = V T k ˆ S ˆ S T V k , i = k , and j = 1 , in Lemma 6. Notice that i + j ≤ k + 1 , and λ k ( X + Y ) = 1 ; hence: λ k ( V T k SS T V k ) ≥ 1 − λ 1 ( V T k ˆ S ˆ S T V k ) = 1 − k V T k ˆ S k 2 2 ≥ 1 − k V T k ˆ S k 2 F > 1 − ( k − θ ) Replacing θ = k − ε and λ k ( V T k SS T V k ) = σ 2 k ( V T k S ) concludes the proof. The proof of Theorem 2 is a straightforward combination of the Lemmas 4 and 5. First, by picking Z = V k in Lemma 4 we obtain: k A − CC † A k 2 ξ ≤ k A − A k k 2 ξ · k S ( V T k S ) † k 2 2 ≤ k A − A k k 2 ξ · k S k 2 2 · k ( V T k S ) † k 2 2 = k A − A k k 2 ξ · k ( V T k S ) † k 2 2 = k A − A k k 2 ξ /σ 2 k ( V T k S ) 12 In the above, we used the facts that E = A − AV k V T k = A − A k , and the spectral norm of the sampling matrix S equals one. Also, we used that rank ( V T k S ) = k , which is implied from Lemma 5. Next, via the bound in Lemma 5: k A − CC † A k 2 ξ < k A − A k k 2 ξ / (1 − ε ) . 6.2 Proof of Theorem 3 Let α k = 1 + η for some η > 0 . W e assume that the leverage scores follow a power law decay such that: ` ( k ) i = ` ( k ) 1 /i 1+ η . According to the pr oposed algorithm, we select c columns such that c X i =1 ` ( k ) i > θ . Here, we bound the number of columns c required to achieve an ε := k − θ approximation in Theorem 2. T o this end, we use the extreme case P c i =1 ` ( k ) i = θ which guarantees an (1 + ε ) -approximation. For our analysis, we use the following well-known result. Proposition 7. [Integral test for convergence] Let f ( · ) ≥ 0 be a function defined over the set of positive reals. Furthermore, assume that f ( · ) is monotone decr easing. Then, Z J +1 j f ( i ) dx ≤ J X i = j f ( i ) ≤ f ( j ) + Z J j f ( x ) dx, over the interval [ j, . . . , J ] for j, J positive integers. In our case, consider f ( i ) = 1 i 1+ η . By definition of the leverage scores, we have: k = n X i =1 ` ( k ) i = ` ( k ) 1 n X i =1 1 i 1+ η = ⇒ ` ( k ) 1 = k P n i =1 1 i 1+ η . By construction, we collect c leverage scores such that k − θ = ε . This leads to: k − ε = ` ( k ) 1 · c X i =1 1 i 1+ η = k P n i =1 1 i 1+ η · c X i =1 1 i 1+ η = k P n i =1 1 i 1+ η − P n i = c +1 1 i 1+ η P n i =1 1 i 1+ η ! = k 1 − P n i = c +1 1 i 1+ η P n i =1 1 i 1+ η ! = ⇒ ε = k · P n i = c +1 1 i 1+ η P n i =1 1 i 1+ η . T o bound the quantity on the right hand side, we observe 13 P n i = c +1 1 i 1+ η P n i =1 1 i 1+ η ≤ 1 ( c +1) 1+ η + R n i = c +1 1 x 1+ η dx P n i =1 1 i 1+ η = 1 ( c +1) 1+ η + − 1 x 1+ η n i = c +1 P n i =1 1 i 1+ η = 1 ( c +1) 1+ η + 1 η 1 ( c +1) η − 1 n η P n i =1 1 i 1+ η ≤ 1 ( c +1) 1+ η + 1 η ( c +1) η P n i =1 1 i 1+ η = 1 ( c +1) 1+ η + 1 η ( c +1) η 1 + P n i =2 1 i 1+ η < 1 ( c + 1) · ( c + 1) η + 1 η ( c + 1) η ≤ max 2 ( c + 1) 1+ η , 2 η · ( c + 1) η where the first inequality is due to the right hand side of the integral test and the thir d inequality is due to 1 + n X i =2 1 i 1+ η > 1 . Hence, we may conclude: ε < k · max 2 ( c + 1) 1+ η , 2 η · ( c + 1) η . The above lead to the following two cases: if ε < 2 k ( c + 1) 1+ η , we have: c < 2 · k ε 1 1+ η − 1 , whereas in the case wher e ε < 2 · k η · ( c + 1) η , we get c < 2 · k η · ε 1 η − 1 . 7 The key role of θ In the proof of Theor em 2, we requir e that σ 2 k ( V T k S ) > 1 − ( k − θ ) := 1 − ε. For this condition to hold, the sampling matrix S should preserve the rank of V T k in V T k S , i.e., choose θ such that rank ( V T k S ) = k . 14 Failing to preserve the rank of V T k has immediate implications for the CSSP . T o highlight this, let A ∈ R m × n of rank k < min { m, n } with SVD A = U k Σ k V T k . Further , assume that the k th singular value of A is arbitrary large, i.e., σ k ( A ) → ∞ . Also, let rank ( V T S ) = γ < k and C = AS . Then, k A − CC † A k ξ = k U k Σ k V T k − U k Σ k V T k S ( U k Σ k V T k S ) † U k Σ k V T k k ξ = k Σ k − Σ k V T k S ( U k Σ k V T k S ) † U k Σ k k ξ = k Σ k − Σ k V T k S ( Σ k V T k S ) † ( U k ) † U k Σ k k ξ = k Σ k − Σ k V T k S ( Σ k V T k S ) † Σ k k ξ = k Σ k − U X U T X Σ k k ξ ≥ σ k ( A ) The second equality is due to the fact that both spectral and Frobenius norms are invariant to unitary transformations. In the third equality , we used the fact that ( W Z ) † = Z † W † if W T W is the identity matrix. Then, set X = Σ k V T k S ∈ R k × c where rank ( X ) = γ . Using this notation, let U X ∈ R m × γ be any orthonormal basis for span ( X ) . Observe U X U T X = XX † . The last inequality is due to U X U T X being an m × m diagonal matrix with γ ones along its main diagonal and the rest zer os. Thus, we may conclude that for this A : k A − CC † A k ξ ≥ σ k ( A ) → ∞ . 8 Extensions to main algorithm Algorithm 1 requir es O (min { m, n } mn ) arithmetic operations since, in the first step of the algorithm, it com- putes the top k right singular vectors of A thr ough the SVD. In this section, we describe how to improve the running time complexity of the algorithm while maintaining about the same approximation guaran- tees. The main idea is to replace the top k right singular vectors of A with some orthonormal vectors that “approximate” the top k right singular vectors in a sense that we make precise below . Boutsidis et al. introduced this idea to impr ove the running time complexity of column subset selection algorithms in [4]. 8.1 Frobenius norm W e start with a result which is a slight extension of a result appeared in [15]. Lemma 8 below appeared in [7] but we include the proof for completeness. For the description of the algorithm we refer to [27, 15]. Lemma 8 (Theorem 3.1 in [15]) . Given A ∈ R m × n of rank ρ , a tar get rank 1 ≤ k < ρ , and 0 < ε ≤ 1 , there exists a deterministic algorithm that computes Z ∈ R n × k with Z T Z = I k and k A − AZZ T k 2 F ≤ (1 + ε ) k A − A k k 2 F . The proposed algorithm runs in O mnk 2 ε − 2 time. Proof. Theorem 4.1 in [15] describes an algorithm that given A , k and ε constructs Q k ∈ R k × n such that k A − AQ T k Q k k 2 F ≤ (1 + ε ) k A − A k k 2 F . T o obtain the desired factorization, we just need an additional step to ortho-normalize the columns of Q T k , which takes O ( nk 2 ) time. So, assume that Q T k = ZR is a QR factorization of Q T k with Z ∈ R n × k and R ∈ R k × k . Then, k A − AZZ T k 2 F ≤ k A − AQ T k ( R T ) Z k 2 F = k A − AQ T k R T ( R T ) − 1 Q k k 2 F = k A − AQ T k Q k k 2 F ≤ (1 + ε ) k A − A k k 2 F . 15 In words, the lemma describes a method that constructs a rank k matrix AZZ T that is as good as the rank k matrix A k = AV k V T k from the SVD of A . Hence, in that “low-rank matrix approximation sense” Z can replace V k in our column subset selection algorithm. Now , consider an algorithm as in Algorithm 1 wher e in the first step, instead of V k , we compute Z as it was described in Lemma 8. This modified algorithm requires O mnk 2 ε − 2 arithmetic operations. For this deterministic algorithm we have the following theorem. Theorem 9. Let θ = k − ε, for some ε ∈ (0 , 1) , and let S be the n × c output sampling matrix of the modified Algorithm 1 described above. Then, for C = AS we have k A − CC † A k 2 F < (1 + ε ) · (1 − ε ) − 1 · k A − A k k 2 F . Proof. Let Z be constructed as in Lemma 8. Using this Z and ξ = F in Lemma 4 we obtain: k A − CC † A k 2 F ≤ k A − AZZ T k 2 F · k S ( Z T S ) † k 2 2 ≤ k A − AZZ T k 2 F · k S k 2 2 · k ( Z T S ) † k 2 2 = k A − AZZ T k 2 F · k ( Z T S ) † k 2 2 = k A − AZZ T k 2 F /σ 2 k ( Z T S ) In the above, we used the facts that E = A − AZZ T and the spectral norm of the sampling matrix S equals one. Also, we used that rank ( Z T S ) = k , which is implied from Lemma 5. Next, via the bound in Lemma 5 on Z 4 : k A − CC † A k 2 2 < k A − AZZ T k 2 2 / (1 − ε ) . Finally , using k A − AZZ T k 2 F ≤ (1 + ε ) k A − A k k 2 F according to Lemma 8 concludes the pr oof. 8.2 Spectral norm T o achieve a similar running time improvement for the spectral norm bound of Theorem 2, we need an analogous result as in Lemma 8, but for the spectral norm. W e are not aware of any such deterministic algorithm. Hence, we quote Lemma 11 from [4], which pr ovides a randomized algorithm. Lemma 10 (Randomized fast spectral norm SVD) . Given A ∈ R m × n of rank ρ , a target rank 2 ≤ k < ρ , and 0 < ε < 1 , there exists an algorithm that computes a factorization A = BZ T + E , with B = AZ , Z T Z = I k such that E [ k E k 2 ] ≤ √ 2 + ε k A − A k k 2 . This algorithm runs in O mnk ε − 1 log k − 1 min { m, n } time. In words, the lemma describes a method that constructs a rank k matrix AZZ T that is as good as the rank k matrix A k = AV k V T k from the SVD of A . Hence, in that “low-rank matrix approximation sense” Z can r eplace V k . The dif ference between Lemma 10 and Lemma 8 is that the matrix AZZ T approximates A k with respect to a dif ferent norm. Now consider an algorithm as in Algorithm 1 where in the first step we compute Z as it was described in Lemma 10. This algorithm takes O mnk ε − 1 log k − 1 min { m, n } time. For this randomized algorithm we have the following theorem. Theorem 11. Let θ = k − ε, for some ε ∈ (0 , 1) , and let S be the n × c output sampling matrix of the modified Algorithm 1 described above. Then, for C = AS we have E h k A − CC † A k 2 i < √ 2 + ε · q (1 − ε ) − 1 · k A − A k k 2 . 4 It is easy to see that Lemma 5 holds for any orthonormal matrix V k and it is not neccesary that V k contains the singular vectors of matrix A . 16 Proof. Let Z be constructed as in Lemma 10. Using this Z and ξ = 2 in Lemma 4 we obtain: k A − CC † A k 2 2 ≤ k A − AZZ T k 2 2 · k S ( Z T S ) † k 2 2 ≤ k A − AZZ T k 2 2 · k S k 2 2 · k ( Z T S ) † k 2 2 = k A − AZZ T k 2 2 · k ( Z T S ) † k 2 2 = k A − AZZ T k 2 2 /σ 2 k ( Z T S ) In the above, we used the facts that E = A − AZZ T and the spectral norm of the sampling matrix S equals one. Also, we used that rank ( Z T S ) = k , which is implied from Lemma 5. Next, via the bound in Lemma 5 on Z : k A − CC † A k 2 2 < k A − AZZ T k 2 2 / (1 − ε ) . T aking square root on both sides of this r elation we obtain: k A − CC † A k 2 < k A − AZZ T k 2 p (1 − ε ) − 1 . T aking expectations with respect to the randomness of Z yields, E h k A − CC † A k 2 i < E h k A − AZZ T k 2 i p (1 − ε ) − 1 . Finally , using E h k A − AZZ T k 2 i ≤ ( √ 2 + ε ) k A − A k k 2 - from Lemma 10 - concludes the pr oof. W e also mention that it is now straightforward to prove an analog of Theorem 3 for the algorithms we analyze in Theorems 9 and 11. One should replace the assumption of the power law decay of the leverage scores with an assumption of the power law decay of the row norms square of the matrix Z . Whether the row norms of those matrices Z follow a power law decay is an interesting open question which will be worthy to investigate in more detail. 8.3 Approximations of rank k Theorems 2, 3, 9, and 11 provide bounds for low rank approximations of the form CC † A ∈ R m × n , where C contains c ≥ k columns of A . The matrix CC † A could potentially have rank larger than k , indeed it can be as large as c . In this section, we describe how to construct factorizations that have rank k and are as accurate as those in Theorems 2, 3, 9, and 11. Constructing a rank k instead of a rank c column-based low-rank matrix factorization is a harder problem and might be desirable in certain applications (see, for example, Section 4 in [ ? ] where the authors apply rank k column-based low-rank matrix factorizations to solve the projective clustering pr oblem). Let A ∈ R m × n , let k < n be an integer , and let C ∈ R m × c with c ≥ k . Let Π ξ C ,k ( A ) ∈ R m × n be the best rank k approximation to A in the column space of C with respect to the ξ norm. Hence, we can write Π ξ C ,k ( A ) = CX ξ , where X ξ = argmin Ψ ∈ R c × n : rank ( Ψ ) ≤ k k A − CΨ k 2 ξ . In order to compute (or appr oximate) Π ξ C ,k ( A ) given A , C , and k , we will use the following algorithm: 1: Ortho-normalize the columns of C ∈ R m × c in O ( mc 2 ) time to construct the matrix Q ∈ R m × c . 2: Compute ( Q T A ) k ∈ R c × n via the SVD in O ( mnc + nc 2 ) ; ( Q T A ) k has rank k and denotes the best rank- k approximation of Q T A . 3: Return Q ( Q T A ) k ∈ R m × n in O ( mnk ) time. Clearly , Q ( Q T A ) k is a rank k matrix that lies in the column span of C . Note that though Π ξ C ,k ( A ) can depend on ξ , our algorithm computes the same matrix, independent of ξ . The next lemma was proved in [4]. 17 Lemma 12. Given A ∈ R m × n , C ∈ R m × c and an integer k , the matrix Q ( Q T A ) k ∈ R m × n described above (where Q is an orthonormal basis for the columns of C ) can be computed in O mnc + ( m + n ) c 2 time and satisfies: k A − Q ( Q T A ) k k 2 F = k A − Π F C ,k ( A ) k 2 F , k A − Q ( Q T A ) k k 2 2 ≤ 2 k A − Π 2 C ,k ( A ) k 2 2 . Finally , observe that Lemma 4 indeed provides an upper bound for the residual error k A − Π ξ C ,k ( A ) k 2 ξ . Hence, all bounds in Theor ems 2, 3, 9, and 11 hold for the error k A − Π ξ C ,k ( A ) k 2 ξ as well, and by Lemma 12 one can provide bounds for the err ors k A − Q ( Q T A ) k k 2 F and k A − Q ( Q T A ) k k 2 2 . 9 Concluding Remarks W e provided a rigorous theoretical analysis of an old and popular deterministic feature selection algorithm from the statistics literature [22]. Although randomized algorithms are often easier to analyze, we believe that deterministic algorithms are simpler to implement and explain, hence more attractive to practitioners and data analysts. One inter esting path for futur e resear ch is understanding the connection of this work with the so-called “spectral graph sparsification problem” [33]. In that case, edge selection in a graph is implemented via randomized leverage scores sampling from an appropriate matrix (see Theorem 1 in [33]). Note that in the context of graph sparsification, leverage scores correspond to the so-called “effective resistances” of the graph. Can deterministic effective resistances sampling be rigorously analyzed? What graphs have effective r esistances following a power law distribution? References [1] H. A vron and C. Boutsidis. Faster subset selection for matrices and applications. SIAM Journal on Matrix Analysis and Applications (SIMAX) , 2013. [2] K. Bache and M. Lichman. UCI machine learning repository , 2013. [3] C. H. Bischof and G. Quintana-Ort ´ ı. Computing rank-revealing QR factorizations of dense matrices. ACM T rans. Math. Softw , 24(2):226–253, 1998. [4] C. Boutsidis, P . Drineas, and M. Magdon-Ismail. Near optimal column based matrix reconstruction. SIAM Journal on Computing (SICOMP) , 2013. [5] C. Boutsidis, M. W . Mahoney , and P . Drineas. Unsupervised feature selection for principal components analysis. In KDD , pages 61–69, 2008. [6] C. Boutsidis, M. W . Mahoney , and P . Drineas. An improved approximation algorithm for the column subset selection problem. In SODA , pages 968–977, 2009. [7] C. Boutsidis and D. W oodruff. Optimal cur matrix decompositions. In STOC , 2014. [8] M. E. Broadbent, M. Br own, K. Penner , I. Ipsen, and R. Rehman. Subset selection algorithms: Random- ized vs. deterministic. SIAM Undergraduate Resear ch Online , 3:50–71, 2010. [9] A. A. E. Brouwer and W . H. Haemers. Spectra of graphs . Springer , 2012. [10] T . F . Chan and P . C. Hansen. Low-rank revealing QR factorizations. Numerical Linear Algebra with Applications , 1:33–44, 1994. [11] S. Chandrasekaran and I. C. F . Ipsen. On rank-revealing factorizations. SIAM J. Matrix Anal. Appl. , 15:592–622, 1994. 18 [12] A. Deshpande and L. Rademacher . Efficient volume sampling for row/column subset selection. In Proceedings of the 42th Annual ACM Symposium on Theory of Computing (ST OC) , 2010. [13] P . Drineas, M. W . Mahoney , and S. Muthukrishnan. Relative-error cur matrix decompositions. SIAM Journal Matrix Analysis and Applications , 30(2):844–881, 2008. [14] A. Frieze, R. Kannan, and S. V empala. Fast Monte-Carlo algorithms for finding low-rank approxima- tions. Journal of the ACM , 51(6):1025–1041, 2004. [15] M. Ghashami and J. W . Phillips. Relative Errors for Deterministic Low-Rank Matrix Approximations. In SODA , 2014. [16] A. Gittens and M. W . Mahoney . Revisiting the nystrom method for improved large-scale machine learning. In ICML (3) , pages 567–575, 2013. [17] G. H. Golub. Numerical methods for solving linear least squares problems. Numer . Math. , 7:206–216, 1965. [18] M. Gu and S. Eisenstat. Efficient algorithms for computing a strong rank-revealing QR factorization. SIAM Journal on Scientific Computing , 17:848–869, 1996. [19] V . Guruswami and A. K. Sinop. Optimal column-based low-rank matrix reconstruction. In SODA . SIAM, 2012. [20] Y . P . Hong and C. T . Pan. Rank-revealing QR factorizations and the singular value decomposition. Mathematics of Computation , 58:213–232, 1992. [21] I. C. Ipsen and T . W entworth. The effect of coherence on sampling from matrices with orthonormal columns, and preconditioned least squar es problems. arXiv preprint , 2012. [22] I. Jolliffe. Discarding variables in a principal component analysis. i: Artificial data. Applied Statistics , 21(2):160–173, 1972. [23] I. Jolliffe. Discarding variables in a principal component analysis. ii: Real data. Applied Statistics , 22(1):21–31, 1973. [24] I. Jolliffe. Principal Component Analysis . Springer; 2nd edition, 2002. [25] J. Kunegis. Konect: the koblenz network collection. In WWW . International W orld W ide W eb Confer- ences Steering Committee, 2013. [26] J. Leskovec. Snap stanford network analysis pr oject. 2009. [27] E. Liberty . Simple and deterministic matrix sketching Proceedings of the 19th ACM SIGKDD interna- tional conference on Knowledge discovery and data mining. 2013. [28] M. W . Mahoney and P . Drineas. Cur matrix decompositions for impr oved data analysis. Proceedings of the National Academy of Sciences , 106(3):697–702, 2009. [29] C. T . Pan. On the existence and computation of rank-revealing LU factorizations. Linear Algebra and its Applications , 316:199–222, 2000. [30] D. Papapailiopoulos, A. Kyrillidis, and C. Boutsidis. Provable Deterministic Leverage Score Sampling. arXiv preprint arXiv:1404.1530, 2014 [31] P . Paschou, E. Ziv , E. G. Burchard, S. Choudhry , W . Rodriguez-Cintron, M. W . Mahoney , and P . Drineas. Pca-correlated snps for structure identification in worldwide human populations. PLoS genetics , 3(9):e160, 2007. [32] M. Rudelson and R. V ershynin. Sampling from large matrices: An approach through geometric func- tional analysis. JACM: Journal of the ACM , 54, 2007. 19 [33] N. Srivastava and D. Spielman. Graph sparsifications by effective r esistances. In STOC , 2008. [34] G. Stewart. Four algorithms for the efficient computation of truncated QR approximations to a sparse matrix. Numerische Mathematik , 83:313–323, 1999. [35] J. Sun, Y . Xie, H. Zhang, and C. Faloutsos. Less is more: Compact matrix decomposition for large sparse graphs. In SDM . SIAM, 2007. [36] H. T ong, S. Papadimitriou, J. Sun, P . S. Y u, and C. Faloutsos. Colibri: fast mining of large static and dynamic graphs. In KDD . ACM, 2008. [37] E. T yrtyshnikov . Mosaic-skeleton approximations. Calcolo , 33(1):47–57, 1996. [38] R. Zafarani and H. Liu. Social computing data repository at ASU, 2009. [39] A. Zouzias. A matrix hyperbolic cosine algorithm and applications. In ICALP . Springer , 2012. 20

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment