MissForest - nonparametric missing value imputation for mixed-type data

Modern data acquisition based on high-throughput technology is often facing the problem of missing data. Algorithms commonly used in the analysis of such large-scale data often depend on a complete set. Missing value imputation offers a solution to t…

Authors: Daniel J. Stekhoven, Peter B"uhlmann

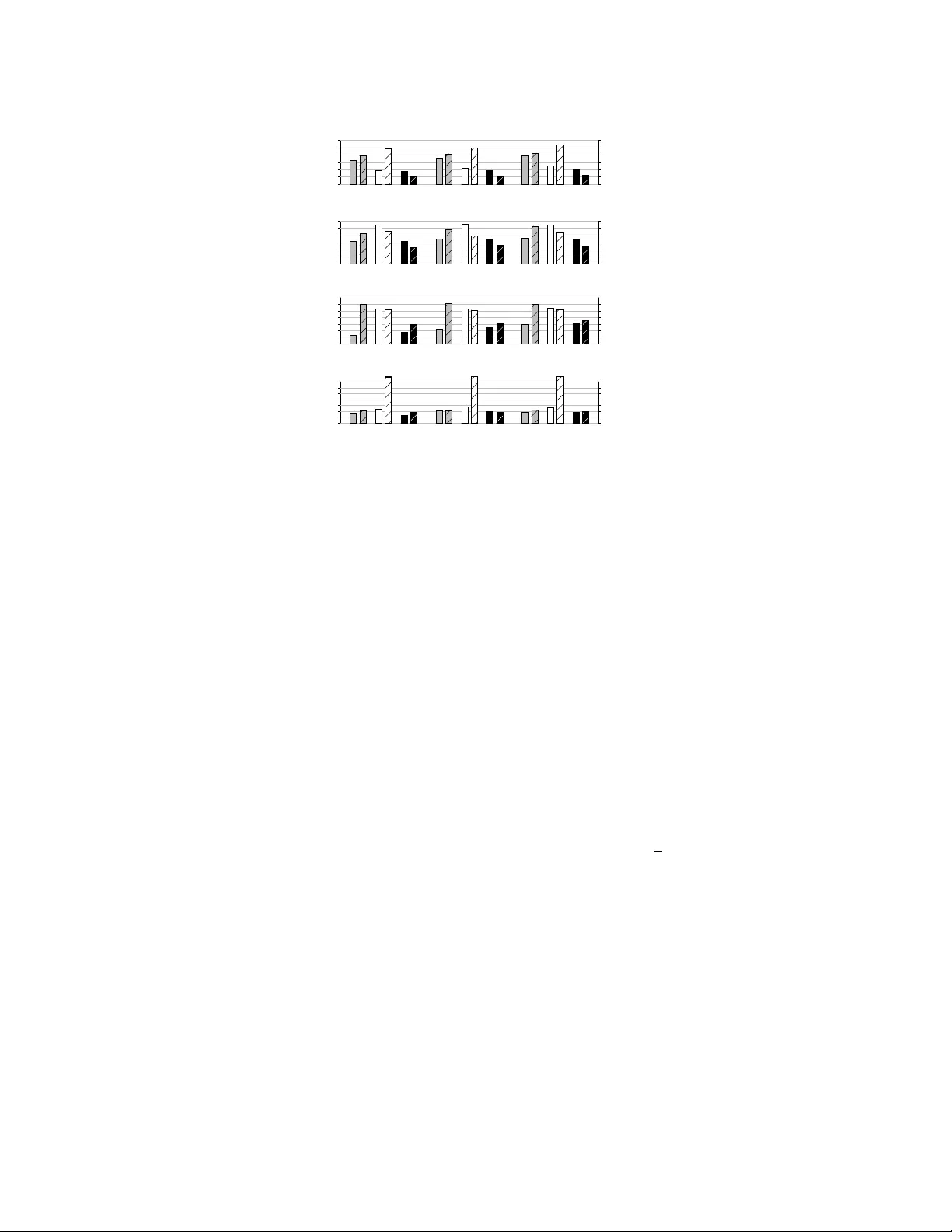

MissF orest - nonparametric missing v alue imputation for mix ed-type data Daniel J. Stekhov en 1,2,3, ∗ and Peter B ¨ uhlmann 1,3 1 Seminar for Statistics, ETH Zurich, Zurich, Switzerland, 2 Life Science Zurich PhD Program on Systems Biology of Complex Diseases, 3 Competence Center for Systems Physiology and Metabolic Diseases, Zurich, Switzerland Abstract Motivation: Modern data acquisition based on high-throughput technology is often facing the problem of missing data. Algorithms commonly used in the analysis of such large-scale data often depend on a complete set. Missing value imputation offers a solution to this problem. Howe v er , the majority of available imputation methods are restricted to one type of variable only: continuous or categorical. F or mixed-type data the dif ferent types are usually handled separately . Therefore, these methods ignore possible relations between variable types. W e propose a nonparametric method which can cope with different types of v ariables simultaneously . Results: W e compare se veral state of the art methods for the imputation of missing values. W e propose and ev aluate an iterativ e imputation method (missForest) based on a random forest. By av- eraging over many unpruned classification or regression trees random forest intrinsically constitutes a multiple imputation scheme. Using the built-in out-of-bag error estimates of random forest we are able to estimate the imputation error without the need of a test set. Evaluation is performed on multi- ple data sets coming from a div erse selection of biological fields with artificially introduced missing values ranging from 10% to 30%. W e show that missForest can successfully handle missing values, particularly in data sets including dif ferent types of v ariables. In our comparati ve study missForest outperforms other methods of imputation especially in data settings where complex interactions and nonlinear relations are suspected. The out-of-bag imputation error estimates of missForest prove to be adequate in all settings. Additionally , missForest exhibits attractiv e computational efficienc y and can cope with high-dimensional data. A v ailability: The R package missForest is freely av ailable from http://stat.ethz. ch/CRAN/ . Pre-print version: This article has been submitted to Oxford Journal’ s Bioinformatics c on 3rd of May 2011. V ersion 2 has been resubmitted on 27th of September 2011. 1 Intr oduction Imputation of missing values is often a crucial step in data analysis. Many established methods of analysis require fully observed data sets without any missing v alues. Ho we ver , this is seldom the case in medical and biological research today . The ongoing development of ne w and enhanced measurement techniques in these fields provides data analysts with challenges prompted not only by high-dimensional multi v ariate data where the number of variables may greatly e xceed the number of observations but also by mixed data types where continuous and categorical v ariables are present. In our context categorical ∗ to whom correspondence should be addressed 1 v ariables can arise as any kind ranging from technical settings in a mass spectrometer to a diagnostic expert opinion on a disease state. Additionally , such data sets often contain comple x interactions and nonlinear relation structures which are notoriously hard to capture with parametric procedures. Most prev alent imputation methods, lik e k nearest neighbours (KNNimpute, T royanskaya et al. (2001)) for continuous data, saturated multinomial model (Schafer (1997)) for categorical data and mul- ti v ariate imputation by chained equations (MICE, V an Buuren and Oudshoorn (1999)) for mixed data types depend on tuning parameters or specification of a parametric model. The choice of such tuning pa- rameters or models without prior knowledge is difficult and might hav e a dramatic effect on a method’ s performance. Excluding MICE the above methods and the majority of other imputation methods are restricted to one type of v ariable. Furthermore, all these methods make assumptions about the distri- bution of the data or subsets of the variables, leading to questionable situations, e.g. assuming normal distributions. The literature on mix ed-type data i mputation is rather scarce. Its first appearance w as in the dev elop- ing field of multiple imputation brought up by Rubin (1978). Little and Schluchter (1985) presented an approach based on maximum likelihood estimation combining the multiv ariate normal model for con- tinuous and the Poisson/multinomial model for cate gorical data. This idea was later on e xtended in the book of Little and Rubin (1987). See also Li (1988), Rubin and Schafer (1990) and Schafer (1997). A more refined method to combine different regression models for mixed-type data was proposed by V an Buuren and Oudshoorn (1999) using chained equations. The conditional model in MICE can be specified for the missing data in each incomplete v ariable. Therefore no multi variate model cov ering the entire data set has to be specified. Howe ver , it is assumed that such a full multiv ariate distribution exists and missing values are sampled from conditional distributions based on this full distribution (for more details see Section 3). Another similar method using variable-wise conditional distributions was proposed by Raghunathan et al. (2001) called sequential regression multiv ariate imputation. Unlike in MICE the predictors must not be incomplete. The method is focussed on survey data and therefore in- cludes strategies to incorporate restrictions on subsamples of indi viduals and logical bounds based on domain kno wledge about the v ariables, e.g., only women can have a number of pre gnancies recorded. Our moti vation is to introduce a method of imputation which can handle an y type of input data and makes as few as possible assumptions about structural aspects of the data. Random forest (RF , Breiman (2001)) is able to deal with mix ed-type data and as a nonparametric method it allo ws for interacti ve and nonlinear (regression) effects. W e address the missing data problem using an iterati ve imputation scheme by training a RF on observed v alues in a first step, followed by predicting the missing values and then proceeding iterativ ely . Mazumder et al. (2010) use a similar approach for the matrix completion problem using a soft-thresholded SVD iterati vely replacing the missing values. W e choose RF because it can handle mix ed-type data and is known to perform very well under barren conditions like high dimensions, complex interactions and nonlinear data structures. Due to its accuracy and robustness RF is well suited for the use in applied research often harbouring such conditions. Furthermore, the RF algorithm allows for estimating out-of-bag (OOB) error rates without the need for a test set. For further details see Breiman (2001). Here we compare our method with k -nearest neighbour imputation (KNNimpute, T royanskaya et al. (2001)) and the Missingness Pattern Alternating Lasso (MissP ALasso) algorithm by St ¨ adler and B ¨ uhlmann (2010) on data sets ha ving continuous v ariables only . For the cases of categorical and mixed type of vari- ables we compare our method with the MICE algorithm by V an Buuren and Oudshoorn (1999) and a dummy variable encoded KNNimpute. Comparisons are performed on se veral data sets coming from dif ferent fields of life sciences and using dif ferent proportions of missing values. W e sho w that our approach is competitive to or outperforms the compared methods on the used data sets irrespecti vely of the v ariable type composition, the data dimensionality , the source of the data or the amount of missing values. In some cases the decrease of imputation error is up to 50%. This performance 2 is typically reached within only a fe w iterations which makes our method also computationally attracti ve. The OOB imputation error estimates gi ve a v ery good approximation of the true imputation error ha ving on a verage a proportional deviation of no more than 10 - 15%. Furthermore, our approach needs no tuning parameter , hence, is easy to use and needs no prior knowledge about the data. 2 A pproach W e assume X = ( X 1 , X 2 , . . . , X p ) to be a n × p -dimensional data matrix. W e propose using a random forest to impute the missing v alues due to its earlier mentioned advantages as a regression method. The random forest algorithm has a built-in routine to handle missing values by weighting the frequency of the observed v alues in a variable with the random forest proximities after being trained on the initially mean imputed data set (Breiman (2001)). Ho wev er, this approach requires a complete response variable for training the forest. Instead, we directly predict the missing v alues using a random forest trained on the observ ed parts of the data set. For an arbitrary variable X s including missing values at entries i ( s ) mis ⊆ { 1 , . . . , n } we can separate the data set in four parts: 1. The observed v alues of variable X s , denoted by y ( s ) obs ; 2. the missing v alues of variable X s , denoted by y ( s ) mis ; 3. the v ariables other than X s with observ ations i ( s ) obs = { 1 , . . . , n } \ i ( s ) mis denoted by x ( s ) obs ; 4. the v ariables other than X s with observ ations i ( s ) mis denoted by x ( s ) mis . Note that x ( s ) obs is typically not completely observed since the index i ( s ) obs corresponds to the observed v alues of the v ariable X s . Likewise, x ( s ) mis is typically not completely missing. T o begin, make an initial guess for the missing v alues in X using mean imputation or another im- putation method. Then, sort the variables X s , s = 1 , . . . , p according to the amount of missing values starting with the lowest amount. For each variable X s the missing values are imputed by first fitting a random forest with response y ( s ) obs and predictors x ( s ) obs ; then, predicting the missing values y ( s ) mis by ap- plying the trained random forest to x ( s ) mis . The imputation procedure is repeated until a stopping criterion is met. The pseudo algorithm 1 gives a representation of the missF orest method. 3 Algorithm 1 Impute missing v alues with random forest. Require: X an n × p matrix, stopping criterion γ 1: Make initial guess for missing v alues; 2: k ← vector of sorted indices of columns in X w .r .t. increasing amount of missing values; 3: while not γ do 4: X imp old ← store pre viously imputed matrix; 5: f or s in k do 6: Fit a random forest: y ( s ) obs ∼ x ( s ) obs ; 7: Predict y ( s ) mis using x ( s ) mis ; 8: X imp new ← update imputed matrix, using predicted y ( s ) mis ; 9: end f or 10: update γ . 11: end while 12: retur n the imputed matrix X imp The stopping criterion γ is met as soon as the dif ference between the ne wly imputed data matrix and the previous one increases for the first time with respect to both variable types, if present. Here, the dif ference for the set of continuous v ariables N is defined as ∆ N = P j ∈ N ( X imp new − X imp old ) 2 P j ∈ N ( X imp new ) 2 , and for the set of categorical v ariables F as ∆ F = P j ∈ F P n i =1 I X imp new 6 = X imp old # N A , where # N A is the number of missing values in the cate gorical variables. After imputing the missing values the performance is assessed using the normalised root mean squared error (NRMSE, Oba et al. (2003)) for the continuous v ariables which is defined by NRMSE = s mean (( X true − X imp ) 2 ) v ar ( X true ) , where X true is the complete data matrix and X imp the imputed data matrix. W e use mean and v ar as short notation for empirical mean and v ariance computed ov er the continuous missing v alues only . For categorical variables we use the proportion of falsely classified entries (PFC) o ver the categorical missing v alues, ∆ F . In both cases good performance leads to a value close to 0 and bad performance to a v alue around 1. When a RF is fit to the observed part of a v ariable we also get an OOB error estimate for that v ariable. After the stopping criterion γ was met we average ov er the set of variables of the same type to approximate the true imputation errors. W e assess the performance of this estimation by comparing the absolute difference between true imputation error and OOB imputation error estimate in all simulation runs. 3 Methods W e compare missForest with four methods on ten different data sets where we distinguish between situations with continuous v ariables only , categorical v ariables only and mixed v ariable types. 4 The most well-known method for imputation of continuous data sets especially in the field of gene expression analysis is the KNNimpute algorithm by T royanskaya et al. (2001). A missing v alue v ariable X j is imputed by finding its k nearest observed v ariables and taking a weighted mean of these k v ariables for imputation. Thereby , the weights depend on the distance of the variable X j . The distance itself is usually chosen to be the Euclidean distance. When using KNNimpute the choice of the tuning parameter k can ha ve a large effect on the perfor- mance of the imputation. Howe ver , this parameter is not known beforehand. Since our method includes no such parameter we implement a cross-v alidation (see Algorithm 2) to obtain a suitable k . Algorithm 2 Cross-v alidation KNN imputation. Require: X an n × p matrix, number of validation sets l , range of suitable number of nearest neighbours K 1: X C V ← initial imputation using mean imputation; 2: f or t in 1 , . . . , l do 3: X C V mis,t ← artificially introduce missing v alues to X C V ; 4: f or k in K do 5: X C V K N N ,t ← KNN imputation of X C V mis,t using k nearest neighbours; 6: ε k,t ← error of KNN imputation for k and t ; 7: end f or 8: end f or 9: k best ← argmin k 1 l P l t =1 ε k,t ; 10: X imp ← KNN imputation of X using k best nearest neighbours. In the original paper of T royanskaya et al. (2001) the data was not standardized before applying the KNNimpute algorithm. This constitutes no issue in the case of gene expression data because such data generally consists of variables on similar scales. Howe ver , we are applying the KNNimpute algorithm to data sets with varying scales in the v ariables. T o a void v ariance based weighting of the variables we scale them to a unit standard de viation. W e also center the v ariables at zero. After imputation the data is retransformed such that the error is computed on the original scales. This last step is performed because missForest does not need any transformation of the data and we w ant to compare the performance of the methods on the original scales of the data. Another approach for continuous data, especially in the case of high-dimensional normal data ma- trices, is presented by St ¨ adler and B ¨ uhlmann (2010) using an EM-type algorithm. In their Missingness Pattern Alternating Imputation and l 1 -penalty (MissP ALasso) algorithm the missing v ariables are re- gressed on the observed ones using the lasso penalty by T ibshirani (1996). In the follo wing E step the obtained regression coef ficients are used to partially update the latent distribution. The MissP ALasso has also a tuning parameter λ for the penalty . As with KNNimpute we use cross-validation to tune λ (cf. Algorithm 2). When applying MissP ALasso the data is standardized as re gularization with a single λ requires the dif ferent regressions to be on the same scale. In the comparativ e e xperiments with categorical or mixed-type variables we use the MICE algorithm by V an Buuren and Oudshoorn (1999) based on the multi v ariate multiple imputation scheme of Schafer (1997). In contrast to the latter the conditional distribution for the missing data in each incomplete v ariable is specified in MICE, a feature called fully conditional specification by V an Buuren (2007). Ho we ver , the existence of a multi variate distrib ution from which the conditional distribution can be easily derived is assumed. Furthermore, iterative Gibbs sampling from the conditional distributions can generate draws from the multiv ariate distribution. W e want to point out that MICE in its default setup 5 is not mainly intended for simple missing value imputation. Using the multiple imputation scheme MICE allows for assessing the uncertainty of the imputed v alues. It includes features to pool multiple imputations, choose individual sampling procedures and allows for passive imputation controlling the sync of transformed variables. In our experiments we used MICE with either linear regression with normal errors or mean imputation for continuous v ariables, logistic regression for binary v ariables and polytomous logistic regression for cate gorical variables with more than two cate gories. For comparison across dif ferent types of variables we apply the KNNimpute algorithm with dummy coding for the categorical variables. This is done by coding a categorical v ariable X j into m dichoto- mous variables ˜ X j,m ∈ {− 1 , 1 } . Application of the KNNimpute algorithm for categorical data can be summarized as: 1. Code all categorical v ariables into {− 1 , 1 } -dummy v ariables; 2. standardize all v ariables to mean 0 and standard deviation 1; 3. apply the cross-v alidated KNNimpute method from Algorithm 2; 4. retransform the imputed data matrix to the original scales; 5. code the dummy v ariables back to categorical variables; 6. computed the imputation error . For each experiment we perform 50 independent simulations where 10%, 20% or 30% of the values are remo ved completely at random. Each method is then applied and the NRMSE, the PFC or both are computed (see Section 2). W e perform a paired W ilcoxon test of the error rates of the compared methods versus the error rates of missForest. In addition, the OOB error estimates of missForest is recorded in each simulation. 4 Results 4.1 Continuous variables only First, we focus on continuous data. W e in vestigate the follo wing four publicly av ailable data sets: • Isoprenoid gene network in A. thaliana : This gene network includes p = 39 genes each with n = 118 gene expression profiles corresponding to different experimental conditions. For more details on this data set see W ille et al. (2004). • V oice measur es in Parkinson’ s patients : The data described by Little et al. (2008) contains a range of biomedical voice measurements from 31 indi viduals, 23 with Parkinson’ s disease (PD). There are p = 22 particular voice measurements and n = 195 voice recordings from these indi- viduals. The data set also contains a response variable giving the health status. Dealing only with continuous v ariables the response was remo ved from the data. W e will return to this later on. • Shapes of musk molecules : This data set describes 92 molecules of which 47 are musks and 45 are non-musks. For each molecule p = 166 features describe its conformation, but since a molecule can have many conformations due to rotating bonds, there are n = 476 different low- energy conformations in the set. The classification into musk and non-musk molecules is removed. 6 0.0 0.3 0.0 0.3 Isoprenoid data 10% 20% 30% *** ### *** ### *** ### 0.0 0.3 0.0 0.3 P ar kinson's data 10% 20% 30% *** * *** *** *** *** 0.0 0.3 0.0 0.3 Musk data 10% 20% 30% *** *** *** *** *** *** 0.0 0.1 0.2 Insulin data 10% 20% 30% ## ## ## NRMSE Figure 1: Continuous data. A verage NRMSE for KNNimpute (grey), MissP ALasso (white) and miss- Forest (black) on four different data sets and three different amounts of missing values, i.e., 10%, 20% and 30%. Standard errors are in the order of magnitude of 10 − 4 . Significance lev els for the paired W ilcoxon tests in fa vour of missForest are encoded as “*” < 0.05, “**” < 0.01 and “***” < 0.001. If the av erage error of the compared method is smaller than that of missF orest the significance le vel is encoded by a hash (#) instead of an asterisk. In the lo wermost data set results for MissP ALasso are missing due to the methods limited capability with regard to high dimensions. • Insulin gene expression : This high-dimensional data set originates from an analysis by W u et al. (2007) of vastus lateralis muscle biopsies from three different types of patients follo wing insulin treatment. The three types are insulin-sensitiv e, insulin-resistant and diabetic patients. The anal- ysis in volves p = 12 0 626 genes whose expression le vels were measured from n = 110 muscle biopsies. Due to computation time we only perform 10 simulations instead of 50. Results are given in Figure 1. W e can see that missForest performs well, sometimes reducing the av erage NRMSE by up to 25% with respect to KNNimpute. In case of the musk molecules data the reduction is ev en above 50%. The MissP ALasso performs slightly better than missForest on the gene expression data. Howe ver , there are no results for the MissP ALasso in case of the Insulin data set because the high dimension makes computation not feasible. For continuous data the missF orest algorithm typically reaches the stopping criterion quite fast need- ing about 5 iterations. The imputation takes about 10 times as long as performing the cross-v alidated KNNimpute where { 1 , . . . , 15 } is the set of possible numbers of neighbours. For the Insulin data set an imputation takes on a verage 2 hours on a customary av ailable desktop computer . 4.2 Categorical variables only W e also consider data sets with only categorical v ariables. Here, we use the MICE algorithm described in Section 3 instead of the MissP ALasso. W e use a dummy implementation of the KNNimpute algorithm to deal with categorical v ariables (see Section 3). W e apply the methods to the follo wing data sets: • Cardiac single photon emission computed tomograph y (SPECT) images : Kurgan et al. (2001) discuss this processed data set summarizing over 3000 2D SPECT images from n = 267 patients in p = 22 binary feature patterns. 7 0.0 0.3 0.6 0.0 0.3 0.6 0.0 0.3 0.6 SPECT data 10% 20% 30% ** *** *** *** *** *** 0.0 0.6 0.0 0.6 0.0 0.6 Promoter data 10% 20% 30% *** *** *** *** *** *** 0.0 0.3 0.0 0.3 0.0 0.3 Lymphogr aphy data 10% 20% 30% *** *** *** *** *** *** PFC Figure 2: Categorical data. A verage PFC for cross-v alidated KNNimpute (grey), MICE (white) and missForest (black) on three different data sets and three dif ferent amounts of missing v alues, i.e., 10%, 20% and 30%. Standard errors are in the order of magnitude of 10 − 4 . Significance levels for the paired W ilcoxon tests in fav our of missForest are encoded as “*” < 0.05, “**” < 0.01 and “***” < 0.001. • Promoter gene sequences in E. coli : The data set contains sequences found by Harley and Reynolds (1987) for promoters and sequences found by T o well et al. (1990) for non-promoters totalling n = 106 . For each candidate a sequence of 57 base pairs w as recorded. Each variable can take one of four DN A nucleotides, i.e., adenine, thymine, guanine or cytosine. Another variable distinguishes between promoter and non-promoter instances. • L ymphography domain data : The observations were obtained from patients suf fering from can- cer in the lymphatic of the immune system. For each of the n = 148 lymphoma p = 19 dif ferent properties were recorded mainly in a nominal fashion. There are nine binary v ariables. The rest of the v ariables hav e three or more lev els. In Figure 2 we can see that missForest is always imputing the missing values better than the compared methods. In some cases – namely for the SPECT data – the decrease of PFC compared to MICE is up to 60%. Ho wev er, for the other data sets the decrease is less pronounced ranging around 10 - 20% – but there still is a decrease. The amount of missing values on the other hand seems to ha ve only a minor influence on the performance of all methods. Except for MICE on the SPECT data, error rates remain almost constant increasing only by 1 - 2%. W e pointed out earlier that MICE is not primarily tailored for imputation performance b ut offers additional possibilities of assessing uncertainty of the imputed v alues due to the multiple imputation scheme. Anyhow , the results using the cross-v alidated KNNimpute (see Algorithm 2) on the dummy-coded categorical variables is surprising. The imputation for missForest needs on av erage 5 times as long as a cross-validated imputation using KNNimpute. 4.3 Mixed-type variables In the following we in vestigate four data sets where the first one has already been introduced, i.e. musk molecules data including the categorical response yielding the classification. The other data sets are: • Proteomics biomarkers f or Gaucher’s disease : Gaucher’ s disease is a rare inherited enzyme deficiency . In this data set Smit et al. (2007) present protein arrays for biomarkers ( p = 590 ) from blood serum samples ( n = 40 ). The binary response distinguishes between disease status. • Gene finding over prediction (GFOP) peptide search : This data set comprises mass-spectrometric measurements of n = 595 peptides from two shotgun proteomics experiments on the nematode 8 Caenorhabditis ele gans . The collection of p = 18 biological, technical and analytical variables had the aim of nov el peptide detection in a search on an extended database using established gene prediction methods. • Children’ s Hospital data : This data set is the product of a systematic long-term revie w of children with congenital heart defects after open-heart surgery . Next to defect and surgery related v ariables also long-term psychological adjustment and health-related quality of life was assessed. After removing observations with missing values the data set consists of n = 55 patients and p = 124 v ariables of which 48 are continuous and 76 are categorical. For further details see Latal et al. (2009). The results of this comparison are giv en in Figure 3. W e can see that missForest performs better than the other two methods, again reducing imputation error in many cases by more than 50%. For the GFOP data, KNNimpute has a slightly smaller NRMSE than missF orest but makes twice as much error on the categorical v ariables. Generally , with respect to the amount of missing values the NRMSE tends to have a greater v ariability than the PFC which remains largely the same. The imputation results for MICE on the Children’ s Hospital data hav e to be treated cautiously . Since this data set contains ill-distributed and nearly dependent variables, e.g., binary variables with very few observ ations in one category , the missingness pattern has a direct influence on the operability of the MICE implementation in the statistical software R. The imputation error illustrated in Figure 3 was computed from 50 successful simulations by randomly generating missingness patterns, which did not include only complete cases or no complete cases at all within the categories of the variables. Therefore, the actual numbers of simulations were lar ger than 50 for all three missing value amounts. Furthermore, nearly dependent v ariables were remov ed after each introduction of missing values. This leads to an av erage of 7 remov ed variables in each simulation. Due to this ad-hoc manipulation for making the MICE implementation work, we do not report significance statements for the imputation error . 4.4 Estimating imputation error In each experiment we get for each simulation run an OOB estimate for the imputation error . In Figure 4 the dif ferences of true imputation error, err true , and OOB error estimates, c err OOB , are illustrated for the continuous and the categorical data sets. Also, the mean of the true imputation error and the OOB error estimate ov er all simulations is depicted. W e can see that for the Isoprenoid and Musk data sets the OOB estimates are very accurate only dif fering from the true imputation error by a few percents. In case of the Parkinson’ s data set the OOB estimates exhibit a lot more variability than in all other data sets. Ho wever , on av erage the estimation is comparably good. For the categorical data sets the estimation accuracy behav es similarly ov er all scenarios. The OOB estimates tend to underestimate the imputation error with increasing amount of missing values. Apparently , the absolute size of the imputation error seems to play a minor role in the accuracy of the OOB estimates which can be seen nicely when comparing the SPECT and the Promoter data. 4.5 Computational efficiency W e assess the computational cost of missForest by comparing the runtimes of imputation on the pre vious data sets. T able 1 shows the runtimes in seconds of all methods on the analyzed data sets. W e can see that KNNimpute is by far the fastest method. Howe ver , missF orest runs considerably f aster than MICE and the MissP ALasso. In addition, applying missForest did not require antecedent standardization of the data, laborious dummy coding of categorical v ariables nor implementation of CV choices for tuning parameters. 9 0.0 0.3 0.6 Musk data 10% 20% 30% *** *** *** *** *** *** *** *** *** *** *** *** 0.0 0.3 0.6 Gaucher's data 10% 20% 30% ** *** *** *** *** *** *** *** *** 0.0 0.4 GFOP data 10% 20% 30% ### *** *** *** ## *** *** *** ## *** *** *** 0.0 0.4 Child hospital data 10% 20% 30% *** *** * *** *** NRMSE / PFC Figure 3: Mixed-type data. A verage NRMSE (left bar) and PFC (right bar , shaded) for KNNimpute (grey), MICE (white) and missF orest (black) on four different data sets and three dif ferent amounts of missing values, i.e., 10%, 20% and 30%. Standard errors are in the order of magnitude of 10 − 3 . Significance le vels for the paired W ilcoxon tests in f avour of missForest are encoded as “*” < 0.05, “**” < 0.01 and “***” < 0.001. If the a verage error of the compared method is smaller than that of missF orest the significance le vel is encoded by a hash (#) instead of an asterisk. Note that, due to ill-distribution and near dependence in the Child hospital data, the results for MICE ha ve to be treated with caution (see Section 4.3). There are two possible ways to speed up computation. The first one is to reduce the number of trees grown in each forest. In all comparative studies the number of trees was set to 100 which offers high precision b ut increased runtime. In T able 2 we can see that changing the number of trees in the forest has a stagnating influence on imputation error, but a strong influence on computation time which is approximately linear in the number of trees. The second one is to reduce the number of variables randomly selected at each node ( m try ) to set up the split. T able 2 sho ws that increasing m try has limited ef fect on imputation error , but computation time is strongly increased. Note that for m try = 1 we do not longer ha ve a random forest, since there is no more choice between v ariables to split on. This leads to a much higher imputation error , especially for the cases with low numbers of bootstrapped trees. W e use for all experiments b √ p c as def ault value, e.g., in the GFOP data this equals 4. 5 Conclusion Our ne w algorithm, missForest, allo ws for missing v alue imputation on basically any kind of data. In particular , it can handle multiv ariate data consisting of continuous and categorical variables simultane- ously . MissForest has no need for tuning parameters nor does it require assumptions about distributional aspects of the data. W e sho w on sev eral real data sets coming from different biological and medical fields that missForest outperforms established imputation methods like k -nearest neighbours imputation or multiv ariate imputation using chained equations. Using our OOB imputation error estimates miss- Forest offers a way to assess the quality of an imputation without the need of setting aside test data nor performing laborious cross-v alidations. For subsequent analysis these error estimates represent a mean 10 ● ● ● ● err true − err ^ OOB ● ● ● ● −0.2 0.0 0.2 0.4 0.6 0.1 0.3 0.1 0.3 0.1 0.3 0.2 0.2 0.2 Isoprenoid P ar kinson's Musk ● ● ● ● ● ● ● ● ● ● mean(err ^ OOB ) mean(err true ) ● ● ● ● ● ● err true − err ^ OOB ● ● ● ● ● ● −0.2 0.0 0.2 0.4 0.6 0.1 0.3 0.1 0.3 0.1 0.3 0.2 0.2 0.2 Lymphograph y SPECT Promoter ● ● ● ● ● ● ● ● ● ● mean(err ^ OOB ) mean(err true ) Figure 4: Difference of true imputation error err true and OOB imputation error estimate c err OOB for the continuous data sets (left) and the categorical data sets (right) and three dif ferent amounts of missing v alues, i.e., 0.1, 0.2 and 0.3. In each case the average err true (circle) and the average c err OOB (plus) o ver all simulations is gi ven. Data set n p KNN MissP ALasso MICE missF orest Isoprenoid 118 39 0.8 170 - 5.8 Parkinson’ s 195 22 0.7 120 - 6.1 Musk (cont.) 476 166 13 1400 - 250 Insulin 110 12626 1800 n/a - 6200 SPECT 267 22 1.3 - 37 5.5 Promoter 106 57 14 - 4400 38 L ymphography 148 19 1.1 - 93 7.0 Musk (mixed) 476 167 27 - 2800 500 Gaucher’ s 40 590 1.3 - 130 29 GFOP 595 18 2.7 - 1400 40 Children 55 124 2.7 - 4000 110 T able 1: A verage runtimes [s] for imputing the analyzed data sets. Runtimes are a veraged ov er the amount of missing v alues since this has a negligible ef fect on computing time. of informal reliability check for each variable. The full potential of missF orest is deployed when the data includes complex interactions or nonlinear relations between variables of unequal scales and dif- ferent type. Furthermore, missForest can be applied to high-dimensional data sets where the number of v ariables may greatly exceed the number of observations to a lar ge extent and still pro vides excellent imputation results. Acknowledgement Except for the Isoprenoid, the L ymphography , the Children’ s Hospital and the GFOP data all other data sets were obtained from the UCI machine learning repository (Frank and Asuncion (2010)). The GFOP data set was obtained from the Institute of Molecular Systems Biology , Zurich, Switzerland. Thanks go to L. Reiter for providing the data. The L ymphography data set was obtained from the University Medical Centre, Institute of Oncology , Ljubljana, Slov enia. Thanks go to M. Zwitter and M. Soklic for providing the data. The Children’ s Hospital data set was obtained from the Child Dev elopment Center at the Uni versity Children’ s Hospital Zrich, Switzerland. Thanks go to B. Latal and I. Beck for pro viding the data. Finally , we thank two anonymous referees for their constructi ve comments. 11 m try n tree 10 50 100 250 500 1 36.8/35.5 27.4/32.3 20.4/31.3 17.2/30.0 16.0/30.8 2.5s 3.2s 3.9s 5.8s 9.2s 2 34.9/31.8 24.8/29.2 18.3/28.8 16.0/28.6 15.5/29.1 6.9s 11.8s 15.0s 25.2s 39.3s 4 34.9/31.3 24.4/28.9 17.9/28.2 15.4/28.2 15.8/28.7 16.5s 25.1s 35.0s 49.0s 83.3s 8 34.7/31.4 24.3/28.9 18.1/27.8 15.2/27.8 15.7/28.6 39.2s 57.4s 84.4s 130.2s 190.8s 16 34.6/30.9 24.3/28.7 18.1/28.0 15.4/27.8 15.6/28.5 68.7s 99.7s 172.2s 237.6s 400.7s T able 2: A verage imputation error (NRMSE/PFC in percent) and runtime (in seconds) with dif ferent numbers of trees ( n tree ) grown in each forest and variables tried ( m try ) at each node of the trees. Here, we consider the GFOP data set with artificially introduced 10% of missing values. For each compari- son 50 simulation runs were performed using always the same missing v alue matrix for all numbers of trees/randomly selected v ariables for a single simulation. Refer ences Breiman, L. (2001). Random forests. Machine learning , 45 (1), 5–32. Frank, A. and Asuncion, A. (2010). UCI machine learning repository . Harley , C. B. and Reynolds, R. P . (1987). Analysis of e. coli promoter sequences. Nucleic Acids Researc h , 15: , 2343–2361. Kur gan, L., Cios, K., T adeusie wicz, R., Ogiela, M., and Goodenday , L. (2001). Knowledge discovery approach to automated cardiac SPECT diagnosis. Artificial Intelligence in Medicine , 23 (2), 149–169. Latal, B., Helfricht, S., Fischer , J., Bauersfeld, U., and Landolt, M. (2009). Psychological adjustment and quality of life in children and adolescents follo wing open-heart surgery for congenital heart disease: a systematic re vie w. BMC P ediatrics , 9 (6). Li, K. (1988). Imputation using Markov chains. Journal of Statistical Computation and Simulation , 30 (1), 57–79. Little, M., McSharry , P ., Hunter , E., Spielman, J., and Ramig, L. (2008). Suitability of dysphonia measurements for telemonitoring of Parkinsons disease. A v ailable from Nature Precedings. Little, R. and Rubin, D. (1987). Statistical Analysis with Missing Data . W iley New Y ork. Little, R. and Schluchter , M. (1985). Maximum likelihood estimation for mixed continuous and cate gor- ical data with missing v alues. Biometrika , 72 (3), 497–512. Mazumder , R., Hastie, T ., and T ibshirani, R. (2010). Spectral regularization algorithms for learning large incomplete matrices. J. Mac h. Learn. Res. , 11 , 2287–2322. 12 Oba, S., Sato, M., T akemasa, I., Monden, M., Matsubara, K., and Ishii, S. (2003). A Bayesian missing v alue estimation method for gene expression profile data. Bioinformatics , 19 (16), 2088–2096. Raghunathan, T ., Lepko wski, J., V an Hoewyk, J., and Solenberger , P . (2001). A multi v ariate technique for multiply imputing missing v alues using a sequence of regression models. Surve y Methodology , 27 (1), 85–96. Rubin, D. (1978). Multiple imputations in sample surveys-a phenomenological Bayesian approach to nonresponse. In Pr oceedings of the Surve y Researc h Methods Section, American Statistical Associa- tion , pages 20–34. Rubin, D. and Schafer , J. (1990). Ef ficiently creating multiple imputations for incomplete multiv ariate normal data. In Pr oceedings of the Statistical Computing Section of the American Statistical Associa- tion , pages 83–88. Schafer , J. (1997). Analysis of Incomplete Multivariate Data . Chapman & Hall. Smit, S., van Breemen, M., Hoefsloot, H., Smilde, A., Aerts, J., and de K oster , C. (2007). Assessing the statistical v alidity of proteomics based biomarkers. Analytica Chimica Acta , 592 (2), 210–217. St ¨ adler , N. and B ¨ uhlmann, P . (2010). P attern Alternating Maximization Algorithm for High-Dimensional Missing Data. Arxiv pr eprint arXiv:1005.0366 . T ibshirani, R. (1996). Regression shrinkage and selection via the lasso. Journal of the Royal Statistical Society . Series B (Methodological) , 58 (1), 267–288. T o well, G., Shavlik, J., and Noordewier , M. (1990). Refinement of approximate domain theories by kno wledge-based neural networks. In Pr oceedings of the Eighth National Confer ence on Artificial Intelligence , pages 861–866. T royanskaya, O., Cantor , M., Sherlock, G., Brown, P ., Hastie, T ., T ibshirani, R., Botstein, D., and Alt- man, R. (2001). Missing v alue estimation methods for DNA microarrays. Bioinformatics , 17 (6), 520–525. V an Buuren, S. (2007). Multiple imputation of discrete and continuous data by fully conditional specifi- cation. Statistical Methods in Medical Resear ch , 16 (3), 219–242. V an Buuren, S. and Oudshoorn, K. (1999). Flexible multiv ariate imputation by MICE. Leiden, The Netherlands: TNO Pre vention Center . W ille, A., Zimmermann, P ., Vranov ´ a, E., F ¨ urholz, A., Laule, O., Bleuler , S., Hennig, L., Prelic, A., V on Rohr, P ., Thiele, L., et al. (2004). Sparse graphical Gaussian modeling of the isoprenoid gene network in Arabidopsis thaliana. Genome Biology , 5 (11), R92. W u, X., W ang, J., Cui, X., Maianu, L., Rhees, B., Rosinski, J., So, W ., W illi, S., Osier , M., Hill, H., et al. (2007). The effect of insulin on e xpression of genes and biochemical pathways in human skeletal muscle. Endocrine , 31 (1), 5–17. 13

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment