Variational approximation for mixtures of linear mixed models

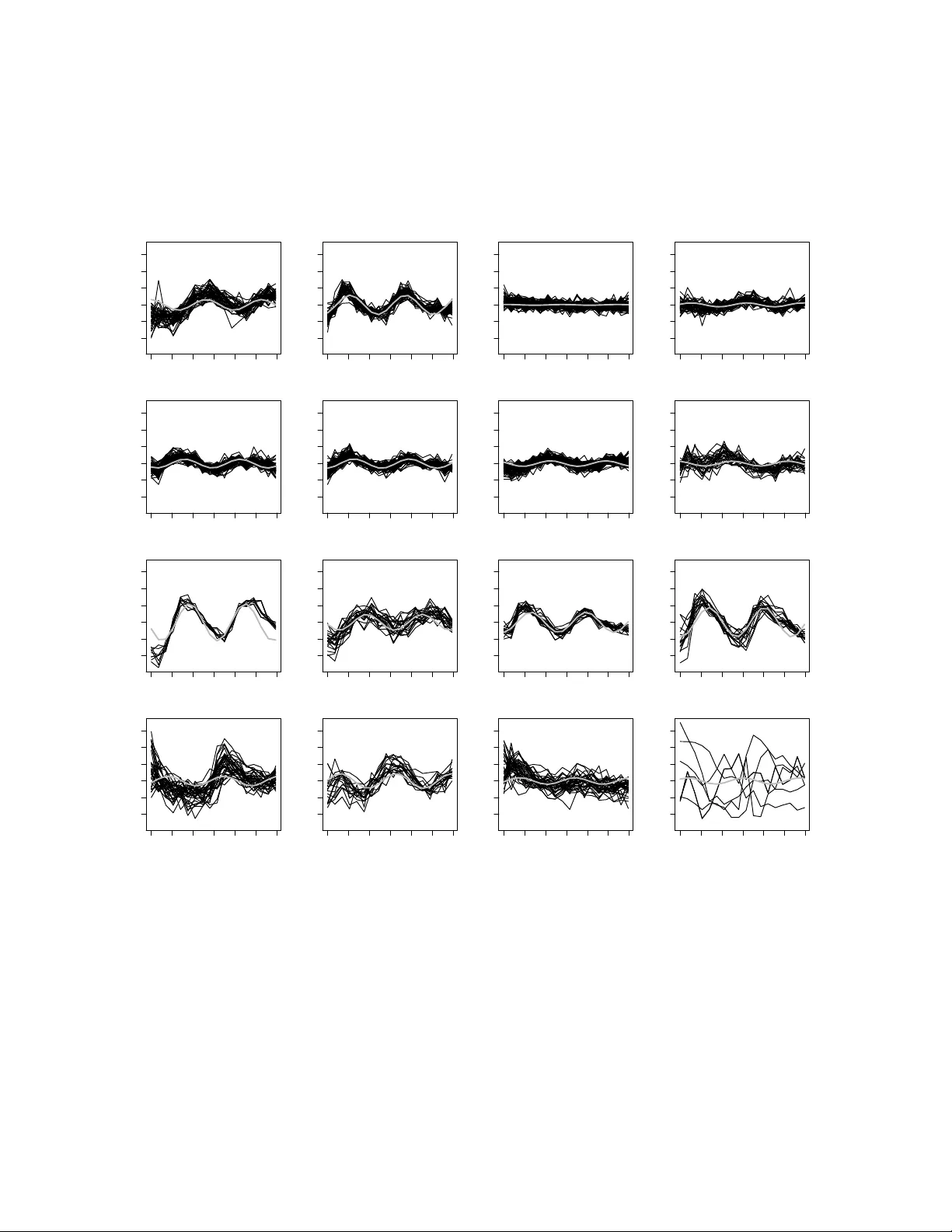

Mixtures of linear mixed models (MLMMs) are useful for clustering grouped data and can be estimated by likelihood maximization through the EM algorithm. The conventional approach to determining a suitable number of components is to compare different …

Authors: Siew Li Tan, David J. Nott