Mining State-Based Models from Proof Corpora

Interactive theorem provers have been used extensively to reason about various software/hardware systems and mathematical theorems. The key challenge when using an interactive prover is that finding a suitable sequence of proof steps that will lead to a successful proof requires a significant amount of human intervention. This paper presents an automated technique that takes as input examples of successful proofs and infers an Extended Finite State Machine as output. This can in turn be used to generate proofs of new conjectures. Our preliminary experiments show that the inferred models are generally accurate (contain few false-positive sequences) and that representing existing proofs in such a way can be very useful when guiding new ones.

💡 Research Summary

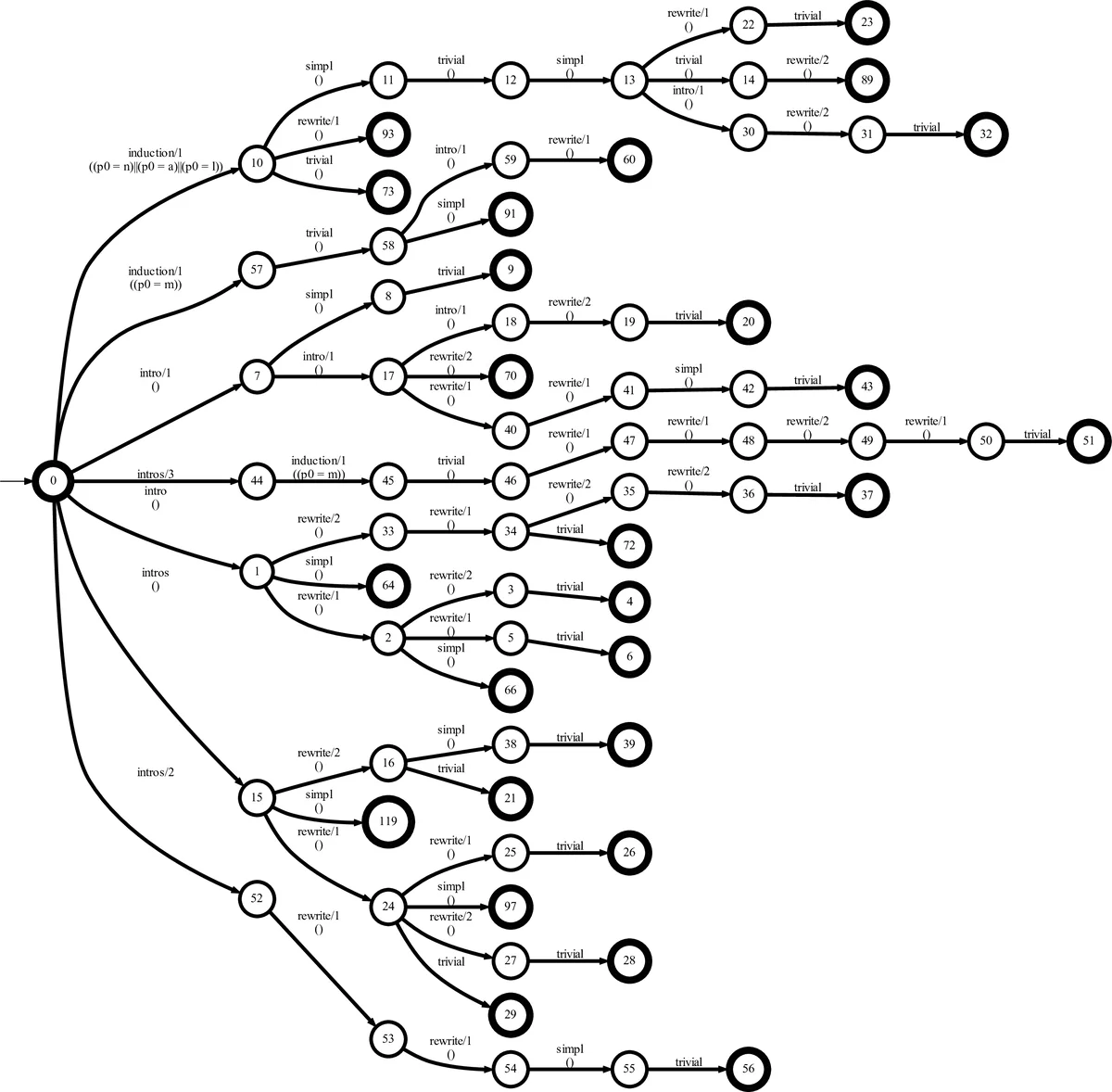

The paper addresses the long‑standing challenge in interactive theorem proving (ITP) of reducing the heavy human effort required to select and order proof tactics together with their parameters. While previous work has mined proof patterns or generated regular‑expression‑like models of tactic sequences, such approaches ignore the crucial role of tactic arguments (lemmas, rewrite rules, variable bindings) that are indispensable in higher‑order proof assistants such as Coq and Isabelle. To bridge this gap, the authors propose an automated pipeline that learns an Extended Finite State Machine (EFSM) from a corpus of successful proofs. An EFSM extends the classic deterministic finite‑state machine by adding a data store, guards on transitions, and the ability to condition transitions on concrete variable values.
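To make the idea of guarded transitions concrete, here is a minimal Python sketch of an EFSM that accepts or rejects a sequence of (tactic, arguments) steps. It is an illustration only, not the authors' representation: the class names `Transition` and `EFSM`, the method names, and the example tactics are all assumptions, and the data store is simplified away so that each guard inspects only the arguments of the current step.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Args = Tuple[str, ...]            # argument vector of one proof step
Guard = Callable[[Args], bool]    # predicate over the argument vector
Step = Tuple[str, Args]           # (tactic label, arguments)


@dataclass
class Transition:
    label: str      # tactic name, e.g. "rewrite"
    guard: Guard    # condition the arguments must satisfy
    target: int     # destination state


@dataclass
class EFSM:
    """Hypothetical, simplified EFSM: states are integers, no memory updates."""
    initial: int = 0
    transitions: Dict[int, List[Transition]] = field(default_factory=dict)

    def add(self, source: int, label: str, guard: Guard, target: int) -> None:
        self.transitions.setdefault(source, []).append(
            Transition(label, guard, target)
        )

    def accepts(self, trace: List[Step]) -> bool:
        """Follow the first transition whose label and guard match each step."""
        state = self.initial
        for label, args in trace:
            for t in self.transitions.get(state, []):
                if t.label == label and t.guard(args):
                    state = t.target
                    break
            else:
                return False  # no admissible transition for this step
        return True


# Illustrative machine for a tiny induction proof (tactic names are examples).
machine = EFSM()
machine.add(0, "induction", lambda a: True, 1)
machine.add(1, "simpl", lambda a: True, 2)
machine.add(2, "rewrite", lambda a: a == ("IHn",), 3)  # guard on the argument
machine.add(3, "reflexivity", lambda a: True, 4)

good = [("induction", ("n",)), ("simpl", ("0",)),
        ("rewrite", ("IHn",)), ("reflexivity", ("0",))]
bad = [("induction", ("n",)), ("simpl", ("0",)),
       ("rewrite", ("other_lemma",)), ("reflexivity", ("0",))]
```

With this machine, `machine.accepts(good)` succeeds while `machine.accepts(bad)` fails at the `rewrite` step, because the guard rejects the argument. A plain finite-state machine over tactic names alone could not make that distinction.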

The pipeline consists of three main stages. First, existing proof scripts are transformed into trace records. Each proof step becomes a label l (the tactic name) paired with a vector v of its arguments; steps without arguments receive a placeholder value “0”. Composite tactics linked by semicolons are encoded as sequential transitions so that the model can capture the fact that the second tactic operates on all sub‑goals generated by the first. Second, the EFSMInfer tool is employed to infer the model. EFSMInfer operates in two passes: (a) for each label it trains a data‑classifier (the authors use the J48 implementation of C4.5) that predicts the next label given the current label and its argument values, thereby producing a set of guard conditions Δ; (b) it builds a prefix‑tree from the traces and merges states using an augmented version of the Blue‑Fringe algorithm, ensuring that any merge respects the previously learned guard conditions. The result is a deterministic EFSM where states abstract proof contexts and guarded transitions encode both the admissible next tactics and the required argument patterns.
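The first stage and the starting point of the second can be sketched in Python as follows. This is a rough illustration under simplifying assumptions, not the EFSMInfer implementation: the helper names `script_to_trace` and `build_prefix_tree` are hypothetical, the script format is a toy one in which steps end with `.` or `;` followed by whitespace, and guard learning and state merging are not shown.

```python
import re
from typing import Dict, List, Tuple

Step = Tuple[str, Tuple[str, ...]]  # (tactic label, argument vector)


def script_to_trace(script: str) -> List[Step]:
    """Turn a toy proof script into a list of (label, args) steps.

    Steps are split on "." or ";" followed by whitespace or end of input,
    so a composite tactic "a; b" becomes two sequential steps. Steps with
    no arguments receive the placeholder argument "0".
    """
    trace: List[Step] = []
    for chunk in re.split(r"[.;](?:\s+|$)", script.strip()):
        tokens = chunk.split()
        if not tokens:
            continue
        label = tokens[0]
        args = tuple(tokens[1:]) or ("0",)
        trace.append((label, args))
    return trace


def build_prefix_tree(traces: List[List[Step]]) -> Dict[int, Dict[Step, int]]:
    """Build a prefix tree: state 0 is the root, and traces that share a
    prefix share the corresponding states."""
    tree: Dict[int, Dict[Step, int]] = {0: {}}
    for trace in traces:
        state = 0
        for step in trace:
            if step not in tree[state]:
                new_state = len(tree)
                tree[new_state] = {}
                tree[state][step] = new_state
            state = tree[state][step]
    return tree


# Two illustrative scripts that share the prefix "induction n; simpl."
scripts = [
    "induction n; simpl. rewrite IHn. reflexivity.",
    "induction n; simpl. reflexivity.",
]
traces = [script_to_trace(s) for s in scripts]
tree = build_prefix_tree(traces)
```

Here the two traces share the states reached by `induction n` and `simpl`, then branch. A state-merging algorithm such as Blue-Fringe would start from a tree like this and collapse compatible states into a more compact machine.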

The authors evaluate the approach on the ListNat library of Coq, which contains hundreds of proofs about lists and natural numbers. The inferred EFSM captures the majority of observed tactic sequences and, crucially, the parameter constraints that distinguish valid from invalid continuations. Empirical measurements show a low false‑positive rate: the model rarely suggests a transition that would lead to a dead‑end proof. Moreover, when novice users are assisted by the EFSM—by being presented with the next‑step suggestions that satisfy the guard conditions—the length of newly constructed proofs is reduced by roughly 20 % compared with unguided attempts. This demonstrates that the EFSM not only faithfully abstracts existing proof strategies but also serves as an effective guidance mechanism for new developments.

Key contributions of the work are: (1) a novel method for automatically extracting EFSMs that jointly model tactic ordering and argument values from ITP proof corpora; (2) a concrete implementation using EFSMInfer and standard decision‑tree learners, showing that the technique can be applied with off‑the‑shelf tools; (3) an empirical validation that the resulting models are both precise and practically useful for proof assistance. The paper suggests future directions such as incorporating more sophisticated temporal or probabilistic models for guard learning, extending the approach to other proof assistants (Isabelle, Lean), and integrating the EFSM directly into interactive environments to provide real‑time tactic recommendations.
