A General Framework for Interacting Bayes-Optimally with Self-Interested Agents using Arbitrary Parametric Model and Model Prior

Recent advances in Bayesian reinforcement learning (BRL) have shown that Bayes-optimality is theoretically achievable by modeling the environment's latent dynamics using Flat-Dirichlet-Multinomial (FDM) prior. In self-interested multi-agent environme…

Authors: Trong Nghia Hoang, Kian Hsiang Low

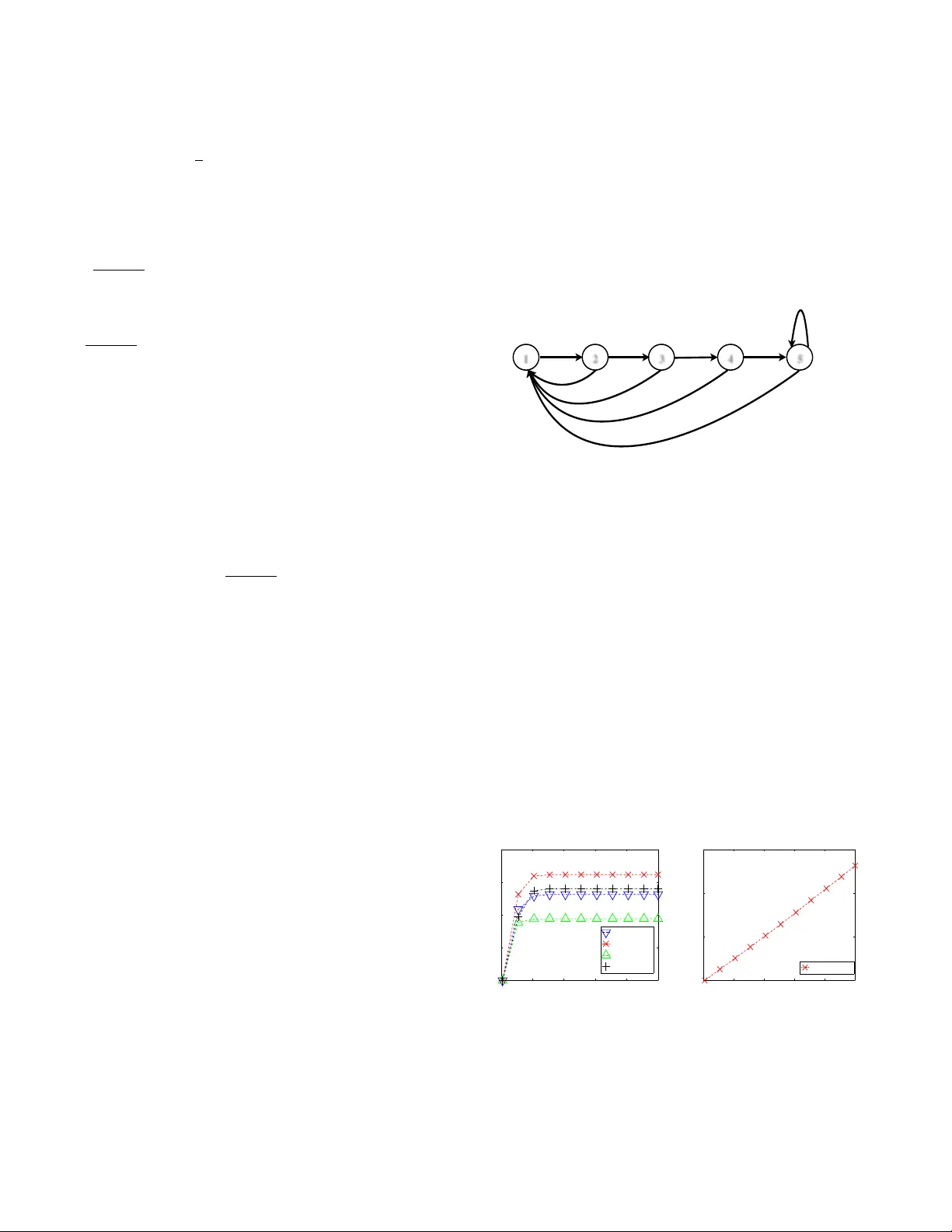

Trong Nghia Hoang and Kian Hsiang Low
Department of Computer Science, National University of Singapore, Republic of Singapore
{nghiaht, lowkh}@comp.nus.edu.sg

Abstract

Recent advances in Bayesian reinforcement learning (BRL) have shown that Bayes-optimality is theoretically achievable by modeling the environment's latent dynamics using Flat-Dirichlet-Multinomial (FDM) prior. In self-interested multi-agent environments, the transition dynamics are mainly controlled by the other agent's stochastic behavior for which FDM's independence and modeling assumptions do not hold. As a result, FDM does not allow the other agent's behavior to be generalized across different states nor specified using prior domain knowledge. To overcome these practical limitations of FDM, we propose a generalization of BRL to integrate the general class of parametric models and model priors, thus allowing practitioners' domain knowledge to be exploited to produce a fine-grained and compact representation of the other agent's behavior. Empirical evaluation shows that our approach outperforms existing multi-agent reinforcement learning algorithms.

1 Introduction

In reinforcement learning (RL), an agent faces a dilemma between acting optimally with respect to the current, possibly incomplete knowledge of the environment (i.e., exploitation) vs. acting sub-optimally to gain more information about it (i.e., exploration). Model-based Bayesian reinforcement learning (BRL) circumvents such a dilemma by considering the notion of Bayes-optimality [Duff, 2003]: A Bayes-optimal policy selects actions that maximize the agent's expected utility with respect to all possible sequences of future beliefs (starting from the initial belief) over candidate models of the environment.
Unfortunately, due to the large belief space, the Bayes-optimal policy can only be approximately derived under a simple choice of models and model priors. For example, the Flat-Dirichlet-Multinomial (FDM) prior [Poupart et al., 2006] assumes the next-state distributions for each action-state pair to be modeled as independent multinomial distributions with separate Dirichlet priors. Despite its common use to analyze and benchmark algorithms, FDM can perform poorly in practice as it often fails to exploit the structured information of a problem [Asmuth and Littman, 2011; Araya-Lopez et al., 2012].

To elaborate, a critical limitation of FDM lies in its independence assumption driven by computational convenience rather than scientific insight. We can identify practical examples in the context of self-interested multi-agent RL (MARL) where the uncertainty in the transition model is mainly caused by the stochasticity in the other agent's behavior (in different states) for which the independence assumption does not hold (e.g., motion behavior of pedestrians [Natarajan et al., 2012a; 2012b]). Consider, for example, an application of BRL in the problem of placing static sensors to monitor an environmental phenomenon: It involves actively selecting sensor locations (i.e., states) for measurement such that the sum of predictive variances at the unobserved locations is minimized. Here, the phenomenon is the "other agent" and the measurements are its actions. An important characterization of the phenomenon is that of the spatial correlation of measurements between neighboring locations/states [Low et al., 2007; 2008; 2009; 2011; 2012; Chen et al., 2012; Cao et al., 2013], which makes FDM-based BRL extremely ill-suited for this problem due to its independence assumption.

Secondly, despite its computational convenience, FDM does not permit generalization across states [Asmuth and Littman, 2011], thus severely limiting its applicability in practical problems with a large state space where past observations only come from a very limited set of states. Interestingly, in such problems, it is often possible to obtain prior domain knowledge providing a more "parsimonious" structure of the other agent's behavior, which can potentially resolve the issue of generalization. For example, consider using BRL to derive a Bayes-optimal policy for an autonomous car to navigate successfully among human-driven vehicles [Hoang and Low, 2012; 2013b] whose behaviors in different situations (i.e., states) are governed by a small, consistent set of latent parameters, as demonstrated in the empirical study of Gipps [1981]. By estimating/learning these parameters, it is then possible to generalize their behaviors across different states. This, however, contradicts the independence assumption of FDM; in practice, ignoring this results in an inferior performance, as shown in Section 4. Note that, by using parameter tying [Poupart et al., 2006], FDM can be modified to make the other agent's behavior identical in different states. But, this simple generalization is too restrictive for real-world problems like the examples above where the other agent's behavior in different states is not necessarily identical but related via a common set of latent "non-Dirichlet" parameters.

Consequently, there is still a huge gap in putting BRL into practice for interacting with self-interested agents of unknown behaviors. To the best of our knowledge, this is first investigated by Chalkiadakis and Boutilier [2003] who offer a myopic solution in the belief space instead of solving for a Bayes-optimal policy that is non-myopic.
Their proposed BPVI method essentially selects actions that jointly maximize a heuristic aggregation of myopic value of perfect information [Dearden et al., 1998] and an average estimation of expected utility obtained from solving the exact MDPs with respect to samples drawn from the posterior belief of the other agent's behavior. Moreover, BPVI is restricted to work only with Dirichlet priors and multinomial likelihoods (i.e., FDM), which are subject to the above disadvantages in modeling the other agent's behavior. Also, BPVI is demonstrated empirically in the simplest of settings with only a few states.

Furthermore, in light of the above examples, the other agent's behavior often needs to be modeled differently depending on the specific application. Grounding in the context of the BRL framework, either the domain expert struggles to best fit his prior knowledge to the supported set of models and model priors or the agent developer has to re-design the framework to incorporate a new modeling scheme. Arguably, there is no free lunch when it comes to modeling the other agent's behavior across various applications. To cope with this difficulty, the BRL framework should ideally allow a domain expert to freely incorporate his choice of design in modeling the other agent's behavior.

Motivated by the above practical considerations, this paper presents a novel generalization of BRL, which we call Interactive BRL (I-BRL) (Section 3), to integrate any parametric model and model prior of the other agent's behavior (Section 2) specified by domain experts, consequently yielding two advantages: The other agent's behavior can be represented (a) in a fine-grained manner based on the practitioners' prior domain knowledge, and (b) compactly to be generalized across different states, thus overcoming the limitations of FDM.
We show how the non-myopic Bayes-optimal policy can be derived analytically by solving I-BRL exactly (Section 3.1) and propose an approximation algorithm to compute it efficiently in polynomial time (Section 3.2). Empirically, we evaluate the performance of I-BRL against that of BPVI [Chalkiadakis and Boutilier, 2003] using an interesting traffic problem modeled after a real-world situation (Section 4).

2 Modeling the Other Agent

In our proposed Bayesian modeling paradigm, the opponent's¹ behavior is modeled as a set of probabilities $p^v_{sh}(\lambda) \triangleq \Pr(v \mid s, h, \lambda)$ for selecting action $v$ in state $s$ conditioned on the history $h \triangleq \{s_i, u_i, v_i\}_{i=1}^{d}$ of $d$ latest interactions where $u_i$ is the action taken by our agent in the $i$-th step. These distributions are parameterized by $\lambda$, which abstracts the actual parametric form of the opponent's behavior; this abstraction provides practitioners the flexibility in choosing the most suitable degree of parameterization. For example, $\lambda$ can simply be a set of multinomial distributions $\lambda \triangleq \{\theta^v_{sh}\}$ such that $p^v_{sh}(\lambda) \triangleq \theta^v_{sh}$ if no prior domain knowledge is available. Otherwise, the domain knowledge can be exploited to produce a fine-grained representation of $\lambda$; at the same time, $\lambda$ can be made compact to generalize the opponent's behavior across different states (e.g., Section 4).

¹ For convenience, we will use the terms the "other agent" and "opponent" interchangeably from now on.

The opponent's behavior can be learned by monitoring the belief $b(\lambda) \triangleq \Pr(\lambda)$ over all possible $\lambda$. In particular, the belief (or probability density) $b(\lambda)$ is updated at each step based on the history $h \circ \langle s, u, v \rangle$ of $d + 1$ latest interactions (with $\langle s, u, v \rangle$ being the most recent one) using Bayes' theorem:

$$b^v_{sh}(\lambda) \propto p^v_{sh}(\lambda)\, b(\lambda). \tag{1}$$

Let $\bar{s} = (s, h)$ denote an information state that consists of the current state and the history of $d$ latest interactions.
When the opponent's behavior is stationary (i.e., $d = 0$), it follows that $\bar{s} = s$. For ease of notations, the main results of our work (in subsequent sections) are presented only for the case where $d = 0$ (i.e., $\bar{s} = s$); extension to the general case just requires replacing $s$ with $\bar{s}$. In this case, (1) can be re-written as

$$b^v_{s}(\lambda) \propto p^v_{s}(\lambda)\, b(\lambda). \tag{2}$$

The key difference between our Bayesian modeling paradigm and FDM [Poupart et al., 2006] is that we do not require $b(\lambda)$ and $p^v_s(\lambda)$ to be, respectively, Dirichlet prior and multinomial likelihood where Dirichlet is a conjugate prior for multinomial. In practice, such a conjugate prior is desirable because the posterior $b^v_s$ belongs to the same Dirichlet family as the prior $b$, thus making the belief update tractable and the Bayes-optimal policy efficient to be derived. Despite its computational convenience, this conjugate prior restricts the practitioners from exploiting their domain knowledge to design more informed priors (e.g., see Section 4). Furthermore, this turns out to be an overkill just to make the belief update tractable. In particular, we show in Theorem 1 below that, without assuming any specific parametric form of the initial prior, the posterior belief can still be tractably represented even though they are not necessarily conjugate distributions. This is indeed sufficient to guarantee and derive a tractable representation of the Bayes-optimal policy using a finite set of parameters, as shall be seen later in Section 3.1.

Theorem 1 If the initial prior $b$ can be represented exactly using a finite set of parameters, then the posterior $b'$ conditioned on a sequence of observations $\{(s_i, v_i)\}_{i=1}^{n'}$ can also be represented exactly in parametric form.

Proof Sketch.
From (2), we can prove by induction on $n'$ that

$$b'(\lambda) \propto \Phi(\lambda)\, b(\lambda) \tag{3}$$

$$\Phi(\lambda) \triangleq \prod_{s \in S} \prod_{v \in V} p^v_s(\lambda)^{\psi^v_s} \tag{4}$$

where $\psi^v_s \triangleq \sum_{i=1}^{n'} \delta_{sv}(s_i, v_i)$ and $\delta_{sv}$ is the Kronecker delta function that returns 1 if $s = s_i$ and $v = v_i$, and 0 otherwise². From (3), it is clear that $b'$ can be represented by a set of parameters $\{\psi^v_s\}_{s,v}$ and the finite representation of $b$. Thus, belief update is performed simply by incrementing the hyperparameter $\psi^v_s$ according to each observation $(s, v)$.

² Intuitively, $\Phi(\lambda)$ can be interpreted as the likelihood of observing each pair $(s, v)$ for $\psi^v_s$ times while interacting with an opponent whose behavior is parameterized by $\lambda$.

3 Interactive Bayesian RL (I-BRL)

In this section, we first extend the proof techniques used in [Poupart et al., 2006] to theoretically derive the agent's Bayes-optimal policy against the general class of parametric models and model priors of the opponent's behavior (Section 2). In particular, we show that the derived Bayes-optimal policy can also be represented exactly using a finite number of parameters. Based on our derivation, a naive algorithm can be devised to compute the exact parametric form of the Bayes-optimal policy (Section 3.1). Finally, we present a practical algorithm to efficiently approximate this Bayes-optimal policy in polynomial time (with respect to the size of the environment model) (Section 3.2).

Formally, an agent is assumed to be interacting with its opponent in a stochastic environment modeled as a tuple $(S, U, V, \{r_s\}, \{p^{uv}_s\}, \{p^v_s(\lambda)\}, \phi)$ where $S$ is a finite set of states, $U$ and $V$ are sets of actions available to the agent and its opponent, respectively. In each stage, the immediate payoff $r_s(u, v)$ to our agent depends on the joint action $(u, v) \in U \times V$ and the current state $s \in S$.
The environment then transitions to a new state $s'$ with probability $p^{uv}_s(s') \triangleq \Pr(s' \mid s, u, v)$ and the future payoff (in state $s'$) is discounted by a constant factor $0 < \phi < 1$, and so on. Finally, as described in Section 2, the opponent's latent behavior $\{p^v_s(\lambda)\}$ can be selected from the general class of parametric models and model priors, which subsumes FDM (i.e., independent multinomials with separate Dirichlet priors).

Now, let us recall that the key idea underlying the notion of Bayes-optimality [Duff, 2003] is to maintain a belief $b(\lambda)$ that represents the uncertainty surrounding the opponent's behavior $\lambda$ in each stage of interaction. Thus, the action selected by the learner in each stage affects both its expected immediate payoff $E_\lambda\!\left[\sum_v p^v_s(\lambda)\, r_s(u, v) \mid b\right]$ and the posterior belief state $b^v_s(\lambda)$, the latter of which influences its future payoff and builds in the information gathering option (i.e., exploration). As such, the Bayes-optimal policy can be obtained by maximizing the expected discounted sum of rewards $V_s(b)$:

$$V_s(b) \triangleq \max_u \sum_v \langle p^v_s, b \rangle \left( r_s(u, v) + \phi \sum_{s'} p^{uv}_s(s')\, V_{s'}(b^v_s) \right) \tag{5}$$

where $\langle a, b \rangle \triangleq \int_\lambda a(\lambda)\, b(\lambda)\, \mathrm{d}\lambda$. The optimal policy for the learner is then defined as a function $\pi^*$ that maps the belief $b$ to an action $u$ maximizing its expected utility, which can be derived by solving (5). To derive our solution, we first re-state two well-known results concerning the augmented belief-state MDP in single-agent RL [Poupart et al., 2006], which also hold straightforwardly for our general class of parametric models and model priors.

Theorem 2 The optimal value function $V^k$ for $k$ steps-to-go converges to the optimal value function $V$ for infinite horizon as $k \to \infty$: $\| V - V^{k+1} \|_\infty \le \phi\, \| V - V^k \|_\infty$.

Theorem 3 The optimal value function $V^k_s(b)$ for $k$ steps-to-go can be represented by a finite set $\Gamma^k_s$ of $\alpha$-functions:

$$V^k_s(b) = \max_{\alpha_s \in \Gamma^k_s} \langle \alpha_s, b \rangle. \tag{6}$$

Simply put, these results imply that the optimal value $V_s$ in (5) can be approximated arbitrarily closely by a finite set $\Gamma^k_s$ of piecewise linear $\alpha$-functions $\alpha_s$, as shown in (6). Each $\alpha$-function $\alpha_s$ is associated with an action $u_{\alpha_s}$ yielding an expected utility of $\alpha_s(\lambda)$ if the true behavior of the opponent is $\lambda$ and consequently an overall expected reward $\langle \alpha_s, b \rangle$ by assuming that, starting from $(s, b)$, the learner selects action $u_{\alpha_s}$ and continues optimally thereafter. In particular, $\Gamma^k_s$ and $u_{\alpha_s}$ can be derived based on a constructive proof of Theorem 3. However, due to limited space, we only state the constructive process below. Interested readers are referred to Appendix A for a detailed proof. Specifically, given $\{\Gamma^k_s\}_s$ such that (6) holds for $k$, it follows (see Appendix A) that

$$V^{k+1}_s(b) = \max_{u, t} \langle \alpha^{ut}_s, b \rangle \tag{7}$$

where $t \triangleq (t_{s'v})_{s' \in S, v \in V}$ with $t_{s'v} \in \{1, \ldots, |\Gamma^k_{s'}|\}$, and

$$\alpha^{ut}_s(\lambda) \triangleq \sum_v p^v_s(\lambda) \left( r_s(u, v) + \phi \sum_{s'} \alpha^{t_{s'v}}_{s'}(\lambda)\, p^{uv}_s(s') \right) \tag{8}$$

such that $\alpha^{t_{s'v}}_{s'}$ denotes the $t_{s'v}$-th $\alpha$-function in $\Gamma^k_{s'}$. Setting $\Gamma^{k+1}_s = \{\alpha^{ut}_s\}_{u,t}$ and $u_{\alpha^{ut}_s} = u$, it follows that (6) also holds for $k + 1$. As a result, the optimal policy $\pi^*(b)$ can be derived directly from these $\alpha$-functions by $\pi^*(b) \triangleq u_{\alpha^*_s}$ where $\alpha^*_s = \arg\max_{\alpha^{ut}_s \in \Gamma^{k+1}_s} \langle \alpha^{ut}_s, b \rangle$. Thus, constructing $\Gamma^{k+1}_s$ from the previously constructed sets $\{\Gamma^k_s\}_s$ essentially boils down to an exhaustive enumeration of all possible pairs $(u, t)$ and the corresponding application of (8) to compute $\alpha^{ut}_s$.
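
The enumeration over pairs $(u, t)$ in (8) can be illustrated with a minimal sketch. It assumes, purely for illustration, that $\lambda$ is restricted to a finite grid so that each $\alpha$-function is stored as a list of values (the paper keeps an exact parametric form instead); the names `backup`, `best_action`, `p_opp`, `reward`, and `p_trans` are hypothetical helpers, not part of the original work.

```python
from itertools import product

def backup(s, gamma_k, states, U, V, p_opp, r, p_trans, phi, n_grid):
    """Build Gamma^{k+1}_s by enumerating every pair (u, t), following (8).

    gamma_k[s2] is a list of alpha-functions, each stored as a list of
    values on a lambda grid (the grid is an assumption of this sketch).
    """
    slots = [(s2, v) for s2 in states for v in V]
    index_ranges = [range(len(gamma_k[s2])) for (s2, _) in slots]
    new_set = []
    for u in U:
        for t in product(*index_ranges):
            pick = dict(zip(slots, t))  # pick[(s2, v)] plays the role of t_{s'v}
            alpha = []
            for j in range(n_grid):
                value = 0.0
                for v in V:
                    future = sum(
                        gamma_k[s2][pick[(s2, v)]][j] * p_trans(s, u, v, s2)
                        for s2 in states
                    )
                    value += p_opp(s, v, j) * (r(s, u, v) + phi * future)
                alpha.append(value)
            new_set.append((u, alpha))
    return new_set

def best_action(alpha_set, belief):
    """pi*(b): the action attached to the alpha-function maximizing <alpha, b>."""
    def inner(alpha):
        return sum(a * w for a, w in zip(alpha, belief))
    u, alpha = max(alpha_set, key=lambda pair: inner(pair[1]))
    return u, inner(alpha)

# Toy problem (hypothetical): one state, lambda = Pr(opponent plays v = 0).
GRID = [0.2, 0.8]

def p_opp(s, v, j):
    return GRID[j] if v == 0 else 1.0 - GRID[j]

def reward(s, u, v):
    return 1.0 if u == v else 0.0

def p_trans(s, u, v, s2):
    return 1.0  # a single state always transitions to itself

gamma0 = {0: [[0.0, 0.0]]}  # base case: the zero alpha-function
gamma1 = backup(0, gamma0, [0], [0, 1], [0, 1], p_opp, reward, p_trans, 0.9, len(GRID))
action, value = best_action(gamma1, [0.25, 0.75])
```

With a belief that puts most weight on the opponent favoring $v = 0$, the sketch selects the matching action. The grid representation is only a device to keep the example short.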

Though (8) specifies a bottom-up procedure constructing $\Gamma^{k+1}_s$ from the previously constructed sets $\{\Gamma^k_{s'}\}_{s'}$ of $\alpha$-functions, it implicitly requires a convenient parameterization for the $\alpha$-functions that is closed under the application of (8). To complete this analytical derivation, we present a final result to demonstrate that each $\alpha$-function is indeed of such parametric form. Note that Theorem 4 below generalizes a similar result proven in [Poupart et al., 2006], the latter of which shows that, under FDM, each $\alpha$-function can be represented by a linear combination of multivariate monomials. A practical algorithm building on our generalized result in Theorem 4 is presented in Section 3.2.

Theorem 4 Let $\boldsymbol{\Phi}$ denote a family of all functions $\Phi(\lambda)$ (4). Then, the optimal value $V^k_{s'}$ can be represented by a finite set $\Gamma^k_{s'}$ of $\alpha$-functions $\alpha^j_{s'}$ for $j = 1, \ldots, |\Gamma^k_{s'}|$:

$$\alpha^j_{s'}(\lambda) = \sum_{i=1}^{m} c_i\, \Phi_i(\lambda) \tag{9}$$

where $\Phi_i \in \boldsymbol{\Phi}$. So, each $\alpha$-function $\alpha^j_{s'}$ can be compactly represented by a finite set of parameters $\{c_i\}_{i=1}^{m}$ ³.

³ To ease readability, we abuse the notations $\{c_i, \Phi_i\}_{i=1}^{m}$ slightly: Each $\alpha^j_{s'}(\lambda)$ should be specified by a different set $\{c_i, \Phi_i\}_{i=1}^{m}$.

Proof Sketch. We will prove (9) by induction on $k$ ⁴. Suppose (9) holds for $k$. Setting $j = t_{s'v}$ in (9) results in

$$\alpha^{t_{s'v}}_{s'}(\lambda) = \sum_{i=1}^{m} c_i\, \Phi_i(\lambda), \tag{10}$$

which is then plugged into (8) to yield

$$\alpha^{ut}_s(\lambda) = \sum_{v \in V} c_v\, \Psi_v(\lambda) + \sum_{s' \in S} \sum_{v \in V} \left( \sum_{i=1}^{m} c^v_{s'i}\, \Psi^v_{s'i}(\lambda) \right) \tag{11}$$

where $\Psi_v(\lambda) = p^v_s(\lambda)$, $\Psi^v_{s'i}(\lambda) = p^v_s(\lambda)\, \Phi_i(\lambda)$, and the coefficients $c_v = r_s(u, v)$ and $c^v_{s'i} = \phi\, p^{uv}_s(s')\, c_i$. It is easy to see that $\Psi_v \in \boldsymbol{\Phi}$ and $\Psi^v_{s'i} \in \boldsymbol{\Phi}$. So, (9) clearly holds for $k + 1$.
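
The closure argument in the proof sketch can be made concrete with a small sketch. It assumes, as an illustrative choice, that each function $\Phi$ in (4) is stored by its exponents $\psi^v_s$ and that an $\alpha$-function is a dictionary from such exponent sets to coefficients; multiplying by $p^v_s(\lambda)$ then just increments one exponent, which is the same operation as the belief update of Theorem 1. The names `phi_key`, `times_likelihood`, `evaluate`, and `backup_terms` are hypothetical.

```python
from collections import Counter

def phi_key(counts):
    """Hashable key for Phi in (4): the set of nonzero exponents psi[(s, v)]."""
    return frozenset((sv, n) for sv, n in counts.items() if n > 0)

def times_likelihood(key, s, v):
    """Key of p_s^v(lambda) * Phi(lambda): increment the exponent of (s, v)."""
    counts = Counter(dict(key))
    counts[(s, v)] += 1
    return phi_key(counts)

def evaluate(key, lam, p_opp):
    """Evaluate Phi(lambda) for a user-supplied likelihood p_opp(s, v, lam)."""
    value = 1.0
    for (s, v), n in key:
        value *= p_opp(s, v, lam) ** n
    return value

def backup_terms(s, u, V, states, r, p_trans, phi, chosen):
    """Coefficients of alpha^{ut}_s in (11).

    chosen[(s2, v)] is the selected alpha-function of state s2, stored as a
    dict {phi_key: coefficient} (the representation is an assumption here).
    """
    out = {}
    for v in V:
        k = times_likelihood(frozenset(), s, v)           # Psi_v = p_s^v
        out[k] = out.get(k, 0.0) + r(s, u, v)             # c_v = r_s(u, v)
        for s2 in states:
            for key, c in chosen[(s2, v)].items():
                k2 = times_likelihood(key, s, v)          # Psi^v_{s'i} = p_s^v * Phi_i
                out[k2] = out.get(k2, 0.0) + phi * p_trans(s, u, v, s2) * c
    return out

# Toy usage (hypothetical model): one state, two opponent actions.
def p_opp(s, v, lam):
    return lam if v == 0 else 1.0 - lam

two_obs = times_likelihood(times_likelihood(frozenset(), 0, 1), 0, 1)
const_two = {frozenset(): 2.0}                            # the constant function 2
alpha = backup_terms(0, 0, [0, 1], [0],
                     lambda s, u, v: 1.0 if u == v else 0.0,
                     lambda s, u, v, s2: 1.0, 0.5,
                     {(0, 0): const_two, (0, 1): const_two})
alpha_at_quarter = sum(c * evaluate(k, 0.25, p_opp) for k, c in alpha.items())
```

Identical basis functions are merged in the dictionary, so the number of stored parameters can be smaller than the raw count of terms in (11).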

We have shown above that, under the general class of parametric models and model priors (Section 2), each $\alpha$-function can be represented by a linear combination of arbitrary parametric functions in $\boldsymbol{\Phi}$, which subsume multivariate monomials used in [Poupart et al., 2006].

3.1 An Exact Algorithm

Intuitively, Theorems 3 and 4 provide a simple and constructive method for computing the set of $\alpha$-functions and hence, the optimal policy. In step $k + 1$, the sets $\Gamma^{k+1}_s$ for all $s \in S$ are constructed using (10) and (11) from $\Gamma^k_{s'}$ for all $s' \in S$, the latter of which are computed previously in step $k$. When $k = 0$ (i.e., base case), see the proof of Theorem 4 above (i.e., footnote 4). A sketch of this algorithm is shown below:

BACKUP($s, k + 1$)

1. $\Gamma^*_{s,u} \leftarrow \left\{ g(\lambda) \triangleq \sum_v c_v\, \Psi_v(\lambda) \right\}$
2. $\Gamma^{v,s'}_{s,u} \leftarrow \left\{ g_j(\lambda) \triangleq \sum_{i=1}^{m} c^v_{s'i}\, \Psi^v_{s'i}(\lambda) \right\}_{j = 1, \ldots, |\Gamma^k_{s'}|}$
3. $\Gamma_{s,u} \leftarrow \Gamma^*_{s,u} \oplus \bigoplus_{v, s'} \Gamma^{v,s'}_{s,u}$ ⁵
4. $\Gamma^{k+1}_s \leftarrow \bigcup_{u \in U} \Gamma_{s,u}$

In the above algorithm, steps 1 and 2 compute the first and second summation terms on the right-hand side of (11), respectively. Then, steps 3 and 4 construct $\Gamma^{k+1}_s = \{\alpha^{ut}_s\}_{u,t}$ using (11) over all $t$ and $u$, respectively. Thus, by iteratively computing $\Gamma^{k+1}_s = \text{BACKUP}(s, k + 1)$ for a sufficiently large value of $k$, $\Gamma^{k+1}_s$ can be used to approximate $V_s$ arbitrarily closely, as shown in Theorem 2. However, this naive algorithm is computationally impractical due to the following issues: (a) $\alpha$-function explosion: the number of $\alpha$-functions grows doubly exponentially in the planning horizon length, as derived from (7) and (8): $|\Gamma^{k+1}_s| = O\!\left( \prod_{s'} |\Gamma^k_{s'}|^{|V|}\, |U| \right)$, and (b) parameter explosion: the average number of parameters used to represent an $\alpha$-function grows by a factor of $O(|S||V|)$, as manifested in (11). The practicality of our approach therefore depends crucially on how these issues are resolved, as described next.
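
To get a feel for the $\alpha$-function explosion in (a), the recurrence $|\Gamma^{k+1}_s| = |U| \prod_{s'} |\Gamma^k_{s'}|^{|V|}$ can be tabulated for a tiny problem. The sketch below assumes every state holds the same number of $\alpha$-functions and that no duplicates are pruned; the function name `gamma_sizes` is hypothetical.

```python
def gamma_sizes(num_states, num_u, num_v, horizon, initial=1):
    """Upper bound on |Gamma^k_s| for k = 0..horizon under exhaustive backups.

    Assumes all states share the same set size, so the product over s'
    becomes a power with exponent |S| * |V|.
    """
    sizes = [initial]
    for _ in range(horizon):
        prev = sizes[-1]
        sizes.append(num_u * prev ** (num_states * num_v))
    return sizes

# Two states, two agent actions, two opponent actions, three backups.
sizes = gamma_sizes(num_states=2, num_u=2, num_v=2, horizon=3)
```

Even for this two-state problem the bound passes two million after three backups, which is why the exact algorithm is only of theoretical interest.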

⁴ When $k = 0$, (9) can be verified by letting $c_i = 0$.

⁵ $A \oplus B = \{a + b \mid a \in A, b \in B\}$.

3.2 A Practical Approximation Algorithm

In this section, we introduce practical modifications of the BACKUP algorithm by addressing the above-mentioned issues. We first address the issue of $\alpha$-function explosion by generalizing discrete POMDP's PBVI solver [Pineau et al., 2003] to be used for our augmented belief-state MDP: Only the $\alpha$-functions that yield optimal values for a sampled set of reachable beliefs $B_s = \{b^1_s, b^2_s, \cdots, b^{|B_s|}_s\}$ are computed (see the modifications in steps 3 and 4 of the PB-BACKUP algorithm). The resulting algorithm is shown below:

PB-BACKUP($B_s = \{b^1_s, b^2_s, \cdots, b^{|B_s|}_s\}, s, k + 1$)

1. $\Gamma^*_{s,u} \leftarrow \left\{ g(\lambda) \triangleq \sum_v c_v\, \Psi_v(\lambda) \right\}$
2. $\Gamma^{v,s'}_{s,u} \leftarrow \left\{ g_j(\lambda) \triangleq \sum_{i=1}^{m} c^v_{s'i}\, \Psi^v_{s'i}(\lambda) \right\}_{j = 1, \ldots, |\Gamma^k_{s'}|}$
3. $\Gamma^i_{s,u} \leftarrow \left\{ g + \sum_{s', v} \arg\max_{g_j \in \Gamma^{v,s'}_{s,u}} \langle g_j, b^i_s \rangle \right\}_{g \in \Gamma^*_{s,u}}$
4. $\Gamma^{k+1}_s \leftarrow \left\{ g_i \triangleq \arg\max_{g \in \Gamma^i_{s,u}} \langle g, b^i_s \rangle \right\}_{i = 1, \ldots, |B_s|}$

Secondly, to address the issue of parameter explosion, each $\alpha$-function is projected onto a fixed number of basis functions to keep the number of parameters from growing exponentially. This projection is done after each PB-BACKUP operation, hence always keeping the number of parameters fixed (i.e., one parameter per basis function). In particular, since each $\alpha$-function is in fact a linear combination of functions in $\boldsymbol{\Phi}$ (Theorem 4), it is natural to choose these basis functions from $\boldsymbol{\Phi}$ ⁶. Besides, it is easy to see from (3) that each sampled belief $b^i_s$ can also be written as

$$b^i_s(\lambda) = \eta\, \Phi^i_s(\lambda)\, b(\lambda) \tag{12}$$

where $b$ is the initial prior belief, $\eta = 1 / \langle \Phi^i_s, b \rangle$, and $\Phi^i_s \in \boldsymbol{\Phi}$. For convenience, these $\{\Phi^i_s\}_{i = 1, \ldots, |B_s|}$ are selected as basis functions. Specifically, after each PB-BACKUP operation, each $\alpha_s \in \Gamma^k_s$ is projected onto the function space defined by $\{\Phi^i_s\}_{i = 1, \ldots, |B_s|}$.
This projection is then cast as an optimization problem that minimizes the squared difference $J(\alpha_s)$ between the $\alpha$-function and its projection with respect to the sampled beliefs in $B_s$:

$$J(\alpha_s) \triangleq \frac{1}{2} \sum_{j=1}^{|B_s|} \left( \langle \alpha_s, b^j_s \rangle - \sum_{i=1}^{|B_s|} c_i\, \langle \Phi^i_s, b^j_s \rangle \right)^2. \tag{13}$$

This can be done analytically by letting $\frac{\partial J(\alpha_s)}{\partial c_i} = 0$ and solving for $c_i$, which is equivalent to solving a linear system $A x = d$ where $x_i = c_i$, $A_{ji} = \sum_{k=1}^{|B_s|} \langle \Phi^i_s, b^k_s \rangle \langle \Phi^j_s, b^k_s \rangle$ and $d_j = \sum_{k=1}^{|B_s|} \langle \Phi^j_s, b^k_s \rangle \langle \alpha_s, b^k_s \rangle$. Note that this projection works directly with the values $\langle \alpha_s, b^j_s \rangle$ instead of the exact parametric form of $\alpha_s$ in (9). This allows for a more compact implementation of the PB-BACKUP algorithm presented above: Instead of maintaining the exact parameters that represent each of the immediate functions $g$, only their evaluations at the sampled beliefs $B_s = \{b^1_s, b^2_s, \cdots, b^{|B_s|}_s\}$ need to be maintained. In particular, the values of $\{\langle g, b^i_s \rangle\}_{i = 1, \ldots, |B_s|}$ can be estimated as follows:

$$\langle g, b^i_s \rangle = \eta \int_\lambda g(\lambda)\, \Phi^i_s(\lambda)\, b(\lambda)\, \mathrm{d}\lambda \approx \frac{\sum_{j=1}^{n} g(\lambda^j)\, \Phi^i_s(\lambda^j)}{\sum_{j=1}^{n} \Phi^i_s(\lambda^j)} \tag{14}$$

where $\{\lambda^j\}_{j=1}^{n}$ are samples drawn from the initial prior $b$. During the online execution phase, (14) is also used to compute the expected payoff for the $\alpha$-functions evaluated at the current belief $b'(\lambda) = \eta\, \Phi(\lambda)\, b(\lambda)$:

$$\langle \alpha_s, b' \rangle \approx \frac{\sum_{j=1}^{n} \Phi(\lambda^j) \sum_{i=1}^{|B_s|} c_i\, \Phi^i_s(\lambda^j)}{\sum_{j=1}^{n} \Phi(\lambda^j)}. \tag{15}$$

So, the real-time processing cost of evaluating each $\alpha$-function's expected reward at a particular belief is $O(|B_s|\, n)$. Since the sampling of $\{b^i_s\}$, $\{\lambda^j\}$ and the computation of $\left\{ \sum_{i=1}^{|B_s|} c_i\, \Phi^i_s(\lambda^j) \right\}$ can be performed in advance, this $O(|B_s|\, n)$ cost is further reduced to $O(n)$, which makes the action selection incur $O(|B_s|\, n)$ cost in total.

⁶ See Appendix B for other choices.
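
The self-normalized estimate in (14) and (15) can be sketched in a few lines. The example assumes, for illustration only, a scalar $\lambda$ with a uniform prior on $[0, 1]$ and a Bernoulli opponent, so that the posterior after three observations of $v = 0$ and one of $v = 1$ is Beta(4, 2) and the estimate of $E[\lambda]$ can be checked against its known mean $2/3$; the names `posterior_expectation` and `phi_fn` are hypothetical.

```python
import random

def posterior_expectation(g, phi_fn, samples):
    """Self-normalized estimate of <g, b'> where b' is proportional to Phi * b.

    `samples` are draws from the prior b; Phi acts as the importance weight,
    as in (14) and (15).
    """
    numerator = sum(g(lam) * phi_fn(lam) for lam in samples)
    denominator = sum(phi_fn(lam) for lam in samples)
    return numerator / denominator

def phi_fn(lam):
    """Phi(lambda) for three observations of v = 0 and one of v = 1."""
    return lam ** 3 * (1.0 - lam)

rng = random.Random(0)
prior_samples = [rng.random() for _ in range(20000)]   # uniform prior on [0, 1]
estimate = posterior_expectation(lambda lam: lam, phi_fn, prior_samples)
```

Because the prior samples are drawn once, the same list can be reused for every belief; only the weights $\Phi(\lambda^j)$ change as observations accumulate.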
This online cost is significantly cheaper as compared to the total cost $O(nk|S|^2|U||V|)$ of online sampling and re-estimating $V_s$ incurred by BPVI [Chalkiadakis and Boutilier, 2003]. Also, note that since the offline computational costs in steps 1 to 4 of PB-BACKUP($B_s, s, k + 1$) and the projection cost, which is cast as the cost of solving a system of linear equations, are always polynomial functions of the interested variables (e.g., $|S|, |U|, |V|, n, |B_s|$), the optimal policy can be approximated in polynomial time.

4 Experiments and Discussion

In this section, a realistic scenario of intersection navigation is modeled as a stochastic game [Wang et al., 2012]; it is inspired by a near-miss accident during the 2007 DARPA Urban Challenge. Considering the traffic situation illustrated in Fig. 1 where two autonomous vehicles (marked A and B) are about to enter an intersection (I), the road segments are discretized into a uniform grid with cell size 5 m × 5 m and the speed of each vehicle is also discretized uniformly into 5 levels ranging from 0 m/s to 4 m/s. So, in each stage, the system's state can be characterized as a tuple $\{P_A, P_B, S_A, S_B\}$ specifying the current positions ($P$) and velocities ($S$) of A and B, respectively. In addition, our vehicle (A) can either accelerate ($+1$ m/s²), decelerate ($-1$ m/s²), or maintain its speed ($+0$ m/s²) in each time step while the other vehicle (B) changes its speed based on a parameterized reactive model [Gipps, 1981]:

$$v_{\text{safe}} = S_B + \frac{\text{Distance}(P_A, P_B) - \tau S_B}{S_B / d + \tau}$$

$$v_{\text{des}} = \min(4,\; S_B + a,\; v_{\text{safe}})$$

$$S'_B \sim \text{Uniform}\big(\max(0,\; v_{\text{des}} - \sigma a),\; v_{\text{des}}\big).$$

In this model, the driver's acceleration $a \in [0.5\ \text{m/s}^2, 3\ \text{m/s}^2]$, deceleration $d \in [-3\ \text{m/s}^2, -0.5\ \text{m/s}^2]$, reaction time $\tau \in [0.5\ \text{s}, 2\ \text{s}]$, and imperfection $\sigma \in [0, 1]$ are the unknown parameters distributed uniformly within the corresponding ranges.
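
A minimal sketch of the reactive model above may help in reading the three equations. One point is an assumption of this sketch and not stated in the text: the deceleration is listed as a negative range, so the code uses its magnitude $|d|$ in the denominator to keep it positive. The function names `speed_bounds` and `sample_next_speed` are hypothetical, and no discretization of the sampled speed into the 5 speed levels is attempted.

```python
import random

def speed_bounds(s_b, distance, a, d, tau, sigma, v_max=4.0):
    """Return (low, high) of the uniform range for the next speed of vehicle B.

    ASSUMPTION: the deceleration d enters through its magnitude |d|; the
    source gives d as a negative range without spelling out the convention.
    """
    decel = abs(d)
    v_safe = s_b + (distance - tau * s_b) / (s_b / decel + tau)
    v_des = min(v_max, s_b + a, v_safe)
    low = max(0.0, v_des - sigma * a)
    return low, v_des

def sample_next_speed(rng, s_b, distance, a, d, tau, sigma):
    """Draw S'_B uniformly from the range computed by speed_bounds."""
    low, high = speed_bounds(s_b, distance, a, d, tau, sigma)
    return rng.uniform(low, high)

# Example: B drives at 2 m/s, 10 m away from A.
low, high = speed_bounds(s_b=2.0, distance=10.0, a=1.0, d=-1.0, tau=1.0, sigma=0.5)
next_speed = sample_next_speed(random.Random(1), 2.0, 10.0, 1.0, -1.0, 1.0, 0.5)
```

In this example the acceleration limit $S_B + a$ is the binding term, so the driver's imperfection $\sigma$ only widens the range downward from it.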
This parameterization of the driver model can cover a variety of drivers' typical behaviors, as shown in a preliminary study. For a further understanding of these parameters, the readers are referred to [Gipps, 1981]. Besides, in each time step, each vehicle $X \in \{A, B\}$ moves from its current cell $P_X$ to the next cell $P'_X$ with probability $1/t$ and remains in the same cell with probability $1 - 1/t$ where $t$ is the expected time to move forward one cell from the current position with respect to the current speed (e.g., $t = 5 / S_X$). Thus, in general, the underlying stochastic game has $6 \times 6 \times 5 \times 5 = 900$ states (i.e., each vehicle has 6 possible positions and 5 levels of speed), which is significantly larger than the settings in previous experiments. In each state, our vehicle has 3 actions, as mentioned previously, while the other vehicle has 5 actions corresponding to 5 levels of speed according to the reactive model.

Figure 1: (Left) A near-miss accident during the 2007 DARPA Urban Challenge, and (Right) the discretized environment: A and B move towards destinations $D_A$ and $D_B$ while avoiding collision at I. Shaded areas are not passable.

The goal for our vehicle in this domain is to learn the other vehicle's reactive model and adjust its navigation strategy accordingly such that there is no collision and the time spent to cross the intersection is minimized. To achieve this goal, we penalize our vehicle in each step by $-1$ and reward it with 50 when it successfully crosses the intersection. If it collides with the other vehicle (at I), we penalize it by $-250$. The discount factor is set as 0.99. We evaluate the performance of I-BRL in this problem against 100 different sets of reactive parameters (for the other vehicle) generated uniformly from the above ranges. Against each set of parameters, we run 20 simulations ($h = 100$ steps each) to estimate our vehicle's average performance⁷ $R$.
In particular, we compare our algorithm's average performance against the average performance of a fully informed vehicle (Upper Bound) who knows exactly the reactive parameters before each simulation, a rational vehicle (Exploit) who estimates the reactive parameters by taking the means of the above ranges, and a vehicle employing BPVI [Chalkiadakis and Boutilier, 2003] (BPVI).

⁷ After our vehicle successfully crosses the intersection, the system's state is reset to the default state in Fig. 1 (Right).

[Figure 2 panels: (a) $R$ versus $h$ for Upper Bound, Exploit, I-BRL, and BPVI; (b) planning time in minutes versus $h$.]

Figure 2: (a) Performance comparison between our vehicle (I-BRL), the fully informed, the rational and the BPVI vehicles ($\phi = 0.99$); (b) Our approach's offline planning time.

The results are shown in Fig. 2a: It can be observed that our vehicle always performs significantly better than both the rational and BPVI-based vehicles. In particular, our vehicle manages to reduce the performance gap between the fully informed and rational vehicles roughly by half. The difference in performance between our vehicle and the fully informed vehicle is expected as the fully informed vehicle always takes the optimal step from the beginning (since it knows the reactive parameters in advance) while our vehicle has to take cautious steps (by maintaining a slow speed) before it feels confident with the information collected during interaction. Intuitively, the performance gap is mainly caused during this initial period of "caution".
Also, since the uniform prior over the reactive parameters $\lambda = \{a, d, \tau, \sigma\}$ is not a conjugate prior for the other vehicle's behavior model $\theta_s(v) = p(v \mid s, \lambda)$, the BPVI-based vehicle has to directly maintain and update its belief using FDM: $\lambda = \{\theta_s\}_s$ with $\theta_s = \{\theta^v_s\}_v \sim \text{Dir}(\{n^v_s\}_v)$ (Section 2), instead of $\lambda = \{a, d, \tau, \sigma\}$. However, FDM implicitly assumes that $\{\theta_s\}_s$ are statistically independent, which is not true in this case since all $\theta_s$ are actually related by $\{a, d, \tau, \sigma\}$. Unfortunately, BPVI cannot exploit this information to generalize the other vehicle's behavior across different states due to its restrictive FDM (i.e., independent multinomial likelihoods with separate Dirichlet priors), thus resulting in an inferior performance.

5 Related Works

In self-interested (or non-cooperative) MARL, there have been several groups of proponents advocating different learning goals, the following of which have garnered substantial support: (a) Stability: in self-play or against a certain class of learning opponents, the learners' behaviors converge to an equilibrium; (b) optimality: a learner's behavior necessarily converges to the best policy against a certain class of learning opponents; and (c) security: a learner's average payoff must exceed the maximin value of the game. For example, the works of Littman [2001], Bianchi et al. [2007], and Akchurina [2009] have focused on (evolutionary) game-theoretic approaches that satisfy the stability criterion in self-play. The works of Bowling and Veloso [2001], Suematsu and Hayashi [2002], and Tesauro [2003] have developed algorithms that address both the optimality and stability criteria: A learner essentially converges to the best response if the opponents' policies are stationary; otherwise, it converges in self-play.
Notably, the work of Powers and Shoham [2005] has proposed an approach that provably converges to an $\epsilon$-best response (i.e., optimality) against a class of adaptive, bounded-memory opponents while simultaneously guaranteeing a minimum average payoff (i.e., security) in single-state, repeated games.

In contrast to the above-mentioned works that focus on convergence, I-BRL directly optimizes a learner's performance during its course of interaction, which may terminate before it can successfully learn its opponent's behavior. So, our main concern is how well the learner can perform before its behavior converges. From a practical perspective, this seems to be a more appropriate goal: in reality, the agents may only interact for a limited period, which is not enough to guarantee convergence, thus undermining the stability and optimality criteria. In such a context, the existing approaches appear to be at a disadvantage: (a) algorithms that focus on stability and optimality tend to select exploratory actions with drastic effect without considering their huge costs (i.e., poor rewards) [Chalkiadakis and Boutilier, 2003]; and (b) though the notion of security aims to prevent a learner from selecting such radical actions, the proposed security values (e.g., maximin value) may not always turn out to be tight lower bounds for the optimal performance [Hoang and Low, 2013]. Interested readers are referred to [Chalkiadakis and Boutilier, 2003] and Appendix C for a detailed discussion and additional experiments to compare performances of I-BRL and these approaches, respectively.

Note that while solving for the Bayes-optimal policy efficiently has not been addressed explicitly in general prior to this paper, we can actually avoid this problem by allowing the agent to act sub-optimally in a bounded number of steps. In particular, the works of Asmuth and Littman [2011] and Araya-Lopez et al.
[2012] guarantee that, in the worst case, the agent will act near Bayes-optimally in all but a polynomially bounded number of steps with high probability. It is thus necessary to point out the difference between I-BRL and these worst-case approaches: we are interested in maximizing the average-case performance with certainty rather than the worst-case performance with some "high probability" guarantee. Comparing their performances is beyond the scope of this paper.

6 Conclusion

This paper describes a novel generalization of BRL, called I-BRL, to integrate the general class of parametric models and model priors of the opponent's behavior. As a result, I-BRL relaxes the restrictive assumption of FDM that is often imposed in existing works, thus offering practitioners greater flexibility in encoding their prior domain knowledge of the opponent's behavior. Empirical evaluation shows that I-BRL outperforms a Bayesian MARL approach utilizing FDM called BPVI. I-BRL also outperforms existing MARL approaches focusing on convergence (Section 5), as shown in the additional experiments in [Hoang and Low, 2013]. To this end, we have successfully bridged the gap in applying BRL to self-interested multi-agent settings.

Acknowledgments. This work was supported by Singapore-MIT Alliance Research & Technology Subaward Agreements No. 28 R-252-000-502-592 & No. 33 R-252-000-509-592.

References

[Akchurina, 2009] Natalia Akchurina. Multiagent reinforcement learning: Algorithm converging to Nash equilibrium in general-sum discounted stochastic games. In Proc. AAMAS, pages 725–732, 2009.

[Araya-Lopez et al., 2012] M. Araya-Lopez, V. Thomas, and O. Buffet. Near-optimal BRL using optimistic local transitions. In Proc. ICML, 2012.

[Asmuth and Littman, 2011] J. Asmuth and M. L. Littman. Learning is planning: Near Bayes-optimal reinforcement learning via Monte-Carlo tree search. In Proc.
UAI, pages 19–26, 2011.

[Bianchi et al., 2007] Reinaldo A. C. Bianchi, Carlos H. C. Ribeiro, and Anna H. R. Costa. Heuristic selection of actions in multiagent reinforcement learning. In Proc. IJCAI, 2007.

[Bowling and Veloso, 2001] Michael Bowling and Manuela Veloso. Rational and convergent learning in stochastic games. In Proc. IJCAI, 2001.

[Chalkiadakis and Boutilier, 2003] Georgios Chalkiadakis and Craig Boutilier. Coordination in multiagent reinforcement learning: A Bayesian approach. In Proc. AAMAS, pages 709–716, 2003.

[Dearden et al., 1998] Richard Dearden, Nir Friedman, and Stuart Russell. Bayesian Q-learning. In Proc. AAAI, pages 761–768, 1998.

[Duff, 2003] Michael Duff. Design for an optimal probe. In Proc. ICML, 2003.

[Gipps, 1981] P. G. Gipps. A behavioural car following model for computer simulation. Transportation Research B, 15(2):105–111, 1981.

[Hoang and Low, 2013] T. N. Hoang and K. H. Low. A general framework for interacting Bayes-optimally with self-interested agents using arbitrary parametric model and model prior. arXiv:1304.2024, 2013.

[Littman, 2001] Michael L. Littman. Friend-or-foe Q-learning in general-sum games. In Proc. ICML, 2001.

[Pineau et al., 2003] J. Pineau, G. Gordon, and S. Thrun. Point-based value iteration: An anytime algorithm for POMDPs. In Proc. IJCAI, pages 1025–1032, 2003.

[Poupart et al., 2006] Pascal Poupart, Nikos Vlassis, Jesse Hoey, and Kevin Regan. An analytic solution to discrete Bayesian reinforcement learning. In Proc. ICML, pages 697–704, 2006.

[Powers and Shoham, 2005] Rob Powers and Yoav Shoham. Learning against opponents with bounded memory. In Proc. IJCAI, 2005.

[Suematsu and Hayashi, 2002] Nobuo Suematsu and Akira Hayashi. A multiagent reinforcement learning algorithm using extended optimal response. In Proc. AAMAS, 2002.

[Tesauro, 2003] Gerald Tesauro. Extending Q-learning to general adaptive multi-agent systems. In Proc.
NIPS, 2003.

[Wang et al., 2012] Yi Wang, Kok Sung Won, David Hsu, and Wee Sun Lee. Monte Carlo Bayesian reinforcement learning. In Proc. ICML, 2012.

A Proof Sketches for Theorems 2 and 3

This section provides more detailed proof sketches for Theorems 2 and 3 as mentioned in Section 3.

Theorem 2. The optimal value function $V^k$ for $k$ steps-to-go converges to the optimal value function $V$ for infinite horizon as $k \to \infty$: $\|V - V^{k+1}\|_\infty \le \phi \|V - V^k\|_\infty$.

Proof Sketch. Define $L^k_s(b) = |V_s(b) - V^k_s(b)|$. Using $|\max_a f(a) - \max_a g(a)| \le \max_a |f(a) - g(a)|$,

$$\begin{aligned}
L^{k+1}_s(b) &\le \phi \max_u \sum_{v, s'} \langle p^v_s, b \rangle \, p^{uv}_s(s') \, L^k_{s'}(b^v_s) \\
&\le \phi \max_u \sum_{v, s'} \langle p^v_s, b \rangle \, p^{uv}_s(s') \, \|V - V^k\|_\infty \\
&= \phi \, \|V - V^k\|_\infty
\end{aligned} \tag{16}$$

Since the last inequality in (16) holds for every pair $(s, b)$, it follows that $\|V - V^{k+1}\|_\infty \le \phi \|V - V^k\|_\infty$.

Theorem 3. The optimal value function $V^k_s(b)$ for $k$ steps-to-go can be represented as a finite set $\Gamma^k_s$ of $\alpha$-functions:

$$V^k_s(b) = \max_{\alpha_s \in \Gamma^k_s} \langle \alpha_s, b \rangle . \tag{17}$$

Proof Sketch. We give a constructive proof of (17) by induction, which shows how $\Gamma^k_s$ can be built recursively. Assuming that (17) holds for $k$ (footnote 8), it can be proven that (17) also holds for $k + 1$. In particular, it follows from our inductive assumption that the term $V^k_{s'}(b^v_s)$ in (5) can be rewritten as:

$$\begin{aligned}
V^k_{s'}(b^v_s) &= \max_{j = 1}^{|\Gamma^k_{s'}|} \int_\lambda \alpha^j_{s'}(\lambda) \, b^v_s(\lambda) \, \mathrm{d}\lambda \\
&= \max_{j = 1}^{|\Gamma^k_{s'}|} \int_\lambda \alpha^j_{s'}(\lambda) \, \frac{p^v_s(\lambda) \, b(\lambda)}{\langle p^v_s, b \rangle} \, \mathrm{d}\lambda \\
&= \langle p^v_s, b \rangle^{-1} \max_{j = 1}^{|\Gamma^k_{s'}|} \int_\lambda b(\lambda) \, \alpha^j_{s'}(\lambda) \, p^v_s(\lambda) \, \mathrm{d}\lambda
\end{aligned}$$

By plugging the above equation into (5) and using the fact that $r_{sb}(u) = \sum_v \langle p^v_s, b \rangle \, r_s(u, v)$,

$$V^{k+1}_s(b) = \max_u \left( r_{sb}(u) + \phi \sum_{s', v} \max_{j = 1}^{|\Gamma^k_{s'}|} p^{uv}_s(s') \, Q^v_s(\alpha^j_{s'}, b) \right) \tag{18}$$

where $Q^v_s(\alpha^j_{s'}, b) = \int_\lambda \alpha^j_{s'}(\lambda) \, p^v_s(\lambda) \, b(\lambda) \, \mathrm{d}\lambda$.
Now, applying the fact that

$$\sum_{s'} \sum_v \max_{t_{s'v} = 1}^{|\Gamma^k_{s'}|} A_{s'v}[t_{s'v}] = \max_t \sum_{s'} \sum_v A_{s'v}[t_{s'v}]$$

where $A_{s'v}[t_{s'v}] = p^{uv}_s(s') \, Q^v_s(\alpha^{t_{s'v}}_{s'}, b)$ and using $r_{sb}(u) = \int_\lambda b(\lambda) \sum_v p^v_s(\lambda) \, r_s(u, v) \, \mathrm{d}\lambda$, (18) can be rewritten as

$$V^{k+1}_s(b) = \max_{u, t} \int_\lambda b(\lambda) \, \alpha^{ut}_s(\lambda) \, \mathrm{d}\lambda \tag{19}$$

with $t = (t_{s'v})_{s' \in S, v \in V}$ and

$$\alpha^{ut}_s(\lambda) = \sum_v p^v_s(\lambda) \left( r_s(u, v) + \phi \sum_{s'} \alpha^{t_{s'v}}_{s'}(\lambda) \, p^{uv}_s(s') \right) . \tag{20}$$

By setting $\Gamma^{k+1}_s = \{\alpha^{ut}_s\}_{u, t}$ and $u_{\alpha^{ut}_s} = u$, it can be verified that (17) also holds for $k + 1$.

Footnote 8: When $k = 0$, (17) can be verified by letting $\alpha_s(\lambda) = 0$.

B Alternative Choice of Basis Functions

This section demonstrates another theoretical advantage of our framework: the flexibility to customize the general point-based algorithm presented in Section 3.2 into more manageable forms (e.g., simple, easy to implement, etc.) with respect to different choices of basis functions. Interestingly, these customizations often allow practitioners to trade off effectively between the performance and sophistication of the implemented algorithm: a simple choice of basis functions may (though not necessarily) reduce its performance but, in exchange, yields a customization that is more computationally efficient and easier to implement. This is especially useful in practical situations where finding a good enough solution quickly is more important than looking for better yet time-consuming solutions.

As an example, we present such an alternative choice of basis functions in the rest of this section. In particular, let $\{\lambda_i\}_{i=1}^n$ be a set of the opponent's models sampled from the initial belief $b$. Also, let $\Psi_i(\lambda)$ denote a function that returns 1 if $\lambda = \lambda_i$, and 0 otherwise.
According to Section 3.2, to keep the number of parameters from growing exponentially, we project each $\alpha$-function onto $\{\Psi_i(\lambda)\}_{i=1}^n$ by minimizing (13) or, alternatively, the unconstrained squared difference between the $\alpha$-function and its projection:

$$J(\alpha_s) = \frac{1}{2} \int_\lambda \left( \alpha_s(\lambda) - \sum_{i=1}^{|B_s|} c_i \, \Phi^i_s(\lambda) \right)^2 \mathrm{d}\lambda . \tag{21}$$

Now, let us consider (8), which specifies the exact solution for (5) in Section 3. Assume that $\alpha^{t_{s'v}}_{s'}(\lambda)$ is projected onto $\{\Psi_i(\lambda)\}_{i=1}^n$ by minimizing (21):

$$\bar{\alpha}^{t_{s'v}}_{s'}(\lambda) = \sum_{i=1}^n \Psi_i(\lambda) \, \varphi^{t_{s'v}}_{s'}(i) \tag{22}$$

where $\{\varphi^{t_{s'v}}_{s'}(i)\}_i$ are the projection coefficients. According to the general point-based algorithm in Section 3.2, $\alpha^{ut}_s(\lambda)$ is first computed by replacing $\alpha^{t_{s'v}}_{s'}(\lambda)$ with $\bar{\alpha}^{t_{s'v}}_{s'}(\lambda)$ in (8):

$$\alpha^{ut}_s(\lambda) = \sum_v p^v_s(\lambda) \left( r_s(u, v) + \phi \sum_{s'} \bar{\alpha}^{t_{s'v}}_{s'}(\lambda) \, p^{uv}_s(s') \right) . \tag{23}$$

Then, following (21), $\alpha^{ut}_s(\lambda)$ is projected onto $\{\Psi_i(\lambda)\}_i$ by solving for $\{\varphi^{ut}_s(i)\}_i$ that minimize

$$J(\alpha^{ut}_s) = \frac{1}{2} \int_\lambda \left( \alpha^{ut}_s(\lambda) - \sum_{i=1}^n \Psi_i(\lambda) \, \varphi^{ut}_s(i) \right)^2 \mathrm{d}\lambda . \tag{24}$$

The back-up operation is therefore cast as finding $\{\varphi^{ut}_s(i)\}_i$ that minimize (24). To do this, define

$$L(\lambda) = \frac{1}{2} \left( \alpha^{ut}_s(\lambda) - \sum_{i=1}^n \Psi_i(\lambda) \, \varphi^{ut}_s(i) \right)^2$$

and take the corresponding partial derivatives of $L(\lambda)$ with respect to $\{\varphi^{ut}_s(j)\}_j$:

$$\frac{\partial L(\lambda)}{\partial \varphi^{ut}_s(j)} = - \left( \alpha^{ut}_s(\lambda) - \sum_{i=1}^n \Psi_i(\lambda) \, \varphi^{ut}_s(i) \right) \Psi_j(\lambda) . \tag{25}$$

From the definition of $\Psi_j(\lambda)$, it is clear that when $\lambda \ne \lambda_j$, $\frac{\partial L(\lambda)}{\partial \varphi^{ut}_s(j)} = 0$. Otherwise, this only happens when

$$\alpha^{ut}_s(\lambda_j) = \sum_{i=1}^n \Psi_i(\lambda_j) \, \varphi^{ut}_s(i) = \varphi^{ut}_s(j) \quad \text{(by def. of } \Psi_i(\lambda)\text{)} \tag{26}$$

On the other hand, by plugging (22) into (23) and using $\Psi_i(\lambda) = 0 \;\; \forall \lambda \ne \lambda_i$, $\alpha^{ut}_s(\lambda_j)$ can be expressed as

$$\alpha^{ut}_s(\lambda_j) = \sum_v p^v_s(\lambda_j) \left( r_s(u, v) + \phi \sum_{s'} p^{uv}_s(s') \, \varphi^{t_{s'v}}_{s'}(j) \right)$$
So, to guarantee that $\frac{\partial L(\lambda)}{\partial \varphi^{ut}_s(j)} = 0 \;\; \forall \lambda, j$ (i.e., minimizing (24) with respect to $\{\varphi^{ut}_s(j)\}_j$), the values of $\{\varphi^{ut}_s(j)\}_j$ can be set as (from (26) and the above equation)

$$\varphi^{ut}_s(j) = \sum_v p^v_s(\lambda_j) \left( r_s(u, v) + \phi \sum_{s'} p^{uv}_s(s') \, \varphi^{t_{s'v}}_{s'}(j) \right) .$$

Surprisingly, this equation specifies exactly the $\alpha$-vector back-up operation for the discrete version of (5):

$$V_s(\hat{b}) = \max_u \sum_v \langle p^v_s, \hat{b} \rangle \left( r_s(u, v) + \phi \sum_{s'} p^{uv}_s(s') \, V_{s'}(\hat{b}^v_s) \right) \tag{27}$$

where $\hat{b}$ is the discrete distribution over the set of samples $\{\lambda_j\}_j$ (i.e., $\sum_j \hat{b}(\lambda_j) = 1$). This implies that by choosing $\{\Psi_i(\lambda)\}_i$ as our basis functions, finding the corresponding "projected" solution for (5) is identical to solving (27), which can be easily implemented using any of the existing discrete POMDP solvers (e.g., [Pineau et al., 2003]).

C Additional Experiments

This section provides additional evaluations of our proposed I-BRL framework, in comparison with existing works in MARL, through a series of stochastic games that are adapted and simplified from the testing benchmarks used in [Wang et al., 2012; Poupart et al., 2006]. In particular, I-BRL is evaluated in two small yet typical application domains that are widely used in the existing works (Sections C.1, C.2).

C.1 Multi-Agent Chain World

In this problem, the system consists of a chain of 5 states and 2 agents; each agent has 2 actions $\{a, b\}$. In each stage of interaction, both agents will move one step forward or go back to the initial state depending on whether they coordinate on action $a$ or $b$, respectively [Poupart et al., 2006]. In particular, the agents will receive an immediate reward of 10 for coordinating on $a$ in the last state and 2 for coordinating on $b$ in any state except the first one. Otherwise, the agents will remain in the current state and get no reward (Fig. 3).
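The transition and reward rules of the chain world described above can be sketched in Python as follows. This is only an illustration, not the authors' implementation: the names `chain_step` and `rollout`, the state numbering 1 to 5, and the choice that coordinating on $a$ in the last state leaves the agents in that state are all assumptions made for the example.

```python
from typing import List, Tuple

N_STATES = 5              # chain of 5 states, numbered 1..5 (numbering is an assumption)
FIRST, LAST = 1, N_STATES


def chain_step(state: int, u: str, v: str) -> Tuple[int, float]:
    """One stage of interaction: returns (next_state, shared reward)."""
    if u == "a" and v == "a":
        if state == LAST:
            # Assumption: the agents stay in the last state after collecting 10.
            return LAST, 10.0
        return state + 1, 0.0
    if u == "b" and v == "b":
        # Coordinating on b pays 2 everywhere except in the first state.
        reward = 2.0 if state != FIRST else 0.0
        return FIRST, reward
    # Miscoordination: remain in the current state with no reward.
    return state, 0.0


def rollout(joint_actions: List[Tuple[str, str]], start: int = FIRST) -> List[float]:
    """Apply a sequence of joint actions and collect the undiscounted rewards."""
    state, rewards = start, []
    for u, v in joint_actions:
        state, r = chain_step(state, u, v)
        rewards.append(r)
    return rewards
```

For instance, five consecutive stages of coordinating on $a$ from the first state walk the chain to its end and collect the reward of 10 only at the fifth stage.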
After each step, their payoffs are discounted by a constant factor $0 < \phi < 1$.

Figure 3: Multi-Agent Chain World Problem (states 1 to 5; edges labeled $a, a : 0$ in the first four states, $a, a : 10$ in the last state, and $b, b : 2$).

In this experiment, we compare the performance of I-BRL with the state-of-the-art frameworks in MARL, which include BPVI [Chalkiadakis and Boutilier, 2003], Hyper-Q [Tesauro, 2003], and Meta-Strategy [Powers and Shoham, 2005]. Among these works, BPVI is the most relevant to I-BRL (Section 1). Hyper-Q simply extends Q-learning into the context of multi-agent learning. Meta-Strategy, by default, plays the best response to the empirical estimation of the opponent's behavior and occasionally switches to the maximin strategy when its accumulated reward falls below the maximin value of the game. In particular, we compare the average performance of these frameworks when tested against 100 different opponents whose behaviors are modeled as a set of probabilities $\theta_s = \{\theta^v_s\}_v \sim \mathrm{Dir}(\{n^v_s\}_v)$ (i.e., of selecting action $v$ in state $s$). These opponents are independently and randomly generated from these Dirichlet distributions with the parameters $n^v_s = 1 / |V|$. Then, against each opponent, we run 20 simulations ($h = 100$ steps each) to evaluate the performance $R$ of each framework. The results show that I-BRL significantly outperforms the others (Fig. 4a).

Figure 4: (a) Performance comparison ($R$ against $h$) between I-BRL, BPVI, Hyper-Q, and Meta-Strategy ($\phi = 0.75$), and (b) I-BRL's offline planning time (in minutes) against $h$.

From these results, BPVI's inferior performance, as compared to I-BRL, is expected because BPVI, as mentioned in Section 1, relies on a sub-optimal myopic information-gain function [Dearden et al.
, 1998], thus underestimating the risk of moving forward and forfeiting the opportunity to go backward to get more information and earn the small reward. Hence, in many cases, the chance of getting the big reward (before it is severely discounted) is accidentally over-estimated due to BPVI's lack of information. As a result, this makes the expected gain of moving forward insufficient to compensate for the risk of doing so. Besides, it is also expected that Hyper-Q's and Meta-Strategy's performance is worse than I-BRL's since they primarily focus on the criteria of optimality and security, which put them at a disadvantage in the context of our work, as explained previously in Section 1. Notably, in this case, the maximin value of Meta-Strategy in the first state is vacuously equal to 0, which is effectively a lower bound for any algorithm. In contrast, I-BRL directly optimizes the agent's expected utility by taking into account its current belief and all possible sequences of future beliefs (see (5)). As a result, our agent behaves cautiously and always takes the backward action until it has sufficient information to guarantee that the expected gain of moving forward is worth the risk of doing so.

In addition, I-BRL's online processing cost is also significantly lower than BPVI's: I-BRL requires only 2.5 hours to complete 20 simulations (100 steps each) against 100 opponents while BPVI requires 4.2 hours. In exchange for this speed-up, I-BRL spends a few hours of offline planning (Fig. 4b), which is a reasonable trade-off considering how critical it is for an agent to meet the real-time constraint during interaction.

C.2 Iterated Prisoner's Dilemma (IPD)

The IPD is an iterative version of the well-known one-shot, two-player game known as the Prisoner's Dilemma [Wang et al., 2012], in which each player attempts to maximize its reward by cooperating (C) or betraying (B) the other.
Unlike the one-shot game, the game in IPD is played repeatedly and each player knows the history of its opponent's moves, thus having the opportunity to predict the opponent's behavior based on past interactions. In each stage of interaction, the agents will get a reward of 3 or 1 depending on whether they mutually cooperate or betray each other, respectively. In addition, the agent will get no reward if it cooperates while its opponent betrays; conversely, it gets a reward of 5 for betraying while the opponent cooperates.

Figure 5: (a) Performance comparison ($R$ against $h$) between I-BRL, BPVI, Hyper-Q, and Meta-Strategy ($\phi = 0.95$); (b) I-BRL's offline planning time (in minutes) against $h$.

In this experiment, the opponent is assumed to make its decision based on the last step of interaction (i.e., an adaptive, memory-bounded opponent). Thus, its behavior can be modeled as a set of conditional probabilities $\theta_{\bar{s}} = \{\theta^v_{\bar{s}}\}_v \sim \mathrm{Dir}(\{n^v_{\bar{s}}\}_v)$ where $\bar{s} = \{a_{-1}, o_{-1}\}$ encodes the agent's and its opponent's actions $a_{-1}, o_{-1} \in \{B, C\}$ in the previous step. Similar to the previous experiment (Section C.1), we compare the average performance of I-BRL, BPVI [Chalkiadakis and Boutilier, 2003], Hyper-Q [Tesauro, 2003], and Meta-Strategy [Powers and Shoham, 2005] when tested against 100 different opponents randomly generated from the Dirichlet priors. The results are shown in Figs. 5a and 5b. From these results, it can be clearly observed that I-BRL also outperforms BPVI and the other methods in this experiment, which is consistent with our explanations in the previous experiment. Also, in terms of the online processing cost, I-BRL only requires 1.74 hours to complete all the simulations while BPVI requires 4 hours.
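The IPD setup above (the payoff table and a memory-one opponent drawn from Dirichlet priors) can be sketched in Python as follows. This is a hedged illustration under stated assumptions, not the code used in the experiments: the names `sample_opponent` and `discounted_return`, the Dirichlet concentration of 1.0, the initial context `("C", "C")`, and the accumulation of $R$ as a discounted sum are all hypothetical choices, since the text does not specify them for this game.

```python
import random
from typing import Callable, Dict, List, Tuple

ACTIONS = ("B", "C")  # betray, cooperate

# Agent's reward indexed by (agent action, opponent action), as stated in the text.
PAYOFF: Dict[Tuple[str, str], float] = {
    ("C", "C"): 3.0,
    ("B", "B"): 1.0,
    ("C", "B"): 0.0,
    ("B", "C"): 5.0,
}

Context = Tuple[str, str]                    # (agent's, opponent's) previous actions
Opponent = Dict[Context, Dict[str, float]]   # context -> action probabilities


def sample_dirichlet(alphas: List[float], rng: random.Random) -> List[float]:
    """Draw from Dir(alphas) by normalizing independent Gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alphas]
    total = sum(draws)
    return [d / total for d in draws]


def sample_opponent(rng: random.Random, concentration: float = 1.0) -> Opponent:
    """One memory-one opponent; the concentration value is an assumption."""
    opponent: Opponent = {}
    for a_prev in ACTIONS:
        for o_prev in ACTIONS:
            probs = sample_dirichlet([concentration] * len(ACTIONS), rng)
            opponent[(a_prev, o_prev)] = dict(zip(ACTIONS, probs))
    return opponent


def discounted_return(opponent: Opponent, policy: Callable[[Context], str],
                      horizon: int, phi: float, rng: random.Random,
                      start: Context = ("C", "C")) -> float:
    """Discounted sum of the agent's rewards; the start context is an assumption."""
    prev, total = start, 0.0
    for step in range(horizon):
        u = policy(prev)
        v = "C" if rng.random() < opponent[prev]["C"] else "B"
        total += (phi ** step) * PAYOFF[(u, v)]
        prev = (u, v)
    return total
```

A call such as `discounted_return(sample_opponent(random.Random(0)), lambda prev: "B", 100, 0.95, random.Random(1))` would evaluate a fixed always-betray policy against one sampled opponent; averaging over many sampled opponents mirrors the evaluation protocol described above.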
