Patch-based Probabilistic Image Quality Assessment for Face Selection and Improved Video-based Face Recognition

In video based face recognition, face images are typically captured over multiple frames in uncontrolled conditions, where head pose, illumination, shadowing, motion blur and focus change over the sequence. Additionally, inaccuracies in face localisa…

Authors: Yongkang Wong, Shaokang Chen, S

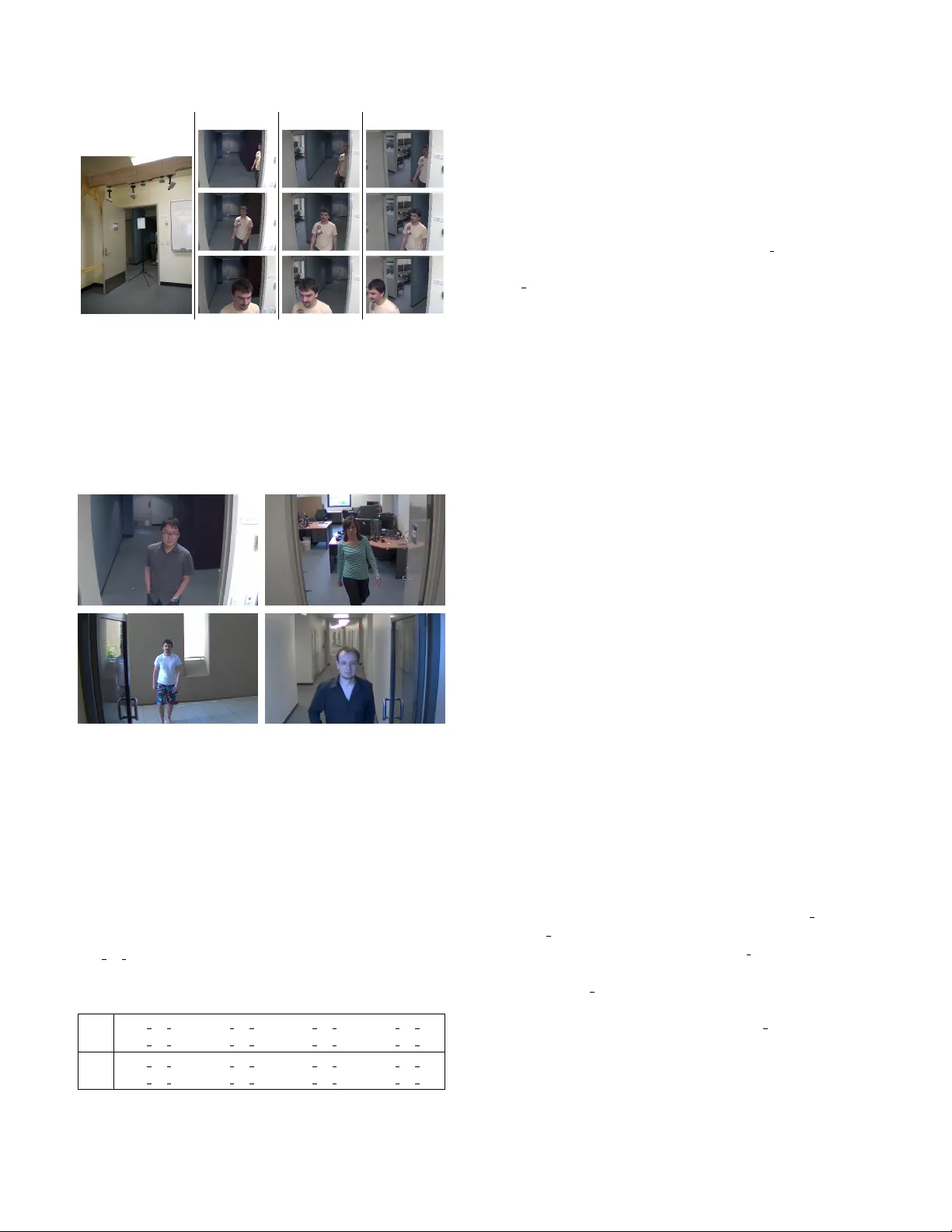

Patch-based Pr obabilistic Image Quality Assessment f or F ace Selection and Impro ved V ideo-based Face Recognition Y ongkang W ong, Shaokang Chen, Sandra Mau, Conrad Sanderson, Brian C. Lov ell NICT A, PO Box 6020, St Lucia, QLD 4067, Australia ∗ The Uni versity of Queensland, School of ITEE, QLD 4072, Australia Abstract In video based face recognition, face images are typically captur ed over multiple frames in uncontr olled conditions, wher e head pose , illumination, shadowing, motion blur and focus change over the sequence. Additionally , inaccuracies in face localisation can also introduce scale and alignment variations. Using all face imag es, including images of poor quality , can actually degr ade face r ecognition performance. While one solution it to use only the ‘best’ subset of ima ges, curr ent face selection techniques ar e incapable of simulta- neously handling all of the abovementioned issues. W e pr o- pose an efficient patch-based face image quality assessment algorithm which quantifies the similarity of a face image to a pr obabilistic face model, r epresenting an ‘ideal’ face. Image characteristics that affect r ecognition are taken into account, including variations in geometric alignment (shift, r otation and scale), sharpness, head pose and cast shad- ows. Experiments on FERET and PIE datasets show that the pr oposed algorithm is able to identify imag es which ar e simultaneously the most fr ontal, aligned, sharp and well illuminated. Further experiments on a new video surveil- lance dataset (termed ChokeP oint) show that the pr oposed method pr ovides better face subsets than existing face se- lection techniques, leading to significant impr ovements in r ecognition accur acy . 1. Introduction V ideo-based identity inference in surveillance conditions is challenging due to a v ariety of factors, including the subjects’ motion, the uncontrolled nature of the subjects, variable lighting, and poor quality CCTV video recordings. This results in issues for face recognition such as low reso- lution, blurry images (due to motion or loss of focus), large pose v ariations, and lo w contrast [ 14 , 28 , 36 , 41 ]. While re- cent face recognition algorithms can handle faces with mod- erately challenging illumination conditions [ 15 , 17 , 24 , 28 ], strong illumination variations (causing cast shado ws [ 30 ] and self-shadowing) remain problematic [ 31 ]. ∗ Published in: IEEE Conference on Computer V ision and Pattern Recognition W orkshops (CVPR W), pp. 74–81, 2011. One approach to overcome the impact of poor quality images is to assume that such images are outliers in a se- quence. This includes approaches like exemplar extraction using clustering techniques (eg. k-means clustering [ 13 ]) and statistical model approaches for outlier remov al [ 6 ]. Howe ver , these approaches are not likely to work when most of the images in the sequence ha ve poor quality — the good quality images would actually be classified as outliers. Another approach is explicit subset selection, where a face quality assessment is automatically made on each im- age, either to remov e poor quality face images, or to select a subset comprised of high quality images [ 10 , 20 , 21 , 33 ]. This impro ves recognition performance, with the additional benefit of reducing the overall computation load during fea- ture extraction and matching [ 19 ]. The challenge in this approach is finding a good definition for “face quality”. Sev eral face image standards hav e been proposed for face quality assessment (eg. ISO/IEC 19794-5 [ 1 ] and ICA O 9303 [ 2 ]). In these standards, quality can be divided into: (i) image specific qualities such as sharpness, contrast, compression artifacts, and (ii) face specific qualities such as face geometry , pose, eye detectability , illumination angles. Based in part on the above standards, many approaches hav e been proposed to analyse various face and image properties. For example, face pose estimation using tree structured multiple pose estimators [ 39 ], and face align- ment estimation using template matching [ 7 ]. Asymme- try analysis has been proposed to simultaneously estimate two qualities: out-of-plane rotation and non-frontal illumi- nation [ 10 , 29 , 40 ]. Since face recognition performance is simultaneously impacted by multiple factors, being able to detect one or two qualities is insufficient for robust subset selection. One ap- proach to simultaneously detect multiple quality character- istics is through a fusion of indi vidual face and image qual- ity measurements. Nasrollahi and Moeslund [ 21 ] proposed a weighted quality fusion approach to combine out-of-plane rotation, sharpness, brightness, and image resolution qual- ities. Rua et al. [ 26 ] proposed a similar quality assess- ment approach, by using asymmetry analysis and two sharp- ness measurements. Hsu et al. [ 16 ] proposed to learn fu- sion parameters on multiple quality scores to achiev e max- imum correlation with matching scores between face pairs. Another proposed fusion approach uses a Bayesian network to model the relationships among qualities, image features and matching scores [ 22 ]. The main drawbacks of the abov e fusion approaches are: • Fusion-based approaches only perform as well as their individual classifiers. For example, if a pose estima- tion algorithm requires accurate facial feature localisa- tion, the whole fusion frame work will fail in the cases where that pose algorithm fails (such as in low resolu- tion CCTV footage) [ 35 ]. • As various properties are measured individually and hav e different influence on face quality , it may be dif- ficult to combine them to output a single quality score for the purposes of image selection. • As multiple classifiers as inv olved, they are typically more time consuming and hence may not be suitable for real-time surveillance applications. • Since face matching scores are heavily dependant on system-specific details (including the input features, matching algorithms and training images), quality as- sessment approaches that learn a fusion model based on match scores end up being closely tied to the par- ticular system configuration and hence need to be re- trained for each system. Simultaneously detecting multiple quality characteristics can also be accomplished by learning a generic model to define the ‘ideal’ quality . Luo [ 18 ] proposed a learning based approach where the quality model is trained to corre- late with manually labelled quality scores. Howe ver , gi ven the subjectiv e nature of human labelling, and the fact that humans may not know what characteristics work best for automatic face recognition algorithms, this approach may not generate the best quality model for face recognition. In this paper we propose a straightforward and effecti ve patch-based face quality assessment algorithm, targeted to- wards handling images obtained in surveillance conditions. It quantifies the similarity of a given face to a probabilistic face model, representing an ‘ideal’ f ace, via patch-based lo- cal analysis. W ithout resorting to fusion, the proposed algo- rithm outputs a single score for each image, with the score simultaneously reflecting the degree of alignment errors, pose variations, shado wing, and image sharpness (under- lying resolution). Localisation of facial features (ie. eyes, nose, mouth) is not required. W e continue the paper as follows. In Section 2 we de- scribe the proposed quality assessment algorithm. Still im- age and video datasets used in the experiments are briefly described in in Section 3 . Extensive performance com- parisons against existing techniques are giv en in Section 4 (on still images) and Section 5 (on surveillance videos). The main findings are discussed in Section 6 . 2. Probabilistic Face Quality Assessment The proposed algorithm is comprised of fiv e steps: (1) pixel-based image normalisation, (2) patch extraction and normalisation, (3) feature extraction from each patch, (4) local probability calculation, (5) ov erall quality score generation via integration of local probabilities. These steps are elaborated below: 1. For a given image I , we perform non-linear pre- processing (log transform) to reduce the dynamic range of data. Following [ 9 ], the normalised image I log is calculated using: I log ( r , c ) = ln[ I ( r , c ) + 1] (1) where I ( r , c ) is the pixel intensity located at ( r , c ) . Logarithm normalisation amplifies low intensity pixels and compresses high intensity pixels. This property is helpful in reducing the intensity differences between skin tones. 2. The transformed image I log is di vided into N overlap- ping blocks (patches). Each block b i has a size of n × n pixels and ov erlap neighbouring blocks by t pixels. T o accommodate for contrast variations between face im- ages, each patch is normalised to hav e zero mean and unit variance [ 37 ]. 3. From each block, a 2D Discrete Cosine T ransform (DCT) feature vector is extracted [ 11 ]. Excluding the 0-th DCT component (as it has no information due to the previous normalisation), the top d low frequency components are retained. The low frequency compo- nents retain generic facial textures [ 12 ], while largely omitting person-specific information. At the same time, cast shadows [ 37 ] as well as variations in pose and alignment can alter the local textures. 4. For each block location i , the probability of the corre- sponding feature vector x i is calculated using a loca- tion specific probabilistic model: p ( x i | µ i , Σ i ) = exp h − 1 2 ( x i − µ i ) T Σ − 1 i ( x i − µ i ) i (2 π ) d 2 | Σ i | 1 2 (2) where µ i and Σ i are the mean and cov ariance matrix of a normal distribution. The model for each location is trained using a pool of frontal faces with frontal illumi- nation and neutral expression. All of the training face images are first scaled and aligned to a fixed size, with each eye located at a fixed location. W e emphasise that during testing, the faces do not need to be aligned. 5. By assuming that the model for each location is in- dependent, an overall probabilistic quality score Q for image I , comprised of N blocks, is calculated using: Q ( I ) = X N i =1 log p ( x i | µ i , Σ i ) (3) The resulting quality score represents the probabilistic similarity of a giv en face to an “ideal” face (as represented by a set of training images). A higher quality score reflects better image quality . 3. Face Datasets In this section, we briefly describe the FERET , PIE and ChokePoint face datasets, as well as their setup for our ex- periments. FERET [ 23 ] and PIE [ 32 ] are used to analyse ho w accu- rate the proposed quality assessment algorithm is for cor- rectly selecting best quality images with sev eral desired characteristics, compared to other existing methods. In to- tal, there are 1124 unique subjects in the training phase and 1263 subjects in the test phase. The ChokePoint dataset contains surveillance videos. It is used to study the improv ement in verification perfor- mance gained from subset selection, using the proposed quality method as well as other approaches. 3.1. Setup of Still Image Datasets: FERET and PIE T o study the performance of the proposed method in terms of correctly selecting images with desired characteris- tics, we simulated blurring as well as four alignment errors using images from the ‘fb’ subset of FERET . Experiments with pose v ariations (out-of-plane rotation) used dedicated subsets from FERET and PIE. Experiments with cast shad- ows used the illumination subset of PIE. The generated alignment errors 1 are: horizontal shift and vertical shift (using displacements of 0 , ± 2 , ± 4 , ± 6 , ± 8 pix- els), in-plane rotation (using rotations of 0 ◦ , ± 10 ◦ , ± 20 ◦ , ± 30 ◦ ), and scale variations (using scaling factors of 0 . 7 , 0 . 8 , 0 . 9 , 1 . 0 , 1 . 1 , 1 . 2 , 1 . 3 ) . For sharpness variations, each original image is first downscaled to three sizes ( 48 × 48 , 32 × 32 and 16 × 16 pixels) then rescaled to the baseline size of 64 × 64 pixels. See Fig. 1 for examples. FERET pro vides the dedicated ‘b’ subset with pose v ari- ations, containing out-of plane rotations of 0 ◦ , ± 15 ◦ , ± 25 ◦ , ± 40 ◦ , ± 60 ◦ . PIE also provides a dedicated subset with pose v ariations, though with a smaller set of rotations ( 0 ◦ , ± 22 . 5 ◦ , ± 45 ◦ , ± 67 . 5 ◦ ). The illumination subset of PIE was used to assess per- formance in various cast shadow conditions. In our experi- ments, we divided the frontal vie w images into six subsets 2 based on the angle of the corresponding light source. Sub- set 1 has the most frontal light sources, while subset 6 has the largest light sources angle ( 54 ◦ - 67 ◦ ). See Fig. 2 for ex- amples. 1 The generated alignment errors are representatives of real-life charac- teristics of automatic face localisation/detection algorithms [ 25 ]. 2 Subset 1: light source 8, 11, 20; Subset 2: light source 6, 7, 9, 12, 19, 21; Subset 3: light source 5, 10, 13, 14; Subset 4: light source 18, 22; Subset 5: light source 4, 15; Subset 6: light source 2, 3, 16, 17. Aligned Horizontal Shift V ertical Shift In-Plane Rotation Scale Change Blurring Figure 1. Examples of simulated image variations on FERET . Subset 1 ( 0 ◦ ) Subset 2 ( 16 ◦ - 21 ◦ ) Subset 3 ( 31 ◦ - 32 ◦ ) Subset 4 ( 37 ◦ - 38 ◦ ) Subset 5 ( 44 ◦ - 47 ◦ ) Subset 6 ( 54 ◦ - 67 ◦ ) Figure 2. Examples from PIE with strong directed illumination, causing self-shadowing. 3.2. Surveillance V ideos: ChokePoint Dataset W e collected a ne w video dataset 3 , termed Choke- P oint , designed for experiments in person identifica- tion/verification under real-world surveillance conditions using existing technologies. An array of three cameras was placed abov e sev eral portals (natural choke points in terms of pedestrian traffic) to capture subjects walking through each portal in a natural way (see Figs. 3 and 4 ). While a person is walking through a portal, a sequence of face images (ie. a face set) can be captured. Faces in such sets will hav e v ariations in terms of illumination conditions, pose, sharpness, as well as misalignment due to automatic face localisation/detection [ 25 , 28 ]. Due to the three camera configuration, one of the cameras is likely to capture a face set where a subset of the faces is near -frontal. The dataset consists of 25 subjects (19 male and 6 fe- male) in portal 1 and 29 subjects (23 male and 6 female) in portal 2. In total, it consists of 48 video sequences and 64,204 face images. Each sequence was named according to the recording conditions (eg. P2E S1 C3) where P , S, and C stand for portal , sequence and camera , respectively . E and L indicate subjects either entering or leaving the por- tal. The numbers indicate the respectiv e portal, sequence and camera label. For example, P2L S1 C3 indicates that the recording was done in Portal 2, with people leaving the portal, and captured by camera 3 in the first recorded se- quence. In this paper , all the experiments were performed with the video-to-video verification protocol. In this protocol, video sequences are divided into two groups ( G 1 and G 2 ), where each group played the role of dev elopment set and ev aluation set in turn. Parameters can be first learned on the dev elopment set and then applied on the e valuation set. The av erage v erification rate is used for reporting results. In our experiments we selected the frontal view cameras (sho wn in T able 1 ). In each group, each sequence takes turn to be the gallery , with the the leftov er sequences becoming the probe. 3 http://arma.sourceforge.net/chokepoint/ Camera Rig Camera 1 Camera 2 Camera 3 Figure 3. An example of the recording setup used for the Choke- P oint dataset. A camera rig contains 3 cameras placed just abo ve a door , used for simultaneously recording the entry of a person from 3 viewpoints. The variations between viewpoints allow for varia- tions in walking directions, f acilitating the capture of a near-frontal face by one of the cameras. Figure 4. Example shots from the ChokePoint dataset, showing portals with various backgrounds. T able 1. ChokePoint video-to-video verification protocol. Se- quences are divided into two groups (G1 and G2). Listed se- quences contain faces with the most frontal pose vie w . P , S, and C stand for portal , sequence and camera , respecti vely . E and L indi- cate subjects entering or leaving the portal. The numbers indicate the respective portal, sequence and camera label. For example, P2L S1 C3 indicates that the recording w as done in Portal 2, with people leaving the portal, and captured by camera 3 in the first recorded sequence. G1 P1E S1 C1 P1E S2 C2 P2E S2 C2 P2E S1 C3 P1L S1 C1 P1L S2 C2 P2L S2 C2 P2L S1 C1 G2 P1E S3 C3 P1E S4 C1 P2E S4 C2 P2E S3 C1 P1L S3 C3 P1L S4 C1 P2L S4 C2 P2L S3 C3 4. Experiments on Still Images In this section, we ev aluate how well the proposed qual- ity assessment method can identify the best quality faces when presented with both good and poor quality faces. The proposed method was compared with: (i) a score fu- sion method using pixel based asymmetry analysis and two sharpness analyses (denoted as Asym shrp ) [ 26 ], (ii) asymmetry analysis with Gabor features (denoted as Gabor asym ) [ 29 ], (iii) the classical Distance From Face Space (DFFS) method [ 5 ]. The ‘fa’ subset of FERET , containing frontal faces with frontal illumination and neutral expression, was used to train the location specific probabilistic models in the pro- posed method. The ‘fa’ subset was also used to select the decision threshold for rejecting “poor” quality images. The ‘fa’ subset was not used for an y other purposes. Based on preliminary experiments, closely cropped face images were scaled to 64 × 64 pixels, the block size was set to 8 × 8 pixels, with a 7 pixels ov erlap of neighbouring blocks. The preliminary experiments also suggested that using just 3 DCT coefficients was sufficient. This configu- ration was used in all experiments. The quality assessment methods were implemented with the aid of the Armadillo C++ library [ 27 ]. 4.1. Quality Assessment of F aces with V ariations in Alignment, Scale and Sharpness In this experiment we ev aluated the efficac y of each method to detect the best aligned images within a set of images that hav e a particular image v ariation. For exam- ple, out of the set of faces with rotations of 0 ◦ , ± 10 ◦ , ± 20 ◦ , ± 30 ◦ , we measured the percentage of 0 ◦ faces that were la- belled as “high” quality . Results for v ariations in shift, rotation and scale, sho wn in T able 2 , indicate that the proposed method consistently achiev ed the best or near-best performance across most of the variations. The results on the six PIE illumination sub- sets indicate that even in the presence of cast shadows, the proposed method can achiev e good results, with the excep- tion of images with scale changes. A veraging ov er all vari- ations, the proposed method achiev ed the best results. The asymmetry-based analysis methods (Gabor asym and Asym sharp) could not reliably detect vertical align- ment errors and scale variations. Gabor asym also per - formed poorly for detecting images with various sharpness variations. Asym shrp addressed this by combining asym- metry analysis with two image sharpness measurements. Despite that, the overall performance of Asym shrp was still poor . The performance of DFFS on alignment errors was con- sistent but generally lower than the proposed method. No- tably , DFFS failed to detect images with the best sharpness. T able 2. Quality assessment of alignment errors and sharpness v ariations on FERET ‘fb’ and all six PIE illumination subsets. Each value in the table indicates the percentage of the best aligned image in each variation type being assigned to have the highest quality score. For example, out of the set of faces with rotations of 0 ◦ , ± 10 ◦ , ± 20 ◦ , ± 30 ◦ , the value indicates the percentage of 0 ◦ faces labelled as “high” quality . The variations included: horizontal shift (HS), v ertical shift (VS), in-plane rotation (R T), scale (SC), sharpness (SH). The ‘o verall’ columns indicate the av erage performance of the abov e v ariations. Best performance is highlighted in bold. FERET ‘fb’ PIE illumination HS VS R T SC SH overall HS VS R T SC SH overall Asym shrp [ 26 ] 44.4 7.7 79.8 7.4 100.0 47.9 10.3 4.0 40.4 2.4 100.0 31.4 Gabor asym [ 29 ] 52.1 3.1 93.9 11.5 49.0 41.9 24.7 1.5 66.4 10.7 29.0 26.5 DFFS [ 5 ] 75.6 71.9 98.7 62.5 0.7 61.9 64.4 62.4 99.6 44.4 2.3 54.6 Proposed 83.4 85.4 99.6 73.0 99.8 88.2 65.9 62.6 98.8 37.0 95.9 72.0 T able 3. Quality assessment of pose variations on the pose subsets of FERET and PIE. Each v alue in the table indicates the percentage of images with a particular pose angle that were assigned to hav e the highest quality score. Best performance is highlighted in bold. FERET pose subset − 60 ◦ − 40 ◦ − 25 ◦ − 15 ◦ 0 ◦ +15 ◦ +25 ◦ +40 ◦ +60 ◦ Asym shrp [ 26 ] 0 0 0.5 30.5 68.0 1 0 0 0 Gabor asym [ 29 ] 2 5.5 7.5 24.5 58.0 2.5 0 0 0 DFFS [ 5 ] 0 0 0 5 92.0 3 0 0 0 Proposed 0 0 0.5 28 68.5 3 0 0 0 PIE pose subset − 67 . 5 ◦ − 45 ◦ − 22 . 5 ◦ — 0 ◦ — +22 . 5 ◦ +45 ◦ +67 . 5 ◦ Asym shrp [ 26 ] 0 0 2.94 — 94.1 — 1.5 1.5 0 Gabor asym [ 29 ] 0 8.8 10.3 — 73.5 — 5.9 1.5 0 DFFS [ 5 ] 0 1.5 11.8 — 79.4 — 7.4 0 0 Proposed 0 0 4.4 — 91.2 — 4.4 0 0 4.2. Quality Assessment on Pose V ariations In this experiment we e valuated the ability of each method to detect the most frontal faces in a set that in- cluded frontal and non-frontal (out-of-plane rotated) faces. The results, shown in T able 3 , indicate that the proposed method consistently achiev es second best performance on both FERET and PIE, with its performance on PIE being quite close to the top performer (Asym shrp). W e note that on FERET the visual differences between faces at 0 ◦ and ± 15 ◦ are minimal, which can explain why a significant proportion of faces at − 15 ◦ was classified as “frontal” by the proposed method. While DFFS gav e the best performance on FERET , its performance dropped on PIE. As there is an ov erlap be- tween the subjects in the ‘fa’ and pose subsets in FERET (where ‘fa’ was used for training), the inconsistenc y in per - formance across FERET and PIE suggests that DFFS might be ov er trained to the training dataset. The performance of Asym shrp and the proposed method is considerably better on PIE than on FERET . W e conjecture that this is due to the larger pose variation be- tween frontal faces and faces with the smallest pose angle ( ± 22 . 5 ◦ ), in contrast to ± 15 ◦ on FERET . T able 4. Quality assessment of images with cast shadows from the PIE dataset. Each v alue in the table indicates the percentage of images with a particular illumination direction that were assigned to ha ve the highest quality score. The illumination ranged from frontal (subset 1) to strongly directed (subset 6) where there are strong shadows (see Fig. 2 ). PIE illumination subset 1 2 3 4 5 6 Asym shrp [ 26 ] 97.1 2.9 0 0 0 0 Gabor asym [ 29 ] 51.5 5.9 2.9 39.7 4.4 0 DFFS [ 5 ] 0 0 4.4 88.2 7.4 0 Proposed 94.1 5.9 0 0 0 0 4.3. Quality Assessment on Cast Shadow V ariations Here we ev aluated the accuracy of selecting frontal face images with the least amount amount of cast shadow within a set of images subject to varying illumination direction. The direction ranged from frontal (subset 1) to side (sub- set 6), where sev ere cast shado ws exist (as shown in Fig. 2 ). The results, presented in T able 4 , sho w that Asym shrp achiev ed the best performance (correctly labelling frontally illuminated faces as ha ving high quality), with the proposed method a close second. In contrast, Gabor asym was con- fused between subsets 1 and 4, while DFFS erroneously la- belled most faces in subset 4 (containing significant shad- ows) as ha ving the highest quality . 5. Experiments on V ideo: Subset Selection In this section, we study the effecti veness of using qual- ity measurements to select a subset of images for video- based face verification. T o demonstrate the effecti veness of the quality assessment for a variety of face recognition systems, we used two facial feature extraction algorithms and two classification techniques, specifically designed for dealing with sets of faces (ie. image set matching). Specifically , we separately used Multi-Re gion His- tograms (MRH) [ 28 ] and Local Binary Patterns (LBP) [ 4 ] to extract features from each face. The comparison be- tween two sets of faces was performed using (i) Mutual Subspace Method (MSM) [ 38 ] (for both MRH and LBP), and (ii) feature a veraging [ 8 , 20 ] (for MRH only). The experiments were conducted on the ChokePoint dataset, using the video-to-video protocol (see Sec. 3.2 ). Each set of face images for a particular person was rank ordered according to the quality scores of the images, fol- lowed by k eeping the top N images. As per Section 4 , the proposed face quality measure- ment method was compared against three other methods: Asym shrp, Gabor asym and DFFS. The ‘fa’ subset of FERET , which is totally independent from ChokePoint, w as used for training DFFS and the proposed quality measure- ment method. In the first experiment, N v aried from 4 to 16 . The re- sults, reported in T able 5 , indicate that the proposed quality measurement method consistently leads to better face veri- fication performance than the other three methods, regard- less of the facial feature extraction algorithm used. The im- prov ement is most prev alent for N = 4 , indicating that the proposed method assigns high scores to high quality images more accurately . In the second experiment, N varied from 1 to the size of the set (labelled as “all”). Each face set was represented by an av erage MRH signature; LBP feature extraction was not used as it isn’t suitable for feature averaging. Face sets were then compared by using an L 1 -norm based distance between their corresponding av erage MRH signatures [ 8 , 20 , 28 ]. T able 5. V ideo-based f ace verification performance on the Chok e- P oint dataset, using MRH and LBP feature extraction algorithms coupled with the Mutual Subspace Method (MSM) for classify- ing face sets. Each set of face images for a particular person was rank ordered according to the quality scores of the images, fol- lowed by retaining top N quality images (ie. N is the subset size). Faces were segmented using automatic face localisation (detec- tion). The average face verification rate is reported (see Sec. 3.2 ). Best performance is highlighted in bold. Recognition Method Subset Selection MRH + MSM LBP + MSM Method N=4 N=8 N=16 N=4 N=8 N=16 Asym shrp [ 26 ] 67.5 70.3 75.4 65.3 67.6 70.5 Gabor asym [ 29 ] 75.4 78.6 84.0 69.3 71.4 74.5 DFFS [ 5 ] 74.7 78.1 83.4 69.4 70.3 74.6 Proposed 82.5 84.5 86.7 73.5 74.7 75.8 1 2 4 8 16 3 2 64 al l Subset size 65.0% 70.0% 75.0% 80.0% 85.0% 90.0% 95.0% A ccuracy (%) Asym_shrp Gabor_asym DFF S P r oposed Figure 5. V ideo-based face verification performance on the ChokeP oint dataset using av erage MRH signatures. Each set of face images for a particular person was rank ordered according to the quality scores of the images, follo wed by selecting a predefined number of top quality images to create a subset. Faces were seg- mented using automatic face localisation (detection). The average face verification rate is reported (see Sec. 3.2 ). From the results shown in Fig. 5 , it can be observed that using all captured faces generally does not lead to the best performance. It can also be observed that the proposed method considerably outperforms the other three methods for N ≤ 32 , and furthermore leads to the best verification performance (which occurs at N = 16 ). W e note that e ven when only one face is selected by the proposed method (ie. N = 1 ), relativ ely high verification accuracy is still achiev ed. This suggests that the proposed method has a high chance of picking the “best” face out of a set of faces. 6. Main Findings In this paper we presented a nov el patch-based face im- age quality assessment algorithm. Unlike previous meth- ods, the proposed approach is capable of simultaneously handling issues such as pose variations, cast shado ws, blur- riness as well as alignment errors caused by automatic face localisation (eg. in-plane rotations, horizontal and vertical shifts). The proposed method was ev aluated on two still face datasets (FERET and PIE), using faces subject to pose and illumination direction changes, as well as simulated geo- metric alignment errors and decreased sharpness. Exper- iments show that the proposed method has the best ov erall performance, identifying images which are the most frontal, well-aligned, illuminated and sharp. This is accomplished without requiring parameter tuning or retraining for each dataset tested. The proposed method was also ev aluated in a video- based face verification setting, on a new surveillance dataset termed ChokeP oint . For each giv en set of face images for a person, the proposed method was used to rank the images according to their quality . By selecting a subset containing only the top quality images, verification accuracy was con- siderably improv ed when compared to using all av ailable images. Furthermore, the proposed method consistently led to higher quality subsets (leading to higher verification ac- curacy) than pre vious image quality assessment algorithms, The proposed method is capable of assigning low-quality scores to images with cast shadows (eg. due to self- shadowing caused by strong directed illumination), how- ev er it is currently unlikely to detect more subtle variations in illumination. This is due to its elaborate illumination normalisation steps, necessary for generalisation purposes (ie. not being tied to the level of contrast and/or illumi- nation bias in a particular training dataset). The proposed method is also unlikely to detect minor expression varia- tions, as only low frequency information is used. According to [ 3 , 34 ], expression changes mainly lie in high frequency bands. Howe ver , many of the recent face recognition algo- rithms are capable of handling relati vely minor v ariations in both illumination and e xpression [ 15 , 17 , 24 , 28 ], thus these characteristics of the quality assessment method might be more of a feature than a limitation. Acknowledgements NICT A is funded by the Australian Government as repre- sented by the Department of Br oadband, Communications and the Digital Economy , as well as the Australian Research Council through the ICT Centr e of Excellence program. W e thank Dr Mehrtash Harandi for useful discussions. W e also thank all the volunteers who participated in the recording of the ChokePoint dataset. References [1] I S O /I E C 19794-5 (published version). Information tec hnol- ogy - Biometric Data Inter change F ormats , June 2005. [2] Machine readable trav el documents. International Civil A vi- ation Or ganization , August 2006. [3] L. Aguado, I. Serrano-Pedraza, S. Rodriguez, and F . J. Ro- man. Effects of spatial frequency content on classification of face gender and expression. The Spanish Journal of Psychol- ogy , 13(2):525–537, 2010. [4] T . Ahonen, A. Hadid, and M. Pietik ¨ ainen. Face recognition with local binary patterns. In ECCV , Lecture Notes in Com- puter Science (LNCS) , volume 3021, pages 469–481, 2004. [5] H. Bae and S. Kim. Real-time face detection and recogni- tion using hybrid-information extracted from face space and facial features. Image and V ision Computing , 23(13):1181– 1191, 2005. [6] S.-A. Berrani and C. Garcia. Enhancing face recognition from video sequences using robust statistics. In IEEE Inter- national Confer ence on V ideo and Signal Based Surveillance (A VSS) , pages 324–329, 2005. [7] L. Chang, I. Rod ´ es, H. M ´ endez, and E. del T oro. Best-shot selection for video face recognition using FPGA. In CIARP , Lectur e Notes in Computer Science (LNCS) , volume 5197, pages 543–550, 2008. [8] S. Chen, S. Mau, M. T . Harandi, C. Sanderson, A. Bigdeli, and B. C. Lov ell. Face recognition from still images to video sequences: A local-feature-based framew ork. EURASIP Journal on Ima ge and V ideo Pr ocessing , 2011. [9] W . Chen, M. J. Er, and S. W u. Illumination compensation and normalization for robust face recognition using discrete cosine transform in logarithm domain. IEEE T rans. Systems, Man and Cybernetics (P art B) , 36(2):458–466, 2006. [10] X. Gao, S. Z. Li, R. Liu, and P . Zhang. Standardization of face image sample quality . In ICB, Lecture Notes in Com- puter Science (LNCS) , volume 4642, pages 242–251, 2007. [11] R. Gonzales and R. W oods. Digital Image Pr ocessing . Pren- tice Hall, 3rd edition, 2007. [12] R. Gottumukkal and V . K. Asari. An improv ed face recog- nition technique based on modular P C A approach. P attern Recognition Letters , 25(4):429–436, 2004. [13] A. Hadid and M. Pietik ¨ ainen. From still image to video- based face recognition: An experimental analysis. In Proc. Automatic F ace and Gesture Recognition (AFGR) , pages 813–818, 2004. [14] M. T . Harandi, M. N. Ahmadabadi, and B. N. Araabi. Op- timal local basis: A reinforcement learning approach for face recognition. International Journal of Computer V ision , 81(2):191–204, 2009. [15] M. T . Harandi, C. Sanderson, S. Shirazi, and B. C. Lov ell. Graph embedding discriminant analysis on Grassmannian manifolds for improv ed image set matching. In IEEE Conf. Computer V ision and P attern Recognition (CVPR) , pages 2705–2712, 2011. [16] R.-L. V . Hsu, J. Shah, and B. Martin. Quality assessment of facial images. In Biometrics Symposium , 2006. [17] N. Kumar , A. Berg, P . Belhumeur , and S. Nayar . Attrib ute and simile classifiers for face v erification. In Int. Conf. Com- puter V ision (ICCV) , pages 365–372, 2009. [18] H. Luo. A training-based no-reference image quality assess- ment algorithm. In International Confer ence on Image Pr o- cessing (ICIP) , pages 2973–2976, 2004. [19] S. Marcel, C. McCool, P . Matejka, T . Ahonen, J. Cernocky , S. Chakraborty , V . Balasubramanian, S. Panchanathan, C. Chan, J. Kittler, et al. On the results of the first mo- bile biometry (MOBIO) face and speaker verification ev al- uation. In Recognizing P atterns in Signals, Speech, Images and V ideos, Lectur e Notes in Computer Science (LNCS) , vol- ume 6388, pages 210–225, 2010. [20] S. Mau, S. Chen, C. Sanderson, and B. C. Lovell. V ideo face matching using subset selection and clustering of probabilis- tic multi-region histograms. In International Confer ence on Image and V ision Computing New Zealand (IVCNZ) , 2010. [21] K. Nasrollahi and T . B. Moeslund. Face quality assessment system in video sequences. In BIOID, Lecture Notes in Com- puter Science (LNCS) , volume 5372, pages 10–18, 2008. [22] N. Ozay , Y . T ong, W . Frederick W , and X. Liu. Improv- ing face recognition with a quality-based probabilistic frame- work. In Computer V ision and P attern Recognition (CVPR) Biometrics W orkshop , pages 134–141, 2009. [23] P . J. Phillips, H. Moon, S. A. Rizvi, and P . J. Rauss. The FERET e valuation methodology for face-recognition algorithms. IEEE T rans. P attern Anal. Mach. Intell. , 22(10):1090–1104, 2000. [24] P . J. Phillips, W . T . Scruggs, A. J. O’T oole, P . J. Flynn, K. W . Bowyer , C. L. Schott, and M. Sharpe. FR VT 2006 and ICE 2006 large-scale experimental results. IEEE T rans. P attern Anal. Mach. Intell. , 32(5):831–846, 2010. [25] Y . Rodriguez, F . Cardinaux, S. Bengio, and J. Mariethoz. Measuring the performance of face localization systems. Im- age and V ision Computing , 24(8):882–893, 2006. [26] E. A. R ´ ua, J. L. A. Castro, and C. G. Mateo. Quality-based score normalization and frame selection for video-based per - son authentication. In BIOID, Lecture Notes in Computer Science (LNCS) , pages 1–9, 2008. [27] C. Sanderson. Armadillo: An open source C++ linear algebra library for fast prototyping and computationally intensiv e experiments. T echnical report, NICT A, 2010. http://arma.sourceforge.net/ . [28] C. Sanderson and B. C. Lovell. Multi-region probabilistic histograms for robust and scalable identity inference. In Lectur e Notes in Computer Science (LNCS) , volume 5558, pages 199–208, 2009. [29] J. Sang, Z. Lei, and S. Z. Li. Face image quality ev aluation for I S O/ I E C standards 19794-5 and 29794-5. In ICB, Lec- tur e Notes in Computer Science (LNCS) , volume 5558, pages 229–238, 2009. [30] A. Sanin, C. Sanderson, and B. C. Lov ell. Improved shado w remov al for rob ust person tracking in surv eillance scenarios. In International Conference on P attern Recognition (ICPR) , pages 141–144, 2010. [31] S. Shan, W . Gao, B. Cao, and D. Zhao. Illumination normal- ization for robust face recognition against varying lighting conditions. In W orkshop on Analysis and Modeling of F aces and Gestur es (AMFG) , pages 157–164, 2003. [32] T . Sim, S. Baker , and M. Bsat. The CMU pose, illumina- tion, and expression database. IEEE T ransactions on P at- tern Analysis and Machine Intelligence , 25(1):1615 – 1618, 2003. [33] M. Subasic, S. Loncaric, T . Petkovic, H. Bogunovic, and V . Kri vec. Face image validation system. In International Symposium on Image and Signal Processing and Analysis (ISP A) , pages 30–33, 2005. [34] J. D. Swisher, C. Brooking, and D. Somers. Spatial fre- quency and facial e xpressions of emotion. Journal of V ision , 4(8):905, 2004. [35] A. T orralba and P . Shina. Detecting faces in improv erished images. T echnical Report 028, MIT AI Lab , 2001. [36] Y . W ong, C. Sanderson, S. Mau, and B. C. Lovell. Dynamic amelioration of resolution mismatches for local feature based identity inference. In International Conference on P attern Recognition (ICPR) , pages 1200–1203, 2010. [37] X. Xie and K.-M. Lam. An ef ficient illumination normaliza- tion method for face recognition. P attern Recognition Let- ters , 27:609–617, 2006. [38] O. Y amaguchi, K. Fukui, and K. Maeda. Face recognition using temporal image sequence. In Pr oc. Automatic F ace and Gestur e Recognition (AFGR) , pages 318–323, 1998. [39] Z. Y ang, H. Ai, B. W u, S. Lao, and L. Cai. Face pose esti- mation and its application in video shot selection. In Inter- national Conference on P attern Recognition (ICPR) , pages 322–325, 2004. [40] G. Zhang and Y . W ang. Asymmetry-based quality assess- ment of face images. In ISVC, Lecture Notes in Computer Science (LNCS) , volume 5876, pages 499–508, 2009. [41] W . Zhao, R. Chellappa, A. Rosenfeld, and P . Phillips. Face recognition: A literature surve y . ACM Computing Surveys , 35(4):399–458, 2003.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment