Deep learning for neuroimaging: a validation study

Deep learning methods have recently made notable advances in the tasks of classification and representation learning. These tasks are important for brain imaging and neuroscience discovery, making the methods attractive for porting to a neuroimager's…

Authors: Sergey M. Plis, Devon R. Hjelm, Ruslan Salakhutdinov

Sergey M. Plis, The Mind Research Network, Albuquerque, NM 87106, s.m.plis@gmail.com
Devon R. Hjelm, University of New Mexico, Albuquerque, NM 87131, dhjelm@mrn.org
Ruslan Salakhutdinov, University of Toronto, Toronto, Ontario M5S 2E4, rsalakhu@cs.toronto.edu
Vince D. Calhoun, The Mind Research Network, Albuquerque, NM 87106, vcalhoun@mrn.org

Abstract

Deep learning methods have recently made notable advances in the tasks of classification and representation learning. These tasks are important for brain imaging and neuroscience discovery, making the methods attractive for porting to a neuroimager's toolbox. The success of these methods is explained, in part, by the flexibility of deep learning models. However, this flexibility makes the process of porting to new areas a difficult parameter optimization problem. In this work we demonstrate our results (and feasible parameter ranges) in the application of deep learning methods to structural and functional brain imaging data. We also describe a novel constraint-based approach to visualizing high dimensional data. We use it to analyze the effect of parameter choices on data transformations. Our results show that deep learning methods are able to learn physiologically important representations and detect latent relations in neuroimaging data.

1 Introduction

One of the main goals of brain imaging and neuroscience (and, possibly, of most natural sciences) is to improve understanding of the investigated system based on data. In our case, this amounts to inference of descriptive features of brain structure and function from non-invasive measurements. The brain imaging field has come a long way from anatomical maps and atlases towards data-driven feature learning methods, such as seed-based correlation [2], canonical correlation analysis [33], and independent component analysis (ICA) [1, 24].
These methods are highly successful in revealing known brain features with new details [3] (supporting their credibility), in recovering features that differentiate patients and controls [28] (assisting diagnosis and disease understanding), and in starting a "resting state" revolution after revealing consistent patterns in data from uncontrolled resting experiments [29, 35].

Classification is often used merely as a correctness checking tool, as the main emphasis is on learning about the brain. A perfect oracle that does not explain its conclusions would be useful, but mainly to facilitate the inference of the ways the oracle draws these conclusions. As an oracle, deep learning methods are breaking records in the areas of speech, signal, image, video and text mining and recognition, improving state of the art classification accuracy by, sometimes, more than 30% where the prior decade struggled to obtain 1-2% improvements [19, 21]. What differentiates them from other classifiers, however, is the automatic feature learning from data, which largely contributes to the improvements in accuracy. Presently, this seems to be the closest solution to an oracle that reveals its methods, a desirable tool for brain imaging.

Another distinguishing feature of deep learning is the depth of the models. Based on the already acceptable feature learning results obtained by shallow models, which currently dominate the neuroimaging field, it is not immediately clear what benefits depth would have. Considering the state of multimodal learning, where models are either assumed to be the same for the analyzed modalities [26] or cross-modal relations are sought at the (shallow) level of mixture coefficients [23], deeper models better fit the intuitive notion of cross-modality relations, as, for example, relations between genetics and phenotypes should be indirect, happening at a deeper conceptual level.
In this work we present our recent advances in the application of deep learning methods to functional and structural magnetic resonance imaging (fMRI and sMRI). Each consists of brain volumes, but for sMRI these are static volumes, one per subject/session, while for fMRI a single-subject dataset comprises multiple volumes capturing the changes during an experimental session. Our goal is to validate the feasibility of this application by (a) investigating whether a building block of deep generative models, a restricted Boltzmann machine (RBM) [17], is competitive with ICA (a representative model of its class) (Section 2); (b) examining the effect of depth in deep learning analysis of structural MRI data (Section 3.3); and (c) determining the value of the methods for discovery of latent structure in a large-scale (by neuroimaging standards) dataset (Section 3.4). The measure of feature learning performance in a shallow model (a) is comparison with existing methods and known brain physiology. However, this measure cannot be used when deeper models are investigated. As we further demonstrate, classification accuracy does not provide the complete picture either. To be able to visualize the effect of depth and gain insight into the learning process, we introduce a flexible constraint satisfaction embedding method that allows us to control the complexity of the constraints (Section 3.2). By deliberately choosing local constraints, we are able to reflect the transformations that the deep belief network (DBN) [15] learns and applies to the data and gain additional insight.

2 A shallow belief network for feature learning

Prior to investigating the benefits of depth of a DBN in learning representations from fMRI and sMRI data, we would like to find out whether a shallow (single hidden layer) model from this family, which is the RBM, meets the field's expectations.
As mentioned in the introduction, a number of methods are used for feature learning from neuroimaging data; most of them belong to the single matrix factorization (SMF) class. We do a quick comparison to a small subset of SMF methods on simulated data and continue with a more extensive comparison against ICA as an approach trusted in the neuroimaging field. Similarly to the RBM, ICA relies on a bipartite graph structure, or even is an artificial neural network with sigmoid hidden units, as in the case of Infomax ICA [1] that we compare against. Note the difference from the RBM: ICA applies its weight matrix to the (shorter) temporal dimension of the data, imposing independence on the spatial dimension, while the RBM applies its weight matrix (the hidden units' "receptive fields") to the high dimensional spatial dimension instead (Figure 2).

2.1 A restricted Boltzmann machine

A restricted Boltzmann machine (RBM) is a Markov random field that models the data distribution, parameterizing it with the Gibbs distribution over a bipartite graph between visible variables $\mathbf{v}$ and hidden variables $\mathbf{h}$ [10]:

$$p(\mathbf{v}) = \sum_{\mathbf{h}} p(\mathbf{v}, \mathbf{h}) = \sum_{\mathbf{h}} \frac{1}{Z} \exp(-E(\mathbf{v}, \mathbf{h})),$$

where $Z = \sum_{\mathbf{v}} \sum_{\mathbf{h}} e^{-E(\mathbf{v}, \mathbf{h})}$ is the normalization term (the partition function) and $E(\mathbf{v}, \mathbf{h})$ is the energy of the system. Each visible variable in the case of fMRI data represents a voxel of an fMRI scan with a real-valued and approximately Gaussian distribution. In this case, the energy is defined as:

$$E(\mathbf{v}, \mathbf{h}) = -\sum_{ij} \frac{v_j}{\sigma_j} W_{ji} h_i - \sum_{j} \frac{(a_j - v_j)^2}{\sigma_j^2} - \sum_{i} b_i h_i, \qquad (1)$$

where $a_j$ and $b_i$ are biases and $\sigma_j$ is the standard deviation of a parabolic containment function for each visible variable $v_j$ centered on the bias $a_j$. In general, the parameters $\sigma_j$ need to be learned along with the other parameters. However, in practice normalizing the distribution of each voxel to have zero mean and unit variance is faster and yet effective [27].
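As a minimal sketch of how an energy of the form of Eq. (1) is evaluated for one configuration, consider the function below. It is an illustration under assumptions, not the authors' implementation: the name `gaussian_rbm_energy` and the toy numbers are hypothetical, and the quadratic term is written in the conventional containment form $+(v_j - a_j)^2 / (2\sigma_j^2)$, so its sign and factor of 1/2 differ from the term as printed in Eq. (1).

```python
def gaussian_rbm_energy(v, h, W, a, b, sigma):
    """Energy of one (v, h) configuration of a Gaussian-visible RBM.

    v, a, sigma: length-J lists (visible values, visible biases, std devs).
    h, b: length-I lists (hidden values, hidden biases).
    W: J x I nested list; W[j][i] couples visible j to hidden i.
    """
    # Interaction term: sum_ij (v_j / sigma_j) * W_ji * h_i
    interaction = sum(
        (v[j] / sigma[j]) * W[j][i] * h[i]
        for j in range(len(v))
        for i in range(len(h))
    )
    # Parabolic containment centered on a_j (assumed conventional form).
    containment = sum(
        (v[j] - a[j]) ** 2 / (2.0 * sigma[j] ** 2) for j in range(len(v))
    )
    # Hidden bias term: sum_i b_i * h_i
    hidden_bias = sum(b[i] * h[i] for i in range(len(h)))
    return -interaction + containment - hidden_bias


# Toy configuration: 2 visible and 2 hidden units (illustrative values only).
energy = gaussian_rbm_energy(
    v=[1.0, 2.0], h=[1.0, 0.0],
    W=[[0.5, -1.0], [0.25, 2.0]],
    a=[0.0, 1.0], b=[0.1, 0.2], sigma=[1.0, 2.0],
)
```

With voxels normalized to zero mean and unit variance, as described above, `sigma` would simply be a list of ones.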
A number of choices affect the quality of interpretation of the representations learned from fMRI by an RBM. Encouraging sparse features via $L_1$ regularization, $\lambda \|W\|_1$ ($\lambda = 0.1$ gave the best results), and using the hyperbolic tangent as the hidden unit nonlinearity are essential settings that respectively facilitate spatial and temporal interpretation of the result. The weights were updated using the truncated Gibbs sampling method called contrastive divergence (CD) with a single sampling step (CD-1). Further information on the RBM model can be found in [16, 17].

2.2 Synthetic data

Figure 1: Comparison of RBM estimation accuracy of features and their time courses with SMFs. (a) Average spatial map (SM) and time course (TC) correlations to ground truth for RBM and SMF models (gray box); the correlation axis runs from 0 to 0.9. (b) Ground truth (GT) SMs and estimates obtained by RBM and ICA (thresholded at 0.4 height). Colors are consistent across the methods. Grey indicates background or areas without SMs above threshold. (c) Spatial, temporal, and cross correlation (FNC) accuracy for ICA (red) and RBM (blue), as a function of spatial overlap of the true sources from 1b. Lines indicate the average correlation to GT, and the color-fill indicates ± 2 standard errors around the mean. Panel annotations: higher is better, higher is better, lower is better.

In this section we summarize our comparisons of the RBM with SMF models, including Infomax ICA [1], PCA [14], sparse PCA (sPCA) [37], and sparse NMF (sNMF) [18], on synthetic data with known spatial maps generated to simulate fMRI. Figure 1a shows the correlation of spatial map (SM) and time course (TC) estimates to the ground truth for RBM, ICA, PCA, sPCA, and sNMF. Correlations are averaged across all sources and datasets. RBM and ICA showed the best overall performance. While sNMF also estimated SMs well, it showed inferior performance on TC estimation, likely due to the non-negativity constraint.
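The accuracy measure behind Figure 1a, correlation of estimated sources with the ground truth averaged over sources, can be sketched as below. How estimates were matched to ground-truth sources is not stated in this section, so the sketch assumes that each ground-truth source is paired with the estimate of largest absolute Pearson correlation (absolute value because factorization methods recover sources only up to sign). The function names are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant sequences."""
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    cov = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    vx = sum((xi - mx) ** 2 for xi in x)
    vy = sum((yi - my) ** 2 for yi in y)
    return cov / math.sqrt(vx * vy)


def mean_best_match_correlation(ground_truth, estimates):
    """Average, over ground-truth sources, of the best |correlation|."""
    best = [
        max(abs(pearson(gt, est)) for est in estimates)
        for gt in ground_truth
    ]
    return sum(best) / len(best)


# Toy example: two flattened "spatial maps" recovered in swapped order,
# one rescaled and the other perfectly anti-correlated with its source.
gt_maps = [[1.0, 2.0, 3.0, 4.0], [1.0, 0.0, 1.0, 0.0]]
est_maps = [[0.0, 1.0, 0.0, 1.0], [2.0, 4.0, 6.0, 8.0]]
score = mean_best_match_correlation(gt_maps, est_maps)
```

The same function applies to time courses by passing temporal rather than spatial vectors.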
Based on these results and the broad adoption of ICA in the field, we focus on comparing Infomax ICA and RBM. Figure 1b shows the full set of ground truth sources along with RBM and ICA estimates for a single representative dataset. SMs are thresholded and represented as contours for visualization. Results over all synthetic datasets showed similar performance for RBM and ICA (Figure 1c), with a slight advantage for ICA with regard to SM estimation, and a slight advantage for RBM with regard to TC estimation. RBM and ICA also showed comparable performance in estimating cross correlations, also called functional network connectivity (FNC).

2.3 An fMRI data application

Figure 2: The processes of feature learning and time course computation from fMRI data by an RBM. The visible units are voxels and a hidden unit receptive field covers an fMRI volume. (Training panel: fMRI data, RBM training, features. Analysis panel: fMRI data, time courses, target time course, cross correlations, task-related features.)

The data used in this work comprised task-related scans from 28 healthy participants (five females), all of whom gave written, informed, IRB-approved consent at Hartford Hospital and were compensated for participation (more detailed information regarding participant demographics is provided in [9]). All participants were scanned during an auditory oddball task (AOD) involving the detection of an infrequent target sound within a series of standard and novel sounds (the task is described in more detail in [4] and [9]).

Scans were acquired at the Olin Neuropsychiatry Research Center at the Institute of Living/Hartford Hospital on a Siemens Allegra 3T dedicated head scanner equipped with 40 mT/m gradients and a standard quadrature head coil [4, 9]. The AOD consisted of two 8-min runs, and 249 scans (volumes) at 2 second TR (0.5 Hz sampling rate) were used for the final dataset.
Data were post-processed using the SPM5 software package [12], motion corrected using INRIalign [11], and subsampled to 53 × 63 × 46 voxels. The complete fMRI dataset was masked below mean and the mean image across the dataset was removed, giving a complete dataset of size 70969 voxels by 6972 volumes. Each voxel was then normalized to have zero mean and unit variance.

Figure 3: Intrinsic brain networks estimated by ICA and RBM.

The RBM was constructed using 70969 Gaussian visible units and 64 hyperbolic tangent hidden units. The hyperparameters, the learning rate (0.08 from the searched range $[1 \times 10^{-4}, 1 \times 10^{-1}]$) and $\lambda$ for $L_1$ weight decay (0.1 from the searched range $[1 \times 10^{-2}, 1 \times 10^{-1}]$), were selected as those that showed a reduction of reconstruction error over training and a significant reduction in the span of the receptive fields, respectively. Parameter values outside the ranges resulted either in unstable or slow learning (learning rate) or in uninterpretable features ($\lambda$). The RBM was then trained with a batch size of 5 for approximately 100 epochs to allow for full convergence of the parameters. After flipping the sign of negative receptive fields, we identified and labeled spatially distinct features as corresponding to brain regions with the aid of AFNI [5], excluding features which had a high probability of corresponding to white matter, ventricles, or artifacts (e.g., motion, edges).

We normalized the fMRI volume time series to mean zero and used the trained RBM in feed-forward mode to compute time series for each fMRI feature. This was done to better compare to ICA, where the mean is removed in PCA preprocessing.

The workflow is outlined in Figure 2, while Figure 3 shows a comparison of the resulting features with those obtained by Infomax ICA. In general, the RBM performs competitively with ICA, while providing (perhaps not surprisingly, given the $L_1$ regularization used) sharper and more localized features.
While we recognize that this is a subjective measure, we list more features in Figure S2 of Section 5 and note that RBM features lack negative parts for corresponding features. Note that in the case of $L_1$-regularized weights the RBM algorithm starts to resemble some of the ICA approaches (such as the recent RICA by Le et al. [20]), which may explain the similar performance. However, the differences and possible advantages are the generative nature of the RBM and no enforcement of component orthogonality (not explicitly, at least). Moreover, the block structure of the correlation matrix of feature time courses (see the supplementary material section below) provides a grouping that is more physiologically supported than that provided by ICA. For example, see Figure S1 in the supplementary material section below. Perhaps this is because ICA, working hard to enforce spatial independence, subtly affects the time courses and, in turn, their cross-correlations. We have observed comparable running times of the (non-GPU) ICA (http://www.nitrc.org/projects/gift) and a GPU implementation of the RBM (https://github.com/nitishsrivastava/deepnet).

3 Validating the depth effect

Since the RBM results demonstrate feature-learning performance competitive with the state of the art (or better), we proceed to investigating the effects of model depth. To do that we turn from fMRI to sMRI data. As is commonly assumed in the deep learning literature [22], depth often improves classification accuracy. We investigate whether that is indeed true in the sMRI case. Structural data is convenient for the purpose, as each subject/session is represented by only a single volume that has a label: control or patient in our case. Compare this to 4D data, where hundreds of volumes belong to the same subject with the same disease state.
3.1 A deep belief network

A DBN is a sigmoidal belief network (although other activation functions may be used) with an RBM as the top level prior. The joint probability distribution of its visible and hidden units is parametrized as follows:

$$P(\mathbf{v}, \mathbf{h}^1, \mathbf{h}^2, \ldots, \mathbf{h}^l) = P(\mathbf{v} \mid \mathbf{h}^1) P(\mathbf{h}^1 \mid \mathbf{h}^2) \cdots P(\mathbf{h}^{l-2} \mid \mathbf{h}^{l-1}) P(\mathbf{h}^{l-1}, \mathbf{h}^l), \qquad (2)$$

where $l$ is the number of hidden layers, $P(\mathbf{h}^{l-1}, \mathbf{h}^l)$ is an RBM, and the $P(\mathbf{h}^i \mid \mathbf{h}^{i+1})$ factor into individual conditionals:

$$P(\mathbf{h}^i \mid \mathbf{h}^{i+1}) = \prod_{j=1}^{n_i} P(h^i_j \mid \mathbf{h}^{i+1}). \qquad (3)$$

The important property of the DBN for our goals of feature learning to facilitate discovery is its ability to operate in generative mode with fixed values on chosen hidden units, thus allowing one to investigate the features that the model has learned and/or weighs as important in discriminative decisions. We are, however, not going to use this property in this section, focusing instead on validating the claim that a network's depth provides benefits for neuroimaging data analysis. We will do this using the discriminative mode of the DBN's operation, as it provides an objective measure of the depth effect.

DBN training splits into two stages: pre-training and discriminative fine-tuning. A DBN can be pre-trained by treating each of its layers as an RBM, trained in an unsupervised way on inputs from the previous layer, and later fine-tuned by treating it as a feed-forward neural network. The latter allows supervised training via the error backpropagation algorithm. We use this scheme in the following by augmenting each DBN with a softmax layer at the fine-tuning stage.

3.2 Nonlinear embedding as a constraint satisfaction problem

A DBN and an RBM operate on data samples, which are brain volumes in the fMRI and sMRI case. A five-minute fMRI experiment with a 2 second sampling period yields 150 of these volumes per subject.
For sMRI studies the number of participating subjects varies, but in this paper we operate with datasets of 300 and 3500 subject-volumes. Transformations learned by deep learning methods do not look intuitive in the hidden node space, and generative sampling of the trained model does not provide a sense of whether a model has learned anything useful in the case of MRI data: in contrast to natural images, fMRI and sMRI images do not look very intuitive. Instead, we use a nonlinear embedding method to check whether a model learned useful information and to assist in investigating what it has, in fact, learned.

One of the purposes of an embedding is to display a complex high dimensional dataset in a way that is (i) intuitive and (ii) representative of the data sample. The first requirement usually leads to displaying data samples as points in a 2-dimensional map, while the second is more elusive and each approach addresses it differently. Embedding approaches include relatively simple random linear projections, provably preserving some neighbor relations [6], and a more complex class of nonlinear embedding approaches [30, 32, 34, 36]. In an attempt to organize the properties of this diverse family, we have aimed at representing nonlinear embedding methods under a single constraint satisfaction problem (CSP) framework (see below). We hypothesize that each method places the samples in a map to satisfy a specific set of constraints. Although this work is not yet complete, it has proven useful in our current study. We briefly outline the ideas in this section to provide enough intuition for the method that we further use in Section 3.

Since we can control the constraints in the CSP framework, to study the effect of deep learning we choose them to do the least amount of work, while still being useful, letting the DBN do (or not) the hard part.
A more complicated method such as t-SNE [36] already does complex processing to preserve the structure of a dataset in a 2D map, so it is hard to infer whether the quality of the map is determined by the deep learning method or by the embedding. While some of the existing methods may have provided "least amount of work" solutions as well, we chose to go with the CSP framework. It explicitly states the constraints that are being satisfied and thus lets us reason about deep learning effects within the constraints, while with other methods, where the constraints are implicit, this would have been harder.

A constraint satisfaction problem (CSP) is one requiring a solution that satisfies a set of constraints. One of the well known examples is the boolean satisfiability problem (SAT). There are multiple other important CSPs, such as packing, molecular conformations, and, recently, error correcting codes [7]. The freedom to set up per-point constraints without controlling for their global interactions makes a CSP formulation an attractive representation of the nonlinear embedding problem. Pursuing this property, we use the iterative "divide and concur" (DC) algorithm [13] as the solver for our representation. In the DC algorithm we treat each point on the solution map as a variable and assign a set of constraints that this variable needs to satisfy (more on these later). Each point then gets a "replica" for each constraint it is involved in. The DC algorithm then alternates the divide and concur projections. The divide projection moves each "replica" point to the nearest location in the 2D map that satisfies the constraint it participates in. The concur projection concurs the locations of all "replicas" of a point by placing them at their average location on the map. The key idea is to avoid local traps by combining the divide and concur steps within the difference map [8].
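The replica bookkeeping and the two projections described above can be sketched on a toy problem. This is a sketch under assumptions, not the embedding used in this paper: a single 2D point must satisfy two hypothetical disc constraints (standing in for the neighborhood constraints, whose projection is not specified in enough detail here to reproduce), the names `divide`, `concur`, `combine` and `difference_map` are illustrative, and the update inside the loop is the standard difference-map step of [8] with parameter `beta`.

```python
import math

# Two toy constraints on one 2D point: it must lie inside both discs.
DISCS = [((0.0, 0.0), 1.0), ((1.5, 0.0), 1.0)]  # (center, radius)


def divide(replicas):
    """Move each replica to the nearest point satisfying its own constraint."""
    out = []
    for (px, py), ((cx, cy), r) in zip(replicas, DISCS):
        dx, dy = px - cx, py - cy
        dist = math.hypot(dx, dy)
        if dist > r:  # outside the disc: project onto its boundary
            px, py = cx + dx * r / dist, cy + dy * r / dist
        out.append((px, py))
    return out


def concur(replicas):
    """Place all replicas of the point at their average location."""
    n = len(replicas)
    mx = sum(p[0] for p in replicas) / n
    my = sum(p[1] for p in replicas) / n
    return [(mx, my)] * n


def combine(alpha, xs, gamma, ys):
    """Elementwise alpha * xs + gamma * ys for lists of 2D points."""
    return [(alpha * x[0] + gamma * y[0], alpha * x[1] + gamma * y[1])
            for x, y in zip(xs, ys)]


def difference_map(replicas, beta=1.0, n_iter=100):
    """Iterate the difference map and return the replica locations."""
    x = list(replicas)
    for _ in range(n_iter):
        x_c = concur(combine(1 + 1 / beta, divide(x), -1 / beta, x))
        x_d = divide(combine(1 - 1 / beta, concur(x), 1 / beta, x))
        x = combine(1.0, x, beta, combine(1.0, x_c, -1.0, x_d))
    # At a fixed point x_c equals x_d, and either one is the solution.
    return divide(combine(1 - 1 / beta, concur(x), 1 / beta, x))


# One replica per constraint, started far from both discs.
solution = difference_map([(-3.0, 2.0), (4.0, -2.0)])
```

Here both constraints are convex and overlap, so the replicas settle on a common location inside both discs. With constraints that cannot all be satisfied, the iterates would be expected to keep moving rather than settle.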
A single location update is represented by:

$$\begin{aligned} x_c &= P_c\big((1 + 1/\beta)\, P_d(x) - (1/\beta)\, x\big), \\ x_d &= P_d\big((1 - 1/\beta)\, P_c(x) + (1/\beta)\, x\big), \\ x &= x + \beta\, (x_c - x_d), \end{aligned} \qquad (4)$$

where $P_d(\cdot)$ and $P_c(\cdot)$ denote the divide and concur projections and $\beta$ is a user-defined parameter. While the concur projection will only differ by subsets of "replicas" across different methods representable in the DC framework, the divide projection is unique and defines the algorithm's behavior. In this paper, we choose a divide projection that keeps the $k$ nearest neighbors of each point in the higher dimensional space also its neighbors in the 2D map. This is a simple local neighborhood constraint that allows us to assess the effects of the deep learning transformation, leaving most of the mapping decisions to the deep learning.

Note that for a general dataset we may not be able to satisfy this constraint, namely that each point has exactly the same neighbors in 2D as in the original space (and this is what we indeed observe). The DC algorithm, however, is only guaranteed to find the solution if it exists and oscillates otherwise. Oscillating behavior is detectable and may be used to stop the algorithm. We found it informative to watch the 2D map in dynamics, as the points that keep oscillating provide additional information about the structure of the data. Another practically important feature of the algorithm is that it is deterministic: given the same parameters ($\beta$ and the parameters of $P_d(\cdot)$), it converges to the same solution regardless of the initial point. If each of the points participates in each constraint, then the complexity of the algorithm is quadratic. With our simple $k$-neighborhood constraints it is $O(kn)$ for $n$ samples/points.

3.3 A schizophrenia structural MRI dataset

Figure 4: A smoothed gray matter segmentation of a patient and a healthy control: each is a training sample.
We use combined data from four separate schizophrenia studies conducted at Johns Hopkins University (JHU), the Maryland Psychiatric Research Center (MPRC), the Institute of Psychiatry, London, UK (IOP), and the Western Psychiatric Institute and Clinic at the University of Pittsburgh (WPIC) (the data used in Meda et al. [25]). The combined sample comprised 198 schizophrenia patients and 191 matched healthy controls and contained both first-episode and chronic patients [25]. At all sites, whole-brain MRIs were obtained on a 1.5T Signa GE scanner using identical parameters and software. Original structural MRI images were segmented in native space, and the resulting gray and white matter images were then spatially normalized to gray and white matter templates, respectively, to derive the optimized normalization parameters. These parameters were then applied to the whole-brain structural images in native space prior to a new segmentation. The obtained 60465-voxel gray matter images were used in this study. Figure 4 shows example orthogonal slice views of the gray matter data samples of a patient and a healthy control.

The main goal of this section is to evaluate the effect of the depth of a DBN on sMRI. To do so, we investigate whether classification rates improve with depth. For that we sequentially investigate DBNs of three depths. From RBM experiments we have learned that even with a larger number of hidden units (72, 128 and 512) an RBM tends to keep only around 50 features, driving the rest to zero. Classification rate and reconstruction error still improve slightly, however, when the number of hidden units increases. These observations affected our choice of 50 hidden units for the first two layers and 100 for the third.
Each hidden unit is connected to all units in the previous layer, which results in an all-to-all connectivity structure between the layers; this is the more common and conventional approach to constructing these models. Note that larger networks (up to double the number of units) lead to similar results. We pre-train each layer via an unsupervised RBM and discriminatively fine-tune models of depth 1 (50 hidden units in the top layer), 2 (50-50 hidden units in the first and the top layer, respectively), and 3 (50-50-100 hidden units in the first, second and the top layer, respectively) by adding a softmax layer on top of each of these models and training via backpropagation.

We estimate the accuracy of classification via 10-fold cross validation on fine-tuned models, splitting the 389-subject dataset into 10 approximately class-balanced folds. We train an RBF-kernel SVM, logistic regression (LR) and a k-nearest neighbors (KNN) classifier using the activations of the top-most hidden layers of the fine-tuned models on the training data of each fold as their input. The testing is performed likewise but on the test data. We also perform the same 10-fold cross validation on the raw data. Table 1 summarizes the precision and recall values as F-scores with their standard deviations.

Table 1: Classification on fine-tuned models (test data)

depth          raw            1              2              3
SVM F-score    0.68 ± 0.01    0.66 ± 0.09    0.62 ± 0.12    0.90 ± 0.14
LR F-score     0.63 ± 0.09    0.65 ± 0.11    0.61 ± 0.12    0.91 ± 0.14
KNN F-score    0.61 ± 0.11    0.55 ± 0.15    0.58 ± 0.16    0.90 ± 0.16

All models demonstrate a similar trend: the F-score changes only slightly from the depth-1 to the depth-2 DBN (by less than one standard deviation) and then improves markedly at depth 3. Table 1 supports the general claim of the deep learning community about improvement of the classification rate with depth, even for sMRI data.
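The evaluation protocol above (10 approximately class-balanced folds, one F-score per fold) can be sketched as follows. The splitting scheme and the helper names are assumptions for illustration: how the folds were drawn is not stated here, and the classifiers themselves are omitted.

```python
import random


def stratified_folds(labels, n_folds=10, seed=0):
    """Split sample indices into folds with near-equal class proportions."""
    rng = random.Random(seed)
    folds = [[] for _ in range(n_folds)]
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        # Deal the shuffled indices of this class round-robin across folds.
        for pos, i in enumerate(idx):
            folds[pos % n_folds].append(i)
    return folds


def f_score(y_true, y_pred, positive=1):
    """F1 score: harmonic mean of precision and recall for one class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    if tp == 0:
        return 0.0
    return 2.0 * tp / (2.0 * tp + fp + fn)


# Label vector with the class sizes reported for this dataset:
# 198 patients (1) and 191 controls (0).
labels = [1] * 198 + [0] * 191
folds = stratified_folds(labels)
fold_sizes = [len(f) for f in folds]

# Toy predictions: 2 true positives, 1 false positive, 1 false negative.
example_f = f_score([1, 1, 1, 0, 0], [1, 1, 0, 1, 0])
```

In each round one fold would serve as test data and the remaining nine as training data; the mean and standard deviation of the per-fold F-scores then give entries of the kind reported in Table 1.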
The improvement in classification even for the simple KNN classifier indicates the character of the transformation that the DBN learns and applies to the data: it may be changing the data manifold to organize classes by neighborhoods. Ideally, to draw general conclusions about this transformation we would need to analyze several representative datasets. However, even working with the same data we can have a closer view of the depth effect using the method introduced in Section 3.2.

Figure 5: Effect of a DBN's depth on neighborhood relations. Each map is shown at the same iteration of the algorithm with the same k = 50. The color differentiates the classes (patients and controls) and the training (335 subjects) from validation (54 subjects) data. Although the data becomes separable at depth 1 and more so at depth 2, the DBN continues distilling details that pull the classes further apart.

Although it may seem that the DBN does not provide significant improvements in sMRI classification from depth-1 to depth-2 in this model, it keeps on learning potentially useful transformations of the data. We can see that using our simple local neighborhood-based embedding. Figure 5 displays 2D maps of the raw data, as well as the depth 1, 2, and 3 activations (of a network trained on 335 subjects): the deeper networks place patient and control groups further apart. Additionally, Figure 5 displays the 54 subjects that the DBN was not trained on. These held-out subjects also show increased separation with depth. This behavior of the DBN is potentially useful for generalization when larger and more diverse data become available.

Our new mapping method has two essential properties that facilitate the conclusion and provide confidence in the result: its already mentioned local properties and the deterministic nature of the algorithm. The latter leads to independence of the resulting maps from the starting point.
The map only depends on the model's parameter k (the size of the neighborhood) and the data.

3.4 A large-scale Huntington disease dataset

Figure 6: Gray matter from MRI scans of an HD patient and a healthy control.

In this section we focus on sMRI data collected from healthy controls and Huntington disease (HD) patients as part of the PREDICT-HD project (www.predict-hd.net). Huntington disease is a genetic neurodegenerative disease that results in degeneration of neurons in certain areas of the brain. The project is focused on identifying the earliest detectable changes in thinking skills, emotions and brain structure as a person begins the transition from health to being diagnosed with Huntington disease. We would like to know if deep learning methods can assist in answering that question.

For this study T1-weighted scans were collected at multiple sites (32 international sites), representing multiple field strengths (1.5T and 3.0T) and multiple manufacturers (Siemens, Philips, and GE). The 1.5T T1-weighted scans were an axial 3D volumetric spoiled-gradient echo series (≈ 1 × 1 × 1.5 mm voxels), and the 3.0T T1-weighted scans were a 3D volumetric MPRAGE series (≈ 1 × 1 × 1 mm voxels). The images were segmented in the native space and then normalized to a common template. After correlating the normalized gray matter segmentation with the template and eliminating poorly correlating scans, we obtained a dataset of 3500 scans, of which 2641 were from patients and 859 from healthy controls. We have used all of the scans in this imbalanced sample to pre-train and fine-tune the same model architecture (50-50-100) as in Section 3.3 for all three depths³.

Table 2: Classification on fine-tuned models (HD data)

depth          raw     1              2              3
SVM F-score    0.75    0.65 ± 0.01    0.65 ± 0.01    1.00 ± 0.00
LR F-score     0.79    0.65 ± 0.01    0.65 ± 0.01    1.00 ± 0.00
Figure 7: Patient and control group separation map with additional unsupervised spectral decomposition of sMRI scans by disease severity. The map represents 3500 scans.

Table 2 lists the average F-score values for both classes at the raw data and all depth levels. Note the drop from the raw data and then a recovery at depth 3. The limited capacity of levels 1 and 2 has reduced the network's ability to differentiate the groups, but the representational capacity of the depth-3 network compensates for the initial bottleneck. This confirms our previous observation on the depth effect; however, it does not yet help with the main question of the PREDICT-HD study. Note, however, that while Table 1 in the previous section evaluates the generalization ability of the DBN, Table 2 here only demonstrates changes in the DBN's representational capacity with depth, as we use no testing data.

To further investigate the utility of the deep learning approach for scientific discovery, we again augment it with the embedding method of Section 3.2. Figure 7 shows the map of 3500 scans of HD patients and healthy controls. Each point on the map in Figure 7 is an sMRI volume such as those shown in Figure 6. Although we have used the complete data to train the DBN, discriminative fine-tuning had access only to a binary label: control or patient. In addition to that, we have information about the severity of the disease, from low to high. We have color coded this information in Figure 7 from bright yellow (low) through orange (medium) to red (high). The network⁴ discriminates the patients by disease severity, which results in a spectrum on the map. Note that neither t-SNE (not shown) nor our new embedding sees the spectrum or even the patient groups in the raw data. This is an important property of the method that may help support its future use in discovery of new information about the disease.
Footnote 3: Note, in both cases we have experimented with larger layer sizes, but the results were not different enough to warrant the increase in computation and in the number of parameters to be estimated.

Footnote 4: Note, the embedding algorithm does not have access to any label information.

4 Conclusions

Our investigations show that deep learning has a high potential in neuroimaging applications. Even the shallow RBM is already competitive with the model routinely used in the field: it produces physiologically meaningful features which are (desirably) highly focal and have time course cross correlations that connect them into meaningful functional groups (Section 5). The depth of the DBN does indeed help classification and increases group separation. This is apparent on two sMRI datasets collected under varying conditions, at multiple sites each, from different disease groups, and pre-processed differently. This is strong evidence of the robustness of DBNs. Furthermore, our study shows a high potential of DBNs for exploratory analysis. As Figure 7 demonstrates, a DBN in conjunction with our new mapping method can reveal hidden relations in data. We did find it difficult initially to find workable parameter regions, but we hope that other researchers won't have this difficulty starting from the baseline that we provide in this paper.

References

[1] A. J. Bell and T. J. Sejnowski. An information-maximization approach to blind separation and blind deconvolution. Neural Computation, 7(6):1129–1159, November 1995.
[2] Bharat Biswal, F Zerrin Yetkin, Victor M Haughton, and James S Hyde. Functional connectivity in the motor cortex of resting human brain using echo-planar mri. Magnetic resonance in medicine, 34(4):537–541, 1995.
[3] M.J. Brookes, M. Woolrich, H. Luckhoo, D. Price, J.R. Hale, M.C. Stephenson, G.R. Barnes, S.M. Smith, and P.G. Morris. Investigating the electrophysiological basis of resting state networks using magnetoencephalography.
Proceedings of the National Academy of Sciences, 108(40):16783–16788, 2011.
[4] V. D. Calhoun, K. A. Kiehl, and G. D. Pearlson. Modulation of temporally coherent brain networks estimated using ICA at rest and during cognitive tasks. Human Brain Mapping, 29(7):828–838, 2008.
[5] R. W. Cox et al. AFNI: software for analysis and visualization of functional magnetic resonance neuroimages. Computers and Biomedical Research, 29(3):162–173, 1996.
[6] T. de Vries, S. Chawla, and M. E. Houle. Finding local anomalies in very high dimensional space. In Proceedings of the 10th IEEE international conference on data mining, pages 128–137. IEEE Computer Society, 2010.
[7] Nate Derbinsky, José Bento, Veit Elser, and Jonathan S Yedidia. An improved three-weight message-passing algorithm. arXiv preprint arXiv:1305.1961, 2013.
[8] V. Elser, I. Rankenburg, and P. Thibault. Searching with iterated maps. Proceedings of the National Academy of Sciences, 104(2):418, 2007.
[9] Nathan Swanson et al. Lateral differences in the default mode network in healthy controls and patients with schizophrenia. Human Brain Mapping, 32:654–664, 2011.
[10] Asja Fischer and Christian Igel. An introduction to restricted boltzmann machines. In Progress in Pattern Recognition, Image Analysis, Computer Vision, and Applications, pages 14–36. Springer, 2012.
[11] L. Freire, A. Roche, and J. F. Mangin. What is the best similarity measure for motion correction in fMRI. IEEE Transactions in Medical Imaging, 21:470–484, 2002.
[12] Karl J Friston, Andrew P Holmes, Keith J Worsley, J-P Poline, Chris D Frith, and Richard SJ Frackowiak. Statistical parametric maps in functional imaging: a general linear approach. Human brain mapping, 2(4):189–210, 1994.
[13] S. Gravel and V. Elser. Divide and concur: A general approach to constraint satisfaction. Physical Review E, 78(3):36706, 2008.
[14] Trevor Hastie, Robert Tibshirani, and Jerome H. Friedman.
The elements of statistical learning. Springer, 2009.
[15] G. Hinton and R. Salakhutdinov. Reducing the dimensionality of data with neural networks. Science, 313(5786):504–507, 2006.
[16] G. E. Hinton, S. Osindero, and Y. W. Teh. A fast learning algorithm for deep belief nets. Neural computation, 18(7):1527–1554, 2006.
[17] Geoffrey Hinton. Training products of experts by minimizing contrastive divergence. Neural Computation, 14:2002, 2000.
[18] P. O. Hoyer. Non-negative sparse coding. In Neural Networks for Signal Processing, 2002. Proceedings of the 2002 12th IEEE Workshop on, pages 557–565, 2002.
[19] Alex Krizhevsky, Ilya Sutskever, and Geoffrey E. Hinton. Imagenet classification with deep convolutional neural networks. In Neural Information Processing Systems, 2012.
[20] Quoc V Le, Alexandre Karpenko, Jiquan Ngiam, and Andrew Y Ng. Ica with reconstruction cost for efficient overcomplete feature learning. In NIPS, pages 1017–1025, 2011.
[21] Quoc V. Le, Rajat Monga, Matthieu Devin, Kai Chen, Greg S. Corrado, Jeff Dean, and Andrew Y. Ng. Building high-level features using large scale unsupervised learning. In International Conference on Machine Learning. 103, 2012.
[22] N. Le Roux and Y. Bengio. Deep belief networks are compact universal approximators. Neural computation, 22(8):2192–2207, 2010.
[23] Jingyu Liu and Vince Calhoun. Parallel independent component analysis for multimodal analysis: Application to fmri and eeg data. In Biomedical Imaging: From Nano to Macro, 2007. ISBI 2007. 4th IEEE International Symposium on, pages 1028–1031. IEEE, 2007.
[24] M. J. McKeown, S. Makeig, G. G. Brown, T. P. Jung, S. S. Kindermann, A. J. Bell, and T. J. Sejnowski. Analysis of fMRI data by blind separation into independent spatial components. Human Brain Mapping, 6(3):160–188, 1998.
[25] Shashwath A Meda, Nicole R Giuliani, Vince D Calhoun, Kanchana Jagannathan, David J Schretlen, Anne Pulver, Nicola Cascella, Matcheri Keshavan, Wendy Kates, Robert Buchanan, et al. A large scale (n = 400) investigation of gray matter differences in schizophrenia using optimized voxel-based morphometry. Schizophrenia research, 101(1):95–105, 2008.
[26] M. Moosmann, T. Eichele, H. Nordby, K. Hugdahl, and V. D. Calhoun. Joint independent component analysis for simultaneous EEG-fMRI: principle and simulation. International Journal of Psychophysiology, 67(3):212–221, 2008.
[27] Vinod Nair and Geoffrey E Hinton. Rectified linear units improve restricted boltzmann machines. In Proceedings of the 27th International Conference on Machine Learning (ICML-10), pages 807–814, 2010.
[28] V. K. Potluru and V. D. Calhoun. Group learning using contrast NMF: Application to functional and structural MRI of schizophrenia. Circuits and Systems, 2008. ISCAS 2008. IEEE International Symposium on, pages 1336–1339, May 2008.
[29] Marcus E. Raichle, Ann Mary MacLeod, Abraham Z. Snyder, William J. Powers, Debra A. Gusnard, and Gordon L. Shulman. A default mode of brain function. Proceedings of the National Academy of Sciences, 98(2):676–682, 2001.
[30] Sam T Roweis and Lawrence K Saul. Nonlinear dimensionality reduction by locally linear embedding. Science, (5500):2323–2326, 2000.
[31] Mikail Rubinov and Olaf Sporns. Weight-conserving characterization of complex functional brain networks. Neuroimage, 56(4):2068–2079, 2011.
[32] John W Sammon Jr. A nonlinear mapping for data structure analysis. Computers, IEEE Transactions on, 100(5):401–409, 1969.
[33] Jing Sui, Tulay Adali, Qingbao Yu, Jiayu Chen, and Vince D. Calhoun. A review of multivariate methods for multimodal fusion of brain imaging data. Journal of Neuroscience Methods, 204(1):68–81, 2012.
[34] Joshua B Tenenbaum, Vin De Silva, and John C Langford.
A global geometric framework for nonlinear dimensionality reduction. Science, 290(5500):2319–2323, 2000.
[35] M.P. van den Heuvel and H.E. Hulshoff Pol. Exploring the brain network: A review on resting-state fMRI functional connectivity. European Neuropsychopharmacology, 2010.
[36] Laurens Van der Maaten and Geoffrey Hinton. Visualizing data using t-sne. Journal of Machine Learning Research, 9(2579-2605):85, 2008.
[37] Hui Zou, Trevor Hastie, and Robert Tibshirani. Sparse principal component analysis. Journal of computational and graphical statistics, 15(2):265–286, 2006.

5 Supplementary material

The correlation matrices for both RBM and ICA results on the fMRI dataset of Section 2.3 are provided in Figure S1, where the ordering of components is performed separately for each method. Each network is named by its physiological function, but we do not go in depth explaining these in the current paper. For RBM, modularity is more apparent, both visually and quantitatively. Modularity, as defined in [31], averages 0.40 ± 0.060 across subjects for RBM, and 0.35 ± 0.056 for ICA. These values are significantly greater for RBM (t = 7.15, p < 1e−6 per the paired t-test). Also note that the scale of correlation values for RBM and ICA is different, which highlights that RBM overestimated strong FNC values.

Figure S1: Correlation matrices determined from RBM (left) and ICA (right), averaged over subjects. Note that the color scales for RBM and ICA are different (RBM shows a larger range in correlations). The correlation matrix for ICA on the same scale as RBM is also provided as an inset (upper right). Feature groupings for RBM and ICA were determined separately using the FNC matrices and known anatomical and functional properties.

Figure S2: Sample pairs consisting of RBM (top) and ICA (bottom) SMs thresholded at 2 standard deviations.
Pairing was done with the aid of spatial correlations, temporal properties, and visual inspection. Values indicate the spatial correlation between RBM and ICA SMs.
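The modularity comparison in this section relies on a paired t-test across subjects. As a minimal sketch of how such a statistic is formed, the code below computes the paired t statistic from per-subject differences. The function name and the per-subject values are hypothetical placeholders, not data from the study, and the p-value lookup (which needs a Student t distribution with n − 1 degrees of freedom) is left out.

```python
import math
import statistics


def paired_t_statistic(a, b):
    """Paired t statistic for two equal-length lists of per-subject values."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [x - y for x, y in zip(a, b)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("all paired differences are identical")
    return mean_diff / (sd_diff / math.sqrt(len(diffs)))


# Hypothetical per-subject modularity values, for illustration only.
rbm_modularity = [0.41, 0.38, 0.45, 0.36, 0.43]
ica_modularity = [0.36, 0.35, 0.38, 0.33, 0.37]

t_stat = paired_t_statistic(rbm_modularity, ica_modularity)
dof = len(rbm_modularity) - 1  # degrees of freedom for the paired test

print(f"t = {t_stat:.2f} with {dof} degrees of freedom")
```

Pairing by subject removes between-subject variability from the comparison, which is why it suits a setting where both methods are applied to the same subjects.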

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

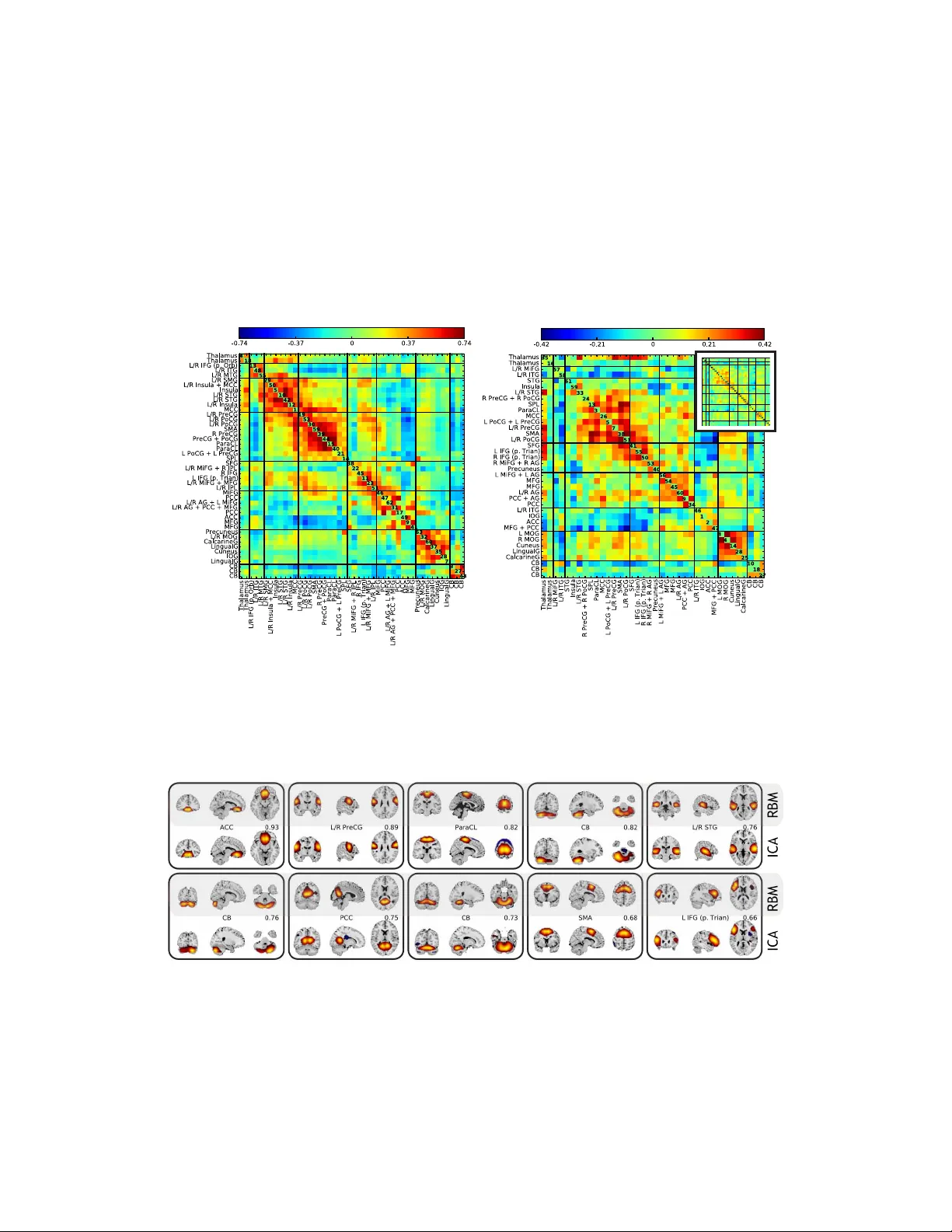

Leave a Comment