From Small-World Networks to Comparison-Based Search

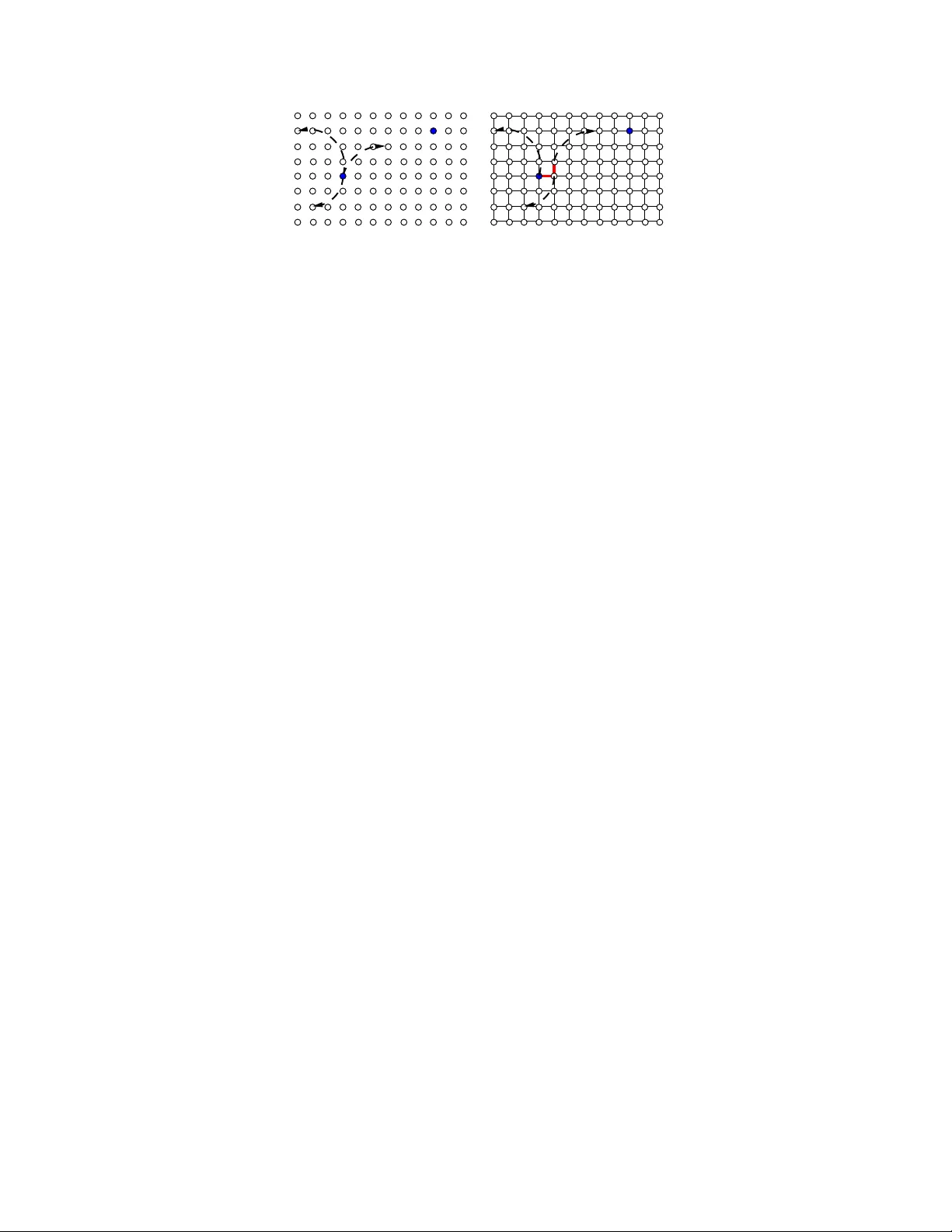

The problem of content search through comparisons has recently received considerable attention. In short, a user searching for a target object navigates through a database in the following manner: the user is asked to select the object most similar t…
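The query model described above, in which the user only ever answers "which of these is most similar to my target?", can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration and is not taken from the source: objects are points in the plane, the user is simulated by a function that returns the shown object closest to a hidden target, and candidates are shown in a fixed order. This is a naive linear scan over the database, not the method the paper proposes; `make_user`, `comparison_search`, and `list_size` are hypothetical names.

```python
import math


def make_user(points, target):
    """Simulate a user who knows the hidden target (assumed model).

    The returned function takes a list of object indices and returns the
    index of the object closest to the target in Euclidean distance.
    """
    def pick(candidates):
        return min(candidates, key=lambda i: math.dist(points[i], points[target]))
    return pick


def comparison_search(num_objects, pick, list_size=3):
    """Naive comparison-based search (illustrative baseline only).

    The search never sees coordinates or distances; it only sees which
    object the user picks from each list shown. Requires list_size >= 2.
    Returns (index of the object found, number of lists shown).
    """
    current = 0                       # current best guess
    queries = 0
    unseen = list(range(1, num_objects))
    while unseen:
        # Show the current guess together with a few objects not yet shown.
        shown = [current] + unseen[:list_size - 1]
        unseen = unseen[list_size - 1:]
        current = pick(shown)         # the user's choice becomes the new guess
        queries += 1
    return current, queries


# Hypothetical toy database of five distinct points; object 3 is the target.
points = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (3.0, 1.0), (2.0, 2.0)]
found, queries = comparison_search(len(points), make_user(points, target=3))
```

Because the target is at distance zero from itself, it wins every list it appears in, so the scan ends on the target once every object has been shown, provided the points are distinct. The cost grows linearly with the database size, which is the kind of inefficiency that smarter choices of which objects to show aim to avoid.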

Authors: Amin Karbasi, Stratis Ioannidis, Laurent Massoulie