Training Support Vector Machines Using Frank-Wolfe Optimization Methods

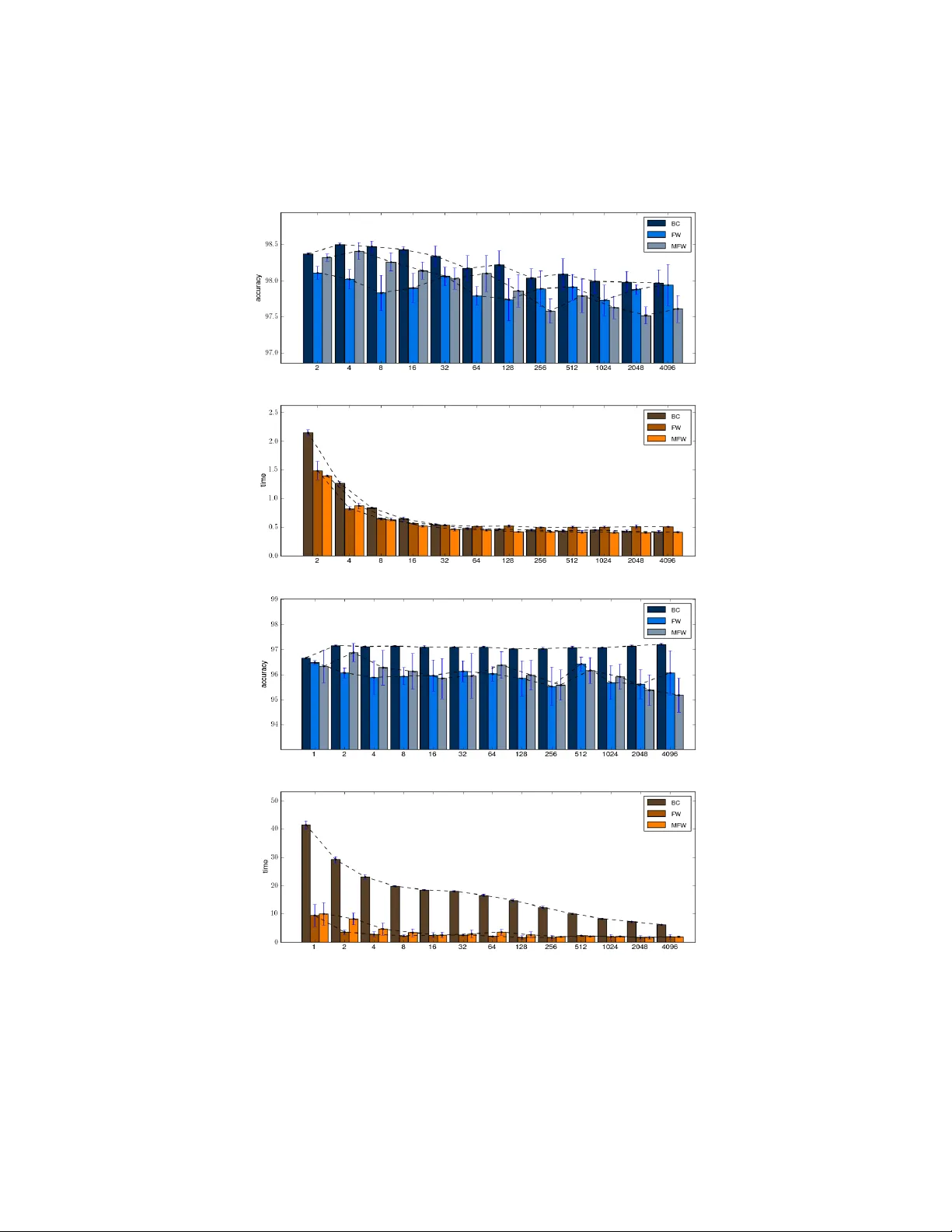

Training a Support Vector Machine (SVM) requires the solution of a quadratic programming problem (QP) whose computational cost becomes prohibitive for large-scale datasets. Traditional optimization methods cannot be directly applied…

Authors: Emanuele Frandi, Ricardo Nanculef
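A minimal sketch of the kind of QP and solver the title refers to follows. It is not the authors' algorithm. It assumes SVM training has been cast as an L2-SVM dual (squared slack penalty, bias also penalized) of the form min αᵀQα subject to α ≥ 0 and Σα_i = 1, with Q_ij = y_i y_j (k(x_i, x_j) + 1) + δ_ij/C. It solves this with the textbook Frank-Wolfe method using exact line search. The names `build_l2svm_matrix`, `frank_wolfe_simplex_qp` and `decision` are hypothetical. Each iteration reads only one column of Q and shifts weight toward a single training point. This property is often cited as the reason Frank-Wolfe-type methods suit problems too large for a full QP solver.

```python
from typing import Callable, List, Sequence, Tuple

Vector = Sequence[float]
Kernel = Callable[[Vector, Vector], float]


def build_l2svm_matrix(X: List[Vector], y: List[int], C: float,
                       kernel: Kernel) -> List[List[float]]:
    """Hypothetical helper: Q_ij = y_i*y_j*(k(x_i, x_j) + 1) + [i == j] / C.

    Assumes the L2-SVM dual min a^T Q a over the unit simplex; the +1 folds
    in the (penalized) bias term.
    """
    n = len(X)
    return [[y[i] * y[j] * (kernel(X[i], X[j]) + 1.0) + (1.0 / C if i == j else 0.0)
             for j in range(n)] for i in range(n)]


def frank_wolfe_simplex_qp(Q: List[List[float]], max_iter: int = 1000,
                           tol: float = 1e-8) -> Tuple[List[float], float]:
    """Plain Frank-Wolfe with exact line search for min a^T Q a on the simplex.

    Q must be symmetric positive semidefinite. Returns (alpha, last duality gap).
    """
    n = len(Q)
    alpha = [0.0] * n
    alpha[0] = 1.0                            # start at the vertex e_0
    qa = [Q[k][0] for k in range(n)]          # running value of Q @ alpha
    gap = float("inf")
    for _ in range(max_iter):
        # The gradient is 2 * Q @ alpha, so the best simplex vertex e_i
        # (the linear minimization step) is the smallest entry of qa.
        i = min(range(n), key=lambda k: qa[k])
        aqa = sum(a * q for a, q in zip(alpha, qa))   # alpha^T Q alpha
        # Frank-Wolfe duality gap grad^T (alpha - e_i): an upper bound on f - f*.
        gap = 2.0 * (aqa - qa[i])
        if gap <= tol:
            break
        # Exact line search along d = e_i - alpha on the quadratic objective.
        curv = Q[i][i] - 2.0 * qa[i] + aqa            # d^T Q d
        step = 1.0 if curv <= 0.0 else min(1.0, (aqa - qa[i]) / curv)
        # Convex combination: alpha <- (1 - step) * alpha + step * e_i.
        alpha = [(1.0 - step) * a for a in alpha]
        alpha[i] += step
        # Only column i of Q is needed to update Q @ alpha.
        qa = [(1.0 - step) * q + step * Q[k][i] for k, q in enumerate(qa)]
    return alpha, gap


def decision(alpha: List[float], X: List[Vector], y: List[int],
             kernel: Kernel, x: Vector) -> float:
    """f(x) = sum_i alpha_i * y_i * (k(x_i, x) + 1); its sign is the predicted label."""
    return sum(a * yi * (kernel(xi, x) + 1.0) for a, yi, xi in zip(alpha, y, X))


def linear_kernel(u: Vector, v: Vector) -> float:
    return sum(a * b for a, b in zip(u, v))


# Toy 1-D problem: two positive and two negative points.
X = [[1.0], [2.0], [-1.0], [-2.0]]
y = [1, 1, -1, -1]
Q = build_l2svm_matrix(X, y, C=10.0, kernel=linear_kernel)
alpha, gap = frank_wolfe_simplex_qp(Q)
print(alpha, gap)
print([decision(alpha, X, y, linear_kernel, x) for x in X])
```

On this toy problem a single Frank-Wolfe step reaches α = (0.5, 0, 0.5, 0), where the duality gap is zero. Only the two points closest to the boundary end up as support vectors, and the decision function reduces to f(x) = x. Practical variants add refinements such as away steps, which this sketch omits.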