A framework for the local information dynamics of distributed computation in complex systems

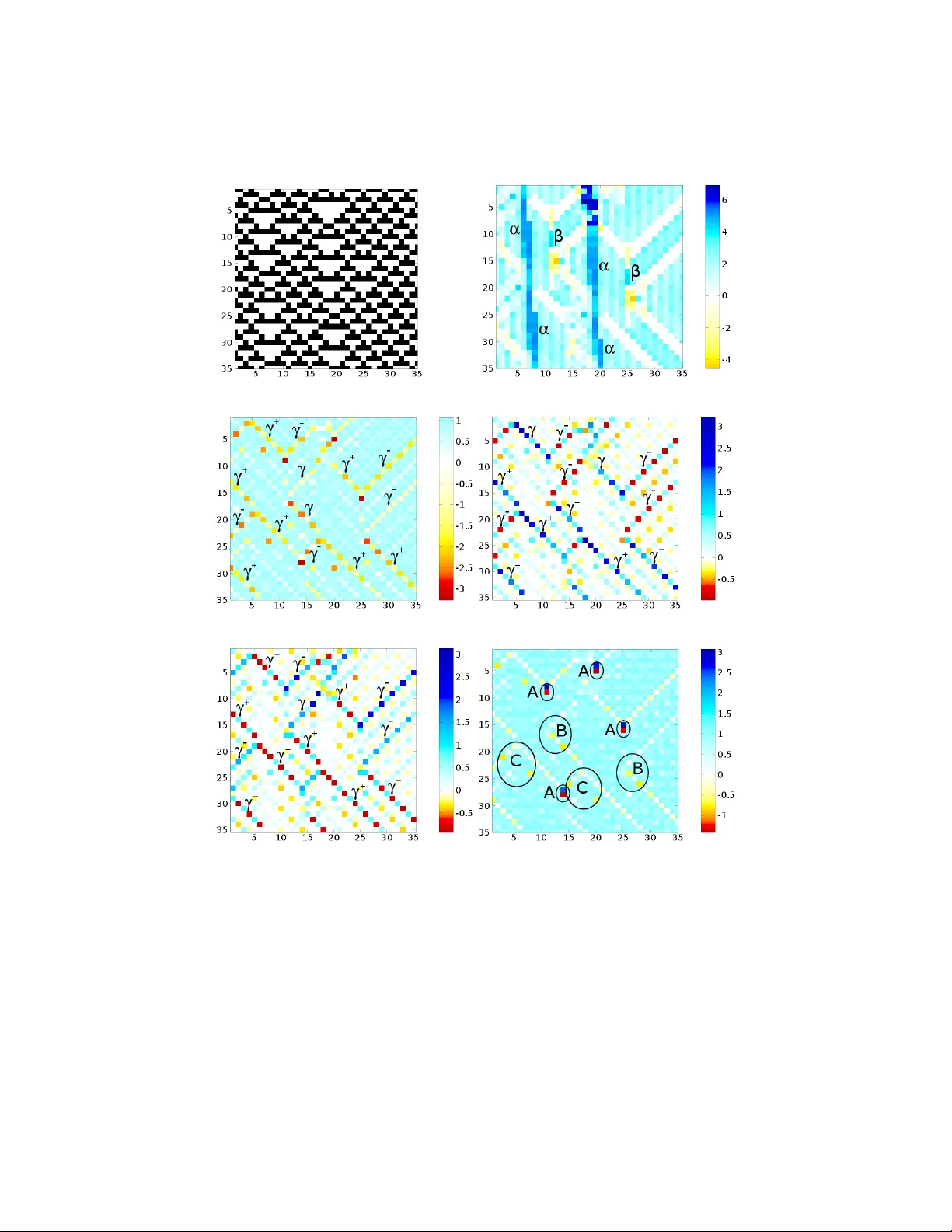

The nature of distributed computation has often been described in terms of the component operations of universal computation: information storage, transfer and modification. We review the first complete framework that quantifies each of these individ…

Authors: Joseph T. Lizier, Mikhail Prokopenko, Albert Y. Zomaya