On the Estimation of Pointwise Dimension

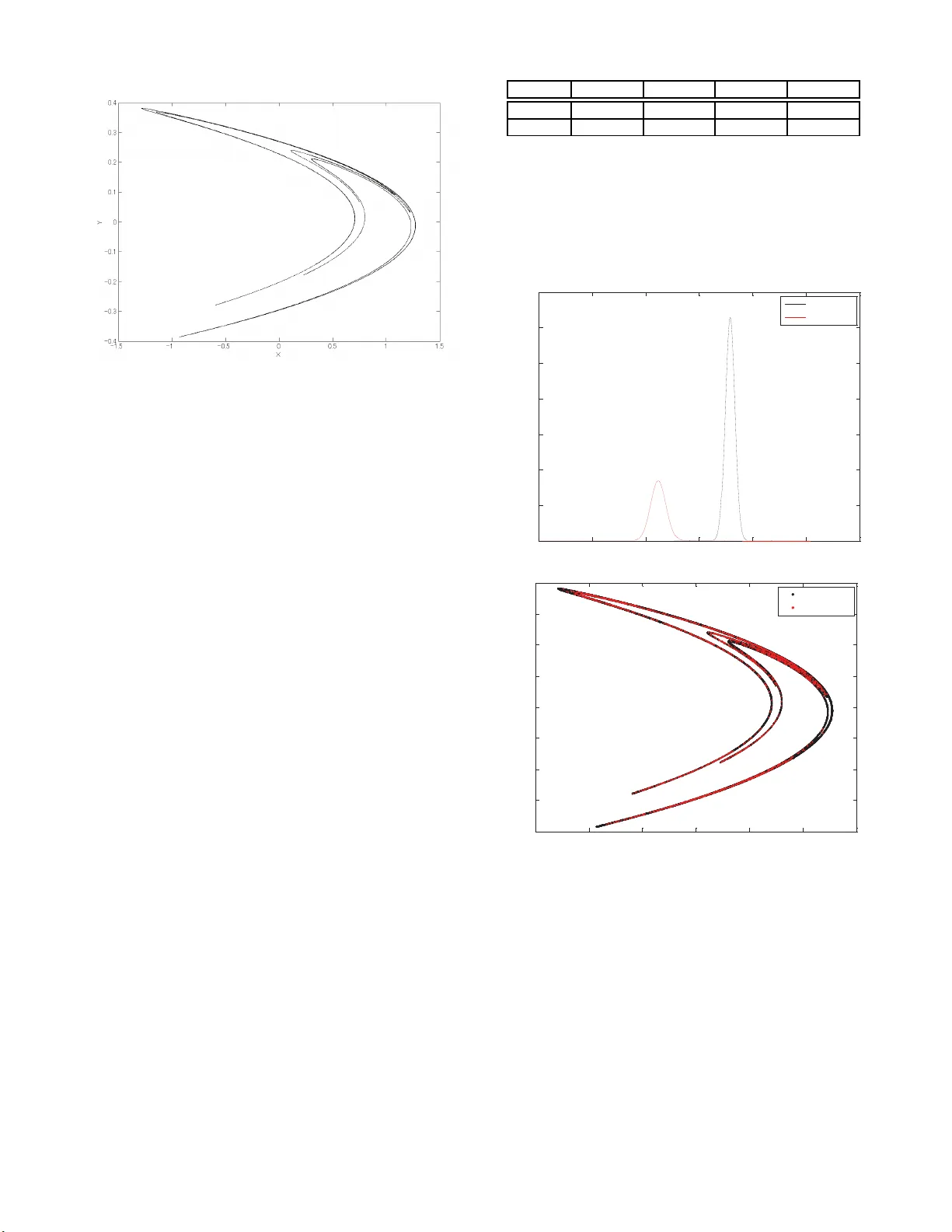

Our goal in this paper is to develop an effective estimator of fractal dimension. We survey existing ideas in dimension estimation, with a focus on the currently popular method of Grassberger and Procaccia for the estimation of correlation dimension.…

Authors: Shohei Hidaka, Neeraj Kashyap