Efficient Markov Network Structure Discovery Using Independence Tests

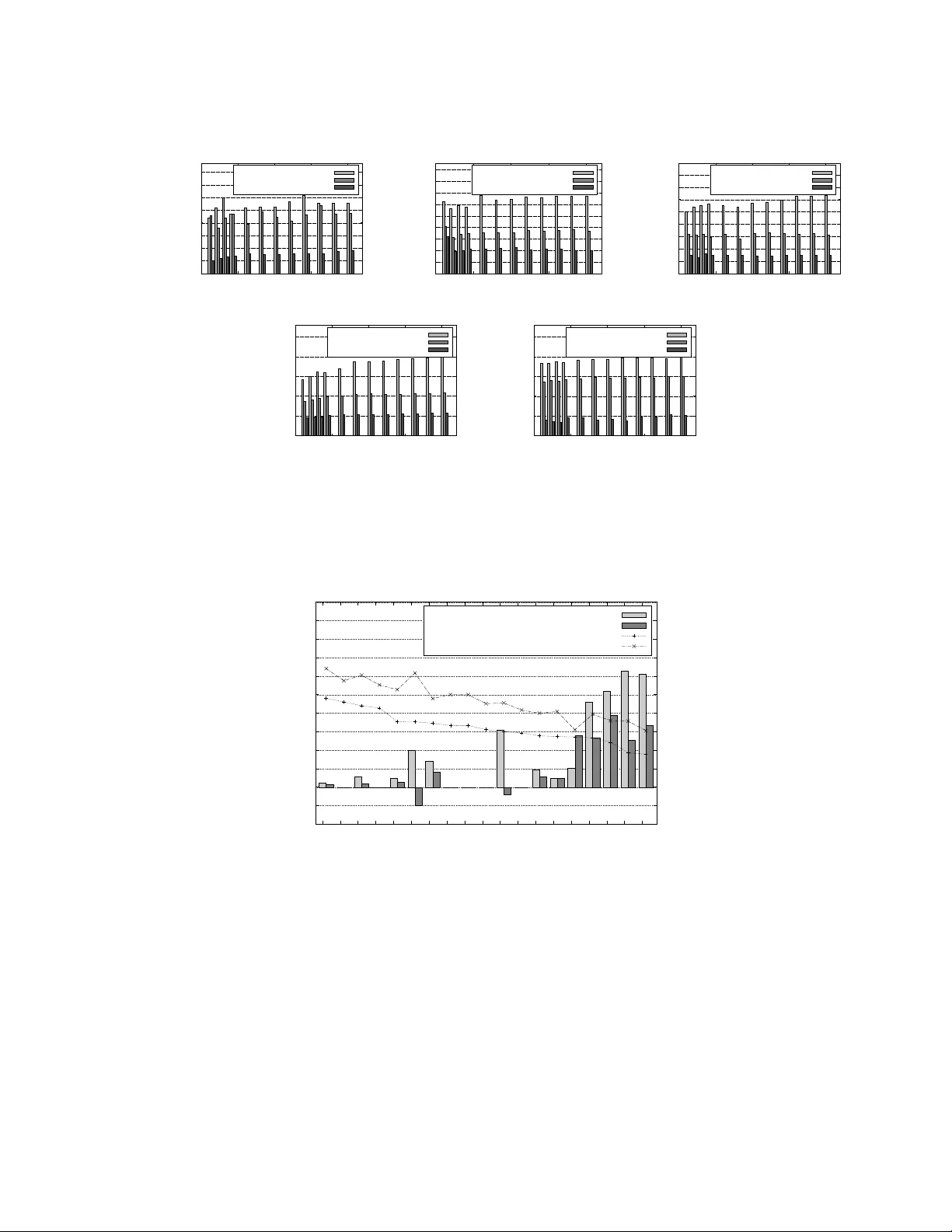

We present two algorithms for learning the structure of a Markov network from data: GSMN* and GSIMN. Both algorithms use statistical independence tests to infer the structure by successively constraining the set of structures consistent with the resu…
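As a rough illustration of the kind of statistical independence test such algorithms rely on, the sketch below computes a Pearson chi-square test between two binary variables. It is not GSMN* or GSIMN: the function names `chi2_statistic` and `independent`, the restriction to binary data, and the fixed 0.05 significance level are all assumptions made for this example, and the test shown is unconditional, whereas structure discovery would generally also need tests conditioned on other variables.

```python
from collections import Counter

# Critical value of the chi-square distribution with 1 degree of freedom
# at significance level 0.05 (assumed threshold for this illustration).
CHI2_CRIT_DF1_ALPHA05 = 3.841


def chi2_statistic(xs, ys):
    """Pearson chi-square statistic for two equally long binary (0/1) samples."""
    n = len(xs)
    counts = Counter(zip(xs, ys))
    # Marginal counts for each value of X (rows) and Y (columns).
    row = [sum(counts[(a, b)] for b in (0, 1)) for a in (0, 1)]
    col = [sum(counts[(a, b)] for a in (0, 1)) for b in (0, 1)]
    stat = 0.0
    for a in (0, 1):
        for b in (0, 1):
            expected = row[a] * col[b] / n
            if expected > 0:  # skip cells with an empty marginal
                stat += (counts[(a, b)] - expected) ** 2 / expected
    return stat


def independent(xs, ys, critical=CHI2_CRIT_DF1_ALPHA05):
    """Return True when the test fails to reject independence of X and Y."""
    return chi2_statistic(xs, ys) < critical


# Hypothetical data: y_dep copies x, y_ind is balanced against x.
x = [0, 0, 1, 1] * 25
y_dep = list(x)
y_ind = [0, 1, 0, 1] * 25

print(independent(x, y_dep))  # dependence detected, so False
print(independent(x, y_ind))  # no evidence of dependence, so True
```

In an independence-based approach, the outcome of each such query rules out the structures that are inconsistent with it. The sketch covers only the single query, not the search over structures.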

Authors: Facundo Bromberg, Dimitris Margaritis, Vasant Honavar