A PSO and Pattern Search based Memetic Algorithm for SVMs Parameters Optimization

Addressing the issue of SVMs parameters optimization, this study proposes an efficient memetic algorithm based on Particle Swarm Optimization algorithm (PSO) and Pattern Search (PS). In the proposed memetic algorithm, PSO is responsible for explorati…

Authors: Yukun Bao, Zhongyi Hu, Tao Xiong

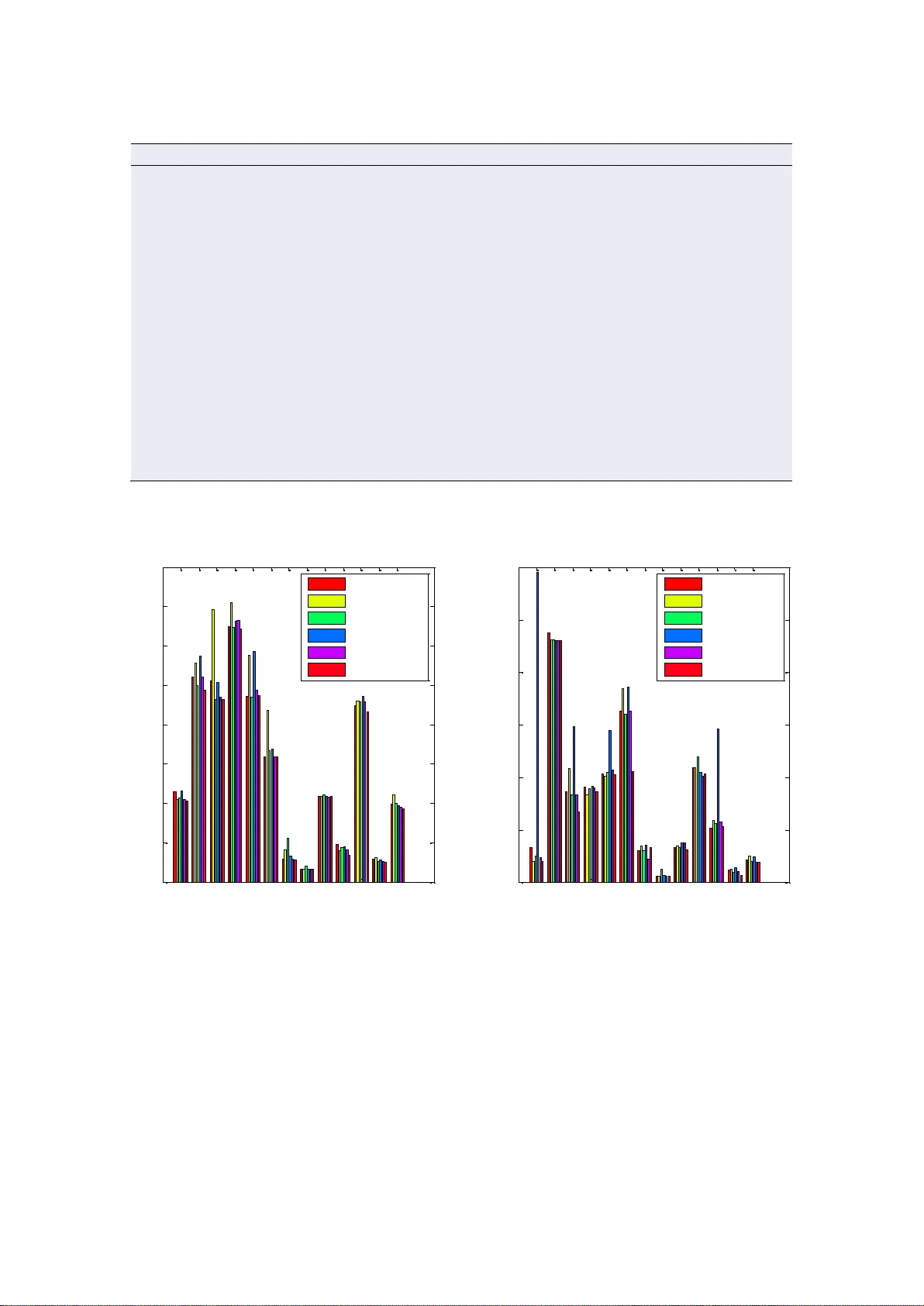

This is a p reprint copy that has been accepted for publica tion in Neur ocomputing . Please c it e this article as: Y ukun Bao, Zhongy i Hu, T ao Xiong, “ A PSO and Pattern S earch base d Memetic Algorithm for SVMs Parameters Optimization, ” Neur ocomputing , 1 17 , 2013 : 98 – 106 . http://dx.doi. or g/10.1016/j.n eucom .2013.01.027 Note: This prepr int copy is o nly for personal use. I Resear ch Highlights A PSO and pattern search based memetic algorithm is proposed Probabilistic selection strategy is proposed to select individuals for local search Local refinement with PS and probabilistic selection strategy are confirmed Experimental results show that the proposed MA outperforms established counterparts 1 A PSO and Pattern Search based Memetic Algorithm 1 for SVMs Parameters Optimization 2 Yukun Bao * , Zhongyi Hu, Tao Xiong 3 Department of Management Science and Inf ormation S y st ems, 4 School of Management, Huazhong University of Science and Technology, Wuhan, 5 P.R.China, 430074 6 Abstract 7 Addressing the issue of SVMs parameters opti mization, t his study proposes an effi cient 8 mem etic algorithm based on Particle Swarm Optim ization algorithm (PSO) and Pattern Search 9 (PS). In the proposed memetic algorit hm, P SO is responsible for exploration of the search space 10 and the detection of the potential regions with optim u m soluti ons, while pattern search (PS) i s 11 used to produce an ef fective exploitation on the potential regions obtained by P SO. Moreover , a 12 novel probabilistic selection strategy is proposed to select the appropriate individuals among the 13 current populati on to u nder go local refinement, keeping a well balance between explorati on and 14 exploitati on. Experiment al results confirm that the local refinement with PS and our proposed 15 selection strategy are effect ive, and finally de monstr ate ef fectiveness and robustness of the 16 proposed PS O- PS based MA for SVMs param eters optimization . 17 18 Key words: Param eters Opt imizat ion; Support V ector Machines; Memet ic Algorithm s; Particle 19 Swarm Optimizati on; Pattern Search 20 21 * Corresponding author: T el: +86- 27 - 62559765; fax: +86- 27 -8755643 7. Email: yukunbao@mail.hust.edu.cn or y .bao@ieee.org 2 1. Introduction 1 Support vector m achines (SVMs), first presented by V apnik [1] based on Statist ical Learning 2 Theory (SL T) and Structural Risk Minimization principle (SRM), solv e the classification problem 3 by maxim izing the margin between the separating hy per-plane and the data. SVMs im plement the 4 St ructural Risk Minimizat ion Principle (SRM), which seeks to minimi ze an upper bound of the 5 generalizati on error by using penalty paramet er C as trade-of f between training error and model 6 complexi ty . The use of kernel tricks enables the SVMs to have the ability of dealing with 7 nonlinear features in high dim ensional feature space. Due to the excellent general ization 8 perform ance, SVMs have been widely used in various areas, such as pattern recognition, text 9 categorizati on, fault diagnosis and so on. H owever , the generalization ability of SV Ms highly 10 depends on the adeq uate setting of param eters, such as penal ty coef ficient C and kernel param eters. 11 Therefore, the selection of the optim al param eters is of critical im portance to obtain a good 12 perform ance in handling l earning t ask with SVMs . 13 In this study , we mainly concentrate on the paramet ers optimization of SVMs, which has 14 gained great attentions in the past several years. The most popular and univer sal method is grid 15 search, which conducts an exhaustive search on the parameters space with the validation error 16 (such as 5-fold cross validation error) minim ized. Obviously , al though it can be easily parallelized 17 and seems safe [2] , its computational cost scales exponentially with the number of parameters and 18 the number of the sampl ing points for each paramet er [3] . Besides, the perform ance of grid search 19 is sensitive to the setting of the grid range and coarseness for each parameter , which are not easy 20 to set without prior knowledge. Instead of minim izing the validation error , another stream s of 21 3 studies focused on minimizing the approximat ed bounds on generalization perform ance by 1 numeri cal optimization methods [4 -12] . The numeri cal optimizat ion methods are generally more 2 ef ficient than grid search for their fast conver gence rate. H owever , this kind of methods can only 3 be suitable for the cases that error bounds are dif ferentiabl e and continuous with respect to the 4 paramet ers in SVMs. It is also worth noting that the numerical optimization methods, such as 5 gradient descent, may get stuck in local optim a and highly depend on the starting points . 6 Furtherm ore, experim ental evidence showed that several established bounds methods could not 7 compete with traditional 5-fold cross-validation method [6] , which indicates inevitably gap 8 between the approxim ation bounds and real error [13] . Recently , evolutionary algorithms such as 9 Genetic algorithm (GA ), parti cle swarm optimization (PSO), ant colony optimization (ACO) and 10 sim ulated annealing algorithm (SA) have been employed to optim ize the SVMs param eters for 11 their better global search abilities against nu meri cal optimization methods [14 -19] . However , GAs 12 and other evolutionary algorithms (EAs) are not guaranteed to find the global optimum solution to 13 a problem, though they are generally good at finding “acceptable good” or near -optim al solutions 14 to problems. Another drawback of EAs is that they are not well suited to perform finely tuned 15 search, but on the other hand, they are good at exploring the solution space since they search from 16 a set of designs an d not from a single desi gn. 17 Memet ic Algorithms (MAs), first coined by Moscato [20,21] , hav e been re garded as a n 18 promi sing framework that combi nes the Evolutionary Algorithms (EA s) with problem- specific 19 local searcher (LS), where t he latter is often referred to as a meme defined as a unit of cultural 20 evoluti on that is capable of local r efinem ents. From an optimi zation point of view , M As are hy brid 21 4 EAs that combine global and local search by using an EA to perform explorati on while the local 1 search m ethod performs exploit ation. This has the ability to exploit the com plementary advantages 2 of EAs (generality , robustness, global search efficiency ), and problem-specific local search 3 (exploiti ng application- specific problem structure, rapid convergence toward local minima) [22] . 4 Up to date, MAs have been recog nized as a powerful al gorithm ic paradigm for ev olutionary 5 computi ng in a wide variety of areas [23-26] . In particular , the relative advantage of MAs over 6 EAs is quit e consistent on co mpl ex search spaces. 7 Since the paramet ers space of SVMs is often consi dered complex, it is of interest to justify 8 the use of MAs for SVMs param eters optimizati on. T here have been, if any , few works related to 9 MAs reported in the l iterature of SVMs parameters optimization. In this study , by combining 10 Particle Swarm Optimization algorit hm (PSO) and P attern Search (PS), an effici ent PSO -PS based 11 Memet ic Algorithm (MA ) is proposed to opti mize t he param eters of S VMs. I n the propose d 12 PSO -PS based MA , PSO is responsi ble for explorati on of the search space and the detection of the 13 potential regions with optimum solution, while a direct search algorithm , pattern search (PS) in 14 this case, is used to produce an eff ective exploitation on the potential regions obtained by P SO. 15 Furtherm ore, the problem of selecting promising individuals to experience local refinement is also 16 addressed and thus a novel probabilistic selection strateg y is proposed to keep a balance between 17 exploration and ex ploitati on. The perfor mance of proposed P SO-PS based MA for parameter s 18 optimizat ion in SVMs is justified on several benchmarks against selected established counterpar ts. 19 Experiment a l resul ts and c omparisons demonstr ate the ef fectiveness of the proposed PSO-PS 20 based MA for parameters opt imizati on of SVMs. 21 5 The rest of the study is or ganized as follows. Section 2 presents a brief review on SVMs. 1 Section 3 elaborates on the PSO-PS based MA proposed in this study . The results with discussions 2 are reported in Section 4. F inally , we conclude this study in Section 5. 3 2. Support Vector Machines 4 Consider a binary classificati on problem involvi ng a set of trai ning 5 dataset 1 1 2 2 {( , ), ( , ). .. ( , )} n nn y y y R R x x x , where i x is input space, { 1 ,1 } i y is the labels of 6 the input space i x and n den otes the number of the data items in the training set. Based on the 7 structured risk minimizati on (SRM) principle [1] , SVMs aim to generate an optimal h yper -pl ane 8 to separate t he two classes b y minimizing the regul arized t raining error: 9 2 1 1 min 2 . . , 1 , 1 , 2..... 0, 1 , 2..... n i i i i i i C s t y b i n in w wx (1) 10 Where, , denotes the inner product; w is the weight vect or , which controls the smoothness 11 of the model; b i s a parameter of bias; i is a non-negative slack var iable w hich defi nes the 12 permi tted miscl assification error . In the regularized training error given by Eq. (1) , the first term, 13 2 1 2 w , is the regular ization ter m to be used as a m easure of flatness or com plexity of the function. 14 The second term 1 n i i is the empirical risk. Hence, C is referred to as the penalty coefficient and 15 it specifi es the trade- o ff between the em pirical ri sk and the regulari zation term . 16 According to W olfe‟ s Dual Theorem and the saddle -point condition, the dual optimizati on 17 problem of the above pri mal one is obtained as t he followi ng quadratic progr amm ing form : 18 6 1 , 1 1 1 ma x , 2 . . 0, 0 , 1 , 2..... nn i i j i j i j i i j n i i i i yy s t y C i n xx (2) 1 Where () i i n are nonnegati ve Lagrangian mult ipliers that can be obtained by solving the 2 convex quadrati c program ming problem stated above. 3 Finally , by sol ving Eq. (2) and using the trick of kernel function, the decision function can be 4 defined as the fol lowing ex plicit f orm: 5 1 ( ) sgn ( , ) n i i i i f x y K b xx (3) 6 Here, ( , ) i K xx is defined as kernel funct ion. The value of the kernel function is equival ent to 7 the inner product of t wo vectors i x an j x in the high- dimensional feature space 8 () i x and () j x , that is, ( , ) ( ), ( ) i j i j K x x x x . The elegance of using the kernel funct ion is 9 that one can deal with feature spaces of arbitrary dimensionality without having to compute the 10 map () x explicitly . Any function that satisfi es Mercer ‟ s condition [27] can be used as the kernel 11 function. The typical exam pl es of kernel funct ion are as f ollows: 12 Linear kernel : ( , ) , i j i j K x x x x 13 Poly nomial kernel: ( , ) , , 0. d i j i j Kr x x x x 14 Radial basis f unction (RBF ) kernel: 2 ( , ) e xp , 0. i j i j K x x x x 15 Sigmoi d kernel: ( , ) t an h , . i j i j Kr x x x x 16 Where, , r and d are kernel parameters. The kernel parameter should be carefully chosen as 17 it impl icitly defines the structure of the high dim ensional feat ure space () x and thus cont rols the 18 complexi ty of the final solution [28] . General ly , am ong these ker nel functions, RBF kernel is 19 strongly recomm ended and widely used for its performance and complexi ty [2] and thus SVMs 20 7 with RBF kernel function is the one studi ed in thi s study . 1 Overall, SVMs are a powerful classifier with strong theoretical foundations and excell ent 2 generalizati on perform ance. Note that before im plem enting the SVMs with RBF kernel, there are 3 two parameters (penalty parameter C and RBF kernel parameter ) have to set. Previous studies 4 show that these two parameters play an important ro le on the success of SVMs. In this study , 5 addressing the selection of these two param eters, a P SO-PS based Memet ic Algorithm (MA) is 6 proposed and j ustified w ithin the cont ext of SVMs with R BF ker nel function. 7 3. Proposed Memetic Algorithms 8 Memet ic Algorithms (MAs), first coined by Mosca to [20,21] , have come to light as an union 9 of population- based stochastic global search and local based cultural evoluti on, which are inspired 10 by Darwinian pri nciples of nat ural evoluti on and Dawkins notion of a mem e. The meme is defined 11 as a unit of cul tural evolution t hat is capable of local /individual refinements. As desi gned as 12 hybridi zation, MAs are expected to make full use of the balance between exploration and 13 exploitati on of the search space to complem ent the advantages of population based methods and 14 local based methods. Nowaday s, MAs have revealed their successes with high performance and 15 superior robustness across a wide range of problem domains [29,30] . However , according to the 16 No Free Lunch theory , the hy bridization can be more com plex and expensiv e to implem ent. 17 Considering the simplici ty in implement ation of particle swarm optimizations (PSO) and its 18 proven performance, being able to produce good solutions at a low computational cost [16,31-33], 19 this study proposed a PSO based mem etic algorithm with the patt ern search (PS) as a local 20 8 indivi dual learner , to solve the parameters optimization problem in SV Ms. T he concept of the 1 proposed Memetic algorit hms is illustrated in Fig.1 . In the proposed P SO- PS based MA , PSO is 2 used for exploring t he global search space of param eters, and pattern search is deserv ed to play the 3 role of local exploitati on based on it s advantages of simpl icity , flexibility and robustness . 4 In the following sub-sections, we will explain the implementati on of the proposed PSO - PS 5 MA for paramet ers optim ization in detai ls. 6 3.1. Repr esentation and Initialization 7 In our MA , each part icle/indiv idual in the population is a potential solution to the SVMs 8 paramet ers. And its status is characterized according to its posit ion and v elocity . The 9 D-dim ensional position for the particle i at iteration t can be repres ented as 1 , , t t t i i i D x x x . 10 Likewi se, the fly velocity (position change) for parti cle i at iteration t can be descr ibed as 11 1 , , t t t i i i D v v v . In this st udy , the position and velocity of one particle have only two dim ensions 12 that denote the two param ete rs ( C and ) to be optimi zed in SVMs. A set of particles 13 1 { , , } t t M P x x is called a sw arm ( or population). 14 Initial ly , the particles in t he population are randomly generated in the solution space 15 according to Eq.(4) an d Eq.(5) , 16 m in , m a x, m in, () id d d d x x r x x (4) 17 m in , m ax , mi n, () id d d d v v r v v (5) 18 Where id x and id v are the position value and veloci ty in the th d dimens ion of the 19 th i particle, respectively . r is a random number in the range of [0,1]. min, d x and ma x, d x denotes the 20 9 search space of the th d dimension (t hat is , the upper and lo wer lim it of the param e ters in SVMs) , 1 while min, d v and ma x, d v is used to co nstraint the vel ocity of the par ticle to av oid the particle 2 flying outsi de the search s pace. 3 After the initializati on of the population, the algorithm iteratively generates offspring using 4 PSO based operator and under goes local refinem ent with pattern search, which is to be discussed 5 in the foll owing sub- sections. 6 7 Initialize the population Offspring generation Begin Is termination condition satisfied? No Update gbest Next generation End Refinement with pattern search Is termination condition satisfied? Evaluate the neighbors of current point No Is improvement obtained? Update current point Yes No Decrease search step Next Yes Replace the particles and update the pbest Evaluate the swarm and initialize the pbest , gbest Evaluate the swarm and update the pbest , gbest Return optimal particle Yes Selection of particles to be refined Refinement with pattern search Selected particle 8 Fig.1. Flowchart of the proposed PSO- PS base d Memetic Algorithm 9 10 3.2. Fitness Function 1 The objective of optimizing the parameter s in a SVMs mod el is to maximize the 2 generalizati on ability or m i nimi ze generalization error of the m odel. As cross vali dation can 3 provide unbiased estim ation of the generalization error, in this study, we take the k-fold cross 4 validat ion error into consideration. Let kC VM R denotes the mean of the k folds ‟ 5 misclassi fication rate ( MR ). With the use of cross validation, k CV M R is deserved to be a 6 criterion for generaliza tion a bility of a model. H ence, kC VM R is used as fitness function in this 7 study. The fi tness functi on and errors ar e shown as f ollow s: 8 1 1 k j j Fitness kCVMR kCVM R MR k (6) 9 Where, k is the size of folds. In this study , 5-fold cross validation is conducted, which is 10 widely used and suggested i n [34] . 11 3.3. PSO based Operator 12 In the proposed PSO-PS based MA, PSO based operat ors are used to explore the search 13 spac e. Particl e Swarm Optimizati on (PSO) [35] is a popul ation- based meta-heuri stic that sim ulates 14 social behavi or such as birds flocking to a prom ising position to achieve precise objectives (e.g., 15 finding food) i n a mult i-dimensional spa ce by interacting among them . 16 T o search for the optimal solution, each particle adjusts their flight trajectories by using the 17 followi ng updating equat ions: 18 1 1 1 2 2 ( ) ( ) t t t t id id id id gd id v w v c r p x c r p x (7) 19 11 11 t t t id id id x x v (8) 1 Where 12 , cc are accelerati on coef ficients, w is inertia weight, 12 an d rr are random 2 numbers in the range of [0,1]. t id v and t id x denote the velocity and position of the i th particle in d th 3 dimens ion at t th iteration, respectively . id p is the value in dimensi on d of the best parameters 4 combinat ion (a particle) found so far by particle i . 1 , , i i i D p p p is called personal best ( pbest ) 5 position. gd p is the value in dim ension d of the best parameters combinat ion (a parti cle) found s o 6 far in the swarm ( P ); 1 , , g g g D p p p is considered as the global best ( gbest ) position. N ote 7 that each particle takes individual ( lbest ) and social ( gbest ) information into account for updating 8 its v elocity and posi tion. 9 In the search space, parti cles track the indiv idual‟ s best values and the best global values . The 10 process is terminated if the number of iteration reaches the pre-determi ned maxim um number of 11 iterati on. 12 3.4. Refinement w ith Pattern S earc h 13 In the proposed PSO-PS based MA, pattern search is employed to conduct exploitation of the 14 paramet ers solution space. Pattern search (PS) is a kind of numeri cal optimization m ethods that do 15 not require t he gr adient of the problem to be optimized. It i s a simple ef fective optimization 16 technique suitable for optim izing a wide range of objective functions. It does not require 17 derivat ives but direct function evaluation, making the method suitable for parameters optimizati on 18 of SVMs with the vali dation error minimized. The conver gence to local minima for constrained 19 problem s as well as unconstrained problem s has been proven in [36] . It investigates nearest 20 12 neighbor param eter vectors of the current point, and tries to find a better move. If all neighbor 1 points fail to produce a decrease, then the search step is reduced. This search stops un til the search 2 step gets suff iciently s mall , which ensuring t he conver gence t o a l ocal m inimum. The p attern 3 search is based on a pattern P k that defines the neighbor hood of curre nt points. A wel l often used 4 pattern is five-point unit-si ze rood pattern which can be represented by the generating matrix P k in 5 Eq.(9) . 6 1 0 1 0 0 0 1 0 1 0 k P (9) 7 The procedure of pattern search is outlined in Fig.2 . ∆ 0 d enotes the default search step of PS, 8 ∆ is a search step, p k is a column of P k , denotes the neighborhood of the center point . T he 9 terminat ion conditions are: the maximum iteration is met, or the search step gets a predefined 10 small value. To bal ance the amount of computational budget allocated for expl oration versus 11 exploitati on, ∆ 0 /8 is em pirically selected as t he minimum search step. 12 Algorithm 1. Refinement with pattern search for parameters optimization Begin Initialize : predefine the d efault sea rch step ∆ 0 ,initialize center point p 0 ; ∆ = ∆ 0 ; While (Termination conditions are not satisfied) 0 { * | fo r e a c h c ol u m n in } k k k p p p P ; Evaluate the nearest neighbors in ; If (there are improvements in th e ) Update the current center t o the best neighbor in ; ∆ = ∆ 0 ; Else Decrease the search step ∆ = ∆ 0 /2; End If En d While End Fig.2 . Pseudocode of pattern search for local refinement 13 13 3.5. A P robabi listic Selecti on S trategy 1 An important issue in MAs to be addressed is which part icles from the population should be 2 selected to undergo local improv ement (see “ Selection of particles to be ref ined ” procedure in 3 Fig.1 ). This selection directly defines the balance of evolution (exploration) and the indiv idual 4 learning (exploitation) under limited computational budget, which is of importance to the succes s 5 of MAs. In previous studies, a comm on way is to apply a local search to al l newly generated 6 of fspring, which suffers from much strong local exploitati on. Another way is to apply local search 7 to particles with a predefined probabili ty p l , which seems too blind and the p l is not easy to define 8 [37] . B esides, as [38] sugge sts that those solutions that are in proximity of a promising basin of 9 attracti on received an extended budget, the randomly selected particles may needs more cost to 10 reach the optimal. Hence, it seems a good choice that only the particles with the fitness larger than 11 a threshold v are selected for local i mprov ement [39] , while the threshold is problem specific and 12 some of t he selected part icles m ay be crow ed in the sam e promisi ng region. 13 T o this end, a probabilist ic selection strategy of selecting non-crowed individuals to undergo 14 refinem ent is proposed in thi s study . Beginning wit h the best indiv idual, each individual under go es 15 local search with the probability () l px (in Eq.( 10 ) ), a individual with better fitness has a more 16 chance to be selected for exploit ation. T o avoid exploiting the sam e region simultaneously , once 17 an individual is selected to be refined, those individual s crowd around the selected indiv idual (i.e., 18 the Euclidean distance is less than or equal to r ) is eliminated from the population. The only 19 paramet er in the proposed strategy is r , which denotes th e radius of the exploitati on region. The r 20 is selected empiri cally in this study . Detail of this strat egy is illust rated in Fi g.3 . 21 14 Formal ly , the selection probabil ity () l px of each solution x in the current population P is 1 specified by the following roulette- wheel select ion scheme wi th the linear scaling: 2 ma x ma x ( ) ( ) () ( ) ( ) l y f f x px f f y P P P (10) 3 Where ma x () f P is the m aximum ( worse) fitness v alue a mong the cur rent populati on P . 4 Algorithm 2. A Probabilistic Selection Strategy Input: current population P , constant radius r Output: a set of selected individuals that undergo local refinem ent ; Begin Initialize : P ’ as a copy of P ; as a null set ; While ( P ’ is not NULL ) Get the best fitness individual x belongs to P ’ , i.e., a rg m in{ ( ) | ' } x f x x P ; Calculate () l px according to Eq.(10) ; If ( ) (0 ,1 ) l p x r an d {} x ; Remove x from P ’ ; F or each individual y in P ’ If ( , ) d x y r , then rem ove y from P ’ ; End For E nd If End While End Fig.3. Pseudoco de of propos ed probabilistic s election str ategy 5 4. Experimental Results and Discussions 6 4.1. Experiment al Setup 7 In this study , we conduct two experiment s to justify the proposed PSO-PS based MA as well 8 as the proposed proba bilisti c selection st rategy . The first experiment aim s to investigat e the 9 perform ance of the four v ari ants of the propos ed PSO- P S based M A with dif ferent selection 10 15 strategies and thus the best variant is determined. Then, the best selected variant is employed to 1 compare agai nst the est ablished count erparts in l iterature i n the second experim ent. 2 LibSVM (V ersion 2.91) [34] is used for the im plementati on of SV Ms. PSO a nd sev eral 3 variant s of proposed MA are coded in MA TLAB 2009b using computer with Intel C ore 2 Duo 4 CPU T5750, 2G RAM. As suggested by Hsu et al. [2] , the search space of the parameters is 5 defined as an expone ntially growi ng space: 22 l og ; l og X C Y and 1 0 1 0 ; 10 10 XY . 6 Through initial experim ents, the paramet ers in PSO and MA are set as follows. The acceleration 7 coef ficients c 1 and c 2 are both equals to 2. For w , a time varying inertia weight linearly decreasing 8 from 1.2 to 0.8 is employed. The default search step ( ∆ ) in pattern search is set to 1. The radius of 9 the exploitat ion region ( r ) us ed in the prob abilist ic selection st rategy equals to 2. 10 T able 1 Details of the benchmark datasets 11 Data sets No. of attributes No. of training set No. of testing set No. of groups Banana 2 400 4900 100 Breast cancer 9 200 77 100 Diabetis 8 468 300 1 00 Flare solar 9 666 400 100 German 20 700 300 100 Heart 13 170 100 100 Image 18 1300 1010 20 Ringnorm 20 400 7000 100 Splice 60 1000 2175 20 Thyroid 5 140 75 100 Titanic 3 150 2051 100 Twonorm 20 400 7000 100 Waveform 21 400 4600 100 T o test the performance of the proposed PSO- PS based memet ic algorithms, computational 12 experim ents are carried out against some well-studied benchmarks. In this stud y , thirteen 13 16 commonly used benchmark datasets from IDA Benchmark Reposit ory 1 are used. These datasets, 1 having been preprocesse d by [40] , consist of 100 random groups (20 in the case of image and 2 splice datasets) with training and testing sets (about 60%:40%) describing the binary classification 3 problem . The dataset in each group has already been normalized to zero mean and unit standard 4 deviati on. For the dataset in each group, the training set is used to select the optim al parameters in 5 SVM based on 5-fold cross validation and establish the classifier , and then the test set is used to 6 assess the classifier with the optimal parameters. T able 1 summ arizes the general information of 7 these dataset s. 8 4.2. Experiment I 9 In the proposed PSO- PS based MA, a pattern sear ch is i ntegrated into PSO. So it is worth to 10 evaluat e the influence of pattern search. Besides, in order to establish an efficient and robust MA, 11 the selection of particles to be local ly improved plays an important r ole. Hence, in the first 12 experim ent, a single PSO and four variants of proposed MA with dif ferent selection strategies are 13 considered. The following a bbreviat ions represent t he algorithm s considered in this sub- section. 14 PSO : a single PSO without l ocal search; 15 MA 1 : all newly generated particles ar e refined wi th PS; 16 MA2 : PS is a pplied to eac h new part icle wit h a predefined probability p l =0.1; 17 MA 3 : PS is only applied to the two best fitness pa rticles; 18 MA4 : P S is applied to the select ed particles by probabilistic selection strategy proposed in 19 1 http ://www .raetschlab.org/Members/raetsch/benchmark / 17 section 3.5; 1 GS : a grid se arch method on the sear ch space, wi th the se arch step equ als to 0.5. 2 This experiment is conducted on the first groups of the top six datasets ( Banana , Breas t 3 cancer , Diabetis , Flar e solar , German and Heart ). The population size for PSO and each MA is 4 fixed to 15. The stopping criteri ons of PSO are set as follows: the number of iterations reached 5 100 or there is no im provement i n the fitness for 30 cons ecutive iterations. T o compare t he 6 algorithm s fairly , each MA stops when the num ber of the fitness ev aluations re aches the m aximum 7 value that equals to 1500, or there is no improv ement in the fitness value for 450 consecutive 8 iterati ons. Besides, grid search (GS) evaluates the fitness 1681 times by sampling 41× 41 points in 9 the parameters ‟ search space. For the purpose of reducing statistical errors, each benchmark is 10 independent ly sim ulated 30 tim es, and the err or rates and n umbers of fitness eval uations are 11 collected for compari son. 12 T able 2 describes the results obtained with the above algorithms. The colum n of „ error ‟ 13 shows the mean and standard deviation of the error rates (%) for 30 times. The “ #eva ” gives the 14 mean and standard deviat ion of the number s of fitness evaluat ions. The “ A ve ” row shows the 15 average error rate (%) and average number of fitness evaluations on all datasets. The last row 16 “ A veRank ” reports the average of the rank computed through the Fr iedman te st for each 17 algorithm on all datasets. 18 F ro m T able 2 , we can see that, no matter which kind of strategy is select ed in MA , the 19 perform ance in term of mean error rate is always better than that obtained b y single PSO , which 20 demonst rates the effectiveness of incorporating the local refinem ent with P S into PSO. Besides, 21 18 from the view of standard deviation, it can be seen that the perform ance of proposed PSO-P S 1 based MA is more stable than single PSO. As pattern search is employed to refine the individual s, 2 the number of fitness evaluations of each MA is larger than that of the P SO, wh ile it can be 3 regarded as the cost to avoid premature conver gence and conduct finely local refinement to obtain 4 better perform ances. Actually , the average number of fitness evaluat ion of MA is larger than that 5 of PSO by only at most 13% ( e.g. MA3 ). F inally , compared with G S, both PSO and MA achiev e 6 better results than G S in terms of test error rates and number of fitness evaluations. The facts 7 above imply that among the six algorithm s investigat ed, four variants of proposed MA tend to 8 achieve the s mal lest error rates with a little more fitness evaluati ons than that of PSO but still 9 much les s than that of gr id search. 10 By comparing four variant s of proposed MA , several results can be observed. It is interesting 11 to note that MA1 , where PS is applied to all newly generated individual s, does not achieve as good 12 results as expected. This may be due to the premature conver gence of the solutions because of the 13 strong local refinement is a pplied to the population. MA2 performs comparably with the MA1 , 14 which indicates that the random ly se lected strategy b y probability p l is also failed. MA3 gains 15 better results than MA1 and MA2 , and even has compet itive results with MA4 on some datasets 16 ( e.g. Diabetis dataset). Finally , MA4 always performs the best in terms of the error rate on each 17 dataset and the average error rate on all datasets, while the number of fitness evaluations does not 18 increase too much than that of the other three variants of MA. All the above results suggest the 19 success of our proposed probabilisti c selection strategy used in MA4 , highlighted by addressing 20 the balance of exploration and exploitati on in the proposed MA. Hence, we can conc lude that the 21 19 MA4 with the proposed probabil istic selection strategy is effectiv e and robust for solving 1 paramet ers optim ization in SVMs model ing. 2 In addition, the average computati onal time of each method is given in T able 3 . It is obvious 3 that all of the four variants of proposed MA consume more time than the single PSO in almost all 4 the datasets , which is m a inly due to refinem ent with pattern search. Am ong the four var iants of the 5 proposed MA with dif ferent selection strategy , the MA4 consumes a little more time than the other 6 three var iants. Since t he accuracy of a SVM m odel is often mor e important and the consumed tim e 7 of MA 4 is still much less than that of the grid search, MA4 is selected as the best one to perform a 8 further com parison i n subsecti on 4.3 with the est ablished counterparts i n the liter ature. 9 4.3. Experiment II 10 Furtherm ore, following the same experimental settings as in [12,13,40] , the second 11 experim ent will directly compare the perform ance of t he best variant as MA4 of the proposed 12 PSO - PS based M A with established al gorithm s in previous st udies. 13 The experim ent is conducted as follows. At first, on each of the first five groups of every 14 benchmark dataset, the par ameter s ar e optimized using the propose d M A4 , and then the final 15 paramet ers are computed as the median of obtained fiv e estim ations. A t last, the mean error rate 16 and standard deviation of SVMs with such parameters on all 100 (20 for image and splice datasets) 17 groups of each dataset ar e taken as the results of the corresponding algorithm. T able 4 and Fig.4 18 show the comparison of m ean testing error rates (%) and the standard deviations (%) obtained by 19 the proposed PSO-PS based MA and other algorithm s proposed in previous st udies [12,13,40] . F or 20 20 T able 2 Comparison of the variants of the proposed MA with PSO and GS 1 PSO MA1 MA2 MA3 MA4 GS error #eva error #eva error #eva error #eva error #eva error #eva Banana 11.50± 0.75 921.5 ± 87 11.03± 0.68 997.1± 65 11.05± 0.68 1031.2± 73.6 10.75± 0.52 1108± 70.3 10.63± 0.48 1029.7± 75.5 11.9 1681 Breast cancer 26.73± 5.16 987 ± 118.5 25.58± 4.87 1058.6± 101 25.65± 4.80 1135.8± 119.4 24.91± 4.72 1165.8± 126.7 24.69 ± 4.75 1109± 115.3 25.97 1681 Diabetis 25.31± 2.35 993.1 ± 87 24.41± 2.30 1088.3± 96 24.59± 2.21 1126.6± 105 23.47± 1.98 1140.5± 91.8 23.47± 1.98 1110.8± 94.4 23.67 1681 Flare solar 33.50± 2.27 1026.5 ± 94.5 33.31± 2.06 1071.5± 87.7 33.18± 2.20 1093.7± 109.5 32.71± 2.01 1184.3± 103.3 32.56± 1.8 1055.2± 80.6 34.25 1681 German 28.03± 2.96 1061 ± 103.5 25.66± 2.50 1153.6± 93.5 25.49± 2.73 1128.6± 114.4 24.36± 2.43 1109.5± 98.1 24.01± 2.26 1079.7± 91.5 24.33 1681 Heart 17.34± 3.9 901.5 ± 108 16.85± 3.26 985.3± 99.7 16 .74± 3.26 1047.6± 89.6 16.18± 2.87 994.4± 93.6 16.13± 2.45 976.8± 90.7 19 1681 Ave 23.74± 2.90 981.8 ± 99.8 22.81 ± 2.61 1059.1± 90.5 22.78 ± 2.65 1093.9± 101.9 22.06 ± 2.42 1117.1± 93.6 21.92 ± 2.29 1060.2± 90.7 23.19 1681 AveRank 5.00± 5.00 1 ± 3.5 3.83± 3.50 2.8± 2.3 3.83± 3.50 4.2± 3.8 2.08± 1.75 4.5± 3.2 1.08± 1.25 2.5± 2.17 4.33 6 2 T able 3 A verage comput ational ti me in seconds of ea ch method 3 PSO MA1 MA2 MA3 MA4 GS Banana 126.35 129.89 147.81 145.76 149.57 187.8 Breast cancer 56.71 59.69 62.48 62.01 67.25 74.5 Diabetis 275.03 288.73 264.02 27 8.56 311.61 358.13 Flare solar 405.88 402.13 447.31 474.37 432.12 564.97 German 687.53 700.78 764.22 697.11 787.2 994.01 Heart 34.54 35.94 40.34 35.21 37.07 53.64 A ve 264.34 269.53 287. 70 282.17 297.47 372.18 A veRa nk 1.33 2.50 3.67 3.00 4.50 6.00 21 convenience of compari son, the best errors for every dataset are bolded. The first column reports 1 the results obtained by two stages grid search based on 5 fold cross validation from [40] . T he 2 results of second and third column, both reported in [13] , are respectiv ely obtained by a 3 radius-m ar gin method and a fast-ef ficient strategy . “ L1- CB ” and “ L2 - CB ” respectiv ely show the 4 results of hybridi zation of CLPSO and B F GS by minimizing the L1-SVMs and L2 -SVMs 5 generalizati on errors in [12] . Among them, although no method can outperform all the others on 6 each dataset, the proposed PSO-PS based MA has lowest error on more than a half of the datasets. 7 From the perspectiv e of average rank, the proposed MA gains the rank 1.54± 1.88, which is the 8 best one among the six methods investigated. Final ly , the W ilcoxon signed rank test is used to 9 verify the performance of the proposed approach against “ CV ” for each dataset 2 . The results show 10 that the proposed MA is significant ly superior to “ CV ” in terms of error rate on eight datasets. T o 11 be concluded, those results imply our proposed approach is competitive with other met hods and 12 even more ef fective and robust than others, which indica te that our proposed PSO- PS based MA is 13 a practical m ethod for parameters opti mization i n SVMs. 14 5. Conclusions 15 The parameters of SV Ms are of great importance to the success of the SVMs. T o optimize the 16 paramet ers properly , this study proposed a PSO and pat tern sear ch (PS) based Mem etic Algorithm . 17 In the proposed PSO-PS based MA, PSO was used to explore the search space and detect the 18 potential regions wi th optimum solutions, while pattern search (PS) w as used to conduct an 19 ef fective refinement on the potential regions obtained by PSO. T o balan ce the explorat ion of PSO 20 and exploitation of PS, a probabilist ic selection strategy was also introduced. The performance of 21 proposed PSO-PS based MA was confirm ed through experiments on several benchm ark datasets. 22 The results can be summ arized as: (1) with dif ferent kinds of selection strategy , the variants of the 23 proposed MA are all superior to single PSO an d GS in term s of error rates and standard devi ations, 24 which confir ms the s uccess of refinem ent with pattern search. (2) Although the number of f itness 25 2 Only “ CV ” and “ Propo sed MA ” are compared using W ilcoxon signed rank test, because the detail results of others approaches on each dataset are not available. The detail results of “ CV ” on each dataset are available at http://www .raetschlab.o rg/Mem bers/raetsch/benchmark/ . 22 T able 4 Comparison with established algorithms 1 Dataset CV [40] RM [13] FE [13] L1 - CB [12] L2 - CB [12] Proposed MA Banana 11.53± 0.66 10.48± 0.40 10.68± 0.50 11.65± 5.90 10.44± 0.46 10.35± 0.40*** Breast Cancer 26.04± 4.74 27.83± 4.62 24.97± 4.62 28.75± 4.61 26.03± 4.60 24.37 ± 4.61* Diabetis 25.53± 1.73 34.56± 2.17 23.16 ± 1.65 25.36± 2.95 23.50± 1.66 23.17± 1.35*** Flare Solar 32. 43± 1.82 35.51± 1.65 32.37± 1.78 33.15± 1.83 33.33± 1.79 32.07 ± 1.73* German 23.61± 2.07 28.84± 2.03 23.41 ± 2.10 29.26± 2.88 24.41± 2.13 23.72± 2.05 Heart 15.95 ± 3.26 21.84± 3.70 16.62± 3.20 16.94± 3.71 16.02± 3.26 15.95± 2.11 Image 2.96± 0.60 4.19± 0.70 5.55± 0.60 3.34± 0.71 2.97± 0.45 2.90 ± 0.65 Ringnorm 1.66± 0.12 1.65± 0.11 2.03± 0.25 1.68± 0.13 1.68± 0.12 1.66± 0.12 Splice 10.88± 0.66 10.94± 0.70 11.11± 0.66 10.99± 0.74 10.84 ± 0.74 10.89± 0.63 Thyroid 4.80± 2.19 4.01± 2.18 4.4± 2.40 4.55± 2.10 4.20± 2.03 3.4 ± 2.07*** Titanic 22.42± 1.02 23.04± 1.18 22.87± 1.12 23.51± 2.92 22.89± 1.15 21.58 ± 1.06*** Twonorm 2.96± 0.23 3.07± 0.246 2.69± 0.19 2.90± 0.27 2.64± 0.20 2.49± 0.13 ** Waveform 9.88± 0.43 11.10± 0.50 10± 0.39 9.78± 0.48 9.58± 0.37 9.30± 0.37*** Ave 14.67± 1.50 16.70± 1.55 14.60± 1.50 15.53± 2.25 14.50± 1.46 13.99± 1.33 AveRank 3.38± 3.73 4.62± 3.69 3.62± 3.42 4.81± 5.31 3.04± 2.96 1.54± 1.88 * Statistic ally significant at the level of 0.05 when compared to CV . 2 ** S tatistically significant at the level of 0.01 when compared to CV . 3 ** * Statistically significant at the leve l of 0.001 when compared to CV . 4 Bold values are the best ones for each dataset 5 1 2 3 4 5 6 7 8 9 10 11 12 13 0 5 10 15 20 25 30 35 40 (a ) M ea n of err or ra tes 1 2 3 4 5 6 7 8 9 10 11 12 13 0 1 2 3 4 5 6 (b ) S t and ard dev i ation of er ro r rates CV RM EF L1 -C B L2 -C B Prop osed MA CV RM EF L1 -C B L2 -C B Prop osed MA 6 Fig. 4. Results on 13 benchmar k datasets: (a) Mean of error rates; (b) Standard deviation of erro r rates 7 8 evaluat ions and computat ional time of each MA are slightl y inferior to PSO, each MA consum es 9 much less fitness evaluati ons and computational tim e than grid search. (3) Among t he vari ants of 10 the proposed MA, the MA with our proposed probab ilisti c selection strategy gains the best 11 perform ance in terms of error rates and standard dev iations. (4) Compar ed with established 12 counterparts in previous studies, the proposed PSO-PS based MA using proposed probabilistic 13 23 selection strategy is capable of yielding higher and more robust perform ance than others. Hence, 1 the proposed PSO-PS based MA can be a promising alternativ e for SVMs parameters 2 optimizat ion. 3 One should note, however , that this study only focus on the PSO-PS based MA for 4 paramet ers optimization in SVMs, some other varieti es of PSO and evolutionary algorithm s ca n 5 also be used in the proposed MA. B esides, as the rapid development of hardware, the 6 paralleli zation of the proposed MA to make full use of the increasingly vast co mput ing resources 7 (e.g., mul tiple cores, GPU, cloud computing) is another interest ing topic. Other topics include 8 invest igating adaptive parameters setting of the proposed algorithms, co mpari ng more extensively 9 with other existing EA s or MAs, and developing more ef ficient memetic algorithms in dealing 10 with other designing issues, e.g., p opulation managem ent, i ntensity of refinement [29]. F uture 11 work will be on the research of the above cases and appli cations of the proposed approach in 12 practical pr oblems . 13 Acknowledgement 14 The authors would like to thank the anonymous reviewers for their valuable suggestions and 15 constructiv e comments. This work was supported by the Natural Scienc e Foundation of China 16 (Grant No. 70771042) and the Fundam ental Research Funds for the Central Universities 17 (2012QN208- HUST) and a grant from the Modern Inform ation Managem ent Research Center at 18 Huazhong U niversi ty of Science and T echnology . 19 References 20 21 22 [1] V.N. Vapnik, The Nature of Statistical Learning Theory, Sp ringer, New York, 1995 . 23 [2] C.W. Hsu, C.C . Cha ng, C.J. Lin, A p ractical guide to support vector classification, Depar tment of 24 Computer Science, Natio nal Taiwan Uni versity, 2003. 25 [3] G. Mo ore, C. Bergero n, K.P. Bennett, Model selectio n for p rimal SVM, Ma chine Learning, 85 26 (2011) 175 - 208. 27 [4] O. Chapelle, V. Vapnik, O. Bousqet, S. Mu kherjee, Choosing Multiple Para meters for S upport 28 Vector Machines, Machi ne Learning, 46 ( 1) (2002) 131 -159. 29 [5] S.S. Keerthi, Efficient tuning of SVM hyperpara meters using radius/ margin bound and iterative 30 algorithms, IEEE T ransactions on Neural Net works, 13 (5) (2002) 1225 -12 29. 31 [6] K. Duan, S.S. Keer thi, A.N. Po o, Evaluatio n of simple performance measures for tuning S VM 32 hyperparameters, Neuroco mputing, 51 (2003) 4 1 -59. 33 [7] K.M. Chung, W. C. Kao, C.L. S un, L.L. W ang, C.J. Lin, Radiu s margin bounds for support vector 34 24 machines with the RBF ker nel, Neural Co mputation, 15 (2003) 2643 -2681. 1 [8] N.E. Ayat, M. Cheriet, C.Y. Suen, Automatic model sel ection for the opti mization of SVM ker nels, 2 Pattern Recognition, 38 ( 10) (20 05) 1733 -1745. 3 [9] M.M. Adankon, M. Cheriet, Optimizing reso urces in model selection for s upport vector machine, 4 Pattern Recognition, 40 ( 3) (2007) 953 -963. 5 [10] L. Wang, P . Xue, K.L. Chan, Two Criteria for Mod el Selectio n i n Mul ticlass Suppor t Vector 6 Mac hines, I EEE Transactions on Syste ms Ma n and Cyber netics Part B -Cybernetics, 38 (6) ( 2008) 7 1432 -1448. 8 [11] M.M. Adankon, M. Cheriet, Model selection for the LS -SVM. Application to handwriting 9 recognition, Pattern Recog nition, 42 (12) (20 09) 3264 -3270. 10 [12] S. Li, M. Tan, T uning SVM parameters b y using a hybrid CLPSO – BFGS algorithm, 11 Neurocomputing, 73 (2010) 2089 -2096. 12 [13] Z.B. Xu, M.W. Dai, D.Y. Me ng, Fa st and Efficient Strategie s for Model Selection of Ga ussian 13 Support Vec tor Machine, IEEE Tr ansactions on Systems Man and Cyber netics P art B -Cybernetics, 14 39 (5) (2009) 1292 -1307. 15 [14] F. Imbault, K. Lebart, A stochastic optimization approach for parameter tuni ng of S upport Vecto r 16 Machines, i n: J. Kittler, M. P etrou, M. Nixon ( Eds.), Proceedings o f the 1 7th I nt ernational 17 Conference on Pattern Recognition, Vol 4, IEEE Computer Soc, Los Alamitos, 2004, pp.597 - 600. 18 [15] X.C. Guo, J. H. Yang, C.G. Wu, C.Y. Wang, Y.C. Liang, A novel LS -SVM s h yper-parameter 19 selection based on particle s warm optimization, Neuro computing , 71 ( 2008) 3211 -3215. 20 [16] H.J. Escalante, M. Montes, L.E. Sucar, Particle Swarm Mod el Select ion, Jo urnal of Machine 21 Learning Research, 10 (Feb) (2009) 405 - 440. 22 [17] X. Zhang, X. Chen, Z. He, An ACO -based algorithm for parameter o ptimization of s upport ve ctor 23 machines, Expert Syste ms with Applications, 37 (9) (2010) 661 8 -6628. 24 [18] T. Go mes, R. Prudencio, C. Soares, A. Rossi, A. Carvalho, Combining meta -learning and search 25 techniques to select p arameters for support vecto r machines, Neurocomputing, 75 ( 201 2) 3- 13. 26 [19] M.N. Kapp, R. Sabourin, P . Mau pin, A d ynamic model selection strategy f or support vector 27 machine classifiers, Applied Soft Computing, 12 (8) (2012) 2550 -2565. 28 [20] P. Moscato, M.G. Norman, A `memetic ' ap proach for the tra veling salesman prob l e m 29 implementation of a co mputational ecology for combinatorial o ptimization on message -passin g 30 systems, Parallel Co mputing and Tr ansputer Applications, (199 2) 177 -186. 31 [21] P. Moscato, On Evolution Search Opti mization Genetic Algorithms a nd Mar tial Ar ts: T owards 32 Memetic Algorithms, Caltec h Concurrent Co mputation Progra m, C3P Report, 826 (1989). 33 [22] J. T ang, M.H. Lim, Y.S. Ong, Diversi ty -adaptive par allel memetic algorithm for so lving large 34 scale combinatorial o ptimization problems, Soft Co mputing, 11 (9) ( 2007) 873 - 888. 35 [23] S. Areibi, Z. Yang, Effective Memetic Algorithms for VLSI design = genetic al gorithms + local 36 search plus multi-le vel clustering, Evolutio nary Computation, 1 2 (3) (2004) 327 -353. 37 [24] A. Elhossini, S. Areibi, R. Do ny, Strength Par eto Pa rticle Swarm Optimizatio n and Hybrid 38 EA -PSO for Multi -Objective Optimization, Evol utionary Computation, 1 8 (1) (2010) 127 - 156. 39 [25] A. Lara, G. Sanchez, C. Coello , O. Schuetze, HCS: A New Local Search Strategy for Me metic 40 Multiobjective Evolutio nary Algorithms, I EEE Transactions on E volutionary Computatio n, 1 4 (1) 41 (2010) 112 - 132. 42 [26] Q. H. Nguyen, Y.S. Ong, M.H. Lim, A P robabilistic Memetic Framework, IEEE Transactions on 43 Evolutionary Co mputation, 13 (3) (2009) 604 -623. 44 [27] V.N. Vapnik, Statistical Learnin g Theory, John Wiley &Sons, Inc, New York, 19 98. 45 [28] F.E.H. T ay, L.J. Cao, Application of support vector machines in financial time series forecasting, 46 Omega, 29 (4 ) (2001) 309 -317. 47 [29] X. Chen, Y. Ong, M. Lim, K. Tan, A Multi-Facet Survey on Memetic Computation, IEEE 48 Transactions On Evolutio nary Computation, 15 (5 ) (2011) 591 - 607. 49 [30] F. Neri, C. Cotta, Memetic algorit hms and memetic co mputing optimization: A l iterature revie w , 50 Swarm and Evolutionar y Computation, 2 (20 12) 1 - 14. 51 [31] X. Hu, R.C. Eberhart, Y. Shi, Engineering opti mization w ith particle swarm, Proceedings of the 52 2003 IEEE Swarm Intelligenc e Symposium, 2003 , pp.53 -57. 53 [32] M. Reyes, C. Coello, Multi -objec tive particle s warm optimizers: A survey o f the state - of -the -art, 54 International Journal o f Computationa l Intelligence Research, 2 (3) (2006) 287 -308. 55 [33] J. Kenned y, R.C. Eberhart, Y. Shi, Swarm intelligence, Morgan Kaufmann Publishers, San 56 Francisco, 2001. 57 [34] C. Chang, C. Lin, LIBSVM: a Library for Support Vector Machines, 2001. Software a vailable at 58 http://www.csie.ntu.ed u.tw/~cjlin/libsvm. 59 [35] J . Kennedy, R.C. Eberhart, Particle Swarm Optimizati on, P roceedings of the IEEE I nternational 60 25 Conference on Neural Net works, 4 (19 95) 1942 -1948. 1 [36] E.D. Dolan, R.M. Lewis, V. Torczon, On the lo ca l convergence of patter n search, SIAM J ournal 2 of Optimization, 14 (2 ) (2003) 567 -583. 3 [37] B. Liu, L. Wan g, Y.H. Jin, An ef fective P SO -based memetic al gorithm for flo w sho p scheduling, 4 IEEE Transactions on Syste ms Man and C ybernetics Part B -Cyber netics, 37 (1) (2007) 18- 27. 5 [38] M. Lozano, F. Her rera, N. Krasnogor, D. Molina, Real -coded memetic algorithms with crossover 6 hill-climbing, Evolutio nary Computation, 12 ( 3) (2004) 273 - 302. 7 [39] M.L. T ang, X. Yao , A memetic algorithm for VLSI floorplanning, IE EE Transactions on Systems 8 Man and Cybernetics P art B -Cybernetics, 37 (1) (2007) 62- 69. 9 [40] G. Rä tsch, T. Onoda, K.R. Mü ller, Soft Margins for AdaBoost, Machine Learning, 42 (3 ) (2001) 10 287 - 320. 11

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment