Learning Multilingual Word Representations using a Bag-of-Words Autoencoder

Recent work on learning multilingual word representations usually relies on the use of word-level alignements (e.g. infered with the help of GIZA++) between translated sentences, in order to align the word embeddings in different languages. In this w…

Authors: Stanislas Lauly, Alex Boulanger, Hugo Larochelle

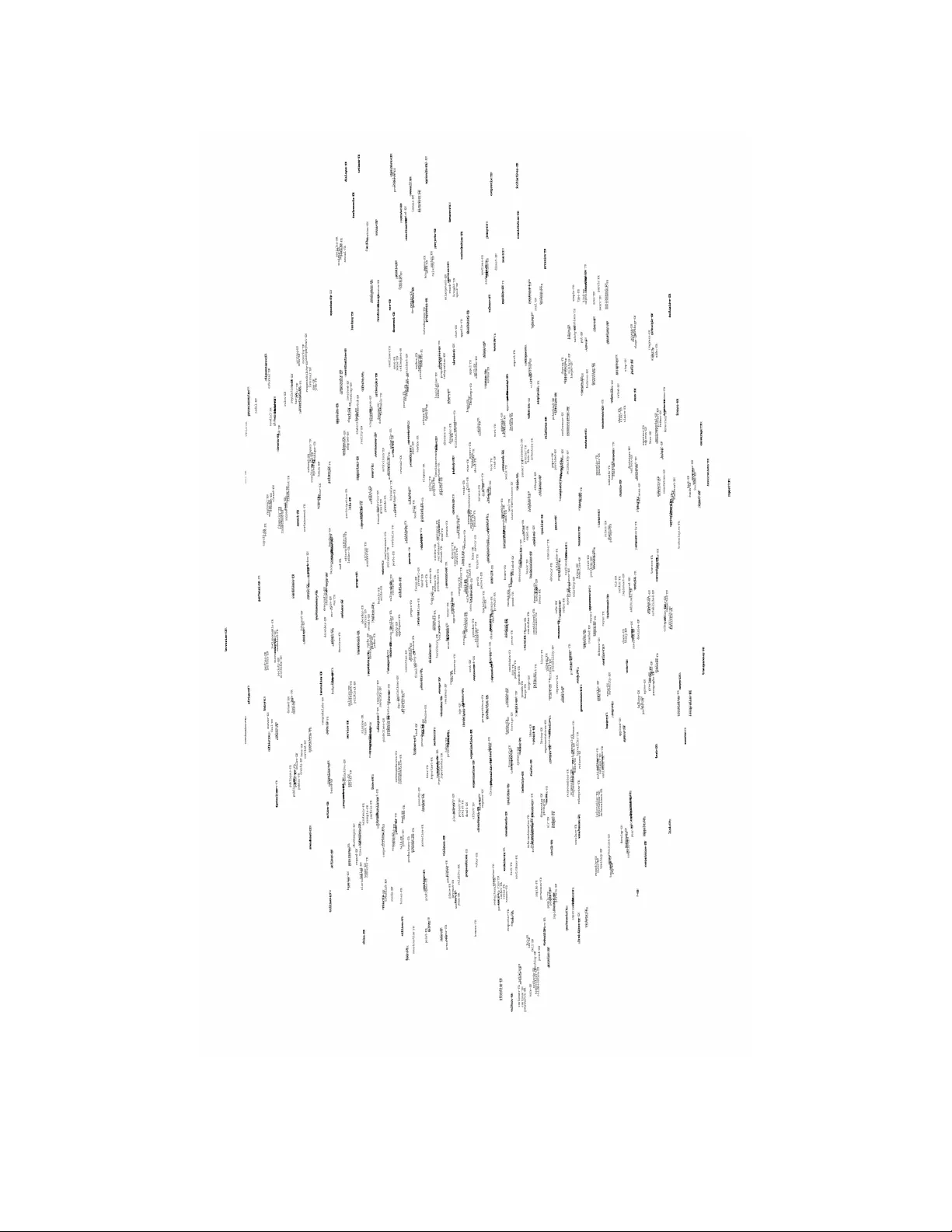

Lear ning Multilingual W ord Repr esentations using a Bag-of-W ords A utoencoder Stanislas Lauly D ´ epartement d’informatique Univ ersit ´ e de Sherbrooke stanislas.lauly@usherbr ook e.ca Alex Boulanger D ´ epartement d’informatique Univ ersit ´ e de Sherbrooke alex.boulang er@usherbr ook e.ca Hugo Larochelle D ´ epartement d’informatique Univ ersit ´ e de Sherbrooke hugo.lar oc helle@usherbr ooke.ca Abstract Recent work on learning multilingual word representations usually relies on the use of word-le vel alignements (e.g. infered with the help of GIZA++) between translated sentences, in order to align the word embeddings in dif ferent languages. In this workshop paper , we in vestigate an autoencoder model for learning multi- lingual word representations that does without such word-le vel alignements. The autoencoder is trained to reconstruct the bag-of-word representation of giv en sen- tence from an encoded representation extracted from its translation. W e ev aluate our approach on a multilingual document classification task, where labeled data is av ailable only for one language (e.g. English) while classification must be per- formed in a different language (e.g. French). In our experiments, we observe that our method compares fav orably with a pre viously proposed method that exploits word-le vel alignments to learn word representations. 1 Introduction V ectorial word representations have proven useful for multiple NLP tasks [1, 2]. It’ s been shown that meaningful representations, capturing syntactic and semantic similarity , can be learned from unlabled data. Along with a (usually smaller) set of labeled data, these representations allo ws to ex- ploit unlabeled data and impro ve the generalization performance on some gi ven task, e ven allo wing to generalize out of the vocab ulary observed in the labeled data only . While the majority of previous work has concentrated on the monolingual case, recent work has started looking at learning word representations that are aligned across languages [3, 4, 5]. These representations hav e been applied to a variety of problems, including cross-lingual document clas- sification [3] and phrase-based machine translation [4]. A common property of these approaches is that a word-lev el alignment of translated sentences is le veraged, either to deri ve a regularization term relating word embeddings across languages [3, 4]. In this workshop paper , we experiment with a method to learn multilingual word representations that does without word-to-word alignment of bilingual corpora during training. W e only require aligned sentences and do not exploit word-lev el alignments (e.g. extracted using GIZA++, as is usual). T o do so, we propose a multilingual autoencoder model, that learns to relate the hidden representation of paired bag-of-words sentences. 1 W e use these representations in the context of cross-lingual document classification where labeled dataset can be av ailable in one language, but not in another one. With the multilingual word repre- sentations, we want to learn a classifier with documents in one language and then use it on documents in another language. Our preliminary experiments suggest that our method is competiti ve with the representations learned by [3], which rely on word-le vel alignments. In Section 2, we describe the initial autoencoder model that can learn a representation from which an input bag-of-words can be reconstructed. Then, in Section 3, we extend this autoencoder for the multilingual setting. Related work is discussed in Section 4 and experiments are presented in Section 5. 2 A utoencoder f or Bags-of-W ords Let x be the bag-of-words representation of a sentence. Specifically , each x i is a word index from a fixed vocabulary of V words. As this is a bag-of-words, the order of the words within x does not correspond to the word order in the original sentence. W e wish to learn a D -dimensional vectorial representation of our words from a training set of sentence bag-of-words { x ( t ) } T t =1 . W e propose to achieve this by using an autoencoder model that encodes an input bag-of-words x as the sum of its word representations (embeddings) and, using a non-linear decoder, is trained to reproduce the original bag-of-words. Specifically , let matrix W be the D × V matrix whose columns are the vector representations for each word. The aggregated representation for a gi ven bag-of-w ords will then be: φ ( x ) = | x | X i =1 W · ,x i . (1) T o learn meaningful word representations, we wish to encourage φ ( x ) to contain information that allows for the reconstruction of the original bag-of-words x . This is done by choosing a recon- struction loss and by designing a parametrized decoder which will be trained jointly with the word representations W so as to minimize this loss. Because words are implicitly high-dimensional objects, care must be taken in the choice of recon- struction loss and decoder for stochastic gradient descent to be ef ficient. For instance, Dauphin et al. [6] recently designed an efficient algorithm for reconstructing binary bag-of-words representations of documents, in which the input is a fixed size vector where each element is associated with a word and is set to 1 only if the word appears at least once in the document. They use importance sampling to av oid reconstructing the whole V -dimensional input vector , which would be expensi ve. In this work, we propose a different approach. W e assume that, from the decoder, we can obtain a probability distribution ov er any word b x observed at the reconstruction output layer p ( b x | φ ( x )) . Then, we treat the input bag-of-words as a | x | -trials multinomial sample from that distribution and use as the reconstruction loss its negati ve log-likelihood: ` ( x ) = | v | X i =1 − log p ( b x = x i | φ ( x )) . (2) W e now must ensure that the decoder can compute p ( b x = x i | φ ( x )) efficiently from φ ( x ) . Specifi- cally , we’ d like to a void a procedure scaling linear with the v ocabulary size V , since V will be very large in practice. This precludes any procedure that would compute the numerator of p ( b x = w | φ ( x )) for each possible word w separetly and normalize so it sums to one. W e instead opt for an approach borrowed from the work on neural network language models [7, 8]. Specifically , we use a probabilistic tree decomposition of p ( b x = x i | φ ( x )) . Let’ s assume each word has been placed at the leaf of a binary tree. W e can then treat the sampling of a word as a stochastic path from the root of the tree to one of the leaf. W e note as l ( x ) as the sequence of internal nodes in the path from the root to a gi ven w ord x , with l ( x ) 1 always corresponding to the root. W e will not as π ( x ) the vector of associated left/right branching choices on that path, where π ( x ) k = 0 means the path branches left at internal node 2 v 1 v 2 v 3 ṽ 1 the dog barked v 4 le chien jappé a ṽ 2 ṽ 3 Figure 1: Illustration of a bilingual autoencoder that learns to construct the bag-of-w ord of the English sentence “ the dog barked ” from its French translation “ le chien a japp ´ e ”. The horizontal blue line across the input-to-hidden connections highlights the fact that these connections share the same parameters (similarly for the hidden-to-output connections). l ( x ) k and branches right if π ( x ) k = 1 otherwise. Then, the probability p ( b x | φ ( x )) of a certain word x observed in the bag-of-words is computed as p ( b x | φ ( x )) = | π ( ˆ x ) | Y k =1 p ( π ( b x ) k | φ ( x )) (3) where p ( π ( b x ) k | φ ( x )) is output by the decoder . By using a full binary tree of words, the number of different decoder outputs required to compute p ( b x | φ ( x )) will be logarithmic in the v ocabular size V . Since there are | x | words in the bag-of-words, at most O ( | x | log V ) outputs are thus required from the decoder . This is of course a worse case scenario, since words will share internal nodes between their paths, for which the decoder output can be computed just once. As for or ganizing words into a tree, as in Larochelle and Lauly [9] we used a random assignment of words to the leaves of the full binary tree, which we hav e found to work well in practice. Finally , we need to choose of parametrized form for the decoder . W e choose the following non-linear form: p ( π ( b x ) k = 1 | φ ( x )) = sigm( b l ( ˆ x i ) k + V l ( ˆ x i ) k , · h ( c + φ ( x ))) (4) where h ( · ) is an element wise non-linearity , c is a D -dimensional bias vector , b is a ( V -1)- dimensional bias v ector, V is a ( V − 1) × D matrix and sigm( a ) = 1 / (1 + exp( − a )) is the Sigmoid non-linearity . Each left/right branching probability is thus modeled with a logistic regression model applied on the non-linearly transformed representation of the input bag-of-words φ ( x ) 1 . 3 Multilingual Bag-of-words Let’ s no w assume that for each sentence bag-of-words x in some source language X , we have an associated bag-of-w ords y for the same sentence translated in some target language Y by a human expert. Assuming we hav e a training set of such ( x , y ) pairs, we’ d like to use it to learn representations in both languages that are aligned, such that pairs of translated words have similar representations. 1 While the literature on autoencoders usually refers to the post-nonlinearity activ ation vector as the hid- den layer , we use a different description here simply to be consistent with the representation we will use for documents in our experiments, where the non-linearity will not be used 3 T o achiev e this, we propose to augment the regular autoencoder proposed in Section 2 so that, from the sentence representation in a gi ven language, a reconstruction can be attempted of the original sentence in the other language. Specifically , we no w define language specific word representation matrices W x and W y , corre- sponding to the languages of the words in x and y respectively . Let V x and V y also be the number of words in the vocabulary of both languages, which can be different. The word representations howe ver are of the same size D in both languages. The sentence-lev el representation extracted by the encoder becomes φ ( x ) = | x | X i =1 W x · ,x i , φ ( y ) = | y | X i =1 W y · ,y i . (5) From the sentence in either languages, we want to be able to perform a reconstruction of the original sentence in any of the languages. In particular, given a representation in any language, we’ d like a decoder that can perfrom a reconstruction in language X and another decoder that can reconstruct in language Y . Again, we use decoders of the form proposed in Section 2, but let the decoders of each language hav e their own parameters ( b x , V x ) and ( b y , V y ) : p ( b x | φ ( z )) = | π ( ˆ x ) | Y k =1 p ( π ( b x ) k | φ ( z )) , p ( π ( b x ) k = 1 | φ ( z )) = sigm( b x l ( ˆ x i ) k + V x l ( ˆ x i ) k , · h ( c + φ ( z ))) p ( b y | φ ( z )) = | π ( ˆ y ) | Y k =1 p ( π ( b y ) k | φ ( z )) , p ( π ( b y ) k = 1 | φ ( z )) = sigm( b y l ( ˆ x i ) k + V y l ( ˆ y i ) k , · h ( c + φ ( z ))) where z can be either x or y . Notice that we share the bias c in the nonlinearity h ( · ) across decoders. This encoder/decoder structure allows us to learn a mapping within each language and across the languages. Specifically , for a given pair ( x , y ) , we can train the model to (1) construct y from x , (2) construct x from y , (3) reconstruct x from itself and (4) reconstruct y from itself. In our experiments, performed each of these 4 tasks simultaneously , combining the equally weighting the learning gradient from each. Experiments on v arious weighting schemes should be in vestigated and are left for future work. Another promising direction of in vestigation to the exploit the f act that tasks (3) and (4) could be performed on extra monolingual corpora, which is more plentiful. 4 Related work W e mentioned that recent work has considered the problem of learning multilingual representations of words and usually relies on word-lev el alignments. Klementie v et al. [3] propose to train simul- taneously two neural network languages models, along with a regularization term that encourages pairs of frequently aligned words to hav e similar word embeddings. Zou et al. [4] use a similar approach, with a different form for the re gularizor and neural network language models as in [2]. In our work, we specifically in vestigate whether a method that does not rely on word-le vel alignments can learn comparably useful multilingual embeddings in the context of document classification. Looking more generally at neural networks that learn multilingual representations of words or phrases, we mention the work of Gao et al. [10] which showed that a useful linear mapping between separately training monolingual skip-gram language models could be learned. They too ho wever rely on the specification of pairs of words in the two languages to align. Mikolo v et al. [5] also propose a method for training a neural network to learn useful representations of phrases (i.e. short segments of words), in the context of a phrase-based translation model. In this case, phrase-le vel alignments (usually extracted from word-le vel alignments) are required. 5 Experiments T o e valuate the quality of the word embeddigns learned by our model, we experiment with a task of cross-lingual document classication. The setup is as follows. A labeled data set of documents 4 in some language X is av ailable to train a classifier , howe ver we are interested in classifying doc- uments in a different language Y at test time. T o achieve this, we le verage some bilingual corpora, which importantly is not labeled with any document-le vel cate gories. This bilingual corpora is used instead to learn document representations in both languages X and Y that are enroucaged to be in variant to translations from one language to another . The hope is thus that we can successfully apply the classifier trained on document representations for language X directly to the document representations for language Y . 5.1 Data W e trained our multilingual autoencoder to learn bilingual word representation between English and French and between English and German. T o train the autoencoder , we used the English/French and English/German section pairs of the Europarl-v7 dataset 2 . This data is composed of about two million sentences, where each sentence is translated in all the relev ant languages. For our crosslingual classification problem, we used the English, French and German sections of the Reuters RCV1/RCV2 corpus, as provided by Amini et al. [11] 3 . There are 18758, 26648 and 29953 documents (ne ws stories) for English, French and German respecti vely . Document cate gories are organized in a hierarchy in this dataset. A 4-category classification problem was thus created by using the 4 top-level categories in the hierarchy (CCA T , ECA T , GCA T and MCA T). The set of documents for each language is split into training, validation and testing sets of size 70%, 15% and 15% respectiv ely . The raw documents are represented in the form of a bag-of-words using a TFIDF- based weighting scheme. Generally , this setup follows the one used by Klementie v et al. [3], b ut uses the preprocessing pipeline of Amini et al. [11]. 5.2 Crosslingual classification As described earlier , crosslingual document classification is performed by training a document clas- sifier on documents in one language and applying that classifier on documents in a different language at test time. Documents in a language are representated as a linear combination of its word embed- dings learned for that language. Thus, classification performance relies hea vily on the quality of multlingual word embeddigns between languages, and specifically on whether similar words across languages hav e similar embeddings. Overall, the e xperimental procedure is as follows. 1. T rain bilingual word representations W x and W y on sentence pairs extracted from Europarl-v7 for languages X and Y (we use a separate v alidation set to early-stop training). 2. T rain document classifier on the Reuters training set for language X , where documents are represented using the word representations W x (we use the validation set for the same language to perform model selection). 3. Use the classifier trained in the previous step on the Reuters test set for language Y , using the word representations W y to represent the documents. W e used a linear SVM as our classifier . W e compare our representations to those learned by Klementie v et al. [3] 4 . This is achieved by simply skipping the first step of training the bilingual word representations and directly using those of Klementiev et al. [3] in step 2 and 3. The provided word embeddings are of size 80 for the English and French language pair, and of size 40 for the English and German pair . The vocab ulary used by Klementiev et al. [3] consisted in 43614 w ords in English, 35891 words in French and 50110 w ords in German. The same v ocabulary w as used by our model, to represent the Reuters documents. In all cases, document representations were obtained by multiplying the word embeddings matrix with either the TFIDF-based bag-of-words feature vector or its binary version (the choice of which 2 http://www.statmt.org/europarl/ 3 http://multilingreuters.iit.nrc.ca/ReutersMultiLingualMultiView.htm 4 The trained word embeddings were downloaded from http://people.mmci.uni- saarland.de/ ˜ aklement/data/distrib/ 5 T rain FR / T rain EN / T est EN T est FR Klementiev 34.9% 49.2% Our embeddings 27.7% 32.4% T rain GR / Train EN / T est EN T est GR Klementiev et al. 42.7% 59.5% Our embeddings 29.8% 37.7% Soleil Personne Sun Person Roi King Voiture Maison Car House Arme Weapon Vivre Live Apprendre Learn Papier Paper T able 1: Left: Crosslingual classification error results, for English/French pair (T op) and En- glish/German pair (Bottom) . Right: For each French w ord, its nearest neighbor in the English word embedding space. method to use is made based on the validation set performance). W e normalized the TFIDF-based weights to sum to one. T est set classification error results are reported in T able 1. W e observ e that the word representations learned by our autoencoder are competiti ve with those provided by Klementiev et al. [3]. One will notice that the results for Klementiev et al. [3] are worse than those reported in the original reference. This dif ference might be due to the f act that our preprocessing of the Reuters data, which comes from Amini et al. [11], is different from the one in Klementiev et al. [3]. In particular , we note that Klementiev et al. [3] ignored documents that belonged to multiple categories, while Amini et al. [11] included them by assigning them to the category with the least training e xamples. T able 1 also shows, for a few French words, what are the nearest neighbor words in the English embedding space. A more complete picture is presented in the t-SNE visualization [12] of Figure 2. It sho ws a 2D visualization of the French/English word embeddings, for the 600 most frequent words in both languages. Both illustrations confirm that the multilingual autoencoder was able to learn similar embeddings for similar words across the two languages. 6 Conclusion and Future W ork W e presented e vidence that meaningful multilingual word representations could be learned without relying on w ord-level alignments. Our proposed multilingual autoencoder was able to perform com- petitiv ely on a crosslingual document classification task, compared to a word representation learning method that exploits word-le vel alignments. Encouraged by these preliminary results, our future work will in vestigate extensions of our bag- of-words multilingual autoencoder to bags-of-ngrams, where the model would also hav e to learn representations for short phrases. Such a model should be particularly useful in the context of a machine translation system. Thanks to the use of a probabilistic tree in the output layer , our model could efficiently assign scores to pairs of sentences. W e thus think it could act as a useful, complementary metric in a standard phrase-based translation system. Acknowledgements Thanks to Alexandre Allauzen for his help on this subject. Thanks also to Cyril Goutte for pro viding the classification dataset. Finally , a big thanks to Alexandre Klementiev and Ivan Tito v for their help. References [1] Joseph T urian, Lev Ratinov , and Y oshua Bengio. W ord representations: A simple and gen- eral method for semi-supervised learning. In Pr oceedings of the 48th Annual Meeting of the 6 Association for Computational Linguistics (ACL2010) , pages 384–394. Association for Com- putational Linguistics, 2010. [2] Ronan Collobert, Jason W eston, L ´ eon Bottou, Michael Karlen, K oray Kavukcuoglu, and P avel Kuksa. Natural Language Processing (Almost) from Scratch. Journal of Machine Learning Resear ch , 12:2493–2537, 2011. [3] Alexandre Klementie v , Iv an Tito v , and Binod Bhattarai. Inducing Crosslingual Distrib uted Representations of W ords. In Pr oceedings of the International Confer ence on Computational Linguistics (COLING) , 2012. [4] W ill Y . Zou, Richard Socher , Daniel Cer , and Christopher D. Manning. Bilingual W ord Embed- dings for Phrase-Based Machine T ranslation. In Confer ence on Empirical Methods in Natural Language Pr ocessing (EMNLP 2013) , 2013. [5] T omas Mikolo v , Quoc Le, and Ilya Sutskev er . Exploiting Similarities among Languages for Machine T ranslation. T echnical report, arXiv , 2013. [6] Y ann Dauphin, Xa vier Glorot, and Y oshua Bengio. Large-Scale Learning of Embeddings with Reconstruction Sampling. In Pr oceedings of the 28th International Confer ence on Machine Learning (ICML 2011) , pages 945–952. Omnipress, 2011. [7] Frederic Morin and Y oshua Bengio. Hierarchical Probabilistic Neural Network Language Model. In Pr oceedings of the 10th International W orkshop on Artificial Intelligence and Statis- tics (AIST A TS 2005) , pages 246–252. Society for Artificial Intelligence and Statistics, 2005. [8] Andriy Mnih and Geoffre y E Hinton. A Scalable Hierarchical Distributed Language Model. In Advances in Neural Information Pr ocessing Systems 21 (NIPS 2008) , pages 1081–1088, 2009. [9] Hugo Larochelle and Stanislas Lauly . A Neural Autoregressi ve T opic Model. In Advances in Neural Information Pr ocessing Systems 25 (NIPS 25) , 2012. [10] Jianfeng Gao, Xiaodong He, W en-tau Y ih, and Li Deng. Learning Semantic Representations for the Phrase T ranslation Model. T echnical report, Microsoft Research, 2013. [11] Massih-Reza Amini, Nicolas Usunier, and Cyril Goutte. Learning from Multiple Partially Observed V iews - an Application to Multilingual T ext Categorization. In Advances in Neural Information Pr ocessing Systems 22 (NIPS 2009) , pages 28–36, 2009. [12] Laurens van der Maaten and Geoffre y E Hinton. V isualizing Data using t-SNE. Journal of Ma- chine Learning Researc h , 9:2579–2605, 2008. URL http://www.jmlr.org/papers/ volume9/vandermaaten08a/vandermaaten08a.pdf . 7 Figure 2: A t-SNE 2D visualization of the learned English/French word representations (better visualized on a computer). W ords hyphenated with “EN” and “FR” are English and French words respectiv ely . 8

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment