Abstraction in decision-makers with limited information processing capabilities


Authors: Tim Genewein, Daniel A. Braun

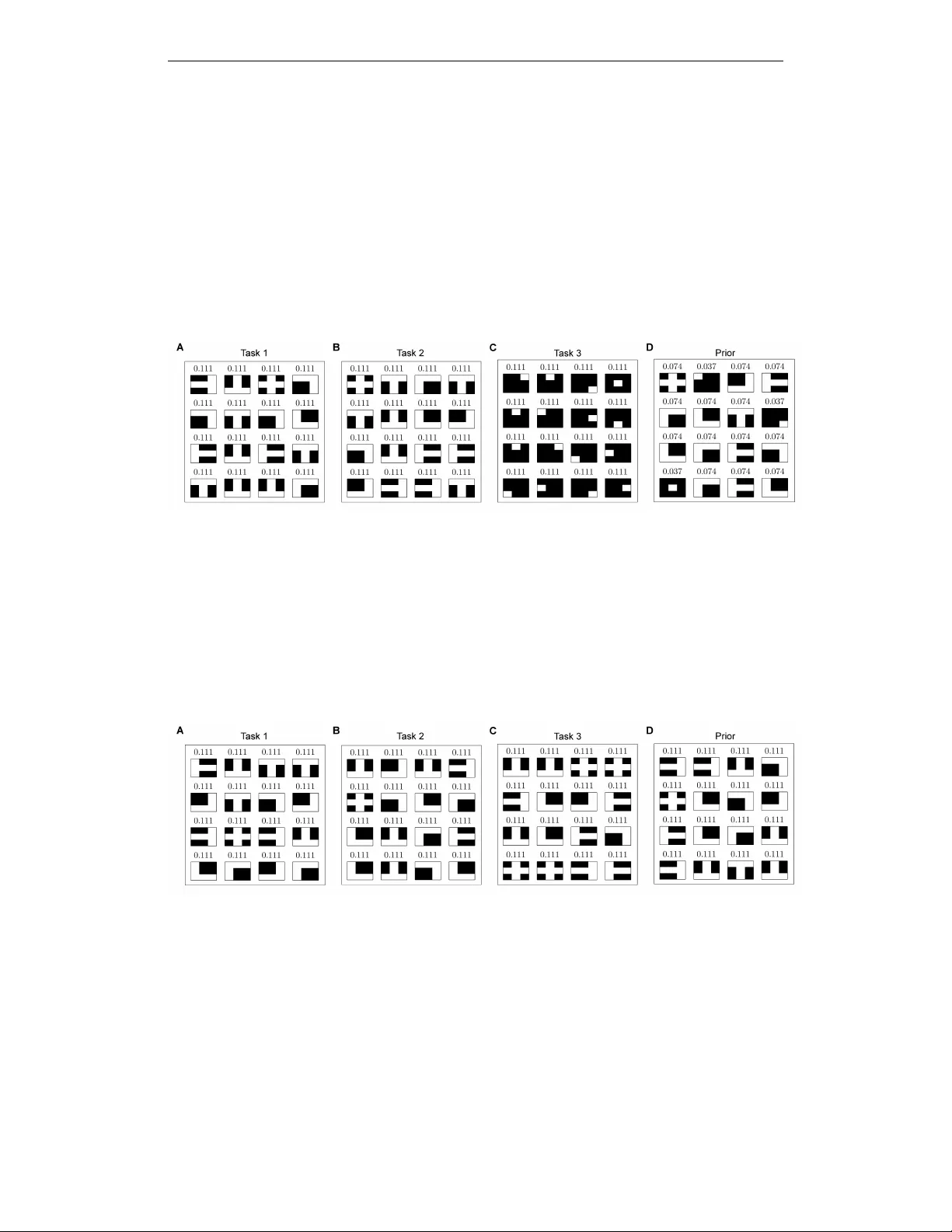

NIPS 2013 workshop on Planning with Information Constraints

Tim Genewein, Max Planck Institute for Intelligent Systems and Max Planck Institute for Biological Cybernetics, 72072 Tuebingen, Germany (tim.genewein@tuebingen.mpg.de)

Daniel A. Braun, Max Planck Institute for Intelligent Systems and Max Planck Institute for Biological Cybernetics, 72072 Tuebingen, Germany (daniel.braun@tuebingen.mpg.de)

Abstract

A distinctive property of human and animal intelligence is the ability to form abstractions by neglecting irrelevant information, which allows one to separate structure from noise. From an information-theoretic point of view, abstractions are desirable because they allow for very efficient information processing. In artificial systems, abstractions are often implemented through computationally costly formations of groups or clusters. In this work we establish the relation between the free-energy framework for decision-making and rate-distortion theory, and demonstrate how the application of rate-distortion theory to decision-making leads to the emergence of abstractions. We argue that abstractions are induced by a limit in information-processing capacity.

1 Introduction

Most scientific papers start with an abstract that focuses on the main ideas of the work but leaves out many of the details. From an information-theoretic point of view, this allows for very efficient processing, which is crucial if information-processing capabilities are limited. In general, abstractions are formed by reducing the information content of an entity until it contains only information that is relevant for a particular purpose. This partial neglect of information can lead to different entities being treated as equal or, phrased differently, to the separation of structure from noise.
Consider the abstract concept of a “chair”, where many aspects such as size, color, material or particular shape are treated as noise that is irrelevant to the purpose of “sitting down”. The ability to form abstractions is thought of as a hallmark of intelligence, both in cognitive tasks and in basic sensorimotor behaviors [1–6]. Traditionally, it is conceptualized as being computationally costly, because particular entities have to be grouped together by neglecting irrelevant information. Here we argue that abstractions arise as a consequence of limited computational capacity: the inability to distinguish different entities leads to the formation of abstractions. Note that this information-processing limitation can be induced by limited computational capacity, but also by limited sample sizes or low signal-to-noise ratios.

In this paper we study abstractions in the process of decision-making, where “similar” situations elicit the same behavior when the current situational context is partially ignored. Following the work of [7], decision-making with limited information-processing resources has been studied extensively in psychology, economics, political science, industrial organization, computer science and artificial intelligence research. In this paper we use an information-theoretic model of decision-making under resource constraints [8–14]. In particular, [15–18] present a framework in which the gain in expected utility is traded off against the adaptation cost of changing from an initial behavior to a posterior behavior. The variational problem that arises from this trade-off has the same mathematical form as the minimization of a free-energy difference functional in thermodynamics. Here, we discuss the close connection between the thermodynamic decision-making framework [15] and rate-distortion theory, which is an information-theoretic framework for lossy compression.
The problem in lossy compression is essentially the problem of separating structure from noise and is thus closely related to finding abstractions [19–21]. In the context of decision-making, the rate-distortion framework can be applied by conceptualizing the decision-maker as a channel with limited capacity from observations to actions, which is known in economics as the framework of “Rational Inattention” [22]. In the next section we discuss how the rate-distortion framework can be obtained for bounded-rational decision-makers that face a number of tasks. In Section 3 we demonstrate two simple applications to explore the type of abstractions that emerge from limited information-processing capabilities. In Section 4 we summarize the findings and discuss the presented approach.

2 Rate-distortion theory for decision-making

2.1 Bounded-rational decision-making

In [15], a bounded-rational actor that initially follows a policy $p_0(x)$ changes its behavior to $q(x)$ in a way that optimally trades off the expected gain in utility against the transformation costs of adapting from $p_0(x)$ to $q(x)$. This trade-off is formalized by the following variational principle:

$$
\operatorname*{arg\,max}_{q(x)} \Delta F[q] = \operatorname*{arg\,max}_{q(x)} \Bigl[\, \underbrace{\sum_x q(x)\,U(x)}_{\mathbb{E}_{q(x)}[U]} - \frac{1}{\beta}\underbrace{\sum_x q(x)\log\frac{q(x)}{p_0(x)}}_{D_{\mathrm{KL}}(q\,\|\,p_0)} \Bigr], \tag{1}
$$

where $\beta$ is known as the inverse temperature and $\Delta F$ is known as the free-energy difference (the negative free energy in physics), which is composed of the expected utility with respect to $q(x)$ and the Kullback-Leibler (KL) divergence between $q(x)$ and $p_0(x)$. The parameter $\beta$ acts as a conversion factor between transformation cost (usually measured in nats or bits) and expected utility. The distribution $q(x)$ that maximizes the variational principle is given by

$$
q(x) = \frac{1}{Z}\,p_0(x)\,e^{\beta U(x)}, \tag{2}
$$

with the partition sum $Z = \sum_\xi p_0(\xi)\,e^{\beta U(\xi)}$.
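For a finite action set, Equation 2 is a prior-weighted softmax of $\beta U(x)$. The following is a minimal NumPy sketch, assuming $U$ and $p_0$ are given as arrays over the same actions; the function names are hypothetical and not taken from the paper. Working in log space (log-sum-exp) keeps the computation stable for large $\beta$. Since $\log\frac{q(x)}{p_0(x)} = \beta U(x) - \log Z$ at the optimum, substituting Equation 2 back into Equation 1 gives $\Delta F[q] = \frac{1}{\beta}\log Z$, which the second function can be used to check numerically.

```python
import numpy as np

def bounded_rational_policy(U, p0, beta):
    """Hypothetical helper: q(x) = p0(x) exp(beta U(x)) / Z (Equation 2).

    U, p0: 1-D arrays over the same finite action set; beta >= 0.
    Computed in log space so that large beta does not overflow.
    """
    U = np.asarray(U, dtype=float)
    p0 = np.asarray(p0, dtype=float)
    with np.errstate(divide="ignore"):
        log_unnorm = np.log(p0) + beta * U   # log p0(x) + beta U(x); -inf where p0 = 0
    log_Z = np.logaddexp.reduce(log_unnorm)  # log of the partition sum Z
    return np.exp(log_unnorm - log_Z)

def free_energy_difference(q, U, p0, beta):
    """Hypothetical helper: Delta F[q] = E_q[U] - KL(q || p0) / beta (Equation 1), in nats.

    Requires beta > 0 and q(x) > 0 only where p0(x) > 0.
    """
    q, U, p0 = (np.asarray(a, dtype=float) for a in (q, U, p0))
    mask = q > 0                             # convention: 0 log 0 = 0
    kl = np.sum(q[mask] * np.log(q[mask] / p0[mask]))
    return np.dot(q, U) - kl / beta

# Usage: three actions with a uniform prior, from fully bounded to nearly rational
U_example = np.array([0.0, 1.0, 0.5])
p0_example = np.full(3, 1 / 3)
for beta in (0.0, 1.0, 100.0):
    print(beta, bounded_rational_policy(U_example, p0_example, beta).round(3))
```

With $\beta = 0$ the sketch returns $p_0$ unchanged, and for large $\beta$ it concentrates on the maximum-utility action, in line with the limit cases of the bounded-rational actor.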
The influence of the transformation cost, and thus the boundedness of the actor, is governed by the parameter $\beta$, which determines “how far” the final behavior $q(x)$ can deviate from the initial behavior $p_0(x)$ as measured by the KL divergence. The perfectly rational actor that maximizes its utility is recovered in the limit $\beta \to \infty$, where transformation cost is ignored, whereas $\beta \to 0$ corresponds to an actor that has infinite transformation cost or no computational resources and thus sticks with its prior policy $p_0$. Note that in the notation shown here, $U(x)$ is conceptualized as a function over gains. If $U(x)$ corresponds to a loss function, the same variational principle allows one to find the distribution $q(x)$ that optimally trades off minimum expected loss against transformation cost; in this case the argmin over $q(x)$ has to be taken and the sign of $\beta$ is inverted. If $x$ is a continuous random variable, the sums have to be replaced by the corresponding integrals.

2.2 Multi-task decision-making with limited resources

Consider an actor that is embedded in an environment and receives (potentially partial and noisy) information about the current state of the environment, that is, the actor observes the value of a random variable $y$. This observation allows the actor to reduce its uncertainty about the current state of the environment and to adapt its behavior accordingly. Formally, this is expressed by the conditional distribution $p(x|y)$ over the action $x$.
The thermodynamic framework for decision-making introduced in the previous section can straightforwardly be harnessed to describe such a bounded-rational agent that receives information $y$, by plugging the conditional distribution $p(x|y)$ into Equation 1:

$$
\operatorname*{arg\,max}_{p(x|y)} \; \mathbb{E}_{p(x|y)}[U_y(x)] - \frac{1}{\beta} D_{\mathrm{KL}}\bigl(p(x|y)\,\|\,p_0(x)\bigr), \tag{3}
$$

with the solution

$$
p(x|y) = \frac{1}{Z(y)}\,p_0(x)\,e^{\beta U_y(x)}, \qquad Z(y) = \sum_x p_0(x)\,e^{\beta U_y(x)}. \tag{4}
$$

Notice that the utility function $U$ in general depends on the observation $y$, leading to $U(x,y)$; to indicate conditioning on a specific value of $y$ we write $U_y(x)$. The initial distribution $p_0(x)$ can be interpreted as a default or prior behavior in the absence of an observation, so we will refer to $p_0(x)$ as “the prior”. The information-processing cost is then given by the KL divergence between $p(x|y)$ and the prior $p_0(x)$, with the conversion factor $\beta$ relating the units of transformation cost to the units of utility.

2.3 The optimal prior

In the free-energy principle (Equation 3), the prior $p_0(x)$ is assumed to be given. An interesting question is which prior distribution $p_0(x)$ maximizes the free-energy difference $\Delta F$ on average over all observations $y$. To formalize this question, we extend the variational principle in Equation 3 by taking the expectation over $y$ and the argmax over $p_0(x)$:

$$
\operatorname*{arg\,max}_{p_0(x)} \sum_y p(y) \left[ \max_{p(x|y)} \; \mathbb{E}_{p(x|y)}[U_y(x)] - \frac{1}{\beta} D_{\mathrm{KL}}\bigl(p(x|y)\,\|\,p_0(x)\bigr) \right].
$$

The inner maximization over $p(x|y)$ and the expectation over $y$ can be swapped because the variation is not over $p(y)$. With the KL term expanded, this leads to

$$
\operatorname*{arg\,max}_{p_0(x),\,p(x|y)} \sum_{x,y} p(x,y)\,U(x,y) - \frac{1}{\beta}\sum_y p(y)\sum_x p(x|y)\log\frac{p(x|y)}{p_0(x)}.
$$

The solution to the argmax over $p_0(x)$ is given by $p_0(x) = \sum_y p(y)\,p(x|y) = p(x)$ (see Section 2.1.1 in [19], or [23]).
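Why the marginal is the optimal prior can be seen from a standard decomposition of the average KL cost (a worked step added here for clarity). For any fixed $p(x|y)$ with marginal $p(x) = \sum_y p(y)\,p(x|y)$:

$$
\sum_y p(y)\,D_{\mathrm{KL}}\bigl(p(x|y)\,\|\,p_0(x)\bigr)
= \sum_{x,y} p(x,y)\log\frac{p(x|y)}{p(x)} + \sum_x p(x)\log\frac{p(x)}{p_0(x)}
= I(x;y) + D_{\mathrm{KL}}\bigl(p(x)\,\|\,p_0(x)\bigr).
$$

The first term does not depend on $p_0$, and the second is non-negative and vanishes if and only if $p_0(x) = p(x)$. The marginal therefore minimizes the average KL cost, and hence maximizes the objective, for every fixed choice of $p(x|y)$.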
Plugging in $p(x)$ for $p_0(x)$ yields the following variational principle for bounded-rational decision-making with a minimum-average-relative-entropy prior:

$$
\operatorname*{arg\,max}_{p(x|y)} \; \underbrace{\sum_{x,y} p(x,y)\,U(x,y)}_{\mathbb{E}_{p(x,y)}[U]} - \frac{1}{\beta}\underbrace{\sum_y p(y)\,D_{\mathrm{KL}}\bigl(p(x|y)\,\|\,p(x)\bigr)}_{I(x;y)}, \tag{5}
$$

where $I(x;y)$ is the mutual information between $x$ and $y$. The variational problem can be interpreted as maximizing expected utility subject to an upper bound on the mutual information or, from the dual point of view, as minimizing the mutual information between actions and observations subject to a lower bound on the expected utility. The problem in Equation 5 is equivalent to the problem formulation in rate-distortion theory [19, 24, 25], where $U(x,y)$ is usually conceptualized as a distortion function $d(x,y)$, which leads to a flip in the sign of $\beta$ and an argmin instead of an argmax. The solution that extremizes the variational problem is given by the self-consistent equations (see [19])

$$
p(x|y) = \frac{1}{Z(y)}\,p(x)\,e^{\beta U_y(x)}, \qquad Z(y) = \sum_x p(x)\,e^{\beta U_y(x)}, \tag{6}
$$

$$
p(x) = \sum_y p(y)\,p(x|y). \tag{7}
$$

Note that the solution for the conditional distribution $p(x|y)$ in the rate-distortion problem (Equation 6) is the same as the solution in the free-energy case of the previous section (Equation 4), except that the prior $p_0(x)$ is now defined as the marginal distribution $p_0(x) = p(x)$ (see Equation 7). This particular prior distribution minimizes the average relative entropy between $p(x|y)$ and $p(x)$, which is the mutual information between actions $x$ and observations $y$. In the limit case $\beta \to \infty$, where transformation costs are ignored, $p(x|y)$ is equal to the perfectly rational policy for each value of $y$, independent of any of the other policies, and $p(x)$ becomes a mixture of these solutions.
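Both terms of Equation 5 are straightforward to evaluate for discrete $x$ and $y$. The sketch below uses hypothetical helper names of our own, assumes the conditionals are stored as an array with one row per observation, and indexes utilities as `U[y, x]` $= U(x,y)$. It works in nats; divide by $\ln 2$ to obtain bits.

```python
import numpy as np

def mutual_information(p_y, p_x_given_y):
    """I(x;y) = sum_y p(y) KL(p(x|y) || p(x)) in nats.

    p_y: shape (n_y,); p_x_given_y: shape (n_y, n_x), each row sums to one.
    """
    p_y = np.asarray(p_y, dtype=float)
    P = np.asarray(p_x_given_y, dtype=float)
    p_x = p_y @ P                                  # marginal p(x), Equation 7
    safe_p_x = np.where(p_x > 0, p_x, 1.0)         # avoid division by zero
    ratio = np.where(P > 0, P / safe_p_x, 1.0)     # log(1) = 0 where p(x|y) = 0
    return float(np.sum(p_y[:, None] * P * np.log(ratio)))

def rate_utility_objective(p_y, p_x_given_y, U, beta):
    """Objective of Equation 5: E_{p(x,y)}[U] - I(x;y) / beta, with U[y, x] = U(x, y)."""
    p_y = np.asarray(p_y, dtype=float)
    P = np.asarray(p_x_given_y, dtype=float)
    expected_utility = float(np.sum(p_y[:, None] * P * np.asarray(U, dtype=float)))
    return expected_utility - mutual_information(p_y, P) / beta
```

Two equiprobable observations answered with different deterministic actions give $I(x;y) = \ln 2$ nats (one bit), whereas identical conditionals give $I(x;y) = 0$, the fully abstract case.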
Note that if there is a subset of perfectly rational solutions that is shared among tasks, then only this subset will be assigned probability mass, since this reduces the mutual information (see Section 3.3). Importantly, in this limit high values of the mutual information term in Equation 5 are not penalized, which means that actions $x$ can be very informative about the observation $y$. The behavior of an actor with infinite computational resources will thus be very observation-specific. In the case $\beta \to 0$, the mutual information between actions and observations is minimized to $I(x;y) = 0$, leading to $p(x|y) = p(x)\ \forall y$: the maximal abstraction, where all $y$ elicit the same response. The actor's behavior $p(x|y)$ becomes independent of the observation $y$ because it lacks the computational resources to change its behavior. Within this limitation, the actor will, however, still emit actions that maximize the expected utility $\sum_{x,y} p(y)\,p(x)\,U(x,y)$. For values of the rationality parameter $\beta$ between these limit cases, that is $0 < \beta < \infty$, the bounded-rational actor trades off observation-specific actions, which lead to a higher expected utility for particular observations at the cost of a high mutual information between observations $y$ and actions $x$, against abstract actions, which yield a “good” expected utility for many observations and lead to a lower mutual information term. An alternative interpretation, closer to the rate-distortion framework, is that the perceptual channel through which $y$ is transmitted to the actor has a limited capacity given by $C = I(x;y)$. For large values of $\beta$, the transmission of $y$ is not severely affected and the actor can choose the best action for this particular observation. For lower values of $\beta$, however, the actor becomes very uncertain about the true value of $y$ and has to choose abstract actions that are “good” under all observations compatible with the actor's belief over $y$.
2.4 Computing the self-consistent solution

The self-consistent solutions that maximize the variational principle in Equation 5 can be computed by starting with an initial distribution $p_0(x)$ and then iterating Equation 6 and Equation 7 in an alternating fashion. This procedure is well known in the rate-distortion framework as a Blahut-Arimoto-type algorithm [25, 26]. The iteration is guaranteed to converge to a unique maximum (see Section 2.1.1 in [19], and [23, 24]). Note that the initial $p_0(x)$ must assign nonzero probability to every action in the support of the optimal $p(x)$, because an action with zero probability under the current prior can never regain probability mass through Equation 6; in practice, a prior with full support is the safe choice. Implemented in a straightforward manner, the Blahut-Arimoto iterations can become computationally costly, since each iteration involves evaluating the utility function for every action-observation pair $(x,y)$ and computing the normalization constant $Z$. For continuous-valued random variables, closed-form analytic solutions exist only for special cases.

3 Abstractions in multi-task decision-making

3.1 Problem formulation

In the following we apply the rate-distortion framework for decision-making introduced in the previous section to multi-task decision problems. We assume that we are given a number of tasks within the same environment and that the observations from the environment are fully informative about the current task, that is, we observe the value of a discrete random variable $y$ corresponding to a unique task. Note that this assumption can easily be relaxed. More formally, we make the following assumptions: we are given a set of $N$ tasks $\tau = \{t_1, t_2, \ldots, t_N\}$, which define the set of observations $y \in \{y_1, y_2, \ldots, y_N\}$ with $y_i = y_j$ if and only if $i = j$. Each task is defined through the utility function $U(x,y)$, where $x$ is an action. The action space $x \in X$ is the same for all tasks. We assume that the probability over tasks is known and given by $p(y)$.
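The alternating scheme can be sketched in a few lines of NumPy. This is a minimal illustration under our own assumptions (uniform initialization, a stopping tolerance on the change of $p(x)$, log-space normalization), not the authors' implementation; the name `blahut_arimoto` and its parameters are hypothetical.

```python
import numpy as np

def blahut_arimoto(U, p_y, beta, n_iter=1000, tol=1e-10, p_init=None):
    """Alternate Equations 6 and 7 until the marginal p(x) stops changing.

    U[y, x] holds U(x, y) (one row per task/observation), p_y holds p(y),
    and beta > 0 is the inverse temperature. p_init is the initial p_0(x);
    it defaults to the uniform distribution, so every action is in the support.
    Returns the marginal p(x) and the conditionals p(x|y) (one row per y).
    """
    U = np.asarray(U, dtype=float)
    p_y = np.asarray(p_y, dtype=float)
    n_x = U.shape[1]
    p_x = np.full(n_x, 1.0 / n_x) if p_init is None else np.asarray(p_init, dtype=float)
    for _ in range(n_iter):
        # Equation 6 in log space: log p(x) + beta * U_y(x), then normalize each row by Z(y)
        with np.errstate(divide="ignore"):
            logits = np.log(p_x)[None, :] + beta * U
        p_x_given_y = np.exp(logits - logits.max(axis=1, keepdims=True))
        p_x_given_y /= p_x_given_y.sum(axis=1, keepdims=True)
        # Equation 7: the prior is replaced by the marginal over observations
        p_x_new = p_y @ p_x_given_y
        converged = np.max(np.abs(p_x_new - p_x)) < tol
        p_x = p_x_new
        if converged:
            break
    return p_x, p_x_given_y
```

Subtracting the row maximum before exponentiating avoids overflow of $e^{\beta U}$ at large $\beta$ without changing the normalized result, and actions whose probability underflows to zero simply stay at zero, consistent with the support remark above.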
The goal of the decision-maker is to find task-specific distributions $p(x|y)$ that maximize the expected utility $\sum_{x,y} p(y)\,p(x|y)\,U(x,y)$ given its computational constraints. This problem is formalized in the variational principle in Equation 5, with the self-consistent solutions in Equations 6 and 7. In this principle for bounded-rational decision-making, information-processing costs arise from changing the prior behavior $p(x)$ to the task-specific behavior $p(x|y)$ and are measured in terms of KL cost, in accordance with the thermodynamic framework for decision-making [15].

| $x$ | $U(x,y_1)$ | $U(x,y_2)$ | $p(x)$, $\beta=100$ | $p(x \mid y_1)$, $\beta=100$ | $p(x \mid y_2)$, $\beta=100$ | $p(x)$, $\beta=1$ | $p(x \mid y_1)$, $\beta=1$ | $p(x \mid y_2)$, $\beta=1$ |
|---|---|---|---|---|---|---|---|---|
| [0, 0] | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| [0, 1] | 0 | 1 | 0.5 | 0 | 1 | 0 | 0 | 0 |
| [0.7, 0] | 0.7 | 0.7 | 0 | 0 | 0 | 1 | 1 | 1 |
| [1, 1] | 1 | 0 | 0.5 | 1 | 0 | 0 | 0 | 0 |

Table 1: Two-task decision problem. Possible actions and their utilities for both tasks are given in the first three columns. The results of the Blahut-Arimoto iterations for a large value of $\beta$ ($\beta = 100$) are shown in the middle three columns; in this case the maximum-utility action for each task is picked with full certainty. The results for a small value of $\beta$ ($\beta = 1$) are shown in the last three columns. The decision-maker does not have the computational resources to change its behavior according to the task and thus always picks the suboptimal action that leads to a high utility in both tasks.

3.2 Trading off abstraction against optimal action

We designed the following two-task problem to demonstrate the role of the rationality parameter $\beta$, which governs the trade-off between expected utility and mutual information. In both tasks, the action $x = [x_1, x_2]$ is one of four possible action vectors (see Table 1).
The utility for the first task is simply given by the value of the first component of the action vector, whereas the utility for the second task is the absolute difference (the one-dimensional Manhattan distance) between the two components of the action vector:

$$
U(x,y) = \begin{cases} x_1 & \text{if } y = y_1, \\ |x_1 - x_2| & \text{if } y = y_2. \end{cases}
$$

The utilities for all actions are summarized in Table 1. The observation variable $y \in \{y_1, y_2\}$ is fully informative about the task, with task probabilities $p(y_1) = p(y_2) = \frac{1}{2}$. With this particular choice of utility functions and action vectors, the maximum-utility action for one task has a utility of zero for the other task. However, there is a suboptimal action $x^*_{\mathrm{sub}} = [0.7, 0]$ that leads to the second-best utility in both tasks. The simulation results summarized in Table 1 show that for a high value of the inverse temperature $\beta$, the decision-maker picks the maximum-utility action in each task with probability 1. At a low value of $\beta$, the actor uses the same action distribution for both tasks due to its boundedness, resulting in $I(x;y) = 0$. This leads to a maximal abstraction over both tasks, which is solved optimally by putting all the probability mass on the suboptimal action $x^*_{\mathrm{sub}}$: averaged over the two equiprobable tasks it yields a utility of 0.7, whereas each of the other actions yields at most 0.5. Note that the value $\beta = 1$ shown here is in general still far from the fully bounded limit $\beta \to 0$; in this particular example, however, lowering $\beta$ further has no effect.

Figure 1A shows the transition from perfect rationality to full boundedness. Starting at $\beta \approx \infty$, the entropy of the conditionals $H(x|y)$ is zero, since for a given task the actor picks the maximum-utility action with certainty. As the inverse temperature $\beta$ is lowered, both the mutual information $I(x;y)$ and the expected utility $\mathbb{E}_{p(x,y)}[U]$ decrease monotonically. Initially $H(x)$ stays constant, whereas $H(x|y)$ increases, which means that the actor picks the two maximum-utility actions with increasing stochasticity. At $1/\beta \approx 0.55$ a phase transition occurs: the entropy $H(x)$ rapidly peaks at 1.585 bits ($\log_2 3$), implying that three actions are now equally probable in $p(x)$. Lowering $\beta$ further leads to a rapid drop in $H(x)$, $H(x|y)$ and $I(x;y)$ to zero bits, as well as a drop in expected utility to 0.7. The decision-maker is now in the fully abstract regime, where $x^*_{\mathrm{sub}}$ is always chosen regardless of the task.

Figure 1B shows the Rate-Utility function (in analogy to the rate-distortion function), where the information-processing rate $I(x;y)$ is shown as a function of the expected utility. If the decision-maker is conceptualized as a communication channel between observations and actions, the rate $I(x;y)$ defines the minimal required capacity of that channel. The Rate-Utility function thus specifies the minimum required capacity for computing an action with a certain expected utility or, analogously, the maximally achievable expected utility given a certain information-processing capacity. Importantly, decision-makers in the shaded region are impossible, whereas decision-makers in the white region are suboptimal with respect to their information-processing capabilities.

Figure 1: Transition from full rationality ($\beta \approx \infty$) to full boundedness ($\beta \approx 0$). A: Trade-off between $I(x;y) = H(x) - H(x|y)$ and expected utility $\mathbb{E}_{p(x,y)}[U]$. B: Rate-Utility function showing the information-processing rate $I(x;y)$ as a function of the expected utility. The rate specifies the minimal average number of bits of the observation $y$ that need to be processed in order to achieve a certain expected utility. In the limit $\beta \to \infty$, the decision-maker picks the maximum-utility action for each environment deterministically, thus following the maximum expected utility (MEU) principle.
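The two-task example can be checked with a compact, fixed-iteration version of the Blahut-Arimoto scheme. The sketch below assumes a uniform initial prior and 2000 iterations (the text does not state the initialization or stopping rule used), and the helper name `solve` is hypothetical. Under these assumptions the output should match Table 1: at $\beta = 100$ each task gets its own maximum-utility action, and at $\beta = 1$ both tasks collapse onto $x^*_{\mathrm{sub}} = [0.7, 0]$.

```python
import numpy as np

# Table 1: the four action vectors x = [x1, x2] and the two task utilities
actions = np.array([[0.0, 0.0], [0.0, 1.0], [0.7, 0.0], [1.0, 1.0]])
U = np.stack([actions[:, 0],                            # U(x, y1) = x1
              np.abs(actions[:, 0] - actions[:, 1])])   # U(x, y2) = |x1 - x2|
p_y = np.array([0.5, 0.5])                              # equiprobable tasks

def solve(beta, n_iter=2000):
    """Fixed-iteration Blahut-Arimoto (Equations 6 and 7) from a uniform prior."""
    p_x = np.full(U.shape[1], 1.0 / U.shape[1])
    for _ in range(n_iter):
        with np.errstate(divide="ignore"):
            logits = np.log(p_x)[None, :] + beta * U
        cond = np.exp(logits - logits.max(axis=1, keepdims=True))
        cond /= cond.sum(axis=1, keepdims=True)   # p(x|y), one row per task
        p_x = p_y @ cond                          # p(x), the shared prior
    return p_x, cond

for beta in (100.0, 1.0):
    p_x, cond = solve(beta)
    print(f"beta={beta}: p(x)={p_x.round(3)}, "
          f"p(x|y1)={cond[0].round(3)}, p(x|y2)={cond[1].round(3)}")
```

At $\beta = 1$ the convergence is slow: from a uniform start, the ratio between the probability of $x^*_{\mathrm{sub}}$ and that of each task-specific optimum grows only by a factor of about 1.08 per iteration, which is why a generous iteration count is used.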
3.3 Changing the level of granularity

Abstractions are formed by reducing the information content of an entity until it only contains relevant information. For a discrete random variable $x \in X$, this translates into forming a partitioning of the space $X$, where “similar” elements are grouped into the same subset of $X$ and become indistinguishable within the subset. In physics, changing the granularity of a partitioning to a coarser level is known as coarse-graining, which reduces the resolution of the space $X$ in a nonuniform manner. In the rate-distortion framework, the partitioning emerges in the shared prior $p(x)$ as a soft partitioning (see [20]), where actions $x$ with the same average utility get the same probability mass and become essentially indistinguishable. To demonstrate this, we use a binary grid of size $N \times N$ with $N = 3$, where each cell of the grid can be white ($x_i = 0$) or colored black ($x_i = 1$). Actions are particular patterns on this grid, so the action space is the set of binary sequences of length $N^2$, that is, $x \in \{0,1\}^{N^2}$ (512 patterns for $N = 3$). The utility function defines the following three tasks:

1. The utility equals the number of colored pixels, but one row and one column have to be all-white; otherwise the utility is zero.
2. Any pattern with exactly four colored pixels scores a utility of +4; all other patterns have utility zero.
3. Any pattern with an even number of colored pixels scores a utility equal to the total number of colored pixels; all other patterns have a utility of zero.

Figure 2 shows 16 samples each from the conditionals $p(x|y)$ for each task and from the prior $p(x)$ for $\beta = 10$. Since the inverse temperature is high, all samples with nonzero probability are actions that yield maximum utility in their particular task. Note that the patterns that lead to maximum utility in task (1) are a subset of the patterns that lead to maximum utility in task (2), and they also lead to a nonzero utility in task (3).
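The three grid tasks can be encoded as a utility table over all $2^9 = 512$ patterns. In this sketch the helper names are hypothetical, and task (1) is read as requiring at least one all-white row and at least one all-white column (our interpretation). The table `U` has one row per task and could be passed to a Blahut-Arimoto solver; here it is only used to check the claims about the optimal patterns: the maximum utilities are 4, 4 and 8, the 9 optimal patterns of task (1) are among the 126 optimal patterns of task (2), and they score 4 in task (3).

```python
import itertools
import numpy as np

N = 3  # grid size used in the text

# All 2**(N*N) binary patterns, reshaped to N x N grids (1 = black, 0 = white)
patterns = np.array(list(itertools.product([0, 1], repeat=N * N))).reshape(-1, N, N)

def u_task1(g):
    """Number of black pixels, but only if some row and some column are all white."""
    has_white_row = (g.sum(axis=1) == 0).any()
    has_white_col = (g.sum(axis=0) == 0).any()
    return int(g.sum()) if (has_white_row and has_white_col) else 0

def u_task2(g):
    """+4 for exactly four black pixels, zero otherwise."""
    return 4 if g.sum() == 4 else 0

def u_task3(g):
    """Number of black pixels if that number is even, zero otherwise."""
    n = int(g.sum())
    return n if n % 2 == 0 else 0

# Utility table U[y, x]: one row per task, one column per pattern
U = np.array([[u(g) for g in patterns] for u in (u_task1, u_task2, u_task3)])

# Indices of the maximum-utility patterns of each task
optimal = [set(np.flatnonzero(row == row.max())) for row in U]
print("max utility per task:", U.max(axis=1))
print("number of optimal patterns per task:", [len(s) for s in optimal])
print("task-1 optima are task-2 optima:", optimal[0] <= optimal[1])
```

The subset relation checked in the last line is what allows the shared prior to cover tasks (1) and (2) with the same few patterns, so that the “specialized” task-(2) patterns are not needed.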
Since transformation costs are mostly ignored in this case, the patterns appearing for task (3) are very different from the patterns in task (1). Note, however, that the additional patterns in task (2) that would also lead to maximum utility are assigned a probability of zero. The subset of patterns that are also optimal in task (1) is sufficient to achieve maximum expected utility, and by not including the additional “specialized” patterns for task (2) the mutual information can be reduced significantly. The prior $p(x)$ consists essentially of two kinds of patterns: those that are optimal in tasks (1) and (2) simultaneously and those that are optimal in task (3). The first two tasks have essentially become indistinguishable, because the actor responds to both with exactly the same action distribution. When the inverse temperature is lowered to $\beta = 0.1$ (see Figure 3), the mutual information constraint gets more weight and suboptimal patterns are picked for task (3), similar to the simulation in the previous section. The behavior of the actor has now become indistinguishable across all three tasks, at the expense of a lower expected utility. Importantly, the effective resolution of the prior $p(x)$ has been reduced from two distinct sets of patterns to a single set of indistinguishable patterns (in terms of their expected utility): the level of granularity of the prior has been reduced even further.

Figure 2: Sampled patterns for $\beta = 10$. The number above each pattern indicates the probability of the pattern in the corresponding distribution. A: Samples for task (1), $p(x|y=1)$. All shown patterns yield maximum utility in the task. B: Samples for task (2), $p(x|y=2)$.
All shown patterns yield maximum utility in this task; however, task (2) has more patterns that would potentially lead to maximum utility, and only the subset that coincides with the maximum-utility patterns of task (1) has nonzero probability. This is a consequence of sharing the same prior $p(x)$ and of mutual-information minimization. C: Samples for task (3), $p(x|y=3)$. The patterns of tasks (1) and (2) would also yield a nonzero utility in task (3), but the sampled patterns shown here yield twice the utility and thus have all the probability mass. D: Samples from the shared prior $p(x)$. The prior is a mixture over the patterns shown in the conditional distributions.

Figure 3: Sampled patterns for $\beta = 0.1$. The number above each pattern indicates the probability of the pattern in the corresponding distribution. A: Samples for task (1), $p(x|y=1)$. B: Samples for task (2), $p(x|y=2)$. C: Samples for task (3), $p(x|y=3)$. Compared to the case $\beta = 10$ in Figure 2, the increased weight of the mutual information term $I(x;y)$ has led to the selection of suboptimal actions in task (3), similar to the previous simulation. D: Samples from the shared prior $p(x)$. In the fully abstract regime, all conditional distributions are exactly equal to the prior $p(x)$, leading to $I(x;y) = 0$.

4 Discussion & Conclusions

In this work, we discussed the connection between the thermodynamic framework [15] for decision-making with information-processing costs and rate-distortion theory. This connection implies a novel interpretation of the rate-distortion framework for multi-task bounded-rational decision-making. Importantly, abstractions emerge naturally in this framework due to limited information-processing capabilities.
The authors of [27] find a very similar emergence of “natural abstractions” and “ritualized behavior” when studying goal-directed behavior in the MDP case using the Relevant Information method, which is a particular application of rate-distortion theory. Although not shown here, the approach presented in this paper straightforwardly carries over to an inference setting by treating $y$ as observations and $x$ as the belief state. In the inference case, limited information-processing capacities make it impossible to detect certain patterns, which in turn renders different entities indistinguishable and leads to the formation of abstractions. This idea has been explored previously in [20, 21]. Both the work just mentioned and our work are inspired by the Information Bottleneck Method [19], which is mathematically very similar to the rate-distortion problem (with a particular choice of distortion function) and thus also to the approach presented here.

Note that limited information-processing capabilities can arise for various reasons. The most obvious reason, perhaps, is a lack of computational power, which in many cases is equivalent to certain time constraints (such as reaction times) or memory constraints. Other reasons for information-processing limits are small sample sizes or low signal-to-noise ratios, which put an upper limit on the mutual information independent of the available computational power.

In the approach presented here, we assume that the decision-maker draws samples from $p(x)$. Responding to a certain task with a sample from $p(x|y)$ could then be implemented, for instance, with a rejection-sampling procedure. The prior $p(x)$ will then be the proposal distribution that has the highest average acceptance rate over all tasks $y$. The computational cost of finding $p(x)$ is not part of the current framework; these implications have to be explored in further work.

Acknowledgments

This study was supported by the DFG, Emmy Noether grant BR4164/1-1.
References

[1] Joshua B. Tenenbaum, Charles Kemp, Thomas L. Griffiths, and Noah D. Goodman. How to grow a mind: Statistics, structure, and abstraction. Science, 331(6022):1279–1285, 2011.

[2] Charles Kemp, Amy Perfors, and Joshua B. Tenenbaum. Learning overhypotheses with hierarchical Bayesian models. Developmental Science, 10(3):307–321, 2007.

[3] Samuel J. Gershman and Yael Niv. Learning latent structure: carving nature at its joints. Current Opinion in Neurobiology, 20(2):251–256, 2010.

[4] Daniel A. Braun, Carsten Mehring, and Daniel M. Wolpert. Structure learning in action. Behavioural Brain Research, 206(2):157–165, 2010.

[5] Daniel A. Braun, Stephan Waldert, Ad Aertsen, Daniel M. Wolpert, and Carsten Mehring. Structure learning in a sensorimotor association task. PLoS ONE, 5(1):e8973, 2010.

[6] Tim Genewein and Daniel A. Braun. A sensorimotor paradigm for Bayesian model selection. Frontiers in Human Neuroscience, 6, 2012.

[7] Herbert A. Simon. Theories of bounded rationality. Decision and Organization, 1:161–176, 1972.

[8] Richard D. McKelvey and Thomas R. Palfrey. Quantal response equilibria for normal form games. Games and Economic Behavior, 10(1):6–38, 1995.

[9] David H. Wolpert. Information theory: the bridge connecting bounded rational game theory and statistical physics. In Complex Engineered Systems, pages 262–290. Springer, 2006.

[10] Hilbert J. Kappen. Linear theory for control of nonlinear stochastic systems. Physical Review Letters, 95(20):200201, 2005.

[11] Jan Peters, Katharina Mülling, and Yasemin Altun. Relative entropy policy search. In AAAI, 2010.

[12] Emanuel Todorov. Efficient computation of optimal actions. Proceedings of the National Academy of Sciences, 106(28):11478–11483, 2009.

[13] Evangelos Theodorou, Jonas Buchli, and Stefan Schaal. A generalized path integral control approach to reinforcement learning. The Journal of Machine Learning Research, 11:3137–3181, 2010.

[14] Jonathan Rubin, Ohad Shamir, and Naftali Tishby. Trading value and information in MDPs. In Decision Making with Imperfect Decision Makers, pages 57–74. Springer, 2012.

[15] Pedro A. Ortega and Daniel A. Braun. Thermodynamics as a theory of decision-making with information-processing costs. Proceedings of the Royal Society A: Mathematical, Physical and Engineering Science, 469(2153), 2013.

[16] Daniel A. Braun, Pedro A. Ortega, Evangelos Theodorou, and Stefan Schaal. Path integral control and bounded rationality. In 2011 IEEE Symposium on Adaptive Dynamic Programming and Reinforcement Learning (ADPRL), pages 202–209. IEEE, 2011.

[17] Pedro Alejandro Ortega and Daniel Alexander Braun. Information, utility and bounded rationality. In Artificial General Intelligence, pages 269–274. Springer, 2011.

[18] Pedro A. Ortega and Daniel A. Braun. Free energy and the generalized optimality equations for sequential decision making. arXiv preprint, 2012.

[19] Naftali Tishby, Fernando C. Pereira, and William Bialek. The information bottleneck method. The 37th Annual Allerton Conference on Communication, Control, and Computing, 1999.

[20] Susanne Still and James P. Crutchfield. Structure or noise? arXiv preprint, 2007.

[21] Susanne Still, James P. Crutchfield, and Christopher J. Ellison. Optimal causal inference: Estimating stored information and approximating causal architecture. Chaos: An Interdisciplinary Journal of Nonlinear Science, 20(3):037111, 2010.

[22] Christopher A. Sims. Implications of rational inattention. Journal of Monetary Economics, 50(3):665–690, 2003.

[23] I. Csiszár and G. Tusnády. Information geometry and alternating minimization procedures. Statistics and Decisions, 1984.

[24] Thomas M. Cover and Joy A. Thomas. Elements of Information Theory. John Wiley & Sons, 1991.

[25] Raymond W. Yeung. Information Theory and Network Coding. Springer, 2008.

[26] Richard Blahut. Computation of channel capacity and rate-distortion functions. IEEE Transactions on Information Theory, 18(4):460–473, 1972.

[27] Sander G. van Dijk and Daniel Polani. Informational constraints-driven organization in goal-directed behavior. Advances in Complex Systems, 2013.
