Higher Dimensional Consensus: Learning in Large-Scale Networks

The paper presents higher dimension consensus (HDC) for large-scale networks. HDC generalizes the well-known average-consensus algorithm. It divides the nodes of the large-scale network into anchors and sensors. Anchors are nodes whose states are fix…

Authors: Usman A. Khan, Soummya Kar, Jose M. F. Moura

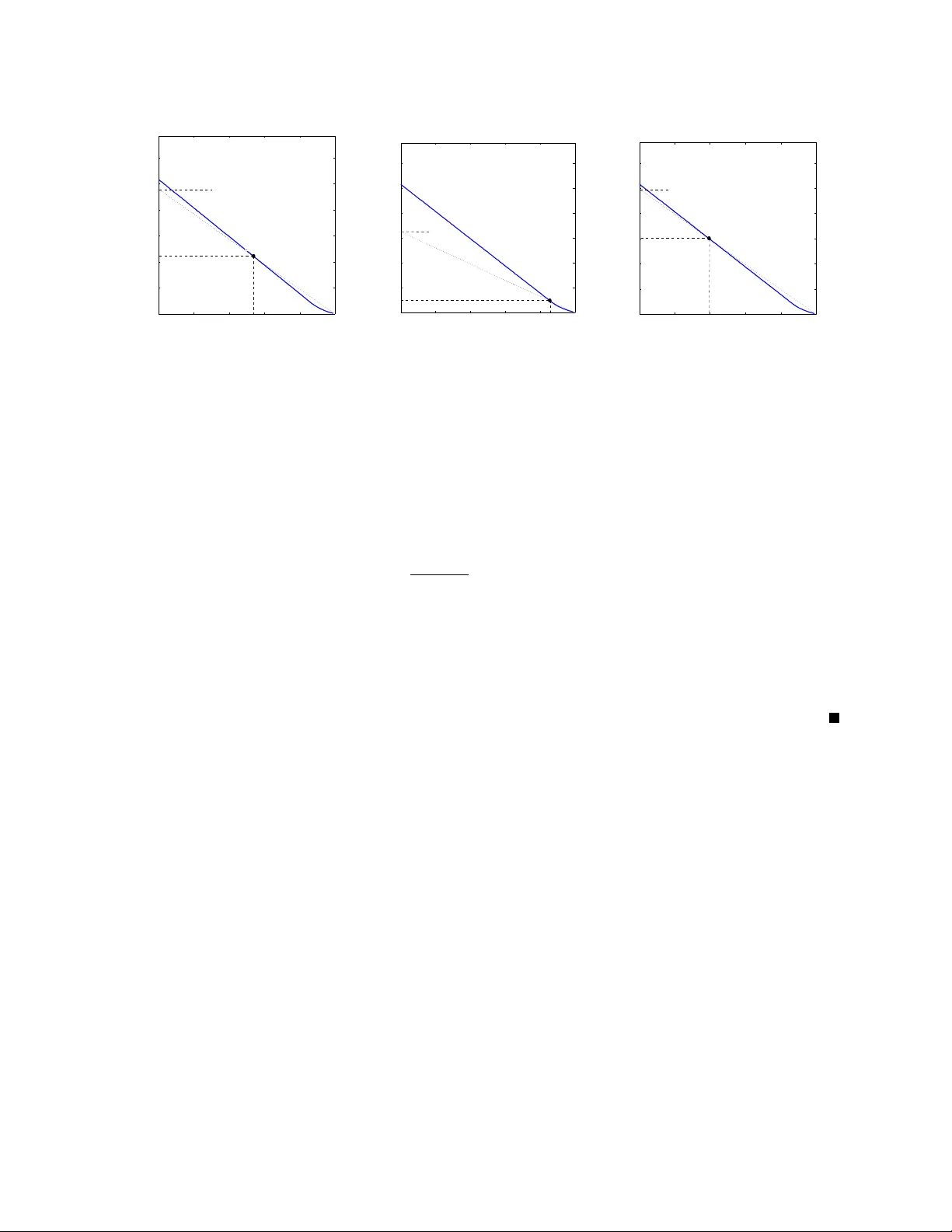

1 Higher Dimensional Consensus: Learning in Lar ge-Scale Networks Usman A. Khan, Soummya Kar , and Jos ´ e M. F . Moura Department of Electrical and Computer Engineering Carnegie Mellon Uni v ersity , 5000 Forbes A ve, Pittsb ur gh, P A 15213 { ukhan, moura } @ece.cmu.edu, soummyak@andre w .cmu.edu Ph: (412)268-7103 Fax: (412)268-3890 Abstract The paper presents higher dimension consensus (HDC) for large-scale netw orks. HDC generalizes the well-known av erage-consensus algorithm. It divides the nodes of the large-scale network into anchors and sensors. Anchors are nodes whose states are fixed ov er the HDC iterations, whereas sensors are nodes that update their states as a linear combination of the neighboring states. Under appropriate conditions, we show that the sensors’ states conv erge to a linear combination of the anchors’ states. Through the concept of anchors, HDC captures in a unified framework several interesting network tasks, including distributed sensor localization, leader-follower , distributed Jacobi to solve linear systems of algebraic equations, and, of course, a verage-consensus. In many network applications, it is of interest to learn the weights of the distributed linear algorithm so that the sensors conv erge to a desired state. W e term this inver se problem the HDC learning problem. W e pose learning in HDC as a constrained non-con vex optimization problem, which we cast in the framew ork of multi-objectiv e optimization (MPO) and to which we apply Pareto optimality . W e prove analytically relev ant properties of the MOP solutions and of the Pareto front from which we deri ve the solution to learning in HDC. Finally , the paper sho ws ho w the MOP approach resolves interesting tradeoffs (speed of con ver gence versus quality of the final state) arising in learning in HDC in resource constrained networks. Index T erms Distributed algorithms, Higher dimensional consensus, Large-scale networks, Spectral graph theory , Multi-objectiv e optimization, Pareto optimality . This work was partially supported by NSF under grant # CNS-0428404, by ONR under grant # MURI-N000140710747, and by an IBM Faculty A ward. November 1, 2018 DRAFT 2 I . I N T R O D U C T I O N This paper provides a unified frame work, high-dimensional consensus (HDC), for the analysis and design of linear distributed algorithms for lar ge-scale networks–including distributed Jacobi algorithm [1], av erage-consensus [2], [3], [4], [5], [6], [7], distributed sensor localization [8], distributed matrix in version [9], or leader-follo wer algorithms [10], [11]. These applications arise in many resource constrained large- scale networks, e.g., sensor networks, teams of robotic platforms, but also in cyber -physical systems like the smart grid in electric power systems. W e view these systems as a collection of nodes interacting over a sparse communication graph. The nodes hav e, in general, strict constraints on their communication and computation budget so that only local communication and lo w-order computation is feasible at each node. Linear distrib uted algorithms for constrained lar ge-scale networks are iterati ve in nature; the information is fused over the iterations of the algorithm across the sparse network. In our formulation of HDC, we vie w the large-scale network as a graph with edges connecting sparsely a collection of nodes; each node is described by a state. The nodes are partitioned in anchors and sensors. Anchors do not update their state over the HDC iterations, while the sensors iterativ ely update their states by a linear , possibly con vex, combination of their neighboring sensors’ states. The weights of this linear combination are the parameters of the HDC. For example, in sensor localization [8], the state at each node is its current position estimate. Anchors may be nodes instrumented with a GPS unit, knowing its precise location and the remaining nodes are the sensors that don’t know their location and for which HDC iterativ ely updates their state, i.e., their location, in a distributed fashion. The weights of HDC are for this problem the barycentric coordinates of the sensors with respect to a group of neighboring nodes, see [8]. W e consider two main issues in HDC. Analysis: Forward Problem Giv en the HDC weights or parameters and the sparse underlying con- necti vity graph determine (i) under what conditions does the HDC con verge; (ii) to what state does the HDC con verge; and (iii) what is the con ver gence rate. The forward problem establishes the conditions for con ver gence, the con ver gence rate, and the con vergent state of the network. Learning: In verse Problem Giv en the desired state to which HDC should conv erge and the sparsity graph learn the HDC parameters so that indeed HDC does con ver ge to that state. Due to the sparsity constraints, it may not be possible for HDC to con ver ge exactly to the desired state. An interesting tradeoff that we pursue is between the speed of con vergence and the quality of the limiting HDC con ver ging state, gi ven by some measure of the error between the final state and the desired state. Clearly , the learning problem is an in verse problem that we will formulate as the minimization of a utility function under November 1, 2018 DRAFT 3 constraints. A naive formulation of the learning problem is not feasible. Ours is in terms of a constrained non- con vex optimization formulation that we solv e by casting it in the conte xt of a multi-objecti ve optimization problem (MOP), [12]. W e apply to this MOP Pareto optimization. T o deri ve the optimal Pareto solution, we need to characterize the Pareto front (locus of P areto optimal solutions.) Although usually it is hard to determine the P areto front and requires extensi ve iterativ e procedures, we e xploit the structure of our problem to prove smoothness, conv exity , strict decreasing monotonicity , and differentiability properties of the Pareto front. W ith the help of these properties, we can actually derive an efficient procedure to generate Pareto-optimal solutions to the MOP , determine the Pareto front, and find the solution to the learning problem. This solution is found by a rather expressi ve geometric ar gument. A. Or ganization of the P aper W e no w describe the rest of the paper . Section II introduces notation and relev ant definitions, whereas Section III provides the problem formulation. W e discuss the forward problem (analysis of HDC) in Section IV and the in verse problem (learning in large-scale networks) in Sections V–VII. Finally , Section VIII concludes the paper . I I . P R E L I M I N A R I E S This section introduces the notation used in the paper and revie ws rele vant concepts from spectral graph theory and multi-objectiv e optimization. A. Spectral Graph Theory Consider a sensor network with N nodes. W e partition this network into K anchors and M sensors, such that N = K + M . As discussed in Section I, the anchors are the nodes whose states are fixed, and the sensors are the nodes that update their states as a linear combination of the states of their neighboring nodes. Let κ = { 1 , . . . , K } be the set of anchors and let Ω = { K + 1 , . . . , N } be the set of sensors. The set of all of the nodes is then denoted by Θ = κ ∪ Ω . W e model the network by a directed graph, G = ( V , A ) , where, V = { 1 , . . . , N } , denotes the set of nodes. The interconnections among the nodes are given by the adjacenc y matrix, A = { a lj } , where a lj = 1 , l ← j, 0 , otherwise , (1) November 1, 2018 DRAFT 4 and the notation l ← j implies that node j can send information to node l . The neighborhood, K ( l ) , at node l is K ( l ) = { j | a lj = 1 } . (2) The classification of nodes into sensors and anchors naturally induces the partitioning of the neighbor- hood, K ( l ) , at each sensor , l , i.e., K Ω ( l ) = K ( l ) ∩ Ω , K κ ( l ) = K ( l ) ∩ κ, (3) where K Ω ( l ) and K κ ( l ) are the set of sensors and the set of anchors in sensor l ’ s neighborhood, respecti vely . As a graph can be characterized by its adjacency matrix, to e very matrix we can associate a graph. For a matrix, Υ = { υ lj } ∈ R N × N , we define its associated graph by G Υ = ( V Υ , A Υ ) , where V Υ = { 1 , . . . , N } and A Υ = { a Υ lj } is given by a Υ lj = 1 , υ lj 6 = 0 , 0 , υ lj = 0 . (4) The con ver gence properties of distributed algorithms depend on spectral properties of associated matrices. In the following, we recall the definition of spectral radius. The spectral radius, ρ ( P ) , of a matrix, P ∈ R M × M , is defined as ρ ( P ) = max i | λ i ( P ) | , (5) where λ i ( P ) denotes the i th eigen v alue of P . W e also hav e ρ ( P ) = lim q →∞ k P q k 1 /q , (6) where k · k is any matrix induced norm. B. Multi-objective Optimization Pr oblem (MOP): P ar eto-Optimality In this subsection, we recall facts on multi-objectiv e optimization theory that we will use to de velop the solutions of the learning problem. W e consider the follo wing constrained optimization problem. Let { f k ( y ) } k =1 ,...,n be real-v alued functions, f k : X → R , ∀ k (7) November 1, 2018 DRAFT 5 on some topological space, X (in this work, X will always be a finite-dimensional vector space). The vector objectiv e function f ( y ) is f ( y ) = f 1 ( y ) . . . f n ( y ) . (8) Let { v k } be a family of n real-valued functions on X representing the inequality constraints and { w k } be a family of n real-valued functions on X representing the equality constraints. The feasible set of solutions, Y , is defined as Y = { y ∈ X | v k ( y ) ≤ V k , ∀ k , and w k ( y ) = W k , ∀ k } , (9) where V k , W k ∈ R . The multi-objectiv e optimization problem (MOP) is given by min y ∈Y f ( y ) . (10) Note that the inequality constraints, v k ( y ) , and the equality constraints, w k ( y ) , appear in the set of feasible solutions and, thus, are implicit in (10). In general, the MOP has non-inferior solutions, i.e., the MOP has a set of solutions none of which is inferior to the other . The solutions of the MOP are, thus, categorized as Pareto-optimal [12], defined in the follo wing. Definition 1 [Pareto optimal solutions] A solution, y ∗ , is said to be a Pareto optimal (or non-inferior) solution of a MOP if there exists no other feasible y (i.e., there is no y ∈ Y ) such that f ( y ) ≤ f ( y ∗ ) , meaning that f k ( y ) ≤ f k ( y ∗ ) , ∀ k , with strict inequality for at least one k . The general methods to solve the MOP , for example, include the weighting method, the Lagrangian method, and the ε -constraint method. These methods can be used to find the Pareto-optimal solutions of the MOP . In general, these approaches require extensi ve iterativ e procedures to establish P areto-optimality of a solution, see [12] for details. I I I . P R O B L E M F O R M U L A T I O N Consider a sensor network with N nodes communicating ov er a network described by a directed graph, G = ( V , A ) . Let u k ∈ R 1 × m be the state associated to the k th anchor , and let x l ∈ R 1 × m be the November 1, 2018 DRAFT 6 state associated to the l th sensor . W e are interested in studying linear iterative algorithms of the form u k ( t + 1) = u k ( t ) = u k (0) , t ≥ 0 , k ∈ κ, (11) x l ( t + 1) = p ll x l ( t ) + X j ∈K Ω ( l ) p lj x j ( t ) + X k ∈K κ ( l ) b lk u k (0) , t ≥ 0 , l ∈ Ω , (12) where: t is the discrete-time iteration index; and b lj ’ s and p lk ’ s are the state updating coefficients. W e assume that the updating coefficients are constant ov er the components of the m -dimensional state, x l ( t ) . W e term distributed linear iterativ e algorithms of the form (11)–(12) as Higher Dimensional Consensus (HDC) algorithms 1 [11], [10]. For the purpose of analysis, we write the HDC (11)–(12) in matrix form. Define U ( t ) = u T 1 ( t ) , . . . , u T K ( t ) T ∈ R K × m , X ( t ) = x T K +1 ( t ) , . . . , x T N ( t ) T ∈ R M × m , (13) P = { p lj } ∈ R M × M , B = { b lk } ∈ R M × K . (14) W ith the abov e notation, we write (11)–(12) concisely as U ( t + 1) X ( t + 1) = I 0 B P U ( t ) X ( t ) , (15) , C ( t + 1) = ΥC ( t ) . (16) Note that the graph, G ( Υ ) , associated to the N × N iteration matrix, Υ , is a subgraph of the commu- nication graph, G . In other words, the sparsity of Υ is dictated by the sparsity of the underlying sensor network, i.e., a non-zero element, υ lj , in Υ implies that node j can send information to node l in the original graph G . In the iteration matrix, Υ : the M × M lo wer right submatrix, P , collects the updating coef ficients of the M sensors with respect to the M sensors; and the lower left submatrix, B , collects the updating coefficients of the M sensors with respect to the K anchors. From (15), the matrix form of the HDC in (12) is X ( t + 1) = PX ( t ) + BU (0) , t ≥ 0 . (17) In this paper, we study the following two problems that arise in the context of the HDC. Analysis: F orward problem Giv en an N -node sensor network with a communication graph, G , the matrices B , and P , and the network initial conditions, X (0) and U (0) ; what are the conditions under 1 As we will show later, the HDC algorithms contain the conv entional av erage consensus algorithms, [3], [4], as a special case. The notion of higher dimensions is technical and will be studied later . November 1, 2018 DRAFT 7 which the HDC con verges? What is the conv ergence rate of the HDC? If the HDC con ver ges, what is the limiting state of the network? Learning: Inv erse problem Giv en an N -node sensor network with a communication graph, G , and an M × K weight matrix, W , learn the matrices P and B in (17) such that the HDC conv erges to lim t →∞ X ( t + 1) = WU (0) , (18) for ev ery U (0) ∈ R K × m ; if multiple solutions exist, we are interested in finding a solution that leads to fastest conv ergence. I V . F O RW A R D P R O B L E M : H I G H E R D I M E N S I O N A L C O N S E N S U S As discussed in Section III, the HDC algorithm is implemented as (11)–(12), and its matrix represen- tation is given by (16). W e di vide the study of the HDC in the following two cate gories. (A) No anchors: B = 0 (B) Anchors: B 6 = 0 W e analyze these two cases separately . In addition, we also provide, briefly , practical applications where each of them is relev ant. A. No anchor s: B = 0 In this case, the HDC reduces to X ( t + 1) = PX ( t ) , = P t +1 X (0) . (19) An important problem covered by this case is average-consensus. As well kno wn, if ρ ( P ) = 1 , (20) with 1 T and 1 being the left and right eigenv ectors of P , respecti vely , then we have lim t →∞ P t +1 = 11 T M , (21) under some minimal network connectivity assumptions, where 1 is the M × 1 column vector of 1 ’ s and M is the number of sensors. The sensors con verge to the av erage of the initial sensors’ states. The con vergence rate is dictated by the second largest (in magnitude) eigen v alue of the matrix P . For more precise and general statements in this regard, see for instance, [3], [4]. A verage-consensus, thus, is a November 1, 2018 DRAFT 8 special case of the HDC, when B = 0 and ρ ( P ) = 1 . This problem has been studied in great detail. Rele vant references include [13], [14], [15], [16], [17], [18], [19]. The rest of this paper deals entirely with the case ρ ( P ) < 1 and the term HDC subsumes the ρ ( P ) < 1 case, unless explicitly noted. When B = 0 , the HDC (with ρ ( P ) < 1 ) leads to X ∞ = 0 , which is not very interesting. B. Anchors: B 6 = 0 This extends the average-consensus to “higher dimensions” (as will be explained in Section IV -C.) Lemma 1 establishes: (i) the conditions under which the HDC conv erges; (ii) the limiting state of the network; and (iii) the rate of con ver gence of the HDC. Lemma 1 Let B 6 = 0 . If ρ ( P ) < 1 , (22) then the limiting state of the sensors, X ∞ , lim t →∞ X ( t + 1) = ( I − P ) − 1 BU (0) , (23) and the error, E ( t ) = X ( t ) − X ∞ , decays exponentially to 0 with exponent ln( ρ ( P )) , i.e., lim sup t →∞ 1 t ln k E ( t ) k ≤ ln( ρ ( P )) . (24) Pr oof: From (17), we note that X ( t + 1) = P t +1 X (0) + t X k =0 P k BU (0) , (25) ⇒ X ∞ = lim t →∞ P t +1 X (0) + lim t →∞ t X k =0 P k BU (0) , (26) and (23) follows from (22) and Lemma 9 in Appendix I. The error , E ( t ) , is given by E ( t ) = X ( t ) − ( I − P ) − 1 BU (0) , = P t X (0) + t − 1 X k =0 P k BU (0) − ∞ X k =0 P k BU (0) , = P t " X (0) − ∞ X k =0 P k BU (0) # . November 1, 2018 DRAFT 9 T o go from the second equation to the third, we recall (22) and use (112) from Lemma 9 in Appendix I. Let R = X (0) − P ∞ k =0 P k BU (0) . T o establish the con ver gence rate of k E ( t ) k , we ha ve 1 t ln k E ( t ) k = 1 t ln k P t R k , ≤ 1 t ln k P t kk R k , ≤ ln k P t k 1 /t + 1 t ln k R k . (27) No w , letting t → ∞ on both sides, we get lim sup t →∞ 1 t ln k E ( t ) k ≤ lim sup t →∞ ln k P t k 1 /t + lim sup t →∞ 1 t ln k R k , (28) = ln lim t →∞ k P t k 1 /t , (29) = ln ( ρ ( P )) . (30) and (24) follows. The interchange of lim and ln is permissible because of the continuity of ln and the last step follows from (6). The above lemma shows that we require (22) for the HDC to con ver ge. The limiting state of the network, X ∞ , is gi ven by (23) and the error norm, k E ( t ) k , decays exponentially to zero with expo- nent ln ( ρ ( P )) . W e further note that the limit state of the sensors, X ∞ , is independent of the sensors’ initial conditions, i.e., the algorithm forgets the sensors’ initial conditions and con ver ges to (23) for any X (0) ∈ R M × m . It is also straightforward to show that if ρ ( P ) ≥ 1 , then the HDC algorithm (17) di ver ges for all U (0) ∈ N ( B ) , where N ( B ) is the null space of B . Clearly , the case U (0) ∈ N ( B ) is not interesting as it leads to X ∞ = 0 . C. Consensus subspace W e no w define the consensus subspace as follo ws. Definition 2 (Consensus subspace) Given the matrices, B ∈ R M × K and P ∈ R M × M , the consensus subspace, Ξ , is defined as Ξ = { X ∞ | X ∞ = ( I − P ) − 1 BU (0) , U (0) ∈ R K × m , ρ ( P ) < 1 } . (31) The dimension of the consensus subspace, Ξ , is established in the follo wing theorem. Theor em 1 If K < M and ρ ( P ) < 1 , then the dimension of the consensus subspace, Ξ , is dim (Ξ) = m rank ( B ) ≤ mK . (32) November 1, 2018 DRAFT 10 Pr oof: The proof follows From Lemma 1 and Lemma 10 in Appendix I. No w , we formally define the dimension of the HDC. Definition 3 (Dimension) The dimension of the HDC algorithm is the dimension of the consensus sub- space, Ξ , normalized by m , i.e., dim ( HDC ) = dim (Ξ) m = rank ( B ) . (33) This definition is natural because the HDC is a decoupled algorithm, i.e., HDC corresponds to m parallel algorithms, one for each column of X ( t ) . So, the number of columns, m , in X ( t ) is factored out in the definition of dim ( HDC ) . Each column of X ( t ) lies in a subspace that is spanned by exactly rank ( B ) basis vectors. The number of these basis vectors is upper bounded by the number of anchors, i.e., is at most K . D. Practical Applications of the HDC Se veral interesting problems can be framed in the context of HDC. W e briefly sketch them below , for details, see [9], [11], [8], [10]. • Leader-follower algorithm [10]: When there is only one anchor , K = 1 , the sensors’ states con ver ge to the anchor state. W ith multiple anchors ( K > 1) , under appropriate conditions, the sensors’ states may be made to con verge to a desired, pre-specified linear combination of the anchors’ states. • Sensor localization in m -dimensional Euclidean spaces, R m : In [8], we choose the elements of the matrices P = { p lj } and B = { b lj } so that the sensor states con ver ge to their exact locations when only , K = m + 1 , anchors kno w their exact locations, for example, if equipped with a GPS. • J acobi algorithm for solving linear system of equations , [11]: Linear systems of equations arise naturally in sensor networks, for example, po wer flow equations in power systems monitored by sensors or time synchronization algorithms in sensor networks. W ith appropriate choice of the matrices B and P , it can be shown that the HDC algorithm (17) is a distributed implementation of the Jacobi algorithm to solve the linear system. • Distributed banded matrix in version : Algorithm (17) followed by a non-linear collapse operator is employed in [9] to solve a banded matrix in version problem, when the submatrices in the band are distributed among sev eral sensors. This distributed in version algorithm leads to distributed Kalman filters in sensor networks [20] using Gauss-Markov approximations by noting that the in verse of a Gauss-Marko v cov ariance matrix is banded. November 1, 2018 DRAFT 11 E. Robustness of the HDC Robustness is key in the context of HDC, when the information e xchange is subject to communication noise, packet drops, and imprecise knowledge of system parameters. In the context of sensor localization, we propose a modification to HDC in [8] along the lines of the Robbins-Monro algorithm [21] where the iterations are performed with a decreasing step-size sequence that satisfies a persistence condition. i.e., the step-sizes con ver ge to zero but not too fast (this condition is well studied in the stochastic approximation literature, [21], [22]). W ith such step-sizes, we show almost sure con vergence of the sensor localization algorithm to their exact locations under broad random phenomenon, see [8] for details. This modification is easily extended to the general class of HDC algorithms. V . I N V E R S E P R O B L E M : L E A R N I N G I N L A R G E - S C A L E N E T W O R K S As we briefly mentioned before, the in verse problem learns the parameter matrices ( B and P ) of the HDC such that HDC con ver ges to a desired pre-specified state (18). For con ver gence, we require the spectral radius constraint (22), and the matrices, B and P , to follow the underlying communication network, G . In general, due to the spectral norm constraint and the sparseness (network) constraints, equation (18) may not be met with equality . So, it is natural to relax the learning problem. Using Lemma 1 and (18), we restate the learning problem as follo ws. Consider ε ∈ [0 , 1) . Gi ven an N -node sensor network with a communication graph, G , and an M × K weight matrix, W , solve the optimization problem: inf B , P k ( I − P ) − 1 B − W k , (34) subject to: Spectral radius constraint, ρ ( P ) ≤ ε, (35) Sparsity constraint, G Υ ⊆ G , (36) for some induced matrix norm k · k . By Lemma 1, if ρ ( P ) ≤ ε , the conv ergence is exponential with exponent less than or equal to ln( ε ) . Thus, we ask, giv en a pre-specified con ver gence rate, ε , what is the minimum error between the conv erged estimates, lim t →∞ X ( t ) , and the desired estimates, WU (0) . Formulating the problem in this way naturally lends itself to a trade-off between the performance and the con ver gence rate. In some cases, it may happen that the learning problem has an exact solution in the sense that there exist B , P , satisfying (35) and (36) such that the objectiv e in (34) is 0 . In case of multiple such solutions, we seek the one which corresponds to the fastest con vergence, i.e., which leads to the smallest value November 1, 2018 DRAFT 12 of ρ ( P ) . W e may still formulate a performance versus con vergence rate trade-of f, if faster con vergence is desired. The learning problem stated as such is, in general, practically infeasible to solve because both (34) and (35) are non-con vex in P . W e now dev elop a more tractable framew ork for the learning problem in the follo wing. A. Revisiting the spectral r adius constraint (35) W e work with a con vex relaxation of the spectral radius constraint. Recall that the spectral radius can be expressed as (6). Howe ver , direct use of (6) as a constraint is, in general, not computationally feasible. Hence, instead of using the spectral radius constraint (35) we use a matrix induced norm constraint by realizing that ρ ( P ) ≤ k P k , (37) for any matrix induced norm. The induced norm constraint, thus, becomes k P k ≤ ε. (38) Clearly , (37) implies that any upper bound on k P k is also an upper bound on ρ ( P ) . B. Revisiting the sparsity constraint (36) In this subsection, we rewrite the sparsity constraint (36) as a linear constraint in the design pa- rameters, B and P . The sparsity constraint ensures that the structure of the underlying communication network, G , is not violated. T o this aim, we introduce an auxiliary v ariable, F , defined as F , [ B | P ] ∈ R M × N . (39) This auxiliary v ariable, F , combines the matrices B and P as they correspond to the adjacency ma- trix, A ( G ) , of the giv en communication graph, G , see the comments after (16). T o translate the sparsity constraint into linear constraints on F (and, thus, on B and P ), we employ a two-step procedure: (i) First, we identify the elements in the adjacency matrix, A ( G ) , that are zero; these elements correspond to the pairs of nodes in the network where we do not hav e a communication link. (ii) W e then force the elements of F = [ B | P ] corresponding to zeros in the adjacency matrix, A ( G ) , to be zero. Mathematically , (i) and (ii) can be described as follows. (i) Let the lower M × N submatrix of the N × N adjacency matrix, A = { a lj } (this lo wer part November 1, 2018 DRAFT 13 corresponds to F = [ P | B ] as can be noted from (16)), be denoted by A , i.e, A = { a ij } = { a lj } , l = K + 1 , . . . , N , j = 1 , . . . N , i = 1 , . . . , M . (40) Let χ contain all pairs ( i, j ) for which a ij = 0 . (ii) Let { e i } i =1 ,...,M be a family of 1 × M ro w-vectors such that e i has a 1 as the i th element and zeros e verywhere else. Similarly , let { e j } j =1 ,...,N be a family of N × 1 , column-vectors such that e j has a 1 as the j th element and zeros ev erywhere else. W ith this notation, the ij -th element, f ij , of F can be written as f ij = e i F e j . (41) The sparsity constraint (36) is explicitly giv en by e i F e j = 0 , ∀ ( i, j ) ∈ χ. (42) C. F easible solutions Consider ε ∈ [0 , 1) . W e now define a set of matrices, F ≤ ε ⊆ R M × N , that follo w both the induced norm constraint (38) and the sparsity constraint (42) of the learning problem. The set of feasible solutions is gi ven by F ≤ ε = { F ≤ ε = [ B | P ] | e i F e j = 0 , ∀ ( i, j ) ∈ χ, and k FT k ≤ ε } , (43) where T , 0 K × M I M ∈ R N × M . (44) W ith the matrix T defined as abov e, we note that P = FT . (45) Lemma 2 The set of feasible solutions, F ≤ ε , is conv ex. Pr oof: Let F 1 , F 2 ∈ F ≤ ε , then e i F 1 e j = 0 , ∀ ( i, j ) ∈ χ, e i F 2 e j = 0 , ∀ ( i, j ) ∈ χ. (46) For any 0 ≤ µ ≤ 1 , and ∀ ( i, j ) ∈ χ , e i ( µ F 1 + (1 − µ ) F 2 ) e j = µ e i F 1 e j + (1 − µ ) µ e i F 2 e j = 0 . November 1, 2018 DRAFT 14 Similarly , k ( µ F 1 + (1 − µ ) F 2 ) T k ≤ µ k F 1 T k + (1 − µ ) k F 2 T k ≤ µε + (1 − µ ) ε = ε. The first inequality uses the triangle inequality for matrix induced norms and the second uses the fact that, for i = 1 , 2 , F i ∈ F and k F i T k ≤ ε . Thus, F 1 , F 2 ∈ F ≤ ε ⇒ µ F 1 + (1 − µ ) F 2 ∈ F ≤ ε . Hence, F ≤ ε is con vex. Similarly , we note that the sets, F <ε and F < 1 , are also con ve x. D. Learning Pr oblem: An upper bound on the objective In this section, we simplify the objecti ve function (34) and giv e a tractable upper bound. W e ha ve the follo wing proposition. Pr oposition 2 Under the norm constraint k P k < 1 , then ( I − P ) − 1 B − W ≤ 1 1 − k P k k B + PW − W k (47) Pr oof: W e manipulate (34) to obtain successiv ely . ( I − P ) − 1 B − W = ( I − P ) − 1 ( B − ( I − P ) W ) , ≤ ( I − P ) − 1 k ( B − ( I − P ) W ) k , = X k P k k ( B − ( I − P ) W ) k , ≤ X k k P k k k ( B − ( I − P ) W ) k , ≤ 1 1 − k P k k B + PW − W k . (48) T o go from the second equation to the third, we use (112) from Lemma 9 in Appendix I. Lemma 9 is applicable here since (37) and gi ven the norm constraint k P k < 1 imply ρ ( P ) < 1 . The last step is the sum of a geometric series which con ver ges gi ven k P k < 1 . W e no w define the utility function, u ( B , P ) , that we minimize instead of minimizing k ( I − P ) − 1 B − W k . This is valid because u ( B , P ) is an upper bound on (34) and hence minimizing the upper bound leads to a performance guarantee. The utility function is u ( B , P ) = 1 1 − k P k k B + PW − W k . (49) November 1, 2018 DRAFT 15 W ith the help of the previous dev elopment, we no w formally present the Learning Pr oblem . Learning Pr oblem: Gi ven ε ∈ [0 , 1) , an N -node sensor network with a sparse communication graph, G , and a possibly full M × K weight matrix, W , design the matrices B and P (in (17)) that minimize (49), i.e., solve the optimization problem inf [ B | P ] ∈F ≤ ε u ( B , P ) . (50) Note that the induced norm constraint (38) and the sparsity constraint (42) are implicit in (50), as they appear in the set of feasible solutions, F ≤ ε . Furthermore, the optimization problem in (50) is equiv alent to the following problem. inf [ B | P ] ∈F ≤ ε ∩{k B k≤ b } u ( B , P ) , (51) where b > 0 is a sufficiently large number . Since (51) in v olves the infimum of a continuous func- tion, u ( B , P ) , over a compact set, F ε ∩ {k B k ≤ b } , the infimum is attainable and, hence, in the subsequent de velopment, we replace the infimum in (50) by a minimum. W e view the min u ( B , P ) as the minimization of its two factors, 1 / (1 − k P k ) and k B + PW − W k . In general, we need k P k → 0 to minimize the first factor , 1 / (1 − k P k ) , and k P k → 1 to minimize the second factor , k B + PW − W k (we explicitly prov e this statement later .) Hence, these two objecti ves are conflicting. Since, the minimization of the non-con ve x utility function, u ( B , P ) , contains minimizing two coupled con vex objecti ve functions, k P k and k B + PW − W k , we formulate this minimization as a multi-objectiv e optimization problem (MOP). In the MOP , we consider separately minimizing these two conv ex functions. W e then couple the MOP solutions using the utility function. E. Solution to the Learning Pr oblem: MOP formulation T o solve the Learning Pr oblem for ev ery ε ∈ [0 , 1) , we cast it in the context of a multi-objectiv e optimization problem (MOP). W e start by a rigorous definition of the MOP and later consider its equi valence to the Learning Pr oblem . In the MOP formulation, we treat k B + PW − W k as the first objecti ve function, f 1 , and k P k as the second objective function, f 2 . The objective vector , f ( B , P ) , is f ( B , P ) , f 1 ( B , P ) f 2 ( B , P ) = k B + PW − W k k P k . (52) November 1, 2018 DRAFT 16 The multi-objecti ve optimization problem (MOP) is giv en by min [ B | P ] ∈F ≤ 1 f ( B , P ) , (53) where 2 F ≤ 1 = { F = [ B | P ] : e i F e j = 0 , ∀ ( i, j ) ∈ χ, and k FT k ≤ 1 } . (54) Before providing one of the main results of this paper on the equiv alence of MOP and the Learning Pr oblem , we set the following notation. W e define ε exact = min {k P k | ( I − P ) − 1 B = W , [ B | P ] ∈ F < 1 } , (55) where the minimum of an empty set is taken to be + ∞ . In other words, ε exact is the minimum v alue of f 2 = k P k at which we may achieve an exact solution 3 of the Learning Pr oblem . A necessary condition for the existence of an exact solution is studied in Appendix II. If the exact solution is infeasible ( / ∈ F < 1 ), then ε exact = min { ∅ } , which we defined to be + ∞ . W e let E = [0 , 1) ∩ [0 , ε exact ] . (56) The Learning Pr oblem is interesting if ε ∈ E . W e no w study the relationship between the MOP and the Learning Pr oblem (50). Recall the notion of Pareto-optimal solutions of an MOP as discussed in Section II-B. W e have the following theorem. Theor em 3 Let B ε , P ε , be an optimal solution of the Learning Pr oblem , where ε ∈ E . Then, B ε , P ε is a Pareto-optimal solution of the MOP (53). The proof relies on analytical properties of the MOP (discussed in Section VI) and is deferred until Section VI-C. W e discuss here the consequences of Theorem 3. Theorem 3 says that the optimal solutions to the Learning Pr oblem can be obtained from the Pareto-optimal solutions of the MOP . In particular , it suffices to generate the Pareto front (collection of Pareto-optimal solutions of the MOP) for the MOP and seek the solutions to the Learning Pr oblem from the Pareto front. The subsequent Section is dev oted to constructing the Pareto front for the MOP and studying the properties of the Pareto front. 2 Although the Learning Problem is valid only when k P k < 1 , the MOP is defined at k P k = 1 . Hence, we consider k P k ≤ 1 when we seek the MOP solutions. 3 An exact solution is gi ven by [ B | P ] ∈ F such that ( I − P ) − 1 B = W or when the infimum in (34) is attainable and is 0 . November 1, 2018 DRAFT 17 V I . M U LT I - O B J E C T I V E O P T I M I Z A T I O N : P A R E T O F R O N T W e consider the MOP (53) as an ε -constraint problem, denoted by P k ( ε ) [12]. For a two-objectiv e optimization, n = 2 , we denote the ε -constraint problem as P 1 ( ε 2 ) or P 2 ( ε 1 ) , where P 1 ( ε 2 ) is gi ven by 4 min [ B | P ] ∈F ≤ 1 f 1 ( B , P ) subject to f 2 ( B , P ) ≤ ε 2 . (57) and P 2 ( ε 1 ) is given by min [ B | P ] ∈F ≤ 1 f 2 ( B , P ) subject to f 1 ( B , P ) ≤ ε 1 . (58) In both P 1 ( ε 2 ) and P 2 ( ε 1 ) , we are minimizing a real-v alued con ve x function, subject to a constraint on the real-v alued con vex function over a con vex feasible set. Hence, either optimization can be solved using a conv ex program [23]. W e can now write ε exact in terms of P 2 ( ε 1 ) as ε exact = P 2 (0) , if there exists a solution to P 2 (0) , + ∞ , otherwise . (59) Using P 1 ( ε 2 ) , we find the Pareto-optimal set of solutions of the MOP . W e explore this in Section VI-A. The collection of the v alues of the functions, f 1 and f 2 , at the Pareto-optimal solutions forms the Pareto front (formally defined in Section VI-B). W e explore properties of the Pareto front, in the context of our learning problem, in Section VI-B. These properties will be useful in addressing the minimization in (50) for solving the Learning Pr oblem . A. P areto-Optimal Solutions In general, obtaining Pareto-optimal solutions requires iterati vely solving ε -constraint problems [12], but we will sho w that the optimization problem, P 1 ( ε 2 ) , results directly into a Pareto-optimal solution. T o do this, we provide Lemma 3 and its Corollary 1 in the following. Based on these, we then state the Pareto-optimality of the solutions of P 1 ( ε 2 ) in Theorem 4. Lemma 3 Let [ B 0 | P 0 ] = argmin [ B | P ] ∈F ≤ 1 P 1 ( ε 0 ) . (60) 4 All the infima can be replaced by minima in a similar way as justified in Section V -D. Further note that, for technical con venience, we use F ≤ 1 and not F < 1 , which is permissible because the MOP objectives are defined for all values of k P k . November 1, 2018 DRAFT 18 If ε 0 ∈ E , then the minimum of the optimization, P 1 ( ε 0 ) , is attained at ε 0 , i.e., f 2 ( B 0 , P 0 ) = ε 0 . (61) Pr oof: Let the minimum value of the objecti ve, f 1 , be denoted by δ 0 , i.e., δ 0 = f 1 ( B 0 , P 0 ) . (62) W e prov e this by contradiction. Assume, on the contrary , that k P 0 k = ε 0 < ε 0 . Define α 0 , 1 − ε 0 1 − ε 0 . (63) Since, ε 0 < ε 0 < 1 , we hav e 0 < α 0 < 1 . For α 0 ≤ α < 1 , we define another pair , B 1 , P 1 , as B 1 , α B 0 , P 1 , (1 − α ) I + α P 0 . (64) Clearly , this choice is feasible, as it does not violate the sparsity constraints of the problem and further lies in the constraint of the optimization in (60), since k P 1 k ≤ (1 − α ) + αε 0 ≤ 1 − α (1 − ε 0 ) ≤ 1 − α 0 (1 − ε 0 ) = ε 0 . (65) W ith the matrices B 1 , P 1 in (64), we have the follo wing v alue, δ 1 , of the objectiv e function, f 1 , δ 1 = k B 1 + P 1 W − W k , = k α B 0 + ((1 − α ) I + α P 0 ) W − W k , = k α B 0 + α P 0 W − α W k , = αf 1 ( B 0 , P 0 ) = αδ 0 . (66) Since, α < 1 and non-negati ve, we have δ 1 < δ 0 . This shows that the new pair , B 1 , P 1 , constructed from the pair , B 0 , P 0 , results in a lower v alue of the objecti ve function. Hence, the pair , B 0 , P 0 , with k P 0 k = ε 0 < ε 0 is not optimal, which is a contradiction. Hence, f 2 ( B 0 , P 0 ) = ε 0 , . Lemma 3 shows that if a pair of matrices, B 0 , P 0 , solves the optimization problem P 1 ( ε 0 ) with ε 0 ∈ E , then the pair of matrices, B 0 , P 0 , meets the constraint on f 2 with equality , i.e., f 2 ( B 0 , P 0 ) = ε 0 . The follo wing corollary follo ws from Lemma 3. November 1, 2018 DRAFT 19 Cor ollary 1 Let ε 0 ∈ E , and [ B 0 | P 0 ] = argmin [ B | P ] ∈F ≤ 1 P 1 ( ε 0 ) , (67) δ 0 = f 1 ( B 0 , P 0 ) . (68) Then, δ 0 < δ ε , (69) for any ε < ε 0 , where [ B ε | P ε ] = argmin [ B | P ] ∈F ≤ 1 P 1 ( ε ) , (70) δ ε = f 1 ( B ε , P ε ) . (71) Pr oof: Clearly , from Lemma 3 there does not exist any ε < ε 0 that results in a lo wer v alue of the objecti ve function, f 1 . The above lemma shows that the optimal value of f 1 obtained by solving P 1 ( ε ) is strictly greater than the optimal value of f 1 obtained by solving P 1 ( ε 0 ) for any ε < ε 0 . The follo wing theorem now establishes the Pareto-optimality of the solutions of P 1 ( ε ) . Theor em 4 If ε 0 ∈ E , then the solution B 0 , P 0 , of the optimization problem, P 1 ( ε 0 ) , is Pareto optimal. Pr oof: Since, B 0 , P 0 solves the optimization problem, P 1 ( ε 0 ) , we have k P 0 k = ε 0 , from Lemma 3. Assume, on the contrary that B 0 , P 0 , are not Pareto-optimal. Then, by definition of Pareto-optimality , there exists a feasible B , P , with f 1 ( B , P ) ≤ f 1 ( B 0 , P 0 ) , (72) f 2 ( B , P ) ≤ f 2 ( B 0 , P 0 ) , (73) with strict inequality in at least one of the abov e equations. Clearly , if f 2 ( B , P ) < f 2 ( B 0 , P 0 ) , then k P k < ε 0 and B , P , are feasible for P 1 ( ε 0 ) . By Corollary 1, we ha ve f 1 ( B , P ) > f 1 ( B 0 , P 0 ) . Hence, f 2 ( B , P ) < f 2 ( B 0 , P 0 ) is not possible. On the other hand, if f 1 ( B , P ) < f 1 ( B 0 , P 0 ) then we contradict the fact that B 0 , P 0 , are optimal for P 1 ( ε 0 ) , since by (73), B , P , is also feasible for P 1 ( ε 0 ) . Thus, in either way , we have a contradiction and B 0 , P 0 are Pareto-optimal. November 1, 2018 DRAFT 20 B. Pr operties of the P ar eto F r ont In this section, we formally introduce the Pareto front and explore some of its properties in the context of the Learning Pr oblem . The Pareto front and their properties are essential for the minimization of the utility function, u ( B , P ) ov er F ≤ ε (50), as introduced in Section V -D. Let E denote the closure of E . The Pareto front is defined as follows. Definition 4 [Pareto front] Consider ε ∈ E . Let B ε , P ε , be a solution of P 1 ( ε ) then 5 ε = f 2 ( B ε , P ε ) . Denote by δ = f 1 ( B ε , P ε ) . The collection of all such ( ε, δ ) is defined as the Pareto front. For a given ε ∈ E , define δ ( ε ) to be the minimum of the objectiv e function, f 1 , in P 1 ( ε ) . By Theorem 4, ( ε, δ ( ε )) is a point on the Pareto front. W e now view the Pareto front as a function, δ : E 7− → R + , which maps ev ery ε ∈ E to the corresponding δ ( ε ) . In the following de velopment, we use the Pareto front, as defined in Definition 4, and the function, δ , interchangeably . The following lemmas establish properties of the Pareto front. Lemma 4 The Pareto front is strictly decreasing. Pr oof: The proof follows from Corollary 1. Lemma 5 The Pareto front is con vex, continuous, and, its left and right deriv atives 6 exist at each point on the Pareto front. Also, when ε exact = + ∞ , we have δ (1) = lim ε → 1 δ ( ε ) = 0 . (74) Pr oof: Let ε = f 2 ( · ) be the horizontal axis of the Pareto front, and let δ ( ε ) = f 1 ( · ) be the vertical axis. By definition of the Pareto front, for each pair ( ε, δ ( ε )) on the Pareto front, there exists matrices B ε , P ε such that k P ε k = ε, and k B ε + P ε W − W k = δ ( ε ) . (75) Let ( ε 1 , δ ( ε 1 )) and ε 2 , δ ( ε 2 ) be two points on the Pareto front, such that ε 1 < ε 2 . Then, there exists B 1 , P 1 , and B 2 , P 2 , such that k P 1 k = ε 1 , and k B 1 + P 1 W − W k = δ ( ε 1 ) , (76) k P 2 k = ε 2 , and k B 2 + P 2 W − W k = δ ( ε 2 ) . (77) 5 This follows from Lemma 3. Also, note that since B ε , P ε , is a solution of P 1 ( ε ) , B ε , P ε , is Pareto optimal from Theorem 4. 6 At ε = 0 , only the right derivati ve is defined and at ε = sup E , only the left deri vati ve is defined. November 1, 2018 DRAFT 21 For some 0 ≤ µ ≤ 1 , define B 3 = µ B 1 + (1 − µ ) B 2 , (78) P 3 = µ P 1 + (1 − µ ) P 2 . (79) Clearly , [ B 3 | P 3 ] ∈ F ≤ 1 as the sparsity constraint is not violated and k P 3 k ≤ µ k P 1 k + (1 − µ ) k P 2 k < 1 , (80) since k P 1 k < 1 and k P 2 k < 1 . Let ε 3 = k P 3 k , (81) and let z ( ε 3 ) = k B 3 + P 3 W − W k . (82) W e hav e z ( ε 3 ) = k µ B 1 + (1 − µ ) B 2 + ( µ P 1 + (1 − µ ) P 2 ) W − W k , = k µ B 1 + µ P 1 W − µ W + (1 − µ ) B 2 + (1 − µ ) P 2 W − (1 − µ ) W k , ≤ µ k B 1 + P 1 W − W k + (1 − µ ) k B 2 + P 2 W − W k , = µδ ( ε 1 ) + (1 − µ ) δ ( ε 2 ) . (83) Since ( ε 3 , z ( ε 3 )) may not be Pareto-optimal, there exists a Pareto optimal point, ( ε 3 , δ ( ε 3 )) , at ε 3 (from Lemma 3) and we hav e δ ( ε 3 ) ≤ z ( ε 3 ) , ≤ µδ ( ε 1 ) + (1 − µ ) δ ( ε 2 ) . (84) From (80), we have ε 3 ≤ µε 1 + (1 − µ ) ε 2 , (85) and since the Pareto front is strictly decreasing (from Lemma 4), we hav e δ ( µε 1 + (1 − µ ) ε 2 ) ≤ δ ( ε 3 ) . (86) November 1, 2018 DRAFT 22 From (86) and (84), we hav e δ ( µε 1 + (1 − µ ) ε 2 ) ≤ µδ ( ε 1 ) + (1 − µ ) δ ( ε 2 ) , (87) which establishes con vexity of the Pareto front. Since, the Pareto front is con ve x, it is continuous, and it has left and right deriv ativ es [24]. Clearly , δ (1) = lim ε → 1 δ ( ε ) by continuity of the Pareto front. By choosing P = I and B = 0 , we hav e δ (1) = 0 . Note that (1 , 0) lies on the Pareto front when ε exact = + ∞ . Indeed, for any B , P satisfying the sparsity constraints, we simultaneously cannot have k P k ≤ 1 , (88) or k B + PW − W k ≤ 0 , (89) with strict inequality in at least one of the above equations. Thus, the pair B = 0 , P = I is Pareto-optimal leading to δ (1) = 0 . C. Pr oof of Theor em 3 W ith the Pareto-optimal solutions of MOP established in Section VI-A and the properties of the Pareto front in Section VI-B, we no w prov e Theorem 3. Pr oof: W e prove the theorem by contradiction. Let k P ε k = ε 0 ≤ ε , and δ 0 = k B ε + P ε W − W k . Assume, on the contrary , that B ε , P ε is not Pareto-optimal. From Lemma 3, there e xists a Pareto-optimal solution B ∗ , P ∗ , at ε 0 , such that k P ∗ k = ε 0 , and δ ( ε 0 ) = k B ∗ + P ∗ W − W k , (90) with δ ( ε 0 ) < δ 0 , since B ε , P ε , is not Pareto-optimal. Since, k P ε k = ε 0 ≤ ε , the Pareto-optimal solution, B ∗ , P ∗ , is feasible for the Learning Pr oblem . In this case, the utility function for the Pareto- optimal solution, B ∗ , P ∗ , is u ( B ∗ , P ∗ ) = 1 1 − k P ∗ k k B ∗ + P ∗ W − W k , (91) = ( ε 0 ) 1 − ε 0 , (92) < δ 0 1 − ε 0 , (93) = u ( B ε , P ε ) . (94) Hence, B ε , P ε is not an optimal solution of the Learning Pr oblem , which is a contradiction. Hence, B ε , P ε November 1, 2018 DRAFT 23 is Pareto-optimal. The abov e theorem suggests that it suffices to find the optimal solutions of the Learning Pr oblem from the set of Pareto-optimal solutions, i.e., the P areto front. The next section addresses the minimization of the utility function, u ( B , P ) , and formulates the performance-con ver gence rate trade-offs. V I I . M I N I M I Z A T I O N O F T H E U T I L I T Y F U N C T I O N In this section, we de velop the solution of the Learning Pr oblem from the Pareto front. The solution of the Learning Pr oblem (50) lies on the Pareto front as already established in Theorem 3. Hence, it suf fices to choose a Pareto-optimal solution from the Pareto front that minimizes (50) under the gi ven constraints. In the following, we study properties of the utility function. A. Pr operties of the utility function W ith the help of Theorem 3, we no w restrict the utility function to the Pareto-optimal solutions 7 . By Lemma 3, for ev ery ε ∈ E , there exists a Pareto-optimal solution, B ε , P ε , with k P ε k = ε, and k B ε + P ε W − W k = δ ( ε ) . (95) Also, we note that, for any Pareto-optimal solution, B , P , the corresponding utility function, u ( B , P ) = k B + PW − W k 1 − k P k = δ ( k P k ) 1 − k P k . (96) This permits us to redefine the utility function as, u ∗ : E 7− → R + , such that, for any Pareto-optimal solution, B , P , u ( B , P ) = u ∗ ( k P k ) (97) W e establish properties of u ∗ , which enable determining the solutions of the Learning Pr oblem . Lemma 6 The function u ∗ ( ε ) , for ε ∈ E , is non-increasing, i.e., for ε 1 , ε 2 ∈ E with ε 1 < ε 2 , we have u ∗ ( ε 2 ) ≤ u ∗ ( ε 1 ) . (98) Hence, min [ B | P ] ∈F ≤ ε u ( B , P ) = u ∗ ( ε ) . (99) 7 Note that when ε exact = + ∞ , the solution B = 0 , P = I is Pareto-optimal, but the utility function is undefined here, although the MOP is well-defined. Hence, for the utility function, we consider only the Pareto-optimal solutions with k P k in E . November 1, 2018 DRAFT 24 Pr oof: Consider ε 1 , ε 2 ∈ E such that ε 1 < ε 2 , then ε 2 is a con ve x combination of ε 1 and 1 , i.e., there exists a 0 < µ < 1 such that ε 2 = µε 1 + (1 − µ ) . (100) From Lemma 3, there e xist δ ( ε 1 ) and δ ( ε 2 ) on the Pareto front corresponding to ε 1 and ε 2 , respecti vely . Since the Pareto front is conv ex (from Lemma 5), we hav e δ ( ε 2 ) ≤ µδ ( ε 1 ) + (1 − µ ) δ (1) . (101) Recall that δ (1) = 0 ; we have u ∗ ( ε 2 ) = δ ( ε 2 ) 1 − ε 2 , = δ ( ε 2 ) 1 − µε 1 − (1 − µ ) , = δ ( ε 2 ) µ (1 − ε 1 ) , ≤ µδ ( ε 1 ) µ (1 − ε 1 ) , (102) and (98) follows. W e no w ha ve min [ B | P ] ∈F ε u ( B , P ) = min [ B | P ] ∈F ε and ( B , P ) is Pareto-optimal u ( B , P ) , = min k P k≤ ε and ( B , P ) is Pareto-optimal u ( B , P ) , = min 0 ≤ ε 0 ≤ ε u ∗ ( ε 0 ) , = u ∗ ( ε ) . (103) The first step follo ws from Theorem 3. The second step is just a restatement since the sparsity constraints are included in the MOP . The third step follo ws from the definition of u ∗ and finally , we use the non- increasing property of u ∗ to get the last equation. W e no w study the cost of the utility function. From Lemma 6, we note that this cost is non-increasing as ε increases. When ε exact < 1 , this cost is 0 . When ε exact = + ∞ , we may be able to decrease the cost as ε → 1 . W e no w define the limiting cost. November 1, 2018 DRAFT 25 Definition 5 [Infimum cost] The infimum cost, c inf , of the utility function is defined as c inf , lim ε → 1 u ∗ ( ε ) , if ε exact = + ∞ , 0 , otherwise . (104) Clearly , the cost does not increase as ε → 1 from Lemma 6. If ε exact = + ∞ , it is not possible for the utility function, u ∗ ( ε ) , to attain c inf , since u ∗ ( ε ) is undefined at k P k = 1 . W e note that when ε exact = + ∞ , the utility function can have a v alue as close as desired to c inf , but it cannot attain c inf . The following lemma establishes the cost of the utility function, u ∗ ( ε ) , as ε → 1 . Lemma 7 If ε exact = + ∞ , then the infimum cost, c inf , is the negati ve of the left deri v ativ e, D − ( δ ( ε )) , of the Pareto front e valuated at ε = 1 . Pr oof: Recall that δ (1) = 0 . Then c inf is gi ven by c inf = lim ε → 1 u ∗ ( ε ) , = lim ε → 1 δ ( ε ) 1 − ε , = lim ε → 1 δ ( ε ) − δ (1) 1 − ε , = − D − ( δ ( ε )) | ε =1 . (105) B. Graphical Representation of the Analytical Results In this section, we graphically vie w the analytical results dev eloped earlier . T o this aim, we establish a graphical procedure using the follo wing lemma. Lemma 8 Let ( ε, δ ( ε )) be a point on the Pareto front and g ( ε ) a straight line that passes through ( ε, δ ( ε )) and (1 , 0) . The cost associated to the Pareto-optimal solution(s) corresponding to ( ε, δ ( ε )) is both the (negati ve) slope and the intercept (on the vertical axis) of g ( ε ) . Pr oof: W e define the straight line, g ( ε ) , as g ( ε ) = c 1 ε + c 2 , (106) November 1, 2018 DRAFT 26 0 0.2 0.4 0.6 0.8 1 0 0.5 1 1.5 2 2.5 3 f 2 = k P k f 1 = k B + PW − W k ( ² ∗ , δ ∗ ) ² ∗ δ ∗ c ∗ (a) 0 0.2 0.4 0.6 0.8 1 0 0.5 1 1.5 2 2.5 3 f 2 = k P k f 1 = k B + PW − W k c o ² o δ o ( ² o , δ o ) (b) 0 0.2 0.4 0.6 0.8 1 0 0.5 1 1.5 2 2.5 3 f 2 = k P k f 1 = k B + PW − W k c a ( ² a , δ a ) ² a δ a (c) Fig. 1. (a) Graphical illustration of Lemma 8. (b) Illustration of case (i) in performance-speed tradeoff. (c) Illustration of case (ii) in performance-speed tradeof f. where c 1 is its slope and c 2 is its intercept on the vertical axis. Since g ( ε ) passes through ( ε, δ ( ε )) and (1 , 0) , its slope, c 1 , is given by c 1 = δ ( ε ) − 0 ε − 1 = − u ∗ ( ε ) . (107) Since g ( ε ) passes through (1 , 0) , at ε = 1 we ha ve c 2 = [ g ( ε ) − c 1 ε ] ε =1 = g (1) − c 1 = u ∗ ( ε ) . (108) Figure 1(a) illustrates Lemma 8, graphically . Let ( ε ∗ , δ ∗ ) be a point on the P areto front. The cost, c ∗ , of the utility function, u ∗ ( ε ∗ ) , is the intercept of the straight line passing through ( ε ∗ , δ ∗ ) and (1 , 0) . C. P erformance-Speed T radeof f: ε exact = + ∞ In this case, no matter how large we choose k P k , the HDC does not con verge to the exact solution. By Lemma 1, the con vergence rate of the HDC depends on ρ ( P ) and thus upper bounding k P k leads to a guarantee on the con vergence rate. Also, from Lemma 6, the utility function is non-increasing as we increase k P k . W e formulate the Learning Pr oblem as a performance-speed tradeof f. From the Pareto front and the constant cost straight lines, we can address the follo wing two questions. (i) Giv en a pre-specified performance, c o (the cost of the utility function), choose a Pareto-optimal solution that results into the fastest conv ergence of the HDC to achiev e c o . W e carry out this procedure by drawing a straight line that passes the points (0 , c o ) and (1 , 0) in the Pareto plane. Then, we pick the Pareto-optimal solution from the Pareto front that lies on this straight line and November 1, 2018 DRAFT 27 also has the smallest value of k P k . See Figure 1(b). (ii) Giv en a pre-specified con ver gence speed, ε a , of the HDC algorithm, choose a Pareto-optimal solution that results into the smallest cost of the utility function, u ( B , P ) . W e carry out this procedure by choosing the Pareto-optimal solution, ( ε a , δ a ) , from the Pareto front. The cost of the utility function for this solution is then the intercept (on the vertical axis) of the constant cost line that passes through both ( ε a , δ a ) and (1 , 0) . See Figure 1(c). W e now characterize the steady state error . Let B o , P o , be the operating point of the HDC obtained from either of the two tradeoff scenarios described abov e. Then, the steady state error in the limiting state, X ∞ , of the network when the HDC with B o , P o is implemented, is giv en by e ss = k ( I − P o ) − 1 B o − W k , (109) which is clearly bounded abov e by (49). D. Exact Solution: ε exact < 1 In this case, the optimal operating point of the HDC algorithm is the Pareto-optimal solution corre- sponding to ( ε exact , 0) on the Pareto front. A typical Pareto front in this case is shown in Figure 2, labeled as Case I. A special case is when the sparsity pattern of B is the same as the sparsity of the weight matrix, W . W e can then choose B = W , P = 0 , (110) as the solution to the Learning Pr oblem and the Pareto front is a single point (0 , 0) shown as Case II in Figure 2. If it is desirable to operate the HDC algorithm at a faster speed than corresponding to ε exact , we can consider the performance-speed tradeoff in Section VII-C to get the appropriate operating point. V I I I . C O N C L U S I O N S In this paper , we present a framework for the design and analysis of linear distributed algorithms. W e present the analysis problem in the context of Higher Dimensional Consensus (HDC) algorithms that contains the av erage-consensus as a special case. W e establish the conv ergence conditions, the con vergent state and the con ver gence rate of the HDC. W e also define the consensus subspace and deri ve its dimensions and relate them to the number of anchors in the network. W e present the in verse problem of deriving the parameters of the HDC to con verge to a giv en state as learning in large-scale November 1, 2018 DRAFT 28 0 0.2 0.4 0.6 0.8 1 0 2 4 6 8 10 f 2 f 1 ² exa ct Case I Case II Fig. 2. T ypical Pareto front. networks. W e sho w that the solution of this learning problem is a Pareto-optimal solution of a multi- objecti ve optimization problem (MOP). W e explicitly prove the Pareto-optimality of the MOP solutions. W e then prov e that the Pareto front (collections of the Pareto-optimal solutions) is con ve x and strictly decreasing. Using these properties of the MOP solutions, we solve the learning problem and also formulate performance-speed tradeof fs. A P P E N D I X I I M P O RT A N T R E S U LT S Lemma 9 If a matrix P is such that ρ ( P ) < 1 , then lim t →∞ P t +1 = 0 , (111) lim t →∞ t X k =0 P k = ( I − P ) − 1 . (112) Pr oof: The proof is straightforward. Lemma 10 Let r Q be the rank of the M × M matrix ( I − P ) − 1 , and r B the rank of the M × n matrix B , then rank ( I − P ) − 1 B ≤ min( r Q , r B ) , (113) rank ( I − P ) − 1 B ≥ r Q + r B − M . (114) Pr oof: The proof is av ailable on pages 95 − 96 in [25]. November 1, 2018 DRAFT 29 A P P E N D I X I I N E C E S S A RY C O N D I T I O N Below , we provide a necessary condition required for the existence of an exact solution of the Learning Pr oblem . Lemma 11 Let ρ ( P ) < 1 , K < M , and let r W denote the rank of a matrix W . A necessary condition for ( I − P ) − 1 B = W to hold is r B = r W . (115) Pr oof: Note that the matrix I − P is in vertible since ρ ( P ) < 1 . Let Q = ( I − P ) − 1 , then r Q = M . From Lemma 10 in Appendix I and since by hypothesis K < M , rank ( QB ) ≤ r B , (116) rank ( QB ) ≥ M + r B − M = r B . (117) The condition (115) now follo ws, since from (34), we also hav e rank ( QB ) = r W . (118) R E F E R E N C E S [1] D. Bertsekas and J. Tsitsiklis, P arallel and Distributed Computations , Prentice Hall, Englewood Cliffs, NJ, 1989. [2] A. Jadbabaie, J. Lin, and A. S. Morse, “Coordination of groups of mobile autonomous agents using nearest neighbor rules, ” vol. A C-48, no. 6, pp. 988–1001, June 2003. [3] L. Xiao and S. Boyd, “Fast linear iterations for distributed averaging, ” Systems and Controls Letters , vol. 53, no. 1, pp. 65–78, Apr . 2004. [4] R. Olfati-Saber , J. A. Fax, and R. M. Murray , “Consensus and cooperation in networked multi-agent systems, ” Pr oceedings of the IEEE , v ol. 95, no. 1, pp. 215–233, Jan. 2007. [5] A. Nedic, A. Olshevsky , A. Ozdaglar, and J. N. Tsitsiklis, “On distributed av eraging algorithms and quantization ef fects, ” T echnical Report 2778, LIDS-MIT , Nov . 2007. [6] P . Frasca, R. Carli, F . Fagnani, and S. Zampieri, “ A verage consensus on networks with quantized communication, ” Submitted to the Int. J . Robust and Nonlinear Contr ol , 2008. [7] T . C. A ysal, M. Coates, and M. Rabbat, “Distributed av erage consensus using probabilistic quantization, ” in IEEE/SP 14th W orkshop on Statistical Signal Pr ocessing W orkshop , Maddison, W isconsin, USA, August 2007, pp. 640–644. [8] U. A. Khan, S. Kar , and J. M. F . Moura, “Distributed sensor localization in random en vironments using minimal number of anchor nodes, ” IEEE T ransactions on Signal Pr ocessing , vol. 57, no. 5, May . 2009, to appear . Also, in arXiv: http://arxiv .org/abs/0802.3563. [9] U. A. Khan and J. M. F . Moura, “Distributed Iterate-Collapse in version (DICI) algorithm for L − banded matrices, ” in IEEE 33rd International Confer ence on Acoustics, Speech, and Signal Processing , Las V egas, NV , Mar . 30-Apr . 04 2008. November 1, 2018 DRAFT 30 [10] U. A. Khan, Soummya Kar , and J. M. F . Moura, “Higher dimensional consensus algorithms in sensor networks, ” in IEEE 34th International Confer ence on Acoustics, Speech, and Signal Pr ocessing , T aipei, T aiwan, Apr . 2009. [11] U. Khan, S. Kar , and J. M. F . Moura, “Distributed algorithms in sensor networks, ” in Handbook on Sensor and Array Pr ocessing , Simon Haykin and K. J. Ray Liu, Eds. W iley-Interscience, New York, NY , 2009, to appear , 33 pages. [12] V . Chankong and Y . Y . Haimes, Multiobective decision making: Theory and methodology , North-Holland series in system sciences and engineering, 1983. [13] A. Rahmani and M. Mesbahi, “Pulling the strings on agreement: Anchoring, controllability , and graph automorphism, ” in American Contr ol Conference , New Y ork City , NY , July 11-13 2007, pp. 2738–2743. [14] S. Kar and J. M. F . Moura, “Sensor networks with random links: T opology design for distributed consensus, ” IEEE T ransactions on Signal Processing , vol. 56, no. 7, pp. 3315–3326, July 2008. [15] A. T . Salehi and A. Jadbabaie, “On consensus in random networks, ” in The Allerton Confer ence on Communication, Contr ol, and Computing , Allerton House, IL, September 2006. [16] S. Kar , S. A. Aldosari, and J. M. F . Moura, “T opology for distributed inference on graphs, ” IEEE T ransactions on Signal Pr ocessing , vol. 56, no. 6, pp. 2609–2613, June 2008. [17] S. Kar and Jos ´ e M. F . Moura, “Distributed consensus algorithms in sensor networks: Link failures and channel noise, ” IEEE T ransactions on Signal Processing , 2008, Accepted for publication, 30 pages. [18] A. Kashyap, T . Basar , and R. Srikant, “Quantized consensus, ” Automatica , vol. 43, pp. 1192–1203, July 2007. [19] M. Huang and J. H. Manton, “Stochastic approximation for consensus seeking: mean square and almost sure conv ergence, ” in Pr oceedings of the 46th IEEE Confer ence on Decision and Control , New Orleans, LA, USA, Dec. 12-14 2007. [20] U. A. Khan and J. M. F . Moura, “Distributing the Kalman filter for large-scale systems, ” IEEE T ransactions on Signal Pr ocessing , vol. 56, Part 1, no. 10, pp. 4919–4935, October 2008, DOI: 10.1109/TSP .2008.927480. [21] H. J. Kushner and G. Y in, Stochastic appr oximations and recur sive algorithms and applicaitons , Springer, 1997. [22] M. B. Nevelson and R. Z. Hasminskii, Stochastic Approximation and Recursive Estimation , American Mathematical Society , Providence, Rhode Island, 1973. [23] S. Boyd and L. V andenberghe, Conve x Optimization , Cambridge University Press, New Y ork, NY , USA, 2004. [24] R. T . Rockafellar , Conve x analysis , Princeton University Press, Princeton, NJ, 1970. Reprint: 1997. [25] G. E. Shilov and R. A. Silverman, Linear Algebra , Courier Dover Publications, 1977. November 1, 2018 DRAFT

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment