Non-Asymptotic Analysis of Tangent Space Perturbation

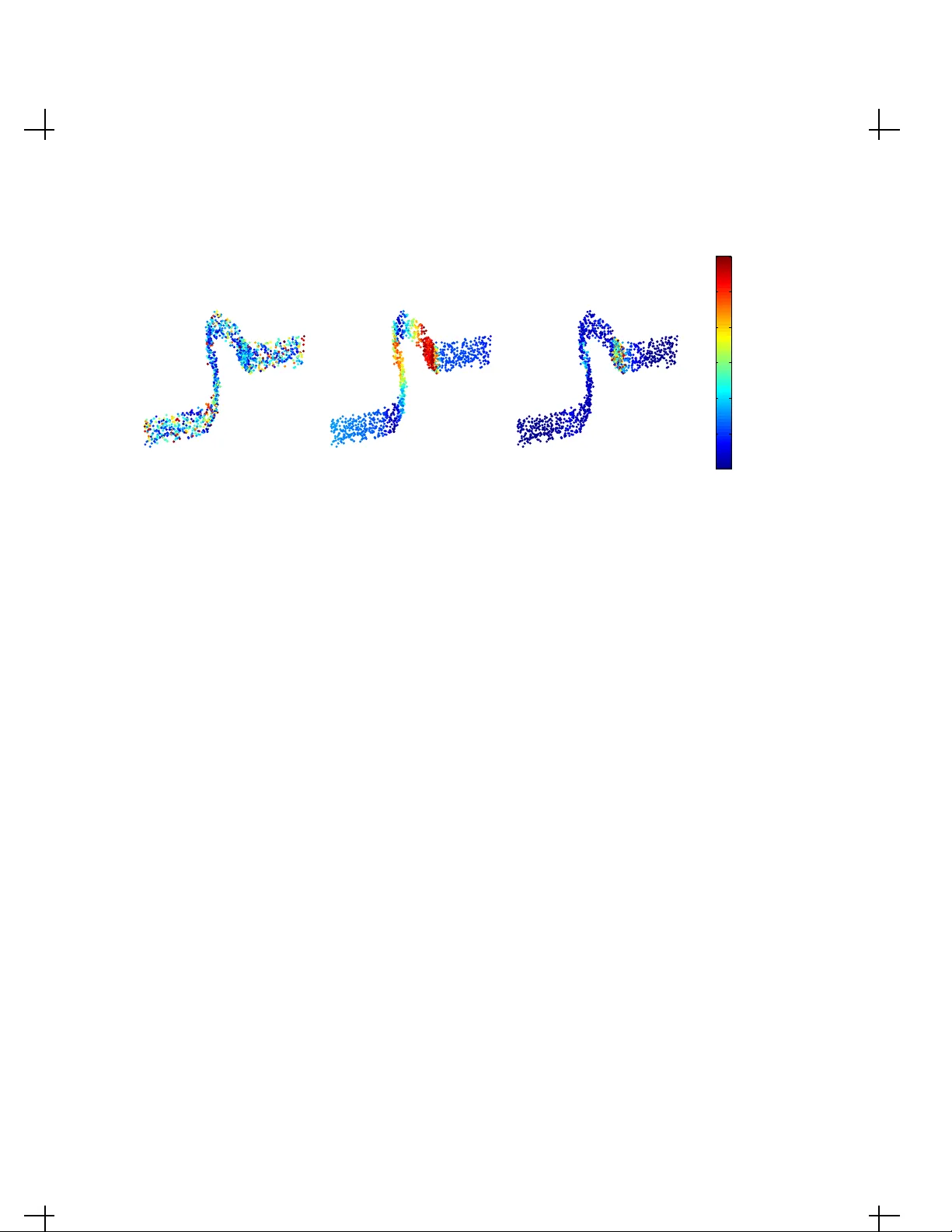

Constructing an efficient parameterization of a large, noisy data set of points lying close to a smooth manifold in high dimension remains a fundamental problem. One approach consists in recovering a local parameterization using the local tangent pla…
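The visible abstract is cut off before it names an estimator for the local tangent plane. A common choice is principal component analysis of a small neighborhood, and the sketch below assumes that approach: it takes the `k` nearest noisy samples to a reference point, centers them, and keeps the top `d` right singular vectors as an estimated tangent basis. The error is measured by the sine of the largest principal angle between the estimated and true planes. The helper names `estimate_tangent_plane` and `sin_max_principal_angle`, the sphere test case, and the values of `k`, `d`, and `sigma` are hypothetical illustrations. They are not taken from the paper.

```python
import numpy as np

def estimate_tangent_plane(points, center, k, d):
    """Hypothetical helper: estimate a d-dimensional tangent basis at `center`
    by local PCA (SVD) on the k nearest samples."""
    # Euclidean distance from the reference point to every sample
    dists = np.linalg.norm(points - center, axis=1)
    # Keep the k closest samples as the local neighborhood
    local = points[np.argsort(dists)[:k]]
    # Center the neighborhood at its mean before PCA
    local_centered = local - local.mean(axis=0)
    # Rows of vt are the principal directions, ordered by singular value
    _, _, vt = np.linalg.svd(local_centered, full_matrices=False)
    # The top-d directions (as columns) span the estimated tangent plane
    return vt[:d].T

def sin_max_principal_angle(U, V):
    """Hypothetical helper: sine of the largest principal angle between the
    column spans of orthonormal U and V (0 means identical subspaces)."""
    # Singular values of U^T V are the cosines of the principal angles
    s = np.linalg.svd(U.T @ V, compute_uv=False)
    cos_min = np.clip(s.min(), 0.0, 1.0)
    return np.sqrt(1.0 - cos_min ** 2)

# Assumed toy setting: noisy samples from the unit sphere S^2 in R^3
rng = np.random.default_rng(0)
n, sigma = 5000, 0.01
x = rng.normal(size=(n, 3))
x /= np.linalg.norm(x, axis=1, keepdims=True)  # project onto the sphere
x += sigma * rng.normal(size=x.shape)          # add isotropic Gaussian noise

north = np.array([0.0, 0.0, 1.0])
T_hat = estimate_tangent_plane(x, north, k=200, d=2)
# True tangent plane at the north pole is spanned by e1 and e2
T_true = np.array([[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]])
err = sin_max_principal_angle(T_hat, T_true)
print(f"sin(largest principal angle) = {err:.3f}")
```

Under these assumptions, a larger `k` averages out more noise but also pulls in more curvature of the sphere, so the estimation error typically does not decrease monotonically in `k`. This interplay of noise, curvature, and neighborhood size is what a perturbation analysis of the tangent space has to account for.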

Authors: Daniel N. Kaslovsky, Francois G. Meyer