Shape from Texture using Locally Scaled Point Processes

Shape from texture refers to the extraction of 3D information from 2D images with irregular texture. This paper introduces a statistical framework to learn shape from texture where convex texture elements in a 2D image are represented through a point…

Authors: Eva-Maria Didden, Thordis Linda Thorarinsdottir, Alex Lenkoski

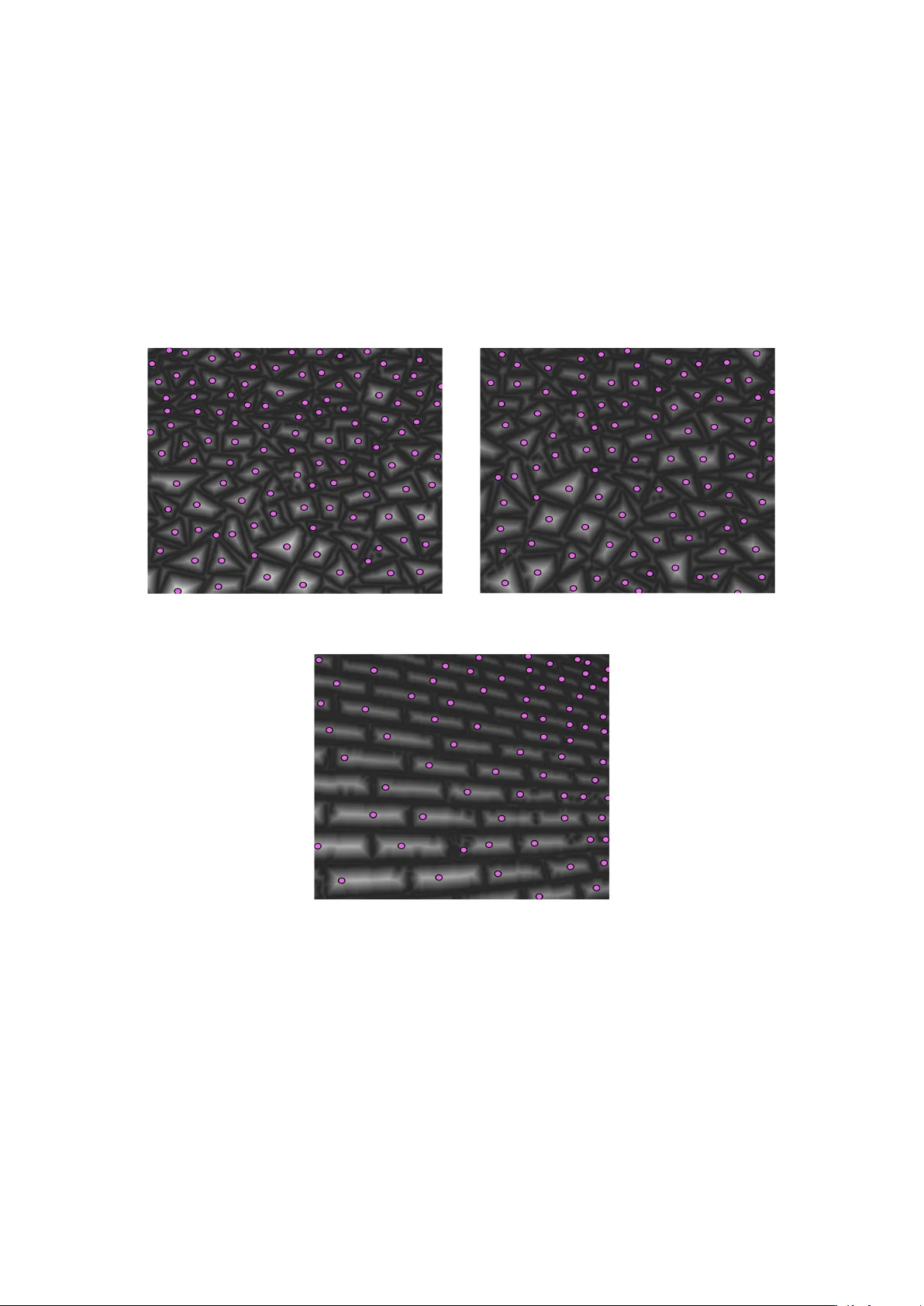

Eva-Maria Didden¹, Thordis L. Thorarinsdottir and Alex Lenkoski², and Christoph Schnörr³

¹ Institute of Applied Mathematics, Heidelberg University, Germany
² Norwegian Computing Centre, Oslo, Norway
³ Image & Pattern Analysis Group, Heidelberg University, Germany

Abstract

Shape from texture refers to the extraction of 3D information from 2D images with irregular texture. This paper introduces a statistical framework to learn shape from texture where convex texture elements in a 2D image are represented through a point process. In a first step, the 2D image is preprocessed to generate a probability map corresponding to an estimate of the unnormalized intensity of the latent point process underlying the texture elements. The latent point process is subsequently inferred from the probability map in a non-parametric, model-free manner. Finally, the 3D information is extracted from the point pattern by applying a locally scaled point process model where the local scaling function represents the deformation caused by the projection of a 3D surface onto a 2D image.

Keywords: 3D scenes, convex texture elements, locally scaled point processes, near regular texture, perspective scaling, shape analysis

1 Introduction

Natural images contain a variety of perceptual information enabling the viewer to infer the three-dimensional shapes of objects and surfaces (Tuceryan and Jain, 1998). Stevens (1980) observed that surface geometry mainly has three effects on the appearance of texture in images: foreshortening and scaling of texture elements, and a change in their density. Gibson (1950) proposed the slant, the angle between a normal to the surface and a normal to the image plane, as a measure for surface orientation. Stevens amended this by introducing the tilt, the angle between the surface normal's projection onto the image plane and a fixed coordinate axis in the image plane.
In this paper, we will directly infer the surface normal from a single image taken under standard perspective projection.

Statistical procedures for estimating surface orientation often make strong assumptions on the regularity of texture. Witkin (1981) assumes observed edge directions provide the necessary information, while Blostein and Ahuja (1989) consider circular texture elements with uniform intensity. Blake and Marinos (1990) consider the bias of the orientation of line elements isotropically oriented on a 3D plane, induced by the plane's orientation under orthographic projection, along with a computational approach related to Kanatani's texture moments (Kanatani, 1989). Malik and Rosenholtz (1997) locally estimate "texture distortion" in terms of an affine transformation of adjacent image patches. The strong homogeneity assumption underlying this approach has been relaxed by Clerc and Mallat (2002), to a condition that is difficult to verify in practice. Forsyth (2006) eliminates assumptions on the non-local structure of textures (like homogeneity) altogether and aims to estimate shape from the deformation of individual texture elements. Loh and Hartley (2005) criticize prior work due to the restrictive assumptions related to homogeneity, isotropy, stationarity or orthographic projection, and claim to devise a shape-from-texture approach in the most general form. Their work, however, also relies on estimating the deformation of single texture elements, similar to Forsyth (2006).

We propose a general framework for inferring shape from near regular textures, as defined by Liu et al. (2009), by applying the locally scaled point process model of Hahn et al. (2003). This framework enables the simultaneous representation of local variability and global regularity in the spatial arrangement of texture elements which are thought of as a marked point process.
We preprocess the image to obtain a probability map representing an unnormalized intensity estimate for the underlying point process, subsequently apply a non-parametric framework to infer the point locations and, based on the resulting point pattern, learn the parameters of a locally scaled point process model to obtain a compact description of 3D image attributes.

Point process models have previously been applied in image analysis applications where the goal is the detection of texture elements, see e.g. Lafarge et al. (2010) and references therein. These approaches usually apply a marked point process framework, with marks describing the texture elements. Such set-ups rely on a good geometric description of individual texture elements, limiting the class of feasible textures. As our goal is not the detection of individual texture elements but the extraction of 3D information, we omit the modeling of each texture element and infer the latent point locations in a model-free manner. Thus, our sole assumption regarding texture element shape is approximate convexity, which offers considerable flexibility.

The remainder of the paper is organized as follows. The next section contains preliminaries on image geometry, followed by the method section describing the image preprocessing, the point pattern detection and the point process inference framework. We then present results for both simulated and real images with near regular textures. Finally, the paper closes with a short discussion section.

2 Preliminaries

Let

$$P = \{ X \in \mathbb{R}^3 : \langle \delta, X \rangle + h = 0 \}, \tag{1}$$

with $\|\delta\| = 1$ and $\langle \delta, X \rangle < 0$, denote a 3D plane with unknown unit normal $\delta$ and distance $h$ from the origin. We assume $\delta$ to be oriented towards the camera, forming obtuse angles $\langle \delta, X \rangle < 0$ with projection rays $X$. The world coordinates $X = (X_1, X_2, X_3)^\top$ and image coordinates $x = (x_1, x_2)^\top$ are aligned as shown in Fig. 1.
Here, we denote the image domain by $D$ and assume the image to be scaled to have fixed area, $|D| = a$. We consider the basic pinhole camera (Hartley and Zisserman, 2000) and among the internal parameters, we only look at the focal length $f > 0$ which depends on the field of view, see Fig. 1. As usual, we identify image points and rays of the projective plane through

$$X = (x_1, x_2, -f)^\top. \tag{2}$$

An image point $X$ given by (2) meets $P$ in $\lambda X$ with

$$\lambda = -\frac{h}{\langle \delta, X \rangle}, \qquad \lambda > 0. \tag{3}$$

Figure 1: The camera with focal length $f$ is oriented towards the negative $X_3$-halfspace. The scaled visible image domain is $D = [-a/2, a/2] \times [-1/2, 1/2]$. Given the field of view in terms of an angle $\phi_c$, we have $f = a / (2 \tan(\phi_c/2))$.

It follows that a point $X_P$ in $P$ is related to the image point $X$ through

$$X_P = X_P(x_1, x_2) = -\frac{h}{\langle \delta, X \rangle} X. \tag{4}$$

A homogeneous texture covering $P$ induces an inhomogeneous texture on the two-dimensional image plane with density given by the surface element

$$dX_P = \| \partial_{x_1} X_P \times \partial_{x_2} X_P \| \, \lambda^2(dx) = -\frac{h^2 f}{\langle \delta, X \rangle^3} \, \lambda^2(dx), \tag{5}$$

where $\lambda^2$ denotes the two-dimensional Lebesgue measure. Taking, for instance, the fronto-parallel plane $\delta = (0, 0, 1)^\top$ results by (2) merely in the constant scale factor $(h/f)^2$, i.e. the homogeneous density $(h/f)^2 \lambda^2(dx)$. However, for arbitrary orientation $\delta$, this factor depends on $X$, as illustrated in Fig. 2. Eqn. (5) then quantifies perspective foreshortening and inhomogeneity of the texture, respectively, as observed in the image, and mathematically represents the visually apparent texture gradient.

Figure 2: Mappings of regular homogeneous point patterns in $\mathbb{R}^3$ onto a 2D-plane for (a) $\delta = (\tfrac{1}{\sqrt{2}}, 0, \tfrac{1}{\sqrt{2}})^\top$ and (b) $\delta = (\tfrac{1}{2\sqrt{2}}, \tfrac{1}{2\sqrt{2}}, \tfrac{\sqrt{3}}{2})^\top$. The simulations are based on the parameters $D = [-1/2, 1/2] \times [-1/2, 1/2]$, $h = 20$ and $\phi_c = 27^\circ$ ($f = 0.98$).
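As a numerical sanity check of the geometry above, the following Python sketch evaluates the focal length from the field of view and compares the closed-form surface element in (5) with a finite-difference evaluation of $\| \partial_{x_1} X_P \times \partial_{x_2} X_P \|$. The function names, the test point and the finite-difference step are illustrative assumptions and not part of the paper.

```python
import numpy as np


def focal_length(a, phi_c_deg):
    """Focal length f = a / (2 tan(phi_c / 2)) for an image of width a (Fig. 1)."""
    return a / (2.0 * np.tan(np.radians(phi_c_deg) / 2.0))


def ray(x, f):
    """Projection ray X = (x1, x2, -f) of the image point x, as in (2)."""
    return np.array([x[0], x[1], -f])


def plane_point(x, delta, h, f):
    """Point X_P on the plane P hit by the ray through x, as in (4)."""
    X = ray(x, f)
    return -h / np.dot(delta, X) * X


def surface_element(x, delta, h, f):
    """Closed-form density -h^2 f / <delta, X>^3 from (5)."""
    return -h**2 * f / np.dot(delta, ray(x, f)) ** 3


def surface_element_fd(x, delta, h, f, eps=1e-6):
    """Central finite-difference version of ||dX_P/dx1 x dX_P/dx2|| (illustrative)."""
    x = np.asarray(x, dtype=float)
    e1, e2 = np.array([eps, 0.0]), np.array([0.0, eps])
    d1 = (plane_point(x + e1, delta, h, f) - plane_point(x - e1, delta, h, f)) / (2 * eps)
    d2 = (plane_point(x + e2, delta, h, f) - plane_point(x - e2, delta, h, f)) / (2 * eps)
    return np.linalg.norm(np.cross(d1, d2))


if __name__ == "__main__":
    f = focal_length(1.0, 54.0)                        # assumed a = 1, phi_c = 54 degrees
    delta = np.array([1.0, 0.0, 1.0]) / np.sqrt(2.0)   # slant 45 degrees, tilt 0 degrees
    x = (0.2, -0.1)                                    # arbitrary image point in D
    print(f, surface_element(x, delta, 20.0, f), surface_element_fd(x, delta, 20.0, f))
```

For $a = 1$ and $\phi_c = 54^\circ$ the formula in Fig. 1 gives $f \approx 0.98$, and for the fronto-parallel normal the density reduces to the constant $(h/f)^2$ at every image point.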
3 Methods

In a first step, we apply image preprocessing that generates a probability map $Y = \{ Y(x) : x \in D,\ 0 \le Y(x) \le 1 \}$ representing the spatial arrangement of texture elements in the image. To this end, two elementary techniques are locally applied: boundary detection and the corresponding distance transform. The former step entails either gradient magnitude computation using small-scale derivative-of-Gaussian filters (Canny, 1986) or, for texture elements with less regular appearance, the earth mover's distance (Pele and Werman, 2009) between local histograms. Inspecting in turn the histogram of the resulting soft-indicator function for boundaries enables one to determine a threshold and apply the distance transform.

In our framework, the texture elements are regarded as a realization of a marked point process where the underlying point pattern is latent. The value of the probability map $Y(x)$ in $x \in D$ denotes the probability that one of the latent points is located in $x$. To recover the latent point pattern based on the information in $Y$, we first search for local maxima in $Y$. That is, for some $k_1 > 0$, let $W_x = [x_1 - k_1, x_1 + k_1] \times [x_2 - k_1, x_2 + k_1]$ and set

$$\Phi = \{ x \in D : W_x \subset D,\ Y(x) = \max_{z \in W_x} Y(z) \}. \tag{6}$$

We then define a neighbourhood relation on $\Phi$ by setting

$$x_1 \sim x_2 \quad \text{if} \quad \min_{z \in [x_1, x_2]} Y(z) \ge k_2 \max\{ Y(x_1), Y(x_2) \}, \tag{7}$$

where $x_1, x_2 \in \Phi$, $[x_1, x_2]$ denotes the line from $x_1$ to $x_2$ and $k_2$ is a constant with $0 < k_2 < 1$. We may now write $\Phi$ as a union of disjoint neighbourhood components, $\Phi = \cup_{i=1,\dots,n} C_i$, where each $x \in C_i$ is a neighbour of at least one point in $C_i \setminus \{x\}$. Under the assumption that the texture elements are close to convex, two points $x_1$ and $x_2$ in $\Phi$ are neighbours if and only if they likely fall within the same texture element. Hence, we estimate the latent point process $\Psi$ as

$$\Psi = \{ x_1, \dots, x_n : Y(x_i) = \max_{z \in C_i} Y(z) \}. \tag{8}$$

Formally, a point process can be described as a random counting measure $N(\cdot)$, where $N(A)$ is the number of events in $A$ for a Borel set $A$ of the relevant state space, in our context the image domain $D$. The intensity measure of the point process is given by $\Lambda(A) = \mathbb{E} N(A)$ and the associated intensity function is

$$\alpha(x) = \lim_{|dx| \to 0} \frac{\mathbb{E} N(dx)}{|dx|}. \tag{9}$$

For a homogeneous point process, it holds that $\alpha(x) = \beta$ for some $\beta > 0$, while for an inhomogeneous point process where the inhomogeneity stems from local scaling (Hahn et al., 2003) we obtain

$$\alpha(x) = \beta \, c_\eta^{-2}(x), \tag{10}$$

for some scaling function $c_\eta : \mathbb{R}^2 \to \mathbb{R}_+$ with parameters $\eta$. The scaling function $c_\eta$ acts as a local deformation in that it locally affects distances and areas. More precisely, $\nu_c^d(A) = \int_A c_\eta(x)^{-d} \, \nu^d(dx)$, where $\nu^d$ denotes the $d$-dimensional volume measure and $\nu_c^d$ its scaled version for $d = 1, 2$. For identifiability reasons, Prokešová et al. (2006) propose normalizing $c_\eta$ to conserve the total area of the state space. That is, they define the normalizing constant of the scaling function such that

$$\lambda^2(D) = \int_D c_\eta^{-2}(x) \, \lambda^2(dx). \tag{11}$$

Figure 3: Examples of distances from the point $(0, 0)$ within the observation window $D = [-1/2, 1/2] \times [-1/2, 1/2]$, under exponential scaling assumptions due to (12), for (a) $\eta = (-1, 0)^\top$ and (b) $\eta = (-1, -1)^\top$. Darker shades of gray indicate smaller distances.

Hahn et al. (2003) and Prokešová et al. (2006) specifically consider the exponential scaling function with $c_\eta(x) \propto \exp(\eta^\top x)$. This scaling function is particularly attractive in that locally scaled distances can be calculated explicitly,

$$d_c(x_i, x_j) = d(x_i, x_j) \, \frac{c_\eta^{-1}(x_i) - c_\eta^{-1}(x_j)}{\eta^\top (x_j - x_i)}, \tag{12}$$

for any $x_i, x_j \in D$ where $d(\cdot, \cdot)$ denotes the Euclidean distance and $d_c(\cdot, \cdot)$ its scaled version. Examples of exponentially scaled distances are given in Fig. 3.
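The closed form in (12) can be checked numerically. The sketch below assumes that the scaled distance is the scaled length of the straight segment between the two points, i.e. the line integral of $c_\eta^{-1}$ along $[x_i, x_j]$, and compares that integral with (12). The proportionality constant `k` of the exponential scaling function and all function names are assumptions made for illustration.

```python
import numpy as np


def c_exp(x, eta, k=1.0):
    """Exponential scaling function c_eta(x) = k * exp(eta^T x); k is an assumed constant."""
    return k * np.exp(np.dot(eta, x))


def scaled_distance_exp(xi, xj, eta, k=1.0):
    """Closed-form locally scaled distance, as in (12)."""
    xi, xj, eta = (np.asarray(v, dtype=float) for v in (xi, xj, eta))
    d = np.linalg.norm(xj - xi)
    proj = np.dot(eta, xj - xi)
    if abs(proj) < 1e-12:
        # c_eta is constant along the segment, so the limit of (12) is d / c_eta(xi)
        return d / c_exp(xi, eta, k)
    return d * (1.0 / c_exp(xi, eta, k) - 1.0 / c_exp(xj, eta, k)) / proj


def scaled_distance_numeric(xi, xj, eta, k=1.0, n=20001):
    """Trapezoidal approximation of the line integral of 1 / c_eta along [xi, xj]."""
    xi, xj, eta = (np.asarray(v, dtype=float) for v in (xi, xj, eta))
    t = np.linspace(0.0, 1.0, n)
    pts = xi + t[:, None] * (xj - xi)          # points on the segment, shape (n, 2)
    vals = 1.0 / (k * np.exp(pts @ eta))
    integral = np.sum(0.5 * (vals[1:] + vals[:-1])) / (n - 1)
    return np.linalg.norm(xj - xi) * integral


if __name__ == "__main__":
    eta = (-1.0, 0.0)                           # parameter of Fig. 3(a)
    print(scaled_distance_exp((0.0, 0.0), (0.5, 0.25), eta),
          scaled_distance_numeric((0.0, 0.0), (0.5, 0.25), eta))
```

When $\eta^\top (x_j - x_i) = 0$ the quotient in (12) is of the form $0/0$; the sketch then uses the limiting value $d(x_i, x_j) \, c_\eta^{-1}(x_i)$.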
Here, we employ the density in (5) as a scaling function where we choose spherical coordinates

$$\delta = \delta(\eta_1, \eta_2) = (\sin \eta_1 \cos \eta_2, \ \sin \eta_1 \sin \eta_2, \ \cos \eta_1)^\top, \tag{13}$$

with $\eta_1 \in [0, u]$ and $\eta_2 \in [0, 2\pi]$. The upper limit $u$ restricting the range of the scaling parameter $\eta_1$ ensures that $\langle \delta, X \rangle < 0$ and therefore depends on the focal length $f$ as well as on the size and location of the observation window $D$. As suggested by Prokešová et al. (2006), we normalize the scaling function such that (11) holds. That is, we solve

$$|D| = a = \int_D \gamma(\delta, h, f) \, dX_P. \tag{14}$$

It follows that

$$\gamma(\delta, h, f) = \frac{1}{16 h^2 f^2 \delta_3} \, (a\delta_1 - 2f\delta_3 - \delta_2)(a\delta_1 - 2f\delta_3 + \delta_2)(a\delta_1 + 2f\delta_3 - \delta_2)(a\delta_1 + 2f\delta_3 + \delta_2).$$

A more general result for $D = [a_1, a_2] \times [b_1, b_2]$ is given in the Appendix. Under the model in (5), the intensity function in (10) becomes

$$\alpha(x) = -\beta \, \gamma\big(\delta(\eta_1, \eta_2), h, f\big) \, \frac{h^2 f}{\langle \delta(\eta_1, \eta_2), X \rangle^3}, \tag{15}$$

with $X = (x_1, x_2, -f)^\top$ as in (2). As a byproduct, the unknown plane parameter $h$ cancels. It sets the absolute scale and cannot be inferred from a single image.

Figure 4: Examples of distances from the point $(0, 0)$ within the observation window $D = [-1/2, 1/2] \times [-1/2, 1/2]$, under scaling assumptions due to (16), for (a) $\eta = (45^\circ, 0^\circ)^\top$ and (b) $\eta = (30^\circ, 45^\circ)^\top$. Darker shades of gray indicate smaller distances.

Furthermore, the scaling function is computationally tractable and, as for the exponential scaling discussed above, the scaled distance function is available in closed form,

$$d_c(x_i, x_j) = d(x_i, x_j) \, \gamma(\delta, h, f)^{1/2} \, \frac{2 h \sqrt{f}}{\langle \delta, X_i - X_j \rangle} \left( \frac{1}{\langle \delta, -X_i \rangle^{1/2}} - \frac{1}{\langle \delta, -X_j \rangle^{1/2}} \right), \tag{16}$$

provided that the basic requirement $\langle \delta, X_i \rangle < 0$ is fulfilled for all $i = 1, \dots, n$. Examples of scaled distances are given in Fig. 4. When compared with Fig.
3, we see that the perspective scaling in (15) results in similar distance scaling as the exponential scaling while it also provides a coherent description of the perspective foreshortening.

For a given image, we assume that the focal length $f$ is known. It remains to estimate the parameters $(\beta, \eta_1, \eta_2)$ of the intensity function in (15) based on the estimated point pattern $\Psi$. The desired 3D image information, the slant and the tilt of the surface, may then be characterized by the scaling parameter estimates $\hat\eta_1$ and $\hat\eta_2$. The parameter estimation is performed by maximizing the composite likelihood, see e.g. Møller (2010), that takes the form

$$L(\Psi \mid \beta, \eta_1, \eta_2) \propto \exp(-\beta |D|) \, \beta^n \prod_{i=1}^n c_\eta^{-2}(x_i). \tag{17}$$

The maximum composite likelihood estimate for $\beta$ is $\hat\beta = n / |D|$. For the remaining two parameters, the parameters of interest in our setting, we maximize the function

$$l(\Psi \mid \hat\beta, \eta_1, \eta_2) = n \left( \log \frac{n}{|D|} - 1 \right) + \sum_{i=1}^n \log\big( c_\eta^{-2}(x_i) \big). \tag{18}$$

4 Results

We first present the results of a simulation study where we analyse sets of 3D point coordinates sampled from either a perfectly regular pattern or a homogeneous Poisson process and subsequently projected onto the 2D-plane $D = [-1/2, 1/2] \times [-1/2, 1/2]$, see Fig. 2 and Fig. 5.

Figure 5: Simulated Poisson point patterns with 3D shape given by the outer normals (a) $\delta = (\tfrac{1}{\sqrt{2}}, 0, \tfrac{1}{\sqrt{2}})^\top$ and (b) $\delta = (\tfrac{1}{2\sqrt{2}}, \tfrac{1}{2\sqrt{2}}, \tfrac{\sqrt{3}}{2})^\top$. The internal parameters correspond to the settings in Fig. 2 and Fig. 4.

We estimate the scaling parameters associated with the synthetic patterns via the composite likelihood in (18). The true parameter values and the corresponding estimates are given in Table 1. While the estimation procedure is able to reconstruct the true values with reasonable accuracy, the results are slightly better for the regular patterns than for the random patterns.
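The estimation step can be illustrated with a minimal Python sketch. It assumes the symmetric window $D = [-a/2, a/2] \times [-1/2, 1/2]$, simulates an inhomogeneous Poisson pattern with intensity (15) by thinning, and maximizes (18) by a plain grid search over $(\eta_1, \eta_2)$. The thinning sampler, the grid search, the grid resolution and all function names are assumptions for illustration; the paper does not state which optimizer or simulation scheme was used.

```python
import numpy as np


def normal(eta1, eta2):
    """Unit normal delta(eta1, eta2) in spherical coordinates, as in (13)."""
    return np.array([np.sin(eta1) * np.cos(eta2),
                     np.sin(eta1) * np.sin(eta2),
                     np.cos(eta1)])


def window_corners(a=1.0):
    """Corners of the window D = [-a/2, a/2] x [-1/2, 1/2]."""
    return np.array([[sx * a / 2.0, sy * 0.5] for sx in (-1, 1) for sy in (-1, 1)])


def log_c_inv2(pts, delta, f, a=1.0):
    """log c^{-2}(x) under perspective scaling; None if <delta, X> < 0 fails on D."""
    u_corner = f * delta[2] - window_corners(a) @ delta[:2]   # -<delta, X> at the corners
    if np.any(u_corner <= 0.0):
        return None
    # gamma * h^2 * f = prod(u_corner) / (f * delta_3) on the symmetric window; h cancels
    log_const = np.sum(np.log(u_corner)) - np.log(f * delta[2])
    u = f * delta[2] - np.asarray(pts) @ delta[:2]
    return log_const - 3.0 * np.log(u)


def simulate(beta, eta, f, rng, a=1.0):
    """Thinning sampler for a Poisson pattern with intensity beta * c^{-2}(x) (assumed scheme)."""
    delta = normal(*eta)
    # c^{-2} is largest where -<delta, X> is smallest, which happens at a corner of D
    lam_max = np.exp(log_c_inv2(window_corners(a), delta, f, a)).max()
    n_prop = rng.poisson(beta * a * lam_max)
    prop = rng.uniform([-a / 2.0, -0.5], [a / 2.0, 0.5], size=(n_prop, 2))
    accept = rng.uniform(size=n_prop) * lam_max < np.exp(log_c_inv2(prop, delta, f, a))
    return prop[accept]


def loglik(pts, eta, f, a=1.0):
    """Log composite likelihood (18) with beta replaced by n / |D|."""
    lc = log_c_inv2(pts, normal(*eta), f, a)
    if lc is None:
        return -np.inf
    n = len(pts)
    return n * (np.log(n / a) - 1.0) + lc.sum()


def estimate(pts, f, a=1.0, step_deg=1.0):
    """Grid-search maximizer of (18) over slant eta1 and tilt eta2 (in radians)."""
    grid1 = np.radians(np.arange(step_deg, 90.0, step_deg))
    grid2 = np.radians(np.arange(0.0, 360.0, step_deg))
    best = max((loglik(pts, (e1, e2), f, a), e1, e2) for e1 in grid1 for e2 in grid2)
    return best[1], best[2]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    pts = simulate(1000.0, (np.radians(45.0), 0.0), 0.98, rng)
    print(len(pts), np.degrees(estimate(pts, 0.98, step_deg=2.0)))
```

Parameter values for which $\langle \delta, X \rangle < 0$ fails somewhere on $D$ are assigned a log-likelihood of $-\infty$, which implements the upper limit $u$ on $\eta_1$ without computing it explicitly.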
The results in Table 1 are representative of several further such examples (results not shown), and we conclude that the composite likelihood is able to identify the scaling parameters of the perspective scaling function irrespective of the second order structure of the point pattern.

Table 1: True angles and composite likelihood estimates for the surface normals of the simulated point patterns in Figures 2 and 5. Regular pattern type refers to the images in Figure 2 and Poisson type to the images in Figure 5.

| Pattern type | $(\eta_1, \eta_2)$ | $(\hat\eta_1, \hat\eta_2)$ |
|---|---|---|
| Regular | $(45^\circ, 0^\circ)$ | $(45.5^\circ, 0.0^\circ)$ |
| Poisson | $(45^\circ, 0^\circ)$ | $(46.2^\circ, 0.7^\circ)$ |
| Regular | $(30^\circ, 45^\circ)$ | $(29.9^\circ, 45.7^\circ)$ |
| Poisson | $(30^\circ, 45^\circ)$ | $(26.2^\circ, 45.5^\circ)$ |

For the analysis of real natural scenes, we apply our methodology to the set of tiling and brick images shown in Fig. 6. The original images are of size 1280 × 960 pixels and during the preprocessing they are downsized to 1066 × 846 pixels in order to eliminate boundary effects in the point detection. The probability maps and the resulting point patterns are shown in Fig. 7. We have here applied neighbourhoods of size 75 × 75 pixels for the tiling scenes and 55 × 55 pixels for the bricks scene, with a threshold of $k_2 = 0.25$ for the neighbourhood relation in all cases. The point detection is robust to the choice of threshold: values from 0.15 to 0.5 have limited effect on the results. It is somewhat more sensitive to changes in the neighbourhood size; for the tiling images, neighbourhoods from 55 × 55 to 95 × 95 result in similar scaling parameter estimates, while for the bricks image slightly smaller neighbourhoods seem to be needed.

For deriving the information on camera positioning and angle from the point configurations in Fig. 7, we project the point process realizations onto an observation window $D$ of dimension $[-0.69, 0.69] \times [-0.50, 0.50]$.
We further assume that the field of view corresponds to a standard wide angle setting of $\phi_c = 54^\circ$ and hence take $f = 0.98$ as a basis, the same settings as we applied in the simulation examples above. The resulting scaling parameter estimates are listed in Table 2 and the 3D orientation of the camera toward the textures is illustrated in Fig. 6.

Figure 6: Original natural scenes (left) and the estimated 3D orientation towards the camera (right) for (a) Tiling A, (b) Tiling B and (c) Bricks. The field of view is assumed to be driven by a wide angle setting of $\phi_c = 54^\circ$.

Figure 7: Estimated probability maps and point configurations for the natural scenes in Fig. 6: (a) Tiling A, (b) Tiling B and (c) Bricks.

Table 2: Perspective scaling parameter estimates for the natural scenes in Fig. 6.

| Texture type | $(\hat\eta_1, \hat\eta_2)$ |
|---|---|
| (a) Tiling A | $(22.1^\circ, 94.7^\circ)$ |
| (b) Tiling B | $(12.2^\circ, 65.9^\circ)$ |
| (c) Bricks | $(36.0^\circ, 44.1^\circ)$ |

5 Discussion

This paper introduces a framework for extracting 3D information from a textured 2D image building on the recently developed locally scaled point processes (Hahn et al., 2003). The perspective scaling function quantifies perspective foreshortening and the resulting inhomogeneity of the texture. The framework is quite flexible regarding assumptions on the texture composition in that it only requires the texture elements to be close to convex in shape and it successfully extracts useful information related to camera orientation.

The separation of image preprocessing and point detection on one hand and the estimation procedure for the scaling parameters on the other hand offers great flexibility. We believe that the locally scaled point process framework can be applied in more general settings to analyse point patterns in images, for instance, as a new additional inference step in the texture detection algorithms discussed in Lafarge et al. (2010) and references therein.
Due to the low computational budget of our framework, it also seems feasible to combine it with image segmentation where 3D information is needed for several segments within an image, each of which might be covered with a different type of texture elements.

There are further considerable avenues for development. One area for future development is to build a large hierarchical framework where the three inference steps, the image preprocessing, the point detection and the parameter estimation, are joined in an iterative fashion. A fully Bayesian inference framework along the lines of the work of Rajala and Penttinen (2012) could also be an alternative to the composite likelihood estimation performed here. Future work will concentrate on embellishing our inference framework.

6 Acknowledgments

We thank Ute Hahn for sharing her expertise. This work has been supported by the German Science Foundation (DFG), grant RTG 1653. The work of Thordis L. Thorarinsdottir and Alex Lenkoski was further supported by Statistics for Innovation, sfi², in Oslo.

References

Blake, A., Marinos, C. (1990): Shape from texture: Estimation, isotropy and moments. Artif. Intell. 45, 323–380.

Blostein, D., Ahuja, N. (1989): Shape from texture: Integrating texture-element extraction and surface estimation. IEEE Trans. Patt. Anal. Mach. Intell. PAMI-11, 1233–1251.

Canny, J. (1986): A computational approach to edge detection. IEEE Trans. Patt. Anal. Mach. Intell. PAMI-8, 679–698.

Clerc, M., Mallat, S. (2002): The texture gradient equation for recovering shape from texture. IEEE Trans. Patt. Anal. Mach. Intell. 24(4), 536–549.

Forsyth, D. (2006): Shape from texture without boundaries. Int. J. Comp. Vision 67(1), 71–91.

Gibson, J. (1950): The perception of the visual world. Houghton Mifflin, Boston, MA.

Hahn, U., Jensen, E.V., van Lieshout, M.C., Nielsen, L. (2003): Inhomogeneous spatial point processes by location-dependent scaling. Adv. Appl. Prob.
(SGSA) 35, 319–336.

Hartley, R., Zisserman, A. (2000): Multiple

Kanatani, K. (1989): Shape from texture: General principle. Artif. Intell. 38, 1–48.

Lafarge, F., Gimel'Farb, G., Descombes, X. (2010): Geometric feature extraction by a multi-marked point process. IEEE Trans. Patt. Anal. Mach. Intell. 32(9), 1597–1609.

Liu, Y., Hel-Or, H., Kaplan, C., Van Gool, L. (2009): Computational symmetry in computer vision and computer graphics. Found. Trends Comp. Graphics and Vision 5(1-2), 1–195.

Loh, A., Hartley, R. (2005): Shape from non-homogeneous, non-stationary, anisotropic, perspective texture. In: Proc. BMVC. pp. 69–78.

Malik, J., Rosenholtz, R. (1997): Computing local surface orientation and shape from texture for curved surfaces. Int. J. Comp. Vision 23(2), 149–168.

Møller, J. (2010): Spatial point patterns: Parametric Methods. In: Gelfand, A.E., Diggle, P.J., Fuentes, M., Guttorp, P. (eds.) Handbook of Spatial Statistics. CRC Press, Boca Raton, FL.

Møller, J., Waagepetersen, R.P. (2004): Statistical Inference and Simulation for Spatial Point Processes. Chapman & Hall/CRC, Boca Raton, FL.

Pele, O., Werman, W. (2009): Fast and robust earth mover's distances. In: Proc. Int. Conf. Comp. Vision (ICCV).

Prokešová, M., Hahn, U., Jensen, E.B.V. (2006): Statistics for locally scaled point processes. In: Baddeley, A., Gregori, P., Mateu, J., Stoica, R., Stoyan, D. (eds.) Case Studies in Spatial Point Process Modelling. vol. 185, pp. 99–123. Springer, New York.

Rajala, T., Penttinen, A. (2012): Bayesian analysis of a Gibbs hard-core point pattern model with varying repulsion range. Comp. Stat. Data Anal. in press.

Stevens, K.A. (1980): Surface perception from local analysis of texture and contour. Tech. Rep. AI-TR 512, MIT Technical Report, Artificial Intelligence Laboratory.

Tuceryan, M., Jain, A.K. (1998): Texture analysis. In: Chen, C.H., Pau, L.F., Wang, P.S.P. (eds.)
Handbook of Pattern Recognition and Computer Vision (2nd edition). pp. 207–248. World Scientific, Singapore.

Witkin, A.P. (1981): Recovering surface shape and orientation from texture. Artif. Intell. 17, 17–45.

7 Appendix

In our data analysis, we assume that the image domain is normalized such that $D = [-a/2, a/2] \times [-1/2, 1/2]$. More generally, the image domain could be of the form $D = [a_1, a_2] \times [b_1, b_2]$ for some $a_1, a_2, b_1, b_2 \in \mathbb{R}$ with $a_1 < a_2$ and $b_1 < b_2$. In this case, the condition of conservation of the total area in (11) becomes

$$|D| = (a_2 - a_1)(b_2 - b_1) = \int_D \gamma(\delta, h, f) \, dX_P. \tag{19}$$

It follows that

$$\gamma(\delta, h, f) = \frac{2}{h^2 f} \big( -(a_1 + a_2)\delta_1 - (b_1 + b_2)\delta_2 + 2 f \delta_3 \big)^{-1} (a_1\delta_1 + b_1\delta_2 - f\delta_3)(a_1\delta_1 + b_2\delta_2 - f\delta_3)(a_2\delta_1 + b_1\delta_2 - f\delta_3)(a_2\delta_1 + b_2\delta_2 - f\delta_3). \tag{20}$$

For $a_1 = -a/2$, $a_2 = a/2$, $b_1 = -1/2$ and $b_2 = 1/2$, this reduces to the expression for $\gamma(\delta, h, f)$ given in Section 3.
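The normalization in the Appendix can be verified numerically. The Python sketch below computes $\gamma(\delta, h, f) \, h^2 f$, which does not depend on $h$, for a general window and checks by a midpoint rule that $c_\eta^{-2}$ integrates to $|D|$. It writes the inverse factor with $2 f \delta_3$, which is the form that satisfies the area condition numerically and that reproduces the symmetric-window expression of Section 3. The function names and the quadrature resolution are assumptions for illustration.

```python
import numpy as np


def gamma_h2f(delta, f, a1, a2, b1, b2):
    """gamma(delta, h, f) * h^2 * f for D = [a1, a2] x [b1, b2]; independent of h."""
    d1, d2, d3 = delta
    # <delta, X> evaluated at the four corners of D; all values must be negative
    corners = [a * d1 + b * d2 - f * d3 for a in (a1, a2) for b in (b1, b2)]
    if max(corners) >= 0.0:
        raise ValueError("<delta, X> < 0 must hold on all of D")
    denom = 2.0 * f * d3 - (a1 + a2) * d1 - (b1 + b2) * d2
    return 2.0 * np.prod(corners) / denom


def area_integral(delta, f, a1, a2, b1, b2, m=600):
    """Midpoint-rule approximation of the integral of c^{-2} over D."""
    d1, d2, d3 = delta
    x1 = a1 + (np.arange(m) + 0.5) * (a2 - a1) / m
    x2 = b1 + (np.arange(m) + 0.5) * (b2 - b1) / m
    g1, g2 = np.meshgrid(x1, x2)
    u = f * d3 - d1 * g1 - d2 * g2              # -<delta, X> on the grid
    c_inv2 = gamma_h2f(delta, f, a1, a2, b1, b2) / u**3
    return c_inv2.mean() * (a2 - a1) * (b2 - b1)


if __name__ == "__main__":
    e1, e2 = np.radians(30.0), np.radians(45.0)
    delta = np.array([np.sin(e1) * np.cos(e2), np.sin(e1) * np.sin(e2), np.cos(e1)])
    # window used for the natural scenes; the integral should be close to |D| = 1.38
    print(area_integral(delta, 0.98, -0.69, 0.69, -0.50, 0.50))
```

The same check can be run on a window that is not centred at the origin, which is the case where the terms $(a_1 + a_2)\delta_1$ and $(b_1 + b_2)\delta_2$ in the inverse factor matter.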
