Gradient Hard Thresholding Pursuit for Sparsity-Constrained Optimization

Hard Thresholding Pursuit (HTP) is an iterative greedy selection procedure for finding sparse solutions of underdetermined linear systems. This method has been shown to have strong theoretical guarantee and impressive numerical performance. In this p…

Authors: Xiao-Tong Yuan, Ping Li, Tong Zhang

Xiao-Tong Yuan (1, 2), Ping Li (2, 3), Tong Zhang (2)

1. Department of Statistical Science, Cornell University, Ithaca, New York, 14853, USA
2. Department of Statistics & Biostatistics, Rutgers University, Piscataway, New Jersey, 08854, USA
3. Department of Computer Science, Rutgers University, Piscataway, New Jersey, 08854, USA

E-mail: {xtyuan1980@gmail.com, pingli@stat.rutgers.edu, tzhang@stat.rutgers.edu}

Abstract

Hard Thresholding Pursuit (HTP) is an iterative greedy selection procedure for finding sparse solutions of underdetermined linear systems. This method has been shown to have strong theoretical guarantees and impressive numerical performance. In this paper, we generalize HTP from compressive sensing to a generic problem setup of sparsity-constrained convex optimization. The proposed algorithm iterates between a standard gradient descent step and a hard thresholding step with or without debiasing. We prove that our method enjoys strong guarantees analogous to HTP in terms of rate of convergence and parameter estimation accuracy. Numerical evidence shows that our method is superior to the state-of-the-art greedy selection methods in sparse logistic regression and sparse precision matrix estimation tasks.

Key words. Sparsity, Greedy Selection, Hard Thresholding Pursuit, Gradient Descent.

1 Introduction

In the past decade, high-dimensional data analysis has received broad research interest in data mining and scientific discovery, with many significant results obtained in theory, algorithms and applications. The major driving force is the rapid development of data collection technologies in many application domains such as social networks, natural language processing, bioinformatics and computer vision.
In these applications it is not unusual that data samples are represented with millions or even billions of features, using which an underlying statistical learning model must be fit. In many circumstances, however, the number of collected samples is substantially smaller than the dimensionality of the features, implying that consistent estimators cannot be hoped for unless additional assumptions are imposed on the model. One of the widely acknowledged prior assumptions is that the data exhibit low-dimensional structure, which can often be captured by imposing a sparsity constraint on the model parameter space. It is thus crucial to develop robust and efficient computational procedures for solving, even just approximately, these optimization problems with sparsity constraints.

In this paper, we focus on the following generic sparsity-constrained optimization problem

$$\min_{x \in \mathbb{R}^p} f(x), \quad \text{s.t. } \|x\|_0 \le k, \tag{1.1}$$

where $f: \mathbb{R}^p \mapsto \mathbb{R}$ is a smooth convex cost function. Among others, several examples falling into this model include: (i) the sparsity-constrained linear regression model (Tropp & Gilbert, 2007), where the residual error is used to measure data reconstruction error; (ii) the sparsity-constrained logistic regression model (Bahmani et al., 2013), where the sigmoid loss is used to measure prediction error; (iii) sparsity-constrained graphical model learning (Jalali et al., 2011), where the likelihood of samples drawn from an underlying probabilistic model is used to measure data fidelity.

Unfortunately, due to the non-convex cardinality constraint, problem (1.1) is generally NP-hard even for the quadratic cost function (Natarajan, 1995). Thus, one must instead seek approximate solutions. In particular, the special case of (1.1) in least squares regression models has gained significant attention in the area of compressed sensing (Donoho, 2006).
A vast body of greedy selection algorithms for compressive sensing has been proposed, including matching pursuit (Mallat & Zhang, 1993), orthogonal matching pursuit (Tropp & Gilbert, 2007), compressive sampling matching pursuit (Needell & Tropp, 2009), hard thresholding pursuit (Foucart, 2011), iterative hard thresholding (Blumensath & Davies, 2009) and subspace pursuit (Dai & Milenkovic, 2009), to name a few. These algorithms successively select the positions of nonzero entries and estimate their values by exploring the residual error from the previous iteration. Compared to the first-order convex optimization methods developed for $\ell_1$-regularized sparse learning (Beck & Teboulle, 2009; Agarwal et al., 2010), these greedy selection algorithms often exhibit similar accuracy guarantees but more attractive computational efficiency.

The least squares error used in compressive sensing, however, is not an appropriate measure of discrepancy in a variety of applications beyond signal processing. For example, in statistical machine learning the log-likelihood function is commonly used in logistic regression problems (Bishop, 2006) and graphical model learning (Jalali et al., 2011; Ravikumar et al., 2011). Thus, it is desirable to investigate theory and algorithms applicable to a broader class of sparsity-constrained learning problems as given in (1.1). To this end, several forward selection algorithms have been proposed to select the nonzero entries in a sequential fashion (Kim & Kim, 2004; Shalev-Shwartz et al., 2010; Yuan & Yan, 2013; Jaggi, 2011). This category of methods dates back to the Frank-Wolfe method (Frank & Wolfe, 1956). The forward greedy selection method has also been generalized to minimize a convex objective over the linear hull of a collection of atoms (Tewari et al., 2011; Yuan & Yan, 2012).
To make the greedy selection procedure more adaptive, Zhang (2008) proposed a forward-backward algorithm which takes backward steps adaptively whenever beneficial. Jalali et al. (2011) have applied this forward-backward selection method to learn the structure of a sparse graphical model. More recently, Bahmani et al. (2013) proposed a gradient hard-thresholding method which generalizes the compressive sampling matching pursuit method (Needell & Tropp, 2009) from compressive sensing to the general sparsity-constrained optimization problem. Hard-thresholding-type methods have also been shown to be statistically and computationally efficient for sparse principal component analysis (Yuan & Zhang, 2013; Ma, 2013).

1.1 Our Contribution

In this paper, inspired by the success of Hard Thresholding Pursuit (HTP) (Foucart, 2011, 2012) in compressive sensing, we propose the Gradient Hard Thresholding Pursuit (GraHTP) method to encompass the sparse estimation problems arising from applications with general nonlinear models. At each iteration, GraHTP performs standard gradient descent followed by a hard thresholding operation which first selects the top $k$ (in magnitude) entries of the resultant vector and then (optionally) conducts debiasing on the selected entries. We prove that under mild conditions GraHTP (with or without debiasing) has strong theoretical guarantees analogous to HTP in terms of convergence rate and parameter estimation accuracy. We have applied GraHTP to the sparse logistic regression model and the sparse precision matrix estimation model, verifying that the guarantees of HTP are valid for these two models. Empirically we demonstrate that GraHTP is comparable or superior to the state-of-the-art greedy selection methods in these two sparse learning models.
To our knowledge, GraHTP is the first gradient-descent-truncation-type method for sparsity-constrained nonlinear problems.

1.2 Notation

In the following, $x \in \mathbb{R}^p$ is a vector, $F$ is an index set and $A$ is a matrix. The following notations will be used in the text.

• $[x]_i$: the $i$-th entry of vector $x$.
• $x_F$: the restriction of $x$ to index set $F$, i.e., $[x_F]_i = [x]_i$ if $i \in F$, and $[x_F]_i = 0$ otherwise.
• $x_k$: the restriction of $x$ to the top $k$ (in modulus) entries. We will simplify $x_{F_k}$ to $x_k$ without ambiguity in the context.
• $\|x\| = \sqrt{x^\top x}$: the Euclidean norm of $x$.
• $\|x\|_1 = \sum_{i=1}^{p} |[x]_i|$: the $\ell_1$-norm of $x$.
• $\|x\|_0$: the number of nonzero entries of $x$.
• $\mathrm{supp}(x)$: the index set of nonzero entries of $x$.
• $\mathrm{supp}(x, k)$: the index set of the top $k$ (in modulus) entries of $x$.
• $[A]_{ij}$: the element on the $i$-th row and $j$-th column of matrix $A$.
• $\|A\| = \sup_{\|x\| \le 1} \|Ax\|$: the spectral norm of matrix $A$.
• $A_{F\bullet}$ ($A_{\bullet F}$): the rows (columns) of matrix $A$ indexed in $F$.
• $|A|_1 = \sum_{1 \le i \le p,\, 1 \le j \le q} |[A]_{ij}|$: the element-wise $\ell_1$-norm of $A$.
• $\mathrm{Tr}(A)$: the trace (sum of diagonal elements) of a square matrix $A$.
• $A^-$: the restriction of a square matrix $A$ to its off-diagonal entries.
• $\mathrm{vect}(A)$: (columnwise) vectorization of a matrix $A$.

1.3 Paper Organization

This paper proceeds as follows: We present in §2 the GraHTP algorithm. The convergence guarantees of GraHTP are provided in §3. The specializations of GraHTP in logistic regression and Gaussian graphical model learning are investigated in §4. Monte-Carlo simulations and experimental results on real data are presented in §5. We conclude this paper in §6.

2 Gradient Hard Thresholding Pursuit

GraHTP is an iterative greedy selection procedure for approximately optimizing the non-convex problem (1.1).
A high-level summary of GraHTP is described in the top panel of Algorithm 1. The procedure generates a sequence of intermediate $k$-sparse vectors $x^{(0)}, x^{(1)}, \ldots$ from an initial sparse approximation $x^{(0)}$ (typically $x^{(0)} = 0$). At the $t$-th iteration, the first step S1, $\tilde{x}^{(t)} = x^{(t-1)} - \eta \nabla f(x^{(t-1)})$, computes the gradient descent at the point $x^{(t-1)}$ with step-size $\eta$. Then in the second step, S2, the $k$ coordinates of the vector $\tilde{x}^{(t)}$ that have the largest magnitude are chosen as the support in which pursuing the minimization will be most effective. In the third step, S3, we find a vector with this support which minimizes the objective function, which becomes $x^{(t)}$. This last step, often referred to as debiasing, has been shown to improve the performance in other algorithms too. The iterations continue until the algorithm reaches a terminating condition, e.g., on the change of the cost function or the change of the estimated minimum from the previous iteration. A natural criterion here is $F^{(t)} = F^{(t-1)}$ (see S2 for the definition of $F^{(t)}$), since then $x^{(\tau)} = x^{(t)}$ for all $\tau \ge t$, although there is no guarantee that this should occur. It will be assumed throughout the paper that the cardinality $k$ is known. In practice this quantity may be regarded as a tuning parameter of the algorithm chosen via, for example, cross-validation.

In the standard form of GraHTP, the debiasing step S3 requires minimizing $f(x)$ over the support $F^{(t)}$. If this step is judged too costly, we may consider instead a fast variant of GraHTP, where the debiasing is replaced by a simple truncation operation $x^{(t)} = \tilde{x}^{(t)}_k$. This leads to the Fast GraHTP (FGraHTP) described in the bottom panel of Algorithm 1.
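The gradient step and the truncation step described above can be sketched in a few lines of Python. This is only an illustrative sketch of the fast variant (FGraHTP), not the authors' implementation: the function names, the halting rule based on the change of the iterate, and the toy quadratic objective are all assumptions made for the example. The debiasing step S3 of the full GraHTP is not shown, since it needs an inner solver for $f$ restricted to the selected support.

```python
from typing import Callable, List

Vector = List[float]


def top_k_support(x: Vector, k: int) -> List[int]:
    """Indices of the k entries of x with the largest absolute values (step S2)."""
    order = sorted(range(len(x)), key=lambda i: abs(x[i]), reverse=True)
    return sorted(order[:k])


def hard_threshold(x: Vector, k: int) -> Vector:
    """Keep the top-k entries (in magnitude) of x and zero out the rest."""
    keep = set(top_k_support(x, k))
    return [xi if i in keep else 0.0 for i, xi in enumerate(x)]


def fgrahtp(grad: Callable[[Vector], Vector], p: int, k: int,
            eta: float, max_iter: int = 500, tol: float = 1e-10) -> Vector:
    """Fast GraHTP sketch: gradient step (S1), then truncation to the top-k entries."""
    x = [0.0] * p  # typical initialization x^(0) = 0
    for _ in range(max_iter):
        g = grad(x)
        x_tilde = [xi - eta * gi for xi, gi in zip(x, g)]  # S1: gradient descent step
        x_new = hard_threshold(x_tilde, k)                  # truncation replaces debiasing
        # Hypothetical halting rule: stop once the iterate barely changes.
        change = max(abs(a - b) for a, b in zip(x_new, x))
        x = x_new
        if change <= tol:
            break
    return x


# Toy objective (an assumption for illustration): f(x) = 0.5 * ||x - c||^2,
# whose gradient is x - c. The best 2-sparse point keeps the two largest |c_i|.
c = [3.0, -0.1, 0.2, -4.0, 0.05]
x_hat = fgrahtp(lambda x: [xi - ci for xi, ci in zip(x, c)], p=5, k=2, eta=0.5)
```

On this toy problem the support stabilizes after the first iteration, and the remaining iterations are plain gradient descent on the two selected coordinates.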
It is interesting to note that FGraHTP can be regarded as a projected gradient descent procedure for optimizing the non-convex problem (1.1). Its per-iteration computational overhead is almost identical to that of the standard gradient descent procedure. While in this paper we only study the FGraHTP outlined in Algorithm 1, we should mention that other fast variants of GraHTP can also be considered. For instance, to reduce the computational cost of S3, we can take a restricted Newton step or a restricted gradient descent step to calculate $x^{(t)}$.

We close this section by pointing out that, in the special case where the squared error $f(x) = \frac{1}{2}\|y - Ax\|^2$ is the cost function, GraHTP reduces to HTP (Foucart, 2011). Specifically, the gradient descent step S1 reduces to $\tilde{x}^{(t)} = x^{(t-1)} + \eta A^\top (y - A x^{(t-1)})$ and the debiasing step S3 reduces to the orthogonal projection $x^{(t)} = \arg\min \{\|y - Ax\|,\ \mathrm{supp}(x) \subseteq F^{(t)}\}$. Meanwhile, FGraHTP reduces to IHT (Blumensath & Davies, 2009), in which the iteration is defined by $x^{(t)} = (x^{(t-1)} + \eta A^\top (y - A x^{(t-1)}))_k$.

Algorithm 1: Gradient Hard Thresholding Pursuit (GraHTP).
Initialization: $x^{(0)}$ with $\|x^{(0)}\|_0 \le k$ (typically $x^{(0)} = 0$), $t = 1$.
Output: $x^{(t)}$.
repeat
    (S1) Compute $\tilde{x}^{(t)} = x^{(t-1)} - \eta \nabla f(x^{(t-1)})$;
    (S2) Let $F^{(t)} = \mathrm{supp}(\tilde{x}^{(t)}, k)$ be the indices of $\tilde{x}^{(t)}$ with the largest $k$ absolute values;
    (S3) Compute $x^{(t)} = \arg\min \{f(x),\ \mathrm{supp}(x) \subseteq F^{(t)}\}$;
    $t = t + 1$;
until halting condition holds;
⋆ Fast GraHTP ⋆
repeat
    Compute $\tilde{x}^{(t)} = x^{(t-1)} - \eta \nabla f(x^{(t-1)})$;
    Compute $x^{(t)} = \tilde{x}^{(t)}_k$ as the truncation of $\tilde{x}^{(t)}$ with the top $k$ entries preserved;
    $t = t + 1$;
until halting condition holds;

3 Theoretical Analysis

In this section, we analyze the theoretical properties of GraHTP and FGraHTP.
We first study the convergence of these two algorithms. Next, we investigate their performance for the task of sparse recovery in terms of convergence rate and parameter estimation accuracy.

We require the following key technical condition under which the convergence and parameter estimation accuracy of GraHTP/FGraHTP can be guaranteed. To simplify the notation in the following analysis, we abbreviate $\nabla_F f = (\nabla f)_F$ and $\nabla_s f = (\nabla f)_s$.

Definition 1 (Condition $C(s, \zeta, \rho_s)$). For any integer $s > 0$, we say $f$ satisfies condition $C(s, \zeta, \rho_s)$ if for any index set $F$ with cardinality $|F| \le s$ and any $x, y$ with $\mathrm{supp}(x) \cup \mathrm{supp}(y) \subseteq F$, the following inequality holds for some $\zeta > 0$ and $0 < \rho_s < 1$:

$$\|x - y - \zeta \nabla_F f(x) + \zeta \nabla_F f(y)\| \le \rho_s \|x - y\|.$$

Remark 1. In the special case where $f(x)$ is the least squares loss function and $\zeta = 1$, Condition $C(s, \zeta, \rho_s)$ reduces to the well-known Restricted Isometry Property (RIP) condition in compressive sensing.

We may establish the connections between condition $C(s, \zeta, \rho_s)$ and the conditions of restricted strong convexity/smoothness which are key to the analysis of several previous greedy selection methods (Zhang, 2008; Shalev-Shwartz et al., 2010; Yuan & Yan, 2013; Bahmani et al., 2013).

Definition 2 (Restricted Strong Convexity/Smoothness). For any integer $s > 0$, we say $f(x)$ is restricted $m_s$-strongly convex and $M_s$-strongly smooth if there exist $m_s, M_s > 0$ such that

$$\frac{m_s}{2}\|x - y\|^2 \le f(x) - f(y) - \langle \nabla f(y), x - y \rangle \le \frac{M_s}{2}\|x - y\|^2, \quad \forall\, \|x - y\|_0 \le s. \tag{3.1}$$

The following lemma connects condition $C(s, \zeta, \rho_s)$ to the restricted strong convexity/smoothness conditions.

Lemma 1. Assume that $f$ is a differentiable function.
(a) If $f$ satisfies condition $C(s, \zeta, \rho_s)$, then for all $\|x - y\|_0 \le s$ the following two inequalities hold:

$$\frac{1 - \rho_s}{\zeta}\|x - y\| \le \|\nabla_F f(x) - \nabla_F f(y)\| \le \frac{1 + \rho_s}{\zeta}\|x - y\|,$$

$$f(x) \le f(y) + \langle \nabla f(y), x - y \rangle + \frac{1 + \rho_s}{2\zeta}\|x - y\|^2.$$

(b) If $f$ is $m_s$-strongly convex and $M_s$-strongly smooth, then $f$ satisfies condition $C(s, \zeta, \rho_s)$ with any

$$\zeta < 2 m_s / M_s^2, \quad \rho_s = \sqrt{1 - 2\zeta m_s + \zeta^2 M_s^2}.$$

A proof of this lemma is provided in Appendix A.1.

Remark 2. Part (a) of Lemma 1 indicates that if condition $C(s, \zeta, \rho_s)$ holds, then $f$ is strongly smooth. Part (b) of Lemma 1 shows that the strong smoothness/convexity conditions imply condition $C(s, \zeta, \rho_s)$. Therefore, condition $C(s, \zeta, \rho_s)$ is no stronger than the strong smoothness/convexity conditions.

3.1 Convergence

We now analyze the convergence properties of GraHTP and FGraHTP. First and foremost, we make a simple observation about GraHTP: since there is only a finite number of subsets of $\{1, \ldots, p\}$ of size $k$, the sequence defined by GraHTP is eventually periodic. The importance of this observation lies in the fact that, as soon as the convergence of GraHTP is established, we can certify that the limit is exactly achieved after a finite number of iterations. We establish in Theorem 1 the convergence of GraHTP and FGraHTP under proper conditions. A proof of this theorem is provided in Appendix A.2.

Theorem 1. Assume that $f$ satisfies condition $C(2k, \zeta, \rho_{2k})$ and the step-size $\eta < \zeta / (1 + \rho_{2k})$. Then the sequence $\{x^{(t)}\}$ defined by GraHTP converges in a finite number of iterations. Moreover, the sequence $\{f(x^{(t)})\}$ defined by FGraHTP converges.

Remark 3. Since $\rho_{2k} \in (0, 1)$, we have that the convergence results in Theorem 1 hold whenever the step-size $\eta < \zeta / 2$.
If $f$ is $m_s$-strongly convex and $M_s$-strongly smooth, then from Part (b) of Lemma 1 we know that Theorem 1 holds whenever the step-size $\eta < m_s / M_s^2$.

3.2 Sparse Recovery Performance

The following theorem is our main result on the parameter estimation accuracy of GraHTP and FGraHTP when the target solution is sparse.

Theorem 2. Let $\bar{x}$ be an arbitrary $\bar{k}$-sparse vector and $k \ge \bar{k}$. Let $s = 2k + \bar{k}$. If $f$ satisfies condition $C(s, \zeta, \rho_s)$ and $\eta < \zeta$,

(a) if $\mu_1 = \sqrt{2}\,(1 - \eta/\zeta + (2 - \eta/\zeta)\rho_s) / (1 - \rho_s) < 1$, then at iteration $t$, GraHTP will recover an approximation $x^{(t)}$ satisfying

$$\|x^{(t)} - \bar{x}\| \le \mu_1^t \|x^{(0)} - \bar{x}\| + \frac{2\eta + \zeta}{(1 - \mu_1)(1 - \rho_s)} \|\nabla_k f(\bar{x})\|.$$

(b) if $\mu_2 = 2(1 - \eta/\zeta + (2 - \eta/\zeta)\rho_s) < 1$, then at iteration $t$, FGraHTP will recover an approximation $x^{(t)}$ satisfying

$$\|x^{(t)} - \bar{x}\| \le \mu_2^t \|x^{(0)} - \bar{x}\| + \frac{2\eta}{1 - \mu_2} \|\nabla_s f(\bar{x})\|.$$

A proof of this theorem is provided in Appendix A.3. Note that we did not make any attempt to optimize the constants in Theorem 2, which are relatively loose. In the discussion, we ignore the constants and focus on the main message Theorem 2 conveys.

Part (a) of Theorem 2 indicates that under proper conditions, the estimation error of GraHTP is determined by a multiple of $\|\nabla_k f(\bar{x})\|$, and the rate of convergence before reaching this error level is geometric. In particular, if the sparse vector $\bar{x}$ is sufficiently close to an unconstrained minimum of $f$, then the estimation error floor is negligible because $\nabla_k f(\bar{x})$ has small magnitude. In the ideal case where $\nabla f(\bar{x}) = 0$ (i.e., the sparse vector $\bar{x}$ is an unconstrained minimum of $f$), this result guarantees that we can recover $\bar{x}$ to arbitrary precision. In this case, if we further assume that $\eta$ satisfies the conditions in Theorem 1, then exact recovery can be achieved in a finite number of iterations.
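To get a feel for when the shrinkage rates in Theorem 2 fall below one, they can be evaluated numerically. The short Python sketch below simply transcribes the two formulas for $\mu_1$ and $\mu_2$; the function names and the sample values of $\eta/\zeta$ and $\rho_s$ are assumptions chosen for illustration and do not come from the paper's experiments.

```python
import math


def mu1(ratio: float, rho: float) -> float:
    """GraHTP shrinkage rate from Theorem 2(a); `ratio` stands for eta / zeta."""
    return math.sqrt(2.0) * (1.0 - ratio + (2.0 - ratio) * rho) / (1.0 - rho)


def mu2(ratio: float, rho: float) -> float:
    """FGraHTP shrinkage rate from Theorem 2(b); `ratio` stands for eta / zeta."""
    return 2.0 * (1.0 - ratio + (2.0 - ratio) * rho)


# Illustrative values only (assumptions): a fixed rho_s and a few step-size ratios.
rho_s = 0.2
for ratio in (0.5, 0.8, 0.99):
    print(f"eta/zeta={ratio}: mu1={mu1(ratio, rho_s):.4f}, mu2={mu2(ratio, rho_s):.4f}")
```

Both rates decrease as $\eta/\zeta$ approaches one, which is why the theorem's conditions favor step-sizes close to (but below) $\zeta$.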
Part (b) of Theorem 2 shows that FGraHTP enjoys a similar geometric rate of convergence and the estimation error is determined by a multiple of $\|\nabla_s f(\bar{x})\|$ with $s = 2k + \bar{k}$.

The shrinkage rates $\mu_1 < 1$ (see Part (a)) and $\mu_2 < 1$ (see Part (b)) respectively control the convergence rate of GraHTP and FGraHTP. For GraHTP, the condition $\mu_1 < 1$ implies

$$\eta > \frac{((2\sqrt{2} + 1)\rho_s + \sqrt{2} - 1)\,\zeta}{\sqrt{2} + \sqrt{2}\rho_s}. \tag{3.2}$$

By combining this condition with $\eta < \zeta$, we can see that $\rho_s < 1/(\sqrt{2} + 1)$ is a necessary condition to guarantee $\mu_1 < 1$. On the other hand, if $\rho_s < 1/(\sqrt{2} + 1)$, then we can always find a step-size $\eta < \zeta$ satisfying (3.2) such that $\mu_1 < 1$. This condition on $\rho_s$ is analogous to the RIP condition for estimation from noisy measurements in compressive sensing (Candès et al., 2006; Needell & Tropp, 2009; Foucart, 2011). Indeed, in this setup our GraHTP algorithm reduces to HTP, which requires a weaker RIP condition than prior compressive sensing algorithms. The guarantees of GraHTP and HTP are almost identical, although we did not make any attempt to optimize the RIP sufficient constants, which are $1/(\sqrt{2} + 1)$ (for GraHTP) versus $1/\sqrt{3}$ (for HTP). We would like to emphasize that the condition $\rho_s < 1/(\sqrt{2} + 1)$ derived for GraHTP also holds in fairly general setups beyond compressive sensing. For FGraHTP we have similar discussions.

For the general sparsity-constrained optimization problem, we note that a similar estimation error bound has been established for the GraSP (Gradient Support Pursuit) method (Bahmani et al., 2013), which is another hard-thresholding-type method. At time stamp $t$, GraSP first conducts debiasing over the union of the top $k$ entries of $x^{(t-1)}$ and the top $2k$ entries of $\nabla f(x^{(t-1)})$, then it selects the top $k$ entries of the resultant vector and updates their values via debiasing, which becomes $x^{(t)}$.
Our GraHTP is connected to GraSP in the sense that the $k$ largest absolute elements after the gradient descent step (see S1 and S2 of Algorithm 1) will come from some combination of the largest elements in $x^{(t-1)}$ and the largest elements in the gradient $\nabla f(x^{(t-1)})$. Although the convergence rates are of the same order, the per-iteration cost of GraHTP is cheaper than that of GraSP. Indeed, at each iteration, GraSP needs to minimize the objective over a support of size $3k$ while that size for GraHTP is $k$. FGraHTP is even cheaper per iteration as it does not need any debiasing operation. We will compare the actual numerical performance of these methods in our empirical study.

4 Applications

In this section, we will specialize GraHTP/FGraHTP to two popular statistical learning models: the sparse logistic regression (in §4.1) and the sparse precision matrix estimation (in §4.2).

4.1 Sparsity-Constrained $\ell_2$-Regularized Logistic Regression

Logistic regression is one of the most popular models in statistics and machine learning (Bishop, 2006). In this model the relation between the random feature vector $u \in \mathbb{R}^p$ and its associated random binary label $v \in \{-1, +1\}$ is determined by the conditional probability

$$P(v \mid u; \bar{w}) = \frac{\exp(2 v \bar{w}^\top u)}{1 + \exp(2 v \bar{w}^\top u)}, \tag{4.1}$$

where $\bar{w} \in \mathbb{R}^p$ denotes a parameter vector. Given a set of $n$ independently drawn data samples $\{(u^{(i)}, v^{(i)})\}_{i=1}^n$, logistic regression learns the parameters $w$ so as to minimize the negative logistic log-likelihood given by

$$l(w) := -\frac{1}{n} \log \prod_i P(v^{(i)} \mid u^{(i)}; w) = \frac{1}{n} \sum_{i=1}^n \log\left(1 + \exp(-2 v^{(i)} w^\top u^{(i)})\right).$$

It is well known that $l(w)$ is convex. Unfortunately, in the high-dimensional setting, i.e., $n < p$, the problem can be underdetermined and thus its minimum is not unique.
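As a rough illustration of the loss just defined, the following Python sketch evaluates $l(w)$ and its gradient on a small list of samples. The function names, the row-per-sample list layout and the toy data are assumptions made for this example; the gradient expression is obtained by differentiating the displayed formula for $l(w)$ term by term and is not taken from any code in the paper.

```python
import math
from typing import List, Sequence


def logistic_loss(w: Sequence[float], samples: Sequence[Sequence[float]],
                  labels: Sequence[int]) -> float:
    """l(w) = (1/n) * sum_i log(1 + exp(-2 * v_i * <w, u_i>)); labels are in {-1, +1}."""
    total = 0.0
    for u_i, v_i in zip(samples, labels):
        z = -2.0 * v_i * sum(wj * uj for wj, uj in zip(w, u_i))
        # Numerically stable evaluation of log(1 + exp(z)).
        total += max(z, 0.0) + math.log1p(math.exp(-abs(z)))
    return total / len(labels)


def logistic_grad(w: Sequence[float], samples: Sequence[Sequence[float]],
                  labels: Sequence[int]) -> List[float]:
    """Gradient of l(w): (1/n) * sum_i -2 * v_i * sigma(-2 * v_i * <w, u_i>) * u_i."""
    grad = [0.0] * len(w)
    for u_i, v_i in zip(samples, labels):
        m = 2.0 * v_i * sum(wj * uj for wj, uj in zip(w, u_i))
        # sigma(-m) = 1 / (1 + exp(m)), written so that exp never overflows.
        if m >= 0:
            s = math.exp(-m) / (1.0 + math.exp(-m))
        else:
            s = 1.0 / (1.0 + math.exp(m))
        for j, uj in enumerate(u_i):
            grad[j] += -2.0 * v_i * s * uj
    return [g / len(labels) for g in grad]


# Toy data (hypothetical): two samples in R^2 with labels +1 and -1.
samples = [[1.0, 2.0], [0.5, -1.0]]
labels = [1, -1]
loss_at_zero = logistic_loss([0.0, 0.0], samples, labels)
grad_at_zero = logistic_grad([0.0, 0.0], samples, labels)
```

At $w = 0$ every term of the loss equals $\log 2$, which gives a quick sanity check of the implementation.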
A conventional way to handle this issue is to impose $\ell_2$-regularization on the logistic loss to avoid singularity. The $\ell_2$-penalty, however, does not promote sparse solutions, which are often desirable in high-dimensional learning tasks. The sparsity-constrained $\ell_2$-regularized logistic regression is then given by:

$$\min_w f(w) = l(w) + \frac{\lambda}{2}\|w\|^2, \quad \text{subject to } \|w\|_0 \le k, \tag{4.2}$$

where $\lambda > 0$ is the regularization strength parameter. Obviously $f(w)$ is $\lambda$-strongly convex and hence it has a unique minimum. The cardinality constraint enforces the solution to be sparse.

4.1.1 Verifying Condition $C(s, \zeta, \rho_s)$

Let $U = [u^{(1)}, \ldots, u^{(n)}] \in \mathbb{R}^{p \times n}$ be the design matrix and $\sigma(z) = 1/(1 + \exp(-z))$ be the sigmoid function. In the case of the $\ell_2$-regularized logistic loss considered in this section we have $\nabla f(w) = U a(w)/n + \lambda w$, where the vector $a(w) \in \mathbb{R}^n$ is given by $[a(w)]_i = -2 v^{(i)} (1 - \sigma(2 v^{(i)} w^\top u^{(i)}))$. The following result verifies that $f(w)$ satisfies Condition $C(s, \zeta, \rho_s)$ under mild conditions.

Proposition 1. Assume that for any index set $F$ with $|F| \le s$ we have $\forall i$, $\|(u^{(i)})_F\| \le R_s$. Then the $\ell_2$-regularized logistic loss satisfies Condition $C(s, \zeta, \rho_s)$ with any

$$\zeta < \frac{2\lambda}{(4\sqrt{s} R_s^2 + \lambda)^2}, \quad \rho_s = \sqrt{1 - 2\zeta\lambda + \zeta^2 (4\sqrt{s} R_s^2 + \lambda)^2}.$$

A proof of this result is given in Appendix A.4.

4.1.2 Bounding the Estimation Error

We are going to bound $\|\nabla_s f(\bar{w})\|$, which, by Theorem 2, controls the estimation error bounds of GraHTP (with $s = k$) and FGraHTP (with $s = 2k + \bar{k}$).
In the following derivation, we assume that the joint density of the random vector $(u, v) \in \mathbb{R}^{p+1}$ is given by the following exponential family distribution:

$$P(u, v; \bar{w}) = \exp\left(v \bar{w}^\top u + B(u) - A(\bar{w})\right), \tag{4.3}$$

where

$$A(\bar{w}) := \log \sum_{v \in \{-1, 1\}} \int_{\mathbb{R}^p} \exp\left(v \bar{w}^\top u + B(u)\right) du$$

is the log-partition function. The term $B(u)$ characterizes the marginal behavior of $u$. Obviously, the conditional distribution of $v$ given $u$, $P(v \mid u; \bar{w})$, is given by the logistic model (4.1). By trivial algebra we can obtain the following standard result, which shows that the first derivative of the negative logistic log-likelihood $l(w)$ yields the cumulants of the random variables $v[u]_j$ (see, e.g., Wainwright & Jordan, 2008):

$$\frac{\partial l}{\partial [w]_j} = \frac{1}{n} \sum_{i=1}^n \left\{ -v^{(i)} [u^{(i)}]_j + E_v\left[v [u^{(i)}]_j \mid u^{(i)}\right] \right\}. \tag{4.4}$$

Here the expectation $E_v[\cdot \mid u]$ is taken over the conditional distribution (4.1). We introduce the following sub-Gaussian condition on the random variate $v[u]_j$.

Assumption 1. For all $j$, we assume that there exists a constant $\sigma > 0$ such that for all $\eta$,

$$E[\exp(\eta v [u]_j)] \le \exp\left(\sigma^2 \eta^2 / 2\right).$$

This assumption holds when $[u]_j$ are sub-Gaussian (e.g., Gaussian or bounded) random variables. The following result establishes the bound of $\|\nabla_s f(\bar{w})\|$.

Proposition 2. If Assumption 1 holds, then with probability at least $1 - 4p^{-1}$,

$$\|\nabla_s f(\bar{w})\| \le 4\sigma \sqrt{s \ln p / n} + \lambda \|\bar{w}_s\|.$$

A proof of this result can be found in Appendix A.5.

Remark 4. If we choose $\lambda = O(\sqrt{\ln p / n})$, then with overwhelming probability $\|\nabla_s f(\bar{w})\|$ vanishes at the rate of $O(\sqrt{s \ln p / n})$. This bound is superior to the bound provided by Bahmani et al. (2013, Section 4.2), which is non-vanishing.
4.2 Sparsity-Constrained Precision Matrix Estimation

An important class of sparse learning problems involves estimating the precision (inverse covariance) matrix of high-dimensional random vectors under the assumption that the true precision matrix is sparse. This problem arises in a variety of applications, among them computational biology, natural language processing and document analysis, where the model dimension may be comparable to or substantially larger than the sample size.

Let $x$ be a $p$-variate random vector with zero-mean Gaussian distribution $N(0, \bar{\Sigma})$. Its density is parameterized by the precision matrix $\bar{\Omega} = \bar{\Sigma}^{-1} \succ 0$ as follows:

$$\phi(x; \bar{\Omega}) = \frac{1}{\sqrt{(2\pi)^p (\det \bar{\Omega})^{-1}}} \exp\left(-\frac{1}{2} x^\top \bar{\Omega} x\right).$$

It is well known that the conditional independence between the variables $[x]_i$ and $[x]_j$ given $\{[x]_k, k \ne i, j\}$ is equivalent to $[\bar{\Omega}]_{ij} = 0$. The conditional independence relations between components of $x$, on the other hand, can be represented by a graph $G = (V, E)$ in which the vertex set $V$ has $p$ elements corresponding to $[x]_1, \ldots, [x]_p$, and the edge set $E$ consists of edges between node pairs $\{[x]_i, [x]_j\}$. The edge between $[x]_i$ and $[x]_j$ is excluded from $E$ if and only if $[x]_i$ and $[x]_j$ are conditionally independent given the other variables. This graph is known as a Gaussian Markov random field (GMRF) (Edwards, 2000). Thus for the multivariate Gaussian distribution, estimating the support of the precision matrix $\bar{\Omega}$ is equivalent to learning the structure of the GMRF $G$.

Given i.i.d. samples $X_n = \{x^{(i)}\}_{i=1}^n$ drawn from $N(0, \bar{\Sigma})$, the negative log-likelihood, up to a constant, can be written in terms of the precision matrix as

$$L(X_n; \bar{\Omega}) := -\log\det \bar{\Omega} + \langle \Sigma_n, \bar{\Omega} \rangle,$$

where $\Sigma_n$ is the sample covariance matrix.
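For concreteness, the negative log-likelihood above can be evaluated with a small self-contained Python sketch. The helper names and the Cholesky-based computation of $\log\det$ are choices made for this illustration (the paper does not prescribe how the objective is evaluated), and matrices are represented as nested lists to avoid external dependencies.

```python
import math
from typing import List

Matrix = List[List[float]]


def cholesky(a: Matrix) -> Matrix:
    """Lower-triangular factor with a = low * low^T; raises if a is not positive definite."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                if s <= 0.0:
                    raise ValueError("matrix is not positive definite")
                low[i][j] = math.sqrt(s)
            else:
                low[i][j] = s / low[j][j]
    return low


def neg_log_likelihood(omega: Matrix, sigma_n: Matrix) -> float:
    """L(Omega) = -log det(Omega) + <Sigma_n, Omega>, up to an additive constant."""
    low = cholesky(omega)
    # log det(Omega) = 2 * sum of the logs of the Cholesky diagonal.
    log_det = 2.0 * sum(math.log(low[i][i]) for i in range(len(omega)))
    # <Sigma_n, Omega> is the element-wise (trace) inner product.
    inner = sum(s * o for row_s, row_o in zip(sigma_n, omega) for s, o in zip(row_s, row_o))
    return -log_det + inner


# Toy inputs (hypothetical): a 2x2 sample covariance and the identity as candidate precision.
sigma_n = [[2.0, 0.5], [0.5, 1.0]]
value_at_identity = neg_log_likelihood([[1.0, 0.0], [0.0, 1.0]], sigma_n)
```

With the identity as the candidate precision matrix the log-determinant vanishes, so the value reduces to the trace of $\Sigma_n$.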
We are interested in the problem of estimating a sparse precision matrix $\bar{\Omega}$ with no more than a pre-specified number of off-diagonal non-zero entries. For this purpose, we consider the following cardinality-constrained log-determinant program:

$$\min_{\Omega \succ 0} L(\Omega) := -\log\det \Omega + \langle \Sigma_n, \Omega \rangle, \quad \text{s.t. } \|\Omega^-\|_0 \le 2k, \tag{4.5}$$

where $\Omega^-$ is the restriction of $\Omega$ to the off-diagonal entries, $\|\Omega^-\|_0 = |\mathrm{supp}(\Omega^-)|$ is the cardinality of the support set of $\Omega^-$, and the integer $k > 0$ bounds the number of edges $|E|$ in the GMRF.

4.2.1 Verifying Condition $C(s, \zeta, \rho_s)$

It is easy to show that the Hessian $\nabla^2 L(\Omega) = \Omega^{-1} \otimes \Omega^{-1}$, where $\otimes$ is the Kronecker product operator. The following result shows that $L$ satisfies Condition $C(s, \zeta, \rho_s)$ if the eigenvalues of $\Omega$ are lower bounded away from zero and upper bounded.

Proposition 3. Suppose that $\|\Omega^-\|_0 \le s$ and $\alpha_s I \preceq \Omega \preceq \beta_s I$ for some $0 < \alpha_s \le \beta_s$. Then $L(\Omega)$ satisfies Condition $C(s, \zeta, \rho_s)$ with any

$$\zeta < \frac{2\alpha_s^4}{\beta_s^2}, \quad \rho_s = \sqrt{1 - 2\zeta\beta_s^{-2} + \zeta^2 \alpha_s^{-4}}.$$

Proof. Due to the fact that the eigenvalues of Kronecker products of symmetric matrices are the products of the eigenvalues of their factors, it holds that $\beta_s^{-2} I \preceq \Omega^{-1} \otimes \Omega^{-1} \preceq \alpha_s^{-2} I$. Therefore we have $\beta_s^{-2} \le \|\nabla^2 L(\Omega)\|_2 \le \alpha_s^{-2}$, which implies that $L(\Omega)$ is $\beta_s^{-2}$-strongly convex and $\alpha_s^{-2}$-strongly smooth. The desired result follows directly from Part (b) of Lemma 1.

Motivated by Proposition 3, we consider applying GraHTP to the following modified version of problem (4.5):

$$\min_{\alpha I \preceq \Omega \preceq \beta I} L(\Omega), \quad \text{s.t. } \|\Omega^-\|_0 \le 2k, \tag{4.6}$$

where $0 < \alpha \le \beta$ are two constants which respectively lower and upper bound the eigenvalues of the desired solution. To roughly estimate $\alpha$ and $\beta$, we employ a rule proposed by Lu (2009, Proposition 3.1) for the $\ell_1$ log-determinant program.
Specifically, we set

$$\alpha = (\|\Sigma_n\|_2 + n\xi)^{-1}, \quad \beta = \xi^{-1}(n - \alpha \mathrm{Tr}(\Sigma_n)),$$

where $\xi$ is a small enough positive number (e.g., $\xi = 10^{-2}$ as utilized in our experiments).

4.2.2 Bounding the Estimation Error

Let $h := \mathrm{vect}(\nabla L(\bar{\Omega}))$. It is known from Theorem 2 that the estimation error is controlled by $\|h_s\|_2$. Since $\nabla L(\bar{\Omega}) = -\bar{\Omega}^{-1} + \Sigma_n = \Sigma_n - \bar{\Sigma}$, we have $\|h_s\|_2 \le \sqrt{s}\, |\Sigma_n - \bar{\Sigma}|_\infty$. It is known that $|\Sigma_n - \bar{\Sigma}|_\infty \le \sqrt{\log p / n}$ with probability at least $1 - c_0 p^{-c_1}$ for some positive constants $c_0$ and $c_1$ and sufficiently large $n$ (see, e.g., Ravikumar et al., 2011, Lemma 1). Therefore with overwhelming probability we have $\|h_s\|_2 = O(\sqrt{s \log p / n})$ when $n$ is sufficiently large.

4.2.3 A Modified GraHTP

Unfortunately, GraHTP is not directly applicable to problem (4.6) due to the presence of the constraint $\alpha I \preceq \Omega \preceq \beta I$ in addition to the sparsity constraint. To address this issue, we need to modify the debiasing step (S3) of GraHTP to minimize $L(\Omega)$ over the constraint $\alpha I \preceq \Omega \preceq \beta I$ as well as the support set $F^{(t)}$:

$$\min_{\alpha I \preceq \Omega \preceq \beta I} L(\Omega), \quad \text{s.t. } \mathrm{supp}(\Omega) \subseteq F^{(t)}. \tag{4.7}$$

Since this problem is convex, any off-the-shelf convex solver can be applied for optimization. In our implementation, we resort to the alternating direction method (ADM) for solving this subproblem because of its reported efficiency (Boyd et al., 2010; Yuan, 2012). The implementation details of ADM for solving (4.7) are deferred to Appendix B. The modified GraHTP for the precision matrix estimation problem is formally described in Algorithm 2.

Generally speaking, the guarantees in Theorem 1 and Theorem 2 are not valid for the modified GraHTP. However, if $\bar{\Omega} - \alpha I$ and $\beta I - \bar{\Omega}$ are diagonally dominant, then with a slight modification of the proof, we can prove that Part (a) of Theorem 2 is still valid for the modified GraHTP.
We sketch the proof idea as follows. Let $Z := [\bar\Omega]_{F^{(t)}}$. Since $\bar\Omega - \alpha I$ and $\beta I - \bar\Omega$ are diagonally dominant, $Z - \alpha I$ and $\beta I - Z$ are also diagonally dominant, and thus $\alpha I \preceq Z \preceq \beta I$. Since $\Omega^{(t)}$ is the minimizer of $L(\Omega)$ over the intersection of the set $\alpha I \preceq \Omega \preceq \beta I$ and the support constraint $\mathrm{supp}(\Omega) \subseteq F^{(t)}$, we have $\langle \nabla L(\Omega^{(t)}), Z - \Omega^{(t)} \rangle \ge 0$. The remainder of the argument follows that of Part (a) of Theorem 2.

Algorithm 2: A Modified GraHTP for Sparse Precision Matrix Estimation.
Initialization: $\Omega^{(0)}$ with $\Omega^{(0)} \succ 0$, $\|(\Omega^{(0)})^-\|_0 \le 2k$ and $\alpha I \preceq \Omega^{(0)} \preceq \beta I$ (typically $\Omega^{(0)} = \alpha I$); $t = 1$.
Output: $\Omega^{(t)}$.
repeat
  (S1) Compute $\tilde\Omega^{(t)} = \Omega^{(t-1)} - \eta \nabla L(\Omega^{(t-1)})$;
  (S2) Let $\tilde F^{(t)} = \mathrm{supp}((\tilde\Omega^{(t)})^-, 2k)$ be the indices of the $2k$ entries of $(\tilde\Omega^{(t)})^-$ with the largest absolute values, and $F^{(t)} = \tilde F^{(t)} \cup \{(1,1), \ldots, (p,p)\}$;
  (S3) Compute $\Omega^{(t)} = \arg\min \{ L(\Omega) : \alpha I \preceq \Omega \preceq \beta I,\ \mathrm{supp}(\Omega) \subseteq F^{(t)} \}$;
  $t = t + 1$;
until halting condition holds;

5 Experimental Results

This section is devoted to showing the empirical performance of GraHTP and FGraHTP when applied to sparse logistic regression and sparse precision matrix estimation problems. We do not report results of our algorithms on compressive sensing tasks because in these tasks GraHTP and FGraHTP reduce to the well-studied HTP (Foucart, 2011) and IHT (Blumensath & Davies, 2009), respectively. Our algorithms are implemented in Matlab 7.12 running on a desktop with an Intel Core i7 3.2G CPU and 16G RAM.

5.1 Sparsity-Constrained $\ell_2$-Regularized Logistic Regression

5.1.1 Monte-Carlo Simulation

We consider a synthetic data model identical to the one used in (Bahmani et al., 2013). The sparse parameter $\bar w$ is a $p = 1000$ dimensional vector that has $\bar k = 100$ nonzero entries drawn independently from the standard Gaussian distribution.
Each data sample is an independent instance of the random vector $u$ generated by an autoregressive process $[u]_{i+1} = \rho [u]_i + \sqrt{1 - \rho^2}\,[a]_i$ with $[u]_1 \sim N(0, 1)$, $[a]_i \sim N(0, 1)$, and $\rho = 0.5$ being the correlation. The data labels, $v \in \{-1, 1\}$, are then generated randomly according to the Bernoulli distribution

$$P(v = 1 \mid u; \bar w) = \frac{\exp(2 \bar w^\top u)}{1 + \exp(2 \bar w^\top u)}.$$

We fix the regularization parameter $\lambda = 10^{-4}$ in the objective of (4.2). We are interested in the following two cases:

1. Case 1: Cardinality $k$ is fixed and sample size $n$ varies: we test with $k = 100$ and $n \in \{100, 200, \ldots, 2000\}$.
2. Case 2: Sample size $n$ is fixed and cardinality $k$ varies: we test with $n = 500$ and $k \in \{100, 150, \ldots, 500\}$.

For each case, we compare GraHTP and FGraHTP with two state-of-the-art greedy selection methods: GraSP (Bahmani et al., 2013) and FBS (Forward Basis Selection) (Yuan & Yan, 2013). As mentioned earlier, GraSP is also a hard-thresholding-type method. At each iteration it simultaneously selects $k$ nonzero entries and updates their values by exploring the top $k$ entries of the previous iterate as well as the top $2k$ entries of the previous gradient. FBS is a forward-selection-type method. It iteratively selects an atom from the dictionary and minimizes the objective function over the linear combinations of all the selected atoms. Note that all the considered algorithms have a geometric rate of convergence. We will compare the computational efficiency of these methods in our empirical study. We initialize $w^{(0)} = 0$. Throughout our experiment, we set the stopping criterion as $\|w^{(t)} - w^{(t-1)}\| / \|w^{(t-1)}\| \le 10^{-4}$.

Results. Figure 5.1(a) presents the estimation errors of the considered algorithms.
From the left panel of Figure 5.1(a) (i.e., Case 1) we observe that: (i) when the cardinality $k$ is fixed, the estimation errors of all the considered algorithms tend to decrease as the sample size $n$ increases; and (ii) in this case GraHTP and FGraHTP are comparable and both are superior to GraSP and FBS. From the right panel of Figure 5.1(a) (i.e., Case 2) we observe that: (i) when $n$ is fixed, the estimation errors of all the considered algorithms except FBS tend to increase as $k$ increases (FBS is relatively insensitive to $k$ because it is a forward selection method); and (ii) in this case GraHTP and FGraHTP are comparable and both are superior to GraSP and FBS at relatively small $k < 200$. Figure 5.1(b) shows the CPU times of the considered algorithms. From this group of results we observe that in most cases FBS is the fastest, while GraHTP and FGraHTP are superior or comparable to GraSP in computational time. We also observe that when $k$ is relatively small, GraHTP is even faster than FGraHTP although FGraHTP is cheaper in per-iteration overhead. This is partially because when $k$ is small, GraHTP tends to need fewer iterations than FGraHTP to converge.

Figure 5.1: Simulation data: estimation error and CPU time of the considered algorithms (GraHTP, FGraHTP, GraSP, FBS). (a) Estimation error versus $n/p$ with $k = 100$ (left) and versus $k$ with $n = 500$ (right). (b) CPU running time in seconds versus $n/p$ with $k = 100$ (left) and versus $k$ with $n = 500$ (right).

5.1.2 Real Data

The algorithms are also compared on the rcv1.binary dataset ($p = 47{,}236$), which is a popular dataset for binary classification on sparse data. A training subset of size $n = 20{,}242$ and a testing subset of size 20,000 are used.
We test with sparsity parameters $k \in \{100, 200, \ldots, 1000\}$ and fix the regularization parameter $\lambda = 10^{-5}$. The initial vector is $w^{(0)} = 0$ for all the considered algorithms. We stop when $\|w^{(t)} - w^{(t-1)}\| / \|w^{(t-1)}\| \le 10^{-4}$ or when the iteration count exceeds $t > 50$. Figure 5.2 shows the evolving curves of the empirical logistic loss for $k = 200, 400, 800, 1000$. It can be observed from this figure that GraHTP and GraSP are comparable in terms of convergence rate and that they are superior to FGraHTP and FBS. The testing classification errors and CPU running times of the considered algorithms are provided in Figure 5.3: (i) in terms of accuracy, all the considered methods are comparable; and (ii) in terms of running time, FGraHTP is the most efficient one, and GraHTP is significantly faster than GraSP and FBS. FGraHTP runs fastest because it has a very low per-iteration cost, although its convergence curve is slightly less sharp than those of GraHTP and GraSP (see Figure 5.2). To summarize, GraHTP and FGraHTP achieve better trade-offs between accuracy and efficiency than GraSP and FBS on the rcv1.binary dataset.

Figure 5.2: rcv1.binary data: $\ell_2$-regularized logistic loss vs. number of iterations, for $k = 200, 400, 800, 1000$ (GraHTP, FGraHTP, GraSP, FBS).

Figure 5.3: rcv1.binary data: classification error and CPU running time (in seconds) curves of the considered methods as functions of $k$.
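
To make the simulation protocol of Section 5.1.1 concrete, the following is a minimal NumPy sketch of the data model and of an FGraHTP-style iteration (gradient step followed by hard thresholding, without debiasing). It is an illustration under stated assumptions, not the authors' Matlab implementation: the function names, the explicit form of the loss (mean of $\log(1 + \exp(-2 v\, u^\top w))$ plus $\frac{\lambda}{2}\|w\|^2$, chosen to match the label model above), the default step size, and the guard against a zero denominator in the stopping rule are all hypothetical choices.

```python
import numpy as np


def make_synthetic(n, p, k_bar, rho=0.5, seed=0):
    """Draw (U, v, w_bar) following the simulation model of Section 5.1.1."""
    rng = np.random.default_rng(seed)
    w_bar = np.zeros(p)
    w_bar[rng.choice(p, size=k_bar, replace=False)] = rng.standard_normal(k_bar)
    # Autoregressive features: [u]_{i+1} = rho * [u]_i + sqrt(1 - rho^2) * [a]_i.
    a = rng.standard_normal((n, p - 1))
    U = np.empty((n, p))
    U[:, 0] = rng.standard_normal(n)
    for i in range(p - 1):
        U[:, i + 1] = rho * U[:, i] + np.sqrt(1.0 - rho**2) * a[:, i]
    # P(v = 1 | u) = exp(2 w'u) / (1 + exp(2 w'u)), evaluated in a stable way.
    prob = np.exp(-np.logaddexp(0.0, -2.0 * (U @ w_bar)))
    v = np.where(rng.random(n) < prob, 1.0, -1.0)
    return U, v, w_bar


def loss_and_grad(w, U, v, lam):
    """Assumed objective: mean log(1 + exp(-2 v u'w)) + (lam / 2) * ||w||^2."""
    z = 2.0 * v * (U @ w)
    loss = np.mean(np.logaddexp(0.0, -z)) + 0.5 * lam * (w @ w)
    s = -np.exp(-np.logaddexp(0.0, z))  # derivative of log(1 + exp(-z)) w.r.t. z
    grad = U.T @ (2.0 * v * s) / len(v) + lam * w
    return loss, grad


def hard_threshold(x, k):
    """Keep the k largest-magnitude entries of x and zero out the rest."""
    out = np.zeros_like(x)
    idx = np.argpartition(np.abs(x), -k)[-k:]
    out[idx] = x[idx]
    return out


def fgrahtp(U, v, k, lam=1e-4, eta=None, max_iter=500, tol=1e-4):
    """Gradient step + hard thresholding (no debiasing), started from w = 0."""
    n, p = U.shape
    if eta is None:
        # Assumed step size: inverse of a global smoothness bound of the loss.
        eta = 1.0 / (np.linalg.norm(U, 2) ** 2 / n + lam)
    w = np.zeros(p)
    for _ in range(max_iter):
        _, g = loss_and_grad(w, U, v, lam)
        w_new = hard_threshold(w - eta * g, k)
        denom = np.linalg.norm(w)
        # Relative-change stopping rule; skipped while the previous iterate is 0.
        done = denom > 0 and np.linalg.norm(w_new - w) / denom <= tol
        w = w_new
        if done:
            break
    return w
```

A call such as `fgrahtp(*make_synthetic(500, 1000, 100)[:2], k=100)` mirrors Case 2 with $k = 100$. Adding the debiasing step (S3) of GraHTP would require minimizing the loss over the selected support after each thresholding, which is omitted here.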
5.2 Sparsity-Constrained Precision Matrix Estimation

5.2.1 Monte-Carlo Simulation

Our simulation study employs the sparse precision matrix model $\bar\Omega = B + \sigma I$, where each off-diagonal entry in $B$ is generated independently and equals 1 with probability $P = 0.1$ or 0 with probability $1 - P = 0.9$. $B$ has zeros on the diagonal, and $\sigma$ is chosen so that the condition number of $\bar\Omega$ is $p$. We generate a training sample of size $n = 100$ from $N(0, \bar\Sigma)$, and an independent sample of size 100 from the same distribution for tuning the parameter $k$. We compare performance for different values of $p \in \{30, 60, 120, 200\}$, replicated 100 times each.

We compare the modified GraHTP (see Algorithm 2) with GraSP and FBS. To adapt GraSP to sparse precision matrix estimation, we modify the algorithm with a two-stage strategy similar to the one used in the modified GraHTP, so that it can handle the eigenvalue bounding constraint in addition to the sparsity constraint. In the work of Yuan & Yan (2013), FBS has already been applied to sparse precision matrix estimation. We also compare GraHTP with GLasso (Graphical Lasso), which is a representative convex method for the $\ell_1$-penalized log-determinant program (Friedman et al., 2008). The quality of precision matrix estimation is measured by its distance to the truth in Frobenius norm and by the support recovery F-score. The larger the F-score, the better the support recovery performance. Entries with magnitude over $10^{-3}$ are considered to be nonzero.

Figure 5.4 compares the matrix error in Frobenius norm, the sparse recovery F-score and the CPU running time achieved by each of the considered algorithms for different $p$. The results show that GraHTP performs favorably in terms of estimation error and support recovery accuracy. We note that the standard errors of GraHTP are relatively larger than those of GLasso.
This is as expected, since GraHTP approximately solves a non-convex problem via greedy selection at each iteration; the procedure is less stable than convex methods such as GLasso. A similar instability is observed for GraSP and FBS. Figure 5.4 also shows the computational time of the considered algorithms. We observe that GLasso, as a convex solver, is computationally superior to the three considered greedy selection methods. Although inferior to GLasso, GraHTP is computationally much more efficient than GraSP and FBS. To visually inspect the support recovery performance of the considered algorithms, we show in Figure 5.5 the heatmaps of the percentage of replications in which each matrix entry is identified as a nonzero element, with $p = 60$. Visual inspection of these heatmaps shows that the three greedy selection methods, GraHTP, GraSP and FBS, tend to produce sparser estimates than GLasso. A similar phenomenon is observed in our experiments with other values of $p$.

5.2.2 Real Data

We consider the task of LDA (linear discriminant analysis) classification of tumors using the breast cancer dataset (Hess et al., 2006). This dataset consists of 133 subjects, each of which is associated with 22,283 gene expression levels. Among these subjects, 34 are with pathological complete response (pCR) and 99 are with residual disease (RD). The pCR subjects are considered to have a high chance of cancer-free survival in the long term. Based on the estimated precision matrix of the gene expression levels, we apply LDA to predict whether a subject can achieve the pCR state or the RD state.

In our experiment, we follow the same protocol used by (Cai et al., 2011) as well as references therein. For the convenience of readers, we briefly review this experimental setup. The data are randomly divided into the training and testing sets.
In each random division, 5 pCR subjects and 16 RD subjects are randomly selected to constitute the testing data, and the remaining subjects form the training set with size $n = 112$. By using a two-sample $t$ test, the $p = 113$ most significant genes are selected as covariates. Following the LDA framework, we assume that the normalized gene expression data are normally distributed as $N(\mu_l, \bar\Sigma)$, where the two classes are assumed to have the same covariance matrix, $\bar\Sigma$, but different means, $\mu_l$, with $l = 1$ for the pCR state and $l = 2$ for the RD state. Given a testing data sample $x$, we calculate its LDA scores,

$$\delta_l(x) = x^\top \hat\Omega \hat\mu_l - \frac{1}{2} \hat\mu_l^\top \hat\Omega \hat\mu_l + \log \hat\pi_l, \quad l = 1, 2,$$

using the precision matrix $\hat\Omega$ estimated by the considered methods. Here $\hat\mu_l = (1/n_l) \sum_{i \in \text{class } l} x_i$ is the within-class mean in the training set and $\hat\pi_l = n_l / n$ is the proportion of class $l$ subjects in the training set. The classification rule is set as $\hat l(x) = \arg\max_{l = 1, 2} \delta_l(x)$. Clearly, the classification performance is directly affected by the estimation quality of $\hat\Omega$. Hence, we assess the precision matrix estimation performance on the testing data and compare the (modified) GraHTP with GraSP and FBS. We also compare GraHTP with GLasso (Graphical Lasso) (Friedman et al., 2008). We use 6-fold cross-validation on the training data for tuning $k$. We repeat the experiment 100 times.

Figure 5.4: Simulated data: comparison of matrix Frobenius norm loss, support recovery F-score and CPU running time (in seconds) of the considered methods (GraHTP, GraSP, FBS, GLasso) as functions of the dimensionality $p$.

Results.
To compare the classification performance, we use the specificity, sensitivity (or recall), and Matthews correlation coefficient (MCC) criteria as in (Cai et al., 2011):

$$\text{Specificity} = \frac{\mathrm{TN}}{\mathrm{TN} + \mathrm{FP}}, \qquad \text{Sensitivity} = \frac{\mathrm{TP}}{\mathrm{TP} + \mathrm{FN}},$$

$$\mathrm{MCC} = \frac{\mathrm{TP} \times \mathrm{TN} - \mathrm{FP} \times \mathrm{FN}}{\sqrt{(\mathrm{TP} + \mathrm{FP})(\mathrm{TP} + \mathrm{FN})(\mathrm{TN} + \mathrm{FP})(\mathrm{TN} + \mathrm{FN})}},$$

where TP and TN stand for true positives (pCR) and true negatives (RD), respectively, and FP and FN stand for false positives and false negatives, respectively. The larger the criterion value, the better the classification performance. Since one can adjust the decision threshold in any specific algorithm to trade off specificity against sensitivity (increasing one while reducing the other), the MCC is more meaningful as a single performance metric.

Figure 5.5: Simulation data with $p = 60$: heatmaps of the frequency of each precision matrix entry being identified as nonzero out of 100 replications. Panels: (a) Ground truth, (b) GraHTP, (c) GraSP, (d) FBS, (e) GLasso.

Table 5.1: Breast cancer data: comparison of average (std) classification accuracy and average CPU running time over 100 replications.

Methods   Specificity   Sensitivity   MCC           CPU Time (sec.)
GraHTP    0.77 (0.11)   0.77 (0.19)   0.49 (0.19)   1.92
GraSP     0.73 (0.10)   0.78 (0.18)   0.45 (0.17)   4.06
FBS       0.78 (0.11)   0.74 (0.18)   0.48 (0.19)   8.73
GLasso    0.81 (0.11)   0.64 (0.21)   0.45 (0.19)   1.19

Table 5.1 reports the averages and, in parentheses, the standard deviations of the three classification criteria over 100 replications. It can be observed that GraHTP is quite competitive with the leading methods in terms of the three metrics. The averages of CPU running time (in seconds) of the considered methods are listed in the last column of Table 5.1.
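
The LDA scoring rule of Section 5.2.2 and the three evaluation criteria are simple enough to write down directly. The sketch below is only illustrative: the function names and argument layout are hypothetical, and returning an MCC of 0 when the denominator vanishes is an assumed convention that the text does not specify.

```python
import math

import numpy as np


def lda_scores(x, Omega_hat, mus, pis):
    """Return delta_l(x) = x' Omega mu_l - 0.5 mu_l' Omega mu_l + log(pi_l) per class."""
    return np.array([
        x @ Omega_hat @ mu - 0.5 * (mu @ Omega_hat @ mu) + math.log(pi)
        for mu, pi in zip(mus, pis)
    ])


def classification_metrics(tp, tn, fp, fn):
    """Specificity, sensitivity and Matthews correlation coefficient from counts."""
    specificity = tn / (tn + fp)
    sensitivity = tp / (tp + fn)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # Assumed convention: report MCC = 0 when the denominator vanishes.
    mcc = (tp * tn - fp * fn) / denom if denom > 0 else 0.0
    return specificity, sensitivity, mcc
```

The predicted class is `int(np.argmax(lda_scores(x, Omega_hat, mus, pis)))`, with index 0 playing the role of $l = 1$ (pCR) and index 1 the role of $l = 2$ (RD). For a test split of 5 pCR and 16 RD subjects, the four counts satisfy TP + FN = 5 and TN + FP = 16.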
Figure 5.6 shows the evolving curves of the log-determinant loss versus the number of iterations. We observe that on this data, GraHTP converges much faster than GraSP and FBS. Note that we did not draw the curve of GLasso in Figure 5.6 because its objective function is different from that of problem (4.6).

Figure 5.6: Breast cancer data: log-determinant loss convergence curves (loss vs. number of iterations) of the considered methods (GraHTP, GraSP, FBS).

6 Conclusion

In this paper, we propose GraHTP as a generalization of HTP from compressive sensing to the generic problem of sparsity-constrained optimization. The main idea is to force the gradient descent iteration to be sparse via hard thresholding. Theoretically, we prove that under mild conditions, GraHTP converges geometrically within a finite number of iterations and its estimation error is controlled by the restricted norm of the gradient at the target sparse solution. We also propose and analyze the FGraHTP algorithm as a fast variant of GraHTP without the debiasing step. Empirically, we compare GraHTP and FGraHTP with several representative greedy selection methods when applied to sparse logistic regression and sparse precision matrix estimation tasks. Our theoretical results and empirical evidence show that simply combining gradient descent with hard thresholding, with or without debiasing, leads to efficient and accurate computational procedures for sparsity-constrained optimization problems.

Acknowledgment

Xiao-Tong Yuan was a postdoctoral research associate supported by NSF-DMS 0808864 and NSF-EAGER 1249316. Ping Li is supported by ONR-N00014-13-1-0764, AFOSR-FA9550-13-1-0137, and NSF-BigData 1250914. Tong Zhang is supported by NSF-IIS 1016061, NSF-DMS 1007527, and NSF-IIS 1250985.

A Technical Proofs

A.1 Proof of Lemma 1

Proof.
Part (a): The first inequality follows from the triangle inequality. The second inequality can be derived by combining the first one and the integral identity

$$f(x) - f(y) - \langle \nabla f(y), x - y \rangle = \int_0^1 \langle \nabla f(y + \tau(x - y)) - \nabla f(y), x - y \rangle \, d\tau.$$

Part (b): By adding two copies of inequality (3.1) with $x$ and $y$ interchanged and using Theorem 2.1.5 in (Nesterov, 2004), we know that

$$(x - y)^\top (\nabla f(x) - \nabla f(y)) \ge m_s \|x - y\|^2, \qquad \|\nabla_F f(x) - \nabla_F f(y)\| \le M_s \|x - y\|.$$

For any $\zeta > 0$ we have

$$\|x - y - \zeta \nabla_F f(x) + \zeta \nabla_F f(y)\|^2 \le (1 - 2\zeta m_s + \zeta^2 M_s^2) \|x - y\|^2.$$

If $\zeta < 2 m_s / M_s^2$, then $\rho_s = \sqrt{1 - 2\zeta m_s + \zeta^2 M_s^2} < 1$. This proves the desired result.

A.2 Proof of Theorem 1

Proof. We first prove the finite iteration guarantee of GraHTP. Let us consider the vector $\tilde x_k^{(t)}$, which is the restriction of $\tilde x^{(t)}$ to $F^{(t)}$. According to the definition of $x^{(t)}$ we have $f(x^{(t)}) \le f(\tilde x_k^{(t)})$. It follows that

$$\begin{aligned}
f(x^{(t)}) - f(x^{(t-1)}) &\le f(\tilde x_k^{(t)}) - f(x^{(t-1)}) \\
&\le \langle \nabla f(x^{(t-1)}), \tilde x_k^{(t)} - x^{(t-1)} \rangle + \frac{1 + \rho_{2k}}{2\zeta} \|\tilde x_k^{(t)} - x^{(t-1)}\|^2 \\
&\le -\frac{1}{2\eta} \|\tilde x_k^{(t)} - x^{(t-1)}\|^2 + \frac{1 + \rho_{2k}}{2\zeta} \|\tilde x_k^{(t)} - x^{(t-1)}\|^2 \\
&= -\frac{\zeta - \eta(1 + \rho_{2k})}{2\zeta\eta} \|\tilde x_k^{(t)} - x^{(t-1)}\|^2,
\end{aligned} \tag{A.1}$$

where the second inequality follows from Lemma 1 and the third inequality follows from the fact that $\tilde x_k^{(t)}$ is a better $k$-sparse approximation to $\tilde x^{(t)}$ than $x^{(t-1)}$, so that

$$\|\tilde x_k^{(t)} - \tilde x^{(t)}\|^2 = \|\tilde x_k^{(t)} - x^{(t-1)} + \eta \nabla f(x^{(t-1)})\|^2 \le \|x^{(t-1)} - x^{(t-1)} + \eta \nabla f(x^{(t-1)})\|^2 = \|\eta \nabla f(x^{(t-1)})\|^2,$$

which implies $2\eta \langle \nabla f(x^{(t-1)}), \tilde x_k^{(t)} - x^{(t-1)} \rangle \le -\|\tilde x_k^{(t)} - x^{(t-1)}\|^2$. Since $\eta(1 + \rho_{2k}) < \zeta$, it follows that the sequence $\{f(x^{(t)})\}$ is nonincreasing, hence it is convergent.
Since it is also eventually periodic, it must be eventually constant. In view of (A.1), we deduce that $\tilde x_k^{(t)} = x^{(t-1)}$, and in particular that $F^{(t)} = F^{(t-1)}$, for $t$ large enough. This implies that $x^{(t)} = x^{(t-1)}$ for $t$ large enough, which implies the desired result.

By noting that $x^{(t)} = \tilde x_k^{(t)}$ and from inequality (A.1), we immediately establish the convergence of the sequence $\{f(x^{(t)})\}$ defined by FGraHTP.

A.3 Proof of Theorem 2

Proof. Part (a): The first step of the proof is a consequence of the debiasing step S3. Since $x^{(t)}$ is the minimizer of $f(x)$ restricted to the support set $F^{(t)}$, we have $\langle \nabla f(x^{(t)}), z \rangle = 0$ whenever $\mathrm{supp}(z) \subseteq F^{(t)}$. Let $\bar F = \mathrm{supp}(\bar x)$ and $F = \bar F \cup F^{(t)} \cup F^{(t-1)}$. It follows that

$$\begin{aligned}
\|(x^{(t)} - \bar x)_{F^{(t)}}\|^2 &= \langle x^{(t)} - \bar x, (x^{(t)} - \bar x)_{F^{(t)}} \rangle \\
&= \langle x^{(t)} - \bar x - \zeta \nabla_{F^{(t)}} f(x^{(t)}) + \zeta \nabla_{F^{(t)}} f(\bar x), (x^{(t)} - \bar x)_{F^{(t)}} \rangle - \zeta \langle \nabla_{F^{(t)}} f(\bar x), (x^{(t)} - \bar x)_{F^{(t)}} \rangle \\
&\le \rho_s \|x^{(t)} - \bar x\| \, \|(x^{(t)} - \bar x)_{F^{(t)}}\| + \zeta \|\nabla_k f(\bar x)\| \, \|(x^{(t)} - \bar x)_{F^{(t)}}\|,
\end{aligned}$$

where the last inequality is from Condition $C(s, \zeta, \rho_s)$, $\rho_{k + \bar k} \le \rho_s$ and $\|\nabla_{F^{(t)}} f(\bar x)\| \le \|\nabla_k f(\bar x)\|$. After simplification, we have $\|(x^{(t)} - \bar x)_{F^{(t)}}\| \le \rho_s \|x^{(t)} - \bar x\| + \zeta \|\nabla_k f(\bar x)\|$. Writing $(F^{(t)})^c$ for the complement of $F^{(t)}$, it follows that

$$\|x^{(t)} - \bar x\| \le \|(x^{(t)} - \bar x)_{F^{(t)}}\| + \|(x^{(t)} - \bar x)_{(F^{(t)})^c}\| \le \rho_s \|x^{(t)} - \bar x\| + \zeta \|\nabla_k f(\bar x)\| + \|(x^{(t)} - \bar x)_{(F^{(t)})^c}\|.$$

After rearrangement we obtain

$$\|x^{(t)} - \bar x\| \le \frac{\|(x^{(t)} - \bar x)_{(F^{(t)})^c}\|}{1 - \rho_s} + \frac{\zeta \|\nabla_k f(\bar x)\|}{1 - \rho_s}. \tag{A.2}$$

The second step of the proof is a consequence of steps S1 and S2. We notice that

$$\|(x^{(t-1)} - \eta \nabla f(x^{(t-1)}))_{\bar F}\| \le \|(x^{(t-1)} - \eta \nabla f(x^{(t-1)}))_{F^{(t)}}\|.$$
By eliminating the contribution on $\bar F \cap F^{(t)}$, we derive

$$\|(x^{(t-1)} - \eta \nabla f(x^{(t-1)}))_{\bar F \setminus F^{(t)}}\| \le \|(x^{(t-1)} - \eta \nabla f(x^{(t-1)}))_{F^{(t)} \setminus \bar F}\|.$$

For the right-hand side, we have

$$\|(x^{(t-1)} - \eta \nabla f(x^{(t-1)}))_{F^{(t)} \setminus \bar F}\| \le \|(x^{(t-1)} - \bar x - \eta \nabla f(x^{(t-1)}) + \eta \nabla f(\bar x))_{F^{(t)} \setminus \bar F}\| + \eta \|\nabla_k f(\bar x)\|.$$

As for the left-hand side, with $(F^{(t)})^c$ denoting the complement of $F^{(t)}$, we have

$$\begin{aligned}
\|(x^{(t-1)} - \eta \nabla f(x^{(t-1)}))_{\bar F \setminus F^{(t)}}\| &\ge \|(x^{(t-1)} - \bar x - \eta \nabla f(x^{(t-1)}) + \eta \nabla f(\bar x))_{\bar F \setminus F^{(t)}} + (x^{(t)} - \bar x)_{(F^{(t)})^c}\| - \eta \|\nabla_k f(\bar x)\| \\
&\ge \|(x^{(t)} - \bar x)_{(F^{(t)})^c}\| - \|(x^{(t-1)} - \bar x - \eta \nabla f(x^{(t-1)}) + \eta \nabla f(\bar x))_{\bar F \setminus F^{(t)}}\| - \eta \|\nabla_k f(\bar x)\|.
\end{aligned}$$

With $\bar F \Delta F^{(t)}$ denoting the symmetric difference of the sets $\bar F$ and $F^{(t)}$, it follows that

$$\begin{aligned}
\|(x^{(t)} - \bar x)_{(F^{(t)})^c}\| &\le \sqrt{2} \, \|(x^{(t-1)} - \bar x - \eta \nabla f(x^{(t-1)}) + \eta \nabla f(\bar x))_{\bar F \Delta F^{(t)}}\| + 2\eta \|\nabla_k f(\bar x)\| \\
&\le \sqrt{2} \, \|x^{(t-1)} - \bar x - \eta \nabla_F f(x^{(t-1)}) + \eta \nabla_F f(\bar x)\| + 2\eta \|\nabla_k f(\bar x)\| \\
&\le \sqrt{2} \, \|x^{(t-1)} - \bar x - \zeta \nabla_F f(x^{(t-1)}) + \zeta \nabla_F f(\bar x)\| + \sqrt{2} (\zeta - \eta) \|\nabla_F f(x^{(t-1)}) - \nabla_F f(\bar x)\| + 2\eta \|\nabla_k f(\bar x)\| \\
&\le \sqrt{2} \, (1 - \eta/\zeta + (2 - \eta/\zeta) \rho_s) \|x^{(t-1)} - \bar x\| + 2\eta \|\nabla_k f(\bar x)\|,
\end{aligned} \tag{A.3}$$

where the last inequality follows from Condition $C(s, \zeta, \rho_s)$, $\eta < \zeta$ and Lemma 1. As a final step, we put (A.2) and (A.3) together to obtain

$$\|x^{(t)} - \bar x\| \le \frac{\sqrt{2} \, (1 - \eta/\zeta + (2 - \eta/\zeta) \rho_s)}{1 - \rho_s} \|x^{(t-1)} - \bar x\| + \frac{(2\eta + \zeta) \|\nabla_k f(\bar x)\|}{1 - \rho_s}.$$

Since $\mu_1 = \sqrt{2} \, (1 - \eta/\zeta + (2 - \eta/\zeta) \rho_s) / (1 - \rho_s) < 1$, by recursively applying the above inequality we obtain the desired inequality in part (a).

Part (b): Recall that $F^{(t)} = \mathrm{supp}(x^{(t)}, k)$ and $F = F^{(t-1)} \cup F^{(t)} \cup \mathrm{supp}(\bar x)$. Consider the following vector:

$$y = x^{(t-1)} - \eta \nabla_F f(x^{(t-1)}).$$
By using the triangle inequality we have

$$\begin{aligned}
\|y - \bar x\| &= \|x^{(t-1)} - \eta \nabla_F f(x^{(t-1)}) - \bar x\| \\
&\le \|x^{(t-1)} - \bar x - \eta \nabla_F f(x^{(t-1)}) + \eta \nabla_F f(\bar x)\| + \eta \|\nabla_F f(\bar x)\| \\
&\le (1 - \eta/\zeta + (2 - \eta/\zeta) \rho_s) \|x^{(t-1)} - \bar x\| + \eta \|\nabla_s f(\bar x)\|,
\end{aligned}$$

where the last inequality follows from Condition $C(s, \zeta, \rho_s)$, $\eta < \zeta$ and $\|\nabla_F f(\bar x)\| \le \|\nabla_s f(\bar x)\|$. For FGraHTP, we note that $x^{(t)} = \tilde x_k^{(t)} = y_k$, and thus $\|x^{(t)} - \bar x\| \le \|x^{(t)} - y\| + \|y - \bar x\| \le 2 \|y - \bar x\|$. It follows that

$$\|x^{(t)} - \bar x\| \le 2 (1 - \eta/\zeta + (2 - \eta/\zeta) \rho_s) \|x^{(t-1)} - \bar x\| + 2\eta \|\nabla_s f(\bar x)\|.$$

Since $\mu_2 = 2 (1 - \eta/\zeta + (2 - \eta/\zeta) \rho_s) < 1$, by recursively applying the above inequality we obtain the desired inequality in part (b).

A.4 Proof of Proposition 1

Proof. Obviously, $f(w)$ is $\lambda$-strongly convex. Consider an index set $F$ with cardinality $|F| \le s$ and all $w, w'$ with $\mathrm{supp}(w) \cup \mathrm{supp}(w') \subseteq F$. Since $\sigma(z)$ is Lipschitz continuous with constant 1, we have

$$\begin{aligned}
|[a(w)]_i - [a(w')]_i| &= 2 \, |\sigma(2 v^{(i)} w^\top u^{(i)}) - \sigma(2 v^{(i)} w'^\top u^{(i)})| \\
&\le 4 \, |(w - w')^\top v^{(i)} u^{(i)}| \\
&\le 4 \, \|(u^{(i)})_F\| \, \|w - w'\| \le 4 R_s \|w - w'\|,
\end{aligned}$$

which implies $\|a(w) - a(w')\|_\infty \le 4 R_s \|w - w'\|$. Therefore we have

$$\begin{aligned}
\|\nabla_F f(w) - \nabla_F f(w')\| &\le \frac{1}{n} \|U_{F\bullet} (a(w) - a(w'))\| + \lambda \|w - w'\| \\
&\le \frac{1}{n} \|U_{F\bullet} (a(w) - a(w'))\|_1 + \lambda \|w - w'\| \\
&\le \frac{1}{n} |U_{F\bullet}|_1 \, \|a(w) - a(w')\|_\infty + \lambda \|w - w'\| \\
&\le (4 \sqrt{s} R_s^2 + \lambda) \|w - w'\|,
\end{aligned}$$

where the second "$\le$" follows from $\|x\| \le \|x\|_1$, the third "$\le$" follows from $\|Ax\|_1 \le |A|_1 \|x\|_\infty$, and the last "$\le$" follows from $|U_{F\bullet}|_1 \le n \sqrt{s} \max_i \|[u^{(i)}]_F\| \le n \sqrt{s} R_s$. Therefore $f$ is $(4 \sqrt{s} R_s^2 + \lambda)$-strongly smooth. The desired result follows directly from Part (b) of Lemma 1.

A.5 Proof of Proposition 2

Proof.
For any index set $F$ with $|F| \le s$, we can deduce

$$\|\nabla_F f(\bar w)\| \le \|[\nabla l(\bar w)]_F\| + \lambda \|\bar w_F\| \le \sqrt{s} \, \|\nabla l(\bar w)\|_\infty + \lambda \|\bar w_s\|. \tag{A.4}$$

We next bound the term $\|\nabla l(\bar w)\|_\infty$. From (4.4) we have

$$\begin{aligned}
\left| \frac{\partial l}{\partial [\bar w]_j} \right| &= \left| \frac{1}{n} \sum_{i=1}^n \left( -v^{(i)} [u^{(i)}]_j + E_v[v [u^{(i)}]_j \mid u^{(i)}] \right) \right| \\
&\le \left| \frac{1}{n} \sum_{i=1}^n v^{(i)} [u^{(i)}]_j - E[v [u]_j] \right| + \left| \frac{1}{n} \sum_{i=1}^n E_v[v [u^{(i)}]_j \mid u^{(i)}] - E[v [u]_j] \right|,
\end{aligned}$$

where $E[\cdot]$ is taken over the distribution (4.3). Therefore, for any $\varepsilon > 0$,

$$\begin{aligned}
P\left( \left| \frac{\partial l}{\partial [\bar w]_j} \right| > \varepsilon \right) &\le P\left( \left| \frac{1}{n} \sum_{i=1}^n v^{(i)} [u^{(i)}]_j - E[v [u]_j] \right| > \frac{\varepsilon}{2} \right) + P\left( \left| \frac{1}{n} \sum_{i=1}^n E_v[v [u^{(i)}]_j \mid u^{(i)}] - E[v [u]_j] \right| > \frac{\varepsilon}{2} \right) \\
&\le 4 \exp\left( -\frac{n \varepsilon^2}{8 \sigma^2} \right),
\end{aligned}$$

where the last "$\le$" follows from the standard large deviation inequality for sub-Gaussian random variables (see, e.g., Vershynin, 2011). By the union bound we have

$$P(\|\nabla l(\bar w)\|_\infty > \varepsilon) \le 4 p \exp\left( -\frac{n \varepsilon^2}{8 \sigma^2} \right).$$

By letting $\varepsilon = 4 \sigma \sqrt{\ln p / n}$, we know that with probability at least $1 - 4 p^{-1}$,

$$\|\nabla l(\bar w)\|_\infty \le 4 \sigma \sqrt{\ln p / n}.$$

Combining the above inequality with (A.4) yields the desired result.

B Solving Subproblem (4.7) via ADM

In this appendix, we provide the implementation details of ADM for solving the subproblem (4.7). By introducing an auxiliary variable $\Theta \in \mathbb{R}^{p \times p}$, problem (4.7) is equivalent to the following problem:

$$\min_{\alpha I \preceq \Omega \preceq \beta I} L(\Omega), \quad \text{s.t. } \Omega = \Theta, \ \mathrm{supp}(\Theta) \subseteq F. \tag{B.1}$$

Then, the augmented Lagrangian function of (B.1) is

$$J(\Omega, \Theta, \Gamma) := L(\Omega) - \langle \Gamma, \Omega - \Theta \rangle + \frac{\rho}{2} \|\Omega - \Theta\|_{\mathrm{Frob}}^2,$$

where $\Gamma \in \mathbb{R}^{p \times p}$ is the multiplier of the linear constraint $\Omega = \Theta$ and $\rho > 0$ is the penalty parameter for the violation of the linear constraint. The ADM solves the following problems to generate the new iterate:

$$\Omega^{(\tau+1)} = \arg\min_{\alpha I \preceq \Omega \preceq \beta I} J(\Omega, \Theta^{(\tau)}, \Gamma^{(\tau)}), \tag{B.2}$$

$$\Theta^{(\tau+1)} = \arg\min_{\mathrm{supp}(\Theta) \subseteq F} J(\Omega^{(\tau+1)}, \Theta, \Gamma^{(\tau)}), \tag{B.3}$$

$$\Gamma^{(\tau+1)} = \Gamma^{(\tau)} - \rho \, (\Omega^{(\tau+1)} - \Theta^{(\tau+1)}).$$
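
As a rough illustration of the ADM iteration (B.2), (B.3) and the multiplier update, the NumPy sketch below runs a fixed number of sweeps using the closed-form solutions of the two subproblems that are stated in the remainder of this appendix. It is a sketch under assumptions rather than the authors' code: the function name `adm_debias`, the boolean `mask` encoding of the support set $F$ (assumed symmetric and containing the diagonal), the initialization $\Theta = \alpha I$, $\Gamma = 0$, and the fixed values of `rho` and `n_iter` are hypothetical choices, and no stopping test is included.

```python
import numpy as np


def adm_debias(Sigma_n, mask, alpha, beta, rho=1.0, n_iter=200):
    """Approximately solve min L(Omega) s.t. alpha*I <= Omega <= beta*I, supp(Omega) in F.

    L(Omega) = -log det(Omega) + <Sigma_n, Omega>; `mask` is a boolean p x p array
    marking the allowed support F (assumed symmetric, including the diagonal).
    """
    p = Sigma_n.shape[0]
    Theta = alpha * np.eye(p)      # assumed starting point
    Gamma = np.zeros((p, p))       # multiplier of the constraint Omega = Theta
    Omega = Theta.copy()
    for _ in range(n_iter):
        # (B.2): spectral update with eigenvalues clipped to [alpha, beta].
        M = Theta - (Sigma_n - Gamma) / rho
        lam, V = np.linalg.eigh((M + M.T) / 2.0)
        lam_new = np.clip((lam + np.sqrt(lam**2 + 4.0 / rho)) / 2.0, alpha, beta)
        Omega = (V * lam_new) @ V.T
        # (B.3): keep only the entries in the support set F.
        Theta = np.where(mask, Omega - Gamma / rho, 0.0)
        # Multiplier update.
        Gamma = Gamma - rho * (Omega - Theta)
    return Omega, Theta
```

In Algorithm 2 this routine would play the role of step (S3), with `mask` built from $F^{(t)}$. Of the two outputs, `Omega` satisfies the eigenvalue bounds by construction, while `Theta` satisfies the support constraint exactly; the two agree only in the limit.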
Let us first consider the minimization problem (B.2). It is easy to verify that it is equivalent to the following minimization problem:

$$\Omega^{(\tau+1)} = \arg\min_{\alpha I \preceq \Omega \preceq \beta I} \frac{1}{2} \|\Omega - M\|_{\mathrm{Frob}}^2 - \frac{1}{\rho} \log\det\Omega,$$

where

$$M = \Theta^{(\tau)} - \frac{1}{\rho} (\Sigma_n - \Gamma^{(\tau)}).$$

Let the eigenvalue decomposition of the symmetric matrix $M$ be

$$M = V \Lambda V^\top, \quad \text{with } \Lambda = \mathrm{diag}(\lambda_1, \ldots, \lambda_p).$$

It is easy to verify that the solution of problem (B.2) is given by

$$\Omega^{(\tau+1)} = V \tilde\Lambda V^\top, \quad \text{with } \tilde\Lambda = \mathrm{diag}(\tilde\lambda_1, \ldots, \tilde\lambda_p),$$

where

$$\tilde\lambda_j = \min\left\{ \beta, \max\left\{ \alpha, \frac{\lambda_j + \sqrt{\lambda_j^2 + 4/\rho}}{2} \right\} \right\}.$$

Next, we consider the minimization problem (B.3). It is straightforward to see that the solution of problem (B.3) is given by

$$\Theta^{(\tau+1)} = \left[ \Omega^{(\tau+1)} - \frac{1}{\rho} \Gamma^{(\tau)} \right]_F.$$

References

Agarwal, A., Negahban, S., and Wainwright, M. Fast global convergence rates of gradient methods for high-dimensional statistical recovery. In Proceedings of the 24th Annual Conference on Neural Information Processing Systems (NIPS'10), 2010.

Bahmani, S., Raj, B., and Boufounos, P. Greedy sparsity-constrained optimization. Journal of Machine Learning Research, 14:807–841, 2013.

Beck, A. and Teboulle, Marc. A fast iterative shrinkage-thresholding algorithm for linear inverse problems. SIAM Journal on Imaging Sciences, 2(1):183–202, 2009.

Bishop, C.M. Pattern Recognition and Machine Learning. Springer-Verlag New York, Inc., Secaucus, NJ, USA, 2006. ISBN 978-0-387-31073-2.

Blumensath, T. and Davies, M. E. Iterative hard thresholding for compressed sensing. Applied and Computational Harmonic Analysis, 27(3):265–274, 2009.

Boyd, S., Parikh, N., Chu, E., Peleato, B., and Eckstein, J. Distributed optimization and statistical learning via the alternating direction method of multipliers. Foundations and Trends in Machine Learning, 3:1–122, 2010.

Cai, T., Liu, W., and Luo, X. A constrained $\ell_1$ minimization approach to sparse precision matrix estimation.
Journal of the American Statistical Association, 106(494):594–607, 2011.

Candès, E. J., Romberg, J. K., and Tao, T. Stable signal recovery from incomplete and inaccurate measurements. Communications on Pure and Applied Mathematics, 59(8):1207–1223, 2006.

Dai, W. and Milenkovic, O. Subspace pursuit for compressive sensing signal reconstruction. IEEE Transactions on Information Theory, 55(5):2230–2249, 2009.

Donoho, D. L. Compressed sensing. IEEE Transactions on Information Theory, 52(4):1289–1306, 2006.

Edwards, D. M. Introduction to Graphical Modelling. Springer, New York, 2000.

Foucart, S. Hard thresholding pursuit: An algorithm for compressive sensing. SIAM Journal on Numerical Analysis, 49(6):2543–2563, 2011.

Foucart, S. Sparse recovery algorithms: sufficient conditions in terms of restricted isometry constants. In Approximation Theory XIII: San Antonio 2010, volume 13 of Springer Proceedings in Mathematics, pp. 65–77, 2012.

Frank, M. and Wolfe, P. An algorithm for quadratic programming. Naval Res. Logist. Quart., 5:95–110, 1956.

Friedman, J., Hastie, T., and Tibshirani, R. Sparse inverse covariance estimation with the graphical lasso. Biostatistics, 9(3):432–441, 2008.

Hess, K. R., Anderson, K., Symmans, W. F., et al. Pharmacogenomic predictor of sensitivity to preoperative chemotherapy with paclitaxel and fluorouracil, doxorubicin, and cyclophosphamide in breast cancer. Journal of Clinical Oncology, 24:4236–4244, 2006.

Jaggi, M. Sparse convex optimization methods for machine learning. Technical report, PhD thesis in Theoretical Computer Science, ETH Zurich, 2011.

Jalali, A., Johnson, C. C., and Ravikumar, P. K. On learning discrete graphical models using greedy methods. In Proceedings of the 25th Annual Conference on Neural Information Processing Systems (NIPS'11), 2011.
Kim, Yongdai and Kim, Jinseog. Gradient lasso for feature selection. In Proceedings of the Twenty-first International Conference on Machine Learning (ICML'04), pp. 60–67, 2004.

Lu, Z. Smooth optimization approach for sparse covariance selection. SIAM Journal on Optimization, 19(4):1807–1827, 2009.

Ma, Z. Sparse principal component analysis and iterative thresholding. Annals of Statistics, 41(2):772–801, 2013.

Mallat, S. and Zhang, Zhifeng. Matching pursuits with time-frequency dictionaries. IEEE Transactions on Signal Processing, 41(12):3397–3415, 1993.

Natarajan, B. K. Sparse approximate solutions to linear systems. SIAM Journal on Computing, 24(2):227–234, 1995.

Needell, D. and Tropp, J. A. CoSaMP: iterative signal recovery from incomplete and inaccurate samples. IEEE Transactions on Information Theory, 26(3):301–321, 2009.

Nesterov, Y. Introductory Lectures on Convex Optimization: A Basic Course. Kluwer, 2004. ISBN 978-1402075537.

Ravikumar, P., Wainwright, M. J., Raskutti, G., and Yu, B. High-dimensional covariance estimation by minimizing $\ell_1$-penalized log-determinant divergence. Electronic Journal of Statistics, 5:935–980, 2011.

Shalev-Shwartz, Shai, Srebro, Nathan, and Zhang, Tong. Trading accuracy for sparsity in optimization problems with sparsity constraints. SIAM Journal on Optimization, 20:2807–2832, 2010.

Tewari, A., Ravikumar, P., and Dhillon, I. S. Greedy algorithms for structurally constrained high dimensional problems. In Proceedings of the 25th Annual Conference on Neural Information Processing Systems (NIPS'11), 2011.

Tropp, J. and Gilbert, A. Signal recovery from random measurements via orthogonal matching pursuit. IEEE Transactions on Information Theory, 53(12):4655–4666, 2007.

Vershynin, Roman. Introduction to the non-asymptotic analysis of random matrices. 2011.
URL http://arxiv.org/pdf/1011.3027.pdf.

Wainwright, M.J. and Jordan, M.I. Graphical models, exponential families, and variational inference. Foundations and Trends in Machine Learning, 1(1-2):1–305, 2008.

Yuan, X. M. Alternating direction method of multipliers for covariance selection models. Journal of Scientific Computing, 51:261–273, 2012.

Yuan, X.-T. and Yan, S. Forward basis selection for sparse approximation over dictionary. In Proceedings of the Fifteenth International Conference on Artificial Intelligence and Statistics (AISTATS'12), 2012.

Yuan, X.-T. and Yan, S. Forward basis selection for pursuing sparse representations over a dictionary. IEEE Transactions on Pattern Analysis and Machine Intelligence, 35(12):3025–3036, 2013.

Yuan, X.-T. and Zhang, T. Truncated power method for sparse eigenvalue problems. Journal of Machine Learning Research, 14:899–925, 2013.

Zhang, T. Adaptive forward-backward greedy algorithm for sparse learning with linear models. In Proceedings of the 22nd Annual Conference on Neural Information Processing Systems (NIPS'08), 2008.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

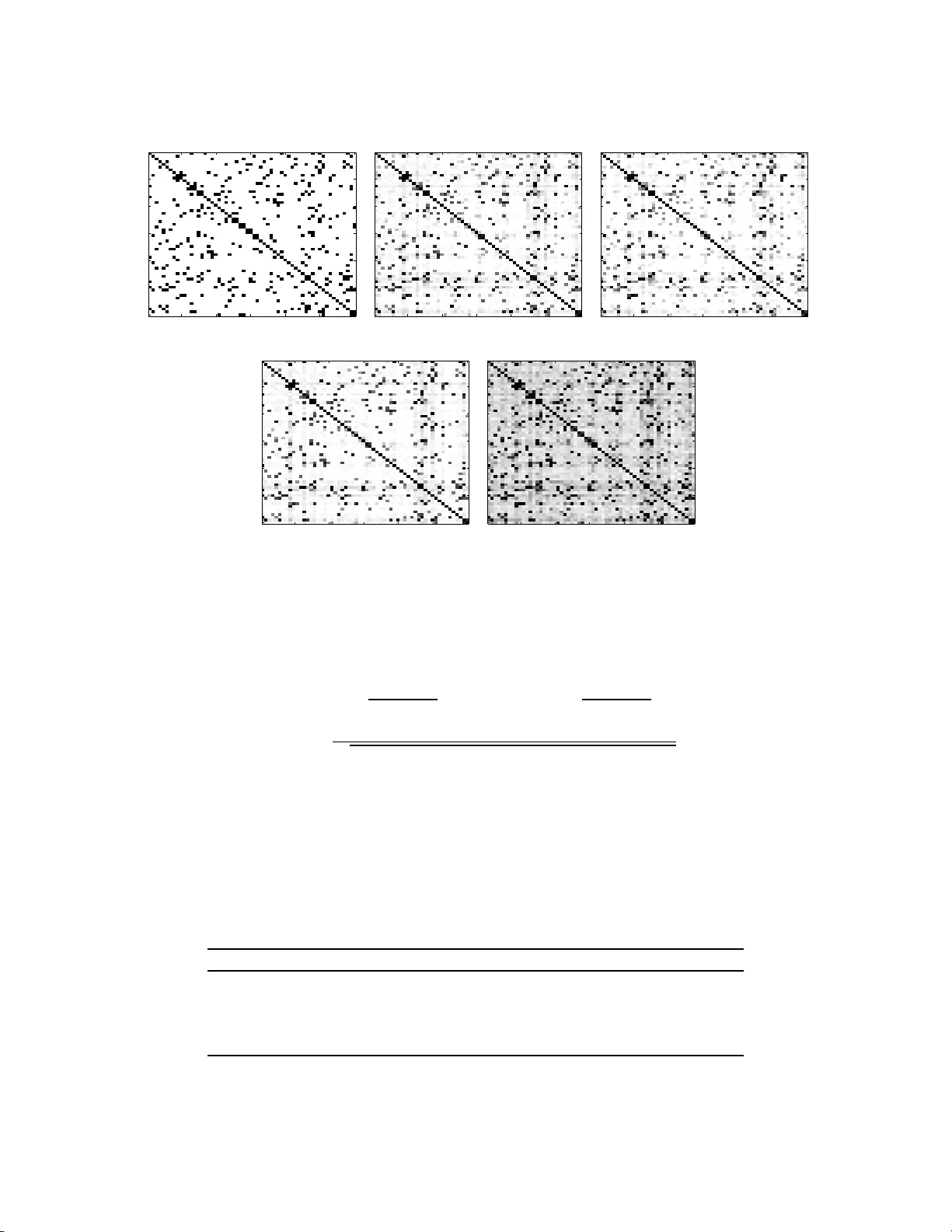

Leave a Comment