Estimating Functions of Distributions Defined over Spaces of Unknown Size

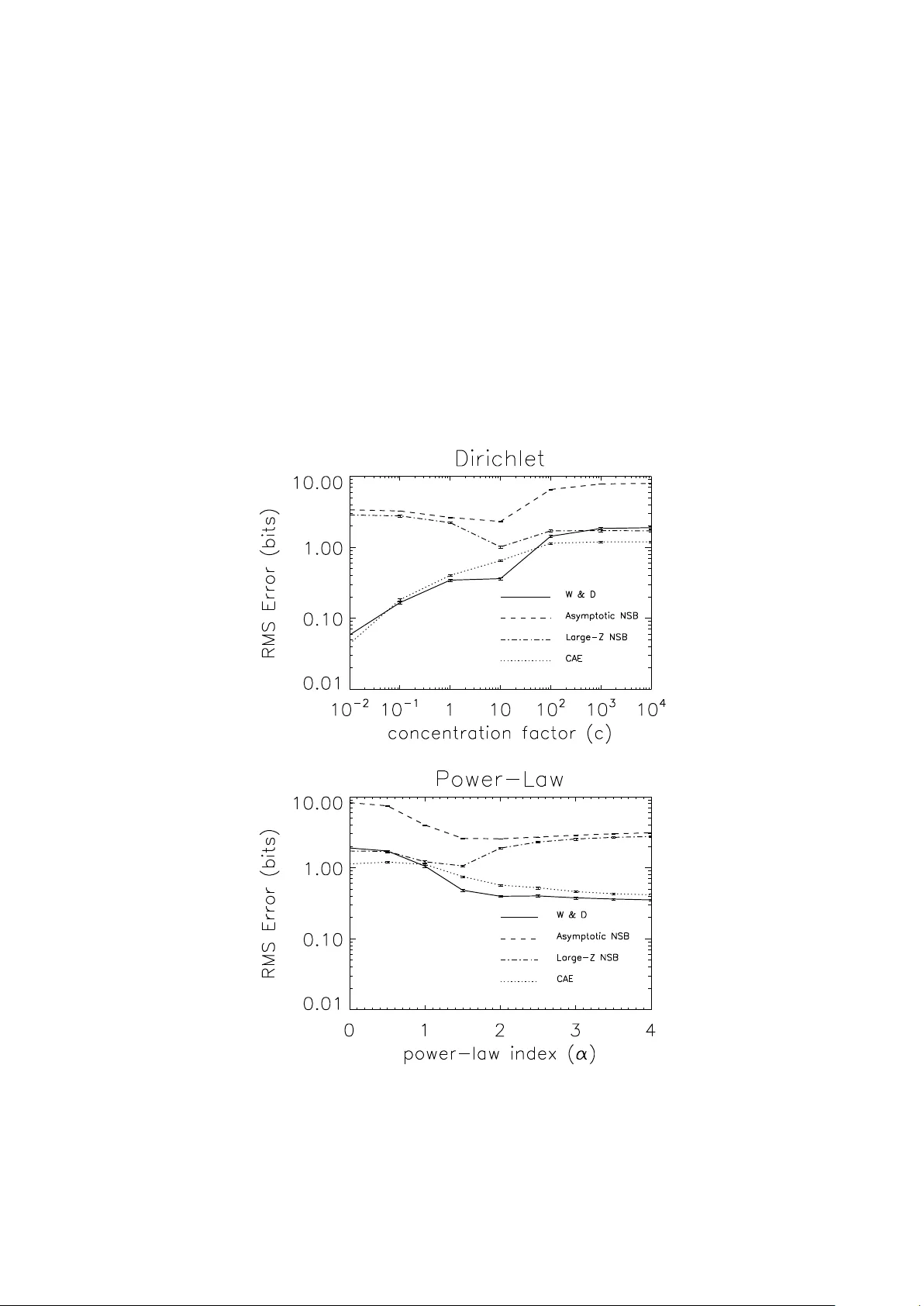

We consider Bayesian estimation of information-theoretic quantities from data, using a Dirichlet prior. Acknowledging the uncertainty in both the event space size $m$ and the Dirichlet prior's concentration parameter $c$, we treat both as random variables…

Authors: David H. Wolpert, Simon DeDeo