Local Graph Clustering Beyond Cheeger's Inequality

Motivated by applications of large-scale graph clustering, we study random-walk-based LOCAL algorithms whose running times depend only on the size of the output cluster rather than on the size of the entire graph. All previously known such algorithms guarantee an …
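The abstract does not spell out a specific procedure. As an illustrative assumption, the sketch below shows one well-known random-walk-based local method: the Andersen-Chung-Lang push for approximate personalized PageRank, followed by a sweep cut over vertices ordered by `p[u] / deg(u)`. It is not necessarily the exact algorithm, analysis, or parameter setting studied in the paper. The function names and the defaults `alpha` and `eps` are hypothetical. The push phase only touches vertices whose residual is at least `eps * deg(u)`, so its total work is bounded by roughly `1 / (alpha * eps)` independent of graph size. The sweep computes the total graph volume globally for simplicity, so only the push phase of this sketch is strictly local.

```python
from collections import deque


def approximate_ppr(graph, seed, alpha=0.15, eps=1e-4):
    """Approximate personalized PageRank via an ACL-style push (lazy random walk).

    graph: dict mapping each vertex to a list of neighbours (undirected,
    symmetric, no self-loops, no isolated vertices; assumed for this sketch).
    Returns a sparse dict p containing only vertices pushed at least once.
    """
    p = {}                 # approximate PageRank mass
    r = {seed: 1.0}        # residual mass still to be pushed
    queue = deque([seed])  # vertices whose residual is >= eps * degree
    while queue:
        u = queue.popleft()
        deg_u = len(graph[u])
        r_u = r.get(u, 0.0)
        if r_u < eps * deg_u:
            continue  # residual too small to push
        # Keep an alpha fraction, hold half of the rest at u (laziness),
        # and spread the other half evenly over u's neighbours.
        p[u] = p.get(u, 0.0) + alpha * r_u
        r[u] = (1 - alpha) * r_u / 2
        share = (1 - alpha) * r_u / (2 * deg_u)
        for v in graph[u]:
            old = r.get(v, 0.0)
            r[v] = old + share
            # Enqueue v only when its residual first crosses the threshold.
            if old < eps * len(graph[v]) <= r[v]:
                queue.append(v)
        if r[u] >= eps * deg_u:
            queue.append(u)
    return p


def sweep_cut(graph, p):
    """Return (set, conductance) of the best prefix in order of p[u]/deg(u)."""
    # Total volume is the one non-local quantity; computed here for simplicity.
    total_vol = sum(len(nbrs) for nbrs in graph.values())
    order = sorted(p, key=lambda u: p[u] / len(graph[u]), reverse=True)
    in_set, vol, cut = set(), 0, 0
    best, best_phi = set(), float("inf")
    for u in order:
        in_set.add(u)
        vol += len(graph[u])
        # Edges into the current set stop being cut edges; others become cut edges.
        for v in graph[u]:
            cut += -1 if v in in_set else 1
        denom = min(vol, total_vol - vol)
        if denom > 0 and cut / denom < best_phi:
            best_phi = cut / denom
            best = set(in_set)
    return best, best_phi
```

On a toy graph of two 4-cliques joined by a single edge, seeding at a vertex of the first clique should recover that clique as the lowest-conductance sweep set. Smaller `eps` explores more of the graph in exchange for a more accurate PageRank vector.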

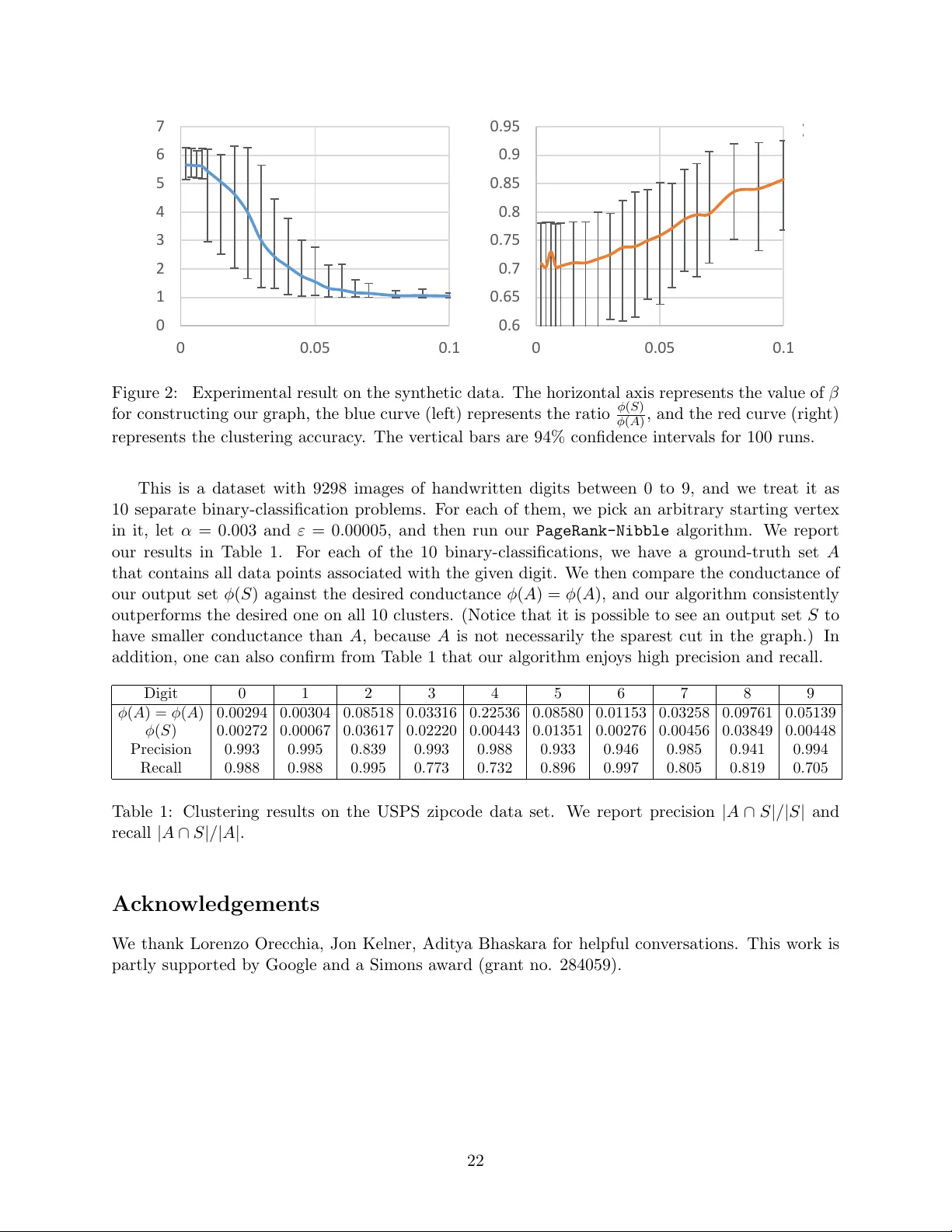

Authors: Zeyuan Allen Zhu, Silvio Lattanzi, Vahab Mirrokni