Inverting Nonlinear Dimensionality Reduction with Scale-Free Radial Basis Function Interpolation

Nonlinear dimensionality reduction embeddings computed from datasets do not provide a mechanism to compute the inverse map. In this paper, we address the problem of computing a stable inverse map to such a general bi-Lipschitz map. Our approach relie…

Authors: Nathan D. Monnig, Bengt Fornberg, Francois G. Meyer

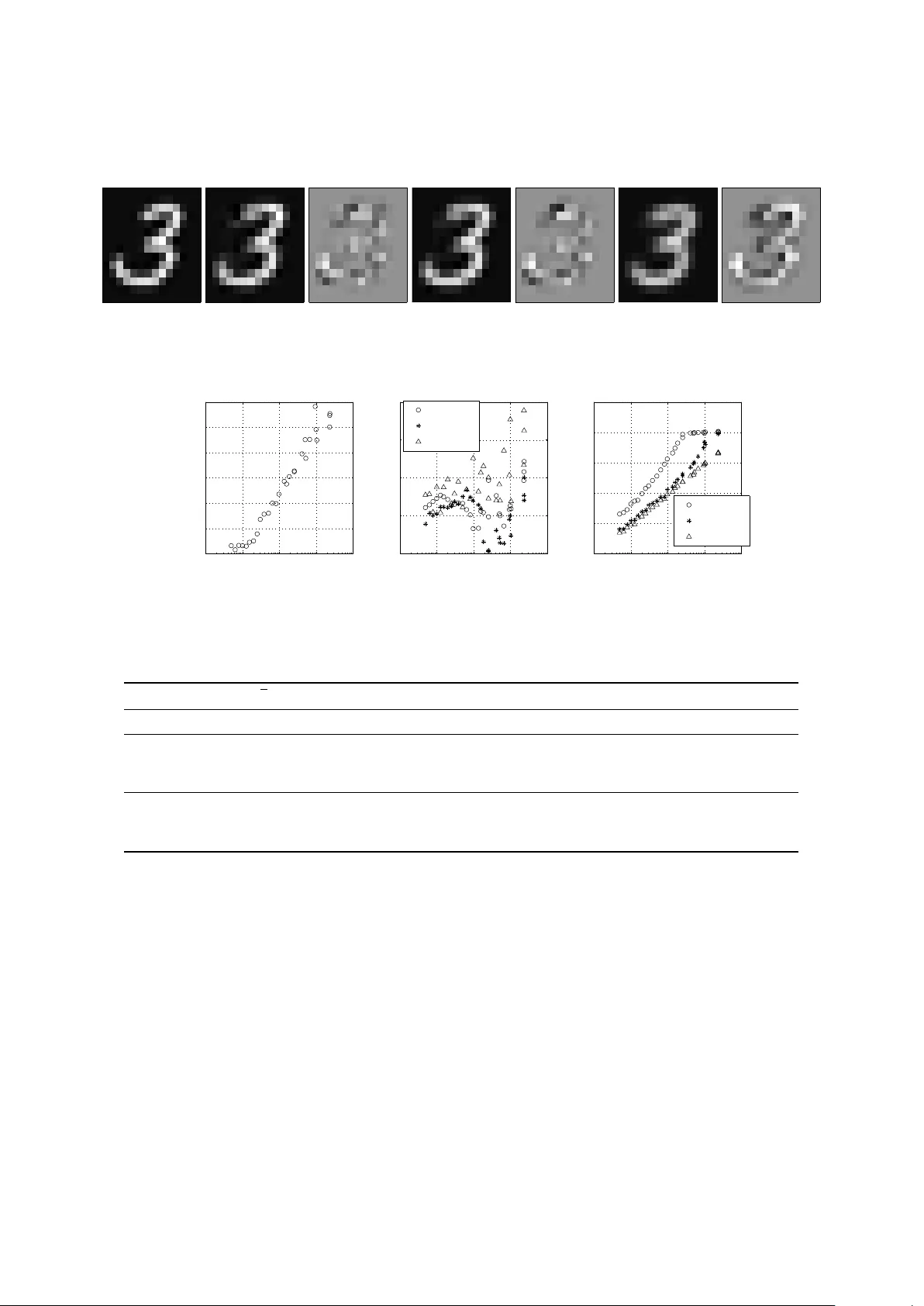

In verting Nonlinear Dimensionality Reduction with Scale-Free Radial Basis Function Interpolation Nathan D. Monnig a, ∗ , Bengt Fornber g a , François G. Meyer b a Department of Applied Mathematics, UCB 526, University of Colorado at Boulder , Boulder , CO 80309 b Department of Electrical Engineering, UCB 425, University of Color ado at Boulder , Boulder , CO 80309 Abstract Nonlinear dimensionality reduction embeddings computed from datasets do not provide a mechanism to compute the inv erse map. In this paper , we address the problem of computing a stable inv erse map to such a general bi- Lipschitz map. Our approach relies on radial basis functions (RBFs) to interpolate the in verse map ev erywhere on the lo w-dimensional image of the forward map. W e demonstrate that the scale-free cubic RBF k ernel performs better than the Gaussian kernel: it does not su ff er from ill-conditioning, and does not require the choice of a scale. The proposed construction is shown to be similar to the Nyström e xtension of the eigenv ectors of the symmetric normalized graph Laplacian matrix. Based on this observation, we provide a new interpretation of the Nyström extension with suggestions for improv ement. K eywor ds: in verse map, nonlinear dimensionality reduction, radial basis function, interpolation, Nyström extension 1. Introduction The construction of parametrizations of low dimensional data in high dimension is an area of intense research (e.g., [1, 2, 3, 4]). A major limitation of these methods is that they are only defined on a discrete set of data. As a result, the in verse mapping is also only defined on the data. There are well known strategies to extend the forward map to ne w points—for example, the Nyström e xtension is a common approach to solve this out-of-sample e xtension problem (see e.g., [5] and references therein). Howe ver , the problem of extending the in verse map (i.e. the pr eimage pr oblem ) has recei ved little attention so far (but see [6]). The nature of the preimage problem precludes application of the Nyström extension, since it does not in volv e extension of eigen vectors. W e present a method to numerically inv ert a general smooth bi-Lipschitz nonlinear dimensionality reduction mapping o ver all points in the image of the forward map. The method relies on interpolation via radial basis functions of the coordinate functions that parametrize the manifold in high dimension. The contributions of this paper are tw ofold. Primarily , this paper addresses a fundamental problem for the analysis of datasets: giv en the construction of an adaptive parametrization of the data in terms of a small number of coordinates, how does one synthesize new data using new values of the coordinates? W e provide a simple and elegant solution to solve the “preimage problem”. Our approach is scale-free and numerically stable and can be applied to an y nonlinear dimension reduction technique. The second contribution is a novel interpretation of the Nyström extension as a properly rescaled radial basis function interpolant. A precise analysis of this similarity yields a critique of the Nyström extension, as well as suggestions for impro vement. ∗ Corresponding author Email addr ess: E-mail: nathan.monnig@colorado.edu (Nathan D. Monnig) URL: http://amath.colorado.edu/student/monnign/ (Nathan D. Monnig) Pr eprint submitted to Elsevier September 19, 2018 2. The Inv erse Mapping 2.1. Definition of the problem, and appr oac h W e consider a finite set of n datapoints { x (1) , . . . , x ( n ) } ⊂ R D that lie on a bounded low-dimensional smooth manifold M ⊂ R D , and we assume that a nonlinear mapping has been defined for each point x ( i ) , Φ n : M ⊂ R D − → R d (1) x ( i ) 7− → y ( i ) = Φ n ( x ( i ) ) , i = 1 , . . . , n . (2) W e further assume that the map Φ n con verges toward a limiting continuous function, Φ : M → Φ ( M ) , when the number of samples goes to infinity . Such limiting maps exist for algorithms such as the Laplacian eigenmaps [2]. In practice, the construction of the map Φ n is usually only the first step. Indeed, one is often interested in ex- ploring the configuration space in R d , and one needs an in verse map to synthesize a new measurement x for a new configuration y = h y 1 · · · y d i T in the coordinate domain (see e.g., [7]). In other words, we would like to define an in verse map Φ − 1 n ( y ) at any point y ∈ Φ n ( M ) . Unfortunately , unlike linear methods (such as PCA), nonlinear dimen- sion reduction algorithms only pro vide an e xplicit mapping for the original discrete dataset { x (1) , . . . , x ( n ) } . Therefore, the in verse mapping Φ − 1 n is only defined on these data. The goal of the present work is to generate a numerical extension of Φ − 1 n to all of Φ ( M ) ⊂ R d . T o simplify the problem, we assume the mapping Φ n coincides with the limiting map Φ on the data, Φ n ( x ( i ) ) = Φ ( x ( i ) ) for i = 1 , . . . , n . This assumption allows us to rephrase the problem as follo ws: we seek an extension of the map Φ − 1 ev erywhere on Φ ( M ), gi ven the knowledge that Φ − 1 ( y ( i ) ) = Φ − 1 n ( y ( i ) ) = x ( i ) . W e address this problem using interpolation, and we construct an approximate inv erse Φ † n , which con verges toward the true in verse as the number of samples, n , goes to infinity , Φ † : Φ ( M ) → R D , with Φ † y ( i ) = x ( i ) , (3) and ∀ y ∈ Φ ( M ) , lim n →∞ Φ † n ( y ) = Φ − 1 ( y ) . (4) Using terminology from geometry , we call Φ ( M ) the coordinate domain , and Φ − 1 a coordinate map that parametrizes the manifold M = { x ∈ R D ; x = Φ − 1 ( y ) , y ∈ Φ ( M ) } . The components of Φ − 1 = h φ − 1 1 · · · φ − 1 D i T are the coor dinate functions . W e note that the focus of the paper is not the construction of ne w points y in the coordinate domain, but rather the computation of the coordinate functions ev erywhere in Φ ( M ). 2.2. Interpolation of multivariate functions defined on scattered data Giv en the kno wledge of the inv erse at the points y ( i ) , we wish to interpolate Φ − 1 ov er Φ ( M ) . W e propose to interpolate each coordinate function, φ − 1 i ( y ) , i = 1 , . . . , D independently of each other . W e are thus facing the problem of interpolating a function of sev eral variables defined on the manifold Φ ( M ). Most interpolation techniques that are designed for single variable functions can only be extended using tensor products, and have very poor performance in sev eral dimensions. F or instance, we know from Mairhuber theorem (e.g., [8]) that we should not use a basis independent of the nodes (for example, polynomial) to interpolate scattered data in dimension d > 1. As a result, fe w options exist for multiv ariate interpolation. Some of the most successful interpolation methods inv olve Radial Basis Functions (RBFs) [8]. Therefore, we propose to use RBFs to construct the inv erse mapping. Similar methods have been explored in [6, 9] to interpolate data on a low-dimensional manifold. W e note that while kriging [10] is another common approach for interpolating scattered data, most kriging techniques are equi v alent to RBF interpolants [11]. In fact, because in our application we lack specialized information about the cov ariance structure of the inv erse map, kriging is identical to RBF interpolation. W e focus our attention on two basis functions: the Gaussian and the cubic. These functions are representati ve of the two main classes of radial functions: scale dependent, and scale inv ariant. In the experimental section we compare the RBF methods to Shepard’ s method [12], an approach for multiv ariate interpolation and approximation that is used extensi vely in computer graphics [13], and which was recently proposed in [14] to compute a similar in verse map. 2 For each coordinate function φ − 1 i , we define φ † i to be the RBF interpolant to the data y ( i ) , x ( i ) , for all y ∈ Φ ( M ) , φ † i ( y ) = n X j = 1 α ( j ) i k ( y , y ( j ) ) . (5) The reader will notice that we dropped the dependency on n (number of samples) in Φ † = h φ † 1 . . . φ † D i T to ease readability . The function k in (5) is the kernel that defines the radial basis functions, k ( z , w ) = g ( k z − w k ). The weights, { α (1) i , . . . , α ( n ) i } , are determined by imposing the fact that the interpolant be exact at the nodes y (1) , . . . , y ( n ) , and thus are giv en by the solution of the linear system k ( y (1) , y (1) ) · · · k ( y (1) , y ( n ) ) . . . . . . . . . k ( y ( n ) , y (1) ) · · · k ( y ( n ) , y ( n ) ) α (1) i . . . α ( n ) i = x (1) i . . . x ( n ) i . (6) W e can combine the D linear systems (6) by concatenating all the coordinates in the right-hand side of (6), and the corresponding unknown weights on the left-hand side of (6) to form the system of equations, k ( y (1) , y (1) ) · · · k ( y (1) , y ( n ) ) . . . . . . . . . k ( y ( n ) , y (1) ) · · · k ( y ( n ) , y ( n ) ) α (1) 1 α (1) D . . . · · · . . . α ( n ) 1 α ( n ) D = x (1) 1 x (1) D . . . · · · . . . x ( n ) 1 x ( n ) D , (7) which takes the form K A = X , where K i , j = k ( y ( i ) , y ( j ) ), A i , j = α ( i ) j , and X i , j = x ( i ) j . Let us define the vector k ( y , · ) = h k ( y , y (1) ) . . . k ( y , y ( n ) ) i T . The approximate inv erse at a point y ∈ Φ ( M ) is gi ven by Φ † ( y ) T = k ( y , · ) T A = k ( y , · ) T K − 1 X . (8) 3. Conv ergence of RBF Interpolants 3.1. Invertibility and Conditioning The approximate inv erse (8) is obtained by interpolating the original data y ( i ) , x ( i ) using RBFs. In order to assess the quality of this inv erse, three questions must be addressed: 1) Given the set of interpolation nodes, n y ( i ) o , is the interpolation matrix K in (7) necessarily non-singular and well-conditioned? 2) How well does the interpolant (8) approximate the true inv erse Φ − 1 ? 3) What con vergence rate can we e xpect as we populate the domain with additional nodes? In this section we provide elements of answers to these three questions. For a detailed treatment, see [8, 15]. In order to interpolate with a radial basis function k ( z , w ) = g ( k z − w k ), the system (7) should have a unique solution and be well-conditioned. In the case of the Gaussian defined by k ( z , w ) = exp( − ε 2 k z − w k 2 ) , (9) the eigen values of K in (7) follow patterns in the po wers of ε that increase with successi ve eigen values, which leads to rapid ill-conditioning of K with increasing n (e.g., [16]; see also [17] for a discussion of the numerical rank of the Gaussian kernel). The resulting interpolant will exhibit numerical saturation err or . This issue is common among many scale-dependent RBF interpolants. The Gaussian scale parameter , ε , must be selected to match the spacing of the interpolation nodes. One commonly used measure of node spacing is the fill distance , the maximum distance from an interpolation node. Definition 1. F or the domain Ω ⊂ R d and a set of interpolation nodes Z = { z (1) , . . . , z ( n ) } ⊂ Ω the fill distance , h Z , Ω , is defined by h Z , Ω : = sup z ∈ Ω min z ( j ) ∈ Z k z − z ( j ) k . (10) 3 0 0.2 0.4 0.6 0.8 1 10 0 10 5 10 10 10 15 10 20 10 25 Fill Distance Condition number D = 5 Dimensions Cubic RBF Gaussian RBF 0 0.2 0.4 0.6 0.8 1 10 0 10 5 10 10 10 15 10 20 Fill Distance Condition number D = 20 Dimensions Cubic RBF Gaussian RBF 0 0.2 0.4 0.6 0.8 1 10 0 10 5 10 10 10 15 Fill Distance Condition number D = 100 Dimensions Cubic RBF Gaussian RBF Figure 1: Condition number of K in (7), for the Gaussian ( ◦ ) and the cubic ( ∗ ) as a function of the fill distance for a fixed scale ε = 10 − 2 . Points are randomly scattered on the first quadrant of the unit sphere in R D , for D = 5 , 20 , 100 from left to right. Note: the same range of n , from 10 to 1000, was used in each dimension. In high dimension, it takes a lar ge number of points to reduce fill distance. Ho wev er, the condition number of K still gro ws rapidly for increasing n . 10 − 4 10 − 2 10 0 10 0 10 5 10 10 10 15 10 20 ε Condition number D = 5 Dimensions Cubic RBF Gaussian RBF 10 − 4 10 − 2 10 0 10 0 10 5 10 10 10 15 10 20 ε Condition number D = 20 Dimensions Cubic RBF Gaussian RBF 10 − 4 10 − 2 10 0 10 0 10 5 10 10 10 15 10 20 ε Condition number D = 100 Dimensions Cubic RBF Gaussian RBF Figure 2: Condition number of K in (7), for the Gaussian ( ◦ ) and the cubic (–) as a function of the scale ε , for a fixed fill distance. n = 200 points are randomly scattered on the first quadrant of the unit sphere in R D , D = 5 , 20 , 100 from left to right. Owing to the di ffi culty in precisely establishing the boundary of a domain Ω ⊂ R d defined by a discrete set of sampled data, estimating the fill distance h Z , Ω is somewhat di ffi cult in practice. Additionally , the fill distance is a measure of the “worst case”, and may not be representativ e of the “typical” spacing between nodes. Thus, we consider a proxy for fill distance which depends only on mutual distances between the data points. W e define the local fill distance , h local , to denote the av erage distance to a nearest neighbor, h local : = 1 n n X i = 1 min j , i k z ( i ) − z ( j ) k . (11) The relationship between the condition number of K and the spacing of interpolation nodes is explored in Fig. 1, where we observe rapid ill-conditioning of K with respect to decreasing local fill distance, h local . Con versely , if h local remains constant while ε is reduced, the resulting interpolant improv es until ill-conditioning of the K matrix leads to propagation of numerical errors, as is shown in Fig. 2. When interpolating with the Gaussian kernel, the choice of the scale parameter ε is di ffi cult. On the one hand, smaller values of ε likely lead to a better interpolant. For example, in 1- d , a Gaussian RBF interpolant will conv erge to the Lagrange interpolating polynomial in the limit as ε → 0 [18]. On the other hand, the interpolation matrix becomes rapidly ill-conditioned for decreasing ε . While some stable algorithms ha ve been recently proposed to generate RBF interpolants (e.g., [19], and references therein) 4 these sophisticated algorithms are more computationally intensive and algorithmically complex than the RBF-Direct method used in this paper , making them undesirable for the inv erse-mapping interpolation task. Saturation error can be av oided by using the scale-free RBF kernel g ( k z − w k ) = k z − w k 3 , one instance from the set of RBF kernels kno wn as the radial powers , g ( k z − w k ) = k z − w k ρ for ρ = 1 , 3 , 5 , . . . . (12) T ogether with the thin plate splines , g ( k z − w k ) = k z − w k ρ log k z − w k for ρ = 2 , 4 , 6 , . . . , (13) they form the family of RBFs kno wn as the polyharmonic splines . Because it is a monotonically increasing function, the cubic kernel, k z − w k 3 , may appear less intuitiv e than the Gaussian. The importance of the cubic kernel stems from the fact that the space generated by linear combinations of shifted copies of the kernel is composed of splines. In one dimension, one recovers the cubic spline interpolant. One should note that the behavior of the interpolant in the far field (away from the boundaries of the conv ex hull of the samples) can be made linear (by adding constants and linear polynomials) as a function of the distance, and therefore div erges much more slo wly than r 3 [20]. In order to prov e the existence and uniqueness of an interpolant of the form, φ † i ( y ) = n X j = 1 α ( j ) i k y − y ( j ) k 3 + γ i + d X k = 1 β k , i y k , (14) we require that the set { y (1) , . . . , y ( n ) } be a 1-unisolvent set in R d , where m-unisolvency is as follows. Definition 2. The set of nodes { z (1) , . . . , z ( n ) } ⊂ R d is called m -unisolvent if the unique polynomial of total de gree at most m interpolating zer o data on { z (1) , . . . , z ( n ) } is the zer o polynomial. For our problem, the condition that the set of nodes { y ( j ) } be 1-unisolvent is equi valent to the condition that the matrix 1 · · · 1 y (1) · · · y ( n ) = 1 · · · 1 φ 1 ( x 1 ) · · · φ 1 ( x n ) . . . . . . φ d ( x 1 ) · · · φ d ( x n ) (15) hav e rank d + 1 (we assume that n ≥ d + 1). This condition is easily satisfied. Indeed, the rows 2 , . . . , d + 1 of (15) are formed by the orthogonal eigen vectors of D − 1 / 2 W D − 1 / 2 . Additionally , the first eigen vector , φ 0 , has constant sign. As a result, φ 1 , . . . , φ d are linearly independent of any other vector of constant sign, in particular 1 . In Figures 1 and 2 we see that the cubic RBF system exhibits much better conditioning than the Gaussian. 3.2. What Functions Can W e Repr oduce? the Concept of Native Spaces W e now consider the second question: can the interpolant (5) approximate the true inv erse Φ − 1 to arbitrary preci- sion? As we might expect, an RBF interpolant will conv erge to functions contained in the completion of the space of linear combinations of the kernel, H k ( Ω ) = s pan { k ( · , z ) : z ∈ Ω } . This space is called the native space . W e note that the completion is defined with respect to the k -norm, which is induced by the inner-product gi ven by the reproducing kernel k on the pre-Hilbert space H k ( Ω ) [8]. It turns out that the nati ve space for the Gaussian RBF is a very small space of functions whose Fourier trans- forms decay faster than a Gaussian [8]. In practice, numerical issues usually prevent conv ergence of Gaussian RBF interpolants, e ven within the nati ve space, and therefore we are not concerned with this issue. The nativ e space of the cubic RBF , on the other hand, is an extremely large space. When the dimension, d , is odd, the nativ e space of the cubic RBF is the Beppo Levi space on R d of order l = ( d + 3) / 2 [15]. W e recall the definition of a Beppo Levi space of order l . 5 Definition 3. F or l > d / 2 , the linear space BL l ( R d ) : = { f ∈ C ( R d ) : D α f ∈ L 2 ( R d ) , ∀| α | = l } , equipped with the inner pr oduct h f , g i BL l ( R d ) = P | α | = l l ! α ! h D α f , D α g i L 2 ( R d ) , is called the Beppo Le vi space on R d of order l, where D α denotes the weak derivative of (multi-index) or der α ∈ N d on R d . For e ven dimension, the Beppo Levi space on R d of order l = ( d + 2) / 2 corresponds to the native space of the thin plate spline g ( k z − w k ) = k z − w k 2 log k z − w k [15]. Because we assume that the in verse map Φ − 1 is smooth, we expect that it belongs to any of the Beppo Levi spaces. Despite the fact that we lack a theoretical characterization of the nativ e space for the cubic RBF in ev en dimension, all of our numerical experiments have demonstrated equal or better performance of the cubic RBF relativ e to the thin plate spline in all dimensions (see also [21] for similar conclusions). Thus, to promote algorithmic simplicity for practical applications, we have chosen to work solely with the cubic RBF . 3.3. Conver gence Rates The Gaussian RBF interpolant con ver ges (in L ∞ norm) exponentially fast to ward functions in the native space, as a function of the decreasing fill distance h Z , Ω [8]. Howe ver , as observed abov e, rapid ill-conditioning of the interpo- lation matrix makes such theoretical results irrelev ant without resorting to more costly stable algorithms. The cubic interpolant con verges at least as fast as O ( h 3 / 2 Z , Ω ) in the respective nativ e space [15]. In practice, we hav e experienced faster rates of algebraic con ver gence, as shown in the e xperimental section. 4. Experiments W e first conduct experiments on a synthetic manifold, and we then provide evidence of the performance of our approach on real data. For all experiments we quantify the performance of the interpolation using a “leave-one-out reconstruction” approach: we compute Φ † y ( j ) , for j = 1 , . . . , n , using the remaining n − 1 points: { y (1) , . . . , y ( j − 1) , y ( j + 1) , . . . , y ( n ) } and their coordinates in R D , { x (1) , . . . , x ( j − 1) , x ( j + 1) , . . . , x ( n ) } . The average performance is then mea- sured using the av erage leave-one-out l 2 reconstruction error , E avg = 1 n n X j = 1 k x ( j ) − Φ † ( y ( j ) ) k . (16) In order to quantify the e ff ect of the sampling density on the reconstruction error , we compute E avg as a function of h local , which is defined by (11). The two RBF interpolants are compared to Shepard’ s method, a multi variate interpolation / approximation method used extensi vely in computer graphics [13]. Shepard’ s method computes the optimal constant function that minimizes the sum of squared errors within a neighborhood N y of y in R d , weighted according to their proximity to y . The solution to this moving least squares approximation is gi ven by Φ † Shepard ( y ) = X j : y ( j ) ∈N y exp( − ε 2 k y − y ( j ) k 2 ) P i : y ( i ) ∈N y exp( − ε 2 k y − y ( i ) k 2 ) x ( j ) . (17) The relative impact of neighboring function values is controlled by the scale parameter ε , which we choose to be a multiple of 1 / h local . 4.1. Unit Sphere in R D For our synthetic manifold example, we sampled n points { x (1) , . . . , x ( n ) } from the uniform distribution on the unit sphere S 4 , then embedded these data in R 10 via a random unitary transformation. The data are mapped to { y (1) , . . . , y ( n ) } ⊂ R d = R 5 using the first five non-trivial eigen vectors of the graph Laplacian. The minimum of the total number of a v ailable neighbors, n − 1, and 200 neighbors was used to compute the interpolant. For each local fill distance, h local , the average reconstruction error is computed using (16). The performances of the cubic RBF , Gaussian RBF , and Shepard’ s method versus h local are shown in Fig. 3. W e note that the interpolation error based on the cubic RBF is lowest, and appears to scale approximately with O ( h 2 local ), an improvement ov er the O ( h 3 / 2 Z , Ω ) bound [15]. In fact, the cubic RBF prov es to be extremely accurate, even with a very sparsely populated domain: the lar gest h local corresponds to 10 points scattered on S 4 . 6 Original Cubic RBF Error = 0.23 Gaussian ( ε = 1 / ¯ h local ) Error = 0.25 Shepard ( ε = 1 / ¯ h local ) Error = 0.35 Figure 4: From left to right: original image to be reconstructed; reconstructions using the di ff erent methods, each followed by the residual error: cubic RBF , Gaussian RBF , and Shepard’ s method. 10 − 4 10 − 3 10 − 2 10 − 1 10 0 10 − 14 10 − 13 10 − 12 10 − 11 10 − 10 10 − 9 10 − 8 Local fill distance Inverse Mapping Error Cubic RBF 10 − 4 10 − 3 10 − 2 10 − 1 10 0 10 − 10 10 − 8 10 − 6 10 − 4 10 − 2 Local fill distance Inverse Mapping Error Gaussian RBF ε = 10 − 3 /h local ε = 10 − 2 /h local ε = 10 − 1 /h local 10 − 4 10 − 3 10 − 2 10 − 1 10 0 10 − 4 10 − 3 10 − 2 10 − 1 10 0 10 1 Local fill distance Inverse Mapping Error Shepards Method ε = 10 − 2 /h local ε = 10 − 1 /h local ε = 10 0 /h local Figure 3: A verage leave-one-out reconstruction residual, E avg , on S 4 embedded in R 10 , using the cubic (left), the Gaussian (center), and Shepard’ s method (right). Note the di ff erence in the range of y -axis. scale ( ε × h local ) 0 1 2 3 4 5 6 7 8 9 Cubic — 0.248 0.135 0.349 0.334 0.299 0.350 0.259 0.261 0.354 0.262 0 . 5 0.319 0.169 0.421 0.417 0.362 0.424 0.315 0.314 0.452 0.313 Gaussian 1 0.305 0.223 0.375 0.363 0.345 0.382 0.310 0.322 0.369 0.312 2 0.457 0.420 0.535 0.505 0.513 0.554 0.477 0.497 0.491 0.478 0 . 5 0.422 0.271 0.511 0.489 0.475 0.508 0.439 0.453 0.474 0.434 Shepard 1 0.302 0.175 0.396 0.385 0.348 0.378 0.314 0.318 0.379 0.309 2 0.303 0.186 0.400 0.382 0.362 0.402 0.320 0.325 0.382 0.320 T able 1: Reconstruction error E avg for each digit (0-9). Red denotes lowest av erage reconstruction residual. 4.2. Handwritten Digits Datasets In addition to the pre vious synthetic e xample, the performance of the in verse mapping algorithm was also assessed on a “naturally occurring” high-dimensional data set: a set of digital images of handwritten digits. The data set (obtained from the MNIST database [22]) consists of 1,000 handwritten images of the digits 0 to 9. The images were originally centered and normalized to ha ve size 28 × 28. In our experiments, the images were further resized to 14 × 14 pixels and normalized to have unit l 2 norm. W e obtained 10 di ff erent datasets, each consisting of 1,000 points in R 196 . The dimension reduction and subsequent leave-one-out reconstruction were conducted on the dataset corresponding to a specific digit, independently of the other digits. F or each digit, a 10-dimensional representation of the 1,000 images was generated using Laplacian Eigenmaps [2]. Then the in verse mapping techniques were ev aluated on all images in the set. T able 1 shows the reconstruction error E avg for the three methods, for all digits. Fig. 4 shows 7 Original Cubic RBF Error = 0.026 Gaussian ( ε = 0 . 5 / ¯ h local ) Error = 0.029 Shepard ( ε = 2 / ¯ h local ) Error = 0.040 Figure 5: From left to right: original image to be reconstructed; reconstructions using the di ff erent methods, each followed by the residual error: cubic RBF , Gaussian RBF , and Shepard’ s method. Cubic Gaussian Shepard Scale ( ε × h local ) — 0 . 25 0 . 5 1 1 2 4 E avg 0.0361 0.0457 0.0414 0.0684 0.0633 0.0603 0.0672 T able 2: Reconstruction error E avg for the Frey F ace dataset. Red denotes lowest av erage reconstruction residual. three representativ e reconstructions for the digit “3”. The optimal scales (according to T able 1) were chosen for both the Gaussian RBF and Shepard’ s methods. The cubic RBF outperforms the Gaussian RBF and Shepard’ s method in all cases, with the lo west av erage error (T able 1), and with the most “noise-like” reconstruction residual (Fig. 4). Results suggest that a poor choice of scale parameter with the Gaussian can corrupt the reconstruction. The scale parameter in Shepard’ s method must be carefully selected to av oid the two extremes of either reconstructing solely from a single nearest neighbor , or reconstructing a blurry , equally weighted, average of all neighbors. 4.3. F re y F ace Dataset Finally , the performance of the in verse mapping algorithms was also assessed on the Frey Face dataset [23], which consists of digital images of Brendan Frey’ s face taken from sequential frames of a short video. The dataset is composed of 20 × 28 gray scale images. Each image was normalized to have unit l 2 norm, pro viding a dataset of 1,965 points in R 560 . A 15-dimensional representation of the Fre y Face dataset was generated via Laplacian eigenmaps. The in verse mapping techniques were tested on all images in the set. T able 2 sho ws the mean leav e-one-out reconstruction errors for the three methods. Fig. 5 sho ws three representative reconstructions using the di ff erent techniques. The optimal scales (according to T able 2) were chosen for both the Gaussian RBF and Shepard’ s methods. Again, the cubic RBF outperforms the Gaussian RBF and Shepard’ s method in all cases, with the lo west av erage error (T able 2), and with the most “noise-like” reconstruction residual (Fig. 5). 5. Revisiting Nyström Inspired by the RBF interpolation method, we provide in the following a nov el interpretation of the Nyström extension: the Nyström extension interpolates the eigen vectors of the (symmetric) normalized Laplacian matrix using a slightly modified RBF interpolation scheme. While sev eral authors have mentioned the apparent similarity of Nyström method to RBF interpolation, the novel and detailed analysis provided belo w provides a completely new insight into the limitations and potential pitfalls of the Nyström extension. Consistent with Laplacian eigenmaps, we consider the symmetric normalized kernel e K = D − 1 / 2 K D − 1 / 2 , where K i j = k ( x ( i ) , x ( j ) ) is a radial function measuring the similarity between x ( i ) and x ( j ) , and D is the degree matrix (diagonal matrix consisting of the row sums of K ). 8 0 1 2 3 − 1 − 0.5 0 0.5 1 1.5 x φ 1 0 1 2 3 − 1 − 0.5 0 0.5 1 1.5 x φ 2 0 1 2 3 − 0.54 − 0.52 − 0.5 − 0.48 − 0.46 − 0.44 x φ 3 0 1 2 3 − 1 − 0.5 0 0.5 1 x φ 4 In − sample Points Nystrom Extension Figure 6: Example of Nyström extension of the eigen vectors of a thresholded Gaussian a ffi nities matrix. Giv en an eigen vector φ l of e K (associated with a nontrivial eigen value λ l ) defined over the points x ( i ) , the Nyström extension of φ l to an arbitrary new point x is given by the interpolant φ l ( x ) = 1 λ l n X j = 1 ˜ k ( x , x ( j ) ) φ l ( x ( j ) ) , (18) where φ l ( x ( j ) ) is the coordinate j of the eigenv ector φ l = h φ l ( x (1) ) · · · φ l ( x ( n ) ) i T . W e no w proceed by re-writing φ l ( x ) in (18), using the notation ˜ k ( x , · ) = h ˜ k ( x , x (1) ) · · · ˜ k ( x , x ( n ) ) i T , where ˜ k ( x , x ( j ) ) = k ( x , x ( j ) ) / p d ( x ) d ( x ( j ) ), and d ( x ) = P n i = 1 k ( x , x ( i ) ). φ l ( x ) = λ − 1 l ˜ k ( x , · ) T φ l = ˜ k ( x , · ) T Φ Λ − 1 [0 . . . 1 . . . 0] T = ˜ k ( x , · ) T Φ Λ − 1 Φ T φ l = ˜ k ( x , · ) T e K − 1 φ l = 1 √ d ( x ) h k ( x , x (1) ) . . . k ( x , x ( n ) ) i D − 1 / 2 ( D 1 / 2 K − 1 D 1 / 2 ) φ l = 1 √ d ( x ) k ( x , · ) T K − 1 ( D 1 / 2 φ l ) . (19) If we compare the last line of (19) to (8), we conclude that in the case of Laplacian Eigenmaps, with a nonsingular kernel similarity matrix K , the Nyström extension is computed using a radial basis function interpolation of φ l after a pre-rescaling of φ l by D 1 / 2 , and post-rescaling by 1 / √ d ( x ). Although the entire procedure it is not exactly an RBF interpolant, it is very similar and this interpretation provides new insight into some potential pitfalls of the Nyström method. The first important observ ation concerns the sensiti vity of the interpolation to the scale parameter in the kernel k . As we have explained in section 3.1, the choice of the optimal scale parameter ε for the Gaussian RBF is quite di ffi cult. In f act, this issue has recently receiv ed a lot of attention (e.g. [17, 5]). The second observ ation in volves the dangers of sparsifying the similarity matrix. In many nonlinear dimensionality reduction applications, it is typical to sparsify the kernel matrix K by either thresholding the matrix, or k eeping only the entries associated with the nearest neighbors of each x j . If the Nyström extension is applied to a thresholded Gaussian kernel matrix, then the components of k ( x , · ) as well as √ d ( x ) are discontinuous functions of x . As a result, φ l ( x ), the Nyström extension of the eigen vector φ l will also be a discontinuous function of x , as demonstrated in Fig. 6. In the nearest neighbor approach, the extension of the kernel function ˜ k to a new point x is highly unstable and poorly defined. Given this larger issue, the Nyström extension should not be used in this case. In order to interpolate eigenv ectors of a sparse similarity matrix, a better interpolation scheme such as a true (non-truncated) Gaussian RBF , or a cubic RBF interpolant could provide a better alternativ e to Nyström. A local implementation of the interpolation algorithm may provide significant computational savings in certain scenarios. Acknowledgments The authors would like to thank the three anonymous revie wers for their excellent comments. NDM was supported by NSF grant DMS 0941476; BF was supported by NSF grant DMS 0914647; FGM was partially supported by NSF grant DMS 0941476, and DOE award DE-SCOO04096. 9 References [1] M. Belkin, P . Niyogi, Laplacian eigenmaps for dimensionality reduction and data representation, Neural Computation 15 (2003) 1373–1396. [2] R.R. Coifman, S. Lafon, Di ff usion maps, Appl. Comput. Harmon. Anal. 21 (2006) 5–30. [3] S.T . Roweis, L.K. Saul, Nonlinear dimensionality reduction by locally linear embedding, Science 290 (2000) 2323–2326. [4] B. Scholkopf, A. Smola, K.-R. Müller , Kernel principal component analysis, in: Advances in Kernel Methods–Support V ector Learning, MIT Press, 1999, pp. 327–352. [5] R.R. Coifman, S. Lafon, Geometric harmonics: A novel tool for multiscale out-of-sample extension of empirical functions, Applied and Computational Harmonic Analysis 21 (2006) 31–52. [6] A. Elgammal, C.-S. Lee, The role of manifold learning in human motion analysis, in: Human Motion, Springer, 2008, pp. 25–56. [7] R.R. Coifman, I.G. K evrekidis, S. Lafon, M. Maggioni, B. Nadler , Di ff usion maps, reduction coordinates, and lo w dimensional representation of stochastic systems, Multiscale Model. Sim. 7 (2008) 842–864. [8] G.E. Fasshauer, Meshfree Approximation Methods W ith MA TLAB, Interdisciplinary Mathematical Sciences, W orld Scientific, 2007. [9] M. J. D. Powell, Radial basis function methods for interpolation to functions of many v ariables, in: HERCMA, 2001, pp. 2–24. [10] H. W ackernagel, Multiv ariate Geostatistics, An Introduction with Applications, Springer, 1998. [11] M. Scheuerer, R. Schaback, M. Schlather , Interpolation of spatial data – a stochastic or a deterministic problem?, European Journal of Applied Mathematics 24 (2013) 601–629. [12] D. Shepard, A two-dimensional interpolation function for irre gularly-spaced data, in: Proceedings of the 1968 23rd A CM national conference, A CM, New Y ork, NY , USA, 1968, pp. 517–524. [13] J.P . Lewis, F . Pighin, K. Anjyo, Scattered data interpolation and approximation for computer graphics, in: ACM SIGGRAPH ASIA 2010 Courses, A CM, New Y ork, NY , USA, 2010, pp. 2:1–2:73. [14] D. Kushnir , A. Haddad, R.R. Coifman, Anisotropic di ff usion on sub-manifolds with application to earth structure classification, Applied and Computational Harmonic Analysis 32 (2012) 280–294. [15] H. W endland, Scattered Data Approximation, Cambridge Monographs on Applied and Computational Mathematics, Cambridge Univ ersity Press, 2004. [16] B. Fornberg, J. Zuev , The Runge phenomenon and spatially v ariable shape parameters in RBF interpolation, Comput. Math. Appl. 54 (2007) 379–398. [17] A. Bermanis, A. A verbuch, R.R. Coifman, Multiscale data sampling and function extension, Applied and Computational Harmonic Analysis 34 (2013) 15–29. [18] T .A. Driscoll, B. Fornberg, Interpolation in the limit of increasingly flat radial basis functions, Comput. Math. Appl. 43 (2002) 413–422. [19] B. Fornberg, E. Lehto, C. Po well, Stable calculation of Gaussian-based RBF-FD stencils, Comput. Math. Appl. 65 (2013) 627–637. [20] B. Fornberg, T .A. Driscoll, G. Wright, R. Charles, Observations on the behavior of radial basis function approximations near boundaries, Comput. Math. Appl. 43 (2002) 473–490. [21] S.M. Wild, C.A. Shoemaker , Global con ver gence of radial basis function trust region deriv ative-free algorithms, SIAM Journal on Optimiza- tion 21 (2011) 761–781. [22] S. Gangaputra, Handwritten digit database, http://cis.jhu.edu/~sachin/digit/digit.html , 2012. [23] S. Roweis, Frey face dataset, http://cs.nyu.edu/~roweis/data.html , 2013. 10

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment