Denoising Deep Neural Networks Based Voice Activity Detection

Recently, the deep-belief-networks (DBN) based voice activity detection (VAD) has been proposed. It is powerful in fusing the advantages of multiple features, and achieves the state-of-the-art performance. However, the deep layers of the DBN-based VA…

Authors: Xiao-Lei Zhang, Ji Wu

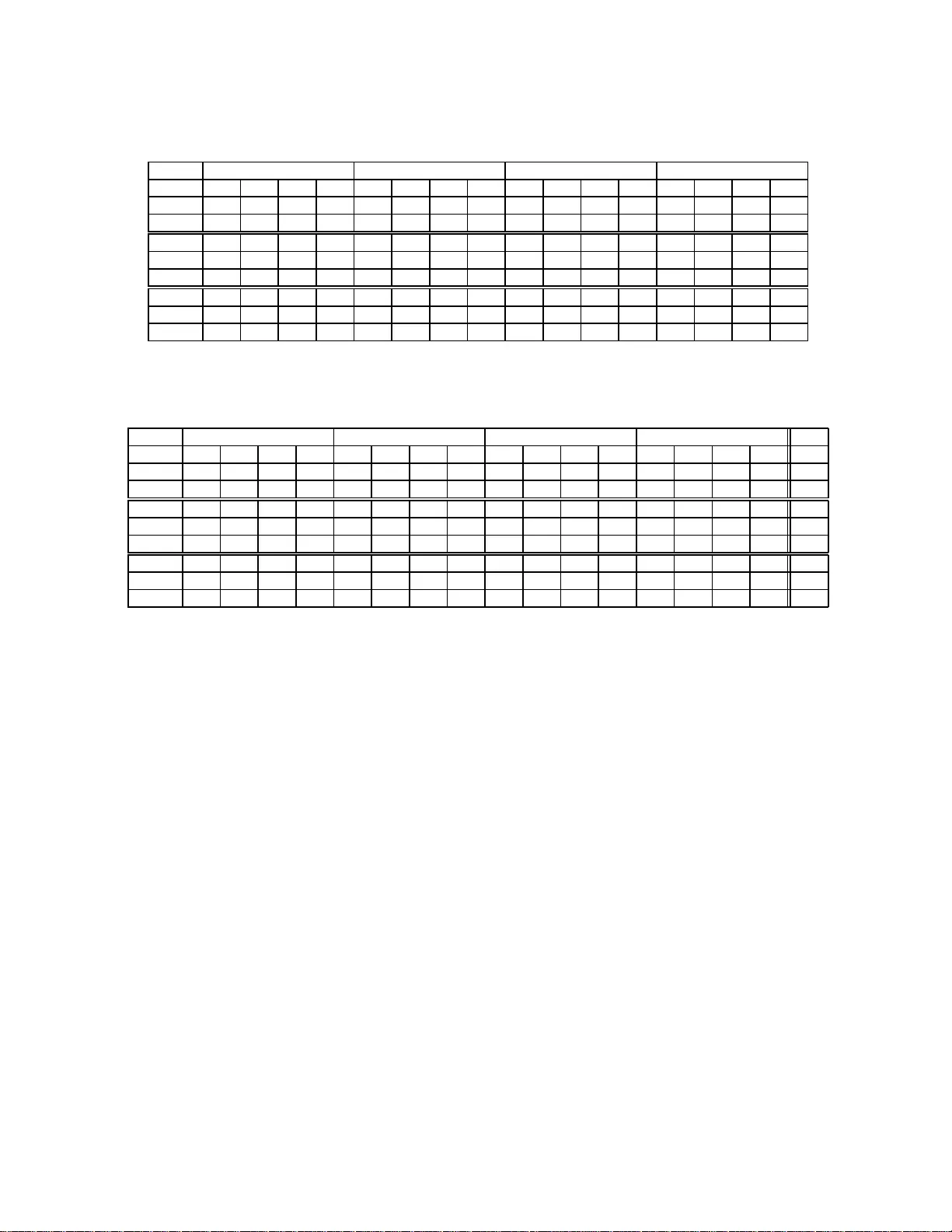

DENOISING DEEP NEURAL NETWORKS B ASED V OICE A CTIVITY DET ECTION Xiao-Lei Zhang and Ji W u Multimedi a Signal and Intelligent Information Processing Laboratory , Tsinghua National Laboratory for Information Science and T echnology , Department of Electronic Engineering, Tsinghua Univ ersity , B eijing, China. huoshan6@126.com , wu_ji@tsingh ua.edu.cn ABSTRA CT Recently , the deep-belief-networks (DBN) based voice ac - ti vity detection (V AD) has been proposed. It is powerful in fusing th e ad vantages o f multiple fea tures, and achieves the state-of-the- art perfor mance. However , the deep lay ers of the DBN-based V AD do no t sh ow an appa rent su periority to the shallower layers. I n this paper, we propose a denoising -deep- neural-n etwork (DDNN) based V AD to ad dress the afor emen- tioned prob lem. Specifically , we pre-train a deep neu ral net- work in a special unsup ervised den oising greedy layer-wise mode, and then fine-tune the wh ole network in a sup ervised way by the comm on back-p ropag ation alg orithm. In the pre- training phase, we take the noisy speech s ignals as the visible layer and try to extract a new feature that minimizes the re- construction cross-entro py loss between the noisy speech sig- nals and its correspon ding clean speech sign als. Ex perimental results show that the propo sed DDNN-based V AD not on ly outperf orms the DBN-based V AD but also shows an a pparent perfor mance improvement of the de ep layers over shallower layers. Index T erms — Deep learnin g, denoising deep neural net- works, v oice activity detection. 1. INTR O DUCTION V oice activity detectors (V ADs) help to separa te speech fr om its backg round noises. They are impo rtant fronten ds of mod- ern speech pro cessing systems, such as speech re cognition systems [1–3] and speech commun ication systems [4]. Re- cently , the m achine-lear ning-ba sed V ADs hav e r eceiv e d much attention in that the y have th e following n otable merits. First, they can be integrated to th e speec h re cognition systems nat- urally . Second, they hav e stro ng theoretical bases that guar- antee the perfo rmance. Third, they can fuse the advantages of multiple features [5–9] much better than traditional V ADs. This work is supported in part by the China Postdoctoral Science Foun- dation funded pro ject under Grant 2012M5 20278 and in pa rt by the Nati onal Natural Scie nce Funds of China under Grant 61170197. The mach ine-learnin g-based V ADs can be c ategorized to four grou ps [10 –16]. The first g roup is the d iscriminative- weight-train ing-based V ADs [ 10, 12, 16] . They condu ct lin- ear weig hted combinatio ns of m ultiple features in th e orig- inal feature space. The second gr oup is the su pport-vecto r- machine (SVM) b ased V ADs [11 , 13]. T hey first fuse mu ltiple features to a long feature vector in the orig inal feature space , and then projec t the long feature vector to the kernel-induced feature spa ce for better classification perfo rmance. The third group is the multip le-kernel-SVM (MK-SVM) based V AD [14, 15]. It takes the d istribution diversity of multiple fe a- tures into consider ation b y fir st p rojecting different f eatures into different kern el spaces an d then fuse th e featur es in the kernel spaces in a w ay with linear weighted combinatio n. A ll of the aforem entioned three gr oups utilize shallow models, i.e. m odels with only zero or one hid den layer, which lack the ability of describing highly variant featur es and discovering the underly ing m anifold of the features. The fourth group is the deep-be lief-networks ( DBNs) based V AD [17] . Fundamen tally , beca use the DBN [18] contains multiple h idden layers, the DBN-based V AD can describe hig hly variant f eatures; becau se the un supervised pre-train ing phase of DBN p rovides an initial p oint that is close to a good solution, the DBN-based V AD h as a stron g generalizatio n ability wh en co mpared with o ther machine- learning- based V ADs. Howev e r , the deep layers of the DBN- based V AD do no t yield an apparent sup eriority to the shal- lower layers. In ou r p ersonal opinio n, it might n ot be pro per to simply conside r V AD as a binary -class classification prob- lem with the no isy speech and the backgr ound no ise as the two classes, since the backgr ound noise also contributes to the distribution of the noisy spee ch. Th is might account for the inapp arent sup eriority of the deep lay ers over shallower layers in the DBN-based V AD. In th is pap er , we pro pose a novel den oising deep-n eural- networks (D DNNs) based V AD. The DDNN training also consists of two phases. The first phase is a spec ial u nsuper- vised den oising gr eedy layer-wise pr e-training phase. The pre-train ing pr ocess of each h idden layer tries to extract a new f eature that minimizes th e reconstruction cr oss-entr op y loss between the noisy speech signals and its corr espond- ing c lean spee ch signals (but not the n oisy sp eech sign als). The seco nd phase is th e well-k nown supervised fine-tunin g phase. It gro ups a ll lay ers with the pre-trained parame ters to a whole deep neural networks and tu ne the parameters for the minimum classification erro r . Ex perimental results show that the pro posed DDNN-based V AD not only outperf orms the DBN-based V AD but also shows an ap parent p erform ance improvement of the deep layers over shallower layers. 2. DENOISING-DNN-BASE D V AD The training pr ocess o f the DDNN- based V AD consists of two phases – u nsupervised de noising layer-wise pre- training phase and supervised fine-tu ning phase, which ar e pr esented in detail in Sections 2.1 and 2.2 r espectively . Th e overvie w of the DDNN-based V AD is presented in Algorithm 1. 2.1. Unsupervised Denoising Layer -wise Pre-training Suppose we have D -dimension al noisy speech ob serva- tions (i.e. frames) { x i , y i } n i =1 and their corresp onding clean speech observations { ˜ x i , y i } n i =1 with x i = [ x i,d ] D d =1 , y i ∈ { H 0 , H 1 } , where x d ∈ [0 , 1 ] and H 1 /H 0 denote the speech / no ise hypothe sis. The layer-wise pre-tr aining of each mo dule of DDNN consists of op timizing two acti vation fun ctions jointly . The first fun ction, denoted as f θ ( · ) , m aps the noisy speech obser- vation fr om the visible layer x to a h idden lay er f θ ( x ) . The second fu nction, den oted as g θ ′ ( · ) , tries to r econstruct ˜ x (but not x ) from the hidden layer by g θ ′ ( f θ ( x )) . The unsuper vised pr e-training tr ies to minimize the re- construction c ross-entropy loss between { x i } n i =1 and { ˜ x i } n i =1 which is defined as follows J θ ,θ ′ ( x ; ˜ x )=min θ ,θ ′ n X i =1 L ( ˜ x i ; g θ ′ ( f θ ( x i ))) (1) with L ( x i ; z i ) defined as L ( x i ; z i ) = − D X d =1 ( x i,d log z i,d + (1 − x i,d ) log (1 − z i,d )) where z i is short for g θ ′ ( f θ ( x i )) . Pro blem (1) can be solved locally by the well-known back-propaga tion algorithm. When we try to pre-tr ain the L -th mod ule with L > 1 (i.e. the module is not the lowest o ne), we sho uld first construct its input layer x ( L − 1) by transferr ing x (0) throug h the pre- trained networks as follo ws x ( L − 1) = f θ ( L − 1) . . . f θ ( l ) . . . f θ (2) f θ (1) x (0) (2) where l d enotes the l -th hidd en layer (i.e. the l -th layer-wise module fro m the bottom-u p), an d x (0) is th e or iginal featur e vector . Algorithm 1 Denoising-D NN-based V AD. Input: Feature set n x (0) i , ˜ x (0) i , y (0) i o n i =1 , the depth of the DDNN L Output: Feature extraction model θ ( l ) L l =1 , and the linear classifier above th e model. 1: /* Unsup ervised denoising layer -wise pre-trainin g */ 2: for l = 1 , . . . , L do 3: Get θ ( l ) by solving J θ ( l ) ,θ ′ ( l ) x ( l − 1) ; ˜ x ( l − 1) defined in equation (1) 4: Calculate x ( l − 1) by equation (2) 5: if l > 1 then 6: Get ˜ θ ( l − 1) by solving J ˜ θ ( l − 1) , ˜ θ ′ ( l − 1) ˜ x ( l − 2) ; ˜ x ( l − 2) or by the contrastive d iv ergen ce learn ing [19]. 7: Calculate ˜ x ( l − 1) by equation (3) 8: end if 9: end for 10: /* Super vised fine-tuning */ 11: Constru ct the classification-DDNN an d fine-tun e it by the back-p ropagatio n algor ithm for the minimu m classifica- tion error mentioned in Section 2.2. Here comes the qu estion. What should x ( L − 1) recon- struct? Here, we propo se to pre-train a c lean-speech to clean- speech dee p network that accompan ies with the noisy- signal to clean-signal deep network, so that we can get ˜ x ( l − 1) by ˜ x ( L − 1) = f ˜ θ ( L − 1) . . . f ˜ θ ( l ) . . . f ˜ θ (2) f ˜ θ (1) ˜ x (0) (3) There are two ways to pre-tra in the accom panying d eep network { f ˜ θ ( l ) } L − 1 l =1 (i.e. the deep neu ral network fo r th e clean-speech -to-clean- speech reconstru ction) in the layer - wise greedy trainin g m ode. Th e first one is to minimize the reconstruc tion cro ss-entropy lo ss via (1) with ˜ x as both th e input an d the target o f th e module. Another way is to maxi- mize the logrithmic likelihood of ˜ x by the efficient contrastive diver gence algo rithm propo sed in DBN [19 ]. In this p aper, we adopt the fo rmer f or simplicity . Note that we cannot use x ( L − 1) to recover ˜ x (0) directly for saving the comp utation load of co nstructing ˜ x ( L − 1) , since it’ s unlikely to describe th e extraction network { f θ ( l ) } L − 1 l =1 of the noisy speech simply b y a single hidden-lay er reconstru ction netw o rk g θ ′ (1) . In this paper, a ll activation f unctions f θ ( l ) x ( l − 1) and g θ ′ ( l ) ˆ x ( l − 1) are defined as f θ ( l ) x ( l − 1) = s W ( l ) x ( l − 1) + b ( l ) and g θ ′ ( l ) ˆ x ( l − 1) = s W ′ ( l ) ˆ x ( l − 1) + b ′ ( l ) respectively with th e fu nction s ( x ) set to the logistic f unction s ( x ) = 1 / (1 + e − x ) and { W ( l ) , b ( l ) } d enoted as the weight matrix and the bias term between the ( l − 1 ) -th and l -th layers of the network respectiv ely . 2.2. Supervised Fine-tuning The sup ervised fine-tun ing phase can be divided into three steps. The first step is to construct the feature extraction part of the DD NN by first discardin g the function { g θ ′ ( l ) } L l =1 and the accomp anying deep networks { f ˜ θ , g ˜ θ ′ } L − 1 l =1 and then stacking all p re-trained fu nctions { f θ ( l ) } L l =1 layer by layer as [18] did. Th e second s tep is to add a linear class ifier above the f eature extractio n part so as to formu late the entire DDNN. The third step is to fine- tune DDNN by th e com mon b ack- propag ation algo rithm fo r the minimum classification error (MCE), where the cross-entropy loss is also u sed as the surr o- gate r elaxation fun ction. W e call the DDNN for MCE as the classification-DDNN. Note that another usage of DDNN is to only carry out the first step of the classification- DDNN, an d then take th e extracted d enoising featur es as th e inpu t of some indepen dent c lassifiers, such as SVM. W e call the DDNN fo r extracting denoising featu res as the reconstru ction-DDNN. W e only consider the classification-DDNN in this paper . 3. MO TIV A TION AND RELA TED WORK The pr oposed algorith m can be viewed as an id ea comb ina- tion of the stacked den oising au toencod er (SDAE) [2 0, 2 1] and speech enh ancemen t techniqu es [2 2]. SD AE , prop osed by V in cent et al. in 2 008 [20 , 21], is a novel deep lear n- ing technique that has shown comparab le perfo rmance with DBN. I t first adds n oise to the original clean featur es and then takes the noisy featur es as the input of the m odule that is to be pre-train ed. But it does not tr y to reco nstruct th e noisy features. Instead, it tries to recover the origin al clean features by m inimizing th e cross-entropy loss or the squared error loss between the reconstructed features and the original clean fea- tures. Compare d with SD AE , DDNN also tries to recover the clean featu res, but th e noise in jected to the clean features is from the real en vir onment instead of from artificial addition. Speech en hancemen t techniq ues, such as the minimum mean square e rror estimation [ 22], try to estimate the amp li- tude of th e c lean speech from the noisy spe ech ob servation, which is also kn own a s the a p riori signal-to-n oise ratio (SNR) estimation . The speech enhan cement techniqu es have been widely employed in the V AD research, such as the well-known Soh n V AD [23] . Comp ared with the speech en - hancemen t techniqu es, we constru ct a deep ar chitecture in a machine-le arning perspecti ve for the clean speech estimation with an assumption th at the tr aining d ata has its c orrespon d- ing clean spe ech target, while som e speech-e nhancem ent- based V ADs assume th at the ba ckgrou nd n oise is relatively stationary , so that they can trust the statistical p arameters updated in th e silence perio d for the clean speec h estima- tion when the speech activity ap pears. W e have to n ote that many speec h enhancem ent techniques do not need the silence period for the noise spectrum estimation, such as [24]. 4. EXPERIMENTS Sev en noisy test corp ora of A UR ORA2 [25] are used for per- forman ce analysis. Four signal-to-no ise ratio (SNR) le vels of the au dio signals are selected, which are [ − 5 , 0 , 5 , 10 ] dB re- spectiv ely . Each test co rpus of A URORA2 conta ins 1001 ut- terances, which are s plit randomly into thr ee groups fo r train- ing, developing and test respectively . Each trainin g set and development set con sist o f 300 uttera nces resp ectiv e ly . Eac h test set consists o f 4 01 utterances. Note that the corp ora in the same background no ise scenario but at dif ferent SNR le v- els are split with the same r andom seed, and have the same manual labels. W e co ncatenate all short utteran ces in each data s e t to a long one so as to simu late the real-world applica- tion en vironm ent of V AD. Ev entually , the length of each long utterance is in a ra nge of ( 450,7 50)s long with the pe rcentages of speech ranging from 54.57 % to 73.32 %. The sampling rate is 8k Hz. W e set th e fram e leng th to 25ms long with a frame-shift of 10ms. W e e x tract 10 acoustic features from each ob servation. The detailed info rmation o f the f eatures are listed in T ab le 1. All f eatures are nor malized into the range of [0 , 1] in dimension. T able 1 . Featur es a nd their attributes. The su bscript of each feature is the window length of the feature [26]. ID Feature Dimension ID Feature Dimension 1 Pitch 1 7 MFCC 16 20 2 DFT 16 8 LPC 12 3 DFT 8 16 9 RAST A-PLP 17 4 DFT 16 16 10 AMS 135 5 MFCC 20 T otal 273 6 MFCC 8 20 The SVM-based V AD, MK-SVM-based V AD, an d DBN- based V AD ar e used for co mparison . For the SVM- based V AD, DBN-based V AD, an d D DNN-based V AD, we c oncate- nate all 10 fe atures in serial to a long feature vector an d take the long featu re vector as the inpu t of the classifiers. For the MK-SVM-based V AD, we deal with th e featu res in a similar way with [27]. In respect of th e p arameter setting, for the SVM-based and MK-SVM-based V ADs, the Gaussian RBF kernel is used. The par ameters o f SVM and MK- SVM are searched in g rid. For t he DBN-based and DDNN-b ased V ADs, u p to three hid- den layers are adopted with the nu mbers of the h idden units set to [5 4 , 7 , 7 ] respectively . The learning rate of th e unsu- pervised pre-train ing is set to 0. 004. T he maximu m epo ch of the unsup ervised pre- training is set to 20 0. Th e lear ning rate of th e supe rvised fune-tu ning is set to 0 .005. The m axi- mum ep och of th e super vised fun e-tuning is set to 130. The batch mo de trainin g is adopte d. Ea ch b atch contain s 512 ob- servations. Note that the param eters are selected empirically for a co mpromise between the training time complexity an d the acc uracy . W e ru n all expe riments 10 times an d repo rt the av e rage perf ormanc es. The re ported p erform ance might be further improved by tuning the parameters. T ables 2 and 3 list the experim ental resu lts. Th e high - T able 2 . Accuracy com parison in the babb le , car , restaurant , and s treet noises. The subscrip ts of the DBN and DDNN ar e the depths (i.e. the numbers of the hidden layers) of the deep neural networks. Babb le Car Restaurant Street -5dB 0dB 5dB 10dB -5 dB 0dB 5dB 10dB -5d B 0dB 5dB 10dB -5dB 0dB 5dB 10dB SVM 54.6 1 64.46 7 5.97 79 .53 72. 20 81.59 86.34 87.60 69.04 74.22 82.09 84.83 58.32 67.98 74.88 78.12 MKSVM 5 5.43 65.0 2 76.17 80.18 75.0 1 83.50 8 6.38 8 7.94 70 .44 75.71 83.25 86.30 63.38 73.35 77.60 79.10 DBN 1 61.03 69. 01 78.8 3 80.99 77.24 84.10 87.18 88.48 70.23 75.73 83.43 86.12 66.63 73.15 78.47 80.42 DBN 2 60.81 69. 24 78.9 4 81.23 77.88 84.14 87.04 88.44 70.10 75.68 83.59 86.08 67.41 73.76 78.70 80.86 DBN 3 60.55 69. 38 79.0 3 80.78 77.75 83.97 87.00 88.14 69.75 75.57 83.54 85.92 67.33 72.83 79.03 80.49 DDNN 1 60.69 69. 42 78.6 1 81.39 76.06 83.86 86.77 88.17 69.76 75.88 83.47 86.41 66.21 72.21 79.33 81.24 DDNN 2 58.62 69. 07 78.8 5 81.62 76.80 84.04 86.96 88.54 69.71 76.05 83.90 86.62 65.51 72.72 79.17 81.53 DDNN 3 57.84 69. 61 79.1 4 81.65 76.82 84.22 87.09 88.67 69.55 76.04 83.78 86.65 65.89 72.82 79.47 81.71 T able 3 . Ac curacy comp arison in th e air por t , train , and subway n oises. “A VR” is sho rt fo r average. “ ALL ” d enotes th at the A VR is calculated over all noise types and SNR lev els. Note that when we calculate the a verages, we did n ot con sider the results of the babb le noise in − 5 and 0 dB, since the manifolds of the speech and backgroun d n oise are similar in that situation. Airpor t T rain Subwa y A VR over diff. noise types A VR -5dB 0dB 5dB 10dB -5 dB 0dB 5dB 10dB -5d B 0dB 5dB 10dB -5dB 0dB 5dB 10dB ALL SVM 64.48 74.26 80.94 8 5.21 66 .24 74. 29 82.9 1 85.28 7 4.75 81 .24 83. 58 85.18 67.51 75.60 80.96 83.68 76.93 MKSVM 65. 86 75.5 9 82.30 8 5.38 6 8.78 76 .31 83.9 9 85.34 79.90 84.82 86.11 87.46 7 0.56 7 8.21 82 .26 84.53 78.89 DBN 1 66.18 7 6.63 81 .89 86.63 68.59 76.9 5 83.65 8 5.72 78 .54 82. 70 85.6 0 85.79 7 1.24 7 8.21 82 .72 84.88 79.26 DBN 2 66.35 7 6.66 81 .92 86.41 68.99 76.9 5 83.49 8 5.68 79 .10 83. 29 85.7 7 86.25 7 1.64 7 8.41 82 .78 84.99 79.46 DBN 3 66.62 7 6.38 81 .85 86.50 68.89 76.1 4 83.56 8 5.62 78 .95 83. 26 85.8 1 86.01 7 1.55 7 8.03 82 .83 84.78 79.30 DDNN 1 66.00 7 6.61 82 .34 86.81 68.59 77.3 6 83.88 8 5.94 77 .90 83. 20 85.8 4 86.64 7 0.75 7 8.19 82 .89 85.23 79.27 DDNN 2 66.80 7 6.86 82 .45 86.98 69.33 77.4 8 84.21 8 6.12 78 .19 83. 39 85.6 2 86.46 7 1.06 7 8.42 83 .02 85.41 79.48 DDNN 3 67.00 7 6.85 82 .30 86.85 69.44 77.6 0 84.25 8 6.16 78 .53 83. 60 85.7 3 86.49 7 1.21 7 8.52 83 .11 85.45 79.57 lighted con tents of ea ch co lumn are the be st pe rforman ce of the refer enced DBN-based V AD and that of the DDNN- based V AD on the co rrespond ing n oise scenario respe cti vely . From the two tables, we can see th at the deep layers of th e DDNN-based V ADs perfor m b etter than the shallower lay- ers, wh ich suppor ts o ur con jecture in Sectio n 3. Also, th e DDNN-based V AD outpe rforms the SVM-based V AD and the MK-SVM-based V AD. Moreover, the DDNN-based V AD ev e n ou tperfor ms the DBN-b ased V AD in se veral no ise sce- narios, which demo nstrates its effecti veness. The experimen - tal p henom enon m anifested ou r co njecture in the introd uction section abo ut the reason wh y the deep layers the DBN-based V AD d oes not outp erform the shallow layers. That is, the manifold s of the clean speech a nd back groun d noise m ixed with each other, so that we cann ot e xpect DBN to distinguish the backgro und noise fro m the noisy speech that co ntains the manifold s of both the clean s peech and th e backgro und noise. 5. CONCLUSIONS AND FUTURE WORK In this paper, we have pro posed a denoising -deep-n eural- networks-based V AD. Specifically , the DDNN training con- tains two phases. The first p hase is to pre-train a deep ne ural network in an u nsupervised d enoising gr eedy layer-wise mode. The seco nd ph ase is to fine-tune the whole deep ne u- ral network as usu al. The d enoising pre-tr aining m akes the DDNN discover the m anifold of th e clean speech without suffering sev e rely f rom the d isruption o f the backgrou nd noise. Experimen tal results h av e shown that the d eep lay- ers of the DDNN -based V AD are muc h more p owerful than the shallower layers, and mo reover , th e DDNN-b ased V AD outperf orms th e DBN-based V AD in se veral no ise scenarios. Howe ver, to train a DDNN model, the noisy speech train- ing corpu s needs its correspo nding clean corpu s, which is an ideal situation. Therefo re, h ow to relax this constraint is what we f ocus on in the futur e work. More over , the experim ents are limited to the matching environments, how to m ake the DDNN-based V AD perfo rm steadily in u nmatchin g environ- ments is another key problem we want to address. Acknowledgment: T he authors would like to thank the anonymou s referees for their valuable adv ice, which greatly improved th e quality of this paper . 6. REFERENCES [1] D. Y u and L. Deng, “Deep-structu red hidden cond itional random fields for ph onetic recognitio n, ” in Pr oc. I N- TERSPEECH , 2010, pp. 2986– 2989. [2] G. Da hl, D. Y u, L . Deng, and A. Acero, “Context- depend ent pre- trained d eep neur al networks for la rge vocab ulary speech recog nition, ” IEEE T rans. Audio, Speech, Lang. Pr ocess. , vol. 20, no. 1, pp. 30–42, 2012. [3] G. Hinton , L. Den g, D. Y u, G. Dahl, A. Mo - hamed, N. Jaitly , A. Sen ior , V . V anhoucke, P . Nguyen, T . Sainath, et al., “Deep neura l networks for acou stic modeling in speech recog nition, ” IEE E Signa l Pr o cess. Mag. , v ol. 29 , no. 11, pp. 2–17, 2012. [4] K. Hana and D. L. W ang, “ A classification based ap- proach to spee ch segregation, ” The Journal of th e Acoustical Society of America , vol. 99, pp. 1–34, 2012. [5] D. L. W ang, “The time d imension for scene analy sis, ” IEEE T rans. Neural Netw . , vol. 16, no. 6, pp. 1 401– 1426, 2005 . [6] D. L. W ang and G. J. Brown, Computatio nal auditory scene analysis: principles, algorithms and app lications , W iley-IEEE Press, 2006 . [7] Y . X. W ang , K. Han, and D. L . W ang, “Exp loring monaur al featur es fo r classification -based sp eech seg- regation, ” IE EE T rans. Audio, Speech, Lan g. P r ocess. , vol. 1, no. 99, pp. 1–10, 2012. [8] Y . X. W ang an d D. L . W ang, “ Cocktail party processing via stru ctured pred iction, ” in Pr oc. Adv . Neural Inform. Pr ocess. Syst. , 201 2, pp. 1–8. [9] Y . X. W ang an d D. L. W ang, “T owards scaling up classification-based spe ech sepa ration, ” IEEE T rans. Audio, Spe ech, Lang. Pr ocess. , vol. PP , no. 99, p p. 1– 23, 2013 . [10] S. I. Kang , Q. H. Jo, and J. H . Chang , “Discriminative weight training for a statistical model-based voice acti v- ity detection , ” I EEE Signa l Pr oc ess. Lett. , vol. 15, pp. 170–1 73, 2008. [11] J. W . Shin, J. H. Chang, and N. S. Kim, “V oice ac- ti vity detectio n based on statistical models and machin e learning app roaches, ” Compu ter Speech & Langu age , vol. 24, no. 3, pp. 515–530 , 20 10. [12] T . Y u and J. H. L. Han sen, “Discriminative training for multiple observation likelihoo d r atio b ased voice activ- ity detection , ” I EEE Signa l Pr oc ess. Lett. , vol. 17, no. 11, pp. 897–9 00, 2010. [13] J. W u and X. L. Zhan g, “Maximum m argin clu stering based statistical V AD with multiple ob servation co m- pound fe ature, ” IEEE S ignal Pr ocess. Lett. , vol. 18, n o. 5, pp. 283– 286, 2011. [14] J. W u and X. L. Zhang , “ Efficient multiple kernel support vector mach ine b ased voice activity detection , ” IEEE Sig nal Pr ocess. Lett. , vol. 18 , no . 8, pp . 466 –499, 2011. [15] X. L. Zh ang and J. W u, “Linear ithmic time spa rse and conv ex m aximum margin clusterin g, ” IEEE T rans. Syst., Man, Cybern. B, C y bern. , vol. 1, no. 99, pp. 1–2 4, 201 2. [16] Y . Su h and H. Kim, “Multiple acoustic mo del-based dis- criminative lik elih ood ratio weightin g for voice activity detection, ” IEEE Sign al Pr oce ss. Lett. , vol. 19 , no . 8 , pp. 507–51 0, 2 012. [17] X. L. Zhang and J. W u, “ Deep belief networks based voice activity d etection, ” IEEE T rans. Audio, Speech, Lang. Pr oce ss. , v o l. 21, no. 4, pp. 3371– 3408, 2013. [18] G.E . Hinton and R.R. Salakhutdinov , “Reducin g the di- mensionality o f data with neura l n etworks, ” Scien ce , vol. 313, no. 5786 , pp. 504– 507, 2006 . [19] M. A. Carreira-Per pinan and G. E . Hinton, “On co n- trastiv e diver g ence learning, ” in Pr oc. In t. Con f. Artif. Intell. Stat. , 2005, pp. 17–25 . [20] P . V inc ent, H. Laro chelle, Y . Bengio , and P . A. Man- zagol, “Extractin g and composing ro bust fe atures with denoising autoencoders, ” in Pr oc. 25th Int. Conf. Mac h . Learn. , 2008, pp. 1096–1 103. [21] P . V incent, H. L arochelle, I. Lajoie, Y . Bengio, and P . A. Manzagol, “Stacked denoising au toencod ers: Learning useful representation s in a d eep network with a loca l de- noising criterio n, ” J . Mach. Learn . Res. , vol. 11, p p. 3371– 3408 , 2010 . [22] Y . Eph raim and D. Malah, “Speech enhan cement using a minimum- mean squ are error short-time spectral ampli- tude estimato r , ” I EEE T rans. A coustic, Speech, S ignal Pr ocess. , vol. 32, no. 6, pp. 1109–11 21, 19 84. [23] J. Sohn , N. S. Kim, and W . Sung, “A statistical m odel- based voice activity detec tion, ” IEEE Signal Pr o cess. Lett. , vol. 6, no. 1, pp. 1–3, 1999 . [24] I srael Cohen, “Noise spectr um estimation in adverse en- vironm ents: Improved minima co ntrolled r ecursive av- eraging, ” IEEE T rans. S peech, Audio Pr ocess. , vol. 11, no. 5, pp. 466–4 75, 2003. [25] D. Pearce, H.G. Hirsch, e t al., “The A UROR A exper- imental framework for the perf ormanc e evaluation of speech recogn ition systems under noisy cond itions, ” in Pr oc. ICSLP’00 , 2000, vol. 4, pp. 29–32. [26] J. Ramír ez, J. C. Segur a, C. Benítez, L . García, an d A. Rubio , “Statistical voice activity detection using a multiple observation likelihood ratio test, ” I EEE Sign al Pr ocess. Lett. , vol. 12, no. 10, pp. 689–692 , 20 05. [27] Z. Xu, R. Jin, H. Y ang , I. King, and M. R. L yu, “Simple and efficient multiple kern el learn ing by gro up lasso, ” in Pr oc. 27th I nt. Conf. Ma ch. Learn. , 2010, pp. 11 75– 1182.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment