Towards Adapting ImageNet to Reality: Scalable Domain Adaptation with Implicit Low-rank Transformations

Images seen during test time are often not from the same distribution as images used for learning. This problem, known as domain shift, occurs when training classifiers from object-centric internet image databases and trying to apply them directly to…

Authors: Erik Rodner, Judy Hoffman, Jeff Donahue

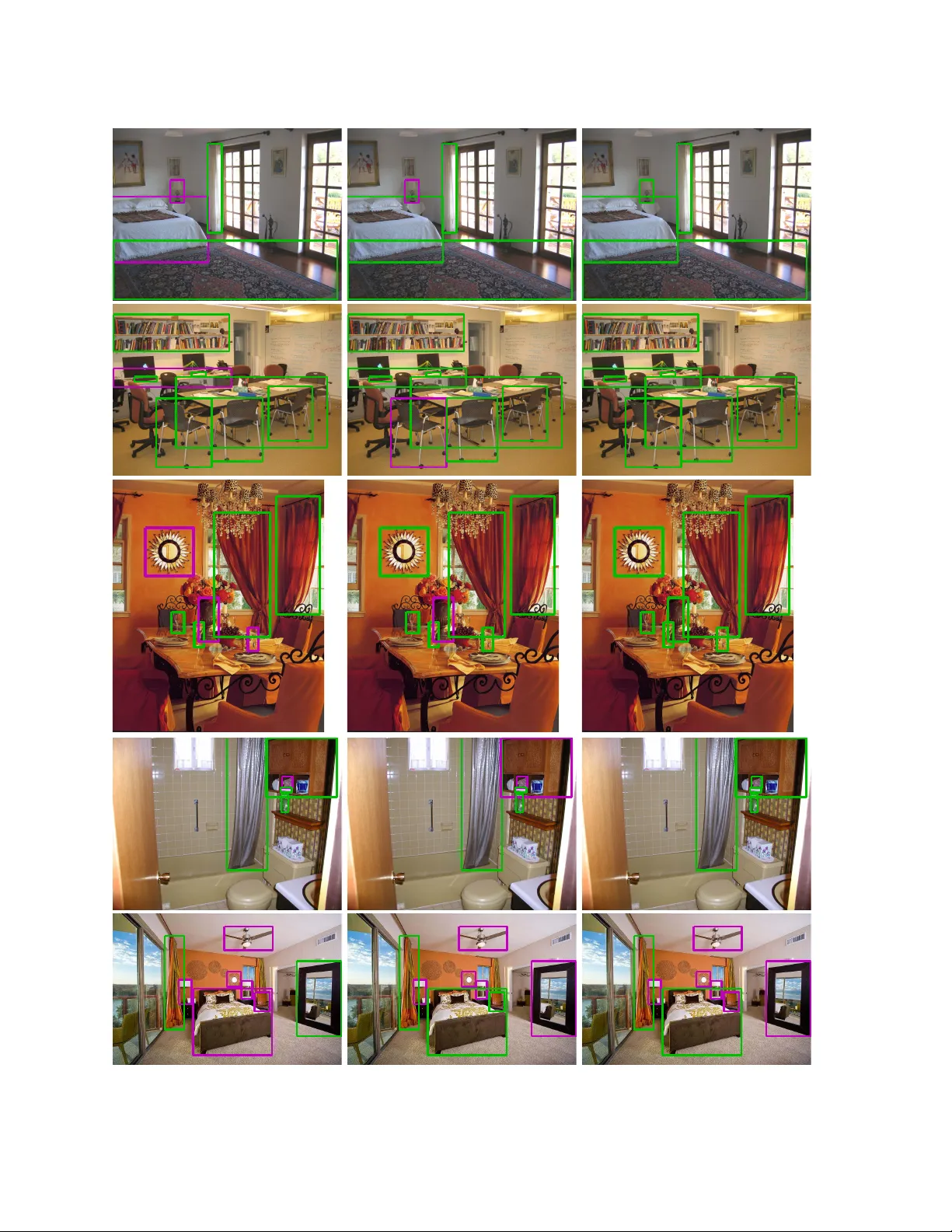

Erik Rodner¹,²,∗, Judy Hoffman¹,∗, Jeff Donahue¹, Trevor Darrell¹, Kate Saenko³
¹ ICSI & EECS, UC Berkeley; ² University of Jena; ³ UMass Lowell

Abstract
Images seen at test time are often not from the same distribution as the images used for learning. This problem, known as domain shift, occurs when classifiers trained on object-centric internet image databases are applied directly to scene understanding tasks. The consequence is often severe performance degradation, and it is one of the major barriers to the application of classifiers in real-world systems. In this paper, we show how to learn transform-based domain adaptation classifiers in a scalable manner. The key idea is to exploit an implicit rank constraint, originating from a max-margin domain adaptation formulation, to make optimization tractable. Experiments show that the transformation between domains can be learned very efficiently from data and easily applied to new categories. This begins to bridge the gap between large-scale internet image collections and object images captured in everyday life environments.

1. Introduction
Learning from huge datasets comprising millions of images is one of the most promising directions towards closing the gap between human and machine visual recognition abilities. There has been tremendous success in large-scale visual recognition [4], allowing tens of thousands of visual categories to be learned. However, in parallel, researchers have discovered the bias induced by current image databases, and that performing visual recognition tasks across domains cripples performance [18].
Although this is especially common for smaller datasets, like Caltech-101 or the PASCAL VOC datasets [18], the way large image databases are collected (typically using internet search engines) also introduces an inherent bias. This can be seen, for example, when comparing object images of ImageNet [4] and the SUN2012 database [20] in Figure 1: the "object-centric" data of ImageNet is of high resolution, with centered objects and sometimes artificial backgrounds, whereas the SUN2012 objects are part of scene images, leading to blurred appearances with a large degree of occlusion and truncation.

Figure 1. Dataset bias of ImageNet and the SUN2012 database, shown for an indoor scene and for the categories backpack and apple at the bounding-box level.

∗ Both authors contributed equally.

Transform-based domain adaptation overcomes the bias by learning a transformation between datasets. In contrast to classifier adaptation [1, 22, 3, 11], learning a transformation between feature spaces directly allows us to perform adaptation even for (new) categories that are not present in both datasets. Especially for large-scale recognition with a large number of categories, this is a crucial benefit, because we can learn target-domain category models for all the categories available in a given source domain. Transformations can be learned in an unsupervised manner [12] or by using the labels present in both domains to maximize the margin of the classifier on the source and transformed target data [9, 5].

In this paper, we introduce a novel optimization method that enables transform learning and associated domain adaptation methods to scale to "big data". We do this by a novel reformulation of the optimization in [9] as direct dual coordinate descent and by exploiting an implicit rank constraint.
Although we learn a linear transformation between domains, whose size is quadratic in the number of features used, our algorithm needs, in each iteration, only a number of operations that is linear in both feature dimensions (source and target domain) as well as in the number of training examples. This is an important benefit compared to other methods that need to run in kernel space [12, 5] to overcome the high dimensionality of the transformation, a strategy that is infeasible in large-scale settings. The resulting scalability of our method is crucial, as it allows the use of transform-based domain adaptation for datasets with a large number of categories and examples, settings in which previous techniques [12, 5, 9] were unable to run in reasonable time. Our experiments on different datasets show the various advantages of transform-based methods, such as generalization to new categories or even handling domains with different feature types.

2. Related Work
For the task of domain adaptation, two different sets of data are typically considered, the source and the target domain, which are drawn from similar but distinct distributions $p(x)$ and $p(\tilde{x})$. The goal is to transfer knowledge from the source domain to the target domain. In the following, we briefly review related work in the areas of domain adaptation and transfer learning. Although transfer learning [13] considers a change of the conditional distribution $p(y \mid x)$ rather than a change of the data distribution $p(x)$ as in domain adaptation, the methods in both areas often use similar principles and ideas.

Domain adaptation can be applied at different levels of the machine learning pipeline. For example, the adaptive SVM method [22] combines a target classifier and an existing source classifier by a linear combination of their continuous outputs.
This is related to adding a new regularization term to the SVM objective that forces the target SVM hyperplane parameters to be close to the source hyperplane [21]. Aytar and Zisserman [1] showed the importance of using a scale-invariant similarity measure for this regularization term. Furthermore, the authors of [3] proposed a combination of target, source, and transductive SVM. More recently, Khosla et al. [11] introduced a method to jointly learn a "visual world model" common across all domains in combination with an additive bias term for each individual domain.

In general, classifier adaptation methods are often limited to cases where labeled training data is given for every class in both the source and the target domain. However, we often have a source domain with not only more training examples but also more labeled categories available. Exploiting all this information and learning visual classifiers for new categories in the target domain is possible with metric- or transformation-based methods.

Another line of work was started by Gopalan et al. [8], who modeled domains as points on a manifold of subspaces. To perform domain adaptation, features are mapped to the subspaces induced by the geodesic from the source to the target domain. This yields several intermediate representations of the input data that can be used for learning a classifier. Gong et al. [7] showed how to avoid sampling only a finite number of subspaces by expressing the representation as a kernel. In contrast, Tommasi et al. [17] tackled the domain adaptation problem by learning a shared subspace capturing domain-invariant properties of the categories. Learning for a new dataset is then done by learning an additional domain-specific transformation of the data.

The work of Saenko et al. [14] was one of the earliest papers to investigate domain adaptation challenges in visual recognition.
The key idea of their work is to apply metric learning techniques that allow for estimating a category-independent metric that relates target and source examples and can be used in a nearest neighbor classifier. Kulis et al. [12] extended their work to asymmetric transformations and metrics using a Frobenius norm regularizer. A major bottleneck of their approach is the number of instance (linear) constraints, one for each pair of source and target examples, that need to be considered during optimization, as well as the fact that transforms are learned independently of the classification loss. Therefore, Hoffman et al. [9] recently showed how to jointly learn a transformation together with SVM parameters in a max-margin framework, which reduces the number of constraints to the number of categories. However, the linear transformation was quadratic in the feature dimensionality, and the kernelization used by [9, 12] was quadratic in the number of training examples. This scales poorly to very large data and, as we show in the experiments section, is intractable even for modestly large-scale data.

3. Scalable Transformation Learning
We introduce a method for learning a transformation that is easy to apply and implement, and that can be combined with other large-scale architectures. Our new scalable method can be applied to supervised domain adaptation, where we are given source training examples $\mathcal{D} = \{(x_i, y_i)\}_{i=1}^{n}$ and target examples $\tilde{\mathcal{D}} = \{(\tilde{x}_j, \tilde{y}_j)\}_{j=1}^{\tilde{n}}$. Our goal is to learn a linear transformation $W\tilde{x}$ mapping a target training data point $\tilde{x}$ to the source domain. The transformation is learned through an optimization framework that introduces linear constraints between transformed target training points and information from the source, and thus generalizes the methods of [14, 12, 9]. To demonstrate the generality of our approach, we denote linear constraints in the source domain using hyperplanes $v_i \in \mathbb{R}^{D}$ for $1 \le i \le m$.
Let $\tilde{y}_{ij}$ denote a scalar that represents some measure of intended similarity between $v_i$ and the target training data point $\tilde{x}_j$. With this general notation, we can express the standard transformation learning problem with slack variables as follows:

$$\min_{W, \{\eta\}} \; \frac{1}{2}\|W\|_F^2 + \tilde{C} \sum_{i=1,j=1}^{m,\tilde{n}} (\eta_{ij})^p \quad \text{s.t.} \quad \tilde{y}_{ij}\, v_i^T W \tilde{x}_j \ge 1 - \eta_{ij}, \quad \eta_{ij} \ge 0 \quad \forall i, j. \tag{1}$$

Note that this directly corresponds to the transformation learning problem proposed in [9]. Previous transformation learning techniques [14, 12, 9] used a Bregman divergence optimization technique [12], which scales quadratically in the number of target training examples (kernelized version) or in the number of feature dimensions (linear version). For the large-scale scenario considered in this paper, this is impractical due to the large number of target training examples and categories, as well as the high dimensionality of the features. Therefore, we show in a new analysis both how to use dual coordinate descent for the optimization of $W$ and that $W$ has a low-rank structure, which can be exploited for efficient optimization, as verified in our experimental evaluation.

3.1. Learning W with dual coordinate descent
We now reformulate Eq. (1) as a vectorized optimization problem suitable for dual coordinate descent, which allows us to use efficient optimization techniques. We use $w = \mathrm{vec}(W)$ to denote the vectorized version of a matrix $W$, obtained by concatenating the rows of the matrix into a single column vector. With this definition, we can write:

$$\|W\|_F^2 = \|\mathrm{vec}(W)\|_2^2 = \|w\|_2^2, \tag{2}$$

$$v_i^T W \tilde{x}_j = w^T \mathrm{vec}\left(v_i \cdot \tilde{x}_j^T\right). \tag{3}$$

Let $\ell = m(j-1) + i$ be the index ranging over the target examples as well as the $m$ hyperplanes in the source domain, which we also denote as $\ell = (i, j)$ for convenience. We now define a new set of "augmented" features as follows:

$$d_\ell = \mathrm{vec}\left(v_i \cdot \tilde{x}_j^T\right) \in \mathbb{R}^{D \cdot \tilde{D}}, \tag{4}$$

$$t_\ell = \tilde{y}_{ij}. \tag{5}$$
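
As a quick sanity check of Eqs. (2)–(4), the following NumPy sketch (random data, purely illustrative, not the authors' code) verifies that row-wise vectorization turns the bilinear constraint score $v_i^T W \tilde{x}_j$ into a linear score $w^T d_\ell$. NumPy's `ravel()` in its default C order concatenates rows, which matches the definition of vec above; the dimensions are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
D, D_t = 5, 4                        # source / target feature dimensions (arbitrary)
W = rng.standard_normal((D, D_t))    # transformation mapping target -> source
v = rng.standard_normal(D)           # one source hyperplane v_i
x_t = rng.standard_normal(D_t)       # one target example x~_j

def vec(M):
    """Row-wise vectorization: C-order ravel concatenates the rows of M."""
    return M.ravel()

w = vec(W)                           # w = vec(W)
d = vec(np.outer(v, x_t))            # augmented feature d_l, Eq. (4)

print(np.isclose(v @ W @ x_t, w @ d))                      # Eq. (3): True
print(np.isclose(np.linalg.norm(W, "fro") ** 2, w @ w))    # Eq. (2): True
print(d.shape)                       # D * D~ entries: (20,)
```

The last line makes the scaling problem visible: each augmented feature has $D \cdot \tilde{D}$ entries, so the explicit vectorization is only feasible for small feature dimensions.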
With these augmented training pairs, Eq. (1) is equivalent to a soft-margin SVM problem with training set $\{(d_\ell, t_\ell)\}_{\ell=1}^{m \cdot \tilde{n}}$. We exploit this result of our analysis by using and modifying the efficient coordinate descent solver proposed in [10], which solves the SVM optimization problem in its dual form with respect to the dual variables $\alpha_\ell$:

$$\min_{\alpha \ge 0} \; g(\alpha) = \frac{1}{2}\alpha^T \bar{Q} \alpha - e^T \alpha. \tag{6}$$

We consider the L2-SVM formulation ($p = 2$ in Eq. (1)), although the techniques presented in this paper also hold for the standard L1-SVM case. The matrix $\bar{Q}$ is a regularized kernel matrix incorporating the labels, i.e., $\bar{Q}_{\ell,\ell'} = t_\ell t_{\ell'} d_\ell^T d_{\ell'} + \lambda\, \delta[\ell = \ell']$ with $\lambda = \frac{1}{2\tilde{C}}$, and $e$ is the vector of all ones. The key idea is to maintain and update $w$ explicitly:

$$w = \sum_{\ell=1}^{m \cdot \tilde{n}} \alpha_\ell t_\ell d_\ell. \tag{7}$$

This dramatically reduces the cost of computing the gradient with respect to $\alpha_\ell$, compared to the classical dual solvers commonly used for kernel SVMs:

$$\nabla_\ell g(\alpha) = t_\ell\, w^T d_\ell + \lambda \alpha_\ell - 1, \tag{8}$$

which requires a number of operations linear in the dimensionality of the (augmented) feature vectors $d_\ell$. A single coordinate descent step can then be performed by

$$\alpha_\ell \leftarrow \max\left(0,\; \alpha_\ell - \frac{\nabla_\ell g(\alpha)}{\|d_\ell\|^2 + \lambda}\right) \tag{9}$$

in the same asymptotic time. Note that explicitly maintaining $w$ is essential for cheap coordinate descent steps; therefore, given the change $\Delta\alpha_\ell$ of the step, we have to update $w$ so that Eq. (7) holds again:

$$w \leftarrow w + \Delta\alpha_\ell\, t_\ell\, d_\ell. \tag{10}$$

Whereas for standard learning problems an iteration with only a linear number of operations in the feature dimensionality already provides a sufficient speed-up, this is not the case when learning domain transformations $W$. When the dimensions of the source and target feature spaces are $D$ and $\tilde{D}$, respectively, the features $d_\ell$ of the augmented training set have a dimensionality of $D \cdot \tilde{D}$, which is impractical for vision tasks with high-dimensional input features.
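
The explicit-$w$ scheme of Eqs. (6)–(10) can be sketched for a generic L2-SVM on training pairs $(d_\ell, t_\ell)$, in the style of [10] but without shrinking. This is a minimal NumPy illustration, not the liblinear-based solver; the function name `dcd_l2svm`, the fixed number of epochs, the random visiting order, and the absence of a bias term are our own assumptions. It deliberately stores $w$ densely, which is exactly what becomes infeasible once $d_\ell$ has $D \cdot \tilde{D}$ entries.

```python
import numpy as np

def dcd_l2svm(d, t, C=1.0, epochs=50, seed=0):
    """Dual coordinate descent for the L2-SVM dual, Eq. (6), without shrinking.

    d: (L, F) array whose rows are the training vectors d_l.
    t: (L,) labels in {-1, +1}.
    Returns the dual variables alpha and the explicitly maintained w, Eq. (7).
    """
    rng = np.random.default_rng(seed)
    L, F = d.shape
    lam = 1.0 / (2.0 * C)                     # diagonal term lambda of Q-bar
    alpha = np.zeros(L)
    w = np.zeros(F)                           # invariant: w = sum_l alpha_l t_l d_l
    sq_norms = np.einsum("ij,ij->i", d, d)    # ||d_l||^2, precomputed once
    for _ in range(epochs):
        for l in rng.permutation(L):
            grad = t[l] * (w @ d[l]) + lam * alpha[l] - 1.0          # Eq. (8)
            new_alpha = max(0.0, alpha[l] - grad / (sq_norms[l] + lam))  # Eq. (9)
            delta = new_alpha - alpha[l]
            if delta != 0.0:
                w += delta * t[l] * d[l]                             # Eq. (10)
                alpha[l] = new_alpha
    return alpha, w


# Toy usage on 2-D data that is (almost surely) separable through the origin.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(2.0, 1.0, (20, 2)), rng.normal(-2.0, 1.0, (20, 2))])
y = np.r_[np.ones(20), -np.ones(20)]
alpha, w = dcd_l2svm(X, y)
train_acc = np.mean(np.sign(X @ w) == y)
```

Each coordinate step touches only one row of `d`, so its cost is linear in the feature dimension $F$; for transform learning, $F = D \cdot \tilde{D}$.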
To avoid working with the $D \cdot \tilde{D}$-dimensional augmented features $d_\ell$ directly, we show in the following how to efficiently exploit an implicit low-rank structure of $W$ when the number of hyperplanes inducing the constraints is small.

3.2. Implicit low-rank structure of the transform
To derive a low-rank structure of the transformation matrix, let us recall Eq. (7) in matrix notation:

$$W = \sum_{i=1,j=1}^{m,\tilde{n}} \alpha_\ell t_\ell\, v_i \cdot \tilde{x}_j^T = \sum_{i=1}^{m} v_i \Big(\sum_{j=1}^{\tilde{n}} \alpha_\ell t_\ell\, \tilde{x}_j\Big)^T. \tag{11}$$

Thus, $W$ is a sum of $m$ dyadic products and therefore a matrix of rank at most $m$, with $m$ being the number of hyperplanes in the source used to generate constraints. Note that for our experiments, we use the MMDT method [9], for which the number of hyperplanes equals the number of object categories we seek to classify. We can exploit the low-rank structure by representing $W$ indirectly using

$$\beta_i = \sum_{j=1}^{\tilde{n}} \alpha_\ell t_\ell\, \tilde{x}_j \in \mathbb{R}^{\tilde{D}}, \quad \text{so that} \quad W = \sum_{i=1}^{m} v_i \beta_i^T. \tag{12}$$

This is especially useful when the number of categories is small compared to the dimension of the source domain, because $[\beta_1, \ldots, \beta_m]$ has only $m \cdot \tilde{D}$ entries instead of the $D \cdot \tilde{D}$ entries of $W$. It also allows for very efficient updates, with a computation time even independent of the number of categories. First, with the low-rank representation given by the $\beta_i$, we can easily speed up the scalar product in Eq. (8):

$$w^T d_\ell = v_i^T W \tilde{x}_j = \sum_{i'=1}^{m} v_i^T v_{i'}\, \beta_{i'}^T \tilde{x}_j = \sum_{i'=1}^{m} \rho_{i,i'}\, \beta_{i'}^T \tilde{x}_j,$$

where the matrix $R = (\rho_{i,i'}) \in \mathbb{R}^{m \times m}$ can be calculated in advance. Furthermore, we can cache the values $\beta_{i'}^T \tilde{x}_j$, leading to a cost of only $O(\tilde{D})$ (details in Sect. 3.3).

The matrix $R$ contains the correlations between hyperplanes and also shows the multi-task nature of the approach: the $\beta_i$ vectors can be seen as linear classifiers in the target domain, and the matrix $R$ combines all of them, taking the dependencies between classes into account. This is an interesting and important aspect of our method in scenarios with a large number of categories.
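
Eqs. (11) and (12) can be checked numerically. The NumPy sketch below uses random data and arbitrary dual variables, so it illustrates the algebra rather than a trained model. It forms $W$ only to verify that its rank is at most $m$ and that the score $w^T d_\ell = v_i^T W \tilde{x}_j$ can be obtained from the precomputed $m \times m$ matrix $R$ and the $\beta_i$ alone. The helper name `score_lowrank` is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n_t, D, D_t = 3, 10, 50, 40             # hyperplanes, target examples, dims
V = rng.standard_normal((m, D))            # rows: source hyperplanes v_i
X_t = rng.standard_normal((n_t, D_t))      # rows: target examples x~_j
alpha = rng.random((m, n_t))               # dual variables alpha_(i,j), arbitrary
T = rng.choice([-1.0, 1.0], size=(m, n_t)) # labels t_(i,j) = y~_ij

# Eq. (12): beta_i = sum_j alpha_(i,j) t_(i,j) x~_j, stacked as rows (m x D~).
B = (alpha * T) @ X_t
# Eq. (11): W = sum_i v_i beta_i^T; built here only to check the claims.
W = V.T @ B                                # shape (D, D~)
s = np.linalg.svd(W, compute_uv=False)
print(np.all(s[m:] <= 1e-10 * s[0]))       # rank(W) <= m: True

R = V @ V.T                                # rho_{i,i'} = v_i^T v_i', precomputed

def score_lowrank(i, j):
    """w^T d_l = sum_i' rho_{i,i'} beta_i'^T x~_j, without touching W."""
    return R[i] @ (B @ X_t[j])

print(np.isclose(score_lowrank(1, 7), V[1] @ W @ X_t[7]))   # True
```

In a solver, the vector `B @ X_t[j]` is what gets cached, which reduces each score to an $O(m)$ combination with one row of $R$.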
A linear classifier $v_i$ is mapped to the target domain by

$$\tilde{v}_i = W^T v_i = \sum_{i'=1}^{m} \beta_{i'}\, \rho_{i,i'} \tag{13}$$

and therefore uses correlations to other categories, which is similar to transfer learning approaches [16]. To allow for efficient $\alpha$-updates in Eq. (9), we further need an efficient calculation of the squared feature vector norm $\|d_\ell\|^2$:

$$\|d_\ell\|^2 = \|v_i \cdot \tilde{x}_j^T\|_F^2 = \|v_i\|^2 \cdot \|\tilde{x}_j\|^2. \tag{14}$$

Finally, the update formula in Eq. (10) can be translated into updating $\beta_i$ in only $O(\tilde{D})$ operations:

$$\beta_i \leftarrow \beta_i + \Delta\alpha_\ell\, t_\ell\, \tilde{x}_j. \tag{15}$$

3.3. Algorithmic details and complexity
In this section, we briefly discuss some implementation details of the solver used in our experiments (Sect. 5). Code for our efficient dual coordinate descent transform solver, adapted from liblinear [6], will be made publicly available online. We also implement the shrinking heuristics presented in [10], which remove from the active set $S$ those dual variables that have been set to zero during optimization and are unlikely to change in the future. An algorithmic outline of our approach is given in Figure 2.

| Method | $\alpha_\ell$ update | $W$ update |
|---|---|---|
| Our approach | $O(\tilde{D})$ | $O(\tilde{D})$ |
| Direct rep. of $W$ | $O(D \cdot \tilde{D})$ | $O(D \cdot \tilde{D})$ |
| Bregman opt. (kernel) [12] | – | $O(n \cdot \tilde{n})$ |
| Bregman opt. (linear) | – | $O(D \cdot \tilde{D})$ |

Table 1. Asymptotic times for one iteration of the optimization, in which a single constraint is taken into account. There are $n$ source training points of dimension $D$ and $\tilde{n}$ target training points of dimension $\tilde{D}$.

Optimization of $W$ in our method
1. For $1 \le i, i' \le m$: $\rho_{i,i'} = v_i^T v_{i'}$
2. For $1 \le j \le \tilde{n}$: $q_j = \|\tilde{x}_j\|^2$
3. Repeat until convergence of $\alpha$:
   (a) Loop through the active set $\ell = (i, j) \in S$:
      i. $s = \sum_{i'=1}^{m} \rho_{i,i'}\, \beta_{i'}^T \tilde{x}_j$, using the cached $\beta_{i'}^T \tilde{x}_j$
      ii. $G = t_\ell \cdot s + \lambda \alpha_\ell - 1$
      iii. $PG = G$ if $\alpha_\ell > 0$, and $PG = \min(G, 0)$ if $\alpha_\ell = 0$
      iv. If $PG \neq 0$:
         A. $\alpha_\ell \leftarrow \max\left(\alpha_\ell - G / (q_j \cdot \rho_{i,i} + \lambda),\; 0\right)$
         B. $\beta_i \leftarrow \beta_i + \Delta\alpha_\ell\, t_\ell\, \tilde{x}_j$

Figure 2. Pseudocode for the optimization of $W$, without shrinking heuristics and caching details.

Computational complexity. The asymptotic times are summarized in Table 1. While the asymptotic time of the kernel Bregman optimization used in [12, 9] depends on the number of source examples, the time we need to iteratively take one constraint into account is independent of the number of examples in either the source or the target domain. One pass over all $\tilde{n} \cdot m$ constraints therefore takes $O(\tilde{n} \cdot m \cdot \tilde{D})$ time; for a fixed number of hyperplanes $m$, this is linear in the product of the number of target points and the target dimension, and independent of the size of the source training set. Therefore, our method allows for using transform-based adaptation in large-scale settings, where previous approaches [12, 14] were unable to run at all.

Identity regularizer. As described in previous sections, the transformation $W$ has a low-rank structure when using the original MMDT formulation. In situations with only a small number of categories, this can be too restrictive for the class of transformations. However, when using the identity regularizer $\|W - I\|_F^2$, we obtain $W = I + \sum_i v_i \beta_i^T$, which allows full-rank matrices to be estimated. The efficient updates in each coordinate descent iteration do not change significantly and are omitted here due to lack of space.

Caching techniques. As mentioned earlier, we cache the scalar products $\beta_i^T \tilde{x}_j$ to allow for fast computation. Each time the vector $\beta_i$ is updated, all $\tilde{n}$ cached values $\beta_i^T \tilde{x}_j$ become invalid and have to be recomputed in one of the next steps in which $\tilde{x}_j$ is taken into account. When using a fully randomized order of the dual variables $\alpha_\ell$, as suggested by [10], this invalidation happens on average every $K$-th step, leading to a low probability that a cached value can be reused in between.
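
The pseudocode in Figure 2 can be turned into the minimal NumPy sketch below. It is an illustration under stated assumptions, not the authors' liblinear-based solver: the source hyperplanes `V` are held fixed, the labels are converted to $t_\ell = \tilde{y}_{ij} \in \{-1, +1\}$ (+1 if and only if $\tilde{x}_j$ belongs to category $i$, as in MMDT-style one-vs-all constraints), shrinking is omitted, and the function name `lowrank_dcd` and its defaults are hypothetical. For each target example, the products $\beta_{i'}^T \tilde{x}_j$ are computed once and then patched after every $\beta_i$ update, so the cache stays exact within that block.

```python
import numpy as np

def lowrank_dcd(V, X_t, y_t, C=1.0, epochs=30, seed=0):
    """Sketch of the low-rank dual coordinate descent of Figure 2 (no shrinking).

    V:   (m, D) fixed source hyperplanes v_i.
    X_t: (n_t, D_t) target examples x~_j.
    y_t: (n_t,) integer target labels in {0, ..., m-1}.
    Returns alpha (m, n_t) and B (m, D_t) whose rows are beta_i, so W = V.T @ B.
    """
    rng = np.random.default_rng(seed)
    m, n_t = V.shape[0], X_t.shape[0]
    lam = 1.0 / (2.0 * C)                    # lambda = 1 / (2 C~) for the L2-SVM
    R = V @ V.T                              # step 1: rho_{i,i'} = v_i^T v_i'
    q = np.einsum("ij,ij->i", X_t, X_t)      # step 2: q_j = ||x~_j||^2
    alpha = np.zeros((m, n_t))
    B = np.zeros((m, X_t.shape[1]))
    for _ in range(epochs):
        for j in rng.permutation(n_t):       # random order over target examples
            c = B @ X_t[j]                   # cache: c[i'] = beta_i'^T x~_j
            for i in range(m):               # all hyperplanes for this example
                t = 1.0 if y_t[j] == i else -1.0          # t_l = y~_ij (assumed +-1)
                s = R[i] @ c                               # step i: w^T d_l
                G = t * s + lam * alpha[i, j] - 1.0        # step ii: Eq. (8)
                PG = G if alpha[i, j] > 0 else min(G, 0.0) # step iii
                if PG != 0.0:                              # step iv
                    new = max(alpha[i, j] - G / (q[j] * R[i, i] + lam), 0.0)
                    delta = new - alpha[i, j]
                    alpha[i, j] = new
                    B[i] += delta * t * X_t[j]             # step B: Eq. (15)
                    c[i] += delta * t * q[j]               # keep the cache exact
    return alpha, B


# Toy usage: three target classes near the coordinate axes, random hyperplanes.
rng = np.random.default_rng(1)
y_t = np.repeat(np.arange(3), 10)
X_t = 3.0 * np.eye(3)[y_t] + 0.3 * rng.standard_normal((30, 3))
V = rng.standard_normal((3, 5))
alpha, B = lowrank_dcd(V, X_t, y_t)
scores = V @ (V.T @ B) @ X_t.T               # v_i^T W x~_j for all i, j
accuracy = np.mean(scores.argmax(axis=0) == y_t)
```

After step 1, the solver never touches a $D$-dimensional vector again: besides the data, it stores only $R$, the norms $q_j$, the dual variables, and the $m \times \tilde{D}$ matrix of $\beta_i$ rows, instead of a dense $D \times \tilde{D}$ matrix $W$.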
To keep the cached values usable, we randomize only the order of the target examples $j$ and, for each $j$, iterate through all $K$ categories in sequence; the cached values can then be used within each of these blocks of $K$ updates.

Convergence properties. Our solver maintains all the convergence properties of dual coordinate descent solvers. In particular, it has at least a linear convergence rate [10, Theorem 1], and an $\epsilon$-accurate solution can be obtained in $O(\log(1/\epsilon))$ iterations.

4. Domain adaptation datasets
In the following, we briefly describe the datasets used in our experiments for the source as well as the target domain.

ImageNet ILSVRC2010 to SUN2012. Whereas ImageNet images were obtained using object category names and therefore contain a large portion of advertisement images, the SUN database was created by searching for scene categories and labeling objects in the images afterwards. Therefore, there is a significant domain shift between the two datasets (Figure 1). In fact, Torralba and Efros's experiments in [18] consistently showed that the domain shift between ImageNet and SUN is one of the most severe among all pairs of benchmark datasets they surveyed.

For this reason, we assembled a new challenge for domain adaptation methods by matching a subset of the object categories from the SUN2012 dataset [20] (target domain) with the ones present in the hierarchy of the ImageNet 2010 challenge [2] (source domain). The category names in both datasets are matched using the manually maintained WordNet matchings of the SUN2012 dataset [20]. Using the WordNet descriptions, a large set of SUN2012 descriptions can be mapped to nodes of the WordNet subgraph related to the ILSVRC2010 challenge, i.e., to sets of ILSVRC2010 categories (leaf nodes). Finally, we consider pairs of SUN2012 labels and ILSVRC2010 category sets that lead to more than 20 examples. This leads to a total of 84 categories¹.
The final set of examples consists of cropped bounding boxes not labeled as difficult or truncated. Classifying these examples without context knowledge can be considered very challenging. To allow for easy reproducibility of the results, we use the bag of visual words (BoW) features provided for the ImageNet challenge. Furthermore, features in the SUN database are extracted by computing BoW features inside the given bounding boxes, again using the feature extraction code provided for the ImageNet challenge.

¹ tree, chair, cabinet, table, lamp, curtain, box, car, bed, mountain, desk, fence, mirror, skyscraper, bottle, rug, basket, bench, towel, vase, bannister, ball, stove, bookcase, magazine, refrigerator, bucket, clock, glass, hat, oven, boat, fan, shoe, dishwasher, telephone, airplane, loudspeaker, apparel, keyboard, bar, gate, bus, mug, bridge, umbrella, bicycle, backpack, laptop, washer, bathtub, roof, pitcher, fish, tower, flower, apple, file, teapot, minibike, printer, garage, guitar, ashcan, dog, dune, piano, ship, crane, newspaper, mouse, microphone, cliff, bell, elephant, shirt, toaster, orange, remote control, knife, helmet, grape, stick, shop

Bing/Caltech256 dataset. We also use the Bing dataset of [3], which contains images for each category of the Caltech256 dataset. In contrast to the ImageNet/SUN2012 scenario, both datasets have been created using internet search images and category keywords. In total, this dataset consists of 256 object categories. Features for this dataset are provided by the authors of [3].

5. Experiments
In our experiments, we give empirical validation for the following claims:
1. Our optimization algorithm allows for significantly faster learning than the one used by [9] without loss in recognition performance (Sect. 5.2).
2. Our transform-based approach can be used for large-scale domain adaptation datasets and achieves state-of-the-art performance, significantly outperforming the geodesic flow kernel method of [7] (Sect. 5.3).
3. We can learn a transformation between large-scale datasets that can be used for transferring new category models without any target training examples (Sect. 5.4), even in the case of different feature dimensions (Sect. 5.5).

5.1. Baseline methods
We compare our approach to the standard domain adaptation baseline, which is a linear SVM trained with only target or only source training examples (SVM-Target / SVM-Source). Note that for new-category experiments, where some classes do not have training examples in the target domain, the SVM-Target baseline cannot be used. Furthermore, we evaluate the performance of the geodesic flow kernel (GFK) presented by [7], integrated in a nearest neighbor approach. The metric learning approach of [12] (ARC-t) and the shared latent space method of [5] (HFA) can only be compared to our approach in a medium-scale experiment, which is tractable for kernelized methods. For our experiments, we always use the source code provided by the authors. In the following, we refer to our method as large-scale max-margin domain transform (LS-MMDT).

[Figure 3 plots. Left: average recognition rate vs. number of target examples per category for SVM-Source, GFK (Gong 2012), HFA (Duan 2012), ARC-t (Kulis 2011), MMDT (Hoffman 2013), and LS-MMDT (our approach). Right: learning time in seconds vs. number of target examples per category for GFK (Gong 2012), MMDT (Hoffman 2013), and LS-MMDT (our approach).]

Figure 3. Medium-scale experiment: recognition rates and learning times when using the first 20 categories of the Bing/Caltech256 (source/target) dataset.
Times of ARC-t [12] and HFA [5] are off-scale (12 min and 55 min for 10 target points per category).

[Figure 4 plots. Left (Bing/Caltech256): average recognition rate vs. number of examples in the target domain for SVM-Target, SVM-Source, GFK (Gong 2012), LS-MMDT (our approach), T-SVM (Bergamo 2010), DW-SVM, PMC (Minmin 2011), Gopalan 2011, and Best SVM (Bergamo 2010). Right (ImageNet/SUN2012): average recognition rate (bounding boxes only) vs. number of examples in the target domain for SVM-Target, SVM-Source, GFK (Gong 2012), and LS-MMDT (our approach).]

Figure 4. Large-scale experiment with the Bing/Caltech256 domain shift (76K source examples; left) and the ImageNet/SUN2012 domain shift (8K source examples; right) for a varying number of target examples.

5.2. Comparison to other adaptation methods
We first evaluate our approach on a medium-scale dataset comprising the first 20 categories of the Bing/Caltech dataset. This setup is also used in [9] and allows us to compare our new optimization technique with the one used by [9], as well as with other state-of-the-art domain adaptation methods [12, 5, 7]. We use the data splits provided by [3], and the Bing dataset is used as the source domain with 50 source examples per category. Figure 3 plots the recognition results (left) and the training time (right) with respect to the number of target training examples per category in the Caltech dataset. As Figure 3 shows, our solver is significantly faster than the one used in [9] and achieves the same recognition accuracy. Furthermore, it outperforms other state-of-the-art methods, such as ARC-t [12], HFA [5], and GFK [7], in both learning time and recognition accuracy.

5.3. Experiments with a large number of categories
In the next experiment, we use the Bing/Caltech256 dataset [3] with all 256 categories and our ImageNet/SUN2012 subset, settings in which the optimization techniques used in [9] cannot be applied due to the large number of target training examples. Furthermore, we test the performance of our method on the new domain dataset presented in Sect. 4, and we restrict the comparison to methods that provide generalization to new categories.

The results are given in Figure 4, and we see that we again outperform the geodesic flow method of [7] in both cases. Focusing on the right plot (ImageNet/SUN2012 dataset), notice that our method continues to have a performance benefit over SVM-Target even as the number of labeled target examples increases. This is due to the small number of training examples available for several of the categories, which is typical for real-world datasets [15]. Providing more labeled training data is only possible for some of the categories, and without adaptation the recognition rates of less common classes cannot be improved.

[Figure 6 plots. Left: average recognition rate of new categories (bounding boxes only) vs. number of provided labels in the target domain for SVM-Target (Oracle), LS-MMDT (Oracle), SVM-Source, and transformation learned from held-out categories (LS-MMDT). Right: number of labeled target examples needed before SVM-Target reaches equal accuracy, for LS-MMDT (O*), LS-MMDT, and SVM-Source.]

Figure 6. New category scenario: our approach is used to learn a transformation from held-out categories and to transfer new category models directly from the source domain without target examples. The performance is compared to an oracle SVM-Target and MMDT that use target examples from the held-out categories.
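
The new-category results in Figure 6 rely on mapping a source classifier through the learned transformation, as in Eq. (13). The NumPy sketch below uses random stand-in data, and the helper name `transfer_classifier` is hypothetical. It shows that for a new category with source hyperplane $v$, the target-domain classifier $W^T v = \sum_i \beta_i (v_i^T v)$ can be computed from the low-rank factors without forming $W$, even when source and target dimensions differ. The sizes are assumptions chosen for illustration: 1000 and 1500 match the BoW dimensions of Sect. 5.5, and $m = 73$ corresponds to the 84 categories minus the 11 held out.

```python
import numpy as np

rng = np.random.default_rng(0)
m, D, D_t = 73, 1000, 1500             # training hyperplanes, source/target dims
V = rng.standard_normal((m, D))        # rows v_i: hyperplanes used to learn W
B = rng.standard_normal((m, D_t))      # rows beta_i: stand-in for learned factors

def transfer_classifier(v_new, V, B):
    """Target-domain classifier W^T v_new = sum_i beta_i (v_i^T v_new).

    Costs O(m * (D + D_t)) instead of O(D * D_t) with an explicit W.
    """
    return B.T @ (V @ v_new)

v_new = rng.standard_normal(D)         # source classifier of a new category
v_tilde = transfer_classifier(v_new, V, B)
x_t = rng.standard_normal(D_t)         # a target example in its own feature space
score = v_tilde @ x_t                  # equals v_new^T W x_t

W = V.T @ B                            # explicit W (D x D_t), built only to check
print(v_tilde.shape)                   # (1500,)
print(np.isclose(score, v_new @ W @ x_t))
```

Because the new category never enters the optimization, its target classifier costs one pass over the $m$ factors, which is what makes transferring many source-only categories cheap.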
Figure 5 shows some of the results we obtained for in-scene classification with 700 provided target training examples, where at test time we are given ground-truth bounding boxes and context knowledge about the set of objects present in the image. The goal of the algorithm is then to assign these weak labels to the given bounding boxes. With this type of scene knowledge and by only considering images with more than one category, we obtain an accuracy of 59.21% compared to 57.53% for SVM-Target and 53.14% for SVM-Source. In contrast to [19], we are not given the exact number of objects for each category in the image, making our problem setting more difficult and realistic.

[Figure 5: example scenes with the label predicted for each given bounding box by SVM-Source, SVM-Target, and our approach.]

Figure 5. Results for object classification with given bounding boxes and scene prior knowledge: columns show the results of (1) SVM-Source, (2) SVM-Target, and (3) transform-based domain adaptation using our method. Correct classifications are highlighted with green borders. The figure is best viewed in color.

5.4. Transferring new category models
A key benefit of our method is the possibility of transferring category models to the target domain even when no target domain examples are available at all. In the following experiment, we selected 11 categories² from our ImageNet/SUN2012 dataset and only provided training examples in the source domain for them. The transformation is learned from all other categories, which have labeled examples in both the target and the source domain.

² laptop, phone, toaster, keyboard, fan, printer, teapot, chair, basket, clock, bottle

As we can see in Figure 6, this transfer method ("Transf. learned from other categories") even outperforms learning in the target domain (SVM-Target Oracle) with up to 100 labeled training examples. Especially with large-scale datasets like ImageNet, this ability of our fast transform-based adaptation method provides a huge advantage and allows using all visual categories provided in the source as well as in the target domain. Furthermore, the experiment shows that we indeed learn a category-invariant transformation that can compensate for the observed dataset bias [18].

5.5. Adapting from different feature types
Transform-based domain adaptation can also be applied when the source and target domains have different feature dimensionality. To show the applicability of our method in this setting, we use the same setup as in the previous experiment, but we computed 1500-dimensional BoW features for objects in the SUN2012 dataset and learned a transformation from the 1000-dimensional features in the ImageNet dataset. Adaptation with our approach achieves a recognition rate of 18.2% compared to 16.9% for SVM-Target using one target training example per category. This can be seen as one of the most difficult adaptation scenarios, where we estimate the domain transformation from different categories and between completely different feature spaces.

6. Conclusions
In this paper, we showed how to extend transform-based domain adaptation towards large-scale scenarios.
Our method allows for efficient estimation of a category-invariant domain transformation in cases with high feature dimensionality and a large number of training examples. This is done by exploiting an implicit low-rank structure of the transformation and by making explicit use of a close connection to standard max-margin problems and the efficient optimization techniques available for them. Our method is easy to implement and apply, and it achieves significant performance gains when adapting visual recognition models learned from biased internet sources to real-world scene understanding datasets.

An important take-home message of this paper is that collecting more and more annotated visual data does not necessarily help to solve scene understanding in general. However, domain adaptation can help to bridge the gap by learning category-invariant transformations without significant additional computational overhead.

References

[1] Y. Aytar and A. Zisserman. Tabula rasa: Model transfer for object category detection. In Proc. ICCV, 2011.
[2] A. Berg, J. Deng, and L. Fei-Fei. Large scale visual recognition challenge, 2010. http://www.imagenet.org/challenges/LSVRC/2010/.
[3] A. Bergamo and L. Torresani. Exploiting weakly-labeled web images to improve object classification: a domain adaptation approach. In Proc. NIPS, 2010.
[4] J. Deng, W. Dong, R. Socher, L.-J. Li, K. Li, and L. Fei-Fei. ImageNet: A large-scale hierarchical image database. In Proc. CVPR, pages 248–255, 2009.
[5] L. Duan, D. Xu, and I. W. Tsang. Learning with augmented features for heterogeneous domain adaptation. In Proc. ICML, 2012.
[6] R.-E. Fan, K.-W. Chang, C.-J. Hsieh, X.-R. Wang, and C.-J. Lin. LIBLINEAR: A library for large linear classification. JMLR, 9:1871–1874, 2008.
[7] B. Gong, Y. Shi, F. Sha, and K. Grauman. Geodesic flow kernel for unsupervised domain adaptation. In Proc. CVPR, 2012.
[8] R. Gopalan, R. Li, and R. Chellappa. Domain adaptation for object recognition: An unsupervised approach. In Proc. ICCV, 2011.
[9] J. Hoffman, E. Rodner, J. Donahue, T. Darrell, and K. Saenko. Efficient learning of domain-invariant image representations. In Proc. ICLR, 2013.
[10] C.-J. Hsieh, K.-W. Chang, C.-J. Lin, S. S. Keerthi, and S. Sundararajan. A dual coordinate descent method for large-scale linear SVM. In Proc. ICML, 2008.
[11] A. Khosla, T. Zhou, T. Malisiewicz, A. A. Efros, and A. Torralba. Undoing the damage of dataset bias. In Proc. ECCV, 2012.
[12] B. Kulis, K. Saenko, and T. Darrell. What you saw is not what you get: Domain adaptation using asymmetric kernel transforms. In Proc. CVPR, 2011.
[13] S. J. Pan and Q. Yang. A survey on transfer learning. Transactions on Knowledge and Data Engineering, 22:1345–1359, 2010.
[14] K. Saenko, B. Kulis, M. Fritz, and T. Darrell. Adapting visual category models to new domains. In Proc. ECCV, pages 213–226, 2010.
[15] R. Salakhutdinov, A. Torralba, and J. Tenenbaum. Learning to share visual appearance for multiclass object detection. In Proc. CVPR, pages 1481–1488, 2011.
[16] T. Tommasi and B. Caputo. The more you know, the less you learn: from knowledge transfer to one-shot learning of object categories. In Proc. BMVC, 2009.
[17] T. Tommasi, N. Quadrianto, B. Caputo, and C. H. Lampert. Beyond dataset bias: Multi-task unaligned shared knowledge transfer. In Proc. ACCV, 2012.
[18] A. Torralba and A. Efros. Unbiased look at dataset bias. In Proc. CVPR, 2011.
[19] G. Wang, D. Forsyth, and D. Hoiem. Comparative object similarity for improved recognition with few or no examples. In Proc. CVPR, pages 3525–3532, 2010.
[20] J. Xiao, J. Hays, K. Ehinger, A. Oliva, and A. Torralba. SUN database: Large-scale scene recognition from abbey to zoo. In Proc. CVPR, pages 3485–3492, 2010.
[21] J. Yang, R. Yan, and A. G. Hauptmann. Adapting SVM classifiers to data with shifted distributions. In Proc. ICDMW, pages 69–76, 2007.
[22] J. Yang, R. Yan, and A. G. Hauptmann. Cross-domain video concept detection using adaptive SVMs. ACM Multimedia, 2007.
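The experiments in Sections 5.3 to 5.5 all score target-domain features with source-domain linear models through a single learned transformation W. The following NumPy sketch illustrates that pipeline under several assumptions that are not taken from the paper. First, W maps target features into the source feature space, so it would be 1000 × 1500 in the setting of Section 5.5. Second, W is stored as two factors theta and z of rank at most K, a simplified stand-in for the implicit low-rank structure mentioned in the conclusions. Third, the class and function names (LowRankTransform, transfer_scores, predict_with_scene_prior) are hypothetical, and random factors replace a learned W. Finally, the scene prior of Section 5.3 is modeled as restricting each box's prediction to the labels known to be present, which is one plausible reading of that setup rather than the authors' procedure.

```python
import numpy as np


class LowRankTransform:
    """Hypothetical target-to-source map W = theta @ z.T kept in factored form.

    Assumption: W is a sum of K outer products, so rank(W) <= K and the dense
    d_source x d_target matrix never has to be built or stored.
    """

    def __init__(self, theta: np.ndarray, z: np.ndarray):
        if theta.shape[1] != z.shape[1]:
            raise ValueError("theta and z must share the inner dimension K")
        self.theta = theta  # shape (d_source, K)
        self.z = z          # shape (d_target, K)

    def apply(self, x_target: np.ndarray) -> np.ndarray:
        """Map target rows of shape (n, d_target) to source rows (n, d_source)."""
        # Computes X @ W.T as (X @ z) @ theta.T, i.e. O(n * K * (d_source + d_target))
        # operations instead of O(n * d_source * d_target) for a dense W.
        return (x_target @ self.z) @ self.theta.T


def transfer_scores(transform, source_w, source_b, x_target):
    """Score target examples with linear models trained on source data only.

    source_w has shape (C, d_source) and source_b shape (C,); these may belong
    to categories that had no target examples when W was learned.
    """
    return transform.apply(x_target) @ source_w.T + source_b


def predict_with_scene_prior(scores, present_labels):
    """Pick, for each box, the best-scoring label among those known to be present."""
    allowed = np.asarray(sorted(present_labels))
    return allowed[np.argmax(scores[:, allowed], axis=1)]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    d_source, d_target = 1000, 1500  # feature sizes from Section 5.5
    k, n_new = 20, 11                # hypothetical rank; 11 new categories as in Section 5.4
    # Random factors stand in for a transformation learned on other categories.
    transform = LowRankTransform(rng.standard_normal((d_source, k)),
                                 rng.standard_normal((d_target, k)))
    source_w = rng.standard_normal((n_new, d_source))
    source_b = np.zeros(n_new)
    boxes = rng.standard_normal((4, d_target))  # four target bounding boxes
    scores = transfer_scores(transform, source_w, source_b, boxes)
    print(scores.shape)  # (4, 11)
    print(predict_with_scene_prior(scores, {2, 5}))  # each entry is 2 or 5
```

Keeping W factored is what makes the heterogeneous setting cheap in this sketch: a dense 1000 × 1500 matrix has 1,500,000 entries, while the two factors with the hypothetical K = 20 hold (1000 + 1500) × 20 = 50,000.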
