On Generalized Bayesian Data Fusion with Complex Models in Large Scale Networks


Authors: Nisar Ahmed, Tsung-Lin Yang, Mark Campbell

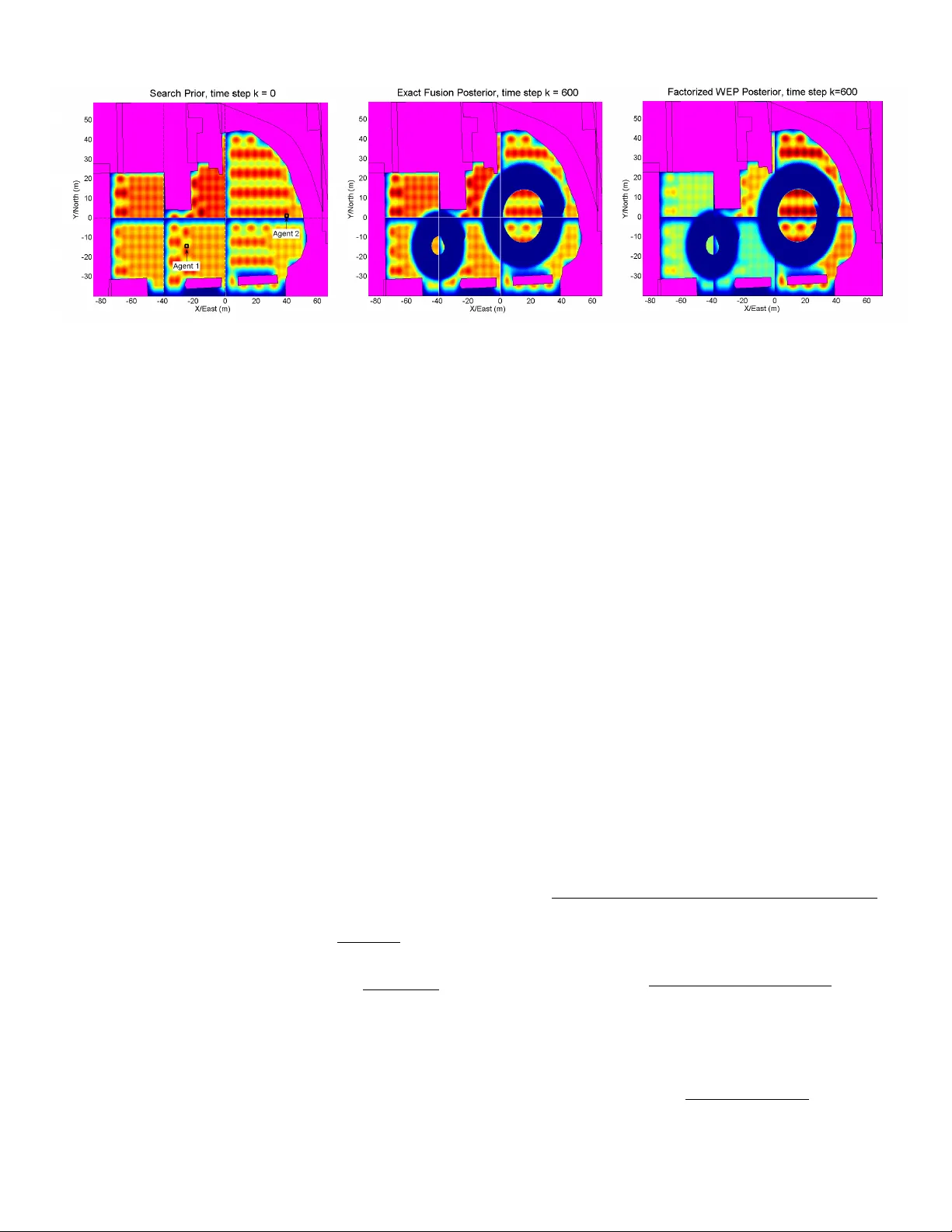

Nisar Ahmed∗, Tsung-Lin Yang†, and Mark Campbell∗

∗Sibley School of Mechanical and Aerospace Engineering, Cornell University, Ithaca, NY 14853, email: (nra6, mc288)@cornell.edu

†TaggPic, Ithaca, NY 14853, email: yangchuck@gmail.com

Abstract. Recent advances in communications, mobile computing, and artificial intelligence have greatly expanded the application space of intelligent distributed sensor networks. This in turn motivates the development of generalized Bayesian decentralized data fusion (DDF) algorithms for robust and efficient information sharing among autonomous agents using probabilistic belief models. However, DDF is significantly challenging to implement for general real-world applications requiring the use of dynamic/ad hoc network topologies and complex belief models, such as Gaussian mixtures or hybrid Bayesian networks. To tackle these issues, we first discuss some new key mathematical insights about exact DDF and conservative approximations to DDF. These insights are then used to develop novel generalized DDF algorithms for complex beliefs based on mixture pdfs and conditional factors. Numerical examples motivated by multi-robot target search demonstrate that our methods lead to significantly better fusion results, and thus have great potential to enhance distributed intelligent reasoning in sensor networks.

I. INTRODUCTION

Intelligent robotic sensor networks have drawn considerable interest for applications like environmental monitoring, surveillance, search and rescue, and scientific exploration. To operate autonomously in the face of real-world uncertainties, individual robots in such networks typically rely on perception algorithms rooted in Bayesian estimation methods [1].
These not only permit robots to make intelligent local decisions amid noisy data and complex dynamics, but also enable them to efficiently gather and share information with each other, which greatly improves perceptual robustness and task performance.

The Bayesian distributed data fusion (DDF) paradigm provides a particularly strong foundation for fully decentralized information sharing and perception in autonomous robot networks. In theory, DDF is mathematically equivalent to an idealized centralized Bayesian data fusion strategy (in which all raw sensor data is sent to a single location for maximum information extraction), but it is far more computationally efficient, scalable, and robust to sensor network node failures through the use of recursive peer-to-peer message passing [2]. These properties have been successfully demonstrated for target search and tracking applications in large outdoor environments using wirelessly linked dynamic networks of autonomous/semi-autonomous UAVs [3], [4].

Despite its merits, DDF is generally difficult to implement for three important reasons. Firstly, in order to maintain consistent network agent beliefs and avoid ‘rumor propagation’ (i.e. double-counting old information as new information), either exact information pedigree tracking [5], [4] or conservative fusion [6] must be used. Each approach has different performance/robustness tradeoffs: exact pedigree tracking is optimal but computationally expensive for robust network communication topologies (i.e. loopy/ad hoc networks), whereas conservative fusion suboptimally loses some new information to ensure consistency under any topology. Secondly, both exact and conservative DDF methods yield analytically intractable results whenever local agent beliefs contain complex non-exponential-family pdfs, e.g. Gaussian mixtures, which are commonly used for nonlinear estimation.
Various approximations have been proposed to address this issue [6], [4], [7], [8], but these can be very inaccurate and do not scale well to large problem spaces. Thirdly, the DDF message passing protocol nominally requires each network node to exchange its entire local copy of the full joint state pdf with neighbors, which can lead to expensive processing requirements for high-dimensional pdfs in large/densely connected networks.

We propose novel solutions for the second and third issues. Specifically, we present new mathematical insights about exact and conservative DDF methods that lead to: (i) flexible ‘factorized DDF’ updates, which greatly simplify communication and processing requirements for fusion with complex joint state pdfs, and (ii) accurate approximations for recursive fusion with finite mixture models over continuous random variables. Numerical examples in the context of large-scale static target search show how our proposed methods lead to lower processing costs and more accurate fusion results compared to conventional DDF implementations. These results point to another interesting link between sensor networks and the powerful probabilistic graphical modeling framework (Bayes nets, MRFs, factor graphs, etc.); this can be exploited to develop novel tightly coupled perception and planning algorithms that enable decentralized mobile sensor networks to cope with complex uncertainties more efficiently and robustly.

II. BACKGROUND

A. Bayesian DDF Problem Formulation

Let $x_k$ be a $d$-dimensional dynamic state vector of continuous and/or discrete random variables to be estimated by a decentralized network of $N_A$ autonomous agents.
Assume each agent $i \in \{1, \ldots, N_A\}$ can perform local recursive Bayesian updates on a common prior pdf $p_0(x)$ with sensor data $D^i_k$ having likelihood $p(D^i_k \mid x)$ at discrete time step $k > 0$, so that

$$p_i(x \mid D^i_{1:k}) \propto p_i(x \mid D^i_{1:k-1})\, p(D^i_k \mid x), \tag{1}$$

where $p_i(x \mid D^i_{1:k-1}) = p_0(x)$ for $k = 1$. For brevity, the LHS of (1) is hereafter denoted as $p_i(x_k)$ (i.e. conditioning on available observations is always implied). Given any node-to-node communication topology at $k$, assume $i$ is aware only of its connected neighbors and is unaware of the complete network topology. Let $N(i,k)$ denote the set of neighbors $i$ receives information from at time $k$, and let $Z^i_k$ denote the set of information received by $i$ up to time $k$, i.e. $D^i_{1:k}$ and information previously sent to $i$ by other agents. The DDF problem is for each agent $i$ to find the fused information pdf

$$p_f(x_k) \equiv p_i\big(x_k \mid Z^i_k \cup Z^{N(i,k)}_k\big). \tag{2}$$

Without loss of generality, assume $i$ computes (2) in a recursive ‘first in, first out’ manner for each $j \in N(i,k)$. It is easy to show that $p_i(x_k) \propto p(x_k \mid Z^i_k \cap Z^j_k)\, p(x_k \mid Z^{i/j}_k)$, where $Z^{i/j}_k$ is the exclusive information at agent $i$ with respect to $j$ and $p(x_k \mid Z^i_k \cap Z^j_k) \equiv p_c(x_k)$ is the common information pdf between $i$ and $j$. Using this fact, [2] shows that (2) can be exactly recovered via a distributed variant of Bayes' rule,

$$p_f(x_k) = p_i(x_k \mid Z^i_k \cup Z^j_k) \propto \frac{p_i(x_k)\, p_j(x_k)}{p_c(x_k)}. \tag{3}$$

Note that $D^i_{1:k}$ and $D^j_{1:k}$ never need to be sent; $i$ and $j$ need only exchange their latest local pdfs for $x$, which compactly summarize all knowledge received from local sensor data and from network neighbors. To maintain consistency, (3) requires explicit tracking of $p_c(x_k)$, which can be handled through exact fusion algorithms like the channel filter [2], [4] or information graphs [5].
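The distributed Bayes rule (3) can be checked numerically on a small discrete grid. The sketch below is a minimal illustration, not the authors' implementation: it assumes two agents that share only the prior $p_0(x)$ (so that $p_c = p_0$), and the grid and likelihood values are made up. It confirms that (3) reproduces the centralized posterior, whereas the naive product $p_i p_j$ counts the prior twice.

```python
def normalize(p):
    """Rescale a list of nonnegative weights so that it sums to 1."""
    total = sum(p)
    return [v / total for v in p]


def bayes_update(prior, likelihood):
    """Eq. (1) on a discrete grid: posterior is proportional to prior times likelihood."""
    return normalize([a * b for a, b in zip(prior, likelihood)])


def exact_ddf(p_i, p_j, p_c):
    """Eq. (3): fused pdf proportional to p_i * p_j / p_c (p_c must be positive)."""
    return normalize([a * b / c for a, b, c in zip(p_i, p_j, p_c)])


# Hypothetical 5-cell grid with a non-uniform common prior.
p0 = normalize([1.0, 2.0, 3.0, 2.0, 1.0])
lik_i = [0.9, 0.7, 0.4, 0.2, 0.1]  # made-up likelihood of agent i's data
lik_j = [0.2, 0.5, 0.8, 0.5, 0.2]  # made-up likelihood of agent j's data

p_i = bayes_update(p0, lik_i)
p_j = bayes_update(p0, lik_j)

fused = exact_ddf(p_i, p_j, p0)  # agents share only the prior, so p_c = p0
central = bayes_update(p0, [a * b for a, b in zip(lik_i, lik_j)])
naive = normalize([a * b for a, b in zip(p_i, p_j)])  # double-counts p0

print([round(v, 4) for v in fused])
print([round(v, 4) for v in central])
```

With a uniform prior the naive product would happen to coincide with the centralized result, which is why the sketch uses a non-uniform $p_0$.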
These methods are generally infeasible for dynamic ad hoc topologies, in which case suboptimal conservative approximations to (3), such as the weighted exponential product (WEP) rule, can be used instead to guarantee consistent fusion without knowing $p_c(x_k)$,

$$p_{f,\mathrm{WEP}}(x_k) \propto [p_i(x_k)]^{\omega}\,[p_j(x_k)]^{1-\omega}, \quad \omega \in [0,1]. \tag{4}$$

This trades off the amount of new information fused from $p_i(x_k)$ and $p_j(x_k)$ as a function of $\omega$, which can be optimized according to various convex information-theoretic cost metrics [6], [8]. If only simple exponential family pdfs like Gaussians are required for estimation, then (3) and (4) always yield closed-form results that can be readily implemented via the exchange and manipulation of sufficient statistics. Unfortunately, this is not the case for more complex pdfs such as Gaussian mixtures (GMs), which are widely used for nonlinear estimation applications. For example, consider a 2D Bayesian target search problem where $x \in \mathbb{R}^2$ is the unknown location of a target in a large search space. As discussed in [9], a decentralized team of mobile robots can use local optimal control laws in tandem with Bayesian DDF to efficiently reduce the uncertainty in $x$. Figure 1 shows a simple example of a finite GM prior $p_0(x)$, along with a binary visual ‘detection/no detection’ sensor model for an autonomous mobile robot and a finite GM approximation to (1),

$$p_i(x \mid D^i_{1:k}) \approx \sum_{q=1}^{M_i} w^i_q\, \mathcal{N}(x; \mu^i_q, \Sigma^i_q), \tag{5}$$

where $M_i$ is the number of mixands, $\mu^i_q$ and $\Sigma^i_q$ are mixand $q$'s mean and covariance matrix, and $w^i_q \in [0,1]$ is mixand $q$'s weight (s.t. $\sum_{q=1}^{M_i} w^i_q = 1$).

Fig. 1. Bayesian GM fusion example (black/white = low/high probability; magenta circle shows robot's position): (a) prior GM pdf $p_0(x)$, (b) binary visual detector model $p(D \mid x)$, (c) Bayesian posterior GM pdf $p(x \mid D)$.
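For scalar Gaussians, the WEP rule (4) has a closed form: raising a Gaussian to a power scales its precision, so the fused pdf is again Gaussian with information-weighted parameters (the scalar form of the covariance intersection rule). The sketch below assumes 1D Gaussians and a hand-picked $\omega$ rather than one optimized with the metrics of [6], [8]; it computes the closed form and cross-checks it against brute-force grid moments of $[p_i]^{\omega}[p_j]^{1-\omega}$.

```python
import math


def gauss_pdf(x, mu, var):
    """Scalar Gaussian density N(x; mu, var)."""
    return math.exp(-0.5 * (x - mu) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def wep_gaussian_1d(mu_i, var_i, mu_j, var_j, omega):
    """Eq. (4) for scalar Gaussians: returns (mean, variance) of the fused Gaussian.

    Exponentiating a Gaussian scales its precision, so the fused precision is
    omega / var_i + (1 - omega) / var_j.
    """
    if not 0.0 <= omega <= 1.0:
        raise ValueError("omega must lie in [0, 1]")
    info = omega / var_i + (1.0 - omega) / var_j
    var = 1.0 / info
    mean = var * (omega * mu_i / var_i + (1.0 - omega) * mu_j / var_j)
    return mean, var


def grid_moments(f, lo, hi, n):
    """Brute-force mean and variance of an unnormalized 1D density on a uniform grid."""
    xs = [lo + (hi - lo) * k / (n - 1) for k in range(n)]
    ws = [f(x) for x in xs]
    total = sum(ws)
    mean = sum(w * x for w, x in zip(ws, xs)) / total
    var = sum(w * (x - mean) ** 2 for w, x in zip(ws, xs)) / total
    return mean, var


omega = 0.3  # hand-picked here; [6], [8] describe ways to optimize it
closed = wep_gaussian_1d(0.0, 1.0, 2.0, 0.5, omega)
brute = grid_moments(
    lambda x: gauss_pdf(x, 0.0, 1.0) ** omega * gauss_pdf(x, 2.0, 0.5) ** (1 - omega),
    -10.0, 12.0, 20001)
print(closed, brute)
```

The fused variance is never smaller than that of the naive product $p_i p_j$, which is what makes the rule conservative when $p_c$ is unknown.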
Since substitution of (5) into (3) or (4) leads to non-closed-form fusion pdfs, we must find tractable yet accurate approximations to implement DDF with GMs. In particular, if $p_f(x_k)$ and $p_{f,\mathrm{WEP}}(x_k)$ can always be closely approximated by GMs, then the recursive form of eqs. (1), (3) and (4) can be (approximately) maintained. To this end, [7] derived a closed-form GM approximation to $p_f(x_k)$ that replaces the GM pdf $p_c(x_k)$ with a single moment-matched Gaussian, while [6] proposed a GM approximation to $p_{f,\mathrm{WEP}}(x_k)$ that is based on the covariance intersection rule for Gaussian pdfs. Although fast and convenient, both methods rely on strong heuristic assumptions that lead to poor approximations of (3) and (4) whenever $p_i(x)$, $p_j(x)$, or $p_c(x)$ are highly non-Gaussian. Refs. [4], [8] proposed more rigorous approximations to (3) and (4) that use weighted Monte Carlo particle sets, which can be converted into GM pdfs via the expectation-maximization (EM) algorithm. However, these methods are computationally expensive for online operations, since they require a large number of particles and multiple EM initializations for robustness. Another concern is that the exchange of each agent's full local state pdf copy in (3) and (4) can lead to high communication and processing costs if either the state dimension is not fixed or if the full state pdf grows more complex over time due to nonlinear/non-Gaussian dynamics or observation models, e.g. as in Fig. 1(a) and (c).

III. FACTORIZED DDF

We propose a novel way to implement eqs. (3) and (4) that allows agents to selectively exchange partial copies of complex state pdfs $p_i(x_k)$ and $p_j(x_k)$, so that relevant new information about different subsets of $x$ can be shared more efficiently.
This is accomplished by rewriting (3) and (4) in terms of conditional dependencies within $x$, so that $p_i(x_k)$ and $p_j(x_k)$ factor into smaller conditional pdfs that are easier to communicate and process.

A. Factorized Exact DDF

Suppose $x_k = [x^1_k, \ldots, x^d_k]$; let $\bar{x}^s_k$ represent an arbitrary grouping of sub-states of $x_k$, such that $\bigcup_{s=1}^{N_s} \bar{x}^s_k = x_k$ and $\bar{x}^{s_1}_k \cap \bar{x}^{s_2}_k = \emptyset$ for all $s_1 \neq s_2$ (the ordering of states in each grouping is unimportant, but each $\bar{x}^s_k$ contains at least 1 state). Then the chain rule of probability implies that

$$p_i(x_k) = p_i(\bar{x}^1_k \mid \bar{x}^2_k, \ldots, \bar{x}^{N_s}_k)\, p_i(\bar{x}^2_k \mid \bar{x}^3_k, \ldots, \bar{x}^{N_s}_k) \cdots p_i(\bar{x}^{N_s}_k) = \left(\prod_{s=1}^{N_s-1} p_i(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)\right) p_i(\bar{x}^{N_s}_k),$$

where all terms are implicitly conditioned on $Z^i_k$. Applying this factorization to (3) gives

$$p_f(x_k) \propto \frac{\prod_{w \in \{i,j\}} \left(\prod_{s=1}^{N_s-1} p_w(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)\right) p_w(\bar{x}^{N_s}_k)}{\left(\prod_{s=1}^{N_s-1} p_c(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)\right) p_c(\bar{x}^{N_s}_k)}.$$

Grouping like terms together and simplifying yields

$$p_f(x_k) \propto \left(\prod_{s=1}^{N_s-1} \frac{p_i(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)\, p_j(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)}{p_c(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)}\, \eta(\bar{x}^{1:s}_k)\right) \times \frac{p_i(\bar{x}^{N_s}_k)\, p_j(\bar{x}^{N_s}_k)}{p_c(\bar{x}^{N_s}_k)}, \tag{6}$$

where, as in (16) below, $\eta(\cdot)$ denotes the denormalization term needed when each conditional ratio is normalized locally. Thus, the original DDF update for a single $d$-dimensional joint pdf is equivalent to $N_s \le d$ separate conditional DDF updates. This means that agents $i$ and $j$ can exchange information about various ‘chunks’ of the state pdf, e.g. the latest pdfs for $(x^2, x^3 \mid x^4, x^5, \ldots, x^d)$ and $(x^{d-1} \mid x^d)$ may be fused for certain values of $x^4, x^5, \ldots, x^d$ during one exchange, while other conditional factors are fused during other exchanges.

B. Factorized WEP DDF

The factorization principle extends to approximate WEP DDF for dynamic ad hoc network topologies, where exact tracking and removal of $p_c(x_k)$ is infeasible. This follows from the fact that eq.
(4) can be rewritten as

$$p_{f,\mathrm{WEP}}(x_k) \propto \frac{p_i(x_k)\, p_j(x_k)}{[p_i(x_k)]^{1-\omega}\,[p_j(x_k)]^{\omega}} \propto \frac{p_i(x_k)\, p_j(x_k)}{\hat{p}_c(x_k; \omega)}, \tag{7}$$

where $\hat{p}_c(x_k; \omega) \propto [p_i(x_k)]^{1-\omega}\,[p_j(x_k)]^{\omega}$ can be thought of as a conservative estimate of the common information pdf. This simple yet novel insight allows us to write, as in eq. (6),

$$p_{f,\mathrm{WEP}}(x_k) \propto \left(\prod_{s=1}^{N_s-1} \frac{p_i(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)\, p_j(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)}{\hat{p}_c(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k; \omega)}\right) \cdot \frac{p_i(\bar{x}^{N_s}_k)\, p_j(\bar{x}^{N_s}_k)}{\hat{p}_c(\bar{x}^{N_s}_k; \omega)}, \tag{8}$$

where each denominator term is a conservative estimate of a conditional common information pdf. Note that (7) and (8) nominally imply that these terms share the same $\omega$, since

$$\hat{p}_c(x^1_k, \ldots, x^d_k) \propto [p_i(\bar{x}^1_k, \ldots, \bar{x}^{N_s}_k)]^{1-\omega}\,[p_j(\bar{x}^1_k, \ldots, \bar{x}^{N_s}_k)]^{\omega} \propto \prod_{s=1}^{N_s-1} [p_i(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)]^{1-\omega}\,[p_j(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)]^{\omega} \times [p_i(\bar{x}^{N_s}_k)]^{1-\omega}\,[p_j(\bar{x}^{N_s}_k)]^{\omega}. \tag{9}$$

However, it is possible to specify a separate $\omega$ parameter for each estimated conditional common information term, i.e.

$$\hat{p}_c(x^1_k, \ldots, x^d_k) \propto \prod_{s=1}^{N_s-1} [p_i(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)]^{1-\omega_{s|s+1:N_s}}\,[p_j(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)]^{\omega_{s|s+1:N_s}} \times [p_i(\bar{x}^{N_s}_k)]^{1-\omega_{N_s}}\,[p_j(\bar{x}^{N_s}_k)]^{\omega_{N_s}} \propto \prod_{s=1}^{N_s-1} \hat{p}_c(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k; \omega_{s|s+1:N_s}) \cdot \hat{p}_c(\bar{x}^{N_s}_k; \omega_{N_s}), \tag{10}$$

so that the WEP update can be generally expressed as

$$p_{f,\mathrm{WEP}}(x_k) \propto \left(\prod_{s=1}^{N_s-1} \frac{p_i(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)\, p_j(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k)}{\hat{p}_c(\bar{x}^s_k \mid \bar{x}^{s+1:N_s}_k; \omega_{s|s+1:N_s})}\right) \times \frac{p_i(\bar{x}^{N_s}_k)\, p_j(\bar{x}^{N_s}_k)}{\hat{p}_c(\bar{x}^{N_s}_k; \omega_{N_s})}. \tag{11}$$

The parameters $\omega_{s|s+1:N_s}$ and $\omega_{N_s}$ can be separately optimized using the information-theoretic cost metrics described in [8], [6].
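Both factorized rules can be checked on a toy discrete model with $N_s = 2$ groupings: a child variable $a$ and a conditioning variable $b$. The sketch below uses hypothetical random tables (not from the paper). For exact DDF it fuses each conditional $p(a \mid b)$ and the marginal $p(b)$ separately, and shows that the result matches whole-joint fusion (3) only if each locally normalized conditional is multiplied back by its denormalization term $\eta(b) = \sum_a p_i(a \mid b)\,p_j(a \mid b)/p_c(a \mid b)$, in the sense of (16). For WEP DDF, each factor is fused in the equivalent form $[p_i]^{\omega}[p_j]^{1-\omega}$ (cf. (4) and (7)); a shared $\omega$ reproduces whole-joint WEP as in (9), while separate, hand-picked (not optimized) weights as in (11) give a different but still valid fused pmf.

```python
import random

A, B = range(3), range(2)  # hypothetical child a and conditioning variable b
KEYS = [(a, b) for a in A for b in B]


def normalize(d):
    """Rescale a dict of nonnegative weights so that its values sum to 1."""
    total = sum(d.values())
    return {k: v / total for k, v in d.items()}


def marginal_b(joint):
    return {b: sum(joint[(a, b)] for a in A) for b in B}


def conditional_a_given_b(joint):
    mb = marginal_b(joint)
    return {(a, b): joint[(a, b)] / mb[b] for a, b in KEYS}


def fuse_exact_factorized(p_i, p_j, p_c, use_eta=True):
    """Eq. (6) with N_s = 2: fuse p(a | b) and p(b) separately, then recombine."""
    ci, cj, cc = (conditional_a_given_b(p) for p in (p_i, p_j, p_c))
    mi, mj, mc = (marginal_b(p) for p in (p_i, p_j, p_c))
    fused = {}
    for b in B:
        ratio = {a: ci[(a, b)] * cj[(a, b)] / cc[(a, b)] for a in A}
        eta = sum(ratio.values())      # denormalization term for this value of b
        marg = mi[b] * mj[b] / mc[b]   # fused factor for p(b)
        for a in A:
            cond = ratio[a] / eta      # locally normalized p_f(a | b)
            fused[(a, b)] = cond * (eta if use_eta else 1.0) * marg
    return normalize(fused)


def wep_factorized(p_i, p_j, omega_cond, omega_marg):
    """Eq. (11) with N_s = 2; omega_cond[b] weights the factor p(a | b)."""
    ci, cj = conditional_a_given_b(p_i), conditional_a_given_b(p_j)
    mi, mj = marginal_b(p_i), marginal_b(p_j)
    return normalize({
        (a, b): ci[(a, b)] ** omega_cond[b] * cj[(a, b)] ** (1.0 - omega_cond[b])
        * mi[b] ** omega_marg * mj[b] ** (1.0 - omega_marg)
        for a, b in KEYS
    })


rng = random.Random(0)
p_c = normalize({k: rng.random() + 0.1 for k in KEYS})
p_i = normalize({k: p_c[k] * (rng.random() + 0.1) for k in KEYS})
p_j = normalize({k: p_c[k] * (rng.random() + 0.1) for k in KEYS})

# Exact DDF: whole-joint rule (3) versus factorized rule (6), with and without eta.
whole_exact = normalize({k: p_i[k] * p_j[k] / p_c[k] for k in KEYS})
fact_exact = fuse_exact_factorized(p_i, p_j, p_c)
fact_no_eta = fuse_exact_factorized(p_i, p_j, p_c, use_eta=False)

# WEP DDF: whole-joint rule (4) versus (11) with a shared and with separate omegas.
whole_wep = normalize({k: p_i[k] ** 0.4 * p_j[k] ** 0.6 for k in KEYS})
fact_wep_shared = wep_factorized(p_i, p_j, {b: 0.4 for b in B}, 0.4)
fact_wep_separate = wep_factorized(p_i, p_j, {0: 0.9, 1: 0.2}, 0.4)
```

Dropping $\eta(b)$ silently reweights the values of $b$ whenever the fused conditionals have different normalizers, which is why the `use_eta=False` variant disagrees with (3).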
Beyond this, the main advantage of (11) is that $\omega_{s|s+1:N_s}$ can also be made to depend on the values of the conditioning states $\bar{x}^{s+1:N_s}_k$, which can be exploited to further minimize information loss for each conditional factor of $\bar{x}^s_k$ given $\bar{x}^{s+1:N_s}_k$.

C. Exploiting Conditional Independence

To obtain a complete state update in (6) or (11), each factor must be evaluated with respect to all possible configurations of up to $d-1$ conditioning states. This can be computationally expensive/intractable for large $d$, especially if any conditioning states are continuous or are discrete with many possible realizations. This issue can be greatly mitigated by exploiting conditional independence relationships among the state groupings $\bar{x}^s_k$. For instance, if $x_k = [\bar{x}^{\#}_k, \bar{x}^{*}_k]$, where $\bar{x}^{\#}_k$ is a partition of states that are conditionally independent of each other given another smaller partition of states $\bar{x}^{*}_k$ and sensor data, then

$$p_f(x_k) \propto \prod_{x^s_k \in \bar{x}^{\#}_k} \frac{p_i(x^s_k \mid \bar{x}^{*}_k)\, p_j(x^s_k \mid \bar{x}^{*}_k)}{p_c(x^s_k \mid \bar{x}^{*}_k)}\, \eta(x^s_k, \bar{x}^{*}_k) \times \frac{p_i(\bar{x}^{*}_k)\, p_j(\bar{x}^{*}_k)}{p_c(\bar{x}^{*}_k)}\, \eta(\bar{x}^{*}_k), \tag{12}$$

where updates for $x^s_k \in \bar{x}^{\#}_k$ all depend on the same fixed number of states in $\bar{x}^{*}_k$. In many cases, $x_k$ can also be augmented with latent variables to introduce useful conditional factorizations that lead to more efficient processing. In general, this implies that factorized DDF can be quite useful whenever the local posteriors $p_i(x_k)$ and $p_j(x_k)$ can be represented via modular/hierarchical factors, such as those used in probabilistic graphical models like undirected Markov random fields (MRFs) or hybrid directed Bayesian networks (BNs) [10]. Although a full mathematical treatment is beyond the scope of this note, the following target search example gives a simple illustration of how intelligent sensor agents can leverage such probabilistic graphical models to manage and share complex hybrid information efficiently and flexibly via factorized DDF.
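To illustrate (12), the sketch below assumes a hypothetical discrete model in which two child states $a_1, a_2$ are conditionally independent given a parent $b$, and further assumes that $p_i$, $p_j$ and $p_c$ all share this structure. Each child factor is fused separately for every value of $b$, and the product of the child normalizers $\eta$ is folded into the fused parent marginal. The fused factors reproduce whole-joint fusion (3) while only handling $|B|(|A_1| + |A_2| + 1)$ table entries instead of $|A_1||A_2||B|$.

```python
import random

A1, A2, B = range(3), range(4), range(2)  # hypothetical state spaces


def normalize(v):
    total = sum(v)
    return [x / total for x in v]


def random_model(rng):
    """Hypothetical belief with a1 and a2 independent given b: (p(b), p(a1|b), p(a2|b))."""
    pb = normalize([rng.random() + 0.1 for _ in B])
    pa1 = [normalize([rng.random() + 0.1 for _ in A1]) for _ in B]
    pa2 = [normalize([rng.random() + 0.1 for _ in A2]) for _ in B]
    return pb, pa1, pa2


def joint(model):
    """Expand a factored model into the full joint table p(a1, a2, b)."""
    pb, pa1, pa2 = model
    return {(x1, x2, b): pb[b] * pa1[b][x1] * pa2[b][x2]
            for x1 in A1 for x2 in A2 for b in B}


def fuse_cond(ci, cj, cc):
    """Fuse one conditional table for a fixed b; returns (normalized table, eta)."""
    ratio = [x * y / z for x, y, z in zip(ci, cj, cc)]
    eta = sum(ratio)
    return [r / eta for r in ratio], eta


def fuse_factored(mi, mj, mc):
    """Eq. (12): fuse each child factor per value of b, then the parent marginal."""
    (bi, a1i, a2i), (bj, a1j, a2j), (bc, a1c, a2c) = mi, mj, mc
    a1f, a2f, eta = [], [], []
    for b in B:
        c1, e1 = fuse_cond(a1i[b], a1j[b], a1c[b])
        c2, e2 = fuse_cond(a2i[b], a2j[b], a2c[b])
        a1f.append(c1)
        a2f.append(c2)
        eta.append(e1 * e2)
    pbf = normalize([bi[b] * bj[b] / bc[b] * eta[b] for b in B])
    return pbf, a1f, a2f


rng = random.Random(2)
m_i, m_j, m_c = random_model(rng), random_model(rng), random_model(rng)
factored = joint(fuse_factored(m_i, m_j, m_c))

# Reference: apply eq. (3) to the full joint tables.
ji, jj, jc = joint(m_i), joint(m_j), joint(m_c)
whole_unnorm = {k: ji[k] * jj[k] / jc[k] for k in ji}
total = sum(whole_unnorm.values())
whole = {k: v / total for k, v in whole_unnorm.items()}
```

The fused result keeps the same conditional independence structure, so it can be stored and exchanged in factored form on the next round as well.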
Fig. 2. (a) Physical target search setup, showing $N_R = 6$ discrete search regions over the 2D search space; (b) hybrid BN model used by each agent.

Fig. 4. KLD losses for factorized and whole joint WEP DDF vs. $\omega_R$.

D. Target Search Example with Hybrid State Model

Figure 2(a) shows the physical setup for a search problem in which multiple mobile robots are looking for a static object in a large open space (Cornell's Engineering Quadrangle). Here, target coordinates $x^1$ and $x^2$ are grouped together into the 2D random variable $x$, which in turn is partitioned into $N_R \ge 1$ mutually exclusive discrete regions by latent random variable $R$; each $R \in \{1, \ldots, N_R\}$ is assigned a prior pdf $p_0(x \mid R)$ and region probability $p_0(R) \in [0,1]$ s.t. $\sum_R p_0(R) = 1$. Let $D^i_k$ be conditionally dependent on $R$ such that the target is only detectable in region $r$ if that region is within the robot's sensor range, i.e.

$$\begin{cases} p(D^i_k = \text{no detection} \mid x, R = r) = 1, & \text{if } r \text{ is outside sensor range},\\ p(D^i_k \mid x, R = r) = p(D^i_k \mid x) \text{ from Fig. 1(b)}, & \text{otherwise}. \end{cases}$$

Figure 2(b) shows the corresponding hybrid BN model used by each robot to update its local belief over $x$ and $R$. Considering local updates for robot $i$ (likewise for $j$), the joint pdf from the hybrid BN is $p_i(x, R, D^i_{1:k}) = p_0(R)\, p_0(x \mid R)\, p(D^i_{1:k} \mid x, R)$, and so the Bayesian sensor update can be factored as

$$p_i(x, R \mid D^i_{1:k}) = p_i(x \mid R, D^i_{1:k}) \cdot p_i(R \mid D^i_{1:k}). \tag{13}$$

The conditional pdfs are recursively updated via Bayes' rule,

$$p_i(x \mid R, D^i_{1:k}) \propto p_i(x \mid R, D^i_{1:k-1})\, p_i(D^i_k \mid x, R), \tag{14}$$

$$p_i(R \mid D^i_{1:k}) \propto p_i(R \mid D^i_{1:k-1})\, p(D^i_k \mid R, D^i_{1:k-1}), \tag{15}$$

where $p(D^i_k \mid R, D^i_{1:k-1}) = \int p_i(x \mid R, D^i_{1:k-1})\, p_i(D^i_k \mid x, R)\, dx$. In this hybrid model, each robot updates its local copy of $p(x \mid R)$ only for regions $R$ within sensor range.
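The recursions (13)-(15) can be sketched on a hypothetical 1D grid of 10 cells split into two regions. The detector model, the sensor-range test and all numbers below are illustrative assumptions standing in for Fig. 1(b). Each region keeps its own conditional $p(x \mid R)$, which only changes while the region is within sensor range, and the region weights are updated with the per-region evidence $p(D_k \mid R, D_{1:k-1})$. The result is compared with a brute-force Bayes update of the full joint table over $(x, R)$.

```python
import math

REGIONS = {0: list(range(0, 5)), 1: list(range(5, 10))}  # hypothetical partition


def detector(detected, x, robot_pos, p_detect=0.8, width=1.0):
    """Hypothetical p(D | x): detection probability decays with distance."""
    p = p_detect * math.exp(-((x - robot_pos) / width) ** 2)
    return p if detected else 1.0 - p


def region_lik(detected, x, r, robot_pos, sensor_range=2.0):
    """p(D | x, R=r): 'no detection' is certain if region r is out of range."""
    in_range = any(abs(c - robot_pos) <= sensor_range for c in REGIONS[r])
    if not in_range:
        return 0.0 if detected else 1.0
    return detector(detected, x, robot_pos)


def hybrid_update(cond, p_r, detected, robot_pos):
    """Eqs. (14)-(15): update every p(x | R) and the region weights p(R)."""
    new_cond, new_r = {}, {}
    for r, table in cond.items():
        unnorm = {x: px * region_lik(detected, x, r, robot_pos) for x, px in table.items()}
        evidence = sum(unnorm.values())  # p(D_k | R, D_{1:k-1})
        # If the evidence is zero the region gets zero weight; keep its old table.
        new_cond[r] = {x: v / evidence for x, v in unnorm.items()} if evidence > 0 else table
        new_r[r] = p_r[r] * evidence
    total = sum(new_r.values())
    return new_cond, {r: v / total for r, v in new_r.items()}


p0_r = {0: 0.4, 1: 0.6}
p0_x = {r: {x: 1.0 / len(cells) for x in cells} for r, cells in REGIONS.items()}
observations = [(False, 1.0), (False, 2.0), (True, 3.0)]  # (detected?, robot position)

cond, p_r = p0_x, p0_r
for detected, pos in observations:
    cond, p_r = hybrid_update(cond, p_r, detected, pos)

# Brute-force check: update the joint table over (x, R) directly.
joint = {(x, r): p0_r[r] * p0_x[r][x] for r, cells in REGIONS.items() for x in cells}
for detected, pos in observations:
    joint = {(x, r): v * region_lik(detected, x, r, pos) for (x, r), v in joint.items()}
total = sum(joint.values())
```

Because each region's table is touched only through its own likelihood, a robot that never covers a region leaves that region's $p(x \mid R)$ exactly at the prior, which is what makes the selective exchange in the next step possible.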
The robots can then selectively fuse posterior regional pdfs $p(x \mid R)$ and/or the whole set of posterior discrete region weights $p(R)$ with each other via either factorized exact or WEP DDF,

$$p_f(x, R) \propto \frac{p_i(x \mid R)\, p_j(x \mid R)}{p_c(x \mid R)} \cdot \frac{p_i(R)\, p_j(R)}{p_c(R)} = p_f(x \mid R) \cdot p_f(R) \cdot \eta(R), \tag{16}$$

$$p_{f,\mathrm{WEP}}(x, R) \propto \frac{p_i(x \mid R)\, p_j(x \mid R)}{\hat{p}_c(x \mid R; \omega_{x|R})} \cdot \frac{p_i(R)\, p_j(R)}{\hat{p}_c(R; \omega_R)} = p_{f,\mathrm{WEP}}(x \mid R; \omega_{x|R}) \cdot p_{f,\mathrm{WEP}}(R; \omega_R) \cdot \hat{\eta}(R), \tag{17}$$

where $p_f(\cdot)$ and $p_{f,\mathrm{WEP}}(\cdot)$ refer to locally normalized conditional fusion posteriors, and $\eta(R) = \int \frac{p_i(x \mid R)\, p_j(x \mid R)}{p_c(x \mid R)}\, dx$ and $\hat{\eta}(R) = \int \frac{p_i(x \mid R)\, p_j(x \mid R)}{\hat{p}_c(x \mid R; \omega_{x|R})}\, dx$ are the ‘denormalization’ terms required to make the product of the normalized conditional fusion posteriors equal to their corresponding unnormalized joint fusion pdfs.

As a simple numerical example, consider a search mission for two robot agents that are initialized with the same prior search map shown in Figure 3(a), where $p_0(x \mid R)$ is given by a discrete grid approximation to a pseudo-uniform GM pdf for each $R = r \in \{1, \ldots, 6\}$ and $p_0(R) = [0.1190, 0.1190, 0.2415, 0.1497, 0.1735, 0.1973]$. For time steps $k = 0$ to $k = 600$, robot 1 starts off in region $R = 5$ and moves in a counterclockwise inward spiral through regions $R = 2, 1, 4$ and $5$, while robot 2 starts off in region $R = 3$ and moves in a counterclockwise inward spiral through regions $R = 2, 5, 6$ and $3$ while locally fusing its own sensor data. The robots perform Bayesian sensor updates using only their own local data up to time step $k = 600$, at which point they decide to perform a DDF update. Note that only robot 1 has any new information about $R \in \{1,4\}$ and only robot 2 has any new information about $R \in \{3,6\}$, while both robots 1 and 2 have new information about $R \in \{2,5\}$.
It is thus straightforward to show that factorized exact DDF gives

$$p_f(x \mid R \in \{1,4\}) = p_1(x \mid R \in \{1,4\}),$$

$$p_f(x \mid R \in \{3,6\}) = p_2(x \mid R \in \{3,6\}),$$

$$p_f(x \mid R \in \{2,5\}) \propto \frac{p_1(x \mid R \in \{2,5\})\, p_2(x \mid R \in \{2,5\})}{p_0(x \mid R \in \{2,5\})},$$

$$p_f(R) \propto \frac{p_1(R \mid D^1_{1:600})\, p_2(R \mid D^2_{1:600})}{p_0(R)} \propto p_0(R)\, p_1(D^1_{1:600} \mid R)\, p_2(D^2_{1:600} \mid R), \quad \forall R.$$

This implies that robot 1 needs to send $p(R)$ (or $p_1(D^1_{1:600} \mid R \in \{1,2,4,5\})$) and $p(x \mid R \in \{1,2,4,5\})$ to robot 2, which in turn needs to send $p(R)$ (or $p_2(D^2_{1:600} \mid R \in \{2,3,5,6\})$) and $p(x \mid R \in \{2,3,5,6\})$ to robot 1. Once robot 2 receives robot 1's message, it directly overwrites its local copy of $p_2(x \mid R \in \{1,4\})$ with $p_f(x \mid R \in \{1,4\}) = p_1(x \mid R \in \{1,4\})$, calculates $p_f(x \mid R \in \{2,5\})$ as above, and finally updates $p(R)$, while leaving $p_2(x \mid R \in \{3,6\})$ unaltered. Robot 1 performs a similar update procedure upon receiving robot 2's message, except that it directly overwrites its local copy of $p_1(x \mid R \in \{3,6\})$ with $p_2(x \mid R \in \{3,6\})$ and leaves $p_1(x \mid R \in \{1,4\})$ unaltered. In this manner, each robot performs a full hybrid state pdf update with a sparse set of messages and calculations that exactly recovers the centralized fusion pdf, as shown by the final grid-based result in Figure 3(b), thus bypassing the need to transmit/process the raw sensor data histories or each robot's full local copy of $p(x, R \mid D_{1:600})$.

Fig. 3. (a) Target search map prior distribution and initial locations of robot searchers, (b) exact DDF results after 600 time steps, (c) factorized WEP DDF results after 600 time steps, showing slight disagreement between $p_f(R)$ and $p_{f,\mathrm{WEP}}(R)$. Dark red/dark blue indicates high/low probability mass; magenta polygons show obstacle/boundary regions of search space.
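The copy-or-fuse logic of this example can be sketched as follows, under hypothetical assumptions: three regions of two grid cells each, uniform $p_0(x \mid R)$, and made-up per-cell likelihoods for each robot (equal to 1 in cells a robot never observed). Regions seen by only one robot are copied, regions seen by both are fused with (16) using $p_c = p_0$, and $p(R)$ is fused as above. The sketch also makes explicit that the joint must be rebuilt with $\eta(R)$ from (16), which equals 1 for copied regions but generally not for regions seen by both robots.

```python
REGIONS = {1: [0, 1], 2: [2, 3], 3: [4, 5]}  # hypothetical: 3 regions, 2 cells each
P0_R = {1: 0.3, 2: 0.3, 3: 0.4}
P0_X = {r: {x: 1.0 / len(cells) for x in cells} for r, cells in REGIONS.items()}


def local_posterior(lik):
    """A robot's own Bayes update; lik[x] is the product of its detector likelihoods."""
    cond, p_r = {}, {}
    for r, cells in REGIONS.items():
        evidence = sum(P0_X[r][x] * lik[x] for x in cells)  # p(D | R)
        cond[r] = {x: P0_X[r][x] * lik[x] / evidence for x in cells}
        p_r[r] = P0_R[r] * evidence
    total = sum(p_r.values())
    return cond, {r: v / total for r, v in p_r.items()}


def fuse_regions(post1, post2, seen1, seen2):
    """Eq. (16) with p_c = prior: copy or fuse each p(x | R), then fuse p(R)."""
    (c1, r1), (c2, r2) = post1, post2
    cond_f, eta = {}, {}
    for r, cells in REGIONS.items():
        if r in seen1 and r in seen2:
            ratio = {x: c1[r][x] * c2[r][x] / P0_X[r][x] for x in cells}
        elif r in seen1:
            ratio = dict(c1[r])  # robot 2 still holds the prior here
        elif r in seen2:
            ratio = dict(c2[r])  # robot 1 still holds the prior here
        else:
            ratio = dict(P0_X[r])
        eta[r] = sum(ratio.values())  # eta(R) in (16)
        cond_f[r] = {x: v / eta[r] for x, v in ratio.items()}
    p_rf = {r: r1[r] * r2[r] / P0_R[r] for r in REGIONS}
    total = sum(p_rf.values())
    return cond_f, {r: v / total for r, v in p_rf.items()}, eta


lik1 = {0: 0.2, 1: 0.3, 2: 0.5, 3: 0.9, 4: 1.0, 5: 1.0}  # robot 1 observed regions 1, 2
lik2 = {0: 1.0, 1: 1.0, 2: 0.6, 3: 0.4, 4: 0.7, 5: 0.1}  # robot 2 observed regions 2, 3
cond_f, p_rf, eta = fuse_regions(local_posterior(lik1), local_posterior(lik2), {1, 2}, {2, 3})

# Rebuild the joint with eta(R) and compare with centralized fusion of all data.
fused = {(x, r): cond_f[r][x] * p_rf[r] * eta[r] for r, c in REGIONS.items() for x in c}
central = {(x, r): P0_R[r] * P0_X[r][x] * lik1[x] * lik2[x] for r, c in REGIONS.items() for x in c}
```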
Similar communication and processing requirements are obtained for factorized WEP DDF if robots 1 and 2 directly set $p_{f,\mathrm{WEP}}(x \mid R; \omega_{x|R})$ for $R \in \{1,3,4,6\}$ using

$$\omega_{x|R \in \{1,4\}} = 1 \ \text{(robot 1)} \,/\, 0 \ \text{(robot 2)}, \qquad \omega_{x|R \in \{3,6\}} = 0 \ \text{(robot 1)} \,/\, 1 \ \text{(robot 2)},$$

$$\Rightarrow\; p_{f,\mathrm{WEP}}(x \mid R \in \{1,4\}) = p_1(x \mid R \in \{1,4\}), \qquad p_{f,\mathrm{WEP}}(x \mid R \in \{3,6\}) = p_2(x \mid R \in \{3,6\}),$$

and use the minimax WEP metric described in [8] to perform three separate optimizations (two for $\omega_{x|R}$ for $R \in \{2,5\}$ and one for $\omega_R$). However, Figure 3(c) shows that the final fusion result incurs an information loss of 0.0214 nats, as given by the joint Kullback-Leibler divergence (KLD)

$$D_{KL}[p_f(x,R) \,\|\, p_{f,\mathrm{WEP}}(x,R)] = D_{KL}[p_f(R) \,\|\, p_{f,\mathrm{WEP}}(R)] + \sum_{r} p_f(r)\, D_{KL}[p_f(x \mid r) \,\|\, p_{f,\mathrm{WEP}}(x \mid r)],$$

where

$$D_{KL}[p_f(R) \,\|\, p_{f,\mathrm{WEP}}(R)] = \sum_{r} p_f(r) \log \frac{p_f(r)}{p_{f,\mathrm{WEP}}(r)}, \qquad D_{KL}[p_f(x \mid r) \,\|\, p_{f,\mathrm{WEP}}(x \mid r)] = \int p_f(x \mid r) \log \frac{p_f(x \mid r)}{p_{f,\mathrm{WEP}}(x \mid r)}\, dx.$$

Closer inspection reveals that $D_{KL}[p_f(R) \,\|\, p_{f,\mathrm{WEP}}(R)]$ contributes the most to the joint KLD, while $D_{KL}[p_f(x \mid R) \,\|\, p_{f,\mathrm{WEP}}(x \mid R)]$ is extremely small for $R \in \{2,5\}$ and zero for $R \in \{1,3,4,6\}$. Interestingly, Fig. 4 shows that this information loss can be minimized by choosing an $\omega_R$ value larger than the one found via the minimax WEP metric (with all $\omega_{x|R}$ held fixed). Fig. 4 also shows that this new $\omega_R$ leads to lower information losses than conventional ‘whole WEP’ DDF over the joint 3-dimensional grid for $x$ and $R$ (i.e. the same as setting all $\omega_{x|R} = \omega_R$). While these results imply that factorized WEP can indeed lead to very accurate fusion results, more sophisticated procedures for jointly optimizing $\omega_R$ and $\omega_{x|R}$ must be found to minimize information loss.

IV. DDF WITH MIXTURE MODEL FACTORS

This section describes our newly proposed mixture fusion algorithm, which overcomes the major limitations of other mixture fusion methods and produces accurate GM approximations to $p_f(x_k)$ and $p_{f,\mathrm{WEP}}(x_k)$ (or any conditional factors thereof) for general GM DDF scenarios. Our technique is highly parallelizable and provides a unified approach to high-fidelity recursive fusion of complex pdfs for both exact and WEP DDF.

If we replace $p_i(x_k)$ and $p_j(x_k)$ with GM pdfs in either (3) or (7) and let $u(x_k)$ be the corresponding (estimated) non-Gaussian common information pdf (i.e. $p_c(x_k)$ or $\hat{p}_c(x_k)$), then this gives for exact DDF (and likewise for WEP DDF)

$$p_f(x_k) \propto \frac{\sum_{q=1}^{M_i} w^i_q\, \mathcal{N}(x_k; \mu^i_q, \Sigma^i_q) \sum_{r=1}^{M_j} w^j_r\, \mathcal{N}(x_k; \mu^j_r, \Sigma^j_r)}{u(x_k)},$$

which is equivalent to

$$p_f(x_k) \propto \sum_{q=1}^{M_i} \sum_{r=1}^{M_j} \frac{w^i_q\, w^j_r\, \mathcal{N}(x_k; \mu^i_q, \Sigma^i_q)\, \mathcal{N}(x_k; \mu^j_r, \Sigma^j_r)}{u(x_k)}. \tag{18}$$

Using the fact that the product of two Gaussian pdfs is another unnormalized Gaussian pdf, this can be further simplified to

$$p_f(x_k) \propto \sum_{q=1}^{M_i} \sum_{r=1}^{M_j} \frac{\tilde{w}^{ij}_{qr}\, \mathcal{N}(x_k; \mu^{ij}_{qr}, \Sigma^{ij}_{qr})}{u(x_k)}, \tag{19}$$

where each numerator term results from component-wise ‘Naive Bayes’ fusion of $p_i(x_k)$ and $p_j(x_k)$,

$$\Sigma^{ij}_{qr} = \left[(\Sigma^i_q)^{-1} + (\Sigma^j_r)^{-1}\right]^{-1}, \tag{20}$$

$$\mu^{ij}_{qr} = \Sigma^{ij}_{qr}\left[(\Sigma^i_q)^{-1}\mu^i_q + (\Sigma^j_r)^{-1}\mu^j_r\right], \tag{21}$$

$$\tilde{w}^{ij}_{qr} = w^i_q\, w^j_r\, \bar{z}^{ij}_{qr}, \tag{22}$$

$$\bar{z}^{ij}_{qr} = \mathcal{N}(\mu^i_q; \mu^j_r, \Sigma^i_q + \Sigma^j_r). \tag{23}$$

Eq. (19) is thus a mixture of non-Gaussian components, each formed by the ratio of a single (unnormalized) Gaussian pdf and the non-Gaussian pdf $u(x_k)$. Although not a normalized closed-form pdf, each component of (19) tends to concentrate most of its mass around $\mu^{ij}_{qr}$.
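The component-wise products (20)-(23) are easy to check numerically in the scalar case. The sketch below is 1D only, with made-up mixture parameters (a multivariate version would use matrix inverses in (20)-(21)). It forms all $M_i M_j$ numerator components of (19) and verifies pointwise that their sum equals the product of the two GMs, i.e. the numerator of (18).

```python
import math


def gauss(x, mu, var):
    """Scalar Gaussian density N(x; mu, var)."""
    return math.exp(-0.5 * (x - mu) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def gaussian_product(mu_i, var_i, mu_j, var_j):
    """Eqs. (20), (21), (23), scalar case:
    N(x; mu_i, var_i) * N(x; mu_j, var_j) = z * N(x; mu_ij, var_ij)."""
    var_ij = 1.0 / (1.0 / var_i + 1.0 / var_j)        # (20)
    mu_ij = var_ij * (mu_i / var_i + mu_j / var_j)     # (21)
    z = gauss(mu_i, mu_j, var_i + var_j)               # (23)
    return z, mu_ij, var_ij


def gm_product(gm_i, gm_j):
    """Numerator of (19): list of (w_tilde, mu_ij, var_ij), one per (q, r) pair."""
    out = []
    for w_q, mu_q, var_q in gm_i:
        for w_r, mu_r, var_r in gm_j:
            z, mu, var = gaussian_product(mu_q, var_q, mu_r, var_r)
            out.append((w_q * w_r * z, mu, var))       # (22)
    return out


def gm_pdf(gm, x):
    """Evaluate a (possibly unnormalized) 1D mixture of (weight, mean, variance) triples."""
    return sum(w * gauss(x, mu, var) for w, mu, var in gm)


# Hypothetical 1D mixtures given as (weight, mean, variance) triples.
gm_i = [(0.3, -1.0, 0.5), (0.7, 1.0, 1.0)]
gm_j = [(0.5, 0.0, 2.0), (0.2, 2.0, 0.3), (0.3, -2.0, 0.8)]
prod = gm_product(gm_i, gm_j)
print(len(prod))  # M_i * M_j = 6 components
```

The weights $\tilde{w}^{ij}_{qr}$ are not normalized; in the full method the division by $u(x_k)$ and the moment matching in (24)-(27) determine the final mixture weights.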
In particular, as $x_k$ moves away from $\mu^{ij}_{qr}$, the covariance $\Sigma^{ij}_{qr}$ (which is ‘smaller’ than either $\Sigma^i_q$ or $\Sigma^j_r$) forces each Gaussian numerator term to decay more rapidly than $1/u(x_k)$ grows. This insight suggests that a good GM approximation to either $p_f(x_k)$ or $p_{f,\mathrm{WEP}}(x_k)$ can be found by approximating each pdf ratio term in (19) with a moment-matched Gaussian pdf, which leads to the GM approximation

$$p_f(x_k) \approx \frac{1}{\eta} \sum_{q=1}^{M_i} \sum_{r=1}^{M_j} \tilde{w}^*_{qr}\, \mathcal{N}(x_k; \mu^*_{qr}, \Sigma^*_{qr}), \tag{24}$$

where

$$\tilde{w}^*_{qr} = w^i_q\, w^j_r \cdot \mathbb{E}[1]_{p_{qr}(x_k)}, \tag{25}$$

$$\mu^*_{qr} = \mathbb{E}[x_k]_{p_{qr}(x_k)}, \tag{26}$$

$$\Sigma^*_{qr} = \mathbb{E}[x_k x_k^T]_{p_{qr}(x_k)} - \mu^*_{qr}(\mu^*_{qr})^T, \tag{27}$$

$$\eta = \sum_{q=1}^{M_i} \sum_{r=1}^{M_j} \tilde{w}^*_{qr}, \qquad p_{qr}(x_k) = \frac{\bar{z}^{ij}_{qr}\, \mathcal{N}(x_k; \mu^{ij}_{qr}, \Sigma^{ij}_{qr})}{u(x_k)}.$$

Here $\mathbb{E}[1]_{p_{qr}(x_k)}$ denotes the total mass of the unnormalized function $p_{qr}(x_k)$, while the expectations in (26)-(27) are taken with respect to its normalized version. While the required moments cannot be found analytically, they can be quickly estimated via Monte Carlo importance sampling (IS) [11], which exploits the identity

$$\mathbb{E}[f(x_k)]_{p_{qr}(x_k)} = \mathbb{E}\!\left[\frac{p_{qr}(x_k)}{h_{qr}(x_k)}\, f(x_k)\right]_{h_{qr}(x_k)} = \mathbb{E}[\theta(x_k)\, f(x_k)]_{h_{qr}(x_k)},$$

where $f(x_k)$ is a given moment function and $h_{qr}(x_k)$ is a proposal pdf for each mixand $p_{qr}(x_k)$ that is easy to sample from, has a shape ‘close’ to $p_{qr}(x_k)$, and has support on $x_k$ such that $p_{qr}(x_k) > 0 \Rightarrow h_{qr}(x_k) > 0$ (both $p_{qr}(x_k)$ and $h_{qr}(x_k)$ need only be known up to normalizing constants). Given a set of $N_s$ samples $\{x^s_k\}_{s=1}^{N_s} \sim h_{qr}(x_k)$, we obtain the sampling estimate

$$\mathbb{E}[f(x_k)]_{p_{qr}(x_k)} \approx \sum_{s=1}^{N_s} \theta(x^s_k)\, f(x^s_k), \qquad \theta(x^s_k) \propto \frac{p_{qr}(x^s_k)}{h_{qr}(x^s_k)},$$

with the weights $\theta(x^s_k)$ normalized to sum to one. Note that the moment calculations (25)-(27) for each $qr$ term in (24) can be easily parallelized. There are many possible ways to select $h_{qr}(x_k)$ for each $p_{qr}(x_k)$; a particularly convenient (though not necessarily optimal) choice is $h_{qr}(x_k) = \mathcal{N}(x_k; \mu^{ij}_{qr}, \Sigma^{SAMP}_{qr})$ for some suitable $\Sigma^{SAMP}_{qr}$.
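A minimal sketch of the moment-matching step (25)-(27) for a single $qr$ term, assuming a scalar state and the Gaussian proposal $h_{qr} = \mathcal{N}(\mu^{ij}_{qr}, \Sigma^{SAMP}_{qr})$ suggested above. For testability only, $u(x)$ is taken to be a Gaussian that is wider than $\mathcal{N}(\mu^{ij}_{qr}, \Sigma^{ij}_{qr})$; in that special case $p_{qr}$ is itself Gaussian and its moments are known in closed form. In actual GM DDF, $u$ would be a GM or a WEP estimate and no closed form exists. Plain importance weights estimate the mass $\mathbb{E}[1]$ (valid because the proposal is normalized), and self-normalized weights estimate the mean and variance.

```python
import math
import random


def gauss(x, mu, var):
    """Scalar Gaussian density N(x; mu, var)."""
    return math.exp(-0.5 * (x - mu) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def moment_match_is(mu_ij, var_ij, z_ij, u, var_samp, n=20000, seed=0):
    """Importance-sampling estimates for one mixand p_qr(x) = z_ij N(x; mu_ij, var_ij) / u(x).

    Returns (mass, mean, var): mass estimates the integral of p_qr used in (25);
    mean and var are the moment-matched parameters (26)-(27).
    """
    rng = random.Random(seed)
    sd = math.sqrt(var_samp)
    xs = [rng.gauss(mu_ij, sd) for _ in range(n)]    # samples from h_qr
    ratios = [z_ij * gauss(x, mu_ij, var_ij) / u(x) / gauss(x, mu_ij, var_samp) for x in xs]
    total = sum(ratios)
    mass = total / n                                 # plain IS: h_qr is normalized
    theta = [r / total for r in ratios]              # self-normalized weights
    mean = sum(t * x for t, x in zip(theta, xs))
    var = sum(t * x * x for t, x in zip(theta, xs)) - mean ** 2
    return mass, mean, var


# Hypothetical check: u(x) = N(x; 0, 2) is wider than N(x; 0.5, 0.4), so p_qr is Gaussian
# with mean 0.625, variance 0.5 and mass sqrt(5 * pi) * exp(5 / 64), about 4.285
# (obtained by completing the square).
mass, mean, var = moment_match_is(0.5, 0.4, 1.0, lambda x: gauss(x, 0.0, 2.0), var_samp=1.0)
print(round(mass, 2), round(mean, 2), round(var, 2))
```

The proposal variance here (1.0) is deliberately larger than the target variance (0.5), mirroring the coverage condition on $\Sigma^{SAMP}_{qr} - \Sigma^*_{qr}$ discussed next.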
This works well in practice as long as $h_{qr}(x_k)$ adequately covers the major support regions of $p_{qr}(x_k)$, i.e. if $(\Sigma^{SAMP}_{qr} - \Sigma^*_{qr})$ is positive semi-definite and $p_{qr}(x_k)$ does not have too many widely separated modes. We have found that one effective strategy for low-dimensional applications (i.e. $\le 5$ states) is to select $\Sigma^{SAMP}_{qr} = \arg\max(|\Sigma^i_q|, |\Sigma^j_r|, |\Sigma^{DEF}|)$, where $\Sigma^{DEF} = \alpha \cdot I$ and the tuning parameter $\alpha$ represents a conservative upper bound on the expected variance for any posterior mixand in any dimension. This approach may not work well in large state spaces ($> 5$ states) or for multimodal $p_{qr}(x_k)$. In future work, more sophisticated adaptive IS techniques [11] will be investigated for robust selection of $h_{qr}(x_k)$.

A. Illustrative 2D Example

Figure 5 shows a simple 2D example of WEP DDF for two GMs $p_i(x_k)$ and $p_j(x_k)$ using various mixture fusion approximations. Fig. 5(c) shows a high-fidelity grid-based approximation to $p_{f,\mathrm{WEP}}(x_k)$, which is very closely matched by the GM produced by our mixture fusion technique in Fig. 5(d) (the KL divergence between both pdfs is 0.0034 nats). Fig. 5(e) shows the fusion result obtained by the particle condensation method of [8], which relies on the EM algorithm to learn a GM approximation of $p_{f,\mathrm{WEP}}(x_k)$. Although this captures the general shape of the true pdf, the parameter estimates are extremely sensitive to initial guesses and tend to get trapped at poor local solutions, which leads to greater information loss (KLD of 0.1035 nats). Fig. 5(f) shows that the fusion GM produced by first-order covariance intersection (FOCI) [6] loses even more information (KLD of 0.6972 nats), due to its overly conservative nature. Note that the results in Fig.
5(d) and (e) are subject to variance from Monte Carlo IS; although not shown here, this variance is substantially lower for the proposed mixture fusion method because IS is applied to each non-Gaussian mixand of $p_{f,\mathrm{WEP}}(x_k)$ individually, rather than to $p_{f,\mathrm{WEP}}(x_k)$ as a whole. Furthermore, the proposed method does not require the solution of a nonlinear optimization problem as in the particle condensation method. Like the FOCI approximation, the proposed technique also automatically leads to a larger but still finite number of mixands $M_f = M_i M_j$ in the GM approximation. Post-hoc GM compression methods can be used to control $M_f$ in real applications while minimizing information loss, although these typically have $O((M_f)^2)$ or $O((M_f)^3)$ memory and time costs. In future work, we will investigate ways to control $M_f$ ‘on the fly’, e.g. by culling/merging $qr$ terms with very small weights in (24) before/during IS moment-matching calculations.

Fig. 5. (a)-(b) GMs $p_i(x)$ and $p_j(x)$ with $M_i = M_j = 14$; (c) ground truth grid-based $p_{f,\mathrm{WEP}}(x_k)$ ($\omega = 0.56922$); (d) proposed GM approximation; (e) weighted EM GM approximation; (f) FOCI GM approximation.

REFERENCES

[1] S. Thrun, W. Burgard, and D. Fox, Probabilistic Robotics. Cambridge, MA: MIT Press, 2001.

[2] S. Grime and H. Durrant-Whyte, “Data fusion in decentralized sensor networks,” Control Engineering Practice, vol. 2, no. 5, pp. 849–863, 1994.

[3] T. Kaupp, B. Douillard, F. Ramos, A. Makarenko, and B. Upcroft, “Shared environment representation for a human-robot team performing information fusion,” Journal of Field Robotics, vol. 24, no. 11, pp. 911–942, 2007.

[4] L.-L. Ong, T. Bailey, H. Durrant-Whyte, and B. Upcroft, “Decentralised particle filtering for multiple target tracking in wireless sensor networks,” in FUSION 2008, 2008.

[5] T. Martin and K. Chang, “A distributed data fusion approach for mobile ad hoc networks,” in FUSION 2005, 2005, pp. 1062–1069.

[6] S. Julier, “An empirical study into the use of Chernoff information for robust, distributed fusion of Gaussian mixture models,” in FUSION 2006, 2006.

[7] K. Chang and W. Sun, “Scalable fusion with mixture distributions in sensor networks,” in 2010 International Conference on Control, Automation, Robotics and Vision (ICARCV), 2010.

[8] N. Ahmed, J. Schoenberg, and M. Campbell, “Fast weighted exponential product rules for robust multi-robot data fusion,” in Robotics: Science and Systems 2012.

[9] F. Bourgault, “Decentralized control in a Bayesian world,” Ph.D. dissertation, University of Sydney, 2005.

[10] C. Bishop, Pattern Recognition and Machine Learning. New York: Springer, 2006.

[11] C. Robert and G. Casella, Monte Carlo Statistical Methods, 2nd ed. New York: Springer, 2004.
