Coding for Random Projections

The method of random projections has become very popular for large-scale applications in statistical learning, information retrieval, bio-informatics and other applications. Using a well-designed coding scheme for the projected data, which determines…

Authors: Ping Li, Michael Mitzenmacher, Anshumali Shrivastava

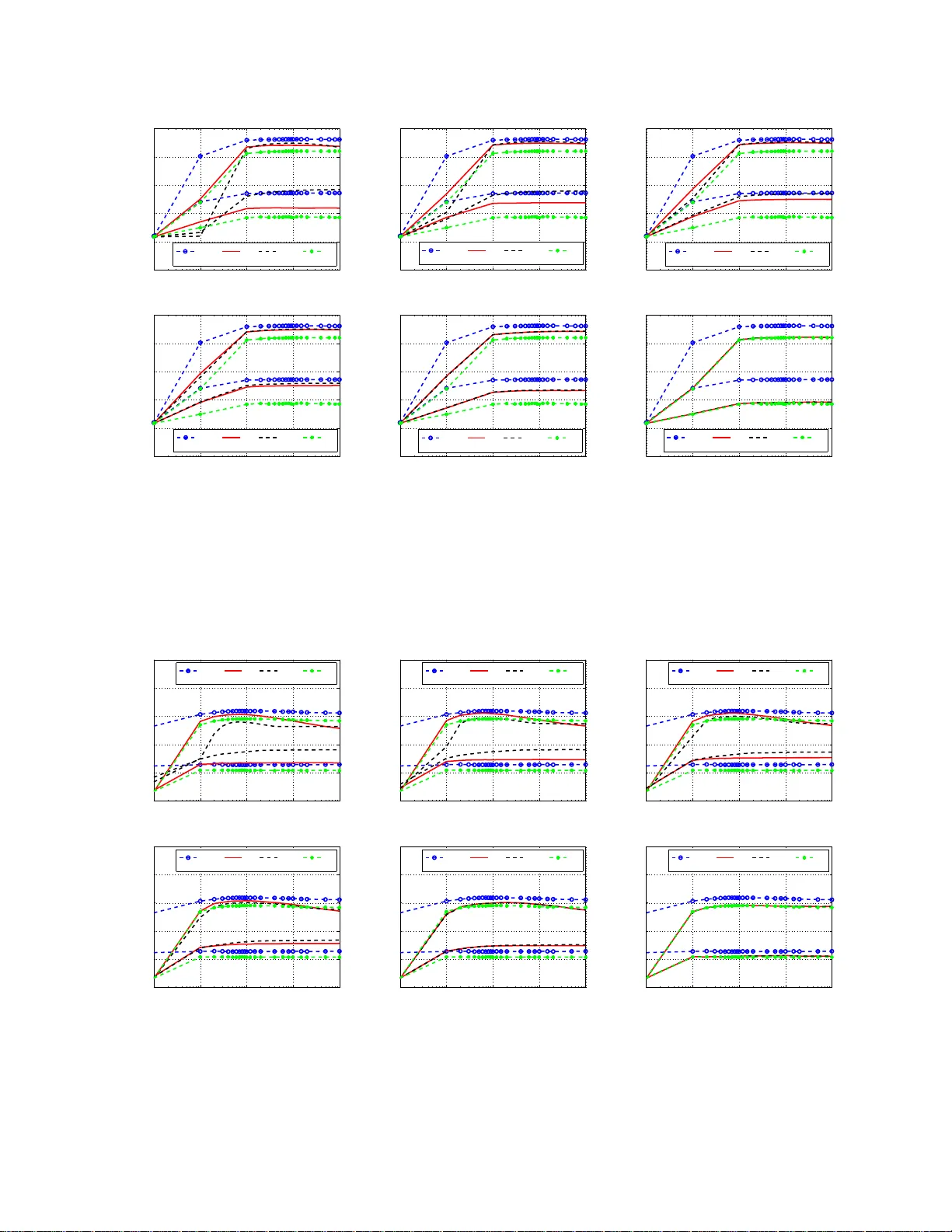

Ping Li
Department of Statistics & Biostatistics, Department of Computer Science
Rutgers University, Piscataway, NJ 08854
pl314@rci.rutgers.edu

Michael Mitzenmacher
School of Engineering and Applied Sciences
Harvard University, Cambridge, MA 02138
michaelm@eecs.harvard.edu

Anshumali Shrivastava
Department of Computer Science
Cornell University, Ithaca, NY 14853
anshu@cs.cornell.edu

Abstract

The method of random projections has become very popular for large-scale applications in statistical learning, information retrieval, bio-informatics and other applications. Using a well-designed coding scheme for the projected data, which determines the number of bits needed for each projected value and how to allocate these bits, can significantly improve the effectiveness of the algorithm, in storage cost as well as computational speed. In this paper, we study a number of simple coding schemes, focusing on the task of similarity estimation and on an application to training linear classifiers. We demonstrate that uniform quantization outperforms the standard existing influential method [8]. Indeed, we argue that in many cases coding with just a small number of bits suffices. Furthermore, we also develop a non-uniform 2-bit coding scheme that generally performs well in practice, as confirmed by our experiments on training linear support vector machines (SVM).

1 Introduction

The method of random projections has become popular for large-scale machine learning applications such as classification, regression, matrix factorization, singular value decomposition, near neighbor search, bio-informatics, and more [22, 6, 1, 3, 10, 18, 11, 24, 7, 16, 25]. In this paper, we study a number of simple and effective schemes for coding the projected data, with the focus on similarity estimation and training linear classifiers [15, 23, 9, 2].
We will closely compare our method with the influential prior coding scheme in [8].

Consider two high-dimensional vectors, $u, v \in \mathbb{R}^D$. The idea is to multiply them with a random normal projection matrix $R \in \mathbb{R}^{D \times k}$ (where $k \ll D$), to generate two (much) shorter vectors $x, y$:

$$x = u \times R \in \mathbb{R}^k, \quad y = v \times R \in \mathbb{R}^k, \quad R = \{r_{ij}\}_{i=1,\,j=1}^{D,\;k}, \quad r_{ij} \sim N(0,1) \text{ i.i.d.} \tag{1}$$

In real applications, the dataset will consist of a large number of vectors (not just two). Without loss of generality, we use one pair of data vectors $(u, v)$ to demonstrate our results.

In this study, for convenience, we assume that the marginal Euclidean norms of the original data vectors, i.e., $\|u\|$, $\|v\|$, are known. This assumption is reasonable in practice [18]. For example, the input data fed to a support vector machine (SVM) are usually normalized, i.e., $\|u\| = \|v\| = 1$. Computing the marginal norms for the entire dataset only requires one linear scan of the data, which is needed anyway during data collection/processing. Without loss of generality, we assume $\|u\| = \|v\| = 1$ in this paper. The joint distribution of $(x_j, y_j)$ is hence a bivariate normal:

$$\begin{bmatrix} x_j \\ y_j \end{bmatrix} \sim N\left( \begin{bmatrix} 0 \\ 0 \end{bmatrix}, \begin{bmatrix} 1 & \rho \\ \rho & 1 \end{bmatrix} \right), \quad \text{i.i.d. } j = 1, 2, \ldots, k \tag{2}$$

where $\rho = \sum_{i=1}^{D} u_i v_i$ (assuming $\|u\| = \|v\| = 1$). For convenience and brevity, we also restrict our attention to $\rho \ge 0$, which is a common scenario in practice. Throughout the paper, we adopt the conventional notation for the standard normal pdf $\phi(x)$ and cdf $\Phi(x)$:

$$\phi(x) = \frac{1}{\sqrt{2\pi}} e^{-\frac{x^2}{2}}, \quad \Phi(x) = \int_{-\infty}^{x} \phi(t)\, dt \tag{3}$$

1.1 Uniform Quantization

Our first proposal is perhaps the most intuitive scheme, based on a simple uniform quantization:

$$h_w^{(j)}(u) = \lfloor x_j / w \rfloor, \quad h_w^{(j)}(v) = \lfloor y_j / w \rfloor \tag{4}$$

where $w > 0$ is the bin width and $\lfloor \cdot \rfloor$ is the standard floor operation, i.e., $\lfloor z \rfloor$ is the largest integer which is smaller than or equal to $z$. For example, $\lfloor 3.1 \rfloor = 3$, $\lfloor 4.99 \rfloor = 4$, $\lfloor -3.1 \rfloor = -4$. Later in the paper we will also use the standard ceiling operation $\lceil \cdot \rceil$.

We show that the collision probability $P_w = \Pr\left( h_w^{(j)}(u) = h_w^{(j)}(v) \right)$ is a monotonically increasing function of the similarity $\rho$, making (4) a suitable coding scheme for similarity estimation and near neighbor search.

The potential benefits of coding with a small number of bits arise because the (uncoded) projected data, $x_j = \sum_{i=1}^{D} u_i r_{ij}$ and $y_j = \sum_{i=1}^{D} v_i r_{ij}$, being real-valued numbers, are neither convenient/economical for storage and transmission, nor well-suited for indexing.

Since the original data are assumed to be normalized, i.e., $\|u\| = \|v\| = 1$, the marginal distribution of $x_j$ (and $y_j$) is the standard normal, which decays rapidly at the tail, e.g., $1 - \Phi(3) \approx 1.3 \times 10^{-3}$, $1 - \Phi(6) = 9.9 \times 10^{-10}$. If we use 6 as the cutoff, i.e., values with absolute value greater than 6 are just treated as $-6$ and $6$, then the number of bits needed to represent the bin the value lies in is $1 + \log_2 \frac{6}{w}$. In particular, if we choose the bin width $w \ge 6$, we can just record the sign of the outcome (i.e., a one-bit scheme). In general, the optimum choice of $w$ depends on the similarity $\rho$ and the task. In this paper we focus on the task of similarity estimation (of $\rho$) and we will provide the optimum $w$ values for all similarity levels. Interestingly, using our uniform quantization scheme, we find that in a certain range the optimum $w$ values are quite large, and in particular are larger than 6.

We can build linear classifiers (e.g., linear SVM) using coded random projections. For example, assume the projected values are within $(-6, 6)$. If $w = 2$, then the code values output by $h_w$ will be within the set $\{-3, -2, -1, 0, 1, 2\}$.
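To make the cutoff convention concrete, here is a minimal sketch of the quantizer in (4) for a single projected value. The function names `quantize_uniform` and `num_codes` and the `cutoff` parameter are illustrative assumptions, not names used in the paper; the clamping step is one plausible way to realize "values beyond 6 are treated as ±6".

```python
import math

def quantize_uniform(x, w, cutoff=6.0):
    """Return the code floor(x / w) of (4), with |x| capped at `cutoff`.

    The names and the clamping convention are assumptions made for this sketch.
    """
    n_side = math.ceil(cutoff / w)   # number of bins on each side of zero
    code = math.floor(x / w)         # plain uniform quantization, eq. (4)
    # Values beyond the cutoff are folded into the outermost bins.
    return max(-n_side, min(code, n_side - 1))

def num_codes(w, cutoff=6.0):
    """Number of distinct codes a single projection can take."""
    return 2 * math.ceil(cutoff / w)

if __name__ == "__main__":
    # Sweep x over (-6, 6) in steps of 0.1 with bin width w = 2.
    codes = sorted({quantize_uniform(i / 10.0, 2.0) for i in range(-59, 60)})
    print(codes)           # [-3, -2, -1, 0, 1, 2]
    print(num_codes(2.0))  # 6
```

With $w = 2$ the sweep reproduces the six code values listed above, and any $w \ge 6$ leaves only the two codes $-1$ and $0$, i.e., the sign.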
With six possible code values, we can represent a projected value using a vector of length 6 (with exactly one 1), and the total length of the new feature vector (fed to a linear SVM) will be $6 \times k$. See more details in Section 6. This trick was also recently used for linear learning with binary data based on b-bit minwise hashing [19, 20]. Of course, we can also use the method to speed up kernel evaluations for kernel SVM with high-dimensional data.

Near neighbor search is a basic problem studied since the early days of modern computing [12], with applications throughout computer science. The use of coded projection data for near neighbor search is closely related to locality sensitive hashing (LSH) [14]. For example, using $k$ projections and a bin width $w$, we can naturally build a hash table with $\left( 2 \lceil \frac{6}{w} \rceil \right)^k$ buckets. We map every data vector in the dataset to one of the buckets. For a query data vector, we search for similar data vectors in the same bucket. Because the concept of LSH is well-known, we do not elaborate on the details. Compared to [8], our proposed coding scheme has better performance for near neighbor search; the analysis will be reported in a separate technical report. This paper focuses on similarity estimation.

1.2 Advantages over the Window-and-Offset Coding Scheme

[8] proposed the following well-known coding scheme, which uses windows and a random offset:

$$h_{w,q}^{(j)}(u) = \left\lfloor \frac{x_j + q_j}{w} \right\rfloor, \quad h_{w,q}^{(j)}(v) = \left\lfloor \frac{y_j + q_j}{w} \right\rfloor \tag{5}$$

where $q_j \sim \mathrm{uniform}(0, w)$. [8] showed that the collision probability can be written as

$$P_{w,q} = \Pr\left( h_{w,q}^{(j)}(u) = h_{w,q}^{(j)}(v) \right) = \int_0^w \frac{1}{\sqrt{d}}\, 2\phi\left( \frac{t}{\sqrt{d}} \right) \left( 1 - \frac{t}{w} \right) dt \tag{6}$$

where $d = \|u - v\|^2 = 2(1 - \rho)$ is the squared Euclidean distance between $u$ and $v$. The difference between (5) and our proposal (4) is that we do not use the additional randomization with $q \sim \mathrm{uniform}(0, w)$ (i.e., the offset).
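The two schemes (4) and (5) can be contrasted with a small Monte Carlo sketch. It draws pairs $(x_j, y_j)$ directly from the bivariate normal in (2) rather than multiplying by an explicit projection matrix, which is an assumption made to keep the example short. The helper names are illustrative, and `p_wq_closed_form` evaluates the integral in (6) in closed form using the standard normal pdf and cdf.

```python
import math
import random

def std_normal_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def std_normal_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def collision_rates(rho, w, n_pairs=20000, seed=0):
    """Empirical collision rates of h_w (4) and h_{w,q} (5) on simulated pairs."""
    rng = random.Random(seed)
    s = math.sqrt(1.0 - rho * rho)
    hits_w = 0
    hits_wq = 0
    for _ in range(n_pairs):
        # (x, y) is standard bivariate normal with correlation rho, as in (2).
        x = rng.gauss(0.0, 1.0)
        y = rho * x + s * rng.gauss(0.0, 1.0)
        q = rng.uniform(0.0, w)  # random offset shared by the pair
        hits_w += math.floor(x / w) == math.floor(y / w)
        hits_wq += math.floor((x + q) / w) == math.floor((y + q) / w)
    return hits_w / n_pairs, hits_wq / n_pairs

def p_wq_closed_form(rho, w):
    """Closed form of the integral in (6), with t = w / sqrt(d), d = 2(1 - rho)."""
    t = w / math.sqrt(2.0 * (1.0 - rho))
    return (2.0 * std_normal_cdf(t) - 1.0
            - 2.0 / (math.sqrt(2.0 * math.pi) * t)
            + 2.0 / t * std_normal_pdf(t))

if __name__ == "__main__":
    p_w, p_wq = collision_rates(rho=0.0, w=6.0)
    print("h_w   empirical:", p_w)
    print("h_w,q empirical:", p_wq, "closed form:", p_wq_closed_form(0.0, 6.0))
```

For orthogonal vectors ($\rho = 0$) and a wide bin, the offset scheme collides much more often than the plain floor scheme, whose collision rate stays near 0.5.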
By comparing the two schemes closely, we will demonstrate the following advantages of our scheme:

1. Operationally, our scheme $h_w$ is simpler than $h_{w,q}$.

2. With a fixed $w$, our scheme $h_w$ is always more accurate than $h_{w,q}$, often significantly so.

3. For each coding scheme, we can separately find the optimum bin width $w$. We will show that the optimized $h_w$ is also more accurate than the optimized $h_{w,q}$, often significantly so.

4. For a wide range of $\rho$ values (e.g., $\rho < 0.56$), the optimum $w$ values for our scheme $h_w$ are relatively large (e.g., $> 6$), while for the existing scheme $h_{w,q}$, the optimum $w$ values are small (e.g., about 1). This means $h_w$ requires a smaller number of bits than $h_{w,q}$.

In summary, uniform quantization is simpler, more accurate, and uses fewer bits than the influential prior work [8], which uses the window with the random offset.

1.3 Organization

In Section 2, we analyze the collision probability for the uniform quantization scheme and then compare it with the collision probability of the well-known prior work [8], which uses an additional random offset. Because the collision probabilities are monotone functions of the similarity $\rho$, we can always estimate $\rho$ from the observed (empirical) collision probabilities.

In Section 3, we theoretically compare the estimation variances of these two schemes and conclude that the random offset step in [8] is not needed.

In Section 4, we develop a 2-bit non-uniform coding scheme and demonstrate that its performance largely matches the performance of the uniform quantization scheme (which requires storing more bits). Interestingly, for a certain range of the similarity $\rho$, we observe that only one bit is needed. Thus, Section 5 is devoted to comparing the 1-bit scheme with our proposed methods.
The comparisons show that the 1-bit scheme does not perform as well when the similarity $\rho$ is high (which is often the case of interest in applications).

In Section 6, we provide a set of experiments on training linear SVM using all the coding schemes we have studied. The experimental results basically confirm the variance analysis. Section 7 presents several directions for related future research. Finally, Section 8 concludes the paper.

2 The Collision Probability of Uniform Quantization $h_w$

To use our coding scheme $h_w$ (4), we need to evaluate $P_w = \Pr\left( h_w^{(j)}(u) = h_w^{(j)}(v) \right)$, the collision probability. From a practitioner's perspective, as long as $P_w$ is a monotonically increasing function of the similarity $\rho$, it is a suitable coding scheme. In other words, it does not matter whether $P_w$ has a closed-form expression, as long as we can demonstrate its advantage over the alternative [8], whose collision probability is denoted by $P_{w,q}$. Note that $P_{w,q}$ can be expressed in closed form in terms of the standard $\phi$ and $\Phi$ functions:

$$P_{w,q} = \Pr\left( h_{w,q}^{(j)}(u) = h_{w,q}^{(j)}(v) \right) = 2\Phi\left( \frac{w}{\sqrt{d}} \right) - 1 - \frac{2}{\sqrt{2\pi}\, w/\sqrt{d}} + \frac{2}{w/\sqrt{d}}\, \phi\left( \frac{w}{\sqrt{d}} \right) \tag{7}$$

Recall that $d = 2(1 - \rho)$ is the squared Euclidean distance $\|u - v\|^2$. It is clear that $P_{w,q} \to 1$ as $w \to \infty$.

The following Lemma 1 will help derive the collision probability $P_w$ (in Theorem 1).

Lemma 1 Assume $\begin{bmatrix} x \\ y \end{bmatrix} \sim N\left( \begin{bmatrix} 0 \\ 0 \end{bmatrix}, \begin{bmatrix} 1 & \rho \\ \rho & 1 \end{bmatrix} \right)$, $\rho \ge 0$. Then

$$Q_{s,t}(\rho) = \Pr\left( x \in [s, t],\ y \in [s, t] \right) = \int_s^t \phi(z) \left[ \Phi\left( \frac{t - \rho z}{\sqrt{1 - \rho^2}} \right) - \Phi\left( \frac{s - \rho z}{\sqrt{1 - \rho^2}} \right) \right] dz \tag{8}$$

$$\frac{\partial Q_{s,t}(\rho)}{\partial \rho} = \frac{1}{2\pi} \frac{1}{(1 - \rho^2)^{1/2}} \left( e^{-\frac{t^2}{1 + \rho}} + e^{-\frac{s^2}{1 + \rho}} - 2 e^{-\frac{t^2 + s^2 - 2st\rho}{2(1 - \rho^2)}} \right) \ge 0 \tag{9}$$

Proof: See Appendix A.

Theorem 1 The collision probability of the coding scheme $h_w$ defined in (4) is

$$P_w = 2 \sum_{i=0}^{\infty} \int_{iw}^{(i+1)w} \phi(z) \left[ \Phi\left( \frac{(i+1)w - \rho z}{\sqrt{1 - \rho^2}} \right) - \Phi\left( \frac{iw - \rho z}{\sqrt{1 - \rho^2}} \right) \right] dz \tag{10}$$

which is a monotonically increasing function of $\rho$.
In particular, when $\rho = 0$, we have

$$P_w = 2 \sum_{i=0}^{\infty} \left[ \Phi\left( (i+1)w \right) - \Phi(iw) \right]^2 \tag{11}$$

Proof: The proof follows from Lemma 1 by using $s = iw$ and $t = (i+1)w$, $i = 0, 1, \ldots$.

Figure 1: Collision probabilities, $P_w$ and $P_{w,q}$, plotted against $w \in [0, 10]$ in six panels for $\rho = 0, 0.25, 0.5, 0.75, 0.9$, and $0.99$. Our proposed scheme ($h_w$) has smaller collision probabilities than the existing scheme [8] ($h_{w,q}$), especially when $w > 2$.

Figure 1 plots both $P_w$ and $P_{w,q}$ for selected $\rho$ values. The difference between $P_w$ and $P_{w,q}$ becomes apparent after about $w > 2$. For example, when $\rho = 0$, $P_w$ quickly approaches the limit 0.5 while $P_{w,q}$ keeps increasing (to 1) as $w$ increases. Intuitively, the fact that $P_{w,q} \to 1$ when $\rho = 0$ is undesirable because it means two orthogonal vectors will have the same coded value. Thus, it is not surprising that our proposed scheme $h_w$ will have better performance than $h_{w,q}$. We will analyze their theoretical variances to provide precise comparisons.

3 Analysis of Two Coding Schemes ($h_w$ and $h_{w,q}$) for Similarity Estimation

In both schemes (corresponding to $h_w$ and $h_{w,q}$), the collision probabilities $P_w$ and $P_{w,q}$ are monotonically increasing functions of the similarity $\rho$. Since there is a one-to-one mapping between $\rho$ and $P_w$, we can tabulate $P_w$ for each $\rho$ (for example, at a precision of $10^{-3}$).
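As a rough illustration of how the mapping between $\rho$ and $P_w$ might be evaluated and inverted, the sketch below integrates (10) numerically with Simpson's rule and recovers $\rho$ from a collision probability by bisection. Bisection is swapped in here for the lookup table described in the text, and the truncation point `tail`, the grid size, and all function names are assumptions of this sketch.

```python
import math

def std_normal_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def std_normal_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def simpson(f, a, b, n=200):
    """Composite Simpson rule on [a, b] with an even number n of subintervals."""
    h = (b - a) / n
    total = f(a) + f(b)
    for j in range(1, n):
        total += (4.0 if j % 2 else 2.0) * f(a + j * h)
    return total * h / 3.0

def collision_prob_uniform(rho, w, tail=8.0):
    """P_w from (10); the infinite sum is truncated once i*w reaches `tail`."""
    s = math.sqrt(1.0 - rho * rho)
    total = 0.0
    i = 0
    while i * w < tail:
        lo, hi = i * w, (i + 1) * w

        def integrand(z, lo=lo, hi=hi):
            return std_normal_pdf(z) * (std_normal_cdf((hi - rho * z) / s)
                                        - std_normal_cdf((lo - rho * z) / s))

        total += simpson(integrand, lo, hi)
        i += 1
    return 2.0 * total

def estimate_rho(p_hat, w, iters=30):
    """Invert the monotone map rho -> P_w by bisection on [0, 0.999]."""
    lo, hi = 0.0, 0.999
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if collision_prob_uniform(mid, w) < p_hat:
            lo = mid  # P_w is increasing in rho, so rho must be larger
        else:
            hi = mid
    return 0.5 * (lo + hi)

if __name__ == "__main__":
    p = collision_prob_uniform(0.6, 1.0)
    print("P_w at rho=0.6, w=1:", p)
    print("recovered rho:", estimate_rho(p, 1.0))
```

In practice a precomputed table would avoid repeating the integration for every pair; the bisection form is used only because it is compact.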
From $k$ independent projections, we can compute the empirical $\hat{P}_w$ and $\hat{P}_{w,q}$ and find the estimates, denoted by $\hat{\rho}_w$ and $\hat{\rho}_{w,q}$, respectively, from the tables. In this section, we compare the estimation variances of these two estimators, to demonstrate the advantage of the proposed coding scheme $h_w$.

Theorem 2 provides the variance of $h_{w,q}$, for estimating $\rho$ from $k$ random projections.

Theorem 2

$$\mathrm{Var}\left( \hat{\rho}_{w,q} \right) = \frac{V_{w,q}}{k} + O\left( \frac{1}{k^2} \right), \quad \text{where} \tag{12}$$

$$V_{w,q} = \frac{d^2}{4} \left( \frac{w/\sqrt{d}}{\phi\left( w/\sqrt{d} \right) - 1/\sqrt{2\pi}} \right)^2 P_{w,q} \left( 1 - P_{w,q} \right), \quad d = 2(1 - \rho) \tag{13}$$

Proof: See Appendix B.

Figure 2 plots the variance factor $V_{w,q}$ defined in (13) without the $\frac{d^2}{4}$ term. (Recall $d = 2(1 - \rho)$.) The minimum is 7.6797 (keeping four digits), attained at $w/\sqrt{d} = 1.6476$. The plot also suggests that the performance of this popular scheme can be sensitive to the choice of the bin width $w$. This is a practical disadvantage. Since we do not know $\rho$ (or $d$) in advance and we must specify $w$ in advance, the performance of this scheme might be unsatisfactory, as one cannot really find one "optimum" $w$ for all pairs in a dataset.

Figure 2: The variance factor $V_{w,q}$ (13) without the $\frac{d^2}{4}$ term, i.e., $V_{w,q} \times 4/d^2$, plotted against $w/\sqrt{d}$ (both axes in log scale).

In comparison, our proposed scheme has smaller variance and is not as sensitive to the choice of $w$.

Theorem 3

$$\mathrm{Var}\left( \hat{\rho}_w \right) = \frac{V_w}{k} + O\left( \frac{1}{k^2} \right), \quad \text{where} \tag{14}$$

$$V_w = \frac{\pi^2 (1 - \rho^2) P_w (1 - P_w)}{\left[ \sum_{i=0}^{\infty} \left( e^{-\frac{(i+1)^2 w^2}{1 + \rho}} + e^{-\frac{i^2 w^2}{1 + \rho}} - 2 e^{-\frac{w^2}{2(1 - \rho^2)}} e^{-\frac{i(i+1) w^2}{1 + \rho}} \right) \right]^2} \tag{15}$$

In particular, when $\rho = 0$, we have

$$V_w \big|_{\rho = 0} = \left[ \frac{\sum_{i=0}^{\infty} \left( \Phi((i+1)w) - \Phi(iw) \right)^2}{\sum_{i=0}^{\infty} \left( \phi((i+1)w) - \phi(iw) \right)^2} \right] \left[ \frac{1/2 - \sum_{i=0}^{\infty} \left( \Phi((i+1)w) - \Phi(iw) \right)^2}{\sum_{i=0}^{\infty} \left( \phi((i+1)w) - \phi(iw) \right)^2} \right] \tag{16}$$

Proof: See Appendix C.

Remark: At $\rho = 0$, the minimum is $V_w = \frac{\pi^2}{4}$, attained at $w \to \infty$, as shown in Figure 3.
Note that when $w \to \infty$, we have $\sum_{i=0}^{\infty} \left( \Phi((i+1)w) - \Phi(iw) \right)^2 \to 1/4$ and $\sum_{i=0}^{\infty} \left( \phi((i+1)w) - \phi(iw) \right)^2 \to 1/(2\pi)$, and hence $V_w \big|_{\rho=0} \to \left[ \frac{1/4}{1/(2\pi)} \right] \left[ \frac{1/2 - 1/4}{1/(2\pi)} \right] = \frac{\pi^2}{4}$. In comparison, Theorem 2 says that when $\rho = 0$ (i.e., $d = 2$) the smallest attainable $V_{w,q}$ is 7.6797, which is significantly larger than $\pi^2/4 = 2.4674$.

Figure 3: $V_w$ at $\rho = 0$, plotted against $w \in [0, 10]$. The minimum of $V_w \big|_{\rho=0}$ approaches $\pi^2/4$ as $w \to \infty$.

To compare the variances of the two estimators, $\mathrm{Var}(\hat{\rho}_w)$ and $\mathrm{Var}(\hat{\rho}_{w,q})$, we compare their leading constants, $V_w$ and $V_{w,q}$. Figure 4 plots $V_w$ and $V_{w,q}$ at selected $\rho$ values, verifying that (i) the variance of the proposed scheme (4) can be significantly lower than that of the existing scheme (5); and (ii) the performance of the proposed scheme is not as sensitive to the choice of $w$ (e.g., when $w > 2$).

Figure 4: Comparisons of two coding schemes at fixed bin width $w$, i.e., $V_w$ (15) vs $V_{w,q}$ (13), plotted against $w \in [0, 10]$ in six panels for $\rho = 0, 0.25, 0.5, 0.75, 0.9$, and $0.99$. $V_w$ is smaller than $V_{w,q}$, especially when $w > 2$ (or even when $w > 1$ and $\rho$ is small). For both schemes, at a fixed $\rho$, we can find the optimum $w$ value which minimizes $V_w$ (or $V_{w,q}$). In general, once $w$ exceeds roughly 1 to 2, $V_w$ is not sensitive to $w$ (unless $\rho$ is very close to 1). This is one significant advantage of the proposed scheme $h_w$.

It is also informative to compare $V_w$ and $V_{w,q}$ at their "optimum" $w$ values (for fixed $\rho$). Note that $V_w$ is not sensitive to $w$ once $w$ exceeds roughly 1 to 2.
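The two constants quoted in this comparison can be checked numerically. The sketch below evaluates the scaled factor $V_{w,q} \times 4/d^2$ from (13) and (7) as a function of $t = w/\sqrt{d}$, and $V_w$ at $\rho = 0$ from (16). The function names and the truncation point `tail` of the infinite sums are assumptions made for illustration.

```python
import math

def std_normal_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def std_normal_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def v_wq_scaled(t):
    """V_{w,q} * 4 / d^2 as a function of t = w / sqrt(d), from (13) and (7)."""
    p = (2.0 * std_normal_cdf(t) - 1.0
         - 2.0 / (math.sqrt(2.0 * math.pi) * t)
         + 2.0 / t * std_normal_pdf(t))
    ratio = t / (std_normal_pdf(t) - 1.0 / math.sqrt(2.0 * math.pi))
    return ratio * ratio * p * (1.0 - p)

def v_w_at_rho0(w, tail=10.0):
    """V_w at rho = 0 from (16); sums are truncated once i*w reaches `tail`."""
    sum_cdf = 0.0
    sum_pdf = 0.0
    i = 0
    while i * w < tail:
        a, b = i * w, (i + 1) * w
        sum_cdf += (std_normal_cdf(b) - std_normal_cdf(a)) ** 2
        sum_pdf += (std_normal_pdf(b) - std_normal_pdf(a)) ** 2
        i += 1
    return (sum_cdf / sum_pdf) * ((0.5 - sum_cdf) / sum_pdf)

if __name__ == "__main__":
    print("V_wq * 4/d^2 at t = 1.6476:", v_wq_scaled(1.6476))
    for w in (1.0, 2.0, 4.0, 10.0):
        print("V_w at rho = 0, w =", w, ":", v_w_at_rho0(w))
    print("pi^2 / 4 =", math.pi ** 2 / 4.0)
```

The printed values should show the offset scheme bottoming out near 7.68 while $V_w$ at $\rho = 0$ decreases toward $\pi^2/4$ as $w$ grows.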
The left panel of Figure 5 plots the best values for $V_w$ and $V_{w,q}$, confirming that $V_w$ is significantly lower than $V_{w,q}$ at smaller $\rho$ values (e.g., $\rho < 0.56$).

Figure 5: Comparisons of two coding schemes, $h_w$ and $h_{w,q}$, at optimum bin width $w$. Left panel: the smallest variance factors $V_w$ and $V_{w,q}$ against $\rho$. Right panel: the optimum $w$ against $\rho$.

The right panel of Figure 5 plots the optimum $w$ values (for fixed $\rho$). Around $\rho = 0.56$, the optimum $w$ for $V_w$ becomes significantly larger than 6 and may not be reliably evaluated. From the remark for Theorem 3, we know that at $\rho = 0$ the optimum $w$ grows to $\infty$. Thus, we can conclude that if $\rho < 0.56$, it suffices to implement our coding scheme using just 1 bit (i.e., signs of the projected data). In comparison, for the existing scheme $h_{w,q}$, the optimum $w$ varies much more slowly. Even at $\rho = 0$, the optimum $w$ is around 2. This means $h_{w,q}$ will always need to use more bits than $h_w$ to code the projected data. This is another advantage of our proposed scheme.

In practice, we do not know $\rho$ in advance and we often care about data vector pairs of high similarities. When $\rho > 0.56$, Figure 4 and Figure 5 illustrate that we might want to choose small $w$ values (e.g., $w < 1$). However, using a small $w$ value will hurt the performance on pairs of low similarities. This dilemma motivates us to develop non-uniform coding schemes.

4 A 2-Bit Non-Uniform Coding Scheme

If we quantize the projected data according to the four regions $(-\infty, -w)$, $[-w, 0)$, $[0, w)$, $[w, \infty)$, we obtain a 2-bit non-uniform scheme. At the risk of abusing notation, we name this scheme "$h_{w,2}$", not to be confused with the name of the existing scheme $h_{w,q}$.

According to Lemma 1, $h_{w,2}$ is also a valid coding scheme.
We can theoretically compute the collision probability, denoted by $P_{w,2}$, which is again a monotonically increasing function of the similarity $\rho$. With $k$ projections, we can estimate $\rho$ from the empirical observation of $P_{w,2}$ and we denote this estimator by $\hat{\rho}_{w,2}$. Theorem 4 provides the expressions for $P_{w,2}$ and $\mathrm{Var}(\hat{\rho}_{w,2})$.

Theorem 4

$$P_{w,2} = \Pr\left( h_{w,2}^{(j)}(u) = h_{w,2}^{(j)}(v) \right) = 1 - \frac{1}{\pi} \cos^{-1} \rho - 4 \int_0^w \phi(z)\, \Phi\left( \frac{-w + \rho z}{\sqrt{1 - \rho^2}} \right) dz \tag{17}$$

$$\mathrm{Var}\left( \hat{\rho}_{w,2} \right) = \frac{V_{w,2}}{k} + O\left( \frac{1}{k^2} \right), \quad \text{where} \quad V_{w,2} = \frac{\pi^2 (1 - \rho^2) P_{w,2} (1 - P_{w,2})}{\left[ 1 - 2 e^{-\frac{w^2}{2(1 - \rho^2)}} + 2 e^{-\frac{w^2}{1 + \rho}} \right]^2} \tag{18}$$

Proof: See Appendix D.

Figure 6 plots $P_{w,2}$ (together with $P_w$) for selected $\rho$ values. When $w > 1$, $P_{w,2}$ and $P_w$ largely overlap. For small $w$, the two probabilities behave very differently, as expected. Note that $P_{w,2}$ has the same value at $w = 0$ and $w = \infty$, and in fact, when $w = 0$ or $w = \infty$, we just need one bit (i.e., the signs). Note that $P_{w,2}$ and $P_w$ differ significantly at small $w$. Will this be beneficial? The answer again depends on $\rho$.

Figure 6: Collision probabilities for the two proposed coding schemes $h_w$ and $h_{w,2}$, plotted against $w \in [0, 10]$ in six panels for $\rho = 0, 0.25, 0.5, 0.75, 0.9$, and $0.99$. Note that, while $P_{w,2}$ is a monotonically increasing function in $\rho$, it is no longer monotone in $w$.

Figure 7 plots both $V_{w,2}$ and $V_w$ at selected $\rho$ values, to compare their variances. For $\rho \le 0.5$, the variance of the estimator using the 2-bit scheme $h_{w,2}$ is significantly lower than that of $h_w$. However, when $\rho$ is high, $V_{w,2}$ might be somewhat higher than $V_w$. This means that, in general, we expect the performance of $h_{w,2}$ to be similar to that of $h_w$. When applications mainly care about highly similar data pairs, we expect $h_w$ to have (slightly) better performance (at the cost of more bits).

Finally, Figure 8 presents the smallest $V_{w,2}$ values and the optimum $w$ values at which the smallest $V_{w,2}$ are attained. This plot verifies that $h_w$ and $h_{w,2}$ should perform very similarly, although $h_w$ will have better performance at high $\rho$. Also, for a wide range, e.g., $\rho \in [0.2, 0.62]$, it is preferable to implement $h_{w,2}$ using just 1 bit because the optimum $w$ values are large.

Figure 7: Comparisons of the estimation variances of two proposed schemes, $h_w$ and $h_{w,2}$, in terms of $V_w$ and $V_{w,2}$, plotted against $w \in [0, 10]$ in six panels for $\rho = 0, 0.25, 0.5, 0.75, 0.9$, and $0.99$. When $\rho \le 0.5$, $V_{w,2}$ is significantly lower than $V_w$ at small $w$. However, when $\rho$ is high, $V_{w,2}$ will be somewhat higher than $V_w$. Note that $V_{w,2}$ is not as sensitive to $w$, unlike $V_w$.

Figure 8: Left panel: the smallest $V_{w,2}$ (or $V_w$) values against $\rho$. Right panel: the optimum $w$ values at which the smallest $V_{w,2}$ (or $V_w$) is attained at a fixed $\rho$.
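The expressions in Theorem 4 lend themselves to a short numerical sketch. The code below evaluates $P_{w,2}$ in (17) with Simpson's rule and $V_{w,2}$ in (18), and checks the observation that $w = 0$ and very large $w$ both reduce to the sign-only collision probability $1 - \frac{1}{\pi}\cos^{-1}\rho$. The function names and the integration grid are assumptions of this sketch.

```python
import math

def std_normal_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def std_normal_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def simpson(f, a, b, n=200):
    """Composite Simpson rule on [a, b] with an even number n of subintervals."""
    h = (b - a) / n
    total = f(a) + f(b)
    for j in range(1, n):
        total += (4.0 if j % 2 else 2.0) * f(a + j * h)
    return total * h / 3.0

def p_w2(rho, w):
    """Collision probability of the 2-bit scheme, eq. (17)."""
    s = math.sqrt(1.0 - rho * rho)
    integral = simpson(
        lambda z: std_normal_pdf(z) * std_normal_cdf((-w + rho * z) / s), 0.0, w)
    return 1.0 - math.acos(rho) / math.pi - 4.0 * integral

def v_w2(rho, w):
    """Variance factor of the 2-bit scheme, eq. (18)."""
    p = p_w2(rho, w)
    denom = (1.0 - 2.0 * math.exp(-w * w / (2.0 * (1.0 - rho * rho)))
             + 2.0 * math.exp(-w * w / (1.0 + rho)))
    return math.pi ** 2 * (1.0 - rho * rho) * p * (1.0 - p) / (denom * denom)

if __name__ == "__main__":
    rho = 0.5
    sign_only = 1.0 - math.acos(rho) / math.pi
    for w in (0.0, 0.75, 10.0):
        print("w =", w, "P_w2 =", p_w2(rho, w), "V_w2 =", v_w2(rho, w))
    print("sign-only collision probability:", sign_only)
```

For intermediate $w$ the integral term is strictly positive, so $P_{w,2}$ dips below the sign-only value, which is consistent with $P_{w,2}$ not being monotone in $w$.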
5 The 1-Bit Scheme $h_1$ and Comparisons with $h_{w,2}$ and $h_w$

When $w > 6$, it is sufficient to implement $h_w$ or $h_{w,2}$ using just one bit, because the normal probability density decays very rapidly: $1 - \Phi(6) = 9.9 \times 10^{-10}$. Note that we need to consider a very small tail probability because there are many data pairs in a large dataset, not just one pair.

With the 1-bit scheme, we simply code the projected data by recording their signs. We denote this scheme by $h_1$, the corresponding collision probability by $P_1$, and the corresponding estimator by $\hat{\rho}_1$.

From Theorem 4, by setting $w = 0$ (or equivalently $w = \infty$), we can directly infer

$$P_1 = \Pr\left( h_1^{(j)}(u) = h_1^{(j)}(v) \right) = 1 - \frac{1}{\pi} \cos^{-1} \rho \tag{19}$$

$$\mathrm{Var}\left( \hat{\rho}_1 \right) = \frac{V_1}{k} + O\left( \frac{1}{k^2} \right), \quad \text{where} \quad V_1 = \pi^2 (1 - \rho^2) P_1 (1 - P_1) \tag{20}$$

This collision probability is widely known [13]. The work of [4] also popularized the use of 1-bit coding. The variance was analyzed and compared with a maximum likelihood estimator in [18].

Figure 9 and Figure 10 plot the ratios of the variances, $\frac{\mathrm{Var}(\hat{\rho}_1)}{\mathrm{Var}(\hat{\rho}_w)}$ and $\frac{\mathrm{Var}(\hat{\rho}_1)}{\mathrm{Var}(\hat{\rho}_{w,2})}$, to illustrate how much we lose in accuracy by using only one bit. Note that $\hat{\rho}_1$ is not related to the bin width $w$, while $\mathrm{Var}(\hat{\rho}_w)$ and $\mathrm{Var}(\hat{\rho}_{w,2})$ are functions of $w$.

In Figure 9, we plot the maximum values of the ratios, i.e., we use the smallest $\mathrm{Var}(\hat{\rho}_w)$ and $\mathrm{Var}(\hat{\rho}_{w,2})$ at each $\rho$. The ratios demonstrate that potentially both $h_w$ and $h_{w,2}$ could substantially outperform $h_1$, the 1-bit scheme. Note that in Figure 9, we plot $1 - \rho$ on the horizontal axis with log scale, so that the high similarity region can be visualized better. In practice, many applications are often more interested in the high similarity region, for example, duplicate detection.
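Because (19) has an explicit inverse, the 1-bit estimator can be written down directly as $\hat{\rho}_1 = \cos\left( \pi (1 - \hat{P}_1) \right)$. The following sketch simulates sign agreement on pairs drawn from the bivariate normal in (2) and applies that inverse. The sampling shortcut (drawing correlated pairs rather than projecting real data) and all function names are assumptions made for illustration.

```python
import math
import random

def p1(rho):
    """Sign-collision probability, eq. (19)."""
    return 1.0 - math.acos(rho) / math.pi

def rho_from_p1(p):
    """Inverse of (19): rho = cos(pi * (1 - P_1))."""
    return math.cos(math.pi * (1.0 - p))

def v1(rho):
    """Variance factor of the 1-bit estimator, eq. (20)."""
    p = p1(rho)
    return math.pi ** 2 * (1.0 - rho * rho) * p * (1.0 - p)

def simulate_1bit_estimate(rho, k, seed=0):
    """Estimate rho from the signs of k simulated projection pairs."""
    rng = random.Random(seed)
    s = math.sqrt(1.0 - rho * rho)
    agree = 0
    for _ in range(k):
        x = rng.gauss(0.0, 1.0)
        y = rho * x + s * rng.gauss(0.0, 1.0)
        agree += (x >= 0.0) == (y >= 0.0)  # 1-bit codes collide when signs match
    return rho_from_p1(agree / k)

if __name__ == "__main__":
    rho, k = 0.8, 20000
    print("estimate:", simulate_1bit_estimate(rho, k))
    print("predicted std dev:", math.sqrt(v1(rho) / k))
```

The predicted standard deviation uses only the leading term $V_1 / k$ of (20), so it is an approximation for finite $k$.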
Figure 9: Variance ratios, $\frac{\mathrm{Var}(\hat{\rho}_1)}{\mathrm{Var}(\hat{\rho}_w)}$ and $\frac{\mathrm{Var}(\hat{\rho}_1)}{\mathrm{Var}(\hat{\rho}_{w,2})}$, to illustrate the reduction of estimation accuracies if the 1-bit coding scheme is used. Here, we plot the maximum ratios (over all choices of bin width $w$) at each $\rho$. To better visualize the high similarity region, we plot $1 - \rho$ in log scale.

In practice, we must pre-specify the quantization bin width $w$ in advance. Thus, the improvement of $h_w$ and $h_{w,2}$ over the 1-bit scheme $h_1$ will not be as drastic as shown in Figure 9. For more realistic comparisons, Figure 10 plots $\frac{\mathrm{Var}(\hat{\rho}_1)}{\mathrm{Var}(\hat{\rho}_w)}$ and $\frac{\mathrm{Var}(\hat{\rho}_1)}{\mathrm{Var}(\hat{\rho}_{w,2})}$ for fixed $w$ values. This figure supports the recommendation of the 2-bit coding scheme $h_{w,2}$:

1. In the high similarity region, $h_{w,2}$ significantly outperforms $h_1$. The improvement drops as $w$ becomes larger (e.g., $w > 1$). $h_w$ also works well, in fact better than $h_{w,2}$ when $w$ is small.

2. In the low similarity region, $h_{w,2}$ still outperforms $h_1$ unless $\rho$ is very low and $w$ is not small. Note that the performance of $h_w$ is noticeably worse than $h_{w,2}$ and $h_1$ when $\rho$ is low.

Thus, we believe the 2-bit scheme $h_{w,2}$ with $w$ around 0.75 provides an overall good compromise. In fact, this is consistent with our observation in the SVM experiments in Section 6.

Figure 10: Variance ratios, $\frac{\mathrm{Var}(\hat{\rho}_1)}{\mathrm{Var}(\hat{\rho}_w)}$ and $\frac{\mathrm{Var}(\hat{\rho}_1)}{\mathrm{Var}(\hat{\rho}_{w,2})}$, for four selected $w$ values ($w = 0.5, 0.75, 1, 1.25$), plotted against $1 - \rho$ in log scale. In the high similarity region (e.g., $\rho \ge 0.9$), the 2-bit scheme $h_{w,2}$ significantly outperforms the 1-bit scheme $h_1$. In the low similarity region, $h_{w,2}$ still works reasonably well while the performance of $h_w$ can be poor (e.g., when $w \le 0.75$). This justifies the recommendation of the 2-bit scheme.

Can we simply use the 1-bit scheme? When $w = 0.75$, in the high similarity region, the variance ratio $\frac{\mathrm{Var}(\hat{\rho}_1)}{\mathrm{Var}(\hat{\rho}_{w,2})}$ is between 2 and 3. Note that, per projected data value, the 1-bit scheme requires 1 bit but the 2-bit scheme needs 2 bits. In a sense, the performance of $h_{w,2}$ and $h_1$ is actually similar in terms of the total number of bits needed to store the (coded) projected data, according to the analysis in this paper.

For similarity estimation, we believe it is preferable to use the 2-bit scheme, for the following reasons:

• The processing cost of the 2-bit scheme would be lower. If we use $k$ projections for the 1-bit scheme and $k/2$ projections for the 2-bit scheme, although they have the same storage cost, the processing cost of $h_{w,2}$ for generating the projections would be only 1/2 of that of $h_1$. For very high-dimensional data, the processing cost can be substantial.

• As we will show in Section 6, when we train a linear classifier (e.g., using LIBLINEAR), we need to expand the projected data into a binary vector with exactly $k$ 1's if we use $k$ projections for both $h_1$ and $h_{w,2}$. For this application, we observe that the training time is mainly determined by the number of nonzero entries and the quality of the input data. Even with the same $k$, we observe that the training speed on the input data generated by $h_{w,2}$ is often slightly faster than using the data generated by $h_1$.

• In this study, we restrict our attention to linear estimators (which can be written as inner products) by simply using the (overall) collision probability, e.g., $P_{w,2} = \Pr\left( h_{w,2}^{(j)}(u) = h_{w,2}^{(j)}(v) \right)$.
There is significant room for improvement by using more refined estimators. For example, we can treat this problem as a contingency table whose cell probabilities are functions of the similarity $\rho$, and hence we can estimate $\rho$ by solving a maximum likelihood equation. Such an estimator is still useful for many applications (e.g., nonlinear kernel SVM). We will report that work separately, to maintain the simplicity of this paper.

Note that quantization is a non-reversible process. Once we quantize the data by the 1-bit scheme, there is no hope of recovering any information other than the signs. Our work provides the necessary theoretical justifications for making practical choices of the coding schemes.

6 An Experimental Study for Training Linear SVM

We conduct experiments with random projections for training ($L_2$-regularized) linear SVM (e.g., LIBLINEAR [9]) on three high-dimensional datasets: ARCENE, FARM, URL, which are available from the UCI repository. The original URL dataset has about 2.4 million examples (collected in 120 days) in 3231961 dimensions. We only used the data from the first day, with 10000 examples for training and 10000 for testing. The FARM dataset has 2059 training and 2084 testing examples in 54877 dimensions. The ARCENE dataset contains 100 training and 100 testing examples in 10000 dimensions.

We implement the four coding schemes studied in this paper: $h_{w,q}$, $h_w$, $h_{w,2}$, and $h_1$. Recall that $h_{w,q}$ [8] was based on uniform quantization plus a random offset, with bin width $w$. Here, we first illustrate exactly how we utilize the coded data for training linear SVM. Suppose we use $h_{w,2}$ and $w = 0.75$. We can code an original projected value $x$ into a vector of length 4 (i.e., 2-bit):

$x \in (-\infty, -0.75) \Rightarrow [1\ 0\ 0\ 0]$, $\quad x \in [-0.75, 0) \Rightarrow [0\ 1\ 0\ 0]$, $\quad x \in [0, 0.75) \Rightarrow [0\ 0\ 1\ 0]$, $\quad x \in [0.75, \infty) \Rightarrow [0\ 0\ 0\ 1]$

This way, with $k$ projections, for each feature vector, we obtain a new vector of length $4k$ with exactly $k$ 1's. This new vector is then fed to a solver such as LIBLINEAR. Recently, this strategy was adopted for linear learning with binary data based on b-bit minwise hashing [19, 20].

Similarly, when using $h_1$, the dimension of the new vector is $2k$ with exactly $k$ 1's. For $h_w$ and $h_{w,q}$, we must specify a cutoff value such as 6; otherwise they are "infinite precision" schemes. Practically speaking, because the normal density decays very rapidly at the tail (e.g., $1 - \Phi(6) = 9.9 \times 10^{-10}$), we essentially do not suffer from information loss if we choose a large enough cutoff such as 6.

Figure 11 reports the test accuracies on the URL data, for comparing $h_{w,q}$ with $h_w$. The results basically confirm our analysis of the estimation variances. For small bin width $w$, the two schemes perform very similarly. However, when using a relatively large $w$, the scheme $h_{w,q}$ suffers from a noticeable reduction of classification accuracies. The experimental results on the other two datasets demonstrate the same phenomenon. This experiment confirms that the step of random offset in $h_{w,q}$ is not needed, at least for similarity estimation and training linear classifiers.

There is one tuning parameter $C$ in linear SVM. Figure 11 reports the accuracies for a wide range of $C$ values, from $10^{-3}$ to $10^{3}$. Before we feed the data to LIBLINEAR, we always normalize them to have unit norm (which is a recommended practice). Our experience is that, with normalized input data, the best accuracies are often attained around $C = 1$, as verified in Figure 11. For other figures in this section, we will only report $C$ from $10^{-3}$ to 10.
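The expansion of 2-bit codes into solver-ready features described in this section can be sketched as follows. The function names are hypothetical, and the dense Python lists are used only for readability; a real pipeline would more likely emit the sparse index:value format that linear solvers accept.

```python
import math

def code_2bit(x, w):
    """Index of the region containing x: (-inf,-w), [-w,0), [0,w), [w,inf)."""
    if x < -w:
        return 0
    if x < 0.0:
        return 1
    if x < w:
        return 2
    return 3

def expand_2bit(projected, w=0.75):
    """Map k projected values to a 0/1 vector of length 4k with exactly k ones."""
    features = [0] * (4 * len(projected))
    for j, x in enumerate(projected):
        features[4 * j + code_2bit(x, w)] = 1
    return features

def normalize(features):
    """Scale a vector to unit Euclidean norm, as done before training."""
    norm = math.sqrt(sum(f * f for f in features))
    return [f / norm for f in features]

if __name__ == "__main__":
    projected = [-1.0, -0.5, 0.3, 2.0]  # k = 4 illustrative projected values
    expanded = expand_2bit(projected)
    print(expanded)  # [1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1]
    print(normalize(expanded)[0])  # 0.5, i.e. 1 / sqrt(k) with k = 4
```

Since every expanded vector has exactly $k$ ones, unit-norm scaling simply replaces each 1 by $1/\sqrt{k}$.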
[Figure 11 plots: nine panels for the URL dataset, one per bin width $w$ = 6, 5, 4, 3, 2.5, 2, 1.5, 1, 0.5. Each panel shows classification accuracy (%) against $C$ from $10^{-3}$ to $10^{3}$ for $k$ = 16, 64, 256, with curves for $h_w$ and $h_{w,q}$.]

Figure 11: Test accuracies on the URL dataset using LIBLINEAR, for comparing two coding schemes: $h_w$ vs. $h_{w,q}$. Recall $h_{w,q}$ [8] was based on uniform quantization plus a random offset, with bin length $w$. Our proposed scheme $h_w$ removes the random offset. We report the classification results for $k = 16$, $k = 64$, and $k = 256$. In each panel, there are 3 solid curves for $h_w$ and 3 dashed curves for $h_{w,q}$. We report the results for a wide range of $L_2$-regularization parameter $C$ (i.e., the horizontal axis). When $w$ is large, $h_w$ noticeably outperforms $h_{w,q}$. When $w$ is small, the two schemes perform very similarly. This observation is of course expected from our analysis of the estimation variances. This experiment confirms that the random offset step of $h_{w,q}$ may not be needed.
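As a rough illustration of the two schemes compared in Figure 11, the sketch below codes a projected value with and without the random offset and estimates how often two correlated projections receive the same code. It assumes $h_w(x) = \lfloor x/w \rfloor$ and $h_{w,q}(x) = \lfloor (x+q)/w \rfloor$ with $q$ drawn uniformly from $[0, w)$ and shared by the two vectors, together with the cutoff of 6 mentioned earlier. The function names and the simulation helper are hypothetical and are not taken from the authors' code.

```python
import math
import random


def clip(x, cutoff=6.0):
    """Truncate a projected value to [-cutoff, cutoff]."""
    return max(-cutoff, min(cutoff, x))


def h_w(x, w, cutoff=6.0):
    """Uniform quantization without offset (assumed form: floor(x / w))."""
    return math.floor(clip(x, cutoff) / w)


def h_wq(x, q, w, cutoff=6.0):
    """Uniform quantization with offset q (assumed form: floor((x + q) / w))."""
    return math.floor((clip(x, cutoff) + q) / w)


def collision_rates(rho, w, k, seed=0):
    """Empirical collision rates of h_w and h_wq over k simulated projections.

    Each projection yields a unit-variance bivariate normal pair (x, y)
    with correlation rho; the offset q is shared by x and y.
    """
    rng = random.Random(seed)
    same_w = 0
    same_wq = 0
    for _ in range(k):
        x = rng.gauss(0.0, 1.0)
        y = rho * x + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
        q = rng.uniform(0.0, w)
        same_w += h_w(x, w) == h_w(y, w)
        same_wq += h_wq(x, q, w) == h_wq(y, q, w)
    return same_w / k, same_wq / k


if __name__ == "__main__":
    for w in (0.5, 2.0, 6.0):
        print(w, collision_rates(0.9, w, 10000))
```

With a large bin width such as $w = 6$, $h_w$ effectively keeps only the sign of each projection, which gives one way to see why it remains informative where the offset version does not.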
Figure 12 reports the test classification accuracies (averaged over 20 repetitions) for the URL dataset. When $w = 0.5 \sim 1$, both $h_w$ and $h_{w,2}$ produce results similar to those obtained with the original projected data. The 1-bit scheme $h_1$ is clearly less competitive. We provide similar plots (Figure 13) for the FARM dataset.

[Figure 12 plots: six panels for the URL dataset, one per bin width $w$ = 0.25, 0.5, 0.75, 1, 1.5, 3. Each panel shows SVM accuracy (%) against $C$ from $10^{-3}$ to $10^{1}$ for $k$ = 16 and $k$ = 256, with curves for Orig, $h_w$, $h_{w,2}$, and $h_1$.]

Figure 12: Test accuracies on the URL dataset using LIBLINEAR, for comparing four coding schemes: uncoded ("Orig"), $h_w$, $h_{w,2}$, and $h_1$. We report the results for $k = 16$ and $k = 256$. Thus, in each panel, there are 2 groups of 4 curves. We report the results for a wide range of $L_2$-regularization parameter $C$ (i.e., the horizontal axis). When $w = 0.5 \sim 1$, both $h_w$ and $h_{w,2}$ produce results similar to those obtained with the original projected data, while the 1-bit scheme $h_1$ is less competitive.
[Figure 13 plots: six panels for the FARM dataset, one per bin width $w$ = 0.25, 0.5, 0.75, 1, 1.5, 3. Each panel shows SVM accuracy (%) against $C$ from $10^{-2}$ to $10^{2}$ for $k$ = 16 and $k$ = 256, with curves for Orig, $h_w$, $h_{w,2}$, and $h_1$.]

Figure 13: Test accuracies on the FARM dataset using LIBLINEAR, for comparing four coding schemes: uncoded ("Orig"), $h_w$, $h_{w,2}$, and $h_1$. We report the results for $k = 16$ and $k = 256$.

We summarize the experiments in Figure 14 for all three datasets. The upper panels report, for each $k$, the best (highest) test classification accuracies among all $C$ values and $w$ values (for $h_{w,2}$ and $h_w$). The results show a clear trend: (i) the 1-bit ($h_1$) scheme produces noticeably lower accuracies than the others; (ii) the performances of $h_{w,2}$ and $h_w$ are quite similar. The bottom panels of Figure 14 report the $w$ values at which the best accuracies were attained. For $h_{w,2}$, the optimum $w$ values are often close to 1. One interesting observation is that for the FARM dataset, using the coded data (by $h_w$ or $h_{w,2}$) can actually produce better accuracy than using the original (uncoded) data, when $k$ is not large. This phenomenon may not be too surprising, because quantization may also be viewed as a form of regularization and in some cases may help boost the performance.
[Figure 14 plots: upper panels (ARCENE, FARM, URL) show classification accuracy (%) against $k$ from 10 to 200 for Orig, $h_w$, $h_{w,2}$, and $h_1$; bottom panels (ARCENE, FARM, URL) show the optimum $w$ (0 to 1.5) against $k$ for $h_w$ and $h_{w,2}$.]

Figure 14: Summary of the linear SVM results on three datasets. The upper panels report, for each $k$, the best (highest) classification accuracies among all $C$ values and $w$ values (for $h_{w,2}$ and $h_w$). The bottom panels report the $w$ values at which the best accuracies were attained.

7 Future Work

This paper only studies linear estimators, which can be written as inner products. Linear estimators are extremely useful because they allow highly efficient implementation of linear classifiers (e.g., linear SVM) and near neighbor search methods using hash tables. For applications that allow nonlinear estimators (e.g., nonlinear kernel SVM), we can substantially improve on linear estimators by solving nonlinear MLE (maximum likelihood) equations. The analysis will be reported separately.

Our work is, to an extent, inspired by the recent work on b-bit minwise hashing [19, 20], which also proposed a coding scheme for minwise hashing and applied it to learning applications where the data are binary and sparse. Our work is for general data types, as opposed to binary, sparse data. We expect coding methods will also prove valuable for other variations of random projections, including the count-min sketch [5] and related variants [26] and very sparse random projections [17].
Another potentially interesting future direction is to develop refined coding schemes for improving sign stable projections [21] (which are useful for $\chi^2$ similarity estimation, a popular similarity measure in computer vision and NLP).

8 Conclusion

The method of random projections has become a standard algorithmic approach for computing distances or correlations in massive, high-dimensional datasets. A compact representation (coding) of the projected data is crucial for efficient transmission, retrieval, and energy consumption. We have compared a simple scheme based on uniform quantization with the influential coding scheme using windows with a random offset [8]; our scheme appears operationally simpler, more accurate, not as sensitive to parameters (e.g., the window/bin width $w$), and uses fewer bits. We furthermore develop a 2-bit non-uniform coding scheme which performs similarly to uniform quantization. Our experiments with linear SVM on several real-world high-dimensional datasets confirm the efficacy of the two proposed coding schemes. Based on the theoretical analysis and empirical evidence, we recommend the use of the 2-bit non-uniform coding scheme with the first bin width $w = 0.7 \sim 1$, especially when the target similarity level is high.

A Proof of Lemma 1

The joint density function of $(x, y)$ is

$$f(x, y; \rho) = \frac{1}{2\pi\sqrt{1-\rho^2}}\, e^{-\frac{x^2 - 2\rho x y + y^2}{2(1-\rho^2)}}, \qquad -1 \le \rho \le 1.$$

In this paper we focus on $\rho \ge 0$. We use the usual notation for the standard normal pdf and cdf:

$$\phi(x) = \frac{1}{\sqrt{2\pi}}\, e^{-x^2/2}, \qquad \Phi(x) = \int_{-\infty}^{x} \phi(u)\, du.$$
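The probability $Q_{s,t}$ can be simplified to be

$$\begin{aligned}
Q_{s,t} &= \int_s^t \int_s^t \frac{1}{2\pi\sqrt{1-\rho^2}}\, e^{-\frac{x^2 - 2\rho x y + y^2}{2(1-\rho^2)}}\, dx\, dy
= \int_s^t \int_s^t \frac{1}{2\pi\sqrt{1-\rho^2}}\, e^{-\frac{x^2 - 2\rho x y + y^2}{2(1-\rho^2)}}\, dy\, dx \\
&= \int_s^t \frac{1}{2\pi\sqrt{1-\rho^2}}\, e^{-\frac{x^2}{2}} \int_s^t e^{-\frac{(y-\rho x)^2}{2(1-\rho^2)}}\, dy\, dx \\
&= \int_s^t \frac{1}{2\pi\sqrt{1-\rho^2}}\, e^{-\frac{x^2}{2}} \int_{\frac{s-\rho x}{\sqrt{1-\rho^2}}}^{\frac{t-\rho x}{\sqrt{1-\rho^2}}} e^{-\frac{u^2}{2}} \sqrt{1-\rho^2}\, du\, dx \\
&= \int_s^t \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2}{2}} \int_{\frac{s-\rho x}{\sqrt{1-\rho^2}}}^{\frac{t-\rho x}{\sqrt{1-\rho^2}}} \frac{1}{\sqrt{2\pi}}\, e^{-\frac{u^2}{2}}\, du\, dx \\
&= \int_s^t \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2}{2}} \left[ \Phi\!\left(\frac{t-\rho x}{\sqrt{1-\rho^2}}\right) - \Phi\!\left(\frac{s-\rho x}{\sqrt{1-\rho^2}}\right) \right] dx
\end{aligned}$$

Next we evaluate its derivative $\frac{\partial Q_{s,t}(\rho, s)}{\partial \rho}$:

$$\frac{\partial Q_{s,t}(\rho, s)}{\partial \rho} = \int_s^t \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2}{2}} \left( \phi\!\left(\frac{t-\rho x}{\sqrt{1-\rho^2}}\right) \frac{-x + t\rho}{(1-\rho^2)^{3/2}} - \phi\!\left(\frac{s-\rho x}{\sqrt{1-\rho^2}}\right) \frac{-x + s\rho}{(1-\rho^2)^{3/2}} \right) dx$$

Note that

$$\frac{\partial}{\partial \rho} \left( \Phi\!\left(\frac{t-\rho x}{\sqrt{1-\rho^2}}\right) \right) = \phi\!\left(\frac{t-\rho x}{\sqrt{1-\rho^2}}\right) \frac{-x + t\rho}{(1-\rho^2)^{3/2}}$$

and

$$\begin{aligned}
\int_s^t \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2}{2}}\, \phi\!\left(\frac{t-\rho x}{\sqrt{1-\rho^2}}\right) \frac{-x + t\rho}{(1-\rho^2)^{3/2}}\, dx
&= \int_s^t \frac{1}{2\pi}\, e^{-\frac{x^2 + t^2 - 2t\rho x}{2(1-\rho^2)}}\, \frac{-x + t\rho}{(1-\rho^2)^{3/2}}\, dx \\
&= \int_s^t \frac{1}{2\pi}\, e^{-\frac{(x - t\rho)^2}{2(1-\rho^2)}}\, e^{-\frac{t^2}{2}}\, \frac{-x + t\rho}{(1-\rho^2)^{3/2}}\, dx \\
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}}\, e^{-t^2/2} \left. e^{-\frac{(x - t\rho)^2}{2(1-\rho^2)}} \right|_s^t \\
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}}\, e^{-t^2/2} \left[ e^{-\frac{t^2(1-\rho)}{2(1+\rho)}} - e^{-\frac{(s - t\rho)^2}{2(1-\rho^2)}} \right]
\end{aligned}$$

and

$$\begin{aligned}
\int_s^t \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2}{2}}\, \phi\!\left(\frac{s-\rho x}{\sqrt{1-\rho^2}}\right) \frac{-x + s\rho}{(1-\rho^2)^{3/2}}\, dx
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}}\, e^{-s^2/2} \left. e^{-\frac{(x - s\rho)^2}{2(1-\rho^2)}} \right|_s^t \\
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}}\, e^{-s^2/2} \left[ -e^{-\frac{s^2(1-\rho)}{2(1+\rho)}} + e^{-\frac{(t - s\rho)^2}{2(1-\rho^2)}} \right]
\end{aligned}$$

Combining the results, we obtain

$$\begin{aligned}
\frac{\partial Q_{s,t}(\rho, s)}{\partial \rho}
&= \int_s^t \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2}{2}} \left( \phi\!\left(\frac{t-\rho x}{\sqrt{1-\rho^2}}\right) \frac{-x + t\rho}{(1-\rho^2)^{3/2}} - \phi\!\left(\frac{s-\rho x}{\sqrt{1-\rho^2}}\right) \frac{-x + s\rho}{(1-\rho^2)^{3/2}} \right) dx \\
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}}\, e^{-t^2/2} \left[ e^{-\frac{t^2(1-\rho)}{2(1+\rho)}} - e^{-\frac{(s - t\rho)^2}{2(1-\rho^2)}} \right]
- \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}}\, e^{-s^2/2} \left[ -e^{-\frac{s^2(1-\rho)}{2(1+\rho)}} + e^{-\frac{(t - s\rho)^2}{2(1-\rho^2)}} \right] \\
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}} \left[ e^{-\frac{t^2}{1+\rho}} - e^{-\frac{t^2 + s^2 - 2st\rho}{2(1-\rho^2)}} + e^{-\frac{s^2}{1+\rho}} - e^{-\frac{t^2 + s^2 - 2st\rho}{2(1-\rho^2)}} \right] \\
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}} \left[ e^{-\frac{t^2}{1+\rho}} + e^{-\frac{s^2}{1+\rho}} - 2 e^{-\frac{t^2 + s^2 - 2st\rho}{2(1-\rho^2)}} \right] \\
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}} \left[ \left( e^{-\frac{t^2}{2(1+\rho)}} - e^{-\frac{s^2}{2(1+\rho)}} \right)^2 + 2 e^{-\frac{t^2 + s^2}{2(1+\rho)}} - 2 e^{-\frac{t^2 + s^2 - 2st\rho}{2(1-\rho^2)}} \right] \ge 0
\end{aligned}$$

The last inequality holds because

$$-\frac{t^2 + s^2}{2(1+\rho)} - \left( -\frac{t^2 + s^2 - 2st\rho}{2(1-\rho^2)} \right) = \frac{1}{2(1-\rho^2)} \left[ -(t^2 + s^2)(1-\rho) + t^2 + s^2 - 2st\rho \right] = \frac{\rho}{2(1-\rho^2)}\, [s - t]^2 \ge 0$$

This completes the proof.

B Proof of Theorem 2

From the collision probability,

$$P_{w,q} = 2\Phi\!\left(\frac{w}{\sqrt{d}}\right) - 1 - \frac{2}{\sqrt{2\pi}\, w/\sqrt{d}} + \frac{2}{w/\sqrt{d}}\, \phi\!\left(\frac{w}{\sqrt{d}}\right),$$

we can estimate $d$ (and $\rho$). Recall $d = 2(1-\rho)$. We denote the estimator by $\hat{d}_{w,q}$ (and $\hat{\rho}_{w,q}$), from the empirical probability $\hat{P}_{w,q}$, which is estimated without bias from $k$ projections. Note that $\hat{d}_{w,q} = g(\hat{P}_{w,q})$ for a nonlinear function $g$. As $k \to \infty$, the estimator $\hat{d}_{w,q}$ is asymptotically unbiased. The variance can be determined by the "delta" method:

$$Var\!\left(\hat{d}_{w,q}\right) = Var\!\left(\hat{P}_{w,q}\right) \left[ g'(P_{w,q}) \right]^2 + O\!\left(\frac{1}{k^2}\right) = \frac{1}{k} P_{w,q}(1 - P_{w,q}) \left[ g'(P_{w,q}) \right]^2 + O\!\left(\frac{1}{k^2}\right)$$

Since

$$\begin{aligned}
\frac{\partial P_{w,q}}{\partial d}
&= -\phi\!\left(\frac{w}{\sqrt{d}}\right) w d^{-3/2} - \frac{1}{\sqrt{2\pi}\, w}\, d^{-1/2} + \frac{1}{w}\, d^{-1/2}\, \phi\!\left(\frac{w}{\sqrt{d}}\right) + \frac{1}{w/\sqrt{d}}\, \phi\!\left(\frac{w}{\sqrt{d}}\right) \frac{w}{\sqrt{d}}\, w d^{-3/2} \\
&= \frac{1}{w/\sqrt{d}}\, d^{-1}\, \phi\!\left(\frac{w}{\sqrt{d}}\right) - \frac{1}{\sqrt{2\pi}\, w/\sqrt{d}}\, d^{-1}
\end{aligned}$$

we have

$$g'(P_{w,q}) = \frac{1}{\frac{\partial P_{w,q}}{\partial d}} = \frac{w/\sqrt{d}}{\phi\!\left(w/\sqrt{d}\right) - 1/\sqrt{2\pi}}\, d$$

and

$$Var\!\left(\hat{d}_{w,q}\right) = \frac{d^2}{k} \left[ \frac{w/\sqrt{d}}{\phi\!\left(w/\sqrt{d}\right) - 1/\sqrt{2\pi}} \right]^2 P_{w,q}(1 - P_{w,q}) + O\!\left(\frac{1}{k^2}\right)$$

Because $\rho = 1 - d/2$, we know that

$$Var\!\left(\hat{\rho}_{w,q}\right) = \frac{1}{4} Var\!\left(\hat{d}_{w,q}\right) = \frac{V_{w,q}}{k} + O\!\left(\frac{1}{k^2}\right)$$

where

$$V_{w,q} = \frac{d^2}{4} \left[ \frac{w/\sqrt{d}}{\phi\!\left(w/\sqrt{d}\right) - 1/\sqrt{2\pi}} \right]^2 P_{w,q}(1 - P_{w,q})$$

This completes the proof.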
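The closed forms in Theorem 2 are easy to evaluate numerically. The following sketch, which uses only the Python standard library, computes $P_{w,q}$ and the variance factor $V_{w,q}$ from the expressions above and offers a small Monte Carlo check of the collision probability. The function names and the simulation set-up (unit-variance bivariate normal pairs that share one uniform offset $q \in [0, w)$ per projection, with no cutoff) are assumptions made for illustration.

```python
import math
import random

SQRT_2PI = math.sqrt(2.0 * math.pi)


def phi(x):
    """Standard normal pdf."""
    return math.exp(-0.5 * x * x) / SQRT_2PI


def Phi(x):
    """Standard normal cdf, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def p_wq(rho, w):
    """Collision probability P_{w,q}, with d = 2(1 - rho); requires rho < 1."""
    s = w / math.sqrt(2.0 * (1.0 - rho))
    return 2.0 * Phi(s) - 1.0 - 2.0 / (SQRT_2PI * s) + 2.0 * phi(s) / s


def v_wq(rho, w):
    """Variance factor V_{w,q}, so that Var(rho_hat) is about V_{w,q} / k."""
    d = 2.0 * (1.0 - rho)
    s = w / math.sqrt(d)
    p = p_wq(rho, w)
    ratio = s / (phi(s) - 1.0 / SQRT_2PI)
    return (d * d / 4.0) * ratio * ratio * p * (1.0 - p)


def simulate_p_wq(rho, w, n, seed=0):
    """Monte Carlo estimate of P_{w,q} from n simulated projections."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n):
        x = rng.gauss(0.0, 1.0)
        y = rho * x + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
        q = rng.uniform(0.0, w)  # offset shared by both vectors
        hits += math.floor((x + q) / w) == math.floor((y + q) / w)
    return hits / n


if __name__ == "__main__":
    print(p_wq(0.5, 1.0), simulate_p_wq(0.5, 1.0, 100000), v_wq(0.5, 1.0))
```

Evaluating `v_wq` over a grid of $w$ values for a fixed $\rho$ is one simple way to explore how sensitive the offset scheme is to the bin width.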
C Proof of Theorem 3

This proof is similar to the proof of Theorem 2. To evaluate the asymptotic variance, we need to compute $\frac{\partial P_w}{\partial \rho}$:

$$\begin{aligned}
\frac{\partial P_w}{\partial \rho}
&= \frac{1}{\pi} \frac{1}{(1-\rho^2)^{1/2}} \sum_{i=0}^{\infty} \left[ e^{-\frac{(i+1)^2 w^2}{1+\rho}} + e^{-\frac{i^2 w^2}{1+\rho}} - 2 e^{-\frac{(i+1)^2 w^2 + i^2 w^2 - 2 i (i+1) w^2 \rho}{2(1-\rho^2)}} \right] \\
&= \frac{1}{\pi} \frac{1}{(1-\rho^2)^{1/2}} \sum_{i=0}^{\infty} \left[ e^{-\frac{(i+1)^2 w^2}{1+\rho}} + e^{-\frac{i^2 w^2}{1+\rho}} - 2 e^{-\frac{w^2}{2(1-\rho^2)}}\, e^{-\frac{i(i+1) w^2}{1+\rho}} \right]
\end{aligned}$$

Thus, $Var(\hat{\rho}_w) = \frac{V_w}{k} + O\!\left(\frac{1}{k^2}\right)$, where

$$V_w = \frac{\pi^2 (1-\rho^2)\, P_w (1 - P_w)}{\left[ \sum_{i=0}^{\infty} \left( e^{-\frac{(i+1)^2 w^2}{1+\rho}} + e^{-\frac{i^2 w^2}{1+\rho}} - 2 e^{-\frac{w^2}{2(1-\rho^2)}}\, e^{-\frac{i(i+1) w^2}{1+\rho}} \right) \right]^2}$$

Next, we consider the special case with $\rho \to 0$:

$$P_w \big|_{\rho=0} = 2 \sum_{i=0}^{\infty} \left( \Phi((i+1)w) - \Phi(iw) \right)^2 = 2 \sum_{i=0}^{\infty} \left( \int_{iw}^{(i+1)w} \phi(x)\, dx \right)^2 = 2 w^2 \sum_{i=0}^{\infty} \left( \int_i^{i+1} \phi(wx)\, dx \right)^2$$

$$\left. \frac{\partial P_w}{\partial \rho} \right|_{\rho=0} = \frac{1}{\pi} \sum_{i=0}^{\infty} \left( e^{-(i+1)^2 w^2/2} - e^{-i^2 w^2/2} \right)^2 = 2 \sum_{i=0}^{\infty} \left( \phi((i+1)w) - \phi(iw) \right)^2$$

Combining the results, we obtain

$$\begin{aligned}
V_w \big|_{\rho=0}
&= \frac{2 w^2 \sum_{i=0}^{\infty} \left( \int_i^{i+1} \phi(wx)\, dx \right)^2 \left[ 1 - 2 w^2 \sum_{i=0}^{\infty} \left( \int_i^{i+1} \phi(wx)\, dx \right)^2 \right]}{\left[ \frac{1}{\pi} \sum_{i=0}^{\infty} \left( e^{-(i+1)^2 w^2/2} - e^{-i^2 w^2/2} \right)^2 \right]^2} \\
&= \frac{\sum_{i=0}^{\infty} \left( \Phi((i+1)w) - \Phi(iw) \right)^2 \left[ 1/2 - \sum_{i=0}^{\infty} \left( \Phi((i+1)w) - \Phi(iw) \right)^2 \right]}{\left[ \sum_{i=0}^{\infty} \left( \phi((i+1)w) - \phi(iw) \right)^2 \right]^2}
\end{aligned}$$

D Proof of Theorem 4

$$\begin{aligned}
P_{w,2} &= \Pr\!\left( h^{(j)}_{w,2}(u) = h^{(j)}_{w,2}(v) \right) \\
&= 2 \int_0^w \phi(x) \left[ \Phi\!\left(\frac{w - \rho x}{\sqrt{1-\rho^2}}\right) - \Phi\!\left(\frac{-\rho x}{\sqrt{1-\rho^2}}\right) \right] dx + 2 \int_w^{\infty} \phi(x) \left[ 1 - \Phi\!\left(\frac{w - \rho x}{\sqrt{1-\rho^2}}\right) \right] dx \\
&= 2 \int_0^w \phi(x) \left[ \Phi\!\left(\frac{w - \rho x}{\sqrt{1-\rho^2}}\right) - 1 + \Phi\!\left(\frac{\rho x}{\sqrt{1-\rho^2}}\right) \right] dx + 2 \int_w^{\infty} \phi(x) \left[ 1 - \Phi\!\left(\frac{w - \rho x}{\sqrt{1-\rho^2}}\right) \right] dx \\
&= 4 \int_0^w \phi(x) \left[ \Phi\!\left(\frac{w - \rho x}{\sqrt{1-\rho^2}}\right) - 1 \right] dx + 2 \int_0^w \phi(x)\, \Phi\!\left(\frac{\rho x}{\sqrt{1-\rho^2}}\right) dx + 2 \int_0^{\infty} \phi(x) \left[ 1 - \Phi\!\left(\frac{w - \rho x}{\sqrt{1-\rho^2}}\right) \right] dx \\
&= -4 \int_0^w \phi(x)\, \Phi\!\left(\frac{-w + \rho x}{\sqrt{1-\rho^2}}\right) dx + 2 \int_0^w \phi(x)\, \Phi\!\left(\frac{\rho x}{\sqrt{1-\rho^2}}\right) dx + 2 \int_0^{\infty} \phi(x) \left[ 1 - \Phi\!\left(\frac{w - \rho x}{\sqrt{1-\rho^2}}\right) \right] dx \\
&= 1 - \frac{1}{\pi} \cos^{-1}\rho - 4 \int_0^w \phi(x)\, \Phi\!\left(\frac{-w + \rho x}{\sqrt{1-\rho^2}}\right) dx
\end{aligned}$$

We need to show

$$g(\rho) = \int_0^w \phi(x)\, \Phi\!\left(\frac{\rho x}{\sqrt{1-\rho^2}}\right) dx + \int_0^{\infty} \phi(x) \left[ 1 - \Phi\!\left(\frac{w - \rho x}{\sqrt{1-\rho^2}}\right) \right] dx = \frac{1}{2} - \frac{1}{2\pi} \cos^{-1}\rho$$

Because

$$\begin{aligned}
g'(\rho) &= \int_0^w \phi(x)\, \phi\!\left(\frac{\rho x}{\sqrt{1-\rho^2}}\right) \frac{x}{(1-\rho^2)^{3/2}}\, dx + \int_0^{\infty} \phi(x)\, \phi\!\left(\frac{w - \rho x}{\sqrt{1-\rho^2}}\right) \frac{x - \rho w}{(1-\rho^2)^{3/2}}\, dx \\
&= \int_0^w \frac{1}{2\pi}\, e^{-\frac{x^2}{2(1-\rho^2)}}\, \frac{x}{(1-\rho^2)^{3/2}}\, dx + \int_0^{\infty} \frac{1}{2\pi}\, e^{-\frac{(x - \rho w)^2 + w^2 - w^2 \rho^2}{2(1-\rho^2)}}\, \frac{x - \rho w}{(1-\rho^2)^{3/2}}\, dx \\
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}} \left( 1 - e^{-\frac{w^2}{2(1-\rho^2)}} \right) + \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}}\, e^{-\frac{w^2}{2}}\, e^{-\frac{\rho^2 w^2}{2(1-\rho^2)}} \\
&= \frac{1}{2\pi} \frac{1}{(1-\rho^2)^{1/2}}
\end{aligned}$$

we know

$$g(\rho) = \int_0^{\rho} g'(\rho)\, d\rho + g(0) = \frac{1}{2\pi} \sin^{-1}\rho + \left( \Phi(w) - 1/2 \right)/2 + \left( 1 - \Phi(w) \right)/2 = \frac{1}{2\pi} \sin^{-1}\rho + \frac{1}{4} = \frac{1}{2} - \frac{1}{2\pi} \cos^{-1}\rho$$

Also,

$$\begin{aligned}
\frac{\partial P_{w,2}}{\partial \rho}
&= \frac{1}{\pi} \frac{1}{\sqrt{1-\rho^2}} - 4 \int_0^w \phi(x)\, \phi\!\left(\frac{-w + \rho x}{\sqrt{1-\rho^2}}\right) \frac{x - \rho w}{(1-\rho^2)^{3/2}}\, dx \\
&= \frac{1}{\pi} \frac{1}{\sqrt{1-\rho^2}} - \frac{4}{2\pi} \frac{1}{(1-\rho^2)^{1/2}}\, e^{-\frac{w^2}{2}} \left[ e^{-\frac{\rho^2 w^2}{2(1-\rho^2)}} - e^{-\frac{(w - \rho w)^2}{2(1-\rho^2)}} \right] \\
&= \frac{1}{\pi} \frac{1}{\sqrt{1-\rho^2}} - \frac{2}{\pi} \frac{1}{\sqrt{1-\rho^2}} \left[ e^{-\frac{w^2}{2(1-\rho^2)}} - e^{-\frac{w^2}{1+\rho}} \right]
\end{aligned}$$

Thus, combining the results, we obtain

$$Var(\hat{\rho}_{w,2}) = \frac{V_{w,2}}{k} + O\!\left(\frac{1}{k^2}\right)$$

where

$$V_{w,2} = \frac{\pi^2 (1-\rho^2)\, P_{w,2} (1 - P_{w,2})}{\left[ 1 - 2 e^{-\frac{w^2}{2(1-\rho^2)}} + 2 e^{-\frac{w^2}{1+\rho}} \right]^2}$$

This completes the proof.

References

[1] Ella Bingham and Heikki Mannila. Random projection in dimensionality reduction: Applications to image and text data. In KDD, pages 245–250, San Francisco, CA, 2001.

[2] Leon Bottou. http://leon.bottou.org/projects/sgd.

[3] Jeremy Buhler and Martin Tompa. Finding motifs using random projections. Journal of Computational Biology, 9(2):225–242, 2002.

[4] Moses S. Charikar. Similarity estimation techniques from rounding algorithms. In STOC, pages 380–388, Montreal, Quebec, Canada, 2002.

[5] Graham Cormode and S. Muthukrishnan. An improved data stream summary: the count-min sketch and its applications. Journal of Algorithms, 55(1):58–75, 2005.

[6] Sanjoy Dasgupta. Learning mixtures of gaussians. In FOCS, pages 634–644, New York, 1999.

[7] Sanjoy Dasgupta. Experiments with random projection. In UAI, pages 143–151, Stanford, CA, 2000.

[8] Mayur Datar, Nicole Immorlica, Piotr Indyk, and Vahab S. Mirrokni. Locality-sensitive hashing scheme based on p-stable distributions. In SCG, pages 253–262, Brooklyn, NY, 2004.

[9] Rong-En Fan, Kai-Wei Chang, Cho-Jui Hsieh, Xiang-Rui Wang, and Chih-Jen Lin. Liblinear: A library for large linear classification. Journal of Machine Learning Research, 9:1871–1874, 2008.

[10] Dmitriy Fradkin and David Madigan. Experiments with random projections for machine learning. In KDD, pages 517–522, Washington, DC, 2003.

[11] Yoav Freund, Sanjoy Dasgupta, Mayank Kabra, and Nakul Verma. Learning the structure of manifolds using random projections. In NIPS, Vancouver, BC, Canada, 2008.

[12] Jerome H. Friedman, F. Baskett, and L. Shustek. An algorithm for finding nearest neighbors. IEEE Transactions on Computers, 24:1000–1006, 1975.

[13] Michel X. Goemans and David P. Williamson. Improved approximation algorithms for maximum cut and satisfiability problems using semidefinite programming. Journal of ACM, 42(6):1115–1145, 1995.

[14] Piotr Indyk and Rajeev Motwani. Approximate nearest neighbors: Towards removing the curse of dimensionality. In STOC, pages 604–613, Dallas, TX, 1998.

[15] Thorsten Joachims. Training linear svms in linear time. In KDD, pages 217–226, Pittsburgh, PA, 2006.

[16] William B. Johnson and Joram Lindenstrauss. Extensions of Lipschitz mapping into Hilbert space. Contemporary Mathematics, 26:189–206, 1984.

[17] Ping Li. Very sparse stable random projections for dimension reduction in $l_\alpha$ ($0 < \alpha \le 2$) norm. In KDD, San Jose, CA, 2007.

[18] Ping Li, Trevor J. Hastie, and Kenneth W. Church. Improving random projections using marginal information. In COLT, pages 635–649, Pittsburgh, PA, 2006.

[19] Ping Li and Arnd Christian König. b-bit minwise hashing. In WWW, pages 671–680, Raleigh, NC, 2010.

[20] Ping Li, Art B Owen, and Cun-Hui Zhang. One permutation hashing. In NIPS, Lake Tahoe, NV, 2012.

[21] Ping Li, Gennady Samorodnitsky, and John Hopcroft. Sign stable projections, sign cauchy projections and chi-square kernels. Technical report, arXiv:1308.1009, 2013.

[22] Christos H. Papadimitriou, Prabhakar Raghavan, Hisao Tamaki, and Santosh Vempala. Latent semantic indexing: A probabilistic analysis. In PODS, pages 159–168, Seattle, WA, 1998.

[23] Shai Shalev-Shwartz, Yoram Singer, and Nathan Srebro. Pegasos: Primal estimated sub-gradient solver for svm. In ICML, pages 807–814, Corvallis, Oregon, 2007.

[24] Santosh Vempala. The Random Projection Method. American Mathematical Society, Providence, RI, 2004.

[25] Fei Wang and Ping Li. Efficient nonnegative matrix factorization with random projections. In SDM, 2010.

[26] Kilian Weinberger, Anirban Dasgupta, John Langford, Alex Smola, and Josh Attenberg. Feature hashing for large scale multitask learning. In ICML, pages 1113–1120, 2009.
