Processing stationary noise: model and parameter selection in variational methods

Additive or multiplicative stationary noise recently became an important issue in applied fields such as microscopy or satellite imaging. Relatively few works address the design of dedicated denoising methods compared to the usual white noise setting…

Authors: Jer^ome Fehrenbach, Pierre Weiss

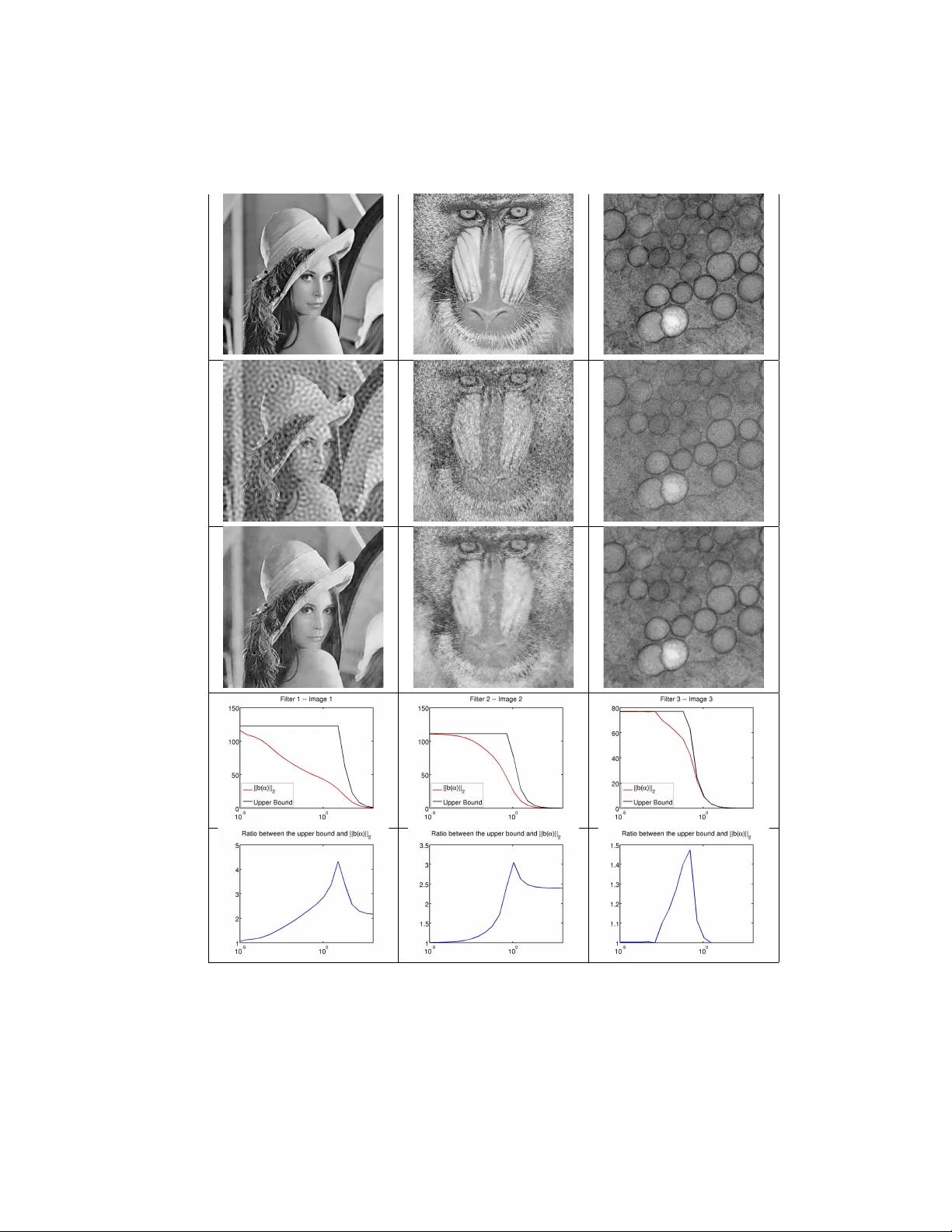

Pr ocessing st a tionar y noise: model and p arameter selection in v aria tional methods. J ´ erˆ ome F ehrenbac h ∗ Pierre W eiss † August 3, 2018 Abstract Additiv e or m ultiplicativ e stationary noise recen tly became an im- p ortan t issue in applied fields such as microscopy or satellite imaging. Relativ ely few w orks address the design of dedicated denoising metho ds compared to the usual white noise setting. W e recen tly prop osed a v aria- tional algorithm to tac kle this issue. In this pap er, we analyze this problem from a statistical point of view and provide deterministic properties of the solutions of the asso ciated v ariational problems. In the first part of this w ork, we demonstrate that in many practical problems, the noise can b e assimilated to a colored Gaussian noise. W e pro vide a quan titative mea- sure of the distance betw een a stationary process and the corresp onding Gaussian process. In the second part, we fo cus on the Gaussian setting and analyze denoising metho ds whic h consist of minimizing the sum of a total v ariation term and an l 2 data fidelity term. While the constrained form ulation of this problem allows to easily tune the parameters, the La- grangian formulation can be solved more efficiently since the problem is strongly con vex. Our second contribution consists in providing analytical v alues of the regularization parameter in order to approximately satisfy Morozo v’s discrepancy principle. Keyw ords Stationary noise, Berry-Esseen theorem, Morozo v principle, T o- tal v ariation, Image Decon v olution, Negative norm mo dels, Destriping, Conv ex analysis and optimization. 1 In tro duction In a recent pap er [8], a v ariational method that decomp oses an image into the sum of a piecewise smooth comp onent and a set of stationary processes w as ∗ IMT-UMR5219, Universit ´ e de T oulouse, CNRS, T oulouse, F rance ( jerome.fehrenbach@math.univ-toulouse.fr ) † IT A V-USR3505, Universit ´ e de T oulouse, CNRS, T oulouse, F rance ( pierre.weiss@itav-recherche.fr ) 1 for the remov al of stationary noise. 2 prop osed. This algorithm has a large n umber of applications suc h as decon- v olution or denoising when structur e d p atterns degrade the image conten ts. A t ypical example of application that received a considerable atten tion lately is destriping [5, 7, 8, 12, 16]. It w as also shown to generalize the negativ e norm mo dels [2, 14, 20, 26] in the discrete setting [9]. Figures 1, 3, 6 show examples of applications of this algorithm in an additive noise setting and Figure 2 shows an example with a multiplicativ e noise model. This algorithm is based on the hypothesis that the observed image u 0 can b e written as: u 0 = u + m X i =1 b i (1) where u denotes the original image and ( b i ) i ∈{ 1 , ··· ,m } denotes a set of realizations of indep endent sto c hastic pro cesses B i . These pro cesses are further assumed to b e stationary and read B i = ψ i ∗ Λ i where ψ i denotes a kno wn kernel and Λ i are i.i.d. ran dom vectors. The decomp osition algorithm can then b e deduced from a Ba yesian approach, leading to large scale con vex optimization problems of size m × n where n is the n um b er of pixels/vo xels in the image. This method is now used routinely in the con text of microscopy imaging. Its main weakness for a broader use lies in the difficulty to set its parameters adequately . One basically needs to input the filters ψ i and the marginals of eac h random vectors Λ i , which is uneasy ev en for imaging sp ecialists. Our aim in this pap er is to provide a set of mathematically founded rules to simplify the parameter selection and reduce computing times. W e do not tackle the problem of finding the filters ψ i (whic h is a problem similar to blind deconv olution), but fo cus on the choice of the marginals of Λ i . The outline of the pap er is as follo ws. Notation are describ ed in section 2.1. In section 2.2, we review the main principles motiv ating the decomposition algorithm. In section 3, we sho w that - from a statistical point of view and for man y applications - assuming that λ i is a Gaussian pro cess is nearly equiv alent to selecting other marginals. This has the double adv antage of simplifying the analysis of the mo del properties and reducing the computational complexity . In section 4, we show that when b i are dra wn from Gaussian pro cesses, parameter selection can b e p erformed in a deterministic w ay , by analyzing the primal-dual optimalit y conditions. W e also show that the prop ose d ideas allo ws to reduce the problem dimension from m × n to n v ariables, thus dividing the storage cost and computing times b y a factor roughly equal to m . The appendix 5 contains the pro ofs of the results stated in section 4. 2 Notation and con text 2.1 Notation W e consider discrete d -dimensional images u ∈ R n , where n = n 1 · n 2 · · · n d de- notes the pixels num b er. The pixels lo cations b elong to the set Ω = { 1 , · · · , n 1 }× · · · × { 1 , · · · , n d } . The pixel v alue of u at lo cation x ∈ Ω is denoted u ( x ) = for the remov al of stationary noise. 3 Figure 1: T op: full size images - Bottom: zo om on a small part. F rom left to right: Noisy image (16,5dB), denoised using the metho d prop osed in [8] (PSNR=32,3dB), original image. u ( x 1 , · · · x d ). Let u ∈ R n denote an image. The image u mean ∈ R n has all its comp onen ts equal to the mean of u . The standard l p -norms on R n are denoted k · k p . Discrete vector fields q = q 1 . . . q d ∈ R n × d are denoted by b old symbols. The isotropic l p -norms on vector fields are denoted k · k p and defined by: k q k p = k q q 2 1 + · · · + q 2 d k p . The discrete partial deriv ative in direction k is defined by ∂ k u ( · , x k , · ) = ( u ( · , x k + 1 , · ) − u ( · , x k , · ) if 1 ≤ x k < n k u ( · , 1 , · ) − u ( · , n k , · ) if x k = n k . Using p erio dic boundary conditions allows to rewrite partial deriv atives as cir- cular conv olutions: ∂ k u = d k ? u where d k is a finite difference filter. The discrete gradien t op erator in d -dimension is defined b y: ∇ = ∂ 1 ∂ 2 . . . ∂ d . The discrete isotropic total v ariation of u ∈ R n is defined by T V ( u ) = k∇ u k 1 . Let k · k α and k · k β denote norms on R n and R m resp ectiv ely and A : R n → R m for the remov al of stationary noise. 4 Figure 2: An example inv olving a multiplicativ e noise mo del. F rom left to righ t. Original image - Noisy image. It is obtained by multiplying each line of the original image b y a uniform random v ariable in [0 . 1 , 1]. SNR=10.6dB - Denoised using the metho d proposed in [8] on the logarithm of the noisy image. SNR=29.1dB - Ratio betw een the original image and the denoised image. The m ultiplicative factor is retriev ed accurately . denote a linear op erator. The sub ordinate op erator norm k A k α → β is defined as follo ws: k A k α → β = max k x k α ≤ 1 k Ax k β . (2) Let u and v b e t w o d -dimensional images. The p oint wise pro duct b etw een u and v is denoted u v and the p oint wise division is denoted u v . The conjugate of a num b er or a v ector a is denoted ¯ a . The transconjugate of a matrix M ∈ C m × n is denoted M ∗ . The canonical basis of R n is denoted ( e i ) i ∈{ 1 , ··· ,n } . The discrete F ourier basis of C n is denoted ( f i ) i ∈{ 1 , ··· ,n } . W e use the con ven tion that k f i k ∞ = 1 , ∀ i so that k f i k 2 = √ n (see e.g. [13]). In all the for the remov al of stationary noise. 5 Figure 3: Left: SPIM image of a zebrafish em bryo Tg.SMYH1:GFP Slo w m yosin Chain I sp ecific fib ers. Right: denoised image using VSNR. (Image credit: Julie Batut). pap er F = f ∗ 1 . . . f ∗ n denotes the d -dimensional discrete F ourier transform matrix. The inv erse F ourier transform is denoted F − 1 and satisfies F − 1 = F ∗ n . The discrete F ourier transform of u is denoted F u or ˆ u . It satisfies k ˆ u k 2 = √ n k u k 2 . The discrete symmetric of u is denoted ˜ u and defined by ˜ u = F − 1 ¯ ˆ u . The con volution pro duct b ewteen u and ψ is denoted u ? ψ and defined for any x ∈ X by: u ? ψ ( x ) = X y ∈ Ω u ( y ) ψ ( x − y ) (3) where p erio dic b oundary conditions are used. It satifies u ? ψ = F − 1 ˆ u ˆ ψ . (4) Since the discrete conv olution is a linear op erator, it can b e represented by a matrix. The conv olution matrix asso ciated to a kernel ψ is denoted in capital letters Ψ: Ψ u = u ? ψ . (5) The transp ose of a conv olution op erator with ψ is a conv olution operator with the symmetrized kernel: Ψ T u = ˜ ψ ? u . 2.2 Decomp osition algorithm The VSNR algorithm (V ariational Stationary Noise Remov al) is described in [8]. The starting p oint of our algorithm is the following image formation model: u 0 = u + m X i =1 λ i ? ψ i (6) for the remov al of stationary noise. 6 where u 0 ∈ R n is the observ ed image and u ∈ R n is the image to recov er. Each ψ i ∈ R n is a known filter and each λ i ∈ R n is the realization of a random v ector with i.i.d. en tries. W e assume that its densit y reads p ( λ i ) ∝ exp( − φ i ( λ i )) where φ i is a sep ar able function of kind φ i ( λ i ) = X x ∈ Ω ϕ i ( λ i ( x )) . (7) with ϕ i : R → R ∪ { + ∞} (t ypical examples are l p to the p norms). Note that h yp othesis (7) is a simple consequence of the i.i.d. hypothesis. Our aim is to recov er b oth the stationary comp onen ts b i = λ i ? ψ i and the image u . Assuming that the noise b = m X i =1 b i and the image are dra wn from indep enden t random vectors, the maximum a p osteriori (MAP) approach leads to the following optimization problem: Find ( λ ∗ , u ∗ ) ∈ arg max λ ∈ R n × m ,u ∈ R n p ( λ , u | u 0 ) . Ba yes’ rule allo ws to reformulate this problem as: Find λ ∗ ∈ arg min λ ∈ R n × m ,u ∈ R n − log p ( u 0 | λ , u ) − log p ( λ ) − log p ( u ) , where u = u 0 − P m i =1 λ i ? ψ i . Since we assumed indep endence of the λ i s, − log p ( λ ) = m X i =1 − log p ( λ i ) . If we further assume that p ( u ) ∝ exp( −k∇ u k 1 ), the optimization problem we aim at solving finally writes: Find λ ∈ Arg min λ ∈ R n × m ∇ u 0 − m X i =1 λ i ? ψ i ! 1 + m X i =1 φ i ( λ i ) . (8) This problem is con vex and can b e solved efficien tly using first order algo- rithms such as Cham b olle-Pock’s primal-dual method [6, 9]. The filters ψ i and the functions φ i are user defined and should be selected using prior kno wledge on the noise prop erties. Unfortunately , the c hoice of φ i pro ved to b e very com- plicated in applications. Even for the special case φ i ( · ) = α i 2 k · k 2 2 , α i is curren tly obtained b y trial and error and interesting v alues v ary in the range [10 − 8 , 10 10 ] dep ending on the filters ψ i and the noise level. It is thus essential to restrict the range of these parameters in order to ease the task of end-users. Problem (8) is a very large scale problem since t ypical 3D images contain from 10 8 to 10 9 v oxels. Most automatized parameter selection metho ds such as generalized cross v alidation [10] or generalized SURE [24] require to solv e sev eral instances of (8). This leads to excessive computational times in our setting. In for the remov al of stationary noise. 7 this pap er, w e propose to estimate the parameters α i according to Morozo v principle [15]. Con trarily to recent contributions [1, 25] whic h find solutions of the constrained problems by iteratively solving the unconstrained problem (8), our aim is to obtain an analytical approximate v alue of α i . This approach is motiv ated by the fact that in denoising applications, the users usually hav e a crude idea of the noise level, so that it makes no sense to reac h exactly a giv en noise level. Note that the constrained problem could b e solv ed directly b y using methods suc h as the ADMM [17, 23]. How ever, when φ i ( · ) = α i 2 k · k 2 2 , the Lagrangian formulation is strongly conv ex, while the constrained one is not, and efficient metho ds that conv erge in O 1 k 2 can b e devised in the strongly con vex setting [6, 28]. 3 Effectiv eness of the Gaussian mo del in the non Gaussian setting In this section we analyze the statistical prop erties of random pro cesses that can b e written as Λ ∗ ψ where Λ is a white noise pro cess. Our main result is that the stationary noise b i = λ i ? ψ i can b e assimilated to a Gaussian colored noise for many applications of in terest ev en if Λ is non Gaussian. The heuristic reason is that if conv olutions kernels with a large supp ort are considered, then man y pixels ha ve a significan t contribution to one pixel of the estimated noise comp onen t. Therefore, a central limit theorem implies that the sum of these con tributions can b e assimilated to a sum of Gaussian pro cesses. 3.1 Distance of stationary pro cesses to the Gaussian dis- tribution Our results are simple consequences of the Berry-Esseen theorem [4] that quan- tifies the distance b etw een a sum of independent random v ariables and a Gaus- sian. Theorem 1 (Berry-Esseen) . L et X 1 , X 2 , · · · , X n b e indep endent c enter e d r an- dom variables of finite varianc e σ 2 i and finite thir d or der moment ρ i = E ( | X i | 3 ) . L et S n = X 1 + X 2 + · · · + X n p σ 2 1 + σ 2 2 + · · · + σ 2 n . L et F n denote the cumulative distribution functions (c df ) of S n . L et Φ denote the c df of the standar d normal distribution. Then k F n − Φ k ∞ ≤ C 0 P n i =1 ρ i ( P n i =1 σ 2 i ) 3 / 2 (9) wher e C 0 ≤ 0 . 56 . for the remov al of stationary noise. 8 In our problem, we c onsider random vectors of kind: B = ψ ? Λ = ΨΛ , (10) so that B ( x ) = X y ∈ Ω Λ( x − y ) ψ ( y ) , where (Λ( x )) x ∈ Ω are i.i.d. random v ariables. Let us further assume that they are of finite second and third order momen t 1 . Denote σ 2 = E (Λ( y ) 2 ) < + ∞ and ρ = E ( | Λ( y ) | 3 ) < + ∞ . The mean of B is E ( B ) = 0 since con volution operators preserv e the set of vectors with zero mean. Moreov er its cov ariance matrix is C ov ( B ) = σ 2 Ψ T Ψ with Ψ T Ψ = F − 1 diag ( | ˆ ψ | 2 ) F whatever the distribution of Λ. Since Gaussian pro cesses are completely describ ed by their mean and co v ariance matrix, it suffices to prov e that any co ordinate B ( x ) is close to a Gaussian for the whole pro cess B to b e near Gaussian. The following results state that B is close to a Gaussian random vector whatever the law of Λ if the filter ψ satisfies a geometrical criterion discussed later. Prop osition 1. L et G denote the c df of B ( x ) s wher e s = k ψ k 2 . This c df is indep endent of x , mor e over: k G − Φ k ∞ ≤ 0 . 56 ρ σ 3 k ψ k 3 3 k ψ k 3 2 . (11) Pr o of. The indep endence w.r.t. x is a direct consequence of the stationarity of B . Bound (11) is a direct consequence of Berry-Esseen theorem 1. It suffices to notice that E ( | Λ( x − y ) ψ ( y ) | 2 ) = ψ ( y ) 2 σ 2 , E ( | Λ( x − y ) ψ ( y ) | 3 ) = | ψ ( y ) | 3 ρ for an y ( x , y ) ∈ Ω 2 and to apply theorem 1. Th us, if k ψ k 3 3 k ψ k 3 2 is sufficiently small, the distribution of B will b e near Gaussian. The following result clarifies this condition in an asymptotic regime. Prop osition 2. L et ψ : R d + → R denote a function. L et Ω n = [1 , n ] d ∩ Z d denote a Euclide an grid. L et s n = s X x ∈ Ω n ψ 2 ( x ) . If Λ( x ) is of finite se c ond and thir d or der moment and the se quenc e ( ψ ( x )) x ∈ Z d is uniformly b ounde d | ψ ( x ) | ≤ M < + ∞ , ∀ x ∈ Z d and has infinite varianc e lim n → + ∞ s n = + ∞ , then for al l x ∈ Ω n : B ( x ) s n ( D ) → N (0 , σ 2 ) . (12) Pr o of. Let us denote: f ( n ) = P x ∈ Ω n | ψ ( x ) 3 | P x ∈ Ω n ψ ( x ) 2 3 / 2 . (13) 1 This hypothesis is not completely necessary , but simplifies the exp osition. for the remov al of stationary noise. 9 W e hav e X x ∈ Ω n | ψ ( x ) | 3 ≤ X x ∈ Ω n k ψ k ∞ ψ ( x ) 2 ≤ M s 2 n . Th us: f ( n ) ≤ M s 2 n s 3 n = M s n . The right-hand side in (9) is f ( n ) and go es to 0 as n → + ∞ . Lindeb erg- F eller theorem could also b e used in this context and allow to av oid moment conditions. 3.2 Examples W e present differen t examples of kernels where the Theorem 1 applies. Example 1. We first c onsider a kernel that is the indic ator function of a ”lar ge” set, namely ψ ( x ) = 1 if x ∈ I , and # I = N . Then the upp er b ound in Equation (9) is C 0 / √ N . It b e c omes smal l when N b e c omes lar ge. Example 2. L et us study the c ase of kernels with a (slow enough) p ower de c ay: ψ ( x ) = | x | α , for some − d/ 2 < α < 0 . In this c ase, the quantity s n tends to infinity sinc e it is asymptotic to Z [1 ,n ] d | x | 2 α d x ∼ K Z n r =1 r d − 1 r 2 α dr ∼ K n d +2 α for some c onstant K . Ther efor e Pr op osition 2 applies. This r esult is stil l valid for α ≥ 0 . Example 3. We tr e at the c ase of an anisotr opic Gaussian filter ψ with axes aligne d with the c o or dinate axes. In this c ase the varianc e is finite and pr op osi- tion 2 do es not apply. However we c an give an explicit value of the upp er b ound in (11) , which ensur es that the pr o c ess is close fr om a Gaussian. L et us assume that ψ ( x ) = K e − P d i =1 x 2 i / 2 σ 2 i , wher e K is a normalizing c onstant and x = ( x 1 , x 2 , . . . , x d ) ∈ Z d . We pr ovide in this c ase an upp er b ound for f ( n ) in terms of ( σ i ) . F or the sake of simplicity we assume that K = 1 . X x ∈ Z d | ψ ( x ) 3 | = X ( n 1 ,...,n d ) ∈ Z d e − 3 P 1 ≤ i ≤ d n 2 i / 2 σ 2 i = d Y i =1 X n ∈ Z e − 3 n 2 / 2 σ 2 i ! = d Y i =1 1 + 2 X n> 0 e − 3 n 2 / 2 σ 2 i ! for the remov al of stationary noise. 10 and similarly X x ∈ Z d | ψ ( x ) 2 | = d Y i =1 1 + 2 X n> 0 e − n 2 /σ 2 i ! We use the fol lowing ine qualities 1 2 r π α − 1 ≤ Z + ∞ 1 e − αt 2 dt ≤ X n> 0 e − αn 2 ≤ Z + ∞ 0 e − αt 2 dt = 1 2 r π α to obtain max 1 , r π α − 1 ≤ 1 + 2 X n> 0 e − αn 2 ≤ 1 + r π α . It fol lows that lim n →∞ f ( n ) ≤ d Y i =1 1 + σ i p 2 π / 3 max (1 , σ i √ π − 1) 3 / 2 = d Y i =1 g ( σ i ) . Note that g ( σ ) = O + ∞ ( 1 √ σ ) . In other wor ds if the Gaussian kernel has sufficiently lar ge varianc es, the c onstant in the upp er b ound of (9) is smal l. Example 4. In this example, we il lustr ate the the or em on a pr actic al setting. L et us assume that Λ( x ) is a Bernoul li-uniform r andom variable in or der to mo del sp arse pr o c esses. With this mo del Λ( x ) = 0 with pr ob ability 1 − γ and takes a r andom value distribute d uniformly in [ − 1 , 1] with pr ob ability γ . Simple c alculation le ads to σ 2 = γ 3 and ρ = γ 4 so that e quation (11) gives: k G − Φ k ∞ ≤ 0 . 73 √ γ k ψ k 3 3 k ψ k 3 2 . (14) L et us define a 2D anisotr opic Gaussian filter as: ψ ( x 1 , x 2 ) = C exp − x 2 1 2 σ 2 1 − x 2 2 2 σ 2 2 (15) wher e C is a normalization c onstant. This filter is use d fr e quently in the mi- cr osc opy exp eriments we p erform and is t hus of p articular inter est. Figur e 4 shows pr actic al r e alizations of stationary pr o c esses define d as Λ ? ψ . Note that as σ 1 or γ incr e ase, the textur e gets similar to the Gaussian pr o c ess on the last r ow. T able 1 quantifies the pr oximity of the non Gaussian pr o c ess to the Gaus- sian one using pr op osition 1. The pr o c esses c an har d ly b e distiguishe d fr om a p er c eptual p oint of view when the right hand-side in (11) is less than 0 . 4 . for the remov al of stationary noise. 11 H H H H H γ σ 1 2 8 32 64 128 0.001 0.01 0.05 0.1 0.5 1 Gaussian pro cess Figure 4: The first six rows show stationnary pro cesses obtained by con volving an anisotropic Gaussian filter with Bernoulli uniform pro cesses for different v alues of γ and different v alues of σ 1 . The v alue of σ 2 = 2. The last row shows a Gaussian pro cess obtained b y con v olving Gaussian white noise with the same Gaussian filter. for the remov al of stationary noise. 12 H H H H H γ σ 1 2 8 32 64 128 0.001 1.00 1.00 1.00 1.00 1.00 0.01 1.00 1.00 0.98 0.82 0.69 0.05 0.88 0.62 0.44 0.37 0.31 0.1 0.62 0.44 0.31 0.26 0.22 0.5 0.28 0.20 0.14 0.12 0.10 1 0.20 0.14 0.10 0.08 0.07 T able 1: V alues of b ound (11) with resp ect to γ and σ 1 . 3.3 Numerical v alidation In the previous paragraphs we sho wed that in man y situations, stationary ran- dom pro cesses B of kind B = Λ ? ψ (16) where Λ denotes a white noise pro cess can b e assimilated to a coloured Gaussian noise. A Bay esian approac h th us indicates that problem (8) can be replaced b y the following approximation: Find λ ( α ) = arg min λ ∈ R n × m k∇ ( u 0 − m X i =1 ψ i ? λ i ) k 1 + m X i =1 α i 2 k λ i k 2 2 (17) for a particular choice of α i discussed later. This new problem has an attractive feature compared to (8): it is strongly conv ex in λ , which implies uniqueness of the minimizer and the existence of efficient minimization algorithms. Unfortu- nately , it is w ell known that Bay esian approaches can substan tially deviate from the prior mo dels that underly the MAP estimator [19]. The aim of this para- graph is to v alidate the prop osed appro ximation experimentally . W e consider a problem of stationary noise remov al. W e generate stationary pro cesses from the mo dels describ ed in Example 4 and Figure 4 for differen t v alues of γ . Bernoulli-uniform pro cesses are generated from functions φ i that are nonconv ex ( l 0 -norms) and in this case, problem (8) is a hard com binatorial problem. W e denoise the image using either a standard l 1 -norm relaxation: Find λ ∈ Arg min λ ∈ R n k∇ ( u 0 − ψ ? λ ) k 1 + α k λ k 1 , (18) or the l 2 -norm approximation suggested by the previous theorems: Find λ ∈ Arg min λ ∈ R n k∇ ( u 0 − ψ ? λ ) k 1 + α 2 k λ k 2 2 . (19) The optimal parameter α is estimated by dichotom y in order to maximize the SNR of the denoised image. As can b e seen in Figure 5 the l 1 -norm approx- imation provides b etter results for very sparse Bernoulli pro cesses and the l 2 appro ximation pro vides similar or better results when the Bernoulli pro cess gets denser. This confirms the results presented in section 3.1. for the remov al of stationary noise. 13 6.02dB 27.09dB 16.87dB 0.001 6.02dB 16.41dB 15.87dB 0.01 6.02dB 17.65dB 17.53dB 0.05 6.02dB 18.61dB 18.24dB 0.1 6.02dB 14.29dB 15.41dB 0.5 6.02dB 17.67dB 18.15dB 1 Figure 5: Denoising results with the resolution of an T V − l 1 or T V − l 2 problem. F rom top to bottom: increasing v alue of γ . Left: noisy images. Center: denoised using an l 1 prior. Righ t: denoised using an l 2 prior. for the remov al of stationary noise. 14 4 Primal-dual estimation in the l 2 -case Motiv ated by the results presented in the previous section, we fo cus on the l 1 − l 2 problem (17). Since the mapping λ 7→ P m i =1 α i 2 k λ i k 2 2 is stricly conv ex, this problem admits a unique minimizer. In this section, we aim at prop osing an automatic estimation of an adequate v alue of α = ( α 1 , · · · , α m ). A natural c hoice for the regularization parameter α (also known as Morozov’s discrepancy principle [15]) is to ensure that k ψ i ? λ i ( α ) k = k b i k (20) for a given norm k · k . In practice, k b i k is usually unknown, but the user usually has an idea of the noise lev el and can set k b i k ' η i k u 0 k where η i ∈ ]0 , 1[ denotes a noise fraction. In the rest of this section, we provide estimates for k b ( α ) k 2 , in the case m = 1 in paragraph 4.1 and in the general case in paragraph 4.2. When the parameters ( α i ) i ∈{ 1 ,...,m } are given, the filter with m filters is equiv alent to a related problem with 1 filter. The link is detailed in paragraph 4.3. Finally paragraph 4.4 shows ho w the prop osed results can b e used in a practical algorithm. The pro ofs are pro vided in the app endix. 4.1 Results for the case m = 1 filter W e first state our results in the particular case of m = 1 filter in order to clarify the exp osition. W e obtain several b ounds on the l 2 -norm of the noise k b ( α ) k 2 that are v alid for different v alues of α . The following theorem stated for m = 1 filter is a particular case of the results presen ted in paragraph 4.2. Theorem 2. L et α > 0 and denote h k = ψ ? ˜ ψ ? ˜ d k for k ∈ { 1 , . . . , d } . Then k b ( α ) k 2 ≤ √ n α max k ∈{ 1 ,...,d } k ˆ h k k ∞ . (21) If we further assume that ˆ ψ do es not vanish ther e exists a value ¯ α > 0 such that ∀ α ∈ (0 , ¯ α ] , b ( α ) = u 0 − u mean 0 . This theorem states that the norm of b is b ounded by a decaying function of α . Moreov er lim α → 0 + k b ( α ) k 2 = k u 0 − u mean 0 k 2 , and for sufficiently small v alues of α the solution is indep endent of α and known in closed form. Note that α 7→ k b ( α ) k 2 is not necessarily monotonically decreasing. The quantit y k u 0 − u mean 0 k 2 whic h is an upper b ound in a neigh b orho o d of 0 is not necessarily an upp er b ound for all α > 0. In our numerical tests, we never encountered a situation where k b ( α ) k 2 > k u 0 − u mean 0 k 2 . In the following, we make the abuse to refer to min( √ n α max k ∈{ 1 ,...,d } k ˆ h k k ∞ , k u 0 − u mean 0 k 2 ) as an “upp er bound”. As will b e observed in the numerical exp eriments in section 4.5, the b ound k b ( α ) k 2 ≤ √ n α max k ∈{ 1 ,...,d } k ˆ h k k ∞ pro vided in Theorem 2 is quite accurate and for the remov al of stationary noise. 15 sufficien t for supervised parameter selection. The following prop osition pro vides a low er b ound with the same asymptotic deca y rate in 1 α for k b ( α ) k 2 . Prop osition 3. Assume that ˆ ψ do es not vanish. L et b ( α ) = ψ ? λ ( α ) wher e λ ( α ) is the solution of (19) . L et P 1 denote the ortho gonal pr oje ctor on Ran(Ψ T ∇ T ) and b 1 = P 1 (Ψ − 1 u 0 ) . Then if α is sufficiently lar ge, k b ( α ) k 2 ≥ 1 α 1 k Ψ − 1 k 2 → 2 k b 1 k 2 k A + b 1 k ∞ . 4.2 Results for the general case m ≥ 1 filters In this paragraph, we state results that generalize Theorem 2 to the case of m ≥ 1 filters. Theorem 3. L et α = ( α 1 , · · · , α m ) denote p ositive weights. L et h i,k = ψ i ? ˜ ψ i ? ˜ d k for k ∈ { 1 , . . . , d } . Then k b i ( α ) k 2 ≤ √ n α i max i ∈{ 1 ,...,m } max k ∈{ 1 ,...,d } k ˆ h i,k k ∞ . (22) Theorem 4. Denote Ψ = (Ψ 1 , Ψ 2 , . . . , Ψ m ) ∈ R n × nm and assume that Ψ T Ψ has ful l r ank (this is e quivalent to the fact that ∀ ξ , ∃ i ∈ { 1 , . . . , m } , ˆ ψ i ( ξ ) 6 = 0 ). L et ˆ λ 0 ( α ) = ( ˆ λ 0 1 , . . . , ˆ λ 0 m ) b e define d by: ˆ λ 0 i ( α )( ξ ) = 0 if ξ = 0 , ¯ ˆ ψ i ( ξ ) ˆ u 0 ( ξ ) α i P m j =1 | ˆ ψ j ( ξ ) | 2 α j otherwise . (23) Then ther e exists a value ¯ α > 0 such that for al l α ∈ ]0 , ¯ α ] m the solution λ ( α ) of pr oblem (17) is: λ ( α ) = λ 0 ( α ) . (24) Theorems 3 and 4 generalize Theorem 2. In practice, w e observed that the ratio √ n α i max i ∈{ 1 ,...,m } max k ∈{ 1 ,...,d } k ˆ h i,k k ∞ k b i ( α ) k 2 do es not exceed limited v alues of the order of 5 (see the b ottom row of Figure 6). This gives an idea of the sharpness of (22). The b ound (22) can thus b e used to provide the user warm start parameters α i . This idea is detailed in the algorithm presented in section 4.4. 4.3 Equiv alence with a single filter mo del In section 3, w e show ed that the follo wing image formation model is rich enough for many applications of interest: u 0 = u + m X i =1 λ i ? ψ i (25) for the remov al of stationary noise. 16 where λ i is the realization of a Gaussian r andom ve ctor of distribution N (0 , σ 2 i I ). Let b = P m i =1 λ i ? ψ i . An imp ortan t observ ation is that the previous mo del is equiv alent to the follo wing: u 0 = u + λ ? ψ , (26) where λ is the realization of a Gaussian random vector N (0 , σ 2 I ) and σ and ψ satisfy: σ 2 | ˆ ψ ( χ ) | 2 = m X i =1 σ 2 i | ˆ ψ i ( χ ) | 2 , ∀ χ. (27) This condition ensures that b oth noises hav e the same co v ariance matrix E ( B B T ) where B is defined in (10). In what follo ws, we set α = 1 σ 2 and α i = 1 σ 2 i . The abov e remark has a pleasan t consequence: problems (28) and (29) b elow are equiv alent from a Bay esian p oint of view if only the noise comp onen t b = P m i =1 λ i ? ψ i and the denoised image u are sought for. min λ ∈ R n × m k∇ ( u 0 − m X i =1 λ i ? ψ i ) k 1 + m X i =1 α i 2 k λ i k 2 2 . (28) min λ ∈ R n k∇ ( u 0 − λ ? ψ ) k 1 + α 2 k λ k 2 2 . (29) Hence the optimization can b e performed on R n instead of R n × m . The follo wing result states that this simplification is also justified form a deterministic point of view. Theorem 5. L et λ i ( α ) denote the minimizer of (28) and λ ( α ) denote the min- imizer of (29) . L et b i ( α ) = λ i ( α ) ? ψ i and b ( α ) = λ ( α ) ? ψ . If c ondition (27) is satisfie d, the fol lowing e quality holds: m X i =1 b i ( α ) = b ( α ) . (30) Mor e over, the noise c omp onents b i ( α ) c an b e r etrieve d fr om b ( α ) using the fol- lowing formula: ˆ λ i ( ξ ) = ¯ ˆ ψ i ( ξ ) ˆ b ( ξ ) α i P m j =1 | ˆ ψ j ( ξ ) | 2 α j if P m j =1 | ˆ ψ j ( ξ ) | 2 6 = 0 0 otherwise . (31) In practice, this theorem allows to divide the computing times and memory requiremen ts by a factor appro ximately equal to m . for the remov al of stationary noise. 17 4.4 Algorithm The following algorithm summarizes how the results presented in this pap er allo w to design an effective sup ervised parameter estimation. Algorithm 1: Effective supervised algorithm. Input : u 0 ∈ R n : noisy image. ( ψ i ) i ∈{ 1 ,...,m } ∈ R n × m : a set of filters. ( η 1 , . . . , η m ) ∈ [0 , 1] m : noise levels. Output : u ∈ R n : denoised image ( b i ) i ∈{ 1 ,...,m } ∈ R n × m : noise comp onents (satisfying k b i k 2 ' η i k u 0 k 2 ). b egin Compute α i = √ n k ˆ h i k ∞ k u 0 k 2 η i (see Prop osition 4). Compute ˆ ψ = q P m i =1 k ˆ ψ i k 2 α i . Find λ ∈ arg min λ ∈ R n k∇ ( u 0 − λ ? ψ ) k 1 + 1 2 k λ k 2 2 (see [8]). Compute u = u 0 − λ ? ψ . Compute b = λ ? ψ . Compute b i = λ i ? ψ i using Theorem 5. 4.5 Numerical exp eriments The ob jectiv e of this section is to v alidate Theorem 3 exp erimentally and to c heck that the upp er b ound in the right-hand side of equation (22) is not to o coarse. W e compute the minimizers of (17) using an iterative algorithm for v arious filters, v arious images and v arious v alues of α . Then we compare the v alue k b ( α ) k 2 with min( √ n k ˆ h i k ∞ α i , k u 0 − u mean 0 k 2 ). As stated in paragraph 4.1, this quantit y is not strictly sp eaking an upp er-b ound but we could not find examples of practical in terest where k b ( α ) k 2 ≥ min( √ n k ˆ h i k ∞ α i , k u 0 − u mean 0 k 2 ). As can b e seen in the fourth and fifth row of Figure 6, the upp er-b ound and the true v alue follow a similar curv e. The fifth row shows the ratio b etw een these v alues. F or the considered filters, the upp er b ound deviates at most from a factor 4 . 5 from the true v alue. This shows that the upp er-b ound (22) can provide a go o d hin t on ho w to c ho ose a correct v alue of the regularization parameter. The user can then refine this b ound easily to get a visually satisfactory result. 5 App endix In this section we pro vide detailed proofs of the results presen ted in section 4. 5.1 Pro of of Theorem 3 Theorem 3 is a direct consequence of Lemma 1 and prop osition 4 b elow. for the remov al of stationary noise. 18 Figure 6: Comparison of the upp er b ound in equation (22) with k b ( α ) k 2 . First ro w: original image. 2nd row: noisy image. 3rd row: denoised using the pro- p osed algorithm. 4th row: comparison of the upp er b ound (22) with k b ( α ) k 2 . Last row: ratio b etw een the upp er bound and the true v alue of k b ( α ) k 2 . for the remov al of stationary noise. 19 Lemma 1. L et k · k N denote a norm on R n . The fol lowing ine quality holds: k ψ i ? λ i ( α ) k N ≤ 1 α i k Ψ i Ψ T i ∇ T k ∞ → N . (32) Pr o of. Problem (17) can b e recast as the follo wing saddle-p oint problem: min λ ∈ R n × m max q ∈ R n × d , k q k ∞ ≤ 1 h∇ ( u 0 − m X i =1 λ i ? ψ i ) , q i + m X i =1 α i 2 k λ i k 2 2 . The dual problem obtained using F enc hel-Ro ck afellar dualit y [21] reads: max q ∈ R n × d , k q k ∞ ≤ 1 min λ ∈ R n × m h∇ ( u 0 − m X i =1 λ i ? ψ i ) , q i + m X i =1 α i 2 k λ i k 2 2 . (33) Let q ( α ) denote the solution of the dual problem (33). The primal-dual optimalit y conditions are: λ i ( α ) = − Ψ T i ∇ T q ( α ) α i (34) and q ( α ) = ∇ ( P m i =1 ψ i ? λ i ( α ) − u 0 ) |∇ ( P m i =1 ψ i ? λ i ( α ) − u 0 ) | . (35) The last equalit y holds only formally since ∇ ( P m i =1 ψ i ? λ i ( α ) − u 0 ) may v anish at some lo cations. It means that q represents the normal to the level curves of the denoised image u 0 − P m i =1 ψ i ? λ i . Using (34), w e obtain ψ i ? λ i ( α ) = − 1 α i Ψ i Ψ T i ∇ T q ( α ). Moreov er, k q ( α ) k ∞ ≤ 1. It then suffices to use the norm op erator definition (2) to obtain inequality (32). In order to use inequality (32) for practical purp oses, one needs to estimate upp er b ounds for k · k ∞ ,N . Unfortunately , it is kno wn to b e a hard mathematical problem as shown in [11, 22]. The sp ecial case N = 2, which corresp onds to a Gaussian noise assumption, can b e treated analytically: Prop osition 4. L et h i = h i, 1 . . . h i,d with h i,k = ψ i ? ˜ ψ i ? ˜ d k . Then: k Ψ i Ψ T i ∇ T k ∞ → 2 = √ n k ˆ h i k ∞ = √ n max k ∈{ 1 ,...,d } k ˆ h i,k k ∞ . (36) for the remov al of stationary noise. 20 Pr o of. First remark that: k Ψ i Ψ T i ∇ T k ∞ , 2 = max k q k ∞ ≤ 1 k d X k =1 h i,k ? q k k 2 ≤ max k q k 2 ≤ √ n k d X k =1 h i,k ? q k k 2 ≤ √ n max P d k =1 k ˆ q k k 2 2 ≤ 1 k d X k =1 ˆ h i,k ˆ q k k 2 = √ n k ˆ h i k ∞ . In order to obtain the reverse inequalit y , let us define Q k = { q ∈ R n × d , q k ∈ { f 1 , · · · , f n } and q i = 0 , i ∈ { 1 , · · · , d }\{ k }} and the F ourier transform of this set which is ˆ Q k = { ˆ q ∈ C n × d , ˆ q k ∈ { ne 1 , · · · , ne n } and ˆ q i = 0 , i ∈ { 1 , · · · , d }\{ k }} . Let us denote Q = ∪ d k =1 Q k and ˆ Q = ∪ d k =1 ˆ Q k . Th us we obtain: k Ψ i Ψ T i ∇ T k ∞ , 2 = max k q k ∞ ≤ 1 k d X k =1 h i,k ? q k k 2 ≥ max q ∈Q k d X k =1 h i,k ? q k k 2 = max ˆ q ∈ ˆ Q k P d k =1 ˆ h i,k ˆ q k k 2 √ n = √ n k ˆ h i k ∞ whic h ends the pro of. 5.2 Pro of of Theorem 4 Denote Ψ = (Ψ 1 , Ψ 2 , . . . , Ψ m ) ∈ R n × nm and assume that Ψ T Ψ has full rank. This condition ensures the existence of λ satisfying P m i =1 λ i ? ψ i = u 0 − u mean 0 , where u mean 0 denotes the mean of u 0 . Prop osition 5. L et λ 0 ( α ) denote the solution of the fol lowing pr oblem λ 0 ( α ) = arg min P m i =1 α i 2 k λ i k 2 2 λ ∈ R n × m P m i =1 λ i ? ψ i = u 0 − u mean 0 . (37) for the remov al of stationary noise. 21 Then the ve ctor ˆ λ 0 ( α ) = ( ˆ λ 0 1 , . . . , ˆ λ 0 m ) is e qual to: ˆ λ 0 i ( ξ ) = 0 if ξ = 0 ¯ ˆ ψ i ( ξ ) ˆ u 0 ( ξ ) α i P m j =1 | ˆ ψ j ( ξ ) | 2 α j otherwise . (38) Pr o of. First notice that the full rank hypothesis on Ψ T Ψ is equiv alent to as- suming that ∀ ξ , ∃ i ∈ { 1 , . . . , m } , ˆ ψ i ( ξ ) 6 = 0 since Ψ i = F − 1 diag( ˆ ψ i ) F . Then: λ 0 ( α ) = arg min P m i =1 α i 2 k λ i k 2 2 λ ∈ R n × m P m i =1 λ i ? ψ i = u 0 − u mean 0 = arg min P m i =1 α i 2 k ˆ λ i k 2 2 λ ∈ R n × m P m i =1 ˆ λ i ˆ ψ i = \ u 0 − u mean 0 . This problem can b e decomp osed as n indep endent optimization problems of size m . If ξ = 0, it remains to observe that \ u 0 − u mean 0 (0) = 0 since u 0 − u mean 0 has zero mean. F or ξ 6 = 0, this amounts to solve the m dimensional quadratic problem: arg min ˆ λ ( ξ ) ∈ C m m X i =1 α i 2 | ˆ λ i ( ξ ) | 2 2 suc h that m X i =1 ˆ ψ i ( ξ ) ˆ λ i ( ξ ) = ˆ u 0 ( ξ ) . (39) It is straightforw ard to derive the solution (38) analytically . Lemma 2. If ˆ ψ i ( ξ ) = 0 then ˆ λ 0 i ( α )( ξ ) = 0 and if ˆ ψ i ( ξ ) 6 = 0 then | ˆ λ 0 i ( α )( ξ ) | ≤ ˆ u 0 ( ξ ) ˆ ψ i ( ξ ) . Ther efor e, for every α , k λ 0 i ( α ) k 2 ≤ k ˆ u 0 ˆ ψ i k 2 (with the c onvention to r eplac e by 0 the terms wher e the denominator vanishes). Pr o of. It is a direct consequence of Equation (38). Pr o of. of Theorem 4 Let F α ( λ ) = G ( λ ) + P m i =1 α i 2 k λ i k 2 2 with G ( λ ) = k ( ∇ (Ψ λ − u 0 ) k 1 . The ob jective is to prov e that ∂ F α ( λ 0 ( α )) 3 0 for sufficiently small α . Denote C = { β 1 R n , β ∈ R } the space of constant images. Since Ker( ∇ ) = C and Ψ λ 0 ( α ) − u 0 ∈ C , ∇ (Ψ λ 0 − u 0 ) = 0. Standard results of con vex analysis lead to ∂ G ( λ 0 ( α )) = Ψ T ∇ T ∂ k·k 1 (0) = Ψ T ∇ T Q where Q = { q ∈ R n × d , k q ( x ) k 2 ≤ 1 , ∀ x ∈ Ω } is the unit ball asso ciated to the dual norm k · k ∗ 1 . Since Ran( ∇ T ) = Ker( ∇ ) ⊥ w e deduce Ran( ∇ T ) = C ⊥ is the for the remov al of stationary noise. 22 set of images with zero mean. Therefore, since Q has non-empty in terior, there exists γ > 0 such that ∇ T Q ⊃ B (0 , γ ) ∩ C ⊥ where B (0 , γ ) denotes a Euclidean ball of radius γ . Therefore ( ∂ F α ( λ 0 ( α ))) i = ( ∂ G ( λ 0 ( α ))) i + α i λ 0 i ( α ) = (Ψ T ∇ T Q ) i + α i λ 0 i ( α ) ⊃ (Ψ T ( B (0 , γ ) ∩ C ⊥ )) i + α i λ 0 i ( α ) . Note that Ψ T ( B (0 , γ ) ∩ C ⊥ ) = Ψ T 1 ( B (0 , γ ) ∩ C ⊥ ) × . . . × Ψ T m ( B (0 , γ ) ∩ C ⊥ ) . Since conv olution op erators preserve C ⊥ w e obtain: Ψ T i ( B (0 , γ ) ∩ C ⊥ ) ⊃ B (0 , γ i ) ∩ Ran(Ψ i ) ∩ C ⊥ for some γ i > 0 . No w, it remains to remark that proposition 5 ensures λ 0 ( α ) ∈ (Ran(Ψ 1 ) ∩ C ⊥ ) × . . . × (Ran(Ψ m ) ∩ C ⊥ ) . Therefore for α i ≤ γ i k ˆ u 0 ˆ ψ i k 2 ( ∂ F α ( λ 0 )) i ⊃ ( B (0 , γ i ) ∩ Ran(Ψ i ) ∩ C ⊥ ) + α i λ 0 i ( α ) 3 0 . In view of Lemma 2 it suffices to set ¯ α = min i ∈{ 1 ,...,m } γ i k ˆ u 0 ˆ ψ i k 2 to conclude the pro of. 5.3 Pro of of prop osition 3 W e now concen trate on problem (29) in the case of m = 1 filter and provide a lo wer-bound on k b ( α ) k 2 . W e assume that Ψ in inv ertible, meaning that ˆ ψ do es not v anish. The dual problem of min λ ∈ R n k∇ ( u 0 − λ ? ψ ) k 1 + α 2 k λ k 2 2 (40) is max q ∈ R n × d , k q k ∞ ≤ 1 h∇ u 0 , q i − 1 2 α k Ψ T ∇ T q k 2 2 . (41) The solution λ ( α ) of (40) can b e deduced from the solution q ( α ) of (41) by using the primal-dual relationship λ ( α ) = − 1 α Ψ T ∇ T q ( α ) . (42) for the remov al of stationary noise. 23 W e can write: arg max q ∈ R n × d , k q k ∞ ≤ 1 h∇ u 0 , q i − 1 2 α k Ψ T ∇ T q k 2 2 (43) = arg min q ∈ R n × d , k q k ∞ ≤ 1 1 2 k Ψ T ∇ T q − α Ψ − 1 u 0 k 2 2 . (44) Let P 1 denote the orthogonal pro jector on Ran(Ψ T ∇ T ) and P 2 denote the orthogonal pro jector on Ran(Ψ T ∇ T ) ⊥ . Using these op erators, w e can write α Ψ − 1 u 0 = αb 1 + αb 2 where b 1 = P 1 Ψ − 1 u 0 and b 2 = P 2 Ψ − 1 u 0 . Problem (44) b ecomes: q ( α ) = arg min q ∈ R n × d , k q k ∞ ≤ 1 1 2 k Ψ T ∇ T q − αb 1 k 2 2 . Let us denote A = Ψ T ∇ T and q 0 ( α ) = A + b 1 k A + b 1 k ∞ . Since b 1 ∈ Ran( A ), A q 0 ( α ) = b 1 k A + b 1 k ∞ . Th us as long as k A + b 1 k ∞ ≥ 1 α : |k A q ( α ) k − α k b 1 k 2 | ≤ k A q ( α ) − αb 1 k = min k q k ∞ ≤ 1 k A q − αb 1 k 2 ≤ k A q 0 ( α ) − αb 1 k 2 = k b 1 k A + b 1 k ∞ − αb 1 k 2 = α − 1 k A + b 1 k ∞ k b 1 k 2 . Since A q ( α ) is a pro jection of αb 1 on a conv ex set that contains the origin, α k b 1 k 2 ≥ k A q ( α ) k 2 and we get: α k b 1 k 2 − k A q ( α ) k 2 ≤ α − 1 k A + b 1 k ∞ k b 1 k 2 whic h is equiv alen t to k A q ( α ) k 2 ≥ b 1 k A + b 1 k ∞ . Since λ ( α ) = − 1 α A q ( α ) we get: k λ ( α ) k 2 ≥ 1 α k b 1 k 2 k A + b 1 k ∞ and since λ = Ψ − 1 b we obtain || b ( α ) || 2 ≥ 1 α k Ψ − 1 k − 1 2 → 2 k b 1 k 2 k A + b 1 k ∞ . for the remov al of stationary noise. 24 5.4 Pro of of Theorem 5 Theorem 5 is a simple consequence of a more general result describ ed b elow. Let F b e a conv ex low er semi-contin uous (l.s.c.) function and Ψ : R n → R n , Ψ i : R n → R n , i = 1 . . . m denote linear operators. Define: ( λ i ) 1 ≤ i ≤ m = arg min ( λ i ) 1 ≤ i ≤ m ∈ ( R n ) m F m X i =1 Ψ i λ i ! + 1 2 m X i =1 k λ i k 2 , ( P 1 ) and λ = arg min λ ∈ R n F (Ψ λ ) + 1 2 k λ k 2 . ( P 2 ) Prop osition 6. If the op er ators Ψ and (Ψ i ) i =1 ...m satisfy the r elation ΨΨ ∗ = m X i =1 Ψ i Ψ ∗ i , then the solutions ( λ i ) 1 ≤ i ≤ m of ( P 1 ) and λ of ( P 2 ) ar e r elate d by Ψ λ = m X i =1 Ψ i λ i . Pr o of. W e define Ψ : R n × m → R n b y Ψ = (Ψ 1 , Ψ 2 , . . . , Ψ m ), so that for λ = λ 1 λ 2 · · · λ m , Ψ λ = P m i =1 Ψ i λ i . The optimality condition of P 1 reads: Ψ ∗ ∂ F ( Ψ ¯ λ ) + m X i =1 ¯ λ i 3 0 , and the optimality condition of P 2 reads Ψ ∗ ∂ F (Ψ ¯ λ ) + ¯ λ 3 0 . The minization problem P 2 admits a unique minimizer denoted λ . By hypothesis ΨΨ ∗ = ΨΨ ∗ , hence Ran Ψ = RanΨ and there exists λ 0 suc h that Ψ λ = Ψ λ 0 . The optimality condition of P 2 implies that 0 ∈ ΨΨ ∗ ∂ F (Ψ λ ) + Ψ λ, hence 0 ∈ ΨΨ ∗ ∂ F ( Ψ λ 0 ) + Ψ λ 0 = Ψ ( Ψ ∗ ∂ F ( Ψ λ 0 ) + λ 0 ) . for the remov al of stationary noise. 25 This prov es that ev ery vector λ 0 ∈ Ψ ∗ ∂ F ( Ψ λ 0 ) + λ 0 b elongs to Ker Ψ . If we c ho ose such a λ 0 and set λ = λ 0 − λ 0 w e hav e Ψ ∗ ∂ F ( Ψ λ ) + λ = Ψ ∗ ∂ F ( Ψ λ 0 ) + λ 0 − λ 0 3 0 . This implies that λ is the minimizer of P 1 and we hav e Ψ λ = Ψ ( λ 0 − λ 0 ) = Ψ λ 0 = Ψ λ, whic h ends the pro of. Let us now turn to the proof of Theorem 5. Pr o of. T o obtain (30), it suffices to make the change of v ariable λ 0 i = λ i √ α i in problem ( P 1 ) and to apply proposition (6) together with condition (27). T o ob- tain (31), it remains to observe that since P m k =1 b i ( α ) = b ( α ), the determination of λ i b oils down to the follo wing quadratic problem: ( λ i ( α )) i ∈{ 1 ,...,m } = arg min P m i =1 λ i ?ψ i = b ( α ) m X i =1 α i 2 k λ i k 2 2 = arg min P m i =1 ˆ λ i ˆ ψ i = ˆ b ( α ) m X i =1 α i 2 k ˆ λ i k 2 2 . The solution of this problem can b e obtained analytically b y deriving its opti- malit y conditions. It leads to equation (31). Conclusion This pap er focussed on the problem of stationary noise remo v al using v ariational metho ds. In the first part, we sho wed that assuming the noise to b e Gaussian is reasonable under conditions that are met in many applications suc h a destriping. In the second part we thus concentrated on v ariational problems that consist of minimizing l 1 − l 2 functionals. W e derived upp er and low er b ounds on the l 2 -norm of the solutions of these functionals and sho wed that they can be used for simplifying the task of parameter selection. W e also provided a n umerical tric k that allo ws to drastically reduce the computing times for cases where the noise is describ ed as a sum of stationary pro cesses. Overall this work allows to strongly reduce the computing times, to ease the parameter selection and to mak e our algorithms robust to differen t conditions. As a persp ective, let us notice that the low er b ound proposed in proposition (3) is coarse and it would b e interesting to obtain tighter results highlighting wh y the upp er b ound is near tigh t in practice. W e also plan to study the problem of deterministic parameter selection in a more general setting suc h as l p − l q functionals. for the remov al of stationary noise. 26 Ac knowledgmen ts This work was partially supp orted by ANR SPH-IM-3D (ANR-12-BSV5-0008). References [1] A. Ara vkin, Y. Burke and M. Friedlander V ariational pr op erties of value functions , T o app ear in SIAM Journal on optimization, 2013. [2] J.F. Aujol and A. Chambolle , Dual norms and image de c omp osition mo dels , International Journal of Computer Vision, 63, 1, 85–104, 2005 [3] F. Bauss, M. Nikolo v a and G. Steidl , F ul ly smo othe d l1-TV mo dels: Bounds for the minimizers and p ar ameter choic e , Journal of Mathematical Imaging and Vision, online F eb. 2013 [4] P. Billingsley , Conver genc e of pr ob ability me asur es , Wiley-Interscience, v ol 493, 2009. [5] H. Carf ant an and J. Idier , Statistic al line ar destriping of satel lite- b ase d pushbr o om-typ e images , IEEE T ransactions on Geoscience and Re- mote Sensing, 48, 4, 1860–1871, 2010. [6] A. Chambolle and T. Pock , A first-or der primal-dual algorithm for c onvex pr oblems with applic ations to imaging , Journal of Mathematical Imaging and Vision, 40, 1, 120–145, 2011. [7] S. Chen and J.-L. Pellequer , DeStrip e: fr e quency-b ase d algorithm for r emoving strip e noises fr om AFM images , BMC structural biology , 11, 1, 7, 2011. [8] J. Fehrenbach, P. Weiss and C. Lorenzo , V ariational algorithms to r emove stationary noise. Applic ation to micr osc opy imaging , IEEE Image Pro cessing, 21, 10, 4420–4430, 2012. [9] J. Fehrenbach, P. Weiss and C. Lorenzo , V ariational algorithms to r emove strip es: a gener alization of the ne gative norm mo dels , J. F ehren- bac h, P . W eiss and C. Lorenzo, Proc. ICPRAM, 2012. [10] G. Golub, M. Hea th, and G. W ahba , Gener alize d cr oss-validation as a metho d for cho osing a go o d ridge p ar ameter , T ec hnometrics, 21, 2, 215–223, 1979. [11] J. Hendrickx and A. Olshevsky , Matrix p-norms ar e NP-har d to ap- pr oximate if p 6 = 1 , 2 , ∞ , SIAM Journal on Matrix Analysis and Applica- tions, 31, 5, 2802–2812, 2010. [12] U. Leischner, A. Schierloh, W. Zieglg ¨ ansberger and H.-U. Dodt , F ormalin-induc e d fluor esc enc e r eve als c el l shap e and morpholo gy in biolo gic al tissue samples , PloS one, 5, 4, 2010. for the remov al of stationary noise. 27 [13] S. Malla t , A wavelet tour of signal pr o c essing , Academic Press, 1999. [14] Y. Meyer , Oscil lating p at terns in image pr o c essing and nonline ar evolu- tion e quations: the fifte enth De an Jac queline B. L ewis memorial le ctur es , Amer Mathematical So ciety , 2001. [15] V. Morozo v , On the solution of functional e quations by the metho d of r e gularization , Soviet Math. Dokl, 7, 1, 414–417, 1966. [16] B. M ¨ unch, P. Tr tik, F. Marone, and M. St amp anoni , Strip e and ring artifact r emoval with c ombine d wavelet-F ourier filtering , Opt. Express, 17, 10, 8567–8591, 2009. [17] M. Ng, P. Weiss and X. Yuan , Solving c onstr aine d total-variation image r estor ation and r e c onstruction pr oblems via alternating dir e ction metho ds , SIAM journal on Scientific Computing, 32, 5, 2710–2736, 2010. [18] M. Nikolo v a , A variational appr o ach to r emove outliers and impulse noise , Journal of Mathematical Imaging and Vision, 20, 1-2, 99–120, 2004. [19] M. Nik olov a Mo del distortions in Bayesian MAP r e c onstruction , Inv erse Problems and Imaging, 1, 2, 2007. [20] S. Osher, A. Sol ´ e and L. Vese , Image de c omp osition and r estor ation using total variation minimization and the H-1 norm , SIAM Multiscale Mo deling & Simulation, 1, 3, 349–370, 2003. [21] R.T. Rockafellar , Convex analysis , V ol. 28, Princeton univ ersity press, 1996. [22] J. Rohn , Computing the norm k A k ∞→ 1 norm is NP-har d , Linear and Multilinear Algebra, 47, 3, 195–204, 2000, T a ylor & F rancis. [23] T. Teuber, G. Steidl and R. Chan Minimization and p ar ameter es- timation for seminorm r e gularization mo dels with I-diver genc e c onstr aints , In verse Problems, 29, 3, 2013. [24] S. V aiter, C. Deledalle, G. Peyr ´ e, J. F adili and C. Dossal , L o c al Behavior of Sp arse Analysis R e gularization: Applic ations to Risk Estima- tion , Applied and Computational Harmonic Analysis, 2012. [25] E. v an den Berg, M.P. Friedlander , Sp arse optimization with le ast- squar es c onstr aints , SIAM Journal on Optimization, 21, 4, 1201–1229, 2011. [26] L. Vese and S. Osher , Mo deling textur es with total variation minimiza- tion and oscil lating p atterns in image pr o c essing , Journal of Scien tific Com- puting, 19, 1, 553–572, 2003 [27] J. Y ang, Y. Zhang, W. Yin , An efficient TVL1 algorithm for deblur- ring multichannel images c orrupte d by impulsive noise , SIAM Journal on Scien tific Computing, 31, 4, 2842–2865, 2009. for the remov al of stationary noise. 28 [28] P. Weiss, L. Blanc-F ´ eraud and G. A uber t , Efficient schemes for total variation minimization under c onstr aints in image pr o c essing , SIAM journal on Scientific Computing, 31, 3, 2047–2080, 2009.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment