Application of three graph Laplacian based semi-supervised learning methods to protein function prediction problem

Protein function prediction is the important problem in modern biology. In this paper, the un-normalized, symmetric normalized, and random walk graph Laplacian based semi-supervised learning methods will be applied to the integrated network combined …

Authors: Loc Tran

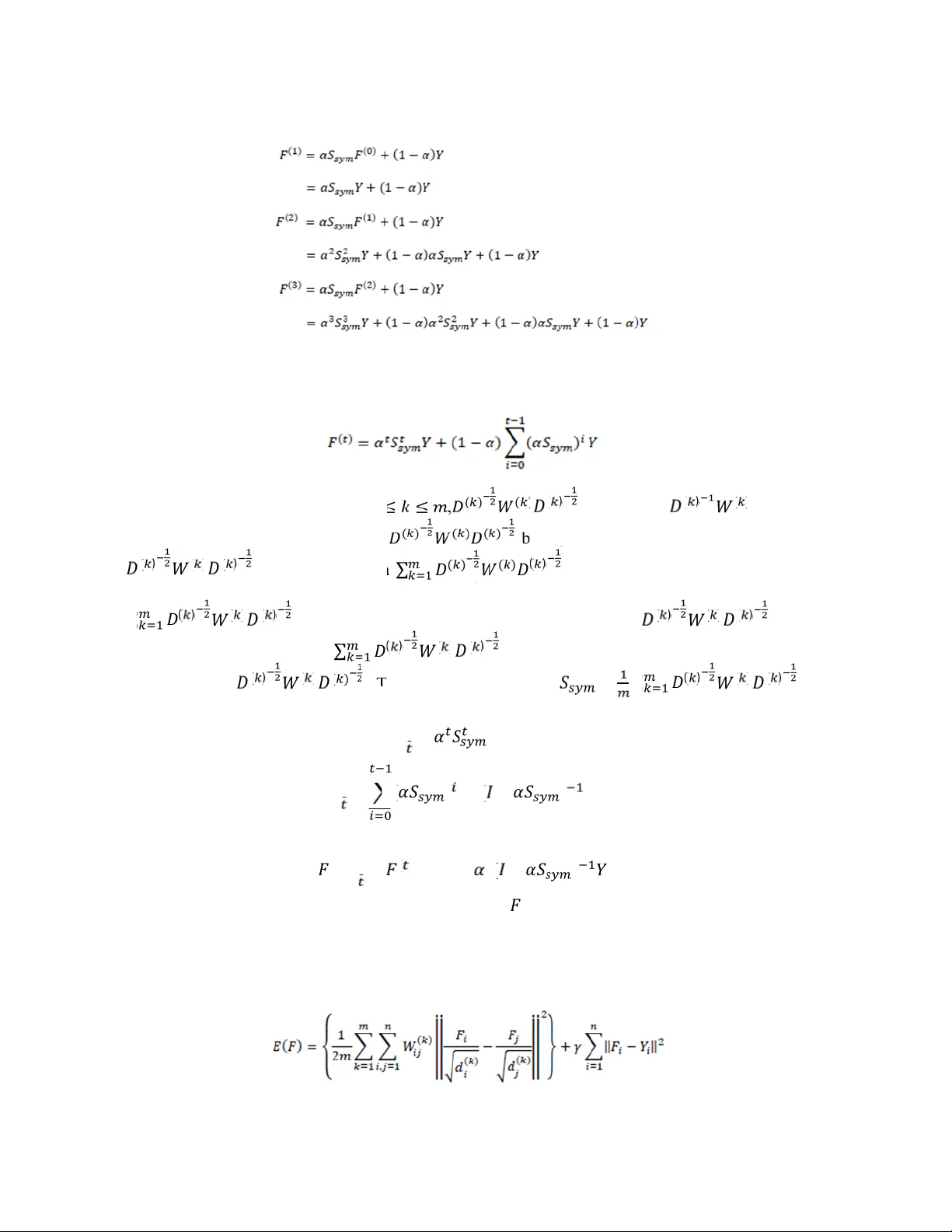

Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2, June 2 013 DOI: 10 .5121/ijb b.2013.320 2 11 Application of three graph Laplacian based semi - supervised learning methods to protein function prediction problem Loc Tran University of Minn esota tran039 8@umn.e du Abstract: Protein function prediction is the imp ortant problem in mo dern b iology. In this paper, the u n -normalized , symmetric norma lized, a nd random walk graph Laplacia n based semi -supervised learn ing method s will b e applied to the integrated ne twork comb ined from m ultiple netwo rks to p redict th e fu nctio ns o f all yeast proteins in these multiple networks. These multiple network s are network created from Pfam domain structure, co- particip ation in a protein comple x, p rotein -protein interaction ne twork, g enetic interact ion network, an d netwo rk c reated from cell cycle gen e expression measu rements. Multip le n etworks are combin ed with fixed weights instead of using conve x optimization to determin e the combinatio n weig hts due to h igh time comp lexity o f co nvex optimiza tion metho d. This simp le combin ation me thod will n ot affect the accura cy p erforman ce m easu res of the three semi -su pervised lea rning metho ds. Exp eriment results show that the un -no rmalized a nd symmetric normalized graph Laplacian based methods perform slig htly better than random walk g rap h Laplacian based method fo r inte grated network. Moreover, t he accura cy performan ce measures of these three semi -su pervised lear ning methods for integra ted ne twork are much better tha n the b est accurac y performanc e measure s of th ese three m ethods fo r the ind ividual n etwork. Keywords : semi-super vised lea rning, gra ph Laplac ian, yeast, pro tein, function 1. Introduction Protein function pr ediction is the important problem in modern biolo gy. Identif ying the function of proteins by biological experiments is very expensive and hard . He nce a lot of computational methods ha ve been proposed to i nfer the functions of the proteins by using var ious types of information such as gene expression data and protein -protein interaction networks [1]. First, i n order to predict protein function, the s equence similarity algorithms [2 , 3] ca n be employed to f ind t he ho mologies between the already annotated protein s and theun -annotated protein. The n the annotated protein s with si milar sequence s c an be used to assign th e function to the un-annotated protein. Tha t’s the classical way to predi ct protein function [4]. Second, to predict protein function, a graph ( i.e. kernel) which is the natural model of r elationship between proteinsc an also be employed. In th is model, the nodes r epresent proteins . T he edges represent for the possible interactions between nodes. T hen, machine learning m ethods s uch as Support V ector M achine [5 ], Artificial Neural Networks [4], un-norm alized graph Lap lacian based semi -supervised learning method [6 ,14], or neighbor counting method [7 ] can be applied to this graph to infer the fun ctions of un -annotated protein. The neighbor counting method labels the protein with the function that o ccurs frequently in the prot ein ’ s adjacent nodes in the protein - protein interaction network. H ence neighbor counting method does not utilize the f ull topology of the network. However, t he Artificia l Neural N etworks, Support Vec tor Machine , a ndun- normalized graph Laplacian ba sed semi-supervised learning method utilize the full topology of Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 12 the network.Moreover, the Artificial Neura l Networks and Support V ector Machine are supervised learning methods . While the neighbor coun ting method, the A rtificial Neural Network s, a nd the un-nor malized graph Laplacian based semi -supervised learningmethod are all based on the assum ption that t he labels of two adjacent proteins i n graph are likely to be the same, SVM do not rely on this assumption. Graphs used in neighbor counting method, Artificial Neural Networks, and the un - normalized graph Laplacian b ased semi-supervised learning method are very sp arse.However, t he graph (i.e. kernel) us ed in SVM is fully-connected. Third, t he Artificial Neural Networ ks m ethod is applied t o the single protein-protein interaction network only. However, the SVM method and un -normalized graph Laplacian based se mi- supervised learning method try t o use weighted combination of multiple networks(i.e. kernels) such as gene co-expression net workand prot ein-protein interaction network to improve the accuracy performance m easures . While [5] (SVM m ethod) determine s the optimal weighted combination of ne tworks by solving t he semi -definite problem , [6,14] (un-normalized graph Laplacian bas ed semi -supervised l earning method) use s a dual problem and gradient descent to determine the weighted combination of networks. In t he last decade, t he normalized graph Lapl acian [8] and random wal k graph La placian [9] based semi-supervised learning methods have successfully been applied to some s pecific classification tas ks such as digit recognition and t ext classification. However, t o the best of my knowledge, the normalized graph La placian and random walk graph Laplacian based semi- supervised lear ning methods have not yet been applied to protei n function prediction problem and hence their o verall accuracy perfo rmance measure comparisons have not been done. In this paper, we will apply thr ee un -norm alized , symmetric normalized , a nd random walk graph La placian based semi-supervised l earning m ethod s to the integrated network combined wi th f ixed weights.These five networksused for the combination ar e available from [6] . The m ain poi nt of these three methods is to let every node of the graph iteratively propagate s its label in formation to its adjacent nod es and the pr ocess is repeated until convergence [8]. Moreove r, since [6] has pointed out that the integrated network com bined with optimized w eights has similar performance to the integrated network combined with equal weights, i.e. without optimization, we wil l use the integrated network combined with equal weights due t o high time -complexity of these optimization methods . This type of combination will be discussed clearly in th e next sections. We will organize the paper as follows: Section 2 will introduce random walk and symmetric normalized graph Laplacian based semi-supervised learning algorithms i n detail.Section 3wil l show how t o derive the closed f orm solut ions of normalized an d un-normalized gra ph Laplacian based semi-supervised l earning f r om r egularization framework. In section 4, we will a pply these three algorithms to the integrated network of fi ve networks avail able from [6]. T hese five networks are network created from Pfam domain structure, co -participation in a protein complex, protein-protein interaction network, genetic interaction network, and network created from cell cycle gene expression measurements . Se ction 5 will conclude this paper and discus s the future directions of researches of this protein f unction prediction problem utilizing hypergraph Laplacian. Claim: Random walk and symmetric normalized graph La placians h ave been widely use d not in classification but also i n clustering [ 8,13]. In this paper, we will f ocus on the application of these two graph Lap lacians t o the protein function p rediction problem. The accurac y perfo rmance measures of these two methods will be compared to the accuracy performance measure of the un - normalized graph Laplacian based semi - Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 13 We do not claim that the acc uracy performance measures of these t wo methods will be better than the accuracy per form ance measure of the un -normalized graph Laplacian based se mi- supervised l earning method (i .e. the published method) in this protein function prediction problem. We just do the com parisons. To the best of my knowledge, no theoretical framework ha ve been given to prove that which graph Laplacian method achieves t he best accuracy per formance measure in the classific ation task. In the other words, the accurac y performance measures of these three graph Laplacian based semi-supervised learning methods depend on the datasets we used. However, in [8] , the author have pointed out that t he accuracy pe rformance measure of the symmetric normalized graph Laplacian based semi-supervised learning method ar e better than accuracy performance measures of the random walk and un -normalized graph Laplacian ba sed semi -supervised learn ing methods in digit recognition and t ext categorization problems. Moreover, its accuracy perf ormance measure is also better than the accuracy performance measure of Support Vector Machine method (i.e. the known best classifier in literature) in two pr opos ed digit recognition and text categorization problems. Thi s fact is worth investigated in protein funct ion prediction problem. Again, we do not claim t hat our two proposed random wal k an d symmetric normalized graph Laplacian based semi-supervised learning methods will perform better than the published method (i.e. the un-normalized graph Laplacian method)in this protein function pr ediction problem. At least, the ac curacy perfor mance measures of two new p roposed methods are similar to or are not worse than the accuracy performance measure of the published method (i.e. the un -normalized graph Laplacian method). 2. Algorithms Given networks i n the d ataset, t he weights for individual net works used to combine to integrated network are . Given a set of proteins { , … , , , … , } where = + is the total number of proteins in the integrated network, define c bethe total number of functional classes and the matrix ∈ ∗ be th e estimated label matrix for the set of protein s { , … , , , … , }, where the point is la beled as sign( ) for each functional class j ( 1 ≤ ≤ ) . Please note that { , … , } is the set of all labeled points and { , … , } i s the set of all un -labeled points. Let ∈ ∗ the initial label matrix for n proteins in the network be defined as follows = 1 1 ≤ ≤ − 1 1 ≤ ≤ 0 + 1 ≤ ≤ Our objective is t o predict the labels of t he un -labeled points , … , . We can achieve this objective by l etting ev ery node (i.e. proteins) in the net work it eratively pr opagates i ts label information t o its adjacent n odes a nd this process is repeated unt il convergence. These three algorithms are based on three assumptions: - local consistency: nearby proteins are likely to have th e same function - global consistency: proteins on the same structure (cluster or sub -manifolds) are likely to have the same function - these protein networks contain no self -loops Let ( ) represents the indi vidual network in the dataset. Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 14 Random walk gr aph Laplacian based semi-supe rvised learning algorithm In t his section, we slightly change the original random walk graph Laplacian bas ed semi- supervised learning algo rithm can be obtained from [9] . T he outline of the new versi on of this algorithm is as follows 1. Form the affinity matrix ( ) (for each k such that 1 ≤ ≤ ) : ( ) = exp − || − || 2 ∗ ≠ 0 = 2. Construct = ∑ ( ) ( ) where ( ) = di ag( ( ) , … , ( ) ) and ( ) = ∑ ( ) 3. Iterate until convergence ( ) = ( ) + ( 1 − ) , where α is an arbitrary parameter belongs t o [0,1] 4. Let ∗ be the limit of the sequence { ( ) }. For each protein functional class j, label each protein ( + 1 ≤ ≤ + ) as sign( ∗ ) Next, we l ook for the closed -form sol ution of the random walk graph Laplacian based semi- supervised learning. In the other words, we need to show that … Thus, by induction, Since is the stochastic m atrix, its eigenvalues are in [ - 1,1]. Moreover, since 0<α<1, thus Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 15 Therefore, Now, from the above formula, we can compute ∗ directly. The original random wal k graph Laplacian based semi -supervised learning algorithm developed by Zhu can be derived from the modified algorithm by setting = 0 , where 1 ≤ ≤ and = 1, where + 1 ≤ ≤ + . In the other words, we can express ( ) in matrix form as follows ( ) = ( ) + ( − ) , where I is the identity matrix and = 0 … 0 ⋮ ⋮ 0 … 0 0 0 1 0 ⋮ ⋮ 0 … 1 ( ) Normalized gr aph Laplacian based semi-supervised learning algorithm Next, we will give t he brief overview of the original normalized graph Laplacian based semi- supervised learning algorithm can be obtained from [8]. The outline of this algorithm is as follows 1. Form the affinity matrix ( ) (for each 1 ≤ ≤ ) : ( ) = exp − || − || 2 ∗ ≠ 0 = 2. Construct = ∑ ( ) ( ) ( ) where ( ) = diag( ( ) , … , ( ) ) and ( ) = ∑ ( ) 3. Iterate until convergence ( ) = ( ) + ( 1 − ) , where α i s an arbitrary p arameter belongs to [0,1] 4. Let ∗ be the limit of the sequence { ( ) }. For each protein functional class j, label each protein ( + 1 ≤ ≤ + ) as sign( ∗ ) Next, we look f or the closed -form sol ution of the nor malized graph Laplacian based semi - supervised learning. In the other words, we need to show that ∗ = l i m → ∞ ( ) = ( 1 − ) − Suppose ( ) = , then Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 16 … Thus, by induction, Since for every integer k such that 1 ≤ ≤ , ( ) ( ) ( ) is similar to ( ) ( ) which is a stochastic matrix, eigenvalues o f ( ) ( ) ( ) b elong to [ -1,1]. Moreover, for every k, ( ) ( ) ( ) is symmetric, then ∑ ( ) ( ) ( ) is also symmetric . Therefore, by using Weyl’s inequality in [10] and the references therein, the la rgest eigenvalue of ∑ ( ) ( ) ( ) is at most t he sum of e very largest eigenvalues of ( ) ( ) ( ) and the smallest ei genvalue o f ∑ ( ) ( ) ( ) is at l east t he sum of every smallest eigenvalues of ( ) ( ) ( ) . Thus, t he eigenvalues of = ∑ ( ) ( ) ( ) belong to [- 1,1]. Moreover, since 0<α<1, thus lim → ∞ = 0 lim → ∞ ( ) = ( − ) Therefore, ∗ = l i m → ∞ ( ) = ( 1 − )( − ) Now, from the above formula, we can compute ∗ directly. 3. Regularization Frameworks In this section, we will develop the regularization framework f or the normalized graph Laplacian based semi-supervised learning iterative version . First, let’s consider the error function Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 17 In this e rror functi o n ( ) , a n d belong to . Please note that c is the total number o f protein functional classes, ( ) = ∑ ( ) , and i s the positive regularization parameter . Hence = ⋮ = ⋮ Here ( ) stands for the sum of the s quare loss between t he estimated label matrix and th e initial label matrix and the smoothness constraint. Hence we can rewrite ( ) as follows ( ) = − + ( ( − ) ( − )) Our objective is to minimize this error function. I n the other words, we solve = 0 This will lead to Let = . Hence the solution ∗ of the above equations is ∗ = (1 − )( − ) Also, please note that = ∑ ( ) ( ) is not t he symmetric matrix, thus we cannot develop the regularization framework f or th e random wal k graph Laplacian based se mi- supervised learning iterative version. Next, we will develop the regularization f ramework for t he un -norm alized graph Lapl acian based semi- supervised learning algorithms. First, let’s consider the error function ( ) = 1 2 ( ) − , + ‖ − ‖ In this error functio n ( ) , and belong to . Please note that c is the total number of protein functional classes and is the positive regularization parameter . Hence = ⋮ = ⋮ Here ( ) stands for the sum of the s quare loss between t he estimated label matrix and th e initial label matrix and the smoothness constraint. Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 18 Hence we can rewrite ( ) as follows ( ) = 1 ( ) + ( ( − ) ( − )) Please note that un-normalized Laplacian m atrix of the networ kis ( ) = ( ) − ( ) . Our objective is to minimize this error function. I n the other words, we solve = 0 This will lead to 1 ( ) + ( − ) = 0 ∑ ( ) + = Hence the solution ∗ of the above equations is ∗ = ( 1 ( ) + ) Similarly, we can also obtain the other form of solution ∗ of the normalized graph Laplacian based s emi-supervised learning algorithm as follows (no te normalized Laplacian matri x of networkis ( ) = − ( ) ( ) ( ) ) ∗ = ( 1 ( ) + ) 4. Experiments a nd results The Dataset The th ree symmetric normalized, random wa lk , and un-normalized graph Lapl acian based se mi- supervised learning are applied to the dataset obtained from [6]. This dataset is composed of 3588 yeast pr oteins from Sacchar omyces cerevisiae , annotated with 13 highest -level functional classes from M IPS Comprehensi ve Yeast Genome Dat a (Table 1) . This dataset contains fiv e networks of pairwise relationships, which ar e very sparse .These five networks are network c reated from Pfam domain stru cture ( ( ) ), co-participation in a protein complex ( ( ) ), protein-protein interaction network ( ( ) ), genetic interaction ne twork ( ( ) ), and network created f rom cell cycle gene expression measurements ( ( ) ). The first network, ( ) , was obtained from the Pfam domain structure of the given genes. At the time of t he curation of t he dataset, Pfam con tained 4950 domains. For each protein, a bi nary vector of this length was created. Each element of this vector represe nts the presence or a bsence of one Pfam domain. T he value of ( ) is then the normalization of the dot product between the domain vector s of proteins i and j. The f ifth network, ( ) , was obtained f rom gene expre ssion data collec ted by [12]. I n this network, an edge wit h weight 1 is c reated between two pr oteins if their gene expression profiles are sufficiently similar. Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 19 The r emaining three networks were created with d ata from t he MIP S Comprehensive Yeast Genome Database (CYGD). ( ) is composed of binary edges indicating whether the given proteins are known to c o -participate in a protein complex. The binary edges of ( ) indicate known protein -protein physical i nteraction s. Finally, the binary edges i n ( ) indicate known protein-protein genetic interactions. The protein functional classes these proteins w ere a ssigned to are the 13 funct ional classes defined by CYGD a t the t ime of the curation of this dataset. A brief description of these functional classes is given in the following Table 1. Table 1: 13 CYGD functio nal classes Classes 1 Metabolis m 2 Energy 3 Cell cycle and DN A pro cessing 4 Transcriptio n 5 Protein synthe sis 6 Protein fate 7 Cellular transpo rtation and tr anspor tation mech anism 8 Cell r escue, defe nse and virulence 9 Interaction with cell en vironment 10 Cell fate 11 Control of cell o r ganization 12 Transp ort facilitation 13 Others Results In this section, we experim ent with the above three m ethods in terms of classification accuracy performance measure. All experiments were implemented in Matlab 6.5 o n virtual machine. For the comparisons discussed here , the t hree-fold cross validation is use d to compute the accuracy performance m easures for each class and e ach m ethod. The accuracy per formance measure Q is given as follows = + + + + True Positive (TP), True Negative (T N), False Positive ( FP), and False Negative (FN) are defined in the following table 2 Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 20 Table 2: Definitions of TP, TN, FP, and FN Pred icted Label Positive Negative Known Lab el Positive True Po sitive (T P) False Ne gative (FN) Negative False Po sitive (FP) True N egative (TN ) In t hese e xperiments, parameter is set to 0.85 a nd = 1 . For this dataset, th e third table shows the accuracy performance measures of the three methods applying to integrated network for 13 functional classes Table 3: Comparisons of symmetric normalized, random walk, and un -normalized graph Laplacian based methods using integrated network Functional Classes Accurac y Perfor mance Measu res (%) Integrated Ne twork Normalized Random Wa lk Un-nor malized 1 76.87 76.98 77.20 2 85.90 85.87 85.81 3 78.48 78.48 77.56 4 78.57 78.54 77.62 5 86.01 85.95 86.12 6 80.43 80.49 80.32 7 82.02 81.97 81.83 8 84.17 84.14 84.17 9 86.85 86.85 86.87 10 80.88 80.85 80.52 11 85.03 85.03 85.92 12 87.49 87.46 87.54 13 88.32 88.32 88.32 From th e above table 3, we recognized that th e symmetric normalized and un-normalized graph Laplacian based semi-supervised learning methods slightly p erform better than the random walk graph Laplacian based semi-supervised learning method. Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 21 Next, we will show the accuracy performance measures of the three methods for each individual network ( ) in the following tables: Table 4: Comparisons of symmetric normalized, random walk, and un -normalized graph Laplacian based methods using network ( ) Functional Classes Accurac y Perfor mance Measu res (%) Network ( ) Normalized Random Wa lk Un-nor malized 1 64.24 63.96 64.30 2 71.01 71.07 71.13 3 63.88 63.66 63.91 4 65.55 65.41 65.47 5 71.35 71.46 71.24 6 66.95 66.69 67.11 7 67.89 67.70 67.84 8 69.29 69.29 69.31 9 71.49 71.40 71.52 10 65.30 65.47 65.50 11 70.09 70.04 70.12 12 72.71 72.66 72.63 13 72.85 72.77 72.85 Table 5: Comparisons of symmetric normalized, random walk, and un -normalized graph Laplacian based methods using network ( ) Functional Classes Accurac y Perfor mance Measu res (%) Network ( ) Normalized Random Wa lk Un-nor malized 1 24.64 24.64 24.64 2 27.84 27.84 27.79 3 23.16 23.16 23.08 Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 22 4 22.60 22.60 22.52 5 26.37 26.37 26.23 6 24.39 24.39 24.19 7 26.11 26.11 26.37 8 27.65 27.65 27.62 9 28.43 28.43 28.34 10 25.81 25.81 25.22 11 27.01 27.01 25.98 12 28.43 28.43 28.40 13 28.54 28.54 28.54 Table 6: Comparisons of symmetric normalized, random walk, and un -normalized graph Laplacian based methods using network ( ) Functional Classes Accurac y Perfor mance Measu res (%) Network ( ) Normalized Random Wa lk Un-nor malized 1 29.63 29.57 29.40 2 34.11 34.11 33.95 3 27.93 27.90 27.70 4 28.51 28.48 28.57 5 34.03 34.03 33.92 6 30.57 30.55 30.04 7 32.08 32.08 32.02 8 33.05 33.03 32.92 9 33.78 33.78 33.75 10 30.18 30.18 29.99 11 32.64 32.64 32.53 Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 23 12 34.53 34.53 34.45 13 34.48 34.48 34.31 Table 7: Comparisons of symmetric normalized, random walk, and un -normalized graph Laplacian based methods using network ( ) Functional Classes Accurac y Perfor mance Measu res (%) Network ( ) Normalized Random Wa lk Un-nor malized 1 18.31 18.28 18.26 2 20.93 20.90 20.88 3 18.09 18.06 18.09 4 18.39 18.39 18.39 5 21.07 21.07 21.04 6 18.98 18.98 18.90 7 18.73 18.73 18.67 8 19.90 19.90 19.62 9 20.04 20.04 19.93 10 17.31 17.28 17.17 11 19.18 19.18 19.09 12 20.54 20.54 20.57 13 20.54 20.54 20.48 Table 8: Comparisons of symmetric normalized, random walk, and un -normalized graph Laplacian based methods using network ( ) Functional Classes Accurac y Perfor mance Measu res (%) Network ( ) Normalized Random Wa lk Un-nor malized 1 26.45 26.45 26.51 2 29.21 29.21 29.21 Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 24 3 25.89 25.78 25.92 4 26.76 26.62 26.76 5 29.18 29.18 29.18 6 27.42 27.23 27.42 7 28.21 28.18 28.01 8 28.51 28.54 28.54 9 29.71 29.68 29.65 10 26.81 26.95 27.01 11 28.79 28.82 28.85 12 30.16 30.13 30.16 13 30.18 30.16 30.18 From th e above tables, we easily see that the un -normalized (i.e. the publ ished) and normalized graph Laplacian based se mi-supervised learning methods slightly perform bett er t han the random walk graph Laplacian based s emi-supervised learning method using network ( ) and ( ) . For ( ) , ( ) , and ( ) , the random walk and the normalized graph Laplacian based semi - supervised learning methods slightly perf orm better than the un -normalized (i.e. t he published) graph Laplacian based sem i -supervised learning m ethod. ( ) , ( ) , and ( ) are all three networks created with data from the MIPS Com prehensive Yeast Genome Database (CYGD). Moreover, the accuracy performance measures of all three methods for ( ) , ( ) , ( ) , and ( ) are un-acceptable since they are worse than random guess. Again, this fact occurs due to the sparseness of these four networks. For integrated network and e very individual network except ( ) , we recognize that the symmetric normalized graph Laplacian ba sed semi -supervised l earning method performs sl ightly better than the other two graph Laplacian based m ethods. Finally, the accuracy performance measures of these three methods for the integrated network are much better than t he best accuracy p erformance measure of these three methods for individual network. Due to t he spars eness of the networks, the accuracy performance measures for individual networks W2, W3, W4, and W5 a re unacceptable. They ar e worse than ra ndom guess . The best accuracy performance measure of these three methods for individual network will be shown in the following s upplemental table. Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 25 Supplement Table: Comparisons of un-normalized graph Laplacian based methods using network ( ) and integrated network Functional Classes Accurac y Perfor mance Measu res (%) Integrated net work (un-nor malized) Best individ ual net work ( ) (un-nor malized) 1 77.20 64.30 2 85.81 71.13 3 77.56 63.91 4 77.62 65.47 5 86.12 71.24 6 80.32 67.11 7 81.83 67.84 8 84.17 69.31 9 86.87 71.52 10 80.52 65.50 11 84.92 70.12 12 87.54 72.63 13 88.32 72.85 5. Conclusion The detailed iterative al gorithms and regularization fram eworks for the th ree no rmalized , r andom walk, a nd un -normalized graph Laplacian based semi-supervised l earning methods applying to the inte grated network from multiple networks have been developed . T hese three methodsare successfully applied to the protein function prediction problem ( i.e. classification problem). Moreover, t he comparison of the accuracy perfor mance measures fo r these three methods h as been done. These three methods c an also be applied to cancer classification problems using gene expression data. Moreover, these three methods can not only be us ed in cl assification p roblem b ut also i n ranking problem. In specific, give n a se t of genes (i.e. the queries ) making up a protei n complex/ pathways or given a set of genes (i.e. t he queries) involved in a specific disease (for e.g. leukemia), these three methods can also be us ed to fi nd more potential members of the complex/pathway or more genes involved i n the same di sease by ranking genes i n gene c o-expression network (derived Internatio nal Journal o n Bioinfor matics & B ioscience s (IJB B) Vo l.3, No.2 , June 2013 26 from gene expr ession data) or the protein -protein interaction networ k or the i ntegrated network of them. The genes with the highest rank the n will be select ed and t hen checked by b iologist experts to se e if the extended genes in fact belong to the same complex/pathway or are involved in the same disease. T hese problems are also called complex/pathway mem bers hip determination and biomarker discovery in cancer classification. I n cancer cl assification problem, only the sub- matrix of the gene expression data of th e extended gene list will be used i n cancer classificatio n instead of the whole gene expression d ata. Finally, to the best of my knowledge, the normalized , ra ndom walk, and un-normalized hypergraph Laplacian based sem i-supervised learning methods have not be en applied to the protein function prediction problem. T hese methods applied to protein functio n prediction are worth inv estigated since [ 11] have s hown that these hypergraph Laplacian based semi- supervisedlearning methods outperform the graph Laplacian based s emi-supervised learning methods in text-categorization and letter recognition. References 1. Shin H.H ., Lisewski A.M.a nd Lichtarge O.G raph shar pening plus gra ph inte gration: a syne rgy that improves pr otein functio nal cl assificatio n B ioinfor matics2 3(23) 321 7-3224, 2007 2. Pearso n W .R. and Lipman D .J. Improved tools for biological sequence co mparison Pr oceedings o f the Nationa l Acade my of Science s of the U nited State s of A merica, 85 (8), 24 44 – 244 8, 1998 3. Lockhart D.J ., Dong H., B yrne M.C., Folle ttie M.T., Ga llo M .V., Chee M.S., M ittmann M., Wang C., Koba yashi M. , Horton H. , and B ro wn E.L. E xpressio n monit oring by hybridizatio n to high - density oligo nucleotide arra ys Nature Bio technology, 1 4(13 ), 1675 – 168 0, 1996 4. Shi L., Cho Y. , and Zhang A. Pred iction of P rotein Funct ion from Co nnectivit y of P rotein Interaction Ne tworks I nternati onal Jo urnal of Comp utational Bio science, Vo l.1, No. 1, 2010 5. Lanckriet G.R.G., De ng M., Cristiani ni N ., Jor dan M.I., a nd Nob le W.S. Ke rnel -ba sed data f usion and its a pplicatio n to pro tein function pred iction in yeast Pa cific S ymposiu m o n B iocomputi ng (PSB ), 2004 6. Tsuda K., Shin H .H, and S choelkop f B. Fast protein c lassificatio n with multiple net works Bioinfor matics (EC CB’05), 21(Suppl. 2 ):ii59 -ii65, 200 5 7. Schwiko wski B., Uetz P. , and Fields S. A networ k of pr otein – pro tein intera ctions in yeast Nature Biotechnolo gy, 18( 12 ), 1257 – 126 1, 2000 8. Zhou D., B ousquet O., Lal T .N., W eston J. and Sc hölkopf B. Lear ning with Local and G lobal Consiste ncy Advances in Neural Info rmation Processi ng Systems (NIP S) 16, 321 -328. (Eds.) S. Thrun, L. Sa ul a nd B. Schö lkopf, M IT Pr ess, Cambridge, MA, 200 4 9. Xiaoj in Zhu and Zoub in Ghahr amani. Learni ng fro m la beled and unlabeled data with lab el prop agation T echnical Rep ort CMU -CALD-0 2-107, Carnegi e Mellon Uni versit y, 2002 10. Kn utson A. and T ao T. Ho neycombs a nd sums of Her mitian matrices Notices A mer. M at h. Soc . 48, no. 2, 175 – 186, 2001 11. Zhou D., Hua ng J . a nd Schölkop f B. Learning with H ypergraphs: Clusteri ng, Classificatio n, and Embedding Adva nces i n Neu ral In formation Proc essing S yste m (NIP S) 19 , 160 1 -16 08. (Eds.) B. Schölkop f, J.C. P latt and T. Hofmann, MIT Press, Ca mbridge, M A, 2007 . 12. Spe llman P ., Sherlo ck G., and e t al. Comprehensive identi fication of cell cycle -re gulated genes of the yea st sacc haro myces cere visiae b y microarr ay hybridiz ation Mol. Biol. Cell, 9:3 273 – 3297, 1998 13. Luxb urg U. A Tutorial on S pectr al Clustering Sta tistics and Co mputing 17 (4): 3 95 -416, 2007 14. Shin H., T suda K., and Schoelkopf B.P rotein functiona l class p redictio n with a combi ned graphExper t Syste ms wit h Applicatio ns, 36:3 284 -3292, 20 09

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment