Quality indicators for scientific journals based on experts' opinion

This paper presents the results and further development of a survey sent to 11,799 Spanish faculty members and researchers from various fields of the social sciences and the humanities, obtaining a total of 45.6% (5,368 responses) usable answers. Res…

Authors: Elea Gimenez-Toledo, Jorge Manana-Rodriguez, Emilio Delgado-Lopez-Cozar

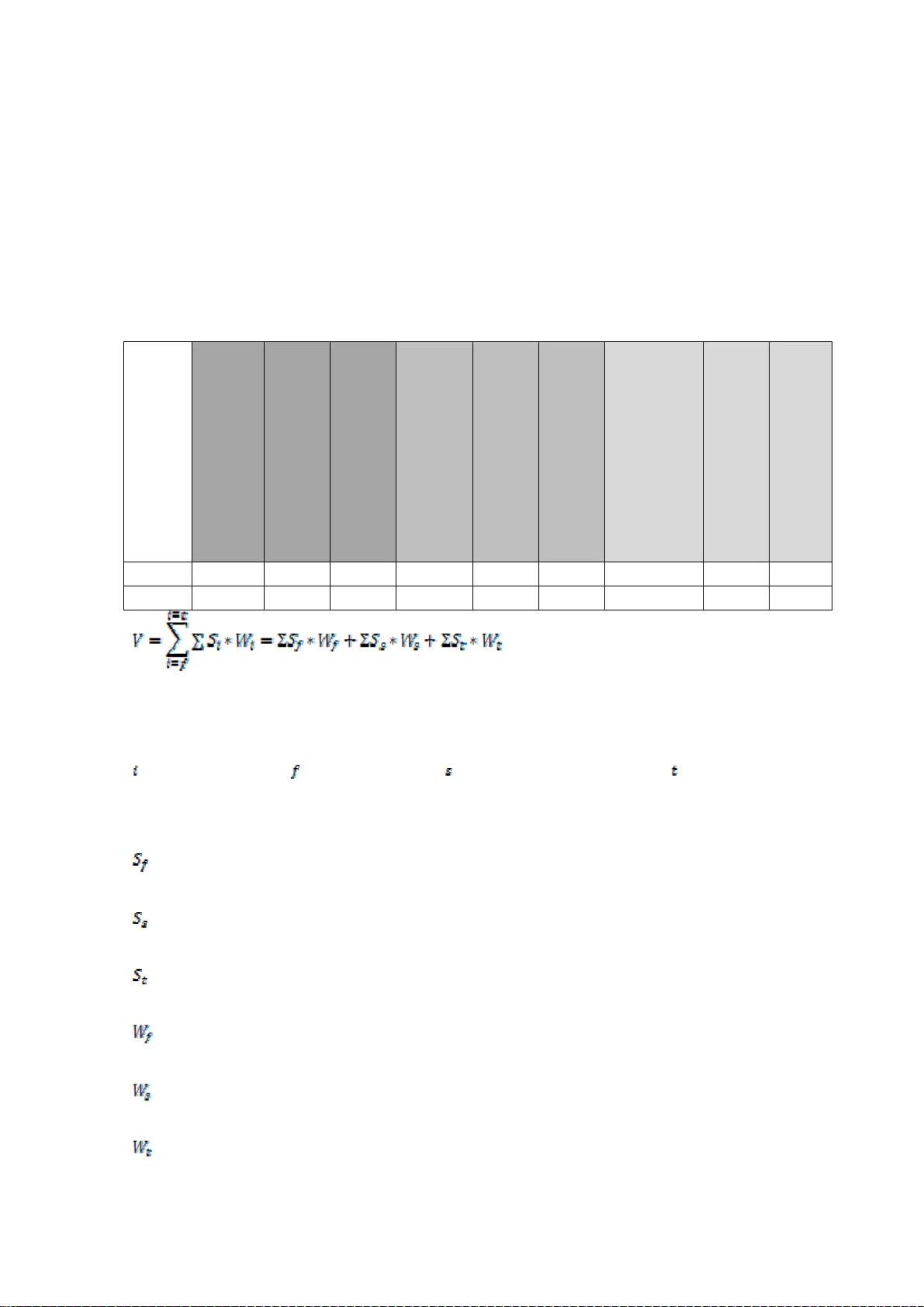

Elea Giménez-Toledo (1), Jorge Mañana-Rodríguez (2), Emilio Delgado-López-Cózar (3)

(1) Elea Giménez-Toledo (corresponding author) has a PhD in information science. She is a research fellow at the CSIC and director of EPUC, which is devoted to the evaluation of social science and humanities journals and books. She is co-author of two platforms on the evaluation of Spanish journals in the social sciences and humanities: RESH and DICE (Dissemination and Editorial Quality of Spanish Journals in the Humanities, Social Sciences, and Law). Spanish National Research Council, Centre for Human and Social Sciences, Albasanz, 26-28, 28037, Madrid, Spain. E-mail: elea.gimenez@cchs.csic.es

(2) Jorge Mañana-Rodríguez is a PhD student at the Spanish National Research Council and a member of the EPUC research group. His work is devoted to the study of specialization measurement, its characteristics and its implications for scientific output assessment in the social sciences and humanities. Spanish National Research Council, Centre for Human and Social Sciences, Albasanz, 26-28, 28037, Madrid, Spain. E-mail: jorge.mannana@cchs.csic.es. Telephone: (0034) 916022795

(3) Emilio Delgado-López-Cózar is Professor of Library and Information Science at the University of Granada and a member of the EC3 research group. His work is devoted to the evaluation of scientific journals and science, the study of research in LIS and the evaluation of scientific output. He is one of the developers of the In-Recs Index (Impact factor of Spanish Social Sciences Journals). Department of Library and Information Science, Granada University, Cartuja Campus, 18011, Granada, Spain. E-mail: edelgado@ugr.es

Keywords: journal quality, experts’ opinion, survey, humanities, social sciences.

Abstract: This paper presents the results and further development of a survey sent to 11,799 Spanish faculty members and researchers from various fields of the social sciences and the humanities, which obtained 5,368 usable answers (45.6%). Respondents were asked (a) to indicate the three most important journals in their field and (b) to rate them on a 0-10 scale according to their quality. The information obtained has been synthesized in two indicators which reflect the perceived quality of journals. Once the values were obtained, the journals were categorized according to each indicator and the ordinal positions were compared. Different profiles of journals, such as those with a regional orientation, are analyzed in connection with experts’ opinion, and the consensus among researchers is studied. Finally, the possibilities of extending the research and indicators to sets of international journals are explored.

Introduction

Several quality indicators exist which are designed for the direct or indirect assessment of the quality of journals (Rousseau, 2002). Usually, a combination of these indicators is used by the most important databases in order to select journals (Thomson Reuters, 2012; Medline, 2012, for example), in the context of scientific assessment processes carried out by agencies or institutions, or by systems developed ad hoc to assess the quality of journals based in a given country. Among these indicators it is possible to distinguish between bibliometric indicators (impact factor, internationality indicators, endogamy indicators, etc.), visibility indicators (e.g. presence in databases), and indicators concerning formal editorial quality and the editorial management of publications (such as peer review and its characteristics).

Nevertheless, although the combination of various indicators provides a more precise picture of the quality of a given journal, the humanities and social sciences scholarly community often claims that the assessment of the quality of content is a fundamental element in evaluation processes. The experts from each discipline are, in fact, the only ones who can provide this assessment of content. By its very nature, this assessment is subjective and biased, as the perception of “quality” is different for each person and can be influenced by their own concept of what rigor is in research, by their knowledge of the disciplinary scope of the journals they have to assess, or by their involvement as an author or member of the advisory board of a given journal. In the research conducted by Donohue and Fox (2000), which used survey methodology and obtained 243 usable answers, a positive but moderate correlation (r = 0.58, n = 46, p-value 0.0001) was observed between the 5-year impact factor and the assessment made by the experts; the moderate correlation shows a certain degree of discrepancy between these two measures. Nevertheless, as previous studies have shown (Axarloglou & Theoharakis, 2003; Nederhoff & Zwaan, 1990), when the assessment is carried out by a large group of researchers in which various disciplines are represented, there is a convergence of opinions; that is to say, the experts' votes concentrate on a core of journals which could be considered the key, quality journals of each discipline. This could be particularly interesting for the assessment of scientific activity in disciplines where the impact factor does not exist or is not a determinant.

It is possible, as well, to identify journals which are considered not to be important, journals which are considered important by a limited number of faculty members and researchers, and journals which are considered important by most researchers. Above all, these assessments permit the calibration of the diversity of assessments made of different journals as a result of different schools of thought, approaches or areas of specialization. As Axarloglou & Theoharakis (2003) pointed out, diversity and pluralism in the disciplines contribute to their development and growth; nevertheless, Hodgson & Rothman (1999) pointed out that global research and the advisory boards of the top journals are dominated by a few institutions which defend their own ideas and approaches. Assessing journals in a close-to-reality fashion involves taking into account the heterogeneity of the publications, and of the opinions about these publications, which can be found within a discipline; this is one of the objectives of the survey on which this paper is based.

The work of Axarloglou & Theoharakis (2003) is particularly illuminating in this sense, because it shows the differences in the assessment of the quality of journals provided by economists, depending on certain variables such as the school of thought in which these publications can be classified or the methodological approach followed in the research published in these journals. That is to say, they show that the perception of quality is not unanimous, does not coincide with the rankings or categorizations usually used in scientific assessment, and even varies among individuals belonging to an apparently homogeneous community, such as that of economists.

Nederhoff & Zwaan (1990) also addressed the measurement and analysis of the quality perceived by Dutch and foreign scholars for a wide set of Dutch-based journals, using the survey as the information gathering technique. In the consultation of experts the respondents were asked to classify the journals as scholarly or non-scholarly, and a core of very important journals was identified which resulted from the overlapping of several quality characteristics.

The conclusions of the cited studies raise new questions regarding the debate between the practical “products” (databases, lists, etc.) which provide efficient solutions to scientific assessment processes and the more complex “products” which involve meeting the great diversity of parameters which affect the quality of publications. This idea is well expressed by Axarloglou & Theoharakis (2003, p. 4): “The underlying perceptual heterogeneity with respect to Journal quality is a frequent cause of debate in tenure and promotion committees”. Any carefully conducted assessment process should take that heterogeneity into consideration and, in this sense, a considerable number of indicators of a different nature could help to balance the weight and effects of a homogeneous assessment.

Objectives and methodology

The general aim of this project has been to provide content quality indicators for Spanish social sciences and humanities journals. By doing this, the intention is to design and apply an extended range of quality indicators to the scientific journals taken into account by the Spanish assessment agencies, which are available on websites such as DICE, RESH, In-Recs, MIAR or CIRC, to name some of those most widely used.

The main specific objective of this paper is to show and discuss the validity of the two quality indicators of scientific journals based on the opinion and content assessment provided by Spanish experts in the various disciplines of the humanities and the social sciences. For this purpose, the application of both indicators to the journals of two disciplines, anthropology and library and information science, is shown, and the results and the differences between both values are analyzed.

The information gathering instrument used is a web-form survey designed with PHP and MySQL, and the target population comprised 11,799 Spanish faculty members and researchers from various disciplines of the social sciences and the humanities, all of whom meet the condition of having at least one six-year research period approved by the CNEAI¹. Compared to other international and national surveys, this is one of the biggest samples of researchers that has been used up to now. Although personal invitations to participate in the survey were made, the answers were absolutely anonymous.

¹ Recognition granted to researchers, in the form of an additional payment, as a result of a positive assessment of six years of research activity.

This survey sought to identify the quality of the journals according to the opinion of the experts themselves and, at the same time, to observe the diversity among the disciplines belonging to the social sciences and the humanities. In this sense, another relevant feature of the survey is the level of data aggregation: scientific specialization rather than discipline. This is an important point, since several studies have shown that scholars’ specialization is a determinant in the perception of a journal’s quality. In order to achieve this, it was important to distinguish the answers according to the discipline of the respondents.

The survey included a total of five questions regarding different aspects related to the quality of scientific journals, and the answers to two of them have been the basis for the design and development of the indicators proposed in this work:

- Indicate the three best Spanish journals from your area of specialization and rate each of them from 1 to 10, 1 being the lowest value and 10 the highest value.
- Indicate the three best journals in your area of specialization. You can choose both Spanish and foreign journals. In order to rate each of them, consider 1 the lowest value and 10 the highest value.

In order to facilitate the completion of these two questions, lists of journals by discipline or sets of disciplines were offered. These lists included more than 1,900 Spanish journal titles and more than 8,000 foreign journals (so, in the near future, a comparison of perceptions between Spanish and foreign journals will be possible). Respondents who did not find the journal they wanted to select in these extensive lists had the option of adding the title of the publication.

The response rate was 45.6% (5,368 answers), although it varied considerably according to the discipline of the respondents. This response rate is one of the highest among those found in similar studies (Lowe, 2005: 1,314 surveys sent, 149 usable answers, 16% response rate; Axarloglou, 2003: 10,402 mails sent, 2,103 usable answers, 20.22% response rate; Brinn, 1996: 260 surveys sent, 90 usable answers, 34.6% response rate; Giles, 1989: 550 surveys sent, 215 usable answers, 40% response rate), although it does not reach the 69.4% of Nederhoff & Zwaan (1990) who, however, had a more limited target set of researchers (385 surveys).
This high response rate can be explained by the strong “awareness” of the community of Spanish scholars and teachers in the humanities and the social sciences regarding scientific assessment issues.

The survey provides two kinds of variables from which the indicators have been developed. Firstly, the number of votes, that is, the number of times a given journal has been rated in any position (called “familiarity” by Axarloglou in his study). Secondly, the position (first, second or third) in which a given journal was voted. This second variable is related to the Average Rank Position proposed by Hull & Wright (1990) and also mentioned in the cited study, whereas the number of votes is related to the concept of familiarity as detailed in Theoharakis & Hirst (2002) as well as in Oltheten (2005). In that study, familiarity was described as the number of times a given journal was positioned in the upper 20% of top quality journals.

Quality indicators according to experts

The information obtained from the survey is presented in a table similar to the following, which will be used as an example for the explanation and calculation of the two indicators developed.

Table 1: Example of the data obtained from the survey

| Title | Times voted 1st most important | Scores when voted 1st | Sum of scores in 1st position | Times voted 2nd most important | Scores when voted 2nd | Sum of scores in 2nd position | Times voted 3rd most important | Scores when voted 3rd | Sum of scores in 3rd position |
|---|---|---|---|---|---|---|---|---|---|
| A | 3 | 7; 8; 6 | 21 | 4 | 6; 7; 9; 5 | 27 | 2 | 6; 5 | 11 |
| B | 6 | 8; 7; 7; 9; 6; 8 | 45 | 2 | 6; 8 | 14 | 1 | 6 | 6 |
| C | 2 | 9; 7 | 16 | 6 | 7; 5; 6; 8; 5; 6 | 37 | 4 | 5; 4; 7; 6 | 22 |
| ∑ | 11 | | 82 | 12 | | 78 | 7 | | 39 |

From the information given above a ranking can be derived. The information related to the quality of a journal is both the number of votes it has received as the first, second or third most important journal and the scores the journal has received when voted in each position. When the scores received by a journal as the first most important journal (or in the other two positions, second or third) are added together, the frequency with which the journal has been voted affects the overall sum of the scores: an increase of 1 in the number of votes means an increase of between 1 and 10 in the sum of scores for that position.

A journal could be voted three times as the first most important and receive scores of 5, 5 and 5, while another could also be voted three times as the first most important journal but receive scores of 10, 10 and 10. An additional vote for a given position (first, second or third) will always mean an increase in the sum of scores for a journal. From this direct relation between the number of votes and the sum of scores, it can be derived that the sum of the scores given to a journal is a representative measure of its perceived quality. Nevertheless, a single journal can be voted a different number of times (with different associated scores) in different positions. The scores given to a journal can vary from 1 to 10, regardless of the position that journal is being voted for, and the indicator should be sensitive not only to the sum of scores, but also to the different positions (first, second or third) among which these scores are distributed.

Table 2: Journals with the same overall score, but received in different positions.

| Title | Times voted 1st most important | Scores when voted 1st | Sum of scores in 1st position | Times voted 2nd most important | Scores when voted 2nd | Sum of scores in 2nd position | Times voted 3rd most important | Scores when voted 3rd | Sum of scores in 3rd position |
|---|---|---|---|---|---|---|---|---|---|
| M | 2 | 9; 10 | 19 | 2 | 7; 7 | 14 | 2 | 5; 5 | 10 |
| N | 2 | 9; 9 | 18 | 2 | 7; 7 | 14 | 2 | 5; 6 | 11 |

The sum of the scores in first, second and third position is the same for journals M and N (43), but the score given to M in the first position is 1 higher than that of journal N, whereas N scores 1 higher when voted as the third most important journal. This makes the use of a weight necessary.

The general formula of the indicator would be the following:

$$V = \sum_{i=1}^{3} w_i \sum S_i$$

Where:

- $i$ can take three values: $i = 1$ denotes the first position, $i = 2$ the second position and $i = 3$ the third position.
- $S_1$: the scores given when voted as the first most important journal.
- $S_2$: the scores given when voted as the second most important journal.
- $S_3$: the scores given when voted as the third most important journal.
- $w_1$: the weight applied to the sum of scores when voted as the first most important journal.
- $w_2$: the weight applied to the sum of scores when voted as the second most important journal.
- $w_3$: the weight applied to the sum of scores when voted as the third most important journal.

Regarding the value of the weight, the only condition it has to meet is $w_1 > w_2 > w_3$. This is because the scores given to a journal when voted as the first most important should be weighted with a higher value than when that same journal has been voted as the second or the third most important. To ensure that this condition is met, two options are proposed.

Firstly, it is possible to give an arbitrary weight to each sum of scores for the first, second and third positions. As an example, the value of $w_1$ could be 3, the value of $w_2$ could be 2 and the value of $w_3$ could be 1. This indicator is denominated $V_1$:

$$V_1 = 3 \sum S_1 + 2 \sum S_2 + 1 \sum S_3$$

The generic weight is notated here as $w_i$ in order to distinguish it from the weight applied to the second indicator $V_2$ (explained below), where the weight is noted as $W_i$. From Table 1, indicator $V_1$ can be calculated, for example for journal A, as follows:

$$V_1 = 21 \times 3 + 27 \times 2 + 11 \times 1 = 128$$

Nevertheless, assigning weights whose only requirement is to meet the mentioned condition raises some questions: is it appropriate to give the first position a weight three times larger than the weight given to the third position? Furthermore, it should be considered whether assigning an arbitrary and equal weight to all journals regardless of their discipline is the most adequate option, taking into account that the differences between the average scores given to journals voted as the first, second and third most important vary substantially among different disciplines and areas of specialization.

As a solution to these questions, it is proposed to deduce the value of the weights from the information given by the respondents themselves, that is to say, from the results of the survey. A possible value for the weight would be the average of the scores given by the respondents for each position (first, second or third); if the respondents give a high average score to the journals in first position, a smaller average score in the second position and an even smaller average in the third position, the condition for the weights would be met. Moreover, this weight would not be arbitrary, because it would be adjusted to the values given by the respondents for each discipline.

The weight proposed for indicator $V_2$ involves two measures for its value in each position (first, second or third). The first measure is the average score per vote (ASV) in each position for all journals: the quotient of the total score given to all journals and the total number of votes received by all journals in each position. In the case of the journals in Table 1:

$$ASV_1 = \frac{82}{11} \approx 7.45; \quad ASV_2 = \frac{78}{12} = 6.50; \quad ASV_3 = \frac{39}{7} \approx 5.57$$

For all disciplines it has been observed that $ASV_1 > ASV_2 > ASV_3$, which means that these average scores meet, in all cases, the condition required.
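The arithmetic above can be checked with a short Python sketch. It is only an illustration under stated assumptions: the dictionary layout and the function names `v1` and `average_score_per_vote` are invented here and are not part of the survey software; the data are the example values of Tables 1 and 2, and the weights 3, 2 and 1 are the arbitrary example weights of the first indicator.

```python
# Illustrative sketch only: data layout and function names are assumptions.
# Scores are keyed by position: 1 = first, 2 = second, 3 = third most important.
TABLE_1 = {
    "A": {1: [7, 8, 6], 2: [6, 7, 9, 5], 3: [6, 5]},
    "B": {1: [8, 7, 7, 9, 6, 8], 2: [6, 8], 3: [6]},
    "C": {1: [9, 7], 2: [7, 5, 6, 8, 5, 6], 3: [5, 4, 7, 6]},
}
TABLE_2 = {
    "M": {1: [9, 10], 2: [7, 7], 3: [5, 5]},
    "N": {1: [9, 9], 2: [7, 7], 3: [5, 6]},
}

# Example weights for the first indicator (w1 > w2 > w3).
V1_WEIGHTS = {1: 3, 2: 2, 3: 1}


def v1(scores_by_position, weights=V1_WEIGHTS):
    """Weighted sum of the scores a journal received in each position."""
    return sum(weights[pos] * sum(scores)
               for pos, scores in scores_by_position.items())


def average_score_per_vote(journals):
    """ASV per position: total score of all journals / total votes."""
    asv = {}
    for pos in (1, 2, 3):
        total_score = sum(sum(j[pos]) for j in journals.values())
        total_votes = sum(len(j[pos]) for j in journals.values())
        asv[pos] = total_score / total_votes
    return asv


V1_A = v1(TABLE_1["A"])            # 21*3 + 27*2 + 11*1 = 128
V1_M = v1(TABLE_2["M"])            # 19*3 + 14*2 + 10*1 = 95
V1_N = v1(TABLE_2["N"])            # 18*3 + 14*2 + 11*1 = 93
ASV = average_score_per_vote(TABLE_1)  # {1: 82/11, 2: 78/12, 3: 39/7}

if __name__ == "__main__":
    print(V1_A, V1_M, V1_N)
    print({pos: round(value, 2) for pos, value in ASV.items()})
```

With these example weights, journals M and N, which share the unweighted total of 43, are separated (95 against 93), which is the effect the weighting is meant to achieve.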
The average score per vote is sensitive to differences in the scoring pattern, which differs among the disciplines. Nevertheless, these values are too big to be suitable weights: they potentially range from 1 to 10, which in some cases is more than the sum of the values given by the respondents on the 1-10 scale. A suitable solution to this problem is to include, as a denominator, the second measure used to develop this second weight, which is the sum of the three ASV values. This is the same as expressing the average score per vote for each position on a per-unit basis with respect to the sum of the three averages. This is $W_i$, the weight applied in the case of the second indicator $V_2$. A further advantage of using this per-unit average score per vote is that the sum of the three weights is always 1. By contrast, if “raw” ASV values were used as weights for the sum of scores given in each position, their sum would not be the same among different disciplines, which could involve comparability problems. The general formula for this weight is:

$$W_i = \frac{ASV_i}{ASV_1 + ASV_2 + ASV_3}$$

Using the data in Table 1, the values of the weights applied to the sum of scores in first, second and third position would be:

$$W_1 = 0.38; \quad W_2 = 0.33; \quad W_3 = 0.28$$

Finally, the value of indicator $V_2$ would be:

- Journal A: 21 × 0.38 + 27 × 0.33 + 11 × 0.28 = 19.97
- Journal B: 45 × 0.38 + 14 × 0.33 + 6 × 0.28 = 23.4
- Journal C: 16 × 0.38 + 37 × 0.33 + 22 × 0.28 = 24.45

Results

The design of the survey itself permits two variables to be obtained for each journal: the number of votes and the scores given to the journal, and the position in which that journal has been voted.
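The weights and the $V_2$ values worked out from Table 1 in the preceding section can be reproduced with the following Python sketch. The function names are hypothetical and the code is not the authors' implementation. One assumption should be stressed: the printed weights (0.38, 0.33, 0.28) are matched here by truncating the per-unit weights to two decimals rather than rounding them; this is a guess about how the printed figures were obtained, and the untruncated weights give slightly different $V_2$ values.

```python
import math

# Sums of scores per journal in positions 1, 2 and 3 (from Table 1).
SUMS = {"A": (21, 27, 11), "B": (45, 14, 6), "C": (16, 37, 22)}
# Total votes received by all journals in positions 1, 2 and 3 (from Table 1).
VOTES = (11, 12, 7)


def per_unit_weights(sums_by_journal, votes_by_position):
    """W_i = ASV_i / (ASV_1 + ASV_2 + ASV_3), for i = 1, 2, 3."""
    totals = [sum(s[i] for s in sums_by_journal.values()) for i in range(3)]
    asv = [total / votes for total, votes in zip(totals, votes_by_position)]
    return [value / sum(asv) for value in asv]


def truncate(value, decimals=2):
    """Cut off (do not round) after the given number of decimals.

    Assumption of this sketch: truncation reproduces the printed weights.
    """
    factor = 10 ** decimals
    return math.floor(value * factor) / factor


def v2(sums, weights):
    """Sum of scores in each position times the weight of that position."""
    return sum(s * w for s, w in zip(sums, weights))


EXACT_WEIGHTS = per_unit_weights(SUMS, VOTES)           # sums to 1
PRINTED_WEIGHTS = [truncate(w) for w in EXACT_WEIGHTS]  # [0.38, 0.33, 0.28]
V2 = {title: v2(sums, PRINTED_WEIGHTS) for title, sums in SUMS.items()}

if __name__ == "__main__":
    print([round(w, 4) for w in EXACT_WEIGHTS])
    print(PRINTED_WEIGHTS)
    print({title: round(value, 2) for title, value in V2.items()})
```

Note that the ordering of the three journals differs between the two indicators in this toy example: with weights 3, 2 and 1 journal B has the highest value, while with the per-unit weights journal C does.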
Moreover, each discipline has a different number of journals, a different population of scholars and teachers who could potentially give a score to each of the journals, and a different appreciation of the journals (the degree of usage differs among the disciplines comprising the humanities and the social sciences). It therefore seemed logical that the indicator for the publications should take into account the behavior of the discipline in the survey; that is to say, it should not be calculated over all the journals from the opinion of all the respondents. Both proposed formulae are therefore related to the answers obtained in the context of a particular discipline. Within a discipline there are different “knowledge areas” (areas of specialization), recognized by the former Innovation and Science Ministry (Spain); the votes, positions and scores have been clustered according to this disciplinary structure in order to give the value of the indicator for each discipline.

Both indicators have been applied to the same set of journals, and the variations observed in the position of the same journal between the two indicators seem to be strictly related to the values of the weights W. In the case of the second indicator ($V_2$), when the weights, which are dependent on the distribution of scores, are close in proportion to 3, 2 and 1 for the first, second and third positions respectively, both indicators offer almost identical ordinal values. As an example of this, the following table shows the values and position changes between both indicators for Spanish Anthropology journals:

Table 3. Spanish Anthropology journals. $V_1$ vs. $V_2$: values and position comparison.

| Title | $V_1$ | Position change from $V_1$ to $V_2$ | $V_2$ |
|---|---|---|---|
| Revista de Antropología Social | 52.3 | 0 | 24.77 |
| Revista de Dialectología y Tradiciones Populares | 21 | 0 | 15.76 |
| Revista d'Etnologia de Catalunya | 7.67 | 0 | 9.51 |
| AIBR. Revista de Antropología Iberoamericana | 5.56 | 0 | 8.41 |
| Historia, Antropología y Fuentes Orales | 2.08 | 0 | 5.56 |
| Gazeta de Antropología | 1.72 | 0 | 4.75 |
| Trans. Revista Transcultural de Música | 1.62 | 0 | 4.63 |
| Ankulegi. Revista de Antropología Social | 0.87 | 0 | 3 |
| Pasos. Revista de Turismo y Patrimonio Cultural | 0.46 | 0 | 2.36 |
| Demófilo. Revista de Cultura Tradicional de Andalucía | 0.31 | 0 | 1.66 |
| Revista de Antropologia Experimental | 0.14 | 0 | 1.43 |
| Anales de la Fundación Joaquín Costa | 0.12 | 0 | 1.29 |
| Oráfrica | 0.05 | 0 | 1.1 |
| Anuario de Eusko-Folklore | 0.02 | 0 | 0.4 |
| Cuadernos de Etnología y Etnografía de Navarra | 0.01 | 0 | 0.37 |
| Culturas Populares | 0.01 | 0 | 0.37 |
| Etniker Bizkaia | 0.01 | 0 | 0.37 |
| Revista Valenciana d'etnologia | 0.01 | 0 | 0.33 |
| Temas de Antropología Aragonesa | 0.01 | 0 | 0.31 |

Nevertheless, there are position changes which are directly proportional (among other factors related to the calculation of the indicator) to the magnitude of the differences between the weights used in the indicators $V_1$ and $V_2$. As an example of this characteristic, the following table shows the values of both indicators and the position changes in the ordinal scale for the Library & Information Science discipline. The left-hand columns list the journals in $V_1$ order and the right-hand columns list them in $V_2$ order.

Table 4. Spanish Library & Information Science journals. $V_1$ vs. $V_2$: values and position comparison.

| Title (ordered by $V_1$) | $V_1$ | Position change from $V_1$ to $V_2$ | Title (ordered by $V_2$) | $V_2$ |
|---|---|---|---|---|
| El Profesional de la Información | 40.15 | -1 | Revista Española de Documentación Científica | 26.80 |
| Revista Española de Documentación Científica | 31.31 | 1 | El Profesional de la Información | 25.62 |
| Lligall. Revista Catalana d'Arxivística | 6.53 | -6 | BiD: textos universitaris de biblioteconomia i documentació | 8.61 |
| Revista General de Información y Documentación | 4.41 | -4 | Scire. Representación y Organización del Conocimiento | 6.34 |
| BiD: textos universitaris de biblioteconomia i documentació | 4.04 | 3 | Anales de Documentación | 6.21 |
| Anales de Documentación | 2.69 | 1 | Boletín de la ANABAD | 5.14 |
| Scire. Representación y Organización del Conocimiento | 2.14 | 3 | Cybermetrics. International Journal of Scientometrics, Informetrics and Bibliometrics | 4.59 |
| Documentación de las Ciencias de la Información | 2.05 | -2 | Revista General de Información y Documentación | 4.30 |
| Boletín de la ANABAD | 1.69 | 3 | Lligall. Revista Catalana d'Arxivística | 3.40 |
| Cybermetrics. International Journal of Scientometrics, Informetrics and Bibliometrics | 0.83 | 3 | Documentación de las Ciencias de la Información | 2.96 |
| Item. Revista de Biblioteconomía i Documentació | 0.13 | 0 | Item. Revista de Biblioteconomía i Documentació | 1.73 |
| Ibersid. Revista de Sistemas de Información y Documentación | 0.05 | 0 | Ibersid. Revista de Sistemas de Información y Documentación | 1.26 |
| Cuadernos de Documentación Multimedia (ed. electrónica) | 0.02 | 0 | Cuadernos de Documentación Multimedia (ed. electrónica) | 0.75 |
| Revista de Museología | 0.02 | 0 | Revista de Museología | 0.27 |

The tabulation of the results of the survey has, furthermore, offered the possibility of detecting multidisciplinary journals, that is, journals mentioned by respondents who belong to different knowledge fields. A given journal can be included in various disciplines and obtain different values in each of them. This aspect opens the door to research on multidisciplinary journals, in particular on the consequences which the evaluation of journals could have for publications which have a multidisciplinary scope and which, maybe due to this factor, are not recognized as core publications in any discipline; that is to say, the possible penalization of specialized or multidisciplinary journals in assessment systems.

Discussion and conclusions

The assessment of a journal’s content through expert opinion should be one more variable to be considered in the assessment of scientific journals.
By doing so, formal or indirect quality indicators would be complemented, and the scientific knowledge of scholars and the value given by them to the journals in their disciplines would be taken into account and ascertained.

This proposal is based not only on the votes given to a journal by the respondents, but also on the score and position given to it. In this sense, it differs from the method used by Nederhoff & Zwaan (1990), which is based on the average scores received by the journals. The indicators proposed in this study have more points in common with those presented by Axarloglou & Theoharakis (2003). Basically, all the formulae shown above take into account the frequency with which a journal has been voted, the position assigned to it and a specific weight, which allows both the generation of a ranking and the differences among journals to be made evident. In the study by Axarloglou & Theoharakis there is a “tier” variable in which respondents positioned the journal: respondents were asked to position each of the voted journals among the first 15 journals (first tier) or among the second 15 (second tier). This difference is fundamental, because the weight given to each journal depends on the tier in which it is positioned. The weight given by Axarloglou & Theoharakis to each journal is a constant value and the difference between positions would always be a thirtieth, since the number of journals in both tiers is 30. In this paper, the weight is given by the average of the scores and the position in which the journals of each discipline appear (first, second or third position).
This represents an important advantage in the assessment of scientific activity in the humanities and the social sciences, where different schools of thought, different positioning depending on the methodology used and a wide range of areas of specialization with different degrees of international projection can be found. Having an assessment of the content of the journals which takes into account the characteristics of the sample allows the particularities of each discipline to be attended to, but it does not allow raw comparisons between disciplines.

One of the results of Axarloglou & Theoharakis is that, although there is agreement among respondents regarding the top journals, larger differences can be observed in relation to the more regionally oriented journals. The geographic factor, the school of thought, the participation in or affiliation to a journal, the research methodology approach, the area of specialization and the characteristics of the institution of the respondent could all cause variations in the perception of the quality of a journal. All these factors allow them to affirm that “These findings should serve as a warning against monolithic research evaluation practices that do not account for the underlying differences of the community” (Axarloglou & Theoharakis, 2003). In this sense, recent developments in Flanders (Engels et al., 2012) take into account a variety of sources and assessment procedures, among them expert opinion in the form of panels, though survey methodology is not used.

Of the two indicators, $V_2$ is the more precise. Mathematically, the second indicator ($V_2$) could be considered better adjusted to the distribution of votes in the different disciplines.
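The position changes between the two indicators reported in Table 4 can be recomputed from the two orderings alone. The Python sketch below is a hypothetical illustration (the shortened journal labels and the function `position_changes` are this sketch's own); a positive value means the journal ranks higher under $V_2$ than under $V_1$. Computed this way, all changes agree with Table 4 except for BiD, which moves from fifth to third place (a change of 2) while the table prints 3; the sketch does not attempt to resolve that difference.

```python
# Journals of Table 4 in V1 order and in V2 order.
# The shortened labels are this sketch's own shorthand for the full titles.
V1_ORDER = [
    "El Profesional de la Información",
    "Revista Española de Documentación Científica",
    "Lligall",
    "Revista General de Información y Documentación",
    "BiD",
    "Anales de Documentación",
    "Scire",
    "Documentación de las Ciencias de la Información",
    "Boletín de la ANABAD",
    "Cybermetrics",
    "Item",
    "Ibersid",
    "Cuadernos de Documentación Multimedia",
    "Revista de Museología",
]
V2_ORDER = [
    "Revista Española de Documentación Científica",
    "El Profesional de la Información",
    "BiD",
    "Scire",
    "Anales de Documentación",
    "Boletín de la ANABAD",
    "Cybermetrics",
    "Revista General de Información y Documentación",
    "Lligall",
    "Documentación de las Ciencias de la Información",
    "Item",
    "Ibersid",
    "Cuadernos de Documentación Multimedia",
    "Revista de Museología",
]


def position_changes(order_from, order_to):
    """Rank in order_from minus rank in order_to (positive = moved up)."""
    rank_to = {title: rank for rank, title in enumerate(order_to, start=1)}
    return {title: rank - rank_to[title]
            for rank, title in enumerate(order_from, start=1)}


CHANGES = position_changes(V1_ORDER, V2_ORDER)

if __name__ == "__main__":
    for title, change in CHANGES.items():
        print(f"{change:+d}  {title}")
```

Because both lists are orderings of the same set of journals, the changes always sum to zero, which gives a quick consistency check on any table of this kind.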
If the value of the indicator is to be for public use, and is therefore going to be a reference taken into account by different specialists (evaluators, researchers, editors), it is recommended that the formulae be as simple and as precise as possible. Too complex an indicator could cause mistrust among users and, in some cases, make comprehension difficult. The results of the application of these indicators reveal the extent to which they are comparable and whether or not their values run in parallel. The results show that there is a high correlation between them, although small differences are observed in the position of certain journals.

A line of research is now open to analyze the values of $V_2$ for all journals, so that the opinion of specialists regarding the journals in their disciplines will become better known and described in more detail. Nevertheless, the team which has developed this study decided to include the $V_1$ indicator in the RESH² information system, which makes a comprehensive assessment of all the Spanish scholarly journals in the humanities and the social sciences. The diversity of indicators in this information system will allow the correlation of variables such as the impact of journals and the assessment provided by experts. Nederhoff & Zwaan (1990) have worked previously on this last point, finding that the journals with the best expert assessment scores did coincide with those with the highest impact factor, but also finding cases in which the tendency is exactly the opposite. The indicators shown in RESH related to the presence of journals in international databases will also give the opportunity of identifying whether the publications which have the best scores according to expert opinion are also those with greater dissemination or those which have been selected by international databases such as WoS or Scopus.
In this sense, it would be interesting to observe whether the journals with the best scores in disciplines with a strong local orientation are covered or not by these databases; this would probably help to identify certain errors which often occur in the assessment of the output of the humanities and the social sciences when these databases are the only source of information taken into account. Although expert consultation seems a priori to be the best method for making an approximate assessment of the content quality of journals, such an assessment should be handled carefully for various reasons. Firstly, the assessment by an expert is, in fact, a perception of quality. This perception is necessarily influenced by the expert’s academic life: reading research papers, the degree of participation on advisory or editorial boards, or even the fact of publishing in journals which have assessment processes for papers, as well as the invisible colleges which exist in the various disciplines or sub-disciplines of journals. The journal is, like it or not, a part of a network or an invisible college. In the case of Spain, it has taken time, effort and many research projects to meet the needs of the social sciences and the humanities, evaluating journals with different methods and adapting these to the characteristics of particular disciplines. There is still much to be done, and one of the steps to be taken would be to deal with the differences and diversity of publishing habits within a given discipline, which are reflected in the publications selected by scholars. Even in the discipline of Economics, a “hard science” in the context of the social sciences, differences are observed and argued.

Funding and acknowledgements

This study has been carried out within the framework of the research project Valoración integrada de las revistas españolas de Ciencias Sociales y Humanidades mediante la aplicación de indicadores múltiples [Integrated Assessment of Spanish Social Sciences and Humanities journals by the application of multiple indicators] (SEJ2007-68069-C02-02), funded by the Spanish Ministry of Science and Innovation between 2007 and 2010. The authors would like to acknowledge Sonia Jiménez and the Information and Communication Technologies and Statistical Analysis units for their contribution to this work.

References

Axarloglou, K. & Theoharakis, V. (2003). Diversity in economics: an analysis of journal quality perceptions. Journal of the European Economic Association, 1(6), 1402-1423.

Bence, V. & Oppenheim, C. (2004a). The influence of peer review on the Research Assessment Exercise. Journal of Information Science, 30, 347-368.

Benjamin, J. & Brenner, V. (1974). Perceptions of journal quality. The Accounting Review, 49(2), 360-362.

Brinn, T., Jones, M. J. & Pendlebury, M. (1996). UK accountants’ perceptions of research journal quality. Accounting and Business Research, 26(3), 265-278.

Butler, L. (2003). Modifying publication practices in response to funding formulas. Research Evaluation, 17(1), 39-46.

Donohue, J. M. & Fox, J. B. (2000). A multi-method evaluation of journals in the decision and management sciences by US academics. Omega-International Journal of Management Science, 28(1), 17-36.

Engels, T. C. E., Ossenblok, T. L. B. & Spruyt, E. H. J. (2012). Changing publication patterns in the Social Sciences and Humanities, 2000-2009. Scientometrics, Online First, 21 February 2012.

Giles, M., Mizell, F. & Paterson, D. (1989). Political Scientists’ Journal Evaluation Revisited. PS: Political Science and Politics, 22(3), 613-617.

Hawkins, R. G., Ritter, L. S. & Walter, I. (1973). What Economists think of their journals. Journal of Political Economy, 81, 1017-1032.

Hodgson, G. M. & Rothman, H. (1999). The Editors and Authors of Economics Journals: a case of institutional oligopoly? Economic Journal, 109, 37-52.

Hull, R. P. & Wright, G. B. (1990). Faculty perceptions of journal quality: An update. Accounting Horizons, 4(1), 77-98.

Lowe, A. & Locke, J. (2005). Perceptions of journal quality and research paradigm: Results of a web-based survey of British Accounting Academics. Accounting, Organizations and Society, 30, 81-98.

Malouin, J. L. & Outreville, J. F. (1987). The relative impact of Economics journals: a cross-country survey and comparison. Journal of Economics and Business, 29, 267-277.

Medline (2012). MEDLINE Journal Selection. Accessed: 11 Jan. 2012. http://www.nlm.nih.gov/pubs/factsheets/jsel.html

Nederhof, A. J. & Zwaan, R. A. (1991). Quality judgments of journals as indicators of research performance in the Humanities and the social and behavioral sciences. Journal of the American Society for Information Science, 42(5), 332-340.

Oltheten, E., Theoharakis, V. & Travlos, N. G. (2005). Faculty perceptions and readership patterns of finance journals: a global view. Journal of Financial and Quantitative Analysis, 40, 223-239.

Rousseau, R. (2002). Journal Evaluation: Technical and Practical Issues. Library Trends, 50(3), 418-439.

Theoharakis, V. & Hirst, A. (2002). Perceptual Differences of Marketing Journals: A Worldwide Perspective. Marketing Letters, 13(4), 389-402.

Thomson Reuters (2012). The Thomson Scientific journal selection process. Accessed: 11 Jan. 2012. http://thomsonreuters.com/products_services/science/free/essays/journal_selection_process/

DICE: epuc.cchs.csic.es/dice
RESH: epuc.cchs.csic.es/resh
CIRC: epuc.cchs.csic.es/circ
MIAR: http://miar.ub.es/que.php
In-Recs: http://ec3.ugr.es/in-recs
