Compressive Coded Aperture Keyed Exposure Imaging with Optical Flow Reconstruction

This paper describes a coded aperture and keyed exposure approach to compressive video measurement which admits a small physical platform, high photon efficiency, high temporal resolution, and fast reconstruction algorithms. The proposed projections …

Authors: Zachary T. Harmany, Roummel F. Marcia, Rebecca M. Willett

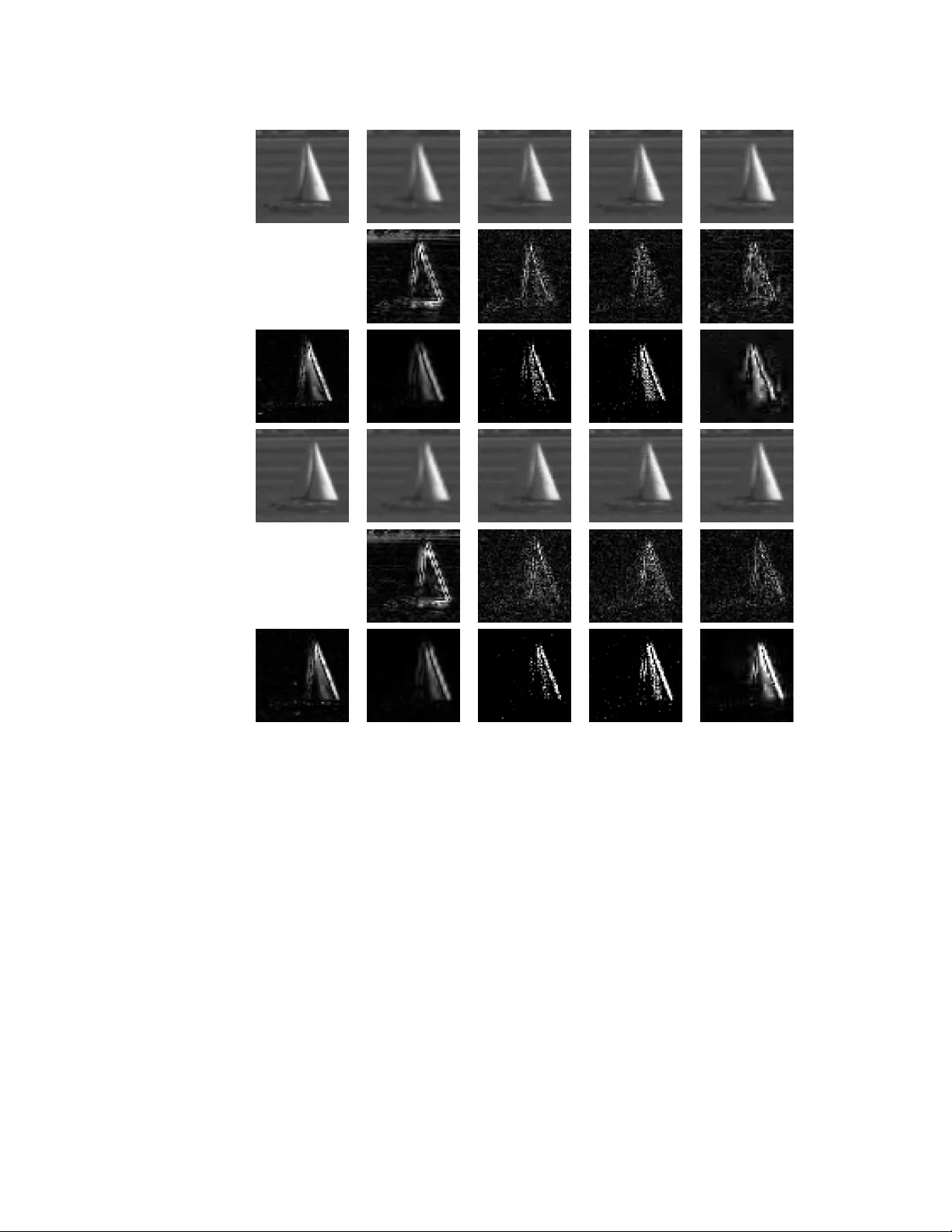

1 Compressi v e Coded Aperture K e yed Exposure Imaging with Optical Flo w Reconstruction Zachary T . Harman y , Member , IEEE, Roummel F . Marcia, Member , IEEE, and Rebecca M. W illett, Senior Member , IEEE Abstract —This paper describes a coded apertur e and keyed exposure approach to compressive video measurement which admits a small physical platform, high photon efficiency , high temporal resolution, and fast r econstruction algorithms. The proposed projections satisfy the Restricted Isometry Property (RIP), and hence compressed sensing theory pr ovides theor etical guarantees on the video reconstruction quality . Moreover , the projections can be easily implemented using existing optical elements such as spatial light modulators (SLMs). W e extend these coded mask designs to no vel dual-scale masks (DSMs) which enable the reco very of a coarse-resoluti on estimate of the scene with negligible computational cost. W e develop fast numerical algorithms which utilize both temporal correlations and optical flow in the video sequence as well as the innovative structur e of the projections. Our numerical experiments demonstrate the efficacy of the proposed approach on short-wav e infrared data. Index T erms —Compr essiv e sampling, coded apertures, con vex optimization, sparse r ecovery , coded exposure, T oeplitz matrices, optical flow I . I N T RO D U C T I O N T HE theory of compressed sensing (CS) suggests that we can collect high-resolution imagery with relati vely few photodetectors or a small focal plane array (FP A) when the scene is sparse or compressible in some dictionary or basis and the measurements are chosen appropriately [1], [2]. There has been significant recent interest in building imaging systems in a variety of contexts to exploit CS ( cf. [3]– [12]). By designing optical sensors to collect measurements of a scene according to CS theory , we can use sophisticated computational methods to infer critical scene structure and content. One particularly famous example is the Single Pixel Camera [13], which collects sequential projections of the scene. While these measurements are supported by the CS literature, there are sev eral practical challenges associated with the tradeoff between temporal resolution and physical system footprint. In this paper we describe an alternative approach to designing a low frame-rate snapshot CS camera which This research is supported by D ARP A Contract No. HR0011-04-C-0111, ONR Grant No. N00014-06-1-0610, D ARP A Contract No. HR0011-06-C- 0109, and NSF-DMS-08-11062. Portions of this work were presented at the IEEE International Conference on Acoustic, Speech, and Signal Processing, March 2008, and at the SPIE Electronic Imaging Conference, January 2009, and SPIE Photonics Europe, April 2010. Z.T . Harmany is with the Department of Electrical and Computer Engineer- ing, The Univ ersity of W isconsin-Madison, Madison, WI 53706 USA (e-mail: harmany@wisc.edu). R.M. W illett is with the Department of Electrical and Computer Engineer- ing, Duke Univ ersity , Durham, NC 27708 USA (e-mail: willett@duke.edu). R.F . Marcia is with the School of Natural Sciences, Uni versity of California, Merced, CA 95343 USA (e-mail: rmarcia@ucmerced.edu). naturally parallelizes the compressiv e data acquisition. Our approach is based on two imaging techniques called coded apertures and keyed exposures, which we explain next. Coded apertures [14], [15] hav e a long history in low- light astronomical applications. Coded apertures were first dev eloped to increase the amount of light hitting a detector in an optical system without sacrificing resolution (by , say , increasing the diameter of an opening in a pinhole camera). The basic idea is to use a mask, i.e., an opaque rectangu- lar plate with a specified pattern of openings, that allo ws significantly brighter observations with higher signal-to-noise ratio than those from conv entional pinhole cameras [14], [15]. These masks encode the image before detection, inducing a more complicated point spread function than that associated with a pinhole aperture. The original scene is then recov ered from the encoded observations in post-processing using an appropriate reconstruction algorithm which exploits the mask pattern. These multiplexing techniques are particularly popular in astronomical [16], [17] and medical [18]–[20] applications because of their efficac y at wav elengths where lenses cannot be used, but recent work has also demonstrated their utility for collecting both high resolution images and object depth information simultaneously [21]. Ke yed exposure imaging (also called coded exposure [22], flutter shutter [23], or coded strobing [24]) was initially devel- oped to facilitate motion deblurring in video using a relativ ely low frame rate. In some cases motion has been inferred from a single ke yed exposure snapshot. The basic idea is that the camera sensor continuously collects light while the shutter is rapidly opened and closed; the shutter movement ef fectiv ely modulates the motion blur point spread function, and with well-chosen shutter movement patterns it becomes possible to deblur moving objects. Similar effects can be achieved using a strobe light instead of moving a shutter . Despite the utility of the above methods in specific settings, they both face some limitations. The design of con ventional coded apertures does not account for the inherent structure and compressibility of natural scenes, nor the potential for nonlinear reconstruction algorithms. Like wise, existing keyed exposure methods focus on direct (uncoded) measurements of the spatial content of the scene and have limited reconstruction capabilities, as we detail below . The Coded Aperture K eyed Exposure (CAKE) sensing paradigm we describe in this paper is designed to allo w nonlinear high-resolution video recon- struction from relativ ely few measurements in more general settings. Recent related work [25] following our early technical report [26] describes a physical platform for combining coded 2 apertures with coded exposures. W e provide theoretical sup- port for this framework as well as detail nov el dual-scale mask generation schemes that can be used to compute a coarse- resolution estimate of the scene with negligible computational cost; this coarse estimation can be followed by reconstruction algorithms which use optical flow models of video sequences. A. Pr oblem F ormulation W e consider the problem of reconstructing an N -frame video sequence f ? , where each frame is an n 1 × n 2 two- dimensional image denoted f ? t . Using standard vector rep- resentation, we hav e that f ? t ∈ R n for t = 1 , . . . , N where n , n 1 n 2 is the total number of pixels. As a result, the vector representation of the video sequence is f ? = [( f ? 1 ) > , . . . , ( f ? N ) > ] > ∈ R nN . The observ ations y of f ? are also acquired as a video sequence. W e do not assume that the observations are acquired at the same rate at which we will ultimately reconstruct f ? . In general, we assume y is an M -frame video sequence, with each frame y k of size m 1 × m 2 . Similarly to f ? , we have y k ∈ R m for k = 1 , . . . , M , where m , m 1 m 2 , therefore y = [ y > 1 , . . . , y > M ] > ∈ R mM . W e observe f ? via a spatio-temporal sensing matrix A ∈ R mM × nN which linearly projects the spatio-temporal scene onto an mM -dimensional set of observ ations: y = Af ? + w, where w ∈ R mM is noise associated with the physics of the sensor . CS optical imaging systems must be designed to meet sev eral competing objectiv es: • The sensing matrix A must satisfy some necessary criterion (such as the RIP , defined belo w) which provides theoretical guarantees on the accuracy with which we can estimate f ? from y . • The total number of measurements, mM , must be lo wer than the total number of pix els to be reconstructed, nN . This is achiev able via compressive spatial acquisition ( m < n ), frame rate reduction ( M < N ), or simultaneous spatio-temporal compression. • The measurements y must be causally related to the temporal scene f ? , which restricts the structure of the projections A . • The optical measurements modeled by A must be imple- mentable in a way that results in a smaller , cheaper , more robust, or lower power system. • The sensing matrix structure must facilitate fast reconstruc- tion algorithms. This paper demonstrates that compr essive Coded Apertur e K eyed Exposure systems achieve all these objectives. B. Contributions The primary contribution of this paper is the design and theoretical characterization of compressi ve Coded Aperture Ke yed Exposure (CAKE) sensing. W e describe how keyed ex- posure ideas can be used in conjunction with coded apertures to increase both the spatial and temporal resolution of video from relati vely fe w measurements. W e pro ve hitherto unkno wn theoretical properties of such systems and demonstrate their efficac y in sev eral simulations. W e also detail a new mask design method that enables, with trivial computational cost, a low-resolution image of the scene from which we estimate an optical flow vector field for use in a computationally efficient optical flo w-based reconstruction algorithm. In addition, we discuss sev eral important algorithmic aspects of our approach, as well as detailing considerations for hardware implementa- tions of the proposed approach. This paper builds substantially upon earlier preliminary studies by the authors [27]–[30] and related independent work by Romberg [31]. C. Organization of the P aper The paper is organized as follows. Section II introduces relev ant background material; Section II-A describes conv en- tional coded aperture imaging techniques and Section II-B describes keyed e xposure techniques currently in the literature. W e describe the compressive sensing problem and formulate it mathematically in Section II-C. W e describe our approach to video compressed sensing using the CAKE paradigm in Section III including theoretical analysis of the method. W e then describe the design of novel dual-scale mask patterns in Section IV. Section V details how we perform the video re- construction from the CAKE measurements which we validate with numerical experiments in Section VI. W e conclude with a discussion of the implementation details and tradeoffs in practical optical systems in Section VII. I I . B A C K G RO U N D Prior to detailing our main contributions, we first re vie w pertinent background material. This revie w touches upon the dev elopment of coded aperture imaging, coded exposure pho- tography , and a brief re view of salient concepts in compressed sensing theory . A. Coded Apertur e Imaging Seminal work in coded aperture imaging includes the de- velopment of masks based on Hadamard transform optics [32] and pseudorandom phase masks [33]. Modified Uniformly Redundant Arrays (MURAs) [34] are generally accepted as optimal mask patterns for coded aperture imaging. These mask patterns (which we denote by h MURA ) are binary , square patterns, whose grid size matches the spatial r esolution of the photo-detector and hence are length m . Each mask pattern is specifically designed to have a complementary pattern h MURA such that the two-dimensional con volution h MURA ∗ h MURA is a single peak with flat side-lobes ( i.e., a Kronecker δ function). In practice, the resolution of a detector array dictates the properties of the mask pattern and hence the resolution at which f ? can be reconstructed. W e model this effect as f ? being downsampled to the resolution of the detector array and then con volved with the mask pattern h MURA , which has the same resolution as the FP A and the downsampled f ? , i.e., y = ( D f ? ) ∗ h MURA + w, 3 where ∗ denotes circular con volution in two dimensions, w corresponds to noise associated with the physics of the sensor, and D f ? is the inte gration downsampling of the scene, which consists of partitioning f ? into uniformly sized d 1 × d 2 blocks, where d i , n i /m i for i = 1 , 2 , and measuring the total intensity in each block. Because of the construction of h MURA and h MURA , D f ? can be reconstructed using b f = y ∗ h MURA . Howe ver , the resulting resolution is often lo wer than what is necessary to capture some of the desired details in the image. Clearly , the estimates from MURA reconstruction are limited by the spatial resolution of the photo-detector . Thus, high resolution recon- structions cannot generally be obtained from low-resolution MURA-coded observ ations. It can be sho wn that this mask design and reconstruction result in minimal reconstruction errors at the FP A resolution and subject to the constraint that linear , convolution-based r econstruction methods would be used . Howe ver , when the scene of interest is sparse or compressible, and nonlinear sparse reconstruction methods may be employed, then CS ideas can be used to design coded apertures. B. Coded (K e yed) Exposure Imaging Coded (or keyed) exposures were dev eloped recently in the computational photography community . Initial work in this area was focused on engineering the temporal component of a motion blur point spread function by rapidly opening and closing the shutter during a single exposure or a small number of exposures at a lo w frame rate [22], [23]. That is, y = A KE f ? + w = X t ∈ T f ? t + w, where the keyed exposure (KE) measurement matrix A KE selects the subset of frames during which the shutter is open. W e refer to this subset as the exposure code T ⊆ { 1 , . . . , N } . If an object is mo ving during image acquisition, then a static shutter would induce a typical motion blur , making the moving object dif ficult to resolve with standard deblurring methods. Howe ver , by “fluttering” the shutter during the exposure using carefully designed patterns, the induced motion blur can be made inv ertible and moving objects can be accurately reconstructed. Instead of a moving shutter , more recent work uses a strobe light to produce a similar effect [24]. While this nov el approach to video acquisition can produce very accurate deblurred images of moving objects, there is sig- nificant o verhead associated with the reconstruction process. T o see why , note that e very object moving with a dif ferent velocity or trajectory will produce a dif ferent motion blur . This means that (a) any stationary background must be removed during preprocessing and (b) multiple moving objects must be separated and processed individually . More recently , it was shown that these challenges could be sidestepped when the video is temporally periodic ( e.g., consider a video of an electronic toothbrush spinning) [24]. The periodic assumption amounts to a sparse temporal Fourier transform of the video, and this approach, called coded strob- ing, is a compressiv e acquisition in the temporal domain. As a result, the authors were able to le verage ideas from compressed sensing to achieve high-quality video reconstruction. The assumption of a periodic video makes it possible to apply much more general reconstruction algorithms that do not require background subtraction or separating different moving components. Howe ver , it is a very strong assumption, which places some limits in its applicability to real-world settings. The approach described in this paper has similar performance guarantees but operates on much more general video sequences. C. Compressed Sensing In this section we briefly define the Restricted Isometry Property (RIP) and explain its significance to reconstruction performance. In subsequent sections, we demonstrate our primary theoretical contribution, which is to prove the RIP for compressi ve coded aperture and keyed exposure systems. Definition 1 (Restricted Isometry Property (RIP) [35]) . A matrix A satisfies the RIP of or der s if ther e exists a constant δ s ∈ (0 , 1) for which (1 − δ s ) k f k 2 2 ≤ k Af k 2 2 ≤ (1 + δ s ) k f k 2 2 . holds for all s -sparse f ∈ R n . If this pr operty holds, we say A is RIP ( s, δ s ) . Matrices which satisfy the RIP are called CS matrices; and when combined with sparse recov ery algorithms, they are guaranteed to yield accurate estimates of the underlying function f ? : Theorem 1 (Sparse Recov ery with RIP Matrices [36], [37]) . Let A be a matrix satisfying RIP (2 s, δ 2 s ) with δ 2 s < √ 2 − 1 , and let y = Af + w be a vector of noisy observations of any signal f ∈ R n , wher e the w is a noise or error term with k w k 2 ≤ for some > 0 . Let f s be the best s -sparse appr oximation of f ; that is, f s is the appr oximation obtained by keeping the s largest entries of f and setting the others to zer o. Then the estimate b f = arg min f ∈ R n k f k 1 sub ject to k y − Af k 2 ≤ , (1) obe ys k f − b f k 2 ≤ C 1 ,s + C 1 ,s k f − f s k 1 √ s , wher e C 1 ,s and C 2 ,s ar e constants which depend on s but not on n or m . Note that the reconstruction (1) in Theorem 1 is equiv alent to b f = arg min f ∈ R n 1 2 k y − Af k 2 2 + τ k f k 1 (2) where τ > 0 , which depends on , can be viewed as a regularization parameter . 4 I I I . C O D E D A P E RT U R E K E Y E D E X P O S U R E S E N S I N G Here we detail our compressive video acquisition method that combines coded apertures and keyed exposures to address all the competing challenges detailed in Sec. I-A. In our CAKE imaging method, each observed frame y k is given by an exposure of B , N / M high-rate coded observations in the time interv al T k , { ( k − 1) B + 1 , . . . , k B } : y k = X t ∈ T k A t f ? t + w k , (3) for k = 1 , . . . , M and w k is a zero-mean additive noise. W ithout the exposure, the action of each A t would be to collect a compressiv e snapshot measurement of the scene at time t . Each of these snapshots utilizes a high-resolution coded aperture and can be modeled mathematically as A t f ? t = S ( f ? t ∗ h t ); (4) where h t ∈ R n is a coding mask, and S ∈ { 0 , 1 } m × n is a subsampling operator (detailed belo w). The subsampling operator effecti vely models the action of a low-resolution FP A or CCD array . Our theory allows S to be a structured nonrandom linear operator , and hence we can re write the abov e as y k = S " X t ∈ T k ( f ? t ∗ h t ) # . (5) T o implement this sensing paradigm we simply modulate the coded aperture mask over the B -frame exposure time. It should be stressed that the coding masks ( { h t } N t =1 ) are of the size and resolution at which f ? will be reconstructed; this is in contradistinction to the MURA system, in which h MURA is at the size and resolution of the FP A. Thus in (4), we model the measurements as the scene being conv olved with the coded mask and then downsampled. While the sensing strategy described in (3) is straightfor- ward to implement, the additional structure imposed on the sensing matrix complicates the performance analysis of the system from a compressive sensing perspecti ve. T o highlight this, we now describe the structure of these sensing operations. The two-dimensional con volution of h t with a frame f ? t as in (5) can be represented as the application of the Fourier transform to f ? t and h t , followed by element-wise multiplica- tion and application of the inv erse Fourier transform. In matrix notation, this series of linear operations can be expressed as ( f ? t ∗ h t ) = F − 1 diag( F h t ) F f ? = H t f ? t , where F is the two-dimensional Fourier transform matrix, and diag( F h t ) is a diagonal matrix whose elements correspond to the transfer function, which is the Fourier transform of h t . The matrix product H t = F − 1 diag( F h t ) F ∈ R n × n is block-circulant with circulant block (BCCB) matrix. In matrix notation, a BCCB matrix H is consists of n 2 × n 2 blocks, H = H 1 H n 2 · · · H 3 H 2 H 2 H 1 · · · H 4 H 3 . . . . . . . . . . . . . . . H n 2 H n 2 − 1 · · · H 2 H 1 , 1 2 3 4 Scene Mask Coded Lightfield Time = = = = ⇤ ⇤ ⇤ ⇤ X Integrated Lightfield Data # 4 Fig. 1. Illustration of a single measured frame using CAKE sensing. where each H j ∈ R n 1 × n 1 is circulant; i.e., H j is of the form H j = h j, 1 h j,n 1 · · · h j, 3 h j, 2 h j, 2 h j, 1 · · · h j, 4 h j, 3 . . . . . . . . . . . . . . . h j,n 1 h j,n 1 − 1 · · · h j, 2 h j, 1 . This structure is a direct result of the fact that the k -point one-dimensional Fourier transform F k diagonalizes any k × k circulant matrix (such as H j with k = n 1 ) and so F ≡ F n 2 ⊗ F n 1 diagonalizes block-circulant matrices (such as H ). Here ⊗ denotes the Kronecker matrix product. For each frame, a compressed sensing matrix can be formed from the matrix H by retaining only a subset of the rows of H t . This is equiv alent to restricting the number of measurements collected of the vector H t f ? t . Here we simply subsample H t f ? t by applying a point-wise subsampling matrix S which retains only one measurement per uniformly sized d 1 × d 2 block; i.e., we subsample by d 1 in the first coordinate and d 2 in the second coordinate so that the result is an m 1 × m 2 image with m i = n i /d i , i = 1 , 2 . In matrix form S can be thought of as retaining a certain number of rows of the identity matrix. Because of the structure and deterministic nature of this type of do wnsampling, it is often more straightforward to realize in practical imaging hardware (see Sec. VII). The resulting per-frame sensing matrix A t is then giv en by A t = S H t = S F − 1 diag( F h t ) F . For our CAKE sensing strategy in (3), the sensing matrix for each block of B frames is formed by concatenating sensing matrices of the abov e type. For the k th low-rate observation, we ha ve a corresponding sensing matrix A k = A ( k − 1) B +1 · · · A kB = S H ( k − 1) B +1 · · · H kB . W e illustrate this sensing procedure in Figure 1. Since our sensing strategy is independent across each low- resolution frame, and also for simplicity of presentation, we only consider the recovery of a length B block of frames from a single snapshot image in our theoretical analysis. 5 Theorem 2 (Coded Aperture Keyed Exposure Sensing) . Let A = [ A 1 · · · A B ] be an m × nB sensing matrix for the CAKE system where the coded aperture pattern for each A t is drawn iid fr om a suitable pr obability distribution. Then there exists absolute constants c 1 , c 2 > 0 such that for any m ≥ c 1 δ − 2 s 2 log( nB ) , A is RIP ( s, δ s ) with δ s ≤ δ with pr obability exceeding 1 − 2 n 2 B 2 e − c 2 m/s 2 . Note here that s denotes the sparsity of B frames of the video sequence, rather than simply the sparsity of an individual frame; that is, if each frame had sparsity s 0 , then na ¨ ıvely , without accounting for temporal correlations, we would have s = B s 0 . W e present the proof of Theorem 2 in Appendix A for the particular case where we select [ h t ] k = ( p d/n with probability 1 / 2 , − p d/n with probability 1 / 2 , iid for k = 1 , . . . , n and t = 1 , . . . , N . This binary-valued dis- tribution was selected to model coded apertures with tw o states – open and closed – per mask element. It is straightforward to extend the result to other mask generating distributions, such as uniform or Gaussian distributed entries. W e discuss the issue of implementing these masks in Section VII. Recent work by Bajwa et al. [38], [39], showed that sub- sampled rows from random circulant matrices (and T oeplitz matrices, in general) are sufficient to recover sparse f ? from y with high probability . In particular , they showed that when m ≥ C δ − 2 s 2 log n for some constant C > 0 , a T oeplitz matrix whose first row contains elements drawn independently from a Gaussian distribution are RIP ( s, δ s ) with δ s ≤ δ with high probability , with extensions to other generating distrib utions. Drawing h iid from any zero-mean sub-Gaussian distribution with variance d/n is suf ficient to establish RIP; if one is willing to pay additional logarithmic f actors [40] shows RIP can be established if m ≥ C δ − 2 s (log s ) 2 (log n ) 2 for some constant C . It is of interest to note that since the CAKE sensing matrix is a concatenation of downsampled T oeplitz matricies, this sensing strategy has clear connections to [41] where the y consider concatenations of T oeplitz matricies as a sensing matrix for performing multiuser detection in wireless sensor networks. The important conceptual link is that their sensing matrix is used to determine a sparse set of simultaneously activ e users, where in our system, we are using it to infer a sequence of sparse frames. I V . D U A L - S C A L E M A S K S While the iid random coded aperture mask patterns are supported theoretically by Theorem 2, using a generativ e distribution with more structure allows for mask designs with sev eral practical benefits. Here we describe a new method of coded aperture mask design which we call Dual-Scale Masks (DSMs). This approach allows us to obtain a coarse low-rate low-resolution estimate from which we can often accurately estimate the optical flow motion field. W e describe the algorithms used for optical flow estimation as well as how these optical flow estimates are used in the final reconstruction in Sec. V -B. As these mask designs lev erage elements of Romberg’ s work [31], we first re view important components of this work prior to detailing our dual-scale mask designs. A. Phase-Shifting Mask Designs W e note that Romberg [31] conducted related work concur- rent and independent of our initial inv estigations [27]. While the random conv olution in Romberg’ s work follows a similar structure, the conv olution pattern is generated randomly in the frequency domain. More specifically , the entries of the transfer function correspond to random phase shifts (with some constraints to keep the resulting observation matrix real-valued). F or simplicity of presentation, we describe the generating distribution for a one-dimensional con volution for ev en-length masks. First we generate σ ∈ C n such that [ σ ] l = ± 1 with equal probability if l = 1 , e iφ with φ ∼ U (0 , 2 π ) if 2 ≤ l ≤ n/ 2 , ± 1 with equal probability if l = n/ 2 + 1 [ σ ] ∗ n − l +2 if n/ 2 + 2 ≤ l ≤ n. (6) Here U (0 , 2 π ) denotes the uniform distribution over [0 , 2 π ] . Then the real-valued con volution kernel is then given by h = F − 1 σ . This result can be extended easily for two- dimensional conv olutions. W e call coded aperture mask pat- terns h generated by this procedure phase-shift masks . T o form a compressed sensing matrix from this random con- volution, [31] considers tw o dif ferent do wnsampling strate gies: sampling at random locations and random demodulation. The first method entails selecting a random subset Ω ⊂ { 1 , . . . , n } of indices. W e form a downsampling matrix S Ω by retaining only the ro ws of the identity matrix indexed by Ω , hence the resulting measurement matrix is giv en by A = S Ω F − 1 diag( σ ) F , where σ is generated according to the two-dimensional analog of (6). The random demodulation method multiplies the result of the random con volution by a random sign sequence s ∈ {− 1 , 1 } n , such that s k = ± 1 with equal probability for all k = 1 , . . . , n , then performs an integration downsampling of the result. Therefore in this case the measurement matrix is A = D diag ( s ) F − 1 diag( σ ) F . It can be shown that both of these strategies yield RIP- satisfying matrices with high probability . Theorem 3 (Fourier-Domain CCA Sensing [31]) . Let A be an m × n sensing matrix r esulting fr om random con volution with phase shifts followed by either random subsampling or r andom demodulation, and let W denote an arbitrary orthonormal basis. Then there exists a constant c 3 > 0 such that if m ≥ c 3 δ − 2 min( s log 6 n, s 2 log 2 n ) , AW is RIP ( s, δ s ) with δ s ≤ δ with pr obability exceeding 1 − O ( n − 1 ) . 6 In certain regimes, these theoretical results are stronger than those in Theorem 2 specialized to B = 1 . The main strength is that these results allow sparsity in an arbitrary orthonormal basis. Howe ver , there are important dif ferences between the observation models which hav e a significant impact on the feasibility and ease of hardware implementation. W e elaborate on this in Section VII. B. Dual-Scale Mask Designs As the name suggests, our DSMs utilize two mask patterns; one pattern is related to the coarse low-rate low-resolution measurement scale, and the other at the fine high-rate high- resolution reconstruction scale. W e differentiate the two pat- terns using superscripts: h L k for the coarse-scale masks, and h H t for the fine-scale masks. W e lev erage the uniform phase gen- eration strategy in Section IV -A for our low-resolution pattern, and generate our high-resolution pattern directly in the spatial domain. The reason being that the random phase patterns yield a unitary transform which is easily in verted to acquire a low- resolution estimate. Generating the high-resolution pattern in the spatial domain easily allo ws us to ensure that each lo w- resolution block in the pattern has zero mean. While this may be possible with a suitable normalization of the phase-shift patterns, some of the desirable properties of these patterns will be lost. Our procedure is as follo ws. For k = 1 , . . . , M , generate a length- m random phase sequence σ k ∈ C m such that | [ σ k ] l | = 1 , l = 1 , . . . , m and σ k is conjugate symmetric ( i.e., according to the two-dimensional analog of (6) with n = m ). W e then let h L k = D > F − 1 σ k where D is integration downsampling and F is the 2D DFT matrix. For t = 1 , . . . , N , generate an h H t as follows. First we partition each mask into d 1 × d 2 blocks, then on each block we select a binary-valued vector in {− p d/n, p d/n } d uniformly at random from binary vectors which sum to zero and assign these v alues to this portion of the block. Said differently , on each block we randomly select half of the mask elements to hav e value +1 and set the other half to − 1 , so that D h H t = 0 by construction. The DSM patterns h t suitable for use in the CAKE sensing strategy (3) are linear combinations of the form h t = αh L k + β h H t , (7) where k = b ( t − 1) /B c + 1 , and t = 1 , . . . , N . The scalars α and β allow additional flexibility in the generation and are normalized such that α 2 + β 2 = 1 . Indi vidually h H t and h L k are normalized such that k h H t k 2 2 = k h L t k 2 2 = n/m , so this scaling ensures that k h t k 2 2 = n/m (as ( h H t ) > h L t = 0 by design). See Fig. 2 for an e xample mask pattern. The selection of α and β essentially trade-off the quality of the intermediate low-resolution estimate, and the final high- resolution reconstruction. Our experiments rev eal that a larger weighting on the high-resolution mask results in higher fidelity reconstructions. The advantage of the DSM design is that we can obtain a coarse estimate of f ? with negligible computational cost. The remainder of this section details how this is accomplished. Our h H h L = h + Fig. 2. Example dual-scale mask pattern. Here n 1 = n 2 = 16 ( n = 256 ), d 1 = d 2 = 4 ( d = 16 ), and thus m 1 = m 2 = 4 ( m = 16 ). target is to recover an estimate of the low-rate low-resolution frame sequence f L k := 1 dB X t ∈ T k D f ? t . For each lo w-resolution frame, an estimate c f L k of f L k can be found easily from a size m con volution with y k . If we denote Σ k = F − 1 diag( σ k ) F , this estimate is computed via c f L k = 1 αB Σ > k y k . (8) W e deri ve (8) in Appendix B, and we detail how this esti- mate is used to inform an optical flow-based reconstruction algorithm in Section V. V . V I D E O R E C O N S T R U C T I O N T o solve the CS minimization problems (1) or (2), we use well-established gradient-based optimization methods. These iterativ e algorithms are attractiv e because they utilize repeated applications of the operators A and A > [42]–[44]. For most CS matrices, these matrix-vector products are a large compu- tational burden and storing such matrices is memory intensive ev en for modest image sizes. Howe ver , the BCCB structure of A allows for computationally f ast matrix-v ector products using FFTs that do not require explicit storage of the matrix. In our case, the memory footprint is no larger than that of the image itself. In the remainder of this section, we describe ho w these algorithmic approaches are adapted for video reconstruction as well as describe ho w optical flow constraints can be introduced to improv e reconstruction performance. A. Reconstruction for V ideo Giv en measurements from the proposed CAKE imaging system, where we have designed the mask patterns for sparsity in a temporal transform, we recover the frames by solving a minimization similar to (2). Howe ver , since successi ve video frames are generally highly correlated, there are gains in considering the differences between subsequent frames instead. W e therefore define the difference frame sequence θ = [ θ > 1 , . . . , θ > N ] > such that θ t = ( f t t = 1 , f t − f t − 1 t = 2 , . . . , N . (9) From θ , the frames f t can easily be recovered by f t = P t i =1 θ i , t = 1 , . . . , N , which can be expressed compactly as 7 f = ( L ⊗ I n ) θ , where L is an N × N lower triangular matrix of all ones. Additionally , total variation (TV) regularization [45] has been shown to lead to increased reconstruction accuracy . W e combine these approaches by utilizing a TV penalty on the first frame ( f 1 = θ 1 ), and an sparsity penalty on the subsequent dif ference fames and solve the following minimization problem b θ = arg min θ ∈ R nN 1 2 k A ( L ⊗ I n ) θ − y k 2 2 + τ TV k θ 1 k TV + τ 1 N X t =2 k θ t k 1 b f = ( L ⊗ I n ) b θ . (10) Note that in the above we are solving for the entire video sequence in one large optimization problem. This is the reconstruction technique we use in the experimental results section. Empirically , this leads to better reconstruction performance howe ver this may pose computational challenges for video sequences of longer duration. In such cases, an effecti ve approach is to process the video in an online fashion, solving for only a few blocks of frames simultaneously . In many appli- cations, such as surveillance and monitoring, the video frames are strongly correlated, and the solution to a pre vious block of frames may be used as an initialization to the algorithm to solve the next block of frames. This estimate will generally be very accurate, and therefore, relativ ely fe w iterations are needed to obtain a solution to each optimization problem. In previous work, we noticed that when the amount of processing time allotted per frame is held constant, the accuracy generally increases with the number of frames processed simultaneously . Howe ver , one simply cannot solve for arbitrarily many frames simultaneously , as the improvement in accuracy diminishes when the size of the problem is such that only a v ery small number of reconstruction iterations can be run within the allotted time. W e refer the reader to [28] for details. B. Reconstruction using Estimated Optical Flow Optical flo w is a measurement of the spatial motion of the grayscale intensity of a video sequence over time [46] and has enjoyed success in the computer vision community for sev eral applications such as video coding [47], and robot vision [48]. The de velopment of f ast algorithms for estimating optical flo w , such as [49] or [50] based on conv ex optimization, has enabled its use in the signal processing community . In addition to its use in this work, we ha ve successfully employed optical flo w in related work to design adaptive sensing matrices based on compressiv e coded apertures [51]. Mathematically , optical flow is a time-varying vector field v t , t = 1 , . . . , N ov er the image space which describes how the grayscale intensity of frame f t is propagated to frame f t +1 . For two-dimensional video sequences, we consider v t = ( v 1 ,t , v 2 ,t ) , where v 1 ,t ∈ R n is the horizontal component and v 2 ,t ∈ R n is the v ertical component of the optical flow . W e can express the ef fects of the optical flow v t as a matrix V t such that f t +1 ≈ V t f t . Grouping these constraints over the duration of the video sequence, we can express this as V f ≈ 0 , where V = V 1 − I V 2 − I . . . . . . V N − 1 − I . (11) In this work, we use optical flow as a method to enforce smooth motion fields over an estimated video sequence. Our approach is based on the CS-MUVI approach [52]. The reconstruction procedure follows the following steps. 1) From the low-rate coded data y , we compute the lo w- resolution estimate in (8). 2) W e upsample c f L k , k = 1 , . . . , M to match the frame rate and resolution of the sequence f t , t = 1 , . . . , N . In our implementation, we use spline interpolation based on the interp3 MA TLAB command. 3) The optical flow field is computed using software pro- vided by Liu [53], and described in detail in [49]. 4) Using this estimated optical flow , we form the matrix V of motion maps in (11). Using these motion maps in conjunction with the data, we solv e for a set of sparse frame coefficients defined in (9) via the conv ex program minimize θ ∈ R nN k W T θ k 1 (12) subject to k A ( L ⊗ I n ) θ − y k 2 ≤ 1 , k V ( L ⊗ I n ) θ k 2 ≤ 2 , t = 1 , . . . , N − 1 where W is a sparsity basis for the difference frame sequence f . In our implementation, we consider sparsity in the Daubechies-4 wav elet basis. Here 1 and 2 are tuning pa- rameters that dictate how tightly the data and optical flow constraints should be enforced. The conv ex program in above can be placed into a standard ` 2 -constrained ` 1 program which can be solved using SPGL1 [44]. The added optical flow constraints are an effecti ve tool for enforcing smooth motion in the video sequence as well as constraining the optimization program which, in our experiments, results in faster conv er gence behavior . W e demonstrate the effecti veness of this method in Section VI. V I . N U M E R I C A L E X P E R I M E N T S In this section, we demonstrate the effecti veness of the pro- posed CAKE architecture at successfully recovering a video sequence of short-wa ve infrared (SWIR) data collected (cour - tesy of Jon Nichols at NRL) by a short-wave IR ( 0 . 9 − 1 . 7 µ m) camera. The camera is based on a 1024 × 1280 InGaAs (Indium, gallium arsenide) focal plane array with 20 µ m pixel pitch. Optically , the camera was built around a fixed, f / 2 aperture and pro vides a 6 ◦ field of vie w along the diagonal with a focus range of 50 m → ∞ . Imagery were output at the standard 30Hz frame rate with a 14 bit dynamic range. An example frame is sho wn in Fig. 3. In this sequence, the three boats are tra veling at dif ferent v elocities with respect to the slowly-changing background of the wa ves. The size of each frame is 128 × 256 , and we consider reconstructing 28 frames of the sequence. 8 Con ventional Dual-Scale Optical T ruth Spline CAKE Mask Flow Upsampling CAKE CAKE Frame 5 Residual Difference between Frame 5 and Frame 6 Frame 24 Residual Difference between Frame 24 and Frame 25 T otal RMSE 0.7192% 0.5270% 0.4764% 0.4503% Fig. 4. Results obtained for the sailboat region of interest for an example pair of frames (Frames 5 and 24). Sho wn are the true frames and the reconstructions from traditionally sampled data, from CAKE sensed data, from CAKE using a dual-scale mask, and CAKE with optical flows. Also reported are the total RMSEs from Frames 5 to 24 for each approach — the average over 10 trials are listed in T able I. For comparison we show the magnitude of the residuals as compared with the truth as well as the difference between consecutive frames. Note the overall better scene reconstruction both spatially and temporally using the three CAKE methods. Specifically , they more accurately recover the edges of the main sail. Furthermore, the low amplitude and blurry frame differences using spline upsampling indicate that spline upsampling captures the movement of the boat less accurately than CAKE. Finally , using CAKE with optical flow results in much less noisy inter-frame differences, which shows that this method reconstructs motion to a higher accuracy . W e consider CAKE observations where we downsample spatially by a factor of 2 in both directions ( d 1 = d 2 = 2 ), and use a coded exposure block length of B = 4 . W e compare three CAKE paradigms: single mask, dual-scale masks, and CAKE using optical flow . W e reconstruct the entire video sequence using all M = N/B = 7 low-resolution lo w- rate frames using a total variation penalty on the first frame, and ` 1 sparsity penalty on all subsequent difference frames. W e optimize the regularization parameters to minimize the reconstruction error . Specifically , for the single- and dual- scale mask CAKE (10), τ TV = 1 . 0 × 10 − 2 , τ 1 = 2 . 0 × 10 − 2 , and for CAKE with optical flo w (12), 1 = 4 . 3 × 10 − 2 and 2 = 4 . 3 × 10 3 . W e used the weights α = 0 . 383 and β = 0 . 924 in (7). For comparison, we consider traditionally captured data ( i.e., by simply av eraging over d 1 × d 2 × B blocks of the spatiotemporal video sequence). T o interpolate this data to the original resolution of the video sequence, we consider spline interpolation. W e show the estimates for the different acquisition and re- construction methods in Fig. 4. Here we focus only on a re gion of interest (R OI) of the sailboat. W e see that the CAKE sensing is able to reconstruct the scene with a higher spatial and temporal resolution. This is evidenced in the residuals, which include much less critical scene structure than the con ventional system. W e see from the examination of the difference frame that the nearest-neighbor reconstruction from con ventionally sampled data yields only slight motion o ver the two frames and suffers from poor spatial resolution. Using spline interpolation helps improv e the spatial resolution, but it is still insufficient to recov er the scene with high temporal resolution, as can be 9 Fig. 3. Example frame (frame 21) from the ground truth video sequence used in the numerical experiments. Sensing Architecture Reconstruction RMSE (A verage over 10 Trials) Con ventional Spline Upsampling 0.7192% CAKE 0.5270% Dual-Scale Mask CAKE 0.4764% Optical Flow CAKE 0.4503% T ABLE I R E CO N S T RU CT I O N R MS E A CH I E V ED F O R T H E C ON V E N TI O NA L A N D C O DE D A P E RTU R E A N D K EY E D E X PO S U R E (C A K E ) A R C H IT E C T UR E S OV E R T HE V I D E O S EQ U E NC E . R E SU LT S A RE R E P O RTE D F O R T H E R E G I ON O F I NT E R E ST O F T HE S A I L BO A T . D U E TO B O UN DA RY I S S UE S , W E R E PO RT T H E RM S E D I S CO U N T IN G T H E FI RS T A N D L A ST B L O C K O F 4 F R AM E S . seen in the blur on the leading edge of the sail. Numerically we quantify the performance over the entire video sequence in terms of the RMSE (%), 100 · k b f − f ? k 2 / k f ? k 2 , calculated ov er the sailboat R OI in order to ef fecti vely assess ability to capture motion information in the scene. This is tabulated in T able VI. In summary we see that the CAKE acquisitions are able to outperform traditionally sampled video in terms of reconstruction accuracy and reconstructing salient motion. On av erage, to reconstruct the 28 video frames, CAKE took 96 minutes and Dual-Scale Mask CAKE took 93 minutes, while CAKE with Optical Flow took only 31 minutes. Con ventional spline upsampling took nearly 23 seconds. It should be noted that there are limitations to the CAKE system in the presence of strong motion. In this case, the sparsity lev el of the difference frames may drastically increase as the previous frame ceases to be a good prediction of the next frame. As such, the RIP bound in Theorem 2 states the number of measurements we require to reconstruct the scene must necessarily increase, requiring either a faster temporal resolution measurements or higher resolution FP A to achiev e the same accuracy . Because of this balance, a system designer may need to make important engineering tradeof fs to imple- ment CAKE acquisition for a particular application. V I I . H A R DW A R E A N D P R A C T I C A L I M P L E M E N TA T I O N C O N S I D E R A T I O N S In this section we describe ho w we shift from modeling the coded aperture masks in a way that is compatible with compressed sensing theory , to a model that describes their actual implementation in an optical system. In particular , gaps between theory and practice arise due to: • when using lenses with bandwidth below the desired resolution; • system calibration issues; • nonnegati vity of scenes and sensing matrices in incoher- ent light settings; • inaccurate synchronization of spatial light modulators and sensor read-out; • low photon efficienc y of some architectures; • hardware implementation of downsampling operation; • quantization errors in observ ations. Each of these is described in more detail below . Lens bandwidth: Our analysis does not account for the bandwidth of the lenses; in particular , we implicitly assume that the bandwidth of the lenses is high enough that band- limitation ef fects are negligible at the resolution of interest. System calibration issues: In all of the hardware settings described below , precise alignment of the optical components ( e.g ., the mask and the focal plane array) is critical to the performance of the proposed system. Often a high-resolution FP A is helpful for alignment and calibration. Even when we hav e the ability to estimate A precisely , there are settings where using an approximation of A has advantages; for instance, when we can approximately compute Af using fast Fourier transforms, then conducting sparse recovery is much faster than with a dense matrix representation of A . When we run a sparse recovery algorithm with an inaccurate sensing matrix A , it corresponds to the observ ation model y = Af + E f + w, where E f represents the difference between the true projec- tions collected by imager and the assumed projections in A . The term E f can be thought of as signal-dependent noise. Recent work analyzes the theoretical ramifications of these kinds of errors [54]. Nonnegativity in incoherent light settings: In this paper we focus on incoher ent light settings (consistent with many applications in astronomy , microscopy , and infrared imaging). In this case, the coded aperture must be real-v alued and flux-preserving ( i.e., the light intensity hitting the detector cannot exceed the light intensity of the source). In a con ven- tional lensless coded aperture imaging setup, the point spread function associated with the aperture is the mask pattern h itself. T o shift a RIP-satisfying aperture as in Theorem 2 to an implementable aperture, one simply needs to apply an affine transform to h mapping [ − p d/n, p d/n ] to [0 , 1 /n ] . This transform ensures that the resulting mask pattern is nonnegati ve and flux-preserving. In previous work [29], [30], we discuss accounting for nonnegati vity during reconstruction by a mean subtraction step. Synchronization issues: Programmable spatial light mod- ulators (SLMs) allo w changing the mask pattern over time, a critical component of the CAKE concept. Howe ver , in order for the proposed approach to work, the mask or SLM used must be higher resolution than the FP A. Currently , very high resolution SLMs are still in de velopment. Chrome on quartz masks can be made with higher resolution than many SLMs, but cannot be changed on the fly unless we mount a small number of fixed masks on a rotating wheel or translating 10 stage. Furthermore, the changing of the mask patterns must be carefully synchronized to th timing of readout on the focal plane array . Photon efficiency: Phase-shift masks for coded aperture imaging have been implemented recently using a phase screen [55]. This approach allows one to account for dif fraction in the optical design. Howe ver , depending on the precise optical architecture, phase shift masks may be less photon-ef ficient than amplitude modulation masks. Downsampling in hardwar e: In de veloping RIP-satisfying coded apertures, Theorem 2 assumes the subsampling opera- tion S selects one measurement per d 1 × d 2 block. From an implementation standpoint, this operation effecti vely discards a large portion ( ( d − 1) /d ) of the available light, which would result in much lo wer signal-to-noise ratios at the detector . A more pragmatic approach is to use larger detector ele- ments that essentially sum the intensity ov er each d 1 × d 2 block, making a better use of the available light. W e call this operation inte gration downsampling and denote it by D to distinguish it from subsampling. The drawback to this approach is that we lose many of the desirable features of the system in terms of the RIP . Integration downsampling causes a large coherence between neighboring columns of the resulting sensing matrix A . An intermediate approach would randomly sum a fraction of the elements in each size d block, which increases the signal-to-noise ratio versus subsampling, but yields smaller expected coherence. This approach is motiv ated by the random demodulation proposed in [31] and described in Sec IV, whereby the signal is multiplied by a random sequence of signs {− 1 , +1 } , then block-wise averaged. The pseudo- random summation proposed here can be thought of as an optically realizable instantiation of the same idea where we multiply by a random binary { 0 , 1 } sequence. Noise and quantization errors: While CS is particularly useful when the FP A needs to be kept compact, it should be noted that CS is more sensitive to measurement errors and noise than more direct imaging techniques. The e xperi- ments conducted in this paper simulated very high signal-to- noise ratio (SNR) settings and sho wed that CS methods can help resolve high resolution features in images. Howe ver , in low SNR settings CS reconstructions can exhibit significant artifacts that may e ven cause more distortion than the lo w- resolution ef fects associated with con ventional coded aperture techniques such as MURA. Similar observations are made in [56], which presents a direct comparison of the noise robust- ness of CS in contrast to con ventional imaging techniques both in terms of bounds on ho w reconstruction error decays with the number of measurements and in a simulation setup; the authors conclude that for most real-world images, CS yields the biggest gains in high signal-to-noise ratio (SNR) settings. Related theoretical work in [57] sho w that in the presence of low SNR photon noise, theoretical error bounds can be large, and thus the e xpected performance of CS may be limited unless the number of av ailable photons to sense is sufficiently high. These considerations play an important role in choosing the type of downsampling to implement. Similar issues arise when considering the bit-depth of focal plane arrays, which corresponds to measurement quantization errors. Future efforts in designing optical CS systems must carefully consider the amount of noise anticipated in the measurements to find the optimal tradeoff between the focal plane array size and image quality . V I I I . C O N C L U S I O N S In this paper, we demonstrate how CS principles can be applied to physically realizable optical system designs, namely coded aperture imaging. Numerical experiments show that CS methods can help resolve high resolution features in videos that con ventional imaging systems cannot. W e have also demonstrated that our CAKE acquisition system can recov er video sequences from highly under-sampled data in much broader settings than those considered in initial coded exposure studies. Howev er, our derived theoretical limits sho w that there are important tradeoffs in v olved that depend on the spatial sparsity and temporal correlations in the scene that ultimately govern the accuracy of our reconstructions for a specified number of compressiv e measurements. Finally , we note that our proposed approach performs well in high signal- to-noise ratio settings, b ut like all CS reconstructions, it can exhibit significant artifacts in high-noise settings. T w o practical issues associated with coded aperture imaging in general are the blur due to misalignment of the mask and diffraction and interference effects. Non-compressiv e aperture codes hav e been dev eloped to be robust to these effects [58]. One important avenue for future research is the dev elopment of compr essive coded aperture makes with similar robustness properties. Non-compressi ve coded apertures hav e also been shown useful in inferring the depth of different objects in a scene; similar inference may be possible with the compressiv e coded apertures described in this paper . Howe ver , using the current proof techniques here or in [40] cannot be directly applied as the additional structure in the DSM masks results in random vectors that are not isotropic sub-Gaussian random vectors. A C K N O W L E D G E M E N T S The authors would like to thank Prof. Justin Romberg and Dr . Kerkil Choi for sev eral valuable discussions, Dr . Aswin Sankaranarayanan for providing code to reproduce the results in [52], and Dr . Seda Senay for assisting with the numerical experiments. A P P E N D I X A. Pr oof of the RIP for CAKE Sensing Here we present the proof of Theorem 2 that our CAKE sensing architecture satisfies the restricted isometry property . The proof uses the same techniques as that of Theorem 4 in [39], where the RIP is established by shifting the analysis of the submatrices of A to the entries of the Gram matrix G = A > A by in v oking Ger ˇ sgorin’ s disc theorem [59]. This theorem states that the eigenv alues of an n × n complex matrix G all lie in the union of n discs d j ( c j , r j ) , j = 1 , 2 , . . . , n , centered at c j = G j,j with radius r j = m X i =1 i 6 = j | G i,j | . 11 In essence, we show that with high probability G ≈ I , so that the eigen values of G are clustered around one with suitably high probability . Pr oof of Theorem 2: Note that the Gram matrix has a certain block structure; since A = [ A 1 · · · A B ] , we have G = A > A = A > 1 A 1 A > 1 A 2 · · · A > 1 A B A > 2 A 1 A > 2 A 2 · · · A > 2 A B . . . . . . . . . . . . A > B A 1 A > B A 2 · · · A > B A B . In the interest of notational simplicity , it is easier to work on the two-dimensional images versus their one-dimensional vec- torial representations. This means that for our coded aperture mask patterns h t , instead of our previous indexing notation [ h t ] k , we use two dimensional indexing [ h t ] ( k 1 ,k 2 ) such that [ h t ] ( k 1 ,k 2 ) = [ h t ] k 1 + n 1 k 2 = [ h t ] k . Additionally , for the sensing matrices A t , we use the indexing [ A t ] ( l 1 ,l 2 ) , ( k 1 ,k 2 ) = [ A t ] l 1 + m 1 l 2 ,k 1 + n 2 k 2 = [ A t ] l,k , also define ( k ) n = k mo d n for modular arithmetic. W e first focus on the diagonal blocks of this matrix. First we need a relationship between the matrices A t and the mask patterns h t . It can be shown that [ A t ] l,k = [ h t ] (( l 1 − 1) d 1 − k 1 +1) n 1 +1 , (( l 2 − 1) d 2 − k 2 +1) n 2 +1 , so the entries of the diagonal blocks of G are [ A > t A t ] p,q = n 1 /d 1 X l 1 =1 n 2 /d 2 X l 2 =1 h (( l 1 − 1) d 1 − p 1 +1) n 1 +1 , (( l 2 − 1) d 2 − p 2 +1) n 2 +1 · h (( l 1 − 1) d 1 − q 1 +1) n 1 +1 , (( l 2 − 1) d 2 − q 2 +1) n 2 +1 . (13) From the normalization, it is straightforward to sho w that E [ A > t A t ] = I . No w we need to bound the de viation about the mean via concentration. The diagonal terms are simply a sum of n = n 1 n 2 bounded iid entries, and hence for all t and q , Hoeffding’ s inequality yields P ( | [ A > t A t ] q ,q − 1 | ≥ δ d ) ≤ 2 exp − 2 nδ 2 d d . Next we consider the off-diagonal entries of the diagonal blocks. There are two special cases to consider . In the special case that either mod ( p 1 − q 1 , d 1 ) 6 = 0 or mod ( p 2 − q 2 , d 2 ) 6 = 0 , each of the terms in the summand in (13) picks out a differ ent set of coefficients from h t . Howe ver , the case that both mod ( p 1 − q 1 , d 1 ) = 0 and mod ( p 2 − q 2 , d 2 ) = 0 needs special care since there are no w dependencies between the terms in the summand in (13). Howe ver , due to the special nature by which we select the coefficients, we can partition the sum into two sums (denoted S 1 and S 2 such that each of these is a sum of n/ 2 d independent terms. W e can then apply the Hoeffding bound to each to yield P ( | S i | ≥ δ o / 2 s ) ≤ 2 exp − nδ 2 o 4 ds 2 , i = 1 , 2 . Then applying the union bound giv es us P ( | [ A > t A t ] p,q | ≥ δ o /s ) ≤ 4 exp − nδ 2 o 4 ds 2 , p 6 = q . (14) Since this latter bound decays more slowly , and in the interest of simplicity , we ov erbound all the off-diagonal entries of the diagonal blocks using this latter expression. Now consider the off-diagonal blocks A > t A t 0 , t 6 = t 0 . From independence we can show that E [ A > t A t 0 ] = 0 . The entries of these matrices are sums of independent entries, and we could apply Hoef fding’ s bound. Howe ver , will will need to ov erbound with (14) to obtain a simple expression, hence we use P ( | [ A > t A t 0 ] p,q | ≥ δ o /s ) ≤ 4 exp − nδ 2 o 4 ds 2 , t 6 = t 0 . Lastly , we need to perform a union bound ov er all the entries of the matrix. W e ha ve nB diagonal entries, and exploiting symmetry , we only hav e nB ( nB − 1) / 2 remaining entries. Hence taking δ d = δ o = δ s / 2 , P ( A does not satisfy RIP ( s, δ s )) ≤ 2 nB exp − nδ 2 s 2 d + 4[ nB ( nB − 1) / 2] exp − nδ 2 s 16 ds 2 ≤ [2 nB + 2 nB ( nB − 1)] exp − nδ 2 s 16 ds 2 ≤ 2 n 2 B 2 exp − c 2 n ds 2 , where c 2 ≤ δ 2 s / 16 . If nB ≥ 2 , this probability is less than one provided n/d ≥ c 1 δ − 2 s 2 log( nB ) for any δ ≤ δ s where c 1 ≥ 3 δ 2 /c 2 , noting that m = n/d establishes the theorem. B. Coarse Reconstruction using Dual-Scale Masks This section verifies (8). First, we decompose f ? into two terms, f ? t = D > f L b ( t − 1) /B c +1 + h f ? t − D > f L b ( t − 1) /B c +1 i , (15) such that the first is based on f L and the remaining is a residual term. In the sequel we define the residual as f f ? t = f ? t − D > f L b ( t − 1) /B c +1 . Now consider our measurements. For simplicity , assume we hav e these without noise. Then if we define H H t , F − 1 diag( F h H t ) F , and H L k , F − 1 diag( F h L k ) F , we can write the observations as y k = X t ∈ T k A t f ? t = S X t ∈ T k h αH L b ( t − 1) /B c +1 + β H H t i f ? t = αS H L k X t ∈ T k D > f L b ( t − 1) /B c +1 + αS H L k X t ∈ T k f f ? t + β S X t ∈ T k H H t f ? t = αB S H L k D > f L k + αS H L k X t ∈ T k f f ? t + β S X t ∈ T k H H t f ? t , 12 where we used the decomposition (15) in the third equality . T urning to our coarse estimate (8), we hav e c f L k = 1 d Σ > k S H L k D > f L k + 1 dB Σ > k S H L k X t ∈ T k f f ? t + 1 dB β α Σ > k S X t ∈ T k H H t f ? t . (16) It can be sho wn that Σ > k S H L k D > = dI . For simplicity of notation, we show this for 1D signals; the extension to higher dimensions is straightforward. Furthermore, we drop the k subscripts temporarily . W e show that S H L D > = d Σ , since by construction of the random phases Σ > Σ = I m . T o see this, first let g , F − 1 σ , then by construction we have g k = h ( k − 1) d +1 = 1 d d X q =1 h L ( k − 1) d + q , and so Σ kl = g ( k − l ) m +1 = h L ( k − l ) m d +1 = 1 d d X q =1 h L ( k − l ) m d + q . Now let us examine S H L D > : ( S H L D > ) kl = n X i =1 n X j =1 S ki H L ij D lj = n X i =1 n X j =1 1 { i = kd } h L ( i − j ) n +1 d X q =1 1 { j =( l − 1) d + q } = d X q =1 h L [( kd ) − (( l − 1) d + q )] n +1 = d X q =1 h L (( k − l ) d + d − q ) n +1 = d X q =1 h L (( k − l ) d + p − 1) n +1 = d X q =1 h L ( k − l ) m d + p = dg ( k − l ) m +1 , and hence S H L D > = d Σ as claimed. Because Σ > k S H L k D > = dI , we have c f L k = f L k + η k , where η k is defined as the second two terms in (16). Note that we expect that η k will be small in comparison to f L k . In particular, the first term in η k will be small because the energy in f L k will be much greater than that in f f ? t for smoothly varying video sequences. The second term in η k will be zero- mean because σ k and h H t are generated independently from zero-mean distrib utions. R E F E R E N C E S [1] E. Cand ` es, J. Romberg, and T . T ao, “Robust uncertainty principles: Exact signal reconstruction from highly incomplete frequency information, ” IEEE T rans. Inform. Theory , vol. 52, no. 2, pp. 489 – 509, 2006. [2] D. L. Donoho, “Compressed sensing, ” IEEE T rans. Inform. Theory , vol. 52, no. 4, pp. 1289–1306, 2006. [3] M. Shankar , R. Willett, N. Pitsianis, T . Schulz, R. Gibbons, R. T . Kolste, J. Carriere, C. Chen, D. Prather , and D. Brady , “Thin infrared imaging systems through multichannel sampling, ” Applied Optics , vol. 47, no. 10, pp. B1–B10, 2008. [4] A. F . Coskun, I. Sencan, T .-W . Su, and A. Ozcan, “Lensless wide-field flourescent imaging on a chip using compressive decoding of sparse objects, ” Optics Express , vol. 18, no. 10, pp. 10510–10523, 2010. [5] L. C. Potter , E. Ertin, J. T . Parker , and M. Cetin, “Sparsity and compressed sensing in radar imaging, ” Pr oceedings of the IEEE , vol. 98, no. 6, pp. 1006–1020, 2010. [6] J. Gu, S. K. Nayar, E. Grinspun, P . N. Belhumeur , and R. Ramamoorthi, “Compressiv e structured light for recovering inhomogeneous participat- ing media, ” in Pr oceedings of the European Confer ence on Computer V ision , 2008. [7] M. Mohtashemi, H. Smith, D. W alburger , F . Sutton, and J. Diggans, “Sparse sensing dna microarray-based biosensor: Is it feasible?, ” in 2010 IEEE Sensors Applications Symposium , Feb 2010, pp. 127–130. [8] M.A. Sheikh, O. Milenkovic, and R.G. Baraniuk, “Designing compres- siv e sensing DNA microarrays, ” in 2nd IEEE International W orkshop on Computational Advances in Multi-Sensor Adaptive Processing, 2007 , December 2007, pp. 141–144. [9] M. Lustig, D. Donoho, and J. M. Pauly , “Sparse MRI: The application of compressed sensing for rapid MR imaging, ” Magnetic Resonance in Medicine , vol. 58, no. 6, pp. 1182–1195, December 2007. [10] A.C. Gurbuz, J.H. McClellan, and W .R. Scott, “ A compressiv e sensing data acquisition and imaging method for stepped frequency GPRs, ” IEEE Tr ansactions on Signal Pr ocessing , vol. 57, no. 7, pp. 2640 – 2650, July 2009. [11] P . Y e, J.L. Paredes, G.R. Arce, Y . W u, C. Chen, and D.W . Prather, “Compressiv e confocal microscopy , ” in IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing, 2009 , April 2009, pp. 429 –432. [12] J. Bobin, J.-L. Starck, and R. Ottensamer, “Compressed sensing in astronomy , ” Selected T opics in Signal Pr ocessing, IEEE Journal of , vol. 2, no. 5, pp. 718 –726, October 2008. [13] M. F . Duarte, M. A. Davenport, D. T akhar, J. N. Laska, T . Sun, K. F . Kelly , and R. G. Baraniuk, “Single pix el imaging via compressiv e sampling, ” IEEE Sig. Pr oc. Mag. , vol. 25, no. 2, pp. 83–91, 2008. [14] J. G. Ables, “Fourier transform photography: a new method for X-ray astronomy , ” Proceedings of the Astr onomical Society of Australia , vol. 1, pp. 172, 1968. [15] R. H. Dicke, “Scatter -hole cameras for X-rays and gamma-rays, ” Astr ophysical Journal , vol. 153, pp. L101–L106, 1968. [16] E. Caroli, J. B. Stephen, G. Di Cocco, L. Natalucci, and A. Spizzichino, “Coded aperture imaging in X- and gamma-ray astronomy , ” Space Science Reviews , vol. 45, pp. 349–403, 1987. [17] G. Skinner, “Imaging with coded-aperture masks, ” Nucl. Instrum. Methods Phys. Res. , vol. 221, pp. 33–40, Mar. 1984. [18] R. Accorsi, F . Gasparini, and R. C. Lanza, “ A coded aperture for high- resolution nuclear medicine planarimaging with a conv entional anger camera: experimental results, ” IEEE T rans. Nucl. Sci. , vol. 28, pp. 2411– 2417, 2001. [19] G. R. Gindi, J. Arendt, H. H. Barrett, M. Y . Chiu, A. Ervin, C. L. Giles, M. A. Kujoory , E. L. Miller, and R. G. Simpson, “Imaging with rotating slit apertures and rotating collimators, ” Medical Physics , vol. 9, pp. 324–339, 1982. [20] S. R. Meikle, R. R. Fulton, S. Eberl, M. Bahlbom, K.P . W ong, and M. J. Fulham, “ An in vestigation of coded aperture imaging for small animal SPECT, ” IEEE T rans. Nucl. Sci. , vol. 28, pp. 816–821, 2001. [21] A. Levin, R. Fergus, F . Durand, and W . T . Freeman, “Image and depth from a con ventional camera with a coded aperture, ” in Pr oc. of Intl. Conf. Comp. Graphics. and Inter . T ech. , 2007. [22] A. Agrawal and Y i Xu, “Coded exposure deblurring: Optimized codes for psf estimation and in vertibility , ” in IEEE Computer V ision and P attern Recognition , 2009. [23] R. Raskar , A. Agrawal, and J. Tumblin, “Coded exposure photography: Motion deblurring using fluttered shutter , ” in A CM Special Interest Gr oup on Computer Graphics and Interactive T echniques (SIGGRAPH) , 2006. [24] A. V eeraragha van, D. Reddy , and R. Raskar, “Coded strobing pho- tography: Compressive sensing of high speed periodic videos, ” IEEE T ransactions on P attern Analysis and Machine Intelligence , vol. 33, no. 4, pp. 671–686, 2011. [25] P . Llull, X. Liao, X. Y uan, J. Y ang, D. Kittle, L. Carin, G. Sapiro, and D. J. Brady , “Coded aperture compressive temporal imaging, ” ArXiv e-prints , Feb. 2013. [26] Z. T . Harmany , R. F . Marcia, and R. M. W illett, “Spatio-temporal compressed sensing with coded apertures and keyed exposures, ” http://arxiv .or g/abs/1111.7247, 2011. 13 [27] R. F . Marcia and R. M. Willett, “Compressiv e coded aperture superres- olution image reconstruction, ” in Proc. IEEE Int. Conf. Acoust., Speech, Signal Processing (ICASSP) , 2008. [28] R. F . Marcia and R. M. W illett, “Compressive coded aperture video reconstruction, ” in Pr oc. 16th Euro. Signal Pr ocess. Conf., 2008 , Lausanne, Switzerland, August 2008. [29] R. F . Marcia, Z. T . Harmany , and R. M. Willett, “Compressive coded aperture imaging, ” in Proceedings of the 2009 IS&T/SPIE Electr onic Imaging: Computational Imaging VII , San Jose, CA, USA, January 2009. [30] R. F . Marcia, Z. T . Harmany , and R. M. Willett, “Compressive coded apertures for high-resolution imaging, ” in Proc. 2010 SPIE Photonics Eur ope , Brussels, Belgium, April 2010. [31] J. Romberg, “Compressive sampling by random con volution, ” SIAM Journal on Imaging Sciences , vol. 2, no. 4, pp. 1098–1128, 2009. [32] N. J. A. Sloane and M. Harwit, “Masks for Hadamard transform optics, and weighing designs, ” Appl. Opt. , vol. 15, no. 1, pp. 107–114, 1976. [33] A. Ashok and M. A. Neifeld, “Pseudorandom phase masks for superresolution imaging from subpixel shifting, ” Appl. Opt. , vol. 46, no. 12, pp. 2256–2268, 2007. [34] S. R. Gottesman and E. E. Fenimore, “New family of binary arrays for coded aperture imaging, ” Appl. Opt. , vol. 28, no. 20, pp. 4344–4352, 1989. [35] E. J. Cand ` es and T . T ao, “Decoding by linear programming, ” IEEE T rans. Inform. Theory , vol. 15, no. 12, pp. 4203–4215, 2005. [36] E. Cand ` es, “Compressive sampling, ” in Int. Congress of Mathematics , 2006, vol. 3, pp. 1433–1452. [37] E. J. Cand ` es, J. K. Romberg, and T . T ao, “Stable signal recovery from incomplete and inaccurate measurements, ” Communications on Pur e and Applied Mathematics , vol. 59, no. 8, pp. 1207–1223, 2006. [38] W . Bajwa, J. Haupt, G. Raz, S. Wright, and R. Nowak, “T oeplitz- structured compressed sensing matrices, ” in Proc. of Stat. Sig. Pr oc. W orkshop , 2007. [39] J. D. Haupt, W . U. Bajwa, G. M. Raz, and R. D. Nowak, “T oeplitz compressed sensing matrices with applications to sparse channel esti- mation, ” IEEE T ransactions on Information Theory , vol. 56, no. 11, pp. 5862–5875, 2010. [40] F. Krahmer, S. Mendelson, and H. Rauhut, “Suprema of chaos processes and the restricted isometry property , ” Comm. Pure Appl. Math. (to appear) , 2013. [41] L. Applebaum, W .U. Bajwa, M.F . Duarte, and R. Calderbank, “ Asyn- chronous code-division random access using con ve x optimization, ” Accepted to Elsevier Phy . Commun. , 2011. [42] S. Wright, R. Nowak, and M. Figueiredo, “Sparse reconstruction by separable approximation, ” IEEE T rans. Signal Process. , vol. 57, pp. 2479–2493, 2009. [43] M. A. T . Figueiredo, R. D. Nowak, and S. J. Wright, “Gradient projection for sparse reconstruction: Application to compressed sensing and other in verse problems, ” IEEE J. Sel. T op. Sign. Pr oces.: Special Issue on Conve x Optimization Methods for Signal Processing , vol. 1, no. 4, pp. 586–597, 2007. [44] E. van den Berg and M. P . Friedlander, “Probing the pareto frontier for basis pursuit solutions, ” SIAM J. Sci. Comput. , vol. 31, no. 2, pp. 890–912, 2008. [45] L.I. Rudin, S. Osher, and E. Fatemi, “Nonlinear total variation based noise removal algorithms, ” Physica D: Nonlinear Phenomena , vol. 60, no. 1-4, pp. 259–268, 1992. [46] A. V erri and T . Poggio, “Motion field and optical flow: Qualitativ e properties, ” IEEE T ransactions on P attern Analysis and Machine Intelligence , vol. 11, no. 5, pp. 490–498, 1989. [47] P . Moulin, R. Krishnamurthy , and J. W . W oods, “Multiscale modeling and estimation of motion fields for video coding, ” IEEE T ransactions on Image Processing , vol. 6, no. 12, pp. 1606–1620, 1997. [48] X. Song, L. D. Seneviratne, and K. Althoefer, “ A Kalman filter- integrated optical flow method for velocity sensing of mobile robots, ” IEEE/ASME T ransactions on Mechatr onics , vol. 16, no. 3, pp. 551–563, 2011. [49] C. Liu, Beyond Pixels: Exploring New Repr esentations and Applications for Motion Analysis , Ph.D. thesis, Massachusetts Institute of T echnology , 2009. [50] A. A yv aci, M. Raptis, and S. Soatto, “Occlusion detection and motion es- timation with conv ex optimization, ” in Advances in Neural Information Pr ocessing Systems 23 , J. Lafferty , C. K. I. Williams, J. Shawe-T aylor, R.S. Zemel, and A. Culotta, Eds., pp. 100–108. MIT Press, 2010. [51] Z. T . Harmany , A. Oh, R. F . Marcia, and R. M. W illett, “Motion-adaptive compressiv e coded apertures, ” Proceedings of SPIE , vol. 8165, no. 1, pp. 81651C–81651C–5, Sept. 2011. [52] A. C. Sankaranarayanan, C. Studer , and R. G. Baraniuk, “CS-MUVI: video compressive sensing for spatial-multiplexing cameras, ” in 2012 IEEE International Conference on Computational Photography (ICCP) , Apr . 2012, pp. 1–10. [53] C. Liu, “Optical flow matlab/c++ code, ” http://people.csail.mit.edu/celiu/ OpticalFlow/. [54] P .-L. Loh and M. W ainwright, “High-dimensional regression with noisy and missing data: provable guarantees with non conve xity , ” Ann. Stat. , vol. 40, no. 3, pp. 1637–1664, 2012. [55] W . Chi and N. George, “Phase-coded aperture for optical imaging, ” Optics Communications , vol. 282, pp. 2110–2117, 2009. [56] J. Haupt and R. Nowak, “Compressiv e sampling vs. conv entional imaging, ” in Proc. IEEE Intl. Conf. on Imaging Processing , 2006, pp. 1269–1272. [57] M. Raginsky , R. M. Willett, Z. T . Harmany , and R. F . Marcia, “Com- pressed sensing performance bounds under Poisson noise, ” IEEE T rans. Signal Process. , vol. 58, no. 8, pp. 3990–4002, 2010. [58] J. W . Stayman, N. Subotic, and W . Buller, “ An analysis of coded aperture acquisition and reconstruction using multi-frame code sequences for relaxed optical design constraints, ” in Proceedings SPIE 7468 , 2009. [59] R. V arga, Ger ˇ sgorin and His Cir cles , Springer-V erlag, Berlin, Germany , 2004. PLA CE PHO TO HERE Zachary T . Harmany received the B.S. (magna cum lade, with honors) in Electrical Engineering and B.S. (cum lade) in Physics from The Pennsylvania State University in 2006 and his Ph.D. in Electrical and Computer Engineering from Duke Univ ersity in 2012. In 2010 he was a visiting researcher at The Univ ersity of California, Merced. He is currently a Postdoctoral Fellow at the University of W isconsin- Madison. His research interests include nonlinear optimization, machine learning, and signal and im- age processing with applications in medical imaging, astronomy , and night vision. PLA CE PHO TO HERE Roummel F . Marcia received his B.A. in Mathemat- ics from Columbia Univ ersity in 1995 and his Ph.D. in Mathematics from the University of California, San Diego in 2002. He was a Computational and Informatics in Biology and Medicine Postdoctoral Fellow in the Biochemistry Department at the Uni- versity of W isconsin-Madison and a Research Sci- entist in the Electrical and Computer Engineering at Duke University . He is currently an Associate Professor of Applied Mathematics at the Univ ersity of California, Merced. His research interests include nonlinear optimization, numerical linear algebra, signal and image processing, and computational biology . PLA CE PHO TO HERE Rebecca M. Willett (SM ’02, M ’05, SM ’11) is an assistant professor in the Electrical and Com- puter Engineering Department at Duke University . She completed her PhD in Electrical and Computer Engineering at Rice Univ ersity in 2005. Prof. W illett receiv ed the National Science Foundation CAREER A ward in 2007, is a member of the D ARP A Com- puter Science Study Group, and received an Air Force Office of Scientific Research Y oung In vesti- gator Program award in 2010. Prof. Willett has also held visiting researcher positions at the Institute for Pure and Applied Mathematics at UCLA in 2004, the University of Wisconsin- Madison 2003-2005, the French National Institute for Research in Computer Science and Control (INRIA) in 2003, and the Applied Science Research and Dev elopment Laboratory at GE Healthcare in 2002. Her research interests include network and imaging science with applications in medical imaging, wireless sensor networks, astronomy , and social networks.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment