A new Bayesian ensemble of trees classifier for identifying multi-class labels in satellite images

Classification of satellite images is a key component of many remote sensing applications. One of the most important products of a raw satellite image is the classified map which labels the image pixels into meaningful classes. Though several paramet…

Authors: Reshu Agarwal, Pritam Ranjan, Hugh Chipman

1 A new Ba y esia n en semble of trees clas sifier for identi fy ing multi- clas s label s in satellite images Reshu Ag arw al, Pritam Ra njan a nd Hu gh Chipm an Depa rtm ent of Mathematics and Statistics Acadia University, Wolfville, Nova Scotia , Canada Abstrac t: Cla ss ifica tion of satellite im ages i s a key co m p onent of m any re m o te se nsing appli cat ion s. One of th e m ost i m porta nt prod ucts of a r aw satel li t e i m a ge i s th e cla ss ifie d m ap which la bels t he image pi xels i nto m eani ngful classes. T hough s everal para m e tri c and no n-parametric cla ss ifie rs have b een developed thus far, accurate la beling of the pi xels still remains a chall enge. In thi s p aper, we prop ose a new rel i able m ulti class- c la ss ifie r for i den tifying class labels of a satellite i m age in r em ote se nsing appli cat ion s. The proposed m ulticla ss-classifier i s a generali z ation of a binary c la ss ifie r based on the flex ible ensemble of reg ression tree s m odel call ed Baye s ia n Additive Regre ss io n Trees (BART). W e use d three small ar eas fro m th e LANDSAT 5 T M i m age, acquir ed on Aug ust 15, 2009 ( path/row: 08/29, L 1T pro duct, UTM map pr ojection) o ver King s County, Nova Scotia , Canada to classify the land-use. Several prediction accura cy a nd uncertain ty measures h ave been used to co m p are th e reliability of th e prop osed c lassifie r with the state-o f-the-art classifier s in rem ote sensing . INTRODU CTION Satelli te i m a ge c lassifica tion pla ys a cru cial role in numerous rem ote sensing data appli cat ion s like la nd-use planning, l and cov er chang e m o ni tor ing , forest d egradation assessm ent, hydrol ogical m odel ing, susta inabl e developm ent, wil dlife ha bitat m odeli ng, bi odiversity conservation , and so on. One of the m ost i m porta nt i nputs in such an appli cat ion i s the classified m ap, whi ch i s a raw image with pi x el-wise class label s. Thus , i t becomes essenti al to achieve the highest po ssible accuracy of t he classified m aps. Several classifier s have been developed and implemented worldwide ( e.g., Fra nklin et al ., 2002 ; Pal and Math er, 2003; Gall ego, 2 004), h owever, accurate l abeling of the pixels s till rem ai ns a challenge of ten due to th e complexity in stud y ar ea terrain, sensor characteristics and training data size estim atio n (Blinn, 2005; Song et al., 2012). 2 Among vari ous para m e tri c classification methods, the m axi m u m l i kelihood (ML) cla ssifier has b een the most widel y used cla ss ifier i n i m age proce s sing software (Peddle , 19 93) . Ho w ever, th e ML cl assi fier i s ba sed on a pa rametric model tha t assum es nor mall y distributed data which i s often violate d i n co m plex l and-use sa t ellit e images ( Lu a nd W e ng, 2007). No n-param etr ic classifiers, w hi ch do not m ake strong assum pti ons li ke norm ali ty, ha v e gained much p opularit y . Cl assifiers base d on k- near est neighbor ( k-NN), artificial neural netw orks (ANN), deci sion trees and support vector m achi nes ( SVM ) have shown bette r p erfor m a nce as co mpared to ML classi fiers for com ple x lan dscapes ( Zhang and W ang, 20 03; Bazi an d Melgani, 2 006; Li et al ., 2010) . O f course, the non-p aram e tri c m ethods ar e not p erfect either, and have m any shortco m ings. Rankin g of such classifiers has bee n a n i nteresting re search ar ea i n the m achin e l earning li tera ture. For exam p le , S udha an d Bhava ni (2012) conclude d that SVM is a bette r cla ss ifier than k -NN, and Song et al. ( 2012) argue that SVM i s eith er com parab le to, or slightly better tha n ANN. Deci s io n tree ba sed classifiers b ecam e extrem el y popula r i n machine learni ng af ter cla ss ifica tion and regression tre es (CART) were in troduced by Breiman et al. , (1984), al though this type of cla ssifier had been arou nd since 1960 ’s under the name of concept le arn ing system s (CLS). In rem ote se nsi ng a ppli cations, CART has be en successfully used for the classification of m u lti spe c tral and hype r-spectral i m a ges with hi gh accuracy (e.g. , Hansen et al., 1996; Friedl and Brodl ey 1997; Yang et a l. 20 03). Refinements over CART (e .g., b agging, boosting and random for ests) have also been used i n remote sensi ng for m o re accu rate class la bel i dentification (e.g., Lawrence et al . 2004). In this paper , we illustrate that CART can som e times yi eld unreliable pr ed iction s. In this paper, we pr opose a n ew reliabl e multiclass-classifier for accurate class la bel predi ct io n. The pr oposed classifie r i s based on the Bayesian ensemble of tree s m odel call ed Bayesian Add itive Re gression Tree (BA RT) ori ginally developed by Chipman et al . ( 2010). In the context of drug d isco very and credit ri sk m odelin g, BA RT has been used for constructing binary classifier s un der the name of BART prob it (Chip m an et al. 2010) and Bayesian Additive C la ss ifica tion T ree (BACT) (Zhang and Ha rdle 2 010), 3 respe ctively. In t hi s pa per, we follow a one-against-all ap proach for generalizing this bi nary classifier to a m ult iclass-classifie r which is referred to a s mBA CT. As i llustrated i n the result s section, m BACT yi elds more re liable predicted class l abel s than two po pular com peti ng classifie rs, SVM and CART. For perfor m a nce com p ari son of differen t classifie rs, we use a LANDSAT 5 TM i mage coverin g King s County of Nova Scotia, Ca nada, a cquired on August 15 , 2009. T his i s a pred om inan tly rural area with several small towns. The sate llite (LANDSAT 5 TM) stoppe d working i n November 2011 a nd the i m ages over K ing s Co unty d uring September 2009 – Nove m ber 2011 were un clear (i.e ., cloudy o r snow y). Th us, we used the scene acquire d on August 15, 200 9 (path/row: 08/ 29, L1T product, UTM m ap projecti on) f or perform ance com pari son . Th e sate llite i m age consists of s ix r eflectance bands (blue, green, r ed, near i nfrared and two m iddl e i nfrared) with 30- m eter resolu tion and one th erm al band w i th 120-meter resolu t ion. F or maintainin g resolu tion consi stency, the therm al ban d was not u sed in building t he classifier. Since the entire scene i s ver y l arge ( 185 km × 170 km ), our st udy area consists of thre e r elatively sm a ll regio ns in Ki ngs County, i .e., th e t owns of W olfville, W indsor and Kentvill e and their surro und ing ar eas. Each image con sists of si x re fle ctance m atri ces (correspo nding to the si x bands) , and each p ixel can be classified i n o ne of the se ven lan d-use classes, buil t-up (BU), po nd/lake/river water ( W ), Ba y of Fun dy (BF), ag ricultural l and (AL), grassla nd (GL), trees (T) and scrubl and (SL). The rem aind er of the paper is organize d as follows: The ne x t secti on prese nts brief reviews on SV M, CART, BART and BART probi t models. W e propose the ne w cla ss ifica tion m e thodol og y, m BACT, in the foll owing section. Then , the data se t obtain ed from LANDSAT 5 T M i m a ge and study areas are discu ssed. The f ollowing secti on star ts w ith a brief re view of the accuracy and u ncertainty m easures use d f or perfo rm ance compari son. T hen the c la ss ified i m ages a nd ta bulated resu lts for the prop osed and competing classifiers are presented. Finally, the pap er con cludes with the overall com pari son of all classifiers, a nd a few im portan t remarks. 4 BACKGROU ND In thi s section we briefly review the key ideas o f SVM a nd CART, and pre sent the necessar y details o f BART m eth odol og ies that are r elevant for the devel opm en t of mBA CT. F or detail s on SVM, CART , BART and B ACT see L iu and Zheng ( 2005), Brie m an et al. ( 1984), Chipm an et a l. (2010) and Z hang and Hardle (2010) respectivel y. Support Vec tor Machine ( SVM) SVM w as ori ginally deve loped by Vapnik ( 1995) for binary cla ss ifica tion, and w as l ater extended to the d om ain of regre ss io n pro blem s (Vapni k et al. 1996) . A basic SVM cla ss ifier (de s igned for bi nary response) ta kes a set of input s and pr edicts the cla ss la bel for eve ry given in put. The m ain i dea is to con struct a separating hyp erplane i n the in put space that can divide t he training data into tw o cl asses with minimal err or. Let be t he re sponse variable and = { , , … , } be the set of in dependent predi ctor v ariable s . Supp ose con sists of traini ng d ata points with bi nary response ∈ { − 1 , 1 } at -di m ensi onal i nput = , , … , , for = 1, … , . Th en, the li near separ ating hyperplane in th e i nput space i s { ∶ ( ) = + = 0 } , where ∈ , is a scala r and ( ) is the d ecision fun ct io n. T hat is, ( ) > 0 i m pli es that the pre dicted cla ss labe l at i s ( ) = 1 , w herea s ( ) < 0 supports ( ) = − 1 . Th e par am eters ( , ) are obta ine d by solving the following opt imizati on problem : ( ) = 1 2 | | ( 1) ( ) ≥ 1 , = 1, … , . A li near h yperplane is often i nsufficient for separating th e data i nto two cl asse s, and a nonline ar hyperplane has to be con structed. Thi s i s achi eved by transform in g the input data i n a much hi gher (possibly i nfinite) dim ensi ona l space called the feature space ( → ( ) ), and f indi ng a linear hyperplane i n th at space . T ypically, a kern el fu nction ( , ) i s used to impli c itly de fine this non-linea r transformation. T hus, the decision function becom e s ( ) = ( ) + = ( , ) + , 5 where and a re e stimated by sol ving the o pt i mization p roblem i n Equation ( 1) with thi s dec ision funct ion . A few popular kernel s a re as follo ws: Polyn om ial ker nel: , = 〈 , 〉 + Gaussian kernel: , = exp − − Sigmoid ke rnel: , = tanh 〈 , 〉 + where an d are scale para m e ters, i s an o ffset para m e ter, an d is the degree o f the pol ynom i al kernel. In this paper, we ar e in terested in a multiclass-cla ss ifica tion problem with = seven cla sses (built-up , w ater, Bay o f Fun dy, ag ricultural l and, g rassland, tre es and scrubla nd). T he data set consists of points with response ∈ { 1, 2 , … , } for ever y -di m ensi ona l in put = , , … , . One of the m ost popu lar te chn ique for cla ss ifyin g multi class d ata is to u se th e one-again st-a ll a pproach (e.g., Botto u et al. 1994; Liu an d Z heng 2005), where th e m ai n i dea i s to build a series of inde pendent deci sion functions, ( ) , = 1, … , , an d then choose th e class lab el that c orrespond s to t he la rgest , i .e., ( ) = arg max { ( ) , = 1, … , } . Al ternatively, one can follow the o ne-against-one approa ch ( e.g., Knerr et al. 19 90; Fr ie dm an 199 6), in which all pairwise, ( − 1)/ 2 , bi nary classifiers are trained and the class with m a ximum votes is the predicted class la bel ( ) . Hsu and Lin (2002) argue that th e one-again st-one appr oach can often outperform the one- against-a ll method. W e used th e built-in function ( ) in the R package “ ” (Kar atzoglou et al. 2013) f or fitting al l SVM m odel s. The classifier uses the one-against-on e app roach for sol v in g a multi c lass-classifica tion problem. W e used most of the defaul t arguments in ( ) (including type=”C-svc” ), e xcept th e kernel param e ters spe cified by kernel=”pol ydot" and k par = li st ( degree = 2, scale = 1, offset = 0.5 ) . T hese values of the par am eters in "kpar ” ha ve been chosen based o n a preli m inar y study o f the datase ts cons ide red i n t his pa per. One m ay fi nd alternative parameters combinatio n to be m o re approp riate i n other ap plications. As expected from a ny cl assifier , () can prod uce both t he pre dicted class l ab el ( ) and the cl assificat ion p robability ( ) = 6 ( = | ) for every cl ass = 1 , … , . These values a re achi ev e d by u sing typ e = "pro babilities" and type = "response" i n the built-i n funct io n . ( ) . It i s worth noting that the predi c ted class lab el obtain ed via () does not necessarily m atch with the max i m u m pr edi cted classification probability ( ) = m ax { ( ) , = 1, … , } , i .e., it i s not always tr ue that ( ) = argmax { ( ) , = 1, … , } . It tur ns out that the two app roaches, model predictio n with type = " response" , and m odel prediction via type = "pr obabilities" , use di fferent methods (se e W u e t al. 200 4 fo r detai ls). For the examples con sidered i n this pape r, we implemented bot h approach es for predi ct in g cla ss l abels, and realize d that the fir st approach with type = "r esponse" yiel ds m ore accura te predicted cla ss l abels than th e l atter app roach with predicted class lab el as ( ) = arg max { ( ) , = 1 , … , } . An illustration is given in the Results se cti on. Classif ication and Regression Tree ( CART) Cla ss ifica tion tr ees gain ed m uch popul arity in the m achin e learni ng li terature s ince CART was d eveloped b y Brie m a n e t al. (1 984). In t he context of image cl assification, the m a in i dea i s to com e up with a deci sion tree t hat p art ition s the i m age vi a recur sive partitio ning i nto hom ogeneo us regions, for i nstance, bu ilt- up, w ater, trees, and so on. W e only discuss b in ary trees, as the decision tree s w ith multi-way splits ca n easily be obtain ed by iterative binary splits in binary tre es. The constru ction of a binar y decision tree starts with assigning the entire training data ( p oints) i n o ne grou p calle d th e r oot n ode. Thi s no de is t hen split i nto two nodes via one of the p redictors. For i nstance, can be used to split the entire data into two subgr oups or nodes { ∶ ≤ } an d { ∶ > } . The two nodes ar e then further split usi ng a val ue of anoth er (or th e sa m e) p redicto r variable . The spli tt ing pr ocess continu es until a f ull tree i s grown. Subsequentl y, techn iqu es like cr oss-validation and tree complexity ar e used to prune t he branches with very f ew data poi nts to avoid o ver- fit ting. Finally, each term ina l (or leaf) node is assigned one class label ∈ {1, 2, … , } . 7 Choosing the b est splitting vari able a nd split poi nt com bin ation ( , ) a t ever y node i s an important part o f the d ecision tree construction. An o ptim al ( , ) combination i s obtain ed by m i ni mizing the total withi n partiti on miss-classifica tion error ( ) = 1 − ( ) (or som e o ther i m purity i ndices li ke Gini i ndex or entr opy) over every predicto r- spli t point com bin ation. W e used th e implementation o f CART i n the R p ackage “ ” ( Thernea u e t al. 20 13) for all examples i n this pap er. Fi rst, () i s used to gr ow the full tre e, then . () prunes the tree using a tr ee com p le x ity param eter ( ). Note that th e val ue of h as to be provided i n () as a control p arameter, how ever, it can be tuned afterwards based o n the cross va lidati on error and th e number of splits in the fully grown tr ee. Interestingly, for all exam pl es consi dered in the paper, the o ptim al value of tree complexity pa rameter turns ou t to be = 0.01 (the de fault value). W e used m ostly the default p arameters of ( ) , and . () , ex cept minspli t = 1 0 (i n . ), w hi ch d etermines the m ini mum nu mber of point s a node must have to be cons ide red for splitting, and xval = 5 (in . ), which specifies 5 -fold cross- validation. As i n ( ) , on e can use . () with type = ”class” and type = ”pro b” to obtain the predicted cla ss labe ls ( ) and m ulticlass- classification prob abilities { ( ) , = 1, … , } . Unli ke () , here , ( ) = argmax { ( ) , = 1 , … , } for all i n the input space (i m age). Bayesi an Additive Regression Tree (BART) Chi pm an et a l. (2010) propo sed a fl exible non- param etri c reg ress ion m o del call ed BART, which is a sum of tre es m odel deve loped i n the Bayesi an fr am ew ork. T his p aper prop oses a n ew m ulti class -classifier (re ferred to as m BACT) based o n BART m odel s. In thi s sect ion, w e pre sent a brief overview of BART. The BART model represents the r esponse a t an inpu t a s a sum of ada ptively chosen functions and an indepen dent norm a l e rror, ( ) = ( ; , ) + ( ; , ) + ⋯ + ( ; , ) + = ℎ ( ) + , (2) 8 where the fun ction ( ; , ) den otes a “regress ion tree” m odel for a binary decision tree with ter m inal nodes (i.e., the i nput space i s par titioned in to re ctangul ar regio ns), ~ (0, ) , and = { , , … , } i s the colle ction of ter m inal node predi ct io ns for tree . As a function of , the -th tree m o del ( ; , ) produces a pi ecewise-constant o utput, , which is obtained by fo llowing th e se quence of deci sion r ules i n tree a nd arriving at th e terminal no de 1 ≤ ≤ . The model predi ct io n a t any inp ut is obtain ed by com bin ing th e ensemble o f tr ees output, i.e ., ℎ ( ) = ∑ . Vi ewing Equation (2 ) a s a stat istical m odel , th e p arameter vect or Θ = ( , . . . , , , . . . , , ) has to b e estimated. Chi pm an et al. (2010) take large values of (50 to 200) and fit the m odel (2) in a Bayesi an fram e w ork using Markov Cha in Monte Carlo (MCMC) methods. Each of t he decision trees is constructed using an extension of the Bayesia n CART m ethodol ogy (Chipman et al . 19 98), where th e b asic i dea i s t o spe cify a p rior on the tree space and a prior on the te rm inal nodes o utputs for each tree i n th e tree space. Instead of a closed-f orm pri or, a tree-ge nerating stochastic pr ocess w as used on the tree sp ace. Combini ng this p rior with th e tree model l i ke lihood yiel ds a posterior di stribution on t he tree space. An efficient MCMC-based a lg orithm was u sed t o stochasticall y sea rch for good trees in th e tree spa ce. Since BART uses a sum of tre es m odel , t he trees w ith fewer spli ts (i. e., l ess th an 5 splits) w ere assigned higher pri or prob abilities, and discrete uniform pri or w as u sed t o cho ose the set of can didate split poi nts for every split. For m ore d etail s on thes e prior s see Chipm an et a l. (2010). W e used th e R li brary (Chi pman and McCull och 2 009) for implementin g BART (a key com ponen t of m BACT). T he mai n functio n i s ( ) , which takes seve ral argu m e nts fo r co ntrolling different fe atures of the MCMC chai ns , an d the p riors on tree para m e ters, terminal node prediction s, , and the noise vari ance. T he argum ents relate d to the MCMC chai n specifies th at the predi ctive sam p le s are s aved e very “keepevery” round s a fter “ nskip” sa m ples ar e discarded as burn-in, an d t he chain stops after “n dpost” realizati ons. A fe w i m porta nt tree para m e ters ar e “num cut” – the 9 m axi m u m nu m ber of sp lit p oints al ong each input , “nt ree” – the number of t rees i n the ensemble ( ) , a nd " " – the variance param et er in te rm inal n ode p redictions, ~ 0, , where ∝ 1/ √ . Prio r on the noise va riance ( ) can also be passed i n via "sigest", "sigdf" and "sigquant" . As co m p ared to a single tree b ased CART model, the BART model ( 2) is an “ensem ble” of d ecision trees, and thus create s a fle xible modelin g fram ework. BART i s al so capable of i ncorporating higher di m ensio nal inte ractions, by a dapt ively choos in g the structu re and indi v id ual rules of ’s. T he sum of tre es a spect of BART impli citly shares in form at ion from nei gh bouring inp uts, an d m o del s the spatial dependency. F urthermore, m any in di v id ual trees ( ) m ay pl ace spli t p oints in th e sam e are a, allowing the predicted function to change rapidly nearby, e ffectively capturi ng n onstat io nary (spi ky ) be hav iour such as a brupt changes in the respon se (e.g., between ro ads, grassland and w ater). BART as a Binary Classifier Li ke m any other sta tistical regression models, BART ca n al so be used for classificatio n. Chi pm an e t a l. (2 010) prop osed an extensi on of BART call ed “ BART pr obi t” fo r cla ss ifyin g b ina ry response ∈ {0, 1} . The m ain id ea is to m odel the r esponse as ( ) = ( = 1 | ) = Φ [ ℎ ( )] , where ℎ ( ) = ∑ ; , , and Ф i s the standard norm al cum ula tive distribution function ( CDF), corresp ond ing to a probit li nk functio n. Let b e a tr aining da taset with bi nary respon se ∈ { 0, 1 } a t -di m ensi onal inp ut = , , … , , f or = 1, … , . For m odel conveni ence the respon se i s re sc al ed to [ − 3, 3 ] . The BART probit m odel fi t retur ns the posterior realizati ons of ’s an d ’s, which ar e u sed t o co m pute Monte Carlo esti m ate of ( ) . If t he po sterior draws fr om MCMC run s are ( ) , ( ) , = 1, … , ; = 1, 2, … , , th e trained classifier ( ) is gi ven by ( ) = 1, 1 Ф ℎ ( ) ( ) ≥ 0.5 , 0, ℎ (3 ) 10 where ℎ ( ) ( ) = ∑ ; ( ) , ( ) and ( ) = ∑ Ф ℎ ( ) ( ) . Fro m an i m ple m e ntati on viewpoi nt, the built-in function ( ) in t he R li brary can also be used to fit BART prob it m o del s. It is however worth n oting that for BART probi t implementati on a few ar guments of ( ) are now e ith er ig nored by the function or have slightl y di fferent valu es. F or i nstance, in case of binary r esponse, the n oise variance ( ) i s fixed at 1 , and as a r esult "sigest" , "sigd f" an d " sigquant" ar e ig nored. The prior variance on the te rm inal node output i s defin ed b y = 3/ √ as com pared to = 0.5/ √ (u sed in the r egression f ram e w ork) . O ne ca n al so include an ar gum ent calle d "binaryOffse t" for offsetting the value of ℎ ( ) away from zero . Chi pm an et al. (2010) used BART probit mode l in a drug discove ry appli cation, wher e the goal was to classify com pounds. Zhan g and Hardle (2010) indepen dent ly develope d a si m il ar adaptation of BART, appl ying i t to the p roblem of cla ssifyin g the sol vency status o f Germ an fi rm s based on their f inancial s tatement inf orm ati on . Zhang and Hardle call ed t heir cl assifier Bayesian Addi tive Classificatio n Trees (BACT), a nd dem onstra ted th at BACT outperforms CA RT an d SVM in iden tifying insolvent firm s. NEW M ETHODO LOG Y: m BACT In this section we p ropose a ne w reliable classifie r called m BACT, which i s a m ulticlass gener alization of BART probit a nd BACT. T he key i dea is to use BART probit w ith the one- against–all approach f or dev eloping a multiclass-classifier . Thou gh t he motivatin g appli cat ion consider ed i n this paper com es fr om re mote se nsin g literatu re, the cla ssifier prop osed here can be used in oth er applications as w ell. Let be a set of poin ts w ith re sponse ∈ { 1, 2, … , } and -dim ensi onal inpu t = , , … , , for = 1, … , . Th e m ethod sta rts with transforming th e data tha t would facili tate the one-a ga inst- all appr oach. For ea ch cla ss = 1 , … , , we g enera te a pseudo data set with origi nal , and new response ( ) defi ned as follo w s: 11 ( ) = 1, = 0, ≠ . (4) In t he sp irit o f Li u and Zh eng (20 05), we b uild bin ary cl assifiers usin g , , … , , and then com bine them to ob tai n the desir ed multi class-cla ss ifie r. For = 1, 2 , … , , l et ( ) ( ) = ( ) = 1 be the classifica tion pr obab ility of i nput w ith cl ass l abel 1 (i nd icato r of class against others) u nder the bina ry dat a = , ( ) , = 1 , … , [note that ( ) ( ) and ( ) are slightly different quantities]. Then, the d ata set is used to fit the standard BART pro bit m odel ( ) ( ) = ( ) = 1 = Φ ℎ ( ) ( ) , where ℎ ( ) ( ) = ∑ ( ) ; ( ) , ( ) , and Ф i s the stan dard n ormal CDF. T he Monte Carlo e stim ate of ( ) ( ) obtain ed from the po sterior draws of ( ) ’s and ( ) ’s i s ( ) ( ) = 1 Ф ℎ ( ) ( ) ( ) . The c la ss ifica tion proba bilities of these bi nary cla ssifiers based on , , … , can b e com bin ed to obtain the predicted class la bel u nder the original -cl ass dataset , ( ) = argmax ( ) ( ) , = 1 , … , . (5 ) Since ( ) ( ) are b inomial success p robabilities for datasets and no t m ulti nomial prob abilities f or one dataset, t he total ∑ ( ) ( ) i s n ot a lways 1 . However, ( ) can be ap prox imated usi ng ( ) ( ) as follows ( ) ≈ ( ) ( ) ∑ ( ) ( ) , for = 1, … , . (6) Fro m Equati ons (5) and (6), it i s clear th at the predicted cla ss label matches with the m axi m u m predi cted classification probability ( ) = max { ( ) , = 1 , … , } . The i m ple m entati on of mBACT re quires fitting BART probit m o del s o n pseudo data sets , , … , . For al l exam ples con sid ered in this paper, we used m ostly th e default argu m e nts of ( ) , except dif ferent MCMC param eters, keepevery = 20 , nd post = 500 0 ( for m ore stable posterior estimates), tree parameter, nu mcut = 100 0 (for re fined search o f opt im al sp lit poi nts) and variance parameter = 1 . T he default value o f = 2 imposes consi derable shr inka ge to the indi vi dual terminal nod e output , w herea s 12 the pr oposed chan ge ( = 1 ) increases the p rior vari ance and a pplies less sh rinkage (or smoothness) o f the re sponse. This i s par ticularly important for our ap plication a s the la nd-use (e.g., the buildup area and w ater b odies) changes ab rupt ly. Next we di scuss the s atellite i mages used fo r the pe rform a nce comparison of m BACT with two popul ar classifier s (SVM and CART) in remote sensi ng. STUD Y ARE A AND D AT A COLLECT I ON A multi spectral satellite i m age acqu ir ed by L ANDSAT 5 T M o n August 15 , 20 09 (path /row : 0 8/29, L1T prod uct, UT M map p r ojection ) over Nova Scoti a, Canada, i s consi dered for this com parative study. A lthough LANDSAT 5 TM da ta consists o f seven bands, the sixth ban d i s therm al with coarse r r esolutio n (120 -m eter) than the oth er six reflect ance bands (30-meter), thus we used o nl y the six r efl ectance bands ( blue, gr een, red, near infrared and two m idd le infrared b an ds). This is a l arge LANDSAT scen e ( 185 km × 17 0 km) cove ring (43.632 N, 63.266 W ) to (45.5 79 N, 65.169 W ), w here each pi x el i s of 30 × 30 re solution. Based o n the consi stency of land -use and a ccessibility of ground da ta, we chose th ree relatively sm a ll regio ns o f Ki ngs Cou nty (the towns of W ol fville, W indsor an d Kentville an d their surro und ing rural areas) from this sce ne for p erformance compari son. (i) W olfvi lle area: A sm all portion of the scene coveri ng (45.070 N, 64.334 W ) to (45.0 98 N, 64.386 W ), w ith 105 × 134 p ixel image (Fig ure 1(b)). (ii ) Win dsor a rea: A medium si ze regio n of the scene cove ring ( 44.932 N, 64. 102 W ) to (4 4.995 N, 64.195 W ) with 236 × 239 pi xels (Figure 1(c)). (iii ) Kentvill e a rea: A rel ative l arge regi on of the scene fr om ( 45.044 N, 64.4 18 W ) to (47.1 17 N, 64.552 W ) with 278 × 34 9 p ixels (Figu re 1(d)). A F alse Color Composite (FCC) o f the three stud y areas constructed using th ree bands (gre en, red and near infrared) are show n in Figure 1. 13 Figure 1: A False Color Composite o f the study areas in Nova Sco tia. Each pixel of the i m age in the three study areas can be c la ss ifie d into one o f the seven cla sses, built-up, po nd/lake/river water, Bay of F undy, ag ricultural l and o r barr en, grassla nd, trees an d scru bland. The land-use of Win dsor is in teresting as i t is cl ear fro m the map (Figur e 1 (a)) that the w ater bo dy p assi ng f rom the west to the north side of Figur e 1(c) i s a pa rt o f th e Bay of Fun dy that i s cutoff due to the co nstruction of a causeway fo r Highway 10 1. Since thi s water is not tidal, the “Bay o f Fund y ” class is not used in the W indsor scene. The de ta iled class-by-class breakdo wn of the training and validation data s ize o f the three study ar eas ar e s hown in Table 1. The da ta were co lle cted by sam pl i ng several (b) Wol fville area (1 05 x 1 34 pix els) (d) Ken tville area (278 x 349 pixels ) (c) Win dsor area (236 x 239 pixe ls) (a) Study ar eas loca tion (©Goo gle map s) 14 di sj oin t hom ogeneo us p atches w ithout rep lacem ent u sing class-wise strat ified random sam pli ng. Note that the sample size in creases w ith th e size of the region . Table 1: Class-by-class distribution o f the training and validation samples for each of seven classes i n the three study areas. For all thr ee stud y a reas, we fo llowed the sa m e a pproa ch of distributing the data points in t he trai ning an d validat io n sets, that i s, f or each class, ap prox imately two-thir ds of the data p oints w ere assig ned to the tra ining set and the rem aind er to the validation set. Wolfville are a Classes Training Validation Total Built - up 37 17 54 Water 12 10 22 Bay of Fundy 14 12 26 Agricultural land 31 14 45 Grassland 21 17 38 Trees 16 10 26 Scrubland 23 15 38 Total 154 95 249 Windsor area Built - up 76 42 118 Water 27 13 40 Bay of Fundy 0 0 0 Agricultural lan d 27 17 44 Grassland 61 31 92 Trees 61 32 93 Scrubland 69 33 102 Total 321 168 489 Kentville are a Built - up 188 96 284 Water 35 19 54 Bay of Fundy 34 18 52 Agricultural lan d 78 46 124 Grassland 81 4 1 1 22 Trees 112 57 169 Scrubland 46 23 69 Total 574 300 874 15 The data points ( i.e., the ground truth or true cl ass labels) w e re collecte d using a com bin ati on o f i n-per son site visits, Google stree t views and G oog le satellite views. Altho ugh the data points were collected in 2012-2013, th e lan d-use has not changed m uch (e xcept a few di fferences i n th e built-up a nd scrubland classe s) since 2009 (when the satellite i mage was taken) . W e want to emphasize tha t the m ain purpose of this paper is to “co m pare th e cla ssification a ccuracy” of m BACT with SVM an d CART, which should not be affected by a few incorre ctly lab ele d ground observations. RESU LTS AND DIS CUSSI ON This section starts with a brief review of a few co mm onl y used accuracy m ea sures i n the r em ote sensi ng lit erature f or both the overall a nd class -wise compari s on of the predi cted class labels and g round truth a t the vali dat ion data sites. Then we discuss a few measures ba sed on ( ) for quantifying uncertainty in predi c ting the class labels. Finally, w e prese nt the classified images and tabulated results. Accurac y Measures For a given cla ss ifie r and a study area , let = be t he error (or confusion) matrix, where denot es th e n um ber of vali dati on or refer ence p oints in th e - th cl ass with predi cted cla ss l abel s . The n, i s th e n um ber of cor rectly classified validation p oints in the - th cl ass , ( -th row su m ) i s th e nu m ber of va lidation points pr edicted to be i n cla ss , and ( - th colum n sum) i s the tr ue num b er of va lidation po ints i n c lass . Defi ne = ( ∑ )/ , = ( ∑ )/ , = [ ∑ ( + ) ]/ , and = [ ∑ ∑ + ]/ , where = ∑ ∑ = ∑ = ∑ i s the gran d total of the er ror m atri x o r the size of the validation data. T hen, m easures the overa ll accuracy o f predict ed class l abels. Cl ass- wise accura c ies from user ’s an d prod ucer’s pe rspect ive s can be measured by / a nd / r espectively. The remaini ng quantities , an d are used i n defining a nother popular accura cy m easure called kappa ( ) which q uantifies the agre em ent bet w een t he pr edicted class la bels and the reality. The overall kappa coefficient is estim ated b y 16 = − 1 − , and th e assoc ia ted uncertainty is measured by ( ) = 1 ( 1 − ) ( 1 − ) + 2(1 − ) ( 2 − ) ( 1 − ) + ( 1 − ) ( − 4 ) ( 1 − ) . The prediction accuracy for - th class can be m easured by conditiona l ka ppa = − − , with the associated variance ( ) = ( − ) [ ( − )] {( − )( − ) + ( − − + ) } . Note tha t all o f th ese accuracy m e asures ar e based on the di screpancy between the predi cted an d true class l abels . Next, we p resent a few uncer tainty m easure s t hat are based on the m ult iclass-classification pr obabi litie s. Uncerta inty Measures Assuming { ( ) , = 1 , … , } are estimated multi nomial probabilities for the cla ss la bels of any pixel (or site) i n the valid ation data, one can define the dev ian ce as = − 2 lo g ( ) ⋅ I [ ( ) = ] , where I [ ( ) = ] = 1 , i f the pred icted c lass l abel is , and zero otherwise. Sm all val ues of deviance r epresent confident p re diction of correct class labels (i .e., less uncer tainty). Since ( ) corr esponds to max { ( ) , = 1 , … , } f or all , i n both CART and m BACT an d not i n SVM, i t i s expected that the d eviances for CART and m BACT would be sm aller as com p ared to that f or SVM classifie d im ages. W e also use a few ( ) -based impuri ty i ndice s f or m easuri ng unce rta int y i n pre dicting the class la bels for every inp ut s ite i n the study area. Probabilit y of m iss- classification: ( ) = 1 − max { ( ) , = 1, 2, … , } Gi ni index : ( ) = 1 − ∑ [ ( ) ] 17 Entropy: ( ) = − ∑ ( ) log ( ) In pr actice, w e use the e stim ates of ( ) for computing these impurity indices. Since none of these three measures are linked with the p redicted class, we do no t expect a system ati c bi as tow ards a p art icula r classifier. Classif ied Im ages and Tabulated Re sults W e now com pare the classified i m ages obtained fr om SV M, CART an d mB ACT. Both overall and class-wise goo dness-of-fit measur es a re t abulated a nd com pared for these cla ss ifiers. Since the i m puri ty i ndices ( ( ) , ( ) , and ( ) ) are com puted for every site in the st udy area, one can com pare the u ncertain ty im ages i nstea d of cla ss- w ise avera ges; however, due to l i m i ted space we onl y present un certainty i m a ges for Kentvill e area. The results presented here are in the order of W olfville, W indsor a nd Kentvill e (ordered b ased on the size of th e regi on). At th e end of this section, w e will di scuss the overall performance of th e three classifiers. Figur e 2 shows the classified images of W olfv ill e area. It is cle ar from F ig ure 2 t hat bo t h SVM and m BACT yi eld m ore accu rate classified images as co m p ared to CART. In particul ar, the predicted class labels for “ built-up” and “trees” in CART appear to be relativel y noi s ier as co m pared to that o bt ained f rom SVM and mBACT. A qui ck com pari son of SVM with m BACT gen erate d i m a ges does n ot s how m uch diff erence. However, a clo ser look at the accura cy m easur es (Tab le 2) reveals m ore pr ecise in form at ion and a clear overall trend in the prediction accuracy a nd uncertainty. It is clear from Tabl e 2 that i n term s o f o verall a cc uracy measures ( and ), SVM gener ates th e m o st a ccurate p redicted c la ss l ab el in the validatio n set. Fur therm ore, mBA CT is better than CART but i nfer io r to SV M (i .e., SVM > m BACT > C ART). Class - wise accur acy m easur es (use r’s and prod ucer’s accuracy and condition kappa) do n ot exhibi t a consistent trend over the seven c la ss es. In terms of overall un certai nty measu res (d eviance, Gini an d entropy) , T able 2 show s a di fferent overall tr end (CART > mBA CT > SV M). Unl i ke accura cy measures, cl ass- wise 18 uncer tainty measures (Gini and e ntropy) show the sam e consisten t trend (CART > mBA CT > SVM) f or every class as w ell. By com bin in g th e information on accu racy and uncer tainty m easur es, i t appears that CART i s a more conf ide nt but le ss a ccurate predi ctor than m BACT (mBACT > CART in term s of and ). Si nce t he pr edicted cla ss la bels do not nece ssarily m atch with the maxim u m pred icte d cla ss ifica tion probabilities, it is n ot surprising th at SVM l eads to the lar gest d eviance. However, the ra nking of SVM based on ove rall and class- wise G ini and e ntrop y v a lues i ndicate that SV M-based predi ct io ns are m ore un certa in than m BACT. (a) CART (b) SVM (c) mBACT Figure 2 : Classified images of Wolfville area 19 Table 2: Overall and class-wise acc uracy and uncertainty measures for W olfville. CART - Wolf ville Overall =8 3.16 % , Kappa ( ) =0.8 02 , Var(K ap pa )=0.00 20 Overall Gini = 0.127 , O verall Entropy = 0.236, Overa ll deviance = 12.03 Classes User’s Producer’s Co. Kappa Gini Entropy Built - up 80.95 100.00 0. 768 0.000 0.000 Water 100.00 80.00 1.000 0.000 0.000 Bay of Fundy 100.00 75.00 1.000 0.000 0.000 Agricultural lan d 59.09 92. 86 0.5 20 0.23 3 0.462 Grassland 100.00 94. 12 1.000 0.13 5 0.211 Trees 76.92 100.00 0.742 0.111 0.224 Scrubland 100.00 40.00 1.000 0.234 0.470 SVM - Wolfville Overall = 8 8 .42 % , Kappa ( ) =0.8 64 , Var(K appa )=0.001 5 Overall Gini = 0.599 , O verall Entropy = 1.310, Overall devi ance = 110.69 Classes User’s Producer’s Co. Kappa Gini Entropy Built - up 94. 12 94.12 0.9 28 0.5 35 1. 172 Water 90.00 90.00 0.888 0.531 1.147 Bay of Fundy 100.00 91.6 7 1.000 0.685 1.485 Agricultural lan d 66.67 100.00 0.6 0 9 0.763 1.653 Grassland 94.12 94.12 0.92 8 0.516 1.131 Trees 100.00 90.00 1.000 0.626 1.375 Scrubland 90. 00 60.00 0.8 81 0.771 1.684 mBACT - Wolf ville Overall = 84.21 % , Kappa ( ) = 0.814 , Var(K appa )=0.0019 Overall Gini = 0.471 , O verall Entropy = 0.856, Overall devi ance = 48.62 Classes User’s Producer’s Co. Kappa Gini Entropy Built - up 80.00 94.12 0. 756 0.558 0.9 17 Water 100.00 80.00 1.000 0.501 0.933 Bay of Fundy 100.00 83.33 1.000 0.282 0.758 Agricultural lan d 66.67 100.00 0.609 0.372 0.718 Grassland 89.47 100.00 0.872 0.385 0.792 Trees 90.91 100.00 0.898 0.536 0.901 Scrubland 83.33 33.33 0.802 0.566 0.963 20 The classified i m ages of W indsor area are shown i n Fi gure 3 . A q uick view of Figure 3 shows th at all three cla ssifiers perfor m r easonabl y well i n capturing the m ain fe atures of the lan d-use. See Table 3 for a detailed perform ance co m pari son of the c lassifie rs. In t erm s of both overall accuracy measures ( an d ) , i t is clear from T abl e 3 that mBA CT is sligh tly better than SVM and m uch bette r than CART. In this case, even th e cla ss-wise accuracy measures (u ser’s, producer’s and conditional kapp a) support the superi or pe rform ance of mBA CT i n m ost of the c l asse s . The uncertainty m e asure s (devia nce, Gin i a nd entropy) follo w the same trend a s in the W olfville image. (a) CART (b) SVM (c) mBACT Figure 3 : Classified images of Windsor a rea 21 Table 3: Overall and class-wise measures of accur acy and uncertainty for W inds or. CART - Wi ndsor Overall = 87.50% , Kappa ( ) = 0.84 7 , Var(K appa )=0.00 10 Overall Gini = 0.130 , Overall Entropy = 0.268, Ove rall de viance = 22.99 Classes User’s Producer’s Co. Kappa Gini Entropy Built - up 9 7.06 78.57 0.9 61 0.182 0.363 Water 86.6 7 100.00 0.85 6 0.06 9 0.154 Bay of Fundy - - - - - Agricultural lan d 8 7.50 82.35 0.8 61 0.180 0.325 Grassland 8 0.00 90.32 0.7 55 0.19 1 0.335 Trees 93. 10 84.38 0.91 5 0.062 0.14 3 Scrubland 82.05 96.9 7 0.77 5 0.134 0.329 SVM - Wi ndsor Overall = 92 . 26 % , Kappa ( ) =0. 905 , Var (K appa )=0.00 06 Overall Gini = 0.463 , O verall Entropy = 0.990, Overall deviance = 129.30 Classes User’s Producer’s Co. Kappa Gini Entropy Built - up 95 .00 90.48 0.933 0.4 46 0.903 Water 100.00 100.00 1.000 0.1 17 0.254 Bay of Fundy - - - - - Agricultural lan d 100.00 76.47 1.000 0. 594 1.249 Grassland 90. 62 93.55 0.8 85 0.491 1.058 Trees 87.88 90.62 0.850 0.350 0.773 Scrubland 89.1 9 100.00 0.866 0.592 1.250 mBACT - Winds or Overall = 93.45 % , Kappa ( ) =0. 919 , Var (K appa )=0.00 05 Overall Gini = 0.311 , Overall Ent ropy = 0.492, Overa ll deviance = 49.73 Classes User’s Producer’s Co. Kappa Gini Entropy Built - up 95. 00 9 0.48 0.93 3 0. 413 0.6 03 Water 100.00 100.00 1.000 0.107 0.385 Bay of Fundy - - - - - Agricultural land 93.75 88.2 4 0.931 0.364 0.648 Grassland 93.33 90.32 0.918 0.362 0.534 Trees 93.75 93.75 0.92 3 0.201 0.333 Scrubland 89.19 100.00 0.8 66 0.3 89 0.598 22 Figur e 4 di s plays t he classified images of K entvill e area. It is c lear f rom F igure 4 that CART yi elds so m e what noi sier classified map ( particularly in built-up and scrubland cla sses) than SVM and mB ACT. Figure 4: Classified images of Kentville area Goodn ess-of-fit m easures for Kentville area i m ages are summari zed i n Tab le 4 . The overall accuracy ( and ) indica te tha t m B ACT i s com p arabl e to SVM and produces m ore a ccurate pr edicted class labels than CART. Simil ar to the W ol fville i m age, th e cla ss-wise accuracy m easures (u ser’s, producer’s and con ditional kap pa) do not exhibit a cl ear trend acr oss the c la ss ifie rs, but the u ncertai nty measures show a consi stent patter n with CART being the most confi dent c lassifi er and SVM the m ost uncer tain. (a) CART (b) SVM (c) mBACT 23 Note that the value o f de viance has been i ncreasing with t he size of the validation set, because it is a sum and not an average . Table 4: Overall and class-wise measures of accur acy and uncertainty for Kentville. CART - Kent ville Overall = 8 4 .33 % , Kappa ( ) =0. 807 , Var(K appa )=0.00 07 Overall Gini= 0.204, Overall Entr opy= 0.418, Overa ll devian ce =50.29 Classes User’s Producer’s Co. Kappa Gini Entropy Built - up 8 3.33 83.33 0.7 55 0.126 0.28 1 Water 95.00 100.00 0.947 0.05 9 0.136 Bay of Fundy 82.35 77.7 8 0.812 0.17 1 0.40 5 Agricultural lan d 84.8 5 60.8 7 0.821 0.35 3 0.665 Grassland 90.24 90.24 0.887 0.106 0.21 1 Trees 100.00 8 6.49 1.000 0.03 6 0.103 Scrubland 5 2.63 8 6 . 96 0.4 87 0.51 7 1.01 1 SVM - Ken tville Overall = 90 .00% , Kappa ( ) =0.8 75 , Var(K appa )=0.000 5 Overall Gini= 0.716, Ov erall Entropy = 1.571, Overa ll devian ce = 393.15 Classes User’s Producer’s Co. Kappa Gini Entropy Built - up 8 9.11 93.75 0.840 0.507 1.147 Water 89.47 89.47 0.888 0.4 44 0.948 Bay of Fundy 100.00 83.33 1.000 0.721 1.577 Agricultural lan d 92.31 78.26 0 .909 0.778 1.726 Grassland 88.10 90.24 0.862 0.725 1.551 Trees 91.80 98.25 0.899 0.783 1.723 Scrubland 82.61 82.61 0.812 0.776 1.676 mBACT - Kentville Overall = 90.00 % , Kappa ( ) = 0.875 , Var(K appa )=0.000 6 Overall Gini = 0.365 , O verall Entropy = 0.619, Overa ll devia nce = 106.67 Classes User’s Producer’s Co. Kappa Gini Entropy Built - up 87.38 9 3.75 0.8 14 0.421 0.671 Water 86.36 100.00 0.854 0.359 0.601 Bay of Fundy 100.00 72.22 1.000 0.258 0.619 Agricultural lan d 9 0.00 78.26 0.882 0.435 0.715 Grassland 88.37 92.68 0.865 0.384 0.649 Trees 100.00 96.49 1.000 0.245 0.460 Scrubland 79.17 82.61 0.774 0.564 0.902 24 Figur e 5 pr esents sit e-w ise comparison of the probability of m iss -classification ( ) , Gi ni index ( ) , a nd ent ropy ( ) f or all thr ee classifie rs. All uncer tainty images su pport the expected trend , that i s, CART i s the most confid ent classifier , and SVM base d predi ct io ns are m ost uncer tain. Figure 5: Uncertainty images of Kentvi lle area. The first ro w of plot s displ ays probability of miss- classifi cation; the second row shows the Gini index plots; and the third row depicts t he entropy. (a) C ART (b) SVM (c) mBACT 25 Overall Su mmar y The p erform ance com pari son of mBACT with SVM and CA RT ba sed on the three st udy area s suggest the following : 1. Base d on the over all accuracy measures ( and ) , mB ACT outper forms C ART in all cases, a nd performs sa m e or b etter than SVM i n two out of thr ee cases. In one ca se ( W olfville), SVM yielded higher ove rall accuracy than mBA CT. 2. In terms of u ncerta inty m easures ( deviance, Gini and entropy), mBACT gener ates pre dictions w ith s li ghtl y larger ( ) as com p ared to SVM , and CART turn s out to be the most confident predictor. Gi ven that CART yi elds the sm allest val ues of and for all three i mages, the ove r confide nce i n terms of devi ance, Gini and entr opy i s som ewhat que stio nab le. To in vestigate this further we pr esent a displ ay whi ch evaluates the accuracy of pr ed iction of the class probabilities. T his i s accom pli sh ed by com pari ng the max i m u m pr edi cted prob ability ( ) with the actual class label Y for each observation in t he validation set. A direct com pari son between ( ) and Y at t he level of individual observation s is not p ract ical, since th e observed class label will eith er equal the class tha t has m axi m u m pred icted p robability, or not. In other words, such a co m pari son would be between a predi cted probability an d a binar y indi cator for whether th e obser ved class i s the same as the p redicted class. Ho w ever, such a com pari son can be m ade by com bin ing observation s in to groups, a nd comparing t he propor tion of corre ctly predicted cla sses to the ave rage value of ( ) i n each group. W e g roup the observations as foll ows: For ea ch observation , obtain ( ) . The n sort t he obse rvat ion s from sm all est to largest value of ( ) . Di vide these so rted ob servations i nto 10 gro ups. Thus th e 1 st g roup will consist of observations with the s mallest am ount of cert ainty i n p red iction , and the 10 th group will consist of ob servations with th e l argest predicti on certainty. F or each g roup, a lso calculate the proportion of observatio ns for which the c la ss with m axi m u m predi cted p robability was equal to t he obse rved class. If a model is a ccurately predi ct in g the class proba bilities, the n th i s pr oportion of co rrect ly classifie d obser vations in each group should equal t he m ean o f the m axi m u m p redi cted c lass probabilities. A pl ot of t hese quant ities for the 10 g roups should correspond to a li ne with intercept 0 26 and slo pe 1, fo r th e i deal pr edictor. Figure 6 pr esents a co m p ari son of su ch plo t for all three classifiers in the three study area s. Figure 6: [1 st row: Wolfville, 2 nd row: Windsor, 3 rd row Kentville; 1 st column: CART, 2 nd column SVM, 3 rd column mBACT]. Each plot presents “ proportion of correctly classifi ed va lidation points” versus “average ( ) ” in 10 ( ) quantile bins over the validation set. It i s cl ear from Fi gu re 6 tha t mBACT (the thi rd col um n) i s the most rel iable cla ss ifier in thi s measure (with a sl ope closest to 1), and CART ( the fi rst column) l eads to most confide nt predi ction (with points w hose m ean predi cted ( ) exceed t he proportion 27 corre ctly c lassified within ea c h group). No te that in m BA CT-W olfville plot, the small est “pro portion of correct c la ss ifica tion” poi nt with 30% co rrect cl assification co rresponds to avera ge ( ) ≈ 0.55 , whereas i n CART- W olfville, average ( ) ≈ 0.9 . As a r esult CART can b e an unreliable (i .e. overconfident) classifier. Altho ugh we in c lu de plots in Fi gure 6 for SVM, we note tha t t he SVM packag e uses two di fferent model s to m ake a cla ss pr ediction an d to pre di c t cl ass probabilities. T hus there i s l ess reason to expect that the p oints i n the m id dle colum n o f F ig ure 6 will h ave a line w ith sl ope 1, since the class la be ls used i n calculating the pro port io n of correct cla ss ifica tions (vertical axis) are fro m a differe nt m o del than t he predict ed class prob abilities. Indeed, there see m s to b e a very weak co rresp ondence between ( ) and th e proportion of correct classifica tions for SV M in F igure 6. CONCLU DING REM ARKS Accurate prediction of cla ss l abels of a sate llite i m age h as be en a challenging p roblem in remote se nsing applications. In this ar tic le, we i ntr oduced a new reli able m ulti class- cla ss ifier, mBA CT, f or accurate i de ntificat ion of cl ass la bels. Th ough the classifica tion probl em consi dered in this paper comes from a remote sensi ng application , m BACT can be use d for other application s as w e ll. The main i dea o f mBA CT was to ge nerali ze the bi nary cla ssifier ( BART p rob it or BACT) for m ult iclass -classificat io n prob le m usi ng the one -against-all approa c h. This requires fit ting BART m odel ti m es ( the nu m ber of cl asses) fo r t he entire data se t, and the curre nt version of the R library (for fi tt ing BART m o del s) i s computationally m ore e xpensi ve than SVM and CART. Thi s is so m e what expected beca use BART i s a Bayesi an ensem bl e of trees m odel , and CART is b ased on a single decision t ree. The autho rs of l i brary are currently worki ng on using parallel com putati on to ols to speed up t he i m plementati on pro cess. Subsequently, i t m igh t be w orth i nvestigating th e relative performance of m BACT with one- aga inst-on e genera li zat io n of BART probit. 28 ACKNOW LEDGM ENT W e would li ke to t hank the referees fo r m any useful co mm ent s a nd sugge stions tha t l ed to significa nt improve m ent of t he a rticle. This w ork was suppo rted in part by Di scovery gran ts from t he Natu ral Sciences and Engi neering Research Co uncil of Canada. REFERE NCES Bazi, Y., and Melgani, F. 2 006. Toward an optim al SVM cl assifica tion system fo r hyper spectral r em ote sensi ng i m ages. IEE E T ransact i ons on Geoscience and Remote Sensing , Vol. 44, No . 11, pp. 3374-3385. Blinn , C.E. 200 5. Increasing th e precision o f fo rest are a estimates t hrough improved sampling for near est neighbor satelli te image classification . Ph.D di sserta tion i n For estry, Virginia Polytechnic Institu te and State Uni v ersity. Bottou, L., Co rtes, C., De nker, J., Drucker, H., Guyo n, I., Jackel, L., L eCun, Y., Mul ler, U., Sacki nger, E., Si m ard, P., and Vap nik, V. 1994. Co m pari son of c la ss ifiers m ethods- A case study i n handwriting digi t r ecognition. In Pro c. I nternational Confer ence on Pattern Recogniti on, pp . 77-8 7. Brei m an, L., Friedm an, J.H., Ol shen, R.A., and Stone, C. J. 198 4. Classifi ca tion and regr ession trees. Chapm an an d Hall /CRC, Boca Raton, Florida . Chi pm an, H., George, E., and McCulloch , R. 199 8. Bayesian CART m odel sear c h. Journ al of the American Stati stical Assoc i at ion , Vol. 93, No. 443, pp. 93 5–948. Chi pm an, H., and McCulloch , R. 2009 . BayesTree: Bayesian Methods for Tree Based Mode ls . R package version 0.3-1.1, URL http ://cran.r- project.org /w eb/pa ckages/BayesTree. Chi pm an, H.A., Geo rge, E.I., a nd McCull och, R. E. 2010. BART: Bayesian Addi tive Regre ss io n Trees. The Annals of Applied Stat i stics , Vol . 4, No. 1, pp. 266-298. 29 Fra nklin, S.E., Ped dle , D. R., De chka, J.A., an d Stenhou se, G.B. 2002 . Evi den ti al reaso ning with L andsat T M, DEM and GIS da ta for l and co v er classification i n suppor t of g rizzly b ear habitat m ap pi ng. Inte rnational Jo urna l o f Rem ote Sensing , Vol. 23, pp. 4633–4652. Frie dl, M.A., and Brodle y, C.E. 1997. Decision tre e cla ss ifica tion of l and cover f rom remotely sen sed data. Remot e Sensing of Envi ronmen t , Vol. 61, pp. 399–409. Frie dm an, J. 1996. Ano ther approach to polychoto-mo us classifi cat i on ( Techni cal Repor t). Stanford University, D epart m e nt of Statisti cs. Gall ego, F.J. 2 004. Remote sensing an d land cover area estimation . Internati onal Journ al of Remote Sensing , Vol. 25, pp. 3019–3047. Hansen, M., Duba yah, R., and Def ries, R. 1996 . Classification tree s: An al ternative to traditio nal la ndcover cla ssifiers. Internati onal Journal of Re mote Sensing , Vol. 17, pp. 10 75–1081. Hsu, C. W ., and Lin, C. J. 20 02. A com pa ri s on o f m ethods for m ul ti-class sup port vector m achin es, IEEE tra nsacti ons o n Neu ral Networ ks , Vol. 13. No. 2, pp. 415- 425. Karatzogl ou, A., Sm o la, A., and Hor ni k, K. 20 13. ker nlab: Kern el-based Mach i ne Lear ning Lab . R package version 0.9-18, URL http://cran.r- project.org /w eb/pa ckages/kernlab. Knerr, S., Per sonnaz, L., an d Dreyfus, G. 19 90. Single-layer le arning revisi ted: A stepwise pr ocedur e for bu i lding and tr aining ne ural network. Neurocom puti ng: Algorithms, Architecture s and Applicati o ns , NATO ASI, Ber lin: Sp ringer-Verl ag. 30 Lawrence, R., Bun n, A., Powell, S., an d Z am bon , M. 2 004. Cl assification of remotely sensed i m agery using stoch astic gradient boosting as a r efinement of cl assi ficat ion tree analysis. Remote Sensing of Environment , Vol . 90, pp. 331-336. Li , M., Crawford, M.M., and Jinwen T. 2010 . Local manifold l earning-based k-nearest nei ghbor for h yperspectral image classif ication. IEEE Tran s actions on Geosciences and Re mote Sensing , Vol. 48, No. 11, pp. 4099-4109. Lui , Y., an d Zheng, Y. F. 2005. One-against-a ll multiclass SVM classi fication u sing reliability measures. In Proc. IEEE I nternati onal Joint Co nfere nce on Neura l Networks , Vol. 2, pp. 849-85 4. Lu, D., and W eng, Q. 2007. A sur vey of image classification m ethods and techni ques for i m proving classificatio n per form a nce. Int ernational Jour nal of Remote Sensing , Vol. 28, No. 5, pp. 823-870. Pal, M., and Mather , P. M. 2003. An asse ssment of the effecti veness of decision tree m ethods for l and cove r classification . Remote Sensing of Environme nt , Vol. 8 6, pp. 554–5 65. Peddl e, D. R. 1 993. An e m p ir ical com pari son of evidential reasoning, lin ear di scrim ina nt anal ysis an d m aximum l ike lihood al gorithm s for alpine land cover classification . Canadian Jou rnal of Remo t e Sensing , Vol . 19 , No.1, p p. 31-44. Song, X., Dua n, Z ., and Jiang, X. 2012. Com pa ri son of ar tificial neur al network an d suppor t vector machine cla ss ifie rs for l and cover classificati on i n Northern China usi ng a SPOT-5 HRG image. Int ernational Jour nal o f Remote Sen si ng , Vol. 33, No.10, pp. 3301-3320. 31 Sudha, L. R., a nd Bha vani, R. 20 12. Perform ance co mpari son o f SVM and k- NN i n automatic cl assification of hum an gai t pattern. International Journa l o f Computers , Vol. 6, No.1, pp.19-28. Ther neau, T., Atkinson , B., and Ripley, B. 2013. r part: recur sive par ti tioni ng a nd regr ession trees , R package version 4.1-1, URL: http://cran.r- project.org /web/packages/rpart Vapni k. 1995. The Nature of Statisti cal Lear ning T heory . Springer Verlag, New York. Vapni k, V .B., Golowich , S. E., and Sm o la, A. J. 1996. Support vector m ethod for function approxim ation , regressio n estimation, and signa l processing. Advances in Neura l Information Processing System s, Vol. 9, pp. 281-2 87. W u, T.-F., Li n, C.-J., and W eng, R. C. 2004. Probability estim ates for multi -class cla ss ifica tion by pairwise coupling. Journal of M achine Learning Research , Vol 5, pp. 97 5-1005. Yang, C.C., Prasher, S. O., Enright, P., Madram oot oo, C., Burge ss, M., Goel, P. K., and Call um , I. 2 003. A pplication of de cision tree tech nology for i mage classifica tion usi ng rem ote sensing data. Agricultural Systems , Vol . 76, No. 3, pp . 1101-111 7. Zhan g, J. L., and Hardle, W . K. 201 0. T he Bayesian a dditive classifi c ation tree appl ied to cre dit ri sk m odeli ng. Com putati onal Statistics an d Data Analysis , Vol. 54, pp. 1197- 1205. Zhan g, Q., a nd W ang, J. 2003. A r ule-based urb an l and u se in ferr in g m e thod for f ine resolu t io n m ulti spectral i m agery . Canadian Journ al of Remo t e Sensing , Vol. 29 , pp. 1–13.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

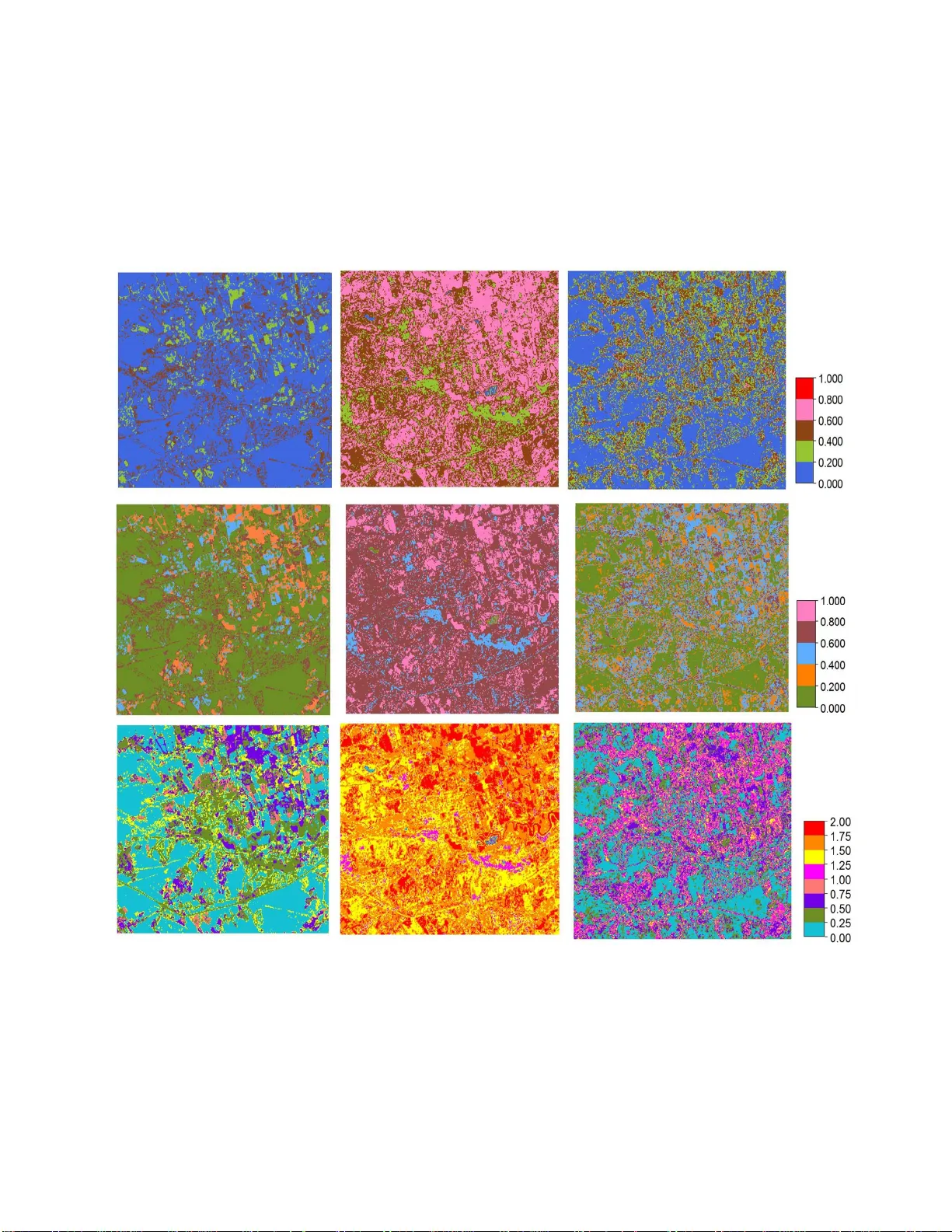

Leave a Comment