Layer-wise learning of deep generative models

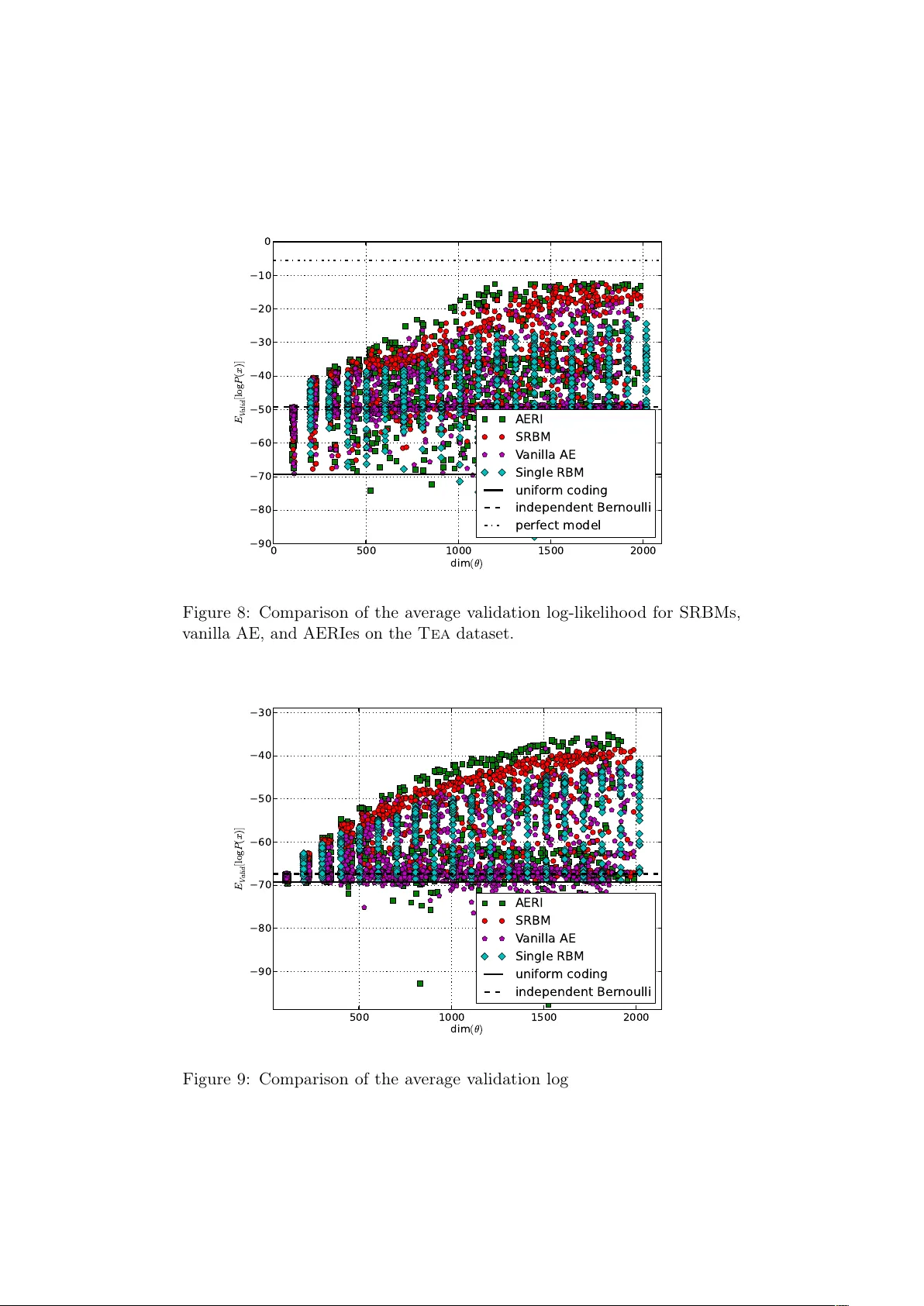

When using deep, multi-layered architectures to build generative models of data, it is difficult to train all layers at once. We propose a layer-wise training procedure that admits a performance guarantee relative to the global optimum. It is based on …

Authors: Ludovic Arnold, Yann Ollivier