A geometric analysis of subspace clustering with outliers

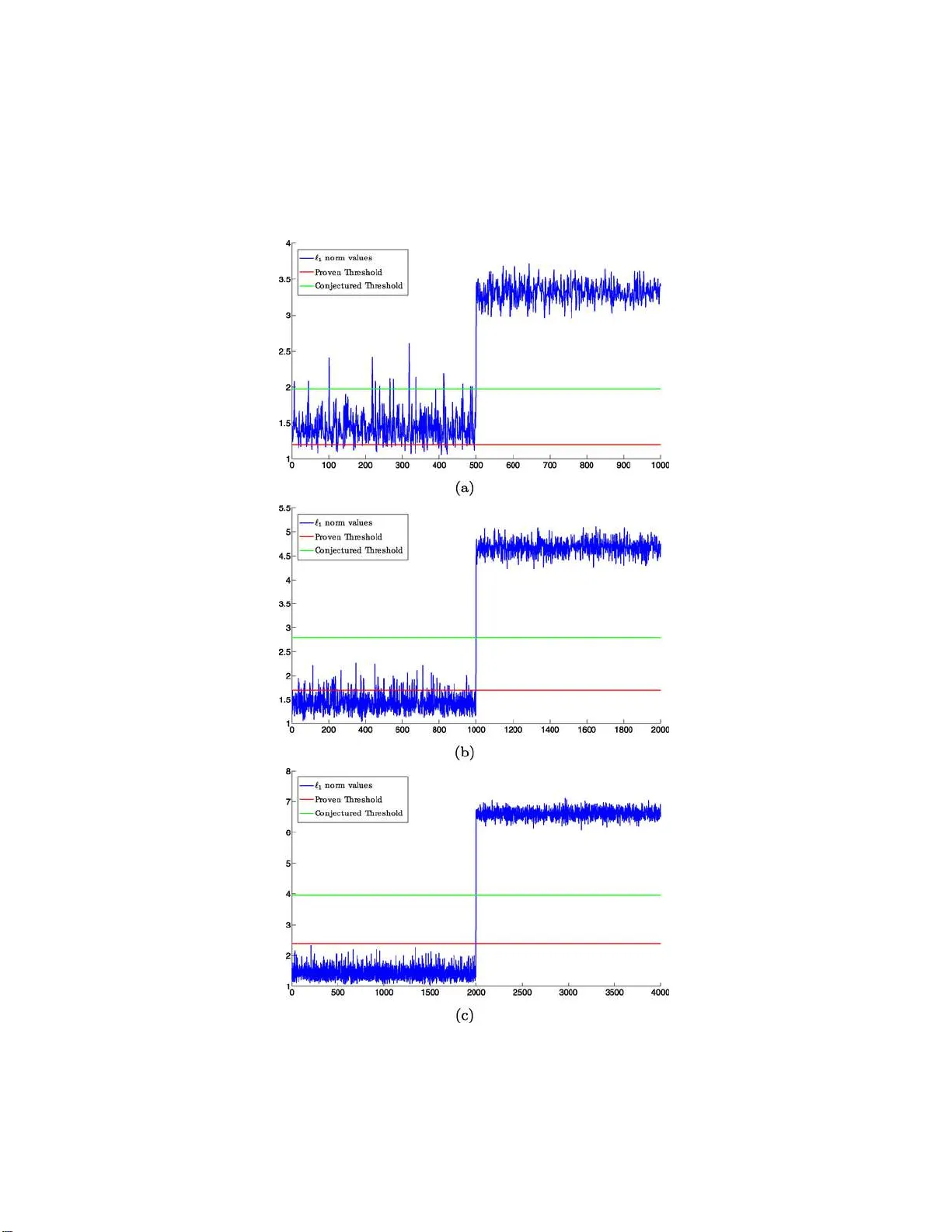

This paper considers the problem of clustering a collection of unlabeled data points assumed to lie near a union of lower-dimensional planes. As is common in computer vision or unsupervised learning applications, we do not know in advance how many su…

Authors: Mahdi Soltanolkotabi, Emmanuel J. C, es