An Overview of Codes Tailor-made for Better Repairability in Networked Distributed Storage Systems

The continuously increasing amount of digital data generated by today's society asks for better storage solutions. This survey looks at a new generation of coding techniques designed specifically for the needs of distributed networked storage systems…

Authors: Anwitaman Datta, Frederique Oggier

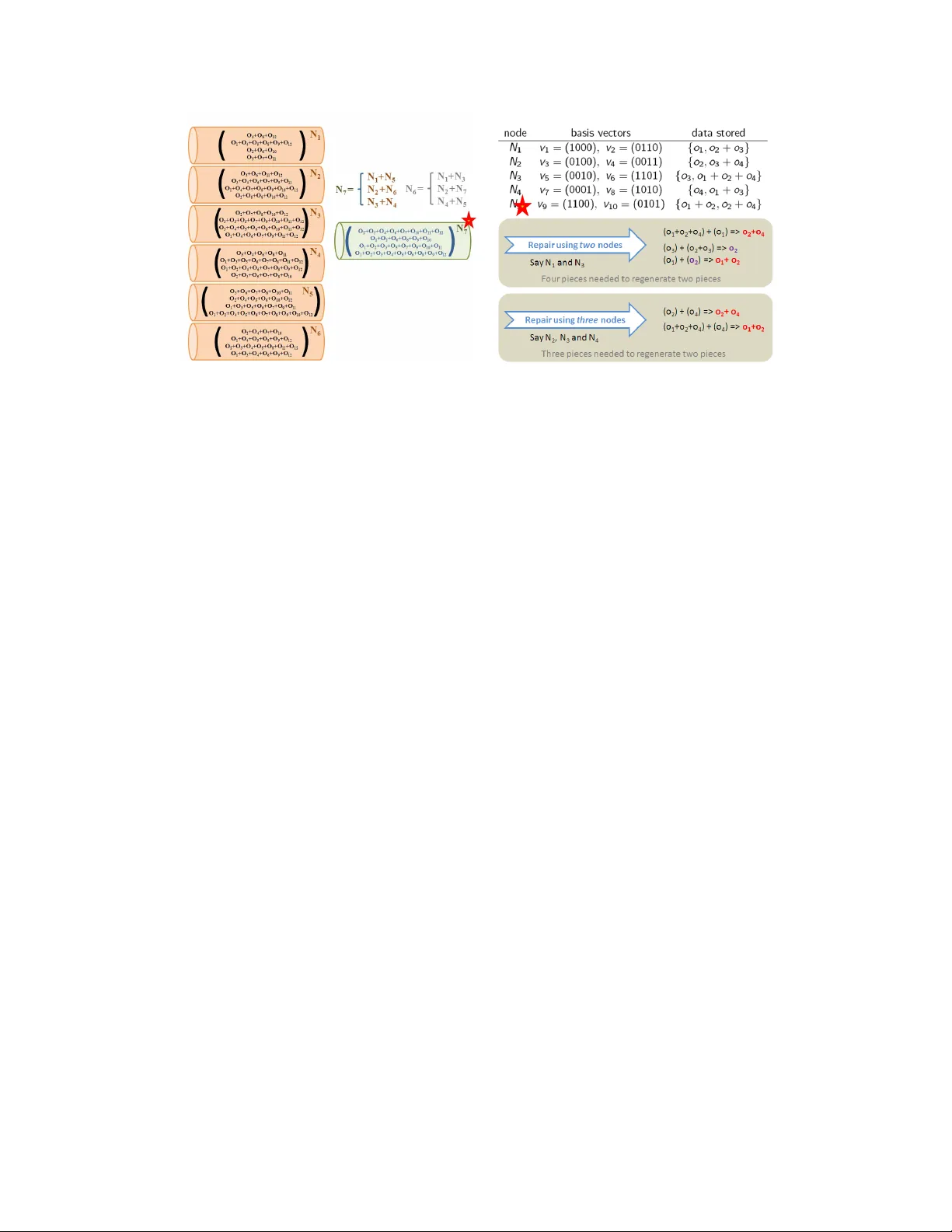

November 27, 2024

Abstract

The increasing amount of digital data generated by today's society asks for better storage solutions. This survey looks at a new generation of coding techniques designed specifically for the maintenance needs of networked distributed storage systems (NDSS), trying to reach the best compromise among storage space efficiency, fault-tolerance, and maintenance overheads. Four families of codes, namely, pyramid, hierarchical, regenerating and locally repairable codes such as self-repairing codes, along with a heuristic of cross-object coding to improve repairability in NDSS are presented at a high level. The code descriptions are accompanied with simple examples emphasizing the main ideas behind each of these code families. We discuss their pros and cons before concluding with a brief and preliminary comparison. This survey deliberately excludes technical details and does not contain an exhaustive list of code constructions. Instead, it provides an overview of the major novel code families in a manner easily accessible to a broad audience, by presenting the big picture of advances in coding techniques for maintenance of NDSS.

Keywords: coding techniques, networked distributed storage systems, hierarchical codes, pyramid codes, regenerating codes, locally repairable codes, self-repairing codes, cross-object coding.

1 Introduction

We live in an age of data deluge. A study sponsored by the information storage company EMC estimated that the world's data is more than doubling every two years, reaching 1.8 zettabytes (1 ZB = $10^{21}$ bytes) of data to be stored in 2011.
(Footnote 1: http://www.emc.com/about/news/press/2011/20110628-01.htm) This includes various digital data continuously being generated by individuals as well as business and government organizations, who all need scalable solutions to store data reliably and securely.

Storage technology has been evolving fast in the last quarter of a century to meet the numerous challenges posed in storing an increasing amount of data and catering to diverse applications with different workload characteristics. In 1988, RAID (Redundant Arrays of Inexpensive Disks) was proposed [23], which combines multiple storage disks (typically from two to seven) to realize a single logical storage unit. Data is stored redundantly, using replication, parity, or more recently erasure codes. Such redundancy makes a RAID logical unit significantly more reliable than the individual constituent disks. Besides providing cost-effective reliable storage, RAID systems provide good throughput by leveraging parallel I/O at the different disks, and, more recently, geographic distribution of the constituent disks to achieve resilience against local events (such as a fire) that could cause correlated failures.

While RAID has evolved and stayed an integral part of storage solutions to date, new classes of storage technology have emerged, where multiple logical storage units (simply referred to as 'storage nodes') are assembled together to scale out the storage capacity of a system. The massive volume of data involved means that it would be extremely expensive, if not impossible, to build single pieces of hardware with enough storage as well as I/O capabilities. By the term 'networked', we refer to these storage systems that pool resources from multiple interconnected storage nodes, which in turn may or may not use RAID. The data is distributed across these interconnected storage units and hence the name 'networked distributed storage systems' (NDSS).
It is worth emphasizing at this juncture that though the term 'RAID' is now also used in the literature for NDSS environments, for instance, HDFS-RAID [1] and 'distributed RAID' (footnote 2), in this article we use the term RAID to signify traditional RAID systems where the storage nodes are collocated, and the number of parity blocks per data object is small, say one or two (RAID-1 to RAID-6). In traditional RAID systems, the storage disks are collocated, all data objects are stored in the same set of storage disks, and these disks share an exclusive communication bus within a stand-alone unit. In NDSS, by contrast, a shared interconnect is used across the storage nodes, and different objects may be stored across arbitrarily different (possibly intersecting) subsets of storage nodes; thus there is competition and interference in the usage of the network resources.

NDSS come in many flavors such as data centers and peer-to-peer (P2P) storage/backup systems. While data centers comprise thousands of compute and storage nodes, individual clusters such as that of Google File System (GFS) [9] are formed out of hundreds up to thousands of nodes. P2P systems like Wuala (footnote 3), in contrast, formed swarms of tens to hundreds of nodes for individual files or directories, but would distribute such swarms arbitrarily out of hundreds of thousands of peers.

While P2P systems are geographically distributed and connected through an arbitrary topology, data center interconnects have well defined topologies and are either collocated or distributed across a few geographic regions. Furthermore, individual P2P nodes may frequently go offline and come back online (temporary churn), creating unreliable and heterogeneous connectivity. On the contrary, data centers use dedicated resources with relatively infrequent temporary outages.

Despite these differences, NDSS share several common characteristics.
While I/O of individual nodes continues to be a potential bottleneck, available bandwidth, both at the network's edges and within the interconnect, becomes a critical shared resource. Also, given the system scale, failure of a significant subset of the constituent nodes, as well as other network components, is the norm rather than the exception. To enable a highly available overall service, it is thus essential both to tolerate short-term outages of some nodes and to provide resilience against permanent failures of individual components. Fault-tolerance is achieved using redundancy, while long-term resilience relies on replenishment of lost redundancy over time.

A common practice to realize redundancy is to keep three copies of an object to be stored (called 3-way replication): when one copy is lost, a surviving copy is used to regenerate it, and hopefully, not both the remaining copies are lost before the repair is completed. There is of course a price to pay: redundancy naturally reduces the efficiency, or alternatively put, increases the overheads of the storage infrastructure. The cost for such an infrastructure should be estimated not only in terms of the hardware, but also of real estate and maintenance of a data center. A US Environmental Protection Agency report of 2007 (footnote 4) indicates that the US used 61 billion kilowatt-hours of power for data centers and servers in 2006. That is 1.5 percent of the US electricity use, and it cost the companies that paid those bills more than $4.5 billion.

There are different ways to reduce these expenses, starting from the physical media, which has witnessed a continuous shrinking of physical space and cost per unit of data, as well as reductions in terms of cooling needs.
Footnote 2: http://www.disi.unige.it/project/draid/distributedraid.html
Footnote 3: The current deployment of Wuala (www.wuala.com) no longer uses a hybrid peer-to-peer architecture.
Footnote 4: http://arstechnica.com/old/content/2007/08/epa-power-usage-in-data-centers-could-double-by-2011.ars

This article focuses on a different aspect, that of the trade-off between fault-tolerance and efficiency in storage space utilization via coding techniques, or more precisely erasure codes.

An erasure code $EC(n,k)$ transforms a sequence of $k$ symbols into a longer sequence of $n > k$ symbols. Adding $n-k$ extra symbols helps in recovering the original data in case some of the $n$ symbols are lost. An $EC(n,k)$ induces a storage overhead of $n/k$. Erasure codes were designed for data transmitted over a noisy channel, where coding is used to append redundancy to the transmitted signal to help the receiver recover the intended message, even when some symbols are erased or corrupted by noise (see Figure 1). Codes offering the best trade-off between redundancy and fault-tolerance, called maximum distance separable (MDS) codes, tolerate $n-k$ erasures, that is, no matter which group of $n-k$ symbols is lost, the original data can be recovered. The simplest examples are the repetition code $EC(n,1)$ (given $k=1$ symbol, repeat it $n$ times), which is the same as replication, and the parity check code $EC(k+1,k)$ (compute one extra symbol which is the sum of the first $k$ symbols). The celebrated Reed-Solomon codes [26] are another instance of such codes: consider a sequence of $k$ symbols as a degree $k-1$ polynomial, which is evaluated at $n$ points to produce $n$ symbols. Conversely, given the $n$ symbols, or in fact any $k$ of them, it is possible to interpolate them to recover the polynomial and decode the data. Think of a line in the plane. Given any $k=2$ or more points, the line is completely determined, while with only one point, the line is lost.
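The polynomial view of Reed-Solomon codes described above can be sketched in a few lines. This is only a toy illustration under stated assumptions: the prime modulus `P`, the evaluation points `1..n`, and the function names `encode` and `decode` are choices made here, not taken from the survey or from any library, and practical implementations typically work over binary extension fields instead.

```python
# Toy Reed-Solomon-style erasure code over the prime field GF(P).
# P, encode and decode are illustrative names (assumptions of this sketch).
P = 257  # assumed prime modulus; symbols are integers in [0, P), and n < P


def encode(data, n):
    """Treat the k data symbols as coefficients of a degree k-1 polynomial
    and evaluate it at the points x = 1..n, giving n (x, y) fragments."""
    return [(x, sum(c * pow(x, i, P) for i, c in enumerate(data)) % P)
            for x in range(1, n + 1)]


def decode(points, k):
    """Recover the k coefficients from any k fragments by Lagrange
    interpolation modulo P (requires Python 3.8+ for pow(x, -1, P))."""
    points = points[:k]
    coeffs = [0] * k
    for j, (xj, yj) in enumerate(points):
        basis = [1]   # coefficients (low order first) of the j-th basis polynomial
        denom = 1
        for m, (xm, _) in enumerate(points):
            if m == j:
                continue
            # Multiply the basis polynomial by (x - xm).
            basis = [(a - xm * b) % P
                     for a, b in zip([0] + basis, basis + [0])]
            denom = denom * (xj - xm) % P
        scale = yj * pow(denom, -1, P) % P
        for i, b in enumerate(basis):
            coeffs[i] = (coeffs[i] + scale * b) % P
    return coeffs


# The "line in the plane" case: k = 2 data symbols, n = 4 fragments.
fragments = encode([42, 7], 4)
recovered = decode([fragments[1], fragments[3]], 2)  # any two fragments suffice
```

With `k = 2` the data defines a line, and any two of the four fragments determine it again, which is the MDS property in miniature.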
Figure 1: Coding for erasure channels: a message of $k$ symbols is encoded into $n$ fragments before transmission over an erasure channel. As long as at least $k' \ge k$ symbols arrive at destination, the receiver can decode the message.

This same storage overhead/fault tolerance trade-off has also long been studied in the context of RAID storage units. While RAID 1 uses replication, subsequent RAID systems integrate parity bits, and Reed-Solomon codes can be found in RAID 6. Notable examples of new codes designed to suit the peculiarities of RAID systems include weaver codes [12], array codes [29] as well as other heuristics [11]. Optimizing the codes for the nuances of RAID systems, such as physical proximity of storage devices leading to clustered failures, is a natural aspect [3] gaining traction. Note that even though we do not detail here those codes optimized for traditional RAID systems, they may nonetheless provide some benefits in the context of NDSS, and vice-versa.

A similar evolution has been observed in the world of NDSS, and a wide spectrum of NDSS have started to adopt erasure codes: for example, the new version of Google's file system, Microsoft's Windows Azure Storage [2] as well as other storage solution companies such as CleverSafe (footnote 5) and Wuala. This has happened due to a combination of several factors, including years of implementation refinements, ubiquity of significantly powerful but cheap hardware, as well as the sheer scale of the data to be stored.

We will next elaborate how erasure codes are used in NDSS, and why, although MDS codes are optimal in terms of fault-tolerance and storage overhead trade-offs, there is a renewed interest in the coding theory community to design new codes that take into account maintenance of NDSS explicitly.
2 Networked Distributed Storage Systems

In an NDSS, if one object is stored using an erasure code and each 'encoded symbol' is stored at a different node, then the object stays available as long as the number of node failures does not exceed the code recovery capability.

Footnote 5: http://www.cleversafe.com/

Figure 2: Erasure coding for NDSS: the object to be stored is cut into $k$ pieces, then encoded into $n$ fragments, given to different storage nodes. Reconstruction of the data is shown on the left, while repair after node failures is illustrated on the right. (a) Data retrieval: as long as $k' \ge k$ nodes are alive, the object can be retrieved. (b) Node repair: one node has to recover the object, re-encode it, and then distribute the lost blocks to the new nodes.

Now let individual storage nodes fail according to an i.i.d. random process with failure probability $f$. The number of node failures is then binomially distributed, hence the probability of losing an object with an $EC(n,k)$ MDS erasure code is

$$\sum_{j=1}^{k} \binom{n}{n-k+j} f^{\,n-k+j} (1-f)^{k-j}.$$

In contrast, it is $f^r$ with $r$-way replication. For example, if the probability of failure of individual nodes is $f = 0.1$, then for the same storage overhead of 3, corresponding to $r = 3$ for replication and to an $EC(9,3)$ erasure code, the probabilities of losing an object are $10^{-3}$ and $\sim 3 \cdot 10^{-6}$ respectively. Such resilience analysis illustrates the high fault-tolerance that erasure codes provide. Using erasure codes, however, means that a larger number of storage nodes are involved in storing individual data objects.

There is however a fundamental difference between a communication channel, where erasures occur once during transmission, and an NDSS, where faults accumulate over time, threatening data availability in the long run.
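The loss probabilities quoted above can be checked numerically. The following is a minimal sketch that evaluates the binomial tail for an MDS code and $f^r$ for replication; the function names are hypothetical and chosen here only for illustration.

```python
from math import comb


def loss_probability_mds(n, k, f):
    """Probability that more than n - k of the n nodes fail, when each node
    fails independently with probability f (the object is then lost)."""
    return sum(comb(n, n - k + j) * f ** (n - k + j) * (1 - f) ** (k - j)
               for j in range(1, k + 1))


def loss_probability_replication(r, f):
    """Probability that all r replicas fail."""
    return f ** r


# Same storage overhead of 3: 3-way replication versus EC(9, 3), with f = 0.1.
p_replication = loss_probability_replication(3, 0.1)
p_erasure = loss_probability_mds(9, 3, 0.1)
```

For these parameters `p_replication` is $10^{-3}$ and `p_erasure` is close to $3 \cdot 10^{-6}$, in line with the figures given in the text.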
Traditionally, erasure codes were not designed to reconstruct subsets of arbitrary encoded blocks efficiently. When a data block encoded by an MDS erasure code is lost and has to be recreated, one would typically first need data equivalent in amount to recreate the whole object in one place (either by storing a full copy of the data, or else by downloading an adequate number of encoded blocks), even in order to recreate a single encoded block, as illustrated in Figure 2.

In recent years, the coding theory community has thus focused on designing codes which better suit NDSS nuances, particularly with respect to replenishing lost redundancy efficiently. The focus of such works has been on (i) bandwidth, which is typically a scarce resource in NDSS, (ii) the number of storage nodes involved in a repair process, (iii) the number of disk accesses (I/O) at the nodes facilitating a repair, and (iv) the repair time, since delay in the repair process may leave the system vulnerable to further faults. Note that these aspects are often interrelated. There are numerous other aspects, such as data placement, meta-information management to coordinate the network, as well as interferences among multiple objects contending for resources, to name a few prominent ones, which all together determine an actual system's performance. The novel codes we describe next are yet to go through a comprehensive benchmarking across this wide spectrum of metrics. Instead, we hope to make these early and mostly theoretical results accessible to practitioners, in order to accelerate the process of such further investigations.

Thus the rest of this article assumes a network of $N$ nodes, storing one object of size $k$, encoded into $n$ symbols, also referred to as encoded blocks or fragments, each of them being stored at one of $n$ distinct nodes out of the $N$ choices.
When a node storing no symbol corresponding to the object being repaired participates in the repair process by downloading data from nodes owning data (also called *live nodes*), it is termed a *newcomer*.

Typical values of $n$ and $k$ depend on the environments considered: for data centers, the number of temporary failures is relatively low, thus small $(n,k)$ values such as $(9,6)$ or $(13,10)$ (with respective overheads of 1.5 and 1.3) are generally fine [1]. In P2P systems such as Wuala, larger parameters like $(517,100)$ are desirable to guarantee availability since nodes frequently go temporarily offline. When discussing the repair properties of a code, it is also important to distinguish which repair strategy is best suited: in P2P systems, a lazy approach (where several failures are tolerated before triggering repair) can avoid unnecessary repairs since nodes may be temporarily offline. Data centers might instead opt for immediate repairs. Yet, proactive repairs can lead to cascading failures (footnote 6). Thus in all cases, the ability to repair multiple faults simultaneously is essential.

In summary, codes designed to optimize the maintenance process should take into account different code parameters, repair strategies, the ability to replenish single as well as multiple lost fragments, and repair time. Recent coding works aim in particular to:

(i) Minimize the absolute amount of data transfer needed to recreate one lost encoded block at a time when storage nodes fail. *Regenerating codes* [6] form a new family of codes achieving the minimum possible repair bandwidth (per repair) given an amount of storage per node, where the optimal storage-bandwidth trade-off is determined using a network coding inspired analysis, assuming that each new-coming node contacts $d \ge k$ arbitrary live nodes for each repair.
Regenerating codes, like MDS erasure codes, allow data retrievability from any arbitrary set of $k$ nodes. Collaborative regenerating codes [27, 16] are a generalization allowing simultaneous repair of multiple faults.

(ii) Minimize the number of nodes to be contacted for recreating one encoded block, referred to as fan-in. Reduction in the number of nodes needed for one repair typically increases the number of ways repair may be carried out, thus avoiding bottlenecks caused by stragglers. It also makes multiple parallel repairs possible, all in turn translating into faster system recovery. To the best of our knowledge, *self-repairing codes* [17] were the first instances of $EC(n,k)$ code families achieving a repair fan-in of 2 for up to $\frac{n-1}{2}$ simultaneous and arbitrary failures. Since then, such codes have become a popular topic of study under the nomenclature of 'locally repairable codes', the name being reminiscent of a relatively well established theoretical computer science topic of locally decodable codes. Other specific instances of locally repairable code families such as [18, 10, 25], as well as study of the fundamental trade-offs and achievability of such codes [13], have commenced in the last years.

Local repairability comes at a price, since either nodes store the minimum possible amount of data, in which case the MDS property has to be sacrificed (if one encoded symbol can be repaired from two others, any set of $k$ nodes including these 3 nodes will not be adequate to reconstruct the data), or the amount of data stored in each node has to be increased. A *resilience analysis* of self-repairing codes [17] has shown that object retrieval is little impaired by it, and in fact, the MDS property might not be as critical for NDSS as it is for communication, since NDSS have the option of repairing data.

There are other codes which fall somewhere 'in between' these extremes.
Prominent among these are hierarchical and pyramid codes which we summarize first before taking a closer look at regenerating and locally repairable codes.

3 Hierarchical and Pyramid codes

Consider an object comprising eight data blocks $o_1, \dots, o_8$. Create three encoded fragments $o_1$, $o_2$ and $o_1 + o_2$ using the first two blocks, and repeat the same process for blocks $o_{2j+1}$ and $o_{2j+2}$ (for $j = 1, \dots, 3$). One can then build another layer of encoded blocks, namely $o_1 + o_2 + o_3 + o_4$ and $o_5 + o_6 + o_7 + o_8$. The fragment $o_1 + o_2$ may be viewed as providing *local redundancy*, while $o_1 + o_2 + o_3 + o_4$ achieves *global redundancy*. The same idea can be iterated to build a hierarchy (Figure 3), where the next level global redundant fragment is $o_1 + o_2 + o_3 + o_4 + o_5 + o_6 + o_7 + o_8$.

Consequently, when some of the encoded fragments are lost, localized repair is attempted, and global redundancy is used only if necessary. For instance, if the node storing $o_1$ is lost, then nodes storing $o_2$ and $o_1 + o_2$ are adequate for repair. However, if nodes storing $o_1$ and $o_1 + o_2$ are both lost, one may first reconstruct $o_1 + o_2$ by retrieving $o_1 + o_2 + o_3 + o_4$ and $o_3 + o_4$, and then rebuild $o_1$.

Footnote 6: For example http://storagemojo.com/2011/04/29/amazons-ebs-outage/

Figure 3: Hierarchical codes.

This basic idea can be extended to realize more complex schemes, where (any standard) erasure coding technique is used in a bottom-up manner to create local and global redundancy at a level, and the process is iterated. That is the essential idea behind *Hierarchical codes* [7].

For the same example, one may also note that if both $o_1$ and $o_2$ are lost, then repair is no longer possible. This illustrates that the different encoded pieces have unequal importance. Because of such asymmetry, the resilience of such codes has only been studied with simulations in [7].
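The two repair cases walked through above can be reproduced with plain XOR as the addition. This is a minimal sketch of the eight-block example only, not the general construction of [7]; the helper `xor` and the dictionary layout are assumptions made for illustration.

```python
import os
from functools import reduce


def xor(*blocks):
    """Bytewise XOR of equally sized blocks (addition over GF(2))."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# Eight data blocks o1..o8; random bytes stand in for real data.
o = {i: os.urandom(4) for i in range(1, 9)}

# Local redundancy: o1+o2, o3+o4, o5+o6, o7+o8.
local = {(i, i + 1): xor(o[i], o[i + 1]) for i in (1, 3, 5, 7)}

# Next layer: o1+o2+o3+o4 and o5+o6+o7+o8.
upper = {(1, 4): xor(o[1], o[2], o[3], o[4]),
         (5, 8): xor(o[5], o[6], o[7], o[8])}

# Case 1: o1 is lost. The nodes holding o2 and o1+o2 are enough.
repaired_o1 = xor(o[2], local[(1, 2)])

# Case 2: o1 and o1+o2 are both lost. First rebuild o1+o2 from
# o1+o2+o3+o4 and o3+o4, then rebuild o1 as in case 1.
rebuilt_parity = xor(upper[(1, 4)], local[(3, 4)])
repaired_o1_again = xor(o[2], rebuilt_parity)
```

In the first case two nodes are contacted; in the second, the repair climbs one level of the hierarchy and touches three nodes in total.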
In contrast, *Pyramid codes* [14] were designed in a top-down manner, but aiming again to have local and global redundancy to provide better fault-tolerance and improve read performance by trading storage space efficiency for access efficiency. Such local redundancy can naturally be harnessed for efficient repairs as well. A new version of Pyramid codes, where the coefficients used in the encoding have been numerically optimized, namely Locally Reconstructable Codes [15], has more recently been proposed and is being used in the Azure [2] system.

We use an example to illustrate the design of a simple Pyramid code. Take an $EC(11,8)$ MDS code, say a Reed-Solomon code with generator matrix $G$, whose codewords are of the form

$$[x_1, \dots, x_{11}] = [o_1, \dots, o_8, c_1, c_2, c_3].$$

A Pyramid code can be built from this base code by retaining the pieces $o_1, \dots, o_8$ and two of the other pieces (without loss of generality, let's say $c_2, c_3$). Additionally, split the data blocks into two groups $o_1, \dots, o_4$ and $o_5, \dots, o_8$, and compute one more redundancy symbol for each of the two groups: $c_{1,1}$ is obtained by computing $c_1$ with $o_5 = \dots = o_8 = 0$, and $c_{1,2}$ by computing $c_1$ with $o_1 = \dots = o_4 = 0$. This results in an $EC(12,8)$, whose codewords look like

$$[o_1, \dots, o_8, c_{1,1}, c_{1,2}, c_2, c_3],$$

where $c_{1,1} + c_{1,2}$ is equal to the original code's $c_1$:

$$c_{1,1} + c_{1,2} = c_1.$$

For both Hierarchical and Pyramid codes, at each hierarchy level, there is some 'local redundancy' which can repair lost blocks without accessing blocks outside the subgroup, while if there are too many failures within a subgroup, then the 'global redundancy' at that level will be used. One moves further up the pyramid until repair is eventually completed.
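The splitting of $c_1$ into $c_{1,1}$ and $c_{1,2}$ can be illustrated with a toy linear parity. The sketch below works over a small prime field with made-up coefficients `g1`; these values, the modulus `P` and the helper `parity` are assumptions for illustration and are not the generator matrix of any actual Reed-Solomon or Pyramid code.

```python
P = 257  # assumed prime field size for the toy arithmetic
g1 = [3, 5, 7, 11, 13, 17, 19, 23]  # hypothetical coefficients defining c1


def parity(coeffs, data):
    """Linear combination of the data symbols, modulo P."""
    return sum(c * x for c, x in zip(coeffs, data)) % P


data = [10, 20, 30, 40, 50, 60, 70, 80]  # o1..o8

c1 = parity(g1, data)                      # parity of the base code
c1_1 = parity(g1, data[:4] + [0] * 4)      # c1 with o5 = ... = o8 = 0
c1_2 = parity(g1, [0] * 4 + data[4:])      # c1 with o1 = ... = o4 = 0

# Local repair of o3 from its own group only: o1, o2, o4 and c1_1.
partial = sum(g1[i] * data[i] for i in (0, 1, 3)) % P
repaired_o3 = (c1_1 - partial) * pow(g1[2], -1, P) % P  # Python 3.8+
```

The repair of `o3` reads four symbols from its own group, whereas repairing it through the base code's `c1` would require the seven other data symbols as well.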
Use of local redundancy means that a small number of nodes is contacted, which translates into a smaller bandwidth footprint. Furthermore, if multiple isolated (in the hierarchy) failures occur, they can be repaired independently and in parallel.

In contrast to Hierarchical codes, whose resilience has only been studied through simulations, Pyramid codes' top-down approach allows one to discern distinct failure regimes under which data is recoverable, and regimes when data is not recoverable. For instance, in the example above, as long as there are three or fewer failures, the object is always reconstructable. Likewise, if there are five or more failures, then the data cannot be reconstructed. However, there is also an intermediate region, in this simple case the scenario of four arbitrary failures, in which, for certain combinations of failures, data cannot be reconstructed, while for others, it can be.

Coinciding with these works, researchers from the network coding community started studying the fundamental limits and trade-offs of bandwidth usage for regeneration of a lost encoded block vis-a-vis the storage overhead (subject to the MDS constraint), culminating in a new family of codes, broadly known as *regenerating codes*, discussed next.

4 Regenerating codes

The repair of lost redundancy in a storage system can be abstracted as an *information flow graph* [6].

Figure 4(a): Information flow graph for regenerating codes: each storage node is modeled as two virtual nodes, $X_{in}$, which collects an amount $\beta$ of information from each of $d$ arbitrary live nodes while storing a maximum of $\alpha$ amount of information, and $X_{out}$, which is accessed by any data collector contacting the storage node. A max-flow min-cut analysis yields the feasible values for storage capacity $\alpha$ and repair bandwidth $\gamma = d\beta$ in terms of the number $d$ of nodes contacted and code parameters $n, k$, where $d \ge k$.
Figure 4(b): Benefit of collaboration: storage-bandwidth trade-off (plot with axes repair cost $\gamma$ and storage $\alpha$, and curves for $t=1$, $t=4$ and $t=8$). Trade-off curve for the amount of storage space $\alpha$ used per node, and the amount of bandwidth $\gamma$ needed to regenerate a lost node. If multiple repairs $t$ are carried out simultaneously, and the $t$ new nodes at which lost redundancy is being created collaborate among themselves, then better trade-offs can be realized, as can be observed from the plot (done using $k = 32$, $d = 48$; $n$ can be any integer bigger than $d + t$).

Figure 4: The underlying network coding theory inspiring regenerating codes.

First, the data object is encoded using an $EC(n,k)$ MDS code and the encoded blocks are stored across $n$ storage nodes. Each storage node is assumed to store an amount $\alpha$ of data (meaning that the size of an encoded block is at most $\alpha$, since only one object is stored). When one node fails, a newcomer contacts $d \ge k$ live nodes and downloads $\beta$ amount of data from each contacted node in order to perform the repair. If several failures occur, the model [6] assumes that repairs are taken care of one at a time.

Information flows from the data owner to the data collector as follows (see Figure 4(a) for an illustration):

(1) The original placement of the data distributed over $n$ nodes is modeled as directed edges of weight $\alpha$ from the sources (data owners) to the original storage nodes.

(2) A storage node is denoted by $X$ and modeled as two logical nodes $X_{in}$ and $X_{out}$, which are connected with a directed edge $X_{in} \to X_{out}$ with weight $\alpha$ representing the storage capacity of the node. The data flows from the data owner to $X_{in}$, then from $X_{in}$ to $X_{out}$.

(3) The regeneration process consists of directed edges of weight $\beta$ from $d$ contacted live nodes to the $X_{in}$ of the newcomer.
(4) Finally, the reconstruction/access of the whole object is abstracted with edges of weight $\alpha$ to represent the destination (data collector) downloading data from arbitrary $k$ live storage nodes.

Then, the maximum information that can flow from the source to the destination is determined by the min-cut of this graph, via the max-flow min-cut theorem. For the original object to be reconstructible at the destination, this flow needs to be at least as large as the size of the original object.

Figure 5: Regenerating codes: functional versus exact repair. (a) An example of functional repair for $k = 2$ and $n = 4$, adapted from [6]: an object is cut into 4 pieces $o_1, \dots, o_4$, and two linear combinations of them are stored at each node. When the 4th node fails, a new node downloads linear combinations of the two pieces at each node (the number on each edge is the factor that multiplies the encoded fragment), from which it computes two new pieces of data, different from those lost, but such that any $k = 2$ of the 4 nodes still permit object retrieval. (b) An example of exact repair from [24]: an object $o$ is encoded by taking its inner product with 10 vectors $v_1, \dots, v_{10}$, to obtain $o^T v_i$, $i = 1, \dots, 10$, as encoded fragments. They are distributed to the 5 nodes $N_1, \dots, N_5$ as shown. Say node $N_2$ fails. A newcomer can regenerate by contacting every node left, and downloading one encoded piece from each of them, namely $o^T v_1$ from $N_1$, $o^T v_5$ from $N_3$, $o^T v_6$ from $N_4$ and $o^T v_7$ from $N_5$.

Any code that enables the information flow to be actually equal to the object size is called a regenerating code (RGC) [6]. Now, given $k$ and $n$, the natural question is, *what are the minimal storage capacity $\alpha$ and bandwidth $\gamma = d\beta$ needed for repairing an object of a given size?*
This can be formulated as a linear non-convex optimization problem: minimize the total download bandwidth $d\beta$, subject to the constraint that the information flow equals the object size. The optimal solution is a piecewise linear function, which describes a trade-off between the storage capacity $\alpha$ and the repair bandwidth $\gamma = d\beta$ as shown in Figure 4(b) [$t = 1$], and has two distinguished boundary points: the minimal storage repair (MSR) point (when $\alpha$ is equal to the object size divided by $k$), and the minimal bandwidth repair (MBR) point.

The trade-off analysis only determines what can best be achieved, but in itself does not provide any specific code construction. Several codes have since been proposed, most of which operate either at the MSR or MBR points of the trade-off curve, e.g., [24].

The specific codes need to satisfy the constraints determined by the max-flow min-cut analysis, however there is no constraint or need to regenerate precisely the same (bitwise) data as was lost (see Figure 5(a)). When the regenerated data is in fact not the same as that lost, but nevertheless provides equivalent redundancy, it is called *functional regeneration*, while if it is bitwise identical to what was lost, then it is called *exact regeneration*, as illustrated in Figure 5(b). Note that the proof of the storage-bandwidth trade-off determined by the min-cut bound does not depend on the type of repair (functional/exact).

The original model [6] has since been generalized [16, 27] to show that in case of multiple faults, the new nodes carrying out regenerations can collaborate among themselves to perform several repairs in parallel, which was in turn shown to reduce the overall bandwidth needed per regeneration (Figure 4(b), with $t > 1$ representing the number of failures/new collaborating nodes).
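For the single-repair case ($t = 1$), the min-cut condition and the two boundary points can be evaluated directly. The sketch below relies on the standard closed forms from the regenerating codes literature, which this survey does not spell out: the object of size $M$ is recoverable when $\sum_{i=0}^{k-1} \min(\alpha, (d-i)\beta) \ge M$, the MSR point has $\alpha = M/k$ and $\beta = M / (k(d-k+1))$, and the MBR point has $\alpha = d\beta$ with $\beta = 2M / (k(2d-k+1))$. The function names and the normalization $M = k$ are assumptions of this example, and the collaborative case ($t > 1$) is not covered.

```python
from fractions import Fraction


def min_cut(alpha, beta, k, d):
    """Min-cut lower bound on the flow to a data collector (single repairs)."""
    return sum(min(alpha, (d - i) * beta) for i in range(k))


def msr_point(M, k, d):
    """Minimal storage repair point: (alpha, beta)."""
    return Fraction(M, k), Fraction(M, k * (d - k + 1))


def mbr_point(M, k, d):
    """Minimal bandwidth repair point: (alpha, beta), with alpha = d * beta."""
    beta = Fraction(2 * M, k * (2 * d - k + 1))
    return d * beta, beta


# Parameters of the plot in Figure 4(b); M = k is an assumed normalization.
M, k, d = 32, 32, 48
alpha_msr, beta_msr = msr_point(M, k, d)
alpha_mbr, beta_mbr = mbr_point(M, k, d)
gamma_msr = d * beta_msr  # repair bandwidth at the MSR point
gamma_mbr = d * beta_mbr  # repair bandwidth at the MBR point
```

At both points the min-cut equals $M$ exactly, so neither $\alpha$ nor $\beta$ can be lowered further without losing recoverability. Exact fractions are used to keep that equality free of rounding error.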
Instances of codes for the collaborative setting, referred to as collaborative regenerating codes (CRGC), are rarer than classical regenerating codes, and up to now, only a few code constructions are known [27, 28].

(Collaborative) regenerating codes stem from a precise information theoretical characterization. However, they also suffer from algorithmic and system design complexity inherited from network coding, which is larger than that of even traditional erasure codes, apart from the added computational overheads. The value of fan-in $d$ for regeneration also has practical implications. With a high fan-in $d$, even a small number of slow or overloaded nodes can thwart the repairs.

5 Locally repairable codes

The codes proposed in the context of network coding aim at reducing the repair bandwidth, and can be seen as the combination of an MDS code and a network code. Hierarchical and Pyramid codes instead tried to reduce the repair degree or fan-in (i.e., the number of nodes needed to be contacted to repair) by using "erasure codes on top of erasure codes". We next present some recent families of locally repairable codes (LRC) [17, 19, 18, 25], which minimize the repair fan-in $d$, trying to achieve $d \ll k$ such as $d = 2$ or 3. Forcing the repair degree to be small has advantages in terms of repair time and bandwidth, however, it might affect other code parameters (such as its rate, or storage overhead). We will next elaborate a few specific instances of locally repairable codes.

The term "locally repairable" is inspired by [10], where the repair degree $d$ of a node is called the "locality $d$" of a codeword coordinate, and is reminiscent of *locally decodable* and *locally correctable* codes, which are well established topics of study in theoretical computer science.
Self-repairing codes (SRC) [17, 19] were to our knowledge the first $(n, k)$ codes designed to achieve $d = 2$ per repair for up to $\frac{n-1}{2}$ simultaneous failures. Other families of locally repairable codes based on a projective geometric construction (Projective Self-repairing Codes) [18] and on puncturing of Reed-Muller codes [25] have been very recently proposed. Some instances of these latter codes can achieve a repair degree of either 2 or 3.

With $d = 2$, the resources of at most two live nodes may get saturated due to a repair. Thus simultaneous repairs can be carried out in parallel, which in turn provides fast recovery from multiple faults. For example, in Figure 6(a), if the 7th node fails, it can be reconstructed in 3 different ways, by contacting either $N_1, N_5$, or $N_2, N_6$, or $N_3, N_4$. If both the 6th and 7th nodes fail, each of them can still be reconstructed in two different ways. One newcomer can contact first $N_1$ and then $N_5$ to repair $N_7$, while another newcomer can in parallel contact first $N_3$ and then $N_1$ to repair $N_6$.

Figure 6(b) shows another example illustrating how the fan-in can be varied to achieve different repair bandwidths while using SRC. If a node, say $N_5$, fails, then the lost data can be reconstructed by contacting a subset of live nodes. Two different strategies with different fan-ins $d = 2$ and $d = 3$, and correspondingly different total bandwidth usage, are shown to demonstrate some of the flexibility of the regeneration process. Notice that the optimal storage-bandwidth trade-off of regenerating codes does not apply here, since the constraint $d \geq k$ is relaxed. Thus better trade-off points in terms of total bandwidth usage for a repair can also be achieved (not illustrated here, see [18] for details).

Recall that if a node can be repaired with $d < k$ other nodes then there exist dependencies among them.
The data object can be recovered only out of $k$ independent encoded pieces, and hence when the $k$ nodes include $d + 1$ nodes with mutual dependency, the data cannot be recovered from them. LRCs however allow recovery of the whole object using many specific combinations of $k$ encoded fragments.

Figure 6: Self-repairing codes. (a) An example of self-repairing codes from [17]: the object $o$ has length 12, and encoding is done by taking linear combinations of the 12 pieces as shown, which are then stored at 7 nodes. (b) An example of self-repairing codes from [18]: the object $o$ is split into four pieces, and xor-ed combinations of these pieces are generated. Two such pieces are stored at each node, over a group of five nodes, so that contacting any two nodes is adequate to reconstruct the original object. Furthermore, systematic pieces are available in the system, which can be downloaded and just appended together to reconstruct the original data.

From the closed form and numerical analyses of [17] and [18], respectively, one can observe that while there is some deterioration of the static resilience (see footnote 7) with respect to MDS codes of equivalent storage overhead, the degradation is rather marginal. This can alternatively be interpreted as follows: for a specific desired value of fault-tolerance, the storage overhead for using LRC is negligibly higher than that of MDS codes. An immediate caveat needed at this juncture is that the rates of the known instances of locally repairable codes in general, and self-repairing codes in particular, are pretty low, and much higher rates are desirable for practical usage.

Footnote 7: Static resilience is a metric to quantify a storage system's ability to tolerate failures based on its original configuration, assuming that no repairs to compensate for failures are carried out.
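The interplay between repair with $d = 2$ and the resulting dependencies among encoded pieces can be made concrete with a toy binary code. The sketch below is not the code of Figure 6: it is a hypothetical $(7, 3)$ construction in which node $i$ (labelled 1 to 7) stores the xor of the original pieces selected by the binary digits of $i$. The labels, function names and piece values are assumptions made only for illustration.

```python
from itertools import combinations

K = 3                           # number of original pieces (toy value)
NODES = list(range(1, 2 ** K))  # hypothetical node labels 1..7

def encode(pieces):
    """Node i stores the XOR of the pieces selected by the binary digits of i."""
    stored = {}
    for i in NODES:
        value = 0
        for bit in range(K):
            if (i >> bit) & 1:
                value ^= pieces[bit]
        stored[i] = value
    return stored

def repair_pairs(lost):
    """All pairs of live nodes whose XOR equals the content of node `lost`."""
    return [(a, a ^ lost) for a in NODES if a != lost and a < (a ^ lost)]

def rank_gf2(vectors):
    """Rank over GF(2) of integers read as bit vectors (XOR basis reduction)."""
    basis = []
    for v in vectors:
        for b in basis:
            v = min(v, v ^ b)
        if v:
            basis.append(v)
    return len(basis)

def decodable_subsets():
    """All k-subsets of nodes from which the whole object can be rebuilt."""
    return [s for s in combinations(NODES, K) if rank_gf2(s) == K]

pieces = [0b1010, 0b0110, 0b1111]   # arbitrary example data
stored = encode(pieces)
for a, b in repair_pairs(7):
    assert stored[a] ^ stored[b] == stored[7]   # repair of node 7 with d = 2

print(repair_pairs(7))           # [(1, 6), (2, 5), (3, 4)]
print(len(decodable_subsets()))  # 28
```

In this toy code every node has three disjoint repair pairs, and 28 of the 35 possible 3-subsets of nodes suffice to rebuild the object; the 7 remaining subsets are exactly those formed by a node together with one of its repair pairs.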
The static resilience of higher-rate locally repairable codes, if and when such codes are invented, will need to be revisited to determine their utility. Such trade-offs are yet to be fully understood, though some early works have recently been carried out [10, 13].

6 Cross-Object Coding

All the coding techniques we have seen so far address the repairability problem at the granularity of isolated objects that are stored using erasure coding. However, a simple heuristic of superimposing two codes, one over individual objects, and another across encoded pieces from multiple objects [4], as shown in Figure 7, can provide good repairability properties as well.

Consider $m$ objects $O_1, \ldots, O_m$ to be stored. For $j = 1, \ldots, m$, object $O_j$ is erasure encoded into $n$ encoded pieces $e_{j1}, \ldots, e_{jn}$, to be stored in $mn$ distinct storage nodes. Additionally, parity groups formed by $m$ encoded pieces (with one encoded piece chosen from each of the $m$ objects) can be created, together with a parity piece (or xor), where w.l.o.g. a parity group is of the form $e_{1l}, \ldots, e_{ml}$ for $l = 1, \ldots, n$, and the parity piece $p_l$ is $p_l = e_{1l} + \ldots + e_{ml}$. The parity pieces are then stored in $n$ additional distinct storage nodes. Such additional redundancy is akin to RAID-4. This code design, called redundantly grouped coding, is similar to a two-dimensional product code [8] in that the coding is done both horizontally and vertically. In the context of RAID systems, a similar strategy has also been applied to create intra-disk redundancy [5].
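The vertical parity of redundantly grouped coding can be sketched in a few lines. The example below assumes that the horizontal $(n, k)$ erasure coding has already been applied, so the encoded pieces $e_{jl}$ are simply given as integers standing in for bit strings, and the addition in $p_l$ is taken to be a bitwise xor; the function names and data values are illustrative only.

```python
from functools import reduce
from operator import xor

def parity_pieces(encoded):
    """Compute p_l = e_1l + ... + e_ml (bitwise XOR) for every column l.

    encoded[j][l] is the l-th encoded piece of object j (already erasure coded).
    """
    n = len(encoded[0])
    return [reduce(xor, (obj[l] for obj in encoded)) for l in range(n)]

def repair_with_parity(encoded, parity, j, l):
    """Rebuild the lost piece e_jl from the m - 1 other pieces of column l
    and the parity piece p_l, i.e. with a fan-in of m."""
    others = (encoded[i][l] for i in range(len(encoded)) if i != j)
    return reduce(xor, others, parity[l])

# m = 3 objects, each already encoded into n = 4 pieces (made-up values).
encoded = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
]
parity = parity_pieces(encoded)
print(parity)                                     # [13, 14, 15, 0]
print(repair_with_parity(encoded, parity, 1, 2))  # 7
```

A single lost piece is thus repaired by contacting $m$ nodes (the $m - 1$ surviving pieces of its parity group plus the parity piece), regardless of the values of $n$ and $k$ used for the horizontal code.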
The design objectives here are somewhat different from those of product codes and intra-disk redundancy, namely: (i) the horizontal layer of coding primarily achieves fault-tolerance by using an $(n, k)$ erasure coding of individual objects, while (ii) the vertical single parity check code mainly enables cheap repairs (by choosing a suitable $m$) by creating a RAID-4 like parity of the erasure encoded pieces from different objects.

Figure 7: Redundantly grouped coding: a horizontal layer of coding is performed on each object using an $(n, k)$ code, while a parity bit is computed vertically across $m$ objects, where $m$ is a design parameter.

The number of objects $m$ that are cross-coded indeed determines the fan-in for repairing isolated failures independently of the code parameters $n$ and $k$. If $m < k$, it can be shown that the probability that more than one failure occurs per column is small, and thus repair using the parity bit is often enough, resulting in cheaper repairs, while relatively infrequently repairs may have to be performed using the $(n, k)$ code. The choice of $m$ determines trade-offs between repairability, fault-tolerance and storage overheads, which have been formally analyzed in [4]. Somewhat surprisingly, the analysis demonstrates that for many practical parameter choices, this cross-object coding achieves better repairability while retaining fault-tolerance equivalent to that of maximum distance separable erasure codes incurring equivalent storage overhead. Such a strategy also leads to other practical concerns as well as opportunities, such as the issues of object deletion or updates, which need further rigorous investigation before considering it as a practical option.

7 Preliminary comparison of the codes

The coding techniques presented in this paper have so far undergone only partial evaluation and benchmarking, and more rigorous evaluation of even the stand-alone approaches is ongoing work for most.
Thus, it is somewhat premature to provide results from any comparative study, though some preliminary works on the same have also recently been carried out [22], taking into consideration realistic settings where multiple objects are collocated in a common pool of storage nodes, and multiple storage nodes may potentially fail simultaneously, all creating interferences between the different repair operations competing for the limited and shared network resources. Instead, we give one example of a theoretical result by considering the repair bandwidth per repair in the presence of multiple failures for some of these codes, and we provide an overview of what a system designer may expect from all these codes in Table 1. We further enumerate several other metrics that need to be studied to better understand their applicability.

| code family | main design objective | MDS | fan-in $d$ | simultaneous repairs | bandwidth per repair |
|---|---|---|---|---|---|
| EC/RS | noisy channels | yes | $k$ | $\leq n-k$ | $1 + \frac{k-1}{t}$ |
| RGC [6] | min. repair bandwidth | yes | $\geq k$ | $1$ | $\frac{d}{d-k+1}$ |
| CRGC [27, 16] | min. repair bandwidth | yes | $\geq k$ | $t$ | $\frac{d+t-1}{d-k+1}$ |
| SRC [17] | min. fan-in | no | $2$ | $\leq \frac{n-1}{2}$ | $2$ |
| Pyramid [14] | localize repairs (probabilistically) | no | depends | depends | depends |
| Hierarchical [7] | localize repair (probabilistically) | no | depends | depends | depends |
| Cross-object coding [4] | constant repair fan-in (probabilistically) | no | depends: $m$ or $k$ | depends | depends: $m$ or $k$ |

Table 1: Code design overview. We mark some of the metrics as ‘depends’ to signify that the corresponding value depends on the specific fault pattern and possibly on the code parameters. A case in point is general Pyramid or Hierarchical codes. They have several parameters, the details of which we have not delved into in this high level survey. But one can already note from the simple Hierarchical code example discussed in this paper that parallel repairs may sometimes be possible (for instance when $o_1$ and $o_4$ fail simultaneously), that repairs may have to be done in a serialized manner in other cases (for example, if $o_1$ and $o_1 + o_2$ fail simultaneously), and that repair may be impossible in yet other scenarios (such as when $o_1$ and $o_2$ fail simultaneously). The other aspects of repair likewise may vary, depending on the failure pattern as well as the code parameters.

Figure 8: Comparison among traditional erasure codes, regenerating codes and self-repairing codes (derived theoretically in [17]): average traffic normalized with $B/k$ per lost block for various choices of $x$ ($B$ is the size of the stored object) for $(n = 31, k = 8)$ encoding schemes. Horizontal axis: available nodes $x$; vertical axis: repair traffic per lost block; curves: $\gamma_{prl}$, $\gamma_{seq}$, $\gamma_{eclazy}$, $\gamma_{MSRGC}$ ($d = k+1$) and $\gamma_{MSRGC}$ ($d = k+2$). For parallel repairs using erasure codes the traffic is $k = 8$ (not shown). The SRC code parameters are denoted as SRC(n,k).

One would not in practice allow failures to accumulate indefinitely, and instead a regeneration process will have to be carried out. If this regeneration is triggered when precisely $x$ out of the $n$ storage nodes are still available, then the total bandwidth cost to regenerate each of the $n - x$ failed nodes is depicted in Figure 8. Note that delayed repair where multiple failures are accumulated may be a design choice, as in P2P systems with frequent temporary outages, or an inevitable effect of correlated failures where multiple faults accumulate before the system can respond. For locally repairable codes such as SRC the repairs can be done in sequence or in parallel, denoted $\gamma_{seq}$ and $\gamma_{prl}$ respectively in the figure. This is compared with MDS erasure codes ($\gamma_{eclazy}$) when the repairs are done in sequence, as well as with RGC codes at the MSR point ($\gamma_{MSRGC}$) for a few choices of $d$. The bandwidth need has been normalized with the size of one encoded fragment.
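The closed-form entries in the bandwidth column of Table 1 can be evaluated directly for the $(n = 31, k = 8)$ setting of Figure 8. The sketch below is only an illustration: it assumes that the entries are expressed in units of one encoded fragment, that $t$ denotes the number of failures repaired together, and that the constant value 2 for SRC applies only up to $\frac{n-1}{2}$ failures, as stated in the table. The function names are hypothetical.

```python
from fractions import Fraction

def ec_lazy_bandwidth(k, t):
    """Erasure code with t accumulated failures: 1 + (k - 1) / t per repair."""
    return 1 + Fraction(k - 1, t)

def rgc_msr_bandwidth(k, d):
    """Regenerating code at the MSR point: d / (d - k + 1) per repair."""
    if d < k:
        raise ValueError("regenerating codes require d >= k")
    return Fraction(d, d - k + 1)

def src_bandwidth(n, t):
    """Self-repairing code: constant 2, stated for up to (n - 1) / 2 failures."""
    if t > (n - 1) // 2:
        raise ValueError("outside the range covered by the Table 1 entry")
    return Fraction(2)

n, k = 31, 8
print(rgc_msr_bandwidth(k, k + 1))   # 9/2
print(rgc_msr_bandwidth(k, k + 2))   # 10/3

# Smallest number of accumulated failures for which lazy erasure-code
# repair needs less traffic per repair than the constant 2 of SRC.
first_t = next(t for t in range(1, (n - 1) // 2 + 1)
               if ec_lazy_bandwidth(k, t) < src_bandwidth(n, t))
print(first_t)                       # 8
```

With these parameters, lazy repair of an erasure code only undercuts the SRC value once at least 8 failures have accumulated, while a single erasure-code repair costs $k = 8$ fragments.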
We notice that up to a certain point, self-repairing codes have the least (and a constant of 2) bandwidth need for repairs, even when they are carried out in parallel. For a larger number of faults, the absolute bandwidth usage for traditional erasure codes and regenerating codes is lower than that of self-repairing codes. However, given that erasure codes and regenerating codes need to contact $k$ and $d \geq k$ nodes respectively, some preliminary empirical studies have shown the regeneration process for such codes to be slow [21], which can in turn make the system vulnerable. In contrast, because of an extremely small fan-in $d = 2$, self-repairing codes can support fast and parallel repairs [17] while dealing with a much larger number of simultaneous faults. Comparison with some other codes such as hierarchical and pyramid codes has been excluded here due to the lack of necessary analytical results, as well as the fact that the different encoded pieces have asymmetrical importance, and thus just the number of failures does not adequately capture the system state for such codes.

Given that repair processes run continuously or as and when deemed necessary, the static resilience is not the most relevant metric of interest for storage system designers. Often, another metric, namely mean time to data loss (MTTDL), is used to characterize the reliability of a system. MTTDL is determined by taking into account the cumulative effect of the failures along with that of the repair processes. For the novel codes discussed in this manuscript, such a study of MTTDL is yet to be carried out in the literature. However, a qualitative remark worth emphasizing is that, precisely because of better repair characteristics such as fast repairs, some of these codes are likely to improve MTTDL significantly.
Whether the gains outweigh the drawbacks, such as the lack of the MDS property (and consequent poorer static resilience), is another open issue.

8 Concluding remarks

There is a long tradition of using codes for storage systems. This includes traditional erasure codes as well as turbo and low density parity check (LDPC) codes coming from communication theory, rateless (digital fountain and tornado) codes originally designed for content distribution centric applications, or locally decodable codes emerging from the theoretical computer science community, to cite a few. The long believed mantra in applying codes for storage has been ‘the storage device is the erasure channel’. Such a simplification ignores the maintenance process in NDSS for long term reliability. This realization has led to a renewed interest in designing codes tailor-made for NDSS. This article surveys the major families of novel codes which emphasize primarily better repairability. There are many other system aspects which influence the overall performance of these codes and which are yet to be benchmarked. This high level survey is aimed at exposing the recent theoretical advances, providing a single and easy point of entry to the topic. Those interested in further mathematical details depicting the construction of these codes may refer to a longer and more rigorous survey [20] in addition to the respective individual papers.

Acknowledgement

A. Datta's work was supported by MoE Tier-1 Grant RG29/09. F. Oggier's work was supported by the Singapore National Research Foundation under Research Grant NRF-CRP2-2007-03.

References

[1] Apache.org. HadoopFS-RAID. http://wiki.apache.org/hadoop/HDFS-RAID, 2012.
[2] B. Calder, et al., “Windows Azure Storage: a highly available cloud storage service with strong consistency”, Twenty-Third ACM Symposium on Operating Systems Principles, SOSP 2011.
[3] Y. Cassuto, J. Bruck, “Low-Complexity Array Codes for Random and Clustered 4-Erasures”, IEEE Transactions on Information Theory, 01/2012.
[4] A. Datta and F. Oggier, “Redundantly Grouped Cross-object Coding for Repairable Storage”, Asia-Pacific Workshop on Systems, APSys 2012.
[5] A. Dholakia, E. Eleftheriou, X-Y. Hu, I. Iliadis, J. Menon, K.K. Rao, “A new intra-disk redundancy scheme for high-reliability RAID storage systems in the presence of unrecoverable errors”, ACM Transactions on Storage, 2008.
[6] A. G. Dimakis, P. B. Godfrey, Y. Wu, M. Wainwright and K. Ramchandran, “Network Coding for Distributed Storage Systems”, IEEE Transactions on Information Theory, Vol. 56, Issue 9, Sept. 2010.
[7] A. Duminuco, E. Biersack, “Hierarchical Codes: How to Make Erasure Codes Attractive for Peer-to-Peer Storage Systems”, Eighth International Conference on Peer-to-Peer Computing, P2P 2008.
[8] P. Elias, “Error-free coding”, Transactions on Information Theory, vol. 4, no. 4, September 1954.
[9] S. Ghemawat, H. Gobioff, S-T. Leung, “The Google file system”, ACM Symposium on Operating Systems Principles, SOSP 2003.
[10] P. Gopalan, C. Huang, H. Simitci, S. Yekhanin, “On the locality of codeword symbols”, Electronic Colloquium on Computational Complexity (ECCC), vol. 18, 2011.
[11] K. M. Greenan, X. Li, J. J. Wylie, “Flat XOR-based erasure codes in storage systems: constructions, efficient recovery, and tradeoffs”, IEEE Conference on Massive Data Storage, 2010.
[12] J. L. Hafner, “WEAVER codes: highly fault tolerant erasure codes for storage systems”, 4th USENIX Conference on File and Storage Technologies, FAST 2005.
[13] H. D. L. Hollmann, “Storage codes - coding rate and repair locality”, International Conference on Computing, Networking and Communications, ICNC 2013.
[14] C. Huang, M. Chen, and J. Li, “Pyramid Codes: Flexible Schemes to Trade Space for Access Efficiency in Reliable Data Storage Systems”, Sixth IEEE International Symposium on Network Computing and Applications, NCA 2007.
[15] C. Huang, H. Simitci, Y. Xu, A. Ogus, B. Calder, P. Gopalan, J. Lin, S. Yekhanin, “Erasure Coding in Windows Azure Storage”, USENIX Annual Technical Conference, USENIX ATC 2012.
[16] A.-M. Kermarrec, N. Le Scouarnec, G. Straub, “Repairing Multiple Failures with Coordinated and Adaptive Regenerating Codes”, The 2011 International Symposium on Network Coding, NetCod 2011.
[17] F. Oggier, A. Datta, “Self-repairing Homomorphic Codes for Distributed Storage Systems”, The 30th IEEE International Conference on Computer Communications, INFOCOM 2011. Extended version at
[18] F. Oggier, A. Datta, “Self-Repairing Codes for Distributed Storage – A Projective Geometric Construction”, IEEE Information Theory Workshop, ITW 2011.
[19] F. Oggier, A. Datta, “Homomorphic Self-Repairing Codes for Agile Maintenance of Distributed Storage Systems”,
[20] F. Oggier, A. Datta, “Coding Techniques for Repairability in Networked Distributed Storage Systems”, http://sands.sce.ntu.edu.sg/CodingForNetworkedStorage/pdf/longsurvey.pdf, September 2012.
[21] L. Pamies-Juarez, E. Biersack, “Cost Analysis of Redundancy Schemes for Distributed Storage Systems”, arXiv:1103.2662, 2011.
[22] L. Pamies-Juarez, F. Oggier, A. Datta, “An Empirical Study of the Repair Performance of Novel Coding Schemes for Networked Distributed Storage Systems”, arXiv:1206.2187, 2012.
[23] D. A. Patterson, G. Gibson, R. H. Katz, “A case for redundant arrays of inexpensive disks (RAID)”, ACM SIGMOD International Conference on Management of Data, 1988.
[24] K. V. Rashmi, N. B. Shah, P. Vijay Kumar, K. Ramchandran, “Explicit Construction of Optimal Exact Regenerating Codes for Distributed Storage”, Allerton 2009.
[25] A. S. Rawat, S. Vishwanath, “On Locality in Distributed Storage Systems”, IEEE Information Theory Workshop, ITW 2012.
[26] I. S. Reed, G. Solomon, “Polynomial Codes Over Certain Finite Fields”, Journal of the Society for Industrial and Appl. Mathematics, no 2, vol 8, SIAM, 1960.
[27] K. W. Shum, “Cooperative Regenerating Codes for Distributed Storage Systems”, IEEE International Conference on Communications, ICC 2011.
[28] K. W. Shum, Y. Hu, “Cooperative Regenerating Codes”, arXiv:1207.6762, 2012.
[29] I. Tamo, Z. Wang, J. Bruck, “MDS Array Codes with Optimal Rebuilding”, arXiv:1103.3737, 2011.
