Training Effective Node Classifiers for Cascade Classification

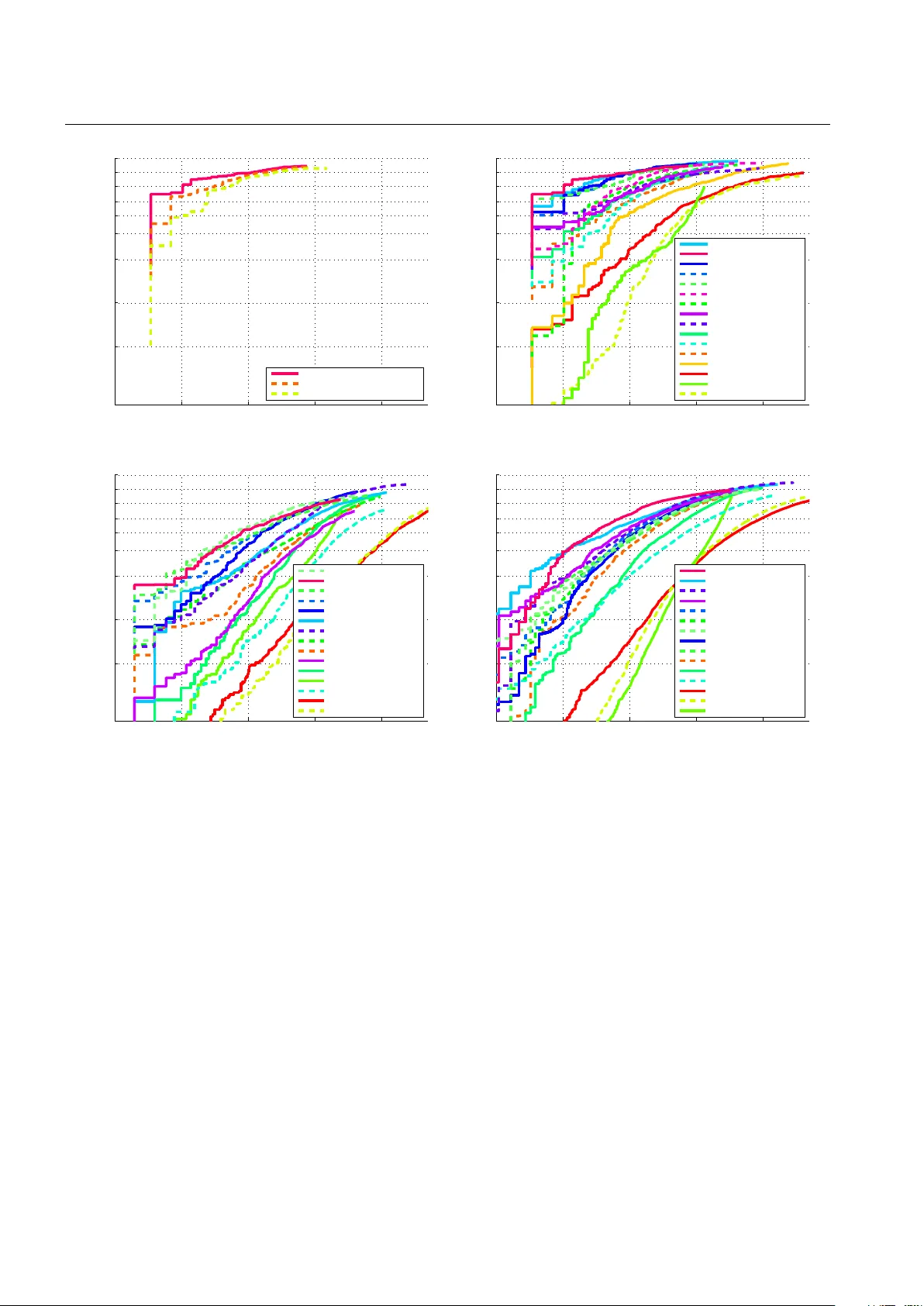

Cascade classifiers are widely used in real-time object detection. Unlike conventional classifiers, which are designed to achieve a low overall classification error rate, the classifier in each node of a cascade is required to achieve an extremely hig…

Authors: Chunhua Shen, Peng Wang, Sakrapee Paisitkriangkrai
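The strict per-node requirement mentioned in the abstract follows from how a cascade combines its nodes. A window is accepted only if every node accepts it, so the overall rates are products of the per-node rates, each measured on the windows that reach that node. The sketch below shows this standard cascade arithmetic. It is not the authors' training method. The function name `cascade_rates` and the 20-node, 0.99/0.5 figures are hypothetical and chosen only for illustration.

```python
from math import prod  # Python 3.8+


def cascade_rates(node_detection_rates, node_false_positive_rates):
    """Overall detection and false positive rates of a cascade.

    Each rate is a node's rate on the windows that reach that node.
    A window must pass every node to be accepted, so the overall
    rates are the products of the per-node rates.
    """
    detection = prod(node_detection_rates)
    false_positive = prod(node_false_positive_rates)
    return detection, false_positive


# Hypothetical 20-node cascade: each node keeps 99% of true objects
# but rejects only half of the background windows that reach it.
n_nodes = 20
D, F = cascade_rates([0.99] * n_nodes, [0.5] * n_nodes)
print(f"overall detection rate:      {D:.3f}")
print(f"overall false positive rate: {F:.2e}")
```

Even a 99% per-node detection rate leaves only about 82% of objects after 20 nodes. Each node can therefore afford to reject only a modest share of background windows, provided it almost never rejects an object.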