How to calculate the practical significance of citation impact differences? An empirical example from evaluative institutional bibliometrics using adjusted predictions and marginal effects

Evaluative bibliometrics is concerned with comparing research units by using statistical procedures. According to Williams (2012) an empirical study should be concerned with the substantive and practical significance of the findings as well as the si…

Authors: Lutz Bornmann, Richard Williams

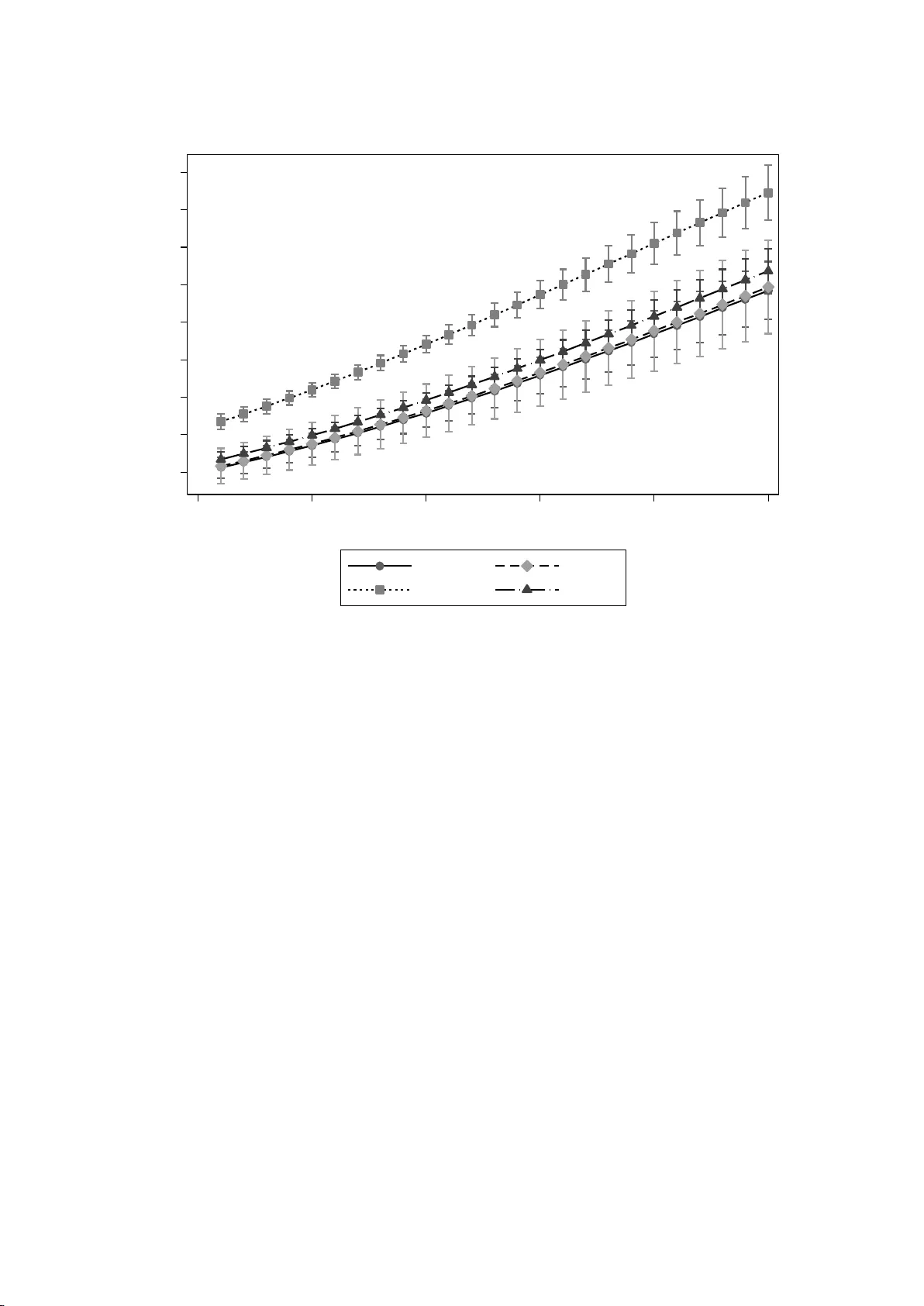

How to calcu late the prac tical significa nce of cita tion im pact differences? An em pirical exam ple from evaluative instituti onal bibliom etrics usin g adjusted predictions and m arginal effec ts Lutz Bornmann* and Richard Williams** *Division for Science and Innovation Studies Administrative Headquarters of the Max Planck Society Hofgartenstr. 8, 80539 Munich, Germany. E-mail: bornmann@gv.mpg.de **Department of Sociology 810 Flanner Hall University of Notre Dame Notre Dame, IN 46556 USA E-mail: Richard.A.Williams.5@ND.Edu Web Page: http://www.nd.edu/~rwilliam/ 2 Abstract Evaluative bibliometrics is concerned with comparing researc h units b y using statistical procedures. According to Williams (2012) an empirical study should be concerned with the substantive and practical significance of the findings as well as the sign and statistical significance of effects. In this study we will explain what adjusted predictions and marginal effects are and how useful they are for institutional eva luative bibliometrics. As an illustration, we will calculate a r egression model using publications (and citation data) produced by four universities in German-speaking countries from 1980 to 2010. We wil l show how these predictions and effects can be e stimated and plotted, and how this makes it far easier to get a practical feel for the substantive meaning of re sults in evaluative bibliometric studies. We will focus particularly on Average Adjusted Predictions (AAPs), Average Marginal Effects (AMEs), Adjusted Predictions at Representative Values (APRVs) and Marginal Effects at Representative Values (MERVs). Key words Evaluative bibliometrics; Practical significance; Highly-cited papers; Average adjusted predictions ; Average marginal effects ; Adjusted predictions at representative values ; Marginal effects at representative values 3 1 Introduction Evaluative bibliometrics is concerned with comparing research units: Has Researc her 1 performed better during his or her career so far than Researche r 2? Has University 1 achieved a higher citation impact over the la st five years than University 2? Good ex amples of comparative evaluations are the Le iden Ranking 2011/2012 (Waltman e t al., 2012) and the SCImago Institutions Ranking (SCImago Reseach Group, 2012), in which different bibliometric indicators are used to compare higher educa tion institutions and research- focused institutions. As well as assessing the research output (measured by the number of publications), the evaluations measure primarily the citation impact, an importa nt aspect of research quality. If sophisticated methods are emplo ye d in the evaluation, field and age normalised indicators are used to measure the citation impact. We consider PP top 10% to be currently the bibliometric indicator which should be preferred in the evaluation of institutions. PP top 10% is the proportion of an institution’s publications which belong to the top 10% most frequently cited publications; a publication belongs to the top 10% most frequently cited if it is cited more frequently than 90% of the publications published in the same field a nd in the same year. PP top 10% is seen as the most important indica tor in the Leiden Ranking by the Centre for Science and Technology Studies (Leiden University , The Netherlands): “ We therefore regard the PP top 10% indicator as the most important impac t indicator in the Leiden Ranking” (Waltman, et al., 2012). For an evaluation study, a population, defined as the whole bibliometric data for an institution, is usually split up into natural, non-overlapping groups such as different publication years (Bornmann & Mutz, 2013). Such groups provide for clusters in a two-stage sampling design (“cluster sampling”), in w hich, firstl y , one single cluster is randomly selected from a set of clusters (Levy & Le meshow, 2008). For example, for an evaluation stud y , the clusters would consist of ten consecutive publication years (e.g. cluster 1: 1971 to 1980; 4 cluster 2: 1981 to 1990 …). Secondly, all the bibliometric data ( publications and corresponding metrics) is ga thered (census) for the selected cluster (e.g. cluster 2). W altman, et al. (2012) include the 2005-2009 cluster in the Leiden Ranking 2011/2012 mentioned above. With statistical tests it is possible to verify the statistical significance of results (such as performance differences betwee n two universities) on the basis of a cluster sample. If a statistical test which looks at the difference between two institutions with reg ard to their performances turns out to be statistically significant, it can be a ssumed that the difference has not arisen by chance, but can be interpreted beyond the data at hand (the results can be related to the population). According to Williams (2012) a study should be concerned with the substantive and practical significance of the finding s as well as the sign and statistical significance of effects. Unfortunately, for many techniques, such as logistic regression, the practical significance of a finding may be difficult to determine f rom the model coefficients alone. For example, if the coefficient for X1 is .7, we may be able to easily determine that the effect of X1 is positive and statistically significant. But, it is much harde r to tell whether those with higher scores on X1 are slightly more likely to experience an event, moderately more likely, or much more likely. Further complicating things is that, in log istic regression, the effect that increases in X1 will have on the probability of an event occurring will vary with the values of the other variables in the model. For example, Williams (2012) shows that the effect of race on the likelihood of having diabetes is very small at young ages, but steadily incr eases at older ages. Hence, a s Long and Freese (2006) show, results can often be made more tangible by computing predicted/expected values for hypothetical or prototypica l cases. For example, if we want to get a practical feel for the perf ormance differences between two universities i n a logistic regression model, we mig ht compare the predicted probabilities of P top 10% for two publications (from the different universities) which both have low, average, and/or hig h values for other variables in the model which might have an effect on citation impact (e.g. 5 publication in low versus high impact journals). Such predictions are sometimes referred to as margins, predictive margins, or (our preferred terminology) adjusted predictions. Another useful aid to interpretation are marginal effec ts, which can, for example, show succinctl y how the adjusted predictions for university 1 differ from the adjusted pre dictions for universit y 2. In this study we will explain what adjusted predictions and marginal effects are a nd how useful they are for institutional evaluative bibliometrics. As an illustration, we will calculate a regression model using publication and citation data for four universities (univ1, univ 2, univ 3, and univ 4). We will show how these predictions and effects can be estimated and plotted, and how this makes it far easier to ge t a practical feel for the substantive meaning of results in evaluative bibliometric studies. We will focus particularly on Average Adjusted Predictions (AAPs), Average Marginal Effec ts (AMEs), Adjusted Predictions at Representative Values (APRVs) and Marginal Effec ts at Representative Values (MERVs). 2 Methods 2.1 Description of the data set and the variables Publications produced by four universities in German-speaking countries from 1980 to 2010 are used as data (see Table 1). The data was obtained from InCites (Thomson Reuters). InCites (http://incites.thomsonreuters.com/) is a web-based research evaluation tool allowing assessment of the productivity and citation impact of institutions. The metrics (such a s the percentiles for each individual publication) are generated from a dataset of 22 million Web of Science (WoS, Thomson Reuters) publications from 1980 to 2010. The calculation of PP top 10% or the determination of the top 10% most cited publica tions (P top 10% ) is based on percentile data. Table 1 about here Percentiles are defined by Thomson Reuters as follows: “The percentile in which the paper ranks in its category and database ye ar, based on total citations received by the paper. 6 The higher the number [of] citations, the smaller the percentile number [is]. The maximum percentile value is 100, indicating 0 cites rece ived. Only article types articl e, note, and review are used to determine the perce ntile distribution, and onl y those sa me article types eceive a percentile value. If a journal is classified into more than one subject area, the percentile is based on the subject area in which the paper performs best, i.e. the lowest value ” http://incites.isiknowledge.com/common/help/h_glossary .html). Since in a departure from convention low percentile values mean high citation impact (and vice versa), the percentiles received from InCites are called “inverted pe rcentiles.” To identif y P top 10% , publications from the universities with an inverted percentile smaller than or e qual to 10 are coded as 1; publications with an inverted percentile greater than 10 are coded as 0. As Table 1 shows, PP top 10% for all the universities is 20.7%. The universities thus have a 10.7% higher PP top 10% tha n one could expect were one to put together a sample consisting of percentiles for publications randomly in InCites (the e xpected value is therefore 10). As the distribution of publications over the univer sities in Table 1 shows, there are many more publications for univ 3 and univ 4 than for univ 1 and univ 2. In addition to the universities, other independent variables which have been shown in other studies to influence the citation impact of publications have been included in the regression model (see the overview in Bornmann & Daniel, 2008): (1) The more authors a publication has and the longer it is, the greater its citation impact. (2) According to Bornmann, Mutz, Marx, Schier, and Daniel (2011) a manuscript is more likely to be cited if it is published in a reputable journa l rather than in a journal with a poor reputation (see also Lozano, Larivière, & Gingras, 2012 ; van Raan, 2012). We include the Journal Impact Factor (JIF) a s a measure of the reputation of a journal here. The JIF is a quotient from the sum of citations for a journa l in one year and the publications in this journal in the previous two years (Garfield, 2006). In addition to the three factors that influence citation impact discussed above, we include three more variables. Although the influence of these varia bles is intended to be 7 reduced with the use of percentiles (a field a nd age normalised citation impact value where the document type is also controlled), we want to test in this study whether they nevertheless have an impact on the result. (3) First of the three variables is the subje ct area: The main categories of the Organisation for Economic Co-operation and Development (2007; OECD) are used as a subject area scheme for this study. The OECD scheme provides six broad subject categories for WoS data: (i) Natural Sciences, (ii) Engineering and Technology, (iii) Medical and Health Sciences, (iv) Agricultural Sciences, (v) Social Sciences, and (vi) Humanities. As the numbers in Table 1 show, the publications of the four universities belong to only three subject areas: (i) Natural Sciences, (ii) Engineering and Technology, and (iii) Medical and Health Sciences 1 . (4) The document types included in the study are articles, notes, proceedings papers (published in journals) and reviews. Reviews are usually cited more often than research papers, as they summarise the status of a research subject or a rea. Since arti cles as a rule have more research results than notes, we expect that they will have a higher citation impact. Proceedings papers will probably turn out to be less common than highl y cited publications as these papers are very often published in an identical form as articles. (5) The final independent variable included in the regression model is the publication y ear (coded in reverse order so that higher values indicate a n older publication, so that 1 = 2010 and 31 = 1980). Regarding this variable, we expect that the opportunity f or publications to be cited very frequently increases over time. 2 The reason for including these variables in this study is not primaril y in order to answer content-related questions (such as the extent of the influence of certain factors on 1 Only a few dozen articles were from other fields of stud y. They were deleted fro m the anal ysis. 2 Table 1 also makes clear that there is tremendous variab ility across publicatio ns in their number of aut hors and in their length. While the a verage publication has 4.2 authors, the nu mber of authors across publication s ranges between 1 and 23. Even more extreme, while the avera ge publication is only 7.7 pages long, the publicatio ns vary anywhere bet ween 1 page and 160 pages in length. In our later analyses we will primaril y f ocus on comparing universities acros s the ranges of values t hat tend to occur in pr actice, but we will also note the implications of our models for publications with more extreme value s. 8 citation impact). Regarding some factors influe ncing citation impact, other more suitable variables have already been proposed: Bornmann, et al. (2011) use, for example, the Normalized Journal Position (NJP) instead of the JIF, with which the importance of a journal can be determined within its subject area – which is not the case with the JIF. The JIF doe s not offer this subject normalisation but it is specified for each publication in InCites, unlike the NJP. We would like to use the varia bles included to show the way in which the substantive and practical significant of findings can be determined in addition to statistical significance. 2.2 Software The statistical software package Stata 12 (http://www.stata.com/) is used in this analysis; in particular, we make heavy use of the Stata commands log it, margins, and marginsplot. 2.3 Analytic Strategy To identify citation impact differenc es between the four universities, we begin b y estimating a series of multivariate logistic regression models (Hardin & Hilbe, 2012 ; Hosmer & Lemeshow, 2000 ; Mitchell, 2012). Such models are appropriate for the analysis of dichotomous (or binary) responses. Dichotomous responses arise when the outcome is the presence or absence of an event (Rabe-Hesketh & Everitt, 2004). In this study, the binary response is coded as 1 for P top 10% (the document is among the top 10% in citations of all documents) and as 0 otherwise. We then show how various types of Adjusted Predictions and Marginal Effects can make the results for both discrete and continuous variables far more easy to understand and interpret. 3 Results Logistic Regression models 9 Table 2 shows the results for the baseline regression model (model 1) which includes only the universities (and no other variables). As the results show, univ 2, univ 3 and univ 4 have significant fewer highly cited publications than does univ 1 (the reference category). Model 2 includes the possible variables of influence on cita tion impact in addition to the university variable. It is interesting to see that the differe nces between universities change substantially with the inclusion of the additional variables. Univ 2 and univ 4 no longer differ significantly from univ 1, while univ 3 performs statistically significa ntl y better than univ 1. This result indicates the importance of taking account of factors that influence citation impact in evaluation studies. Additional analyse s (not shown) suggest that this change in position is primarily due to controlling for journal impact. Univ 3 has the lowest average JIF (3.2) while univ 1 has the highest (8.4). Hence, univ 3 “overachieves” in the sense that it gets more citations than can be accounted for by the reputation of journals it publishes in. Table 2 about here The following results are obtained regarding these factors: (1) publications in Engineering and Technology are more frequently highly cited than publica t ions in other fields (although the difference between Engineering and Technology a nd Medical and Health Sciences is statistically not significa nt). This result is counter to expectations and is due presumably to the use of an indicator in this study which is already normalised for the field. (2) Proceedings papers are statistically significantly less likel y to be highly cited than other document types. However, differences in the effects of other ty pes of documents are not statistically significant. (3) Publications that were published in journals with a high JIF, that were published longer ago, that have more co-authors, and that are longer in length tend be highly cited more often. While model 2 fits much better than Model 1, it also makes some questionabl e assumptions. For example, it assumes that the more pages a paper has, the better. It is probably more reasonable to assume that, after a certain point, additional pages produce less 10 and less benefit or even decrease the likelihood of the paper being cited. Simil arly, we might expect diminishing returns for higher JIFs, i.e. it is better to be published in a more influential journal but after a certain point the benefits become smaller and smaller. To address such possibilities, Model 3 adds squared ter ms for J IF and paper length. Squared terms allow for the possibility that the variables involved eventually ha ve diminishing benefits or even a negative effect on citations, e.g. while a one page paper may be too short to have much impact, a paper that gets too long may be less likely to be read and cited. Both squared terms are negative, highly significant, and theoretica ll y plausible, so we will use Mode l 3 for the remainder of our analysis. Average adjusted predictions (AAPs) and ave rage marginal effects (AMEs ) for discrete independent variables The logistic regression models illustrate which effects are statistically significant, and what the direction of the effects is, but they give us little practica l feel for the substantive significance of the findings. For example, we know that universities differ in their likelihood of being highly cited, but we don ’ t have a practical feeling for how big those differences are. We also know that papers in journals with a higher JIF are more likely to be cited than papers in journals with a lower JIF, but how much more likely ? The addition of squ ared terms makes interpretation even more difficult. Adjusted predictions and marginal effects can provide clearer pictures of these issues. First, we will present the adjusted predictions and marginal effects, and then we will explain how those values can be computed for discrete variables. Table 3 about here The first column of Table 3 shows the average adjusted predictions (AAPs) for the discrete variables in the final logistic regression model, while the second column displays their Average Marginal Effects (AMEs). The two columns are very helpful in clarifying the magnitudes of the effects of the different indepe ndent variables. The AAPs in column 1 show that – after other variables are taken into account – about 16.2% of univ 1 ’ s publications are 11 highly cited, compared to almost 24.5% of univ 3 ’ s. The AMEs in column 2 show how the AAPs for each category differ from that of the reference categ ory. So , the AME of .0829 for univ 3 means that 8.3% more of univ 3’s publications are highly cited than are univ 1’s (i.e. 24.5% - 16.2% = 8.3%). Again, remember that this is after controlling for other variables. For whatever reason, univ 3’s papers are more likely to be highly cited than would be expected based on their values on the other variables in the model. This might reflect, for example, that univ 3 tends to publish more on topics that are of broader interest even though they appear in journals with a lesser impact overall. Whatever the reasons for the difference, the adjusted predictions and the marginal effects probably provide a much clearer pic ture of the differences across universities than the logistic regressions did. Similarly, we see that – after controlling for other variables – more than a quarter (26.5%) of the publications in Engineering and Technolog y are highl y c ited, compared to a little over a fifth of those in the Medical and Health Sciences (22.3%) The AMEs in Column 2 of Table 3 shows that this difference of 4.28% is statically significa nt. In other wor ds, even after controlling for all the other variables in the model, 4.3% more of Engineering and Technology papers are highly cited than is the case for papers in the Medical and Health Sciences. The AAPs and the AMEs further show us that Engineering and Technolog y papers also have an advantage of about 6.8% over papers in the Natural Sciences. Again, the coefficients from the logistic regressions had already shown us that papers i n Engineering and Technology were more likely to be highly cite d than papers in other fields, but the AAPs and AM Es give us a much more tangible feel for just how much more likely. Table 3 further shows us that, after adjusting for the other variables in the model, 20.8% of articles, 22.2% of notes, 15.7% of proceedings papers, and 24.4% of reviews are highly cited. The marginal effects show that the differences betwee n articles and proceedings papers is statistical significant, while the differe nce between articles and reviews falls just short of statistical significance. 12 Examining exactly how the AAPs and AMEs are computed for categorical variables will help to explain the approach. For c onvenience, we will focus on the university variable, but the logic is the same for document type and subjec t area. Intuitively, the AAPs and the AMEs for the universities are computed as follows: • Go to the first publication. Treat that publica tion as though it were from univ 1, regardless of where it actually came from. Lea ve all other independent variable values as is. Compute the probability that this publication (if it we re from u niv 1) would be highly cited. We will call this AP1 (where 1 refers to the category of the independent variable that we are referring to, i.e. the predicted probabilit y of P top 10% which this publication would have if it came f rom univ 1). • Now do the same for each of the other universities, e.g. treat the publication as though it was from univ 2, univ 3, or univ 4, while leaving the other variables at their observed values. Call the predicted probabilities AP2 through AP4. • Differences between the computed probabilities give you the marginal effects for that publication, i.e., ME2 = AP2 – AP1, ME3 = AP3 – AP1, ME4 = AP4 – AP1. • Repeat the procedure for every case in the sample. • Compute the averages of all the individual adjusted predictions y ou have generated. This will give y ou AAP1 through AAP4. Simil arly , compute the averages of the individual marginal effects. This gives you AME2 throug h AME4. With AAPs and AMEs for discrete variables, in effect different hypothetical populations are compared – one where every publication is from univ 1, another where every publication is from univ 2, etc. – that have the exact same values on the other independent variables in the regression model. The logic is similar to that of a matching study, where subjects have identical values on every independent variable except one (Williams, 2012 ). 13 Since the only difference betwee n these publication populations is their university (their origin), the university must be the cause of the differences in their probability of be ing highly cited 3 . Average adjusted predictions (AAPs) and ave rage marginal effects (AMEs) for continuous independent variables The effects of continuous variables (e.g. the JIF) in a logistic regression model are, other than their sign and statistical significance, a lso difficult to interpret. For example, publications in journals with high JIFs tend to be more frequently highly cited than publications in journals with low JIFs. The question is: How much more often is that the case? Continuous variables offer additional cha llenges in that (a) they have many more possible values than do discrete variables – indeed a continuous variable can potentially have an infinite number of values – and (b) the calculation of marginal effec ts is different for continuous variables than it is for discrete variables. It is therefore difficult (or, at least, of limited value) to come up with a single number that represents any sort of “average” effe ct for a continuous variable. Instead, it is useful to compute the Average Adjusted Predictions (AAPs) and Average Marginal Effects (AMEs) across a range of the variable ’ s plausible (or at least possible) values. Figure 1 about here Figure 1 therefore presents the AAPs for JIF. The grey bands represent the 95% confidence interval for each pre dicted value. AAPs are estimated for JIF values ranging between 0 and 35. We chose an upper bound of 35 because less than 1% of all publications have a higher JIF than that. 3 Another p opular way of getting at the idea of “average” values uses Adjusted Predictio ns at the Means (APMs) and Marginal Effects at the M eans (MEMs). With t his approach, rather than use all of the ob served values for all the publications, the mean values for each independent varia ble are computed and t hen used in the calculations. While widely used, this ap proach has various co nceptual pro blems, e.g., a publication ca nnot be .5 o f univ 1 or .1 of univ 2. In our examples, the means app roach produces similar results to those present ed here, but that is not always the case. 14 Th e figure shows that, not surprisingly, publications in journals with higher JIFs are more likely to be highly cited than publications in journals with low JIFs. We already knew that from the logistic regressions, but plotting the AAPs makes it much cleare r ho w great the differences are. Publications with a JIF of close to 0 have less than a 10% chance of being highly cited. A publication with a JIF of 10, however, has almost a 48% predicted probability of being highly cited. (Only about 8% of all publications have a JIF of 10 or higher, which means that publications that have a JIF of 10 are appearing in some of the most influential journals.) Publications in the most elite journals with a JIF of 30 have about an 88% predicted probability of being highly cited. The graph also reveals, however, tha t the beneficial effect of higher J IFs gradually decline as the JIF gets higher and higher. That is, the curve depicting the JIF predictions gradually becomes less and less steep. While there is a big gain in going fr om a JIF of 0 to 10, there is virtually no gain in going from a JIF of 25 to a JIF of 35. As we speculated earlier, after reaching a certain point there is little or nothing to be gained from publishing in a journal that has an ever higher JIF. Figure 2 about here The AMEs for JIF that are presented in Figure 2 further illustrate the declining benefits to higher JIFs. Initially, changes in JIFs between 0 and 10 produce gre ater and greater increases in the likelihood of being highly c ited. For example, goin g from a JIF of 0 to a JIF of 1 produces some increase in the likelihood of being highly cite d, but going from 9 to 10 produces an even greater benefit. For JIFs between 10 and 30, however, additional increases in JIFs produce smaller (but still positive) increases in the likelihood of being highly c ited. 15 After the JIF hits 30, though, there are no a dditional benefits to being in a journal that has an even higher JIF 4 . Figures 3 and 4 about here Figures 3 and 4 present similar analy ses. Figure 3 presents t he AAP s for document length, for values ranging between 1 page and 120 pages. This is a very wide range – 99% of all documents are 25 pages are less – but it illustrates the estimated declining benefits as papers get longer and longer. As Figure 3 shows, a 1 page paper has only about a 14% predicted probability of being highly cited, while an average length paper (about 8 pages) has an AAP of almost 21%. However, the benefits of greater length gradually become smaller and smaller. While an 80 page paper has an 80% predicted probability of being highly cited, making a publication longer than that actually reduce s the likelihood of it being highly cited. The AMEs for document length presented in Figure 4 further clarify the a t first rising and then declining effects of increa ses in document length. Up until about 20 pages, the benefits of greater document length get greater and grea ter, i.e. while moving from 1 p age to 2 is good, moving from 19 pages to 20 is even better. But, after 20 pages, the benefits of gr eater document length get smaller and smaller, and by about 80 pages (85 to be precise) an y additional pages actually reduce the likelihood of being highl y c ited. Of course, given how few documents approach such lengths, and given the huge confidence intervals for the estimates, we should view such conclusions with some caution. Adjusted predictions at representative values (APRs) and marginal effects at representative values (MERs) for continuous and discrete variables together 4 Indeed, if we extend the grap hs to include even higher values of JIF, gains in JIF actually pr oduce declines in the likelihood o f being highly cited, e.g. it is b etter to have a J IF of 30 than it is to have a JIF o f 50. This is a necessary conseque nce of including squared ter ms in the model. I n practice, however, hardl y any publications have JIFs higher than 35. We should be careful about making pred ictions involving values that generally fall well outside most of the o bserved values in the data. 16 As we show with our example of four universities, the AAPs and AMEs provide a much clearer feel for the differences that exist across categor ies o r ranges of the independent variables than statistical significance testing can. Still, as Williams (2012) points out, the use of averages with discrete variables can obscure important differences across publications. I n reality, the effect that variables like universities, document ty pe, and subject area have on th e probability of being highly cite d need not be the same for every publication. For example, as Williams (2012) shows in his analysis of data from the early 1980s, racial differences in the probability of diabetes are very small at young ages. This is primarily because young people, white or black, are very unlikely to have diabetes. As people get olde r, the likelihood of diabetes gets greater and greater; but it goes up more for blacks than it does for whites, hence racial differences in diabetes are substantial at older ages. In the case of the present study, Table 3 showed us that, on average, publications from univ 3 were about 8.3 percentage points more likely to be highl y c ited than publi cations from univ 1. But, this gap almost certainly differs across values of the other independent variables. For example, a 1 page paper, or a paper with a low JIF, isn’t that likely to be hig hly cited regardless of which university it came from. But, as increases in other variables increase the likelihood of a publication being highly c ited, the differences in the adjusted predictions across universities will likely increa se as well. Williams (2012) therefore argues for the use of marginal effects at representative values (MERs) and, by logical extension, adjusted predictions at representative values (APRs). These approaches basically combine analysis of the eff ects of discrete and continuous variables simultaneously. With APRs and MERs, plausible or at least possible ranges of values for one or more continuous independent variables are chosen. We then see how the adjusted predictions and marginal effects for discr ete variables vary across that range. Figures 5 and 6 about here 17 Figure 5 shows the APRs for the four universities for JIFs ranging between 0 and 13. Thirteen is chosen because 95% of all publications have JIFs of 13 or less; extending the range to include larger values than 13 makes the graph harder a nd harder to read. The graph shows that, for all four universities, increases in JI Fs increase the likelihood of the publication being highly cited. But, for JIFs near 0, the differences between univ 3 and the others are small – about a 4 percentage point difference. However, as the JIFs increase, the gap between univ 3 and the others becomes greater and greater. When the JIF reaches 13, univ 3 has about 14 percentage points more of its publications highly cited than do the others. Figure 6, which shows the MERs, makes it even clearer tha t a fairly small gap between the universities at low JIFs gets much larger as the JIF gets bigger a nd bigger. Figures 7 and 8 about here Figures 7 and 8 show the APRs and MERs for the four universities across varying document lengths. About 99% of all papers are 25 pages or less so we limit the range accordingly. Again, for all four universities, the longer the document is, the higher the predicted probability is that it will be highly cited. However, for a 1 page paper, the predicted difference between univ 3 and the other universities is only about 6%. But, for a 25 page paper, the predicted gap is much larger, about 13%. The MERs presented in Figure 8 are another way of showing how the predicted gap between universities ge ts greater and greater as the page length gets longer and longer. 4 Discussion When we compare research institutions in evaluative bibliometrics we are primarily interested in the differences that are sig nificant in practical terms. Statistical significance tests in this context only provide information on whether an effect that has been determined in a random sample applies beyond the random sample. These tests do not however indicate how large the effect is (Schneider, in press) nor whether differences have a practical significance 18 (Williams, 2012). One way to reveal significant diffe rences is to work with Goldstein - adjusted confidence intervals (Bornmann, Mutz, & Daniel, in press). With these confidence intervals, it is possible to interpret the signific ance of differences among research institutions meaningfully: For example, rank differences in the Leiden Ranking among universities should be interpreted as meaningful only if their confidence interva ls do not overlap. In this paper we present a different appr oach, and one which can be easily adapted to a wide array of substantive topics. With techniques like logistic regression, it is easy to determine the direction of effec ts and their statistical significance, but it is far more difficult to get a practical feel for what the effec ts reall y mean. I n the present ex ample, the logistic regressions showed us that, after controlling for other variables, univ 3 was more likely to have its publications highly cited than were other universities. We should be careful about interpreting this as meaning that univ 3 is “better” than its counterparts; for example, besides being highly cited, we might expect a g ood universit y to place more of its p apers in high impact journals, and univ 3 actually fares the worst in this respect. But the results do mean that, for whatever reason, univ 3 is more likely to have its publications highly cited than would be expected on the basis of its values on the other varia bles considered by the model. Further research might yield insights into what exactly univ 3 is doing that make its publications disproportionately successful. The logistic regression results also make clear that, for example, longer papers (at least up to a point) get cited more than shorter papers and publications in high impact journals ge t cited more than publications in low impact journals. The logistic regression results fail to make clear, however, how large a nd important these effects are in practice. The use of average adjusted predictions (AAPs) and average marginal effects (AMEs) – along with average predictions at representa ti ve values (APRs) and marg inal effects at representa ti ve values (MERs) – helped make these effects much more tangible and easier to grasp. We s aw, for example, that, after controlling for other variables, on ave rage univ 3 h ad about 8 19 percentage points more of its publications highly cited than did other universities. But, the expected gap was much smaller for very short documents and documents in low impac t journals (which, regardless of which university they come from, tend not to be heavily cited). Conversely the gap between the unive rsities was much greater for longer papers and higher impact journals. The magnitudes of other effects, such as subject area and document type, were also made explicit. The analyses yielded a number of other interesting insights. They illustrated, for exampling, the diminishing and even negative returns a s papers got longer and longer. They suggested that, after a certain point (about 25) higher JIFs produced little or no additional benefits. We hope that with this paper we are making a contribution to enabling the measurement of not only statistical significance but also practica l significance in evaluative bibliometric studies. These studies would then comply with the publication g uidelines such as those of the American Psy chological Association (2009 ) which recommend both significance and substantive tests for empirical studies. Effect size is crucial particularly in evaluative bibliometrics, as far-reaching decisions on careers and financing are often made on the basis of publication and citation data. The effect size gives information about how well a research institution is performing compare d to another. Bornmann (in press) has already prese nted a number of tests for effective size measurement. The use of adjusted predictions and marginal effects provide alternative ways by which differences across institutions can be visualized and made easier to interpret. 20 References American Psychological Association. (2009). Publication manual of the American Psychological Association (6. ed.). Washington, DC, USA: American Psychological Association (APA). Bornmann, L. (in press). How to analyse percentile citation impact data meaningfully in bibliometrics: The statistical analysis of distributions, perce ntile rank classes and top - cited papers. Journal of the American Society for Information Science and Technology . Bornmann, L., & Daniel, H.-D. (2008). What do citation counts measure? A review of studies on citing behavior. Journal of Documentation, 64 (1), 45-80. doi: 10.1108/00220410810844150. Bornmann, L., & Mutz, R. (2013). The advantage of the use of sa mples in evaluative bibliometric studies. Journal of Informetrics, 7 (1), 89-90. doi: 10.1016/j.joi.2012.08.002. Bornmann, L., Mutz, R., & Daniel, H.-D. (in press). A multilevel-statistical reformulation of citation-based university rankings: the Leiden Ranking 2011/2012. Journal of the American Society for Information Science and Technology . Bornmann, L., Mutz, R., Marx, W., Schier, H., & Daniel, H.-D. (2011). A multilevel modelling approach to investigating the predictive validity of e ditorial decisions: do the editors of a high-profile journal select manuscripts that are highly cited after publication? Journal of the Royal Statistical Society - Series A (Statistics in Society), 174 (4), 857-879. doi: 10.1111/j.1467-985X.2011.00689.x. Garfield, E. (2006). The history and meaning of the Journal I mpact Factor. Journal of the American Medical Association, 295 (1), 90-93. Hardin, J., & Hilbe, J. (2012). Generalized linear models and extensions . College Station, Texas, USA: Stata Corporation. Hosmer, D. W., & Lemeshow, S. (2000). Applied logistic regression (2. ed.). Chichester, UK: John Wiley & Sons, Inc. Levy, P., & Lemeshow, S. (2008). Sampling of population – methods and applications (4 ed.). New York, NY, USA: Wiley. Long, J. S., & Freese, J. (2006). Regression models for categorical dependent variables using Stata (2. ed.). College Station, TX, USA: Stata Press, Stata Corporation. Lozano, G. A., Larivière, V., & Gingras, Y. (2012). The weakening relationship betwe en the impact factor and papers' citations in the digital age. Journal of the American Society for Information Science and Technology, 63 (11), 2140-2145. doi: 10.1002/asi.22731. Mitchell, M. N. (2012). Interpreting and visualizing regression models using Stata . College Station, TX, USA: Stata Corporation. Organisation for Economic Co-operation and Development. (2007). Revised field of science and technology (FOS) classification in the Frascati manual . Paris, France: Working Party of National Experts on Science a nd Technology Indicators, Organisation for Economic Co-operation and Development (OECD). Rabe-Hesketh, S., & Everitt, B. (2004). A handbook of statistical analyses using Stata . Boca Raton, FL, USA: Chapman & Hall/CRC. Schneider, J. W. (in press). Caveats for using statistical significance tests in research assessments. Journal of Informetrics . SCImago Reseach Group. (2012). SIR World Report 2012 . Granada, Spain: University of Granada. 21 van Raan, A. (2012). Properties of journal impact in relation to bibliometric r esearch group performance indicators. Scientometrics, 92 (2), 457-469. doi: 10.1007/s11192-012- 0747-0. Waltman, L., Calero-Medina, C., Kosten, J., Noyons, E. C. M., Tijssen, R. J. W., van Eck, N. J., . . . Wouters, P. (2012). The Leiden Ranking 2011/2012: data collection, indicators, and interpretation. Journal of the American Society for Information Science and Technology, 63 (12), 2419-2432. Williams, R. (2012). Using the margins command to estimate and interpret adjusted predictions and marginal effects. The Stata Journal, 12 (2), 308-331. 22 Table 1. Description of the dependent and independent variables ( n =15,426 publications) Variable Percentage / Mean Standard deviation Minimum Maximum Dependent variable: PP top 10% 20.7% 0 1 Independent variable: University Univ 1 (reference category) 7.4% 0 1 Univ 2 3.3% 0 1 Univ 3 55.4% 0 1 Univ 4 33.9% 0 1 Subject area Engineering and Technology (reference category) 11.4% 0 1 Medical and Health Sciences 10.7% 0 1 Natural Sciences 77.9% 0 1 Document type Article (reference category) 82.9% 0 1 Note 4.3% 0 1 Proceedings Paper 9.7% 0 1 Review 3.2% 0 1 Journal Impact Factor 4.5 5.8 0.4 54.3 Years since Publication (1=2010, 31=1980) 17.7 8 1 31 Number of Authors 4.2 2.4 1 23 Number of Pages 7.7 6.1 1 160 23 Table 2. Logistic Regression Models for PP top 10% (1) (2) (3) Baseline All variables Squared terms added University Univ 2 -0.716 *** -0.184 0.0245 (-5.16) (-1.12) (0.15) Univ 3 -0.541 *** 0.375 *** 0.640 *** (-7.51) (4.19) (7.06) Univ 4 -0.195 ** 0.0989 0.135 (-2.64) (1.13) (1.55) Subject Area Medical and Health Sciences -0.162 -0.280 ** (-1.62) (-2.74) Natural Sciences -0.342 *** -0.464 *** (-4.89) (-6.48) Document Type Note 0.0589 0.0963 (0.54) (0.86) Proceedings Paper -0.614 *** -0.410 *** (-6.14) (-4.03) Review 0.233 0.241 (1.90) (1.96) Further variables Journal Impact Factor 0.149 *** 0.308 *** (27.81) (30.28) Years Since Publication 0.0259 *** 0.0328 *** (8.73) (10.81) Number of Authors 0.0626 *** 0.0511 *** (6.55) (5.27) Number of Pages 0.0600 *** 0.0878 *** (13.42) (14.53) Journal Impact Factor Squared -0.00502 *** (-19.44) # of Pages Squared -0.000519 *** (-6.86) _cons -0.968 *** -3.124 *** -3.961 *** (-14.61) (-23.51) (-27.53) N 15426 15426 15426 pseudo R 2 0.007 0.126 0.148 AIC 15617.5 13763.8 13419.5 BIC 15648.1 13863.2 13534.2 chi2 104.3 1976.0 2324.3 D.F. 3 12 14 Notes. z statistics in parenthese s * p < 0.05, ** p < 0.01, *** p < 0.001 24 Table 3. Average adjusted predictions (AAPs) and average marginal effects (AMEs) for the discrete variables in the regression model (n= 15,426 publications) (1) (2) AAPS AMES University Univ 1 0.162 *** (18.52) Univ 2 0.164 *** 0.00270 (10.08) (0.15) Univ 3 0.245 *** 0.0829 *** (48.65) (7.97) Univ 4 0.177 *** 0.0154 (39.27) (1.59) Subject Area Engineering and Technology 0.265 *** (24.69) Medical and Health Sciences 0.223 *** -0.0428 ** (21.06) (-2.76) Natural Sciences 0.197 *** -0.0679 *** (59.81) (-6.05) Document Type Article 0.208 *** (64.00) Note 0.222 *** 0.0136 (14.04) (0.84) Proceedings paper 0.157 *** -0.0509 *** (14.34) (-4.42) Review 0.244 *** 0.0352 (13.03) (1.86) Notes. z statistics in parenthese s * p < 0.05, ** p < 0.01, *** p < 0.001 25 Figure 1. Average Adjusted Predictions & 95% Confidence Interva ls for Journal Impac t Factor. 0 .1 .2 .3 .4 .5 .6 .7 .8 .9 Pr (H i g h l y C i t e d Pu b l i ca t i o n ) 0 5 10 15 20 25 30 35 Journa l Impa ct Factor 26 Figure 2. Average Marginal Effects & 95% Confidence I ntervals for Journal I mpact Factor. -.01 0 .01 .02 .03 .04 .05 .06 Ef f e ct s o n Pr (H i g h l y C i t e d Pu b l i ca t i o n ) 0 5 10 15 20 25 30 35 Journa l Impa ct Factor 27 Figure 3. Average Adjusted Predictions & 95% Confidence Interva ls for Length of Document. .1 .2 .3 .4 .5 .6 .7 .8 .9 1 Pr (H i g h l y C i t e d Pu b l i ca t i o n ) 0 10 20 30 40 50 60 70 80 90 100 110 120 Numb er of pages 28 Figure 4. Average Marginal Effects & 95% Confidence Intervals for Length of Document. -.01 5 -.01 -.00 5 0 .005 .01 .015 Ef f e ct s o n Pr (H i g h l y C i t e d Pu b l i ca t i o n ) 0 10 20 30 40 50 60 70 80 90 100 110 120 Numb er of pages 29 Figure 5. Adjusted Predictions at Representative Values & 95% Confidence Intervals for Four Universities and Journal Impact Factor. 0 .1 .2 .3 .4 .5 .6 .7 Pr (H i g h l y C i t e d Pu b l i ca t i o n ) 0 1 2 3 4 5 6 7 8 9 10 11 12 13 Journa l Impa ct Factor Univ 1 Univ 2 Univ 3 Univ 4 30 Figure 6. Marginal Effects at Representative Values & 95% Confidence Intervals for Four Universities and Journal Impact Factor. -.1 -.05 0 .05 .1 .15 .2 Ef f e ct s o n Pr (H i g h l y C i t e d Pu b l i ca t i o n ) 0 1 2 3 4 5 6 7 8 9 10 11 12 13 Journa l Impa ct Factor Univ 2 Univ 3 Univ 4 31 Figure 7. Adjusted Predictions at Representative Values & 95% Confide nce Intervals for Four Universities and Document Length. .1 .15 .2 .25 .3 .35 .4 .45 .5 Pr (H i g h l y C i t e d Pu b l i ca t i o n ) 0 5 10 15 20 25 Numb er of pages Univ 1 Univ 2 Univ 3 Univ 4 32 Figure 8. Marginal Effects at Representative Values & 95% Confide nce Intervals for Four Universities and Document Leng th. -.05 0 .05 .1 .15 .2 Ef f e ct s o n Pr (H i g h l y C i t e d Pu b l i ca t i o n ) 0 2 4 6 8 10 12 14 16 18 20 22 24 26 Numb er of pages Univ 2 Univ 3 Univ 4

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment