Approximate Bayesian computation and Bayes linear analysis: Towards high-dimensional ABC

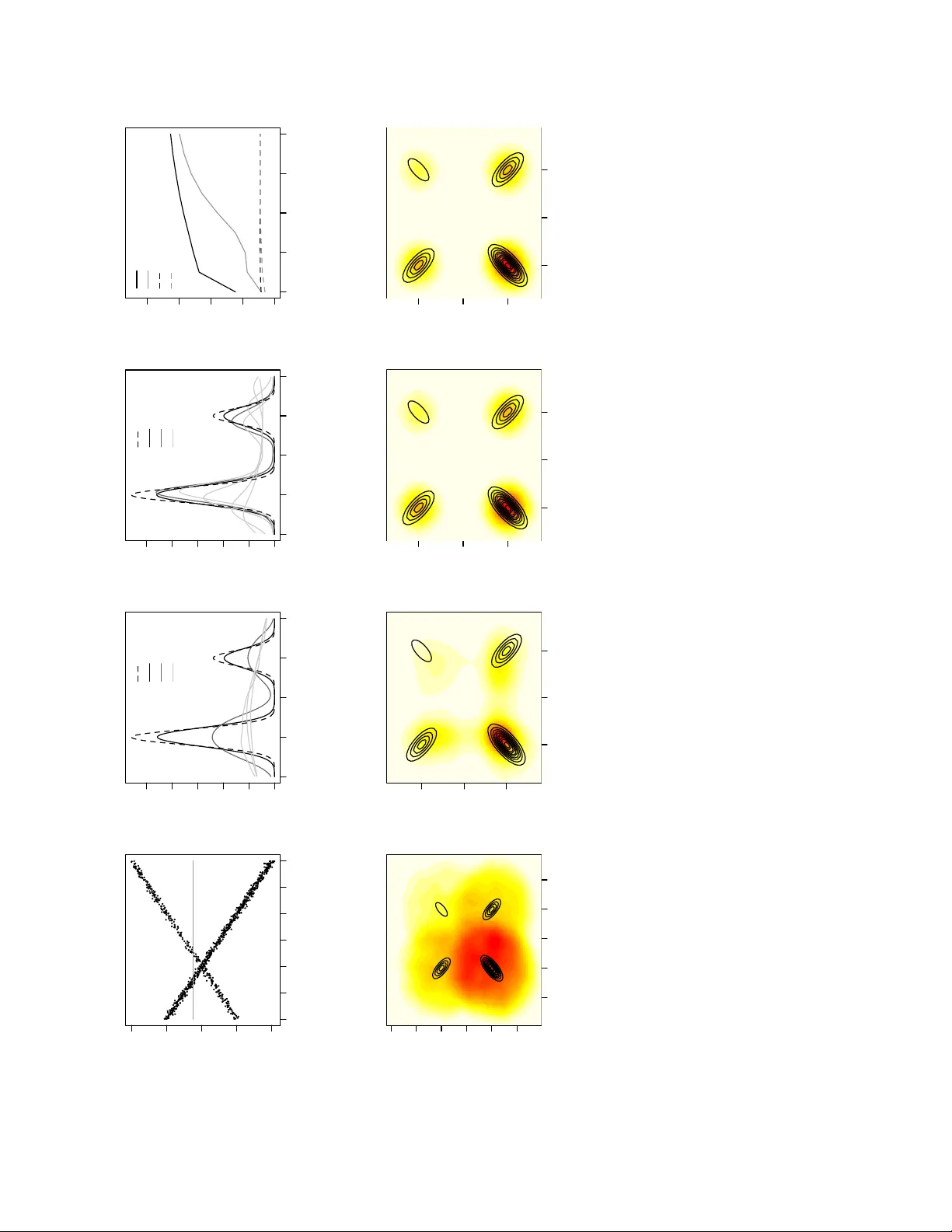

Bayes linear analysis and approximate Bayesian computation (ABC) are techniques commonly used in the Bayesian analysis of complex models. In this article, we connect the two by demonstrating that regression-adjustment ABC algorithms produce sample…

Authors: D. J. Nott, Y. Fan, L. Marshall