Optimal rotation of a qubit under dynamic measurement and velocity control

In this article we explore a modification in the problem of controlling the rotation of a two level quantum system from an initial state to a final state in minimum time. Specifically we consider the case where the qubit is being weakly monitored -- …

Authors: Srinivas Sridharan

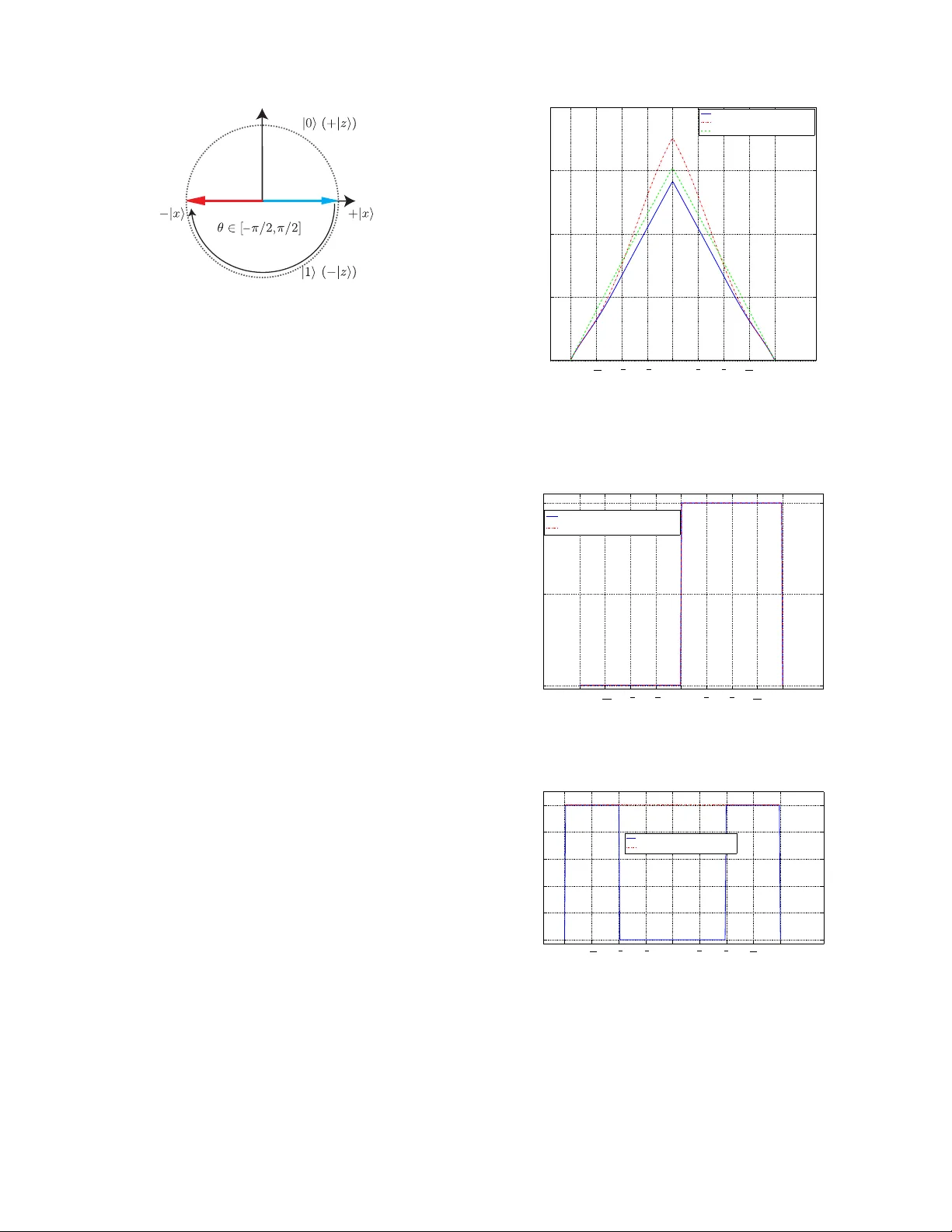

Optimal rotation of a qubit under dynamic measurement and v elocity control Srini v as Sridharan Dept. of Mechanical and Aerospace Engineering Univ ersity of California San Die go Email: srsridharan@ucsd.edu Abstract — In this article we explore a modification in the problem of controlling the rotation of a two level quantum system from an initial state to a final state in minimum time. Specifically we consider the case wher e the qubit is being weakly monitored – albeit with an assumption that both the measure- ment str ength as well as the angular velocity are assumed to be control signals. This modification alters the dynamics significantly and enables the exploitation of the measurement backaction to assist in achieving the control objective. The proposed method yields a significant speedup in achieving the desired state transfer compared to pr evious appr oaches. These results ar e demonstrated via numerical solutions f or an example problem on a single qubit. I . I N T R O D U C TI O N One of the requirements common to many applications of quantum engineering [1], [2], [3], is that of being able to create a quantum system in a particular pure quantum state. Moreov er , it is desirable to achiev e such a state v alue in as short a time as possible. This has motiv ated research into this domain of time optimal control of quantum systems; Although has been a significant amount of research on optimal control for closed quantum systems [4], [5], [6], [7], [8], the in vestigation into the optimal control of monitored open quantum systems still remains to be developed fully [9], [10], [11], [12], [13]. In this work we address the problem of transferring the state of a two lev el quantum system from an initial pure state to a final pure state. In particular de velop the idea proposed in [14] on the possible use of measurement backaction for speeding up the control of a qubit to a greater e xtent that that achiev able by fix ed measurement alone. The frame work for this problem is unique due to the fact that both the angular rotation as well as the measurement strength for the continuous measurement being performed are control signals that can be varied to achiev e the desired objectiv e. This leads to a significant speedup in the time required to reorient the system between two pure states. Intuitively we use the fundamental property of measurement backaction, unique to quantum systems, to help achiev e the state transition more rapidly than that possible using either: static measurement and feedback or that with no-measurement and a control driv en by a constant rotation (termed the Hamiltonian ev o- lution). The structure of this article is as follows. In section II we describe the system model and frame the optimal control Research supported by AFOSR grant F A9550-10-1-0233. problem of interest. T o solve the problem described above, we utilize the dynamic programming approach from optimal control theory in section III. It turns out howe ver , that the Hamilton-Jacobi-Bellman equations associated with this con- trol problem may not hav e classical solutions. W e discuss this issue and indicate a numerical method to obtain the solution in section III. T wo cases of interest in the optimal control of the state of a quantum system are transition between states which are: (a) parallel or (b) orthogonal to the axis of measurement. The solution to the control problem for these different cases are described in section IV. W e then highlight the performance of the dynamic measurement and control strategy introduced herein with respect to that achieved using either only static measurement strength or with pure rotation alone (with no measurement). W e conclude with a discussion of further interesting questions that arise from these results, in section VI. I I . S Y S T E M D E S C R I P T I O N A N D F U RT H E R BA C K G RO U N D A. The model W e now explain the model for the system of interest - the continuous measurement and feedback control of a single qubit. A more comprehensi ve introduction to continuous quantum measurements can be found in [15], [16]. The state of such a system may be represented by a vector in a 3 dimensional real unit sphere (termed the Bloch spher e ). Consider a quantum spin system subject to measurement along the z axis 1 , with control signals that induce a rotation around the three axis. Let γ denote the measurement strength – a parameter that determines the rate at which the informa- tion is extracted from a system. A lar ger value of γ leads to a greater rate of information extraction and therefore a higher rate at which the system is projected on to one of the eigenstates of the observable used [in this case it is the Pauli matrix σ z = diag ( 1 , − 1 ) ]. For the particular choice of measurement considered herein, these eigenstates correspond to the up ( + z ) direction and the do wn ( − z ) direction on the Bloch sphere. The goal of the feedback is to tak e the initial state (say | 0 i i.e., the + z direction) and control it to the orthogonal state (say | 1 i i.e., the − z direction) in a time optimal manner . W e assume that: 1) the initial state is pure and that measurements are efficient. This implies that the evolution of the state 1 i.e., this measurement is of the observable σ z . vector is confined to the surface of the Bloch sphere. 2) the av ailable control is equal in strength (isotropic) about all 3 axes. Under the above assumptions, by using the symmetry of the problem the Bloch representation reduces (after a change of variables) to a lo wer dimensional model; Herein the state is constrained to lie on the unit circle i.e., the x - z plane. The control signal causes the state to rotate around the y axis. The control problem for this system in v olves moving the state between any two states on the unit circle. The system described abov e is modeled using a stochastic differential equation (SDE) of the form d θ ( t ) = α ( t ) d t − 2 γ ( t ) sin ( 2 θ ( t )) d t − 2 p 2 γ ( t ) sin ( θ ( t )) dW . (1) where θ ∈ [ − π , π ] . The term α denotes the control signal applied. T o ensure that the control problem is well posed we apply a bounded strength control, i.e., the controls are constrained to a closed compact set V : = [ − Ω , Ω ] . Here the maximum and minimum values that the control signal can take up at an y time instant are symmetric and ha ve a magnitude Ω . W e denote the set of piecewise continuous angular velocity signals by the term V . The second term in the equation above is the quantum measurement backaction. This can be intuiti v ely understood by setting the angle to 0 or ± π , so the state vector and the measurement axis commute and the backaction goes to zero. Similarly at the point θ = ± π / 2 where the state and measurement ax es are maximally non-commuting, the measurement back-action is largest. The term γ ( t ) denotes the measurement strength which is also a control term in this problem. The values allowed for the measurement strength belong to the range [ 0 , Γ ] . The set of measurement strength signals is denoted by ℑ . The final term in Eq. (1) indicates the innov ation term arising from measurements. W e denote the control signal pair ( α , γ ) as η and the set from which this signal is drawn as Ξ : = V × ℑ . The solution to the SDE (1) for a trajectory , starting at a point θ 0 (at a time t 0 ) and using a control strategy η ∈ Ξ , at a time instant t ∈ [ t 0 , ∞ ) is denoted by θ ( t ; η , t 0 , θ 0 ) . Note that the this trajectory is a particular sample path of a stochastic process. In cases where the arguments used in this notation are clear from the context we represent the solution at time t by θ t . B. P erformance measur e: the expected (discounted) hitting time In order to capture the time optimality requirement of the problem, the most intuitiv e approach is to model the problem as an optimal control problem with a cost function that measures the expected hitting time to the target set (denoted by T ). Define the hitting time as τ η T ( θ 0 ) = inf { t | θ ( t ; η , t 0 , θ 0 ) ∈ T } , (2) i.e., for each sample path it is the first time at which the trajectory reaches the target set (and is a random variable). Ini tial St ate z x (Measurement Axi s) Ta r get Stat e Fig. 1. The Bloch sphere with a graphical depiction of our control problem. W e start in the plus eigenstate of the observable σ z and rotate to the orthogonal state −| z i . The cost function based on the expected hitting time will take the form C ( θ 0 ) : = inf η ∈ Ξ E τ η T ( θ 0 ) , (3) = inf η ∈ Ξ E n Z τ η T ( θ 0 ) 0 d s o . (4) Howe ver , as described in the next section, application of the dynamic programming principle to solve this optimal control problem leads to an associated Hamilton-Jacobi- Bellman (HJB) equation whose classical solution may not exist. Thus we use a re vised discounted cost function which ensures the uniqueness a weak solution in similar classes of optimal control problems (c.f. [17], [18]) 2 C ( θ 0 ) : = inf η ∈ Ξ E n Z τ η T ( θ 0 ) 0 exp {− β s } d s o . (5) The parameter β > 0 is called the discount factor . One interesting aspect of this discounting is that the cost function remains bounded for any choice of the control signal. The motiv ation for and advantages of this discounting will be discussed in detail below . I I I . O P T I M A L C O N T RO L F O R T H E H I T T I N G T I M E P RO B L E M In order to obtain the optimal cost function (Eq. (5)) and the corresponding control strategy for the system of interest, we apply the dynamic programming [19], [20] approach from optimal control theory . Note that the system dynamics in Eq. (1) is an SDE of the form d x = b ( x , α , γ ) dt + σ ( x , γ ) dW . (6) By comparison, the coefficients b , σ can be seen to be b ( x , α , γ ) : = α − 2 γ sin ( 2 θ ) , (7) σ ( x , γ ) : = 2 p 2 γ sin ( θ ) . (8) 2 W e defer the discussion of the weak solutions and a rigorous justification of the discounted cost in this problem to the future. W e introduce a differential operator L v [ φ ]( y ) given by the expression L η [ φ ]( y ) : = b ( y , η ) ∂ φ ∂ θ θ = y + 1 2 σ 2 ( y , γ ) ∂ 2 φ ∂ θ 2 θ = y , (9) which is the generator of the It ¯ o dif fusion process Eq.(1). The application of dynamic programming to this optimal con- trol problem yields the following Hamilton-Jacobi-Bellman equation ov er the set G : = ( − π , π ) : sup η ∈ Ξ { − 1 + β φ − L η [ φ ]( y ) } = 0 , ∀ y ∈ G (10) with boundary conditions φ ( T e ) = 0 . (11) The classical solution to this partial differential equation (PDE) yields the discounted cost function in Eq. (5). Note that the HJB equation (10) is an elliptic PDE [21] with a coef ficient for the second order deri v ati ve that can become zero at any point in the domain G – therefore it is called a degenerate elliptic PDE. The positivity (non- degenerac y) of this second order term is a suf ficient condition for the existence of a classical solution to this PDE [22], [17]. Hence, due to the nature of the σ ( · , · ) term in the system dynamics being able to take up a value of zero, there arises a degeneracy o wing to which the HJB equation is not guaranteed to have a sufficiently smooth solution. Therefore a rigorous study of the solution to the optimal control problem necessitates an analysis of the solution to this equation in a weak sense. It is interesting to note that an alternate approach used in the literature to determine the hitting time in v olves solving a PDE termed the Fokker -Planck equation [23], [24] . The solution to this equation is the probability density of the distribution of the hitting time (from which we can ev aluate the expectation of the hitting time). The degenerac y indicated abov e also arises naturally in the Fokker -Planck equation, thereby gi ving rise to the same issues of non-existence of classical solutions. In this article our focus is on obtaining and analyzing the optimal control strategy and the improv ement obtained in the time optimality in state transfer compared to that achieved by other strategies. Hence we defer the analysis of these questions of existence and uniqueness of the generalized solutions for this problem to a future publication. A. Numerical solution One widely applicable method for computing the solution to optimal control problems is the Marko v chain approx- imation method [25], [17]. In this approach the system dynamics are approximated by a controlled Markov chain on a finite state space. The cost function is then approximated by a discretization suited to this chain. Thus an iteration is constructed which con verges to the desired cost function under the limit that the discretization con verges towards the original formulation. For a more detailed introduction to this approach and other applications to quantum control we refer the reader to [25], [26], [18] and the references therein. W e now outline the iterativ e procedure to solve for the cost function. Define a + : = max { a , 0 } , a − : = max {− a , 0 } . (12) W e denote the spatial discretization interval by ‘ h ’. In addition, we use the expression exp {− β ∆ t } ≈ 1 1 + β ∆ t , (13) to approximate the exponential weighting term. W e generate a grid G h that approximates the set G (for instance using a mesh with step-size h ). The discretization for the HJB equation (10) yields φ h ( x ) = min η ∈ Ξ ( h ∑ y p h ( x , y | η ) φ h ( y ) + ∆ t h ( x , η ) i × 1 1 + β ∆ t h ( x , η ) ) , x ∈ ˜ G (14) where the summation is ov er all points y neighboring x . The terms ‘ p ’ in the equation abov e are functions that are giv en by [25]. p h ( x , x + h | η ) : = σ 2 ( x , γ ) / 2 + hb + ( x , α , γ ) σ ∗ 2 ( x ) + hB ∗ ( x ) , (15) p h ( x , x − h | η ) : = σ 2 ( x , γ ) / 2 + hb − ( x , α , γ ) σ ∗ 2 ( x ) + hB ∗ ( x ) , (16) p h ( x , x | η ) : = [ σ ∗ 2 ( x ) − σ 2 ( x , γ )] + hB ∗ ( x ) − h | b ( x , α , γ ) | σ ∗ 2 ( x ) + hB ∗ ( x ) , (17) where B ∗ ( x ) : = Ω + 2 Γ | sin ( 2 x ) | , σ ∗ ( x ) : = 2 √ 2 Γ sin ( θ ) , (18) ∆ t h ( x , η ) : = h 2 σ ∗ 2 ( x ) + hB ∗ ( x ) . (19) Denoting the RHS of Eq. 14 as an operator ξ acting on the value function φ ( · ) we obtain the iteration φ h k + 1 ( x ) = ξ ( φ h k )( x ) , x ∈ G h , (20) Under appropriate choices of parameters, this operator ξ can be sho wn to be a contraction mapping, thereby yielding the necessary conv er gence. The results obtained by applying this iterativ e method to the problem of interest will be described in the following section. I V . N U M E R I C A L E X A M P L E S In this section we describe the solution to the optimal control problem for two cases of interest: 1) when the initial and final states for the control problem are eigenstates of the observable σ z i.e., to move from | 0 i to | 1 i (ref. Fig. 1). Ini tial St ate z x (Measurement Axi s) Ta r get Stat e Fig. 2. The Bloch sphere with a graphical depiction of our control problem. W e start in the plus eigenstate of the observable σ x and rotate to the orthogonal state −| x i . 2) when the states are both maximally non-commuting with respect to the measurement (ref. Fig. 2) with σ z i.e., the problem is to go from + | x i to −| x i . These two cases are of interest since they help clarify whether the control problem between two orthogonal states depends on the nature of the initial and terminal points. The optimal control problems are solved numerically via the value iteration approach described in the previous section. In order to implement this approach we include a stop- ping criteria for the value iteration algorithm, which in our approach is obtained from a stopping test function – the maximum absolute value of the change in the cost function ov er all grid points. Once this goes belo w a fixed threshold, we stop the value iteration. Note that this is possible due to the fact that the value iteration operator ξ is a contraction mapping (if not, there would be no reason for this stopping test function to remain below the threshold in subsequent operations). A. Optimal transition between eigenstates In this case the states take up v alues from the set G : = ( − π , + π ) . Assume a control bound of Ω = 5, a discount factor of β = 0 . 1 and a measurement strength of Γ = 1. Ap- plying the value iteration procedure, we obtain the solution to the HJB PDE (10) subject to the boundary condition of C ( ± π ) = 0. The result obtained is as indicated in Fig. 3: the corresponding optimal control is as shown in Fig. 4(a) and measurement strength is indicated in Fig. 4(b). Hence it turns out that the optimal control is consistent with the intuition of ex ercising a clockwise rotation when starting at any point to the right of the + | z i (up) state and counterclockwise rotation to the left of this state. Note that the optimal measurement strategy in this case is to turn of f measurement till arriving at the state θ = π / 2. B. Optimal transition between non-eigenstates W e now study the optimal control problem of taking the initial state θ 0 = π / 2 to the target state of T ne = {− π / 2 , 3 π / 2 } as depicted in Fig. 2. In this case the region of interest is G : = ( − π / 2 , 3 π / 2 ) and the HJB equation as- sociated with this problem is (10) with a boundary condition 0 0.2 0.4 0.6 0.8 Angle " θ " Optimal cost function − π - 3 π 4 - π 2 - π 4 0 π 4 π 2 3 π 4 π Dynamic measurement strength Static measurement strength Pure rotation Fig. 3. Discounted hitting time cost function to the target T e : = {− π , π } , starting from various possible initial states with Ω = 5, β = 0 . 1 and Γ = 1. −5 0 5 Angle " θ " Control signal − π - 3 π 4 - π 2 - π 4 0 π 4 π 2 3 π 4 π Dynamic measurement strength Static measurement strength (a) Optimal angular velocity control signal to the target T e : = {− π , π } , starting from various possible initial states with Ω = 5, β = 0 . 1 and Γ = 1 . 0 0.2 0.4 0.6 0.8 1 Angle " θ " Observation strength γ − π - 3 π 4 - π 2 - π 4 0 π 4 π 2 3 π 4 π Dynamic measurement strength Static measurement strength (b) Optimal measurement strength signal to the target T e : = {− π , π } , starting from various possible initial states with Ω = 5, β = 0 . 1 and Γ = 1. Fig. 4. T ransition between eigenstates. of C ( T ne ) = 0. The value iteration approach for this problem yields the solution (ref. Fig. 5). This control strategy in this case in volves an angular rotation of + Ω for all states corresponding to angles between ( π / 2 , 3 π / 2 ) and counter- 0 0.2 0.4 0.6 0.8 Angle " θ " Optimal cost function − π 2 - π 4 0 π 4 π 2 3 π 4 π 5 π 4 3 π 2 Dynamic measurement strength Static measurement strength Pure rotation Fig. 5. Discounted hitting time cost function to the target T ne : = {− π / 2 , 3 π / 2 } , starting from v arious possible initial states with Ω = 5, β = 0 . 1 and Γ = 1. clockwise control for states in the domain ( − π / 2 , π / 2 ) . V . I N T E R P R E TA T I O N In this section we analyze and compare the performance of the variable measurement strength approach proposed herein to tw o alternate control approaches - pure Hamiltonian rotation and fixed strength control. The strategy used as the base line for these comparisons is the case of pure rotation with no measurement. W e now analyze the salient features of these strategies. A. F ixed measur ement strength For the case of rotation between eigen states, from Fig. 3 it can be seen that for angles from [ 0 , π / 2 ) the fixed measurement strength strategy performs worse than the pure rotation approach; Howe ver for angles between ( π / 2 , π ) the backaction from the fixed measurement helps project the state towards the target thereby outperforming the pure rotation strategy . This is in contrast to the control between non-eigen states ±| x i where the fixed measurement strategy performs better than the fixed rotation close to the starting point but worse for all points further away (e xcept at the target). B. Dynamic measurement strength As can be observ ed from Figures 3, 5 the time for the dynamic measurement approach is always smaller than that for both: the pure rotation strategy (in fact they are equal only at the boundary), and for the case of a static measurement. For θ ∈ [ 0 , π / 2 ] the time to reach θ = π is substantially different. Hence using a dynamic control and measurement scheme shortens the hitting time to the desired state by a significant margin. In the case of the transfer between the states orthogonal to | z i (i.e., | x i ) we hav e that this strategy does better than the pure rotation strategy only for starting points from ( 0 , π ) and equal to the rotation strate gy at all other points. This is intuiti ve as the the dynamic measurement strategy leads to the measurement signal being switched off for points between ( − π / 2 , 0 ) and ( π , 3 π / 2 ) - leading to the use of only the maximum magnitude of the av ailable angular rotation. V I . C O N C L U S I O N A N D F U T U R E W O R K In this article we described an approach for the time optimal rotation of a quantum tw o le v el system. The dynamic measurement and velocity control strate gy led to a speedup in the hitting time compared to strategies used pre viously in the literature. Numerical solutions to certain example problems were indicated and the analysis of the solutions led to the following interesting avenues for future in vestigation. The special form of the nonlinear dynamics in the system under consideration, leads to a degenerate Hamilton-Jacobi- Bellman equation associated with the optimal control prob- lem – this necessitates a weak (viscosity) solution interpre- tation for the solution to these control problems. Hence this remains to be in vestig ated in greater detail. Another aspect of note in this problem is the fact that the optimal measurement and control strategy are not separable 3 ; i.e., the observation strategy depends on a knowledge of the angular velocity con- trol. Certain aspects of this problem appear to parallel those in the well known Witsenhausen counterexample [27], [28], [29]. Inspired by these ideas, we note that in the quantum control problem, one possible vie wpoint is to analyze this problem as a combination of two interrelated controllers (i) rotational velocity control; (ii) measurement control, with an ov erall time optimal strategy depending on two controllers in a distributed control paradigm. In this arrangement, the knowledge of the state from the first controller is passed to the second controller . This potentially giv es rise to a non- classical information pattern. The analysis of the impact of this on optimal control and measurement and its relationship to the foundations of decentralized control also offers a fruitful topic for future work. Aknowledgements: The author would like to thank Joshua Combes for helpful technical discussions and comments on this manuscript. R E F E R E N C E S [1] M.A. Nielsen and I.L. Chuang. Quantum Computation and Quantum Information . Cambridge University Press, 2000. [2] B.L. Higgins, D W Berry , SD Bartlett, H.M. W iseman, and GJ Pryde. Entanglement-free Heisenberg-limited phase estimation. Natur e , 450(7168):393–396, 2007. [3] H.M. Wiseman and G.J. Milb urn. Quantum Measurement and Contr ol . Cambridge Univ ersity Press, 2009. [4] D. D’Alessandro. Uniform finite generation of compact lie groups. Systems and Contr ol Letters , 47(1):87–90, 2002. [5] Quantum control using Lie group decompositions , volume 1, 2001. [6] V . Ramakrishna, K.L. Flores, H. Rabitz, and R.J. Ober . Quantum control by decompositions of SU ( 2 ) . Physical Review A , 62(5):53409, 2000. 3 This is an interesting contrast to the work in [13] which utilizes the separation aspect of filtering and control. [7] M.A. Nielsen, M.R. Do wling, M. Gu, and A.C. Doherty . Quantum computation as geometry . Science , 311(5764):1133–1135, 2006. [8] N. Khaneja, R. Brockett, and S. J. Glaser . Time optimal control in spin systems. Phys. Rev . A , 63:032308, 2001. [9] V .P Belavkin. Measurement, Filtering and Control in Quantum Open Dynamical systems. Reports on Mathematical Physics , 43(3):405–425, 1999. [10] H.M. Wiseman and L. Bouten. Optimality of feedback control strategies for qubit purification. Quantum Information Pr ocessing , 7(2):71–83, 2008. [11] A. Shabani and K. Jacobs. Locally optimal control of quantum systems with strong feedback. Physical revie w letters , 101(23):230403, 2008. [12] V .P . Belavkin, A. Negretti, and K. Mølmer . Dynamical programming of continuously observed quantum systems. Physical Review A , 79(2):22123, 2009. [13] J. Gough, V .P Bela vkin, and O.G Smolyano v . Hamilton–Jacobi– Bellman equations for quantum optimal feedback control. J ournal of Optics B: Quantum and Semiclassical Optics , 7:S237–S244, 2005. [14] Sriniv as Sridharan, Masahiro Y anagisawa, and Joshua Combes. Op- timal rotation control and weak solutions for a qubit subject to continuous measurement. T ransactions on Automatic Contr ol, Special Issue on Quantum Contr ol , (under revie w). [15] T . A. Brun. A simple model of quantum trajectories. American Journal of Physics , 70:719, 2002. [16] K. Jacobs and D. A. Steck. A straightforward introduction to continuous quantum measurement. Contemporary Physics , 47:279, 2006. [17] W .H. Fleming and H.M. Soner . Contr olled Markov Pr ocesses and V iscosity Solutions . Springer V erlag, Berlin-NY , 2006. [18] Sriniv as Sridharan and Matthew R. James. Numerical Solution of the Dynamic Programming Equation for the Optimal Control of Quantum Spin Systems. Systems & Control Letters (T o appear) , 2011. [19] R.E. Bellman. Dynamic Pr ogramming . Courier Dov er Publications, 2003. [20] D.P . Bertsekas. Dynamic Pr ogramming and Optimal Control . Athena Scientific, 1995. [21] D. Gilbarg and N.S. Trudinger . Elliptic partial differ ential equations of second or der . Springer V erlag, 2001. [22] E. W ong and B. Hajek. Stochastic processes in engineering systems. Rev . ed . Springer T exts in Electrical Engineering, 1985. [23] C.W . Gardiner . Handbook of stochastic methods . Springer Berlin, 1985. [24] K. Jacobs. Stochastic pr ocesses for physicists: understanding noisy systems . Cambridge Univ Pr , 2010. [25] H.J. Kushner and PG Dupuis. Numerical Methods for Stoc hastic Contr ol Pr oblems in Continuous T ime . Springer V erlag, Berlin-NY , 1992. [26] Sriniv as Sridharan, Mile Gu, and Matthew R. James. Gate complexity using dynamic programming. Physical Review A (Atomic, Molecular , and Optical Physics) , 78(5):052327, 2008. [27] H.S. Witsenhausen. A counterexample in stochastic optimum control. SIAM Journal on Control , 6:131, 1968. [28] D.A. Castanon and N.R. Sandell. Signaling and uncertainty: a case study . In Decision and Contr ol including the 17th Symposium on Adaptive Pr ocesses, 1978 IEEE Conference on , volume 17, pages 1140–1144. IEEE, 1978. [29] H.S. Witsenhausen. Demystifying the W itsenhausen Counterexample. IEEE Contr ol Systems Magazine , 1066(033X/10), 2010.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment