Adaptive sequential Monte Carlo by means of mixture of experts

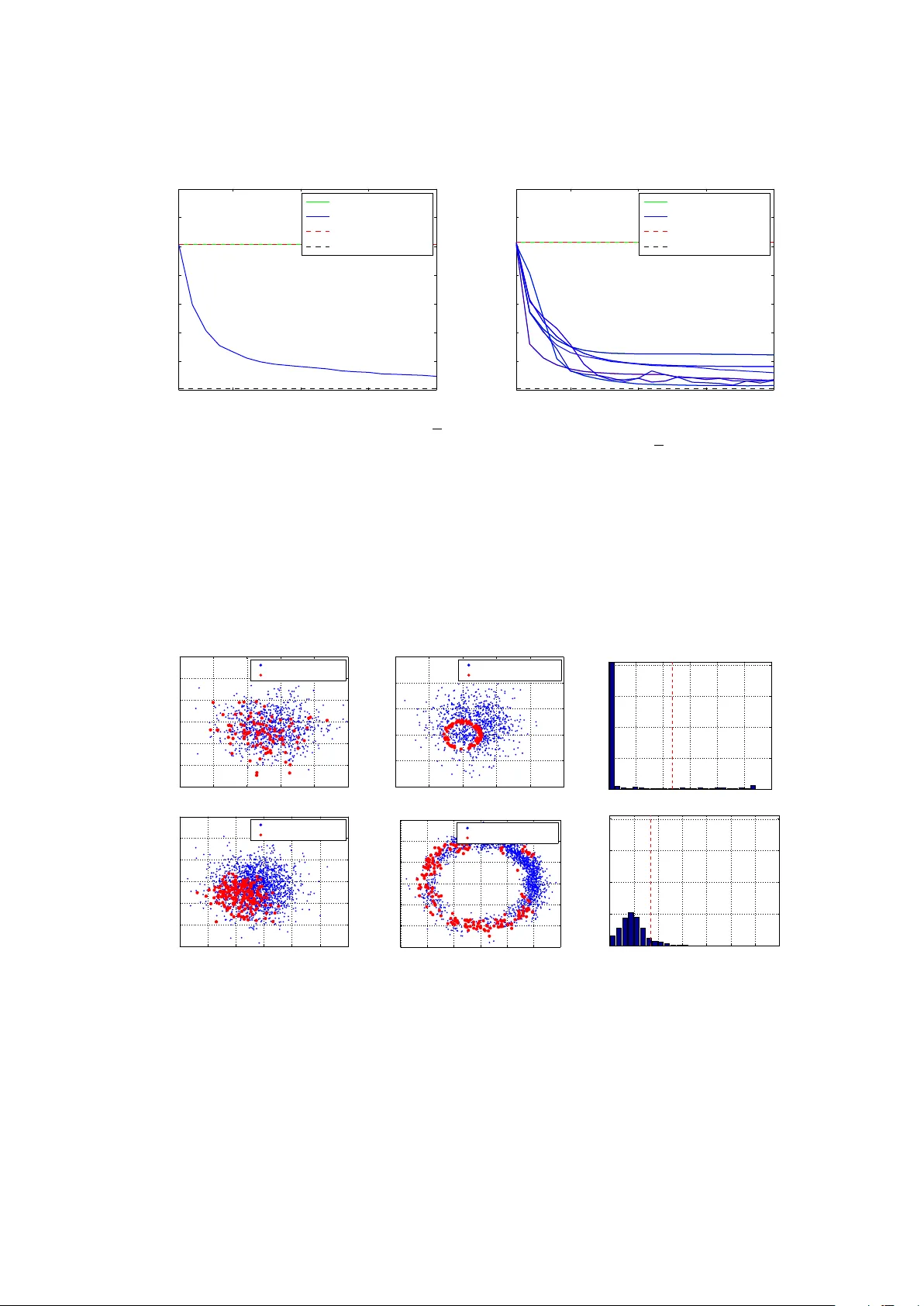

Designing the proposal kernel of a particle filter appropriately is of significant importance, since a bad choice may lead to deterioration of the particle sample and, consequently, a waste of computational power. In this paper we introduce a n…

Authors: J. Cornebise, E. Moulines, J. Olsson