Multivariate information measures: an experimentalist's perspective

Information theory is widely accepted as a powerful tool for analyzing complex systems and it has been applied in many disciplines. Recently, some central components of information theory - multivariate information measures - have found expanded use …

Authors: Nicholas Timme, Wesley Alford, Benjamin Flecker, John M. Beggs

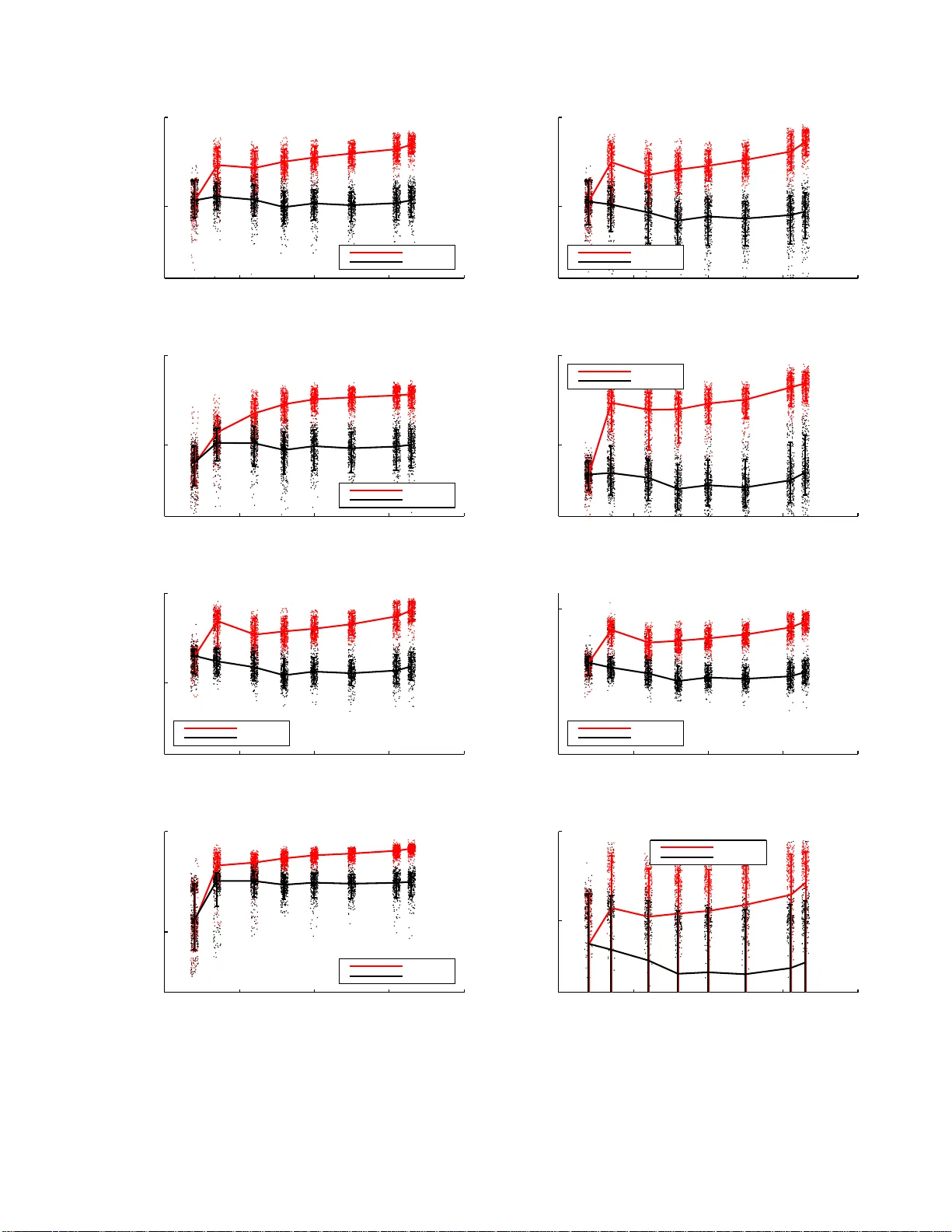

Multivariate information measures: an experimentalist's perspective

Nicholas Timme,∗ Wesley Alford, Benjamin Flecker, and John M. Beggs
Department of Physics, Indiana University, Bloomington, IN 47405-7105
(Dated: November 27, 2024)

Information theory has long been used to quantify interactions between two variables. With the rise of complex systems research, multivariate information measures are increasingly needed. Although the bivariate information measures developed by Shannon are commonly agreed upon, the multivariate information measures in use today have been developed by many different groups, and differ in subtle, yet significant ways. Here, we will review the information theory behind each measure, as well as examine the differences between these measures by applying them to several simple model systems. In addition to these systems, we will illustrate the usefulness of the information measures by analyzing neural spiking data from a dissociated culture through early stages of its development. We hope that this work will aid other researchers as they seek the best multivariate information measure for their specific research goals and system. Finally, we have made software available online which allows the user to calculate all of the information measures discussed within this paper.

PACS numbers: 89.70.Cf, 89.75.Fb, 87.19.lo, 87.19.lv

I. INTRODUCTION

Information theory has proved to be a useful tool in many disciplines. It has been successfully applied in several areas of research, including neuroscience [1], data compression [2], coding [3], dynamical systems [4], and genetic coding [5], just to name a few. Information theory's broad applicability is due in part to the fact that it relies only on the probability distribution associated with one or more variables.
Generally speaking, information theory uses the probability distributions associated with the values of the variables to ascertain whether or not the values of the variables are related and, depending on the situation, the way in which they are related. As a result of this, information theory can be applied to linear and non-linear systems, although this does not guarantee that an information-based measure will capture all non-linear contributions. In summary, information theory is a model-independent approach.

∗ nmtimme@umail.iu.edu

Information theoretic approaches to problems involving one and two variables are well understood and widely used. In addition to the one and two variable measures, several information measures have been introduced to analyze the relationships or interactions between three or more variables [6–13]. These multivariate information measures have been applied in physical systems [14, 15], biological systems [16, 17], and neuroscience [18–20]. However, these multivariate information measures differ in significant and sometimes subtle ways. Furthermore, the notation and naming associated with these measures is inconsistent throughout the literature (see, for example, [7, 11, 21–23]). Within this paper, we will examine a wide array of multivariate information measures in an attempt to clearly articulate the different measures and their uses.

After reviewing the information theory behind each individual measure, we will apply the information measures to several model systems in order to illuminate their differences and similarities. Also, we will apply the information measures to neural spiking data from a dissociated neural culture. Our goal is to clarify these methods for other researchers as they search for the multivariate information measure that will best address their specific research goals.
In order to facilitate the use of the information measures discussed in this paper, we have made our MATLAB software freely available, which can be used to calculate all of the information measures discussed herein [24]. An earlier version of this work was previously posted on the arXiv [25].

II. SYNERGY AND REDUNDANCY

A crucial topic related to multivariate information measures is the distinction between synergy and redundancy. With regard to these information measures, the precise meanings of "synergy" and "redundancy" have not been established, though they have been invoked by many researchers in this field (see, for instance, [13, 18]). For a recent treatment of synergy in this context, see [26].

To begin to understand synergy, we can use a simple system. Suppose two variables (call them $X_1$ and $X_2$) provide some information about a third variable (call it $Y$). In other words, if you know the state of $X_1$ and $X_2$, then you know something about the state of $Y$. Loosely, the portion of that information that is not provided by knowing either $X_1$ alone or $X_2$ alone is said to be provided synergistically by $X_1$ and $X_2$. The synergy is the bonus information received by knowing $X_1$ and $X_2$ together, instead of separately.

We can take a similar initial approach to redundancy. Again, suppose $X_1$ and $X_2$ provide some information about $Y$. The common portion of the information $X_1$ provides alone and the information $X_2$ provides alone is said to be provided redundantly by $X_1$ and $X_2$. The redundancy is the information received from both $X_1$ and $X_2$.

These imprecise definitions may seem clear enough, but in attempting to measure these quantities, researchers have created distinct measures that produce different results.
Based on the fact that the overall goal has not been clearly defined, it cannot be said that one of these measures is "correct." Rather, each measure has its own uses and limitations. Using the simple systems below, we will attempt to clearly articulate the differences between the multivariate information measures.

III. MULTIVARIATE INFORMATION MEASURES

In this section we will discuss the various multivariate information theoretic measures that have been introduced previously. Of special note is the fact that the names and notation used in the literature have not been consistent. We will attempt to clarify the discussion as much as possible by listing alternative names when appropriate. We will refer to an information measure by its original name (or at least, its original name to the best of our knowledge).

A. Entropy and mutual information

The information theoretic quantities involving one and two variables are well-defined and their results are well-understood. Regarding the probability distribution of one variable (call it $p(x)$), the canonical measure is the entropy $H(X)$ [27]. The entropy is given by [28]:

$$H(X) \equiv -\sum_{x \in X} p(x) \log(p(x)) \tag{1}$$

The entropy quantifies the amount of uncertainty that is present in the probability distribution. If the probability distribution is concentrated near one value, the entropy will be low. If the probability distribution is uniform, the entropy will be at a maximum.

When examining the relationship between two variables, the mutual information ($I$) quantifies the amount of information provided about one of the variables by knowing the value of the other [27].
The mutual information is given by:

$$I(X;Y) \equiv H(X) - H(X|Y) = H(Y) - H(Y|X) = H(X) + H(Y) - H(X,Y) \tag{2}$$

where the conditional entropy is given by:

$$H(X|Y) = \sum_{y \in Y} p(y) H(X|y) = \sum_{y \in Y} p(y) \sum_{x \in X} p(x|y) \log \frac{1}{p(x|y)} \tag{3}$$

The mutual information can also be written as the Kullback-Leibler divergence between the joint probability distribution of the actual data and the joint probability distribution of the independent model (wherein the joint distribution is equal to the product of the marginal distributions). This form is given by:

$$I(X;Y) = \sum_{x \in X, y \in Y} p(x,y) \log \frac{p(x,y)}{p(x)p(y)} \tag{4}$$

The mutual information can be used as a measure of the interactions among more than two variables by grouping the variables into sets and treating each set as a single vector-valued variable. For instance, the mutual information can be calculated between $Y$ and the set $S = \{X_1, X_2\}$ [29] in the following way:

$$I(Y;S) = \sum_{y \in Y,\, x_1 \in X_1,\, x_2 \in X_2} p(y,x_1,x_2) \log \frac{p(y,x_1,x_2)}{p(y)p(x_1,x_2)} \tag{5}$$

However, when the mutual information is considered as in Eq. (5), it is not possible to separate contributions from individual $X$ variables in the set $S$. Still, by varying the number of variables in $S$, the mutual information in Eq. (5) can be used to measure the gain or loss in information about $Y$ by those variables in $S$. Along these lines, Bettencourt et al. used the mutual information between one variable (in their case, the activity of a neuron) and many other variables considered together (in their case, the activities of a group of other neurons) in order to examine the relationship between the amount of information the group of neurons provided about the single neuron and the number of neurons considered in the group [30].
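As a concrete illustration of Eqs. (1), (2), (4), and (5), the following is a minimal Python sketch, not the authors' MATLAB software. It assumes a joint distribution stored as a dictionary that maps state tuples to probabilities and uses base-2 logarithms (bits); the function names `marginal`, `entropy`, `mutual_information`, and `mutual_information_kl` are hypothetical and chosen only for this example.

```python
from collections import defaultdict
from math import log2


def marginal(joint, idx):
    """Marginalize a joint distribution {state_tuple: prob} onto the positions in idx."""
    out = defaultdict(float)
    for state, p in joint.items():
        out[tuple(state[i] for i in idx)] += p
    return dict(out)


def entropy(dist):
    """Shannon entropy in bits, Eq. (1); zero-probability states contribute nothing."""
    return -sum(p * log2(p) for p in dist.values() if p > 0)


def mutual_information(joint, a, b):
    """I(A;B) = H(A) + H(B) - H(A,B), Eq. (2); a and b are tuples of positions."""
    return (entropy(marginal(joint, a)) + entropy(marginal(joint, b))
            - entropy(marginal(joint, a + b)))


def mutual_information_kl(joint, a, b):
    """The same quantity via the Kullback-Leibler form of Eqs. (4) and (5)."""
    pa, pb, pab = marginal(joint, a), marginal(joint, b), marginal(joint, a + b)
    total = 0.0
    for state, p in pab.items():
        if p > 0:
            sa, sb = state[:len(a)], state[len(a):]
            total += p * log2(p / (pa[sa] * pb[sb]))
    return total


# Assumed example system: an XOR gate with states ordered (x1, x2, y)
# and the four input pairs equally likely.
xor = {(0, 0, 0): 0.25, (0, 1, 1): 0.25, (1, 0, 1): 0.25, (1, 1, 0): 0.25}

print("I(X1;Y)    =", mutual_information(xor, (0,), (2,)))
print("I(X1,X2;Y) =", mutual_information(xor, (0, 1), (2,)))
print("KL form    =", mutual_information_kl(xor, (0, 1), (2,)))
```

Grouping the positions `(0, 1)` treats $\{X_1, X_2\}$ as a single vector-valued variable, as in Eq. (5). For this XOR system a single input carries no information about $Y$, while the pair carries one full bit.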
The mutual information can be conditioned upon a third variable to yield the conditional mutual information [27]. It is given by:

$$I(X;Y|Z) = \sum_{z \in Z} p(z) \sum_{x \in X, y \in Y} p(x,y|z) \log \frac{p(x,y|z)}{p(x|z)p(y|z)} = \sum_{x \in X, y \in Y, z \in Z} p(x,y,z) \log \frac{p(z)p(x,y,z)}{p(x,z)p(y,z)} \tag{6}$$

The conditional mutual information quantifies the amount of information one variable provides about a second variable when a third variable is known.

B. Interaction information

The first attempt to quantify the relationship among three variables in a joint probability distribution was the interaction information ($II$), which was introduced by McGill [6]. It attempts to extend the concept of the mutual information as the information gained about one variable by knowing the other. The interaction information is given by:

$$II(X;Y;Z) \equiv I(X;Y|Z) - I(X;Y) = I(X;Z|Y) - I(X;Z) = I(Z;Y|X) - I(Z;Y) \tag{7}$$

Of the interaction information, McGill said [6], "We see that $II(X;Y;Z)$ is the gain (or loss) in sample information transmitted between any two of the variables, due to the additional knowledge of the third variable."

The interaction information can also be written as:

$$II(X;Y;Z) = I(X,Y;Z) - (I(X;Z) + I(Y;Z)) \tag{8}$$

In the form given in Eq. (8), the interaction information has been widely used in the literature and has often been referred to as the synergy [16, 18, 19, 31] and redundancy-synergy index [9]. Some authors have used the term "synergy" because they have interpreted a positive interaction information result to imply a synergistic interaction among the variables and a negative interaction information result to imply a redundant interaction among the variables.
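The equivalence of the two forms in Eqs. (7) and (8) can be checked numerically. The sketch below is an illustration under stated assumptions rather than the authors' implementation: distributions are dictionaries from state tuples to probabilities, logarithms are base 2, the conditional mutual information of Eq. (6) is evaluated through the equivalent entropy identity $H(X,Z) + H(Y,Z) - H(X,Y,Z) - H(Z)$, and all function names are hypothetical.

```python
from collections import defaultdict
from math import log2


def marginal(joint, idx):
    """Marginalize a joint distribution {state_tuple: prob} onto the positions in idx."""
    out = defaultdict(float)
    for state, p in joint.items():
        out[tuple(state[i] for i in idx)] += p
    return dict(out)


def H(joint, idx):
    """Joint entropy (bits) of the variables at positions idx."""
    return -sum(p * log2(p) for p in marginal(joint, idx).values() if p > 0)


def mutual_information(joint, a, b):
    """I(A;B), Eq. (2)."""
    return H(joint, a) + H(joint, b) - H(joint, a + b)


def conditional_mutual_information(joint, a, b, c):
    """I(A;B|C), Eq. (6), written with entropies."""
    return H(joint, a + c) + H(joint, b + c) - H(joint, a + b + c) - H(joint, c)


def interaction_information_eq7(joint):
    """II(X;Y;Z) = I(X;Y|Z) - I(X;Y) for variables at positions 0, 1, 2."""
    return (conditional_mutual_information(joint, (0,), (1,), (2,))
            - mutual_information(joint, (0,), (1,)))


def interaction_information_eq8(joint):
    """II(X;Y;Z) = I(X,Y;Z) - I(X;Z) - I(Y;Z) for variables at positions 0, 1, 2."""
    return (mutual_information(joint, (0, 1), (2,))
            - mutual_information(joint, (0,), (2,))
            - mutual_information(joint, (1,), (2,)))


# Assumed example systems with uniformly distributed, independent binary inputs.
and_gate = {(a, b, a & b): 0.25 for a in (0, 1) for b in (0, 1)}
xor_gate = {(a, b, a ^ b): 0.25 for a in (0, 1) for b in (0, 1)}

for name, gate in (("AND", and_gate), ("XOR", xor_gate)):
    print(name, interaction_information_eq7(gate), interaction_information_eq8(gate))
```

Both forms agree for each gate: roughly 0.189 bits for the AND gate and 1 bit for the XOR gate, both positive and therefore "synergistic" under the interpretation just described.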
Thus, if we assume this interpretation of the interaction information and that the interaction information correctly measures multivariate interactions, then synergy and redundancy are taken to be mutually exclusive qualities of the interactions between variables. This view will find a counterpoint in the partial information decomposition to be discussed below.

The interaction information can also be written as an expansion of the entropies and joint entropies of the variables:

$$II(X;Y;Z) = -H(X) - H(Y) - H(Z) + H(X,Y) + H(X,Z) + H(Y,Z) - H(X,Y,Z) \tag{9}$$

This form leads to an expansion for the interaction information for $N$ variables [32]. If $S = \{X_1, X_2, \ldots, X_N\}$, then the interaction information becomes:

$$II(S) = -\sum_{T \subseteq S} (-1)^{|S| - |T|} H(T) \tag{10}$$

In Eq. (10), $T$ is a subset of $S$ and $|S|$ denotes the set size of $S$.

A measure similar to the interaction information was introduced by Bell and is referred to as the co-information ($CI$) [33]. It is given by the following expansion:

$$CI(S) \equiv -\sum_{T \subseteq S} (-1)^{|T|} H(T) = (-1)^{|S|} II(S) \tag{11}$$

Clearly, the co-information is equal to the interaction information when $S$ contains an even number of variables and is equal to the negative of the interaction information when $S$ contains an odd number of variables. So, for the three variable case, the co-information becomes:

$$CI(X;Y;Z) = I(X;Y) - I(X;Y|Z) = I(X;Z) + I(Y;Z) - I(X,Y;Z) \tag{12}$$

Because the co-information is directly related to the interaction information for systems with any number of variables, we will forgo presenting results from the co-information. The co-information has also been referred to as the generalized mutual information [15].

C. Total correlation

The interaction information finds its conceptual base in extending the idea of the mutual information as the information gained about a variable when the other variable is known. Alternatively, we could extend the idea of the mutual information as the Kullback-Leibler divergence between the joint distribution and the independent model. If we do this, we arrive at the total correlation ($TC$) introduced by Watanabe [7]. It is given by:

$$TC(S) \equiv \sum_{\vec{x} \in S} p(\vec{x}) \log \frac{p(\vec{x})}{p(x_1) p(x_2) \cdots p(x_n)} \tag{13}$$

In Eq. (13), $\vec{x}$ is a vector containing individual states of the $X$ variables. The total correlation can also be written in terms of entropies as:

$$TC(S) = \left( \sum_{X_i \in S} H(X_i) \right) - H(S) \tag{14}$$

In this form, the total correlation has been referred to as the multi-information [11], the spatial stochastic interaction [23], and the integration [21, 22]. Using Eq. (2), the total correlation can also be written using a series of mutual information terms (see Appendix A for more details):

$$TC(S) = I(X_1;X_2) + I(X_1,X_2;X_3) + \ldots + I(X_1,\ldots,X_{n-1};X_n) \tag{15}$$

D. Dual total correlation

After the total correlation was introduced, a measure with a similar structure, called the dual total correlation ($DTC$), was introduced by Han [8, 34]. The dual total correlation is given by:

$$DTC(S) \equiv \left( \sum_{X_i \in S} H(S/X_i) \right) - (n-1) H(S) \tag{16}$$

In Eq. (16), $S/X_i$ is the set $S$ with $X_i$ removed and $n$ is the number of $X$ variables in $S$. The dual total correlation can also be written as [35]:

$$DTC(S) = H(S) - \sum_{X_i \in S} H(X_i | S/X_i) \tag{17}$$

The dual total correlation calculates the amount of entropy present in $S$ beyond the sum of the entropies for each variable conditioned upon all other variables. The dual total correlation has also been referred to as the excess entropy [36] and the binding information [35]. Using Eqs. (2), (14), and (16), the dual total correlation can also be related to the total correlation by (see Appendix B for more details):

$$DTC(S) = \left( \sum_{X_i \in S} I(S/X_i; X_i) \right) - TC(S) \tag{18}$$

E. $\Delta I$

A distinct information measure, called $\Delta I$, was introduced by Nirenberg and Latham [10, 37]. It was introduced to measure the importance of correlations in neural coding. For the purposes of this paper, we can apply $\Delta I$ to the following situation: consider some set of $X$ variables (call this set $S$). The values of the variables in $S$ are related in some way to the value of another variable (call it $Y$). In Nirenberg and Latham's original work, the $X$ variables are signals from neurons and the $Y$ variable is the value of some stimulus variable. $\Delta I$ compares the true probability distributions associated with these variables to one that assumes the $X$ variables act independently (i.e., there are no correlations between the $X$ variables beyond those that can be explained by $Y$). If these distributions are similar, then it can be assumed that there are no relevant correlations between the $X$ variables. If, on the other hand, these distributions are not similar, then we can conclude that relevant correlations are present between the $X$ variables.

The independent model assumes that the $X$ variables act independently, so we can form the probability for the $X$ states conditioned upon the $Y$ variable state using a simple product:

$$p_{ind}(\vec{x}|y) = \prod_i p(x_i|y) \tag{19}$$

Then, the conditional probability of the $Y$ variable on the $X$ variables can be found using Bayes' theorem.
$$p_{ind}(y|\vec{x}) = \frac{p_{ind}(\vec{x}|y)\, p(y)}{p_{ind}(\vec{x})} \tag{20}$$

The independent joint distribution of the $X$ variables is given by:

$$p_{ind}(\vec{x}) = \sum_{y \in Y} p_{ind}(\vec{x}|y)\, p(y) \tag{21}$$

Then, $\Delta I$ is given by the weighted Kullback-Leibler distance between the conditional probability of the $Y$ variable on the $X$ variables for the independent model and the actual conditional probability of the same type.

$$\Delta I(S;Y) \equiv \sum_{\vec{x} \in S} p(\vec{x}) \sum_{y \in Y} p(y|\vec{x}) \log \frac{p(y|\vec{x})}{p_{ind}(y|\vec{x})} \tag{22}$$

About $\Delta I$, Nirenberg and Latham say [37], "[s]pecifically, $\Delta I$ is the cost in yes/no questions for not knowing about correlations: if one were guessing the value of the $Y$ variable based on the $X$ variables, $\vec{x}$, then it would take, on average, $\Delta I$ more questions to guess the value of $Y$ if one knew nothing about the correlations than if one knew everything about them [Variable names changed to match this work]."

F. Redundancy-synergy index

Another multivariate information measure was introduced by Chechik et al. [9]. This measure was originally referred to as the redundancy-synergy index ($RSI$) and it was created as an extension of the interaction information. It is given by:

$$RSI(S;Y) \equiv I(S;Y) - \sum_{X_i \in S} I(X_i;Y) \tag{23}$$

The redundancy-synergy index is designed to be maximal and positive when the variables in $S$ are purported to provide synergistic information about $Y$. It should be negative when the variables in $S$ provide redundant information about $Y$. When $S$ contains two variables, the redundancy-synergy index is equal to the interaction information. The negative of the redundancy-synergy index has also been referred to as the redundancy [11].

G. Varadan's synergy

Yet another multivariate information measure was introduced by Varadan et al. [12].
In the original work, this measure is referred to as the synergy, but to avoid confusing it with other measures, we will refer to this measure as Varadan's synergy ($VS$). It is given by:

$$VS(S;Y) \equiv I(S;Y) - \max \sum_j I(S_j;Y) \tag{24}$$

In Eq. (24), $S_j$ refers to the possible sub-sets of $S$; as the example below shows, the maximum is taken over the ways of splitting $S$ into non-overlapping sub-sets. So, for instance, if $S = \{X_1, X_2, X_3\}$, Varadan's synergy would be given by:

$$VS(S;Y) = I(S;Y) - \max \left\{ \begin{array}{l} I(X_1;Y) + I(X_2,X_3;Y) \\ I(X_2;Y) + I(X_1,X_3;Y) \\ I(X_3;Y) + I(X_1,X_2;Y) \\ I(X_1;Y) + I(X_2;Y) + I(X_3;Y) \end{array} \right. \tag{25}$$

Similar to the interaction information, when Varadan's synergy is positive, the variables in $S$ are said to provide synergistic information about $Y$, while when Varadan's synergy is negative, the variables in $S$ are said to provide redundant information about $Y$. Note that, when $S = \{X_1, X_2\}$, Varadan's synergy is equal to the interaction information.

H. Partial information decomposition

Finally, we will examine the collection of information values introduced by Williams and Beer in the partial information decomposition (PID) [13]. (For three other applications of the partial information decomposition, see recent works by James et al. [38], Flecker et al. [39], and Griffith and Koch [26]). The partial information decomposition is a method of dissecting the mutual information between a set of variables $S$ and one other variable $Y$ into non-overlapping terms. These terms quantify the information provided by the set of variables in $S$ about $Y$ uniquely, redundantly, synergistically, and in mixed forms. The partial information decomposition has several potential advantages over other measures. First, it produces only non-negative results, unlike the interaction information.
Second, it allows for the possibility of synergistic and redundant interactions simultaneously, unlike the interaction information and $\Delta I$.

For the sake of brevity, we will not describe the entire partial information decomposition here, but we will describe the case where $S = \{X_1, X_2\}$. A description of the general case can be found in Williams and Beer's original work [13]. The relevant mutual informations are equal to sums of the partial information terms. For the case of two $X$ variables, there are only four possible terms. Information about $Y$ can be provided uniquely by each $X$ variable, redundantly by both $X$ variables, or synergistically by both $X$ variables together. Written out, the relevant mutual informations are given by the following sums:

$$I(X_1,X_2;Y) = Synergy(X_1,X_2) + Unique(X_1) + Unique(X_2) + Redundancy(X_1,X_2) \tag{26}$$

$$I(X_1;Y) = Unique(X_1) + Redundancy(X_1,X_2) \tag{27}$$

$$I(X_2;Y) = Unique(X_2) + Redundancy(X_1,X_2) \tag{28}$$

The relevant mutual information values can be calculated easily. As described by Williams and Beer, the redundancy term is equal to a new information expression: the minimum information function. This function attempts to capture the intuitive view that the redundant information for a given state of $Y$ is the information that is contributed by both $X$ variables about that state of $Y$ (consult Williams and Beer's original work [13] for details and further motivation). The minimum information function is related to the specific information [40][41]. The specific information is given by:

$$I_{spec}(y;X) = \sum_{x \in X} p(x|y) \left[ \log \frac{1}{p(y)} - \log \frac{1}{p(y|x)} \right] \tag{29}$$

In Eq. (29), the specific information quantifies the amount of information provided by $X$ about a specific state of the $Y$ variable.
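To make Eq. (29) concrete, here is a minimal Python sketch of the specific information. It is an illustration only: it assumes a joint distribution stored as a dictionary from state tuples to probabilities and base-2 logarithms, and the names `marginal` and `specific_information` are hypothetical rather than taken from the authors' software. The AND gate used as input is the one that appears among the example systems later in the paper.

```python
from collections import defaultdict
from math import log2


def marginal(joint, idx):
    """Marginalize a joint distribution {state_tuple: prob} onto the positions in idx."""
    out = defaultdict(float)
    for state, p in joint.items():
        out[tuple(state[i] for i in idx)] += p
    return dict(out)


def specific_information(joint, x_idx, y_idx, y_state):
    """I_spec(y; X) of Eq. (29) for one state of Y.

    x_idx and y_idx are tuples of positions; y_state is a tuple such as (1,).
    """
    p_y = marginal(joint, y_idx)[y_state]
    p_x = marginal(joint, x_idx)
    p_xy = marginal(joint, x_idx + y_idx)
    total = 0.0
    for x_state, px in p_x.items():
        pxy = p_xy.get(x_state + y_state, 0.0)
        if pxy > 0:
            p_x_given_y = pxy / p_y
            p_y_given_x = pxy / px
            total += p_x_given_y * (log2(1 / p_y) - log2(1 / p_y_given_x))
    return total


# AND gate with states ordered (x1, x2, y) and equally likely input pairs.
and_gate = {(a, b, a & b): 0.25 for a in (0, 1) for b in (0, 1)}

p_y = marginal(and_gate, (2,))
for y_state, py in sorted(p_y.items()):
    spec = specific_information(and_gate, (0,), (2,), y_state)
    print("y =", y_state[0], " p(y) =", py, " I_spec(y; X1) =", spec)

# Averaging the specific information over p(y) recovers I(X1;Y).
average = sum(py * specific_information(and_gate, (0,), (2,), y)
              for y, py in p_y.items())
print("sum_y p(y) I_spec(y; X1) =", average)
```

For this gate, $X_1$ provides one bit about the state $y = 1$ and much less about $y = 0$; the $p(y)$-weighted average is the mutual information $I(X_1;Y) \approx 0.311$ bits.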
The minimum information can then be calculated by comparing the amount of information provided by the different $X$ variables for each state of the $Y$ variable considered individually.

$$I_{min}(Y;X_1,X_2) = \sum_{y \in Y} p(y) \min_{X_i} I_{spec}(y;X_i) \tag{30}$$

The minimum in Eq. (30) is taken over each $X$ variable considered separately. Once the redundancy term is calculated via the minimum information function, the remaining partial information terms can be calculated with ease.

It should also be noted that the partial information decomposition provides an explanation for negative interaction information values. To see this, insert the partial information expansions in Eqs. (26), (27), and (28) into the mutual information terms in the interaction information:

$$II(X_1;X_2;Y) = I(X_1,X_2;Y) - I(X_1;Y) - I(X_2;Y) = Synergy(X_1,X_2) - Redundancy(X_1,X_2) \tag{31}$$

Thus, the partial information decomposition finds that a negative interaction information value implies that the redundant contribution is greater than the synergistic contribution. Furthermore, the structure of the partial information decomposition implies that synergistic and redundant interactions are not mutually exclusive, as was the case for the traditional interpretation of the interaction information. Thus, according to the partial information decomposition, there may be non-zero synergistic and redundant contributions simultaneously.

Throughout the remainder of this article, we will label the various terms in the partial information decomposition in accordance with the notation used by Williams and Beer. The term that has been interpreted as the synergy will be referred to as $\Pi_R(Y;\{12\})$ or PID synergy. The term that has been interpreted as the redundancy will be labeled as $\Pi_R(Y;\{1\}\{2\})$ or PID redundancy.
The unique information terms will be referred to as $\Pi_R(Y;\{1\})$ and $\Pi_R(Y;\{2\})$, or simply as PID unique information.

When the partial information decomposition is extended to the case where $S = \{X_1, X_2, X_3\}$, new mixed terms are introduced to the expansions of the mutual informations. For instance, information can be supplied about $Y$ redundantly between $X_3$ and the synergistic contribution from $X_1$ and $X_2$ (this term is noted as $\Pi_R(Y;\{12\}\{3\})$). In total, the partial information decomposition contains 18 terms when $S$ contains three variables. It can be shown that the interaction information between $Y$ and the $X$ variables contained in $S$ is related to the partial information terms by the following equation [13]:

$$\begin{aligned} II(Y;X_1;X_2;X_3) ={}& \Pi_R(Y;\{123\}) + \Pi_R(Y;\{1\}\{2\}\{3\}) \\ &- \Pi_R(Y;\{1\}\{23\}) - \Pi_R(Y;\{2\}\{13\}) - \Pi_R(Y;\{3\}\{12\}) \\ &- \Pi_R(Y;\{12\}\{13\}) - \Pi_R(Y;\{12\}\{23\}) - \Pi_R(Y;\{13\}\{23\}) \\ &- 2\,\Pi_R(Y;\{12\}\{13\}\{23\}) \end{aligned} \tag{32}$$

From Eq. (32), we can see that the four-way interaction information is related to the partial information decomposition via a complicated summation of terms.

IV. EXAMPLE SYSTEMS

We will now apply the multivariate information measures discussed above to several simple systems in an attempt to understand their similarities, differences, and uses. These systems have been chosen to maximize the contrast between the information measures, but many other systems exist for which the information measures produce identical results.

A. Examples 1-3: two-input Boolean logic gates

The first set of examples we will consider are simple Boolean logic gates. These logic gates are well known across many disciplines and offer a great deal of simplicity. The results presented in Table I highlight some of the commonalities and disparities between the various information measures.
It should be noted that, due to the simple structure of the Boolean logic gates, the total correlation is equal to the mutual information $I(X_1,X_2;Y)$. Also, due to the fact that only two-input Boolean logic gates are being considered, the redundancy-synergy index and Varadan's synergy are directly related to the interaction information. Additional examples will highlight differences between these information measures.

TABLE I. Examples 1 to 3: two-input Boolean logic gates. XOR-gate: All information measures produce consistent results. $X_1$-gate: The partial information decomposition succinctly identifies a relationship between $X_1$ and $Y$. AND-gate: The partial information decomposition identifies both synergistic and redundant interactions. The interaction information finds only a synergistic interaction. $\Delta I$ identifies the importance of correlations between $X_1$ and $X_2$.

| $p(x_1,x_2,y)$ | $x_1$ | $x_2$ | $y$ (XOR) | $y$ ($X_1$) | $y$ (AND) |
|---|---|---|---|---|---|
| 1/4 | 0 | 0 | 0 | 0 | 0 |
| 1/4 | 1 | 0 | 1 | 1 | 0 |
| 1/4 | 0 | 1 | 1 | 0 | 0 |
| 1/4 | 1 | 1 | 0 | 1 | 1 |

| Measure | XOR | $X_1$ | AND |
|---|---|---|---|
| $I(X_1;Y)$ | 0 | 1 | 0.311 |
| $I(X_2;Y)$ | 0 | 0 | 0.311 |
| $I(X_1,X_2;Y)$ | 1 | 1 | 0.811 |
| $II(X_1;X_2;Y)$ | 1 | 0 | 0.189 |
| $TC(X_1;X_2;Y)$ | 1 | 1 | 0.811 |
| $DTC(X_1;X_2;Y)$ | 2 | 1 | 1 |
| $\Delta I(X_1,X_2;Y)$ | 1 | 0 | 0.104 |
| $RSI(X_1,X_2;Y)$ | 1 | 0 | 0.189 |
| $VS(X_1,X_2;Y)$ | 1 | 0 | 0.189 |
| $\Pi_R(Y;\{1\}\{2\})$ | 0 | 0 | 0.311 |
| $\Pi_R(Y;\{1\})$ | 0 | 1 | 0 |
| $\Pi_R(Y;\{2\})$ | 0 | 0 | 0 |
| $\Pi_R(Y;\{12\})$ | 1 | 0 | 0.5 |

All information measures provide a similar result for the XOR-gate (with the exception of the dual total correlation, see below). The interaction information, $\Delta I$, the redundancy-synergy index, Varadan's synergy, and the partial information decomposition all indicate that the entire bit of information between $Y$ and $\{X_1, X_2\}$ is accounted for by synergy. We might expect this result because, to know the state of $Y$ for an XOR-gate, the state of both $X_1$ and $X_2$ must be known.

The results for the $X_1$-gate demonstrate the potential utility of the partial information decomposition. The unique information term from $X_1$ is equal to one bit, thus indicating that the $X_1$ variable entirely and solely determines the state of the output variable. This result is confirmed by the truth-table. This result can also be seen by considering the values of the other measures together (for instance, the three mutual information measures), but the partial information decomposition provides these results more succinctly.

More significant differences among the information measures appear when considering the AND-gate. The partial information decomposition produces the result that 0.311 bits of information are provided redundantly and 0.5 bits are provided synergistically. Since each $X$ variable provides the same amount of information about each state of $Y$ (see Eq. (30)), the partial information decomposition finds that all of the mutual information between each $X$ variable individually and the $Y$ variable is redundant. As a result of this, no information is provided uniquely, and subsequently, the entirety of the remaining 0.5 bits of information between $Y$ and $\{X_1, X_2\}$ must be synergistic. From this, we can see in action the fact that the partial information decomposition emphasizes the amount of information that each $X$ variable provides about each state of $Y$ considered individually.

The interaction information, and by extension the redundancy-synergy index and Varadan's synergy, are limited to returning only a synergy value of 0.189 bits for the AND-gate. This value is produced because the mutual information between $Y$ and $\{X_1, X_2\}$ contains an excess of 0.189 bits beyond the sum of the mutual informations between each $X$ variable individually and the $Y$ variable.
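The two-input partial information values quoted for the three gates can be reproduced with a short Python sketch that follows Eqs. (26) to (30). This is a hedged illustration, not the authors' MATLAB code: it assumes states ordered as $(x_1, x_2, y)$, base-2 logarithms, and hypothetical helper names (`marginal`, `entropy`, `mutual_information`, `specific_information`, `pid_two_inputs`, `gate`).

```python
from collections import defaultdict
from math import log2


def marginal(joint, idx):
    """Marginalize a joint distribution {state_tuple: prob} onto the positions in idx."""
    out = defaultdict(float)
    for state, p in joint.items():
        out[tuple(state[i] for i in idx)] += p
    return dict(out)


def entropy(dist):
    """Shannon entropy in bits, Eq. (1)."""
    return -sum(p * log2(p) for p in dist.values() if p > 0)


def mutual_information(joint, a, b):
    """I(A;B), Eq. (2)."""
    return (entropy(marginal(joint, a)) + entropy(marginal(joint, b))
            - entropy(marginal(joint, a + b)))


def specific_information(joint, x_idx, y_state):
    """I_spec(y; X), Eq. (29); the target Y is assumed to sit at position 2."""
    p_y = marginal(joint, (2,))[(y_state,)]
    p_x = marginal(joint, x_idx)
    p_xy = marginal(joint, x_idx + (2,))
    total = 0.0
    for x_state, px in p_x.items():
        pxy = p_xy.get(x_state + (y_state,), 0.0)
        if pxy > 0:
            # log2(px / pxy) is log2(1 / p(y|x)).
            total += (pxy / p_y) * (log2(1 / p_y) - log2(px / pxy))
    return total


def pid_two_inputs(joint):
    """Redundancy from Eq. (30); remaining terms from Eqs. (26)-(28)."""
    p_y = marginal(joint, (2,))
    redundancy = sum(
        p * min(specific_information(joint, (i,), y[0]) for i in (0, 1))
        for y, p in p_y.items())
    unique_1 = mutual_information(joint, (0,), (2,)) - redundancy
    unique_2 = mutual_information(joint, (1,), (2,)) - redundancy
    synergy = (mutual_information(joint, (0, 1), (2,))
               - unique_1 - unique_2 - redundancy)
    return {"redundancy": redundancy, "unique_1": unique_1,
            "unique_2": unique_2, "synergy": synergy}


def gate(f):
    """Joint distribution of a two-input gate with equally likely input pairs."""
    return {(a, b, f(a, b)): 0.25 for a in (0, 1) for b in (0, 1)}


gates = {"XOR": gate(lambda a, b: a ^ b),
         "X1": gate(lambda a, b: a),
         "AND": gate(lambda a, b: a & b)}

for name, joint in gates.items():
    terms = pid_two_inputs(joint)
    print(name, {k: round(v, 3) for k, v in terms.items()},
          "synergy - redundancy =", round(terms["synergy"] - terms["redundancy"], 3))
```

For the AND gate, the difference between the synergy and redundancy terms is about 0.189 bits, which is the interaction information value discussed above and the relationship stated in Eq. (31).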
So, here we can see in action the interpretation of the interaction information as the amount of information provided by the $X$ variables taken together about $Y$, beyond what they provide individually. Also, the AND-gate allows us to see the relationship between the interaction information and the partial information decomposition as expressed by Eq. (31).

The value of $\Delta I$ for the AND-gate can be elucidated by examining the values of the conditional probability distributions that are relevant to the calculation of $\Delta I$ (Table II). From these results, it is clear that if we use the independent model, and we are presented with the state $x_1 = 1$ and $x_2 = 1$, we would conclude that there is a one-quarter chance that $y = 0$ and a three-quarters chance that $y = 1$. If we use the actual data, then we know that, for that specific state, $y$ must equal 1. This example points to a subtle, but critical difference between $\Delta I$ and the other multivariate information measures. Namely, the other information measures are concerned with discerning the interactions among the variables in the situation where you know the values of all the variables simultaneously, whereas $\Delta I$ is concerned with comparing that situation to the independent model described by Eq. (19) (see Section IV B for further discussion of this topic).

TABLE II. Values of conditional probabilities used to calculate $\Delta I$ for the AND-gate.

| $y$ | $x_1$ | $x_2$ | $p_{ind}(y \mid x_1,x_2)$ | $p(y \mid x_1,x_2)$ |
|---|---|---|---|---|
| 0 | 0 | 0 | 1 | 1 |
| 0 | 1 | 0 | 1 | 1 |
| 0 | 0 | 1 | 1 | 1 |
| 0 | 1 | 1 | 0.25 | 0 |
| 1 | 0 | 0 | 0 | 0 |
| 1 | 1 | 0 | 0 | 0 |
| 1 | 0 | 1 | 0 | 0 |
| 1 | 1 | 1 | 0.75 | 1 |

The values of the dual total correlation for the XOR-gate example in Table I demonstrate a crucial difference between the dual total correlation and the other multivariate information measures. Namely, the dual total correlation does not differentiate between the $X$ and $Y$ variables.
So, depe ndenc ie s b et ween all v ar iables are treated equally . In the case of the X OR-gate, the entropy of any v a r iable conditioned on the other t wo is zero . Howev er, the joint entropy b etw een all v ariables is 2 bits, so the dual total correlation is e q ual to 2 . Clearly , this result is grea ter than I ( X 1 , X 2 ; Y ) for this e xample. So, if w e assume the synergy and redundancy are some p ortio n of I ( X 1 , X 2 ; Y ), the dual total corr elation c a nnot b e the synergy or the redundancy . How ev er, this result is no t surprising given the fact that, if we ass ume the sy nergy and redundancy are some p or tio n o f I ( X 1 , X 2 ; Y ), the synergy and redundancy require some differentiation b e- t ween the X v a riables a nd Y v ariables. Since the dua l total co rrelation do es not incorp orate this dis tinction, we should exp e ct that it mea sures a fundamen tally different quantit y (se e Section IV D for further discussion of this topic). B. Example 4 Another r e lev ant example for ∆ I is shown in T able II I . The crucial p oint to draw fr om this example is that ∆ I can b e g reater than I ( X 1 , X 2 ; Y ). This app ears to be in conflict with the intuitiv e notion o f sy nergy a s some part of the informatio n the X v ariables pr ovide ab out T ABLE I II . Example 4. F or this system, ∆ I is greater than I ( X 1 , X 2 ; Y ). Schneidman et. al. also presen t an example that demonstrates that ∆ I is n ot boun d by I ( X 1 , X 2 ; Y ) [19]. Ex. 4 p ( x 1 , x 2 , y ) x 1 x 2 y 1 / 10 0 0 0 1 / 10 1 1 0 2 / 10 0 0 1 6 / 10 1 1 1 I ( X 1 ; Y ) 0 . 0323 I ( X 2 ; Y ) 0 . 0323 I ( X 1 , X 2 ; Y ) 0 . 0323 I I ( X 1 ; X 2 ; Y ) − 0 . 0323 T C ( X 1 ; X 2 ; Y ) 0 . 9136 DT C ( X 1 ; X 2 ; Y ) 0 . 8813 ∆ I ( X 1 , X 2 ; Y ) 0 . 0337 RS I ( X 1 , X 2 ; Y ) − 0 . 0323 V S ( X 1 , X 2 ; Y ) − 0 . 0323 Π R ( Y ; { 1 }{ 2 } ) 0 . 0323 Π R ( Y ; { 1 } ) 0 Π R ( Y ; { 2 } ) 0 Π R ( Y ; { 12 } ) 0 the Y v ar iable. 
Why, in this case, $\Delta I$ is greater than $I(X_1, X_2; Y)$ is not immediately clear. To better understand this result, we can examine the difference between $\Delta I$ and $I(X_1, X_2; Y)$. Using Eqs. (22), (20), and (5), this difference can be expressed as:

$$
I(S; Y) - \Delta I(S; Y) = \sum_{\vec{x} \in S,\, y \in Y} p(y, \vec{x}) \log \frac{p_{ind}(\vec{x} \mid y)}{p_{ind}(\vec{x})} \tag{33}
$$

The quantity expressed on the RHS of Eq. (33), though similar in form, is not a mutual information. Based on the example in Table III and the examples in Table I, this quantity can be positive or negative. Schneidman et al. further explore this and other noteworthy features of $\Delta I$ [19]. Fundamentally, $\Delta I$ is a comparison between the complete data and an independent model (as expressed in Eq. (19)). As Schneidman et al. note, alternative models could be chosen for the purpose of measuring the importance of correlations between the X variables in the data. We wish to emphasize that $\Delta I$ can provide useful information about a system, but that it measures a fundamentally different quantity in comparison to the other multivariate information measures.

C. Example 5

The example shown in Table IV highlights some interesting differences between the information measures, especially regarding the partial information decomposition. Results from the partial information decomposition indicate that 1 bit of information about $Y$ is provided redundantly by $X_1$ and $X_2$, while 1 bit is provided synergistically. This situation is similar to the AND-gate above.

TABLE IV. Example 5: $Y$ obtains a different state for each unique combination of $X_1$ and $X_2$. The partial information decomposition indicates the presence of redundancy because the X variables provide the same amount of information about each state of $Y$, despite the fact that the X variables provide information about different states of $Y$.
The interaction information and $\Delta I$ provide null results. Griffith and Koch also discuss this example in relation to multivariate information measures [26].

| $p(x_1, x_2, y)$ | $x_1$ | $x_2$ | $y$ |
|---|---|---|---|
| 1/4 | 0 | 0 | 0 |
| 1/4 | 1 | 0 | 1 |
| 1/4 | 0 | 1 | 2 |
| 1/4 | 1 | 1 | 3 |

| Measure | Ex. 5 |
|---|---|
| $I(X_1; Y)$ | 1 |
| $I(X_2; Y)$ | 1 |
| $I(X_1, X_2; Y)$ | 2 |
| $II(X_1; X_2; Y)$ | 0 |
| $TC(X_1; X_2; Y)$ | 2 |
| $DTC(X_1; X_2; Y)$ | 2 |
| $\Delta I(X_1, X_2; Y)$ | 0 |
| $RSI(X_1, X_2; Y)$ | 0 |
| $VS(X_1, X_2; Y)$ | 0 |
| $\Pi_R(Y; \{1\}\{2\})$ | 1 |
| $\Pi_R(Y; \{1\})$ | 0 |
| $\Pi_R(Y; \{2\})$ | 0 |
| $\Pi_R(Y; \{12\})$ | 1 |

Each X variable provides 1 bit of information about $Y$, but both X variables provide the same amount of information about each state of $Y$. So, the partial information decomposition concludes that all of this information is redundant. It should be noted that this is the case despite the fact that $X_1$ and $X_2$ provide information about different states of $Y$. $X_1$ can differentiate between $y = 0$ and $y = 2$ on the one hand and $y = 1$ and $y = 3$ on the other, while $X_2$ can differentiate between $y = 0$ and $y = 1$ on the one hand and $y = 2$ and $y = 3$ on the other. Even though the X variables provide information about different states of $Y$, the partial information decomposition is blind to this distinction and concludes, since the X variables provide the same amount of information about each state of $Y$, that their contributions are redundant. Because all of the mutual information between each X variable considered individually and $Y$ is taken up by redundant information, the partial information decomposition concludes there is no unique information and, thus, the remaining 1 bit of information must be synergistic.

Example 5 demonstrates the conditions for null results from the interaction information and $\Delta I$.
When considering the relationship between one of the X variables and $Y$, we see that knowing the state of the X variable reduces the uncertainty about $Y$ by 1 bit in all cases. However, knowing both X variables only provides 2 bits of information about $Y$. So, the interaction information must be zero because no additional information about $Y$ is gained or lost by knowing both X variables together compared to knowing them each individually. Similarly, $\Delta I$ must be zero because the knowledge of the state of $X_1$ and $X_2$ simultaneously does not provide any additional knowledge about $Y$ compared to the independent models for the relationships between each X variable and $Y$.

D. Example 6

TABLE V. Example 6. All information measures, with the exceptions of the total correlation and the dual total correlation, are zero. The total correlation and the dual total correlation produce non-zero results because they detect interactions between the X variables.

| $p(x_1, x_2, y)$ | $x_1$ | $x_2$ | $y$ |
|---|---|---|---|
| 1/2 | 0 | 0 | 0 |
| 1/2 | 1 | 1 | 0 |

| Measure | Ex. 6 |
|---|---|
| $I(X_1; Y)$ | 0 |
| $I(X_2; Y)$ | 0 |
| $I(X_1, X_2; Y)$ | 0 |
| $II(X_1; X_2; Y)$ | 0 |
| $TC(X_1; X_2; Y)$ | 1 |
| $DTC(X_1; X_2; Y)$ | 1 |
| $\Delta I(X_1, X_2; Y)$ | 0 |
| $RSI(X_1, X_2; Y)$ | 0 |
| $VS(X_1, X_2; Y)$ | 0 |
| $\Pi_R(Y; \{1\}\{2\})$ | 0 |
| $\Pi_R(Y; \{1\})$ | 0 |
| $\Pi_R(Y; \{2\})$ | 0 |
| $\Pi_R(Y; \{12\})$ | 0 |

The example shown in Table V demonstrates a significant feature of the total correlation. Even when no information is passing to one of the variables considered, the total correlation and the dual total correlation can still produce non-zero results if interactions are present between other variables in the system. This result can be clearly understood using the expression for the total correlation in Eq. (15) and the expression for the dual total correlation in Eq. (18).
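As a concrete check, the total correlation and dual total correlation can be computed from joint entropies alone. The sketch below uses the entropy forms written out in Appendices A and B, $TC(S) = \sum_i H(X_i) - H(S)$ and $DTC(S) = \sum_i H(S/X_i) - (n-1)H(S)$, rather than the mutual-information forms of Eqs. (15) and (18); the function names are hypothetical.

```python
from collections import defaultdict
from math import log2

# Joint distributions over (x1, x2, y), copied from Tables V and I.
EXAMPLE_6 = {(0, 0, 0): 0.5, (1, 1, 0): 0.5}
XOR_GATE = {(0, 0, 0): 0.25, (1, 0, 1): 0.25, (0, 1, 1): 0.25, (1, 1, 0): 0.25}


def entropy(joint, idx):
    """Entropy (bits) of the variables at positions idx of each state tuple."""
    marginal = defaultdict(float)
    for state, p in joint.items():
        marginal[tuple(state[i] for i in idx)] += p
    return -sum(p * log2(p) for p in marginal.values() if p > 0)


def total_correlation(joint):
    """Sum of single-variable entropies minus the joint entropy."""
    all_idx = tuple(range(len(next(iter(joint)))))
    return sum(entropy(joint, (i,)) for i in all_idx) - entropy(joint, all_idx)


def dual_total_correlation(joint):
    """Sum of leave-one-out joint entropies minus (n - 1) * H(S)."""
    n = len(next(iter(joint)))
    all_idx = tuple(range(n))
    leave_one_out = sum(entropy(joint, all_idx[:i] + all_idx[i + 1:])
                        for i in range(n))
    return leave_one_out - (n - 1) * entropy(joint, all_idx)


# Example 6: Y is constant, yet both measures are 1 bit because X1 = X2.
print(total_correlation(EXAMPLE_6), dual_total_correlation(EXAMPLE_6))
# XOR-gate: total correlation of 1 bit, dual total correlation of 2 bits.
print(total_correlation(XOR_GATE), dual_total_correlation(XOR_GATE))
```

Neither function treats the last tuple position specially, which mirrors the fact that these two measures do not distinguish the X variables from $Y$.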
The total correlation sums the information passing between variables from the smallest scale (two variables) to the largest scale ($n$ variables). It will detect relationships at all levels and it is unable to differentiate between those levels. The dual total correlation compares the total correlation to the amount of information passing between each individual variable and all other variables considered together as a single vector-valued variable. As with the dual total correlation, the total correlation does not differentiate between the X and Y variables, unlike several of the other information measures.

In this case, $Y$ has no entropy, so all information terms that depend on the entropy of $Y$ (i.e., all of the other information measures considered here) are zero. This is expected since all of the other information measures are either explicitly focused on the relationship between the X variables and the Y variable or only focus on interactions that involve all variables.

E. Examples 7 and 8: three-input Boolean logic gates

The three-input Boolean logic gate examples shown in Table VI allow for a comparison between the interaction information, the redundancy-synergy index, Varadan's synergy, and the partial information decomposition. The three-way XOR-gate produces similar results to the XOR-gate shown in Table I. All of the information measures indicate the presence of a synergistic interaction. The partial information decomposition is able to localize the synergy to an interaction between all three X variables.

Significant differences appear between the information measures when an extraneous $X_3$ variable is added to a basic XOR-gate between $X_1$ and $X_2$. In this case, the interaction information is zero because there is no synergy present between all three X variables.
This is despite the fact that the interaction information indicated synergy was present for the basic XOR-gate. Thus, we can see that the interaction information focuses only on interactions between all of the X variables and the Y variable. A similar result is observed with Varadan's synergy. Despite the fact that it indicated the presence of synergy in the basic XOR-gate, Varadan's synergy does not indicate synergy is present in this logic gate because it also focuses only on interactions between all of the X variables and the Y variable. Both the redundancy-synergy index and the partial information decomposition return results that indicate the presence of synergy between the X variables and the Y variable, but only the partial information decomposition is able to localize the synergy to the $X_1$ and $X_2$ variables.

F. Examples 9 to 13: simple model networks

In an effort to discuss results more directly applicable to several research topics, we will now apply the multivariate information measures to several variations of a simple model network. The general structure of the network is shown in Fig. 1. The network contains three nodes, each of which can be in one of two states (0 or 1) at any given point in time. The default state of each node is 0. At each time step, there is a certain probability, call it $p_r$, that a given node will be in state 1. The probability that a given node is in state 1 can also be increased if it receives a connection from another node. This driving effect is noted by $p_{1y}$ for the connection from $X_1$ to $Y$, $p_{12}$ for the connection from $X_1$ to $X_2$, and $p_{2y}$ for the connection from $X_2$ to $Y$. All states of the network are determined simultaneously and are independent of the previous states of the network. (See Appendix C for further details regarding this model.)
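To make the model concrete, the joint distribution of the three nodes can be enumerated directly. The sketch below follows Eqs. (C1) to (C4) for $X_1$ and $X_2$. For $Y$ it assumes that the same combination rule extends to two drivers, so that $Y$ stays in state 0 only if the spontaneous activation and every active incoming connection all fail to switch it on; that rule for $Y$ is an assumption of this sketch, as are the names `network_joint` and `mutual_information`.

```python
from collections import defaultdict
from itertools import product
from math import log2


def network_joint(p_r, p_12, p_1y, p_2y):
    """Joint distribution p(x1, x2, y) of the three-node model network."""
    joint = {}
    for x1, x2, y in product((0, 1), repeat=3):
        p_x1 = p_r if x1 else 1 - p_r
        # Eq. (C3): p(x2 = 1 | x1 = 1) = p_r + p_12 - p_r * p_12.
        p_x2_on = 1 - (1 - p_r) * (1 - p_12 * x1)
        p_x2 = p_x2_on if x2 else 1 - p_x2_on
        # Assumed extension of the same rule to the two inputs of Y.
        p_y_on = 1 - (1 - p_r) * (1 - p_1y * x1) * (1 - p_2y * x2)
        p_y = p_y_on if y else 1 - p_y_on
        joint[(x1, x2, y)] = p_x1 * p_x2 * p_y
    return joint


def entropy(joint, idx):
    """Entropy (bits) of the variables at positions idx of each state tuple."""
    marginal = defaultdict(float)
    for state, p in joint.items():
        marginal[tuple(state[i] for i in idx)] += p
    return -sum(p * log2(p) for p in marginal.values() if p > 0)


def mutual_information(joint, a, b):
    """I(A;B) = H(A) + H(B) - H(A,B), with a and b given as index tuples."""
    return entropy(joint, a) + entropy(joint, b) - entropy(joint, a + b)


# Only the X1 -> X2 connection is active (the parameters of Example 13).
chain_only = network_joint(p_r=0.02, p_12=0.1, p_1y=0.0, p_2y=0.0)
mi_x1_x2_millibits = 1000 * mutual_information(chain_only, (0,), (1,))

# Both X nodes drive Y independently (the parameters of Example 9).
both_drive = network_joint(p_r=0.02, p_12=0.0, p_1y=0.1, p_2y=0.1)
mi_x1_y_millibits = 1000 * mutual_information(both_drive, (0,), (2,))

print(round(mi_x1_x2_millibits, 3), round(mi_x1_y_millibits, 3))
```

Under these assumptions the two printed values come out near 3.225 and 3.061 millibits, in line with the corresponding entries of Table VII.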
For this simple system, we will discuss five combinations of $p_r$, $p_{1y}$, $p_{12}$, and $p_{2y}$ that correspond to noteworthy network topologies. The information theoretic results for these examples are presented in Table VII.

TABLE VI. Examples 7 and 8: three-input Boolean logic gates. All partial information decomposition terms not shown in the table are zero. 3XOR: Three-way XOR-gate. All information measures produce consistent results. $X_1 X_2$XOR: XOR-gate involving only $X_1$ and $X_2$. The redundancy-synergy index identifies a synergistic interaction and $\Delta I$ identifies the importance of correlations between the X variables. The partial information decomposition also identifies the variables involved in the synergistic interaction. The interaction information and Varadan's synergy do not identify a synergistic interaction.

| $p(x_1, x_2, x_3, y)$ | $x_1$ | $x_2$ | $x_3$ | $y$ (3XOR) | $y$ ($X_1 X_2$XOR) |
|---|---|---|---|---|---|
| 1/8 | 0 | 0 | 0 | 0 | 0 |
| 1/8 | 1 | 0 | 0 | 1 | 1 |
| 1/8 | 0 | 1 | 0 | 1 | 1 |
| 1/8 | 1 | 1 | 0 | 0 | 0 |
| 1/8 | 0 | 0 | 1 | 1 | 0 |
| 1/8 | 1 | 0 | 1 | 0 | 1 |
| 1/8 | 0 | 1 | 1 | 0 | 1 |
| 1/8 | 1 | 1 | 1 | 1 | 0 |

| Measure | 3XOR | $X_1 X_2$XOR |
|---|---|---|
| $I(X_1; Y)$ | 0 | 0 |
| $I(X_2; Y)$ | 0 | 0 |
| $I(X_3; Y)$ | 0 | 0 |
| $I(X_1, X_2, X_3; Y)$ | 1 | 1 |
| $II(X_1; X_2; X_3; Y)$ | 1 | 0 |
| $TC(X_1; X_2; X_3; Y)$ | 1 | 1 |
| $DTC(X_1; X_2; X_3; Y)$ | 3 | 2 |
| $\Delta I(X_1, X_2, X_3; Y)$ | 1 | 1 |
| $RSI(X_1, X_2, X_3; Y)$ | 1 | 1 |
| $VS(X_1, X_2, X_3; Y)$ | 1 | 0 |
| $\Pi_R(Y; \{12\})$ | 0 | 1 |
| $\Pi_R(Y; \{123\})$ | 1 | 0 |

FIG. 1. Structure of model network used for Examples 9 to 13.

Example 9 represents a system where the X nodes independently drive the Y node. Similarly to Example 5, the partial information decomposition indicates that the information from $X_1$ and $X_2$ is entirely redundant and synergistic. This result is somewhat counterintuitive because the X nodes act independently. Again, this is due to the structure of the minimum information in Eq. (30).
Each X variable provides the same information about each state of $Y$, so the partial information decomposition returns the result that all of the information provided by each X variable about $Y$ is redundant. The interaction information returns a result that indicates the presence of synergy, though the magnitude of this interaction is less than the magnitudes of the synergy and redundancy results from the partial information decomposition. Note that this is the only network for which the interaction information indicates the presence of synergy.

TABLE VII. Examples 9 to 13: simple model network. All information values are in millibits.

| | Ex. 9 | Ex. 10 | Ex. 11 | Ex. 12 | Ex. 13 |
|---|---|---|---|---|---|
| $p_r$ | 0.02 | 0.02 | 0.02 | 0.02 | 0.02 |
| $p_{12}$ | 0 | 0.1 | 0.1 | 0.1 | 0.1 |
| $p_{1y}$ | 0.1 | 0.1 | 0 | 0.1 | 0 |
| $p_{2y}$ | 0.1 | 0.1 | 0.1 | 0 | 0 |
| $I(X_1; Y)$ | 3.061 | 3.498 | 0.053 | 3.225 | 0 |
| $I(X_2; Y)$ | 3.061 | 3.801 | 3.527 | 0.050 | 0 |
| $I(X_1, X_2; Y)$ | 6.239 | 6.750 | 3.527 | 3.225 | 0 |
| $II(X_1; X_2; Y)$ | 0.117 | -0.548 | -0.053 | -0.050 | 0 |
| $TC(X_1; X_2; Y)$ | 6.239 | 9.975 | 6.752 | 6.450 | 3.225 |
| $DTC(X_1; X_2; Y)$ | 6.356 | 9.427 | 6.698 | 6.400 | 3.225 |
| $\Delta I(X_1, X_2; Y)$ | 0.080 | 0.499 | 0.064 | 0.059 | 0 |
| $RSI(X_1, X_2; Y)$ | 0.117 | -0.548 | -0.053 | -0.050 | 0 |
| $VS(X_1, X_2; Y)$ | 0.117 | -0.548 | -0.053 | -0.050 | 0 |
| $\Pi_R(Y; \{1\}\{2\})$ | 3.061 | 3.498 | 0.053 | 0.050 | 0 |
| $\Pi_R(Y; \{1\})$ | 0 | 0 | 0 | 3.175 | 0 |
| $\Pi_R(Y; \{2\})$ | 0 | 0.303 | 3.473 | 0 | 0 |
| $\Pi_R(Y; \{12\})$ | 3.178 | 2.950 | 0 | 0 | 0 |

Example 10 is similar to Example 9 with the exception that $X_1$ now also drives $X_2$. Several interesting results are produced for this example. For instance, the total correlation and dual total correlation are significantly elevated in comparison to the other examples. In this example, there is the maximum amount of interactions between all nodes.
So, this result agrees with expectations because the total correlation and dual total correlation reflect the total amount of interactions at all scales between all variables. Also, $\Delta I$ obtains its highest value for this example because the actual data and the independent model from Eq. (19) are more dissimilar due to the interactions between $X_1$ and $X_2$. Interestingly, the partial information decomposition does not indicate the presence of unique information from $X_1$, despite the fact that $X_1$ is directly influencing $Y$. As with Example 9 above, the partial information decomposition returns the result that all of the information $X_1$ provides about $Y$ is redundant. In this case, this result is more intuitive because $X_1$ also drives $X_2$. The interaction information returns a significantly larger magnitude result for this example. This is intuitive given the fact that $X_1$ is driving $X_2$ and that both X variables are driving the Y variable. However, it should be noted that the magnitude of the interaction information is significantly less than the magnitude of the synergy and redundancy from the partial information decomposition. Also, the interaction information result implies the presence of redundancy, unlike Example 9.

Example 11 represents a common problem case when attempting to infer connectivity based solely on node activity. Node $X_1$ drives $X_2$, which in turn drives $Y$. If the activity of $X_2$ is not known, it would appear that $X_1$ is driving $Y$ directly. The partial information decomposition returns the result that any information provided by $X_1$ about $Y$ is redundant and that the vast majority of the information provided by $X_1$ and $X_2$ about $Y$ is unique information from $X_2$. Both of these results appear to accurately reflect the structure of the network.
Example 12 also represents a common problem case when determining connectivity. Node $X_1$ drives $Y$ and $X_2$. If the activity of $X_1$ is not known, it would appear that $X_2$ is driving $Y$, when, in fact, no connection exists from $X_2$ to $Y$. Similarly to Example 11, the partial information decomposition identifies the majority of the information from $X_1$ and $X_2$ about $Y$ as unique information from $X_1$ and the remaining information as redundant. Again, these results appear to accurately reflect the structure of the network.

The final example (Example 13) is similar to Example 6 above. In this case, no connections exist from $X_1$ or $X_2$ to $Y$, but $X_1$ drives $X_2$. Almost all of the information measures indicate a lack of information transmission. However, the total correlation and the dual total correlation pick up the interaction between $X_1$ and $X_2$. The values of the total correlation and the dual total correlation vary approximately linearly with the number of connections in each network example. This, again, demonstrates the fact that the total correlation and the dual total correlation measure interactions between all variables at all scales.

G. Analysis of dissociated neural culture

We will now present the results of applying the information measures discussed above to spiking data from a dissociated neural culture as an illustration of the type of analysis that is possible using these information measures.

The data we chose to analyze are described in Wagenaar et al. and are freely available online [42]. The data contain multiunit spiking activity for each of 60 electrodes in the multielectrode array on which the dissociated neural culture was grown. Specifically, we used data from neural culture 2-2. All details regarding the production and maintenance of the culture can be found in [42].
We analyzed recordings from eight points in the development of the culture: days in vitro (DIV) 4, 7, 12, 16, 20, 25, 31, and 33. The DIV 16 recording was 60 minutes long, while all others were 45 minutes long. For this analysis, the data were binned at 16 ms. The probability distributions necessary for the computation of the information measures were created by examining the spike trains for groups of three non-identical electrodes. For a given group of electrodes, one electrode was labeled the Y electrode, while the other two were labeled the $X_1$ and $X_2$ electrodes. Then, for all time steps in the spike trains, the states of the electrodes (spiking or not spiking) were recorded at time $t$ for the $X_1$ and $X_2$ electrodes and at time $t + 1$ for the Y electrode. Next, by counting how many times each state appeared throughout the spike train, the joint probabilities $p(y_{t+1}, x_{1,t}, x_{2,t})$ were calculated, which were then used to calculate the information measures discussed above.

This process was repeated for each group of non-identical electrodes. However, to avoid double counting, groups with swapped X variable assignments were only analyzed once. For instance, the group $X_1$ = electrode 3, $X_2$ = electrode 4, and $Y$ = electrode 5 was analyzed, but the group $X_1$ = electrode 4, $X_2$ = electrode 3, and $Y$ = electrode 5 was not analyzed. In order to compensate for the changing firing rate through development of the cultures, all information values for a given group were normalized by the entropy of the Y electrode.

To illustrate the statistical significance of the information measure values, we also created and analyzed a randomized data set from the original neural culture data. The randomization was accomplished by splitting each electrode spike train at a randomly chosen point and swapping the two remaining pieces.
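The counting and randomization steps described above can be sketched as follows. This is an illustrative outline only, not the analysis code used for the paper: the function names, the representation of a spike train as a list of spike times in milliseconds, and the use of Python's `random` module are all assumptions.

```python
import random
from collections import Counter


def bin_spikes(spike_times_ms, duration_ms, bin_ms=16):
    """Convert spike times into a binary train (1 = at least one spike in the bin)."""
    n_bins = int(duration_ms // bin_ms)
    train = [0] * n_bins
    for t in spike_times_ms:
        b = int(t // bin_ms)
        if 0 <= b < n_bins:
            train[b] = 1
    return train


def joint_probabilities(x1, x2, y):
    """Estimate the joint probabilities of (x1 at t, x2 at t, y at t + 1) by counting."""
    counts = Counter(zip(x1[:-1], x2[:-1], y[1:]))
    total = sum(counts.values())
    return {state: c / total for state, c in counts.items()}


def normalize_by_entropy(value, h_y):
    """Normalize an information value by the entropy of the Y electrode."""
    return value / h_y if h_y > 0 else 0.0


def split_and_swap(train, rng=random):
    """Split a binned train at a random point and swap the two pieces."""
    cut = rng.randrange(1, len(train))
    return train[cut:] + train[:cut]
```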
By doing this, the structure of the electrode spike train is almost entirely preserved, but the temporal relationship between the electrode spike trains is significantly disrupted.

The results of these analyses are presented in Fig. 2 and Fig. 3. The results shown in Fig. 2 indicate that at day 4 essentially no information was being transmitted in the network. However, by day 7, a great deal of information was being transmitted, as can be seen by the peaks in mutual information (Fig. 2E) and the total correlation (Fig. 2F). As the culture continued to develop after day 7, most information measures decreased and then slowly increased to maxima on the last day, DIV 33. Interestingly, $\Delta I$ (Fig. 2G) showed an increase at day 7 and, unlike most of the other measures, continued to increase steadily afterwards. The total correlation (Fig. 2F) mimics the changes in the mutual information (Fig. 2E), but because the total correlation measures the total amount of information being transmitted among the X and Y variables, it possessed higher values than the mutual information.

The relationship between the interaction information and the partial information decomposition was also illustrated through development. As the culture developed, the PID synergy was larger than the PID redundancy. Then, between days 31 and 33, the PID synergy became significantly smaller than the PID redundancy. In the interaction information, this relationship was expressed by positive values through most of the culture's development, with the exception of large negative values at days 7 and 33. However, notice that groups of electrodes with positive and negative interaction information values were found in each recording. To further investigate this relationship, we plotted the distribution of PID synergy and PID redundancy for groups of electrodes (Fig. 3).
This plot shows that the network contained groups of electrodes with slightly more PID redundancy than PID synergy at day 7, but that, after that point, the total amount of information decreased and became more biased towards PID synergy at day 12. From that point, the total amount of information increased up to the last recording where the network was once again biased towards PID redundancy. So, we can relate the results from the partial information decomposition and the interaction information using Fig. 3 by noting that, while the PID synergy and PID redundancy for a given group of electrodes determines a point's position in Fig. 3, the interaction information describes how far that point is from the equilibrium line. Given the fact that many points in Fig. 3 are near the equilibrium line, the partial information decomposition finds that many groups of electrodes contain synergistic and redundant interactions simultaneously. This feature would be lost by only examining the interaction information.

Obviously, this analysis could be made significantly more complex and interesting. For instance, the analysis could be improved by including more data sets, varying the variable assignments, using different bin sizes, using more robust methods to test statistical significance, and so forth. However, based on this simple illustration, we believe that it is clear that the information analysis methods discussed herein could be used to address interesting questions related to this system, or other systems. For instance, it may be possible to relate these changes through development to previous work on changes in dissociated cultures through development [42–46].

V. DISCUSSION

Based on the results from several simple systems, we were able to explore the properties of the multivariate information measures discussed in this paper.
We will now discuss each measure in turn.

The oldest multivariate information measure, the interaction information, was shown to focus on interactions between all X variables and the Y variable using the three-input Boolean logic gate examples. Furthermore, the two-input AND-gate demonstrated how the interaction information is related to the excess information provided by both X variables about the Y variable beyond the total amount of information those X variables provide about $Y$ when considered individually. Also, that example demonstrated the relationship between the interaction information and the partial information decomposition as shown in Eq. (31). For the model network examples, the interaction information had its largest magnitude when interactions were present between all three nodes. Also, for these examples, the interaction information indicated the presence of synergy when both nodes $X_1$ and $X_2$ drove $Y$, but not each other (Example 9), while it indicated the presence of redundancy when either node $X_1$ or $X_2$ drove $Y$ and $X_1$ drove $X_2$ (Examples 10 to 12). When the interaction information was applied to data from a developing neural culture, it showed changes in the type of interactions present in the network during development.

[Figure 2: eight panels, each plotting one normalized information measure (measure / H(Y), logarithmic axis) against days in vitro for the original data and the shifted data.]

FIG. 2. Many information measures show changes over neural development. A) PID Synergy. B) PID Redundancy. C) Positive Interaction Information values. D) Negative Interaction Information values. E) Mutual Information. F) Total Correlation. G) $\Delta I$. H) PID Unique Information. All information values are normalized by the entropy of the Y electrode. Each individual data point represents one group of electrodes. To improve clarity, the data points are jittered randomly around the DIV and only 0.4% of the data points are shown. The line plots show the 90th percentile, median, and 10th percentile of all the data for a given DIV. Note that as the culture matured, the total amount of information transmitted increased and the types of interactions present in the network changed.

[Figure 3: "PID Synergy vs. PID Redundancy", a scatter plot of (PID Synergy) / H(Y) and (PID Redundancy) / H(Y) on logarithmic axes, with one series per recording: DIV 4, 7, 12, 16, 20, 25, 31, and 33.]

FIG. 3. The balance of PID synergy and PID redundancy changed during development. Distribution of normalized PID synergy and PID redundancy. Each data point represents the information values for one group of electrodes (only 2% of the data are shown to improve clarity). Diamonds represent mean values for a given DIV.

In contrast to the interaction information, the total correlation was shown to sum interactions among all variables at all scales using Example 6. In other words, the value of the total correlation for any system incorporates interactions between groups of variables at all scales.
This feature was made apparent using the model network examples. There, the total correlation varied approximately linearly with the number of connections present in the network. Furthermore, the total correlation is symmetric with regard to all variables considered, whereas the other information measures focus on the relationship between the set of X variables and the Y variable. When applied to the data from the neural culture, the total correlation and the mutual information both showed increases in the total amount of information being transmitted in the network through development.

The dual total correlation was found to be similar to the total correlation in that both do not differentiate between the X and Y variables. Also, like the total correlation, the dual total correlation varied approximately linearly with the number of connections in the model network examples. The function of the dual total correlation was also highlighted with the XOR-gate example. There, we saw that the dual total correlation compares the uncertainty with regards to all variables with the total uncertainty that remains about each variable if all the other variables are known.

Using the AND-gate, $\Delta I$ was shown to measure a subtly different quantity compared to the other information measures. The other information measures seek to evaluate the interactions between the X variables and the Y variable given that one knows the values of all variables simultaneously (i.e., in the case that the total joint probability distribution is known). $\Delta I$ compares that situation to a model where it is assumed that the X variables act independently of one another in an effort to measure the importance of knowing the correlations between the X variables. Clearly, this goal is similar to the goals of the other information measures.
However, given the fact that $\Delta I$ can be greater than $I(X_1, X_2; Y)$, as was shown in Example 4, and if we assume the synergy and redundancy are some portion of $I(X_1, X_2; Y)$, $\Delta I$ cannot be the synergy or the redundancy. $\Delta I$ can provide useful information about a system, but the distinction between the structure of $\Delta I$ and the other information measures, along with the fact that $\Delta I$ cannot be the synergy or redundancy as previously defined, should be considered when choosing the appropriate information measure with which to perform an analysis. Unlike several of the other information measures, which showed changes in the types of interactions present in the developing neural culture, $\Delta I$ showed a uniform increase in the importance of correlations in the network throughout development.

The redundancy-synergy index and Varadan's synergy are identical to the interaction information when only two X variables are considered. However, when we examined three-input Boolean logic gates, we found that Varadan's synergy, like the interaction information, was unable to detect a synergistic interaction among a subset of the X variables and the Y variable. The redundancy-synergy index was able to detect this synergy, but it was unable to localize the subset of X variables involved in the interaction.

The partial information decomposition provided interesting and possibly useful results for several of the example systems. When applied to the Boolean logic gates, the partial information decomposition was able to identify the X variables involved in the interactions, unlike all other information measures. Using the AND-gate example, we saw that the partial information decomposition found that both synergy and redundancy were present in the system, unlike the interaction information, which indicated only synergy was present.
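The AND-gate decomposition can be reproduced with a short calculation for the two-input case. The sketch below assumes that the redundancy of Eq. (30) is the minimum-information measure $I_{min}$ of Williams and Beer: for each state $y$, the smaller of the two specific informations $I(Y = y; X_i)$, averaged over $p(y)$. The remaining terms then follow from the ordinary mutual informations. The function name `pid_two_inputs` and the returned dictionary keys are hypothetical.

```python
from collections import defaultdict
from math import log2

# Joint distributions p(x1, x2, y), copied from Tables I and IV.
AND_GATE = {(0, 0, 0): 0.25, (1, 0, 0): 0.25, (0, 1, 0): 0.25, (1, 1, 1): 0.25}
EXAMPLE_5 = {(0, 0, 0): 0.25, (1, 0, 1): 0.25, (0, 1, 2): 0.25, (1, 1, 3): 0.25}


def pid_two_inputs(joint):
    """Two-input partial information decomposition using minimum information."""
    p_y = defaultdict(float)
    p_x12 = defaultdict(float)
    p_x = [defaultdict(float), defaultdict(float)]   # p(x_i)
    p_xy = [defaultdict(float), defaultdict(float)]  # p(x_i, y)
    for (x1, x2, y), p in joint.items():
        p_y[y] += p
        p_x12[(x1, x2)] += p
        for i, x in enumerate((x1, x2)):
            p_x[i][x] += p
            p_xy[i][(x, y)] += p

    def specific_info(i, y):
        # I(Y = y; X_i) = sum_x p(x | y) * log2( p(y | x) / p(y) )
        total = 0.0
        for (x, yy), pxy in p_xy[i].items():
            if yy == y and pxy > 0:
                total += (pxy / p_y[y]) * log2((pxy / p_x[i][x]) / p_y[y])
        return total

    redundancy = sum(p_y[y] * min(specific_info(0, y), specific_info(1, y))
                     for y in p_y)
    # Averaging the specific information over y recovers I(X_i; Y).
    mi = [sum(p_y[y] * specific_info(i, y) for y in p_y) for i in (0, 1)]
    mi_joint = sum(p * log2(p / (p_x12[(x1, x2)] * p_y[y]))
                   for (x1, x2, y), p in joint.items() if p > 0)
    unique_1 = mi[0] - redundancy
    unique_2 = mi[1] - redundancy
    synergy = mi_joint - unique_1 - unique_2 - redundancy
    return {"redundancy": redundancy, "unique_1": unique_1,
            "unique_2": unique_2, "synergy": synergy}


and_pid = pid_two_inputs(AND_GATE)     # redundancy ~0.311, synergy ~0.5
ex5_pid = pid_two_inputs(EXAMPLE_5)    # redundancy ~1, synergy ~1
print({k: round(v, 3) for k, v in and_pid.items()})
print({k: round(v, 3) for k, v in ex5_pid.items()})
```

Because the minimum is taken over the amount of information about each state of $Y$, the calculation never checks which states each X variable actually distinguishes.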
Perhaps the most illuminating example system for the partial information decomposition was Example 5. In that case, the partial information decomposition concluded that each X variable provided entirely redundant information because each X variable provided the same amount of information about each state of Y, even though each X variable provided information about different states of Y. This point highlights how the partial information decomposition defines redundancy via Eq. (30). It calculates the redundant contributions based only on the quantity of information each X variable provides about each state of Y. In the developing neural culture, the partial information decomposition, similar to the interaction information, showed a changing balance between synergy and redundancy through development. However, unlike the interaction information, the partial information decomposition was able to separate simultaneous synergistic and redundant interactions.

VI. CONCLUSION

We applied several multivariate information measures to simple example systems in an attempt to explore the properties of the information measures. We found that the information measures produce similar or identical results for some systems (e.g. XOR-gate), but that the measures produce different results for other systems. In examining these results, we found several subtle differences between the information measures that impacted the results. Based on the understanding gained from these simple systems, we were able to apply the information measures to spiking data from a neural culture through its development. Based on this illustrative analysis, we saw interesting changes in the amount of information being transmitted and the interactions present in the network. We wish to emphasize that none of these information measures is the "right" measure.
All of them produce results that can be used to learn something about the system being studied. We hope that this work will assist other researchers as they deliberate on the specific questions they wish to answer about a given system so that they may use the multivariate information measures that best suit their goals.

ACKNOWLEDGMENTS

We would like to thank Paul Williams, Randy Beer, Alexander Murphy-Nakhnikian, Shinya Ito, Ben Nicholson, Emily Miller, Virgil Griffith, and Elizabeth Timme for their helpful comments on this paper.

Appendix A: Additional total correlation derivation

Eq. (14) can be rewritten as Eq. (15) by adding and subtracting several joint entropy terms and then using Eq. (2). For instance, when n = 3, we have:

$$
\begin{aligned}
TC(S) &= \left( \sum_{X_i \in S} H(X_i) \right) - H(S) \\
&= H(X_1) + H(X_2) + H(X_3) - H(X_1, X_2, X_3) \\
&= H(X_1) + H(X_2) - H(X_1, X_2) + H(X_1, X_2) + H(X_3) - H(X_1, X_2, X_3) \\
&= I(X_1; X_2) + I(X_1, X_2; X_3)
\end{aligned}
\tag{A1}
$$

A similar substitution can be performed for n > 3.

Appendix B: Additional dual total correlation derivation

Eq. (16) can be rewritten as Eq. (18) by substituting the expression for the total correlation in Eq. (14) and then applying Eq. (2).

$$
\begin{aligned}
DTC(S) &= \left( \sum_{X_i \in S} H(S/X_i) \right) - (n - 1) H(S) \\
&= \left( \sum_{X_i \in S} H(S/X_i) + H(X_i) \right) - n H(S) - TC(S) \\
&= \left( \sum_{X_i \in S} I(S/X_i; X_i) \right) - TC(S)
\end{aligned}
\tag{B1}
$$

Appendix C: Model Network

Given values for p_r, p_1y, p_12, and p_2y, the joint probabilities for all possible states of the network can be calculated. For example:

$$
\begin{aligned}
p(x_1 = 1) &= p_r && \text{(C1)} \\
p(x_1 = 0) &= 1 - p_r && \text{(C2)} \\
p(x_2 = 1 \mid x_1 = 1) &= p_r + p_{12} - p_r p_{12} && \text{(C3)} \\
p(x_2 = 0 \mid x_1 = 1) &= 1 - p(x_2 = 1 \mid x_1 = 1) && \text{(C4)}
\end{aligned}
$$

The joint probabilities for the examples discussed in the main text of the article are shown in Table VIII.

TABLE VIII. Joint probabilities for Examples 9 to 13.

| | Ex. 9 | Ex. 10 | Ex. 11 | Ex. 12 | Ex. 13 |
|---|---|---|---|---|---|
| p_r | 0.02 | 0.02 | 0.02 | 0.02 | 0.02 |
| p_12 | 0 | 0.1 | 0.1 | 0.1 | 0.1 |
| p_1y | 0.1 | 0.1 | 0 | 0.1 | 0 |
| p_2y | 0.1 | 0.1 | 0.1 | 0 | 0 |
| p(x_1 = 0, x_2 = 0, y = 0) | 0.9412 | 0.9412 | 0.9412 | 0.9412 | 0.9412 |
| p(x_1 = 1, x_2 = 0, y = 0) | 0.0173 | 0.0156 | 0.0173 | 0.0156 | 0.0173 |
| p(x_1 = 0, x_2 = 1, y = 0) | 0.0173 | 0.0173 | 0.0173 | 0.0192 | 0.0192 |
| p(x_1 = 1, x_2 = 1, y = 0) | 0.0003 | 0.0019 | 0.0021 | 0.0021 | 0.0023 |
| p(x_1 = 0, x_2 = 0, y = 1) | 0.0192 | 0.0192 | 0.0192 | 0.0192 | 0.0192 |
| p(x_1 = 1, x_2 = 0, y = 1) | 0.0023 | 0.0021 | 0.0004 | 0.0021 | 0.0004 |
| p(x_1 = 0, x_2 = 1, y = 1) | 0.0023 | 0.0023 | 0.0023 | 0.0004 | 0.0004 |
| p(x_1 = 1, x_2 = 1, y = 1) | 0.0001 | 0.0005 | 0.0003 | 0.0003 | 0.0000 |

[1] F. Rieke, D. Warland, R. R. de Ruyter van Steveninck, and W. Bialek, Spikes: Exploring the Neural Code (MIT Press, 1997).
[2] J. Ziv and A. Lempel, IEEE Trans. Inf. Theory 23, 337 (1977).
[3] C. Berrou, A. Glavieux, and P. Thitimajshima, in Proceedings of IEEE International Conference on Communications, Vol. 2 (1993) p. 1064.
[4] A. M. Fraser and H. L. Swinney, Phys. Rev. A 33, 1134 (1986).
[5] A. J. Butte and I. S. Kohane, in Pacific Symposium on Biocomputing, Vol. 5 (2000) p. 415.
[6] W. J. McGill, Psychometrika 19, 97 (1954).
[7] S. Watanabe, IBM J. Res. Dev. 4, 66 (1960).
[8] T. S. Han, Information and Control 29, 337 (1975).
[9] G. Chechik, A. Globerson, N. Tishby, M. J. Anderson, E. D. Young, and I. Nelken, in Neural Information Processing Systems 14, Vol. 1, edited by T. G. Dietterich, S. Becker, and Z. Ghahramani (MIT Press, 2001) p. 173.
[10] S. Nirenberg, S. M. Carcieri, A. L. Jacobs, and P. E. Latham, Nature 411, 698 (2001).
[11] E. Schneidman, S. Still, M. J. Berry II, and W. Bialek, Physical Review Letters 91, 238701 (2003).
[12] V. Varadan, D. M. M. III, and D. Anastassiou, Bioinformatics 22, e497 (2006).
[13] P. L. Williams and R. D. Beer, "Decomposing multivariate information," (2010), arXiv:1004.2515v1.
[14] N. J. Cerf and C.
Adami, Phys. Rev. A 55, 3371 (1997).
[15] H. Matsuda, Phys. Rev. E 62, 3096 (2000).
[16] D. Anastassiou, Molecular Systems Biology 3, 83 (2007).
[17] P. Chanda, A. Zhang, D. Brazeau, L. Sucheston, J. L. Freudenheim, C. Ambrosone, and M. Ramanathan, American Journal of Human Genetics 81, 939 (2007).
[18] N. Brenner, S. P. Strong, R. Koberle, W. Bialek, and R. R. de Ruyter van Steveninck, Neural Computation 12, 1531 (2000).
[19] E. Schneidman, W. Bialek, and M. J. Berry II, Journal of Neuroscience 23, 11539 (2003).
[20] L. M. A. Bettencourt, G. J. Stephens, M. I. Ham, and G. W. Gross, Phys. Rev. E 75, 021915 (2007).
[21] G. Tononi, O. Sporns, and G. M. Edelman, Proceedings of the National Academy of Sciences 91, 5033 (1994).
[22] O. Sporns, G. Tononi, and G. E. Edelman, Cerebral Cortex 10, 127 (2000).
[23] T. Wennekers and N. Ay, Theory in Bioscience 122, 5 (2003).
[24] N. Timme, http://mypage.iu.edu/~nmtimme.
[25] N. Timme, W. Alford, B. Flecker, and J. M. Beggs, "Multivariate information measures: an experimentalist's perspective," (2011), arXiv:1111.6857v4.
[26] V. Griffith and C. Koch, "Quantifying synergistic mutual information," (2011), arXiv:1112.1680v5.
[27] T. M. Cover and J. A. Thomas, Elements of Information Theory, 2nd ed. (Wiley-Interscience, 2006).
[28] Throughout the paper we will use capital letters to refer to variables and lower case letters to refer to individual values of those variables. We will also use discrete variables, though several of the information measures discussed can be directly extended to continuous variables. When working with a continuous variable, various techniques exist, such as kernel density estimation, which can be used to infer a discrete distribution from a continuous variable. Logarithms will be base 2 throughout in order to produce information values in units of bits.
[29] We will use S to refer to a set of N X variables such that S = {X_1, X_2, ..., X_N} throughout the paper.
[30] L. M. A. Bettencourt, V. Gintautas, and M. I. Ham, Physical Review Letters 100, 238701 (2008).
[31] I. Gat and N. Tishby, in Neural Information Processing Systems 11, edited by M. S. Kearns, S. A. Solla, and D. A. Cohn (MIT Press) p. 111.
[32] A. Jakulin and I. Bratko, "Quantifying and visualizing attribute interactions," (2008), arXiv:cs/0308002v3.
[33] A. J. Bell, in International workshop on independent component analysis and blind signal separation (2003) p. 921.
[34] T. S. Han, Information and Control 36, 133 (1978).
[35] S. A. Abdallah and M. D. Plumbley, "A measure of statistical complexity based on predictive information," (2010), arXiv:1012.1890v1.
[36] E. Olbrich, N. Bertschinger, N. Ay, and J. Jost, European Physical Journal B 63, 407 (2008).
[37] P. E. Latham and S. Nirenberg, Journal of Neuroscience 25, 5195 (2005).
[38] R. G. James, C. J. Ellison, and J. P. Crutchfield, Chaos 21, 037109 (2011).
[39] B. Flecker, W. Alford, J. M. Beggs, P. L. Williams, and R. D. Beer, Chaos 21, 037104 (2011).
[40] M. R. DeWeese and M. Meister, Network: Computation in Neural Systems 10, 325 (1999).
[41] It should be noted that DeWeese and Meister refer to the expression in Eq. (29) as the specific surprise.
[42] D. A. Wagenaar, J. Pine, and S. M. Potter, BMC Neuroscience 7 (2006).
[43] H. Kamioka, E. Maeda, Y. Jimbo, H. P. C. Robinson, and A. Kawana, Neuroscience Letters 206, 109 (1996).
[44] D. A. Wagenaar, Z. Nadasdy, and S. M. Potter, Physical Review E 73, 051907 (2006).
[45] V. Pasquale, P. Massobrio, L. L. Bologna, M. Chiappalonea, and S. Martinoia, Neuroscience 153, 1354 (2008).
[46] C. Tetzlaff, S. Okujeni, U. Egert, F. Worgotter, and M. Butz, PLoS Computational Biology 6, e1001013 (2010).
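As a supplementary illustration of Appendix C, the sketch below shows one way the joint probabilities in Table VIII might be generated from p_r, p_12, p_1y, and p_2y. Only Eqs. (C1) to (C4) come from the appendix. Two further rules are assumptions of this sketch, extrapolated from the form of Eq. (C3): a node with no active input fires with probability p_r, and Y combines its inputs in the same "at least one independent cause" manner as X_2. The function names `p_active` and `joint_probabilities` are hypothetical.

```python
from itertools import product

def p_active(p_spont, *drives):
    """Probability a node is active, given its spontaneous rate and the
    connection strengths of its currently active inputs.
    With one drive d this is p_spont + d - p_spont*d, matching Eq. (C3)."""
    p_silent = 1.0 - p_spont
    for d in drives:
        p_silent *= 1.0 - d
    return 1.0 - p_silent

def joint_probabilities(p_r, p_12, p_1y, p_2y):
    """Return {(x1, x2, y): probability} for the three-node model network."""
    table = {}
    for x1, x2, y in product((0, 1), repeat=3):
        p_x1 = p_r                                        # Eq. (C1)
        p_x2 = p_active(p_r, p_12 * x1)                   # Eq. (C3) when x1 = 1
        p_y = p_active(p_r, p_1y * x1, p_2y * x2)         # assumed extension to Y
        prob = 1.0
        for state, p_on in ((x1, p_x1), (x2, p_x2), (y, p_y)):
            prob *= p_on if state else 1.0 - p_on
        table[(x1, x2, y)] = prob
    return table

# Parameters of Example 9 and Example 10 as listed in Table VIII.
ex9 = joint_probabilities(p_r=0.02, p_12=0.0, p_1y=0.1, p_2y=0.1)
ex10 = joint_probabilities(p_r=0.02, p_12=0.1, p_1y=0.1, p_2y=0.1)

for state in sorted(ex9):
    print(state, f"{ex9[state]:.4f}", f"{ex10[state]:.4f}")
```

With these assumed rules, the printed values can be compared directly against the Ex. 9 and Ex. 10 columns of Table VIII.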
