Fast global convergence of gradient methods for high-dimensional statistical recovery

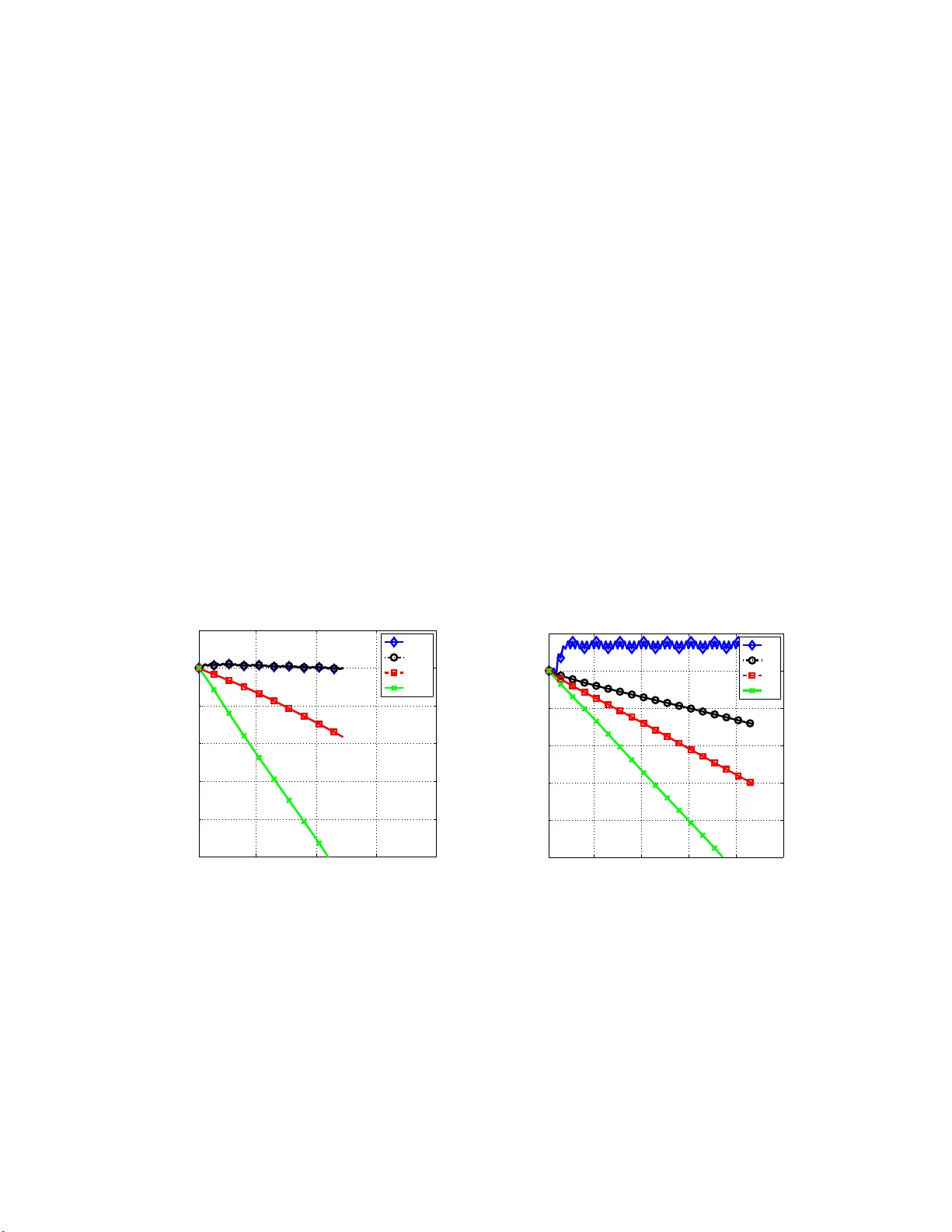

Many statistical $M$-estimators are based on convex optimization problems formed by the combination of a data-dependent loss function with a norm-based regularizer. We analyze the convergence rates of projected gradient and composite gradient methods…
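The sketch below shows the two methods named above on one instance of this setup. It assumes the least-squares loss $\mathcal{L}(\theta) = \frac{1}{2n}\|y - X\theta\|_2^2$ and the $\ell_1$ norm as the regularizer, which gives the Lasso. A composite gradient step applies the proximal map of the regularizer, $\theta^{t+1} = \mathcal{S}_{\lambda/\gamma}\big(\theta^t - \nabla\mathcal{L}(\theta^t)/\gamma\big)$, where $\mathcal{S}$ is soft thresholding. A projected gradient step instead takes the same gradient step and then projects onto the constraint set $\{\|\theta\|_1 \le R\}$. The loss, the step size $1/\gamma$, the function names, and the synthetic data are illustrative assumptions. They are not taken from the paper.

```python
import numpy as np


def soft_threshold(v, t):
    """Proximal map of t * ||.||_1: shrink each coordinate toward zero by t."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)


def project_l1_ball(v, radius):
    """Euclidean projection onto {theta : ||theta||_1 <= radius} (sort-based method)."""
    if np.abs(v).sum() <= radius:
        return v.copy()
    u = np.sort(np.abs(v))[::-1]               # magnitudes in decreasing order
    cssv = np.cumsum(u)
    ks = np.arange(1, u.size + 1)
    rho = np.nonzero(u * ks > cssv - radius)[0][-1]
    tau = (cssv[rho] - radius) / (rho + 1.0)   # threshold that lands exactly on the ball
    return np.sign(v) * np.maximum(np.abs(v) - tau, 0.0)


def grad_least_squares(theta, X, y):
    """Gradient of the assumed loss L(theta) = ||y - X theta||_2^2 / (2n)."""
    return X.T @ (X @ theta - y) / X.shape[0]


def step_size_constant(X):
    """gamma = largest eigenvalue of X^T X / n, the smoothness constant of L (assumed step 1/gamma)."""
    return np.linalg.norm(X, 2) ** 2 / X.shape[0]


def composite_gradient(X, y, lam, iters=500):
    """Composite gradient for L(theta) + lam * ||theta||_1: gradient step, then soft thresholding."""
    gamma = step_size_constant(X)
    theta = np.zeros(X.shape[1])
    for _ in range(iters):
        theta = soft_threshold(theta - grad_least_squares(theta, X, y) / gamma, lam / gamma)
    return theta


def projected_gradient(X, y, radius, iters=500):
    """Projected gradient for min L(theta) s.t. ||theta||_1 <= radius: gradient step, then projection."""
    gamma = step_size_constant(X)
    theta = np.zeros(X.shape[1])
    for _ in range(iters):
        theta = project_l1_ball(theta - grad_least_squares(theta, X, y) / gamma, radius)
    return theta


# Synthetic sparse regression problem (illustrative sizes and noise level, not from the paper).
rng = np.random.default_rng(0)
n, d, s = 200, 500, 5
X = rng.standard_normal((n, d))
theta_star = np.zeros(d)
theta_star[:s] = 1.0
y = X @ theta_star + 0.1 * rng.standard_normal(n)

theta_cg = composite_gradient(X, y, lam=0.05)                          # lam is an assumed value
theta_pg = projected_gradient(X, y, radius=np.abs(theta_star).sum())   # oracle radius, for illustration
print("composite gradient error:", np.linalg.norm(theta_cg - theta_star))
print("projected gradient error:", np.linalg.norm(theta_pg - theta_star))
```

Both loops use the same fixed step $1/\gamma$ and differ only in their final operation. The regularized form applies a proximal map, and the constrained form applies a projection. The title's claim of fast global convergence refers to the paper's analysis. This toy script only prints the final estimation error and does not measure a convergence rate.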

Authors: Alekh Agarwal, Sahand N. Negahban