Randomized Smoothing for Stochastic Optimization

Authors: John C. Duchi, Peter L. Bartlett, Martin J. Wainwright

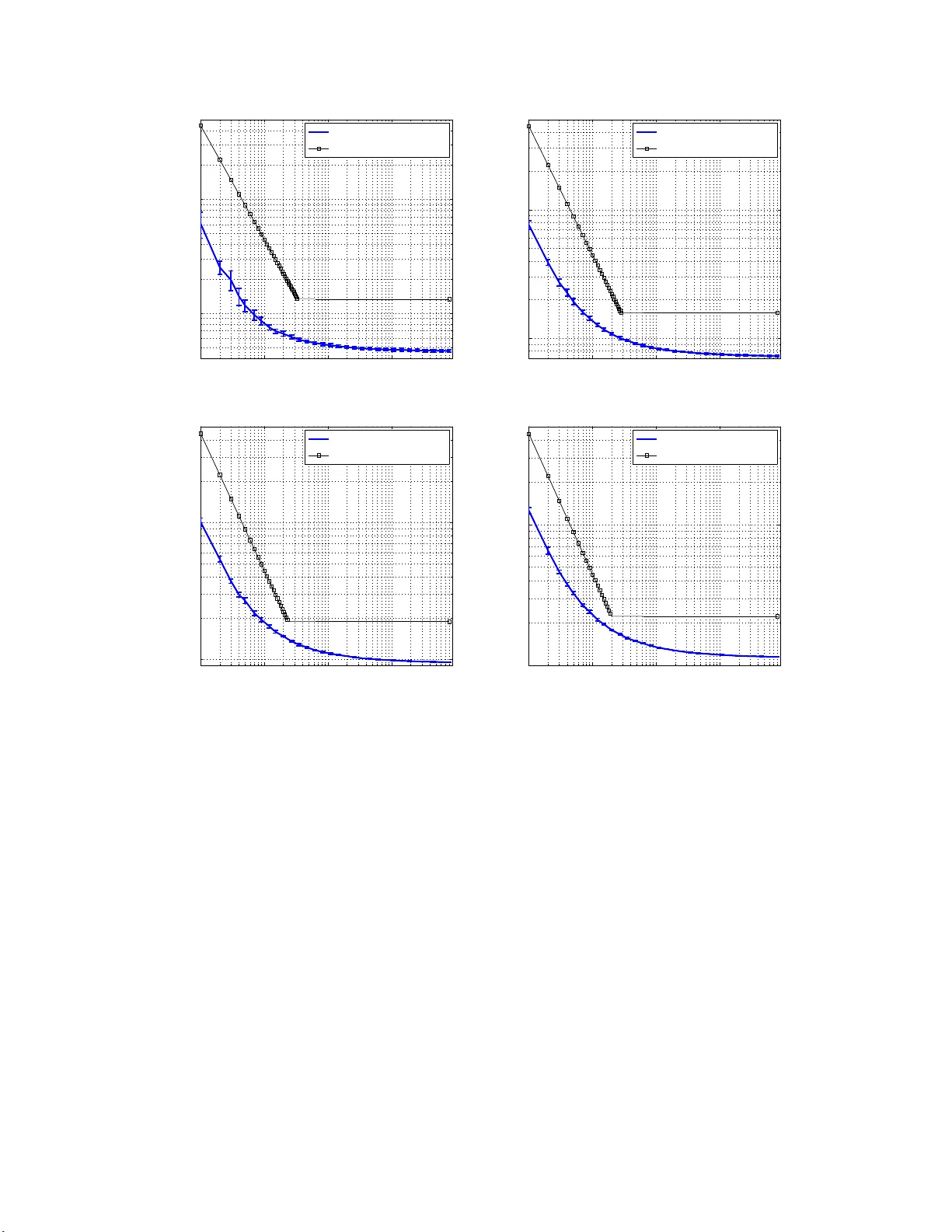

Affiliations: John C. Duchi (1), Peter Bartlett (1, 2), and Martin J. Wainwright (1, 2); (1) Department of Electrical Engineering and Computer Sciences and (2) Department of Statistics, University of California, Berkeley, Berkeley, CA 94720.
Email: jduchi@eecs.berkeley.edu, bartlett@eecs.berkeley.edu, wainwrig@eecs.berkeley.edu
March 2012

Abstract

We analyze convergence rates of stochastic optimization procedures for non-smooth convex optimization problems. By combining randomized smoothing techniques with accelerated gradient methods, we obtain convergence rates of stochastic optimization procedures, both in expectation and with high probability, that have optimal dependence on the variance of the gradient estimates. To the best of our knowledge, these are the first variance-based rates for non-smooth optimization. We give several applications of our results to statistical estimation problems, and provide experimental results that demonstrate the effectiveness of the proposed algorithms. We also describe how a combination of our algorithm with recent work on decentralized optimization yields a distributed stochastic optimization algorithm that is order-optimal.

1 Introduction

In this paper, we develop and analyze randomized smoothing procedures for solving the following class of stochastic optimization problems. Let $\{F(\cdot;\xi), \xi \in \Xi\}$ be a collection of real-valued functions, each with domain containing the closed convex set $\mathcal{X} \subseteq \mathbb{R}^d$. Letting $P$ be a probability distribution over the index set $\Xi$, consider the function $f : \mathcal{X} \to \mathbb{R}$ defined via

$$f(x) := \mathbb{E}[F(x;\xi)] = \int_\Xi F(x;\xi)\, dP(\xi). \tag{1}$$

In this paper, we analyze a family of randomized smoothing procedures for solving potentially non-smooth stochastic optimization problems of the form

$$\min_{x \in \mathcal{X}} \; f(x) + \varphi(x), \tag{2}$$

where $\varphi : \mathcal{X} \to \mathbb{R}$ is a known regularizing function.
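To make (1) and (2) concrete, the sketch below sets up a hypothetical instance that is not taken from the paper: $F(x; \xi) = |\langle a, x \rangle - b|$ with $\xi = (a, b)$, where $a \sim N(0, I_d)$ and $b \sim N(0, 1)$ are independent, and $\varphi(x) = \lambda\|x\|_1$. Because $f$ is an expectation, code that only sees samples can merely estimate $f(x) + \varphi(x)$. For this particular $P$, $\langle a, x \rangle - b \sim N(0, \|x\|_2^2 + 1)$, so the exact value $\sqrt{2/\pi}\,\sqrt{\|x\|_2^2 + 1} + \lambda\|x\|_1$ is available as a check.

```python
import math
import random


def sample_loss(x, a, b):
    """F(x; xi) = |<a, x> - b| for one hypothetical sample xi = (a, b)."""
    return abs(sum(ai * xj for ai, xj in zip(a, x)) - b)


def estimate_objective(x, lam, n=50000, seed=0):
    """Monte Carlo estimate of f(x) + phi(x), with phi(x) = lam * ||x||_1 and a
    hypothetical P: a ~ N(0, I_d) and b ~ N(0, 1), drawn independently."""
    rng = random.Random(seed)
    d = len(x)
    total = 0.0
    for _ in range(n):
        a = [rng.gauss(0.0, 1.0) for _ in range(d)]
        b = rng.gauss(0.0, 1.0)
        total += sample_loss(x, a, b)
    return total / n + lam * sum(abs(v) for v in x)


def exact_objective(x, lam):
    """Closed form for the same P, using <a, x> - b ~ N(0, ||x||_2^2 + 1)."""
    scale = math.sqrt(sum(v * v for v in x) + 1.0)
    return math.sqrt(2.0 / math.pi) * scale + lam * sum(abs(v) for v in x)
```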
Throughout the paper, we assume that $f$ is convex on its domain $\mathcal{X}$. This condition is satisfied, for instance, if the function $F(\cdot;\xi)$ is convex for $P$-almost every $\xi$. We assume that $\varphi$ is closed and convex, but we allow for non-differentiability so that the framework includes the $\ell_1$-norm and related regularizers. While we will later discuss the effects that $\varphi(x)$ has on our optimization procedures, throughout we will mostly consider the properties of the stochastic function $f$.

Problem (2) is challenging mainly for two reasons. First, the function $f$ may be non-smooth. Second, in many cases, $f$ cannot actually be evaluated. When $\xi$ is high-dimensional, the integral (1) cannot be efficiently computed, and in statistical learning problems we usually do not even know what the distribution $P$ is. Thus, throughout this work, we assume only that we have access to a stochastic oracle that allows us to get i.i.d. samples $\xi \sim P$, and consequently we focus on stochastic gradient procedures for the convex program (2).

To address the first difficulty mentioned above (namely, that $f$ may be non-smooth), several researchers have considered techniques for smoothing the objective. Such approaches for deterministic non-smooth problems are by now well-known, and include Moreau-Yosida regularization (e.g., [22]), methods based on recession functions [3], and a method that uses conjugate and proximal functions [26]. Several works study methods to replace constraints $f(x) \le 0$ in convex programming problems with exact penalties $\max\{0, f(x)\}$ in the objective, after which smoothing is applied to the $\max\{0, \cdot\}$ operator (e.g., see the paper [8] and references therein).
The difficulty of such approaches is that most require quite detailed knowledge of the structure of the function $f$ to be minimized and hence are impractical in stochastic settings.

The second difficulty of solving the convex program (2) is that the function cannot actually be evaluated except through stochastic realizations of $f$ and its (sub)gradients. In this paper, we develop an algorithm for solving problem (2) based on stochastic subgradient methods. Although such methods are classical [30, 11, 28], recent work by Juditsky et al. [15] and Lan [18, 19] has shown that if $f$ is smooth (its gradients are Lipschitz continuous), convergence rates dependent on the variance of the stochastic gradient estimator are achievable. Specifically, if $\sigma^2$ is the variance of the gradient estimator, the convergence rate of the resulting stochastic optimization procedure is $O(\sigma/\sqrt{T})$. Of particular relevance to our study is the following fact: if the oracle (instead of returning just a single estimate) returns $m$ independent unbiased estimates of the gradient, the variance of the gradient estimator is reduced by a factor of $m$. Dekel et al. [9] exploit this fact to develop asymptotically order-optimal distributed optimization algorithms, as we discuss in the sequel. To the best of our knowledge, there is no work on non-smooth stochastic problems for which a reduction in the variance of the stochastic estimate of the true subgradient gives an improvement in convergence rates. For non-smooth stochastic optimization, known convergence rates depend only on the Lipschitz constant of the functions $F(\cdot;\xi)$ and the number of actual updates performed.
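The variance-reduction fact can be checked numerically. The minimal sketch below assumes a scalar true gradient of 1.0 observed through additive Gaussian noise with standard deviation 2.0 (both values are hypothetical); averaging $m$ independent unbiased estimates should bring the empirical variance from about 4.0 down to about $4.0/m$.

```python
import random
import statistics


def averaged_estimate(true_grad, noise_sd, m, rng):
    """Average m unbiased noisy estimates of a scalar gradient (hypothetical oracle)."""
    return sum(true_grad + rng.gauss(0.0, noise_sd) for _ in range(m)) / m


def empirical_variance(m, trials=20000, true_grad=1.0, noise_sd=2.0, seed=0):
    """Empirical variance of the m-sample average over many independent trials."""
    rng = random.Random(seed)
    samples = [averaged_estimate(true_grad, noise_sd, m, rng) for _ in range(trials)]
    return statistics.pvariance(samples)


if __name__ == "__main__":
    for m in (1, 4, 16):
        # Expected to be close to noise_sd**2 / m = 4.0 / m.
        print(m, round(empirical_variance(m), 3))
```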
Within the oracle model of convex optimization [25], the optimizer has access to a black-box oracle that, given a point $x \in \mathcal{X}$, returns an unbiased estimate of a (sub)gradient of the objective $f$ at the point $x$. In most stochastic optimization procedures, an algorithm updates a parameter $x_t$ at every iteration by querying the oracle for one stochastic subgradient; we consider the natural extension to the case when the optimizer issues several queries to the stochastic oracle at every iteration.

A convolution-based smoothing technique amenable to non-smooth stochastic optimization problems is the starting point for our approach. A number of authors (e.g., [16, 32, 17, 38]) have noted that particular random perturbations of the variable $x$ transform $f$ into a smooth function. The intuition underlying such approaches is that convolving two functions yields a new function that is at least as smooth as the smoother of the two original functions. In particular, let $\mu$ denote the density of a random variable with respect to Lebesgue measure, and consider the smoothed objective function

$$f_\mu(x) := \int_{\mathbb{R}^d} f(x + y)\,\mu(y)\,dy = \mathbb{E}_\mu[f(x + Z)], \tag{3}$$

where $Z$ is a random variable with probability density $\mu$. Clearly, $f_\mu$ is convex whenever $f$ is convex; moreover, it is known that if $\mu$ is a density with respect to Lebesgue measure, then $f_\mu$ is differentiable [4]. We analyze minimization procedures that solve the non-smooth problem (2) by using stochastic gradient samples from the smoothed function (3) with an appropriate choice of smoothing density $\mu$. The main contribution of our paper is to show that the ability to issue several queries to the stochastic oracle for the original objective (2) can give faster rates of convergence than a simple stochastic oracle.
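As a one-dimensional illustration of the smoothed objective (3), take $f(x) = |x|$ and perturbations $uZ$ with $Z$ uniform on $[-1, 1]$ (both choices are for illustration only). A direct computation gives $f_\mu(x) = (x^2 + u^2)/(2u)$ for $|x| \le u$ and $f_\mu(x) = |x|$ otherwise, so $f_\mu$ is differentiable with a $(1/u)$-Lipschitz derivative and satisfies $f \le f_\mu \le f + u/2$. The sketch compares the closed form with a Monte Carlo average of $f$ at randomly perturbed points.

```python
import random


def smoothed_abs(x, u):
    """Closed form of f_mu(x) = E|x + u Z| for Z uniform on [-1, 1]."""
    if abs(x) <= u:
        return (x * x + u * u) / (2.0 * u)
    return abs(x)


def smoothed_abs_grad(x, u):
    """Derivative of smoothed_abs: clip(x / u, -1, 1), a (1/u)-Lipschitz function."""
    return max(-1.0, min(1.0, x / u))


def smoothed_abs_mc(x, u, n=200000, seed=0):
    """Monte Carlo estimate of E|x + u Z|: a sample average of f at perturbed points."""
    rng = random.Random(seed)
    return sum(abs(x + u * rng.uniform(-1.0, 1.0)) for _ in range(n)) / n
```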
Our two main theorems quantify the claim that multiple oracle queries per iteration can speed up convergence, both in terms of expected values (Theorem 1) and, under an additional reasonable tail condition, with high probability (Theorem 2). One consequence of our results is that a procedure that queries the non-smooth stochastic oracle for $m$ subgradients at each iteration $t$ achieves a rate of convergence $O(RL_0/\sqrt{Tm})$ in expectation and with high probability. (Here $L_0$ is the Lipschitz constant of the function $f$ and $R$ is the $\ell_2$-radius of its domain.) As we discuss in Section 2.4, this convergence rate is optimal up to constant factors. Moreover, this fast rate of convergence has implications for applications in statistical problems, distributed optimization, and other areas, as discussed in Section 3.

The remainder of the paper is organized as follows. In the next section, we review standard techniques for stochastic optimization, noting a few of their deficiencies. After this, we state our algorithm and main theorems achieving faster rates of convergence for non-smooth stochastic problems using the randomized smoothing technique (3). We make strong use of the fine analytic properties of randomized smoothing, and collect several relevant results in Appendix E. In Section 3.1, we outline several applications of the smoothing techniques, which we complement in Section 3.2 with experiments and simulations showing the merits of our new approach. Section 4 contains proofs of our main results, though we defer more technical aspects to the appendices.

Notation: For the reader's convenience, here we specify notation as well as a few definitions. We use $B_p(x, u) = \{y \in \mathbb{R}^d \mid \|x - y\|_p \le u\}$ to denote the closed $p$-norm ball of radius $u$ around the point $x$.
Addition of sets $A$ and $B$ is defined as the Minkowski sum in $\mathbb{R}^d$, that is, $A + B = \{x \in \mathbb{R}^d \mid x = y + z, y \in A, z \in B\}$, and multiplication of a set $A$ by a scalar $\alpha$ is defined to be $\alpha A := \{\alpha x \mid x \in A\}$. For any function or distribution $\mu$, we let $\mathrm{supp}\,\mu := \{x \mid \mu(x) \neq 0\}$ denote its support. Given a convex function $f$ with domain $\mathcal{X}$, for any $x \in \mathcal{X}$, we use $\partial f(x)$ to denote its subdifferential. We define the shorthand notation $\|\partial f(x)\| = \sup\{\|g\| \mid g \in \partial f(x)\}$ for any norm $\|\cdot\|$. The dual norm $\|\cdot\|_*$ associated with the norm $\|\cdot\|$ is defined as $\|z\|_* := \sup_{\|x\| \le 1} \langle z, x \rangle$. A function $f$ is $L_0$-Lipschitz with respect to the norm $\|\cdot\|$ over $\mathcal{X}$ if $|f(x) - f(y)| \le L_0\|x - y\|$ for all $x, y \in \mathcal{X}$. For convex $f$, it is known [12] that $f$ is $L_0$-Lipschitz in this sense if and only if $\sup_{x \in \mathcal{X}} \|\partial f(x)\|_* \le L_0$. We say the gradient of $f$ is $L_1$-Lipschitz continuous with respect to the norm $\|\cdot\|$ over $\mathcal{X}$ if $\|\nabla f(x) - \nabla f(y)\|_* \le L_1\|x - y\|$ for $x, y \in \mathcal{X}$. A function $\psi$ is strongly convex with respect to a norm $\|\cdot\|$ over $\mathcal{X}$ if for all $x, y \in \mathcal{X}$,

$$\psi(y) \ge \psi(x) + \langle \nabla\psi(x), y - x \rangle + \frac{1}{2}\|x - y\|^2.$$

Given a convex and differentiable function $\psi$, the associated Bregman divergence [5] is given by $D_\psi(x, y) := \psi(x) - \psi(y) - \langle \nabla\psi(y), x - y \rangle$. When $X \in \mathbb{R}^{d_1 \times d_2}$ is a matrix, we let $\rho_i(X)$ denote its $i$th largest singular value, and when $X \in \mathbb{R}^{d \times d}$, we let $\lambda_i(X)$ denote its $i$th largest eigenvalue by modulus. The transpose of $X$ is denoted $X^\top$. The notation $\xi \sim P$ indicates that $\xi$ is drawn according to the distribution $P$.

2 Main results and some consequences

In this section, we begin by motivating the algorithm studied in this paper, and then state our main results on its convergence behavior.
2.1 Some background

We focus on stochastic gradient descent methods¹ based on dual averaging schemes [27] for solving the stochastic problem (2). Dual averaging methods are based on a proximal function $\psi$, which is assumed strongly convex with respect to a norm $\|\cdot\|$. The update scheme of such a method is as follows. Given a point $x_t \in \mathcal{X}$, the algorithm queries a stochastic oracle and receives a random vector $g_t$ such that $\mathbb{E}[g_t] \in \partial f(x_t)$. The algorithm then performs the update

$$x_{t+1} = \operatorname*{argmin}_{x \in \mathcal{X}} \Big\{ \sum_{\tau=0}^{t} \langle g_\tau, x \rangle + \frac{1}{\alpha_t}\psi(x) \Big\}, \tag{4}$$

where $\{\alpha_t\}$, with $\alpha_t > 0$, is a sequence of stepsizes. Under some mild assumptions, the algorithm is guaranteed to converge for stochastic problems. For instance, suppose that $\psi$ is strongly convex with respect to the norm $\|\cdot\|$, and moreover that $\mathbb{E}[\|g_t\|_*^2] \le L_0^2$ for all $t$, where we recall that $\|\cdot\|_*$ denotes the dual norm to $\|\cdot\|$. Then, with stepsizes $\alpha_t \propto R/(L_0\sqrt{t})$, it is known that the sequence $\{x_t\}_{t=0}^\infty$ generated by the updates (4) satisfies

$$\mathbb{E}\Big[f\Big(\frac{1}{T}\sum_{t=1}^{T} x_t\Big)\Big] - f(x^*) = O\Big(\frac{L_0\sqrt{\psi(x^*)}}{\sqrt{T}}\Big). \tag{5}$$

We refer the reader to papers by Nesterov [27] and Xiao [36] for results of this type.

An unsatisfying aspect of the bound (5) is the absence of any role for the variance of the (sub)gradient estimator $g_t$. In particular, even if an algorithm is able to obtain $m > 1$ samples of the gradient of $f$ at $x_t$, thereby giving a significantly more accurate gradient estimate, this result fails to capture the likely improvement of the method. We address this problem by stochastically smoothing the non-smooth objective $f$ and then adapting recent work on so-called "accelerated" gradient methods [19, 34, 36] to achieve variance-based improvements. Accelerated methods work only when the function $f$ is smooth, that is, when it has Lipschitz continuous gradients.
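For the Euclidean proximal function $\psi(x) = \frac{1}{2}\|x\|_2^2$ and $\mathcal{X} = B_2(0, R)$, completing the square shows that update (4) is the projection of $-\alpha_t\sum_{\tau \le t} g_\tau$ onto the ball. The minimal sketch below runs this plain (non-accelerated) dual averaging method with $\alpha_t = R/(L_0\sqrt{t+1})$ on a hypothetical toy problem, $f(x) = \mathbb{E}\|x - \xi\|_1$ with each $\xi_j$ uniform on an interval of half-width 1 around a chosen center, whose minimizer is that center. The toy objective, the $+1$ shift in the stepsize, and all parameter values are assumptions made for illustration; the function returns the averaged iterate that appears in (5).

```python
import math
import random


def project_l2_ball(v, radius):
    """Euclidean projection onto B_2(0, radius)."""
    norm = math.sqrt(sum(a * a for a in v))
    if norm <= radius:
        return list(v)
    return [a * radius / norm for a in v]


def dual_averaging_step(grad_sum, alpha, radius):
    """argmin over ||x||_2 <= radius of <grad_sum, x> + ||x||_2^2 / (2 alpha)."""
    return project_l2_ball([-alpha * g for g in grad_sum], radius)


def run_dual_averaging(center, T=2000, radius=2.0, seed=0):
    """Toy run of update (4) on f(x) = E||x - xi||_1, xi_j = center_j + Uniform[-1, 1]."""
    rng = random.Random(seed)
    d = len(center)
    lipschitz = math.sqrt(d)  # ||sign vector||_2 = sqrt(d) bounds the subgradients
    x = [0.0] * d
    grad_sum = [0.0] * d
    avg = [0.0] * d
    for t in range(T):
        xi = [c + rng.uniform(-1.0, 1.0) for c in center]
        g = [1.0 if a > b else -1.0 for a, b in zip(x, xi)]  # subgradient of ||x - xi||_1
        grad_sum = [s + gi for s, gi in zip(grad_sum, g)]
        alpha = radius / (lipschitz * math.sqrt(t + 1))
        x = dual_averaging_step(grad_sum, alpha, radius)
        avg = [a + (xj - a) / (t + 1) for a, xj in zip(avg, x)]  # running mean of x_1..x_T
    return avg
```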
Since accelerated methods require smoothness, we now turn to developing the tools necessary to stochastically smooth the non-smooth objective (2).

2.2 Description of algorithm

Our algorithm is based on observations of stochastically perturbed gradient information at each iteration, where we slowly decrease the perturbation as the algorithm proceeds. More precisely, our algorithm uses the following scheme. Let $\{u_t\} \subset \mathbb{R}_+$ be a non-increasing sequence of positive real numbers; these quantities control the perturbation size. At iteration $t$, rather than query the stochastic oracle at the point $y_t$, the algorithm queries the oracle at $m$ points drawn randomly from some neighborhood around $y_t$. Specifically, it performs the following three steps:

(1) Draws random variables $\{Z_{i,t}\}_{i=1}^m$ in an i.i.d. manner according to the distribution $\mu$.
(2) Queries the oracle at the $m$ points $y_t + u_t Z_{i,t}$, $i = 1, 2, \ldots, m$, yielding stochastic gradients
$$g_{i,t} \in \partial F(y_t + u_t Z_{i,t}; \xi_{i,t}), \quad \text{where } \xi_{i,t} \sim P, \text{ for } i = 1, 2, \ldots, m. \tag{6}$$
(3) Computes the average $g_t = \frac{1}{m}\sum_{i=1}^m g_{i,t}$.

Here and throughout we denote the distribution of the random variable $u_t Z$ by $\mu_t$, and we note that this procedure ensures $\mathbb{E}[g_t \mid y_t] = \nabla f_{\mu_t}(y_t) = \nabla \mathbb{E}[F(y_t + u_t Z; \xi) \mid y_t]$, where $f_{\mu_t}$ is the smoothed function (3) and $\mu_t$ is the density of $u_t Z$.

By combining the sampling scheme (6) with extensions of Tseng's recent work on accelerated gradient methods [34], we can achieve stronger convergence rates for solving the non-smooth objective (2). The update we propose is essentially a smoothed version of the simpler method (4).

¹ We note in passing that essentially identical results can also be obtained for methods based on mirror descent [25, 34], though we omit these so as not to overburden the reader.
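Steps (1)–(3) of the sampling scheme translate directly into code. The sketch below is one possible realization under hypothetical choices: $\mu$ is uniform on $B_2(0, 1)$ (sampled as a normalized Gaussian direction scaled by $U^{1/d}$ with $U$ uniform on $[0, 1]$), and the oracle returns a subgradient of $F(x; \xi) = \|x - \xi\|_1$. The function names and the oracle are illustrative, not taken from the paper.

```python
import math
import random


def sample_unit_l2_ball(d, rng):
    """Uniform draw from B_2(0, 1): Gaussian direction, radius U^(1/d)."""
    z = [rng.gauss(0.0, 1.0) for _ in range(d)]
    norm = math.sqrt(sum(v * v for v in z))
    radius = rng.random() ** (1.0 / d)
    return [radius * v / norm for v in z]


def subgrad_l1_distance(x, xi):
    """A subgradient of the hypothetical oracle F(x; xi) = ||x - xi||_1."""
    return [1.0 if a > b else -1.0 for a, b in zip(x, xi)]


def smoothed_gradient_estimate(y, u_t, m, sample_xi, rng):
    """Steps (1)-(3) of scheme (6): perturb y_t, query the oracle m times, average."""
    d = len(y)
    g = [0.0] * d
    for _ in range(m):
        z = sample_unit_l2_ball(d, rng)                    # step (1): Z_{i,t} ~ mu
        point = [yi + u_t * zi for yi, zi in zip(y, z)]    # query point y_t + u_t Z_{i,t}
        gi = subgrad_l1_distance(point, sample_xi(rng))    # step (2): g_{i,t}
        g = [a + b / m for a, b in zip(g, gi)]             # step (3): running average
    return g
```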
The proposed method uses three sequences of points, denoted $(x_t, y_t, z_t) \in \mathcal{X}^3$. We use $y_t$ as a "query point", so that at iteration $t$, the algorithm receives a vector $g_t$ as described in the sampling scheme (6). The three sequences evolve according to a dual-averaging algorithm, which in our case involves three scalars $(L_t, \theta_t, \eta_t)$ to control step sizes. The recursions are as follows:

$$y_t = (1 - \theta_t)x_t + \theta_t z_t \tag{7a}$$

$$z_{t+1} = \operatorname*{argmin}_{x \in \mathcal{X}} \Big\{ \sum_{\tau=0}^{t} \frac{1}{\theta_\tau}\langle g_\tau, x \rangle + \sum_{\tau=0}^{t} \frac{1}{\theta_\tau}\varphi(x) + L_{t+1}\psi(x) + \frac{\eta_{t+1}}{\theta_{t+1}}\psi(x) \Big\} \tag{7b}$$

$$x_{t+1} = (1 - \theta_t)x_t + \theta_t z_{t+1}. \tag{7c}$$

In prior work on accelerated schemes for stochastic and non-stochastic optimization [34, 19, 36], the term $L_t$ is set equal to the Lipschitz constant of $\nabla f$; in contrast, our choice of varying $L_t$ allows our smoothing schemes to be oblivious to the number of iterations $T$. The extra damping term $\eta_t/\theta_t$ provides control over the fluctuations induced by using the random vector $g_t$ as opposed to deterministic subgradient information. As in Tseng's work [34], we assume that $\theta_0 = 1$ and $(1 - \theta_t)/\theta_t^2 = 1/\theta_{t-1}^2$; the latter equality is ensured by setting $\theta_t = 2/(1 + \sqrt{1 + 4/\theta_{t-1}^2})$.

2.3 Convergence rates

We now state our two main results on the convergence rate of the randomized smoothing procedure (6) with accelerated dual averaging updates (7a)–(7c). So as to avoid cluttering the theorem statements, we begin by stating our main assumptions and notation. When we state that a function $f$ is Lipschitz continuous, we mean with respect to the norm $\|\cdot\|$, whose dual norm we denote $\|\cdot\|_*$, and we assume that $\psi$ is nonnegative and strongly convex with respect to $\|\cdot\|$. Our main assumption ensures that the smoothing operator and smoothed function $f_\mu$ are relatively well-behaved.

Assumption A (Smoothing properties).
The random variable $Z$ is zero-mean with density $\mu$ (with respect to Lebesgue measure on the affine hull $\mathrm{aff}(\mathcal{X})$ of $\mathcal{X}$), and there are constants $L_0$ and $L_1$ such that for all $u > 0$, $\mathbb{E}[f(x + uZ)] \le f(x) + L_0 u$, and $\mathbb{E}[f(x + uZ)]$ has $\frac{L_1}{u}$-Lipschitz continuous gradient with respect to the norm $\|\cdot\|$. For $P$-almost every $\xi \in \Xi$, we have $\mathrm{dom}\, F(\cdot;\xi) \supseteq u_0\,\mathrm{supp}\,\mu + \mathcal{X}$.

Let $\mu_t$ denote the density of the random vector $u_t Z$ and define the instantaneous smoothed function $f_{\mu_t} = \int f(x + z)\,d\mu_t(z)$. As discussed in the introduction, the function $f_{\mu_t}$ is guaranteed to be smooth whenever $\mu$ (and hence $\mu_t$) is a density with respect to Lebesgue measure; Assumption A additionally ensures that $f_{\mu_t}$ is uniformly close to $f$ and not too "jagged." Many smoothing distributions, including Gaussians and uniform distributions on norm balls, satisfy Assumption A (see Appendix E); we use such examples in the corollaries to follow. The containment of $u_0\,\mathrm{supp}\,\mu + \mathcal{X}$ in $\mathrm{dom}\, F(\cdot;\xi)$ guarantees that the subdifferential $\partial F(\cdot;\xi)$ is non-empty at all sampled points $y_t + u_t Z$. Indeed, since $\mu$ is a density with respect to Lebesgue measure on $\mathrm{aff}(\mathcal{X})$, with probability one $y_t + u_t Z \in \mathrm{relint}\,\mathrm{dom}\, F(\cdot;\xi)$ and thus [12] the subdifferential $\partial F(y_t + u_t Z; \xi) \neq \emptyset$.

In the algorithm (7a)–(7c), we set $L_t$ to be an upper bound on the Lipschitz constant $L_1/u_t$ of the gradient of $\mathbb{E}[f(x + u_t Z)]$; this choice ensures good convergence properties of the algorithm. The following is the first of our main theorems.

Theorem 1. Define $u_t = \theta_t u$, use the scalar sequence $L_t = L_1/u_t$, and assume that $\eta_t$ is non-decreasing.
Under Assumption A, for any $x^* \in \mathcal{X}$ and $T \ge 4$,

$$\mathbb{E}[f(x_T) + \varphi(x_T)] - [f(x^*) + \varphi(x^*)] \le \frac{6 L_1 \psi(x^*)}{T u} + \frac{2\eta_T \psi(x^*)}{T} + \frac{1}{T}\sum_{t=0}^{T-1} \frac{1}{\eta_t}\mathbb{E}\big[\|e_t\|_*^2\big] + \frac{4 L_0 u}{T}, \tag{8}$$

where $e_t := \nabla f_{\mu_t}(y_t) - g_t$ is the error in the gradient estimate.

Remarks: Note that the convergence rate (8) involves the variance $\mathbb{E}[\|e_t\|_*^2]$ explicitly. We exploit this fact in the corollaries to be stated shortly. In addition, note that Theorem 1 does not require a priori knowledge of the number of iterations $T$ to be performed, which makes it suitable for online and streaming applications. If such knowledge is available, then it is possible to give a similar result using the smoothing parameter $u_t \equiv u$ for all $t$; such a result is stated as Theorem 3 in Section 4.

The preceding result, which provides convergence in expectation, can be extended to bounds that hold with high probability under suitable tail conditions on the error $e_t := \nabla f_{\mu_t}(y_t) - g_t$. In particular, let $\mathcal{F}_t$ denote the $\sigma$-field of the random variables $g_{i,s}$, $i = 1, \ldots, m$ and $s = 0, \ldots, t$. In order to achieve high-probability convergence results, a subset of our results involves the following assumption.

Assumption B (Sub-Gaussian errors). The error is $(\|\cdot\|_*, \sigma)$ sub-Gaussian for some $\sigma > 0$, meaning that with probability 1

$$\mathbb{E}\big[\exp(\|e_t\|_*^2/\sigma^2) \mid \mathcal{F}_{t-1}\big] \le \exp(1) \quad \text{for all } t \in \{1, 2, 3, \ldots\}. \tag{9}$$

We refer the reader to Appendix F for more background on sub-Gaussian and sub-exponential random variables. In past work on smooth optimization, other authors [15, 19, 36] have imposed this type of tail assumption, and we discuss sufficient conditions for the assumption to hold in Corollary 4 in the following subsection.

Theorem 2. In addition to the conditions of Theorem 1, assume $\mathcal{X}$ is compact with $\|x - x^*\| \le R$ for all $x \in \mathcal{X}$ and that Assumption B holds.
Then with probability at least $1 - 2\delta$, the algorithm with step size $\eta_t = \eta\sqrt{t+1}$ satisfies

$$f(x_T) + \varphi(x_T) - [f(x^*) + \varphi(x^*)] \le \frac{6 L_1 \psi(x^*)}{T u} + \frac{4 L_0 u}{T} + \frac{4\eta_T \psi(x^*)}{T + 1} + \theta_{T-1}\sum_{t=0}^{T-1} \frac{1}{2\eta_t}\mathbb{E}\big[\|e_t\|_*^2\big] + \frac{4\sigma^2 \max\big\{\log\frac{1}{\delta}, \sqrt{2e(\log T + 1)\log\frac{1}{\delta}}\big\}}{\eta T} + \frac{\sigma R\sqrt{\log\frac{1}{\delta}}}{\sqrt{T}}.$$

Remarks: The first four terms in the convergence rate given by Theorem 2 are essentially identical to the expected rate of Theorem 1. The first of the additional terms decreases at a rate of $1/T$, while the second decreases at a rate of $\sigma/\sqrt{T}$. As we discuss in the corollaries that follow, the dependence $\sigma/\sqrt{T}$ on the variance $\sigma^2$ is optimal, and an appropriate choice of the sequence $\eta_t$ in Theorem 1 yields the same rates to constant factors.

2.4 Some consequences

The corollaries of the above theorems, and the consequential optimality guarantees of the algorithm above, are our main focus for the remainder of this section. Specifically, we show concrete convergence bounds for algorithms using different choices of the smoothing distribution $\mu$. For each corollary, we make the assumption that $x^* \in \mathcal{X}$ satisfies $\psi(x^*) \le R^2$, but is otherwise arbitrary, that the iteration number $T \ge 4$, and that $u_t = u\theta_t$.

We begin with a corollary that provides bounds when the smoothing distribution $\mu$ is uniform on the $\ell_2$-ball. The conditions on $F$ in the corollary hold, for example, when $F(\cdot;\xi)$ is $L_0$-Lipschitz with respect to the $\ell_2$-norm for $P$-a.e. sample of $\xi$.

Corollary 1. Let $\mu$ be uniform on $B_2(0, 1)$ and assume $\mathbb{E}[\|\partial F(x;\xi)\|_2^2] \le L_0^2$ for $x \in \mathcal{X} + B_2(0, u)$, where we set $u = R d^{1/4}$. With the step size choices $\eta_t = L_0\sqrt{t+1}/(R\sqrt{m})$ and $L_t = L_0\sqrt{d}/u_t$,

$$\mathbb{E}[f(x_T) + \varphi(x_T)] - [f(x^*) + \varphi(x^*)] \le \frac{10 L_0 R d^{1/4}}{T} + \frac{5 L_0 R}{\sqrt{Tm}}.$$
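Putting the pieces together, the sketch below runs the sampling scheme (6) with the accelerated updates (7a)–(7c), the recursion $\theta_t = 2/(1 + \sqrt{1 + 4/\theta_{t-1}^2})$ from Section 2.2, and the parameter choices of Corollary 1. To keep it short, it assumes $\varphi \equiv 0$, $\psi(x) = \frac{1}{2}\|x\|_2^2$, $\mathcal{X} = B_2(0, r)$, and the hypothetical oracle $F(x; \xi) = \|x - \xi\|_2$ (which is 1-Lipschitz in the $\ell_2$-norm); with $\xi$ equal to a fixed center plus standard Gaussian noise, the minimizer of $f$ is that center by symmetry. Under these assumptions the argmin in (7b) is the projection onto the ball of $-\big(\sum_{\tau \le t} g_\tau/\theta_\tau\big)/(L_{t+1} + \eta_{t+1}/\theta_{t+1})$. This is an illustrative implementation written for this exposition, not the authors' code. The helper `theta_sequence` can also be used to confirm numerically the identity $(1 - \theta_t)/\theta_t^2 = 1/\theta_{t-1}^2$ and the elementary bounds $1/(t+1) \le \theta_t \le 2/(t+2)$, which follow by induction from $1/\theta_{t-1} + 1/2 \le 1/\theta_t \le 1/\theta_{t-1} + 1$.

```python
import math
import random


def theta_sequence(T):
    """theta_0 = 1 and theta_t = 2 / (1 + sqrt(1 + 4 / theta_{t-1}^2)) for t < T."""
    thetas = [1.0]
    for _ in range(1, T):
        thetas.append(2.0 / (1.0 + math.sqrt(1.0 + 4.0 / thetas[-1] ** 2)))
    return thetas


def _norm(v):
    return math.sqrt(sum(a * a for a in v))


def _project_ball(v, radius):
    n = _norm(v)
    return list(v) if n <= radius else [a * radius / n for a in v]


def _uniform_unit_ball(d, rng):
    z = [rng.gauss(0.0, 1.0) for _ in range(d)]
    n = _norm(z)
    r = rng.random() ** (1.0 / d)
    return [r * a / n for a in z]


def subgrad_euclid_distance(x, xi):
    """Subgradient of the hypothetical oracle F(x; xi) = ||x - xi||_2 (so L0 = 1)."""
    diff = [a - b for a, b in zip(x, xi)]
    n = _norm(diff)
    return [0.0] * len(x) if n == 0.0 else [a / n for a in diff]


def corollary1_bound(L0, R, d, T, m):
    """Right-hand side of Corollary 1: 10 L0 R d^(1/4) / T + 5 L0 R / sqrt(T m)."""
    return 10.0 * L0 * R * d ** 0.25 / T + 5.0 * L0 * R / math.sqrt(T * m)


def smoothed_accelerated_da(sample_xi, d, T, m, R, radius, L0=1.0, seed=0):
    """Scheme (6) plus updates (7a)-(7c), assuming phi = 0, psi(x) = ||x||_2^2 / 2,
    X = B_2(0, radius), and the Corollary 1 parameters."""
    rng = random.Random(seed)
    theta = theta_sequence(T + 1)
    u = R * d ** 0.25                      # smoothing parameter u = R d^(1/4)
    x = [0.0] * d
    z = [0.0] * d
    weighted_sum = [0.0] * d               # sum_{tau <= t} g_tau / theta_tau
    for t in range(T):
        th = theta[t]
        y = [(1 - th) * a + th * b for a, b in zip(x, z)]          # (7a)
        u_t = th * u
        g = [0.0] * d
        for _ in range(m):                                          # scheme (6)
            zi = _uniform_unit_ball(d, rng)
            point = [a + u_t * b for a, b in zip(y, zi)]
            gi = subgrad_euclid_distance(point, sample_xi(rng))
            g = [a + b / m for a, b in zip(g, gi)]
        weighted_sum = [s + a / th for s, a in zip(weighted_sum, g)]
        th_next = theta[t + 1]
        L_next = L0 * math.sqrt(d) / (th_next * u)                  # L_{t+1} = L0 sqrt(d) / u_{t+1}
        eta_next = L0 * math.sqrt(t + 2) / (R * math.sqrt(m))       # eta_{t+1}
        coef = L_next + eta_next / th_next
        z = _project_ball([-s / coef for s in weighted_sum], radius)  # (7b)
        x = [(1 - th) * a + th * b for a, b in zip(x, z)]            # (7c)
    return x
```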
The following corollary shows that similar convergence rates are attained when smoothing with the normal distribution.

Corollary 2. Let $\mu$ be the $d$-dimensional normal distribution with zero mean and identity covariance $I$ and assume that $F(\cdot;\xi)$ is $L_0$-Lipschitz with respect to the $\ell_2$-norm for $P$-a.e. $\xi$. With smoothing parameter $u = R d^{-1/4}$ and step sizes $\eta_t = L_0\sqrt{t+1}/(R\sqrt{m})$ and $L_t = L_0/u_t$, we have

$$\mathbb{E}[f(x_T) + \varphi(x_T)] - [f(x^*) + \varphi(x^*)] \le \frac{10 L_0 R d^{1/4}}{T} + \frac{5 L_0 R}{\sqrt{Tm}}.$$

We remark here (deferring deeper discussion to Lemma 10) that the dimension dependence of $d^{1/4}$ on the $1/T$ term in the previous corollaries cannot be improved by more than a constant factor. Essentially, functions $f$ exist whose smoothed version $f_\mu$ cannot both have Lipschitz continuous gradient and be uniformly close to $f$ in a dimension-independent sense, at least for the uniform or normal distributions.

The advantage of using normal random variables, as opposed to $Z$ uniform on $B_2(0, u)$, is that no normalization of $Z$ is necessary, though there are more stringent requirements on $f$. The lack of normalization is a useful property in very high dimensional scenarios, such as statistical natural language processing [23]. Similarly, we can sample $Z$ from an $\ell_\infty$ ball, which, like $B_2(0, u)$, is still compact, but gives slightly looser bounds than sampling from $B_2(0, u)$. Nonetheless, it is much easier to sample from $B_\infty(0, u)$ in high dimensional settings, especially sparse data scenarios such as NLP where only a few coordinates of the random variable $Z$ are needed.

There are several objectives $f + \varphi$ with domains $\mathcal{X}$ for which the natural geometry is non-Euclidean, which motivates the mirror descent family of algorithms [25].
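Returning to the remark that $B_\infty(0, u)$ is convenient for sparse data: the uniform distribution on the $\ell_\infty$-ball has independent coordinates, so $Z$ can be sampled lazily. The sketch below assumes, for illustration only, a linear absolute-loss oracle $F(w; (a, b)) = |\langle a, w \rangle - b|$ with a sparse feature vector $a$; a subgradient at $w = x + uZ$ needs only the coordinates $Z_j$ with $j \in \mathrm{supp}\,a$, which are drawn on demand instead of materializing a $d$-dimensional perturbation. The loss and the function name are hypothetical.

```python
import random


def sparse_perturbed_subgradient(a, b, x, u, rng):
    """Subgradient of F(w; (a, b)) = |<a, w> - b| at w = x + u Z, Z uniform on B_inf(0, 1).

    `a` is a sparse feature vector {index: value}. Because the coordinates of Z are
    independent Uniform[-1, 1] variables, only Z_j for j in supp(a) are drawn."""
    residual = -b
    for j, a_j in a.items():
        z_j = rng.uniform(-1.0, 1.0)             # lazily sampled coordinate of Z
        residual += a_j * (x[j] + u * z_j)
    sign = 1.0 if residual > 0 else -1.0
    return {j: sign * a_j for j, a_j in a.items()}   # sparse, same support as a


if __name__ == "__main__":
    x = [0.0] * 1_000_000                 # high-dimensional parameter, mostly untouched
    a = {3: 0.5, 999_999: -1.0}           # a sparse example with two nonzeros
    print(sparse_perturbed_subgradient(a, 0.2, x, 0.05, random.Random(0)))
```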
By using different distributions $\mu$ for the random perturbations $Z_{i,t}$ in (6), we can take advantage of non-Euclidean geometry. Here we give an example that is quite useful for problems in which the optimizer $x^*$ is sparse; for example, the optimization set $\mathcal{X}$ may be a simplex or $\ell_1$-ball, or $\varphi(x) = \lambda\|x\|_1$. The idea in this corollary is that we achieve a pair of dual norms that may give better optimization performance than the $\ell_2$-$\ell_2$ pair above.

Corollary 3. Let $\mu$ be the uniform density on $B_\infty(0, 1)$ and assume that $F(\cdot;\xi)$ is $L_0$-Lipschitz continuous with respect to the $\ell_1$-norm over $\mathcal{X} + B_\infty(0, u)$ for $\xi \in \Xi$, where we set $u = R\sqrt{d \log d}$. Use the proximal function $\psi(x) = \frac{1}{2(p-1)}\|x\|_p^2$ for $p = 1 + 1/\log d$ and set $\eta_t = L_0\sqrt{t+1}/(R\sqrt{m \log d})$ and $L_t = L_0/u_t$. There is a universal constant $C$ such that

$$\mathbb{E}[f(x_T) + \varphi(x_T)] - [f(x^*) + \varphi(x^*)] \le \frac{C L_0 R\sqrt{d}}{T} + \frac{C L_0 R\sqrt{\log d}}{\sqrt{Tm}} = O\Big(\frac{L_0\|x^*\|_1\sqrt{d \log d}}{T} + \frac{L_0\|x^*\|_1 \log d}{\sqrt{Tm}}\Big).$$

The dimension dependence of $\sqrt{d \log d}$ on the leading $1/T$ term in the corollary is worse than the $d^{1/4}$ dependence in the earlier corollaries, so for very large $m$ the corollary is not as strong as one desires when taking advantage of non-Euclidean geometry. Nonetheless, for large $T$, the $1/\sqrt{Tm}$ terms dominate the convergence rates, and Corollary 3 can be an improvement.

Our final corollary specializes the high probability convergence result in Theorem 2 by showing that the error is sub-Gaussian (9) under the assumptions in the corollary. We state the corollary for problems with Euclidean geometry, but it is clear that similar results hold for non-Euclidean geometry as above.

Corollary 4. Assume that $F(\cdot;\xi)$ is $L_0$-Lipschitz with respect to the $\ell_2$-norm. Let $\psi(x) = \frac{1}{2}\|x\|_2^2$ and assume that $\mathcal{X}$ is compact with $\|x - x^*\|_2 \le R$ for $x, x^* \in \mathcal{X}$.
Using smoothing distribution $\mu$ uniform on $B_2(0, 1)$, smoothing parameter $u = R d^{1/4}$, damping parameter $\eta_t = L_0\sqrt{t+1}/(R\sqrt{m})$, and instantaneous Lipschitz estimate $L_t = L_0\sqrt{d}/u_t$, with probability at least $1 - \delta$,

$$f(x_T) + \varphi(x_T) - f(x^*) - \varphi(x^*) = O\Big(\frac{L_0 R d^{1/4}}{T} + \frac{L_0 R}{\sqrt{Tm}} + \frac{L_0 R\sqrt{\log\frac{1}{\delta}}}{\sqrt{Tm}} + \frac{L_0 R \max\{\log\frac{1}{\delta}, \log T\}}{T\sqrt{m}}\Big).$$

Remarks: We make two remarks about the above corollaries. The first is that if one abandons the requirement that the optimization procedure be an "anytime" algorithm (always able to return a result), it is possible to give similar results by using a fixed setting of $u_t \equiv u$ throughout. In particular, using Theorem 3 in Section 4.4 we can use $u_t = u/T$ to get essentially the same results as Corollaries 1–3. As a side benefit, it is then easier to satisfy the Lipschitz condition that $\mathbb{E}[\|\partial F(x;\xi)\|^2] \le L_0^2$ for $x \in \mathcal{X} + \mathrm{supp}\,\mu$. Our second observation is that Theorem 1 and the corollaries appear to require a very specific setting of the constant $L_t$ to achieve fast rates. However, the algorithm is in fact robust to mis-specification of $L_t$, since the instantaneous smoothness constant $L_t$ is dominated by the stochastic damping term $\eta_t$ in the algorithm. Indeed, since $\eta_t$ grows proportionally to $\sqrt{t}$ for each corollary, we always have $L_t = L_1/u_t = L_1/(\theta_t u) = O(\eta_t/(\sqrt{t}\,\theta_t))$; that is, $L_t$ is order $\sqrt{t}$ smaller than $\eta_t/\theta_t$, so setting $L_t$ incorrectly up to order $\sqrt{t}$ has essentially negligible effect.

We can show the bounds in the theorems above are tight, that is, unimprovable up to constant factors, by exploiting known lower bounds [25, 1] for stochastic optimization problems. We restate some of these results here. For instance, let $\mathcal{X} = \{x \in \mathbb{R}^d \mid \|x\|_2 \le R_2\}$, and consider all convex functions $f$ that are $L_{0,2}$-Lipschitz with respect to the $\ell_2$-norm.
Assume that the stochastic oracle, when queried at a point $x$, returns a vector $g$ whose expectation is in $\partial f(x)$ with $\mathbb{E}[\|g\|_2^2] \le L_{0,2}^2$. Then for any method that outputs a point $x_T \in \mathcal{X}$ after $T$ queries of the oracle, we have the lower bound

$$\sup_f \Big\{\mathbb{E}[f(x_T)] - \min_{x \in \mathcal{X}} f(x)\Big\} = \Omega\Big(\frac{L_{0,2} R_2}{\sqrt{T}}\Big),$$

where the supremum is taken over convex $f$ that are $L_{0,2}$-Lipschitz with respect to the $\ell_2$-norm [1, Section 3.1]. Similar bounds hold for problems with non-Euclidean geometry [1]; in particular, consider convex $f$ that are $L_{0,\infty}$-Lipschitz with respect to the $\ell_1$-norm, that is, $|f(x) - f(y)| \le L_{0,\infty}\|x - y\|_1$. Then setting $\mathcal{X} = \{x \in \mathbb{R}^d \mid \|x\|_1 \le R_1\}$, we have $B_\infty(0, R_1/d) \subset B_1(0, R_1)$ and thus

$$\sup_f \Big\{\mathbb{E}[f(x_T)] - \min_{x \in \mathcal{X}} f(x)\Big\} = \Omega\Big(\frac{L_{0,\infty} R_1}{\sqrt{T}}\Big).$$

In either geometry, no method can have optimization error smaller than order $LR/\sqrt{T}$ after $T$ queries of the stochastic oracle.

Let us compare the above lower bounds to the convergence rates in Corollaries 1 through 3. Examining the bounds in Corollaries 1 and 2, we see that the dominant terms are of order $L_0 R/\sqrt{Tm}$ so long as $m \le T/\sqrt{d}$. Since our method issues $Tm$ queries to the oracle, this is optimal. Similarly, the strategy of sampling uniformly from the $\ell_\infty$-ball in Corollary 3 is optimal up to factors logarithmic in the dimension. In contrast to other optimization procedures, however, our algorithm performs an update to the parameter $x_t$ only after every $m$ queries to the oracle; as we show in the next section, this is beneficial in several applications.

3 Applications and experimental results

In this section, we describe some applications of our results, and then give experimental results that illustrate our theoretical predictions.

3.1 Some applications

The first application of our results is to parallel computation and distributed optimization.
Imagine that instead of querying the stochastic oracle serially, we can issue queries and aggregate the resulting stochastic gradients in parallel. In particular, assume that we have a procedure in which the $m$ queries of the stochastic oracle occur concurrently. Then Corollaries 1–4 imply that in the same amount of time required to perform $T$ queries and updates of the stochastic gradient oracle serially, achieving an optimization error of $O(1/\sqrt{T})$, the parallel implementation can process $Tm$ queries and consequently has optimization error $O(1/\sqrt{Tm})$.

We now briefly describe two possibilities for a distributed implementation of the above. The simplest architecture is a master-worker architecture, in which one master maintains the parameters $(x_t, y_t, z_t)$, and each of $m$ workers has access to an uncorrelated stochastic oracle for $P$ and the smoothing distribution $\mu$. The master broadcasts the point $y_t$ to the workers, which sample $\xi_i \sim P$ and $Z_i \sim \mu$, returning sample gradients to the master. In a tree-structured network, broadcast and aggregation require at most $O(\log m)$ steps; the relative speedup over a serial implementation is $O(m/\log m)$. In recent work, Dekel et al. [9] give a series of reductions showing how to distribute variance-based stochastic algorithms and achieve an asymptotically optimal convergence rate. The algorithm given here, as specified by equations (6) and (7a)–(7c), can be exploited within their framework to achieve an $O(m)$ improvement in convergence rate over a serial implementation. More precisely, whereas achieving optimization error $\epsilon$ requires $O(1/\epsilon^2)$ iterations for a centralized algorithm, the distributed adaptation requires only $O(1/(m\epsilon^2))$ iterations. Such an improvement is possible as a consequence of the variance reduction techniques we have described.
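The master-worker pattern can be sketched with Python's standard `concurrent.futures` module. In this illustration (not the distributed implementation discussed above), each hypothetical worker owns a reproducible random seed, draws $\xi \sim N(0, 1)$ and $Z$ uniform on $[-1, 1]$, and returns one subgradient of the toy loss $F(x; \xi) = |x - \xi|$ at $y_t + u_t Z$; the master averages the $m$ replies. Threads only illustrate the communication pattern: CPU-bound pure-Python work does not run in parallel under CPython's global interpreter lock, so a real deployment would use processes or separate machines.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def worker_gradient(y, u_t, seed):
    """One hypothetical worker: draw xi ~ N(0, 1) and Z ~ Uniform[-1, 1] with its own
    RNG, then return a subgradient of F(x; xi) = |x - xi| at the query point y + u_t Z."""
    rng = random.Random(seed)
    xi = rng.gauss(0.0, 1.0)
    z = rng.uniform(-1.0, 1.0)
    return 1.0 if y + u_t * z > xi else -1.0


def master_round(y, u_t, m, round_seed, pool):
    """Master broadcasts y_t to m workers, gathers their replies, and averages them."""
    seeds = [round_seed * m + i for i in range(m)]   # distinct, reproducible seeds
    replies = pool.map(lambda s: worker_gradient(y, u_t, s), seeds)
    return sum(replies) / m


if __name__ == "__main__":
    with ThreadPoolExecutor(max_workers=4) as pool:
        for t in range(3):
            print(t, master_round(0.3, 0.1, 8, t, pool))
```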
A second application of interest involves problems where the set $\mathcal{X}$ and/or the function $\varphi$ are complicated, so that calculating the proximal update (7b) becomes expensive. The proximal update may be distilled to computing
$$\min_{x \in \mathcal{X}} \langle g, x \rangle + \psi(x) \quad \text{or} \quad \min_{x \in \mathcal{X}} \langle g, x \rangle + \psi(x) + \varphi(x). \tag{10}$$
In such cases, it may be beneficial to accumulate gradients by querying the stochastic oracle several times in each iteration, using the averaged subgradient in the update (7b), and thus solve only one proximal sub-problem for a collection of samples.

Let us consider some concrete examples. In statistical applications involving the estimation of covariance matrices, the domain $\mathcal{X}$ is constrained to lie in the positive semidefinite cone $\{X \in \mathbb{S}^n \mid X \succeq 0\}$; other applications involve additional nuclear-norm constraints of the form $\mathcal{X} = \{X \in \mathbb{R}^{d_1 \times d_2} \mid \sum_{j=1}^{\min\{d_1, d_2\}} \rho_j(X) \le C\}$, where $\rho_j(X)$ denotes the $j$th singular value of $X$. Examples of such problems include covariance matrix estimation, matrix completion, and model identification in vector autoregressive processes (see the paper [24] and references therein for further discussion). Another example is the problem of metric learning [37, 33], in which the learner is given a set of $n$ points $\{a_1, \ldots, a_n\} \subset \mathbb{R}^d$ and a matrix $B \in \mathbb{R}^{n \times n}$ indicating which points are close together in an unknown metric. The goal is to estimate a positive semidefinite matrix $X \succeq 0$ such that $\langle a_i - a_j, X(a_i - a_j) \rangle$ is small when $a_i$ and $a_j$ belong to the same class or are close, while $\langle a_i - a_j, X(a_i - a_j) \rangle$ is large when $a_i$ and $a_j$ belong to different classes. It is desirable that the matrix $X$ have low rank, which allows the statistician to discover structure or guarantee performance on unseen data.
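To make the cost of the proximal step concrete for the nuclear-norm constraint: by a standard fact about unitarily invariant norms, the Frobenius-norm projection of a matrix onto $\{\sum_j \rho_j(X) \le C\}$ keeps its singular vectors and replaces the singular values by their Euclidean projection onto $\{\rho \ge 0, \sum_j \rho_j \le C\}$. The Python sketch below implements only that vector step with a common sort-and-threshold rule; the singular value decomposition, which dominates the cost and is what solving one sub-problem per batch of $m$ samples amortizes, is assumed to be computed elsewhere. The function name is hypothetical.

```python
def project_singular_values(rho, C):
    """Project singular values rho onto {w >= 0, sum(w) <= C} in Euclidean norm.

    If the clipped vector is already feasible it is returned; otherwise the constraint
    sum(w) == C is active and the answer is max(rho - theta, 0) for a threshold theta
    found by scanning the values in decreasing order.
    """
    if C <= 0:
        raise ValueError("radius C must be positive")
    clipped = [max(r, 0.0) for r in rho]
    if sum(clipped) <= C:
        return clipped
    ordered = sorted(rho, reverse=True)
    running_sum = 0.0
    theta = 0.0
    for k, value in enumerate(ordered, start=1):
        running_sum += value
        candidate = (running_sum - C) / k
        if value - candidate > 0:
            # value stays in the support; keep the threshold for the largest such k
            theta = candidate
    return [max(r - theta, 0.0) for r in rho]


# A ball of radius 2 pulls the spectrum (3, 1, 0.5) onto its boundary.
print(project_singular_values([3.0, 1.0, 0.5], 2.0))  # [2.0, 0.0, 0.0]
```

The thresholding also explains why such projections tend to produce low-rank iterates: small singular values are set exactly to zero.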
As a concrete illustration, suppose that we are given a matrix $B \in \{-1, 1\}^{n \times n}$, where $b_{ij} = 1$ if $a_i$ and $a_j$ belong to the same class, and $b_{ij} = -1$ otherwise. In this case, one possible optimization-based estimator involves solving the non-smooth program
$$\min_{X, x}\ \frac{1}{n^2} \sum_{i} \cdots$$

$$\cdots\ \Lambda_0 C^2, \tag{31}$$
which yields the first claim in Lemma 5. The second statement involves inverting the bound for the different regimes of $\epsilon$. Before proving the bound, we note that for $\epsilon = \Lambda_0 C^2$ we have $\exp(-\epsilon^2/2C^2) = \exp(-\Lambda_0 \epsilon/2)$, so we can invert each of the exponential terms to solve for $\epsilon$ and take the maximum of the bounds. We begin with $\epsilon$ in the regime closest to zero, recalling that $\eta_t = \eta\sqrt{t+1}$. We see that
$$C^2 \le \frac{16 e \sigma^4}{\eta^2} \sum_{t=0}^{T-1} \frac{1}{t+1} \le \frac{16 e \sigma^4}{\eta^2} \log(T+1).$$
Thus, inverting the bound $\delta = \exp(-\epsilon^2/2C^2)$, we get $\epsilon = \sqrt{2C^2 \log\frac{1}{\delta}}$, or that
$$\sum_{t=0}^{T-1} \frac{1}{2\eta_t} \|e_t\|_*^2 \le \sum_{t=0}^{T-1} \frac{1}{2\eta_t} \mathbb{E}[\|e_t\|_*^2] + \frac{4\sqrt{2e}\,\sigma^2}{\eta} \sqrt{\log\frac{1}{\delta}\, \log(T+1)}$$
with probability at least $1 - \delta$. In the large-$\epsilon$ regime, we solve $\delta = \exp(-\eta\epsilon/4\sigma^2)$, or $\epsilon = \frac{4\sigma^2}{\eta}\log\frac{1}{\delta}$, which gives that
$$\sum_{t=0}^{T-1} \frac{1}{2\eta_t} \|e_t\|_*^2 \le \sum_{t=0}^{T-1} \frac{1}{2\eta_t} \mathbb{E}[\|e_t\|_*^2] + \frac{4\sigma^2}{\eta}\log\frac{1}{\delta}$$
with probability at least $1 - \delta$, by the bound (31).

We now return to prove the intermediate claim (30). Let $X := \|e_t\|_*$. By assumption, we have $\mathbb{E}\exp(X^2/\sigma^2) \le \exp(1)$ (so, by Jensen's inequality, $\mathbb{E}X^2 \le \sigma^2$), and for $\lambda \in [0, 1]$ we see
$$P(X^2/\sigma^2 > \epsilon) \le \mathbb{E}[\exp(\lambda X^2/\sigma^2)] \exp(-\lambda\epsilon) \le \exp(\lambda - \lambda\epsilon).$$
Taking $\lambda = 1$ and replacing $\epsilon$ with $1 + \epsilon$, we have $P(X^2 > \sigma^2 + \epsilon\sigma^2) \le \exp(-\epsilon)$. If $\epsilon\sigma^2 \ge \sigma^2 - \mathbb{E}X^2$, then $\sigma^2 - \mathbb{E}X^2 + \epsilon\sigma^2 \le 2\epsilon\sigma^2$, so
$$P(X^2 > \mathbb{E}X^2 + 2\epsilon\sigma^2) \le P(X^2 > \sigma^2 + \epsilon\sigma^2) \le \exp(-\epsilon),$$
while for $\epsilon\sigma^2 < \sigma^2 - \mathbb{E}X^2$ we clearly have $P(X^2 - \mathbb{E}X^2 > \epsilon\sigma^2) \le 1 \le \exp(1)\exp(-\epsilon)$, since $\epsilon \le 1$. In either case, we have
$$P(X^2 - \mathbb{E}X^2 > \epsilon) \le \exp(1) \exp\left(-\frac{\epsilon}{2\sigma^2}\right).$$
For the opposite concentration inequality, we see that
$$P\big((\mathbb{E}X^2 - X^2)/\sigma^2 > \epsilon\big) \le \mathbb{E}[\exp(\lambda \mathbb{E}X^2/\sigma^2) \exp(-\lambda X^2/\sigma^2)] \exp(-\lambda\epsilon) \le \exp(\lambda - \lambda\epsilon),$$
or $P(X^2 - \mathbb{E}X^2 < -\sigma^2\epsilon) \le \exp(1)\exp(-\epsilon)$. Using the union bound, we have
$$P(|X^2 - \mathbb{E}X^2| > \epsilon) \le 2\exp(1) \exp\left(-\frac{\epsilon}{2\sigma^2}\right). \tag{32}$$
Now we apply Lemma 17 to the bound (32), with $a = 2e$ and $\alpha = 1/(2\sigma^2)$, to see that $\|e_t\|_*^2 - \mathbb{E}[\|e_t\|_*^2] = X^2 - \mathbb{E}[X^2]$ is sub-exponential with parameters $\Lambda = 1/(4\sigma^2)$ and $\tau^2 = 32e\sigma^4$.

E Properties of randomized smoothing

In this section, we discuss the analytic properties of the smoothed function $f_\mu$ from the convolution (3). We assume throughout that functions are sufficiently integrable without bothering with measurability conditions (since $F(\cdot;\xi)$ is convex, this is no real loss of generality [4, 31]). By Fubini's theorem, we have
$$f_\mu(x) = \int_{\mathbb{R}^d} \int_\Xi F(x + y; \xi)\, dP(\xi)\, \mu(y)\, dy = \int_\Xi \int_{\mathbb{R}^d} F(x + y; \xi)\, \mu(y)\, dy\, dP(\xi) = \int_\Xi F_\mu(x; \xi)\, dP(\xi).$$
Here $F_\mu(x;\xi) = (F(\cdot;\xi) * \mu(-\cdot))(x)$. We begin with the observation that, since $\mu$ is a density with respect to Lebesgue measure, the function $f_\mu$ is in fact differentiable [4]. So we have already made our problem somewhat smoother, as it is now differentiable; for the remainder, we consider finer properties of the smoothing operation. In particular, we will show that under suitable conditions on $\mu$, $F(\cdot;\xi)$, and $P$, the function $f_\mu$ is uniformly close to $f$ over $\mathcal{X}$ and $\nabla f_\mu$ is Lipschitz continuous.

E.1 Statements of smoothing lemmas

A remark on notation before proceeding: since $f$ is convex, it is almost-everywhere differentiable, and we can abuse notation and take its gradient inside of integrals and expectations with respect to Lebesgue measure.
Similarly, $F(\cdot;\xi)$ is almost everywhere differentiable with respect to Lebesgue measure, so we use the same abuse of notation for $F$ and write $\nabla F(x + Z; \xi)$, which exists with probability 1. We prove the following set of smoothness lemmas at the end of this section.

Lemma 6. Let $\mu$ be the uniform density on the $\ell_\infty$-ball of radius $u$. Assume that $\mathbb{E}[\|\partial F(x;\xi)\|_\infty^2] \le L_0^2$ for all $x \in \mathcal{X} + B_\infty(0, u)$. Then
(i) $f(x) \le f_\mu(x) \le f(x) + \frac{L_0 d}{2} u$.
(ii) $f_\mu$ is $L_0$-Lipschitz with respect to the $\ell_1$-norm over $\mathcal{X}$.
(iii) $f_\mu$ is continuously differentiable; moreover, its gradient is $\frac{L_0}{u}$-Lipschitz continuous with respect to the $\ell_1$-norm.
(iv) Let $Z \sim \mu$. Then $\mathbb{E}[\nabla F(x + Z; \xi)] = \nabla f_\mu(x)$ and $\mathbb{E}[\|\nabla f_\mu(x) - \nabla F(x + Z; \xi)\|_\infty^2] \le 4L_0^2$.
There exists a function $f$ for which each of the estimates (i)–(iii) is tight simultaneously, and (iv) is tight at least to a factor of $1/4$.

Remark: Note that the hypothesis of this lemma is satisfied if, for any fixed $\xi \in \Xi$, the function $F(\cdot;\xi)$ is $L_0$-Lipschitz with respect to the $\ell_1$-norm.

The following lemma provides bounds for uniform smoothing of functions Lipschitz with respect to the $\ell_2$-norm while sampling from an $\ell_\infty$-ball.

Lemma 7. Let $\mu$ be the uniform density on $B_\infty(0, u)$ and assume that $\mathbb{E}[\|\partial F(x;\xi)\|_2^2] \le L_0^2$ for $x \in \mathcal{X} + B_\infty(0, u)$. Then
(i) The function $f_\mu$ satisfies the bounds $f(x) \le f_\mu(x) \le f(x) + L_0 u \sqrt{d}$.
(ii) The function $f_\mu$ is $L_0$-Lipschitz over $\mathcal{X}$.
(iii) The function $f_\mu$ is continuously differentiable; moreover, its gradient is $\frac{2\sqrt{d} L_0}{u}$-Lipschitz continuous.
(iv) For random variables $Z \sim \mu$ and $\xi \sim P$, we have $\mathbb{E}[\nabla F(x + Z; \xi)] = \nabla f_\mu(x)$ and $\mathbb{E}[\|\nabla f_\mu(x) - \nabla F(x + Z; \xi)\|_2^2] \le L_0^2$. The latter estimate is tight.

A similar lemma can be proved when $\mu$ is the density of the uniform distribution on $B_2(0, u)$.
In this case, Yousefian et al. give (i)–(iii) of the following lemma [38].

Lemma 8 (Yousefian, Nedić, Shanbhag). Let $f_\mu$ be defined as in (3), where $\mu$ is the uniform density on the $\ell_2$-ball of radius $u$. Assume that $\mathbb{E}[\|\partial F(x;\xi)\|_2^2] \le L_0^2$ for $x \in \mathcal{X} + B_2(0, u)$. Then
(i) $f(x) \le f_\mu(x) \le f(x) + L_0 u$.
(ii) $f_\mu$ is $L_0$-Lipschitz over $\mathcal{X}$.
(iii) $f_\mu$ is continuously differentiable; moreover, its gradient is $\frac{L_0 \sqrt{d}}{u}$-Lipschitz continuous.
(iv) Let $Z \sim \mu$. Then $\mathbb{E}[\nabla F(x + Z; \xi)] = \nabla f_\mu(x)$ and $\mathbb{E}[\|\nabla f_\mu(x) - \nabla F(x + Z; \xi)\|_2^2] \le L_0^2$.
In addition, there exists a function $f$ for which each of the bounds (i)–(iv) is tight (cannot be improved by more than a constant factor) simultaneously.

Lastly, for situations in which $F(\cdot;\xi)$ is $L_0$-Lipschitz with respect to the $\ell_2$-norm over all of $\mathbb{R}^d$ and for $P$-a.e. $\xi$, we can use the normal distribution to perform smoothing of the expected function $f$. The following lemma is similar to a result of Lakshmanan and de Farias [17, Lemma 3.3], but they consider functions Lipschitz continuous with respect to the $\ell_\infty$-norm, i.e., $|f(x) - f(y)| \le L \|x - y\|_\infty$, which is too stringent for our purposes, and we carefully quantify the dependence on the dimension of the underlying problem.

Lemma 9. Let $\mu$ be the $N(0, u^2 I_{d \times d})$ distribution. Assume that $F(\cdot;\xi)$ is $L_0$-Lipschitz with respect to the $\ell_2$-norm, that is, $\sup\{\|g\|_2 \mid g \in \partial F(x;\xi), x \in \mathcal{X}\} \le L_0$ for $P$-a.e. $\xi$. Then the following properties hold:
(i) $f(x) \le f_\mu(x) \le f(x) + L_0 u \sqrt{d}$.
(ii) $f_\mu$ is $L_0$-Lipschitz with respect to the $\ell_2$-norm.
(iii) $f_\mu$ is continuously differentiable; moreover, its gradient is $\frac{L_0}{u}$-Lipschitz continuous with respect to the $\ell_2$-norm.
(iv) Let $Z \sim \mu$. Then $\mathbb{E}[\nabla F(x + Z; \xi)] = \nabla f_\mu(x)$ and $\mathbb{E}[\|\nabla f_\mu(x) - \nabla F(x + Z; \xi)\|_2^2] \le L_0^2$.
In addition, there exists a function $f$ for which each of the bounds (i)–(iv) cannot be improved by more than a constant factor.

Our final lemma illustrates the sharpness of the bounds we have proved for functions that are Lipschitz with respect to the $\ell_2$-norm. Specifically, we show that, at least for the normal and uniform distributions, it is impossible to get more favorable tradeoffs between the uniform approximation error of the smoothed function $f_\mu$ and the Lipschitz continuity of $\nabla f_\mu$. We begin with the following definitions of our two types of error, and then give the lemma:
$$E_U(f) := \inf\left\{ L \in \mathbb{R} \;\middle|\; \sup_{x \in \mathcal{X}} |f(x) - f_\mu(x)| \le L \right\} \tag{33}$$
$$E_\nabla(f) := \inf\left\{ L \in \mathbb{R} \;\middle|\; \|\nabla f_\mu(x) - \nabla f_\mu(y)\|_2 \le L \|x - y\|_2 \ \ \forall x, y \in \mathcal{X} \right\} \tag{34}$$

Lemma 10. For $\mu$ equal to either the uniform distribution on $B_2(0, u)$ or $N(0, u^2 I_{d \times d})$, there exist an $L_0$-Lipschitz continuous function $f$ and a dimension-independent constant $c > 0$ such that
$$E_U(f)\, E_\nabla(f) \ge c L_0^2 \sqrt{d}.$$

Remark: The importance of the above bound is made clear by inspecting the convergence guarantee of Theorem 1. The terms $L_1$ and $L_0$ in the bound (8) can be replaced with $E_\nabla(f)$ and $E_U(f)$, respectively. Minimizing over $u$, we see that the leading term in the convergence guarantee (8) is of order
$$\frac{\sqrt{E_\nabla(f)\, E_U(f)\, \psi(x^*)}}{T} \ge \frac{c L_0 d^{1/4} \sqrt{\psi(x^*)}}{T}.$$
In particular, this result shows that our analysis of the dimension dependence of the randomized smoothing in Lemmas 8 and 9 is sharp and cannot be improved by more than a constant factor (see also Corollaries 1 and 2).

E.2 Proof of smoothing lemmas

The following technical lemma is a building block for our results; we provide a proof in Section E.2.5.

Lemma 11. Let $f$ be convex and $L_0$-Lipschitz continuous with respect to a norm $\|\cdot\|$ over the domain $\operatorname{supp}\mu + \mathcal{X}$. Let $Z$ be distributed according to the distribution $\mu$.
Then
$$\|\nabla f_\mu(x) - \nabla f_\mu(y)\|_* = \|\mathbb{E}[\nabla f(x + Z) - \nabla f(y + Z)]\|_* \le L_0 \int |\mu(z - x) - \mu(z - y)|\, dz. \tag{35}$$
If the norm $\|\cdot\|$ is the $\ell_2$-norm and the density $\mu(z)$ is rotationally symmetric and non-increasing as a function of $\|z\|_2$, the bound (35) is tight; specifically, it is attained by the function $f(x) = L_0 \left| \left\langle \frac{y}{\|y\|_2}, x \right\rangle - \frac{1}{2} \right|$.

E.2.1 Proof of Lemma 6

Throughout, we let $Z \sim \mu$, where $\mu$ is the uniform density on $B_\infty(0, u)$, and let $h_u(x)$ denote the (shifted) Huber loss
$$h_u(x) = \begin{cases} \dfrac{x^2}{2u} + \dfrac{u}{2} & \text{for } x \in [-u, u], \\ |x| & \text{otherwise}. \end{cases} \tag{36}$$
Now we prove each of the parts of the lemma in turn.

(i) Since $\mathbb{E}[Z] = 0$, Jensen's inequality shows $f(x) = f(x + \mathbb{E}[Z]) \le \mathbb{E}[f(x + Z)] = f_\mu(x)$, by definition of $f_\mu$. Now recall the definition $\|\partial f(x)\| = \sup\{\|g\| \mid g \in \partial f(x)\}$ from the introduction. To get the upper uniform bound, note first that by assumption $f$ is $L_0$-Lipschitz continuous over $\mathcal{X} + B_\infty(0, u)$, since
$$\|\partial f(x)\|_\infty \le \mathbb{E}[\|\partial F(x;\xi)\|_\infty] \le \sqrt{\mathbb{E}[\|\partial F(x;\xi)\|_\infty^2]} \le L_0,$$
again using Jensen's inequality. Thus, since $f$ is $L_0$-Lipschitz with respect to the $\ell_1$-norm and each coordinate satisfies $\mathbb{E}|Z_i| = u/2$,
$$f_\mu(x) = \mathbb{E}[f(x + Z)] \le \mathbb{E}[f(x)] + L_0 \mathbb{E}[\|Z\|_1] = f(x) + \frac{d L_0 u}{2}.$$
To see that the estimate is tight, note that for $f(x) = \|x\|_1$ we have $f_\mu(x) = \sum_{i=1}^d h_u(x_i)$, where $h_u$ is the shifted Huber loss (36), and $f_\mu(0) = du/2$, while $f(0) = 0$.

(ii) We now prove that $f_\mu$ is $L_0$-Lipschitz with respect to $\|\cdot\|_1$. Under the stated conditions, we have $\partial f(x) = \mathbb{E}[\partial F(x;\xi)]$, which shows that $\|\partial f(x)\|_\infty^2 \le \mathbb{E}[\|\partial F(x;\xi)\|_\infty^2] \le L_0^2$. Thus, we obtain the upper bound
$$\|\nabla f_\mu(x)\|_\infty = \|\mathbb{E}[\nabla f(x + Z)]\|_\infty \le \mathbb{E}[\|\nabla f(x + Z)\|_\infty] \le L_0.$$
Tightness follows again by considering $f(x) = \|x\|_1$, where $L_0 = 1$.
(iii) Recall that differentiability is directly implied by earlier work of Bertsekas [4]. Since $f$ is a.e.-differentiable, we have $\nabla f_\mu(x) = \mathbb{E}[\nabla f(x + Z)]$ for $Z$ uniform on $[-u, u]^d$. We now establish Lipschitz continuity of $\nabla f_\mu(x)$. For a fixed pair $x, y \in \mathcal{X} + B_\infty(0, u)$, we have from Lemma 11
$$\|\mathbb{E}[\nabla f(x + Z)] - \mathbb{E}[\nabla f(y + Z)]\|_\infty \le L_0 \cdot \frac{1}{(2u)^d} \lambda\big(B_\infty(x, u)\, \Delta\, B_\infty(y, u)\big),$$
where $\lambda$ denotes Lebesgue measure and $\Delta$ denotes the symmetric set difference. By a straightforward geometric calculation, we see that
$$\lambda\big(B_\infty(x, u)\, \Delta\, B_\infty(y, u)\big) = 2\left( (2u)^d - \prod_{i=1}^d [2u - |x_i - y_i|]_+ \right). \tag{37}$$
To control the volume term (37) and complete the proof, we need an auxiliary lemma (which we prove at the end of this subsection).

Lemma 12. Let $a \in \mathbb{R}^d_+$ and $u \in \mathbb{R}_+$. Then $\prod_{i=1}^d [u - a_i]_+ \ge u^d - \|a\|_1 u^{d-1}$.

The volume (37) is easy to control using Lemma 12, applied with $2u$ in place of $u$ and $a_i = |x_i - y_i|$. Indeed, we have
$$\frac{1}{2} \lambda\big(B_\infty(x, u)\, \Delta\, B_\infty(y, u)\big) \le (2u)^d - (2u)^d + \|x - y\|_1 (2u)^{d-1},$$
which implies the desired result, that is,
$$\|\mathbb{E}[\nabla f(x + Z)] - \mathbb{E}[\nabla f(y + Z)]\|_\infty \le \frac{L_0 \|x - y\|_1}{u}.$$
To see the tightness claimed in the lemma, consider as usual $f(x) = \|x\|_1$, and let $e_i$ denote the $i$th standard basis vector. Then $L_0 = 1$, $\nabla f_\mu(0) = 0$, $\nabla f_\mu(u e_i) = e_i$, and $\|\nabla f_\mu(0) - \nabla f_\mu(u e_i)\|_\infty = 1 = \frac{L_0}{u} \|0 - u e_i\|_1$.

(iv) The equality $\mathbb{E}[\nabla F(x + Z; \xi)] = \nabla f_\mu(x)$ follows from Fubini's theorem. The second statement is simply a consequence of the triangle inequality, together with $(a + b)^2 \le 2a^2 + 2b^2$. Finally, the tightness follows from the following one-dimensional example. Let $f(x) = L_0 |x|$ for $x \in \mathbb{R}$ and $L_0 > 0$. Then $f_\mu(x)$ is $L_0$ times the Huber loss $h_u(x)$ defined earlier, and $f_\mu'(0) = 0$. Thus, for $Z$ uniform on $[-u, u]$,
$$\mathbb{E}\big(f_\mu'(0) - f'(Z)\big)^2 = \mathbb{E}[L_0^2 \operatorname{sign}(Z)^2] = L_0^2,$$
which is the square of the Lipschitz constant of $f$.
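The tightness example running through this proof, $f(x) = \|x\|_1$ smoothed by the uniform density on $B_\infty(0,u)$, is easy to check numerically. The Python sketch below is our illustration (helper names are hypothetical): it compares $h_u$ from (36) with a midpoint-rule evaluation of $\mathbb{E}|x + Z|$ for $Z$ uniform on $[-u,u]$, evaluates $f_\mu(x) = \sum_i h_u(x_i)$, and uses the coordinatewise derivative $h_u'(x) = \max\{-1, \min\{1, x/u\}\}$, obtained by differentiating (36), to reproduce the tight cases of parts (i) and (iii).

```python
def huber_shifted(x, u):
    """Shifted Huber loss h_u from (36)."""
    return x * x / (2.0 * u) + u / 2.0 if abs(x) <= u else abs(x)


def smoothed_abs_midpoint(x, u, cells=20000):
    """E|x + Z| for Z ~ Uniform[-u, u], by the midpoint rule on `cells` subintervals."""
    width = 2.0 * u / cells
    return sum(abs(x - u + (k + 0.5) * width) for k in range(cells)) / cells


def smoothed_l1(x, u):
    """f_mu(x) = sum_i h_u(x_i) for f(x) = ||x||_1."""
    return sum(huber_shifted(xi, u) for xi in x)


def smoothed_l1_grad(x, u):
    """Gradient of f_mu: coordinatewise derivative of h_u, i.e. clip(x_i / u, -1, 1)."""
    return [max(-1.0, min(1.0, xi / u)) for xi in x]


u, d = 0.5, 4
# Part (i): the gap f_mu(0) - f(0) equals d * u / 2.
print(smoothed_l1([0.0] * d, u))  # 1.0
# Part (iii): ||grad f_mu(0) - grad f_mu(u e_1)||_inf = 1 = ||0 - u e_1||_1 / u.
e1 = [u] + [0.0] * (d - 1)
zero_grad = smoothed_l1_grad([0.0] * d, u)
print(max(abs(a - b) for a, b in zip(zero_grad, smoothed_l1_grad(e1, u))))  # 1.0
```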
Proof of Lemma 12. We begin by noting that the statement of the lemma trivially holds whenever $\|a\|_1 \ge u$, as the right-hand side of the inequality is then non-positive. Now fix some $c < u$, and consider the problem
$$\min_a \prod_{i=1}^d (u - a_i)_+ \quad \text{s.t.} \quad a \succeq 0,\ \|a\|_1 \le c. \tag{38}$$
We show that the minimum is achieved when one index is set to $a_i = c$ and the rest to 0. Indeed, suppose for the sake of contradiction that $\tilde{a}$ is the solution to (38) but that there are indices $i, j$ with $\tilde{a}_i \ge \tilde{a}_j > 0$, that is, at least two non-zero indices. By taking a logarithm, it is clear that minimizing the objective (38) is equivalent to minimizing $\sum_{i=1}^d \log(u - a_i)$. Taking the derivative of $\log(u - a_i)$ for $i$ and $j$, we see that
$$\frac{\partial}{\partial a_i} \log(u - a_i) = -\frac{1}{u - a_i} \le -\frac{1}{u - a_j} = \frac{\partial}{\partial a_j} \log(u - a_j).$$
Since $-\frac{1}{u - a}$ is a decreasing function of $a$, increasing $a_i$ slightly and decreasing $a_j$ slightly causes $\log(u - a_i)$ to decrease faster than $\log(u - a_j)$ increases, thus decreasing the overall objective. This is the desired contradiction. Taking $c = \|a\|_1$, the minimum value of (38) is $(u - \|a\|_1) u^{d-1} = u^d - \|a\|_1 u^{d-1}$, which proves the lemma.

E.2.2 Proof of Lemma 7

The proof of this lemma is nearly identical to the proof of Lemma 6, though we replace $\|\cdot\|_\infty$ norms with $\|\cdot\|_2$. We prove each of the statements in turn, and throughout let $Z$ denote a variable distributed uniformly on $B_\infty(0, u)$.

(i) Jensen's inequality implies that $f(x) = f(x + \mathbb{E}[Z]) \le \mathbb{E}[f(x + Z)] = f_\mu(x)$. For the upper bound on $f_\mu$, use the Lipschitz continuity of $f$ and Jensen's inequality to see that
$$f_\mu(x) \le f(x) + L_0 \mathbb{E}[\|Z\|_2] \le f(x) + L_0 \sqrt{\mathbb{E}[\|Z\|_2^2]} = f(x) + L_0 \sqrt{\frac{d u^2}{3}},$$
which is at most $f(x) + L_0 u \sqrt{d}$.

(ii) As earlier, since $\mathbb{E}[\nabla f(x + Z)] = \nabla f_\mu(x)$, we have $\|\mathbb{E}[\nabla f(x + Z)]\|_2 \le \mathbb{E}[\|\nabla f(x + Z)\|_2] \le L_0$.
(iii) Using the same sequence of steps as in the proof of part (iii) of Lemma 6, we see that
$$\|\nabla f_\mu(x) - \nabla f_\mu(y)\|_2 \le \frac{L_0}{(2u)^d} \lambda\big(B_\infty(x, u)\, \Delta\, B_\infty(y, u)\big) \le \frac{2 L_0}{(2u)^d} (2u)^{d-1} \|x - y\|_1 \le \frac{L_0 \sqrt{d}}{u} \|x - y\|_2.$$

(iv) As in the proof of Lemma 6, Fubini's theorem implies the first part of the statement, while the second part is a consequence of the fact that
$$\mathbb{E}[\|\nabla f_\mu(x) - \nabla F(x + Z; \xi)\|_2^2] = \mathbb{E}[\|\nabla F(x + Z; \xi)\|_2^2] - \|\nabla f_\mu(x)\|_2^2 \le L_0^2$$
by the assumptions on $F$. Tightness follows from considering the one-dimensional function $f(x) = |x|$ as earlier.

E.2.3 Proof of Lemma 9

Throughout this proof, we use $Z$ to denote a random variable distributed as $N(0, u^2 I)$.

(i) As in the earlier lemmas, Jensen's inequality gives $f(x) = f(x + \mathbb{E}Z) \le \mathbb{E} f(x + Z) = f_\mu(x)$. Our assumption on $\partial F(\cdot;\xi)$ implies that $f$ is $L_0$-Lipschitz, so
$$f_\mu(x) = \mathbb{E}[f(x + Z)] \le \mathbb{E}[f(x)] + L_0 \mathbb{E}[\|Z\|_2] \le f(x) + L_0 \sqrt{\mathbb{E}[\|Z\|_2^2]} = f(x) + L_0 u \sqrt{d}.$$

(ii) This proof is analogous to that of part (ii) of Lemmas 6 and 7. The tightness of the Lipschitz constant can be verified by taking $f(x) = \langle v, x \rangle$ for $v \in \mathbb{R}^d$, in which case $f_\mu(x) = f(x)$, and both have gradient $v$.

(iii) Now we show that $\nabla f_\mu$ is Lipschitz continuous. Indeed, applying Lemma 11 we have
$$\|\nabla f_\mu(x) - \nabla f_\mu(y)\|_2 \le L_0 \underbrace{\int |\mu(z - x) - \mu(z - y)|\, dz}_{I_2}. \tag{39}$$
What remains is to control the integral term (39), denoted $I_2$. In order to do so, we follow a technique used by Lakshmanan and Pucci de Farias [17]. Since $\mu$ satisfies $\mu(z - x) \ge \mu(z - y)$ if and only if $\|z - x\|_2 \le \|z - y\|_2$, we have
$$I_2 = \int |\mu(z - x) - \mu(z - y)|\, dz = 2 \int_{z : \|z - x\|_2 \le \|z - y\|_2} \big(\mu(z - x) - \mu(z - y)\big)\, dz.$$
By making the change of variable $w = z - x$ for the $\mu(z - x)$ term in $I_2$ and $w = z - y$ for $\mu(z - y)$, we rewrite $I_2$ as
$$\begin{aligned} I_2 &= 2 \int_{w : \|w\|_2 \le \|w - (x - y)\|_2} \mu(w)\, dw - 2 \int_{w : \|w\|_2 \ge \|w - (x - y)\|_2} \mu(w)\, dw \\ &= 2 P_\mu\big(\|Z\|_2 \le \|Z - (x - y)\|_2\big) - 2 P_\mu\big(\|Z\|_2 \ge \|Z - (x - y)\|_2\big), \end{aligned}$$
where $P_\mu$ denotes probability according to the density $\mu$. Squaring the terms inside the probability bounds, we note that
$$P_\mu\big(\|Z\|_2^2 \le \|Z - (x - y)\|_2^2\big) = P_\mu\big(2 \langle Z, x - y \rangle \le \|x - y\|_2^2\big) = P_\mu\left(2 \left\langle Z, \frac{x - y}{\|x - y\|_2} \right\rangle \le \|x - y\|_2\right).$$
Since $(x - y)/\|x - y\|_2$ has norm 1 and $Z \sim N(0, u^2 I)$ is rotationally invariant, the random variable $W = \left\langle Z, \frac{x - y}{\|x - y\|_2} \right\rangle$ has distribution $N(0, u^2)$. Consequently, we have
$$\begin{aligned} \frac{I_2}{2} &= P(W \le \|x - y\|_2/2) - P(W \ge \|x - y\|_2/2) \\ &= \int_{-\infty}^{\|x - y\|_2/2} \frac{1}{\sqrt{2\pi u^2}} \exp\left(-\frac{w^2}{2u^2}\right) dw - \int_{\|x - y\|_2/2}^{\infty} \frac{1}{\sqrt{2\pi u^2}} \exp\left(-\frac{w^2}{2u^2}\right) dw \\ &\le \frac{1}{u\sqrt{2\pi}} \|x - y\|_2, \end{aligned}$$
where we have exploited symmetry and the inequality $\exp(-w^2) \le 1$. Combining this bound with the earlier inequality (39), we have
$$\|\nabla f_\mu(x) - \nabla f_\mu(y)\|_2 \le \frac{2 L_0}{u \sqrt{2\pi}} \|x - y\|_2 \le \frac{L_0}{u} \|x - y\|_2.$$

(iv) The proof of the variance bound is identical to that for Lemma 7. That each of the bounds above is tight is a consequence of Lemma 10.

E.2.4 Proof of Lemma 10

Throughout this proof, $c$ will denote a dimension-independent constant and may change from line to line and inequality to inequality. We will show the result holds by considering a convex combination of "difficult" functions, in this case $f_1(x) = L_0 \|x\|_2$ and $f_2(x) = L_0 |\langle x, y/\|y\|_2 \rangle - 1/2|$, and choosing $f = \frac{1}{2} f_1 + \frac{1}{2} f_2$. Our first step in the proof will be to control $E_U$. By definition of the constant $E_U$ in Eq. (33), for any convex $f_1$ and $f_2$ we have
$$E_U\left(\tfrac{1}{2} f_1 + \tfrac{1}{2} f_2\right) \ge \tfrac{1}{2} \max\{E_U(f_1), E_U(f_2)\}.$$
Thus, for $Z \sim N(0, u^2 I_{d \times d})$ we have $\mathbb{E}[f_1(Z)] \ge c L_0 u \sqrt{d}$, i.e., $E_U(f) \ge c L_0 u \sqrt{d}$, and for $Z$ uniform on $B_2(0, u)$ we have $\mathbb{E}[f_1(Z)] \ge c L_0 u$, i.e., $E_U(f) \ge c L_0 u$. Turning to control of $E_\nabla$, we note that for any random variable $Z$ rotationally symmetric about the origin, symmetry implies that
$$\mathbb{E}[\nabla f_1(Z + y)] = L_0 \mathbb{E}\left[\frac{Z + y}{\|Z + y\|_2}\right] = a_z y,$$
where $a_z > 0$ is a constant dependent on $Z$. Thus we have
$$\mathbb{E}[\nabla f_1(Z)] - \mathbb{E}[\nabla f_1(Z + y)] + \mathbb{E}[\nabla f_2(Z)] - \mathbb{E}[\nabla f_2(Z + y)] = 0 - a_z y - L_0 \frac{y}{\|y\|_2} \int |\mu(z) - \mu(z - y)|\, dz$$
from Lemma 11. As a consequence (since $a_z y$ is parallel to $y/\|y\|_2$), and dividing by $\|0 - y\|_2 = \|y\|_2$, we see that
$$E_\nabla\left(\tfrac{1}{2} f_1 + \tfrac{1}{2} f_2\right) \ge \frac{L_0}{2\|y\|_2} \int |\mu(z) - \mu(z - y)|\, dz.$$
So what remains is to lower bound $\int |\mu(z) - \mu(z - y)|\, dz$ for the uniform and normal distributions. As we saw in the proof of Lemma 9, for the normal distribution
$$\int |\mu(z) - \mu(z - y)|\, dz = \frac{2}{u\sqrt{2\pi}} \int_{-\|y\|_2/2}^{\|y\|_2/2} \exp\left(-\frac{w^2}{2u^2}\right) dw = \frac{2}{u\sqrt{2\pi}} \left( \|y\|_2 + O\left(\frac{\|y\|_2^2}{u}\right) \right).$$
By taking small enough $\|y\|_2$, we achieve the inequality $E_\nabla\left(\frac{1}{2} f_1 + \frac{1}{2} f_2\right) \ge c \frac{L_0}{u}$ when $Z \sim N(0, u^2 I_{d \times d})$. To show that the bound in the lemma is sharp for the case of the uniform distribution on $B_2(0, u)$, we slightly modify the proof of Lemma 2 in [38]. In particular, by using a Taylor expansion instead of first-order convexity in inequality (11) of [38], it is not difficult to show that
$$\int |\mu(z) - \mu(z - y)|\, dz = \kappa \frac{d!!}{(d - 1)!!} \frac{\|y\|_2}{u} + O\left(\frac{d \|y\|_2^2}{u^2}\right),$$
where $\kappa = 2/\pi$ if $d$ is even and $\kappa = 1$ otherwise. Since $d!!/(d - 1)!! = \Theta(\sqrt{d})$, we have proved that for small enough $\|y\|_2$ there is a constant $c$ such that $\int |\mu(z) - \mu(z - y)|\, dz \ge c \sqrt{d} \|y\|_2 / u$, and hence $E_\nabla\left(\frac{1}{2} f_1 + \frac{1}{2} f_2\right) \ge c L_0 \sqrt{d}/u$ in this case. Multiplying the lower bounds on $E_\nabla$ by the corresponding lower bounds on $E_U$ ($c L_0 u \sqrt{d}$ for the normal distribution and $c L_0 u$ for the uniform distribution) yields $E_U(f)\, E_\nabla(f) \ge c L_0^2 \sqrt{d}$ in both cases.

E.2.5 Proof of Lemma 11

Without loss of generality, we assume that $x = 0$ (a linear change of variables allows this).
Let $g : \mathbb{R}^d \to \mathbb{R}^d$ be a vector-valued function such that $\|g(z)\|_* \le L_0$ for all $z \in \{y\} + \operatorname{supp}\mu$. Then
$$\begin{aligned} \mathbb{E}[g(Z) - g(y + Z)] &= \int g(z) \mu(z)\, dz - \int g(y + z) \mu(z)\, dz = \int g(z) \mu(z)\, dz - \int g(z) \mu(z - y)\, dz \\ &= \int_{I_>} g(z) [\mu(z) - \mu(z - y)]\, dz - \int_{I_<} g(z) [\mu(z - y) - \mu(z)]\, dz, \end{aligned} \tag{40}$$
where $I_> = \{z \in \mathbb{R}^d \mid \mu(z) > \mu(z - y)\}$ and $I_< = \{z \in \mathbb{R}^d \mid \mu(z) < \mu(z - y)\}$. It is now clear that when we take norms we have
$$\begin{aligned} \|\mathbb{E}[g(Z) - g(y + Z)]\|_* &\le \sup_{z \in I_> \cup I_<} \|g(z)\|_* \left( \int_{I_>} [\mu(z) - \mu(z - y)]\, dz + \int_{I_<} [\mu(z - y) - \mu(z)]\, dz \right) \\ &\le L_0 \left( \int_{I_>} [\mu(z) - \mu(z - y)]\, dz + \int_{I_<} [\mu(z - y) - \mu(z)]\, dz \right) = L_0 \int |\mu(z) - \mu(z - y)|\, dz. \end{aligned}$$
Taking $g(z)$ to be an arbitrary element of $\partial f(z)$ completes the proof of the bound (35).

To see that the result is tight when $\mu$ is rotationally symmetric and the norm $\|\cdot\| = \|\cdot\|_2$, we note the following. From the equality (40), we see that $\|\mathbb{E}[g(Z) - g(y + Z)]\|_2$ is maximized by choosing $g(z) = v$ for $z \in I_>$ and $g(z) = -v$ for $z \in I_<$, for any $v$ such that $\|v\|_2 = L_0$. Since $\mu$ is rotationally symmetric and non-increasing in $\|z\|_2$,
$$\begin{aligned} I_> &= \{z \in \mathbb{R}^d \mid \mu(z) > \mu(z - y)\} = \{z \in \mathbb{R}^d \mid \|z\|_2^2 < \|z - y\|_2^2\} = \left\{z \in \mathbb{R}^d \;\middle|\; \langle z, y \rangle < \tfrac{1}{2} \|y\|_2^2\right\}, \\ I_< &= \{z \in \mathbb{R}^d \mid \mu(z) < \mu(z - y)\} = \{z \in \mathbb{R}^d \mid \|z\|_2^2 > \|z - y\|_2^2\} = \left\{z \in \mathbb{R}^d \;\middle|\; \langle z, y \rangle > \tfrac{1}{2} \|y\|_2^2\right\}. \end{aligned}$$
So all we need do is find a function $f$ for which there exists $v$ with $\|v\|_2 = L_0$ such that $\partial f(x) = \{v\}$ for $x \in I_>$ and $\partial f(x) = \{-v\}$ for $x \in I_<$. By inspection, the function $f$ defined in the statement of the lemma satisfies these two desiderata for $v = L_0 \frac{y}{\|y\|_2}$.
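Lemmas 9 through 11 all turn on the quantity $\int |\mu(z) - \mu(z - y)|\, dz$, and a short Python sketch makes its dimension dependence visible. It is our illustration, with hypothetical helper names. For $\mu = N(0, u^2 I)$, the expression for $I_2/2$ in the proof of Lemma 9 evaluates to $\mathrm{erf}(\|y\|_2/(2\sqrt{2}u))$ in every dimension; the code checks this against a Monte Carlo estimate of $\mathbb{E}_{Z \sim \mu} |1 - \mu(Z - y)/\mu(Z)|$ and against the bound $2\|y\|_2/(u\sqrt{2\pi})$. For the uniform density on $B_2(0,u)$, it computes the leading coefficient $\kappa\, d!!/(d-1)!!$ from the proof of Lemma 10 via the recursion $r_d\, r_{d-1} = d$ for $r_d = d!!/(d-1)!!$, which exhibits the $\Theta(\sqrt{d})$ growth.

```python
import math
import random


def gaussian_overlap_closed_form(r, u):
    """int |mu(z) - mu(z - y)| dz for mu = N(0, u^2 I) and ||y||_2 = r, in any dimension."""
    return 2.0 * math.erf(r / (2.0 * math.sqrt(2.0) * u))


def gaussian_overlap_monte_carlo(y, u, n=100000, seed=0):
    """Estimate the same integral as E_{Z ~ mu} |1 - mu(Z - y) / mu(Z)|."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        z = [rng.gauss(0.0, u) for _ in y]
        sq_z = sum(zi * zi for zi in z)
        sq_shift = sum((zi - yi) ** 2 for zi, yi in zip(z, y))
        # Likelihood ratio mu(z - y) / mu(z) for the isotropic Gaussian density.
        total += abs(1.0 - math.exp((sq_z - sq_shift) / (2.0 * u * u)))
    return total / n


def double_factorial_ratio(d):
    """d!! / (d - 1)!! via r_1 = 1 and r_d = d / r_{d-1}, avoiding huge integers."""
    r = 1.0
    for k in range(2, d + 1):
        r = k / r
    return r


def uniform_ball_coefficient(d):
    """kappa * d!! / (d - 1)!!, with kappa = 2/pi for even d and 1 for odd d."""
    kappa = 2.0 / math.pi if d % 2 == 0 else 1.0
    return kappa * double_factorial_ratio(d)


y5 = [0.6, 0.0, 0.8, 0.0, 0.0]  # ||y||_2 = 1 in dimension 5
print(gaussian_overlap_closed_form(1.0, 1.0), gaussian_overlap_monte_carlo(y5, 1.0))
for d in (10, 100, 1000):
    # The ratio to sqrt(d) settles near sqrt(2 / pi), i.e. Theta(sqrt(d)) growth.
    print(d, uniform_ball_coefficient(d) / math.sqrt(d))
```

The contrast is the point of Lemma 10: Gaussian smoothing keeps this integral dimension-free (paying $\sqrt{d}$ in $E_U$ instead), while uniform smoothing on the $\ell_2$-ball pays $\sqrt{d}$ in $E_\nabla$.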
F Sub-Gaussian and sub-exponential tail bounds

For reference purposes, we state here some standard definitions and facts about sub-Gaussian and sub-exponential random variables (see the books [7, 21, 35] for further details).

F.1 Sub-Gaussian variables

This class of random variables is characterized by a quadratic upper bound on the moment generating function:

Definition F.1. A zero-mean random variable $X$ is called sub-Gaussian with parameter $\sigma^2$ if $\mathbb{E}\exp(\lambda X) \le \exp(\sigma^2 \lambda^2 / 2)$ for all $\lambda \in \mathbb{R}$.

Remarks: If $X_i$, $i = 1, \ldots, n$, are independent sub-Gaussian with parameter $\sigma^2$, it follows from this definition that $\frac{1}{n} \sum_{i=1}^n X_i$ is sub-Gaussian with parameter $\sigma^2/n$. Moreover, it is well known that any zero-mean random variable $X$ satisfying $|X| \le C$ is sub-Gaussian with parameter $\sigma^2 \le C^2$.

Lemma 13 (Buldygin and Kozachenko [7], Lemma 1.6). Let $X - \mathbb{E}X$ be sub-Gaussian with parameter $\sigma^2$. Then for $s \in [0, 1)$,
$$\mathbb{E}\exp\left(\frac{s X^2}{2\sigma^2}\right) \le \frac{1}{\sqrt{1 - s}} \exp\left(\frac{(\mathbb{E}X)^2}{2\sigma^2} \cdot \frac{s}{1 - s}\right).$$

The squared maximum of $d$ sub-Gaussian random variables grows logarithmically in $d$, as shown by the following result:

Lemma 14. Let $X \in \mathbb{R}^d$ be a random vector with sub-Gaussian components, each with parameter at most $\sigma^2$. Then $\mathbb{E}\|X\|_\infty^2 \le \max\{6\sigma^2 \log d, 2\sigma^2\}$.

Using the definition of sub-Gaussianity, the result can be proved by a combination of union bounds and Chernoff's inequality (see van der Vaart and Wellner [35, Lemma 2.2.2] or Buldygin and Kozachenko [7, Chapter II] for details).

The following martingale-based bound for variables with conditionally sub-Gaussian behavior is essentially standard [2, 13, 7].

Lemma 15 (Azuma-Hoeffding).
Let $X_i$ be a martingale difference sequence adapted to the filtration $\mathcal{F}_i$, and assume that each $X_i$ is conditionally sub-Gaussian with parameter $\sigma_i^2$, meaning that $\mathbb{E}[\exp(\lambda X_i) \mid \mathcal{F}_{i-1}] \le \exp(\lambda^2 \sigma_i^2 / 2)$. Then for all $\epsilon > 0$,
$$P\left(\frac{1}{n} \sum_{i=1}^n X_i \ge \epsilon\right) \le \exp\left(-\frac{n \epsilon^2}{2 \sum_{i=1}^n \sigma_i^2 / n}\right). \tag{41}$$

The next lemma uses martingale techniques to establish the sub-Gaussianity of a normed sum:

Lemma 16. Let $X_1, \ldots, X_n$ be independent random vectors with $\|X_i\| \le L$ for all $i$. Define $S_n = \sum_{i=1}^n X_i$. Then $\|S_n\| - \mathbb{E}\|S_n\|$ is sub-Gaussian with parameter at most $4nL^2$.

Proof. The proof follows from the realization that when $\|X_i\| \le L$, the quantity $\|S_n\| - \mathbb{E}\|S_n\|$ can be controlled using single-dimensional martingale techniques [21, Chapter 6]. We construct the Doob martingale for the sequence $X_i$. Let $\mathcal{F}_i$ be the $\sigma$-field of $X_1, \ldots, X_i$ and define the real-valued random variables $Z_i = \mathbb{E}[\|S_n\| \mid \mathcal{F}_i] - \mathbb{E}[\|S_n\| \mid \mathcal{F}_{i-1}]$, where $\mathcal{F}_0$ is the trivial $\sigma$-field. Let $S_{n \setminus i} = \sum_{j \ne i} X_j$. Then $\mathbb{E}[Z_i \mid \mathcal{F}_{i-1}] = 0$ and
$$\begin{aligned} |Z_i| &= \big| \mathbb{E}[\|S_n\| \mid \mathcal{F}_{i-1}] - \mathbb{E}[\|S_n\| \mid \mathcal{F}_i] \big| \\ &\le \big| \mathbb{E}[\|S_{n \setminus i}\| \mid \mathcal{F}_{i-1}] - \mathbb{E}[\|S_{n \setminus i}\| \mid \mathcal{F}_i] \big| + \mathbb{E}[\|X_i\| \mid \mathcal{F}_{i-1}] + \mathbb{E}[\|X_i\| \mid \mathcal{F}_i] \\ &= \|X_i\| + \mathbb{E}[\|X_i\|] \le 2L, \end{aligned}$$
since $X_j$ is independent of $\mathcal{F}_{i-1}$ for $j \ge i$. Thus $Z_i$ defines a bounded martingale difference sequence, and $\sum_{i=1}^n Z_i = \|S_n\| - \mathbb{E}[\|S_n\|]$. Since $|Z_i| \le 2L$, the $Z_i$ are conditionally sub-Gaussian with parameter at most $4L^2$. Thus $\sum_{i=1}^n Z_i$ is sub-Gaussian with parameter at most $4nL^2$.

F.2 Sub-exponential random variables

A slightly less restrictive tail condition defines the class of sub-exponential random variables:

Definition F.2. A zero-mean random variable $X$ is sub-exponential with parameters $(\Lambda, \tau)$ if
$$\mathbb{E}[\exp(\lambda X)] \le \exp\left(\frac{\lambda^2 \tau^2}{2}\right) \quad \text{for all } |\lambda| \le \Lambda.$$
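A concrete instance of Definition F.2, added as our own example rather than taken from the source: a Laplace variable with scale $b$ has tails $P(|X| \ge t) = e^{-t/b}$ and moment generating function $\mathbb{E}e^{\lambda X} = 1/(1 - b^2\lambda^2)$ for $|\lambda| < 1/b$. Plugging $a = 1$ and $\alpha = 1/b$ into the parameter formulas of Lemma 17 gives $\Lambda = 1/(2b)$ and $\tau^2 = 4b^2$, and the Python sketch below checks the inequality of Definition F.2 on a grid of $\lambda$ values. The helper names are hypothetical.

```python
import math


def subexp_params_from_tail(a, alpha):
    """Parameters (Lambda, tau^2) = (alpha / 2, 4 a / alpha^2) as stated in Lemma 17."""
    if a <= 0 or alpha <= 0:
        raise ValueError("Lemma 17 needs a > 0 and alpha > 0")
    return alpha / 2.0, 4.0 * a / alpha ** 2


def laplace_satisfies_definition(b, grid=2001):
    """Check E exp(lambda X) <= exp(lambda^2 tau^2 / 2) for |lambda| <= Lambda, X ~ Laplace(0, b)."""
    lam_max, tau_sq = subexp_params_from_tail(1.0, 1.0 / b)
    for k in range(grid):
        lam = -lam_max + 2.0 * lam_max * k / (grid - 1)
        mgf = 1.0 / (1.0 - (b * lam) ** 2)  # finite because |lambda| <= 1/(2b) < 1/b
        # A tiny relative slack guards against rounding when lambda is near 0.
        if mgf > math.exp(lam * lam * tau_sq / 2.0) * (1.0 + 1e-12):
            return False
    return True


print([laplace_satisfies_definition(b) for b in (0.1, 1.0, 7.5)])  # [True, True, True]
```

The same helper with $a = 2e$ and $\alpha = 1/(2\sigma^2)$ returns $\tau^2 = 32e\sigma^4$, the value used for the tail bound (32).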
The following lemma provides an equivalent characterization of sub-exponential variables via a tail bound:

Lemma 17. Let $X$ be a zero-mean random variable. If there are constants $a, \alpha > 0$ such that $\mathbb{P}(|X| \ge t) \le a \exp(-\alpha t)$ for all $t > 0$, then $X$ is sub-exponential with parameters $\Lambda = \alpha/2$ and $\tau^2 = 4a/\alpha^2$.

The proof of the lemma follows from a Taylor expansion of $\exp(\cdot)$ and the identity $\mathbb{E}[|X|^k] = \int_0^\infty \mathbb{P}(|X|^k \ge t)\,dt$ (for similar results, see Buldygin and Kozachenko [7, Chapter I.3]). Lastly, any zero-mean random variable whose square is sub-exponential is sub-Gaussian, as shown by the following result:

Lemma 18 (Lan, Nemirovski, Shapiro [20], Lemma 6). Let $X$ be a zero-mean random variable satisfying the moment generating inequality $\mathbb{E}[\exp(X^2/\sigma^2)] \le \exp(1)$. Then $X$ is sub-Gaussian with parameter at most $\frac{3}{2}\sigma^2$.

References

[1] A. Agarwal, P. L. Bartlett, P. Ravikumar, and M. J. Wainwright. Information-theoretic lower bounds on the oracle complexity of convex optimization. IEEE Transactions on Information Theory, 2012. To appear.
[2] K. Azuma. Weighted sums of certain dependent random variables. Tohoku Mathematical Journal, 68:357–367, 1967.
[3] A. Ben-Tal and M. Teboulle. A smoothing technique for nondifferentiable optimization problems. In Optimization, Lecture Notes in Mathematics 1405, pages 1–11. Springer Verlag, 1989.
[4] D. P. Bertsekas. Stochastic optimization problems with nondifferentiable cost functionals. Journal of Optimization Theory and Applications, 12(2):218–231, 1973.
[5] L. M. Bregman. The relaxation method of finding the common point of convex sets and its application to the solution of problems in convex programming. USSR Computational Mathematics and Mathematical Physics, 7:200–217, 1967.
[6] P. Brucker. An O(n) algorithm for quadratic knapsack problems. Operations Research Letters, 3(3):163–166, 1984.
[7] V. Buldygin and Y. Kozachenko. Metric Characterization of Random Variables and Random Processes, volume 188 of Translations of Mathematical Monographs. American Mathematical Society, 2000.
[8] C. Chen and O. L. Mangasarian. A class of smoothing functions for nonlinear and mixed complementarity problems. Computational Optimization and Applications, 5:97–138, 1996.
[9] O. Dekel, R. Gilad-Bachrach, O. Shamir, and L. Xiao. Optimal distributed online prediction using mini-batches. Journal of Machine Learning Research, 13:165–202, 2012.
[10] J. C. Duchi, S. Shalev-Shwartz, Y. Singer, and A. Tewari. Composite objective mirror descent. In Proceedings of the Twenty Third Annual Conference on Computational Learning Theory, 2010.
[11] Y. M. Ermoliev. On the stochastic quasi-gradient method and stochastic quasi-Feyer sequences. Kibernetika, 2:72–83, 1969.
[12] J. Hiriart-Urruty and C. Lemaréchal. Convex Analysis and Minimization Algorithms I. Springer, 1996.
[13] W. Hoeffding. Probability inequalities for sums of bounded random variables. Journal of the American Statistical Association, 58(301):13–30, Mar. 1963.
[14] P. J. Huber. Robust Statistics. John Wiley and Sons, New York, 1981.
[15] A. Juditsky, A. Nemirovski, and C. Tauvel. Solving variational inequalities with the stochastic mirror-prox algorithm. URL http://arxiv.org/abs/0809.0815, 2008.
[16] V. Katkovnik and Y. Kulchitsky. Convergence of a class of random search algorithms. Automation and Remote Control, 33(8):1321–1326, 1972.
[17] H. Lakshmanan and D. P. de Farias. Decentralized resource allocation in dynamic networks of agents. SIAM Journal on Optimization, 19(2):911–940, 2008.
[18] G. Lan. Convex Optimization under Inexact First-order Information. PhD thesis, Georgia Institute of Technology, 2009.
[19] G. Lan. An optimal method for stochastic composite optimization. Mathematical Programming, 2010. Online first. URL http://www.ise.ufl.edu/glan/papers/OPT_SA4.pdf.
[20] G. Lan, A. Nemirovski, and A. Shapiro. Validation analysis of robust stochastic approximation method. Mathematical Programming, 2010. Online first. URL http://www.ise.ufl.edu/glan/papers/SA-test-May05.pdf.
[21] M. Ledoux and M. Talagrand. Probability in Banach Spaces. Springer, 1991.
[22] C. Lemaréchal and C. Sagastizábal. Practical aspects of the Moreau-Yosida regularization: theoretical preliminaries. SIAM Journal on Optimization, 7(2):367–385, 1997.
[23] C. Manning, P. Raghavan, and H. Schütze. Introduction to Information Retrieval. Cambridge University Press, 2008.
[24] S. Negahban and M. J. Wainwright. Estimation of (near) low-rank matrices with noise and high-dimensional scaling. Annals of Statistics, 39(2):1069–1097, 2011.
[25] A. Nemirovski and D. Yudin. Problem Complexity and Method Efficiency in Optimization. Wiley, 1983.
[26] Y. Nesterov. Smooth minimization of nonsmooth functions. Mathematical Programming, 103:127–152, 2005.
[27] Y. Nesterov. Primal-dual subgradient methods for convex problems. Mathematical Programming, 120(1):261–283, 2009.
[28] B. T. Polyak and A. B. Juditsky. Acceleration of stochastic approximation by averaging. SIAM Journal on Control and Optimization, 30(4):838–855, 1992.
[29] B. T. Polyak and J. Tsypkin. Robust identification. Automatica, 16:53–63, 1980.
[30] H. Robbins and S. Monro. A stochastic approximation method. Annals of Mathematical Statistics, 22:400–407, 1951.
[31] R. T. Rockafellar and R. J. B. Wets. Variational Analysis. Springer, New York, 1998.
[32] R. Y. Rubinstein. Simulation and the Monte Carlo Method. Wiley, 1981.
[33] S. Shalev-Shwartz, Y. Singer, and A. Ng. Online and batch learning of pseudo-metrics. In Proceedings of the Twenty-First International Conference on Machine Learning, 2004.
[34] P. Tseng. On accelerated proximal gradient methods for convex-concave optimization. URL http://www.math.washington.edu/~tseng/papers/apgm.pdf, 2008.
[35] A. W. van der Vaart and J. A. Wellner. Weak Convergence and Empirical Processes: With Applications to Statistics. Springer, 1996.
[36] L. Xiao. Dual averaging methods for regularized stochastic learning and online optimization. Journal of Machine Learning Research, 11:2543–2596, 2010.
[37] E. Xing, A. Ng, M. Jordan, and S. Russell. Distance metric learning, with application to clustering with side-information. In Advances in Neural Information Processing Systems 15, 2003.
[38] F. Yousefian, A. Nedić, and U. V. Shanbhag. On stochastic gradient and subgradient methods with adaptive steplength sequences. Automatica, 48:56–67, 2012.
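As an informal numerical sanity check of Lemmas 17 and 18 (stated in the appendix before the references), the sketch below evaluates both bounds on a grid of $\lambda$ values for two standard examples. It assumes the convention that $X$ is sub-Gaussian with parameter $\tau^2$ when $\mathbb{E}[\exp(\lambda X)] \le \exp(\lambda^2\tau^2/2)$ for all real $\lambda$, and sub-exponential with parameters $(\Lambda, \tau^2)$ when the same bound holds for $|\lambda| \le \Lambda$. For Lemma 17 it uses a Laplace$(0, b)$ variable, whose tail is $\mathbb{P}(|X| \ge t) = \exp(-t/b)$ (so $a = 1$, $\alpha = 1/b$) and whose moment generating function is $1/(1 - b^2\lambda^2)$. For Lemma 18 it uses a Rademacher variable with $\sigma = 1$, for which $\mathbb{E}[\exp(X^2/\sigma^2)] = e$ and the moment generating function is $\cosh(\lambda)$. The function names are hypothetical, and a grid check only illustrates the lemmas; it does not prove them.

```python
import math

# Assumed conventions (not stated in this excerpt): X is sub-Gaussian with
# parameter tau2 if E[exp(lam*X)] <= exp(lam**2 * tau2 / 2) for all real lam,
# and sub-exponential with parameters (Lambda, tau2) if the same bound holds
# for |lam| <= Lambda.

RTOL = 1e-12  # slack for floating-point rounding in the comparisons


def laplace_mgf(lam: float, b: float) -> float:
    """E[exp(lam * X)] for X ~ Laplace(0, b); valid only for |lam| < 1 / b."""
    return 1.0 / (1.0 - (b * lam) ** 2)


def check_lemma17_laplace(b: float, m: int = 1000) -> bool:
    """Grid check of Lemma 17 for X ~ Laplace(0, b).

    Here P(|X| >= t) = exp(-t / b), so the tail condition holds with a = 1
    and alpha = 1 / b; the lemma predicts Lambda = alpha / 2 and
    tau2 = 4 * a / alpha**2.
    """
    a, alpha = 1.0, 1.0 / b
    Lambda = alpha / 2.0
    tau2 = 4.0 * a / alpha ** 2
    for k in range(-m, m + 1):
        lam = Lambda * k / m  # grid on [-Lambda, Lambda], including lam = 0
        bound = math.exp(lam ** 2 * tau2 / 2.0)
        if laplace_mgf(lam, b) > bound * (1.0 + RTOL):
            return False
    return True


def check_lemma18_rademacher(lam_max: float = 10.0, m: int = 1000) -> bool:
    """Grid check of Lemma 18 for a Rademacher X (P(X = 1) = P(X = -1) = 1/2).

    With sigma = 1, E[exp(X**2 / sigma**2)] = e, so the hypothesis holds with
    equality and the lemma predicts a sub-Gaussian parameter of at most
    1.5 * sigma**2.
    """
    sigma = 1.0
    if math.exp(1.0 / sigma ** 2) > math.e * (1.0 + RTOL):
        return False  # hypothesis of Lemma 18 would be violated
    tau2 = 1.5 * sigma ** 2
    for k in range(-m, m + 1):
        lam = lam_max * k / m  # grid on [-lam_max, lam_max]
        # The moment generating function of a Rademacher variable is cosh(lam).
        if math.cosh(lam) > math.exp(lam ** 2 * tau2 / 2.0) * (1.0 + RTOL):
            return False
    return True


if __name__ == "__main__":
    print(check_lemma17_laplace(1.0), check_lemma18_rademacher())
```

For the Laplace example, the Lemma 17 bound reduces to $1/(1-u) \le \exp(2u)$ with $u = b^2\lambda^2 \le 1/4$, which holds with room to spare, so the check passes for every scale $b > 0$.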