Generalized Beta Mixtures of Gaussians

In recent years, a rich variety of shrinkage priors have been proposed that have great promise in addressing massive regression problems. In general, these new priors can be expressed as scale mixtures of normals, but have more complex forms and bett…

Authors: Artin Armagan, David B. Dunson, Merlise Clyde

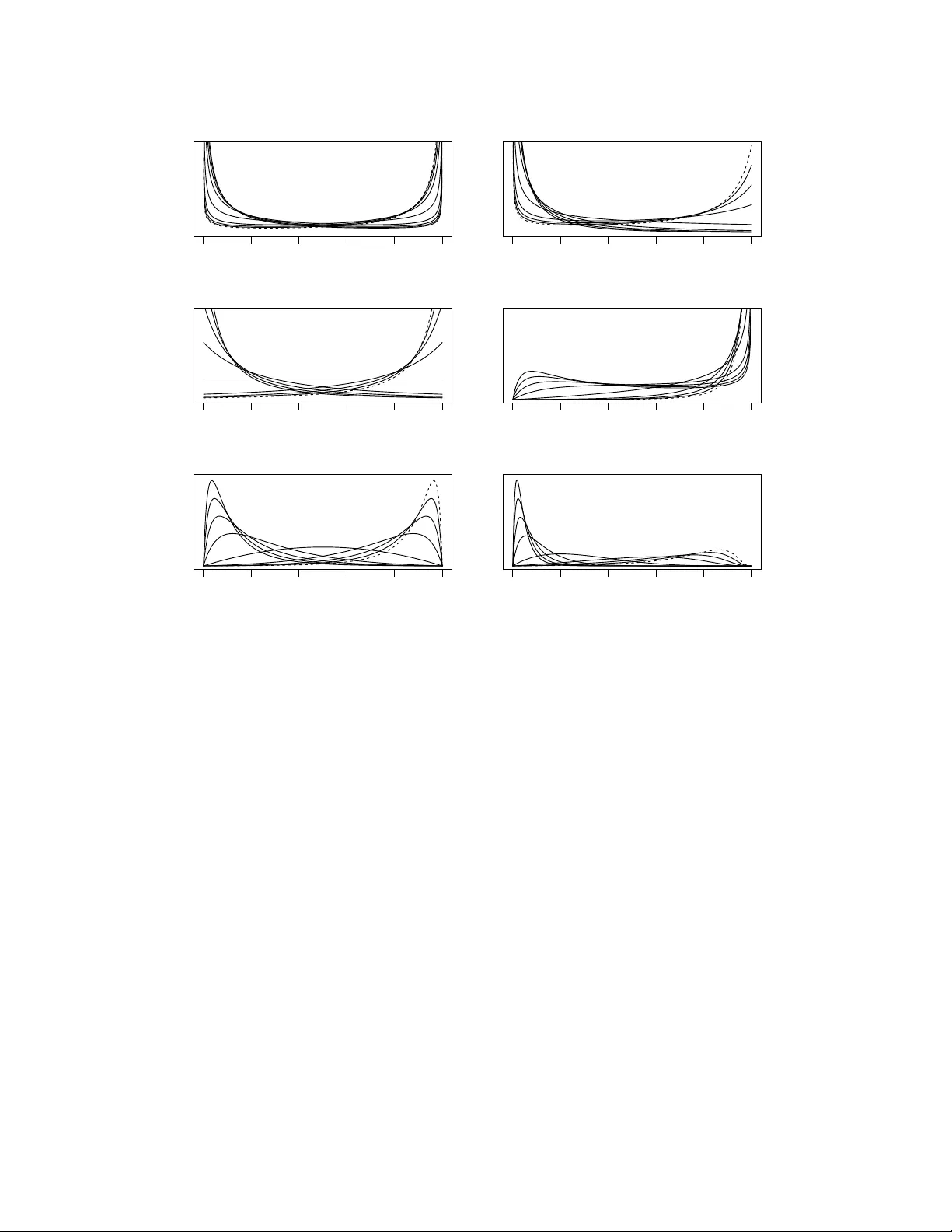

Generalized Beta Mixtur es of Gaussians Artin Armagan Dept. of Statistical Science Duke Uni versity Durham, NC 27708 artin@stat.duke.edu David B. Dunson Dept. of Statistical Science Duke Uni versity Durham, NC 27708 dunson@stat.duke.edu Merlise Clyde Dept. of Statistical Science Duke Uni versity Durham, NC 27708 clyde@stat.duke.edu Abstract In recent years, a rich v ariety of shrinkage priors hav e been proposed that hav e great promise in addressing massiv e regression problems. In general, these new priors can be expressed as scale mixtures of normals, but have more complex forms and better properties than traditional Cauchy and double exponential priors. W e first propose a ne w class of normal scale mixtures through a no vel general- ized beta distribution that encompasses many interesting priors as special cases. This encompassing framew ork should prove useful in comparing competing pri- ors, considering properties and re vealing close connections. W e then dev elop a class of variational Bayes approximations through the ne w hierarchy presented that will scale more efficiently to the types of truly massive data sets that are no w encountered routinely . 1 Introduction Penalized likelihood estimation has e v olved into a major area of research, with ` 1 [22] and other regularization penalties now used routinely in a rich variety of domains. Often minimizing a loss function subject to a regularization penalty leads to an estimator that has a Bayesian interpretation as the mode of a posterior distrib ution [8, 11, 1, 2], with dif ferent prior distrib utions inducing dif ferent penalties. For example, it is well known that Gaussian priors induce ` 2 penalties, while double ex- ponential priors induce ` 1 penalties [8, 19, 13, 1]. V ie wing massi ve-dimensional parameter learning and prediction problems from a Bayesian perspectiv e naturally leads one to design new priors that hav e substantial advantages ov er the simple normal or double exponential choices and that induce rich new families of penalties. For example, in high-dimensional settings it is often appealing to hav e a prior that is concentrated at zero, fav oring strong shrinkage of small signals and potentially a sparse estimator , while having heavy tails to a void over -shrinkage of the larger signals. The Gaus- sian and double exponential priors are insufficiently flexible in having a single scale parameter and relativ ely light tails; in order to shrink many small signals strongly tow ards zero, the double expo- nential must be concentrated near zero and hence will o ver -shrink signals not close to zero. This phenomenon has moti vated a rich v ariety of ne w priors such as the normal-e xponential-gamma , the horseshoe and the gener alized double P ar eto [11, 14, 1, 6, 20, 7, 12, 2]. An alternative and widely applied Bayesian framew ork relies on variable selection priors and Bayesian model selection/averaging [18, 9, 16, 15]. Under such approaches the prior is a mix- ture of a mass at zero, corresponding to the coefficients to be set equal to zero and hence excluded from the model, and a continuous distribution, pro viding a prior for the size of the non-zero signals. This paradigm is very appealing in fully accounting for uncertainty in parameter learning and the unknown sparsity structure through a probabilistic framework. One obtains a posterior distribution ov er the model space corresponding to all possible subsets of predictors, and one can use this pos- terior for model-averaged predictions that tak e into account uncertainty in subset selection and to obtain marginal inclusion probabilities for each predictor providing a weight of e vidence that a spe- cific signal is non-zero allowing for uncertainty in the other signals to be included. Unfortunately , 1 the computational complexity is e xponential in the number of candidate predictors ( 2 p with p the number of predictors). Some recently proposed continuous shrinkage priors may be considered competitors to the con- ventional mixture priors [15, 6, 7, 12] yielding computationally attractiv e alternatives to Bayesian model averaging. Continuous shrinkage priors lead to sev eral adv antages. The ones represented as scale mixtures of Gaussians allow conjugate block updating of the regression coef ficients in linear models and hence lead to substantial improv ements in Markov chain Monte Carlo (MCMC) effi- ciency through more rapid mixing and con ver gence rates. Under certain conditions these will also yield sparse estimates, if desired, via maximum a posteriori (MAP) estimation and approximate inferences via variational approaches [17, 24, 5, 8, 11, 1, 2]. The class of priors that we consider in this paper encompasses many interesting priors as special cases and reveals interesting connections among dif ferent hierarchical formulations. Exploiting an equi v alent conjugate hierarchy of this class of priors, we dev elop a class of v ariational Bayes approximations that can scale up to truly massi ve data sets. This conjugate hierarchy also allows for conjugate modeling of some pre viously proposed priors which have some rather complex yet advantageous forms and facilitates straightforw ard computation via Gibbs sampling. W e also argue intuitiv ely that by adjusting a global shrinkage parameter that controls the ov erall sparsity lev el, we may control the number of non-zero parameters to be estimated, enhancing results, if there is an underlying sparse structure. This global shrinkage parameter is inherent to the structure of the priors we discuss as in [6, 7] with close connections to the con ventional v ariable selection priors. 2 Background W e pro vide a brief background on shrinkage priors focusing primarily on the priors studied by [6, 7] and [11, 12] as well as the Stra wderman-Berger (SB) prior [7]. These priors possess some very appealing properties in contrast to the double exponential prior which leads to the Bayesian lasso [19, 13]. They may be much heavier -tailed, biasing large signals less drastically while shrinking noise- like signals hea vily tow ards zero. In particular , the priors by [6, 7], along with the Stra wderman- Berger prior [7], have a very interesting and intuitiv e representation later giv en in (2), yet, are not formed in a conjugate manner potentially leading to analytical and computational complexity . [6, 7] propose a useful class of priors for the estimation of multiple means. Suppose a p -dimensional vector y | θ ∼ N ( θ , I ) is observed. The independent hierarchical prior for θ j is giv en by θ j | τ j ∼ N (0 , τ j ) , τ 1 / 2 j ∼ C + (0 , φ 1 / 2 ) , (1) for j = 1 , . . . , p , where N ( µ, ν ) denotes a normal distribution with mean µ and v ariance ν and C + (0 , s ) denotes a half-Cauchy distribution on < + with scale parameter s . W ith an appropriate transformation ρ j = 1 / (1 + τ j ) , this hierarchy also can be represented as θ j | ρ j ∼ N (0 , 1 /ρ j − 1) , π ( ρ j | φ ) ∝ ρ − 1 / 2 j (1 − ρ j ) − 1 / 2 1 1 + ( φ − 1) ρ j . (2) A special case where φ = 1 leads to ρ j ∼ B (1 / 2 , 1 / 2) (beta distribution) where the name of the prior arises, hor seshoe (HS) [6, 7]. Here ρ j s are referred to as the shrinkage coefficients as they determine the magnitude with which θ j s are pulled toward zero. A prior of the form ρ j ∼ B (1 / 2 , 1 / 2) is natural to consider in the estimation of a signal θ j as this yields a very desirable behavior both at the tails and in the neighborhood of zero. That is, the resulting prior has hea vy-tails as well as being unbounded at zero which creates a strong pull to wards zero for those v alues close to zero. [7] further discuss priors of the form ρ j ∼ B ( a, b ) for a > 0 , b > 0 to elaborate more on their focus on the choice a = b = 1 / 2 . A similar formulation dates back to [21]. [7] refer to the prior of the form ρ j ∼ B (1 , 1 / 2) as the Strawderman-Berger prior due to [21] and [4]. The same hierarchical prior is also referred to as the quasi-Cauchy prior in [16]. Hence, the tail behavior of the Strawderman- Berger prior remains similar to the horseshoe (when φ = 1 ), while the behavior around the origin changes. The hierarchy in (2) is much more intuitiv e than the one in (1) as it explicitly re veals the behavior of the resulting marginal prior on θ j . This intuiti ve representation makes these hierarchical priors interesting despite their relati vely complex forms. On the other hand, what the prior in (1) or (2) lacks is a more trivial hierarch y that yields recognizable conditional posteriors in linear models. 2 [11, 12] consider the normal-exponential-g amma (NEG) and normal-gamma (NG) priors respec- tiv ely which are formed in a conjugate manner yet lack the intuition the Strawderman-Ber ger and horseshoe priors provide in terms of the behavior of the density around the origin and at the tails. Hence the implementation of these priors may be more user -friendly but they are very implicit in how they behav e. In what follo ws we will see that these two forms are not far from one another . In fact, we may unite these two distinct hierarchical formulations under the same class of priors through a generalized beta distrib ution and the proposed equiv alence of hierarchies in the follo wing section. This is rather important to be able to compare the behavior of priors emerging from different hierarchical formulations. Furthermore, this equiv alence in the hierarchies will allow for a straight- forward Gibbs sampling update in posterior inference, as well as making v ariational approximations possible in linear models. 3 Equivalence of Hierar chies via a Generalized Beta Distrib ution In this section we propose a generalization of the beta distribution to form a flexible class of scale mixtures of normals with very appealing behavior . W e then formulate our hierarchical prior in a conjugate manner and re veal similarities and connections to the priors gi ven in [16, 11, 12, 6, 7]. As the name gener alized beta has pre viously been used, we refer to our generalization as the thr ee- parameter beta (TPB) distrib ution. In the forthcoming text Γ( . ) denotes the gamma function, G ( µ, ν ) denotes a gamma distri- bution with shape and rate parameters µ and ν , W ( ν, S ) denotes a W ishart distribution with ν degrees of freedom and scale matrix S , U ( α 1 , α 2 ) denotes a uniform distribution over ( α 1 , α 2 ) , G I G ( µ, ν , ξ ) denotes a generalized in v erse Gaussian distribution with density function ( ν /ξ ) µ/ 2 { 2 K µ ( √ ν ξ ) } − 1 x µ − 1 exp { ( ν x + ξ /x ) / 2 } , and K µ ( . ) is a modified Bessel function of the second kind. Definition 1. The three-par ameter beta (TPB) distribution for a random variable X is defined by the density function f ( x ; a, b, φ ) = Γ( a + b ) Γ( a )Γ( b ) φ b x b − 1 (1 − x ) a − 1 { 1 + ( φ − 1) x } − ( a + b ) , (3) for 0 < x < 1 , a > 0 , b > 0 and φ > 0 and is denoted by T P B ( a, b, φ ) . It can be easily shown by a change of v ariable x = 1 / ( y + 1) that the abov e density integrates to 1 . The k th moment of the TPB distribution is gi v en by E ( X k ) = Γ( a + b )Γ( b + k ) Γ( b )Γ( a + b + k ) 2 F 1 ( a + b, b + k ; a + b + k ; 1 − φ ) (4) where 2 F 1 denotes the hypergeometric function. In fact it can be shown that TPB is a subclass of Gauss hyper geometric (GH) distribution proposed in [3] and the compound confluent hypergeomet- ric (CCH) distribution proposed in [10]. The density functions of GH and CCH distributions are gi v en by f GH ( x ; a, b, r, ζ ) = x b − 1 (1 − x ) a − 1 (1 + ζ x ) − r B ( b, a ) 2 F 1 ( r , b ; a + b ; − ζ ) , (5) f CCH ( x ; a, b, r, s, ν , θ ) = ν b x b − 1 (1 − x ) a − 1 ( θ + (1 − θ ) ν x ) − r B ( b, a ) exp( − s/ν )Φ 1 ( a, r , a + b, s/ν, 1 − θ ) , (6) for 0 < x < 1 and 0 < x < 1 /ν , respectively , where B ( b, a ) = Γ( a )Γ( b ) / Γ( a + b ) denotes the beta function and Φ 1 is the degenerate h ypergeometric function of tw o v ariables [10]. Letting ζ = φ − 1 , r = a + b and noting that 2 F 1 ( a + b, b ; a + b ; 1 − φ ) = φ − b , (5) becomes a TPB density . Also note that (6) becomes (5) for s = 1 , ν = 1 and ζ = (1 − θ ) /θ [10]. [20] considered an alternative special case of the CCH distribution for the shrinkage coef ficients, ρ j , by letting ν = r = 1 in (6). [20] refer to this special case as the hypergeometric-beta (HB) distribution. TPB and HB generalize the beta distribution in two distinct directions, with one prac- tical advantage of the TPB being that it allo ws for a straightforward conjugate hierarchy leading to potentially substantial analytical and computational gains. 3 Now we move onto the hierarchical modeling of a flexible class of shrinkage priors for the estimation of a potentially sparse p -vector . Suppose a p -dimensional vector y | θ ∼ N ( θ , I ) is observed where θ = ( θ 1 , . . . , θ p ) 0 is of interest. No w we define a shrinkage prior that is obtained by mixing a normal distribution o ver its scale parameter with the TPB distrib ution. Definition 2. The TPB normal scale mixtur e r epr esentation for the distrib ution of random variable θ j is given by θ j | ρ j ∼ N (0 , 1 /ρ j − 1) , ρ j ∼ T P B ( a, b, φ ) , (7) wher e a > 0 , b > 0 and φ > 0 . The r esulting marginal distribution on θ j is denoted by T P B N ( a, b, φ ) . Figure 1 illustrates the density on ρ j for varying v alues of a , b and φ . Note that the special case for a = b = 1 / 2 in Figure 1(a) giv es the horseshoe prior . Also when a = φ = 1 and b = 1 / 2 , this representation yields the Strawderman-Berger prior . For a fixed value of φ , smaller a values yield a density on θ j that is more peaked at zero, while smaller values of b yield a density on θ j that is heavier tailed. For fixed values of a and b , decreasing φ shifts the mass of the density on ρ j from left to right, suggesting more support for stronger shrinkage. That said, the density assigned in the neighborhood of θ j = 0 increases while making the ov erall density lighter -tailed. W e next propose the equi valence of three hierarchical representations revealing a wide class of priors encompassing many of those mentioned earlier . Proposition 1. If θ j ∼ T P BN ( a, b, φ ) , then 1) θ j ∼ N (0 , τ j ) , τ j ∼ G ( a, λ j ) and λ j ∼ G ( b, φ ) . 2) θ j ∼ N (0 , τ j ) , π ( τ j ) = Γ( a + b ) Γ( a )Γ( b ) φ − a τ a − 1 (1 + τ j /φ ) − ( a + b ) which implies that τ j /φ ∼ β 0 ( a, b ) , the in verted beta distrib ution with parameters a and b . The equiv alence gi ven in Proposition 1 is significant as it makes the work in Section 4 possible under the TPB normal scale mixtures as well as further revealing connections among previously proposed shrinkage priors. It provides a rich class of priors leading to great flexibility in terms of the induced shrinkage and mak es it clear that this new class of priors can be considered simultaneous extensions to the work by [11, 12] and [6, 7]. It is worth mentioning that the hierarchical prior(s) giv en in Proposition 1 are different than the approach taken by [12] in how we handle the mixing. In particular , the first hierarchy presented in Proposition 1 is identical to the NG prior up to the first stage mixing. While fixing the values of a and b , we further mix over λ j (rather than a global λ ) and further over φ if desired as will be discussed later . φ acts as a global shrinkage parameter in the hierarchy . On the other hand, [12] choose to further mix ov er a and a global λ while fixing the values of b and φ . By doing so, they forfeit a complete conjugate structure and an explicit control ov er the tail behavior of π ( θ j ) . As a direct corollary to Proposition 1, we observe a possible equiv alence between the SB and the NEG priors. Corollary 1. If a = 1 in Pr oposition 1, then TPBN ≡ NEG. If ( a, b, φ ) = (1 , 1 / 2 , 1) in Pr oposition 1, then TPBN ≡ SB ≡ NEG. An interesting, yet expected, observation on Proposition 1 is that a half-Cauchy prior can be repre- sented as a scale mixture of gamma distributions, i.e. if τ j ∼ G (1 / 2 , λ j ) and λ j ∼ G (1 / 2 , φ ) , then τ 1 / 2 j ∼ C + (0 , φ 1 / 2 ) . This makes sense as τ 1 / 2 | λ j has a half-Normal distrib ution and the mixing distribution on the precision parameter is gamma with shape parameter 1 / 2 . [7] further place a half-Cauchy prior on φ 1 / 2 to complete the hierarchy . The aforementioned obser- vation helps us formulate the complete hierarchy proposed in [7] in a conjugate manner . This should bring analytical and computational advantages as well as making the application of the procedure much easier for the av erage user without the need for a relati vely more comple x sampling scheme. Corollary 2. If θ j ∼ N (0 , τ j ) , τ 1 / 2 j ∼ C + (0 , φ 1 / 2 ) and φ 1 / 2 ∼ C + (0 , 1) , then θ j ∼ T P B N (1 / 2 , 1 / 2 , φ ) , φ ∼ G (1 / 2 , ω ) and ω ∼ G (1 / 2 , 1) . Hence disre garding the different treatments of the higher-le vel hyper-parameters, we have shown that the class of priors given in Definition 2 unites the priors in [16, 11, 6, 7] under one family and rev eals their close connections through the equiv alence of hierarchies giv en in Proposition 1. The first hierarchy in Proposition 1 makes much of the work possible in the follo wing sections. 4 0.0 0.2 0.4 0.6 0.8 1.0 (a) 0.0 0.2 0.4 0.6 0.8 1.0 (b) 0.0 0.2 0.4 0.6 0.8 1.0 (c) 0.0 0.2 0.4 0.6 0.8 1.0 (d) 0.0 0.2 0.4 0.6 0.8 1.0 (e) 0.0 0.2 0.4 0.6 0.8 1.0 (f) Figure 1: ( a, b ) = { (1 / 2 , 1 / 2) , (1 , 1 / 2) , (1 , 1) , (1 / 2 , 2) , (2 , 2) , (5 , 2) } for (a)-(f) respecti vely . φ = { 1 / 10 , 1 / 9 , 1 / 8 , 1 / 7 , 1 / 6 , 1 / 5 , 1 / 4 , 1 / 3 , 1 / 2 , 1 , 2 , 3 , 4 , 5 , 6 , 7 , 8 , 9 , 10 } considered for all pairs of a and b . The line corresponding to the lowest v alue of φ is drawn with a dashed line. 4 Estimation and Posterior Infer ence in Regr ession Models 4.1 Fully Bayes and A ppr oximate Inference Consider the linear re gression model, y = X β + , where y is an n -dimensional vector of responses, X is the n × p design matrix and is an n -dimensional vector of independent residuals which are normally distributed, N ( 0 , σ 2 I n ) with v ariance σ 2 . W e place the hierarchical prior giv en in Proposition 1 on each β j , i.e. β j ∼ N (0 , σ 2 τ j ) , τ j ∼ G ( a, λ j ) , λ j ∼ G ( b, φ ) . φ is used as a global shrinkage parameter common to all β j , and may be inferred using the data. Thus we follow the hierarchy by letting φ ∼ G (1 / 2 , ω ) , ω ∼ G (1 / 2 , 1) which implies φ 1 / 2 ∼ C + (0 , 1) that is identical to what w as used in [7] at this le vel of the hierarchy . Howe v er , we do not believe at this le vel in the hierarchy the choice of the prior will have a huge impact on the results. Although treating φ as unknown may be reasonable, when there exists some prior knowledge, it is appropriate to fix a φ value to reflect our prior belief in terms of underlying sparsity of the coef ficient vector . This sounds rather natural as soon as one starts seeing φ as a pa- rameter that governs the multiplicity adjustment as discussed in [7]. Note also that here we form the dependence on the error variance at a lower level of hierarchy rather than forming it in the prior of φ as done in [7]. If we let a = b = 1 / 2 , we will have formulated the hierarchical prior gi ven in [7] in a completely conjugate manner . W e also let σ − 2 ∼ G ( c 0 / 2 , d 0 / 2) . Under a normal likelihood, an efficient Gibbs sampler may be obtained as the fully conditional posteriors can be extracted: β | y , X , σ 2 , τ 1 , . . . , τ p ∼ N ( µ β , V β ) , σ − 2 | y , X , β , τ 1 , . . . , τ p ∼ G ( c ∗ , d ∗ ) , τ j | β j , σ 2 , λ j ∼ G I G ( a − 1 / 2 , 2 λ j , β 2 j /σ 2 ) , λ j | τ j , φ ∼ G ( a + b, τ j + φ ) , φ | λ j , ω ∼ G ( pb + 1 / 2 , P p j =1 λ j + ω ) , ω | φ ∼ 5 G (1 , φ + 1) , where µ β = ( X 0 X + T − 1 ) − 1 X 0 y , V β = σ 2 ( X 0 X + T − 1 ) − 1 , c ∗ = ( n + p + c 0 ) / 2 , d ∗ = { ( y − X β ) 0 ( y − X β ) + β 0 T − 1 β + d 0 } / 2 , T = diag ( τ 1 , . . . , τ p ) . As an alternative to MCMC and Laplace approximations [23], a lower -bound on marginal like- lihoods may be obtained via variational methods [17] yielding approximate posterior distribu- tions on the model parameters. Using a similar approach to [5, 1], the approximate marginal posterior distributions of the parameters are giv en by β ∼ N ( µ β , V β ) , σ − 2 ∼ G ( c ∗ , d ∗ ) , τ j ∼ G I G ( a − 1 / 2 , 2 h λ j i , h σ − 2 ih β 2 j i ) , λ j ∼ G ( a + b, h τ j i + h φ i ) , φ ∼ G ( pb + 1 / 2 , h ω i + P p j =1 h λ j i ) , ω ∼ G (1 , h φ i + 1) , where µ β = h β i = ( X 0 X + T − 1 ) − 1 X 0 y , V β = h σ − 2 i − 1 ( X 0 X + T − 1 ) − 1 , T − 1 = diag ( h τ − 1 1 i , . . . , h τ − 1 p i ) , c ∗ = ( n + p + c 0 ) / 2 , d ∗ = ( y 0 y − 2 y 0 X h β i + P n i =1 x i h β β 0 i x i + P p j =1 h β 2 j ih τ − 1 j i + d 0 ) / 2 , h β β 0 i = V β + h β ih β 0 i , h σ − 2 i = c ∗ /d ∗ , h λ j i = ( a + b ) / ( h τ j i + h φ i ) , h φ i = ( pb + 1 / 2) / ( h ω i + P p j =1 h λ j i ) , h ω i = 1 / ( h φ i + 1) and h τ i = ( h σ − 2 ih β 2 j i ) 1 / 2 K a +1 / 2 (2 h λ j ih σ − 2 ih β 2 j i ) 1 / 2 (2 h λ j i ) 1 / 2 K a − 1 / 2 (2 h λ j ih σ − 2 ih β 2 j i ) 1 / 2 , h τ − 1 i = (2 h λ j i ) 1 / 2 K 3 / 2 − a (2 h λ j ih σ − 2 ih β 2 j i ) 1 / 2 ( h σ − 2 ih β 2 j i ) 1 / 2 K 1 / 2 − a (2 h λ j ih σ − 2 ih β 2 j i ) 1 / 2 . This procedure consists of initializing the moments and iterating through them until some con ver - gence criterion is reached. The deterministic nature of these approximations make them attractive as a quick alternativ e to MCMC. This conjugate modeling approach we ha ve taken allo ws for a very straightforward implementation of Strawderman-Ber ger and horseshoe priors or , more generally , TPB normal scale mixture priors in regression models without the need for a more sophisticated sampling scheme which may ultimately attract more audiences towards the use of these more fle xible and carefully defined normal scale mixture priors. 4.2 Sparse Maximum a P osteriori Estimation Although not our main focus, many readers are interested in sparse solutions, hence we gi ve the following brief discussion. Given a , b and φ , maximum a posteriori (MAP) estimation is rather straightforward via a simple expectation-maximization (EM) procedure. This is accomplished in a similar manner to [8] by obtaining the joint MAP estimates of the error variance and the regression coefficients having taken the expectation with respect to the conditional posterior distribution of τ − 1 j using the second hierarchy giv en in Proposition 1. The k th expectation step then would consist of calculating h τ − 1 j i ( k ) = R ∞ 0 τ a − 1 / 2 j (1 + τ j /φ ) − ( a + b ) exp {− β 2( k − 1) j / (2 σ 2 ( k − 1) τ j ) } dτ − 1 j R ∞ 0 τ 1 / 2+ a j (1 + τ j /φ ) − ( a + b ) exp {− β 2( k − 1) j / (2 σ 2 ( k − 1) τ j ) } dτ − 1 j (8) where β 2( k − 1) j and σ 2 ( k − 1) denote the modal estimates of the j th component of β and the error variance σ 2 at iteration ( k − 1) . The solution to (8) may be e xpressed in terms of some special function(s) for changing values of a , b and φ . b < 1 is a good choice as it will keep the tails of the marginal density on β j heavy . A careful choice of a , on the other hand, is essential to sparse esti- mation. Admissible values of a for sparse estimation is apparent by the representation in Definition 2, noting that for any a > 1 , π ( ρ j = 1) = 0 , i.e. β j may nev er be shrunk exactly to zero. Hence for sparse estimation, it is essential that 0 < a ≤ 1 . Figure 2 (a) and (b) giv e the prior densities on ρ j for b = 1 / 2 , φ = 1 and a = { 1 / 2 , 1 , 3 / 2 } and the resulting marginal prior densities on β j . These marginal densities are gi v en by π ( β j ) = 1 √ 2 π 3 / 2 e β 2 j / 2 Γ(0 , β 2 j / 2) a = 1 / 2 1 √ 2 π − | β j | 2 e β 2 j / 2 + β j 2 e β 2 j / 2 Erf ( β j / √ 2) a = 1 √ 2 π 3 / 2 n 1 − 1 2 e β 2 j / 2 β 2 j Γ(0 , β 2 j / 2) o a = 3 / 2 where Erf ( . ) denotes the error function and Γ( s, z ) = R ∞ z t s − 1 e − t dt is the incomplete gamma function. Figure 2 clearly illustrates that while all three cases have very similar tail behavior , their behavior around the origin dif fer drastically . 6 0.0 0.2 0.4 0.6 0.8 1.0 ρ (a) −3 −2 −1 0 1 2 3 β (b) Figure 2: Prior densities of (a) ρ j and (b) β j for a = 1 / 2 (solid), a = 1 (dashed) and a = 3 / 2 (long dash). 5 Experiments Throughout this section we use the Jeffre ys’ prior on the error precision by setting c 0 = d 0 = 0 . W e generate data for two cases, ( n, p ) = { (50 , 20) , (250 , 100) } , from y i = x 0 i β ∗ + i , for i = 1 , . . . , n where β ∗ is a p -v ector that on average contains 20 q non-zero elements which are index ed by the set A = { j : β ∗ j 6 = 0 } for some random q ∈ (0 , 1) . W e randomize the procedure in the following manner: (i) C ∼ W ( p, I p × p ) , (ii) x i ∼ N ( 0 , C ) , (iii) q ∼ B (1 , 1) for the first and q ∼ B (1 , 4) for the second cases, (iv) I ( j ∈ A ) ∼ Bernoulli ( q ) for j = 1 , . . . , p where I ( . ) denotes the indicator function, (v) for j ∈ A , β j ∼ U (0 , 6) and for j / ∈ A , β j = 0 and finally (vi) i ∼ N (0 , σ 2 ) where σ ∼ U (0 , 6) . W e generated 1000 data sets for each case resulting in a median signal-to-noise ratio of approximately 3 . 3 and 4 . 5 . W e obtain the estimate of the regression coefficients, ˆ β , using the variational Bayes procedure and measure the performance by model error which is calculated as ( β ∗ − ˆ β ) 0 C ( β ∗ − ˆ β ) . Figure 3(a) and (b) display the median relative model error (RME) values (with their distributions obtained via bootstrapping) which is obtained by dividing the model error observed from our procedures by that of ` 1 regularization (lasso) tuned by 10 -fold cross-validation. The boxplots in Figure 3(a) and (b) correspond to dif ferent ( a, b, φ ) values where C + signifies that φ is treated as unknown with a half-Cauchy prior as giv en earlier in Section 4.1. It is worth mentioning that we attain a clearly superior performance compared to the lasso, particularly in the second case, despite the fact that the estimator resulting from the variational Bayes procedure is not a thresholding rule. Note that b = 1 choice leads to much better performance under Case 2 than Case 1. This is due to the fact that Case 2 in volves a much sparser underlying setup on a verage than Case 1 and that the lighter tails attained by setting b = 1 leads to stronger shrinkage. T o give a high dimensional example, we also generate a data set from the model y i = x 0 i β ∗ + i , for i = 1 , . . . , 100 , where β ∗ is a 10000 -dimensional very sparse vector with 10 randomly chosen components set to be 3 , i ∼ N (0 , 3 2 ) and x ij ∼ N (0 , 1) for j = 1 , . . . , p . This β ∗ choice leads to a signal-to-noise ratios of 3 . 16 . For the particular data set we generated, the randomly chosen components of β ∗ to be non-zero were index ed by 1263 , 2199 , 2421 , 4809 , 5530 , 7483 , 7638 , 7741 , 7891 and 8187 . W e set ( a, b, φ ) = (1 , 1 / 2 , 10 − 4 ) which implies that a priori P ( ρ j > 0 . 5) = 0 . 99 placing much more density in the neighborhood of ρ j = 1 (total shrinkage). This choice is due to the fact that n/p = 0 . 01 and to roughly reflect that we do not want any more than 100 predictors in the resulting model. Hence φ is used, a priori, to limit the number of predictors in the model in relation to the sample size. Also note that with a = 1 , the conditional posterior distribution of τ − 1 j is reduced to an in verse Gaussian. Since we are adjusting the global shrinkage parameter, φ , a priori, and it is chosen such that P ( ρ j > 0 . 5) = 0 . 99 , whether a = 1 / 2 or a = 1 should not matter . W e first run the Gibbs sampler for 100000 iterations ( 2 . 4 hours on a computer with a 2 . 8 GHz CPU and 12 Gb of RAM using Matlab ), discard the first 20000 , thin the rest by picking ev ery 5 th sample to obtain the posteriors of the parameters. W e observed that the chain conv er ged by the 10000 th iteration. For comparison purposes, we also ran the variational Bayes procedure using the v alues from the con ver ged chain as the initial points ( 80 seconds). Figure 4 giv es the posterior means attained by 7 0.9 1.0 1.1 1.2 ( .5,.5,C + ) ( 1,.5,C + ) ( .5,.5,1 ) ( 1,.5,1 ) ( .5,1,C + ) ( 1,1,C + ) ( .5,1,1 ) ( 1,1,1 ) (a) 0.4 0.5 0.6 0.7 ( .5,.5,C + ) ( 1,.5,C + ) ( .5,.5,1 ) ( 1,.5,1 ) ( .5,1,C + ) ( 1,1,C + ) ( .5,1,1 ) ( 1,1,1 ) (b) Figure 3: Relativ e ME at dif ferent ( a, b, φ ) v alues for (a) Case 1 and (b) Case 2. 0 1000 2000 3000 4000 5000 6000 7000 8000 9000 10000 0 1 2 3 1263 2199 2421 4809 5530 7483 7638 7741 7891 8187 Variable # β Figure 4: Posterior mean of β by sampling (square) and by approximate inference (circle). sampling and the variational approximation. The estimates corresponding to the zero elements of β ∗ are plotted with smaller shapes to prev ent clutter . W e see that in both cases the procedure is able to pick up the lar ger signals and shrink a significantly large portion of the rest to wards zero. The approximate inference results are in accordance with the results from the Gibbs sampler . It should be noted that using a good informed guess on φ , rather than treating it as an unknown in this high dimensional setting, improv es the performance drastically . 6 Discussion W e conclude that the proposed hierarchical prior formulation constitutes a useful encompassing framew ork in understanding the behavior of different scale mixtures of normals and connecting them under a broader family of hierarchical priors. While ` 1 regularization, or namely lasso, arising from a double exponential prior in the Bayesian frame work yields certain computational advantages, it demonstrates much inferior estimation performance relative to the more carefully formulated scale mixtures of normals. The proposed equiv alence of the hierarchies in Proposi- tion 1 makes computation much easier for the TPB scale mixtures of normals. As per differ- ent choices of hyper-parameters, we recommend that a ∈ (0 , 1] and b ∈ (0 , 1) ; in particular ( a, b ) = { (1 / 2 , 1 / 2) , (1 , 1 / 2) } . These choices guarantee that the resulting prior has a kink at zero, which is essential for sparse estimation, and leads to heavy tails to avoid unnecessary bias in large signals (recall that a choice of b = 1 / 2 will yield Cauchy-like tails). In problems where oracle knowledge on sparsity e xists or when p >> n , we recommend that φ is fixed at a reasonable quantity to reflect an appropriate sparsity constraint as mentioned in Section 5. Acknowledgments This work was supported by A ward Number R01ES017436 from the National Institute of En vi- ronmental Health Sciences. The content is solely the responsibility of the authors and does not necessarily represent the official vie ws of the National Institute of En vironmental Health Sciences or the National Institutes of Health. References [1] A. Armagan. V ariational bridge regression. JMLR: W&CP , 5:17–24, 2009. 8 [2] A. Armag an, D. B. Dunson, and J. Lee. Generalized double P areto shrinkage. arXiv:1104.0861v2, 2011. [3] C. Armero and M. J. Bayarri. Prior assessments for prediction in queues. The Statistician , 43(1):pp. 139–153, 1994. [4] J. Berger . A robust generalized Bayes estimator and confidence region for a multiv ariate normal mean. The Annals of Statistics , 8(4):pp. 716–761, 1980. [5] C. M. Bishop and M. E. Tipping. V ariational relev ance vector machines. In U AI ’00: Pr o- ceedings of the 16th Confer ence on Uncertainty in Artificial Intelligence , pages 46–53, San Francisco, CA, USA, 2000. Morgan Kaufmann Publishers Inc. [6] C. M. Carvalho, N. G. Polson, and J. G. Scott. Handling sparsity via the horseshoe. JMLR: W&CP , 5, 2009. [7] C. M. Carv alho, N. G. Polson, and J. G. Scott. The horseshoe estimator for sparse signals. Biometrika , 97(2):465–480, 2010. [8] M. A. T . Figueiredo. Adaptiv e sparseness for supervised learning. IEEE T ransactions on P attern Analysis and Machine Intelligence , 25:1150–1159, 2003. [9] E. I. Geor ge and R. E. McCulloch. V ariable selection via Gibbs sampling. J ournal of the American Statistical Association , 88, 1993. [10] M. Gordy . A generalization of generalized beta distributions. Finance and Economics Discus- sion Series 1998-18, Board of Gov ernors of the Federal Reserve System (U.S.), 1998. [11] J. E. Grif fin and P . J. Brown. Bayesian adapti v e lassos with non-conv ex penalization. T echnical Report , 2007. [12] J. E. Griffin and P . J. Brown. Inference with normal-gamma prior distributions in regression problems. Bayesian Analysis , 5(1):171–188, 2010. [13] C. Hans. Bayesian lasso re gression. Biometrika , 96:835–845, 2009. [14] C. J. Hogg art, J. C. Whittaker , and Da vid J. Balding M. De Iorio. Simultaneous analysis of all SNPs in genome-wide and re-sequencing association studies. PLoS Genetics , 4(7), 2008. [15] H. Ishwaran and J. S. Rao. Spike and slab variable selection: Frequentist and Bayesian strate- gies. The Annals of Statistics , 33(2):pp. 730–773, 2005. [16] I. M. Johnstone and B. W . Silverman. Needles and straw in haystacks: Empirical Bayes esti- mates of possibly sparse sequences. Annals of Statistics , 32(4):pp. 1594–1649, 2004. [17] M. I. Jordan, Z. Ghahramani, T . S. Jaakkola, and L. K. Saul. An intr oduction to variational methods for graphical models . MIT Press, Cambridge, MA, USA, 1999. [18] T . J. Mitchell and J. J. Beauchamp. Bayesian variable selection in linear regression. J ournal of the American Statistical Association , 83(404):pp. 1023–1032, 1988. [19] T . Park and G. Casella. The Bayesian lasso. Journal of the American Statistical Association , 103:681–686(6), 2008. [20] N. G. Polson and J. G. Scott. Alternati ve global-local shrinkage rules using hypergeometric- beta mixtures. Discussion P aper 2009-14, Department of Statistical Science, Duke Uni v ersity , 2009. [21] W . E. Strawderman. Proper Bayes minimax estimators of the multiv ariate normal mean. The Annals of Mathematical Statistics , 42(1):pp. 385–388, 1971. [22] R. T ibshirani. Regression shrinkage and selection via the lasso. Journal of the Royal Statistical Society . Series B (Methodological) , 58(1):267–288, 1996. [23] L. Tierne y and J. B. Kadane. Accurate approximations for posterior moments and marginal densities. Journal of the American Statistical Association , 81(393):82–86, 1986. [24] M. E. Tipping. Sparse Bayesian learning and the relev ance vector machine. J ournal of Machine Learning Resear ch , 1, 2001. 9

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment