Perfect Simulation for Mixtures with Known and Unknown Number of Components

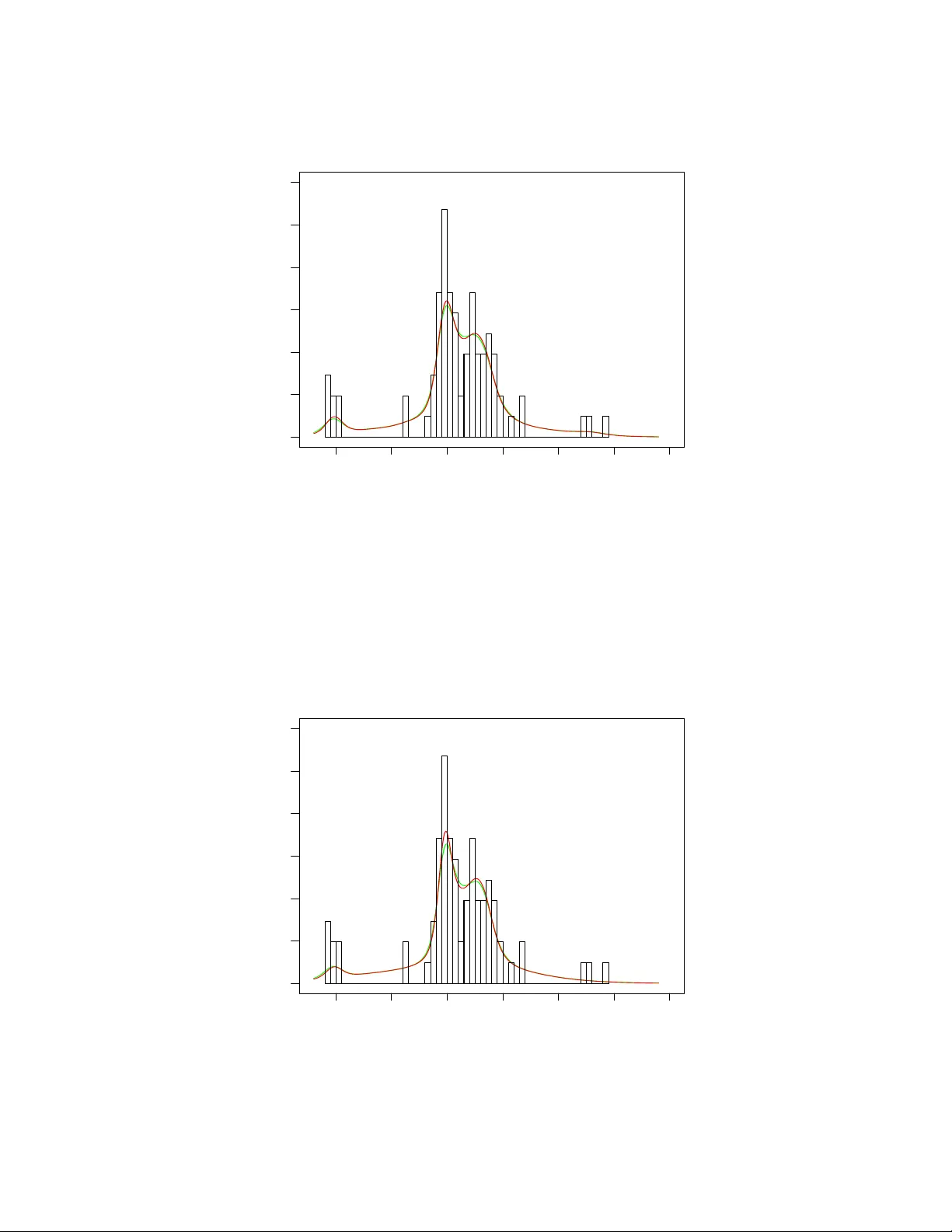

We propose and develop a novel and effective perfect sampling methodology for simulating from posteriors corresponding to mixtures with either a known (fixed) or an unknown number of components. For the latter we consider the Dirichlet process-based mixtu…

Authors: Sabyasachi Mukhopadhyay, Sourabh Bhattacharya (Bayesian, Interdisciplinary Research Unit