Interpreting Graph Cuts as a Max-Product Algorithm

The maximum a posteriori (MAP) configuration of binary variable models with submodular graph-structured energy functions can be found efficiently and exactly by graph cuts. Max-product belief propagation (MP) has been shown to be suboptimal on this c…

Authors: Daniel Tarlow, Inmar E. Givoni, Richard S. Zemel

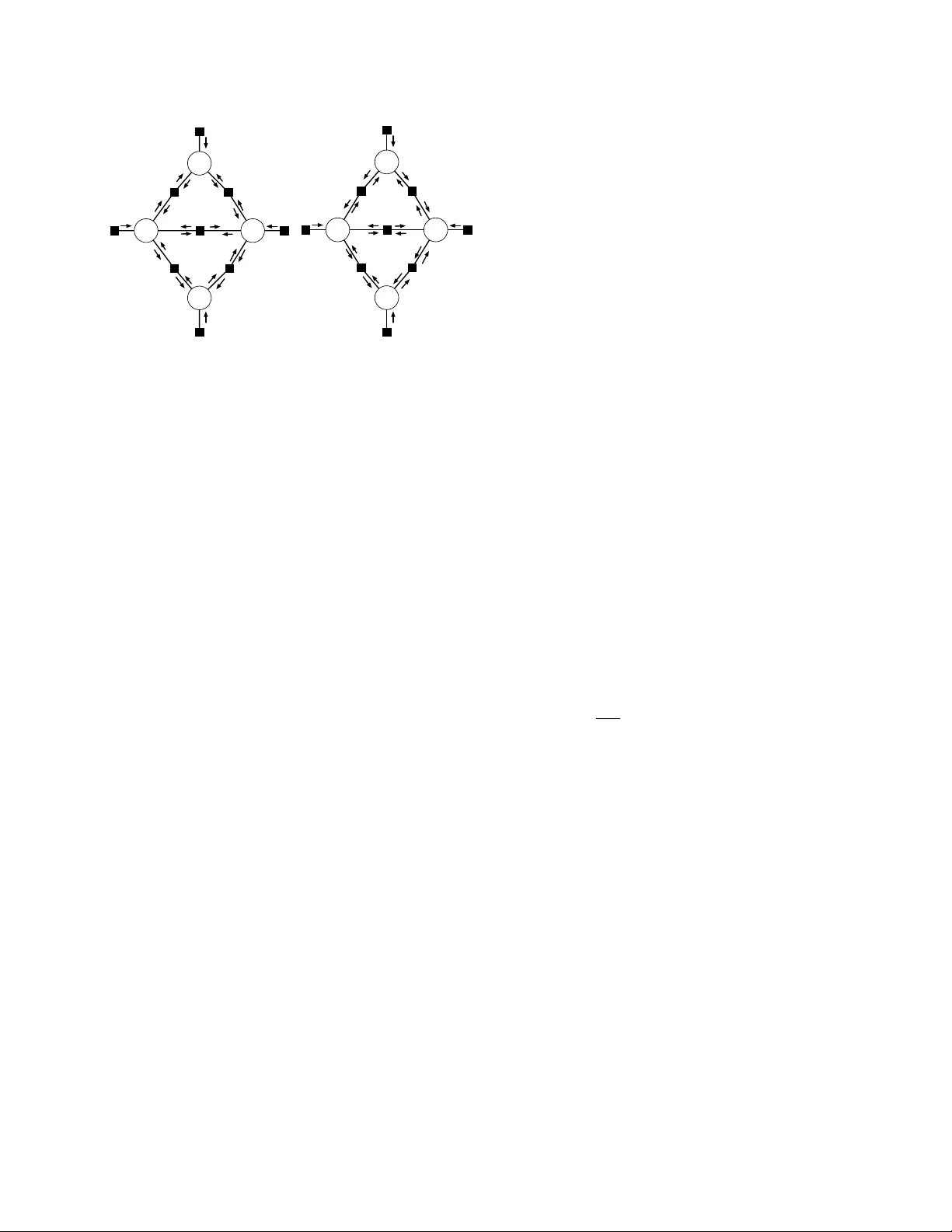

Daniel Tarlow, Inmar E. Givoni, Richard S. Zemel, Brendan J. Frey
University of Toronto, Toronto, ON M5S 3G4
{dtarlow@cs, inmar@psi, zemel@cs, frey@psi}.toronto.edu

Abstract

The maximum a posteriori (MAP) configuration of binary variable models with submodular graph-structured energy functions can be found efficiently and exactly by graph cuts. Max-product belief propagation (MP) has been shown to be suboptimal on this class of energy functions by a canonical counterexample where MP converges to a suboptimal fixed point (Kulesza & Pereira, 2008). In this work, we show that under a particular scheduling and damping scheme, MP is equivalent to graph cuts, and thus optimal. We explain the apparent contradiction by showing that with proper scheduling and damping, MP always converges to an optimal fixed point. Thus, the canonical counterexample only shows the suboptimality of MP with a particular suboptimal choice of schedule and damping. With proper choices, MP is optimal.

1 Introduction

Maximum a posteriori (MAP) inference in probabilistic graphical models is a fundamental machine learning task with applications to fields such as computer vision and computational biology. There are various algorithms designed to solve MAP problems, each providing different problem-dependent theoretical guarantees and empirical performance. It is often difficult to choose which algorithm to use in a particular application. In some cases, however, there is a "gold-standard" algorithm that clearly outperforms competing algorithms, such as the case of graph cuts for binary submodular problems.¹ A popular and more general, but also occasionally erratic, algorithm is max-product belief propagation (MP).

¹ From here on, we drop "graph-structured" and refer to the energy functions just as binary submodular. Unless explicitly specified otherwise, though, we always assume that energies are defined on a simple graph.

Our aim in this work is to establish the precise relationship between MP and graph cuts, namely that graph cuts is a special case of MP. To do so, we map analogous aspects of the algorithms to each other: message scheduling in MP to selecting augmenting paths in graph cuts; passing messages on a chain to pushing flow through an augmenting path; message damping to limiting flow to be the bottleneck capacity of an augmenting path; and letting messages reinforce themselves on a loopy graph to the graph cuts connected components decoding scheme.

This equivalence implies strong statements regarding the optimality of MP on binary submodular energies defined on graphs with arbitrary topology, which may appear to contradict much of what is known about MP: all empirical results showing MP to be suboptimal on binary submodular problems, and the theoretical results of Kulesza and Pereira (2008) and Wainwright and Jordan (2008), which show analytically that MP converges to the wrong solution. We analyze this issue in depth and show there is no contradiction, but implicit in the previous analysis and experiments is a suboptimal choice of scheduling and damping, leading the algorithms to converge to bad fixed points. Our results give a more complete characterization of these issues, showing (a) there always exists an optimal fixed point for binary submodular energy functions, and (b) with proper scheduling and damping MP can always be made to converge to an optimal fixed point.

The existence of the optimal MP fixed point can alternatively be derived as a consequence of the analysis of the zero temperature limit of convexified sum-product in Weiss et al. (2007), along with the well-known fact that the standard linear program relaxation is tight for binary submodular energies. Our proof of the existence of the fixed point, then, is an alternative, more direct proof. However, we believe our construction of the fixed point to be novel and significant, particularly due to the fact that the construction comes from simply running ordinary max-product within the standard algorithmic degrees of freedom, namely damping and scheduling.

Our analysis is significant for many reasons. Two of the most important are as follows. First, it shows that previous constructions of MP fixed points for binary submodular energy functions critically depend on the particular schedule, damping, and initialization. Though there exist suboptimal fixed points, there also always exist optimal fixed points, and with proper care, the bad fixed points can always be avoided. Second, it simplifies the space of MAP inference algorithms, making explicit the connection between two popular and seemingly distinct algorithms. The mapping improves our understanding of message scheduling and gives insight into how graph cut-like algorithms might be developed for more general settings.

2 Background and Notation

We are interested in finding maximizing assignments of distributions $P(\mathbf{x}) \propto e^{-E(\mathbf{x})}$ where $\mathbf{x} = \{x_1, \ldots, x_M\} \in \{0, 1\}^M$. We can equivalently seek to minimize the energy $E$, and for the sake of exposition we choose to present the analysis in terms of energies.²

² This makes "max-product" a bit of a misnomer, since in reality, we will be analyzing min-sum belief propagation. The two are equivalent, however, so we will use "max-product" (MP) throughout, and it should be clear from context when we mean "min-sum".

Binary Submodular Energies: We restrict our attention to submodular energy functions over binary variables. Graph-structured energy functions are defined on a simple graph, $G = (\mathcal{V}, \mathcal{E})$, where each node is associated with a variable $x$. Potential functions $\Theta_i$ and $\Theta_{ij}$ map configurations of individual variables and pairs of variables whose corresponding nodes share an edge, respectively, to real values. We write this energy function as

$$E(\mathbf{x}; \Theta) = \sum_{i \in \mathcal{V}} \Theta_i(x_i) + \sum_{ij \in \mathcal{E}} \Theta_{ij}(x_i, x_j). \tag{1}$$

$E$ is said to be submodular if and only if for all $ij \in \mathcal{E}$, $\Theta_{ij}(0, 0) + \Theta_{ij}(1, 1) \le \Theta_{ij}(0, 1) + \Theta_{ij}(1, 0)$. We use the shorthand notation $[\theta^0_i, \theta^1_i] = [\Theta_i(0), \Theta_i(1)]$. When $E$ is submodular, it is always possible to represent all pairwise potentials in the canonical form

$$\begin{bmatrix} \Theta_{ij}(0,0) & \Theta_{ij}(0,1) \\ \Theta_{ij}(1,0) & \Theta_{ij}(1,1) \end{bmatrix} = \begin{bmatrix} 0 & \theta^{01}_{ij} \\ \theta^{10}_{ij} & 0 \end{bmatrix}$$

with $\theta^{01}_{ij}, \theta^{10}_{ij} \ge 0$ without changing the energy of any assignment. We assume that energies are expressed in this form throughout.³ In our notation, $\theta^{01}_{ij}$ and $\theta^{10}_{ji}$ refer to the same quantity.

2.1 Graph Cuts

Graph cuts is a well-known algorithm for minimizing graph-structured binary submodular energy functions, which is known to converge to the optimal solution in low-order polynomial time by transformation into a maximum network flow problem. The energy function is converted into a weighted directed graph $GC = (\mathcal{V}_{GC}, \mathcal{E}_{GC}, C)$, where $C$ is an edge function that maps each directed edge $(i, j) \in \mathcal{E}_{GC}$ to a non-negative real number representing the initial capacity of the edge. One non-terminal node $v_i \in \mathcal{V}_{GC}$ is constructed for each variable $x_i \in \mathcal{V}$, and two terminal nodes, a source $s$ and a sink $t$, are added to $\mathcal{V}_{GC}$. Edges in $\mathcal{E}$ are mapped to two edges in $\mathcal{E}_{GC}$, one per direction. The initial capacity of the directed edge $(i, j) \in \mathcal{E}_{GC}$ is set to $\theta^{01}_{ij}$, and the initial capacity of the directed edge $(j, i) \in \mathcal{E}_{GC}$ is set to $\theta^{10}_{ij}$. In addition, directed edges are created from the source node to every non-terminal node, and from every non-terminal node to the sink node.
The initial capacity of the terminal edge from $s$ to $v_i$ is set to $\theta^1_i$, and the initial capacity of the terminal edge from $v_i$ to $t$ is set to $\theta^0_i$. We assume that the energy function has been normalized so that one of the initial terminal edge capacities is 0 for every non-terminal node.

Residual Graph: Throughout the course of an augmenting paths-based max-flow algorithm, residual capacities (or equivalently hereafter, capacities) are maintained for each directed edge. The residual capacity is the amount of flow that can be pushed through an edge either by using unused capacity or by reversing flow that has been pushed in the opposite direction. Given a flow of $f_{ij}$ from $v_i$ to $v_j$ via edge $(i, j)$ and a flow of $f_{ji}$ from $v_j$ to $v_i$ via edge $(j, i)$, the residual capacity is $r_{ij} = \theta^{01}_{ij} - f_{ij} + f_{ji}$. An augmenting path is a path from $s$ to $t$ through the residual graph that has positive capacity. We call the minimum residual capacity of any edge along an augmenting path the bottleneck capacity for the augmenting path.

Two Phases of Graph Cuts: Augmenting path algorithms for graph cuts proceed in two phases. In Phase 1, flow is pushed through augmenting paths until all source-connected nodes (i.e., those with an edge from source to node with positive capacity) are separated from all sink-connected nodes (i.e., those with an edge to the sink with positive capacity). In Phase 2, to determine assignments, a connected components algorithm is run to find all nodes that are reachable from the source and sink, respectively.

³ See Kolmogorov and Zabih (2002) for a more thorough discussion of representational matters.

Phase 1 – Reparametrization: The first phase can be viewed as reparameterizing the energy function, moving mass from unary and pairwise potentials to other pairwise potentials and from unary potentials to a constant potential (Kohli & Torr, 2007). The constant potential is a lower bound on the optimum. We begin by rewriting (1) as

$$E(\mathbf{x}; \Theta) = \sum_{i \in \mathcal{V}} \theta^0_i (1 - x_i) + \sum_{i \in \mathcal{V}} \theta^1_i x_i + \sum_{ij \in \mathcal{E}} \theta^{01}_{ij} (1 - x_i) x_j + \sum_{ij \in \mathcal{E}} \theta^{10}_{ij} x_i (1 - x_j) + \theta_{\text{const}}, \tag{2}$$

where we added a constant term $\theta_{\text{const}}$, initially set to 0, to $E(\mathbf{x}; \Theta)$ without changing the energy. A reparametrization is a change in potentials from $\Theta$ to $\tilde{\Theta}$ such that $E(\mathbf{x}; \Theta) = E(\mathbf{x}; \tilde{\Theta})$ for all assignments $\mathbf{x}$. Pushing flow corresponds to factoring out a constant, $f$, from some subset of terms and applying the following algebraic identity to terms from (2):

$$f \cdot \left[ x_1 + (1 - x_1) x_2 + \ldots + (1 - x_{N-1}) x_N + (1 - x_N) \right] = f \cdot \left[ x_1 (1 - x_2) + \ldots + x_{N-1} (1 - x_N) + 1 \right].$$

By ensuring that $f$ is positive (choosing paths that can sustain flow), the constant potential can be made to grow at each iteration. When no paths exist with nonzero $f$, $\theta_{\text{const}}$ is the optimal energy value (Ford & Fulkerson, 1956).

In terms of the individual coefficients, pushing flow through a path corresponds to reparameterizing entries of the potentials on an augmenting path:

$$\theta^1_1 := \theta^1_1 - f \tag{3}$$
$$\theta^0_N := \theta^0_N - f \tag{4}$$
$$\theta^{01}_{ij} := \theta^{01}_{ij} - f \quad \text{for all } ij \text{ on the path} \tag{5}$$
$$\theta^{10}_{ij} := \theta^{10}_{ij} + f \quad \text{for all } ij \text{ on the path} \tag{6}$$
$$\theta_{\text{const}} := \theta_{\text{const}} + f. \tag{7}$$

Phase 2 – Connected Components: After no more paths can be found, most nodes will not be directly connected to the source or the sink by an edge that has positive capacity in the residual graph. In order to determine assignments, information must be propagated from the nodes that are directly connected to a terminal via positive capacity edges, through non-terminal nodes, to the rest of the graph. A connected components procedure is run, and any node that is (possibly indirectly) connected to the source is assigned label 0, and any node that is (possibly indirectly) connected to the sink is given label 1.
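The two phases above can be sketched end to end in Python. This is a minimal illustration under stated assumptions, not the authors' implementation: potentials are integers (so capacity tests are exact), augmenting paths are found by breadth-first search (any path-finding rule would do), and the helper names `to_canonical`, `energy`, `brute_force`, and `graph_cut` are hypothetical. Each pairwise table is first moved into the canonical form of Section 2, which pushes a unary term onto each endpoint and a constant into $\theta_{\text{const}}$; each push then subtracts the bottleneck capacity $f$ along the path and adds it to the reverse edges and to the constant, mirroring (3)-(7). With the capacity convention above ($s \to v_i$ carries $\theta^1_i$, $v_i \to t$ carries $\theta^0_i$), the cut cost matches the energy when source-side nodes take label 0 and sink-side nodes take label 1, so the sketch labels a node 1 exactly when it can still reach the sink in the residual graph, and 0 otherwise (including terminal-disconnected nodes).

```python
from collections import deque
from itertools import product


def to_canonical(table):
    """Rewrite a submodular 2x2 table T[x_i][x_j] as
    const + [0, c - a] on x_i + [0, d - c] on x_j + theta01 * (1 - x_i) * x_j."""
    (a, b), (c, d) = table
    if a + d > b + c:
        raise ValueError("pairwise table is not submodular")
    return a, (0, c - a), (0, d - c), b + c - a - d, 0  # const, du_i, du_j, theta01, theta10


def energy(x, unary, pairwise):
    """Eq. (1) for a labeling x indexed by node."""
    return (sum(unary[i][x[i]] for i in unary)
            + sum(t[x[i]][x[j]] for (i, j), t in pairwise.items()))


def brute_force(unary, pairwise):
    """Exhaustive minimum; assumes the nodes are 0..n-1."""
    return min(energy(x, unary, pairwise) for x in product((0, 1), repeat=len(unary)))


def graph_cut(unary, pairwise):
    """Augmenting-path graph cuts; returns (min_energy, labels)."""
    theta = {i: list(u) for i, u in unary.items()}
    const = 0  # theta_const in Eq. (2)
    cap = {}  # residual capacity of each directed edge

    def add(u, v, c):
        cap[(u, v)] = cap.get((u, v), 0) + c
        cap.setdefault((v, u), 0)

    for (i, j), table in pairwise.items():
        k, du_i, du_j, t01, t10 = to_canonical(table)
        const += k
        for node, du in ((i, du_i), (j, du_j)):
            theta[node][0] += du[0]
            theta[node][1] += du[1]
        add(i, j, t01)  # paid when x_i = 0, x_j = 1
        add(j, i, t10)  # paid when x_i = 1, x_j = 0
    for i, (t0, t1) in theta.items():
        m = min(t0, t1)  # normalize so one terminal capacity is 0
        const += m
        add("s", i, t1 - m)
        add(i, "t", t0 - m)

    nbrs = {}
    for u, v in cap:
        nbrs.setdefault(u, []).append(v)

    while True:  # Phase 1: push flow along shortest augmenting paths
        parent, queue = {"s": None}, deque(["s"])
        while queue and "t" not in parent:
            u = queue.popleft()
            for v in nbrs[u]:
                if v not in parent and cap[(u, v)] > 0:
                    parent[v] = u
                    queue.append(v)
        if "t" not in parent:
            break
        path, v = [], "t"
        while parent[v] is not None:
            path.append((parent[v], v))
            v = parent[v]
        f = min(cap[e] for e in path)  # bottleneck capacity
        for u, v in path:  # mirrors updates (3)-(7)
            cap[(u, v)] -= f
            cap[(v, u)] += f
        const += f

    # Phase 2: label 1 iff the node can still reach the sink in the residual graph
    reach_t, queue = {"t"}, deque(["t"])
    while queue:
        v = queue.popleft()
        for u in nbrs[v]:
            if u not in reach_t and cap[(u, v)] > 0:
                reach_t.add(u)
                queue.append(u)
    return const, {i: int(i in reach_t) for i in unary}


ising = ((0, 1), (1, 0))  # disagreement costs 1
unary = {0: (0, 5), 1: (0, 2), 2: (3, 0), 3: (5, 0)}
pairwise = {(0, 1): ising, (1, 2): ising, (2, 3): ising, (0, 3): ising}
best, labels = graph_cut(unary, pairwise)
print(best, labels, brute_force(unary, pairwise))  # 2 {0: 0, 1: 0, 2: 1, 3: 1} 2
```

On this instance the optimum $(0, 0, 1, 1)$ is unique, so the decoded labels have to match it.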
Nodes that are not connected to either terminal can be given an arbitrary label without changing the energy of the configuration, so long as within a connected component the labels are consistent. In practice, terminal-disconnected nodes are typically given label 0.

2.2 Strict Max-Product Belief Propagation

Strict max-product belief propagation (Strict MP) is an iterative, local, message passing algorithm that can be used to find the MAP configuration of a distribution specified by a tree-structured graphical model. The algorithm can equally be applied to loopy graphs. Employing the energy function notation, the algorithm is usually referred to as min-sum. Using the factor-graph representation (Kschischang et al., 2001), the iterative updates on simple graph-structured energies involve sending messages from factors to variables,

$$m_{\Theta_i \to x_i}(x_i) = \Theta_i(x_i) \tag{8}$$
$$m_{\Theta_{ij} \to x_j}(x_j) = \min_{x_i} \left[ \Theta_{ij}(x_i, x_j) + m_{x_i \to \Theta_{ij}}(x_i) \right] \tag{9}$$

and from variables to factors, $m_{x_i \to \Theta_{ij}}(x_i) = m_{\Theta_i \to x_i}(x_i) + \sum_{i' \in N(i) \setminus \{j\}} m_{\Theta_{i'i} \to x_i}(x_i)$, where $N(i)$ is the set of neighbor variables of $i$ in $G$. In Strict MP, we require that all messages are updated in parallel in each iteration. Assignments are typically decoded from beliefs as $\hat{x}_i = \arg\min_{x_i} b_i(x_i)$, where $b_i(x_i) = \Theta_i(x_i) + \sum_{j \in N(i)} m_{\Theta_{ji} \to x_i}(x_i)$. Pairwise beliefs are defined as $b_{ij}(x_i, x_j) = \Theta_{ij}(x_i, x_j) + m_{x_i \to \Theta_{ij}}(x_i) + m_{x_j \to \Theta_{ij}}(x_j)$.⁴

2.3 Max-Product Belief Propagation

In practice, Strict MP does not converge well, so a combination of damping and asynchronous message passing schemes is typically used. Thus, MP is actually a family of algorithms. We formally define the family as follows:

Definition 1 (Max-Product Belief Propagation). MP is a message passing algorithm that computes messages as in (9).
Messages may be initialized arbitrarily, scheduled in any (possibly dynamic) ordering, and damped in any (possibly dynamic) manner, so long as the fixed points of the algorithm are the same as the fixed points of Strict MP.

We believe this definition to be broad enough to contain most algorithms that are considered to be max-product, yet restrictive enough to exclude, e.g., fundamentally different linear program-based algorithms like tree-reweighted max-product.

⁴ Note that we only need message values to be correct up to a constant, so it is common practice to normalize messages and beliefs so that the minimum entry in a message or belief vector is 0.

There has been much work on scheduling messages, including a recent string of work on dynamic asynchronous scheduling (Elidan et al., 2006; Sutton & McCallum, 2007), which shows that adaptive schedules can lead to improved convergence. An equally important practical concern is message damping. Dueck (2010), for example, discusses the importance of damping in detail with respect to using MP for exemplar-based clustering (affinity propagation). Our definition of MP includes these variants.

2.4 Augmenting Path = Chain Subgraph

Our scheduling makes use of dynamically chosen chains, which are analogous to augmenting paths. Formally, an augmenting path is a sequence of nodes

$$T = (s, v_{T_1}, v_{T_2}, \ldots, v_{T_{n-1}}, v_{T_n}, t) \tag{10}$$

where a nonzero amount of flow can be pushed through the path. It will be useful to refer to $\mathcal{E}_{GC}(T)$ as the set of edges encountered along $T$. Let $\mathbf{x}_T \subseteq \mathbf{x}$ be the variables corresponding to non-terminal nodes in $T$. The potentials corresponding to the edges $\mathcal{E}_{GC}(T)$ and the entries of these potentials are denoted by $\Theta_T$ and a subset of potential values $\theta_T$. Formally,

$$\mathbf{x}_T = \{x_{T_1}, \ldots, x_{T_n}\}, \qquad \Theta_T = \Theta_{T_1} \cup \{\Theta_{T_i, T_{i+1}}\}_{i=1}^{n-1} \cup \Theta_{T_n}, \qquad \theta_T = \theta^1_{T_1} \cup \{\theta^{01}_{T_i, T_{i+1}}\}_{i=1}^{n-1} \cup \theta^0_{T_n}. \tag{11}$$

Note that there are only two unary potentials on a chain corresponding to an augmenting path, which correspond to terminal edges in $\mathcal{E}_{GC}(T)$. It will be useful to map edges in $\mathcal{E}_{GC}(T)$ to edges in the equivalent factor graph representation. We use $\mathcal{E}_{FG}(T)$ to denote all edges in $G$ between potentials in $\Theta_T$ and variables in $\mathbf{x}_T$. As an example, an augmenting path $T = (s, v_i, v_j, v_k, t)$ in the graph cut formulation would be mapped to $\mathbf{x}_T = \{x_i, x_j, x_k\}$, $\Theta_T = \{\Theta_i, \Theta_{ij}, \Theta_{jk}, \Theta_k\}$, and $\theta_T = \{\theta^1_i, \theta^{01}_{ij}, \theta^{01}_{jk}, \theta^0_k\}$.

3 Augmenting Paths Max-Product

In this section, we present Augmenting Paths Max-Product (APMP), a particular scheduling and damping scheme for MP that, like graph cuts, has two phases. At each iteration of the first phase, the scheduler returns a chain on which to pass messages. Holding all other messages fixed, messages are passed forward and backward on the chain, with standard message normalization applied, to complete the iteration. Adaptive message damping applied to messages leaving unary factors (described below) ensures that messages propagate across the chain in a particularly structured way. Messages leaving pairwise factors and messages from variables to factors are not damped. Phase 1 terminates when the scheduler indicates there are no more messages to send; then, in Phase 2, Strict MP is run until convergence (we guarantee it will converge). The full APMP is given in Algorithm 1.

3.1 Phase 1: Path Scheduling and Damping

For convenience, we use the convention that chains go from "left" to "right," where the left-most variable on a chain corresponding to an augmenting path is $x_{T_1}$, and the right-most variable is $x_{T_N}$.
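For reference, the forward and backward passes that APMP performs on each chain are ordinary min-sum updates (9). The sketch below runs both passes once on an isolated chain, with unary messages equal to the potentials as in (8) and with messages left unnormalized; it is an illustration with hypothetical names (`chain_min_sum`, `chain_energy`), not the APMP schedule itself, which additionally damps the end unary messages. Because a chain is a tree, each resulting belief is an exact min-marginal, so every belief vector attains the optimal energy as its minimum, and per-variable argmins recover the optimum when it is unique, as it is here.

```python
from itertools import product


def chain_min_sum(unary, pairwise):
    """Forward and backward min-sum passes, Eq. (9), on a chain x_0 - ... - x_{n-1}.

    unary[k] = (theta0, theta1); pairwise[k][a][c] is the energy of (x_k = a, x_{k+1} = c).
    Messages are left unnormalized, so on a chain each returned belief is an exact
    min-marginal (its minimum equals the optimal energy).
    """
    n = len(unary)
    fwd = [[0.0, 0.0] for _ in range(n)]  # message into x_k from the factor on its left
    bwd = [[0.0, 0.0] for _ in range(n)]  # message into x_k from the factor on its right
    for k in range(n - 1):  # forward pass: left to right
        for c in (0, 1):
            fwd[k + 1][c] = min(pairwise[k][a][c] + unary[k][a] + fwd[k][a] for a in (0, 1))
    for k in range(n - 2, -1, -1):  # backward pass: right to left
        for a in (0, 1):
            bwd[k][a] = min(pairwise[k][a][c] + unary[k + 1][c] + bwd[k + 1][c] for c in (0, 1))
    return [[unary[k][x] + fwd[k][x] + bwd[k][x] for x in (0, 1)] for k in range(n)]


def chain_energy(x, unary, pairwise):
    return (sum(unary[k][x[k]] for k in range(len(x)))
            + sum(pairwise[k][x[k]][x[k + 1]] for k in range(len(x) - 1)))


unary = [(0, 3), (2, 0), (0, 0), (3, 0)]
pairwise = [((0, 2), (1, 0)), ((0, 1), (1, 0)), ((0, 2), (2, 0))]
beliefs = chain_min_sum(unary, pairwise)
decoded = [min((0, 1), key=b.__getitem__) for b in beliefs]
best = min(chain_energy(x, unary, pairwise) for x in product((0, 1), repeat=len(unary)))
print(beliefs)  # [[2.0, 3.0], [3.0, 2.0], [4.0, 2.0], [5.0, 2.0]]
print(decoded, best)  # [0, 1, 1, 1] 2
```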
With this left-to-right convention, a forward pass is from left to right, and a backward pass is from right to left.

Suppose that at the end of iteration $t - 1$, the outgoing message from the unary factor at the start of the chain used in iteration $t$, $T = T(t)$, is $m^{(t-1)}_{\Theta_{T_1} \to x_{T_1}}(x_{T_1}) = (0, b)^\top$. If the factor increments its outgoing message in such a way as to guarantee that $b + f \le \theta^{01}_\beta$ for every pairwise factor $\beta$ along $T$, the messages shown in Fig. 1 will be computed (see Corollary 1 below). Later analysis will explain why this is desirable. Accounting for message normalization, this can be accomplished by limiting the change $\Delta m(\cdot) = m^{(t)}(\cdot) - m^{(t-1)}(\cdot)$ in the outgoing message from the first unary factor on a path to be $\Delta m_{\Theta_{T_1} \to x_{T_1}}(x_{T_1}) = (0, f)^\top$. We also constrain the increment in the backward direction to equal the increment in the forward direction.

Under these constraints, the largest $f$ we can choose is

$$f = \min\left\{ \theta^0_{T_N} - m^{(t-1)}_{\Theta_{T_N} \to x_{T_N}}(0),\;\; \theta^1_{T_1} - m^{(t-1)}_{\Theta_{T_1} \to x_{T_1}}(1),\;\; \min_{ij \,\mid\, \Theta_{ij} \in \Theta_T} \left[ \theta^{01}_{ij} - m^{(t-1)}_{x_i \to \Theta_{ij}}(1) + m^{(t-1)}_{x_i \to \Theta_{ij}}(0) \right] \right\}, \tag{12}$$

which is exactly the bottleneck capacity of the corresponding augmenting path. In other words, limiting the change in outgoing message value from unary factors to be the bottleneck capacity of the augmenting path will ensure that message increments propagate through a chain unmodified; that is, when one variable on the path receives an increment of $(0, f)^\top$, as $x_i$ does in Fig. 1(b), it will propagate the same increment to the next variable on the path ($x_j$), as in Fig. 1(c). This is proved in Lemma 1.

Damping: The key, simple idea of the damping scheme is that we want unary factors to increment their messages by the bottleneck capacity of the current chain. The necessary value of $f$ can be achieved by damping the outgoing message from the first and last unary potential on each chain.
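The bottleneck rule (12) and the claim that a small enough increment crosses every pairwise factor unchanged (formalized as Lemma 1 in Section 4) can be checked numerically. This sketch assumes canonical pairwise potentials $[0, \theta^{01}; \theta^{10}, 0]$, starts from all-zero messages, and uses hypothetical helper names; it is not the authors' code. With end capacities 4 and 6 and pairwise capacities 3, 2, 5, the bottleneck is $f = 2$: an increment of 2 reaches the end of the chain intact, while an increment of 3 is clipped to 2 at the weakest factor.

```python
def factor_message(theta01, theta10, incoming):
    """Eq. (9) for a canonical factor [[0, theta01], [theta10, 0]]:
    out(x_j) = min over x_i of Theta_ij(x_i, x_j) + incoming(x_i)."""
    a, b = incoming
    return (min(a, theta10 + b), min(theta01 + a, b))


def bottleneck(theta1_first, m_first, theta0_last, m_last, links):
    """Eq. (12). m_first / m_last are the current outgoing messages of the end unary
    factors; links holds (theta01_ij, m_{x_i -> Theta_ij}) for each pairwise factor."""
    caps = [theta0_last - m_last[0], theta1_first - m_first[1]]
    caps += [t01 - msg[1] + msg[0] for t01, msg in links]  # residual r_ij, Eq. (14)
    return min(caps)


# Hypothetical chain with three pairwise factors, starting from all-zero messages.
theta01s, theta10s = [3, 2, 5], [0, 1, 0]
f = bottleneck(4, (0, 0), 6, (0, 0), [(t, (0, 0)) for t in theta01s])


def push(increment):
    """Send (0, increment) from the first unary factor down the chain. All other
    incoming messages are zero here, so each variable forwards what it receives."""
    msg = (0, increment)
    for t01, t10 in zip(theta01s, theta10s):
        msg = factor_message(t01, t10, msg)
    return msg


print(f, push(f), push(f + 1))  # 2 (0, 2) (0, 2)
```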
For the first unary factor, if we previously have message $(0, b)^\top$ on the edge, then to produce message $(0, b + f)^\top$, we can apply damping $\lambda_{T_1}(t)$, where $\lambda_{T_1}(t)$ is chosen by solving the equation

$$\lambda_{T_1}(t) \cdot b + (1 - \lambda_{T_1}(t)) \cdot \theta^1_{T_1} = f + b, \tag{13}$$

yielding $\lambda_{T_1}(t) = \dfrac{\theta^1_{T_1} - f - b}{\theta^1_{T_1} - b}$. The algorithm never chooses an augmenting path with 0 capacity, so we will never get a zero denominator. Analogous damping is applied in the opposite direction. This dynamic damping will then produce the same message increments in the forward and backward direction, which will be a key property used in later analysis.

Figure 1: The pairwise potential is in square brackets. Only messages changed relative to the previous subfigure are shown in parentheses. Let $a = a_1 + a_2 + a_3$ and $b = b_1 + b_2 + b_3$. (a) Start of iteration. The capacity of the edge $ij$ is $f$. (b) Inductive assumption that each node on the augmenting path will receive a message increment of $(0, f)$ from the left neighbor. (c) Passing messages completes the inductive step, where $x_j$ receives an incremented message. (d) Similarly, receiving an incremented message in the backward direction, then updating messages from $j$ to $i$, completes the iteration.

SCHEDULE Implementation: The combination of potentials and messages on the edges contains the same information as the residual capacities in the graph cuts residual graph.
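The damping weight in Eq. (13) is a one-line computation. The sketch below assumes the end-of-chain unary potentials are normalized as in graph cuts (so the undamped message is the potential itself, by (8)) and that damping means the convex combination $\lambda \cdot \text{old} + (1 - \lambda) \cdot \text{new}$; the function names and numbers are illustrative only. Applied to the first entry instead of the second, the same formula gives the damping at the last variable of the chain.

```python
def damping_factor(theta, prev, f):
    """Solve lam * prev + (1 - lam) * theta = prev + f for lam (Eq. (13)).

    theta is the non-zero entry of the normalized end-of-chain unary potential and
    prev the matching entry of its previous outgoing message; theta - prev is the
    residual capacity of the terminal edge, which is positive on any path the
    scheduler returns.
    """
    if theta - prev <= 0:
        raise ValueError("terminal edge has no residual capacity")
    return (theta - f - prev) / (theta - prev)


def damped(old, new, lam):
    """Damped update: lam * old + (1 - lam) * new, entrywise."""
    return tuple(lam * o + (1 - lam) * n for o, n in zip(old, new))


# First variable: unary (0, 5), previous message (0, b) with b = 1, bottleneck f = 2.
lam_first = damping_factor(5.0, 1.0, 2.0)
print(lam_first, damped((0.0, 1.0), (0.0, 5.0), lam_first))  # 0.5 (0.0, 3.0)

# Last variable, symmetric in the first entry: unary (4, 0), previous message (0, 0).
lam_last = damping_factor(4.0, 0.0, 2.0)
print(lam_last, damped((0.0, 0.0), (4.0, 0.0), lam_last))  # 0.5 (2.0, 0.0)
```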
Using the equivalence between messages and residual capacities, any algorithm for finding augmenting paths in the graph cut setting can be used to find chains to pass messages on for MP. The terms being minimized over in Eq. (12) are residual capacities, which are defined in terms of messages and potentials. Specifically, at the end of any iteration of the MP algorithm described in the next section, the residual capacities of edges between non-terminal nodes can be constructed from potentials and current messages $m$ as follows:

$$r_{ij} = \theta^{01}_{ij} - m_{x_i \to \Theta_{ij}}(1) + m_{x_i \to \Theta_{ij}}(0). \tag{14}$$

The difference in messages $m_{x_i \to \Theta_{ij}}(1) - m_{x_i \to \Theta_{ij}}(0)$ is then equivalent to the difference in flows $f_{ij} - f_{ji}$ in the graph cuts formulation. The residual capacities for terminal edges can be constructed from messages and potentials related to unary factors:

$$r_{si} = \theta^1_i - m_{\Theta_i \to x_i}(1) \tag{15}$$
$$r_{it} = \theta^0_i - m_{\Theta_i \to x_i}(0). \tag{16}$$

Algorithm 1 Augmenting Paths Max-Product

    f(0) ← ∞
    t ← 0
    while f(t) > 0 do                                   {Phase 1}
        T(t), f(t) ← SCHEDULE(FG(t))
        λ_T1(t), λ_TN(t) ← DAMPING(FG(t), T(t), f(t))
        FG(t+1) ← MP(FG(t), E_FG(T(t)), λ_T1(t), λ_TN(t))
        t ← t + 1
    end while
    while not converged do                              {Phase 2}
        Run Strict MP
    end while

3.2 Phase 2: Strict MP

When the scheduler cannot find a positive-capacity path on which to pass messages, it switches to its second phase and passes all messages at all iterations, with no damping, i.e., Strict MP. It continues until reaching a fixed point. (We will prove in Section 5 that if potentials are finite, it will always converge.) The choice of Strict MP is not essential. We can prove the same results for any reasonable scheduling of messages.

4 APMP Phase 1 Analysis

Assume that at the beginning of iteration $t$, each variable $x_i \in \mathbf{x}_{T(t)}$ has received an incoming message from its left-neighboring factor $\Theta_\alpha$, $m_{\Theta_\alpha \to x_i}(x_i) = (a, b)^\top$.
We want to show that when each variable receives an incremented message, $(a, b + f)^\top$, the increment $(0, f)^\top$, up to a normalizing constant, will be propagated through the variable and the next factor, $\Theta_{ij}$, to the next variable on the path. The pairwise potential at the next pairwise factor along the chain will be $\Theta_{ij}$. The damping scheme ensures that $\theta^{10}_{ij} \ge a$ and $\theta^{01}_{ij} \ge b + f$. Lemma 1 shows that under these conditions, factors will propagate messages unchanged.

Lemma 1 (Message-Preserving Factors). When passing standard MP messages with the factors as above, $\theta^{10}_{ij} \ge a$, and $\theta^{01}_{ij} \ge b + f$, the outgoing factor-to-variable message is equal to the incoming variable-to-factor message, i.e., $m_{\Theta_{ij} \to x_j} = m_{x_i \to \Theta_{ij}}$ and $m_{\Theta_{ij} \to x_i} = m_{x_j \to \Theta_{ij}}$.

Proof. This follows from plugging in values to the message updates. For the forward direction, with the canonical potential and a normalized incoming message $(a, b + f)^\top$ with nonnegative entries, (9) gives $m_{\Theta_{ij} \to x_j}(0) = \min(a, \theta^{10}_{ij} + b + f) = a$ and $m_{\Theta_{ij} \to x_j}(1) = \min(\theta^{01}_{ij} + a, b + f) = b + f$; the backward direction is analogous. See supplementary materials.

Lemma 1 allows us to easily compute the values of all messages passed during the execution of Phase 1 of APMP and thus the change in beliefs at each variable.

Corollary 1 (Structured Belief Changes). Before and after an iteration $t$ of Phase 1 APMP, the change in unary belief at each variable in $\mathbf{x}_{T(t)}$ will be $(0, 0)^\top$, up to a constant normalization.

Proof. Under the APMP damping scheme, the change in message from the first unary factor in $T(t)$ will be $(0, f)^\top$, and the change in message from the last unary factor in $T(t)$ will be $(f, 0)^\top$, where $f$ is as defined in Eq. (12). Without message normalization, these messages will propagate unchanged through the pairwise factors in $T(t)$ by Lemma 1. Variable-to-factor messages will also propagate the change unaltered. Message normalization subtracts a positive constant $c = \min(a, b + f)$ from both entries in a message vector. Existing message values will only get smaller, so the message-preserving property of factors will be maintained.
Thus, each variable will receive a message change of $(-c_L, f - c_L)^\top$ from the left and a message change of $(f - c_R, -c_R)^\top$ from the right. The total change in belief is then $(f - c_L - c_R, f - c_L - c_R)^\top$, which completes the proof.

Fig. 1 illustrates the structured message changes.

4.1 Message Free View

Here, using the reparametrization view of max-product from Wainwright et al. (2004), we analyze the equivalent "message-free" version of the first phase of APMP: one that directly modifies potentials rather than sending messages. Corollary 1 shows that all messages in APMP can be analytically computed. We then use these message values to compute the change in parameterization due to the messages at each iteration. The main result in this section is that this change in parameterization is exactly equivalent to that performed by graph cuts.

An important identity, which is a special case of the junction tree representation (Wainwright et al., 2004), states that we can equivalently view MP on a tree as reparameterizing $\Theta$ according to beliefs $b$:

$$\sum_{i \in \mathcal{V}} \tilde{\Theta}_i(x_i) + \sum_{ij \in \mathcal{E}} \tilde{\Theta}_{ij}(x_i, x_j) = \sum_{i \in \mathcal{V}} b_i(x_i) + \sum_{ij \in \mathcal{E}} \left[ b_{ij}(x_i, x_j) - b_i(x_i) - b_j(x_j) \right], \tag{17}$$

where $\tilde{\Theta}$ is a reparametrization, i.e., $E(\mathbf{x}; \Theta) = E(\mathbf{x}; \tilde{\Theta})$ for all $\mathbf{x}$. At any point, we can stop and calculate current beliefs and apply the reparameterization (i.e., replace original potentials with reparameterized potentials and set all messages to 0). This holds for damped factor graph max-product even if factor-to-variable messages are damped.

"Used" and "Remainder" Energies: To analyze reparameterizations, we begin by splitting $E$ into two components: a part that has been used so far, and a remainder part. The used part is defined as the energy function that would have produced the current messages if no damping were used. The remainder is everything else.
Since damping is only applied at unary potentials, we assign all pairwise potentials to the used component: $\Theta^{(U)}_{ij}(x_i, x_j) = \Theta_{ij}(x_i, x_j)$. The used component of unary potentials can easily be defined as the current message leaving the factor: $\Theta^{(U)}_i(x_i) = m_{\Theta_i \to x_i}(x_i)$. Consequently, the remainder pairwise potentials are zero, and the remainder unary potentials are $\Theta^{(R)}_i(x_i) = \Theta_i(x_i) - \Theta^{(U)}_i(x_i)$. We apply the message-free interpretation to get a reparameterized version of $E(\mathbf{x}; \Theta^{(U)})$, then add in the remainder component of the energy unmodified.

Analyzing Beliefs: The parameterization in Eq. (17) depends on unary and pairwise beliefs. We consider the change in beliefs from that defined by messages at the start of an iteration of APMP to that defined by messages at the end of an iteration. There are three cases to consider.

Case 1: Variables and potentials not in or neighboring $\mathbf{x}_{T(t)}$ will not have any potentials or adjacent beliefs changed, so the reparametrization will not change.

Case 2: Potentials neighboring $x \in \mathbf{x}_{T(t)}$ but not in $\mathcal{E}_{FG}(T(t))$ could possibly be affected by the belief at a variable in $\mathbf{x}_{T(t)}$, since the belief at an edge depends on the beliefs at the variables at each of its endpoints. However, by Corollary 1, after applying standard normalization, this belief does not change after a forward and backward pass of messages, so overall they are unaltered.

Case 3: We now consider the belief of potentials $\Theta^{(U)}_{ij} \in \Theta^{(U)}_{T(t)}$. This is the most involved case, where the parametrization does change, but it does so in a very structured way.

Lemma 2. The change in pairwise belief on the current augmenting path $T(t)$ from the beginning of an iteration $t$ to the end of an iteration is

$$\Delta b_{ij}(x_i, x_j) = \begin{bmatrix} 0 & -f \\ +f & 0 \end{bmatrix}, \quad ij \in T(t). \tag{18}$$

Proof.
This follows from applying the standard reparameterization (17) to messages before and after an iteration of Phase 1 APMP. See supplementary material for details.

Unary Reparameterizations: As discussed above, the used part of the energy is grouped with messages and reparameterized as standard, while the remainder part is left unchanged and is added in at the end:

$$\tilde{\Theta}_i(x_i) = b_i(x_i; \Theta^{(U)}) + \Theta^{(R)}_i(x_i). \tag{19}$$

Parameterizations defined in this way are proper reparameterizations of the original energy function.

Lemma 3. The changes in parameterization during iteration $t$ of Phase 1 APMP at variables $x_{T_1}$ and $x_{T_N}$ are, respectively, $(0, -f)^\top$ and $(-f, 0)^\top$. The change in all other unary potentials is $(0, 0)^\top$.

Proof. The Phase 1 damping scheme ensures that the message leaving the first factor on $T = T(t)$ is incremented by $(0, f)^\top$. This means that $\Theta^{(U)}_{T_1}(x_{T_1})$ is incremented by $(0, f)^\top$, so $\Theta^{(R)}_{T_1}(x_{T_1})$ is decremented by $(0, f)^\top$ to maintain the decomposition constraint. Unary beliefs do not change, so the new parameterization is then $\Delta\Theta_{T_1}(x_{T_1}) = \Delta b_{T_1}(x_{T_1}) + \Delta\Theta^{(R)}_{T_1}(x_{T_1}) = (0, -f)^\top$. A similar argument holds for $\Delta\Theta_{T_N}$. The only unary potentials involved in an iteration of APMP are endpoints of $T(t)$, so no other $\Theta^{(R)}$ values will change. The total change in parameterization at non-endpoint unary potentials is then $(0, 0)^\top$.

Full Reparameterizations: Finally, we are ready to prove our first main result.

Theorem 1. The difference between two reparametrizations induced by the messages in Phase 1 APMP, before and after passing messages on the chain corresponding to augmenting path $T(t)$, is equal to the difference between reparametrizations of graph cuts before and after pushing flow through the equivalent augmenting path.

Proof. The change in unary parameterization is given by Lemma 3.
The change in pairwise parameterization is $\Delta\Theta_{ij}(x_i, x_j) = \Delta b_{ij}(x_i, x_j) - \Delta b_i(x_i) - \Delta b_j(x_j) = \Delta b_{ij}(x_i, x_j)$, where $\Delta b_{ij}(x_i, x_j)$ is given by Lemma 2. Putting the two together, we see that the changes in potential entries are exactly the same as those performed by graph cuts in (3)-(7):

$$\Delta\Theta_{T_1}(x_{T_1}) = \begin{bmatrix} 0 \\ -f \end{bmatrix} \tag{20}$$
$$\Delta\Theta_{T_N}(x_{T_N}) = \begin{bmatrix} -f \\ 0 \end{bmatrix} \tag{21}$$
$$\Delta\Theta_{T_i}(x_{T_i}) = \begin{bmatrix} 0 \\ 0 \end{bmatrix}, \quad i \ne 1, N \tag{22}$$
$$\Delta\Theta_{T_i, T_j}(x_{T_i}, x_{T_j}) = \begin{bmatrix} 0 & -f \\ +f & 0 \end{bmatrix}, \quad \Theta_{T_i, T_j} \in \Theta_{T(t)}. \tag{23}$$

This completes the proof of equivalence between Phase 1 APMP and Phase 1 of graph cuts.

Figure 2: Illustration of the first two "used" energies and associated fixed points constructed by APMP on the problem from Fig. 4. Potentials $\Theta^{(U)}$ are given in square brackets. Messages have no parentheses. Edges with messages equal to $(0, 0)$, and pairwise potentials, which are assumed strong, are not drawn to reduce clutter. (a) First energy. (b) Second energy. Note that both sets of messages give a max-product fixed point for the respective energy.

Fig. 2 shows $\Theta^{(U)}$ and Phase 1 APMP messages from running two iterations on the example from Fig. 4.

5 APMP Phase 2 Analysis

We now consider the second phase of APMP. Throughout this section, we will work with the reparameterized energy that results from applying the equivalent reparameterization view of MP at the end of APMP Phase 1; that is, we have applied the reparameterization to potentials, and reset messages to 0. All results could equivalently be shown by working with original potentials and messages at the end of Phase 1, but the chosen presentation is simpler.
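The equivalence in Theorem 1, and the reparameterization step that this section starts from, can be checked on a small example. The sketch below (hypothetical names, not the authors' code) runs one Phase 1 iteration on an isolated chain whose messages all start at zero, writes the damped increments $(0, f)$ and $(f, 0)$ directly into the end unary messages instead of going through $\lambda$, leaves messages unnormalized, and then applies (17) and (19). Compared with the graph-cut update (3)-(7) on the same path, each unary potential is shifted by the constant $f$ and each pairwise potential by $-f$, so the two parameterizations differ only by constants, and the MP reparameterization leaves the energy of every assignment unchanged.

```python
from itertools import product


def chain_energy(x, unary, pair):
    return (sum(unary[k][x[k]] for k in range(len(x)))
            + sum(pair[k][x[k]][x[k + 1]] for k in range(len(x) - 1)))


def apmp_iteration_on_chain(unary, t01, t10):
    """One Phase 1 APMP iteration on an isolated chain that starts from zero messages.

    unary[k] = (theta0, theta1); the factor between x_k and x_{k+1} is
    [[0, t01[k]], [t10[k], 0]]. Returns the potentials given by Eqs. (17)/(19),
    the canonical pairwise tables, and the bottleneck f.
    """
    n = len(unary)
    pair = [[[0.0, t01[k]], [t10[k], 0.0]] for k in range(n - 1)]
    f = min([unary[0][1], unary[-1][0]] + list(t01))  # Eq. (12) with zero messages
    used = [[0.0, 0.0] for _ in range(n)]  # outgoing unary messages = "used" unaries
    used[0][1] += f   # damped increment (0, f) at the first variable
    used[-1][0] += f  # damped increment (f, 0) at the last variable
    fwd = [[0.0, 0.0] for _ in range(n)]  # into x_k from the factor on its left
    bwd = [[0.0, 0.0] for _ in range(n)]  # into x_k from the factor on its right
    for k in range(n - 1):
        for c in (0, 1):
            fwd[k + 1][c] = min(pair[k][a][c] + used[k][a] + fwd[k][a] for a in (0, 1))
    for k in range(n - 2, -1, -1):
        for a in (0, 1):
            bwd[k][a] = min(pair[k][a][c] + used[k + 1][c] + bwd[k + 1][c] for c in (0, 1))
    belief = [[used[k][x] + fwd[k][x] + bwd[k][x] for x in (0, 1)] for k in range(n)]
    # Eq. (19): unary belief of the used energy plus the untouched remainder.
    new_unary = [[belief[k][x] + unary[k][x] - used[k][x] for x in (0, 1)] for k in range(n)]
    # Eq. (17): pairwise belief minus the two unary beliefs.
    new_pair = [[[pair[k][a][c] + used[k][a] + fwd[k][a] + used[k + 1][c] + bwd[k + 1][c]
                  - belief[k][a] - belief[k + 1][c] for c in (0, 1)] for a in (0, 1)]
                for k in range(n - 1)]
    return new_unary, new_pair, pair, f


unary = [(0.0, 4.0), (1.0, 0.0), (0.0, 2.0), (5.0, 0.0)]
t01, t10 = [3.0, 2.0, 6.0], [0.0, 1.0, 0.0]
new_unary, new_pair, pair, f = apmp_iteration_on_chain(unary, t01, t10)

# Graph-cut update (3)-(7) along the same path s -> x_0 -> x_1 -> x_2 -> x_3 -> t.
gc_unary = [list(u) for u in unary]
gc_unary[0][1] -= f
gc_unary[-1][0] -= f
gc_pair = [[[0.0, t01[k] - f], [t10[k] + f, 0.0]] for k in range(len(t01))]

unary_offsets = [[new_unary[k][x] - gc_unary[k][x] for x in (0, 1)] for k in range(4)]
pair_offsets = [[[new_pair[k][a][c] - gc_pair[k][a][c] for c in (0, 1)] for a in (0, 1)]
                for k in range(3)]
print(f, unary_offsets, pair_offsets)  # 2.0; unary offsets all [2.0, 2.0]; pairwise entries all -2.0

same_energy = all(chain_energy(x, new_unary, new_pair) == chain_energy(x, unary, pair)
                  for x in product((0, 1), repeat=4))
print(same_energy)  # True
```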
At this point, there are no paths with nonzero capacity between a unary potential of the form $(0, a)^T$, $a > 0$, and a unary potential of the form $(b, 0)^T$, $b > 0$. Practically, as in graph cuts, breadth-first search could be used at this point to find an optimal assignment. However, we will show that running Strict MP leads to convergence to an optimal fixed point. This proves the existence of an optimal MP fixed point for any binary submodular energy and gives a constructive algorithm (APMP) for finding it.

[Figure 3 graphic: three islands, left to right, with beliefs of the form $(*, 0)$, $(0, *)$, $(*, 0)$.]

Figure 3: A decomposition into three homogeneous islands. The left-most and right-most islands have beliefs of the form $(\alpha, 0)^T$, while the middle has beliefs of the form $(0, \beta)^T$. Non-touching cross-island lines indicate that messages passed from one island to another will be identically 0 after any number of internal iterations of message passing within an island.

Our analysis relies upon the reparameterization at the end of Phase 1 defining what we term homogeneous islands of variables.

Definition 2. A homogeneous island is a set of variables $x_H$ connected by positive-capacity edges such that each variable $x_i \in x_H$ has normalized beliefs $(\alpha_i, \beta_i)^T$ where either $\forall i.\ \alpha_i = 0$ or $\forall i.\ \beta_i = 0$. Further, after any number of rounds of message passing amongst variables within the island, any message $m_{\Theta_{ij} \to x_j}(x_j)$ from a variable $x_i$ inside the island to a variable $x_j$ outside the island is identically 0, and vice versa.

Call the variables inside a homogeneous island with nonzero unary potentials the seeds of the island. Fig. 3 shows an illustration of homogeneous islands. Homogeneous islands allow us to analyze messages independently within each island, without considering cross-island messages.

Lemma 4. At the end of Phase 1, the messages of APMP define a collection of homogeneous islands.

Proof.
This is essentially equivalent to how the max-flow min-cut theorem proves that the Ford-Fulkerson algorithm has found a minimum cut when no more augmenting paths can be found. The boundaries between islands are the locations of the cuts. See supplementary material.

Lemma 4 lets us analyze Strict MP independently within each homogeneous island, because it shows that no nonzero messages will cross island boundaries. Thus, we can prove that internally, each island will reach an MP fixed point:

Lemma 5 (Internal Convergence). Iterating Strict MP inside a homogeneous island of the form $(\alpha, 0)^T$ (or $(0, \beta)^T$) will lead to a fixed point where beliefs are of the form $(\alpha'_i, 0)^T$, $\alpha'_i \geq 0$ (or $(0, \beta'_i)^T$, $\beta'_i \geq 0$) at each variable in the island.

Proof. (Sketch) We prove the case where the unary potentials inside the island have the form $(\alpha_i, 0)^T$. The case where they have the form $(0, \beta_i)^T$ is entirely analogous. At the beginning of Phase 2, all unary potentials will be of the form $(\alpha, 0)^T$, $\alpha \geq 0$. By the positive-capacity edge connectivity property of homogeneous islands, messages of the form $(\alpha, 0)^T$, $\alpha > 0$ will eventually be propagated to all variables in the island by Strict MP. In addition, messages can only reinforce (and not cancel) each other. For example, in a single-loop homogeneous island, messages will cycle around the loop, getting larger as unary potentials are added to incoming messages and passed around the loop. Messages will only stop growing when the variable-to-factor messages become stronger than the pairwise potential. On acyclic island structures, Strict MP will obviously converge. On loopy graphs, messages will be monotonically increasing until they are capped by the pairwise potentials (i.e., the pairwise potential is saturated).
The rate of message increase is lower bounded by some constant (that depends on the strength of the unary potentials and the size of loops in the island graph, which are fixed), so the sequence will converge when all pairwise potentials are saturated.

We can now prove our second main result:

Theorem 2 (Guaranteed Convergence and Optimality of APMP Fixed Point). APMP converges to an optimal fixed point on binary submodular energy functions.

Proof. After running Phase 2 of APMP, Lemma 5 shows that each homogeneous island will converge to a fixed point where beliefs at all variables in the island can be decoded to give the same assignment as the initial seed of the island. This is the same assignment as the optimal graph cuts-style connected components decoding would yield. Cross-island messages are all zero, and if a variable is not in an island, it has zero potential, sends and receives all zero messages, and can be assigned arbitrarily. Thus, we are globally at an MP fixed point, and beliefs can be decoded at each variable to give the optimal assignment.

Finally, we return to the canonical example used to show the suboptimality of MP on binary submodular energies. The potentials and messages defining a suboptimal fixed point, which is reached by certain suboptimal scheduling and damping schemes, are illustrated in Fig. 4(a). If, however, we run APMP, Phase 1 ends with the messages shown in Fig. 2(b) and Phase 2 converges to the fixed point shown in Fig. 4(b). Decoding beliefs from the messages in Fig. 4(b) indeed gives the optimal assignment of $(1, 1, 1, 1)$.

[Figure 4 graphic not recoverable from the extracted text: variables 1-4; panel (a) Bad Fixed Point, panel (b) Optimal Fixed Point.]

Figure 4: The canonical counterexample used to show that MP is suboptimal on binary submodular energy functions. Potentials are given in square brackets. Messages have no parentheses. Pairwise potentials are symmetric with strength $\lambda$, and $\lambda > 2a > 2b$, making the optimal assignment $(1, 1, 1, 1)$. (a) The previously analyzed fixed point. Beliefs at 1 and 4 are $(a, 2\lambda)^T$, and at 2 and 3 are $(0, b + 3\lambda - 2a)^T$, which gives a suboptimal assignment. (b) We introduce a second fixed point. Beliefs at 1 and 4 are $(2\lambda + a, 0)^T$, and at 2 and 3 are $(3\lambda, b)^T$, which gives the optimal assignment. Our new scheduling and damping scheme guarantees MP will find an optimal fixed point like this for any binary submodular energy function.

6 Convergence Guarantees

There are several variants of message passing algorithms for MAP inference that have been theoretically analyzed. There are generally two classes of results: (a) guarantees about the optimality or partial optimality of solutions, assuming that the algorithm has converged to a fixed point; and (b) guarantees about the monotonicity of the updates with respect to some bound and whether the algorithm will converge.

Notable optimality guarantees exist for TRW algorithms (Kolmogorov & Wainwright, 2005) and MPLP (Globerson & Jaakkola, 2008). Kolmogorov and Wainwright (2005) prove that fixed points of TRW satisfying a weak tree agreement (WTA) condition yield optimal solutions to binary submodular problems. Globerson and Jaakkola (2008) show that if MPLP converges to beliefs with unique optimizing values, then the solution is optimal.

Convergence guarantees for message passing algorithms are generally significantly weaker.
MPLP is a coordinate ascent algorithm, so it is guaranteed to converge; however, in general it can get stuck at suboptimal points where no improvement is possible via updating the blocks used by the algorithm. Somewhat similarly, TRW-S is guaranteed not to decrease a lower bound. In the limit where the temperature goes to 0, convexified sum-product is guaranteed to converge to a solution of the standard linear program relaxation, but this is not numerically practical to implement (Weiss et al., 2007). However, even for binary submodular energies, we are unaware of results that guarantee convergence for convexified belief propagation, MPLP, or TRW-S in polynomial time.

Our analysis reveals schedules and message passing updates that guarantee convergence in low-order polynomial time to a state where an optimal assignment can be decoded for binary submodular problems. This follows directly from the analysis of max-flow algorithms. By using shortest augmenting paths, the Edmonds-Karp algorithm converges in $O(|V||E|^2)$ time (Edmonds & Karp, 1972). Analysis of the convergence time of Phase 2 is slightly more involved. Given an island with a large single loop of $M$ variables, with strong pairwise potentials (say, of strength $\lambda$) and only one small nonzero unary potential, say $(\alpha, 0)^T$, convergence will take on the order of $M \cdot \lambda / \alpha$ time, which could be large. In practice, though, we can reach the same fixed point by modifying the nonzero unary potentials to be $(\lambda, 0)^T$, in which case convergence will take just order $M$ time. Interestingly, this modification causes Strict MP to become equivalent to the connected components algorithm used by graph cuts to decode solutions.

7 Related Work

There are close relationships between many MAP inference algorithms. Here we discuss the relationships between some of the more notable and similar algorithms.
APMP is closely related to the dual block coordinate ascent algorithms discussed in (Sontag & Jaakkola, 2009): Phase 1 of APMP can be seen as block coordinate ascent in the same dual. Interestingly, even though both are optimal ascent steps, APMP reparameterizations are not identical to those of the sequential tree-block coordinate ascent algorithm in (Sontag & Jaakkola, 2009) when applied to the same chain.

Graph cuts is also highly related to the Augmenting DAG algorithm (Werner, 2007). Augmenting DAGs are more general constructs than augmenting paths, so with a proper choice of schedule, the Augmenting DAG algorithm could also implement graph cuts.

Our work follows in the spirit of residual belief propagation (RBP; Elidan et al., 2006), in that we are considering dynamic schedules for belief propagation. RBP is more general, but our analysis is much stronger.

Finally, our work is also related to the COMPOSE framework of Duchi et al. (2007). In COMPOSE, special-purpose algorithms are used to compute MP messages for certain combinatorial-structured subgraphs, including binary submodular ones. We show here that special-purpose algorithms are not needed: the internal graph cut algorithm can be implemented purely in terms of max-product. Given a problem that contains a graph cut subproblem but also has other high-order or nonsubmodular potentials, our work shows how to interleave solving the graph cuts problem and passing messages elsewhere in the graph.

8 Conclusions

While the proof of equivalence to graph cuts was moderately involved, the APMP algorithm is a simple special case of MP. The analysis technique is novel: rather than relying on the computation tree model for analysis, we directly mapped the operations being performed by the algorithm to a known combinatorial algorithm.
It would be interesting to consider whether there are other cases where the MP execution might be mapped directly to a combinatorial algorithm.

We have proven strong statements about MP fixed points on binary submodular energies. The analysis has a similar flavor to that of Weiss (2000), in that we construct fixed points where optimal assignments can be decoded, but where the magnitudes of the beliefs do not (generally) correspond to meaningful quantities. The strategy of isolating subgraphs might apply more broadly. For example, if we could isolate single-loop structures as we isolate homogeneous islands in Phase 1, a second phase might then be used to find optimal solutions in non-homogeneous, loopy regions.

An alternate view of Phase 1 is that it is an intelligent initialization of messages for Strict MP in Phase 2. In this light, our results show that initialization can provably determine whether MP is suboptimal or optimal, at least in the case of binary submodular energy functions.

The connection to graph cuts simplifies the space of MAP algorithms. There are now precise mappings between ideas from graph cuts and ideas from belief propagation (e.g., augmenting path strategies to scheduling). It allows us, for example, to map the capacity scaling method from graph cuts to schedules for message passing.

A broad, interesting direction of future work is to further investigate how insights related to graph cuts can be used to improve inference in the more general settings of multilabel, nonsubmodular, and high-order energy functions. At a high level, APMP separates the concerns of improving the dual objective (Phase 1) from concerns regarding decoding solutions (Phase 2). In loopy MP, this delays overcounting of messages until it is safe to do so. We believe that this and other concepts presented here will generalize. We are currently exploring the non-binary, non-submodular case.
Acknowledgements

We are indebted to Pushmeet Kohli for introducing us to the reparameterization view of graph cuts and for many insightful conversations throughout the course of the project. We thank anonymous reviewers for valuable suggestions that led to improvements in the paper.

References

Boykov, Y., & Kolmogorov, V. (2004). An experimental comparison of min-cut/max-flow algorithms for energy minimization in vision. IEEE Transactions on Pattern Analysis and Machine Intelligence, 26, 1124-1137.
Duchi, J., Tarlow, D., Elidan, G., & Koller, D. (2007). Using combinatorial optimization within max-product belief propagation. In NIPS.
Dueck, D. (2010). Affinity propagation: Clustering data by passing messages. Unpublished doctoral dissertation, University of Toronto.
Edmonds, J., & Karp, R. M. (1972). Theoretical improvements in algorithmic efficiency for network flow problems. Journal of the ACM, 19, 248-264.
Elidan, G., McGraw, I., & Koller, D. (2006). Residual belief propagation: Informed scheduling for asynchronous message passing. In UAI.
Ford, L. R., & Fulkerson, D. R. (1956). Maximal flow through a network. Canadian Journal of Mathematics, 8, 399-404.
Globerson, A., & Jaakkola, T. (2008). Fixing max-product: Convergent message passing algorithms for MAP LP-relaxations. In NIPS.
Kohli, P., & Torr, P. H. S. (2007). Dynamic graph cuts for efficient inference in Markov random fields. PAMI, 29(12), 2079-2088.
Kolmogorov, V., & Wainwright, M. (2005). On the optimality of tree-reweighted max-product message-passing. In UAI (p. 316-32).
Kolmogorov, V., & Zabih, R. (2002). What energy functions can be minimized via graph cuts? In ECCV (p. 65-81).
Kschischang, F., Frey, B. J., & Loeliger, H.-A. (2001). Factor graphs and the sum-product algorithm. IEEE Trans. Info. Theory, 47(2), 498-519.
Kulesza, A., & Pereira, F. (2008). Structured learning with approximate inference. In NIPS.
Schrijver, A. (2003). Combinatorial optimization: Polyhedra and efficiency. Berlin, Germany: Springer-Verlag.
Sontag, D., & Jaakkola, T. (2009). Tree block coordinate descent for MAP in graphical models. In Artificial Intelligence and Statistics (AISTATS).
Sutton, C., & McCallum, A. (2007). Improved dynamic schedules for belief propagation. In UAI.
Wainwright, Jaakkola, T., & Willsky, A. (2004). Tree consistency and bounds on the performance of the max-product algorithm and its generalizations. Statistics and Computing, 14(2).
Wainwright, & Jordan. (2008). Graphical models, exponential families, and variational inference. Foundations and Trends in Machine Learning.
Weiss, Y. (2000). Correctness of local probability propagation in graphical models with loops. Neural Computation, 12, 1-41.
Weiss, Y., Yanover, C., & Meltzer, T. (2007). MAP estimation, linear programming and belief propagation with convex free energies. In The 23rd Conference on Uncertainty in Artificial Intelligence.
Werner, T. (2007). A linear programming approach to max-sum problem: A review. PAMI, 29(7), 1165-1179.

A Supplementary Material Accompanying “Graph Cuts is a Max-Product Algorithm”

We provide additional details omitted from the main paper due to space limitations.

Lemma 1 (Message-Preserving Factors). When passing standard MP messages with the factors as above, $\theta^{10}_{ij} \geq a$, and $\theta^{01}_{ij} \geq b + f$, the outgoing factor-to-variable message is equal to the incoming variable-to-factor message, i.e., $m_{\Theta_{ij} \to x_j} = m_{x_i \to \Theta_{ij}}$ and $m_{\Theta_{ij} \to x_i} = m_{x_j \to \Theta_{ij}}$.

Proof. This follows simply from plugging values into the message updates. We show the $i$ to $j$ direction.
$$m_{x_i \to \Theta_{ij}}(x_i) = \begin{pmatrix} a \\ b + f \end{pmatrix}$$

$$m_{\Theta_{ij} \to x_j}(x_j) = \min_{x_i} \left[ \Theta_{ij}(x_i, x_j) + m_{x_i \to \Theta_{ij}}(x_i) \right] = \min_{x_i} \left[ \theta^{10}_{ij}\, x_i (1 - x_j) + \theta^{01}_{ij} (1 - x_i)\, x_j + a (1 - x_i) + (b + f)\, x_i \right]$$

$$m_{\Theta_{ij} \to x_j}(0) = \min\big(\theta^{10}_{ij} + b + f,\ a\big) = a$$

$$m_{\Theta_{ij} \to x_j}(1) = \min\big(a + \theta^{01}_{ij},\ b + f\big) = b + f$$

$$m_{\Theta_{ij} \to x_j}(x_j) = \begin{pmatrix} a \\ b + f \end{pmatrix},$$

where the final evaluation of the min functions used the assumptions that $\theta^{10}_{ij} \geq a$ and $\theta^{01}_{ij} \geq b + f$.

Lemma 2. The change in pairwise belief on the current augmenting path $T(t)$ from the beginning of an iteration $t$ to the end of the iteration is

$$\Delta b_{ij}(x_i, x_j) = \begin{pmatrix} 0 & -f \\ +f & 0 \end{pmatrix} + f, \qquad ij \in T(t). \tag{24}$$

Proof. At the start of the iteration, message $m_{x_i \to \Theta_{ij}}(x_i) = (a, b)^T$ for some $a, b$. As mentioned in the proof of Corollary 1, during APMP, $m_{x_j \to \Theta_{ij}}(x_j)$ will be incremented by exactly the same values as $m_{x_i \to \Theta_{ij}}(x_i)$, except in opposite positions. All messages are initialized to 0, so $m_{x_j \to \Theta_{ij}}(x_j) = (b, a)^T$. The initial belief is then

$$b^{\text{init}}_{ij}(x_i, x_j) = \begin{pmatrix} a + b & \theta^{01}_{ij} + 2a \\ \theta^{10}_{ij} + 2b & a + b \end{pmatrix} \tag{25}$$

$$= \begin{pmatrix} 0 & \theta^{01}_{ij} + a - b \\ \theta^{10}_{ij} + b - a & 0 \end{pmatrix} + \kappa_1. \tag{26}$$

After passing messages on $T(t)$, $m_{x_i \to \Theta_{ij}}(x_i) = (a, b + f)^T$ and $m_{x_j \to \Theta_{ij}}(x_j) = (b + f, a)^T$. The new belief is

$$b^{\text{final}}_{ij}(x_i, x_j) = \begin{pmatrix} a + b + f & \theta^{01}_{ij} + 2a \\ \theta^{10}_{ij} + 2b + 2f & a + b + f \end{pmatrix} \tag{27}$$

$$= \begin{pmatrix} 0 & \theta^{01}_{ij} + a - b - f \\ \theta^{10}_{ij} + b - a + f & 0 \end{pmatrix} + \kappa_2. \tag{28}$$

Here $\kappa_1 = a + b$ and $\kappa_2 = a + b + f$. Subtracting the initial belief from the final belief finishes the proof:

$$\Delta b_{ij}(x_i, x_j) = \begin{pmatrix} 0 & -f \\ f & 0 \end{pmatrix} + f. \tag{29}$$

Messages at the end of Phase 1 define homogeneous islands: We prove that messages at the end of Phase 1 define homogeneous islands in two parts:

Lemma 6 (Binary Mask Property).
If a pairwise factor $\Theta_{ij}$ computes outgoing message $m_{\Theta_{ij} \to x_j}(x_j) = (0, 0)^T$ given incoming message $m_{x_i \to \Theta_{ij}}(x_i) = (\alpha, 0)^T$ for some $\alpha > 0$, then it will compute the same $(0, 0)^T$ outgoing message given any incoming message of the form $m_{x_i \to \Theta_{ij}}(x_i) = (\alpha', 0)^T$, $\alpha' \geq 0$. (The same is true of messages with a zero in the opposite position.)

Proof. This essentially follows from plugging values into the message update equations. Suppose $m_{x_i \to \Theta_{ij}}(x_i) = (\alpha, 0)^T$ and $m_{\Theta_{ij} \to x_j}(x_j) = (0, 0)^T$. Plugging into the message update equation, we see that

$$m_{\Theta_{ij} \to x_j}(x_j) = \min_{x_i} \left[ \Theta_{ij}(x_i, x_j) + m_{x_i \to \Theta_{ij}}(x_i) \right] = \min_{x_i} \left[ \theta^{10}_{ij}\, x_i (1 - x_j) + \theta^{01}_{ij} (1 - x_i)\, x_j + \alpha (1 - x_i) \right]$$

$$m_{\Theta_{ij} \to x_j}(0) = \min\big(\theta^{10}_{ij},\ \alpha\big)$$

$$m_{\Theta_{ij} \to x_j}(1) = \min\big(\alpha + \theta^{01}_{ij},\ 0\big) = 0$$

$$m_{\Theta_{ij} \to x_j}(x_j) = \begin{pmatrix} \min(\theta^{10}_{ij}, \alpha) \\ 0 \end{pmatrix}.$$

In order for this to evaluate to $(0, 0)^T$ when $\alpha > 0$, $\theta^{10}_{ij}$ must be 0. Since $\theta^{10}_{ij} = 0$, no matter what value of $\alpha' \geq 0$ we are given, it is clear that $\min(\theta^{10}_{ij}, \alpha') = 0$.

Lemma 7 (Iterated Homogeneity). Homogeneous islands of type $(\alpha, 0)$ (or $(0, \beta)$) are closed under passing Strict MP messages between variables in the island. That is, a variable that starts with belief $(\alpha, 0)^T$, $\alpha \geq 0$ will have belief $(\alpha', 0)^T$, $\alpha' \geq 0$ after any number of rounds of message passing.

Proof. Initially, all beliefs have the form $(\alpha_i, 0)^T$, $\alpha_i \geq 0$ by definition. Given an incoming message of the form $(\alpha, 0)^T$, $\alpha \geq 0$, a submodular pairwise factor will compute outgoing message $(\min(\alpha, \theta^{10}_{ij}), 0)^T$, where $\theta^{10}_{ij} \geq 0$. The minimum of two non-negative quantities is non-negative. Variable-to-factor messages will sum messages of this same form, and the sum of two non-negative quantities is non-negative. Thus, all messages passed within the island will be of the form $(\alpha, 0)^T$, $\alpha \geq 0$, so beliefs will be of the proper form.
Lemma 6 shows that edges previously defining the boundary of the island will still define the boundary of the island. The case of incoming message $(0, \beta)^T$ is analogous.

Lemma 4. At the end of Phase 1, the messages of APMP define a collection of homogeneous islands.

Proof. (Sketch) This is essentially equivalent to the max-flow min-cut theorem, which proves the optimality of the Ford-Fulkerson algorithm when no more augmenting paths can be found. In our formulation, at the end of Phase 1, there are by definition no paths with nonzero capacity, which implies that along any path between a variable $i$ with belief $(\alpha, 0)^T$, $\alpha > 0$ and a variable $k$ with belief $(0, \beta)^T$, $\beta > 0$, there must be a factor-to-variable message that, given incoming message $(\alpha, 0)^T$, $\alpha > 0$, would produce outgoing message $(0, 0)^T$. (This is similarly true of opposite-direction messages.) Thus, to define the islands, start at each variable with nonzero belief, say of the form $(\alpha, 0)^T$, and search outwards by traversing each edge if and only if it would pass a nonzero message given incoming message $(\alpha, 0)^T$. Merge all variables encountered along the search into a single homogeneous island.
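The island construction in the proof sketch of Lemma 4 above can be sketched as a directed breadth-first search. This is a minimal Python sketch under stated assumptions: beliefs are stored already normalized (one entry is 0), and pairwise potentials are submodular 2x2 tables with zero diagonal, indexed `theta[x_i][x_j]`. The names `factor_to_var`, `reachable`, and `homogeneous_islands` and the data layout are hypothetical. Each edge is probed with a unit message of the seed's type; by Lemma 6, whether the outgoing message is zero does not depend on which positive magnitude is used. Searches from seeds of the same type that overlap are merged into one island.

```python
from collections import deque


def factor_to_var(theta, msg_in, source_first):
    """Min-sum message from a binary pairwise factor to its target variable.

    theta[x_i][x_j] is the potential of edge (i, j); msg_in = [m(0), m(1)] is
    the incoming variable-to-factor message from the source variable.
    source_first=True means the source is x_i (message i -> j); otherwise the
    source is x_j (message j -> i).
    """
    out = []
    for xt in (0, 1):
        costs = []
        for xs in (0, 1):
            pot = theta[xs][xt] if source_first else theta[xt][xs]
            costs.append(pot + msg_in[xs])
        out.append(min(costs))
    return out


def reachable(seed, kind, nbrs, probe=1.0):
    """Variables reached from `seed` along edges that pass a nonzero message.

    kind=0 probes with (probe, 0), i.e. an (alpha, 0) island; kind=1 probes
    with (0, probe), i.e. a (0, beta) island.
    """
    probe_msg = [probe, 0.0] if kind == 0 else [0.0, probe]
    seen, queue = {seed}, deque([seed])
    while queue:
        s = queue.popleft()
        for t, theta, source_first in nbrs[s]:
            if t in seen:
                continue
            if factor_to_var(theta, probe_msg, source_first)[kind] > 0:
                seen.add(t)
                queue.append(t)
    return seen


def homogeneous_islands(beliefs, pairwise):
    """Return a list of (kind, set_of_variables) islands.

    beliefs:  {v: (b0, b1)} normalized end-of-Phase-1 beliefs.
    pairwise: {(i, j): theta} submodular tables with zero diagonal.
    """
    nbrs = {v: [] for v in beliefs}
    for (i, j), theta in pairwise.items():
        nbrs[i].append((j, theta, True))   # factor (i, j) sends i -> j
        nbrs[j].append((i, theta, False))  # factor (i, j) sends j -> i
    islands = []
    for v, (b0, b1) in beliefs.items():
        if b0 == 0 and b1 == 0:
            continue                        # not a seed
        kind = 0 if b0 > 0 else 1           # (alpha, 0) seed or (0, beta) seed
        members = reachable(v, kind, nbrs)
        keep = []
        for k, s in islands:                # merge overlapping same-type islands
            if k == kind and s & members:
                members |= s
            else:
                keep.append((k, s))
        islands = keep + [(kind, members)]
    return islands


# Chain 0-1-2-3; edge (1, 2) has theta10 = 0, so neither probe crosses it.
beliefs = {0: (2.0, 0.0), 1: (0.0, 0.0), 2: (0.0, 0.0), 3: (0.0, 1.0)}
pairwise = {
    (0, 1): [[0.0, 1.0], [1.0, 0.0]],
    (1, 2): [[0.0, 2.0], [0.0, 0.0]],
    (2, 3): [[0.0, 1.0], [1.0, 0.0]],
}
print(homogeneous_islands(beliefs, pairwise))
```

In this toy chain, variables 0 and 1 form an $(\alpha, 0)$ island and variables 2 and 3 form a $(0, \beta)$ island, with edge (1, 2) playing the role of the cut.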
