Bounds on the Bayes Error Given Moments

We show how to compute lower bounds for the supremum Bayes error if the class-conditional distributions must satisfy moment constraints, where the supremum is with respect to the unknown class-conditional distributions. Our approach makes use of Curt…

Authors: Bela A. Frigyik, Maya R. Gupta

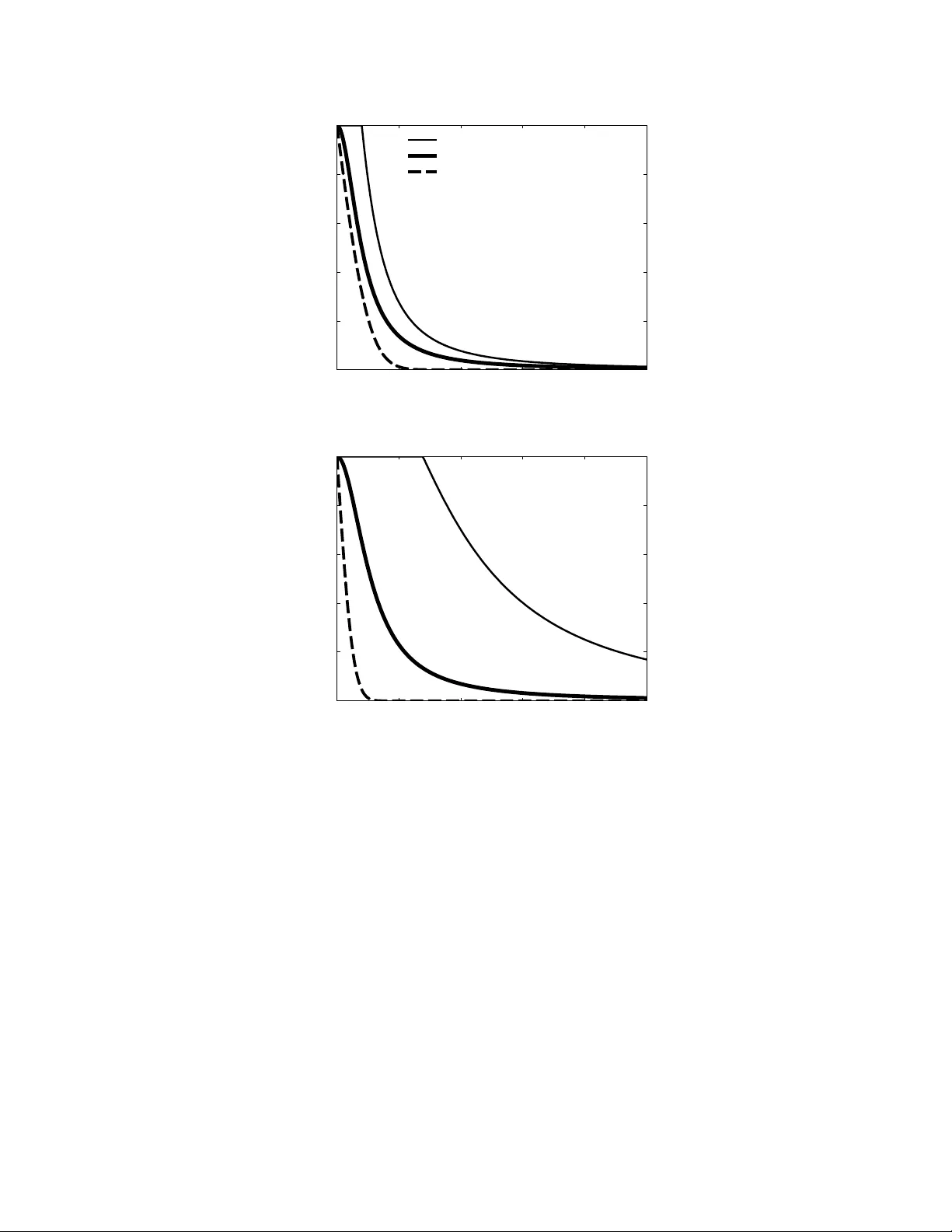

Abstract

We show how to compute lower bounds for the supremum Bayes error if the class-conditional distributions must satisfy moment constraints, where the supremum is with respect to the unknown class-conditional distributions. Our approach makes use of Curto and Fialkow's solutions for the truncated moment problem. The lower bound shows that the popular Gaussian assumption is not robust in this regard. We also construct an upper bound for the supremum Bayes error by constraining the decision boundary to be linear.

Index Terms

Bayes error, maximum entropy, moment constraint, truncated moments, quadratic discriminant analysis

I. INTRODUCTION

A standard approach in pattern recognition is to estimate the first two moments of each class-conditional distribution from training samples, and then assume the unknown distributions are Gaussians. Depending on the exact assumptions, this approach is called linear or quadratic discriminant analysis (QDA) [1], [2]. Gaussians are known to maximize entropy given the first two moments [3] and to have other nice mathematical properties, but how robust are they with respect to maximizing the Bayes error? To answer that, in this paper we investigate the more general question: "What is the maximum possible Bayes error given moment constraints on the class-conditional distributions?"

We present both a lower bound and an upper bound for the maximum possible Bayes error. The lower bound means that there exists a set of class-conditional distributions that have the given moments and have a Bayes error above the given lower bound. The upper bound means that no set of class-conditional distributions can exist that have the given moments and have a higher Bayes error than the given upper bound.
Our results provide some insight into how confident one can be in a classifier if one is confident in the estimation of the first $n$ moments. In particular, given only the certainty that two equally-likely classes have different means (and no trustworthy estimate of their variances), we show that the Bayes error could be $1/2$, that is, the classes may not be separable at all. Given the first two moments, our results show that the popular Gaussian assumption for the class distributions is fairly optimistic: the true Bayes error could be much worse. However, we show that the closer the class variances are, the more robust the Gaussian assumption is. In general, the given lower bound may be a helpful way to assess the robustness of the assumed distributions used in generative classifiers.

The given upper bound may also be useful in practice. Recall that the Bayes error is the error that would arise from the optimal decision boundary. Thus, if one has a classifier and finds that the sample test error is much higher than the given upper bound on the worst-case Bayes error, two possibilities should be considered. First, it may imply that the classifier's decision boundary is far from optimal, and that the classifier should be improved. Or, it could be that the test samples used to judge the test error are an unrepresentative set, and that more test samples should be taken to get a useful estimate of the test error.

There are a number of other results regarding the optimization of different functionals given moment constraints (e.g. [4]–[12]). However, we are not aware of any previous work bounding the maximum Bayes error given moment constraints. Some related problems are considered by Antos et al. [13]; a key difference to their work is that while we assume moments are given, they instead take as given iid samples from the class-conditional distributions, and they then bound the average error of an estimate of the Bayes error.
After some mathematical preliminaries, we give lower bounds for the maximum Bayes error in Section III. We construct our lower bounds by creating a truncated moment problem. The existence of a particular lower bound then depends on the feasibility of the corresponding truncated moment problem, which can be checked using Curto and Fialkow's solutions [14] (reviewed in the appendix). In Section IV, we show that the approach of Lanckriet et al. [10], which assumes a linear decision boundary, can be extended to provide an upper bound on the maximum Bayes error. We provide an illustration of the tightness of these bounds in Section V, then end with a discussion and some open questions.

Frigyik and Gupta are with the Department of Electrical Engineering, University of Washington, Seattle, WA 98195 (e-mail: frigyik@uw.edu, gupta@ee.washington.edu). This work was supported by the United States PECASE Award.

II. BAYES ERROR

Let $\mathcal{X}$ be a vector space and let $\mathcal{Y}$ be a finite set of classes. Without loss of generality we may assume that $\mathcal{Y} = \{1, \ldots, G\}$. Suppose that there is a measurable classification function $h : \mathcal{X} \to S_G$, where $S_G$ is the $(G-1)$ probability simplex. Then the $i$th component of $h(x)$ can be interpreted as the probability of class $i$ given $x$, and we write $p(i|x) = h(x)_i$. For a given $x \in \mathcal{X}$, the Bayes classifier selects the class $\hat{y}(x)$ that maximizes the posterior probability $p(i|x)$ (if there is a tie for the maximum, then any of the tied classes can be chosen). The probability that the Bayes classifier is wrong for a given $x$ is

$$P_e(x) = 1 - \max_{i \in \mathcal{Y}} p(i|x). \tag{II.1}$$

Suppose there is a probability measure $\nu$ defined on $\mathcal{X}$. Then the Bayes error is the expectation of $P_e$:

$$E[P_e] = 1 - \int_{x \in \mathcal{X}} \max_{i \in \mathcal{Y}} p(i|x) \, d\nu(x). \tag{II.2}$$

The fact that the $p(i|x)$ must sum to one over $i$, and thus $\max_i p(i|x) \geq 1/G$, implies a trivial upper bound on the Bayes error given in (II.2):

$$E[P_e] \leq 1 - \int_x \frac{1}{G} \, d\nu(x) = \frac{G-1}{G}.$$

Suppose that the probability measure $\nu$ is defined on $\mathcal{X}$ such that it is absolutely continuous w.r.t. the Lebesgue measure, so that it has density $p(x)$. Or suppose that it is discrete and expressed as

$$\nu = \sum_{j=1}^{\infty} \alpha_j \delta_{x_j},$$

where $\delta_{x_j}$ is the Dirac measure with support $x_j$, $\alpha_j > 0$ for all $j = 1, 2, \ldots$ and $\sum_{j=1}^{\infty} \alpha_j = 1$, and we say the density $p(x_j) = \alpha_j$. In either case, (II.2) can be expressed in terms of the $i$th class prior $p(i) = \int p(i|x) \, d\nu(x)$ and the $i$th class-conditional density $p(x|i)$ (or probability mass function $p(x_j|i)$) as follows:

$$E[P_e] = \begin{cases} 1 - \sum_{j=1}^{\infty} \max_{i \in \mathcal{Y}} p(x_j|i) \, p(i) & \text{in the discrete case,} \\ 1 - \int \max_{i \in \mathcal{Y}} p(x|i) \, p(i) \, dx & \text{in the absolutely continuous case.} \end{cases} \tag{II.3}$$

If $\nu$ is a general measure then Lebesgue's decomposition theorem says that it can be written as a sum of three measures: $\nu = \nu_d + \nu_{ac} + \nu_{sc}$. Here $\nu_d$ is a discrete measure and the other two measures are continuous; $\nu_{ac}$ is absolutely continuous w.r.t. the Lebesgue measure, and $\nu_{sc}$ is the remaining singular part. We have a convenient representation for both the discrete and the absolutely continuous part of a measure but not for the singular portion. For this reason we restrict our attention to measures that are either discrete or absolutely continuous (or a linear combination of these kinds of measures).

III. LOWER BOUNDS FOR WORST-CASE BAYES ERROR

Our strategy for providing a lower bound on the supremum Bayes error is to constrain the $G$ probability distributions $p(x|i)$, $i \in \mathcal{Y}$, to have an overlap of size $\epsilon \in (0, 1)$. Specifically, we constrain the $G$ distributions to each have a Dirac measure of size $\epsilon$ at the same location.
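As a small numerical illustration of this overlap strategy, the sketch below evaluates the discrete case of (II.3) for class-conditional probability mass functions that all place mass $\epsilon$ at a common atom. The function names, the atom locations, and the values of $G$ and $\epsilon$ are assumptions chosen for illustration only; with uniform priors and otherwise disjoint atoms, the computed Bayes error coincides with $\epsilon (G-1)/G$.

```python
def bayes_error_discrete(pmfs, priors):
    """Discrete case of (II.3): 1 - sum_j max_i p(x_j | i) p(i).

    pmfs:   one dict per class, mapping atom -> probability mass.
    priors: class priors p(i), in the same order as pmfs.
    """
    atoms = set().union(*(pmf.keys() for pmf in pmfs))
    return 1.0 - sum(
        max(prior * pmf.get(x, 0.0) for pmf, prior in zip(pmfs, priors))
        for x in atoms
    )


def shared_atom_pmfs(eps, locations):
    """Hypothetical helper: class i has mass eps at 0 and 1 - eps at locations[i].

    Assumes every entry of locations is non-zero and distinct.
    """
    return [{0.0: eps, z: 1.0 - eps} for z in locations]


G, eps = 3, 0.25                        # illustrative values, not from the paper
pmfs = shared_atom_pmfs(eps, [1.0, 2.0, 3.0])
priors = [1.0 / G] * G                  # uniform class priors
err = bayes_error_discrete(pmfs, priors)
bound = eps * (G - 1) / G               # error forced by the shared mass alone
print(err, bound)
```

If the non-shared atoms also coincide across classes, the error can only grow, which is why the shared mass gives a lower bound rather than an exact value.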
In the case of uniform class prior probabilities this makes the Bayes error at least $\epsilon \frac{G-1}{G}$. The largest such $\epsilon$ for which this overlap constraint is feasible determines the best lower bound on the worst-case Bayes error this strategy can provide. The maximum such feasible $\epsilon$ can be determined by checking whether there is a solution to a corresponding truncated moment problem (see the appendix for details). Note that this approach does not restrict the distributions from overlapping elsewhere, which would increase the Bayes error, and thus this approach only provides a lower bound to the maximum Bayes error.

We first present a constructive solution showing that no matter what the first moments are, the Bayes error can be arbitrarily bad if only the first moments are given. Then we derive conditions for the size of the lower bound for the two moment case and three moment case, and end with what we can say for the general case of $n$ moments.

Lemma III.1. Suppose the first moments $\gamma_{1,i}$ are given for each $i$ in a subset of $\{1, \ldots, G\}$ and the remaining class-conditional distributions are unconstrained. Then for all $1 > \epsilon > 0$ one can construct $G$ discrete or absolutely continuous class-conditional distributions such that the Bayes error $E[P_e] \geq \epsilon \left(1 - \max_{i \in \mathcal{Y}} p(i)\right)$.

Proof: This lemma works for any vector space $\mathcal{X}$. The moment constraints hold if the $i$th class-conditional distribution is taken to be $p(x|i) = \epsilon \delta_0(x) + (1 - \epsilon) \delta_{z_i}(x)$ where $z_i = \frac{\gamma_{1,i}}{1 - \epsilon}$. This constructive solution exists for any $\epsilon \in (0, 1)$ and yields a Bayes error of at least $\epsilon \left(1 - \max_{i \in \mathcal{Y}} p(i)\right)$. To see this, substitute the $G$ measures $\{\epsilon \delta_0 + (1 - \epsilon) \delta_{z_i}\}$ into (II.3) to produce

$$\begin{aligned} E[P_e] &= 1 - \sum_{j=1}^{\infty} \max_{i \in \mathcal{Y}} \left( \epsilon \delta_0(x_j) + (1 - \epsilon) \delta_{z_i}(x_j) \right) p(i) \\ &= 1 - \left( \epsilon \max_{i \in \mathcal{Y}} p(i) + \sum_{j=1}^{\infty} \max_{i \in \mathcal{Y}} p(i) (1 - \epsilon) \delta_{z_i}(x_j) \right) \\ &\geq 1 - \left( \epsilon \max_{i \in \mathcal{Y}} p(i) + (1 - \epsilon) \sum_{i=1}^{G} p(i) \right) \\ &= \epsilon \left( 1 - \max_{i \in \mathcal{Y}} p(i) \right). \end{aligned}$$

For an absolutely continuous example, consider $\mathcal{X} = \mathbb{R}$.
The uniform densities

$$p_l(x|i) = \frac{1}{2 l p(i)} I_{[\gamma_{1,i} - l p(i), \; \gamma_{1,i} + l p(i)]}(x)$$

with $i = 1, 2, \ldots, G$ (where $I_E$ is the indicator function of the set $E$) provide class-conditional distributions such that as $l \to \infty$ the Bayes error goes to $1 - \max_{i \in \mathcal{Y}} p(i)$. To see this, let $i^* \in \arg\max_{i \in \mathcal{Y}} p(i)$ and consider the difference

$$d_i = \gamma_{1,i^*} + l p(i^*) - \left( \gamma_{1,i} + l p(i) \right) = (\gamma_{1,i^*} - \gamma_{1,i}) + l \left( p(i^*) - p(i) \right).$$

If $p(i^*) - p(i) > 0$ then $d_i \to \infty$ as $l \to \infty$, therefore there is an $l' > 0$ such that if $l > l'$ then $d_i > 0$ and hence $\gamma_{1,i^*} + l p(i^*) > \gamma_{1,i} + l p(i)$. A similar derivation shows that there is an $l'' > 0$ such that if $l > l''$ then $\gamma_{1,i^*} - l p(i^*) < \gamma_{1,i} - l p(i)$. In other words, if $p(i^*) - p(i) > 0$ then $p(i^*) p(x|i^*)$ eventually dominates $p(i) p(x|i)$, since all the functions $p(j) p(x|j)$, $j = 1, \ldots, G$, have the same amplitude $\frac{1}{2l}$. If $p(i^*) - p(i) = 0$ then the integral of the part of the function $p(i) p(x|i)$ that is not dominated by $p(i^*) p(x|i^*)$ is $\frac{|\gamma_{1,i^*} - \gamma_{1,i}|}{2l} \to 0$ as $l \to \infty$. Finally, the integral of the dominant function $p(i^*) p(x|i^*)$ is $\frac{1}{2l} \, 2 l p(i^*) = p(i^*)$ and therefore the Bayes error approaches $1 - \max_{i \in \mathcal{Y}} p(i)$ as $l \to \infty$.

Theorem III.1. Suppose that $\mathcal{X} = \mathbb{R}$ and that there exist $G$ class-conditional measures with moments $\{\gamma_{1,i}, \gamma_{2,i}\}$, $i \in \mathcal{Y}$. Given only this set of moments $\{\gamma_{1,i}, \gamma_{2,i}\}$, $i \in \mathcal{Y}$, a lower bound on the supremum Bayes error is

$$\begin{aligned} \sup E[P_e] &\geq \sup_{\Delta \in \mathbb{R}} \left[ \sum_{i=1}^{G} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right) - \max_{i \in \mathcal{Y}} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right) \right] \\ &\geq (G - 1) \sup_{\Delta \in \mathbb{R}} \min_{i \in \mathcal{Y}} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right), \end{aligned}$$

where the supremum on the left-hand side is taken over all combinations of $G$ class-conditional measures satisfying the moment constraints.
If $G = 2$ then

$$\sup E[P_e] \geq \sup_{\Delta \in \mathbb{R}} \min_{i = 1, 2} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right). \tag{III.1}$$

Further, if the class priors are equal, then, in terms of the centered second moment $\sigma_i^2 = \gamma_{2,i} - \gamma_{1,i}^2$, the optimal $\Delta$ value is one of

$$\Delta = \frac{-(\gamma_{1,2} \sigma_1^2 - \gamma_{1,1} \sigma_2^2) \pm \sigma_1 \sigma_2 |\gamma_{1,1} - \gamma_{1,2}|}{\sigma_2^2 - \sigma_1^2} \tag{III.2}$$

if $\sigma_1 \neq \sigma_2$. Otherwise, if $\sigma_1 = \sigma_2$,

$$\Delta = \frac{\gamma_{1,1} + \gamma_{1,2}}{2}, \tag{III.3}$$

and the lower bound simplifies:

$$\sup E[P_e] \geq \frac{2 \sigma_1^2}{4 \sigma_1^2 + (\gamma_{1,1} - \gamma_{1,2})^2}.$$

Proof: Consider some $0 < \epsilon < 1$. If the class prior is uniform then a sufficient condition for the Bayes error to be at least $\epsilon \frac{G-1}{G}$ is if all of the unknown measures share a Dirac measure of at least $\epsilon$. First, we place this Dirac measure at zero and find the maximum $\epsilon$ for which this can be done. Then later in the proof we show that a larger $\epsilon$ (and hence a tighter lower bound on the maximum Bayes error) can be found by placing this shared Dirac measure in a more optimal location, or equivalently, by shifting all the measures.

Suppose a probability measure $\mu$ can be expressed in the form $\epsilon \delta_0 + \tilde{\mu}$ where $\tilde{\mu}$ is some measure such that $\tilde{\mu}(\{0\}) = 0$. If $\mu$ satisfies the original moment constraints then $\tilde{\mu}$ also satisfies them; this follows directly from the moment definition for $n \geq 1$:

$$\int x^n \, d\mu(x) = \epsilon \, 0^n + \int x^n \, d\tilde{\mu}(x) = \int x^n \, d\tilde{\mu}(x).$$

Also $\tilde{\mu}(\mathcal{X}) = 1 - \epsilon$. Thus, we require a measure $\tilde{\mu}$ with a zeroth moment $\gamma_0 = 1 - \epsilon > 0$ and the original first and second moments $\gamma_1, \gamma_2$. Then, as described in Section VII, there are two conditions that we have to check. In order to have a measure with the prescribed moments, the matrix

$$A = A(1) = \begin{pmatrix} 1 - \epsilon & \gamma_1 \\ \gamma_1 & \gamma_2 \end{pmatrix}$$

has to be positive semidefinite, which holds if and only if $\epsilon \leq 1 - \frac{\gamma_1^2}{\gamma_2}$. (Note that the Theorem assumes that there exists a distribution with the given moments, and thus the above implies that $\gamma_2 \geq \gamma_1^2$.)
Moreover, the rank of matrix $A$ and the rank of $\gamma$ (for notation see Section VII) have to be the same. Matrix $A$ can have rank 1 or 2. If $\mathrm{rank}(A) = 1$ then the columns of $A$ are linearly dependent and therefore $\mathrm{rank}(\gamma) = 1$. If $\mathrm{rank}(A) = 2$ then $A$ is invertible and $\mathrm{rank}(\gamma) = 2$. Thus there is a measure $\tilde{\mu}$ with moments $\{1 - \epsilon, \gamma_1, \gamma_2\}$ iff $0 \leq \epsilon \leq 1 - \frac{\gamma_1^2}{\gamma_2}$. If such a $\tilde{\mu}$ exists, then there also exists a discrete measure with moments $\{1 - \epsilon, \gamma_1, \gamma_2\}$ and $0 \leq \epsilon \leq 1 - \frac{\gamma_1^2}{\gamma_2}$ by Curto and Fialkow's Theorem 3.1 and Theorem 3.9 [14].

Suppose we have $G$ such discrete probability measures satisfying the corresponding moment constraints given in the statement of this theorem. Denote the $i$th discrete probability measure by $\nu_i = \epsilon_i \delta_0 + \sum_{j=1}^{\infty} \alpha_{j,i} \delta_{x_j}$, where $0 \leq \epsilon_i \leq 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}}$ and $x_j \neq 0$ for all $j$, and $j$ indexes the set of all non-zero atoms in the $G$ discrete measures $\{\nu_i\}$. Then the supremum Bayes error is bounded below by the Bayes error for this set of discrete measures:

$$\begin{aligned} \sup E[P_e] &\geq 1 - \max_{i \in \mathcal{Y}} \{\epsilon_i p(i)\} - \sum_{j=1}^{\infty} \max_{i \in \mathcal{Y}} \alpha_{j,i} \, p(i) && \text{(III.4)} \\ &\geq 1 - \max_{i \in \mathcal{Y}} \{\epsilon_i p(i)\} - \sum_{j=1}^{\infty} \sum_{i=1}^{G} \alpha_{j,i} \, p(i) && \text{(III.5)} \\ &= 1 - \max_{i \in \mathcal{Y}} \{\epsilon_i p(i)\} - \sum_{i=1}^{G} p(i) \sum_{j=1}^{\infty} \alpha_{j,i} && \text{(III.6)} \\ &= 1 - \max_{i \in \mathcal{Y}} \{\epsilon_i p(i)\} - \sum_{i=1}^{G} p(i) (1 - \epsilon_i) = \sum_{i=1}^{G} p(i) \epsilon_i - \max_{i \in \mathcal{Y}} \{\epsilon_i p(i)\}. && \text{(III.7)} \end{aligned}$$

This is true for any collection of $\epsilon_i$ with $\epsilon_i \leq 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}}$. This means that $\sup E[P_e]$ is an upper bound for (III.7) for these admissible $\epsilon_i$, and we can find a tighter inequality by finding the supremum of (III.7) over the set of admissible $\epsilon_i$. The domain of the function (III.7) is the Cartesian product $\prod_{i=1}^{G} \left[ 0, 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} \right]$. It is a non-empty compact set and (III.7) is continuous, so we can expect to find a maximum. The maximum is unique, and to find it let $(\epsilon_1, \ldots, \epsilon_G)$ be any element in the domain and let $i^* \in \arg\max_i \left\{ p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} \right) \right\}$.
Since $p(i) \geq 0$, we have

$$\begin{aligned} \sum_{i=1}^{G} p(i) \epsilon_i - \max_{i \in \mathcal{Y}} \{p(i) \epsilon_i\} &\leq \sum_{i \neq i^*} p(i) \epsilon_i \leq \sum_{i \neq i^*} p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} \right) \\ &= \sum_{i=1}^{G} p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} \right) - \max_{i \in \mathcal{Y}} \left\{ p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} \right) \right\}. \end{aligned}$$

Therefore

$$\begin{aligned} \sup E[P_e] &\geq \sum_{i=1}^{G} p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} \right) - \max_{i \in \mathcal{Y}} \left\{ p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} \right) \right\} \\ &\geq (G - 1) \min_{i \in \mathcal{Y}} \left\{ p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} \right) \right\}, \end{aligned} \tag{III.8a}$$

where the supremum on the left-hand side is taken over all combinations of class-conditional measures that satisfy the given moment constraints.

The next step follows from the fact that the Lebesgue measure and the counting measure are shift-invariant measures and the Bayes error is computed by integrating some functions against those measures. Suppose we had $G$ class distributions, and we shift each of them by $\Delta$. The Bayes error would not change. However, our lower bound given in (III.8a) depends on the actual given means $\{\gamma_{1,i}\}$, and in some cases we can produce a better lower bound by shifting the distributions before applying the above lower bounding strategy. The shifting approach we present next is equivalent to placing the shared $\epsilon$ measure someplace other than at the origin.

Shifting a distribution by $\Delta$ does change all of the moments (because they are not centered moments). Specifically, if $\mu$ is a probability measure with finite moments $\gamma_0 = 1, \gamma_1, \ldots, \gamma_n$, and $\mu_\Delta$ is the measure defined by $\mu_\Delta(D) = \mu(D + \Delta)$ for all $\mu$-measurable sets $D$, then the $n$-th non-centered moment of the shifted measure $\mu_\Delta$ is

$$\tilde{\gamma}_n = \int x^n \, d\mu_\Delta(x) = \int (x - \Delta)^n \, d\mu(x) = \sum_{k=0}^{n} (-1)^{n-k} \binom{n}{k} \Delta^{n-k} \gamma_k,$$

where the second equality can easily be proven for any $\sigma$-finite measure using the definition of the integral. This same formula shows that shifting back the measure will transform back the moments. For the two-moment case, the shifted measure's moments are related to the original moments by:

$$\tilde{\gamma}_1 = \gamma_1 - \Delta, \qquad \tilde{\gamma}_2 = \gamma_2 + \Delta^2 - 2 \Delta \gamma_1.$$
Then a tighter lower bound can be produced by choosing the shift $\Delta$ that maximizes the shift-dependent lower bound given in (III.8a):

$$\begin{aligned} \sup E[P_e] &\geq \sup_{\Delta \in \mathbb{R}} \left[ \sum_{i=1}^{G} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right) - \max_{i \in \mathcal{Y}} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right) \right] \\ &\geq (G - 1) \sup_{\Delta \in \mathbb{R}} \min_{i \in \mathcal{Y}} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right). \end{aligned}$$

If $G = 2$ then this lower bound is

$$\sup_{\Delta \in \mathbb{R}} \left[ \sum_{i=1}^{2} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right) - \max_{i \in \mathcal{Y}} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right) \right] = \sup_{\Delta \in \mathbb{R}} \min_{i = 1, 2} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right).$$

We can say more in the case of equal class priors, that is, if $p(1) = p(2) = 1/2$. The functions

$$f_i(\Delta) = 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}}$$

are maximized at $\Delta = \gamma_{1,i}$, where the maximum value is 1, and the derivative of the function $f_i$ is strictly positive for $\Delta < \gamma_{1,i}$ and strictly negative for $\Delta > \gamma_{1,i}$, $i = 1, 2$. This means that the potential maximum occurs at the point where the two functions are equal. This results in a quadratic equation if $\sigma_2^2 \neq \sigma_1^2$ with solutions (III.2), and otherwise a linear one with solution (III.3).

If $\gamma_{1,1} = \gamma_{1,2}$ then the function $f_i$ with smaller $\gamma_{2,i}$ will provide us with the lower bound, which is $1/2$, as expected. If $\gamma_{1,1} \neq \gamma_{1,2}$ then, since $f_i(\gamma_{1,i}) = 1$, the maximum occurs at a $\Delta$ value which is between the two $\gamma_{1,i}$. To see this, let $J$ be the interval defined by the two $\gamma_{1,i}$. As a consequence of the strict nature of the derivatives, for any $\Delta$ value outside of the interval $J$ the function

$$\min_{i \in \mathcal{Y}} \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right)$$

is less than on $J$. But on $J$ the function $f_1(\Delta) - f_2(\Delta)$ is continuous and, thanks to the fact that $f_i(\gamma_{1,i}) = 1$ and the behavior of the derivatives, it has different sign at the two endpoints of $J$. This means that there is a $\Delta \in J$ such that $f_1(\Delta) - f_2(\Delta) = 0$.
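The two-class, equal-prior case of Theorem III.1 can be evaluated directly. The following is a minimal sketch, not the authors' code: it takes class means and (strictly positive) variances, forms the candidate shifts from (III.2) or (III.3), and keeps the candidate that gives the larger value of (III.1). The function names and the example moments are assumptions made for illustration.

```python
import math


def f(delta, mean, second_moment):
    """f_i(Delta) = 1 - (g1 - Delta)^2 / (g2 + Delta^2 - 2*Delta*g1)."""
    return 1.0 - (mean - delta) ** 2 / (second_moment + delta ** 2 - 2.0 * delta * mean)


def lower_bound_two_classes(mean1, var1, mean2, var2):
    """Lower bound (III.1) for G = 2 with p(1) = p(2) = 1/2.

    Assumes var1 > 0 and var2 > 0. Returns (bound, Delta).
    """
    g2_1 = var1 + mean1 ** 2  # non-centered second moments gamma_{2,i}
    g2_2 = var2 + mean2 ** 2

    def objective(delta):
        return 0.5 * min(f(delta, mean1, g2_1), f(delta, mean2, g2_2))

    if math.isclose(var1, var2):
        candidates = [0.5 * (mean1 + mean2)]            # (III.3)
    else:
        s1, s2 = math.sqrt(var1), math.sqrt(var2)
        base = -(mean2 * var1 - mean1 * var2)
        spread = s1 * s2 * abs(mean1 - mean2)
        candidates = [(base + spread) / (var2 - var1),  # (III.2), "+" root
                      (base - spread) / (var2 - var1)]  # (III.2), "-" root
    best = max(candidates, key=objective)
    return objective(best), best


# Illustrative moments (assumed values): means 0 and 3, variances 1 and 1 or 5.
bound_equal, delta_equal = lower_bound_two_classes(0.0, 1.0, 3.0, 1.0)
bound_unequal, delta_unequal = lower_bound_two_classes(0.0, 1.0, 3.0, 5.0)
print(bound_equal, delta_equal)      # equal variances: bound is 2/13 at Delta = 1.5
print(bound_unequal, delta_unequal)
```

Selecting the better of the two roots, rather than testing which one lies between the means, is a design choice of this sketch; by the argument above both criteria pick the same $\Delta$.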
This theorem applies only to one-dimensional distributions. The approach of constraining the distributions to have measure $\epsilon$ at a common location can be extended to higher dimensions, but actually determining whether the moment constraints can still be satisfied becomes significantly more involved; see [14] for a sketch of the truncated moment solutions for higher dimensions.

An argument similar to the one given in the last two paragraphs of the previous proof can be used to show that if $\gamma_{2,i} - \gamma_{1,i}^2$ are all equal for all $i \in \mathcal{Y}$ and any finite $G$, and if the class priors are equal, then the optimal $\Delta$ is $\frac{\gamma_{1,\min} + \gamma_{1,\max}}{2}$, where $\gamma_{1,\min}$ and $\gamma_{1,\max}$ are the smallest and the largest values in the set $\{\gamma_{1,i}\}_{i \in \mathcal{Y}}$, respectively. To see this, we start by rewriting the function $f_i$:

$$f_i(\Delta) = 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} = \frac{\sigma_i^2}{\sigma_i^2 + (\Delta - \gamma_{1,i})^2}.$$

This shows that if the condition mentioned above holds then the functions $f_i$ are shifted versions of each other. Let $f_{\min}$ and $f_{\max}$ be the functions corresponding to $\gamma_{1,\min}$ and $\gamma_{1,\max}$, respectively, and let $\Delta_0$ be the point where $f_{\min}$ and $f_{\max}$ intersect. Because of the strict nature of the derivatives of $f_i$ and because the functions are shifted versions of each other, for any $\Delta \geq \Delta_0$, $f_{\min}$ is smaller than any other $f_i$. Because of symmetry, it is true that for any $\Delta \leq \Delta_0$, $f_{\max}$ is smaller than any other $f_i$. Again, by symmetry we have that $\Delta_0 = \frac{\gamma_{1,\min} + \gamma_{1,\max}}{2}$ and therefore this is the optimal $\Delta$.

Corollary III.1. Suppose that $\mathcal{X} = \mathbb{R}$ and that the first, the second and the third moments are given for $G$ class-conditional measures, i.e. for the $i$th class-conditional measure we are given $\{\gamma_{1,i}, \gamma_{2,i}, \gamma_{3,i}\}$.
Then the Bayes error has lower bound

$$\begin{aligned} \sup E[P_e] &\geq \sup_{\delta > 0} \sup_{\Delta \in \mathbb{R}} \left[ \sum_{i=1}^{G} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} - \delta \right) - \max_{i \in \mathcal{Y}} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} - \delta \right) \right] \\ &\geq (G - 1) \sup_{\Delta \in \mathbb{R}} \min_{i \in \mathcal{Y}} p(i) \left( 1 - \frac{(\gamma_{1,i} - \Delta)^2}{\gamma_{2,i} + \Delta^2 - 2 \Delta \gamma_{1,i}} \right). \end{aligned}$$

Proof: In this case we have a list of four numbers $\{1 - \epsilon, \gamma_1, \gamma_2, \gamma_3\}$ and again

$$A = A(1) = \begin{pmatrix} 1 - \epsilon & \gamma_1 \\ \gamma_1 & \gamma_2 \end{pmatrix}.$$

If $\gamma_0 = 1 - \epsilon > 0$ then $A$ is positive definite if $\epsilon \leq 1 - \frac{\gamma_1^2}{\gamma_2} - \delta < 1 - \frac{\gamma_1^2}{\gamma_2}$. In this case $v_2 = (\gamma_2, \gamma_3)^T$ and it is in the range of $A$ since $A$ is invertible. The statements in Section VII imply that there is a measure with moments $\{1 - \epsilon, \gamma_1, \gamma_2, \gamma_3\}$ and consequently that

$$\begin{aligned} \sup E[P_e] &\geq \sup_{\delta > 0} \left[ \sum_{i=1}^{G} p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} - \delta \right) - \max_{i \in \mathcal{Y}} \left\{ p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} - \delta \right) \right\} \right] \\ &\geq (G - 1) \min_{i \in \mathcal{Y}} \left\{ p(i) \left( 1 - \frac{\gamma_{1,i}^2}{\gamma_{2,i}} \right) \right\}. \end{aligned}$$

The rest of the proof follows analogously to the proof of Theorem III.1.

The proof of Corollary III.1 relies on the fact that for $\delta > 0$ the matrix $A(1)$ featured in the proof is invertible, so one of the conditions for the existence of a measure with the given moments is automatically satisfied (see Appendix). If $\delta = 0$ then $A(1)$ is only positive semidefinite and it is not obvious that the vector $v_2$ is in the range of $A(1)$.

The following lemma is stated for completeness.

Lemma III.2. Suppose that $\mathcal{X} = \mathbb{R}$ and that the first $n$ moments are given for $G$ equally likely class-conditional measures, i.e. for the $i$th class-conditional measure we are given $\{\gamma_{1,i}, \gamma_{2,i}, \ldots, \gamma_{n,i}\}$. Then if there exist measures of the form $\epsilon_i \delta_0 + \nu_i$, where $\nu_i$ satisfies the moment conditions given above, the corresponding Bayes error can be bounded from below:

$$\sup E[P_e] \geq 1 - \frac{1}{G} \left[ \max_{i \in \mathcal{Y}} \{\epsilon_i\} + \sum_{i=1}^{G} (1 - \epsilon_i) \right] = \frac{1}{G} \left[ \sum_{i=1}^{G} \epsilon_i - \max_{i \in \mathcal{Y}} \{\epsilon_i\} \right],$$

where the supremum on the left is taken over all the measures satisfying the moment constraints noted above.
Proof: The first part of the proof of Theorem III.1 is applicable in this case.

As in the case for two moments, the lower bound can be further tightened by optimizing over all possible shifts of the overlap Dirac measure.

IV. UPPER BOUND FOR WORST-CASE BAYES ERROR

Because the Bayes error is the smallest error over all decision boundaries, one approach to constructing an upper bound on the maximum Bayes error is to restrict the set of considered decision boundaries to a set for which the worst-case error is easier to analyze. For example, Lanckriet et al. [10] take as given the first and second moments of each class-conditional distribution, and attempt to find the linear decision boundary classifier that minimizes the worst-case classification error rate with respect to any choice of class-conditional distributions that satisfy the given moment constraints. Here we show that this approach can be extended to produce an upper bound on the supremum Bayes error for the $G = 2$ case.

Let $\mathcal{X}$ be any feature space. Suppose one has two fixed class-conditional measures $\nu_1, \nu_2$ on $\mathcal{X}$. As in Lanckriet et al. [10], consider the set of linear decision boundaries. Any linear decision boundary splits the domain into two half-spaces $S_1$ and $S_2$. We work with linear decision boundaries because these are the only kind of decision boundaries that split the domain into two convex subsets. The error produced by a linear decision boundary corresponding to the split $(S_1, S_2)$ is

$$p(1) \nu_1(S_2) + p(2) \nu_2(S_1) \geq E[P_e](\nu_1, \nu_2). \tag{IV.1}$$

That is, the error from any linear decision boundary upper bounds the Bayes error $E[P_e]$ for two given measures. To obtain a tighter upper bound on the Bayes error, minimize the left-hand side over all linear decision boundaries:

$$\inf_{S_1, S_2} \left( p(1) \nu_1(S_2) + p(2) \nu_2(S_1) \right) \geq E[P_e](\nu_1, \nu_2). \tag{IV.2}$$

Now suppose $\nu_1$ and $\nu_2$ are unknown, but their first moments (means) and second centered moments $(\mu_1, \Sigma_1)$ and $(\mu_2, \Sigma_2)$ are given. Then we note that the supremum over all measures $\nu_1$ and $\nu_2$ with those moments of the smallest linear decision boundary error forms an upper bound on the supremum Bayes error, where the supremum is taken with respect to the feasible measures $\nu_1, \nu_2$:

$$\begin{aligned} \sup E[P_e] &\leq \sup_{\nu_1 | \mu_1, \Sigma_1} \; \sup_{\nu_2 | \mu_2, \Sigma_2} \; \inf_{S_1, S_2} \left( p(1) \nu_1(S_2) + p(2) \nu_2(S_1) \right) && \text{(IV.3)} \\ &\leq \inf_{S_1, S_2} \left( p(1) \sup_{\nu_1 | \mu_1, \Sigma_1} \nu_1(S_2) + p(2) \sup_{\nu_2 | \mu_2, \Sigma_2} \nu_2(S_1) \right). && \text{(IV.4)} \end{aligned}$$

This upper bound can be simplified using the following result (see footnote 1) by Bertsimas and Popescu [15] (which follows from a result by Marshall and Olkin [16]):

$$\sup_{\nu | \mu, \Sigma} \nu(S) = \frac{1}{1 + c(S)} \quad \text{where} \quad c(S) = \inf_{x \in S} (x - \mu)^T \Sigma^{-1} (x - \mu), \tag{IV.5}$$

where the sup in (IV.5) is over all probability measures $\nu$ with domain $\mathcal{X}$, mean $\mu$, and centered second moment $\Sigma$; and $S$ is some convex set in the domain of $\nu$. Since $S_1$ and $S_2$ in (IV.4) are half-spaces, they are convex and (IV.5) can be used to quantify the upper bound.

For the rest of this section let $\mathcal{X}$ be one-dimensional. Then the covariance matrices $\Sigma_1$ and $\Sigma_2$ are just scalars that we denote by $\sigma_1^2$ and $\sigma_2^2$, respectively. In one dimension, any decision boundary that results in a half-line split is simply a point $s \in \mathbb{R}$. Without loss of generality with respect to the Bayes error, let $\mu_1 = 0$ and $\mu_1 \leq \mu_2$. Then $c(S_1)$ and $c(S_2)$ in (IV.5) simplify (for details, see Appendix A of [10]), so that (IV.4) becomes

$$\sup E[P_e] \leq \min \left( \inf_s \left( \frac{p(1)}{1 + \frac{s^2}{\sigma_1^2}} + \frac{p(2)}{1 + \frac{(\mu_2 - s)^2}{\sigma_2^2}} \right), \; 1 \right). \tag{IV.6}$$

If $\sigma_1 = \sigma_2$ and $p(1) = p(2) = 1/2$, then the infimum occurs at $s = \mu_2 / 2$ and the upper bound becomes

$$\sup E[P_e] \leq \min \left( \frac{4}{4 + \frac{\mu_2^2}{\sigma_1^2}}, \; \frac{1}{2} \right).$$

For this case, the given upper bound is twice the given lower bound.

V. COMPARISON TO ERROR WITH GAUSSIANS

We illustrate the bounds described in this paper for the common case that the first two moments are known for each class, and the classes are equally likely. We compare with the Bayes error produced under the assumption that the distributions are Gaussians with the given moments. In both cases the first distribution's mean is 0 and the variance is 1, and the second distribution's mean is varied from 0 to 25 as shown on the x-axis. The second distribution's variance is 1 for the comparison shown in the top of Fig. 1. The second distribution's variance is 5 for the comparison shown in the bottom of Fig. 1. For the first case, $\sigma_1 = \sigma_2$, so the infimum in (IV.6) occurs at $s = \mu_2 / 2$ and the upper bound is $\min\left( 4 / (4 + \mu_2^2), \frac{1}{2} \right)$. For the second case with different variances we compute (IV.6) numerically.

Fig. 1 shows that the Bayes error produced by the Gaussian assumption is optimistic compared to the given lower bound for the worst-case (maximum) Bayes error. Further, the difference between the Gaussian Bayes error and the lower bound is much larger in the second case, when the variances of the two distributions differ.

VI. DISCUSSION AND SOME OPEN QUESTIONS

We have provided a lower and upper bound on the worst-case Bayes error, but a number of open questions arise from this work.

Lower bounds for the worst-case Bayes error can be constructed by constraining the distributions. We have shown that constraining the distributions to be Gaussians produces a weak lower bound, and we provided a tighter lower bound by constraining the distributions to overlap in a Dirac measure of $\epsilon$. Given only first moments, our lower bound is tight in that it is arbitrarily close to the worst possible Bayes error.
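The three quantities compared in Section V can be approximated numerically. The sketch below is an illustration under stated assumptions rather than the code behind Fig. 1: it uses equal priors with the first class fixed at mean 0 and variance 1, approximates (III.1) and (IV.6) by grid search over $\Delta \in [0, \mu_2]$ and $s \in [0, \mu_2]$, caps the upper bound at the trivial value $(G-1)/G = 1/2$ from Section II, and obtains the Gaussian Bayes error by integrating the pointwise minimum of the two prior-weighted densities with the trapezoid rule. All function names and grid sizes are hypothetical.

```python
import math


def normal_pdf(x, mean, var):
    return math.exp(-((x - mean) ** 2) / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)


def gaussian_bayes_error(mu2, var2, n=20001):
    """Equal-prior Bayes error of N(0, 1) vs N(mu2, var2) via the trapezoid rule."""
    width = 10.0 * math.sqrt(max(1.0, var2))
    lo, hi = -width, mu2 + width
    h = (hi - lo) / (n - 1)
    total = 0.0
    for k in range(n):
        x = lo + k * h
        weight = 0.5 if k in (0, n - 1) else 1.0
        total += weight * 0.5 * min(normal_pdf(x, 0.0, 1.0), normal_pdf(x, mu2, var2))
    return total * h


def lower_bound(mu2, var2, n=2001):
    """Grid approximation of (III.1) with equal priors, Delta in [0, mu2]."""
    best = 0.0
    for k in range(n):
        d = mu2 * k / (n - 1)
        f1 = 1.0 / (1.0 + d ** 2)                # sigma_1^2 = 1, gamma_{1,1} = 0
        f2 = var2 / (var2 + (d - mu2) ** 2)
        best = max(best, 0.5 * min(f1, f2))
    return best


def upper_bound(mu2, var2, n=2001):
    """Grid approximation of (IV.6) with equal priors, s in [0, mu2].

    Capped at 1/2, the trivial bound (G - 1) / G for G = 2 (an assumption here).
    """
    best = 0.5
    for k in range(n):
        s = mu2 * k / (n - 1)
        value = 0.5 / (1.0 + s ** 2) + 0.5 / (1.0 + (mu2 - s) ** 2 / var2)
        best = min(best, value)
    return best


for var2 in (1.0, 5.0):
    for mu2 in (0.0, 4.0, 10.0, 25.0):
        print(var2, mu2, gaussian_bayes_error(mu2, var2),
              lower_bound(mu2, var2), upper_bound(mu2, var2))
```

Because a grid search can only under-estimate a supremum and over-estimate an infimum, the values returned by `lower_bound` and `upper_bound` remain valid, if slightly loose, bounds on the quantities they approximate.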
Given two moments, we have shown that the common QDA Gaussian assumption for class-conditional distributions is much more optimistic than our lower bound and increasingly optimistic for increased difference between the variances. However, because in our constructions we do not control all the possible overlap between the class-conditional distributions, we believe it should be possible to construct tighter lower bounds.

On the other hand, upper bounds on the worst-case Bayes error can be constructed by constraining the considered decision boundaries. Here, we considered an upper bound resulting from restricting the decision boundary to be linear. For the two moment case, we have shown that work by Lanckriet et al. leads almost directly to an upper bound. However, the inequality we had to introduce in (IV.4) when we switched the inf and sup may make this upper bound loose. It remains an open question if there are conditions under which the upper bound is tight.

Footnote 1: Some readers may recognize the result (IV.5) as a strengthened and generalized version of the Chebyshev-Cantelli inequality.

[Figure 1: two panels, top $\sigma_2^2 = 1$ and bottom $\sigma_2^2 = 5$; x-axis "Difference Between Means" (0 to 25), y-axis "Error" (0 to 0.5); curves: Upper Bound on Max Bayes Error, Lower Bound on Max Bayes Error, Bayes Error with Gaussians.]

Fig. 1. Comparison of the given lower bound for the worst-case Bayes error with the Bayes error produced by Gaussian class-conditional distributions.

Our result that the popular Gaussian assumption is generally not very robust in terms of worst-case Bayes error prompts us to question whether there are other distributions that are mathematically or computationally convenient to use in generative classifiers that would have a Bayes error closer to the given lower bound.

In practice, a moment constraint is often created by estimating the moment from samples drawn iid from the distribution.
In that case, the moment constraint need not be treated as a hard constraint as we have done here. Rather, the observed samples can imply a probability distribution over the moments, which in turn could imply a distribution over corresponding bounds on the Bayes error. A similar open question is a sensitivity analysis of how changes in the moments would affect the bounds.

Lastly, consider the opposite problem: given constraints on the first $n$ moments for each of the class-conditional distributions, how small could the Bayes error be? It is tempting to suppose that one could generally find discrete measures that overlapped nowhere, such that the Bayes error was zero. However, the set of measures which satisfy a set of moment constraints may be nowhere dense, and that impedes us from being able to make such a guarantee. Thus, this remains an open question.

APPENDIX

VII. EXISTENCE OF MEASURES WITH CERTAIN MOMENTS

The proof of our theorem reduces to the problem of how to check if a given list of numbers could be the moments of some measure. This problem is called the truncated moment problem; here we review the relevant solutions by Curto and Fialkow [14].

Suppose we are given a list of numbers $\gamma = \{\gamma_0, \gamma_1, \ldots, \gamma_n\}$, with $\gamma_0 > 0$. Can this collection be a list of moments for some positive Borel measure $\nu$ on $\mathbb{R}$ such that

$$\gamma_i = \int s^i \, d\nu(s)? \tag{VII.1}$$

Let $k = \lfloor n/2 \rfloor$, and construct a Hankel matrix $A(k)$ from $\gamma$ where the $i$th row of $A$ is $[\gamma_{i-1} \; \gamma_i \; \ldots \; \gamma_{i-1+k}]$. For example, for $n = 2$ or $n = 3$, $k = 1$:

$$A(1) = \begin{pmatrix} \gamma_0 & \gamma_1 \\ \gamma_1 & \gamma_2 \end{pmatrix}.$$

Let $v_j$ be the transpose of the vector $(\gamma_{i+j})_{i=0}^{k}$. For $0 \leq j \leq k$ this vector is the $(j+1)$th column of $A(k)$. Define $\mathrm{rank}(\gamma) = k + 1$ if $A(k)$ is invertible, and otherwise $\mathrm{rank}(\gamma)$ is the smallest $r$ such that $v_r$ is a linear combination of $\{v_0, \ldots, v_{r-1}\}$.
Then whether there exists a $\nu$ that satisfies (VII.1) depends on $n$ and $k$:

1) If $n = 2k + 1$, then there exists such a solution $\nu$ if $A(k)$ is positive semidefinite and $v_{k+1}$ is in the range of $A(k)$.
2) If $n = 2k$, then there exists such a solution $\nu$ if $A(k)$ is positive semidefinite and $\operatorname{rank}(\gamma) = \operatorname{rank}(A(k))$.

Also, if there exists a $\nu$ that satisfies (VII.1), then there definitely exists a solution with atomic measure.

REFERENCES

[1] T. Hastie, R. Tibshirani, and J. Friedman, The Elements of Statistical Learning. New York: Springer-Verlag, 2001.
[2] S. Srivastava, M. R. Gupta, and B. A. Frigyik, "Bayesian quadratic discriminant analysis," J. Mach. Learn. Res., vol. 8, pp. 1277–1305, 2007.
[3] T. Cover and J. Thomas, Elements of Information Theory. New York: John Wiley and Sons, 1991.
[4] D. C. Dowson and A. Wragg, "Maximum entropy distributions having prescribed first and second moments," IEEE Trans. on Information Theory, vol. 19, no. 5, pp. 689–693, 1973.
[5] V. Poor, "Minimum distortion functional for one-dimensional quantisation," Electronics Letters, vol. 16, no. 1, pp. 23–25, 1980.
[6] P. Ishwar and P. Moulin, "On the existence and characterization of the maxent distribution under general moment inequality constraints," IEEE Trans. on Information Theory, vol. 51, no. 9, pp. 3322–3333, 2005.
[7] T. T. Georgiou, "Relative entropy and the multivariable multidimensional moment problem," IEEE Trans. on Information Theory, vol. 52, no. 3, pp. 1052–1066, 2006.
[8] M. P. Friedlander and M. R. Gupta, "On minimizing distortion and relative entropy," IEEE Trans. on Information Theory, vol. 52, no. 1, pp. 238–245, 2006.
[9] A. Dukkipati, M. N. Murty, and S. Bhatnagar, "Nonextensive triangle equality and other properties of Tsallis relative entropy minimization," Physica A: Statistical Mechanics and Its Applications, vol. 361, no. 1, pp. 124–138, 2006.
[10] G. R. G. Lanckriet, L. El Ghaoui, C. Bhattacharyya, and M. I. Jordan, "A robust minimax approach to classification," Journal of Machine Learning Research, vol. 3, pp. 555–582, 2002.
[11] I. Csiszar, G. Tusnady, M. Ispany, E. Verdes, G. Michaletzky, and T. Rudas, "Divergence minimization under prior inequality constraints," IEEE Symp. Information Theory, 2001.
[12] I. Csiszar and F. Matus, "On minimization of multivariate entropy functionals," IEEE Symp. Information Theory, 2008.
[13] A. Antos, L. Devroye, and L. Györfi, "Lower bounds for Bayes error estimation," IEEE Trans. Pattern Analysis and Machine Intelligence, vol. 21, no. 7, pp. 643–645, 1999.
[14] R. E. Curto and L. A. Fialkow, "Recursiveness, positivity, and truncated moment problems," Houston Journal of Mathematics, vol. 17, no. 4, pp. 603–635, 1991.
[15] D. Bertsimas and I. Popescu, "Optimal Inequalities in Probability Theory: A Convex Optimization Approach," SIAM Journal on Optimization, vol. 15, no. 3, pp. 780–804, 2005.
[16] A. W. Marshall and I. Olkin, "Multivariate Chebyshev inequalities," The Annals of Mathematical Statistics, vol. 31, no. 4, pp. 1001–1014, 1960.
