Sparse Non Gaussian Component Analysis by Semidefinite Programming

Sparse non-Gaussian component analysis (SNGCA) is an unsupervised method of extracting a linear structure from a high dimensional data based on estimating a low-dimensional non-Gaussian data component. In this paper we discuss a new approach to direc…

Authors: Elmar Diederichs, Anatoli Juditsky, Arkadi Nemirovski

Sparse Non Gaussian Comp onen t Analysis b y Semidefinite Programming Elmar Diederic hs ∗ W eierstrass Institute Mohrenstr. 39, 10117 Berlin, German y diederic@wias-berlin.de Anatoli Juditsky LJK, Univ ersit´ e J. F ourier, BP 53 38041 Grenoble cedex 9, F rance juditsky@imag.fr Ark adi Nemiro vski ISyE, Georgia Institute of T ec hnology A tlanta, Georgia 30332, USA nemirovs@isye.gatech.edu Vladimir Sp ok oin y W eierstrass Institute and Hum b oldt Univ ersity , Mohrenstr. 39, 10117 Berlin, German y spokoiny@wias-berlin.de Octob er 29, 2021 Abstract Sparse non-Gaussian component analysis (SNGCA) is an unsupervised metho d of extracting a linear structure from a high dimensional data based on estimating a low-dimensional non-Gaussian data component. In this pap er w e discuss a new approach to direct estimation of the pro jector on the target space based on semidefinite programming whic h impro ves the method sensitivit y to a broad v ariety of deviations from normalit y . W e also discuss the pro cedures which allows to reco v er the structure when its effectiv e dimen- sion is unknown. Keywor ds: dimension reduction, non-Gaussian components analysis, feature extraction Mathematic al Subje ct Classific ation: 62G07, 62H25, 62H30, 90C48, 90C90 1 In tro duction Numerous statistical applications are confronted to da y with the so-called curse of dimensionality (cf. [13, 31]). Using high-dimensional datasets implies an exp onen tial increase of computational effort for man y statistical routines, while the data thin out in the lo cal neigh b orho od of an y given p oin t and classical statistical metho ds b ecome unreliable. When a random phenomenon is observed in the high dimensional space R d the ”intrinsic dimension” m co vering degrees of freedom asso ciated with same features may b e m uch smaller than d . Then introducing structur al assumptions allo ws to reduce the problem complexit y without sacrificing any statistical information [17, 25]. In this study we ∗ The author is partially supported b y the Lab oratory of Structural Methods of Data Analysis in Predictiv e Modeling, MIPT, through the RF go vernmen t grant, ag. 11.G34.31.0073. F urthermore the author is grateful for funding to the Comp etitiv e Procedure of the Leibniz Asso ciation within the ”Pact for Research and Innov ation” framew ork. 1 consider the case where the phenomenon of interest is (approximately) lo cated in a linear subspace I . When compared to other approac hes which in volv e construction of nonlinear mappings from the original data space onto the ”subspace of in terest”, suc h that isomaps [29], lo cal-linear embedding [13] or Laplacian eigenmaps [4], a linear mapping app ears attractiv e due to its simplicity — it may b e identified with a simple ob ject, the pro jector Π ∗ from R d on to I . T o find the structure of interest a statistician may seek for the non-Gaussian comp onents of the data distribution, while its Gaussian comp onen ts, as usual in the statistical literature ma y b e treated as non-informative noise. Sev eral tec hniques of estimating the “non-Gaussian subspace” ha v e been prop osed recen tly . In particular, NGCA (for Non-Gaussian Comp onent A nalysis ) pro cedure, introduced in [6], and then dev elop ed in to SNGCA (for Sp arse NGCA ) in [10], is based on the decom position the problem of dimension reduction in to t wo tasks: the first one is to extract from the data a set { b β j } of candidate v ectors b β j whic h are ”close” to I . The second is to reco ver an estimation b Π of the pro jector Π ∗ on I from { b β j } . In this pap er we discuss a new metho d of SNGCA based on Semidefinite Relaxation of a nonconv ex minmax problem whic h allo ws for a direct recov ery of Π ∗ . When compared to previous implemen tations of the SNGCA in [6, 7, 10], the new approac h ”shortcuts” the intermediary stages and makes the b est use of av ailable information for estimation of I . F urthermore, it allows to treat in a transparent wa y the case of unknown dimension m of the target space I . The pap er is organized as follows: in Section 2 w e presen t the setup of SNGCA and briefly review some existing techniques. Then in Section 3 we introduce the new approach to recov ery of the non-Gaussian subspace and analyze its accuracy . F urther w e pro vide a sim ulation study in Section 5, where we compare the p erformance of the prop osed algorithm SNGCA to that of some kno wn pro jective metho ds of feature extraction. 2 Sparse Non-Gaussian Comp onen t Analysis 2.1 The setup The Non-Gaussian Comp onent Analysis (NGCA) approach is based on the assumption that a high dimensional distribution tends to b e normal in almost an y randomly selected direction. This in tuitive fact can be justified b y the central limit theorem when the n umber of directions tends to infinit y . It leads to the NGCA-assumption: the data distribution is a sup erp osition of a full dimensional Gaussian distribution and a lo w dimensional non-Gaussian comp onen t. In many practical problems lik e clustering or classification, the Gaussian comp onen t is uninformative and it is treated as noise. The approac h suggests to identify the non-Gaussian component and to use it for the further analysis. The NGCA set-up can be formalized as follows; cf. [6]. Let X 1 , ..., X N b e i.i.d. from a distri- bution P in R d describing the random phenomenon of in terest. W e supp ose that P possesses a densit y ρ w.r.t. the Leb esgue measure on R d , whic h can b e decomposed as follo ws: ρ ( x ) = φ µ, Σ ( x ) q ( T x ) . (1) Here φ µ, Σ denotes the density of the m ultiv ariate normal distribution N ( µ, Σ) with parameters µ ∈ R d (exp ectation) and Σ ∈ R d × d p ositiv e definite (cov ariance matrix). The function q : R m → R with m ≤ d is p ositiv e and b ounded. T ∈ R m × d is an unknown linear mapping. W e refer to I = range T as tar get or non-Gaussian subsp ac e . Note that though T is not uniquely defined, I 2 is well defined, same as the Euclidean pro jector Π ∗ on I .In what follows, unless it is explicitly sp ecified otherwise, we assume that the effe ctive dimension m of I is known a priori . F or the sak e of simplicit y we assume that the exp ectation of X v anishes: E [ X ] = 0. The mo del (1) allo ws for the following in terpretation (cf. Section 2 of [6]): suppose that the observ ation X ∈ R d can b e decomp osed into X = Z + ξ , where Z is an “informative lo w-dimensional signal” suc h that Z ∈ I , I being an m -dimensional subspace of R d , and ξ is indep enden t and Gaussian. One can easily sho w (see, e.g., Lemma 1 of [6]) that in this case the density of X can b e represen ted as (1). 2.2 Basics of SNGCA estimation pro cedure The estimation of I relies up on the follo wing result, pro ved in [6]: supp ose that the function q is smo oth, then for an y smo oth function ψ : R d → R the assumptions of (1) and E [ X ] = 0 ensure that for β ( ψ ) def = E [ ∇ ψ ( X )] = Z ∇ ψ ( x ) ρ ( x ) dx, (2) there is a vector β ∈ I such that | β ( ψ ) − β | 2 ≤ | Σ − 1 E [ X ψ ( X )] | 2 where ∇ ψ denotes the gradien t of ψ and | · | p is the standard ` p -norm on R d . In particular, if ψ satisfies E [ X ψ ( X )] = 0 , then β ( ψ ) ∈ I . Consequently | ( I − Π ∗ ) β ( ψ ) | 2 ≤ Σ − 1 Z xψ ( x ) ρ ( x ) dx 2 , (3) where I is the d -dimensional iden tity matrix and Π ∗ is the Euclidean pro jector on I . The abov e result suggests the follo wing t wo-stage estimation pro cedure: first compute a set of estimates { b β ` } of elemen ts { β j } of I , then recov er an estimation of I from { b β ` } . This heuristics has b een first used to estimate I in [6]. T o be more precise, the construction implemen ted in [6] can b e summarized as follo ws: let for a family { h ` } , ` = 1 , ..., L of smo oth b ounded (test) functions on R d γ ` def = E [ X h ` ( X )] , η ` def = E [ ∇ h ` ( X )] , (4) and let b γ ` def = N − 1 N X i =1 X i h ` ( X i ) , b η ` def = N − 1 N X i =1 ∇ h ` ( X i ) (5) b e their ”empirical counterparts”. The set of ”approximating vectors” { b β ` } used in [6] is as follows: b β ` = b η ` − b Σ − 1 b γ ` , ` = 1 , ..., L , where b Σ is an estimate of the cov ariance matrix Σ. The pro jector estimation at the second stage is b Π = P m j =1 e j e T j , where e j , j = 1 , ..., m , are m principal eigenv ectors of the matrix P L ` =1 b β ` b β T ` . A numerical study , pro vided in [6], has sho wn that the abov e pro cedure can b e used successfully to recov er I . On the other hand, such implementation of the t wo-stage 3 pro cedure p ossesses t wo important dra wbacks: it relies up on the estimation of the cov ariance matrix Σ of the Gaussian comp onen t, whic h can b e hard even for moderate dimensions d . P o or estimation of Σ then will result in badly estimated v ectors b β ` , and as a result, p oorly estimated I . F urther, using the eigen v alue decomp osition of the matrix P L ` =1 b β ` b β T ` en tails that the v ariance of the estima- tion b Π of the pro jector Π ∗ on I is prop ortional to the num b er L of test-functions. As a result, the estimation pro cedure is restricted to utilizing only relatively small families { h ` } , and is sensitive to the initial selection of ”informative” test-functions. T o circumv ent the ab o ve limitations of the approac h of [6] a different estimation pro cedure has been prop osed in [10]. In that pro cedure the estimates b β of v ectors from the target space are obtained by the metho d, whic h was referred to as c onvex pr oje ction . Let c ∈ R L and let β ( c ) = L X l =1 c ` η ` γ ( c ) = L X l =1 c ` γ ` . Observ e that β ( c ) ∈ I conditioned that γ ( c ) = 0 . Indeed, if ψ ( x ) = P ` c ` h ` ( x ) , then P ` c ` E [ X h ` ( X )] = 0 , and by (3), η ( c ) = X ` c ` E [ ∇ h ` ( X )] ∈ I . Therefore, the task of estimating β ∈ I reduces to that of finding a ”go o d” corresp onding co efficient v ector. In [10] vectors { b c j } are computed as follows: let b η ( c ) = L X l =1 c ` b η ` and b γ ( c ) = L X l =1 c ` b γ ` , ` = 1 , ..., L and let ξ j ∈ R d , j = 1 , ..., J constitute a set of pr ob e unit vectors. Then it holds b c j = arg min c {| ξ j − b η ( c ) | 2 | b γ ( c ) = 0 , | c | 1 ≤ 1 } , (6) and we set b β j = b β ( b c j ) = P ` b c ` j b η ` . Then I is reco vered b y computing m principal axes of the minimal v olume ellipsoid (F ritz-John ellipsoid) containing the estimated p oin ts {± b β j } J j =1 . The reco very of b I through the F ritz-John ellipsoid (instead of eigenv alue decomp osition of the matrix P ` b β j b β T j ) allo ws to b ound the estimation error of I by the maximal error of estimation b β of elemen ts of the target space (cf. Theorem 3 of [10]), while the ` 1 -constrain t on the co efficien ts b c j allo ws to con trol efficien tly the maximal sto chastic error of the estimations b β j (cf. Theorem 1 of [10, 28]). On the other hand, that construction hea vily relies upon the choice of the probe v ectors ξ j . Indeed, in order to reco ver the pro jector on I , the collection of b β j should comprise at least m v ectors with non-v anishing pro jection on the target space. T o cop e with this problem a multi-stage pro cedure has b een used in [10]: giv en a set { ξ j } k =0 of probe v ectors an estimation b I k =0 is computed, whic h is used to dra w new probe v ectors { ξ j } k =1 from the vicinity of b I k =0 ; these v ectors are employ ed to compute a new estimation b I k =1 , and so on. The iterativ e pro cedure impro ves significan tly the accuracy of the reco very of I . Nevertheless, the c hoice of ”informativ e” prob e vectors at the first iteration k = 0 remains a c hallenging task and hitherto is a weak point of the pro cedure. 4 3 Structural Analysis b y Semidefinite Programming In the presen t pap er w e discuss a new choice of vectors β whic h solv es the initialization problem of prob e v ectors for the SNGCA pro cedure in quite a particular wa y . Namely , the estimation pro cedure w e are to presen t b elo w do es not require any prob e vectors at all. 3.1 Informativ e vectors in the target space F urther developmen ts are based on the follo wing simple observ ation. Let η ` and γ ` b e defined as in (4), and let U = [ η 1 , ..., η L ] ∈ R d × L , G = [ γ 1 , ..., γ L ] ∈ R d × L . Using the observ ation in the previous section w e conclude that if c ∈ R L satisfies Gc = P L ` =1 c ` γ ` = 0 then U c = P L ` =1 c ` η ` b elongs to I . In other words, if Π ∗ is the Euclidean pro jector on I , then ( I − Π ∗ ) U c = 0 . Supp ose now that the set { h ` } of test functions is ric h enough in the sense that vectors U c span I when c spans the subspace Gc = 0. Recall that we assume the dimension m of the target space to b e kno wn. Then pro jector Π ∗ on I is ful ly identifie d as the optimal solution to the problem Π ∗ = arg min Π max c | ( I − Π) U c | 2 2 Π is a pro jector on an m -dimensional subspace of R d c ∈ R L , Gc = 0 . (7) In practice v ectors γ ` and η ` are not a v ailable, but w e can supp ose that their “empirical coun terparts” – v ectors b γ ` , b η ` , ` = 1 , ..., L can b e computed, suc h that for a set A of probability at least 1 − ε , | b η ` − η ` | 2 ≤ δ N , | b γ ` − γ ` | 2 ≤ ν N , ` = 1 , ..., L. (8) Indeed, it is well known (cf., e.g., Lemma 1 in [10] or [30]) that if functions h ` ( x ) = f ( x, ω ` ), ` = 1 , ..., L , are used, where f is con tinuously differentiable, ω ` ∈ R d are vectors on the unit sphere and f and ∇ x f are b ounded, then (8) holds with δ N = C 1 max x ∈ R d , | ω | 2 =1 |∇ x f ( x, ω ) | 2 N − 1 / 2 p min { d, ln L } + ln ε − 1 , ν N = C 2 max x ∈ R d , | ω | 2 =1 | xf ( x, ω ) | 2 N − 1 / 2 p min { d, ln L } + ln ε − 1 , (9) where C 1 , C 2 are some absolute constants dep ending on the smo othness prop erties and the second momen ts of the underlying densit y . Then for an y c ∈ R L suc h that | c | 1 ≤ 1 w e can control the error of approximation of P ` c ` γ ` and P ` c ` η ` with their empirical versions. Namely , we ha v e on A : max | c | 1 ≤ 1 X ` c ` ( b η ` − η ` ) 2 ≤ δ N and max | c | 1 ≤ 1 X ` c ` ( b η ` − η ` ) 2 ≤ ν N . 5 Let now b U = [ b η 1 , ..., η L ], b G = [ b γ 1 , ..., b γ L ]. When substituting b U and b G for U and G in to (7) we come to the following minmax problem: min Π max c | ( I − Π) b U c | 2 2 Π is a pro jectoron an m -dimensional subspace of R d c ∈ R L , | c | 1 ≤ 1 , | b Gc | 2 ≤ % . (10) Here w e ha ve substituted the constraint Gc = 0 with the inequalit y constrain t | b Gc | 2 ≤ % for some % > 0 in order to k eep the optimal solution c ∗ to (7) feasible for the mo dified problem (10) (this will b e the case with probability at least 1 − ε if % ≥ ν N ). As we will see in a moment, when c runs the ν N -neigh b orho od of intersection C N of the standard h yp ero ctahedron { c ∈ R L , | c | 1 ≤ 1 } with the subspace b Gc = 0, v ectors b U c span a close vicinity of the target space I . 3.2 Solution b y Semidefinite Relaxation Note that (10) is a hard optimization problem. Namely , the candidate maximizers c i of (10) are the extreme p oin ts of the set C N = { c ∈ R L , | c | 1 ≤ 1 , | b Gc | 2 ≤ ν N } , and there are O ( L d ) of suc h p oin ts. In order to b e efficiently solv able, the problem (10) is to b e ”reduced” to a con vex-conca v e saddle-p oin t problem, which is, to the b est of our knowledge, the only class of minmax problems whic h can b e solved efficien tly (cf. [18]). Th us the next step is to transform the problem in (10) into a conv ex-conca ve minmax problem using the Semidefinite R elaxation (or SDP-relaxation) technique (see e.g., [5, Chapter 4]). W e obtain the relaxed version of (10) in tw o steps. First, let us rewrite the ob jective function (recall that I − Π is also a pro jector, and thus an idemp oten t matrix): | ( I − Π) b U c | 2 2 = c T b U T ( I − Π) 2 b U c = c T b U T ( I − Π) b U c = trace h b U T ( I − Π) b U X i , where the p ositiv e semidefinite matrix X = cc T is the ”new v ariable”. The constrain ts on c can b e easily rewritten for X : 1. the constrain t | c | 1 ≤ 1 is equiv alen t to | X | 1 ≤ 1 (we use the notation | X | 1 = P L i,j =1 | X ij | ); 2. because X is positive semidefinite, the constrain t | b Gc | 2 ≤ % is equiv alen t to in to trace [ b GX b G T ] ≤ % 2 . The only ”bad” constraint on X is the rank constrain t: rank X = 1, and w e simply remo ve it. Now w e are done with the v ariable c and w e arrive at min Π max X trace h b U T ( I − Π) b U X i Π is a pro jector on an m -dimensional subspace of R d X 0 , | X | 1 ≤ 1 , trace [ b GX b G T ] ≤ % 2 . Let us recall that an m -dimensional pro jector Π is exactly a symmetric d × d matrix of rank Π = m and trace Π = m , with the eigenv alues 0 ≤ λ i (Π) ≤ 1 , i = 1 , ..., d . Once again w e remov e the “difficult” rank constraint rank Π = m and finish with min P max X trace h b U T ( I − P ) b U X i 0 P I , trace P = m, X 0 , | X | 1 ≤ 1 , trace [ b GX b G T ] ≤ % 2 (11) 6 (w e write P Q if the matrix Q − P is p ositiv e semidefinite). There is no reason for an optimal solution b P of (11) to b e a pro jector matrix. If an estimation of Π ∗ whic h is itself a pro jector is needed, one can use instead the pro jector b Π on to the subspace spanned by m principal eigenv ectors of b P . Note that (11) is a linear matrix game with bounded con vex domains of its argumen ts - positive semidefinite matrices P ∈ R d × d and X ∈ R L × L . W e are ab out to describ e the accuracy of the estimation b Π of Π ∗ . T o this end we need an iden tifiability assumption on the system { h ` } of test functions as follows: Assumption 1 Supp ose that ther e ar e ve ctors c 1 , ..., c m , m ≤ m ≤ L such that | c k | 1 ≤ 1 and Gc k = 0 , k = 1 , ..., m , and non-ne gative c onstants µ 1 , . . . , µ m such that Π ∗ m X k =1 µ k U c k c T k U T . (12) We denote µ ∗ = µ 1 + . . . + µ m . In other w ords, if Assumption 1 holds, then the true pro jector Π ∗ on I is µ ∗ × con vex com bination of rank-one matrices U cc T U T where c satisfies the constraint Gc = 0 and | c | 1 = 1. Theorem 1 Supp ose that the true dimension m of the subsp ac e I is known and that % ≥ ν N as in (8). L et b P b e an optimal solution to (11) and let b Π b e the pr oje ctor onto the subsp ac e sp anne d by m princip al eigenve ctors of b P . Then with pr ob ability ≥ 1 − ε : (i) for any c such that | c | 1 ≤ 1 and Gc = 0 , | ( I − b Π) U c | 2 ≤ √ m + 1(( % + ν N ) λ − 1 min (Σ) + 2 δ N ); (ii) further, if Assumption 1 holds then trace h ( I − b P )Π ∗ i ≤ µ ∗ (( % + ν N ) λ − 1 min (Σ) + 2 δ N ) 2 , (13) and k b Π − Π ∗ k 2 2 ≤ 2 µ ∗ ( λ − 1 min (Σ)( % + ν N ) + 2 δ N ) 2 τ , τ = ( m + 1) ∧ (1 − µ ∗ ( λ − 1 min (Σ)( % + ν N ) + 2 δ N ) 2 ) − 1 (14) (her e k A k 2 = P i,j A 2 ij 1 / 2 = trace [ A T A ] 1 / 2 is the F r ob enius norm of A ). Note that if w e w ere able to solve the minimax problem in (10), w e could expect its solution, let us call it e Π, to satisfy with high probability | ( I − e Π) U c | 2 ≤ ( % + ν N ) λ − 1 min (Σ) + 2 δ N (cf. the pro of of Lemma 1 in the app endix). If we compare this b ound to that of the statemen t (i) of Theorem 1, we conclude that the loss of the accuracy resulting from the substitution of (10) by 7 its treatable appro ximation (11) is bounded with √ m + 1. In other words, the “price” of the SDP- relaxation in our case is √ m + 1 and do es not dep end on problem dimensions d and L . F urthermore, when Assumption 1 holds true, we are able to provide the b ound on the accuracy of recov ery of pro jector Π ∗ whic h is seemingly as go od as if w e were using instead of b Π the solution e Π of (10). Supp ose no w that the test functions h ` ( x ) = f ( x, ω ` ) are used, with ω l on the unit sphere of R d , that % = ν N is chosen, and that Assumption 1 holds with “not to o large” µ ∗ , e.g., µ ∗ ≤ 1 2 ( % + ν N ) λ − 1 min (Σ) + δ N . When substituting the b ounds of (9) for δ N and ν N in to (14) w e obtain the b ound for the accuracy of the estimation b Π (with probability 1 − ): k b Π − Π ∗ k 2 2 ≤ C ( f ) µ ∗ N − 1 min( d, ln L ) + ln − 1 where C ( f ) dep ends only on f . This b ound claims the ro ot- N consistency in estimation of the non-Gaussian subspace with the log-price for relaxation and estimation error. 3.3 Case of unknown dimension m The problem (11) may b e mo dified to allow the treatmen t of the case when the dimension m of the target space is unknown a priori . Namely , consider for ρ ≥ 0 the following problem min P,t ( t trace P ≤ t, max X trace h b U T ( I − P ) b U X i ≤ ρ 2 , 0 P I , X 0 , | X | 1 ≤ 1 , trace [ b GX b G T ] ≤ % 2 ) (15) The problem (15) is closely related to the ` 1 -reco very estimator of sparse signals (see, e.g., the tutorial [8] and the references therein) and the trace minimization heuristics widely used in the Sparse Principal Comp onent Analysis (SPCA) (cf. [2, 3]). As we will see in an instant, when the parameter ρ of the problem is ”prop erly chosen”, the optimal solution b P of (15) possesses essen tially the same prop erties as that of the problem (11). A result analogous to that in Theorem 1 holds: Theorem 2 L et b P , b X and b t = trace b P b e an optimal solution to (15) (note that (15) is cle arly solvable), b m = c b t b , 1 and let b Π b e the pr oje ctor onto the subsp ac e sp anne d by b m princip al eigenve ctors of b P . Supp ose that % ≥ ν N as in (8) and that ρ ≥ λ − 1 min (Σ)( % + ν N ) + δ N . (16) Then with pr ob ability at le ast 1 − ε : (i) b t ≤ m and | ( I − b Π) U c | 2 ≤ √ m + 1( ρ + 2 δ N ); (ii) furthermor e, if Assumption 1 hold then trace h ( I − b P )Π ∗ i ≤ µ ∗ ( ρ + δ N ) 2 , and k b Π − Π ∗ k 2 2 ≤ 2 µ ∗ ( ρ + δ N ) 2 ( m + 1) ∧ (1 − µ ∗ ( ρ + δ N ) 2 ) − 1 (17) (her e k A k 2 = P i,j A 2 ij 1 / 2 is the F r ob enius norm of A ). 1 Here c a b is the smallest integer ≥ a . 8 The pro of of the theorems is p ostp oned until the app endix. The estimation pro cedure based on solving (15) allo ws to infer the target subspace I without a priori knowledge of its dimension m . When the constrain t parameter ρ is close to the right-hand side of (16), the accuracy of the estimation will b e close to that, obtained in the situation when dimension m is kno wn. Ho wev er, the accuracy of the estimation hea vily dep ends on the precision of the a v ailable (lo wer) b ound for λ min (Σ). In the high-dimensional situation this information is hard to acquire, and the necessit y to compute this quantit y may b e considered as a serious drawbac k of the prop osed procedure. 4 Solving the saddle-p oin t problem (11) W e start with the following simple observ ation: by using bisection or Newton search in ρ (note that the ob jective of (15) is obviously con vex in ρ 2 ) we can reduce (15) to a small sequence to feasibility problems, closely related to (11): given t 0 rep ort, if exists, P such that max X ( trace h b U T ( I − P ) b U X i ≤ ρ 2 , 0 P I , trace P ≤ t 0 , X 0 , | X | 1 ≤ 1 , trace [ b GX b G T ] ≤ % 2 ) . In other words, w e can easily solve (15) if for a giv en m w e are able to find an optimal solution to (11). Therefore, in the sequel we concentrate on the optimization technique for solving (11). 4.1 Dual extrap olation algorithm In what follows we discuss the dual extrap olation algorithm of [22] for solving a v ersion of (11) in whic h, with a certain abuse, w e substitute the inequalit y constrain t trace b GX b G T ≤ % 2 with the equalit y constraint trace [ b GX b G T ] = 0. This wa y we come to the problem: min P ∈P max X ∈X trace h b U T ( I − P ) b U X i (18) where X = { X ∈ S L , X 0 , | X | 1 ≤ 1 , trace [ b G T b GX ] = 0 } (here S L stands for the space of L × L symmetric matrices) and P = { P ∈ S d , 0 P I , trace [ P ] ≤ m } . Observ e first that (18) is a matrix game ov er t wo con vex subsets (of the cone) of p ositiv e semidefinite matrices. If we use a large n umber of test functions, sa y L 2 ∼ 10 6 , the size of the v ariable X rules out the p ossibilit y of using the interior-point metho ds. The methodology whic h app ears to b e adequate in this case is that b ehind dual extrap olation metho ds, recently introduced in [19, 20, 21, 22]. The algorithm w e use b elongs to the family of subgradien t descent-ascen t metho ds for solving con vex- conca ve games. Though the rate of conv ergence of suc h metho ds is slo w — their precision is only O (1 /k ) , where k is the iteration count, their iteration is relativ ely cheap, what makes the metho ds 9 of this type appropriate in the case of high-dimensional problems when the high accuracy is not required. W e start with the general dual extrapolation scheme of [22] for linear matrix games. Let E n and E m b e t w o Euclidean spaces of dimension n and m resp ectiv ely , and let A ⊂ E n and B ⊂ E m b e closed and conv ex sets . W e consider the problem min x ∈A max y ∈B h x, Ay i + h a, x i + h b, y i . (19) Let k · k x and k · k y b e some norms on E n and E m resp ectiv ely . W e sa y that d x (resp., d y ) is a distance-generating function of A (resp., of B ) if d x (resp., d y ) is strongly conv ex mo dulus α x (resp., α y ) and differen tiable on A (resp., on B ). 2 Let for z = ( x, y ) d ( z ) = d x ( x ) + d y ( y ) (note that d is differen tiable and strongly conv ex on A × B with resp ect to the norm, defined on A × B according to, e.g. k z k = k x k x + k y k y ). W e define the pr ox-function V of A × B as follows: for z 0 = ( x 0 , y 0 ) and z = ( x, y ) in A × B w e set V ( z 0 , z ) def = d ( z ) − d ( z 0 ) − h∇ d ( z 0 ) , z − z 0 i . (20) Next, for s = ( s x , s y ) w e define the pr ox-tr anform T ( z 0 , s ) of s : T ( z 0 , s ) def = arg min z ∈A×B [ h s, z − z 0 i − V ( z 0 , z )] . (21) Let us denote F ( z ) = ( − A T y − a, Ax + b ) the vector field of descend-ascend directions of (19) at z = ( x, y ) and let z b e the minimizer of d o ver A × B . Given v ectors z k , z + k ∈ A × B and s k ∈ E ∗ at the k -th iteration, w e define the up date z k +1 , z + k +1 and s k +1 according to z k +1 = T ( z , s k ) , z + k +1 = T ( z k +1 , λ k F ( z k +1 )) , s k +1 = s k + λ k F ( z + k +1 ) , where λ k > 0 is the current stepsize. Finally , the curren t approximate solution b z k +1 is defined with b z k +1 = 1 k + 1 k +1 X i =1 z + i . The k ey element of the ab o v e construction is the c hoice of the distanc e-gener aing function d in the definition of the prox-function. It should satisfy tw o requiremen ts: • let D b e the v ariation of V ov er A × B and let α b e the parameter of strong con vexit y of V with resp ect to k · k . The complexity of the algorithm is proportional to D /α , so this ratio should b e as small as p ossible; • one should b e able to compute efficiently the solution to the auxiliary problem (21) whic h is to b e solv ed twice at eac h iteration of the algorithm. 2 Recall that a (sub-)differen tiable on F function f is called strongly conv ex on F with resp ect to the norm k · k of mo dulus α if h f 0 ( x ) − f 0 ( y ) , x − y i ≥ α k x − y k 2 for all x, y ∈ F . 10 Note that the prox-transform preserve the additiv e structure of the distance-generating function. Th us, in order to compute the prox-transform on the feasible domain P × X of (18) we need to compute its “ P and X comp onen ts” – the corresp onding pro x-transforms on P and cX . There are sev eral evident choices of the prox-functions d P and d X of the domains P and X of (18) which satisfy the first requirement ab o ve and allo w to attain the optimal v alue O ( √ m ln d ln L ) of the ratio D /α for the pro x-function V of (18). How ev er, for suc h distance-generating functions there is no kno wn w ay to compute efficien tly the X -comp onen t of the prox-transform T in (21) for the set X . This is why in order to admit an efficient solution the problem (18) is to b e mo dified one more time. 4.2 Mo dified problem W e act as follows: first we eliminate the linear equality constraint whic h, tak en along with X 0, sa ys that X = Q T Z Q with Z 0 and certain Q ; assuming that the d ro ws of b G are linearly indep enden t, w e can c ho ose Q as an appropriate ( L − d ) × L matrix satisfying QQ T = I (the orthogonal basis of the k ernel of b G ). Note that from the constraints on X it follows that trace [ X ] ≤ 1, whence trace [ Q T Z Q ] = trace [ Z QQ T ] = trace [ Z ] ≤ 1 . Th us, although there are additional constraints on Z as w ell, Z belongs to the standar d sp e ctahe dr on Z = { Z ∈ S L − d , Z 0 , trace [ Z ] ≤ 1 } . No w can rewrite our problem equiv alently as follo ws: min P ∈P max Z ∈Z , | Q T Z Q | 1 ≤ 1 trace [ b U T ( I − P ) b U ( Q T Z Q )] . (22) Let, further, W = { W ∈ S L , k W k 2 ≤ 1 } , and Y = { Y ∈ S L , | Y | 1 ≤ 1 } . W e claim that the problem (22) can b e reduced to the saddle p oin t problem min ( P,W ) ∈P ×W max ( Z,Y ) ∈Z ×Y n trace [ b U T ( I − P ) b U Y ] + λ trace [ W ( Q T Z Q − Y )] o | {z } F ( P ,W ; Z,Y ) . (23) pro vided that λ is not to o small. No w, “can b e reduced to” means exactly the following: Prop osition 1 Supp ose that λ > L | b U | 2 2 , wher e | U | 2 is the maximal Euclide an norm of c olumns of U . L et ( b P , c W ; b Z , b Y ) b e a fe asible solution -solution to (23), that is ( b P , c W ; b Z , b Y ) ∈ ( P , W ; Z , Y ) , and F ( b P , c W ) − F ( b Z , b Y ) ≤ wher e F ( P , W ) = max ( Z,Y ) ∈Z ×Y F ( P , W ; Z, Y ) , F ( Z, Y ) = min ( P,W ) ∈P ×W F ( P , W ; Z, Y ) . 11 Then setting e Z = ( b Z , if | Q T Z Q | 1 ≤ 1 , | Q T Z Q | − 1 1 b Z otherwise , the p air ( b P , e Z ) is a fe asible -solution to (22). Sp e cific al ly, we have ( b P , e Z ) ∈ P × Z with | Q T e Z Q | 1 ≤ 1 , and G ( b P ) − G ( e Z ) ≤ , wher e G ( P ) = max Z ∈Z , | Q T Z Q | 1 ≤ 1 trace [ b U T ( I − P ) b U Q T Z Q ]; G ( Z ) = min P ∈P trace [ b U T ( I − P ) b U Q T Z Q ] . The pro of of the prop osition is giv en in the app endix A.3. Note that feasible domains of (23) admit evident distance-generating functions. W e pro vide the detailed computation of the corresp onding prox-transforms in the app endix A.4. 5 Numerical Exp erimen ts In this section we compare the numerical performance of the presented approac h, which w e refer to as SNGCA(SDP) with other statistical metho ds of dimension reduction on the sim ulated data. 5.1 Structural adaptation algorithm W e start with some implemen tation details of the estimation pro cedure. W e use the c hoice of the test functions h ` ( x ) = f ( x, ω ` ) for the SNGCA algorithm as follo ws: f ( x, ω ) = tanh( ω T x ) e − α k x k 2 2 / 2 , where ω ` , l = 1 , ..., L are unit vectors in R d . W e implement here a m ulti-stage v arian t of the SNGCA (cf [10]). At the first stage of the SNGCA(SDP) algorithm we assume that the directions ω ` are drawn randomly from the unit sphere of R d . A t each of the following stages we use the current estimation of the target subspace to “impro ve” the choice of directions ω ` as follows: we dra w a fixed fraction of ω ’s from the estimated subspace and draw randomly ov er the unit square the remaining ω ’s. The simulation results b elo w are presen t for the estimation pro cedure with three s tages. The size of the set of test function is set to L = 10 d , and the target accuracy of solving the problem (11) is set to 1 e − 4. 12 W e can summarize the SNGCA(SDP) algorithm as follows: Algorithm 1: SNGCA (SDP) % Initialization: The data ( X i ) N i =1 are re-cen tered. Let σ = ( σ 1 , . . . σ d ) be the standard deviations of the comp onen ts of X i . W e denote Y i = diag( σ − 1 ) X i the standardized data. Set the current estimator b Π 0 = I d . % Main iteration loop: for i=1 to I do Sample a fraction of ω ( i ) ’s from the normal distribution N (0 , b Π i − 1 ) (zero mean, with co v ariance matrix b Π i − 1 ), sample the remaining ω ( i ) ’s from N (0 , I d ), then normalize to the unit length; % Compute estimations of η ` and γ ` for ` =1 to L do b η ( i ) ` = 1 N P N j =1 ∇ h ω ( i ) ` ( Y j ); b γ ( i ) ` = 1 N P N j =1 Y j h ω ( i ) l ( Y j ); end Solv e the corresp onding problem (11) and up date the estimation b Π i ; end 5.2 Exp erimen t description Eac h simulated data set X N = [ X 1 , ..., X N ] of size N = 1000 represen ts N i.i.d. realizations of a random v ectors X of dimension d . Each simulation is rep eated 100 times and w e report the a verage o ver 100 simulations F rob enius norm of the error of estimation of the pro jection on the target space. In the examples b elo w only m = 2 comp onents of X are non-Gaussian with unit v ariance, other d − 2 comp onen ts of X are independent standard normal r.v.. The densities of the non-Gaussian comp onen ts are chosen as follo ws: (A) Gaussian mixture: 2-dimensional indep enden t Gaussian mixtures with density of each com- p onen t given by 0 . 5 φ − 3 , 1 ( x ) + 0 . 5 φ 3 , 1 ( x ). (B) Dependent sup er-Gaussian: 2-dimensional isotropic distribution with density prop ortional to exp( −k x k ). (C) Dependent sub-Gaussian: 2-dimensional isotropic uniform with constan t p ositiv e density for k x k 2 ≤ 1 and 0 otherwise. (D) Dependent sup er- and sub-Gaussian: a component of X , say X 1 , follo ws the Laplace dis- tribution L (1) and the other is a dep endent uniform U ( c, c + 1), where c = 0 for | X 1 | ≤ ln 2 and c = − 1 otherwise. 13 (E) Dependent sub-Gaussian: 2-dimensional isotropic Cauch y distribution with density prop or- tional to λ ( λ 2 − x 2 ) − 1 where λ = 1. W e provide the 2-d plots of the densities of the non-Gaussian comp onen ts on Figure 1. (A) (B) (C) (D) (E) Figure 1: (A) indep enden t Gaussian mixtures, (B) isotropic super-Gaussian, (C) isotropic uniform and (D) dep enden t 1d Laplacian with additiv e 1d uniform, (E) isotropic sub-Gaussian W e start with comparing the presented algorithm with Pro jection Pursuit (PP) method [16] and the NGCA for d = 10. The results are presented on Figure 2 (the corresp onding results for PP and NGCA has b een already rep orted in [10] and [6]). 14 Figure 2: Comparison of PP , NGCA and SNGCA(SDP) Since the minimization pro cedure of PP tends to b e trapp ed in a local minim um, in each of the 100 sim ulations, the PP algorithm is restarted 10 times with random starting p oints. The b est result is rep orted for each PP-simulation. W e observe that SNGCA(SDP) outp erforms NGCA and PP in all tests. In the next sim ulation w e study the dependence of th e accuracy of the SNGCA(SDP) on the noise lev el and compare it to the corresponding data for PP and NGCA. W e presen t on Figure 3 the results of exp eriments when the non-Gaussian coordinates ha v e unit v ariance, but the standard deviation of the comp onents of the 8-dimensional Gaussian distribution follows the geometrical progression 10 − r , 10 − r +2 r / 7 , . . . , 10 r where r = 1 , . . . , 8. 15 Figure 3: estimation error with resp ect to the standard deviation of Gaussian components following a geometrical progression on [10 − r , 10 r ] where r is the parameter on the abscissa The conditioning of the cov ariance matrix heavily influences the estimation error of PP(tanh) and NGCA, but not that of S NGCA(SDP). The latter method app ears to be insensitiv e to the differences in the noise v ariance along differen t direction in all test cases. Next we compare the b eha vior of SNGCA(SDP), PP and NGCA as the dimension of the Gaus- sian component increases. On Figure 4 we plot the mean error of estimation against the problem dimension d . 16 Figure 4: mean-square estimation error vs problem dimension d F or PP and NGCA methods we observ e that the estimation b ecomes meaningless (the estimation error explo des) already for d = 30 − 40 for the mo dels (A), (C) and for d = 20 − 30 of the mo del (D). In the case of the models (B) and (E) w e observe the progressive increase of the error for methods PP and NGCA. The prop osed metho d SNGCA(SDP) b eha ves robustly with resp ect to the increasing dimension of the Gaussian comp onen t for all test mo dels. 5.3 Application to Geometric Analysis of Metastability Some biologically activ e molecules exhibit different large geometric structures at the scale m uch larger than the diameter of the atoms. If there are more than one suc h structures with the life span m uch larger that the time scale of the lo cal atomic vibrations, the structure is called metastable conformation [27]. In other words, metastable conformations of biomolecules can b e seen as connected subsets of state-space. When compared to the fluctuations within each conformation, the transitions b et w een different conformations of a molecule are rare statistical even ts. Suc h multi-scale dynamic b eha vior of biomolecules stem from a decomp osition of the free energy landscap e in to particulary deep w ells eac h con taining man y lo cal minima [23, 12]. Suc h w ells represen t differen t almost inv ariant geometrical large scale structures [1]. The macroscopic dynamics is assumed to be a Mark ov jump pro cess, hopping b et ween the metastable sets of the state space while the microscopic dynamics within these sets mixes on m uc h shorter time scales [14]. Since the shap e of the energy landscape and the inv ariant density of the Mark ov pro cess are unkno wn, the “essen tial degrees of freedom”, in whic h the rare conformational c hanges o ccur, are of imp ortance. W e will no w illustrate that SNGCA(SDP) is able to detect a m ultimo dal comp onen t of the data densit y as a sp ecial case of non-Gaussian subspace in high-dimensional data obtained from molecular 17 dynamics sim ulation of oligop eptides. Clustering of 8-alanine The first example is a times series, generated by an equilibrium molec- ular dynamics simulation of 8-alanine. W e only consider the bac kb one dihedral angles in order to determine differen t conformations. The 14-dimensional time series consists of the cyclic data set of all backbone torsion angles. The sim ulation using CHARMM was done at T = 300 K with implicit water by means of the solven t mo del ACE2 [26]. A symplectic V erlet in tegrator with integration step of 1 f s w as used; the total tra jectory length w as 4 µs and ev ery τ = 50 f s a set of co ordinates w as recorded. The dimension reduction rep orted in the next figure was obtained using SNGCA(SDP) with for a giv en dimension m = 5 of the target space con taining the multimodal comp onen t. Figure 5: lo w dimensional multimodal comp onen t of 8-alanine A concentration of the clustered data in the target space of SNGCA ma y b e clearly observ ed. In comparison, the complement of the target space is almost completely filled with Gaussian noise. 18 Figure 6: Gaussian noise in the complement of the SNGCA target space. Clustering of a 3-p eptide molecule In the next example w e inv estigate Phen ylalan yl-Glycyl- Glycine T rip eptide, which is assumed to realize all of the most imp ortan t folding mechanisms of p olypeptides [24]. The simulation is done using GROMACS at T = 300 K with implicit w ater. An in tegration step of a symplectic V erlet integrator is set to 2 f s , and every τ = 50 f s a set of 31 diedre angles was recorded. As in the previous exp erience, the dimension of the target space is set to m = 5 Figure 7 sho ws that the clustered data can b e primarily found in the target space of SNGCA(SDP). 19 Figure 7: lo w dimensional multimodal comp onen t of 3-p eptide 6 Conclusions W e ha ve studied a new pro cedure of non-Gaussian comp onen t analysis. The suggested metho d, same as the tec hniques prop osed in [6, 10], has tw o stages: on the first stage certain linear functionals of unkno wn distribution are computed, then this information is used to recov er the non-Gaussian subspace. The no velt y of the proposed approac h resides in the new method of non-Gaussian subspace iden tification, based up on semidefinite relaxation. The new pro cedure allo ws to ov ercome the main dra wbacks of the previous implementations of the NGCA and seems to improv e significan tly the accuracy of estimation. On the other hand, the proposed algorithm is computationally demanding. While the first-order optimization algorithm we prop ose allows to treat efficiently the problems which are far b ey ond the reach of classical SDP-optimization techniques, the numerical difficult y seems to b e the main practical limitation of the prop osed approach. 20 References [1] A. Amadei & A. B. Linssen & H. J. Berendsen (1993) Essential dynamics of proteins. Pr oteins , 17 (4):412-425. [2] A. d’Aspremon t, L. El Ghaoui, M.I. Jordan, and G. R. G. Lanckriet (2007) A direct form ulation for sparse PCA using semidefinite programming. SIAM R eview , 49 (3):434-448. [3] A. d’Aspremont, F. Bac h and L. El Ghaoui (2008) Optimal solutions for sparse principal com- p onen t analysis. Journal of Machine L e arning R ese ar ch , 9:1269-1294. [4] M. Belkin, P . Niyogi (2009) Laplacian Eigenmaps for dimensionalit y reduction and data repre- sen tation Neur al Computation , 15 (6):1373-1396. [5] A. Ben T al & A. Nemirovski (2001) L e ctur es on Mo dern Convex Optimization. Analysis, Algo- rithms and Engine ering Applic ations , V olume 1 of MPS/ SIAM Series on Optimization, SIAM, Philadelphia. [6] G. Blanc hard, M. Kaw anab e, M. Sugiyama, V. Sp ok oiny , K.-R. M ¨ uller (2006) In Search of Non- Gaussian Comp onen ts of a High-Dimensional Distribution. J. of Machine L e arning R ese ar ch , p. 247-282. [7] M.Ka wanabe, M.Sugiyama, G.Blanc hard and K.-R.M ´ ’uller” (2007) A new algorithm of non- Gaussian comp onen t analysis with radial k ernel functions, A nnals of the Institute of Statistic al Mathematics , v ol. 59(1), 57-75. [8] E. Cand` es (2006) Compressive sampling. Int. Congr ess of Mathematics, Madrid, Sp ain , 3 :1433- 1452. [9] P . Diaconis & D. F reedman (1984) Asymptotics of graphical pro jection pursuit A nnals of Statis- tics , 12 :793-815. [10] E. Diederic hs & A. Juditsky & V. Sp ok oiny & C. Sch¨ utte (2009) Sparse NonGaussian Comp onen t Analysis. IEEE T r ansactions on Information The ory , 15 (7):5249-5262. [11] I. Guyon & A. Elisseeff (2003) An Introduction to V ariable and F eature Selection. Journal of Machine L e arning R ese ar ch , 3 :1157-1182. [12] H. F rauenfelder & B.H. McMahon (2000) Energy Landscape and fluctations in proteins. Ann. Phys. (L eipzig) , 9 :655-667. [13] T. J. Hastie & R. Tibshirani & J. F riedman (2001) The Elements of Statistic al L e arning . New Y ork, Springer Series in Statistcs. [14] I. Horenk o & Ch. Sc h ¨ utte (2008) Likelihoo d-Based Estimation of Multidimensional Langevin Mo dels and its Application to Biomolecular Dynamics. Mult. Mo d. Sim. , 7 (2):731-773. [15] R. A. Horn and C. R. Johnson (1985) Matrix A nalysis . Cambridge Univ ersity Press. 21 [16] A. Hyv¨ arinen. (1999) Surv ey on independent comp onen t analysis. Neur al Computing Surveys , 2 :94-128. [17] M. Mizuta (2004) Dimension Reduction Metho ds. In J.E. Gentle & W. H¨ ardle, and Y. Mori (eds.): Handb o ok of Computational Statistics pp. 566-89. [18] A. Nemiro vski & D. Y udin (1983) Pr oblem Complexity and Metho d Efficiency in Optimization . New Y ork, J. Wiley and Sons [19] A. Nemirovski (2004) Prox-method with rate of conv ergence O (1 /t ) for v ariational inequali- ties with Lipschitz contin uous monotone op erators and smo oth conv ex-concav e saddle p oin t problems. SIAM Journal on Optimization , 15 :229-251 [20] Z. Lu & R. Mon teiro &A. Nemirovski (2007) Large-Scale Semidefinite Programming via Saddle P oint Mirror-Pro x Algorithm. Mathematic al Pr o gr amming , 109 :2-3, 211-237. [21] Y u. E. Nestero v (2005) Smo oth minimization of non-smo oth functions. Mathematic al Pr o gr am- ming: Series A and B , 103 (1):127-152. [22] Y u. E. Nesterov (2007) Dual extrap olation and its applications for solving v ariational inequalities and related problems. Mathematic al Pr o gr amming: Series A and B , 109 (2):319-344. [23] J. Pillardy & L. Piela (1995) Molecular dynamics on deformed energy h yp ersurfaces. J.Phys.Chem. , 99 :11805-11812. [24] D. Reha & H. V aldes & J.V ondrasek & P . Hobza & A. Abu-Riziq & B. Crews & M.S. de V ries (2005) Structure an IR Sp ectrum of Phen ylalanyl-Glycyl-Glycine T rip eptide in the Gas-Phase. Cem. Eur. J. , 11 :6083-6817. [25] S. Row eis & L. Saul (2000) Nonlinear dimensionality reduction by lo cally linear embedding. Scienc e , 290 :2323-2326. [26] M. Schaefer & M. Karplus (1996) A Comprehensive Analytical T reatment of Con tinuum Elec- trostatics. J. Chem. Phys. , 100 :1578-1599. [27] C. Sch¨ utte & W. Huisinga (2003) Biomolecular Conformations can b e iden tified as metastable sets of melcular dynamics. Computational Chemistry, Handb o ok of Numeric al Analysis , 699-744. [28] V. Sp ok oiny (2009) A p enalize d exp onential risk b ound in p ar ametric estimation . http://arxiv.org/abs/0903.1721 . [29] T enen baum & V. de Silv a & J.C. Langford (2000) A global geometric framework for nonlinear dimensionalit y reduction. Scienc e , 290 :2319-2323. [30] A. v an der V aart & J.A. W ellner (1996) We ak Conver genc e and Empiric al Pr o c c esses . Springer Series in Statistics - New Y ork [31] L. W asserman (2006) Al l of Nonp ar ametric Statistics . New Y ork, Springer T exts in Statistcss 22 A App endix Let X = X T ∈ R L × L b e p ositiv e semidefinite with | X | 1 ≤ 1, and let Y = X 1 / 2 b e the symmetric p ositiv e semidefinite square ro ot of X . If we denote y i , i = 1 , .., L the columns of Y , then | X | 1 ≤ 1 implies that X 1 ≤ i,j ≤ L | y T i y j | ≤ 1 . W e m ak e here one trivial though useful observ ation: for any matrix A ∈ R d × L , when denoting B = A T A , w e hav e k AY k 2 2 = trace ( A T AX ) = trace [ B X ] = L X j =1 L X i =1 B j i X ij ≤ max ij | B ij | = | A | 2 2 . (24) (Recall that for a matrix A ∈ R d × L with columns a i , i = 1 , ..., L , | A | 2 stands for the maximal column norm: | A | 2 = max 1 ≤ i ≤ L | a i | 2 ). W e can rewrite the problem (11) using Y = X 1 / 2 , so that the ob jectiv e b f ( X , P ) = trace [ b U T ( I − P ) b U X ] of (11) becomes b g ( Y , P ) = k ( I − P ) 1 / 2 b U Y k 2 2 . Let no w ( b X , b P ) b e a saddle p oin t of (11). Namely , we hav e for any feasible P and X : b f ( X , b P ) ≤ [ b f ∗ ≡ b f ( b X , b P )] ≤ b f ( b X , P ) , W e denote b Y = b X 1 / 2 . In what follows w e supp ose that vectors γ ` and η ` , ` = 1 , ..., L satisfy (8). In other words,it holds | b U − U | 2 ≤ δ N and | b G − G | 2 ≤ γ N . A.1 Pro of of Theorem 1. Lemma 1 L et b P b e an optimal solution to (11). Then max c n | ( I − b P ) 1 / 2 U c | 2 | | c | 1 ≤ 1 , Gc = 0 o ≤ λ − 1 min (Σ)( % + ν N ) + 2 δ N . (25) Pr o of. W e write: max c n | ( I − b P ) 1 / 2 U c | 2 | | c | 1 ≤ 1 , Gc = 0 o ≤ max Y n k ( I − b P ) 1 / 2 U Y k 2 | Y 2 | 1 ≤ 1 , GY = 0 o ≤ max Y n k ( I − b P ) 1 / 2 b U Y k 2 | Y 2 | 1 ≤ 1 , GY = 0 o + max Y n k ( I − b P ) 1 / 2 ( b U − U ) Y k 2 | Y 2 | 1 ≤ 1 , GY = 0 o 23 ≤ max Y n k ( I − b P ) 1 / 2 b U Y k 2 | Y 2 | 1 ≤ 1 , k b GY k 2 ≤ % o (b y (24)) + | ( I − b P ) 1 / 2 ( b U − U ) | 2 (due to 0 I − b P I ) = k ( I − b P ) 1 / 2 b U b Y k 2 + δ N ≤ k ( I − Π ∗ ) 1 / 2 b U b Y k 2 + δ N (again b y (24)) ≤ k ( I − Π ∗ ) 1 / 2 U b Y k 2 + 2 δ N . On the other hand, as k b G b Y k 2 ≤ ν N , w e get k G b Y k 2 ≤ k b G b Y k 2 + k ( b G − G ) b Y k 2 ≤ % + | b G − G | 2 ≤ % + ν N , and b y (3), k ( I − Π ∗ ) U b Y k 2 ≤ λ − 1 min (Σ)( % + ν N ) . This implies (25). W e now come back to the pro of of the theorem. Let b λ j and b θ j , j = 1 , . . . , d b e resp ectiv ely the eigen v alues and the eigen vectors of b P . Assume that b λ 1 ≥ b λ 2 ≥ . . . ≥ b λ d . Then b P = P d j =1 b λ j b θ j b θ T j and b Π = P m j =1 b θ j b θ T j . Let β = U c for c such that | c | 1 ≤ 1 and Gc = 0. W e hav e β T ( I − b P ) β = m X j =1 (1 − b λ j )( b θ T j β ) 2 + X j >m (1 − b λ j )( b θ T j β ) 2 ≥ X j >m (1 − b λ j )( b θ T j β ) 2 ≥ (1 − b λ m +1 )( b θ T j β ) 2 = (1 − b λ m +1 ) β T ( I − b Π) β = (1 − b λ m +1 ) | ( I − b Π) β | 2 2 . Since, for obvious reasons, b λ m +1 ≤ m m +1 , it applies (i) due to (25). Let us show (ii). W e ha ve due to (12) and (25): trace h ( I − b P )Π ∗ i = trace h ( I − b P ) 1 / 2 Π ∗ ( I − b P ) 1 / 2 i ≤ m X k =1 µ k trace h ( I − b P ) 1 / 2 U c k c T k U T ( I − b P ) 1 / 2 i = m X k =1 µ k | ( I − b P ) 1 / 2 U c k | 2 2 ≤ m X k =1 µ k max c n | ( I − b P ) 1 / 2 U c | 2 2 | | c | 1 ≤ 1 , Gc = 0 o = µ ∗ ( λ − 1 min (Σ)( % + ν N ) + 2 δ N ) 2 , (26) whic h is (13). Note that trace [ b P Π ∗ ] ≤ P j ≤ m b λ j (cf, e.g., Corollary 4.3.18 of [15]), th us by (26), b λ m +1 ≤ m − X j ≤ m b λ j ≤ trace [( I − b P )Π ∗ ] ≤ µ ∗ ( λ − 1 min (Σ)( % + ν N ) + 2 δ N ) 2 . 24 On the other hand, trace [( I − b P )Π ∗ ] = m X j =1 (1 − b λ j ) b θ T j Π ∗ b θ j + X j >m (1 − b λ j ) b θ T j Π ∗ b θ j ≥ X j >m (1 − b λ j ) b θ T j Π ∗ b θ j ≥ (1 − b λ m +1 ) b θ T j Π ∗ b θ j = (1 − b λ m +1 )trace [( I − b Π)Π ∗ ] , and w e conclude that trace [( I − b Π)Π ∗ ] ≤ trace [(1 − b P )Π ∗ ] 1 − b λ m +1 ≤ µ ∗ ( λ − 1 min (Σ)( % + ν N ) + 2 δ N ) 2 1 − µ ∗ ( λ − 1 min (Σ)( % + ν N ) + 2 δ N ) 2 . No w, using the relation trace b Π = trace Π ∗ = m , w e come to k b Π − Π ∗ k 2 2 = trace [ b Π 2 − 2 b ΠΠ ∗ + (Π ∗ ) 2 ] = 2 m − 2trace [ b ΠΠ ∗ ] = 2trace [( I − b Π)Π ∗ ] , and w e arrive at (14). A.2 Pro of of Theorem 2. Let no w b P , b X and b t = trace b P b e a triplet of optimal solution to (15). Lemma 2 L et b P b e an optimal solution to (15). (i) In the pr emises of the the or em Π ∗ is a fe asible solution of (15) and trace b P ≤ trace Π ∗ = m . (ii) We have max c n | ( I − b P ) 1 / 2 U c | 2 | | c | 1 ≤ 1 , Gc = 0 o ≤ ρ + δ N . (27) Pr o of. W e act as in the pro of of Lemma 1: to verify ( i ) w e observe that max X n trace [ b U T ( I − Π ∗ ) b U X ] X 0 , | X | 1 ≤ 1 , trace [ b GX b G T ] ≤ % 2 o = max Y n k ( I − Π ∗ ) b U Y k 2 2 | Y 2 | 1 ≤ 1 , k b GY k 2 ≤ % o ≤ max Y n ( k ( I − Π ∗ ) U Y k 2 + δ N ) 2 | Y 2 | 1 ≤ 1 , k b GY k 2 ≤ % o ≤ λ − 1 min (Σ)( % + ν N ) + δ N 2 . Th us, if ρ ≥ λ − 1 min (Σ)( % + ν N ) + δ N , Π ∗ is a feasible solution of (15) and, as a result, trace b P ≤ trace Π ∗ . T o show ( ii ) it suffices to note that max c n | ( I − b P ) 1 / 2 U c | 2 | | c | 1 ≤ 1 , Gc = 0 o ≤ max Y n k ( I − b P ) 1 / 2 U Y k 2 | Y 2 | 1 ≤ 1 , GY = 0 o ≤ max Y n k ( I − b P ) 1 / 2 b U Y k 2 | Y 2 | 1 ≤ 1 , GY = 0 o + | ( I − b P ) 1 / 2 ( b U − U ) | 2 ≤ max Y n k ( I − b P ) 1 / 2 b U Y k 2 | Y 2 | 1 ≤ 1 , k b GY k 2 ≤ % o + δ N ≤ ρ + δ N 25 b ecause of the feasibilit y of b P . No w using the b ound b m ≤ m we complete the pro of following exactly the lines of the pro of of Theorem 1. A.3 Pro of of Prop osition 1 Observ e that F ( b Z , b Y ) = min ( P,W ) ∈P ×W n trace [ B T ( I − P ) B Q T b Z Q ] + λ trace [ W ( Q T b Z Q − b Y )] o = min P ∈P n trace [ B T ( I − P ) B Q T b Z Q ] − λ k Q T b Z Q − b Y k 2 o ≤ min P ∈P n trace [ B T ( I − P ) B Q T b Z Q ] o = G ( b Z ); (28) and F ( b P , c W ) = max ( Z,Y ) ∈Z ×Y n trace [ B T ( I − b P ) B Q T Z Q ] + λ trace [ c W ( Q T Z Q − Y )] o ≥ max Z ∈Z , | Q T Z Q | 1 ≤ 1 , Y = Q T Z Q n trace [ B T ( I − b P ) B Q T Z Q ] + λ trace [ c W ( Q T Z Q − Y )] o = G ( b P ) : Assume first that | Q T b Z Q | 1 ≤ 1. In this case e Z = b Z and ≥ F ( b P , c W ) − F ( b Z , b Y ) = G ( b P ) − G ( b Z ) = G ( b P ) − G ( e Z ) (the second ¸ is giv en by (28)), as claimed. No w assume that s = Q T b Z Q | 1 > 1. W e hav e already established the first equality of the follo wing chain: F ( b Z , b Y ) = min P ∈P n trace ( B T ( I − P ) B Q T b Z Q ) − λ k Q T b Z Q − b Y k 2 o ≤ min P ∈P trace [ B T ( I − P ) B Q T b Z Q ] − λ L | Q T b Z Q − b Y | 1 ≤ min P ∈P trace [ B T ( I − P ) B Q T b Z Q ] − λ L ( s − 1) = min P ∈P s trace [ B T ( I − P ) B Q T e Z Q ] − λ L ( s − 1) ≤ min P ∈P trace [ B T ( I − P ) B Q T e Z Q ] + ( s − 1) | B T ( I − P ) B | ∞ | Q T e Z Q | 1 | {z } ≤ ( s − 1) | B T B | ∞ =( s − 1) | B | 2 2 − λ L ( s − 1) ≤ min P ∈P n trace [ B T ( I − P ) B Q T e Z Q ] o = G ( e Z ) , where the concluding ≤ is readily giv en by the definition of λ . 3 F urther, we hav e already seen that F ( b P , c W ) ≥ G ( b P ) . 3 W e denote | A | ∞ = max ij | A ij | . 26 Consequen tly , ≥ F ( b P , c W ) − F ( b Z , b Y ) ≥ G ( b P ) − G ( e Z ) , as claimed. A.4 Computing the prox-transform Recall that b ecause of the additivit y of the distance-generating function d the computation of the pro x-transform on the set P × W × Z × Y can b e decomp osed into indep enden t computations on the four domains of (23). Pro x-transform on P . The pro xy-function of P is the matrix entrop y: d ( P 0 , P ) = β P trace P m ln P m − ln P 0 m for P, P 0 ∈ P , β P > 0 . T o compute the corresp onding comp onen t of T we need to find, giv en S ∈ S d , T β ( P 0 , S ) = arg max P ∈P trace [ S ( P − P 0 )] − β P trace P m ln P m − ln P 0 m (29) = arg max P ∈P trace S + β P m ln P 0 m P − β P trace P m ln P m . By the symmetry considerations w e conclude that the optimal solution of this problem is diagonal in the basis of eigenv ectors of S + β P m ln( P 0 m ). Thus the solution of (29) can b e obtained as follo ws: compute the eigenv alue decomp osition S + β P m ln( P 0 m ) = ΓΛΓ T and let λ b e the diagonal of Λ. Then solve the “v ector” problem p ∗ = arg max 0 ≤ p ≤ 1 , P p ≤ m λ T p − β P m d X i =1 p i ln( p i /m ) . (30) and comp ose T β ( P , S ) = Γdiag ( y ∗ )Γ T . No w, the solution of (30) can b e obtained by simple bisection: indeed, using Lagrange duality w e conclude that the comp onen ts of y ∗ satisfies p ∗ i = exp λ i β − ν ∧ 1 , i = 1 , ..., d, and the Lagrange m ultiplier ν is to b e set to obtain P p ∗ i = m , what can b e done b y bisection in ν . When the solution is obtained, the optimal v alue of (29) can b e easily computed. 27 Pro x-transform on W . The distance-generating function of W is β W trace [ W 2 ] / 2 = k W k 2 2 / 2 so that w e hav e to solve for S ∈ S L T β ( W 0 , S ) = arg max k W k 2 ≤ 1 trace [ S ( W − W 0 )] − β W 2 k W − W 0 k 2 2 2 . (31) The optimal solution to (31) can b e easily computed T β ( W 0 , S ) = W 0 + S/β W if k W 0 + S/β W k 2 ≤ 1 , ( W 0 + S/β W ) / k W 0 + S/β W k 2 if k W 0 + S/β W k 2 > 1 . Pro x-transform on Z . The pro x-function of Z is the matrix en tropy and w e hav e to solv e for S ∈ S L − d T β ( Z, S ) = arg max Z ∈Z trace [ S ( Z − Z 0 )] − β Z trace [ Z (ln( Z ) − ln( Z 0 ))] = arg max Z ∈Z trace [( S + β Z ln( Z 0 )) Z ] − β Z trace [ Z ln Z ] . Once again, in the basis of eigen vectors of S + β Z ln( Z 0 ) the problem reduces to z ∗ = arg max z ≥ 0 , P z ≤ 1 λ T z − β Z d X i =1 z i ln( z i ) , where λ is the diagonal of Λ with S + β Z ln Z 0 = ΓΛΓ T . In this case z ∗ i = exp( λ i β ) P L j =1 exp( λ j β ) , i = 1 , ..., L − d. Pro x-transform on Y . The distance generating function for the domain Y is defined as follo ws: d ( Y ) = min L X i,j =1 ( u ij ln[ u ij ] + v ij ln[ v ij ] ) : n X i =1 ( u ij + v ij ) = 1 , Y ij = u ij − v ij , u ij ≥ 0 , v ij ≥ 0 , 1 ≤ i, j ≤ L } . In other w ords, the element Y ∈ Y is decomp osed according to Y = u − v , where ( u, v ) is an eleme n t of the 2 L 2 -dimensional simplex ∆ = n x ∈ R 2 L 2 , x ≥ 0 , P i x i = 1 o . T o find the Y -comp onent of the pro x-transform amounts to find for S ∈ S L T β Y ( Y 0 , S ) = T β Y ( u 0 , v 0 , S ) = arg max u,v ∈ ∆ trace [ S ( u − v )] − β Y X ij " u ij ln( u ij u 0 ij ) + v ij ln( v ij v 0 ij ) # . (32) One can easily obtain an explicit solution to (32): let a ij = u 0 ij exp S ij β Y , b ij = v 0 ij exp − S ij β Y . 28 Then T β Y ( Y 0 , S ) = u ∗ − v ∗ , where u ∗ ij = a ij P ij ( a ij + b ij ) , v ∗ ij = b ij P ij ( a ij + b ij ) . 29

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

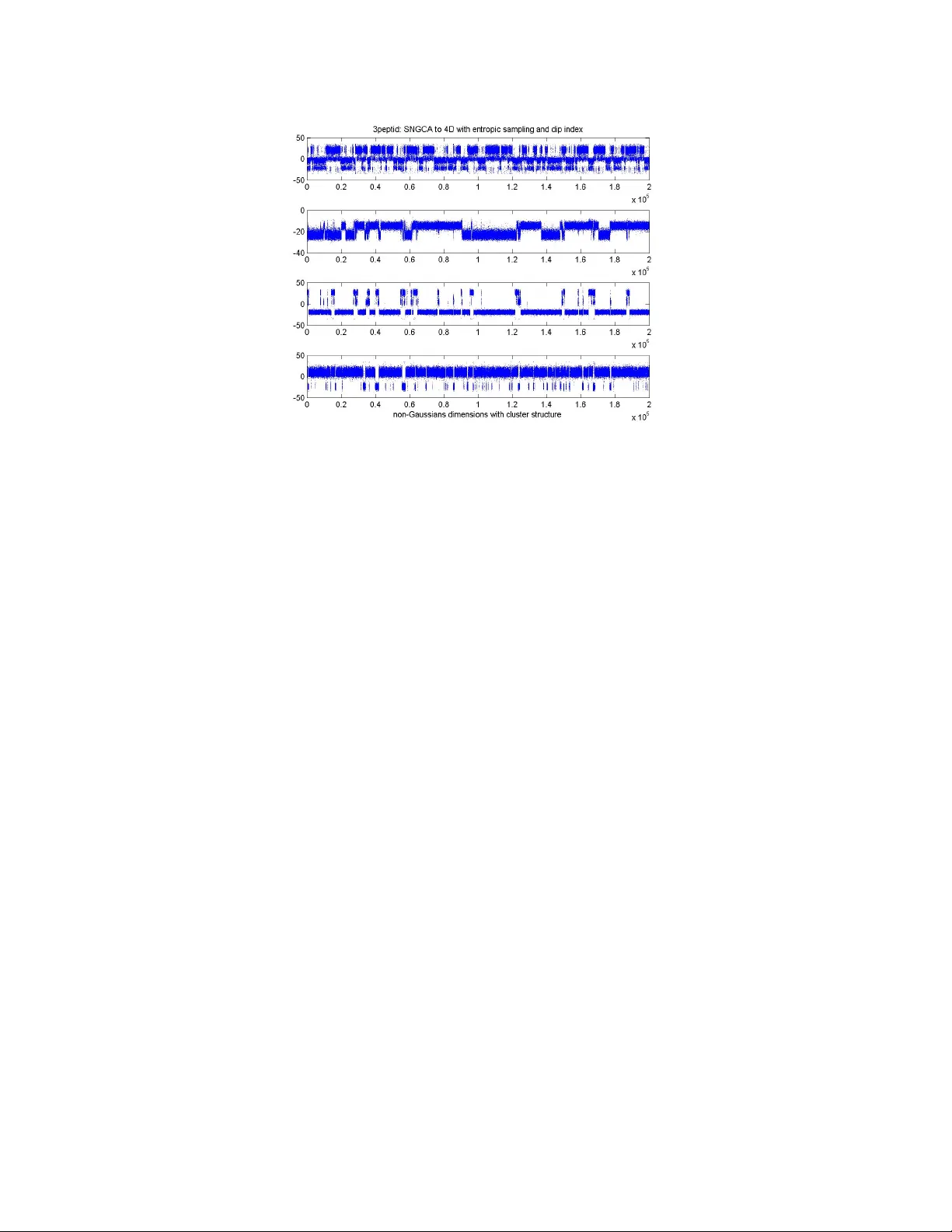

Leave a Comment