Data Traffic Dynamics and Saturation on a Single Link

The dynamics of User Datagram Protocol (UDP) traffic over Ethernet between two computers are analyzed using nonlinear dynamics which shows that there are two clear regimes in the data flow: free flow and saturated. The two most important variables af…

Authors: **Reginald D. Smith**

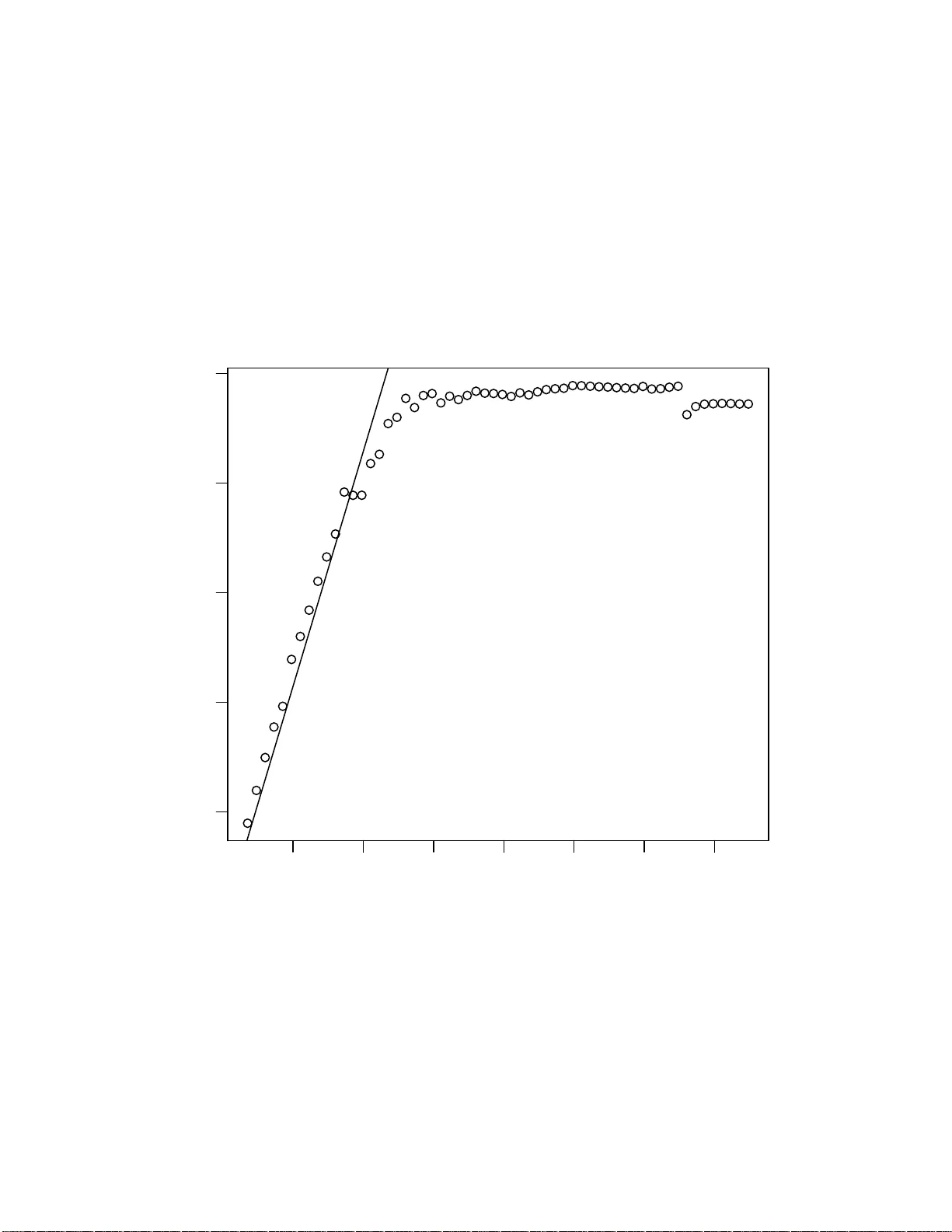

Reginald D. Smith
Bouchet-Franklin Research Institute, P.O. Box 14610, Rochester, NY, 14610
E-mail: rsmith@sloan.mit.edu

Abstract. The dynamics of User Datagram Protocol (UDP) traffic over Ethernet between two computers are analyzed using nonlinear dynamics, which shows that there are two clear regimes in the data flow: free flow and saturated. The two most important variables affecting this are the packet size and packet flow rate. However, this transition is due to a transcritical bifurcation rather than a phase transition as in models of vehicle traffic or theorized large-scale computer network congestion. It is hoped this model will help lay the groundwork for further research on the dynamics of networks, especially computer networks.

1. Introduction

In 1969 the Internet (then ARPANET) was first established as a distributed packet communications network that would not only operate reliably if some of its nodes were destroyed in an enemy attack, but also allow easier communication of computer research results by universities. Today the Internet has grown to become a sprawling network touching every aspect of humanity, dwarfing previous technological mediums in both complexity and behavior. It was therefore only a matter of time before advanced statistical techniques, such as those developed by physicists in statistical mechanics, were applied to investigate it. Since the late 1990s, the Internet has been of interest to the physics community, coming to the attention of most through the seminal Nature paper of Watts and Strogatz [1]. This was continued or paralleled by the work of countless others [2, 3, 4, 5, 6, 7]. However, until recently this research has focused mostly on the topological aspects of networks and much less on dynamics.

A particularly fertile area of network dynamics, and one related to this paper, is the study of phase transitions from free flow to congestion in computer networks [8, 9, 10, 11]. Most results give a critical packet flow on networks which separates free flow from congested traffic. There have been some investigations of other dynamical aspects, such as synchronization of coupled oscillators [12, 13, 14, 15] and some metabolic dynamics [16, 17]; thus dynamics is rapidly moving from being a peripheral to a primary topic of discussion about networks.

A very interesting reverse situation is visible in the studies of the statistical mechanics of vehicular traffic. Vehicle traffic on roads has been investigated, also using statistical mechanics, but focusing on the dynamics and flow of traffic rather than the topology of the road network [18, 19, 20]. Though there is some overlap between these two topics, traffic flow has been described in many ways, with the most common description using the fundamental diagram of vehicle flow vs. vehicle density. Most models propose a two or three phase model of traffic. The first phase is free flow, where cars drive near the speed limit with little congestion and little influence on each other's velocity. The final phase is congested traffic, where traffic flow becomes spontaneously congested after reaching a critical density. In the three phase model, there is an intermediate phase called synchronized flow, where traffic is not congested but cars match their speed at a reduced level, effectively increasing the correlation length of the system as a prelude to congestion. In [21], Gábor and Csabai conduct a similar study comparing vehicle traffic flow to data packet flow using the number of TCP connections as the variable for flow density.
They find that a pattern like the fundamental diagram appears in data traffic when the flow density is modeled as the number of TCP connections between two different endpoints.

2. Internet Traffic Research Issues

In investigating Internet dynamics, one is struck by how superficially similar the dynamics of the network can be to vehicle traffic. Internet networks are made of the flow of countless packets across network links and can be prone to the same free flow or congestion as vehicle traffic. Like traffic data, data on Internet traffic is easily obtainable, either by setting up packet sniffers and traffic analyzers between certain nodes or by using public data sets such as the WIDE Project's MAWI data traces from trans-Pacific US-Japan T1 lines [22] or the Stanford Linear Accelerator Center (SLAC)'s PingER project, which has monitored ICMP ping response against different nodes across the Internet on a continuous basis for years [23].

However, Internet traffic data analysis is complicated by many factors that are not easily accounted for in theoretical models. First, since the Internet is a decentralized network based on dynamic routing, the route of the traffic is not completely transparent. The path from one point to another can be fairly fluid, changing even within a continuous flow of transmitted data. Many of the intermediate routers or autonomous systems (AS), which are basically Internet service providers, have private and constantly changing routing rules and configurations that alter traffic in unpredictable ways to obtain certain quality of service metrics or traffic shaping priorities [24]. This makes analysis of data collected from Internet traffic fraught with questions and confusion as far as disambiguating the effect of network topology on dynamics.

In addition to topological constraints, the dynamics of Internet traffic itself can affect dynamics studies. Under the Internet Protocol (IP) suite, there are many transport level protocols such as TCP and UDP and countless application level protocols such as HTTP. Protocols at all levels, including transport and application levels, can influence data traffic. For example, TCP has a congestion control algorithm which will actually throttle network speed given the feedback it receives from packet loss on the network, requires periodic acknowledgements from the destination before sending more data, and will buffer data to send depending on the round trip time (RTT) of the connection in order to guarantee delivery [25, 26]. Therefore, measurements of TCP/IP network speeds, even in relatively "clean" networks, can actually be extremely complicated and dependent on much more than the topology of the network or volume of traffic.

Finally, the statistical nature of Internet traffic is still poorly understood. Internet traffic volumes and packet interarrival times do not follow the distributions typical of other communications networks, such as Poisson or Erlang distributions, but rather exhibit bursty, self-similar traffic patterns which have been very difficult to model and predict [27, 28, 29, 30]. As in measurements of Internet topology, where a relatively small number of nodes have many edges, almost everything in the Internet that can be measured seems to have a long-tailed distribution as a matter of course. TCP and UDP traffic flows follow this trend, where a relatively small number of flows carry the bulk of data transferred (so-called "elephant flows") [31, 32]. The origins of these patterns of traffic are still a matter of research and debate.
Add to this other inherent uncertainties and patterns in Internet traffic flow, such as trimodal distributions of packet sizes [33], traffic spikes due to malicious code such as viruses or Trojan-directed distributed denial-of-service attacks [34, 35], and periodicities in the volume of traffic caused by 12 hour, 24 hour, and 7 day cycles (with a 3.5 day harmonic) [36, 37]. There is also an issue of long-range correlations of Internet traffic between different routers, which can be relatively uncorrelated or very correlated depending on the nature of traffic and the level of congestion [38]. All of these factors are mentioned to demonstrate that modeling and understanding Internet traffic dynamics is a problem likely of greater magnitude than topological analysis. Given these difficulties and more, understanding the basics of traffic dynamics and the interactions between topology and dynamics in computer networks is essential to understanding theoretical aspects, creating accurate simulations, and conducting useful experiments.

3. Experimental Setup

The purpose of the experiment described in this paper is to ask a basic question about traffic dynamics: how is the throughput (speed) of a link affected by three fundamental variables that determine the nature of network traffic, namely the average packet size, the average packet flow rate, and the bandwidth of the link? The bandwidth of the network, in this case 100 Mbps (megabits per second), is the theoretical maximum possible throughput. The throughput itself can be described in terms of the average packet size and average flow rate by

$$\langle T \rangle = \langle p \rangle \langle \lambda \rangle \tag{1}$$

where $\langle T \rangle$ is the average throughput of the link, $\langle p \rangle$ is the average size of packets in a transmission over a given period of time, and $\langle \lambda \rangle$ is the average flow rate in packets per second.
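
As a quick numerical illustration of equation 1, the sketch below converts an average packet size and packet flow rate into throughput, and inverts the relation to get the packet rate needed for a target throughput. The function names and sample values are illustrative assumptions, not measurements from this experiment; the factor of 8 appears only because packet sizes are quoted in bytes and throughput in bits per second.

```python
def throughput_bps(avg_packet_bytes: float, avg_flow_pps: float) -> float:
    """Equation 1: average throughput = average packet size * average flow rate.

    Packet size is in bytes and flow rate in packets per second, so the
    product is multiplied by 8 to express the result in bits per second.
    """
    return avg_packet_bytes * 8 * avg_flow_pps


def flow_needed_pps(target_bps: float, packet_bytes: float) -> float:
    """Invert equation 1: packet rate required to sustain a target throughput."""
    return target_bps / (packet_bytes * 8)


if __name__ == "__main__":
    # Hypothetical values, chosen for illustration only.
    print(throughput_bps(1500, 8000) / 1e6, "Mbps")
    for size in (1500, 750, 375):
        print(size, "bytes ->", flow_needed_pps(96e6, size), "packets/s")
```

Halving the packet size doubles the packet rate required to hold the same throughput, which is the tradeoff the experiment probes.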

In this experiment, since all packets will be of the same size in each sample, we can say

$$\langle T \rangle = p \langle \lambda \rangle \tag{2}$$

Two computers, both running Windows XP, were connected using a Cat 5e cable attached to the Ethernet network adapter (NIC) of each computer. Ethernet flow control was disabled to ensure that the flow of data, and not the signaling between the computers to prevent dropped packets that flow control entails, determines the throughput. Traffic was generated using the program Iperf, which generates a stream of identically sized packets at a throughput specified by the user. The throughput in Iperf was set to 100 Mbps in order to test what maximum throughput the network would actually demonstrate given an attempt at maximum throughput.

The transport protocol UDP was chosen over TCP for several reasons. UDP is a connectionless protocol, meaning it does not guarantee delivery and will simply send a string of packets. TCP is a connection-based protocol whose delivery guarantee requires frequent "handshaking" between the source and destination and whose congestion control algorithm can affect throughput in a non-trivial fashion, delivering a lower throughput due to protocol software, not the maximum throughput of the link. Finally, TCP can give different performance over links with different latencies, as measured by packet RTT, so the results may not generalize [25].

To test the performance of the network under different sized packets, the UDP packet payload was varied from 25 bytes up to 1450 bytes in 25 byte increments. For equation 2 to hold, all packets must be the same size. The structure of packets under Ethernet/IP is shown in figure 1.
The payload, a variable selected in the Iperf software, is encapsulated by a header for UDP (8 bytes), a header for IP (20 bytes), and a header and footer in the Ethernet frame (total 18 bytes). Frame is just a general term for an Ethernet packet. These headers mostly provide routing data, priorities, checksums, and other information important to packet logistics. In addition, in standard Ethernet the maximum frame size, minus Ethernet headers and footers, is 1500 bytes. With the Ethernet overhead the total maximum size for an Ethernet frame is 1518 bytes. Therefore, at 1475 bytes payload, the total would be 1503 bytes and there would be packet fragmentation: instead of one frame there would be two, one with a frame payload of 1500 bytes and a second with a frame payload of 3 bytes. This would affect throughput by showing a sudden change, since the average frame payload size would drop to about 750 bytes. Given the phenomenon of fragmentation, an actual analysis of the effect of packet size on throughput is only useful up to the fragmentation size limit.

Figure 1. Structure of a packet in this paper. Proportions based on a 50 byte payload. Numbers are size of headers or payload in bytes. A is the Ethernet header, which contains MAC address source and destination and payload type; B is the Internet Protocol (IP) header; C is the User Datagram Protocol (UDP) header; D is the data payload; and E is the Ethernet CRC checksum hash used to detect accidental corruption of the frame.

In the experiment, Iperf delivered a packed stream of UDP traffic from the client to the server computer, trying to send as close to bandwidth as possible, and output the average throughput in Kbps (kilobits per second). It also gave the packet loss and a measure called jitter, which is not used here but measures the deviation in packet interarrival times versus interdeparture times.
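
The header arithmetic described in this section can be sketched as follows. The constants are the sizes quoted above; the function names are hypothetical, and the fragmentation check is a simplification that only tests whether payload plus UDP and IP headers exceeds the 1500 byte frame payload limit, without modeling the fragments themselves.

```python
UDP_HEADER = 8            # bytes
IP_HEADER = 20            # bytes
ETHERNET_OVERHEAD = 18    # bytes, Ethernet header plus CRC footer
MAX_FRAME_PAYLOAD = 1500  # bytes carried inside one standard Ethernet frame


def ip_packet_size(payload: int) -> int:
    """Size of the IP packet (payload + UDP header + IP header) in bytes."""
    return payload + UDP_HEADER + IP_HEADER


def frame_size(payload: int) -> int:
    """Total size of an unfragmented Ethernet frame carrying this payload."""
    return ip_packet_size(payload) + ETHERNET_OVERHEAD


def needs_fragmentation(payload: int) -> bool:
    """True when the IP packet no longer fits in a single Ethernet frame."""
    return ip_packet_size(payload) > MAX_FRAME_PAYLOAD


def payload_sweep(start: int = 25, stop: int = 1450, step: int = 25) -> list:
    """Payload sizes used in the experiment: 25 to 1450 bytes in 25 byte steps."""
    return list(range(start, stop + 1, step))


if __name__ == "__main__":
    print(len(payload_sweep()), "payload sizes in the sweep")
    for payload in (25, 1450, 1475):
        print(payload, ip_packet_size(payload), needs_fragmentation(payload))
```

The largest payload in the sweep, 1450 bytes, stays below the limit, while the next step of 1475 bytes would cross it, which is why the sweep stops where it does.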

For throughput, Iperf reports a related measure called goodput, which measures data speed in terms of the payload size, not including any packet overhead in the calculation of bytes transferred. However, the packet flow rate, calculated from equation 2 using the goodput and the payload size, is accurate. Therefore the actual throughput was recalculated using packet sizes that include both payload and packet overhead.

4. Results

At all packet sizes, packet loss was small, much less than 1%. The results of the experiment are shown in figure 2. First, it is clear that throughput decreases with decreasing packet size. This first fact is well known in the network engineering community [39]. This is an inherent property of all network adapters, Ethernet or otherwise. In fact, one of the key requirements in next generation networks is the network capability to send "jumbo frames", where fragmentation limits at the network layer are much larger than 1500 bytes, up to 9000 bytes in some cases. These larger packet sizes cause higher throughput and more efficient networking because, within the network adapter and computer hardware, there is a per-packet processing overhead. Despite the bandwidth rating of network adapters, be it 10, 100, or 1000 Mbps, there is a maximum packet flow that they can effectively handle. Given equations 1 and 2, it is clear that to maintain any given throughput, lowering the packet size means increasing the packet flow. Because of the packet flow processing bottleneck in the hardware, however, this can make high throughput impossible at low packet sizes.

As seen in figure 2, for large packet sizes the throughput is very close to bandwidth and can be roughly equivalent to free flow traffic. At a specific critical packet size, $p_c$, however, the throughput begins to rapidly degrade, to the point that it is only about 6% of bandwidth at 25 bytes.
This is the saturated state. Here saturation is used instead of congestion since congestion is usually a network-wide phenomenon, while this slowdown in throughput is due to overwhelming the processing power at the NIC. In a superficial way this behavior is similar to the fundamental diagram flow-density curve in vehicle traffic. Packet flow increases with smaller packet size until saturation forces packet flow to begin to slow its increase and approach a maximum value.

Figure 2. Graphs of the average throughput (Mbps) vs. packet size (bytes, including headers), average packet flow (kilopackets/s) vs. packet size (bytes, including headers), and average throughput (Kbps) vs. average packet flow (kilopackets/s), respectively. Vertical lines on the first two graphs represent the calculated critical packet size of 451 bytes. The fitted line on the first graph is the line predicted by maximum packet flow. The slight decrease at high packet size in the first graph is due to unknown system effects and is not inconsistent enough to reject experimental results.

Comparisons with vehicle traffic should be qualified, though. In vehicle traffic, there is interaction between cars on the road giving rise to the collective dynamics which justify a statistical mechanics interpretation. On data networks, packets do not interact with each other, and packet collisions are errors, not intrinsic aspects of the packet flow. Therefore, as will be shown below, the transition from free flow to saturation should be viewed as a bifurcation in the system dynamics, not as a phase change.
On the network level, where there are many interacting nodes, perhaps congestion can be seen as a phase change, but this perspective is not appropriate at the single link level.

As stated earlier, in free flow the throughput is nearly bandwidth and comparatively, though not completely, steady state. In this region, there is a mutual relationship between packet size and packet flow. Differentiating equation 2 we have

$$d\langle T \rangle = p\, d\langle \lambda \rangle + \langle \lambda \rangle\, dp \tag{3}$$

Assuming $d\langle T \rangle$ is 0 in free flow regardless of the packet size or flow, we can conclude

$$\frac{dp}{d\langle \lambda \rangle} = -\frac{p}{\langle \lambda \rangle} \tag{4}$$

So there is a tradeoff curve, like the production possibility frontiers in economics, between packet size and flow in free flow traffic. Also,

$$\frac{dp}{p} = -\frac{d\langle \lambda \rangle}{\langle \lambda \rangle} \tag{5}$$

demonstrating that every relative increase in packet flow is matched by a corresponding relative decrease in packet size and vice versa. Because the networking and computer equipment have various processes and imperfections, our free flow region never reaches bandwidth and steadily erodes with smaller packet size; however, the high throughput feature is relatively constant compared to the saturated state.

At a packet size $p_c$, we have an increasingly rapid breakdown in throughput. This corresponds approximately to the maximum flow to the network adapter and the breakdown into saturation. We calculate the theoretical maximum flow as

$$\langle \lambda \rangle_c = \frac{\langle T \rangle_{max}}{p_c} \tag{6}$$

where in the theoretically ideal situation $\langle T \rangle_{max} = B$, where $B$ is the bandwidth. Therefore in the saturated region, where the flow is held at $\langle \lambda \rangle_c$, equation 2 gives the throughput $\langle T \rangle$ as

$$\langle T \rangle = \frac{p}{p_c} \langle T \rangle_{max} \tag{7}$$

so the ratio of packet size to the critical packet size determines the throughput under saturation compared to the maximum possible throughput.
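
Equations 6 and 7 can be turned into a small piecewise model and a way of estimating $p_c$ from saturated-region measurements. The sketch below is one possible reading, not the fitting procedure used for figure 2: it assumes a least-squares line through the origin for throughput against packet size, and the data points are synthetic values generated from the model itself. The function names are hypothetical; the 96 Mbps and 451 byte values are the ones the paper reports for this particular setup.

```python
def critical_flow_pps(t_max_bps: float, p_c_bytes: float) -> float:
    """Equation 6: critical packet flow = T_max / p_c.

    The factor of 8 converts the packet size from bytes to bits.
    """
    return t_max_bps / (8 * p_c_bytes)


def model_throughput_bps(p_bytes: float, p_c_bytes: float, t_max_bps: float) -> float:
    """Idealized two-regime throughput.

    At or above p_c the link is in free flow (T = T_max); below p_c the
    packet rate is the limit and equation 7 applies.
    """
    if p_bytes >= p_c_bytes:
        return t_max_bps
    return (p_bytes / p_c_bytes) * t_max_bps


def estimate_p_c(sizes_bytes, throughputs_bps, t_max_bps: float) -> float:
    """Estimate p_c from saturated-region points.

    Assumption: fit T = slope * p through the origin by least squares,
    then use equation 7 (slope = T_max / p_c) to recover p_c.
    """
    numerator = sum(p * t for p, t in zip(sizes_bytes, throughputs_bps))
    denominator = sum(p * p for p in sizes_bytes)
    slope = numerator / denominator
    return t_max_bps / slope


if __name__ == "__main__":
    T_MAX = 96e6  # bits/s, approximate maximum throughput reported in the text
    P_C = 451     # bytes, critical packet size reported in the text

    # Synthetic saturated-region points generated from the model, not measured data.
    sizes = [71, 96, 121, 146, 171]
    observed = [model_throughput_bps(p, P_C, T_MAX) for p in sizes]

    print("estimated p_c (bytes):", estimate_p_c(sizes, observed, T_MAX))
    print("critical flow (packets/s):", critical_flow_pps(T_MAX, P_C))
```

With real measurements the recovered $p_c$ would depend on which points are treated as saturated, so the selection of the fitting range is itself a modeling choice.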

The first graph of figure 2 compares this prediction with the observed data in the saturated region, where $\langle T \rangle_{max}$ is about 96 Mbps. Given data from the saturated region for $\langle T \rangle$, $\langle T \rangle_{max}$, and $p$, we can estimate the critical packet size $p_c$. In this case, the critical packet size is approximately 451 bytes at around 25 kilopackets/s. Though this model seems to accurately predict the throughput values for almost the entire region of saturation, there is a seemingly glaring contradiction in the second graph of figure 2, where the packet flow keeps increasing with decreasing packet size. Though the packet flow does not stagnate, as it should according to ideal theory, the third graph shows that the decline in throughput over a fairly short range of packet flow demonstrates that packet flow is the limiting factor in the saturated region. In the saturated region the packet flow increases from about 25 to 30 thousand packets per second, which is a definite increase but relatively small compared to the region of free flow, where it increased from 7 to about 25 thousand packets per second without affecting throughput. Note that $p_c$ is specific to the equipment and configuration used and does not have a general value of 451 bytes.

5. Bifurcation Analysis

The fact that the stable throughput of the system changes with the parameter of packet size leads us to suspect a possible bifurcation. The exchange of stability between quasi-bandwidth throughput and packet flow limited throughput leads to the hypothesis of a transcritical bifurcation with stability changing at $p_c$. Assuming $\langle T \rangle_{max} = B$, the two stable throughput regimes are $\langle T \rangle = B$ and $\langle T \rangle = (p/p_c)B$, so

$$\frac{d\langle T \rangle}{dt} = \left(\langle T \rangle - B\right)\left(\frac{p}{p_c}B - \langle T \rangle\right) \tag{8}$$

and

$$\frac{d\langle T \rangle}{dt} = -\frac{p}{p_c}B^2 + \langle T \rangle B\left(1 + \frac{p}{p_c}\right) - \langle T \rangle^2 \tag{9}$$

This can be seen as the traditional form of a transcritical bifurcation, $dx/dt = rx - x^2$; however, this is made more clear by taking $B - \langle T \rangle$ as the variable:

$$\frac{d(B - \langle T \rangle)}{dt} = B\left(1 - \frac{p}{p_c}\right)(B - \langle T \rangle) - (B - \langle T \rangle)^2 \tag{10}$$

which fits the normal form for a transcritical bifurcation, $dx/dt = rx - x^2$, with $x = B - \langle T \rangle$ and $r = B(1 - p/p_c)$. The transcritical bifurcation can be more clearly seen in the graph of $(\langle T \rangle_{max} - \langle T \rangle)$ vs. $p$, plotted on a reversed axis, in figure 3.

Figure 3. Graph of the maximum throughput measured minus throughput (Mbps) vs. packet size (bytes, including headers) on a reverse axis. The estimated critical packet size is given by the vertical line.

6. Discussion

As mentioned before, the transition from free flow to saturated traffic here is a bifurcation, not a phase change, given the lack of interaction among the constituent particles in the system. This conclusion can also give pause to extrapolations of "classical" flow on network theory to complex networks such as computer networks. If looking at the weights and capacities of the network from the perspective of throughput, typical maximum flow algorithms such as max-flow min-cut may give incorrect answers if the limiting aspect of the flow is the packet flow rate, the size of the packets, or another issue that is not plainly visible. Flows in computer networks do not behave like incompressible fluid or similar flows assumed in most flow models, where any flow rate freely flows up to the capacity minus costs incurred by friction, etc.

Another point is other similarities to vehicle traffic dynamics. Interestingly, the first graph of figure 2 is similar to a flow-density curve seen in traffic models.
Even equation 1 has similarity to such traffic models, where

$$Q = DV \tag{11}$$

and $Q$ is the traffic flow in cars/h, $D$ is the traffic density in cars/km, and $V$ is the flow velocity in km/h. There is a critical traffic density that separates free flow from synchronized flow or congestion that plays a similar role to packet size in data networks.

This paper does not address the larger problem of dynamics on networks; however, it is the contention of this paper that understanding the simple dynamics at the network level is essential to understanding the wider implications of network-sized dynamics. Given the problems with using Internet traffic data described earlier, more understanding of the basics of traffic dynamics may be obtained through experimental setups or computer network simulations. Future research should look at how topological invariants in networks affect dynamics and how dynamics may affect the evolution of networks and changes in their topological invariants.

As a final note regarding research on dynamics in networks, particularly data networks and the Internet, the author believes that more interaction and cross-referencing between the engineering and physics communities will help promote better understanding and advancement. Although there is research at an almost feverish pitch in both the physics and engineering communities on networking, both sets of publications seem to be almost entirely ignorant of each other. In physics, one of the only such publications commonly cited is the Faloutsos team's work on the router topology of the Internet [6]. Engineering researchers, in journals such as those published by IEEE and ACM, have also rarely quoted physics literature besides the most well-known papers of Barabási, Watts, or Strogatz and seem largely unaware of the later results being reached by physics in the topology of networks.
Without a detailed understanding of the network protocols and engineering literature regarding the function of data networks and the Internet, this paper would not have been possible. A similar situation is seen in sociology, where a wealth of research has been done on aspects of social network dynamics such as the diffusion of trends along networks, in a quantitatively rigorous fashion, but again both communities do not usually inform each other of progress or motivate collaboration. As the study of networks expands and matures, it will become necessary to read and reach across interdisciplinary boundaries to share tools and knowledge that will allow the most profound and predictive insights to be reached.

References

[1] D.J. Watts and S.H. Strogatz, "Collective dynamics of 'small-world' networks", Nature, vol. 393, pp. 440-442, 1998

[2] R. Albert, H. Jeong, and A.L. Barabási, "Diameter of the World-Wide Web", Nature, vol. 401, pp. 130-131, 1999

[3] M.E.J. Newman, "Scientific collaboration networks. I. Network construction and fundamental results", Phys. Rev. E, vol. 64, 016131, 2001

[4] M.E.J. Newman, "Scientific collaboration networks. II. Shortest paths, weighted networks, and centrality", Phys. Rev. E, vol. 64, 016132, 2001

[5] S.N. Dorogovtsev and J.F.F. Mendes, "Exactly solvable small-world network", Europhys. Lett., vol. 50, pp. 1-7, 2000

[6] M. Faloutsos, P. Faloutsos, and C. Faloutsos, "On power-law relationships of the Internet topology", Comp. Comm. Rev., vol. 29, pp. 251-262, 1999

[7] R. Pastor-Satorras and A. Vespignani, "Epidemic spreading in scale-free networks", Phys. Rev. Lett., vol. 86, pp. 3200-3203, 2001

[8] T. Ohira and R. Sawatari, "Phase transition in a computer network traffic model", Phys. Rev. E, vol. 58, pp. 193-195, 1998

[9] M. Takayasu, H. Takayasu, and K. Fukuda, "Dynamic phase transition observed in the Internet traffic flow", Physica A, vol. 277, pp. 248-255, 2000

[10] R.V. Solé and S. Valverde, "Information transfer and phase transitions in a model of internet traffic", Physica A, vol. 289, pp. 595-605, 2001

[11] L. Zhao, Y.C. Lai, K.H. Park, and N. Ye, "Onset of traffic congestion in complex networks", Phys. Rev. E, vol. 71, 026125, 2005

[12] L. Lago-Fernández, R. Huerta, F. Corbacho, and J. Sigüenza, "Fast Response and Temporal Coherent Oscillations in Small-World Networks", Phys. Rev. Lett., vol. 84, pp. 2758-2761, 2000

[13] S.F. Wang and G.R. Chen, "Synchronization in small-world dynamical networks", Intl J. of Bifurcation and Chaos, vol. 12, pp. 187-192, 2002

[14] H. Hong, Y.M. Choi, and B.J. Kim, "Synchronization on small-world networks", Phys. Rev. E, vol. 65, 026139, 2002

[15] M. Barahona and L.M. Pecora, "Synchronization in Small-World Systems", Phys. Rev. Lett., vol. 89, 054101, 2002

[16] E. Almaas, A.N. Oltvai, and A.L. Barabási, "The activity reaction core and plasticity of metabolic networks", PLoS Comp. Bio., vol. 1, pp. 557-563, 2005

[17] R. Guimera and L. Nunes-Amaral, "Functional cartography of complex metabolic networks", Nature, vol. 433, pp. 895-900, 2005

[18] B.S. Kerner and H. Rehborn, "Experimental properties of phase transitions in traffic flow", Phys. Rev. Lett., vol. 79, pp. 4030-4033, 1997

[19] C.F. Daganzo, M.J. Cassidy, and R.L. Bertini, "Causes And Effects Of Phase Transitions In Highway Traffic (Research Report)", University of California, Berkeley Institute of Transportation Studies Research Report, Report No. UCB-ITS-RR-97-8, 1997

[20] D. Chowdhury, L. Santen, and A. Schadschneider, "Statistical Physics of Vehicular Traffic and Some Related Systems", Phys. Rep., vol. 329, pp. 199-329, 2000

[21] S. Gábor and I. Csabai, "The analogies of highway and computer network traffic", Physica A, vol. 307, pp. 516-526, 2002

[22] Widely Integrated Distributed Environment (WIDE) Project, Kanagawa, Japan, MAWI Working Group Traffic Archive (WIDE Backbone traffic traces) [Online]. Available: http://mawi.wide.ad.jp/mawi/

[23] Stanford Linear Accelerator Center (SLAC) PingER End-to-End Performance Measuring Project [Online]. Available: http://www-iepm.slac.stanford.edu/pinger/

[24] H. Chang, S. Jamin, Z.M. Mao, and W. Willinger, "An Empirical Approach to Modeling Inter-AS Traffic Matrices", in Proc. of the 5th ACM SIGCOMM Conference on Internet Measurement, Berkeley, CA, 2005, pp. 139-152

[25] M. Mathis, J. Semke, J. Mahdavi, and T. Ott, "The macroscopic behavior of the TCP congestion avoidance", Comp. Comm. Rev., vol. 27, no. 3, pp. 67-82, 1997

[26] A. Veres and M. Boda, "The Chaotic Nature of TCP Congestion Control", in Proc. of INFOCOM 2000, vol. 3, Tel Aviv, Israel, 2000, pp. 1715-1723

[27] W.E. Leland, M.S. Taqqu, W. Willinger, and D.V. Wilson, "On the Self-Similar Nature of Ethernet Traffic (Extended Version)", IEEE/ACM Trans. on Networking, vol. 2, pp. 1-15, 1994

[28] A. Feldmann, A. Gilbert, and W. Willinger, "Data networks as cascades: Investigating the multifractal nature of Internet WAN traffic", Proc. of ACM SIGCOMM '98, Vancouver, Canada, 1998, pp. 42-55

[29] S. Uhlig, "Non-stationarity and high-order scaling in TCP flow arrivals: a methodological analysis", Comp. Comm. Rev., vol. 34, no. 2, pp. 9-24, 2004

[30] K. Fukuda, H. Takayasu, and M. Takayasu, "Origin of Critical Behavior in Ethernet Traffic", Physica A, vol. 287, pp. 289-301, 2000

[31] W. Fang and L. Peterson, "Inter-AS traffic patterns and their implications", in Proc. of the Global Telecommunications Conference, GLOBECOM '99, Rio de Janeiro, Brazil, vol. 3, 1999, pp. 1859-1868

[32] T. Mori, R. Kawahara, S. Naito, and S. Goto, "On the Characteristics of Internet Traffic Variability: Spikes and Elephants", in Proc. of the 2004 International Symposium on Applications and the Internet, Tokyo, Japan, 2004, pp. 99-106

[33] C. Dovrolis, P. Ramanathan, and D. Moore, "What Do Packet Dispersion Techniques Measure?", in Proc. of INFOCOM 2001, Anchorage, AK, vol. 2, 2001, pp. 905-914

[34] J. Cowie and A. Ogielski, "Global Routing Instabilities during Code Red 2 and Nimda Worm Propagation (conference report)", CAIDA Internet Statistics and Metrics Analysis Workshop, San Diego, CA, December 2001

[35] D. Moore, C. Shannon, D.J. Brown, G.M. Voelker, and S. Savage, "Inferring Internet Denial-of-Service Activity", ACM Trans. on Comp. Sys., vol. 24, no. 2, pp. 115-139, 2006

[36] X.M. He, C. Papadopoulos, J. Heidemann, and A. Hussain, "Spectral Characteristics of Saturated Links (research report)", University of Southern California USC/CS Report, Los Angeles, CA, 2004

[37] K. Papagiannaki, N. Taft, Z.L. Zhang, and C. Diot, "Long-term forecasting of Internet backbone traffic: observations and initial models", in Proc. of INFOCOM 2003, San Francisco, CA, vol. 2, 2003, pp. 1178-1188

[38] M. Barthélemy, B. Gondran, and E. Guichard, "Large scale cross-correlations in internet traffic", Phys. Rev. E, vol. 66, 056110, 2002

[39] M. Mathis, "Pushing up the Internet MTU (conference report)", Joint-Techs Workshop, Miami, FL, 2003
