Spectral clustering based on local linear approximations

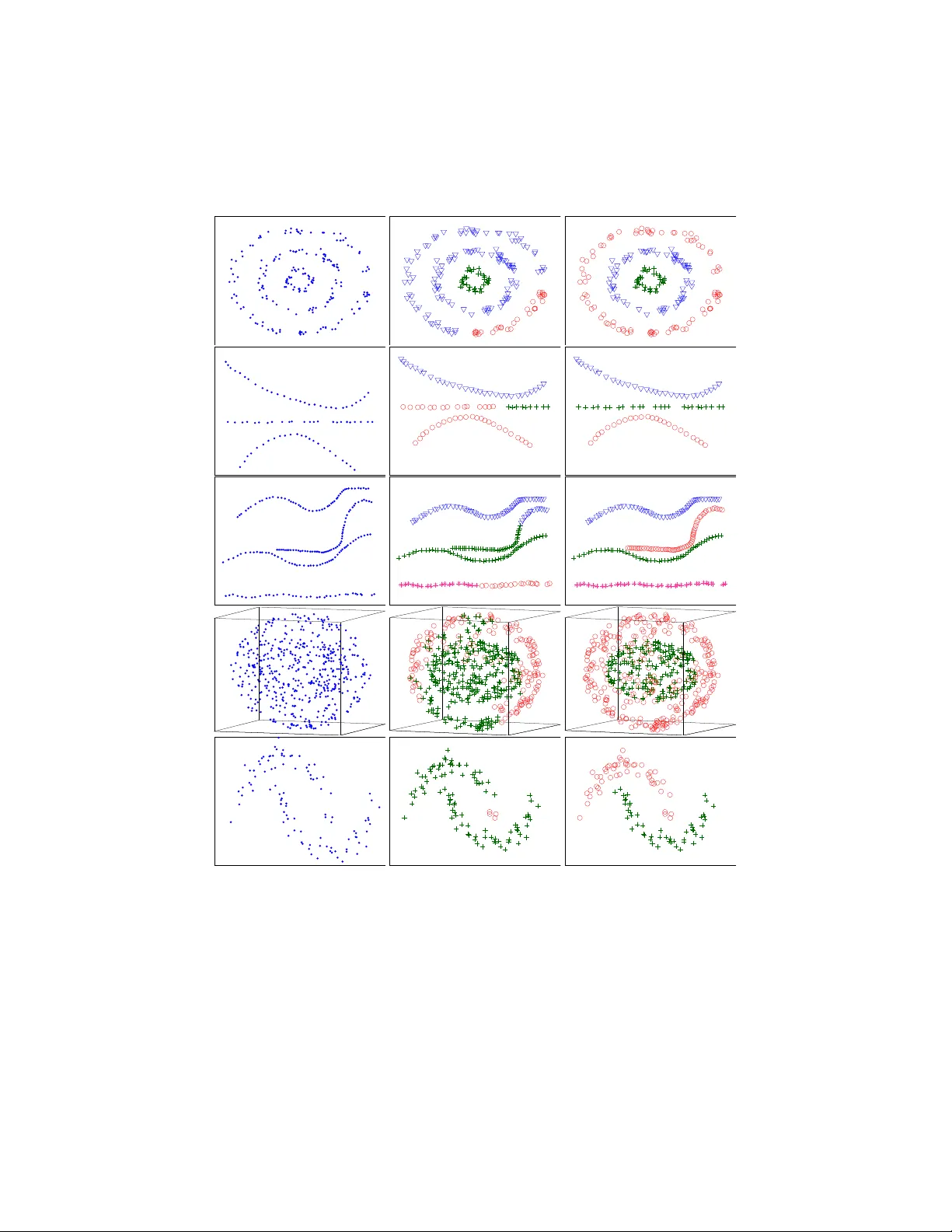

In the context of clustering, we assume a generative model where each cluster is the result of sampling points in the neighborhood of an embedded smooth surface; the sample may be contaminated with outliers, which are modeled as points sampled in spa…

Authors: Ery Arias-Castro, Guangliang Chen, Gilad Lerman
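
The generative model described in the abstract can be illustrated with a small synthetic example. The sketch below is not the authors' code: it assumes two circles in the plane as the smooth surfaces, a uniform radial offset of at most `noise` as the "neighborhood", and outliers drawn uniformly from a bounding box. The abstract is truncated before it states the outlier distribution, so that last choice is purely an assumption, and all function names and parameter values are hypothetical.

```python
import math
import random


def sample_near_circle(n, center, radius, noise, rng):
    """Draw n points whose distance to a circle is at most `noise`.

    The circle stands in for an embedded smooth surface (assumption).
    """
    points = []
    for _ in range(n):
        theta = rng.uniform(0.0, 2.0 * math.pi)
        # Radial offset keeps the point inside a tube of width `noise`.
        r = radius + rng.uniform(-noise, noise)
        x = center[0] + r * math.cos(theta)
        y = center[1] + r * math.sin(theta)
        points.append((x, y))
    return points


def sample_outliers(n, low, high, rng):
    """ASSUMPTION: outliers are uniform in the square [low, high]^2."""
    return [(rng.uniform(low, high), rng.uniform(low, high)) for _ in range(n)]


def generate_dataset(seed=0, n_per_cluster=200, n_outliers=40, noise=0.05):
    """Return (points, labels); label -1 marks an outlier."""
    rng = random.Random(seed)
    # Two disjoint unit circles (hypothetical choice of surfaces).
    surfaces = [((0.0, 0.0), 1.0), ((3.0, 0.0), 1.0)]
    points, labels = [], []
    for k, (center, radius) in enumerate(surfaces):
        cluster = sample_near_circle(n_per_cluster, center, radius, noise, rng)
        points.extend(cluster)
        labels.extend([k] * len(cluster))
    outliers = sample_outliers(n_outliers, -2.0, 5.0, rng)
    points.extend(outliers)
    labels.extend([-1] * len(outliers))
    return points, labels


if __name__ == "__main__":
    pts, lbl = generate_dataset()
    print(len(pts), "points,", lbl.count(-1), "outliers")
```

A dataset of this kind is a convenient test bed for any clustering method that is meant to recover surface-shaped clusters in the presence of outliers; the ground-truth labels make it easy to score the result.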