Multi-Instance Multi-Label Learning

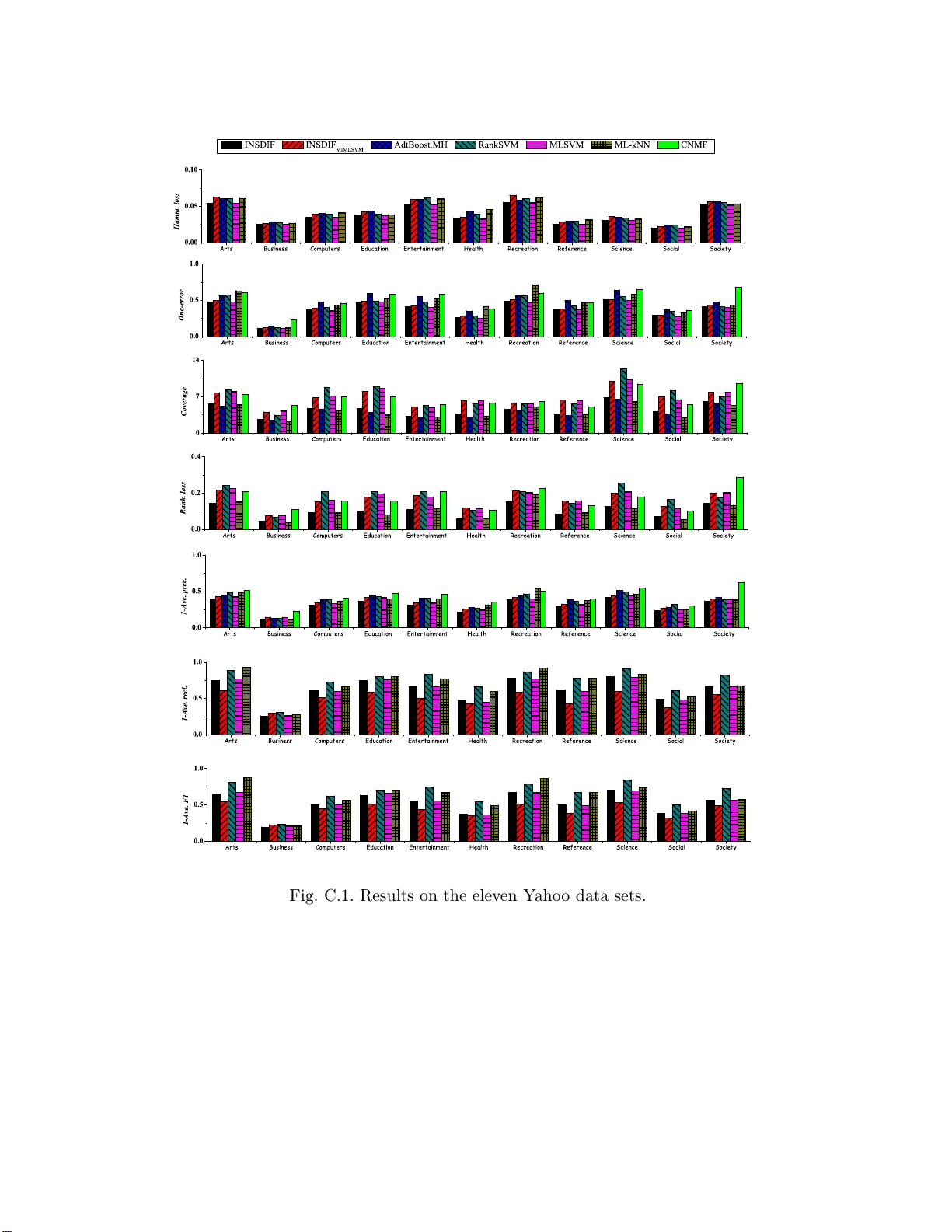

In this paper, we propose the MIML (Multi-Instance Multi-Label learning) framework, in which an example is described by multiple instances and associated with multiple class labels. Compared to traditional learning frameworks, the MIML framework is more …

Authors: Zhi-Hua Zhou, Min-Ling Zhang, Sheng-Jun Huang
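
As an illustration of the data representation the abstract describes, the following is a minimal sketch of a single MIML example: a bag of instances paired with a set of class labels. The class name `MIMLExample`, its fields, and the image-region data are hypothetical and chosen only for illustration; they are not taken from the paper.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class MIMLExample:
    """One MIML example: a bag of instances plus a set of class labels.

    Hypothetical container for illustration; not the authors' code.
    """

    # Each inner tuple is the feature vector of one instance in the bag.
    instances: tuple[tuple[float, ...], ...]
    # All class labels attached to the example as a whole.
    labels: frozenset[str]

    def __post_init__(self) -> None:
        if not self.instances:
            raise ValueError("an example needs at least one instance")
        # All instances in a bag are assumed to share one feature dimension.
        if len({len(x) for x in self.instances}) != 1:
            raise ValueError("all instances must share one feature dimension")


# Made-up data: an image split into three regions (the instances),
# annotated with two labels at the image level.
image = MIMLExample(
    instances=((0.9, 0.1), (0.2, 0.8), (0.4, 0.4)),
    labels=frozenset({"lion", "grass"}),
)

print(len(image.instances), sorted(image.labels))  # 3 ['grass', 'lion']
```

Under this representation, a bag holding exactly one instance and exactly one label corresponds to an ordinary single-instance, single-label supervised example. Labels attach to the bag as a whole, so the structure does not say which instance gives rise to which label.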